2

Experimentation for Innovation: Best Practices in Highly Innovative Organizations

The committee met with representatives from a number of industries1 and Department of Defense (DoD) organizations engaged in research and development (R&D). The representatives were asked to describe how they developed and matured new products. As part of this, they were asked to address the evolution of existing product lines and introduction of disruptive or innovative new product lines. The committee’s goal was to identify best practices in identifying, developing, and acquiring new products that can lead to both evolutionary and disruptive improvements in Air Force capabilities.

Because a number of existing reports had already identified or recommended best practices, the committee first briefly reviewed the findings of the most relevant of those prior research efforts.

A BRIEF REVIEW OF RELEVANT RESEARCH

Accordingly, before getting into the details of what it learned from its own research, the committee reviewed four earlier studies:

- Government Accountability Office, 2006, Best Practices: Stronger Practices Needed to Improve DOD Technology Transition Processes, GAO-06-883.

- Business Executives for National Security (BENS), 2009, Getting to Best: Reforming the Defense Acquisition Enterprise.

- National Research Council (NRC), 2011, Evaluation of U.S. Air Force Preacquisition Technology Development, The National Academies Press, Washington, D.C.

- DoD, Office of the Under Secretary of Defense for Acquisition, Technology, and Logistics, 2013, Performance of the Defense Acquisition System: 2013 Annual Report.

___________________

1 Private organizations interviewed include Wharton School of Business at the University of Pennsylvania, Corning, Motive Space Systems, TransAstra, Lightspeed Venture Partners, MITRE Corporation, Leidos, DSI International Inc., Technovation Inc., and the Institute for Defense Analyses.

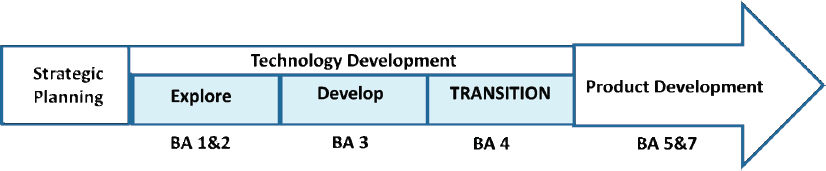

In 2006, the Government Accountability Office (GAO) conducted a thorough evaluation, looking at the breadth of DoD research, development, test, and evaluation (RDT&E) practices and comparing them with the practices of a number of leading technology companies. Figure 2-1, which shows the DoD budget activities associated with each stage, illustrates this process flow.

For each of the successful companies evaluated by GAO, a similar paradigm was found, as follows:

- Corporate management established strategic plans at least annually, enabling portfolio analysis and ensuring that projects remained matched to market needs;

- R&D activities were organized into “thrusts” (or focus areas), which mapped onto the core markets of their businesses;

- Technology development was kept separate from product development; and

- Early technology development (Explore and Develop) was protected at the corporate level.

It is illuminating to understand what GAO found to constitute each of the stages in technology development:

- In the Explore stage, science and technology is evaluated within corporate thrust (focus) areas, and prospective technologies are identified that hold promise for meeting future market needs. Owing to the very large a priori uncertainty associated with far-reaching new ideas, a high failure rate is expected, and in some ways encouraged, as a multitude of new ideas are explored and winnowed and their feasibility and value for further development are determined. For a technology to exit the Explore stage requires an initial corporate buy-in on the technology.

- In the Develop stage, laboratory prototypes are introduced, experimented with, and evaluated for suitability and for feasibility. Typically, commitment to a product line is required at this stage, and product line managers begin to be engaged in tracking the technology. For the Develop stage, there still are significant risks, but they are much reduced from the level accepted in the Explore stage, and success is clearly anticipated. Nevertheless, a reasonable failure rate is still expected in this stage as the operational feasibility, customer valuation, and practicality (including anticipated cost of production and utilization) of the new technology need to be evaluated and assessed.

- In the Transition stage, an operational prototype is developed, experimented with, and evaluated for suitability and manufacturability. Managers track the technology very closely to ensure that it can readily be integrated into a product line. The exit from this stage comes with corporate buy-in to hand the now matured technology—technology readiness level (TRL) 7 or higher—to a product line manager who then is responsible for getting the technology into its final form, fit, and function before formal production begins.

In this technology development model, it is only following demonstration of operational feasibility that full responsibility for development of a product is handed to a product line manager.

The 2009 report by BENS took an approach similar to that taken by GAO when it compared DoD acquisition policies to those used by high-tech industries. BENS developed specific recommendations for

- Requirements determination,

- The acquisition workforce, and

- Program execution.

The first and third of the BENS recommendations underscore several points in the discussion of technology development in high-tech industries (specifically including DoD) and are worth summarizing. The BENS authors emphasized the use of an iterative and interactive process to establish requirements, whereby a strong in-house engineering base within the Services is allowed to conduct tradeoffs and, when appropriate, to modify requirements based in part on an aggressive yet sensible prototyping effort that should be established to construct and test nonproduction prototypes. In the third recommendation, the authors note that

post-Milestone B efforts should be initiated only once the need is certain, the system concept is clear, the necessary funds are likely to be available for the duration of the proposed effort, and the technology is proven.

The related 2011 NRC report notes as follows:

. . . successful technology developers separate technology development from product development. Technology is developed and matured first, and that is followed by the development of a product incorporating the new technology. These steps are not done concurrently.2

It notes as well that developing technologies and weapons systems in parallel almost inevitably causes cost overruns, schedule slippages, and/or the eventual reduction in planned capabilities, adding, on p. 4, that “simultaneously developing new technology within an acquisition program is a recipe for disaster.”

Finally, a 2013 report by the Office of the Under Secretary of Defense for Acquisition, Technology and Logistics (USD AT&L) evaluated data on the performance of major defense acquisition programs (MDAPs) from mid-1992 through 2011. The report considered the impact of immature technologies and immature or changing requirements and specifications on program costs, work content, and schedule. USD AT&L concluded, on p. 109, that “premature contracting without a clear and stable understanding of the engineering and design issues greatly affects contract work, content stability, and cost growth.” As part of the support for this conclusion, the authors note, on p. 51, that of all the MDAPs during the preceding 19 years, “development contracts with early work content stability exhibited significantly lower total cost growth (–61 percent); work content growth (–82 percent); and schedule growth (–31 percent).”3

The BENS, GAO, and NRC reports conclude similarly that development of and experimentation with prototypes should be accomplished as a means of refining requirements, gaining customer buy-in on the value of the product, reducing the risk otherwise inherent in introducing new technologies, and fostering exploration of disruptive innovations. This stream of research, with its emphasis on experimentation and prototyping, provides a good foundation for the original research undertaken in the current study.

___________________

2 National Research Council, Evaluation of U.S. Air Force Preacquisition Technology Development, The National Academies Press, Washington, D.C., 2011, p. 50.

3 Department of Defense, Office of the Under Secretary of Defense for Acquisition, Technology, and Logistics, Performance of the Defense Acquisition System: 2013 Annual Report, Washington, D.C., 2013, http://www.defense.gov/Portals/1/Documents/pubs/PerformanceoftheDefenseAcquisitionSystem-2013AnnualReport.pdf.

WHAT IT TAKES TO BUILD AN INNOVATIVE ORGANIZATION

After years of research by industry, government, and academia, there is a good understanding of what it takes to build an innovative organization. The committee’s research drew upon and reinforced this larger body of knowledge. Its work was based on the in-depth study of a number of highly innovative organizations, including the examples shown in Box 2-1.

As one would expect, these organizations included a number of high-tech start-ups, Silicon Valley firms, and older firms that had been able to sustain innovation over decades. One of the central themes of this report is that the USAF knows how to innovate through experimentation and experimentation campaigns—the key is not learning something new, so much as it is achieving more widespread use of what is already known and in use in isolated pockets across the Service.

CRADLE-TO-GRAVE ASPECTS OF EXPERIMENTATION AND INNOVATION

Although the committee focused mostly on innovation and experimentation in the USAF development cycle, as specified in the statement of task, the ideas, tools, and recommendations it addressed could also apply to any phase of the life cycle of a system. As an example, the processes used to develop a new system or capability are almost, if not completely, identical to the processes needed to add a new capability to an existing weapon system.

The committee recognizes that a normal program has a life cycle from development to end of military use. The opportunity for innovation and experimentation exists throughout the entire program life cycle. Sustainment phases, periodic maintenance phases, and mission upgrade phases of programs also present opportunities for innovative solutions and experimentation to increase mission effectiveness of a system. Indeed, such mission enhancements can result from experimentation to determine whether an innovation adds to the effectiveness of an existing system.

Clearly, disruptive DoD innovators, such as the Defense Advanced Research Projects Agency (DARPA), have an important role to play at the cradle stage of the life cycle. A good example is DARPA’s influence on the initial tests supporting stealth and Have Blue, which are discussed below. In another good example, DARPA teamed with the Air Force Research Laboratory (AFRL) and the Air Force test community on efforts with a logistics thrust like $AVE (Surfing Aircraft Vortices for Energy), which is discussed below.

Another important consideration is the time frame of a given initiative. The Air Force must not only undertake longer-term development efforts, which can take years, but also respond to more immediate, shorter-term capability gaps that require considerable innovation. USAF organizations skilled in addressing these short-term needs include CAOC-X (Box 2-2) and AFRL’s Rapid Reaction Team (Box 2-3).

To the extent the Air Force can recognize these innovative pockets of excellence and the benchmark research they have accomplished, it can maximize their relevance and minimize the “not invented here” pushback to this innovation. The thumbnail sketches of these organizations document that the practices found in other highly innovative organizations have clear relevance in the USAF. An exemplary innovative Special Operations organization, U.S. Special Operations Forces AT&L (SOF AT&L, Box 2-4), in which the USAF is an active joint participant (Air Force Special Operations Command) will also be discussed. Boxes 2-2 and 2-3 describe two examples from within the USAF that exemplify much of what the committee saw in studying highly innovative organizations beyond the USAF, followed by Box 2-4. While these examples illustrate pockets of innovation and experimentation in the Air Force and SOF AT&L, note that they do not represent widespread innovation through experimentation and experimentation campaigns. A core concern of this report is the need for the USAF to suffuse innovation throughout the Air Force culture by means of experimentation and experimentation campaigns.

Nevertheless, the Air Force leadership is interested in seeing innovation through experimentation become a widespread norm rather than an isolated exception. The pockets of success operating today offer proof that experimentation-driven innovation is possible in the USAF and offer valuable experience to draw upon in spreading the practice of innovation through experimentation more broadly across the organization.

After looking for commonalities between the USAF and a diverse set of highly innovative organizations, the committee has distilled its findings to the points most salient to the Air Force. First, it starts with how the most innovative organizations define the problem, and then it turns to three broad categories of efforts intended to address the problem of innovation from within complex organizations: leadership and organization, tools and processes, and people and culture.

DEFINING THE PROBLEM

Given that a critical part of solving any problem is first clearly defining it, the committee carefully considered how highly innovative organizations look at the challenges of innovation through experimentation.

The Valley of Death—The Universal Problem

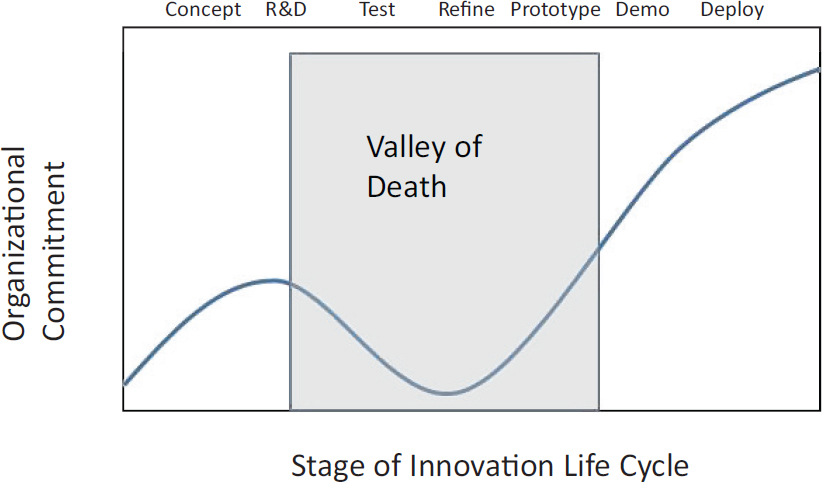

The “valley of death”4 is the label given to a universal challenge in moving an innovation from concept in the laboratory to reality in use. As depicted in Figure 2-2, the development process starts with innovative ideas and future operating concepts. However, the journey from R&D stages to deployment and normal production is difficult and often perilous. The “valley” in this journey refers to the sharp decline in organizational commitment (typically measured in terms of resources and/or capital available to support further development of the technology) until early adopters step in to begin using the technology as it then moves toward maturity and sustainability.

___________________

4 Deborah Jackson describes the valley of death as the “gap in resources for technology demonstration and development” in her white paper “What Is an Innovation Ecosystem?,” National Science Foundation, Arlington, Va., n.d., p. 5.

Research has shown that crossing this so-called valley of death often requires a combination of advocates pushing and pulling the technology.5 The developers of the new technology are commonly the ones pushing it. These are often individuals from the R&D community. Those hungry for the benefits of a better new solution are usually the ones pulling it. They may be end users looking for better solutions to operating problems or for lower-cost solutions. Either way, highly innovative organizations understand the difficulty of crossing the valley of death and seek to align organizational elements to work together in a combined effort to push and pull the technology across it.

___________________

5 D.J. Jackson, “What Is an Innovation Ecosystem?,” n.d.

Innovations are particularly vulnerable while they are in the valley; lacking strong advocates, they are susceptible to being “killed” by what we have come to call the “normal production” organization. This is the part of the organization responsible for day-to-day output, be it a product or a service. Daily production depends on smooth operations. Innovations that improve these operations are called sustaining innovations since they strengthen normal production. Innovations that threaten these operations are called disruptive innovations since they require the organization to rethink and reshape its normal production.6

Normal production is therefore often opposed to disruptive innovation since it threatens the ability of the organization to fulfill its mission of smooth day-to-day operation. On the other hand, disruptive innovation is often critical to the organization overall because it is often essential to competitiveness and keeping the organization relevant. Moving from analog to digital technology was a huge source of disruptive innovation in the past century. In many organizations, those responsible for normal production resisted this change because it required a complete rework of day-to-day operations. But without changing from analog to digital, many of these same organizations would simply no longer be relevant, left behind by competitors who were able to move the digital innovations across their valleys of death and see them established as the new normal.

The highly innovative organizations studied by the committee all acknowledge the valley of death problem, and they work hard at addressing it. This entails ensuring that today’s needs in the normal production organization are balanced against tomorrow’s needs for breakthrough innovation. An example of how one firm manages this balance is presented in Box 2-5.

___________________

6 C.M. Christensen, The Innovator’s Dilemma: When New Technologies Cause Great Firms to Fail, Harvard Business School Press, Boston, Mass., 1997.

As will be seen, much of the success of highly innovative organizations can be attributed to their ability to overcome this problem using a combination of leadership, tools, and culture, as described later in this chapter. First, two additional concepts successful innovators use to define the problem should be considered.

Smart Experimentation Campaigns

Experimentation is fundamental to innovation. Trying something out to see if it works is the core activity for discovering what might become a useful innovation. But, as the committee discussed in the preceding section on the valley of death, there is often a long and arduous journey between the discovery of the innovation and its actual adoption and use.

One of the most useful means of crossing the valley of death is to design and properly execute an intelligent experimentation campaign. As shown in Box 2-2 at the beginning of the chapter, an experiment is a method for systematically testing assumptions under controlled conditions. A good experimentation campaign is an intelligently sequenced series of experiments and other activities (analysis, modeling, tests, simulations, prototypes, demonstrations, and the like) meant to test assumptions and answer questions as quickly and cheaply as possible. An experiment is one step; an experimentation campaign is a journey. The USAF has successfully used experimentation campaigns (whether or not they were ever called that) to develop many important technological advances.

USAF’S LONG HISTORY OF EXPERIMENTATION CAMPAIGNS

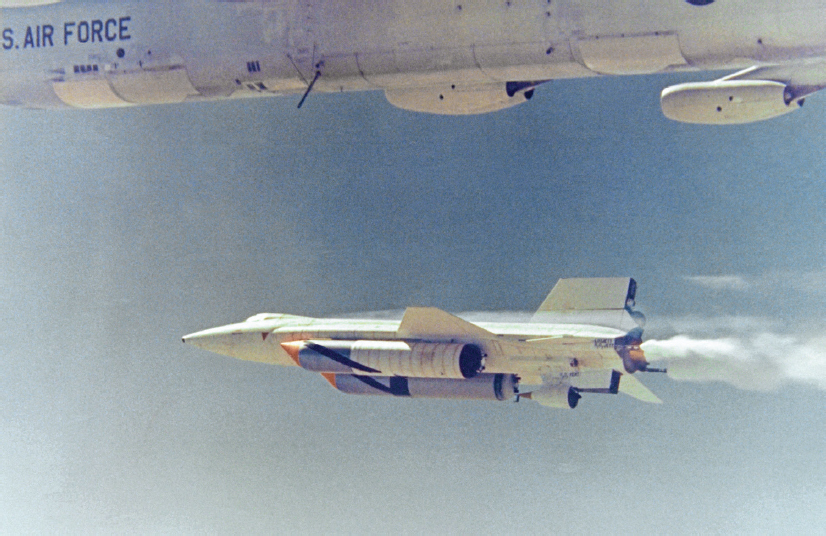

Over its nearly seven-decade existence, the Air Force has run multiple R&D efforts that would, in today’s terminology, be considered experimentation campaigns. Perhaps the best known of these is the so-called X-series research vehicle program, which dates back to 1944, before the establishment of an independent USAF.

This program was begun to address a particular aeronautical crisis: the inability of wind tunnels to furnish reliable test data on transonic flows and their effect on aircraft between Mach 0.75 and Mach 1.25. The Army Air Forces and the U.S. Navy partnered with the National Advisory Committee for Aeronautics (NACA, predecessor of today’s NASA) in March 1944 on a joint program to address the challenges of transonic and supersonic flight. From this sprang the AAF/USAF Bell X-1, which exceeded Mach 1 in October 1947, and the Navy Douglas D-558-2, which exceeded Mach 2 in November 1953.7 This organizational model—the service (or services) working with NACA/NASA (and, more recently, with DARPA)—characterizes the X-series effort to the present day (Figure 2-3).

___________________

7 R.P. Hallion, Supersonic Flight: Breaking the Sound Barrier and Beyond The Story of the Bell X-1 and the Douglas D-558, National Air and Space Museum of the Smithsonian Institution, Washington, D.C., in association with Macmillan Co., 1972.

By the early 1950s, with growing interest in flying above the atmosphere, the X-series program was expanded to include a proposed new hypersonic research aircraft, which became the X-15 (Figure 2-4). This aircraft, arguably the most successful of all research airplanes and the first trans-atmospheric aircraft ever built, completed 199 flights over a roughly decade-long flight research effort that saw it reach altitudes in excess of 67 miles and speeds in excess of Mach 6 (including one to Mach 6.70 in 1967). Key to its success was the formation of a joint Air Force-Navy-NACA (later NASA) X-15 steering committee to oversee the development of the aircraft, its associated test corridor (the so-called High Range, from Utah to California), and support facilities, aircraft, and systems.8

The desire to create reusable spacecraft generated interest in alternative means of returning to Earth using winged reentry vehicles, triggering several experimentation campaigns in the 1970s. From the 1980s onwards, the X-series addressed both remotely piloted and piloted systems, such as the X-29 forward-swept-wing testbed, the X-31 agility testbed, the X-37/X-37B reusable lifting reentry spacecraft, the X-48 blended wing body, the X-43 hydrogen-fueled scramjet, and the X-51 JP-7-fueled, thermally balanced scramjet test vehicle (Figure 2-5).

The 1990s saw renewed NASA interest in using the X-series as a convenient means of achieving “better, cheaper, faster” development of new technology sys-

___________________

8 M. Evans, The X-15 Rocket Plane: Flying the First Wings into Space, University of Nebraska Press, Lincoln, Neb., 2013.

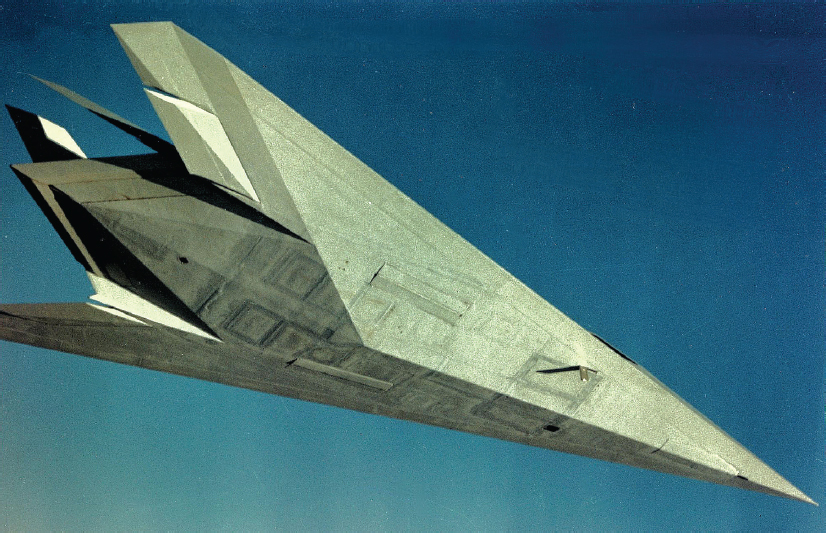

tems. Of all these campaigns, one of the most successful was the so-called stealth revolution, which, over approximately two decades of work, resulted in the introduction of the world’s first very-low-observable attack aircraft system, the F-117.

In 1983, the United States Air Force declared the Lockheed (now Lockheed Martin) F-117A stealth fighter (Figure 2-6) operational. The next 7 years were a time of intensive experimentation, as the F-117 matured. The Service developed a comprehensive mission planning system to support its operations, special weapons (typified by the GBU-27 2,000-lb penetrating laser-guided bomb), and suitable tactics. The wisdom and success of that effort were evident in the 43-day Gulf War air campaign, where the F-117 cracked open the multilayered and redundant Iraqi integrated air defense system, then the most formidable the world had seen, enabling conventional attackers to operate essentially at will across the Iraqi and Kuwaiti theater of operations from the opening night of the war onwards.

The following were key to the success of the F-117:9

- A carefully constructed partnership between industry and the Air Force.

- The early intelligence exploitation of Soviet technical literature that gave Lockheed Skunk Works the ability to calculate the signature of a faceted radar-defeating platform.

- The ability to undertake sophisticated modeling, simulation, and testing against anticipated threat systems.

- The ability of the Air Force (and industry) to undertake comprehensive pole-model studies of shapes having low radar cross-section (RCS), encouraging design studies on low-RCS concepts.

- Extensive use of a test range where researchers could evaluate a low-RCS piloted research vehicle (the Have Blue program, Figure 2-7).

- Recognition that the F-117 was a system within a system of systems, so that a total concept of operations and supportive structure grew up around it, each with its own linked experimentation campaigns.

- Rigorous and repetitive testing and training.

- Support by senior leadership and the necessary funding.

___________________

9 D.C. Aronstein and A.C. Piccirillo, Have Blue and the F-117: Evolution of the “Stealth Fighter,” American Institute of Aeronautics and Astronautics, Reston, Va., 1997; B.R. Rich and L. Janus, Skunk Works: A Personal Memoir of My Years at Lockheed, Little, Brown, Boston, Mass., 1997; R.H. Van Atta, S. Reed, and S. Deitchman, DARPA Technical Accomplishments, Volume II: An Historical Review of Selected DARPA Projects, IDA Paper P-2429, Institute for Defense Analyses, Alexandria, Va., 1991.

This campaign spawned an entire technical field of inquiry and development that also produced the world’s first low-observable strategic bomber, the B-2; a stealthy cruise missile (the AGM-129); and a foundational analytical database and industrial infrastructure that have since supported numerous other stealth efforts.

As mentioned at the beginning of the chapter, entire books have been written on how to design and execute an experimentation campaign. Here the committee provides only a high-level summary of the concept.

Experimentation campaigns are nothing more than a logical plan for sequencing efforts intended to answer key questions. These questions generally test assumptions (Can we support this load with a carbon strut weighing only 4 pounds?) and reduce risks (We need to know if this mechanism will continue to work after being dropped from 8 feet). The goal of an experimentation campaign is to reduce risk by improving the ratio of knowledge to assumptions as cheaply and quickly as possible.

For example, consider AFRL’s $AVE program, an experimentation campaign that pursued fuel savings to be had by flying in the same sort of formation a goose uses to surf on the upwash created by the bird in front of it, using the Von Karman vortex. Progress came from raising and answering a series of questions in a logical sequence.

The first question was, Can we save fuel if we do this? Analysis was able to show theoretical savings, and earlier flights had documented that precise formation flying could deliver fuel savings of 10-15 percent. However, those early tests also demonstrated that manually flying in the required formation was roughly as taxing on the crew as formation flying for refueling, and that this level of effort was simply not humanly possible for extended periods of time. So the next question became, Can we automate the flying required to realize the potential savings?

DARPA and AFRL teamed up to answer this question, building on work done by NASA in its Autonomous Formation Flight program a decade earlier. They first tested the system using F/A-18s and were able to reproduce the 10-15 percent savings while safely relying on the autopilot to guide the trail aircraft. Once the theory and software had been proven on fighters, the next question became, Will it work for “heavies” (very large aircraft like the C-5A or the Boeing 747)? Tests on C-17s again delivered the expected savings but raised new questions about the impact on engine and airframe life associated with the greater turbulence inside the vortex. Additional flights collected data answering these questions. The chief scientist for the Air Mobility Command (AMC) reported that $AVE had clearly proven that the concept met AMC’s safety, crew workload, and viability criteria, at which point the next task was to analyze the data and investigate the possibility of implementing the technology for other aircraft types.

Note how the work in the $AVE example cycles logically through a series of questions and answers, with each cycle informing the next. Note also the emphasis on getting the simpler and cheaper answers nailed down before tackling the more difficult issues. In other words, in a well-run experimentation campaign, researchers should first do whatever they can do quickly and cheaply to answer really basic questions, and then use these initial answers to inform subsequent steps, likely to cost more money and take more time as one moves along the path from concept to deployed innovation. Paul Kaminski, former U.S. Undersecretary of Defense for Acquisition and Technology, summed up experimentation campaigns very succinctly: “Fail fast before investing big.”
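The sequencing logic described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `Experiment` class, the `run_campaign` function, the relative cost figures, and the pass/fail outcomes are all hypothetical and are not drawn from the $AVE program or from any committee data. The sketch also simplifies by ordering experiments purely by cost, whereas a real campaign would also respect logical dependencies between questions.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Experiment:
    question: str             # the question the experiment is meant to answer
    cost: float               # hypothetical relative cost (arbitrary units)
    run: Callable[[], bool]   # returns True if the result supports continuing


def run_campaign(experiments: List[Experiment]) -> Tuple[float, List[str]]:
    """Run experiments cheapest-first and stop at the first disappointing result.

    Returns the total amount spent and the questions answered favorably.
    """
    spent = 0.0
    answered: List[str] = []
    for exp in sorted(experiments, key=lambda e: e.cost):
        spent += exp.cost
        if not exp.run():
            break  # fail fast: do not pay for the more expensive steps
        answered.append(exp.question)
    return spent, answered


# Hypothetical campaign; the outcomes below are invented for illustration only.
campaign = [
    Experiment("Will it work for heavies?", 100.0, lambda: True),
    Experiment("Can we save fuel if we do this?", 1.0, lambda: True),
    Experiment("Can we automate the flying?", 10.0, lambda: False),
]
spent, answered = run_campaign(campaign)
```

In this invented scenario the campaign stops after the second, mid-priced experiment, so only 11 of the 111 possible cost units are spent before the disappointing result is known.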

This approach was the basis of innovation in the venture capital (VC) firms the committee studied as part of its research. The VC approach would consider as many as 5,000 new ideas, out of which around 500 would be seriously evaluated. Of these, perhaps 50 would be chosen for an initial round of funding. Typically, a principal from the VC firm would actively engage with each of the start-ups he or she sponsored. Those that looked to be proceeding to some form of success might be rewarded with a second round of funding, at a noticeably higher level. If a third round of funding was warranted, other VC firms might be brought in to spread the risk. The expectation was that a few big winners and a dozen or so lesser winners would provide the return on investment, enabling the VC firm to raise a new round of capital. Notably, the emphasis was not on the number of failures tolerated in order to get to these few big wins. Venture capitalists tolerate hundreds of disappointing outcomes because they recognize these failures are their fastest, surest path to success.
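The arithmetic of the funnel described above can be made explicit with a short calculation. The stage counts are the approximate figures given in the text; the stage labels, the `pass_rates` helper, and the variable names are illustrative assumptions only.

```python
# Approximate funnel sizes taken from the text; labels are illustrative.
stages = [
    ("ideas considered", 5000),
    ("seriously evaluated", 500),
    ("initial round of funding", 50),
]


def pass_rates(funnel):
    """Return the fraction of candidates surviving each stage-to-stage step."""
    return [
        (later_name, later_count / earlier_count)
        for (_, earlier_count), (later_name, later_count) in zip(funnel, funnel[1:])
    ]


rates = pass_rates(stages)
overall = stages[-1][1] / stages[0][1]  # share of all ideas that receive funding

for name, rate in rates:
    print(f"{name}: {rate:.0%} of the previous stage")
print(f"overall: {overall:.0%} of ideas considered")
```

On these figures, roughly one idea in ten survives each screen, and about one in a hundred of the ideas considered receives an initial round of funding.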

Before leaving the discussion of experimentation campaigns, it is worth noting that the two examples given in this section (low observables and $AVE) were both drawn from within the USAF. These examples were very much in keeping with the benchmarks observed by the committee in its research on highly innovative organizations outside the Air Force. In other words, it is clear that the Air Force knows how to run a smart experimentation campaign. And yet, as will be shown in Chapter 3, the use of experimentation campaigns to drive the pace of innovation in the Air Force is simply not at the scale or the scope needed. The committee’s research indicates that this is in large part attributable to a very low tolerance for failure, and the next section looks at how highly innovative organizations distinguish between different types of failures.

Edisons versus Edsels

Notice that experimentation campaigns, the key to innovation, are all about failing fast as an inevitable part of learning answers to key questions. Success with experimentation campaigns requires tolerance for the sort of disappointing results inherent in carrying out good groundbreaking research. Innovation through experimentation is all about finding out what works, and this usually entails discovering a lot of things that do not work. As Wilbur Wright said in 1901, comparing flying an airplane to riding a fractious horse: “If you are looking for perfect safety, you will do well to sit on a fence and watch the birds; but if you really wish to learn, you must mount a machine and become acquainted with its tricks by actual trial.”10

There is a risk associated with such innovation—not everything is going to turn out as one would hope—but these disappointments are not failures so much as they are an inherent part of learning. In fact, disappointing results are so fundamental to progress in innovation, that the committee tries to avoid calling them failures. A disappointing result from a well-designed experiment is not best viewed as a failure—it is best viewed as progress. This distinction is so important that the committee has coined a new term to make the distinction more evident. It calls a disappointing result from which an important lesson has been learned an “Edison,” as a reminder that success was the product of more than 6,000 disappointing results during Thomas Edison’s famous experimentation campaign leading to a workable incandescent lightbulb.

The highly innovative organizations studied have come to terms with the distinction between a failure and an Edison. In fact, many of them embraced “smart” failures as an essential piece of their innovation process. By encouraging employees to risk early smart failures, or Edisons, these organizations seek to avoid the ultimate failure of launching a major innovation, one requiring a significant investment, only to see it flop. This represents a true failure of the innovation process, and the committee calls this type of failure an “Edsel” to distinguish it from a smart failure, called an Edison.

The Edsel was a much-ballyhooed car launched in the late 1950s that turned into one of the most spectacular flops of modern-day business. The Edsel was the result of a $400 million investment made largely without the benefit of research testing basic assumptions (like what the car-buying public was interested in buying) while ignoring extensive findings on other key questions (e.g., what the car model should be named).11

___________________

10 W. Wright, “Some Aeronautical Experiments, 18 September 1901,” p. 100 in The Papers of Wilbur and Orville Wright, including the Chanute-Wright Papers (M.W. McFarland, ed.), McGraw-Hill, New York, N.Y., 1953.

It is important to think very differently about Edison-type failures and Edsel-type failures. It is common to hear that “we need to learn to celebrate failure if we ever want to be innovative.” Actually, this is only partially accurate. The best innovators celebrate their Edison-type smart failures, while making every effort to avoid an Edsel-type ultimate failure. The key to doing this successfully is a well-designed and carefully executed experimentation campaign. Again, “Fail fast before investing big.” Box 2-6 on failure as a strategy for success at SpaceX illustrates this essential point.

The last three sections can be recapped as follows:

- Valley of death: In general, all organizations face a valley of death problem when it comes to innovation. The best innovators have found a way to address this by balancing the normal production organization’s desire to avoid disruption against the overall organization’s need for disruptive innovation.12 As will be seen in Chapter 3, there is an opportunity for the USAF to improve the way it addresses this challenge.

- Experimentation campaigns: These are the best ways to move an innovation along its life cycle from concept to deployed solution as quickly and efficiently as possible. While it is possible to find great examples of successful campaigns in the USAF, as we will see in Chapter 3, these examples are too few and far between.

- Edisons and Edsels: The best innovators understand that the key to avoiding Edsels is embracing the value of Edisons. As we will see in Chapter 3, this is a major opportunity for the Air Force.

___________________

11 R. Feloni, “4 Lessons from the Failure of the Ford Edsel, One of Bill Gates’ Favorite Case Studies,” Business Insider, September 5, 2015, http://www.businessinsider.com/lessons-from-the-failure-of-theford-edsel-2015-9.

12 C.M. Christensen, The Innovator’s Dilemma, 1997.

Building an organization where the Edisons far outnumber the Edsels is not easy. The best of the organizations studied attack the problem on three fronts, starting with leadership and organization.

EXPERIMENTATION LEADERSHIP AND ORGANIZATION

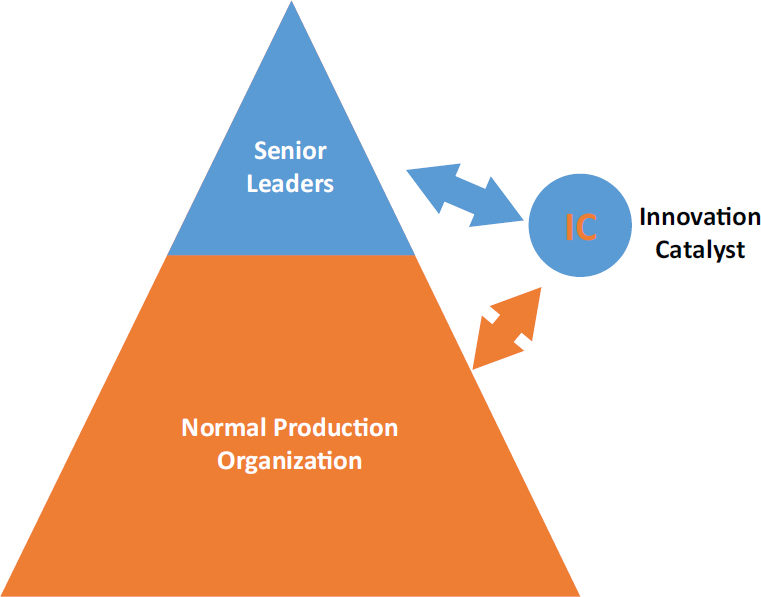

The committee studied a variety of highly innovative organizations, from large, high-tech manufacturing firms to small start-ups, and from venture capitalists to military units.13 Across this diverse sample, one pattern remained constant: There was a leader unambiguously in charge of driving innovation, and this leader had a direct connection to senior leaders at the top of their organization. The elements of this arrangement are shown in Figure 2-8.

First there is the leader who is clearly in charge of driving innovation.14 For reasons explained below, this person is labeled the “Innovation Catalyst.” In the highly innovative organizations studied, someone “owned” the problem. The emphasis here should be placed on some one. The committee did not observe any highly innovative organizations in which the ownership of driving innovation resided in a committee or steering board or faceless process. In every case, a clearly identified individual was assigned responsibility for leading this work, was evaluated on their success in doing so, and woke up every workday focused on how to get it done better. When located at the top of the overall organization (not all of them were, as explained below), this Innovation Catalyst often carried a title such as chief innovation officer or chief technology officer (CTO).15

___________________

13 Examples of the highly innovative organizations studied include the following: LightSpeed Venture Partners, MITRE Corporation, Science & Technology Policy Institute, Institute for Defense Analyses, Leidos, Corning Inc., Wharton School of Business at the University of Pennsylvania, DSI International, Inc., Technovation, Inc., the U.S. Navy’s TANG Program, the Deputy Assistant SECDEF for Emerging Capability and Prototyping, and the U.S. Marine Corps Warfighting Lab/Futures Directorate. See also Appendix C, Meetings and Speakers.

14 For discussion on leadership’s role in steering innovations across the various stages of the innovation life cycle, including, most importantly, the Valley of Death, see Geoffrey Moore’s classic work, Crossing the Chasm, Harper Collins, New York, N.Y., 1991.

15 While many of the individuals serving in the role of Innovation Catalyst at the top of their organizations carry the CTO title, not all Innovation Catalysts are CTOs. Some CTOs work as Innovation Catalysts; others have a narrower role.

Second, wherever they were located and however they were organized, the Innovation Catalysts had authority to set priorities and discretionary control of a significant innovation fund. Without this, they would be toothless tigers, an expensive bother unlikely to take much of a bite out of anything.

Third, this individual was directly connected to the senior-most leadership in their part of the organization. For example, the corporate CTOs the committee interviewed reported directly to the corporation’s CEO and sat on the highest corporate councils. This is essential, for as will be seen, a primary responsibility of the innovation driver is to ensure that innovation is tied to the broader organizational strategy and mission.

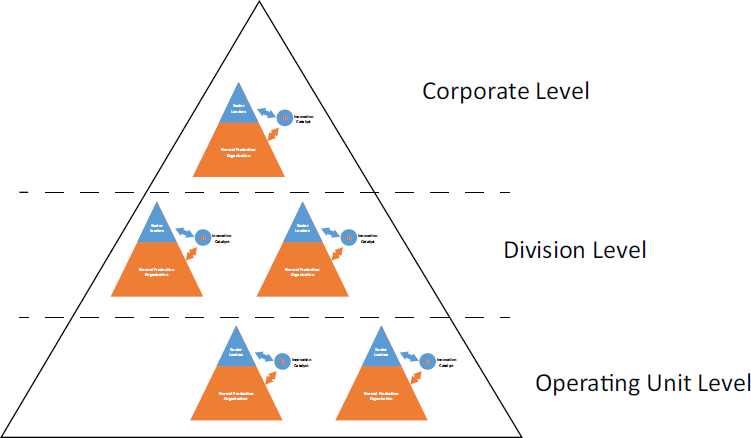

In larger organizations, it was common to find this two-part pattern: a named senior Innovation Catalyst with (1) clear responsibility for driving innovation and (2) operating with direct access to senior leadership. If needed, there may be a junior Innovation Catalyst at the division or operating levels. So, for example, there might be a corporate-level CTO reporting to the corporation’s CEO with a divisional CTO reporting to the division vice president. When the same two-part pattern was replicated at a variety of levels, depending on varying circumstances across the organization, the committee came to see this recurring pattern as a “fractal” approach to innovation leadership.

A fractal is a naturally occurring phenomenon in which similar patterns recur at different scales. For example, from 30,000 feet, a major river snakes its way through delta country in a sinuous path that is virtually indistinguishable from the pattern of curves found in smaller feeder streams as seen from 3,000 feet. Similarly, the pattern of a focused innovation leader reporting directly to the senior-most leadership in their part of the organization was a fractal pattern found at a variety of levels across larger highly innovative organizations. The fractal nature of this pattern is reflected in Figure 2-9.

The fact that these leaders could be found at so many different levels of the organization, and could be given so many different titles depending on the organization and their level in it, forced the committee to come up with a generic label for them in order to facilitate discussion in general terms of this group of leaders and the work they do. As mentioned above, the label the committee settled on was Innovation Catalyst. Beyond the discipline of chemistry, the term “catalyst” has come to mean a person or event that causes changes to occur more quickly. Leadership from a clearly identified Innovation Catalyst was the single most consistent practice observed by the committee.

Next, it is important to discuss how Innovation Catalysts operated and how their function was positioned in the larger organization.

CONCEPT OF OPERATION

Across the highly innovative organizations it studied, the committee found that Innovation Catalysts, regardless of their actual titles, worked under very similar concepts of operation. While the details varied from organization to organization, the underlying principles were fundamentally similar, as follows:

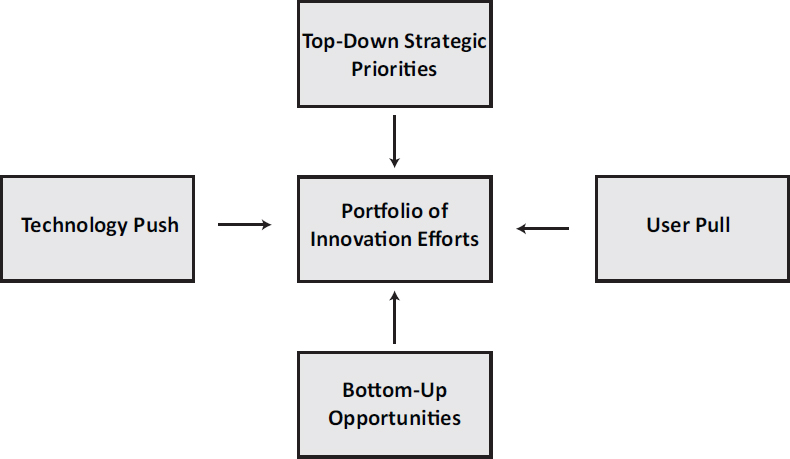

- Overseeing a portfolio of innovation efforts. The Innovation Catalysts worked across a range of innovation efforts. Some efforts were more mature than others. Some focused on products, while others focused on processes. Some were disruptive innovations, while others were sustaining innovations. The point is that the Innovation Catalysts observed had broad and far-reaching responsibility for driving innovation in the organization.

- Balancing and blending four forces for innovation. As shown in Figure 2-10, the portfolios of innovations overseen by the Innovation Catalysts were the result of balancing technology push against user pull and blending top-down strategic priorities against bottom-up opportunities.

- Operating with “known” funding. The funding may come from the divisional level or the corporate level, or some blend of the two. Importantly, to the extent the funding is expected to yield disruptive innovation, it is not tied to normal production. In other words, divisions are not expected to fund innovation that would be detrimental to their current operations, and where the corporation is seeking disruptive innovation, investments in experimentation are made at the corporate level.

Tracking Metrics

There is an old saw about “what gets measured gets managed.” Given the popularity of this saying, the committee went into this work expecting metrics to be a key topic and the centerpiece of best practices. It was surprised that many of the highly innovative organizations it studied said little about metrics, and those that did address them urged caution.

The committee has considered why this might be the case. It knows that metrics are a key part of managing in the normal production world. There, the focus on steady, predictable production to a drumbeat lends itself to a range of metrics, often arrayed as fairly complicated scorecards. Such metrics bring forth a laser-like focus on achieving the numbers. These metrics also reinforce resistance to anything that might upset the applecart, which, by definition, is precisely what disruptive innovations do. In other words, it is not difficult to find examples of metrics working to suppress innovation.

The committee suspects this is why innovative organizations have a very cautious approach to putting in place the same sort of metrics and scorecards one sees in many normal production organizations. Metrics, if implemented, should be tailored to individual experimental programs and should be expected to evolve over the course of the experimentation campaign. It is easy to imagine a scenario in which a seemingly logical metric could have important unintended consequences for an organization seeking to increase innovation. Take, for example, the metric “R&D Projects Transitioning to Deployed Innovations.” This metric could be seen as a measure of an organization’s success in moving new technologies across the valley of death, a key challenge to innovation, as was already described. Some organizations, however, are wont to take a single metric to its illogical extreme. In this case, an organization seeking to maximize the transition rate metric would be tempted to only take on low-risk R&D, so that a metric intended to increase innovation could actually decrease it. The same is true of any number of metrics intended to drive innovation, so that the single biggest finding of the committee’s research here may be the need for caution. A leader of a highly innovative organization suggested to the committee that it is better to have no metrics at all than to have bad ones that drive bad behavior and create skepticism, and that one should choose carefully.
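The transition-rate trap described above can be made concrete with a small arithmetic sketch. Everything in it is an illustrative assumption rather than committee data: the `Project` type, the probabilities, and the payoff values are hypothetical and chosen only to show how a portfolio can score better on transition rate while delivering less expected value.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Project:
    name: str
    p_transition: float  # hypothetical probability of reaching deployment
    payoff: float        # hypothetical value if deployed (arbitrary units)


def expected_transition_rate(projects):
    """Expected share of projects that transition to deployment."""
    return sum(p.p_transition for p in projects) / len(projects)


def expected_value(projects):
    """Expected total payoff across the portfolio."""
    return sum(p.p_transition * p.payoff for p in projects)


# Portfolio A: only low-risk, incremental work (illustrative numbers).
low_risk = [Project(f"incremental-{i}", 0.9, 1.0) for i in range(4)]

# Portfolio B: half incremental, half high-risk disruptive bets.
mixed = low_risk[:2] + [
    Project("disruptive-a", 0.2, 20.0),
    Project("disruptive-b", 0.2, 20.0),
]

for label, portfolio in (("low-risk", low_risk), ("mixed", mixed)):
    print(
        f"{label}: transition rate {expected_transition_rate(portfolio):.2f}, "
        f"expected value {expected_value(portfolio):.1f}"
    )
```

Under these assumed numbers, the low-risk portfolio wins on the transition-rate metric while the mixed portfolio has the higher expected value, which is the sense in which optimizing the single metric could reduce innovation.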

The committee did find interesting examples of metrics intended to address the specific innovation challenges of particular organizations and has grouped several, as follows:

- Schedule

  - Milestone delivery to schedule (intermediate milestones delivered to schedule, with typically 5 to 10 key milestones in the life of a major program).

  - Product release on time according to plan (multiple stages of release, all measured independently).

  - X percent of 90-day plans completed on time and per plan.

- Resource utilization

  - Skill group overall utilization (portion of a given skill group that is allocated to projects).

  - Resource intensity (portion of key resource groups allocated to the top priority programs).

- Balanced portfolio

  - Average innovation stage of the top program portfolio (indication of the maturity and resource needs of the portfolio).

  - Aggregate risk score of the top portfolio (green, blue, black diamond, etc.).

- Strong experimentation campaigns

  - Strength of customer interaction (path to a crystallizing customer16).

  - Assumption testing status (portion of key assumptions that are still untested).

- Understanding costs

  - Business model completeness (business model canvas fully understood).

  - Maturity level of the cost model (ranging from “crayon math” to “operationally ready”).

It might be tempting to look at any such list of metrics as a template. The committee would caution against this, and points out that it did not find a common set of metrics in use across the highly innovative organizations it studied. Each organization carefully, and cautiously, developed metrics to fit its individual circumstances.

___________________

16 A crystallizing customer is a customer who, while not yet committed, is progressively developing more interest in the benefits of a given technology or system offering.

- Leadership for experimentation. Innovation depends on experimentation, and the Innovation Catalysts studied were all champions of experimentation. Not only did they operate under the guideline “let’s try a lot of things and keep what works,” but they were smart in the way they went about sequencing the studies, modeling, simulations, experiments, prototyping, and trials that formed the type of experimentation campaign described earlier in this chapter. The tools used in carrying out this work will be discussed later in this chapter.

- Fostering a culture of innovation. The important topic of culture will be addressed in its own section, below, but here it will simply be noted that the Innovation Catalysts studied consistently recognized the importance of culture and their responsibility for helping to shape it in a manner conducive to innovation.

ORGANIZATION

While there was uniform consistency across the highly innovative organizations in terms of their use of one or more Innovation Catalysts, there was greater variation in how the offices of the Innovation Catalysts were organized. Nonetheless, certain patterns and popular options were observed.

- Reporting directly to the top. As has been mentioned, one of the most important features consistently observed related to organization: All the successful Innovation Catalysts reported directly to the senior-most leadership in their part of the larger organization. This connection to leadership might even be to the very top of the organization if the Innovation Catalyst was serving in a corporate-wide role or to senior divisional leaders if the Innovation Catalyst was part of the fractal pattern operating at the division level. This direct reporting line was essential to ensure that the organization’s innovation efforts were directly supportive of the organization’s broader strategy and mission. The direct connection between senior leadership and innovation was also essential to overcome resistance to innovation and protect innovation efforts from the “antibodies” found throughout the larger normal production organization. (See earlier discussion about innovations, especially disruptive innovations, being vulnerable to attack from the normal production organization as they traveled across the valley of death.) And finally, the Innovation Catalyst had to be in a position from which he or she could speak frankly to senior leadership about what was and was not reasonable from a technological perspective, even if it occasionally meant pointing out that “the emperor wore no clothes.”

- Lean staffs. Despite the variety of work undertaken by Innovation Catalysts, another pattern observed was that they did not oversee large staffs or operate through standing committees. There are decades of research17 establishing the importance of using multidisciplinary teams to accomplish innovations. The organizations studied recognized this, but they did not seek to assign these teams within the Innovation Catalyst’s “shop.” Usually there was a relatively small group of individuals who worked with and through a large, dispersed network of contacts to form and disband ad hoc virtual teams for addressing issues as they arose and were resolved in a streamlined, throughput-oriented process.18

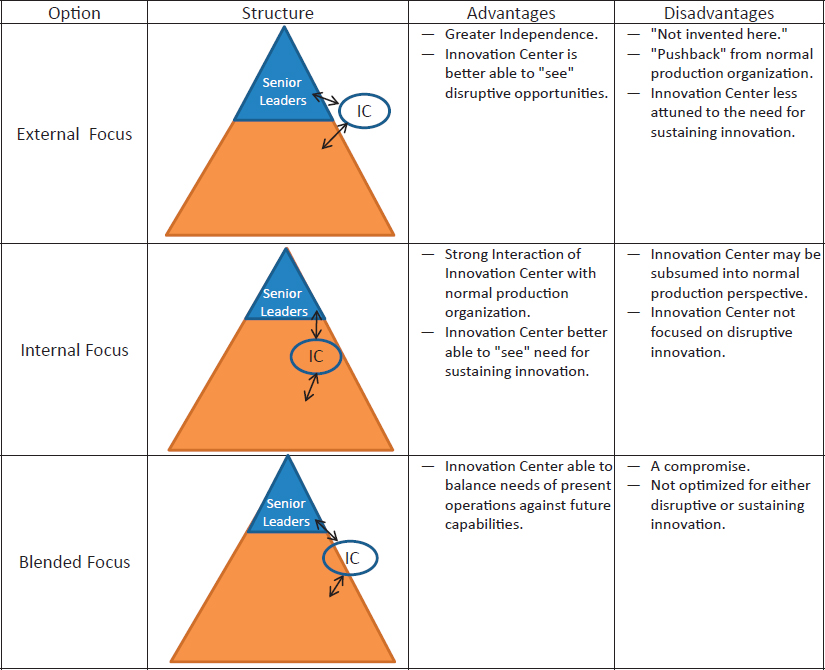

- Inside-outside balance. Innovation Catalysts live with a tension between the need to be a constructive, sustaining part of the larger organization, and the need to deliver the sort of disruptive innovations that trigger change—and even, occasionally, chaos—in the larger organization. The committee did not observe a one-size-fits-all solution for balancing these opposing goals. In fact, it observed a range of solutions, suggesting this is a “Goldilocks problem,” which requires finding the solution that is just right for a particular organization from among three options, as depicted in Figure 2-11 and described below.

The options shown at the left-hand side of Figure 2-11 are described as follows:

- External focus. The intent here is to provide considerable autonomy and freedom to explore, largely unconstrained by the standard procedures and culture of the normal production organization. This type of structure lends itself to disruptive innovation more than sustaining innovation. However, it also reduces the degree of empathy between the two organizations (normal and innovative) and the ability of the innovators to understand and align with the needs, priorities, and objectives of the normal production organization. This creates the risk of the normal production organization rejecting new ideas, a problem sometimes called the “not-invented-here syndrome.”

- Internal focus. This structure creates an innovation shop within the larger normal production organization. This organizational arrangement is more likely to produce sustaining innovations than disruptive innovations. Consequently, the pushback from the normal production organization may be less, but the risk is that this arrangement will produce fewer products, ideas, and processes that challenge the status quo.

- Blended focus. This structure connects the Innovation Catalyst and the normal production organization, while allowing for differences. It enables linkages and shared interests and awareness of needs, priorities, and objectives while still maintaining a degree of independence. It provides a connection between the innovation shop’s capabilities and the demand signal coming from the normal production organization. In other words, it seeks to blend and balance the best of the external and internal orientations, and as a practical matter, many organizations are likely to opt for a balanced solution, with the relative emphasis on external versus internal orientation dictated by particular circumstances.

___________________

17 See Box 2-1 for representative examples of decades of research on innovative organizations. These sources attest to the importance of multidisciplinary teams for innovation.

18 Throughput refers to the amount of material, data, etc., that enters and goes through something (such as a machine or system) (Merriam-Webster Dictionary, http://www.merriam-webster.com/dictionary/throughput).

Having introduced the notion of Innovation Catalysts and having considered how they operate and how they are organized, the committee turns to the tools and processes these catalysts use in managing the innovation process.

EXPERIMENTATION PROCESSES AND TOOLS

In this section, the committee looks at the most important processes and tools it observed being used by the highly innovative organizations it studied.

Sandboxes

The most innovative organizations make a concerted effort to create and foster an ecosystem that supports experimentation and innovation. Specifically, they provide funding and facilities to enable their workforce to design and execute experimental investigations ranging from prototypes to simulations to A/B testing in a secure “sandbox.” In the introduction, the committee made the point that the USAF does not have a space in which to innovate because the normal production organization has established its dominance over much of the organization. A sandbox is a safe place for small groups to test out innovative ideas and carry out much-needed experimentation campaigns. In other words, sandboxes are not tools in the conventional sense, but they provide a place within the organization where it is safe to use the tools required by experimentation campaigns.

Experimentation Campaign Tools

There is a well-understood toolbox for working on experimentation campaigns. This toolbox includes scenario planning, hypothesis testing, analysis, modeling, simulation, prototyping, and demonstrations. Many of these tools are in use every day in the USAF although, as will be seen, they are too seldom sewn together to form a complete experimentation campaign.

Experimentation can refer to the iterative trials typically undertaken to prove hypotheses, refine specific technology developments, or test and evaluate component or system performance. It is a well-used methodology in the research world and equally valuable in promoting innovation and shaping a culture of learning by doing. It can, however, be expensive, especially if each iteration requires significant resources, is destructive, or requires a long time to complete. For those reasons (and others), experimentation is not confined to the laboratory or field but can also be accomplished in the digital domain via modeling, simulation, and gaming.

For the purpose of this report, modeling, simulation, and gaming can be defined in the following manner: Modeling refers to the use of physics- or mathematics-based numerical descriptions of technologies and effects that can serve a predictive role in, for example, estimating the performance of a specific technology. Simulations refer to scenario-based models that combine multiple phenomenologies, that can examine the time evolution of systems, or that seek to examine component performance on a larger, integrated scale. Gaming refers to methods that use simulations to explore the behavior of humans in interacting with the technologies and in using the output of simulations for complex decision making.

These tools can expedite the transition of a technology from idea to use by offering a means for repeated experimentation, including in the design phase, by providing less expensive methods for assessing multiple scenarios and by exploring how humans might adopt the technology. While it may not be cost effective to develop detailed models for simple items, like a radio, it is essential for more complex systems, like an airplane.

An excellent example of a tool that supports this type of experimentation is the Simulation and Analysis Facility (SIMAF), which employs live, virtual, and constructive modeling and simulation tools to assess R&D innovations in operational contexts. More specifically, “SIMAF is a state-of-the-art U.S. Air Force facility specializing in high-fidelity, virtual (manned), distributed simulation to support acquisition and test located at Wright-Patterson Air Force Base, Ohio. The SIMAF is charged with supporting capability planning, development, and integration in support of Air Force and Department of Defense acquisition program objectives. Key areas of emphasis include capability development and integration, network-centric system-of-systems development, and electronic warfare.”19 A smart experimentation campaign is one that takes appropriate advantage of the economy and speed of these methodologies. In the highly innovative organizations that were studied, not only could these tools be seen at work, but their use was also carefully orchestrated as part of planning and executing well-conceived experimentation campaigns.

Makerspaces

Also known as hackerspaces, fab labs, Countermeasures Hands-On Program (CHOP) shops, monster garages, and some other names, makerspaces are do-it-yourself spaces where people can gather to experiment, create, invent, and learn. These facilities are often outfitted with modern production capabilities (such as 3D printers, computer numerically controlled (CNC) routers, and design software) that can be used to rapidly produce a series of low-cost prototypes in a variety of configurations. The most effective makerspaces are open and available to any interested party who wants to learn to use the equipment. They are staffed by experienced mentors (part-time volunteers or dedicated staff) who provide guidance and advice to newcomers.

A well-equipped makerspace can provide several important enablers for innovation and experimentation. In addition to design tools and software in a sandbox environment, an open makerspace creates opportunities for networking, mentoring, and collaboration. As people regularly return to the makerspace, they build relationships with other innovators, sharing their experiences and challenges. Senior leaders can serve as enablers, encouragers, and supporters of this construct, providing financial as well as moral support to the facilities and the people who use them. Leaders can also seed ideas and challenges for makerspace users to address.

___________________

19 T. Menke and M.W. March, Simulation and Analysis Facility (SIMAF), ITEA Journal 30:469-472, 2009.

There is widespread awareness of the power of makerspaces. In 2014, the President launched a Nation of Makers initiative, intended to bring this practice into wider use among entrepreneurs, students, and citizens. The U.S. Navy launched its first makerspace at a Navy facility in 2015, the Fab Lab at the Mid-Atlantic Regional Maintenance Facility in Norfolk, Virginia. According to the White House Office of Science and Technology Policy, this makerspace “provides advanced digital-fabrication training and tools so that Navy personnel can develop innovations to help with ship maintenance and overall operations. The Fab Lab has a set of rapid prototyping equipment, which includes a laser cutter, a 3D printer, a vinyl cutter, an electronics workbench, a CNC mill, and hand tools.”20

In August 2016, the Air Force launched a similar facility, the Maker Hub, as a 1-year pilot project at Wright-Patterson Air Force Base (AFB).21

Partnerships

Several of the highly innovative organizations the committee studied stressed the importance of partnerships in innovation. These ranged from close working relationships with customers and end users, to collaboration with other organizations on technology sharing and co-development of new solutions. There were also examples of important partnerships within a single, larger organization as different units teamed up to help deliver innovations important to the organization as a whole. The operational community is often an overlooked resource in the process of concept development and subsequent experimentation with new technologies. Individuals with experience gained in real-world operations can provide an unexpected source of innovation and can also identify problem areas requiring an innovative solution. The analysis of the data they bring to the table is invaluable in developing baselines against which innovations can be measured.22 Benchmarking specific case studies in the Air Force as well as others across the DoD suggests Congress can be a powerful ally or foe and therefore deserves attention in order to form an effective partnership. Small business is an excellent yet relatively untapped source of technical expertise, suggesting the Small Business Innovation Research (SBIR) program could be an important source of partners.

___________________

20 T. Kalil and S. Santoso, “Makers in the Military,” White House Blog, December 10, 2015, https://www.whitehouse.gov/blog/2015/12/10/makers-military.

21 H. Jordan, “Maker Hub Turns Researchers into Builders,” Wright-Patterson Air Force Base News Article, August 30, 2016, http://www.wpafb.af.mil/News/Article-Display/Article/930177/maker-hub-turns-researchers-into-builders.

22 U.S. Navy submarine programs have successfully implemented the Tactical Advantage for the Next Generation (TANG) Program, the Advanced-Processing-Build (APB) process, and the Engineering Measurement Process (EMP), in part to address this issue.

Regardless of the specific details, in the highly innovative organizations benchmarked by the committee, innovation was viewed as a team sport in which it was important to have strong working partnerships at all points of the compass. This underscores the importance of having people and culture that are supportive of, and engaged in, innovation.

Red Teams

The use of red teams to help ensure that a system lives up to its declared capabilities is a valuable tool that cannot be overrated and should not be ignored. One excellent example of a red team concept was created by the Air Force Materiel Command in the early 2000s. Not only did its CHOP provide a check on the capability of various newly developed systems, but it was also a unique application of a small innovative team. Members of the CHOP team were tasked to try to develop countermeasures to a system using only publicly available information about the system and inexpensive, readily available materials. As an example, the Ballistic Missile Defense Organization (BMDO) asked the CHOP team to see if they could determine and test a low-cost countermeasure to the existing Airborne Laser (ABL) system. The CHOP team quickly developed and tested a viable counter to the effects of an ABL system that could be made by an unsophisticated adversary. The CHOP team’s countermeasure solution helped to define what ABL could or could not be expected to accomplish if fielded.

Another example of the value of red teams is demonstrated every year in various war games. For instance, the Air Force Space Command has regularly used red teams in its annual games at Schriever AFB. Space Command even employs non-DoD personnel who have demonstrated innovative and improvisational skills to look for adversarial ways to counter space capabilities.

PEOPLE AND CULTURE

In its examination of highly innovative organizations, the committee was repeatedly reminded of the importance of people and culture. In addition, its own research found a long history of research by others making the same point. It observed that the most effective organizations rely on four factors to bring about the desired levels of experimentation and innovation.

- Leadership. Leadership is the single biggest factor in culture change. This theme was repeated over and over in discussions with the committee. One of leadership’s primary responsibilities is to set and maintain a vision. In both their external and internal communications, leaders make it a point to talk about the importance of experimentation and innovation and about how to perform experiments that are aligned with the organization’s larger goals and objectives. They provide regular, public recognition to the individuals and teams that are taking chances, exploring new areas, and performing experiments where the outcomes are not always predictable. Leaders at all levels within the organization create space to allow experimentation and innovation to happen, in terms of funding, facilities, and process flow (i.e., they allow some slack in the process). They deliberately and actively remove organizational, procedural, and regulatory barriers to experimentation and innovation, and provide allowances for one-off experiments that are adjacent to the core mission. In a nutshell, they seek, support, and celebrate innovators, holding them up as exemplars for the organization to imitate.

- Peer norms. The workforce itself fosters informal peer networking around the topic of experimentation. Practitioners are actively engaged in learning about, designing, and performing experiments. They share war stories and lessons with their peers, and look up to their colleagues who have a track record of experimentation and innovation. They seek out opportunities to provide and receive mentorship in this area, asking questions and sharing answers. They view experimentation as key to professional development and an opportunity to advance the organization’s goals and objectives. In short, they seek to build a culture in which the norm is a widespread effort to discover new and better ways of doing things.

- Education and training. The most effective innovative organizations ensure that personnel receive education and training in experimentation and innovation principles, practices, and techniques. They make a deliberate effort to foster a learning environment, using both in-house and external experts for instruction, training, and education. These organizations dedicate funding and time to support ongoing workforce education, and they work at making it easy for employees to self-educate by providing easy access to literature, websites, conferences, and so forth.

- Enabling systems. In many organizations, experimentation and innovation are stifled by organizational systems that make it more difficult to innovate, take risks, and the like. Accounting systems that track costs to the penny but largely ignore upside potential, human resource systems that track and promote mistake-free performance in a single discipline, rule-bound acquisition systems that make it virtually impossible to try something that may well not work: these are all examples of systems operating in a manner that limits innovation. In contrast, such systems in highly innovative organizations are aligned with the goal of innovation and are structured and operated to make experimentation and innovation easier. Detailing these systems is beyond the scope of this report, but this subject will be considered with current practices in the USAF, the subject of Chapter 3.23

___________________

23 See Appendix C for a list of speakers and organizations interviewed by the committee.