5

A New Direction for Risk Assessment and Applications of 21st Century Science

The scientific and technological advances described in Chapters 2–4 offer opportunities to improve the assessment or characterization of risk for the purpose of environmental and public-health decision-making. To facilitate appreciation of the new opportunities, this chapter first discusses the new direction envisioned for risk assessment and then highlights applications (see Box 1-3) of 21st century science that can be used to improve decision-making. It provides concrete examples of pragmatic approaches for using 21st century science along with longstanding toxicological and epidemiological approaches to improve the evidence used in decision-making. The chapter next addresses communication of the new approaches to stakeholders. It concludes with a brief discussion of the challenges that they pose and recommendations for addressing the challenges.

A NEW DIRECTION FOR RISK ASSESSMENT

The seminal 1983 National Research Council (NRC) report Risk Assessment in the Federal Government: Managing the Process (NRC 1983) defined risk assessment as “the use of the factual base to define the health effects of exposure of individuals or populations to hazardous materials and situations.” The report noted that risk assessment had four components—hazard identification, exposure assessment, dose–response assessment, and risk characterization—and that risk assessments contain some or all of them. It stated that various data streams from, for example, toxicological, clinical, epidemiological, and environmental research need to be integrated to provide a qualitative or quantitative description of risk to inform risk-based decisions. It recognized explicitly the uncertainty that arises when information on a particular substance is missing or ambiguous or when there are gaps in current scientific theory, and it called for inferential bridges or inferential guidelines to bridge such gaps to allow the assessment process to continue. Risk assessment then (as now) relied heavily on the measurement of apical responses, such as tumor incidence and developmental delays, in homogeneous animal models, often with little exposure or epidemiological information.

Although today’s risk assessments generally support the same types of decisions as those in 1983, the tools available for asking and answering relevant risk-based questions have evolved substantially. As outlined in Chapters 2–4 of the present report, modern tools in exposure assessment, toxicology, and epidemiology have increased the speed at which information can be collected and the scope of the data available for risk assessment. The focus has also shifted from observing apical responses to understanding biological processes or pathways that lead to the apical responses or to disease. The tools are designed to investigate or measure molecular changes that give insight into the biological pathways. Thus, a “factual base” is being created that is increasingly upstream of the adverse health effects that federal agencies seek to prevent or minimize.

The Tox21 report (NRC 2007) set the new direction for risk assessment with its focus on discerning toxicity pathways, which were defined as “cellular response pathways that, when sufficiently perturbed in an intact animal, are expected to result in adverse health effects.” Since publication of that report, the understanding of biological processes underlying disease has increased dramatically and has provided an opportunity to understand the biological basis of how different environmental stressors can affect the same pathway, each potentially contributing to the risk of a particular disease. To operationalize a risk-assessment approach that relies on mechanistic understanding, it will be necessary to understand the critical steps in the pathways, but beginning to apply the approach does not require knowing all pathways. For example, the results of a subchronic rat study might indicate a failure of animals to thrive, manifested as decreased weight gain and some deaths over the course of the study, but no obvious target-organ effects. Studies of the molecular effects of the chemical indicate that it is an uncoupler of oxidative phosphorylation. Epidemiological studies could then focus on biological processes that are energy-intensive, such as the function of heart muscle under stress. Exposure science could be used to measure or estimate population exposure to the stressor over space and time and could align toxicity data with environmental exposures for use in epidemiological studies. Assays to screen for the perturbation along with chemical-structure considerations might help to characterize risks posed by similarly acting chemicals, and exposure estimates could be generated for other chemicals hypothesized to exert a similar response.

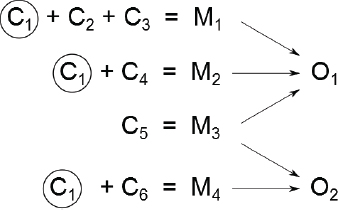

Today, there is an appreciation of the multifactorial nature of disease, that is, a recognition that a single adverse outcome might result from multiple mechanisms that can have multiple components. (See further discussion in Chapter 7.) Thus, the question shifts from whether A causes B to whether A increases the risk of B. Figure 5-1 provides an illustration of that concept, and Box 5-1 provides a concrete example. In the figure, four mechanisms (M1–M4) and various combinations of six components (C1–C6) are involved in producing two outcomes (O1 and O2). For example, three components (C1, C2, and C3) are involved in activating mechanism M1, which leads to outcome O1, and C1 is a component in several mechanisms. Here, a component is defined as a biological factor, event, or condition that, when present with other components, produces a disease or other adverse outcome; a mechanism is considered to comprise one or more components that cause disease or other adverse outcomes when they co-occur; and pathways are considered to be components of mechanisms. The model can incorporate societal factors that drive exposure or susceptibility, such as poverty, and that might ultimately lead to cellular responses that activate various mechanisms. For example, in mechanism M1, societal factors could perturb component C1, the same one that the chemical under consideration perturbs. Alternatively, societal factors could perturb components C2 and C3 of mechanism M1, which in combination with the chemical’s direct perturbation of component C1 could fully activate the mechanism. The ability to identify the contribution of various components of a given mechanism and to understand the significance of changes in single components of a mechanism is critical for risk-based decision-making based on 21st century science.
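The component–mechanism model in Figure 5-1 can be sketched in a few lines of code. The sketch is illustrative only: it assumes, as the text describes, that a mechanism is fully activated only when all of its components are perturbed, and it encodes mechanism M1 as {C1, C2, C3} leading to O1. The memberships of M2–M4 are hypothetical placeholders, chosen only so that C1 appears in several mechanisms; they are not read from the figure.

```python
from typing import Dict, FrozenSet, Set, Tuple

# Each mechanism maps to (required components, outcome it leads to).
# M1 follows the text; M2-M4 are hypothetical placeholders, not taken from Figure 5-1.
MECHANISMS: Dict[str, Tuple[FrozenSet[str], str]] = {
    "M1": (frozenset({"C1", "C2", "C3"}), "O1"),
    "M2": (frozenset({"C1", "C4"}), "O1"),  # hypothetical
    "M3": (frozenset({"C1", "C5"}), "O2"),  # hypothetical
    "M4": (frozenset({"C5", "C6"}), "O2"),  # hypothetical
}


def activated(perturbed: Set[str]) -> Dict[str, str]:
    """Return {mechanism: outcome} for every mechanism whose components are all perturbed."""
    return {
        name: outcome
        for name, (components, outcome) in MECHANISMS.items()
        if components <= perturbed  # subset test: every required component is present
    }


def missing_components(mechanism: str, perturbed: Set[str]) -> Set[str]:
    """Components that still need to be perturbed for the mechanism to be fully activated."""
    components, _ = MECHANISMS[mechanism]
    return set(components - perturbed)


# The chemical alone perturbs C1; societal factors are assumed to perturb C2 and C3.
chemical = {"C1"}
societal = {"C2", "C3"}

print(activated(chemical))              # {}
print(missing_components("M1", chemical))
print(activated(chemical | societal))   # {'M1': 'O1'}
```

Neither the chemical nor the societal factors activate M1 on their own, but together they do, which is why the question becomes whether the chemical increases risk rather than whether it alone causes the outcome.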

In the challenging context of multifactorial diseases, the 21st century tools allow implementation of the new direction for risk assessment that acknowledges the complexity of the determinants of risk. They can enable the identification of multiple disease contributors and advance understanding of how identified mechanisms, pathways, and components contribute to disease. They can be used to probe specific chemicals for their potential to perturb pathways or activate mechanisms and thereby increase risk. And the new tools provide critical biological information on how a chemical might add to a disease process and how individuals might differ in response; thus, they can provide insight into the shape of the population dose–response curve and into individual susceptibility, helping to move toward the risk characterizations envisioned in the report Science and Decisions: Advancing Risk Assessment (NRC 2009). As noted by the NRC (2007, 2009), people differ in predisposing factors and co-exposures, so the extent to which any particular chemical perturbs a pathway and contributes to disease varies in the population. A challenge for the dose–response assessment is to characterize the extent to which the whole population and sensitive groups might be affected or, at a minimum, whether the perturbation exceeds some de minimis level.
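One common way to represent this population variability, assumed here rather than taken from this report, is to treat individual response thresholds as lognormally distributed, so that the fraction of the population responding at a given dose is a normal cumulative distribution in log dose. The sketch below uses hypothetical parameters (a median threshold and a log-scale standard deviation) to show how a wider spread, for example one reflecting susceptible groups, raises the fraction affected at low doses.

```python
import math


def fraction_responding(dose: float, median_threshold: float, sigma_log: float) -> float:
    """Fraction of a population whose threshold is at or below `dose`.

    Assumes (hypothetically) that individual thresholds are lognormally distributed.
    median_threshold: dose at which half the population responds (e.g., mg/kg-day)
    sigma_log: standard deviation of ln(threshold); larger values mean more variability
    """
    if dose <= 0:
        return 0.0
    z = (math.log(dose) - math.log(median_threshold)) / sigma_log
    # Standard normal cumulative distribution written with the error function.
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


# Hypothetical comparison: same median threshold, narrow versus wide variability.
for sigma in (0.5, 1.5):
    print(sigma, fraction_responding(1.0, median_threshold=10.0, sigma_log=sigma))
```

Under this assumption, a de minimis criterion could be expressed as the dose at which the fraction responding falls below some chosen small value; choosing that value is a policy decision, not something the calculation supplies.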

Although the discussion above focuses primarily on the toxicological and epidemiological aspects of the new direction, exposure science will play a critical role. The exposure data arising from the technological advances in exposure science will provide much-needed and increasingly rich information. For example, comprehensive exposure assessments that use targeted and nontargeted analyses of environmental and biomonitoring samples or that use computational exposure methods will help to identify chemical mixtures to which people are exposed. Such comprehensive assessments will support evaluating risks of groups of similarly acting chemicals for single end points or investigating chemical exposures that might activate multiple mechanisms that contribute to a specific disease. Advancing our understanding of pharmacokinetics will further the ability to translate exposure–response relationships observed in in vitro systems to humans, characterize susceptible populations, and ultimately reduce uncertainty in risk assessment. Personalized exposure assessment will provide critical information on individual variability in exposure to complement pharmacodynamic variability assessed in pathway-based biological test systems. Ultimately, these and other advances in exposure science in combination with advances in toxicology and epidemiology will provide an even stronger foundation for the new direction for risk assessment.

APPLICATIONS

Full implementation of the new direction for risk assessment or the visions described in the NRC report Science and Decisions and the Tox21 and ES21 reports (NRC 2007, 2009, 2012) is not yet possible, but the data being generated today can be used to improve decision-making in several areas. As noted in Chapter 1 (see Box 1-3), priority-setting, chemical assessment, site-specific assessments, and assessments of new chemistries are risk-related tasks that can all benefit from incorporating 21st century science. The methods and data required to support the various tasks will probably differ, and confidence in them will depend to some extent on the context. For example, scientists have a great deal of experience in using laboratory data to support biological plausibility in epidemiology studies, and the new data can be applied relatively easily in that context. In contrast, methods used to support definitive chemical assessments will likely need extensive evaluation, and risk assessors will need to be trained in how to use them. In the following sections, the committee describes how the new scientific approaches can be used in specific applications.

Priority-Setting

Tens of thousands of chemicals are used in commerce in the United States (Muir and Howard 2006; Egeghy et al. 2012) in various items—including building materials, consumer products, and craft supplies—and can cause exposure through product use and environmental releases associated with manufacture and disposal. Although the number of chemicals in the environment is large, the number of chemicals for which toxicity, exposure, and epidemiology datasets are complete remains small. Given the finite resources of government agencies and other stakeholders for investigating the risks associated with the wide array of chemicals present in people, places, and goods, mechanisms for setting priorities for chemical evaluation and determining appropriate risk-management strategies—reduction of use, replacement, or removal—are essential.

Some categories of chemicals that are intended to have biological activity, such as drugs and pesticides, are routinely subjected to a suite of toxicity tests as required by law. However, extensive toxicity testing of most chemicals is not required, and the need for testing is determined by priority-setting schemes. For example, the National Toxicology Program (NTP 2016) sets testing priorities on the basis of the extent of human exposure, suspicion of toxicity, or the need for information to fill data gaps in an assessment, and the testing requirements of the European Union’s Registration, Evaluation, Authorization and Restriction of Chemicals (REACH) regulation are based predominantly on production volume (chemical quantity produced per annum) and the potential for widespread exposure or human use, such as would occur with a consumer product (NRC 2006; Rudén and Hansson 2010). Considerations of potential toxicity have generally been limited to alerts based on the presence of specific chemical features, such as a reactive epoxide moiety, or similarity to known potent toxicants. Using only those considerations to set priorities is clearly limited; additional hazard information that covers more biological space and exposure information that provides more detailed estimates of exposure from multiple sources and routes would improve the priority-setting process.

As Tox21 tools—such as high-throughput screening, toxicogenomics,¹ and cheminformatics—have become available, priority-setting has been seen as a principal initial application. High-throughput platforms, such as the US Environmental Protection Agency (EPA) ToxCast program described in Chapter 1, have produced data on thousands of chemicals. Toxicogenomic analyses have the potential to increase the biological coverage of in vitro cell-based assays and might be a useful source of data for priority-setting. For example, efforts are under way to assess transcriptomic responses in a suite of human cells by using positive-control chemicals, with the ultimate aim of determining whether biological pathways can be identified on the basis of select patterns of gene expression (Lamb et al. 2006) or whole-genome transcriptomics (de Abrew et al. 2016). Mismatches between in vitro and in vivo results might occur for several reasons, such as a lack of metabolism in the in vitro assays. As discussed in Chapter 3, lack of or low-level metabolic activation of an agent is widely recognized as a potential problem in in vitro studies, and development of methods to introduce metabolic systems into assays that can be run in high-throughput format is under active research.

Cheminformatic approaches can also be used to set priorities for chemical testing by evaluating series of chemicals for the presence of chemical features that are associated with toxicity—for example, through the use of such proprietary tools as DEREK²—or by using decision trees that evaluate whether there are precedents in the literature for specific chemical features to be associated with a particular toxicity outcome, such as developmental toxicity (Wu et al. 2013). Those methods have been automated and allow for rapid identification of chemicals that have specific chemical features that have been identified as potentially problematic, such as reactive functional groups, or that have a reasonably high similarity to chemicals that are potent toxicants, such as steroid-like substances (Wu et al. 2013).

___________________

1 Toxicogenomics is transcriptomic analysis of responses to chemical exposure.

Several new high-throughput methods—for example, ExpoCast (Wambaugh et al. 2013) or ExpoDat (Shin et al. 2015)—have been developed to provide quantitative exposure estimates for exposure-based and risk-based priority-setting. The new technologies can estimate exposures more explicitly than older, simpler models by taking into account chemical properties, chemical production amounts, chemical use and human behavior (likelihood of exposure), potential exposure routes, and possible chemical intake rates. Information produced via high-throughput exposure calculations could be used to refine priority-setting schemes.
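High-throughput exposure tools such as ExpoCast combine many inputs, and their actual models are more elaborate than anything shown here. As a much simpler illustration of how use, behavior, and intake rates combine into a dose estimate, the sketch below uses the generic average-daily-dose form found in many exposure assessments: concentration × intake rate × exposure frequency × exposure duration, divided by body weight × averaging time. All parameter values are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ExposureScenario:
    concentration_mg_per_l: float  # chemical concentration in the medium (e.g., drinking water)
    intake_l_per_day: float        # contact or intake rate
    days_per_year: float           # exposure frequency
    years: float                   # exposure duration
    body_weight_kg: float
    averaging_days: float          # averaging time, in days


def average_daily_dose(s: ExposureScenario) -> float:
    """Average daily dose in mg/kg-day: C * IR * EF * ED / (BW * AT)."""
    numerator = s.concentration_mg_per_l * s.intake_l_per_day * s.days_per_year * s.years
    return numerator / (s.body_weight_kg * s.averaging_days)


# Hypothetical adult drinking-water scenario, averaged over the 30-year exposure duration.
adult = ExposureScenario(0.002, 2.0, 350, 30, 70.0, 30 * 365)
print(average_daily_dose(adult))
```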

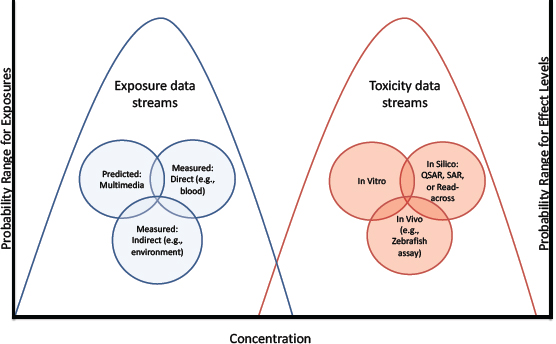

Depending on the context, hazard and exposure information could be used in various ways for priority-setting. For example, screening based only on hazard could be particularly useful in situations, such as those involving changes in product composition, in which exposure information is unknown or evolving and there is an assumption that the product would be used in the same way with roughly the same exposure. Methods have been proposed for risk-based priority-setting that use a combination of high-throughput exposure and hazard information in which the highest estimated exposure and the lowest-measured-effect concentration are identified, and margins of exposure (the ratio of the toxicity metric to the exposure metric, which appears as the distance between them when both are plotted on a logarithmic scale) are calculated (see Figure 5-2). Refinement of the margins of exposure by using reverse pharmacokinetic techniques (reverse dosimetry), which convert in vitro effect concentrations into equivalent external doses that can be compared directly with exposure estimates, has also been proposed (Wetmore et al. 2013). Confidence in the approach should increase with broader biological coverage of the in vitro assays, innovations that add metabolic activation to the assays, methods that take into account toxicity that is associated with a particular route of exposure (such as inhalation), and improved accuracy of computational exposure models to predict human and ecosystem exposures.
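The margin-of-exposure calculation can be sketched as follows. The sketch assumes the reverse-dosimetry scaling associated with high-throughput toxicokinetics: if Css is the steady-state plasma concentration (µM) predicted for a constant oral dose of 1 mg/kg-day and kinetics are linear, an in vitro active concentration (µM) corresponds to an oral equivalent dose of AC50 / Css in mg/kg-day. The chemical names, AC50 values, Css values, and exposure estimates are hypothetical.

```python
from typing import Dict, List, Tuple


def oral_equivalent_dose(active_conc_um: float, css_um_per_mg_kg_day: float) -> float:
    """mg/kg-day needed to reach `active_conc_um` in plasma, assuming linear kinetics."""
    return active_conc_um / css_um_per_mg_kg_day


def margin_of_exposure(
    ac50s_um: List[float], css: float, exposure_estimates_mg_kg_day: List[float]
) -> float:
    """Ratio of the lowest bioactive oral equivalent dose to the highest estimated exposure."""
    lowest_effect_dose = oral_equivalent_dose(min(ac50s_um), css)
    highest_exposure = max(exposure_estimates_mg_kg_day)
    return lowest_effect_dose / highest_exposure


# Hypothetical inputs: (AC50s across assays in uM, Css at 1 mg/kg-day in uM, exposures in mg/kg-day).
chemicals: Dict[str, Tuple[List[float], float, List[float]]] = {
    "chem_A": ([3.0, 12.0, 40.0], 1.5, [1e-4, 5e-4]),
    "chem_B": ([0.5, 2.0], 10.0, [1e-3, 2e-3]),
    "chem_C": ([25.0], 0.2, [1e-6]),
}

moes = {name: margin_of_exposure(*args) for name, args in chemicals.items()}
# Smallest margins first: these chemicals would receive higher priority for follow-up.
for name in sorted(moes, key=moes.get):
    print(f"{name}: MOE = {moes[name]:.3g}")
```

Using the minimum AC50 and the maximum exposure mirrors the conservative pairing the text describes (lowest effect concentration against highest estimated exposure).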

Chemical Assessment

Chemical assessments encompass a broad array of analyses, from Integrated Risk Information System assessments that include hazard and dose–response assessments to ones that also incorporate exposure assessments to produce risk characterizations. Moreover, chemical assessments performed by the federal agencies cover chemicals on which there are few data to use in decision-making (data-poor chemicals) and chemicals on which there is a substantial database for decision-making (data-rich chemicals). The following sections address how 21st century data could be used in the contrasting situations.

Assessments of Data-Poor Chemicals

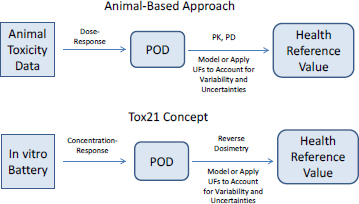

Assessments of some data-poor chemicals might begin by evaluating outcomes whose mechanisms are known. That is, the mechanisms of a few toxicity outcomes, such as genotoxicity and skin sensitization, are sufficiently well known that mechanistically based in vitro assays (for example, the Ames assay and the direct peptide reactivity assay), for which Organisation for Economic Co-operation and Development (OECD) test guidelines already exist, can serve as the starting point for hazard assessment. For such well-defined outcomes for which in vitro assays are sufficient for characterization, the process of hazard assessment is relatively straightforward. Rather than using animal data as the starting point for establishing hazard, one replaces the animal data with data from the alternative method. In most cases, conclusions are qualitative and binary—for example, the chemical is or is not a genotoxicant. However, efforts are under way to provide quantitative ways of using in vitro test information to describe the dose–response characteristics of chemicals and ultimately to calculate a health reference value, such as a reference dose or a reference concentration (see Figure 5-3). In both the approach that uses animal data and the approach that relies on in vitro results, uncertainty factors (UFs) are typically included to address interindividual differences in human response and the uncertainty associated with extrapolating from a test system to people. Alternatively, a model can be used to extrapolate to low doses. Box 5-2 provides further discussion on uncertainty, variability, UFs, and extrapolation.
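The arithmetic of dividing a point of departure by uncertainty factors is simple; the sketch below applies it to a point of departure that could come from either animal or in vitro data. The tenfold factors used here are common defaults for extrapolating from a test system to people and for human variability, but the specific factors and the point-of-departure value are hypothetical, and an actual assessment would justify each factor.

```python
import math
from typing import Mapping


def reference_value(point_of_departure: float, uncertainty_factors: Mapping[str, float]) -> float:
    """Divide a point of departure (e.g., mg/kg-day) by the product of the uncertainty factors."""
    if point_of_departure <= 0:
        raise ValueError("point of departure must be positive")
    composite_uf = math.prod(uncertainty_factors.values())  # product of all factors
    return point_of_departure / composite_uf


# Hypothetical values: a point of departure of 5 mg/kg-day with two tenfold factors.
ufs = {
    "test system to human": 10.0,
    "human variability": 10.0,
}
print(reference_value(5.0, ufs))  # 0.05 (mg/kg-day)
```

Keeping the factors in a labeled mapping makes each one visible and individually adjustable, which matters because the appropriate factors for an in vitro-based point of departure are still being worked out.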

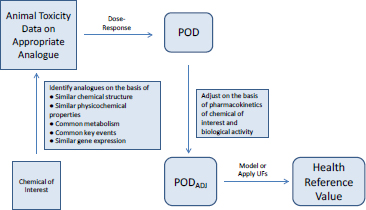

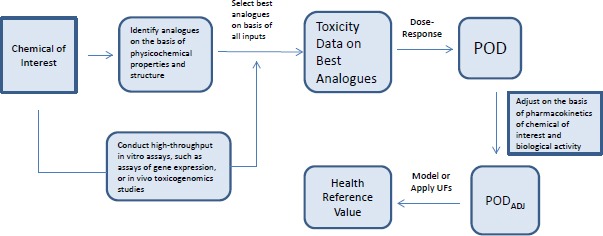

Most toxicity outcomes involve multiple pathways by which chemicals can exert an adverse influence, and not all pathways have been determined for many outcomes, such as organ toxicity and developmental toxicity. For those outcomes, simple replacement of animal-derived information with in vitro information might not be possible. Another possible approach to evaluating chemicals is to use toxicity data on previously well-tested chemicals that are structurally similar to the chemical of interest (see Figure 5-4). Analogues are selected on the basis of similarities in chemical structure, physical chemistry, and biological activity in in vitro assays. Comparisons of analogues with the chemical of interest are based on the premise that the chemical of interest and its analogues are metabolized to common or biologically similar metabolites or that they are sufficiently similar in structure to have the same or similar biological activity (for example, they activate receptors similarly). The similarity supports the inference that the chemical will induce the same type of hazard as the analogues although not necessarily at similar doses.
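Structural similarity in analogue searches is often scored with the Tanimoto (Jaccard) coefficient on sets of structural features, such as the bits set in a molecular fingerprint. The sketch below uses hand-written feature labels in place of a real fingerprint and a hypothetical 0.5 cutoff; in practice the features would come from a cheminformatics toolkit, and, as the text notes, structural similarity is only one criterion alongside physical chemistry and in vitro biological activity.

```python
from typing import Dict, FrozenSet, List, Tuple


def tanimoto(a: FrozenSet[str], b: FrozenSet[str]) -> float:
    """Shared features divided by all distinct features (1.0 means identical feature sets)."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def rank_analogues(
    target: FrozenSet[str], candidates: Dict[str, FrozenSet[str]], cutoff: float = 0.5
) -> List[Tuple[str, float]]:
    """Candidates scoring at or above `cutoff`, most similar first."""
    scored = [(name, tanimoto(target, feats)) for name, feats in candidates.items()]
    return sorted((s for s in scored if s[1] >= cutoff), key=lambda s: s[1], reverse=True)


# Hypothetical feature sets standing in for fingerprints.
target = frozenset({"phenol", "para_alkyl", "branched_C9", "aromatic_ring"})
candidates = {
    "analogue_1": frozenset({"phenol", "para_alkyl", "branched_C8", "aromatic_ring"}),
    "analogue_2": frozenset({"phenol", "para_alkyl", "branched_C9", "aromatic_ring"}),
    "analogue_3": frozenset({"aromatic_ring", "carboxylic_acid"}),
}
print(rank_analogues(target, candidates))  # [('analogue_2', 1.0), ('analogue_1', 0.6)]
```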

The method described in Figure 5-4 depends on having a comprehensive database of toxicity data that is searchable by curated and annotated chemical structure (such as ACToR or DSSTox) and a consistent decision process for selecting suitable analogues. Wu et al. (2010) published a set of rules for identifying analogues and categorizing them as suitable, suitable with interpretation, suitable with precondition (such as metabolism), or unsuitable for analogue-based assessment. The rules consider physical chemistry, potential chemical reactivity, and potential metabolism of the chemical.

In many cases, a close similarity based on atom-by-atom matching is sufficient to classify two or more chemicals as suitable analogues for each other. However, atom-by-atom matching is not sufficient in every case. Small differences can sometimes alter the chemical activity in such a way that one metabolic pathway is favored over another or the chemical reactivity with various biological molecules changes. In practice, analogue-based assessment can be greatly facilitated by combining expert-rule–based considerations with molecular similarity. The approach was tested in a blinded case study and found to be robust (Blackburn et al. 2011). Given that the total dataset for traditional animal toxicity data is large (millions of entries in ACToR and probably tens of thousands of entries for each toxicity outcome), the analogue-based approach could have great utility. Similar approaches are being developed and used for read-across assessment of chemicals submitted under the European REACH regulation.

A structure–activity assessment can be thought of as a testable hypothesis that can be addressed with a variety of methods, such as those described in Chapter 3. Comparable metabolism can be assessed by using established methods for testing xenobiotic metabolism in vivo and in vitro with the recognition that metabolism can be complex for even simple molecules, such as benzene (McHale et al. 2012). Testing for similar biological activity can be based on what is understood about the primary pathways by which the chemicals in the class exert toxicity. If the mechanisms are not known, it is possible to survey some (for example, using ToxCast assays) or all (for example, by using global gene-expression analysis) of the universe of possible pathways that are affected by the chemical to determine the extent to which the biological activities of the chemical of interest and its analogues are comparable. Toxicogenomic analyses have been found to be useful for identifying a mechanism in both in vivo and in vitro models (see, for example, Daston and Naciff 2010). With lower-cost methods now available, large datasets of gene-expression responses for small molecules have become available (for example, the National Institutes of Health’s Library of Network-based Cellular Signatures, LINCS), and these data can support determination of the extent to which chemicals of similar structure are sufficiently comparable for read-across (Liu et al. 2015).

Combining cheminformatic and rapid laboratory-based approaches makes it possible to arrive at a surrogate point of departure for risk assessment that uses analogue data. The surrogate can then be adjusted for pharmacokinetic differences and bioactivity (see Figure 5-5). The committee explored that approach in a case study on alkylphenols (see Box 5-3 and Appendix B).
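The adjustment of a surrogate point of departure can be sketched as multiplying the analogue's point of departure by two ratios. This form is an assumption for illustration, not the procedure used in Figure 5-5 or Appendix B: it assumes that internal dose scales linearly with external dose (so a pharmacokinetic ratio can be applied) and that relative in vitro potency carries over to relative in vivo potency. All numbers are hypothetical.

```python
def adjusted_surrogate_pod(
    analogue_pod: float,
    css_analogue: float,
    css_target: float,
    ac50_analogue: float,
    ac50_target: float,
) -> float:
    """Scale an analogue's point of departure to the target chemical (illustrative assumption).

    css_*: steady-state plasma concentration per unit oral dose (same units for both chemicals)
    ac50_*: in vitro potency in a shared assay (lower means more potent)
    """
    # A target that reaches higher plasma levels per unit dose needs a lower external dose.
    pk_ratio = css_analogue / css_target
    # A target that is more potent in vitro is assumed to act at proportionally lower doses.
    potency_ratio = ac50_target / ac50_analogue
    return analogue_pod * pk_ratio * potency_ratio


# Hypothetical: analogue POD of 10 mg/kg-day; the target reaches twice the plasma level per
# unit dose but is half as potent in vitro, so the two adjustments cancel.
print(adjusted_surrogate_pod(10.0, css_analogue=1.0, css_target=2.0,
                             ac50_analogue=5.0, ac50_target=10.0))
```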

Eventually, it might be possible to conduct similar assessments of chemicals without adequate analogue data. Cheminformatic and laboratory methods could be used to generate hypotheses about the possible activities of a new chemical, and the hypotheses could be tested virtually in systems-biology models and verified in higher-order in vitro models. As discussed in Chapter 3, computational models, such as the cell-agent-based model used in the EPA virtual-embryo project, have done a reasonable job of predicting the effects of potent antiangiogenic agents on blood vessel development by using high-throughput screening data and information on key genes in the angiogenic pathway as starting points for model development (Kleinstreuer et al. 2013). The model can be run thousands of times—the virtual equivalent of thousands of experiments—and adjusted on the basis of the simulation results. The outcome of the model was evaluated in in vitro vascular-outgrowth assays and in zebrafish (Tal et al. 2014) and was found to be a good predictor of outcome in the assays. Such an approach clearly depends on a deep understanding of the biology underlying a particular process and how it can be perturbed and on sophisticated laboratory models that will support evaluation of the virtual model. This approach will require some knowledge of the key events that connect the initial interaction of an exogenous chemical with its molecular target and the ultimate adverse outcome.

Regardless of whether the risk assessment is conducted with the read-across approaches depicted in Figures 5-4 and 5-5 or the pathway approach just described, there will be circumstances in which the uncertainty in the assessment needs to be reduced to support decision-making. That situation can arise because the margin of exposure is too small, the possible mechanisms have still not been adequately defined, or the quantitative relationship between effects measured at the molecular or cellular level and the adverse outcome has not been adequately defined. In such cases, one might use increasingly complex models—for example, zebrafish or targeted rodent testing—to assess biological activity and the outcomes of a chemical exposure.

Assessment of Data-Rich Chemicals

Some chemicals are the subjects of substantive databases that leave no question regarding the causal relationship between exposure and effect; that is, hazard identification is not an issue for decision-making. However, there might still be unanswered questions that are relevant to regulatory decision-making, such as questions concerning the effects of exposure at low doses, susceptible populations, possible mechanisms for the observed effects, and new outcomes associated with exposure. The advances described in Chapters 2–4 have the potential to reduce uncertainty around such key issues. The committee explores how 21st century science can be used to address various questions in a case study that uses air pollution as an example (see Box 5-4 and Appendix B).

Cumulative Risk Assessment

Cumulative risk assessment could benefit from the mechanistic data that are being generated. It is well understood that everyone is exposed to multiple chemicals simultaneously in the environment, for example, through the air we breathe, the foods we eat, and the products we use. However, risk assessment is still conducted largely on individual chemicals even though chemicals that have a similar mechanism for an outcome or that are associated with similar outcomes are considered as posing a cumulative risk when they are encountered together (EPA 2000; NRC 2008). Cumulative risk assessment of carcinogens is somewhat common in agencies, but cumulative risk assessment of noncarcinogens is less common. One example of a cumulative assessment is that of the organophosphate pesticides, whose common mechanism is known to be acetylcholinesterase inhibition.

Testing systems that evaluate more fundamental levels of biological organization (effects at the cellular or molecular level) might be useful in identifying agents that act via a common mechanism and in facilitating the risk assessment of mixtures. Identifying complete pathways for chemicals (from molecular initiating events to individual or population-level disease) could also be useful in identifying chemicals that result in the same adverse health outcome through different molecular pathways. High-throughput screening systems and global gene-expression analysis are examples of technologies that could provide the required information. The techniques applied in support of cumulative risk assessment will also support multifactorial risk evaluations discussed further in Chapter 7.
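One established way to combine chemicals judged to act through a common mechanism is the hazard index: the sum, over the chemicals in a group, of each chemical's exposure divided by its reference value. The sketch below groups chemicals by a shared mechanism label, such as might be assigned from high-throughput or gene-expression data, and computes a hazard index per group. The chemical names, mechanism labels, exposures, and reference doses are hypothetical, and dose additivity within a group is an assumption.

```python
from collections import defaultdict
from typing import Dict, List, NamedTuple


class ChemicalExposure(NamedTuple):
    name: str
    mechanism: str                   # mechanism label, e.g., inferred from screening data
    exposure_mg_kg_day: float
    reference_dose_mg_kg_day: float


def hazard_index_by_mechanism(chemicals: List[ChemicalExposure]) -> Dict[str, float]:
    """Sum hazard quotients (exposure / reference dose) within each mechanism group."""
    totals: Dict[str, float] = defaultdict(float)
    for c in chemicals:
        totals[c.mechanism] += c.exposure_mg_kg_day / c.reference_dose_mg_kg_day
    return dict(totals)


chemicals = [
    ChemicalExposure("chem_A", "AChE_inhibition", 0.0003, 0.001),
    ChemicalExposure("chem_B", "AChE_inhibition", 0.0004, 0.001),
    ChemicalExposure("chem_C", "AChE_inhibition", 0.0002, 0.0005),
    ChemicalExposure("chem_D", "ER_agonism", 0.001, 0.05),
]

# Every individual quotient is below 1; only the group total for AChE inhibition exceeds 1.
for mechanism, index in hazard_index_by_mechanism(chemicals).items():
    flag = "exceeds 1" if index > 1 else "below 1"
    print(f"{mechanism}: HI = {index:.2f} ({flag})")
```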

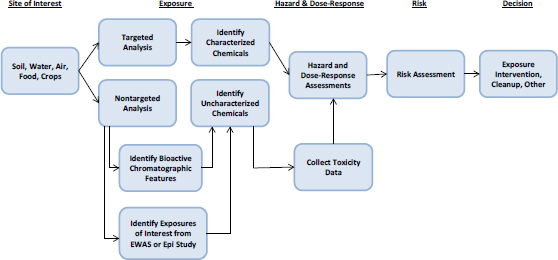

Site-Specific Assessments

Understanding the risks associated with a chemical spill or the extent to which a hazardous-waste site needs to be remediated depends on understanding exposures to various chemicals and their toxicity. The assessment problem contains three elements: identifying and quantifying chemicals present at the site, characterizing their toxicity, and characterizing the toxicity of the chemical mixture. Thus, one might consider this situation to be an exposure-initiated assessment in which exposure information is the starting point, as illustrated in Figure 5-6. In this context, exposure information means information on newly identified chemicals and more complete characterization of exposure to chemicals previously identified at a site. Box 5-5 provides two specific examples of exposure-initiated assessments.

The advances described in Chapters 2–4 can address each element involved in site-specific assessments. Targeted analytical-chemistry approaches, particularly ones that use gas or high-performance liquid chromatography coupled with mass spectrometry, can identify and quantify chemicals for which standards are available. Nontargeted analyses can help to assign provisional identities to previously unidentified chemicals. The committee explored the application of advances in exposure science to a case study of a large historically contaminated site (see Box 5-6 and Appendix C).

As for toxicity characterization, assessments of waste sites and chemical releases often involve chemicals on which few toxicity data are available. In the case of waste sites, EPA assigns provisional reference values for a number of chemicals by using the Provisional Peer Reviewed Toxicity Value (PPRTV) process. However, because of the amount or quality of the data available, the PPRTV values tend to entail large uncertainties. Analogue-based methods coupled with high-throughput or high-content screening methods have the potential to improve the PPRTV process. Identification of well-tested appropriate analogues to an untested chemical at clean-up sites can provide more certain estimates of the hazard and potency of the chemical, and the appropriateness of the analogues can be confirmed with high-throughput screening or high-content data that show comparability of biological targets or other end points and relative potency. Although the high-throughput or high-content models still require validation, the read-across approach could be applied immediately.

In the case of chemical releases, few data might be available on various chemicals—a situation similar to waste sites—but decisions might need to be made quickly. The committee uses the scenario of a chemical release as a case study to examine how Tox21 approaches can be used to provide data on a data-poor chemical quickly (see Box 5-6 and Appendix C).

As for understanding the toxicity of chemical mixtures, high-throughput screening methods provide information on mechanisms that can be useful in determining whether any mixture components might act via a common mechanism, affect the same organ, or cause the same outcome and thus should be considered as posing a cumulative risk (EPA 2000; NRC 2008). High-throughput methods can also be used to assess the toxicity of mixtures that are present at specific sites empirically rather than assessing individual chemicals. Such real-time generation of hazard data was conducted on the dispersants that were used to treat the crude oil released during the Deepwater Horizon disaster (Judson et al. 2010) to determine whether some had greater endocrine activity or cytotoxicity than others. Endocrine assays were the focus because of concern about nonylphenol ethoxylates, which degrade to nonylphenol, a compound known to be estrogenic.

It is possible to use high-throughput assay data as the basis of a biological read-across for complex mixtures. For example, an uncharacterized mixture could be evaluated in high-throughput or high-content testing, and the results could be compared with existing results for individual chemicals or well-characterized mixtures. That process is similar to the connectivity mapping approach (Lamb et al. 2006). In that approach, the biological activity of a single chemical entity is compared with the fingerprints of other chemicals in a large dataset, on the assumption that chemicals with similar biological activity share a mechanism. The same approach can be applied to uncharacterized mixtures. One would still not know whether the biological activity was attributable to a single chemical entity or to multiple chemicals. However, that would not matter if the only concern were to characterize the risk associated with that particular mixture. The committee notes that a mixture might exhibit more than one biological activity, particularly at high concentrations, but testing multiple concentrations of the mixture should provide a better understanding of its biological activity. The committee explores a biological read-across approach for complex mixtures further in a case study that considers the hypothetical site imagined in the first case study (see Box 5-6 and Appendix C).
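The comparison step of a biological read-across for a mixture can be sketched as ranking reference profiles by their similarity to the mixture's bioactivity profile. This is a simplified stand-in, not the connectivity map method itself, which scores gene-expression signatures with a rank-based enrichment statistic. Here each profile is assumed to be a vector of normalized activity scores over the same ordered list of assays, and Pearson correlation is used as the similarity measure. The match is repeated at each tested concentration because a mixture may show different activities at different concentrations. All profile values and reference names are hypothetical.

```python
import math


def pearson(x, y):
    """Pearson correlation between two equal-length activity profiles."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("profiles must have the same length (>= 2)")
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        return 0.0  # a flat profile carries no pattern to match
    return sxy / math.sqrt(sxx * syy)


def best_match_by_concentration(mixture_profiles, references):
    """For each tested concentration, return the most similar reference profile."""
    matches = {}
    for conc, profile in mixture_profiles.items():
        scores = {name: pearson(profile, ref) for name, ref in references.items()}
        best = max(scores, key=scores.get)
        matches[conc] = (best, scores[best])
    return matches


# Hypothetical activity scores (0-1) for four assays, in a fixed order:
# ER binding, cytotoxicity, ER transactivation, mitochondrial toxicity.
references = {
    "estrogen_like": [1.0, 0.0, 0.9, 0.1],
    "cytotoxic": [0.1, 0.9, 0.0, 0.8],
}
mixture_profiles = {
    "low": [0.9, 0.1, 0.8, 0.0],   # mixture tested at a low concentration
    "high": [0.3, 0.9, 0.2, 0.9],  # the same mixture at a high concentration
}
print(best_match_by_concentration(mixture_profiles, references))
```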

Finally, new methods in exposure science, -omics technologies, and epidemiology provide another approach to generate hypotheses about the role of chemicals and chemical mixtures in specific disease states and to gather information about potential risks associated with specific sites. Information generated on chemical mechanisms, particularly of site-specific chemical mixtures, might be useful for identifying highly specific biomarkers of effect that can be measured in people who work or reside near a site of concern. Measurement of biomarkers has advantages over collection of data on disease outcome because many diseases of concern, such as cancer, are manifested only after chronic exposure or after a long latency period. Such measurement could also be of value in determining the effectiveness of remediation efforts at the site if biomarkers can be measured before and after mitigation. Real-time individualized measurements of exposure of people near a site are also possible and could provide richer data about peak exposures or exposure durations.
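If a biomarker of effect is measured in the same people before and after remediation, a paired comparison is one simple way to summarize the change. The sketch below is a hypothetical illustration that uses only Python's standard library. It computes the mean within-person change and a paired t statistic. A p-value or confidence interval would require the t distribution (available, for example, in SciPy) and is omitted. The biomarker values are invented.

```python
import math
import statistics


def paired_change(before, after):
    """Mean within-person change (after - before), paired t statistic, and df."""
    if len(before) != len(after) or len(before) < 2:
        raise ValueError("need at least two paired measurements")
    diffs = [a - b for b, a in zip(before, after)]
    mean_diff = statistics.mean(diffs)
    sd_diff = statistics.stdev(diffs)  # sample standard deviation (n - 1)
    if sd_diff == 0:
        raise ValueError("all differences are identical; t is undefined")
    t_stat = mean_diff / (sd_diff / math.sqrt(len(diffs)))
    return mean_diff, t_stat, len(diffs) - 1


# Hypothetical biomarker levels in four residents near a site.
before = [10.0, 12.0, 9.0, 11.0]
after = [7.0, 9.0, 8.0, 8.0]
mean_diff, t_stat, df = paired_change(before, after)
print(f"mean change={mean_diff:.2f}, t={t_stat:.2f}, df={df}")
```

A real evaluation would also need to consider the biomarker's persistence in the body relative to the sampling times.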

Assessment of New Chemistries

Green chemistry involves the design of molecules and products that are optimized to have minimal toxicity and limited environmental persistence, are (ideally) derived from renewable sources, and perform comparably with or better than the chemicals that they are replacing. The green-chemistry approach often involves synthesis of new molecules on which there are no toxicity data and that might not have close analogues. Green-chemistry design is another case in which the use of modern in vitro toxicology methods could have great utility by providing guidance on which molecular features are associated with greater or less toxicity and by identifying chemicals that do not affect biological pathways that are known to be relevant for toxicity (Voutchkova et al. 2010). There are a few examples of the use of in vitro toxicity methods to determine whether potential replacement chemicals are less toxic. For example, Nardelli et al. (2015) evaluated the effects of a series of potential replacements for phthalate plasticizers on Sertoli cell function, and high-throughput testing has been used to evaluate alternatives to bisphenol A in the manufacture of can linings (Seltenrich 2015). Using high-throughput methods in this context is not conceptually different from screening prospective therapeutic agents for maximal efficacy and minimal off-target effects. Box 5-7 and Appendix D describe a case study of assessment of new chemistries.
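Screening candidate replacements resembles hit triage in drug discovery, except that the goal is to minimize activity in toxicity-relevant assays. The ranking rule below is a hypothetical illustration, not an established method. Each candidate is represented by the AC50 values (concentration producing half-maximal activity, in µM) for the toxicity-relevant assays in which it was active. Candidates with fewer active assays rank higher. Ties are broken in favor of the candidate whose most potent hit occurs at the highest concentration. A real alternatives assessment would also weigh performance, exposure, and persistence.

```python
import math


def rank_replacements(candidates):
    """Rank candidates from least to most concerning.

    candidates maps name -> {assay_name: AC50 in uM} for assays in which the
    candidate was active; inactive assays are simply absent.
    """
    def sort_key(item):
        _, hits = item
        most_potent = min(hits.values()) if hits else math.inf
        # Fewer active assays first; then a weaker (higher) most-potent AC50 first.
        return (len(hits), -most_potent)

    return [name for name, _ in sorted(candidates.items(), key=sort_key)]


candidates = {
    "incumbent": {"ER_agonist": 5.0, "AR_antagonist": 12.0},
    "alt_1": {"ER_agonist": 40.0},
    "alt_2": {},
    "alt_3": {"AR_antagonist": 8.0},
}
print(rank_replacements(candidates))
```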

One could use the same methods as described above to evaluate the toxicity of chemicals newly discovered in the environment, such as unexpected breakdown products of a widely used pesticide. If breakdown products are chemically related to their parent molecules, cheminformatics (read-across) methods could also be appropriate for estimating their toxicity.

COMMUNICATING THE NEW APPROACHES

Many of the approaches introduced in this chapter will be unfamiliar to some stakeholder groups. Communicating the strengths and limitations of the approaches in a transparent and understandable way will be necessary if the results are to be applied appropriately and will be critical for the ultimate acceptance of the approaches. The information needs and communication strategies will depend on the stakeholder group. The discussion here focuses on four stakeholder groups: risk assessors, risk managers and public-health officials, clinicians, and the lay public.

Risk-assessment practitioners who are responsible for generating health reference values need to have information on the details of the new approaches and on how to apply their results to predict human risk. They probably need formal training in the interpretation and application of new data streams emerging from exposure science, toxicology, and epidemiology. Read-across, for example, is perhaps the most familiar of the alternative approaches described in this chapter. Even so, most risk assessors still need a great deal of training in identifying appropriate chemical analogues on which to base a read-across and in accounting for decreased confidence in the assessment when there are few analogues or only imperfect structural matches. They also need to develop new partnerships that can help them with their tasks, for example, with computational and medicinal chemists who develop strategies for analogue searching, gauge the suitability of each analogue, or determine the likely metabolic pathway of a chemical of interest and its analogues to see whether they become more or less alike as they are biotransformed.

Most risk assessors are already familiar with the integration of traditional data for risk assessment, but they will need help in understanding how to integrate novel data streams and how much confidence they can have in the new data. One approach will be to compare the results from new methods with more familiar data sources, particularly in vivo toxicology studies. For example, EPA recently concluded that a high-throughput battery of estrogenicity assays is an acceptable alternative to the uterotrophic assay for tier 1 endocrine-disrupter screening (Browne et al. 2015; EPA 2015). The communication strategy in this case had four parts:

- a description of the purpose of the assay battery;
- an explanation of the biological space covered by the battery (that is, the extent of the estrogen-signaling pathway being evaluated and the redundancy of the assays);
- a description of a computational model that integrates the data from all the assays and discriminates between a true response and noise; and
- a comparison with an existing method that showed that the new approach agreed with it in most cases.

Papers such as Browne et al. (2015) provide useful models for further technical communication to risk assessors.
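Comparing a new method with a familiar one often comes down to a concordance table. The sketch below computes sensitivity, specificity, and balanced accuracy for binary active/inactive calls from a new assay battery against calls from a reference in vivo assay. It is a generic illustration, not the analysis in Browne et al. (2015). The chemical names and calls are hypothetical.

```python
def concordance(reference_calls, new_calls):
    """Compare binary calls (True = active) from a new method with a reference.

    Both arguments map chemical name -> bool; only chemicals in both are scored.
    """
    tp = fn = tn = fp = 0
    for chem in reference_calls.keys() & new_calls.keys():
        ref, new = reference_calls[chem], new_calls[chem]
        if ref and new:
            tp += 1
        elif ref:
            fn += 1
        elif new:
            fp += 1
        else:
            tn += 1
    sensitivity = tp / (tp + fn) if tp + fn else float("nan")
    specificity = tn / (tn + fp) if tn + fp else float("nan")
    return {
        "sensitivity": sensitivity,
        "specificity": specificity,
        "balanced_accuracy": (sensitivity + specificity) / 2,
        "n": tp + fn + tn + fp,
    }


reference = {"c1": True, "c2": True, "c3": True, "c4": True,
             "c5": False, "c6": False, "c7": False, "c8": False}
battery = {"c1": True, "c2": True, "c3": True, "c4": False,
           "c5": False, "c6": False, "c7": False, "c8": True}
print(concordance(reference, battery))
```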

Risk managers and public-health officials do not need details on the assays or on how they are applied to risk assessment; they do need to know the uncertainty associated with risk estimates and the confidence that they should place in them. Communication to this group will need to address those issues. In some cases, the new approaches will provide information that was heretofore unavailable to them, and the new information will assist them in making decisions about site remediation or acceptable exposure levels. This chapter discussed the possibility of using read-across to increase the number of chemicals evaluated in the PPRTV process. Appendix C highlights a case study that uses cheminformatic approaches to address the developmental-toxicity potential of 4-methylcyclohexanemethanol, a chemical for which there were no experimental data on that outcome. Both examples illustrate how new approaches can provide information that would not have been available in any other way. However, the uncertainties associated with the new approaches need to be communicated.

As scientists advance the vision of identifying the many components that are responsible for multifactorial diseases, it will be necessary to communicate with clinicians and the public about how the factors have been identified, how each is related to the others, and whether reducing exposure to one or more factors could decrease disease risk. Physicians are beginning to embrace new methods as genomic information on individual patients becomes more available and personalized medicine becomes more of a reality. Nevertheless, information will still need to reach physicians through venues that they are likely to read, paired with diagnostic and treatment approaches that they are likely to be able to implement.

As for the general public, although many people get their health information from their doctors, some are far more likely to get medical information from the Internet and the popular press. The information that those media outlets require about new approaches is not qualitatively different from what clinicians need, but it needs to be presented in a format that is digestible by educated laypeople.

Finally, enhanced communication among the scientific community both nationally and internationally is vitally important for fully achieving the goals outlined in the Tox21 and ES21 reports and for gaining consensus regarding the utility of the new approaches and their incorporation into decision-making. The communication should include enhanced and more transparent integration of data and technology generated from multiple sources, including academic laboratories. Universities could serve as a communication conduit for multiple stakeholders, particularly clinicians and the lay public; thus, their engagement should be strategically leveraged. Ultimately, a more multidisciplinary and inclusive strategy for scientific discourse will help attain broad understanding and confidence in the new tools.

CHALLENGES AND RECOMMENDATIONS

As noted earlier in this report, there are challenges to achieving the new direction for risk assessment fully. Some, such as model and assay validation, are addressed in later chapters. Here, the committee highlights a few challenges that are specific to the applications and approaches described in the present chapter and offers some recommendations to address them.

Challenge: For risk assessment of individual chemicals, various approaches, such as cheminformatics and read-across, are already being applied because existing approaches are insufficient to meet the backlog of chemicals that need to be assessed. However, methods for grouping chemicals, assessing the suitability of analogues, and accounting for data quality and confidence in assessment are still being developed or are being applied inconsistently.

Recommendation: Read-across and cheminformatic approaches should be developed further and integrated into environmental-chemical risk assessments. High-throughput, cell-based assays and high–information-content approaches, such as gene-expression analysis, provide a large volume of data that can be used to test the assumptions made in read-across that analogues have the same biological targets and effects. Read-across and cheminformatics approaches depend on high-quality databases that are well curated; data curation and quality assurance should be a routine part of database development and maintenance. New case studies that use cheminformatic and read-across approaches could demonstrate new applications and encourage their use.

Challenge: Approaches that use large data streams to evaluate the potential for toxicity present a challenge in synthesizing information in a way that supports decision-making.

Recommendation: Statistical methods that can integrate multiple data streams should be developed further and made transparent and easy for risk assessors and decision-makers to use.
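One transparent way to integrate several data streams is to update a prior probability of hazard with each result's likelihood ratio. The sketch below is a minimal illustration. It assumes that the results are conditionally independent given the true hazard status, which is a naive-Bayes simplification; Bayesian integrated testing strategies such as that of Jaworska et al. (2013) use richer network models. The prior, sensitivities, and specificities are hypothetical.

```python
def posterior_probability(prior, results):
    """Update P(hazard) with conditionally independent binary test results.

    results is a list of (positive, sensitivity, specificity) tuples.
    Positive result: LR = sens / (1 - spec); negative result: LR = (1 - sens) / spec.
    """
    if not 0 < prior < 1:
        raise ValueError("prior must be strictly between 0 and 1")
    odds = prior / (1 - prior)
    for positive, sens, spec in results:
        if not (0 < sens < 1 and 0 < spec < 1):
            raise ValueError("sensitivity and specificity must be in (0, 1)")
        odds *= sens / (1 - spec) if positive else (1 - sens) / spec
    return odds / (1 + odds)


results = [
    (True, 0.80, 0.90),   # hypothetical receptor-binding assay
    (False, 0.70, 0.80),  # hypothetical transactivation assay
    (True, 0.90, 0.70),   # hypothetical structural alert
]
print(round(posterior_probability(0.20, results), 3))
```

Reporting the likelihood ratio contributed by each data stream, rather than only the final probability, is one way to keep such an integration transparent to decision-makers.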

Challenge: Measuring biological events that are far upstream of disease states will introduce new sources of uncertainty into the risk-assessment process. Using data on those events as the starting point for risk assessment will require new approaches for risk assessment that are different from the current methods, which identify a point of departure and apply default uncertainty factors or extrapolate by using mathematical models.

Recommendation: New types of uncertainty will arise as the 21st century tools and approaches are used, and research should be conducted to identify these new sources and their magnitude. Some traditional sources of uncertainty will disappear as scientists rely less on animal models to predict toxicity, and these should also be identified.
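The current practice described in the challenge above divides a point of departure by default uncertainty factors, which is simple arithmetic. One proposed bridge from upstream in vitro data is reverse dosimetry. This converts an in vitro active concentration into an oral equivalent dose by using a modeled steady-state plasma concentration, as in the high-throughput toxicokinetic work of Wetmore et al. (2013). The sketch below assumes linear kinetics, and all numbers are hypothetical. Which uncertainty factors should apply to an in vitro-derived starting point remains an open question of the kind the recommendation raises.

```python
import math


def reference_dose(pod_mg_per_kg_day, uncertainty_factors):
    """Traditional approach: point of departure divided by the product of UFs."""
    return pod_mg_per_kg_day / math.prod(uncertainty_factors.values())


def oral_equivalent_dose(ac50_uM, css_uM_per_mg_per_kg_day):
    """Reverse dosimetry under an assumption of linear kinetics.

    css_uM_per_mg_per_kg_day is the modeled steady-state plasma concentration
    (uM) produced by a constant oral dose of 1 mg/kg-day.
    """
    return ac50_uM / css_uM_per_mg_per_kg_day


# Hypothetical animal NOAEL with default 10x interspecies and 10x intraspecies factors.
rfd = reference_dose(10.0, {"interspecies": 10, "intraspecies": 10})
# Hypothetical in vitro AC50 of 3 uM and modeled Css of 1.5 uM per mg/kg-day.
oed = oral_equivalent_dose(3.0, 1.5)
print(f"RfD = {rfd} mg/kg-day; oral equivalent dose = {oed} mg/kg-day")
```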

REFERENCES

Abdo, N., B.A. Wetmore, G.A. Chappell, D. Shea, F.A. Wright, and I. Rusyn. 2015a. In vitro screening for population variability in toxicity of pesticide-containing mixtures. Environ. Int. 85:147-155.

Abdo, N., M. Xia, C.C. Brown, O. Kosyk, R. Huang, S. Sakamuru, Y.H. Zhou, J.R. Jack, P. Gallins, K. Xia, Y. Li, W.A. Chiu, A.A. Motsinger-Reif, C.P. Austin, R.R. Tice, I. Rusyn, and F.A. Wright. 2015b. Population-based in vitro hazard and concentration-response assessment of chemicals: The 1000 genomes high-throughput screening study. Environ. Health Perspect. 123(5):458-466.

Battaile, K.P., and R.D. Steiner. 2000. Smith-Lemli-Opitz syndrome: The first malformation syndrome associated with defective cholesterol synthesis. Mol. Genet. Metab. 71(1-2):154-162.

Bhattacharya, S., Q. Zhang, P.L. Carmichael, K. Boekelheide, and M.E. Andersen. 2011. Toxicity testing in the 21st century: Defining new risk assessment approaches based on perturbation of intracellular toxicity pathways. PLoS One 6(6):e20887.

Blackburn, K., D. Bjerke, G. Daston, S. Felter, C. Mahony, J. Naciff, S. Robison, and S. Wu. 2011. Case studies to test: A framework for using structural, reactivity, metabolic and physicochemical similarity to evaluate the suitability of analogs for SAR-based toxicological assessments. Regul. Toxicol. Pharmacol. 60(1):120-135.

Browne, P., R.S. Judson, W.M. Casey, N.C. Kleinstreuer, and R.S. Thomas. 2015. Screening chemicals for estrogen receptor bioactivity using a computational model. Environ. Sci. Technol. 49(14):8804-8814.

Daston, G., and J.M. Naciff. 2010. Predicting developmental toxicity through toxicogenomics. Birth Defects Res. C. Embryo Today 90(2):110-117.

De Abrew, K.N., R.M. Kainkaryam, Y.K. Shan, G.J. Overmann, R.S. Settivari, X. Wang, J. Xu, R.L. Adams, J.P. Tiesman, E.W. Carney, J.M. Naciff, and G.P. Daston. 2016. Grouping 34 chemicals based on mode of action using connectivity mapping. Toxicol. Sci. 151(2):447-461.

Eduati, F., L.M. Mangravite, T. Wang, H. Tang, J.C. Bare, R. Huang, T. Norman, M. Kellen, M.P. Menden, J. Yang, X. Zhan, R. Zhong, G. Xiao, M. Xia, N. Abdo, O. Kosyk, S. Friend, A. Dearry, A. Simeonov, R.R. Tice, I. Rusyn, F.A. Wright, G. Stolovitzky, Y. Xie, and J. Saez-Rodriguez; NIEHS-NCATS-UNC DREAM Toxicogenetics Collaboration. 2015. Prediction of human population responses to toxic compounds by a collaborative competition. Nat. Biotechnol. 33(9):933-940.

Egeghy, P.P., R. Judson, S. Gangwal, S. Mosher, D. Smith, J. Vail, and E.A. Cohen Hubal. 2012. The exposure data landscape for manufactured chemicals. Sci. Total Environ. 414(1):159-166.

EPA (US Environmental Protection Agency). 2000. Supplementary Guidance for Conducting Risk Assessments of Chemical Mixtures. EPA/630/R-00/002. Risk Assessment Forum Technical Panel, US Environmental Protection Agency, Washington, DC [online]. Available: https://cfpub.epa.gov/ncea/raf/pdfs/chem_mix/chem_mix_08_2001.pdf [accessed September 30, 2016].

EPA (US Environmental Protection Agency). 2015. Use of High Throughput Assays and Computational Tools in the Endocrine Disruptor Screening Program-Overview [online]. Available: https://www.epa.gov/endocrine-disruption/use-high-throughput-assays-and-computational-tools-endocrine-disruptor [accessed December 1, 2016].

Incardona, J.P., W. Gaffield, R.P. Kapur, and H. Roelink. 1998. The teratogenic Veratrum alkaloid cyclopamine inhibits sonic hedgehog signal transduction. Development 125(18):3553-3562.

Jaworska, J., Y. Dancik, P. Kern, F. Gerberick, and A. Natsch. 2013. Bayesian integrated testing strategy to assess skin sensitization potency: From theory to practice. J. Appl. Toxicol. 33(11):1353-1364.

Judson, R.S., M.T. Martin, D.M. Reif, K.A. Houck, T.B. Knudsen, D.M. Rotroff, M. Xia, S. Sakamuru, R. Huang, P. Shinn, C.P. Austin, R.J. Kavlock, and D.J. Dix. 2010. Analysis of eight oil spill dispersants using rapid, in vitro tests for endocrine and other biological activity. Environ. Sci. Technol. 44(15):5979-5985.

Kleinstreuer, N., D. Dix, M. Rountree, N. Baker, N. Sipes, D. Reif, R. Spencer, and T. Knudsen. 2013. A computational model predicting disruption of blood vessel development. PLoS Comput. Biol. 9(4):e1002996.

Knecht, A.L., B.C. Goodale, L. Truong, M.T. Simonich, A.J. Swanson, M.M. Matzke, K.A. Anderson, and R.L. Tanguay. 2013. Comparative developmental toxicity of environmentally relevant oxygenated PAHs. Toxicol. Appl. Pharmacol. 271(2):266-275.

Kolf-Clauw, M., F. Chevy, B. Siliart, C. Wolf, N. Mulliez, and C. Roux. 1997. Cholesterol biosynthesis inhibited by BM15.766 induces holoprosencephaly in the rat. Teratology 56(3):188-200.

Lamb, J., E.D. Crawford, D. Peck, J.W. Modell, I.C. Blat, M.J. Wrobel, J. Lerner, J.P. Brunet, A. Subramanian, K.N. Ross, M. Reich, H. Hieronymus, G. Wei, S.A. Armstrong, S.J. Haggarty, P.A. Clemons, R. Wei, S.A. Carr, E.S. Lander, and T.R. Golub. 2006. The connectivity map: Using gene-expression signatures to connect small molecules, genes, and disease. Science 313(5795):1929-1935.

Liu, C., J. Su, F. Yang, K. Wei, J. Ma, and X. Zhou. 2015. Compound signature detection on LINCS L1000 big data. Mol. Biosyst. 11(3):714-722.

McHale, C.M., L. Zhang, and M.T. Smith. 2012. Current understanding of the mechanism of benzene-induced leukemia in humans: Implications for risk assessment. Carcinogenesis 33(2):240-252.

Muir, D.C., and P.H. Howard. 2006. Are there other persistent organic pollutants? A challenge for environmental chemists. Environ. Sci. Technol. 40(23):7157-7166.

Nardelli, T.C., H.C. Erythropel, and B. Robaire. 2015. Toxicogenomic screening of replacements for di(2-ethylhexyl) phthalate (DEHP) using the immortalized TM4 Sertoli cell line. PLoS One 10(10):e0138421.

NRC (National Research Council). 1983. Risk Assessment in the Federal Government: Managing the Process. Washington, DC: National Academy Press.

NRC (National Research Council). 2006. Human Biomonitoring for Environmental Chemicals. Washington, DC: The National Academies Press.

NRC (National Research Council). 2007. Toxicity Testing in the 21st Century: A Vision and a Strategy. Washington, DC: The National Academies Press.

NRC (National Research Council). 2008. Phthalates and Cumulative Risk Assessment: The Tasks Ahead. Washington, DC: The National Academies Press.

NRC (National Research Council). 2009. Science and Decisions: Advancing Risk Assessment. Washington, DC: The National Academies Press.

NRC (National Research Council). 2012. Exposure Science in the 21st Century: A Vision and a Strategy. Washington, DC: The National Academies Press.

NTP (National Toxicology Program). 2016. Nominations to the testing program [online]. Available: http://ntp.niehs.nih.gov/testing/noms/index.html [accessed July 22, 2016].

O’Connell, S.G., T. Haigh, G. Wilson, and K.A. Anderson. 2013. An analytical investigation of 24 oxygenated-PAHs (OPAHs) using liquid and gas chromatography-mass spectrometry. Anal. Bioanal. Chem. 405(27):8885-8896.

Paulik, L.B., B.W. Smith, A.J. Bergmann, G.J. Sower, N.D. Forsberg, J.G. Teeguarden, and K.A. Anderson. 2016. Passive samplers accurately predict PAH levels in resident crayfish. Sci. Total Environ. 544:782-791.

Rager, J.E., M.J. Strynar, S. Liang, R.L. McMahen, A.M. Richard, C.M. Grulke, J.F. Wambaugh, K.K. Isaacs, R. Judson, A.J. Williams, and J.R. Sobus. 2016. Linking high resolution mass spectrometry data with exposure and toxicity forecasts to advance high-throughput environmental monitoring. Environ. Int. 88:269-280.

Roessler, E., E. Belloni, K. Gaudenz, F. Vargas, S.W. Scherer, L.C. Tsui, and M. Muenke. 1997. Mutations in the C-terminal domain of Sonic Hedgehog cause holoprosencephaly. Hum. Mol. Genet. 6(11):1847-1853.

Rovida, C., N. Alépée, A.M. Api, D.A. Basketter, F.Y. Bois, F. Caloni, E. Corsini, M. Daneshian, C. Eskes, J. Ezendam, H. Fuchs, P. Hayden, C. Hegele-Hartung, S. Hoffmann, B. Hubesch, M.N. Jacobs, J. Jaworska, A. Kleensang, N. Kleinstreuer, J. Lalko, R. Landsiedel, F. Lebreux, T. Luechtefeld, M. Locatelli, A. Mehling, A. Natsch, J.W. Pitchford, D. Prater, P. Prieto, A. Schepky, G. Schüürmann, L. Smirnova, C. Toole, E. van Vliet, D. Weisensee, and T. Hartung. 2015. Integrated testing strategies (ITS) for safety assessment. ALTEX 32(1):25-40.

Rudén, C., and S.O. Hansson. 2010. Registration, Evaluation, and Authorization of Chemicals (REACH) is but the first step. How far will it take us? Six further steps to improve the European chemicals legislation. Environ. Health Perspect. 118(1):6-10.

Seltenrich, N. 2015. A hard nut to crack: Reducing chemical migration in food-contact materials. Environ. Health Perspect. 123(7):A174-A179.

Shin, H.M., A. Ernstoff, J.A. Arnot, B.A. Wetmore, S.A. Csiszar, P. Fantke, X. Zhang, T.E. McKone, O. Jolliet, and D.H. Bennett. 2015. Risk-based high-throughput chemical screening and prioritization using exposure models and in vitro bioactivity assays. Environ. Sci. Technol. 49(11):6760-6771.

Tal, T.L., C.W. McCollum, P.S. Harris, J. Olin, N. Kleinstreuer, C.E. Wood, C. Hans, S. Shah, F.A. Merchant, M. Bondesson, T.B. Knudsen, S. Padilla, and M.J. Hemmer. 2014. Immediate and long-term consequences of vascular toxicity during zebrafish development. Reprod. Toxicol. 48:51-61.

Voutchkova, A.M., T.G. Osimitz, and P.T. Anastas. 2010. Toward a comprehensive molecular design framework for reduced hazard. Chem. Rev. 110(10):5845-5882.

Wambaugh, J.F., R.W. Setzer, D.M. Reif, S. Gangwal, J. Mitchell-Blackwood, J.A. Arnot, O. Jolliet, A. Frame, J. Rabinowitz, T.B. Knudsen, R.S. Judson, P. Egeghy, D. Vallero, and E.A. Cohen Hubal. 2013. High-throughput models for exposure-based chemical prioritization in the ExpoCast project. Environ. Sci. Technol. 47(15):8479-8488.

Wetmore, B.A., J.F. Wambaugh, S.S. Ferguson, L. Li, H.J. Clewell, III, R.S. Judson, K. Freeman, W. Bao, M.A. Sochaski, T.M. Chu, M.B. Black, E. Healy, B. Allen, M.E. Andersen, R.D. Wolfinger, and R.S. Thomas. 2013. Relative impact of incorporating pharmacokinetics on predicting in vivo hazard and mode of action from high-throughput in vitro toxicity assays. Toxicol. Sci. 132(2):327-346.

Wu, S., K. Blackburn, J. Amburgey, J. Jaworska, and T. Federle. 2010. A framework for using structural, reactivity, metabolic and physicochemical similarity to evaluate the suitability of analogs for SAR-based toxicological assessments. Regul. Toxicol. Pharmacol. 56(1):67-81.

Wu, S., J. Fisher, J. Naciff, M. Laufersweiler, C. Lester, G. Daston, and K. Blackburn. 2013. Framework for identifying chemicals with structural features associated with the potential to act as developmental or reproductive toxicants. Chem. Res. Toxicol. 26(12):1840-1861.