7

Interpretation and Integration of Data and Evidence for Risk-Based Decision-Making

Chapters 2–4 highlighted major advances in exposure science, toxicology, and epidemiology that will enable a better understanding of pathways, components, and mechanisms that contribute to disease. As described in those chapters, the new tools and the resulting data will improve the assessment of exposures that are associated with incremental increases in risk and will enhance the characterization of the spectrum of hazards that can be caused by chemicals. Chapter 5 described the new direction of risk assessment that is based on biological pathways and processes. That approach acknowledges the multifactorial and nonspecific nature of disease causation—that is, stressors from multiple sources can contribute to a single disease, and a single stressor can lead to multiple adverse outcomes. The new direction offers great promise for illuminating how various agents cause disease, but 21st century science—with its diverse, complex, and potentially large datasets—poses challenges related to analysis, interpretation, and integration of the data that are used in risk assessment. For example, transparent, reliable, and vetted approaches are needed to analyze toxicogenomic data to detect the signals that are relevant for risk assessment and to integrate the findings with results of traditional whole-animal assays and epidemiological studies. Approaches will also be needed to analyze and integrate different 21st century data streams and ultimately to use them as the basis of inferences about, for example, chemical hazard, dose–response relationships, and groups that are at higher risk than the general population. Agencies have systems of practice, guidelines, and default assumptions to support consistent and efficient approaches to risk assessment in the face of underlying uncertainties, but their practices will need to be updated to accommodate the new data.

In this chapter, the committee offers some recommendations for improving the use of the new data in reaching conclusions for the purpose of decision-making. Steps in the process include analyzing the data to determine what new evidence has been generated (data-analysis step), combining new data with other datasets in integrated analyses (data-integration step), and synthesizing evidence from multiple sources, for example, for making causal inferences, characterizing exposures and dose–response relationships, and gauging uncertainty (evidence-integration step). The three steps should be distinguished from each other. The purpose of data analysis is to determine what has been learned from the new data, such as exposure data or results from individual toxicity assays. The new data might be combined with similar or complementary data in an integrative analysis, and the resulting evidence might then be integrated with prior evidence from other sources. Because the terminology in the various steps has varied among reports from agencies and organizations, the committee that prepared the present report adopts the concepts and terminology in Box 7-1.

The committee begins by considering data interpretation when using the new science in risk assessment and next discusses some approaches for evaluating and integrating data and evidence for decision-making. The committee briefly discusses uncertainties associated with the new data and methods. The chapter concludes by describing some challenges and offering recommendations to address them.

DATA INTERPRETATION AND KEY INFERENCES

Interpreting data and drawing evidence-based inferences are essential elements in making risk-based decisions. Whether for establishing public-health protective limits for air-pollution concentrations or for determining the safety of a food additive, the approach used to draw conclusions from data is a fundamental issue for risk assessors and decision-makers. Drawing inferences about human-health risks that are based on a pathway approach can involve answering the following fundamental questions:

- Can an identified pathway, alone or in combination with other pathways, when sufficiently perturbed, increase the risk of an adverse outcome or disease in humans, particularly in sensitive or vulnerable individuals?

- Do the available data—in vitro, in vivo, computational, and epidemiological data—support the judgment that the chemical or agent perturbs one or more pathways linked to an adverse outcome?

- How does the response or pathway activation change with exposure? By how much does a chemical or agent exposure increase the risk of outcomes of interest?

- Which populations are likely to be the most affected? Are some more susceptible because of co-exposures, pre-existing disease, or genetic susceptibility? Are exposures of the young or elderly of greater concern?

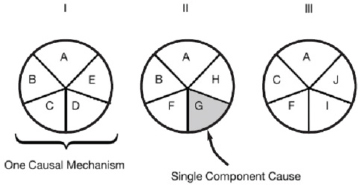

To set the context for the discussion of inference and data interpretation to address the above questions, the committee begins by considering a useful causal model of disease. As discussed in Chapter 5, the focus of toxicological research has shifted from observing apical responses to understanding biological processes or pathways that lead to the apical responses or disease. There is also the recognition that a single adverse outcome might result from multiple mechanisms, which can have multiple components (see Figure 5-1). The 21st century tools, which can be used to determine the degree to which exposures perturb pathways or activate mechanisms, facilitate a new direction in risk assessment that acknowledges the multifactorial nature of disease.

One way in which to consider the multifactorial nature of disease is to use the sufficient-component-cause model (Rothman 1976; Rothman and Greenland 2005). The sufficient-component-cause model is an extension of the counterfactual notion1 and considers sets of actions, events, or states of nature that together lead to the outcome under consideration. The model provides a way to account for multiple factors that can combine to result in disease in an individual or population. It addresses the question, What are the various events that might have caused a particular effect? For example, a house caught fire because of a constellation of events—fire in the fireplace, wooden house, strong wind, and alarm not functioning—that together formed a sufficient causal complex, but no component was sufficient in itself (Mackie 1980). The model leads to the designation of causes or events as necessary, sufficient, or neither.

Figure 7-1 illustrates the sufficient-component-cause concept and shows that the same outcome can result from more than one causal complex or mechanism; each “pie” has multiple components and generally involves the joint action of multiple components. Although most components are neither necessary (contained in every pie) nor sufficient (single-component-cause pie) to produce outcomes or diseases, the removal of any one component will prevent some outcomes. If the component is part of a common complex or part of most complexes, removing it would be expected to result in prevention of a substantial amount of disease or possibly of all disease (IOM 2008). It is important to note that not every component in a complex has to be known or removed to prevent cases of disease. And exposures to each component of a pie do not have to occur at the same time or in the same space, depending on the nature of the disease-producing process. Relevant exposures might accumulate over the life span or occur during a critical age window. Thus, multiple exposures (chemical and nonchemical) throughout the life span might affect multiple components in multiple mechanisms. Moreover, variability in the exposures received by the population and in underlying susceptibility and the multifactorial nature of chronic disease imply that multiple mechanisms can contribute to the disease burden in a population.
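The logic of the sufficient-component-cause model can be sketched in a few lines of code. The following is a minimal illustration only: the component labels (A through F), the three "pies," and the five-person population are hypothetical and are not taken from Figure 7-1. It shows how one might check whether a component is necessary or sufficient and count how many cases would be prevented by removing a single component.

```python
# Hypothetical causal "pies": each set of components is jointly sufficient
# to produce the outcome. Labels A-F are illustrative assumptions.
SUFFICIENT_CAUSES = [
    frozenset({"A", "B", "C"}),
    frozenset({"A", "D"}),
    frozenset({"E", "F"}),
]


def outcome_occurs(present, causes=SUFFICIENT_CAUSES):
    """True if every component of at least one pie is present."""
    return any(pie <= present for pie in causes)


def is_necessary(component, causes=SUFFICIENT_CAUSES):
    """A necessary component appears in every pie."""
    return all(component in pie for pie in causes)


def is_sufficient(component, causes=SUFFICIENT_CAUSES):
    """A sufficient component forms a single-component pie by itself."""
    return any(pie == {component} for pie in causes)


def cases_prevented(population, component, causes=SUFFICIENT_CAUSES):
    """Count individuals whose outcome disappears when the component is removed."""
    return sum(
        1
        for present in population
        if outcome_occurs(present, causes)
        and not outcome_occurs(present - {component}, causes)
    )


# Hypothetical population: the components each individual has accumulated.
population = [
    frozenset("ABC"),    # completes the first pie
    frozenset("AD"),     # completes the second pie
    frozenset("EF"),     # completes the third pie
    frozenset("ABCEF"),  # completes the first and third pies
    frozenset("AB"),     # completes no pie, so no outcome
]

print(is_necessary("A"), is_sufficient("A"))
print(cases_prevented(population, "A"))
print(cases_prevented(population, "E"))
```

In this sketch, component A is neither necessary nor sufficient, yet removing it prevents more cases than removing E because A belongs to more of the complexes that occur in the population. The individual who completes two pies is not protected by removing either A or E alone.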

___________________

1 The counterfactual is the state that is counter to the facts; for example, what would the risk of lung cancer have been if cigarette-smoking did not exist?

The definitions of component, mechanism, and pathway are the same as those provided in Chapter 5 in the discussion of the new direction in risk assessment. Box 7-2 provides the definitions in the context of the sufficient-component-cause model and is a reminder of the general definitions provided in Chapter 1. Given Figure 7-1 and Box 7-2, a mechanism of a disease will typically involve more than one component or pathway; multiple pathways will likely be involved in the production of disease.

The sufficient-component-cause model is a useful construct for considering methods for interpreting data and drawing inferences for risk assessment on the basis of 21st century data. It can be used to interpret mechanistic data for addressing the four critical questions above. And it is useful for considering whether a mechanism is complete (that is, whether all the necessary components are present or activated sufficiently to produce disease) and for considering the degree to which elimination or suppression of one component might be preventive.

Identifying Components, Mechanisms, and Pathways That Contribute to Disease

Research on the causes of cancer provides a concrete example of the uses of the multifactorial disease concept and consideration of upstream biological characteristics. Ten characteristics of carcinogens have been proposed (IARC 2015; Smith et al. 2016) on the basis of mechanisms associated with chemicals that are known to cause cancer in humans (see Table 7-1). The International Agency for Research on Cancer (IARC) is using the characteristics as a way to organize mechanistic data relevant to agent-specific evaluations of carcinogenicity (IARC 2016a). The committee notes that key characteristics for other hazards, such as cardiovascular and reproductive toxicity, could be developed as a guide for evaluating the relationship between perturbations observed in assays, their potential to pose a hazard, and their contribution to risk.

The IARC characteristics include components and pathways that can contribute to cancer. For example, “modulates receptor-mediated effects” includes activation of the aryl hydrocarbon receptor, which can initiate downstream events, many of which are linked to cancer, such as thyroid-hormone induction, xenobiotic metabolism, pro-inflammatory response, and altered cell-cycle control. The downstream events that are linked to cancer often fall under other IARC characteristics—for example, “cell-cycle control” falls under “alters cell proliferation”—and therefore are components of other characteristics. At the molecular level, some specific pathways that are ascribed to particular cancers (for example, the “chromosome unstable pathway” for pancreatic cancer) and that fall within the IARC characteristics of carcinogens have been curated in the Kyoto Encyclopedia of Genes and Genomes2 databases.

___________________

TABLE 7-1 Characteristics of Carcinogens

| Characteristic^a | Example of Relevant Evidence |
| --- | --- |
| Is electrophilic or can be metabolically activated | Parent compound or metabolite with an electrophilic structure (e.g., epoxide or quinone), formation of DNA and protein adducts |
| Is genotoxic | DNA damage (DNA-strand breaks, DNA-protein crosslinks, or unscheduled DNA synthesis), intercalation, gene mutations, cytogenetic changes (e.g., chromosome aberrations or micronuclei) |
| Alters DNA repair or causes genomic instability | Alterations of DNA replication or repair (e.g., topoisomerase II, base-excision, or double-strand break repair) |
| Induces epigenetic alterations | DNA methylation, histone modification, microRNA expression |
| Induces oxidative stress | Oxygen radicals, oxidative stress, oxidative damage to macromolecules (e.g., DNA or lipids) |
| Induces chronic inflammation | Increased white blood cells, myeloperoxidase activity, altered cytokine or chemokine production |
| Is immunosuppressive | Decreased immunosurveillance, immune-system dysfunction |
| Modulates receptor-mediated effects | Receptor activation or inactivation (e.g., ER, PPAR, AhR) or modulation of endogenous ligands (including hormones) |
| Causes immortalization | Inhibition of senescence, cell transformation |
| Alters cell proliferation, cell death, or nutrient supply | Increased proliferation, decreased apoptosis, changes in growth factors, energetics and signaling pathways related to cellular replication or cell cycle |

a Any characteristic could interact with any other (such as oxidative stress, DNA damage, and chronic inflammation), and a combination provides stronger evidence of a cancer mechanism than one would alone. Sources: IARC 2016; Smith et al. 2016.

One challenge is to evaluate whether a component or specific biological pathway contributes to a particular adverse outcome or disease. The challenge is not trivial given that inferences must be drawn from evidence that is far upstream of the apical outcome. The ability to identify the contributions of various components and pathway perturbations to disease and to understand the importance of changes in them can be critical to 21st century risk-based decision-making. However, the need for such an understanding will be specific to the decision context. In some contexts, the lack of any observable effect on biological processes in adequate testing at levels much above those associated with any human exposure might be sufficient; thus, there is not always the need to associate biological processes directly with potential human health effects. In other cases, it will be critical to understand whether a pathway contributes to disease, for example, in conducting a formal hazard identification or in deciding which whole-animal assays should be used when a chemical is highly ranked in a priority-setting exercise for further testing.

The committee proposes a possible starting point for linking components, pathways, and, more generally, mechanisms to a particular disease or other adverse outcome. The question is whether the components or pathways and other contributing factors cause the disease. The committee draws on and adapts a causal-inference approach to guide the evaluation of the new types of data. Causal inference refers to the process of judging whether evidence is sufficient to conclude that there is a causal relationship between a putative cause (such as a pathway perturbation) and an effect of interest (such as an adverse outcome). The causal guidelines that were developed by Bradford Hill (1965) and by the committee that wrote the 1964 Surgeon General’s report on smoking and health (DHEW 1964) have proved particularly useful for interpreting epidemiological findings in the context of experimental and mechanistic evidence. Those guidelines have been proposed by others for evaluating adverse-outcome pathways (OECD 2013). Box 7-3 presents the Hill–Surgeon General guidelines and suggests how they can be used to evaluate causal linkages between health effects and components, pathways, and mechanisms.

Only one element of the guidelines, that cause precedes effect (temporality), is necessary, although not sufficient. The remaining elements are intended to guide evaluation of a particular body of observational evidence (consistency and strength of association) and to assess the alignment of that evidence with other types of evidence (coherence). The guidelines were not intended to be applied in an algorithmic or check-list fashion, and operationalizing the guidelines for various applications has not been done (for example, defining how many studies are needed to achieve consistency). Use of the guidelines inherently acknowledges the inevitable gaps and uncertainties in the data considered and the need for expert judgment for synthesis. Guidance and best practices should evolve with increased experience in linking pathways, components, and mechanisms to health effects.

Other approaches have been proposed to link outcomes to pathways or mechanisms. The adverse-outcome-pathway and network approaches represent efforts to map pathways that are associated with various outcomes (see, for example, Knapen et al. 2015), and they are based on general guidance (OECD 2013) similar to that described above. A complementary approach that deserves consideration is the meet-in-the-middle concept described in Chapter 4, in which one tries to link the biomarkers of exposure and early effect with the biomarkers of intermediate effect and outcome (see Figure 4-1). Different scientific approaches—traditional epidemiology at the population level, traditional toxicology at the organism level, and 21st century tools at the mechanistic level—will be used to address the challenge of linking effects with pathways or mechanisms. The multiple data streams combined with expert-judgment–based systems for causal inference (see Box 7-3; DHEW 1964; EPA 2005, 2015; IARC 2006) will probably serve as bridges between effects seen in assay systems and those observed in animal models or in studies of human disease. Expert judgments should ultimately involve assessments by appropriate multidisciplinary groups of experts, whether external to or in an agency.

Linking Agents to Pathway Perturbations

For drawing conclusions about whether a substance contributes to disease by perturbing various pathways or activating some mechanism, the committee finds the practice of IARC to be a reasonable approach. In evaluating whether an agent has one or more of the 10 characteristics of carcinogens noted above, IARC (2016) conducts a broad, systematic search of the peer-reviewed in vitro and in vivo data on humans and experimental systems for each of the 10 characteristics and organizes the specific mechanistic evidence by these characteristics. That approach avoids a narrow focus on specific pathways and hypotheses and provides for a broad, holistic consideration of the mechanistic evidence (Smith et al. 2016). IARC rates the evidence on a given characteristic as “strong,” “moderate,” or “weak” or indicates the lack of substantial data to support an evaluation. The evaluations are incorporated into the overall determinations on the carcinogenicity of a chemical. More recently, after providing the evidence on each of the 10 characteristics, IARC summarized the findings from the Tox21 and ToxCast high-throughput screening programs related to the 10 characteristics with the caveat that “the metabolic capacity of the cell-based assays is variable, and generally limited” (IARC 2015, 2016a).

Integrative approaches are being developed to evaluate high-throughput data in the Tox21 and ToxCast databases for the activity of a chemical in pathways associated with toxicity. Qualitative and quantitative approaches for scoring pathway activity have been applied. For example, scoring systems have been developed for “gene sets” of assays that are directed at activity in receptor-activated pathways, such as pathways involving androgen, estrogen, thyroid-hormone, aromatase, aryl-hydrocarbon, and peroxisome proliferator-activated receptors (Judson et al. 2010; Martin et al. 2010, 2011; EPA 2014) and for “bioactivity sets” that are directed at activity in other general pathways, such as acute inflammation, chronic inflammation, immune response, tissue remodeling, and vascular biology (Kleinstreuer et al. 2014). Chemical mechanisms that are inferred from high-throughput findings do not always match the knowledge of how a chemical affects biological processes that is gained from in vivo and mechanistic studies (Silva et al. 2015; Pham et al. 2016). That discordance underscores the importance of a broad review in associating chemicals with pathways or mechanisms that contribute to health effects. Appendix B provides a case study for a relatively data-sparse chemical that appears to activate the estrogenicity pathway as shown in high-throughput assays; a read-across inference could be drawn by comparing the data-sparse chemical with better-studied chemicals in the same structural class.

The causal-guidance topics provided in Box 7-3 can be adapted to guide expert judgments in establishing causal links between chemical exposure and pathway perturbations on the basis of broad, systematic consideration of the evidence from the published literature and government databases. Temporality often is not an issue in the context of experimental assays because the effects are measured after exposure. For epidemiological studies, temporality might be a critical consideration inasmuch as biological specimens that are used to assess exposure might have been collected at times of uncertain relevance to the underlying disease pathogenesis and biomarkers of effect, and the development of disease might influence exposure patterns. Regarding strength of outcome in the context of Tox21 data, strong responses in multiple assays that are designed to evaluate a specific pathway or mechanism would provide greater confidence that the tested chemical has the potential to perturb the pathway or activate the mechanism. Assessment of the relative potency of test chemicals in activating a mechanism or perturbing a pathway will be informed by running assays with carefully selected and vetted positive and negative reference chemicals that have known in vivo effects. As discussed further below, methods or technologies that produce enormous datasets pose special challenges. Procedures to sift through the data to determine signals of importance are needed. As the scientific community develops experience, quantitative criteria and procedures that reflect best practices can be incorporated into guidelines for judging the significance of signals from such data. Regarding consistency, consideration should be given to findings from the same or similar assays in the published literature and government programs and from assays that use appropriately selected reference chemicals. Caution should be exercised in interpreting consistency of results from multiple assays and chemical space because assays might vary in the extent to which they are “fit for purpose” (see Chapter 6). Regarding plausibility and coherence, there are considerations regarding consistency between what is known generally about a chemical or structurally similar chemicals and the outcome of concern and between findings from different types of assays and in different levels of biological organization. In considering the possible applicability of practices adapted from the Bradford Hill guidelines for evaluating the evidence of pathway perturbations by chemicals, the committee emphasizes that the guidelines are not intended to be applied as a checklist.

Assessing Dose–Response Relationships

Chapter 5 and the case studies described in the appendixes show how some of the various 21st century data might be used in understanding dose–response relationships for developing a quantitative characterization of risks posed by different exposures. As noted in Chapter 5, it is not necessary to know all the pathways or components involved in a particular disease for one to begin to apply the new tools in risk assessment, and a number of types of analyses that involve dose–response considerations can incorporate the new data. Table 7-2 lists some of those analyses and illustrates the type of inferences or assumptions that would typically be required in them.

Given that most diseases that are the focus of risk assessment have a multifactorial etiology, it is recognized that some disease components result from endogenous processes or are acquired through human experience, such as background health conditions, co-occurring chemical exposures, food and nutrition, and psychosocial stressors (NRC 2009). Those additional components might be independent of an environmental stressor under study but nonetheless influence and contribute to the risk and incidence of disease (NRC 2009; Morello-Frosch et al. 2011). They also can increase the uncertainty and complexity of dose–response relationships—a topic discussed at length in the NRC (2009) report Science and Decisions: Advancing Risk Assessment, and the reader is referred to that report for details on deriving dose–response relationships for apical outcomes by using mechanistic and other data. The 21st century tools provide the mechanistic data to support those derivations.

The committee emphasizes the importance of being transparent, clear, and, to the greatest extent appropriate, consistent about the explicit and implicit biological assumptions that are used in data analysis, particularly dose–response analysis. Best practices will develop over time and should be incorporated into formal guidance to ensure the consistent and transparent use of procedures and assumptions in an agency. The development and vetting of such guidance through scientific peer-review and public-comment processes will support the best use of the new data in dose–response practices. The guidelines should address statistical and study-selection issues in addition to the assumptions that are used in the biological and physical sciences for analyzing such data. For example, studies that are used to provide the basis of the dose–response description should generally provide a better quantitative characterization of human dose–response relationships than the studies that were not selected. Some issues related to statistical analyses in the context of large datasets are considered below. Various dose–response issues presented in Table 7-2 involve integration of information in and between data domains, and tools for such integration and the possible implicit biological assumptions needed for their use are discussed later in this chapter.

Characterizing Human Variability and Sensitive Populations

People differ in their responses to chemical exposures, and variability in exposure and response is a critical consideration in risk assessment. For example, protection of susceptible populations is a critical aim in many risk-mitigation strategies, such as the setting of National Ambient Air Quality Standards for criteria air pollutants under the Clean Air Act. Variability in response drives population-level dose–response relationships (NRC 2009), but characterizing variability is particularly challenging given the number of sources of variability in response related to such inherent factors as genetic makeup, life stage, and sex and such extrinsic factors as psychosocial stressors, nutrition, and exogenous chemical exposures. Genetic makeup has often been seen as having a major role in determining variability, but research indicates that it plays only a minor role in determining variability in response related to many diseases (Cui et al. 2016). Thus, in considering use and integration of 21st century science data, the weight given to data that reflect genetic variability needs to be considered in the context of the other sources of human variability.

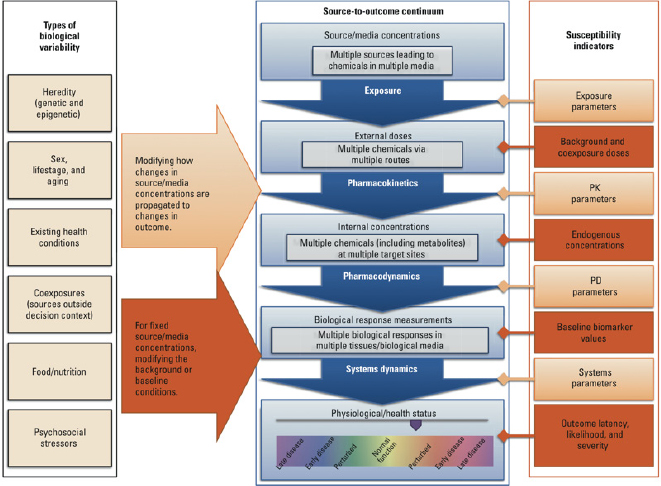

Figure 7-2 illustrates how a wide array of factors—each potentially varying in a population—can combine to affect the overall degree of interindividual variability in a population (Zeise et al. 2013). Variability is shown in the context of the source-to-outcome continuum that has been expanded and elaborated on in Chapters 2 and 3. As described in Chapter 2, environmental chemical exposure at particular concentrations leads to an internal exposure that is modified by pharmacokinetic elements. As described in Chapter 3, internal exposure results in some molecular changes that progress in later steps to outcomes. Figure 7-2 shows how variability in other exposures and in biological factors can affect different points along the source-to-outcome pathway and lead to different outcomes in individuals. Modern exposure, toxicology, and epidemiology tools—including biomarkers and measures of physiological status—can all provide indications of susceptibility status. The same indicators can be observed experimentally and used in models to help in drawing inferences about variability that are relevant to humans.

TABLE 7-2 Examples of Inferences or Assumptions Needed to Use 21st Century Data in Various Analyses

| Analysis That Involves Dose–Response Considerations | Examples of Inferences or Assumptions Needed |
| --- | --- |
| Read-across: health reference values derived from structurally or biologically similar anchor chemicals | |
| Toxicogenomic screening to determine whether environmental exposures are of negligible concern or otherwise | |
| Extrapolation of effect or benchmark doses in vitro to human exposures to establish health reference values^a | |
| Priority-setting of chemicals for testing on the basis of in vitro screens | |
| Clarification of low end of dose–response curve (for rich datasets) | |
| Construction of dose–response curve from population variability characteristics (NRC 2009) | |
| Selection of method or model for dose–response characterization | |

a For most outcomes, it is not possible simply to replace a value derived from a whole-animal assay with a value derived from an in vitro assay. The lack of understanding of all the pathways involved makes such direct replacement premature. The lack of metabolic capacity in cell systems and the limitations of biological coverage pose further challenges to the free-standing use of in vitro approaches for derivation of guidance values in most contexts (see Chapters 3 and 5).

Chapter 2 describes pharmacokinetic models of various levels of complexity that can be used to evaluate human interindividual variability in an internal dose that results from a fixed external exposure. Chapter 3 describes relatively large panels of lymphoblastoid cell lines derived from genetically diverse human populations that can be used to examine the genetic basis of interindividual variability in a single pathway. The chapter also describes how genetically diverse panels of inbred mouse strains can be used to explore variability and how various studies that use such strains have been able to identify genetic factors associated with liver injury from acetaminophen (Harrill et al. 2009) and tetrachloroethylene (Cichocki et al. in press). The combination of such experimental systems with additional stressors can be used to study other aspects of variability. Chapter 4 covers epidemiological approaches used to observe variability in human populations.
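As a rough illustration of how a simple pharmacokinetic model can translate a fixed external exposure into a distribution of internal doses, the sketch below simulates steady-state concentration in a one-compartment model with clearance that varies lognormally among individuals. It is an assumption-laden toy, not a model described in Chapter 2: the function name, the dose rate, the median clearance, and the geometric standard deviation are all hypothetical values chosen for illustration.

```python
import math
import random
import statistics


def simulate_steady_state_conc(dose_rate, cl_median, cl_gsd, n=10_000, seed=0):
    """Simulate steady-state concentrations (dose_rate / clearance).

    dose_rate : fixed external dose rate (e.g., mg/day), same for everyone
    cl_median : median clearance in the population (e.g., L/day), hypothetical
    cl_gsd    : geometric standard deviation of clearance, hypothetical
    """
    rng = random.Random(seed)           # fixed seed so the sketch is repeatable
    mu = math.log(cl_median)            # lognormal location parameter
    sigma = math.log(cl_gsd)            # lognormal scale parameter
    return [dose_rate / rng.lognormvariate(mu, sigma) for _ in range(n)]


# Hypothetical inputs: 1 mg/day exposure, median clearance 10 L/day, GSD 1.5.
concentrations = simulate_steady_state_conc(dose_rate=1.0, cl_median=10.0, cl_gsd=1.5)

median_conc = statistics.median(concentrations)
p95_conc = statistics.quantiles(concentrations, n=100)[94]  # 95th percentile

# Ratio of a high-end individual to the median individual: one simple,
# data-driven summary of pharmacokinetic variability.
print(f"median = {median_conc:.3f} mg/L, 95th percentile = {p95_conc:.3f} mg/L")
print(f"95th percentile / median = {p95_conc / median_conc:.2f}")
```

A ratio of this kind captures only the pharmacokinetic part of variability under the stated assumptions. Pharmacodynamic differences, co-exposures, and life-stage effects would need to be addressed separately.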

Data-driven variability characterizations have been recommended as a possible replacement for standard defaults used by agencies, in specific cases and in general. Data-driven variability factors can be considered in light of the guidance for departure from defaults provided in NRC (2009), the degree to which the full array of sources of variability have been adequately explored, and the reliability of the evidence integration. The modified causal guidance provided in Box 7-3 can be used to assess the emerging qualitative and quantitative evidence on human variability, and the analysis and integration approaches described later in the chapter are also relevant here.

APPROACHES FOR EVALUATING AND INTEGRATING DATA AND EVIDENCE

The volume and complexity of 21st century data pose many challenges in analyzing them and integrating them with data from other (traditional) sources. As noted earlier, the necessary first step is the analysis of the toxicity-assay results and exposure data. That stage of analysis is followed by the data-integration step in which the new data are combined with other datasets (the combination of similar or complementary data in an integrative analysis). The results of such analyses might then be integrated with prior evidence from other sources (evidence integration). The discussion below first addresses the issues associated with analyzing individual datasets and studies—that is, evaluating individual study quality and tackling the challenge of big data. Next, approaches for interpreting and integrating data from various studies, datasets, and data streams are described, and some suggestions are provided for their use with 21st century data. The committee notes that recent reports of the National Research Council and the National Academies of Sciences, Engineering, and Medicine have dealt extensively with the issues of data and evidence integration (see, for example, NRC 2014 and NASEM 2015). The committee notes that although formal methods receive emphasis below, findings could be sufficiently compelling without the use of complex analytical and integrative methods. In such cases, decisions might be made on direct examination of the findings.

Analyzing Individual Datasets and Studies

Evaluating Individual Studies

Several NRC reports have emphasized the need to use standardized or systematic procedures for evaluating individual studies and described some approaches for evaluating risk of bias and study quality (see, for example, NRC 2011, Chapter 7; NRC 2014, Chapter 5). Those reports, however, acknowledged the need to develop methods and tools for evaluating risk of bias in environmental epidemiology, animal, and mechanistic studies. Since release of those reports, approaches for assessing risk of bias in environmental epidemiology and animal studies have been advanced (Rooney et al. 2014; Woodruff and Sutton 2014; NTP 2015a). Approaches for assessing risk of bias in mechanistic studies, however, are still not well developed, and there are no established best practices specifically for high-throughput data. The committee emphasizes the need to develop best practices for systematically evaluating 21st century data and for ensuring transparency when a study or -omics dataset is excluded from analysis. There is also a need for data-visualization tools to aid in interpreting and communicating findings. The committee notes that evaluating the quality of an individual study is a step in systematic review, discussed below.

Tackling the Challenge of Big Data

The emerging technologies of 21st century science that generate large and diverse datasets provide many opportunities for improving exposure and toxicity assessment, but they pose some substantial analytical challenges, such as how to analyze data in ways that will identify valid and useful patterns and that limit the potential for misleading and expensive false-positive and false-negative findings. Although the statistical analysis and management of such data are topics of active research, development, and discussion, the committee offers in Box 7-4 some practical advice regarding several statistical issues that arise in analyzing large datasets or evaluating studies that report such analyses.

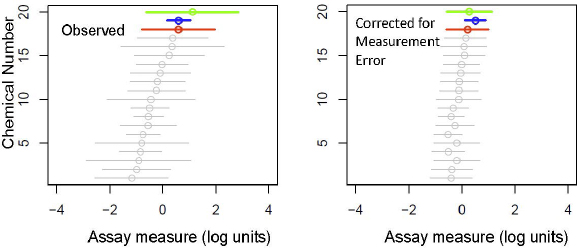

To illustrate one of the statistical issues, the winner’s curse correction, consider an in vitro assay that is used to measure chemicals in a class for a particular activity, such as binding to the estrogen receptor alpha. The application might call for identifying the least or most potent chemical or the range of activity for the class. Figure 7-3 shows how a group of chemicals can appear to differ considerably in an assay, by more than two orders of magnitude in this example. However, if the measurement error in the assay is comparable in magnitude to the apparent differences, conclusions drawn from the uncorrected results can be misleading. After correction for error by using a simple Bayesian approach with a hierarchical model for variation of true effects, chemicals in the group differ from one another in potency by less than one order of magnitude, and the chemical that originally was observed to have the highest potency in the assay moves to the second position.
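The kind of correction described in this example can be sketched with an empirical-Bayes version of a normal-normal hierarchical model. The sketch is an illustration under stated assumptions and is not the analysis behind Figure 7-3: the potency values and standard errors are invented, the function name `shrink_estimates` is hypothetical, and the between-chemical variance is estimated by a crude method of moments rather than by a full Bayesian fit.

```python
from statistics import mean, variance

def shrink_estimates(observed, std_errors):
    """Posterior means under y_i ~ N(theta_i, se_i^2), theta_i ~ N(mu, tau2)."""
    # Method-of-moments estimate of the between-chemical variance, floored at 0.
    tau2 = max(0.0, variance(observed) - mean([se ** 2 for se in std_errors]))
    # Precision-weighted estimate of the class-wide mean potency.
    weights = [1.0 / (se ** 2 + tau2) for se in std_errors]
    mu = sum(w * y for w, y in zip(weights, observed)) / sum(weights)
    # Shrink each observation toward mu; noisier results are shrunk more.
    return [mu + tau2 / (tau2 + se ** 2) * (y - mu)
            for y, se in zip(observed, std_errors)]

# Hypothetical log10 potencies for five chemicals (illustrative values only).
observed = [2.4, 2.0, 1.2, 0.8, 0.1]
std_errors = [0.9, 0.3, 0.3, 0.3, 0.9]  # assumed measurement error per chemical

shrunk = shrink_estimates(observed, std_errors)
observed_range = max(observed) - min(observed)
shrunk_range = max(shrunk) - min(shrunk)
top_before = observed.index(max(observed))
top_after = shrunk.index(max(shrunk))
print(f"range before: {observed_range:.2f}, after: {shrunk_range:.2f}")
print(f"top-ranked chemical before: {top_before}, after: {top_after}")
```

With these invented inputs, the chemical with the highest raw value also has the largest assumed error, so it is pulled furthest toward the class mean and loses the top rank, and the apparent range of potencies narrows.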

Another illustration is offered by the case study for 4-methylcyclohexanemethanol (MCHM, the chemical spilled into the Elk River in West Virginia) that is discussed in Appendix C. In addition to a number of in vitro and in vivo studies, the National Toxicology Program performed 5-day toxicogenomic studies in rats on MCHM and other chemicals spilled into the river. Initial findings of toxicogenomic signals—referred to as “molecular biological processes” that were indicative of liver toxicity—were made at around 100 mg/kg, which was a dose just below the apical observations of liver toxicity at 300 and 500 mg/kg, for example, for increased triglycerides (NTP 2015b). However, a refined analysis that sought to limit false discovery and maximize reproducibility (S. Auerbach, National Toxicology Program, personal communication, November 1, 2016) found changes in measures of dose-related toxicogenomic activity—activity of at least five genes that are associated with, for example, cholesterol homeostasis by the liver—at doses lower by nearly a factor of 10 (median benchmark dose of 13 mg/kg-day; NTP 2016) than doses previously thought to be the lowest doses to show activity. The example illustrates the challenge of developing approaches to evaluate toxicogenomic data that, while not excluding important biological signals, address the issue of false positives. With the increasing generation and analysis of toxicogenomic data in animal experiments, the additional experience should facilitate the development of best practices. Similar considerations apply to the use of toxicogenomic data from in vitro and epidemiological studies.

Aside from the statistical approaches used for data analysis, other considerations are involved in judging the quality and potential bias of studies that use 21st century data, particularly regarding applicability or generalizability of a study for addressing the question at hand. Such considerations raised in earlier chapters include the metabolic competence of in vitro assays, the nature of the cells used in in vitro assays, and the representativeness of the nominal dose in in vitro systems.

Approaches for Integrating Information from Studies, Datasets, and Data Streams

Systematic Review

As defined by the Institute of Medicine (IOM 2011, p. 1), systematic review “is a scientific investigation that focuses on a specific question and uses explicit, prespecified scientific methods to identify, select, assess, and summarize the findings of similar but separate studies.” Specifically, it is an approach that formulates an a priori question that specifies a population or participants (the exposed group under study), an exposure (the substance and exposure circumstance), a comparator (subjects who have lower exposures), and outcomes of interest; conducts a comprehensive literature search to identify all relevant articles; screens the literature according to prespecified exclusion and inclusion criteria; evaluates study quality and study bias according to prespecified methods; and summarizes the results. The summary might or might not provide a quantitative estimate (see meta-analysis discussion below), and transparency is emphasized in the overall approach. Systematic review has been used extensively in the field of comparative-effectiveness research in which one attempts to identify the best treatment option in the clinical setting. In that field, the systematic-review process is relatively mature (Silva et al. 2015); guidance is provided in the Cochrane handbook (Higgins and Green 2011). Although there are some challenges in using systematic review in risk assessment, such as formulating a sufficiently precise research question and obtaining access to primary data, its application in human health risk assessments is a rapidly developing field in which frameworks (Rooney et al. 2014; Woodruff and Sutton 2014) and examples (Kuo et al. 2013; Lam et al. 2014; Chappell et al. 2016) are available. The report Review of EPA’s Integrated Risk Information System (IRIS) Process (NRC 2014) provides an extensive discussion of systematic review as applied to the development of IRIS assessments (hazard and dose–response assessments). 
As indicated in that report, systematic review integrates the data within one data stream (human, animal, or mechanistic), and other approaches are then used to integrate the collective body of evidence. As noted above, one challenge for systematic reviews that address environmental risks to human health has been in developing methods to assess bias in mechanistic studies and their heterogeneity. Practical guidance for systematic review focused on human health risk has recently been developed (NTP 2015a).

Meta-Analysis

Meta-analysis is a broad term that encompasses statistical methods used to combine data from similar studies. Its goal is to combine effect estimates from similar studies into a single weighted estimate with a 95% confidence interval that reflects the pooled data. If there is heterogeneity among the results of different studies, another goal is to explore the reasons for the heterogeneity. Two models, the fixed-effect model and the random-effects model, are typically used to pool data from different studies; each model makes different assumptions about the nature of the studies that contributed the data and therefore uses different mechanisms for estimating the variance of the pooled effect. As noted in NRC (2014), “although meta-analytic methods have generated extensive discussion (see, for example, Berlin and Chalmers 1988; Dickersin and Berlin 1992; Berlin and Antman 1994; Greenland 1994; Stram 1996; Stroup et al. 2000; Higgins et al. 2009; Al Khalaf et al. 2011), they can be useful when there are similar studies on the same question.”
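The difference between the two pooling models can be made concrete with a minimal sketch. It assumes inverse-variance weighting for the fixed-effect model and the DerSimonian-Laird moment estimator for the between-study variance in the random-effects model; that estimator is one common choice and is not prescribed by the reports cited here. The study values and the function names `pool_fixed`, `pool_random`, and `ci95` are hypothetical.

```python
import math

def pool_fixed(effects, std_errors):
    """Inverse-variance (fixed-effect) pooled estimate and its standard error."""
    weights = [1.0 / se ** 2 for se in std_errors]
    estimate = sum(w * y for w, y in zip(weights, effects)) / sum(weights)
    return estimate, math.sqrt(1.0 / sum(weights))

def pool_random(effects, std_errors):
    """Random-effects pooled estimate using the DerSimonian-Laird tau^2."""
    weights = [1.0 / se ** 2 for se in std_errors]
    fixed, _ = pool_fixed(effects, std_errors)
    # Cochran's Q measures heterogeneity around the fixed-effect estimate.
    q = sum(w * (y - fixed) ** 2 for w, y in zip(weights, effects))
    c = sum(weights) - sum(w ** 2 for w in weights) / sum(weights)
    tau2 = max(0.0, (q - (len(effects) - 1)) / c)
    # Between-study variance is added to each study's sampling variance.
    star = [1.0 / (se ** 2 + tau2) for se in std_errors]
    estimate = sum(w * y for w, y in zip(star, effects)) / sum(star)
    return estimate, math.sqrt(1.0 / sum(star)), tau2

def ci95(estimate, std_error):
    """Normal-approximation 95% confidence interval."""
    return estimate - 1.96 * std_error, estimate + 1.96 * std_error

# Hypothetical log relative risks from four studies (illustrative values only).
log_rr = [0.10, 0.30, 0.35, 0.65]
se = [0.10, 0.12, 0.15, 0.20]

fe_est, fe_se = pool_fixed(log_rr, se)
re_est, re_se, tau2 = pool_random(log_rr, se)
for label, est, err in (("fixed", fe_est, fe_se), ("random", re_est, re_se)):
    low, high = ci95(est, err)
    print(f"{label}: RR = {math.exp(est):.2f} "
          f"(95% CI {math.exp(low):.2f} to {math.exp(high):.2f})")
```

Because the random-effects model adds the estimated between-study variance to every study's sampling variance, its pooled interval is never narrower than the fixed-effect interval, and the two coincide when the studies show no heterogeneity.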

As one might expect, meta-analyses are often applied to epidemiological studies to assess hazard (for example, does the pooled relative risk differ significantly from 1.0?) or to characterize dose–response relationships (for example, relative risk per unit concentration). They have not seen much use for evaluating animal datasets because of difficulty in assessing and identifying sources of heterogeneity of the data. Similarly, their application to 21st century data streams is expected to be uncommon given the heterogeneity of the data and the need to integrate data from different types of measures even when evaluating the same mechanisms or pathways.

Bayesian Approaches

Bayesian methods provide a natural paradigm for integrating data from various sources while accommodating uncertainty. The approach is based on Bayes’ theorem and involves representing the state of knowledge about a variable or phenomenon, such as the slope of a dose–response curve or how people differ from one another in their ability to metabolize a chemical, as a probability distribution. As further information is generated about the variable, the “prior” probability distribution is “updated” to a new “posterior” probability distribution that reflects the updated state of knowledge.

Early applications of Bayesian approaches were by DuMouchel and Harris (1983) to evaluate the carcinogenicity of diesel exhaust by combining evidence from human, animal, and mechanistic studies, and by DuMouchel and Groer (1989) to estimate the rate of bone cancer caused by deposited plutonium from data on humans and dogs. Those examples involved strong assumptions about relevance and equivalence of different data streams (for example, human versus animal). Hierarchical, population Bayesian methods have been used to integrate different lines of evidence on metabolism and its variability in risk assessments of tetrachloroethylene (Bois et al. 1996; OEHHA 2001) and trichloroethylene (EPA 2011; Chiu et al. 2014). Bayesian approaches have been used to estimate values of model parameters for physiologically based pharmacokinetic models and to characterize uncertainty and variability in exposure estimates (Bois 1999, 2000; Liao et al. 2007; Wambaugh et al. 2013; Dong et al. 2016). They have also been applied to fate and transport modeling of chemicals at contaminated sites, of natural estrogens from livestock operations, and of bacteria from nonpoint sources (Thomsen et al. 2016) and have been shown to be broadly applicable for evidence integration (NRC 2014; Linkov et al. 2015).

The starting point for a Bayesian analysis is the determination of a prior probability distribution that characterizes the uncertainty in the variable of interest (or hypothesis) before observation of new data. The prior might be elicited on the basis of general knowledge in the literature and the state of scientific knowledge in the field. The process of summarizing information into a prior probability distribution is referred to as prior elicitation. It can be difficult, particularly when little information is available, and it is inherently imperfect in many kinds of applications; there is no best way to obtain and summarize potentially disparate information from the literature and from related studies. Some examples of prior elicitation in environmental risk assessment are provided in Wolfson et al. (1996).

Several strategies have been used to manage the uncertainty in prior elicitation. One involves choosing a prior that is vague. Vague priors, however, can lead to posteriors that are erratic, including posterior densities that have many local bumps and that might oscillate between widely divergent values as data accumulate. Gelman et al. (2008) provide some concrete examples of defining probability distributions with weakly informative priors. Another strategy is to estimate parameter values in a prior on the basis of data from related studies. For example, one might be studying a new chemical for which there is not much direct information on mechanism or exact dose–response shapes for different end points; however, there might be much to learn from a collection of the same type of data for similar chemicals. Learning from past data is a version of “empirical Bayes” and can be more easily justified than the “subjective Bayes” methods articulated earlier. Potentially, a panel of experts could provide their own priors, which could be combined into a single prior (Albert et al. 2012). However, any one expert tends to be overconfident about his or her knowledge and to choose a prior with a variance that is too small. Methods for addressing the overconfidence of experts and other deficiencies in expert elicitation are important to consider (NRC 1996, Chapter 4; Morgan 2014). Regardless of the method of elicitation, it is important to assess the plausibility of a selected prior and to conduct sensitivity analyses to understand how changes in the prior affect the results.
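A sensitivity analysis of the prior can be as simple as repeating the analysis under several candidate priors and comparing the posteriors. The sketch below does this for a proportion with a conjugate Beta prior. All of the inputs are assumptions made for illustration: the three priors, the response counts, and the function names `posterior_mean` and `prior_spread` are hypothetical and are not taken from the sources cited above.

```python
def posterior_mean(prior_a, prior_b, successes, trials):
    """Posterior mean of a proportion under a Beta(prior_a, prior_b) prior."""
    return (prior_a + successes) / (prior_a + prior_b + trials)

# Three hypothetical priors for the proportion of responders (assumptions).
priors = {
    "vague Beta(1, 1)": (1.0, 1.0),
    "weakly informative Beta(2, 2)": (2.0, 2.0),
    "informative Beta(3, 27)": (3.0, 27.0),  # e.g., built from related chemicals
}

def prior_spread(successes, trials):
    """Largest difference in posterior mean across the candidate priors."""
    means = [posterior_mean(a, b, successes, trials) for a, b in priors.values()]
    return max(means) - min(means)

# Same observed response rate (40%) in a small and a large hypothetical study.
small_study = prior_spread(successes=4, trials=10)
large_study = prior_spread(successes=40, trials=100)
print(f"spread with n=10: {small_study:.3f}; with n=100: {large_study:.3f}")
```

In this toy setting the choice of prior matters a great deal when only ten observations are available and much less when there are one hundred, which is the kind of dependence a sensitivity analysis is meant to expose.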

Once the prior distribution has been defined, the prior can be updated with information in the likelihood function for each data source. Each time a data source is added, the prior is updated to obtain a posterior distribution that summarizes the new state of knowledge. The posterior distribution can then be used as a prior distribution in future analyses. Bayesian updating can thus be viewed as a natural method for synthesizing data from different sources.
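Sequential updating can be illustrated with the simplest conjugate case, a normal prior combined with normally distributed estimates of known variance. This is a minimal sketch under assumptions: the two data sources are treated as independent, the numerical values are invented, and the function name `update_normal` is hypothetical.

```python
def update_normal(prior_mean, prior_var, estimate, estimate_var):
    """Conjugate update of a normal prior with a normal likelihood of known variance."""
    precision = 1.0 / prior_var + 1.0 / estimate_var
    mean = (prior_mean / prior_var + estimate / estimate_var) / precision
    return mean, 1.0 / precision

# Hypothetical estimates of one parameter (e.g., a dose-response slope)
# from two data sources, each given as (estimate, variance).
source_a = (1.8, 0.50)
source_b = (1.2, 0.20)
prior = (0.0, 4.0)  # assumed diffuse prior: mean 0, variance 4

# The posterior after source A serves as the prior for source B.
after_a = update_normal(*prior, *source_a)
after_a_then_b = update_normal(*after_a, *source_b)

# Reversing the order gives the same final state of knowledge.
after_b = update_normal(*prior, *source_b)
after_b_then_a = update_normal(*after_b, *source_a)

print(f"A then B: mean={after_a_then_b[0]:.3f}, var={after_a_then_b[1]:.3f}")
print(f"B then A: mean={after_b_then_a[0]:.3f}, var={after_b_then_a[1]:.3f}")
```

Under these assumptions the final posterior does not depend on the order in which the sources are added, and its variance shrinks with each update. Real data streams are rarely this tidy, because their relevance and independence have to be argued rather than assumed.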

Sensitivity analysis provides a valuable approach to identify which data uncertainties are the most important in the Bayesian analyses. As noted by NRC (2007), sensitivity analysis can help to set priorities for collecting new data and contribute to a process for systematically managing uncertainties that can improve reliability.

The development of general-purpose, robust, and interpretable Bayesian methods for 21st century data is an active field of research, although hybrid approaches that reduce dimensionality before applying the Bayesian paradigm for synthesis of evidence from different data sources are favored at this point for risk-assessment purposes. The committee provides an example of using Bayesian approaches in a high-dimensional setting in Appendix E.

Guided Expert Judgment

Guided expert judgment is a process that uses the experience and collective judgment of an expert panel to evaluate what is known on a topic, such as whether the overall evidence supports a hazard finding on a chemical (for example, whether a chemical is a carcinogen). Predetermined protocols for judging evidence generally guide the expert panel. The panel might be asked to judge whether the evidence falls into one of several broad categories, such as strong, moderate, or weak. Such approaches are used by the US Environmental Protection Agency (EPA) in its process for evaluating the evidence gathered for the Integrated Science Assessments for the evaluation of National Ambient Air Quality Standards for selected pollutants. Expert-judgment approaches are often criticized because they can lack transparency and reproducibility in that the processes used to synthesize evidence and the resulting judgments made by the experts might be obscured and because different groups of experts can come to different conclusions after reviewing the same data. Furthermore, because modern risk assessments increasingly involve complex, diverse, and large datasets, the use of a guided-expert-judgment approach can be challenging.

The IARC monograph program (IARC 2006; Pearce et al. 2015) uses guided expert judgment for its causal assessment of carcinogenicity that integrates observational human studies, experimental animal data, and other biological data, such as in vitro assays that contribute mechanistic insights. For several agents on which there are few or no human data to assess carcinogenicity, complementary experimental animal data and mechanistic data have been used to support an overall conclusion that a chemical is carcinogenic in humans. One example is the carcinogenicity assessment of ethylene oxide (EO), for which studies in humans are limited by the use of small cohorts of exposed workers. The high mutagenicity and genotoxicity of EO, clear evidence of such activity in humans, and the similarity of the damage induced in animals and humans led IARC working groups (IARC 1994, 2008, 2012) to classify it as a human carcinogen (Group 1) in spite of the limited epidemiological evidence. The most recent review (IARC 2012) noted that “There is strong evidence that the carcinogenicity of ethylene oxide, a direct-acting alkylating agent, operates by a genotoxic mechanism…. Ethylene oxide consistently acts as a mutagen and clastogen at all phylogenetic levels, it induces heritable translocations in the germ cells of exposed rodents, and a dose-related increase in the frequency of sister chromatid exchange, chromosomal aberrations and micronucleus formation in the lymphocytes of exposed workers.” Box 7-5 provides details on the current IARC process.

Some have advocated quantitative approaches to weighting evidence from different sources even if any such weighting approaches can be criticized. A fundamental challenge in evaluating such approaches is that there is often no gold-standard weighting scheme; that is, there are no consensus approaches that are recognized as state-of-the-practice for optimally weighting results obtained from observational epidemiology, laboratory animal studies, in vitro assays, and computational systems for human health risk assessment. Within each of those lines of evidence are studies that vary widely in quality and relevance, and a priori weights established by experts on the basis of general characteristics (for example, animal versus human) fail to account for the scientific nuances. Experts differ as to the best weighting strategy, and formal decision-theory methods do not avoid the need for subjective choices and judgments. Thus, the committee declines to advance quantitative weighting schemes as an approach to integrating evidence from different sources.

Weighting in some cases, however, might be useful in a given data stream or evidence class, such as data from high-throughput assays or in vivo assays with common end points. Weighting typically would follow principles based on statistics and expert judgment. For example, in the absence of additional information, assays that are intended to interrogate the same pathway, mechanism, or end point and are on similar scales can be weighted by using the inverse of sampling variation; this is essentially the approach used in meta-analysis. Assays that evaluate the same end point can be weighted on the basis of prediction accuracy.

Given the current practices, the committee recommends that guided expert judgment be the approach used in the near term to integrate diverse data streams for drawing causal conclusions. Guided expert judgment is not as easily applied to other elements of the risk-assessment process because of the variety of data types and the complexity of decision points in the analyses. Considerable expert review and consultation are recommended for development of guidance for those activities to be followed by expert scientific peer review of the final product.

UNCERTAINTIES

Uncertainty accompanies all methods used to generate data inputs for risk assessments. In the case of data from new testing methods, there is the inherent variability of the assays and the qualitative uncertainty associated with their use (see Chapter 3). Such uncertainty arises with other types of assays, such as rodent bioassays, for which standard uncertainty factors are in place and accepted. The Tox21 report acknowledged the need to evaluate “test-strategy uncertainty,” that is, the uncertainty associated with the introduction of a novel series of testing methods. For new assay methods, the quantification of uncertainty and its handling in practice remain to be addressed.

With regard to dealing with analytical uncertainties, the committee notes that the 1983 NRC report Risk Assessment in the Federal Government: Managing the Process remains enlightening. As discussed in Chapter 5, that report laid out the iconic four steps in risk assessment: hazard identification, dose–response assessment, exposure assessment, and risk characterization. The report noted that in each step a number of decision points occur in which “risk to human health can only be inferred from the available evidence.” For each decision point, the 1983 committee recommended the adoption of predetermined choices or inference options ultimately to draw inferences about human risk from data that are not fully adequate. The preferred inference options were also called default options and were to be based on scientific understanding and risk-assessment policy and to be used in the absence of compelling evidence to the contrary. Other NRC committees have reiterated the importance of what have come to be known simply as defaults and have noted that those used by EPA typically have a relatively strong scientific basis (NRC 1994, 2009). The 1983 committee also called for the establishment of uniform inference guidelines to ensure uniformity and transparency in agency decision-making and called for flexibility in providing for departure from defaults in the presence of convincing scientific evidence. EPA developed a system of guidelines that cover a wide array of risk-assessment topics. The 1983 recommendations have also been reinforced in other NRC reports (NRC 1994, 2009), and the present committee reiterates the importance of establishing uniform guidelines and a system of defaults in the absence of clear scientific understanding and the importance of enhancing the default system as described in Science and Decisions: Advancing Risk Assessment (NRC 2009). 
The enhancements include making explicit or replacing missing and unarticulated assumptions in risk assessment and developing specific criteria and standards for departing from defaults. The current committee notes, however, that the volume and complexity of 21st century data and the underlying science pose particularly difficult challenges. Systems of defaults and approaches to guide assessment should be advanced once best practices develop, as elaborated in the dose–response section above.

The Tox21 report used test-strategy uncertainty to refer to the overall uncertainty associated with the testing strategy and commented that “formal methods could be developed that use systematic approaches to evaluate uncertainty in predicting from the test battery results the doses that should be without biologic effect in human populations.” Until such methods are developed, judgments as to the strength of evidence on pathway activation will continue to be based on expert judgment that draws on such guidelines as discussed above.

CHALLENGES AND RECOMMENDATIONS

The new direction for risk assessment advanced in this report is based on data from 21st century science on biological pathways and approaches that acknowledge that stressors from multiple sources can contribute to a single disease and that a single stressor can lead to multiple adverse outcomes. The new techniques of 21st century science have emerged quickly and have made it possible to generate large amounts of data that can support the new directions in exposure science, toxicology, and epidemiology. In fact, the technology has evolved far faster than have approaches for analyzing and interpreting data for the purposes of risk assessment and decision-making. This chapter has addressed the challenges related to data interpretation, analysis, and integration; evidence synthesis; and causal inference. The challenges are not new but are now amplified by the scope of the new data streams. The committee lists some of the most critical challenges below with recommendations to address them.

A Research Agenda for Data Interpretation and Integration

Challenge: Insufficient attention has been given to data interpretation and integration as the development of new methods for data generation has outpaced the development of approaches for interpreting the data that they generate. The complexity was recognized in the Tox21 and ES21 reports, but those reports did not attempt to develop an approach for evidence integration and interpretation to make determinations concerning hazards, exposures, and risks.

Recommendation: The committee recommends greater attention to the problem of drawing inferences and proposes the following empirical research agenda:

- The development of case studies that reflect various scenarios of decision-making and data availability. The case studies should reflect the types of data typically available for interpretation and integration in each element of risk assessment—hazard identification, dose–response assessment, exposure assessment, and risk characterization—and include assessing interindividual variability and sensitive populations.

- Testing of the case studies with interdisciplinary and multidisciplinary panels, using best practices and the guided-expert-judgment approaches, such as described above. There is a need to understand how such panels of experts will evaluate the case studies and how various data elements might drive the evaluation process. Furthermore, communication between people from different disciplines, such as Bayesian statisticians and mechanistic toxicologists, will be essential for successful and reliable use of new data; case studies will provide a means of testing how interactions might best be accomplished in practice.

- A comprehensive cataloging of evidence evaluations and decisions that have been taken on various agents so that expert judgments can be tracked and evaluated and the expert processes calibrated. The cataloging should capture the major gaps in evidence and attendant uncertainty that might have figured into evidence evaluation.

- More intensive and systematic consideration of how statistically based tools for combining data and integrating evidence, such as Bayesian approaches, can be used for incorporating 21st century science into hazard, dose–response, exposure, and interindividual-variability assessments and ultimately into the overall risk characterization.

Advancing the Use of Data on Disease Components and Mechanisms in Risk Assessment

Challenge: Data generated from tools that probe components of disease are difficult to use in risk assessment partly because of incomplete understanding of the linkages between disease and components and because of uncertainty around the extent to which mitigation of exposure changes expression of a component and consequently changes the associated risk.

Recommendation: The sufficient-component-cause model should be advanced as an approach for conceptualizing the pathways that contribute to disease risk.

Recommendation: The committee encourages the cataloging of pathways, components, and mechanisms that can be linked to particular hazard traits, similar to the IARC characteristics of carcinogens. This work should draw on existing knowledge and current research in the biomedical fields related to mechanisms of disease that are outside the traditional toxicant-focused literature that has been the basis of toxicology and of human-health risk evaluations and assessments. The work should be accompanied by research efforts to describe the series of assays and responses that provide evidence on pathway activation and to establish a system for interpreting assay results for the purpose of inferring pathway activation from chemical exposure.

Recommendation: High priority should be given to the development of a system of practice related to inferences for using read-across for data-sparse chemicals; that practice area provides great opportunities for advancing various tools and incorporating their use into risk assessment. High priority should also be given to using multiple data streams to evaluate low-dose risk, as elaborated on in NRC (2009).

Developing Best Practices for Data Integration and Interpretation

Challenge: The emergence of new data streams clearly has complicated the long-standing problem of integrating data for hazard identification, which the committee views as analogous to inferring a causal relationship between a putative causal factor and an effect. The committee considers that two challenges are related to data integration and interpretation for hazard identification: (1) using the data from the methods of 21st century science to infer a causal association between a chemical or other exposure and an adverse effect, particularly if it is proximal to an apical effect, and (2) integrating new lines of evidence with those from conventional toxicology and epidemiological studies. Although much has been written on this topic, proposed approaches rely largely on guided expert judgment.

Recommendation: The committee sees no immediate alternative to the use of guided expert judgment as the basis of judgment and recommends its continued use for the time being. Expert judgment should be guided and calibrated in interpreting data on pathways and mechanisms. Specifically, in these early days, the processes of expert judgment should be documented to support the elaboration of best practices, and there should be periodic reviews of how evidence is being evaluated so that the expert-judgment processes can be refined. Those practices will support the development of guidelines with explicit default approaches to ensure consistency in application within particular decision contexts.

Recommendation: In the future, pathway-modeling approaches that incorporate uncertainties and integrate multiple data streams might become an adjunct to, and perhaps a replacement for, guided expert judgment. Methodological research to advance those approaches is needed.

Challenge: The size of some datasets and the number of outcomes covered complicate communication of findings to the scientific community and to those who use results for decision-making. There might be distrust because of the need to use methods that are complex and possibly difficult to understand for the large datasets.

Recommendation: Data integration should be complemented by visualization tools to enable effective communication of analytical findings from complex datasets to decision makers and other stakeholders. Transparency of the methods, statistical rigor, and accessibility to the underlying data are key elements for promoting the use and acceptance of the new data in decision-making.

Challenge: Given the complexities of 21st century data and the challenges associated with their interpretation, there is a potential for a decision to be based ultimately on a false-positive or false-negative result. The implications of such an erroneous conclusion are substantial. The challenge is to calibrate analytical approaches to optimize their sensitivity and specificity for identifying true associations. If public-health protection is the underlying goal, an approach that generates more false-positive than false-negative conclusions might be appropriate in some decision contexts. A rigid, algorithmic approach might prove conservative but lead to false negatives or at least to a delay in decision-making because more evidence is required.

Recommendation: This challenge merits the development of guidelines and best practices that use processes that involve direct discussion among researchers, decision-makers, and other stakeholders who might have different views as to where the balance between sensitivity and specificity should be placed.
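The tradeoff between sensitivity and specificity can be made concrete with a small numerical illustration. The following is a minimal sketch, not a method endorsed by the committee: the assay scores, the "truth" labels, and the two thresholds are hypothetical values chosen only to show how moving a decision threshold shifts errors from false negatives to false positives.

```python
from typing import List, Tuple


def sensitivity_specificity(
    scores: List[float], truth: List[bool], threshold: float
) -> Tuple[float, float]:
    """Call a chemical 'active' when its score is >= threshold, then
    compare the calls with the (assumed known) true status."""
    tp = sum(1 for s, t in zip(scores, truth) if t and s >= threshold)
    fn = sum(1 for s, t in zip(scores, truth) if t and s < threshold)
    tn = sum(1 for s, t in zip(scores, truth) if not t and s < threshold)
    fp = sum(1 for s, t in zip(scores, truth) if not t and s >= threshold)
    sensitivity = tp / (tp + fn)  # fraction of true actives detected
    specificity = tn / (tn + fp)  # fraction of true inactives cleared
    return sensitivity, specificity


# Hypothetical assay scores for eight chemicals (illustrative only).
scores = [0.9, 0.8, 0.6, 0.4, 0.7, 0.3, 0.2, 0.1]
truth = [True, True, True, True, False, False, False, False]

for threshold in (0.35, 0.75):
    sens, spec = sensitivity_specificity(scores, truth, threshold)
    print(f"threshold={threshold}: sensitivity={sens}, specificity={spec}")
```

In this toy example the lower threshold detects every true active at the cost of one false positive, whereas the higher threshold removes the false positive but misses half of the true actives. Where the threshold should sit is the kind of question that the recommended discussion among researchers, decision-makers, and other stakeholders would need to settle.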

Addressing Uncertainties in Using 21st Century Tools in Dose–Response Assessment

Challenge: There are multiple potential complications in moving from in vitro testing and in vivo toxicogenomic studies to applying the resulting dose–response estimates to human populations. Uncertainties are introduced that parallel and might exceed those associated with extrapolation from animal studies to humans. Sources of uncertainty include chemical metabolism, the relevance of pathways, and the generalizability of dose–response relationships that are observed in vitro. There is also the challenge of integration among datasets and multiple lines of evidence.

Recommendation: The challenges noted should be explored in case studies for which the full array of data is available: high-throughput testing, animal studies, and human studies. Bayesian methods need to be developed and evaluated for combining dose–response data from multiple test systems. In addition, a system of practice and default data-integration approaches need to be developed that promote consistent, transparent, and reliable application and that explain and account for uncertainties.
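As one illustration of what combining dose–response estimates from multiple test systems might look like in its simplest form, the sketch below pools potency estimates under a normal model with a flat prior on the common mean and a fixed between-system standard deviation. Everything in it is an assumption made for illustration: the function name `pooled_posterior`, the example estimates and standard errors, and the value of `tau` are hypothetical, and a realistic analysis would also need to treat `tau` as uncertain and to address metabolism and pathway relevance.

```python
import math
from typing import List, Tuple


def pooled_posterior(
    estimates: List[float], std_errors: List[float], tau: float
) -> Tuple[float, float]:
    """Posterior mean and standard deviation of a common potency value.

    Assumes each test system reports estimate_i ~ Normal(mu, se_i^2 + tau^2),
    a flat prior on mu, and a fixed between-system SD tau (a simplification).
    """
    weights = [1.0 / (se ** 2 + tau ** 2) for se in std_errors]
    total = sum(weights)
    mean = sum(w * y for w, y in zip(weights, estimates)) / total
    sd = math.sqrt(1.0 / total)
    return mean, sd


# Hypothetical log10 potency estimates (illustrative values only):
# in vitro assay, animal study, human study.
estimates = [1.0, 1.4, 1.2]
std_errors = [0.2, 0.3, 0.5]

for tau in (0.0, 0.3):
    mean, sd = pooled_posterior(estimates, std_errors, tau)
    print(f"tau={tau}: posterior mean={mean:.3f}, posterior sd={sd:.3f}")
```

Setting `tau` to zero treats the test systems as measuring exactly the same quantity. A larger `tau` acknowledges that in vitro, animal, and human systems might differ systematically, which widens the pooled uncertainty and reduces the dominance of the most precise estimate.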

Developing Best Practices for Analyzing Big Data for Application in Risk Assessment

Challenge: Enormous datasets that pose substantial analytical challenges are being generated, particularly in relation to identifying biologically relevant signals given the possibility of false positives resulting from multiple comparisons.

Recommendation: Best practices should be developed through consensus processes to address the statistical issues listed in Box 7-4 that complicate analyses of very large datasets. Those practices might differ by decision context or data type. Adherence to best practices sets a consistent approach for weighing false positives against false negatives and maintaining high integrity in reporting. Analyses should be carried out in transparent and replicable ways to ensure credibility and to enhance review and acceptance of findings for decision-making. Open data access might be critical for ensuring transparency.
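To illustrate the multiple-comparisons issue, the following minimal sketch applies the Benjamini-Hochberg step-up procedure, one widely used way to control the false discovery rate. It is offered as an example of the kind of practice that might be considered, not as the committee's prescribed approach; the p-values and the 0.05 target rate are hypothetical.

```python
from typing import List


def benjamini_hochberg(p_values: List[float], fdr: float = 0.05) -> List[bool]:
    """Return, for each p-value, whether it is declared a discovery
    under the Benjamini-Hochberg step-up procedure at the given FDR."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    # Find the largest rank k such that p_(k) <= (k / m) * fdr.
    max_rank = 0
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= rank / m * fdr:
            max_rank = rank
    # Every hypothesis ranked at or below max_rank is rejected.
    rejected = [False] * m
    for rank, idx in enumerate(order, start=1):
        if rank <= max_rank:
            rejected[idx] = True
    return rejected


# Hypothetical p-values from ten assay endpoints (illustrative only).
p_values = [0.001, 0.008, 0.039, 0.041, 0.042, 0.06, 0.074, 0.205, 0.212, 0.216]

naive_hits = sum(p < 0.05 for p in p_values)
bh_hits = sum(benjamini_hochberg(p_values, fdr=0.05))
print(f"unadjusted p < 0.05: {naive_hits} hits; Benjamini-Hochberg: {bh_hits} hits")
```

In this example, five endpoints pass an unadjusted 0.05 cutoff, but only two survive the adjustment. The choice of target rate is itself a judgment about how to weigh false positives against false negatives and might differ by decision context.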

REFERENCES

Al Khalaf, M.M., L. Thalib, and S.A. Doi. 2011. Combining heterogeneous studies using the random-effects model is a mistake and leads to inconclusive meta-analyses. J. Clin. Epidemiol. 64(2):119-123.

Albert, I., S. Donnet, C. Guihenneuc-Jouyaux, S. Low-Choy, K. Mengersen, and J. Rousseau. 2012. Combining expert opinions in prior elicitation. Bayesian Anal. 7(3):503-512.

Berlin, J.A., and E.M. Antman. 1994. Advantages and limitations of metaanalytic regressions of clinical trials data. Online J. Curr. Clin. Trials, Document No. 134.

Berlin, J., and T.C. Chalmers. 1988. Commentary on meta-analysis in clinical trials. Hepatology 8(3):690-691.

Bois, F.Y. 1999. Analysis of PBPK models for risk characterization. Ann. NY Acad. Sci. 895:317-337.

Bois, F.Y. 2000. Statistical analysis of Clewell et al. PBPK model of trichloroethylene kinetics. Environ. Health Perspect. 108(Suppl. 2):307-316.

Bois, F.Y., A. German, J. Jiang, D.R. Maszle, L. Zeise, and G. Alexeeff. 1996. Population toxicokinetics of tetrachloroethylene. Arch. Toxicol. 70(6):347-355.

Chappell, G., I.P. Pogribny, K.Z. Guyton, and I. Rusyn. 2016. Epigenetic alterations induced by genotoxic occupational and environmental human chemical carcinogens: A systematic literature review. Mutat. Res. Rev. Mutat. Res. 768:27-45.

Chiu, W.A., J.L. Campbell, Jr., H.J. Clewell, III, Y.H. Zhou, F.A. Wright, K.Z. Guyton, and I. Rusyn. 2014. Physiologically based pharmacokinetic (PBPK) modeling of inter-strain variability in trichloroethylene metabolism in the mouse. Environ. Health Perspect. 122(5):456-463.

Cichocki, J.A., S. Furuya, A. Venkatratnam, T.J. McDonald, A.H. Knap, T. Wade, S. Sweet, W.A. Chiu, D.W. Threadgill, and I. Rusyn. In press. Characterization of variability in toxicokinetics and toxicodynamics of tetrachloroethylene using the Collaborative Cross mouse population. Environ. Health Perspect.

Cui, Y., D.M. Balshaw, R.K. Kwok, C.L. Thompson, G.W. Collman, and L.S. Birnbaum. 2016. The exposome: Embracing the complexity for discovery in environmental health. Environ. Health Perspect. 124(8):A137-A140.

Dickersin, K., and J.A. Berlin. 1992. Meta-analysis: State-of-the-science. Epidemiol. Rev. 14(1):154-176.

DHEW (US Department of Health, Education, and Welfare). 1964. Smoking and Health: Report of the Advisory Committee to the Surgeon General of the Public Health Service. Public Health Service Publication No. 1103. Washington, DC: US Government Printing Office.

Dong, Z., C. Liu, Y. Liu, K. Yan, K.T. Semple, and R. Naidu. 2016. Using publicly available data, a physiologically-based pharmacokinetic model and Bayesian simulation to improve arsenic non-cancer dose-response. Environ. Int. 92-93:239-246.

DuMouchel, W., and P.G. Groër. 1989. Bayesian methodology for scaling radiation studies from animals to man. Health Phys. 57(Suppl. 1):411-418.

DuMouchel, W.H., and J.E. Harris. 1983. Bayes methods for combining the results of cancer studies in humans and other species. J. Am. Stat. Assoc. 78(382):293-308.

Efron, B. 2011. Tweedie’s formula and selection bias. J. Am. Stat. Assoc. 106(496):1602-1614.

EPA (US Environmental Protection Agency). 2005. Guidelines for Carcinogen Risk Assessment. EPA/630/P-03/001F. Risk Assessment Forum, US Environmental Protection Agency, Washington DC. March 2005 [online]. Available: https://www.epa.gov/sites/production/files/2013-09/documents/cancer_guidelines_final_3-25-05.pdf [accessed August 1, 2016].

EPA (US Environmental Protection Agency). 2011. Toxicological Review of Trichloroethylene. EPA/635/R-09/011F. US Environmental Protection Agency, Washington, DC [online]. Available: https://cfpub.epa.gov/ncea/iris/iris_documents/documents/toxreviews/0199tr/0199tr.pdf [accessed November 1, 2016].

EPA (US Environmental Protection Agency). 2014. Integrated Bioactivity and Exposure Ranking: A Computational Approach for the Prioritization and Screening of Chemicals in the Endocrine Disruptor Screening Program. EPA-HQ-OPP-2014-0614-0003. US Environmental Protection Agency Endocrine Disruptor Screening Program (EDSP). FIFRA SAP December 2-5, 2014 [online]. Available: https://www.regulations.gov/document?D=EPA-HQ-OPP-2014-0614-0003 [accessed November 1, 2016].

EPA (US Environmental Protection Agency). 2015. Preamble to Integrated Science Assessments. EPA/600/R-15/067. National Center for Environmental Assessment, Office of Research and Development, US Environmental Protection Agency, Research Triangle Park, NC. November 2015 [online]. Available: https://cfpub.epa.gov/ncea/isa/recordisplay.cfm?deid=310244 [accessed August 1, 2016].

Gatti, D.M., W.T. Barry, A.B. Nobel, I. Rusyn, and F.A. Wright. 2010. Heading down the wrong pathway: On the influence of correlation within gene sets. BMC Genomics 11:574.

Gelman, A., A. Jakulin, M.G. Pittau, and Y.S. Su. 2008. A weakly informative default prior distribution for logistic and other regression models. Ann. Appl. Stat. 2(4):1360-1383.

Gelman, A., J. Hill, and M. Yajima. 2012. Why we (usually) don’t have to worry about multiple comparisons. J. Res. Edu. Effect. 5(2):189-211.

Greenland, S. 1994. A critical look at some popular meta-analytic methods. Am. J. Epidemiol. 140(3):290-296.

Harrill, A.H., P.B. Watkins, S. Su, P.K. Ross, D.E. Harbourt, I.M. Stylianou, G.A. Boorman, M.W. Russo, R.S. Sackler, S.C. Harris, P.C. Smith, R. Tennant, M. Bogue, K. Paigen, C. Harris, T. Contractor, T. Wiltshire, I. Rusyn, and D.W. Threadgill. 2009a. Mouse population-guided resequencing reveals that variants in CD44 contribute to acetaminophen-induced liver injury in humans. Genome Res. (9):1507-1515.

Higgins, J.P., S.G. Thompson, and D.J. Spiegelhalter. 2009. A re-evaluation of random-effects meta-analysis. J. R. Stat. Soc. Ser. A 172(1):137-159.

Higgins, J.P.T., and S. Green, eds. 2011. Cochrane Handbook for Systematic Reviews of Interventions Version 5.1.0. The Cochrane Collaboration [online]. Available: http://handbook.cochrane.org/ [accessed August 1, 2016].

Hill, A.B. 1965. The environment and disease: Association or causation? Proc. R. Soc. Med. 58:295-300.

Hosack, D.A., G. Dennis, Jr., B.T. Sherman, H.C. Lane, and R.A. Lempicki. 2003. Identifying biological themes within lists of genes with EASE. Genome Biol. 4(10):R70.

IARC (International Agency for Research on Cancer). 1994. Ethylene oxide. Pp. 73-159 in Some Industrial Chemicals. IARC Monograph on the Evaluation of Carcinogenic Risk to Humans vol. 60 [online]. Available: http://monographs.iarc.fr/ENG/Monographs/vol60/mono60-7.pdf [accessed November 2, 2016].

IARC (International Agency for Research on Cancer). 2006. Preamble. IARC Monographs on the Evaluation of Carcinogenic Risks to Humans. Lyon: IARC [online]. Available: http://monographs.iarc.fr/ENG/Preamble/CurrentPreamble.pdf [accessed August 2, 2016].

IARC (International Agency for Research on Cancer). 2008. Ethylene oxide. Pp. 185-309 in 1,3-Butadiene, Ethylene Oxide and Vinyl Halides (Vinyl Fluoride, Vinyl Chloride and Vinyl Bromide). IARC Monograph on the Evaluation of Carcinogenic Risk to Humans vol. 97. Lyon, France: IARC [online]. Available: http://monographs.iarc.fr/ENG/Monographs/vol97/mono97-7.pdf [accessed November 8, 2016].

IARC (International Agency for Research on Cancer). 2012. Ethylene oxide. Pp. 379-400 in Chemical Agents and Related Occupations. IARC Monograph on the Evaluation of Carcinogenic Risk to Humans vol. 100F. Lyon, France: IARC [online]. Available: http://monographs.iarc.fr/ENG/Monographs/vol100F/mono100F-28.pdf [accessed November 8, 2016].

IARC (International Agency for Research on Cancer). 2015. Some Organophosphate Insecticides and Herbicides: Diazinon, Glyphosate, Malathion, Parathion, and Tetrachlorvinphos. IARC Monographs on the Evaluation of Carcinogenic Risks to Humans Vol. 112 [online]. Available: http://monographs.iarc.fr/ENG/Monographs/vol112/ [accessed November 1, 2016].

IARC (International Agency for Research on Cancer). 2016a. 2,4-Dichlorophenoxyacetic acid (2,4-D) and Some Organochlorine Insecticides. IARC Monographs on the Evaluation of Carcinogenic Risks to Humans Vol. 113 [online]. Available: http://monographs.iarc.fr/ENG/Monographs/vol113/index.php [accessed November 1, 2016].

IARC (International Agency for Research on Cancer). 2016b. Instructions to Authors for the Preparation of Drafts for IARC Monographs [online]. Available: https://monographs.iarc.fr/ENG/Preamble/previous/Instructions_to_Authors.pdf [accessed November 1, 2016].

IOM (Institute of Medicine). 2008. Improving the Presumptive Disability Decision-Making Process for Veterans. Washington, DC: The National Academies Press.

IOM (Institute of Medicine). 2011. Finding What Works in Health Care: Standards for Systematic Reviews. Washington, DC: The National Academies Press.

Judson, R.S., K.A. Houck, R.J. Kavlock, T.B. Knudsen, M.T. Martin, H.M. Mortensen, D.M. Reif, D.M. Rotroff, I. Shah, A.M. Richard, and D.J. Dix. 2010. In vitro screening of environmental chemicals for targeted testing prioritization: The ToxCast project. Environ. Health Perspect. 118(4):485-492.

Kleinstreuer, N.C., J. Yang, E.L. Berg, T.B. Knudsen, A.M. Richard, M.T. Martin, D.M. Reif, R.S. Judson, M. Polokoff, D.J. Dix, R.J. Kavlock, and K.A. Houck. 2014. Phenotypic screening of the ToxCast chemical library to classify toxic and therapeutic mechanisms. Nat. Biotechnol. 32:583-591.

Knapen, D., L. Vergauwen, D.L. Villeneuve, and G.T. Ankley. 2015. The potential of AOP networks for reproductive and developmental toxicity assay development. Reprod. Toxicol. 56:52-55.

Kuo, C.C., K. Moon, K.A. Thayer, and A. Navas-Acien. 2013. Environmental chemicals and type 2 diabetes: An updated systematic review of the epidemiologic evidence. Curr. Diab. Rep. 13(6):831-849.

Lam, J., E. Koustas, P. Sutton, P.I. Johnson, D.S. Atchley, S. Sen, K.A. Robinson, D.A. Axelrad, and T.J. Woodruff. 2014. The Navigation Guide—evidence-based medicine meets environmental health: Integration of animal and human evidence for PFOA effects on fetal growth. Environ. Health Perspect. 122(10):1040-1051.

Langfelder, P., and S. Horvath. 2008. WGCNA: An R package for weighted correlation network analysis. BMC Bioinformatics 9:559.

Leek, J.T., and J.D. Storey. 2007. Capturing heterogeneity in gene expression studies by surrogate variable analysis. PLoS Genet. 3(9):1724-1735.

Leek, J.T., R.B. Scharpf, H.C. Bravo, D. Simcha, B. Langmead, W.E. Johnson, D. Geman, K. Baggerly, and R.A. Irizarry. 2010. Tackling the widespread and critical impact of batch effects in high-throughput data. Nat. Rev. Genet. 11(10):733-739.

Liao, K.H., Y.M. Tan, R.B. Connolly, S.J. Borghoff, M.L. Gargas, M.E. Andersen, and J.H. Clewell, III. 2007. Bayesian estimation of pharmacokinetic and pharmacodynamic parameters in a mode-of-action-based cancer risk assessment for chloroform. Risk Anal. 27(6):1535-1551.

Linkov, I., O. Massey, J. Keisler, I. Rusyn, and T. Hartung. 2015. From “weight of evidence” to quantitative data integration using multicriteria decision analysis and Bayesian methods. ALTEX 32(1):3-8.

Mackie, J.L. 1980. The Cement of the Universe: A Study of Causation. New York: Oxford University Press.

Martin, M.T., D.J. Dix, R.S. Judson, R.J. Kavlock, D.M. Reif, A.M. Richard, D.M. Rotroff, S. Romanov, A. Medvedev, N. Poltoratskaya, M. Gambarian, M. Moeser, S.S. Makarov, and K.A. Houck. 2010. Impact of environmental chemicals on key transcription regulators and correlation to toxicity end points within EPA’s ToxCast program. Chem. Res. Toxicol. 23(3):578-590.

Martin, M.T., T.B. Knudsen, D.M. Reif, K.A. Houck, R.S. Judson, R.J. Kavlock, and D.J. Dix. 2011. Predictive model of rat reproductive toxicity from ToxCast high throughput screening. Biol. Reprod. 85(2):327-339.