4

Understanding Fire: State of the Science and Research Priorities

The presentations of the first panel addressed the current state of fire science and the research priorities for the field. The four presentations were followed by a discussion session moderated by planning committee member Monica Turner.

FIRE REGIMES AND THE ECOLOGICAL ROLE OF FIRE IN U.S. LANDSCAPES

Meg A. Krawchuk, Oregon State University

Krawchuk said fire ecology covers a great number of ideas about natural and human fire history and fire effects on the environment, species, ecosystems, and landscapes. She presented five topics that represent the state of the science for thinking about fire ecology: (1) domain, (2) variability, (3) temporal and spatial heterogeneity, (4) mosaics, and (5) ecological response.

With regard to domain, Krawchuk said it is well recognized that fire plays an integral ecological role in ecosystems, but it has different tempos depending on location. Fire creates opportunities for the dynamisms of succession, that is, for ecosystems to progress and change over time. It also plays an important role as a disturbance in ecosystems, but that disturbance is only helpful to an ecosystem if it is the right kind of fire. Too little fire, too much fire, or the wrong kind of fire has the potential to put ecosystems out of their “natural balance.”

Fire on a landscape exists as part of a fire regime, which is the range of variability in fire characteristics in a given area over a set period of time. Fire ecologists ask questions to discern what the fire regime is:

- How often does an area burn?

- Where in the vegetation does it burn?

- What caused the fire?

- How large of an area is affected?

- How much biomass was consumed and in what pattern?

- How much biomass was left behind?

- When in the year did the fire occur?

The answers to these questions tell fire ecologists about frequency, type, cause, size, severity, and seasonality of the fire regime.

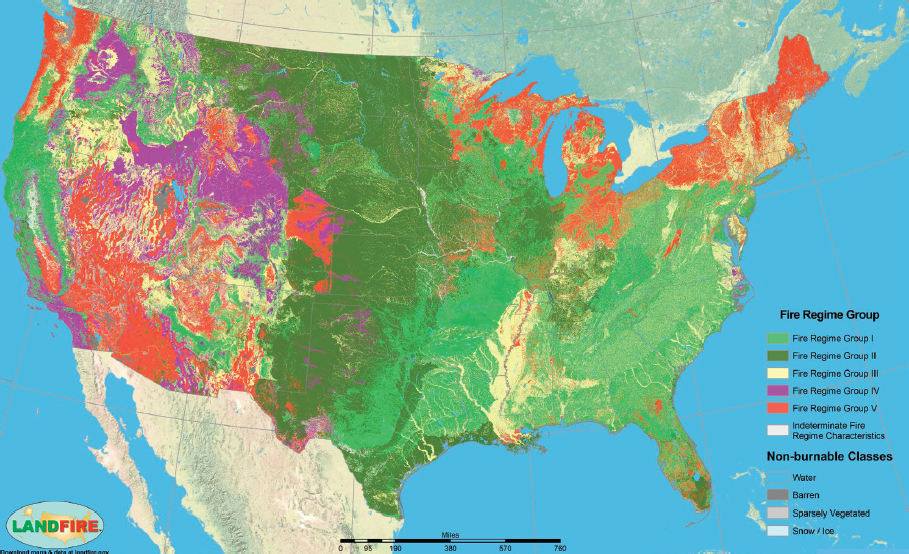

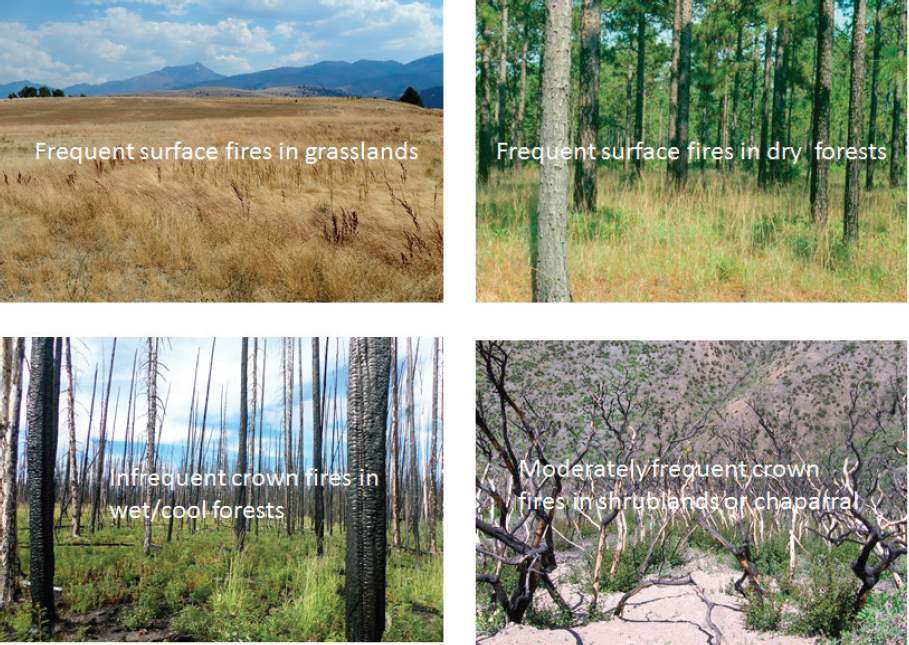

Temporal heterogeneity needs to be thought of in terms of the historical range of variability in fire regimes as well as the contemporary range of variability and how those present characteristics differ from the historical range. The future range of variability needs to be taken into account as well. In terms of spatial heterogeneity, Figure 4-1 illustrates historical fire regimes in the conterminous United States. Krawchuk noted that it is helpful to look at the locations and groups of fire regimes in Figure 4-1 and then at corresponding images of landscapes indicative of these types of fire regimes (Figure 4-2). The images provide examples of historical fire regimes in the United States. For instance, frequent surface fires in grasslands and frequent surface fires in dry forests are considered to be the historical norm, and infrequent crown fires in wet and cool forests are within the historical range of variability. Building on Balch’s point that fire can have benefits to ecosystems, Krawchuk said it is important to realize that there is a place for fire in landscapes, even large crown fires, which are needed to replace tree stands.

NOTE: Fire Regime Group I: burn frequency 0–35 years, burn severity generally low, replacing less than 25 percent of the dominant overstory vegetation, can include mixed-severity fires that replace up to 75 percent of the overstory; Fire Regime Group II: burn frequency 0–35 years, burn severity high, replacing greater than 75 percent of the dominant overstory vegetation; Fire Regime Group III: burn frequency 35–200 years, burn severity generally mixed, can also include low-severity fires; Fire Regime Group IV: burn frequency 35–200 years, burn severity high, leading to replacement; Fire Regime Group V: burn frequency more than 200 years, burn severity varies but leads to replacement. See https://www.firescience.gov/projects/09-2-01-9/supdocs/09-2-01-9_Chapter_3_Fire_Regimes.pdf. Accessed August 14, 2017.

SOURCE: Krawchuk, slide 4; Fire regime group map, available at https://www.landfire.gov/lf_applications.php#maps. Accessed June 29, 2017. Image courtesy of the U.S. Department of Agriculture and U.S. Department of the Interior.

SOURCE: Krawchuk, slide 5. Photos courtesy of Meg Krawchuk.
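The fire regime groups described in the note to Figure 4-1 amount to a lookup on two attributes, fire return interval and severity. The following is a minimal sketch of that lookup, not LANDFIRE's own procedure. The names `Severity` and `fire_regime_group` are hypothetical, and the handling of the shared boundary values (35 and 200 years, which the note lists in adjacent groups) is an assumption.

```python
from enum import Enum


class Severity(Enum):
    """Coarse burn-severity classes taken from the Figure 4-1 note."""
    LOW = "low"                  # replaces < 25% of dominant overstory
    MIXED = "mixed"              # replaces up to 75% of the overstory
    REPLACEMENT = "replacement"  # replaces > 75% of dominant overstory


def fire_regime_group(return_interval_years: float, severity: Severity) -> str:
    """Map a fire return interval and severity class to Fire Regime Group I-V.

    Assumption: an interval of exactly 35 or 200 years is placed in the
    shorter-interval group, because the note lists both bounds twice.
    """
    if return_interval_years < 0:
        raise ValueError("return interval must be non-negative")
    if return_interval_years <= 35:
        # Groups I and II share the 0-35 year interval and differ by severity.
        return "II" if severity is Severity.REPLACEMENT else "I"
    if return_interval_years <= 200:
        # Groups III and IV share the 35-200 year interval.
        return "IV" if severity is Severity.REPLACEMENT else "III"
    # Group V: more than 200 years, any severity.
    return "V"


# Illustrative inputs only; the intervals below are not from the workshop.
print(fire_regime_group(10, Severity.LOW))           # frequent, low severity
print(fire_regime_group(120, Severity.REPLACEMENT))  # infrequent, high severity
print(fire_regime_group(300, Severity.MIXED))        # very infrequent
```

Under these assumptions, the three calls fall in Groups I, IV, and V, respectively.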

Fires create mosaics, both in terms of the structural and spatial heterogeneity within the fire and the variability left on the landscape after the fire. This fourth topic of fire ecology concerns fire severity, which accounts for the organic matter lost from burning, including soil and vegetation components. It also concerns fire legacies (what is left behind and what persists through fire), such as seed banks, standing live and dead vegetation, carbon pools, and resprouting capacity. These structural and life-history adaptations act as the ecological memories that help an ecosystem return to its pre-fire state.

Fire is one factor in the environmental niche of a species, and an important one contributing to where species occur, persist, and reproduce. Living things have adaptations that enable them to persist within a given fire regime. Fire acts as a filter, influencing who lives where; organisms are well adapted to or even dependent on certain types of fire. Figure 4-3 illustrates the relationship between fire regimes and the biotic membership within those ecosystems; the safe operating space for fire regimes and biota is where the fire regime adaptive traits match quite well with the characteristics of the box of fire regime. Krawchuk concluded by asking: Are humans leaving safe operating space for ecosystems to respond to fire as they have done historically within a given fire regime? To what degree is contemporary management and ongoing climate change generating a mismatch between ecological communities and fire regimes?

SOURCE: Krawchuk, slide 7; adapted from Johnstone et al. (2016).

PREDICTING AND MAPPING FIRE AND FIRE EFFECTS

Mark A. Finney, U.S. Forest Service Missoula Fire Sciences Laboratory

Finney’s overview of fire prediction and mapping began with the following observations about wildland fire in the United States:

- Ninety-five percent of the area burned in the United States (including Alaska) each year stems from only 3 percent of the 80,000 fires that occur each year; the other 97 percent of fires are suppressed.

- The burned area covers 1.6–4 million hectares (4–10 million acres) each year.

- Fire response is the responsibility of multiple agencies at the federal, state, county, and city levels.

All of this fire has, for many decades, created a great deal of demand for fire prediction and mapping. Today a number of resources support prediction and mapping, including systems, models, tools, data, and maps.

With regard to prediction, scientists model different timeframes and spatial extents. Prediction serves four main areas. The first is operations, which includes predicting where active fires will go. The second is planning, which encompasses risk analyses, fire management plans, and fuel treatment analysis and design. The third is training, because fire prediction is part of how firefighters learn to be safe on the fire line. Finally, prediction includes research into different kinds of ecological models.

The basis for this practice of prediction in the United States is the Rothermel Spread Equation published in 1972, which is based on the culmination of a decade or more of laboratory and field research in the Forest Service’s Missoula laboratory. The model has practical inputs, is fast, and produces reasonable outputs even though it has major limitations, such as the kinds of data and detail that can be ingested, the behavior of the fires that can be simulated, and its output’s fidelity with respect to the actual fire.
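For reference, the Rothermel (1972) model is commonly written in the fire behavior literature in the following form. This form was not shown in the presentation summary and is given here only as background.

$$
R = \frac{I_R \, \xi \, (1 + \phi_w + \phi_s)}{\rho_b \, \varepsilon \, Q_{ig}}
$$

Here $R$ is the rate of spread, $I_R$ is the reaction intensity, $\xi$ is the propagating flux ratio, $\phi_w$ and $\phi_s$ are the wind and slope factors, $\rho_b$ is the ovendry fuel bed bulk density, $\varepsilon$ is the effective heating number, and $Q_{ig}$ is the heat of preignition. The numerator represents the heat flux reaching unburned fuel, and the denominator represents the heat required to bring that fuel to ignition.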

Before computers, people used maps, nomograms, calculations of spread rates, and the vectoring of slope and wind effects to produce a fire growth map. Today this work is done by computer systems; one of the best developed is the Wildland Fire Decision Support System built in 2009. All fires under the jurisdiction of the federal government are entered into this system. It provides access to models, data, and decision frameworks and generates fire growth projections, fire behavior calculations, and probabilistic predictions that can be overlaid with values at risk. Infrastructure such as homes, power lines, bridges, and gas lines can be worked into the map. The system outputs provide a true risk framework.

An advantage of such a system is that statistics can be compiled on usage. In the 8 years the Wildland Fire Decision Support System has been in operation, it has run 43,000 analyses for about 4,000 fires, or roughly 500 fires per year. Only 3 percent of the fires on federal lands receive any kind of analysis, and it is not a coincidence that those 3 percent are the same 3 percent that burn 95 percent of the acres because all the other fires are put out; there is no point in modeling fires that do not spread. Less than 1 percent of all the fires in the United States (those on federal lands as well as on nonfederal lands) get any kind of modeling support.
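The usage figures above can be cross-checked with a few lines of arithmetic. The sketch below uses only numbers quoted in this section. Comparing the analyzed fires with the 80,000 fires per year cited earlier is an assumption about how the "less than 1 percent" figure was derived, and the variable names are illustrative.

```python
# Figures quoted in the text.
years_in_operation = 8
analyses_run = 43_000
fires_analyzed = 4_000
fires_per_year_nationwide = 80_000  # from the list of observations above

# Derived quantities.
fires_analyzed_per_year = fires_analyzed / years_in_operation
analyses_per_fire = analyses_run / fires_analyzed
share_of_all_fires = fires_analyzed_per_year / fires_per_year_nationwide

print(f"fires analyzed per year: {fires_analyzed_per_year:.0f}")
print(f"analyses per analyzed fire: {analyses_per_fire:.2f}")
print(f"share of all U.S. fires analyzed: {share_of_all_fires:.3%}")
```

Under that assumption, about 500 fires per year receive roughly 10.75 analyses each, and those fires make up about 0.6 percent of all fires nationwide, which is consistent with the statement that less than 1 percent of fires get modeling support.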

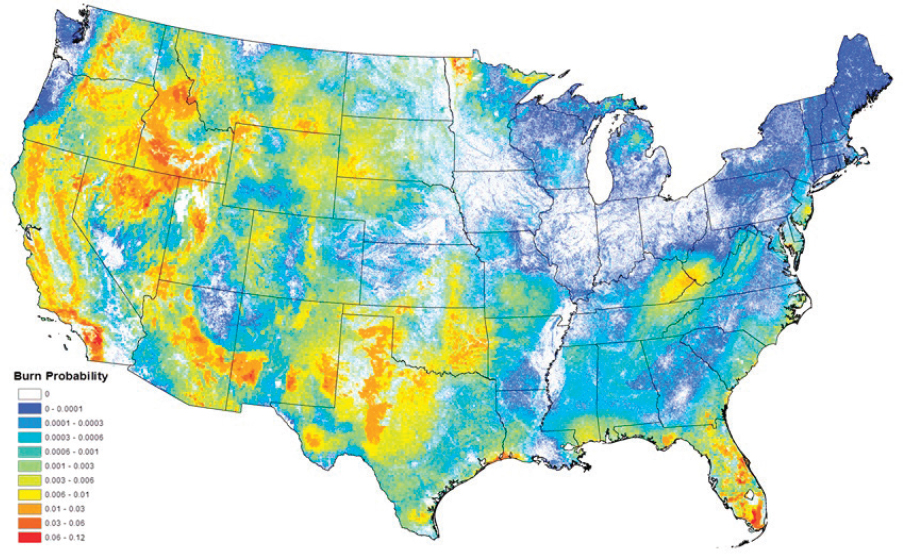

Another type of analysis, which is part of the planning phase of prediction, is risk analysis. Running Monte Carlo simulations on tens of thousands or millions of fires over many different synthetic years creates probabilities of distributions of fire behavior which can then be calibrated with observed fire behavior, size, and number. Figure 4-4 is an example of the amount of computational effort that goes into such a simulation.
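The Monte Carlo approach can be illustrated with a toy burn-probability model. This is a minimal sketch under strong simplifying assumptions, not the simulation system Finney described: ignitions are uniformly random, "escaped" fires burn a square of random size rather than spreading according to fuels, weather, or terrain, and no calibration against observed fires is performed. The function name and all parameter values are hypothetical.

```python
import random


def simulate_burn_probability(
    n_years: int = 2000,
    grid_size: int = 20,
    ignitions_per_year: int = 5,
    escape_probability: float = 0.03,  # illustrative value only
    max_radius: int = 4,
    seed: int = 0,
) -> list[list[float]]:
    """Estimate the annual burn probability of each cell on a square grid.

    Each synthetic year draws a fixed number of random ignitions. Most are
    treated as suppressed and burn only their own cell; a small fraction
    escape and burn a randomly sized square around the ignition point.
    """
    rng = random.Random(seed)
    burn_counts = [[0] * grid_size for _ in range(grid_size)]

    for _ in range(n_years):
        burned_this_year = set()
        for _ in range(ignitions_per_year):
            row = rng.randrange(grid_size)
            col = rng.randrange(grid_size)
            escaped = rng.random() < escape_probability
            radius = rng.randint(1, max_radius) if escaped else 0
            # Clip the burned square to the grid boundaries.
            for r in range(max(0, row - radius), min(grid_size, row + radius + 1)):
                for c in range(max(0, col - radius), min(grid_size, col + radius + 1)):
                    burned_this_year.add((r, c))
        # Count each cell at most once per synthetic year.
        for r, c in burned_this_year:
            burn_counts[r][c] += 1

    return [[count / n_years for count in row] for row in burn_counts]


probabilities = simulate_burn_probability()
n_cells = len(probabilities) * len(probabilities[0])
mean_probability = sum(map(sum, probabilities)) / n_cells
print(f"mean annual burn probability per cell: {mean_probability:.4f}")
```

In an operational setting, the resulting probabilities would then be compared with observed fire sizes and counts and the inputs adjusted, which is the calibration step mentioned above.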

SOURCE: Finney, slide 10; Fire Program Analysis Project, U.S. Forest Service–Missoula Fire Sciences. Available at https://www.arcgis.com/home/item.html?id=1fb27ff2aada4a68ac8078bca4fc6480. Accessed June 30, 2017.

The National Fire Danger Rating System is another analysis tool that produces many different maps daily and multiple times a day. It is accessed through the Wildland Fire Assessment System. The Rothermel Spread Equation is the foundation of this system as well.

There are also models for determining fire effects at the stand level, multistand level, and landscape level. Some analyses incorporate fire behavior and fire prediction to assess, for example, the consequences of a fire or how fuel treatments should be designed.

In sum, although prediction consists of operations, planning, training, and research, 98 percent of the effort in modeling is in the planning phase. Most of the fire modeling currently under way in the United States is not associated with active fires. There are not that many fires to model, and the decision space (that is, what kind of actions can be taken with those active fires) is narrowed by politics.

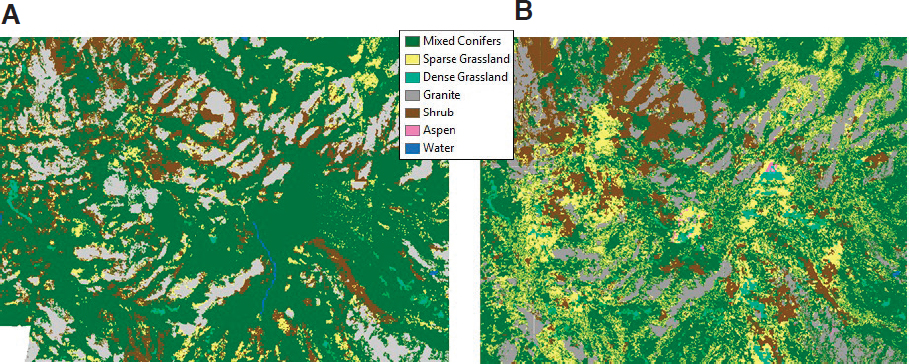

Finney then moved on to mapping. There are many kinds of mapping efforts, such as fuel mapping, fire danger rating, satellite fire detection (for example, with Moderate Resolution Imaging Spectroradiometer [MODIS] and Visible Infrared Imaging Radiometer Suite [VIIRS]), the MTBS record (see Figure 3-5), Burned Area Emergency Rehabilitation, and airborne infrared through the national infrared operations. Starting with fuel mapping, LANDFIRE (Landscape Fire and Resource Management Planning Tools Project) is operated by the Forest Service and the U.S. Department of the Interior and provides landscape-scale geospatial data to produce vegetation, wildland fuel, and fire regime maps (see Figure 4-1). It is the basis for almost all fire decision support systems in the United States. It has 20 layers of vegetation and fuel-related information and is updated every 2 years. With regard to fire danger rating, the Advanced Very High Resolution Radiometer satellite imagery provides greenness information at 1-km resolution. In terms of satellite fire detection, MODIS imagery allows people to look at large fires in detail. Finney demonstrated through a series of images how MODIS images showed that, after a 2002 fire, areas in which fuel treatments were performed before the fire were green with vegetation following the fire compared to other burned areas. MTBS, administered by the U.S. Geological Survey (USGS) National Center for Earth Resources Observation and Science and the U.S. Forest Service Remote Sensing Applications Center, maps all fires greater than 400 hectares (1,000 acres) in the West and 200 hectares (500 acres) in the East. Additionally, there are several new technologies assisting mapping efforts, including remote-sensing tools (such as LIDAR), images from unmanned aerial vehicles, and numerical simulations (for example, gridded wind fields and high-resolution gridded weather).

With all these tools, Finney posed the questions: What is holding back practical advances in prediction? Why are these tools not being used in operational predictions? The biggest hurdle, he said, is limited knowledge about how fire spreads. The physical and empirical models do not resolve the physical processes that produce fire spread and behavior. Finney cited examples from a couple of papers to illustrate his point that understanding the processes involved in fire behavior and spread is still a scientific challenge (Clark et al., 2003; Sullivan, 2009). The question about fire spread should be answerable, but if how fire spreads is unknown, then it is hard to take advantage of all the data collected through remote sensing.

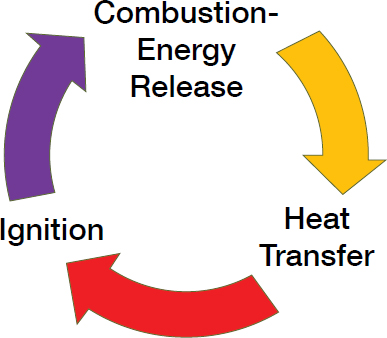

Fire spread is a nonstop reaction involving combustion and energy release that causes heat transfer to ignite new fuels that then feed back into the spread process (Figure 4-5). The challenge is that the reaction from heat transfer to ignition is operating at minute temporal and spatial scales, less than a second in time and smaller than a millimeter. Finney provided an example of the difficulty of understanding fire spread. He postulated a wildland fire, the radiant heat of which can be duplicated by a simple chicken heater for a garage. If fine particles (about a millimeter thick) and a wooden block (38 × 25 × 13 mm) are put next to the heater, which will ignite first? Contrary to expectations, the wood block will ignite in less than 30 seconds and the fine particles will not light at all.

SOURCE: Finney, slide 27.

CHANGING ENVIRONMENTAL DRIVERS, TIPPING POINTS, AND RESILIENCE IN FIRE-PRONE ECOSYSTEMS

Craig D. Allen, U.S. Geological Survey New Mexico Landscapes Field Station

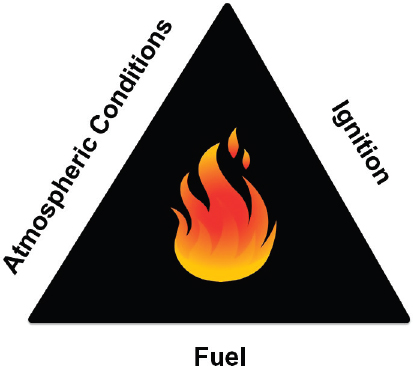

Allen began by noting that in the last few decades forests in the West have experienced an increase in tipping point–type changes in terms of fire behavior and forest disturbance processes. Such changes include more high-severity fire and more vegetation die-off in forests. However, they are understandable because they correspond to changes in the environmental drivers that affect the components of fire, that is, atmospheric conditions, ignition, and fuel (Figure 4-6).

Environmental drivers related to atmospheric conditions are numerous and include temperature, humidity, precipitation, and wind. The number of such drivers is one of the reasons that the modeling Finney reviewed is so difficult. Any of them can drive big changes in fire behavior. Additionally, changes in atmospheric conditions involve both short-term fire weather and longer-term climate variability and directional change in climate. Examples of tipping points or nonlinear changes related to atmospheric-condition drivers are the increases in fire frequency and in the area burned related to warming in the western United States.

In terms of fuel, the type of fuel (for example, grass, shrub, or tree), the quantity of fuel, the horizontal connectivity and vertical structure of fuel, and the moisture content are just some of the environmental drivers that are undergoing change. Allen pointed to the “grassification” of the American West as an example. Bare inner spaces between perennial plants and woody plants in western desert areas are filling in with nonnative grasses, which causes change in fire behavior that the local flora and ecosystems are not adapted to.1 Another example is the drought- and heat-induced stress on conifers and the associated insect outbreaks that have caused immense mortality in western North American forests in the last 20 years. There are debates and ongoing research about how much stress and insect pressure actually changes fire hazard and fire behavior, but regardless, millions of hectares in western North America are facing these conditions. Similar circumstances exist for forests on the global scale.

___________________

1 See also Balch’s discussion of cheatgrass in the Great Basin in Chapter 3.

SOURCE: Allen, slide 3.

Key environmental drivers of ignition are the cause (lightning or human), the quantity, the location, and the timing or season. Allen reiterated Balch’s point about the number of human-ignited fires and the predominance of human ignitions in the eastern United States.

There are many different factors that influence these environmental drivers. Some drivers are changing for natural reasons (such as natural variability in the atmosphere and successional changes in forests) and for anthropogenic reasons (such as land-use change and greenhouse gas emissions). The manifestations of these changes are increases in frequency of fires, changes in area burned, a lengthening of the fire season, and an increase in fire severity. Allen provided an anecdote about changes in fire severity. On a Sunday afternoon with high wind in a forest that was closed to the public to try to reduce human-ignited fires, an aspen tree fell on a power line and ignited, which started the Las Conchas fire in New Mexico. In less than an hour, there were 150-meter (500-foot) flames (Figure 4-7A). Within 14 hours, the fire had burned 17,000 hectares (43,000 acres). It burned from high-energy, high-density fuels and immolated an area further down the slope (Figure 4-7B). The destruction caused by the Las Conchas fire demonstrates why fire-severity metrics are an important area of research today.

Watersheds and vegetation are also affected by fire severity. Areas denuded by fire can become prone to flooding and erosion. With regard to vegetation, an area of active research today is what grows back on the land after conifer seed sources are removed. Many conifer tree species that are adapted to higher frequency regimes need mother trees to survive, but these mother trees are being eliminated over large areas by high-severity fire. In such places, resprouting plants such as shrubs and grasses are favored in the ecosystem over the conifers.

Changes in fire activity affect human communities. Allen reiterated the extent of the wildland–urban interface, particularly in the eastern United States. There is a great deal of human-ignited fire in the East, but generally the East has moister conditions than the West, so the above-ground biomass is not as available for burning as it would be under western conditions. However, if and when the time comes that the above-ground biomass (or fuel) becomes more available during more of the year (that is, if drier conditions occur in the East), the potential will increase for more fire events like the one in Gatlinburg, Tennessee, in 2016. Indeed, the likelihood of such scenarios is quite high under a number of climate change models.

SOURCE: Allen, slides 22, 25. Photo A courtesy of Jeff Dube, U.S. Forest Service. Photo B courtesy of Craig D. Allen, U.S. Geological Survey.

Allen said while many people have been surprised at the increase in frequency of fires and in the area burned in the West, those forests have been responding as they must. They are changing in response to the environmental drivers. As environmental drivers change, tipping-point events will become more common in the future and will occur in places they have not before, such as in a (drier, hotter) eastern United States.

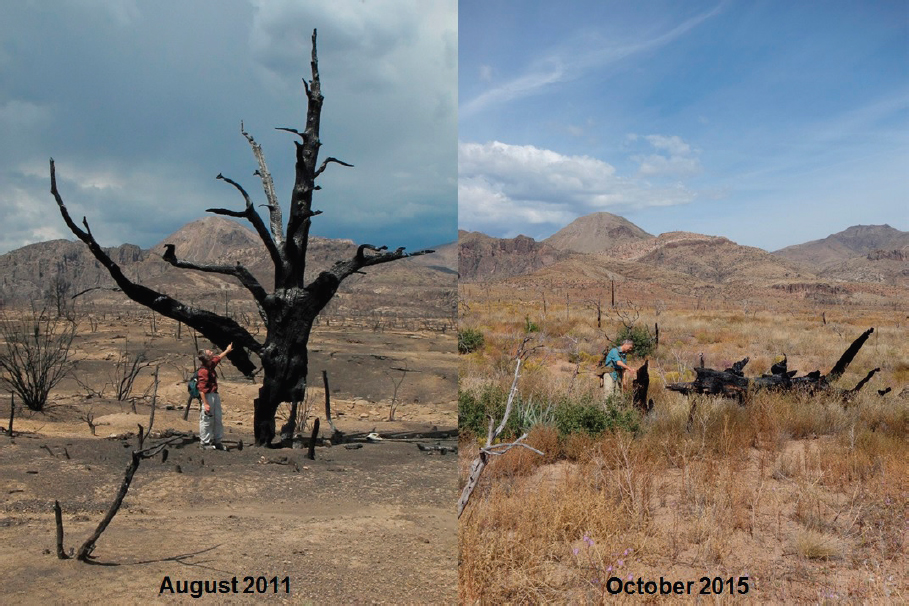

Historic range of variability concepts have been used to drive management over the last few decades. However, Allen supported Pyne’s statement that an inflection point may have been passed; concepts based on historic range may not be useful as tipping points become more common. Instead, management will need to anticipate and adapt to an uncertain future range of variability. This shift will require a great deal of learning by scientists, managers, and society at large. For example, what will follow these tipping points that are so far outside the historic range of variability? The Las Conchas fire is an example of this, too. Five growing seasons after the fire, the ecosystem is not similar to its pre-fire state and is outside its historic range of variability (Figure 4-8).

In closing, Allen laid out a number of research priorities. The first is to learn more about nonlinear responses to systems following fires. It will be important to determine the thresholds and tipping points for environmental drivers of large fires and fast fires and the ecosystem changes that accompany them. These changes are embedded in physical, biological, and ecological processes and in human societies. Temperature-sensitive processes need particular attention because they will be the most robust climate driver in the future. Another research priority, one that is associated with nonlinear responses, is the need to better understand how extreme climate events, such as drought and heat waves, operate as triggers of tipping-point disturbance processes. A third area of research is to understand the interactions and feedbacks of these disturbances across spatial scales. All these priorities relate to a paper that was forthcoming at the time of the workshop by USGS scientists on anticipatory natural resource management in a dynamic future (Bradford et al., 2017). Such a management approach is one of the future challenges USGS is currently undertaking.

FIRE AND FUELS MANAGEMENT: WHAT WORKS WHERE?

Scott Stephens, University of California, Berkeley

Stephens started his presentation with the idea of fire regimes. Some forests are adapted to high-severity, infrequent fire—for example, crown fires in Rocky Mountain lodgepole pine—while other forests are adapted to frequent fire and can handle these disturbances within a desired range. The type of fire regime needs to be considered when fuel treatments are undertaken. Prescribed burning and thinning might be carried out at regular intervals in a crown fire ecosystem for infrastructure purposes, but such treatments are outside the ecology of that fire regime.

An example of a crown fire system that has burned too frequently is a predominantly jack-pine forest in the Northwest Territories, Canada. Jack pine is a closed-coned, serotinous species that burns every 70 to 150 years. However, in Wood Buffalo National Park, jack pine burned in 2004 and then burned again in 2014. Because jack-pine seedlings establish quickly and grow rapidly after a fire, the second fire destroyed many young trees that had sprouted after the first fire but had not yet reached maturity. There were few seeds left over in the seedbank after the second fire for another round of seedlings to germinate. Transect surveys following the second fire found one seedling in an area of almost 50 square meters. This elimination of the seed stock is an example of major vegetation changes over a short amount of time and of the abrupt changes to the fire regime that climate warming causes.

NOTE: The vegetation that has reoccupied the burned area is outside the historic range of variability. Woody plants used to dominate the site, but they are not thriving post-fire.

SOURCE: Allen, slide 37. Photos courtesy of Collin Haffey, U.S. Geological Survey.

Another example of fire regime change is evident from historical data in the central Sierra Nevada in California. In 1911, there were 19 trees per acre in an inventoried area that included parts of the Stanislaus National Forest and Yosemite National Park; in 2013, the density had increased to 224 trees per acre (Collins et al., 2015). While Stephens noted that the 1911 data should not be considered a target point for restoration, he posited that the difference in the density of the forest in 1911 versus 2013 can help with understanding resilient systems.

In regimes adapted to frequent fire, the Southeast region has been a leader in the United States in the use of fire on the landscape, due in no small part to the research of H. H. Chapman, H. L. Stoddard, and E. V. Komarek. Approximately 75 percent of prescribed burning in the United States each year takes place in this region, and burning is culturally acceptable. Stephens hoped that such burning practices will continue even as people who are less familiar with prescribed fire move into the region.

There were many notable scientists at the Forest Service, the National Park Service, and universities who contributed to early research into fire and fuels, but Stephens focused on one scientist of note from the Bureau of Indian Affairs. Harold Weaver performed extensive research on the use of fire in management in the middle of the 20th century. With small crews, he burned 277,000 hectares (684,890 acres) of ponderosa pine forest in the Colville Reservation in eastern Washington between 1945 and 1955. He found that prescribed fires reduced wildfire damage by 87 percent and reduced the cost of fire control by 54 percent as compared to adjacent areas that had not been burned (Weaver, 1957). His conclusion was “this is a presentation of a management system—not just prescribed burning” (Weaver, 1957). Unfortunately, after Weaver retired, institutional support did not continue for this burning program, and it was not widely adopted elsewhere.

However, one place that did pursue Weaver’s ideas is the San Carlos Apache Reservation in Arizona. During his career, Weaver had also worked in this region and supported the use of prescribed fires in its low-intensity, high-frequency fire regime. Today research is under way on the reservation on alternative fire suppression strategies. Stephens thought the outcomes of this current research will help inform how to better manage fire. Unlike the program in Washington, the San Carlos Apache Reservation program had continual institutional support following Weaver’s departure.

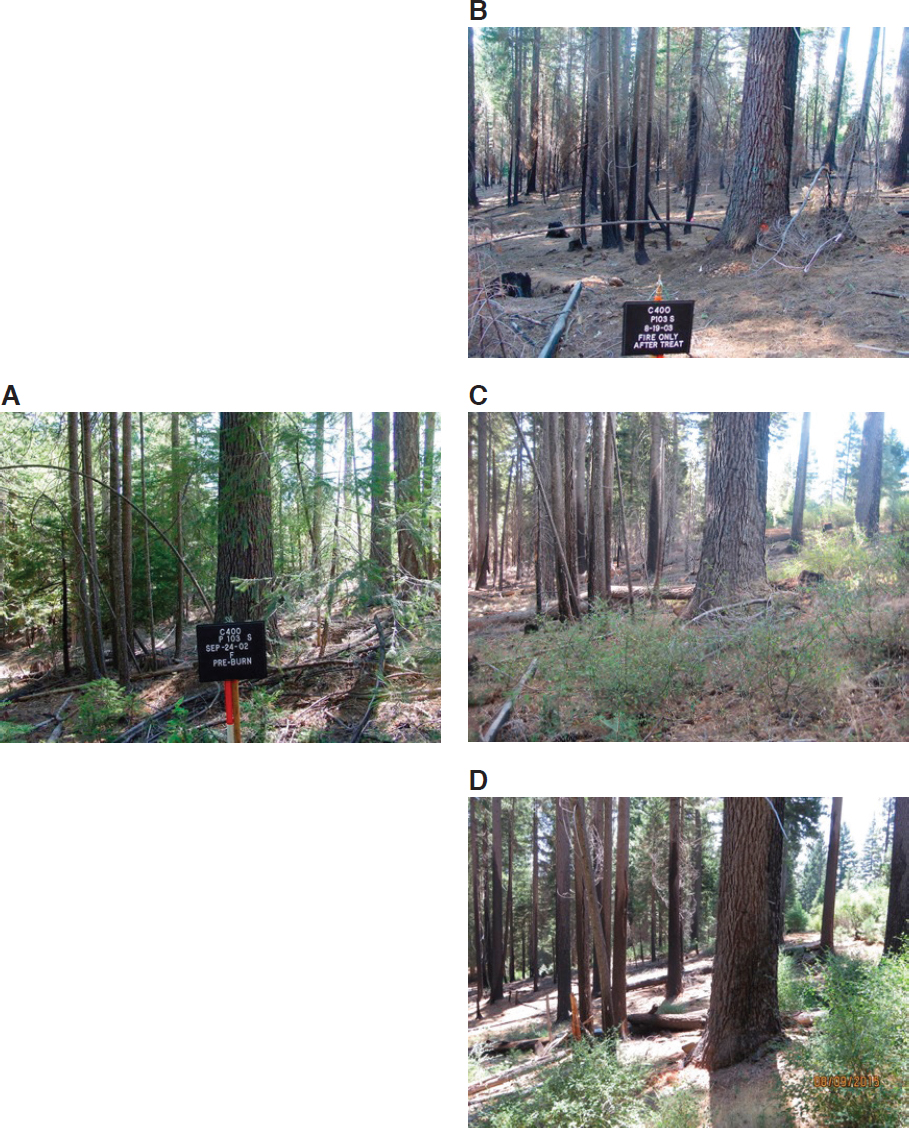

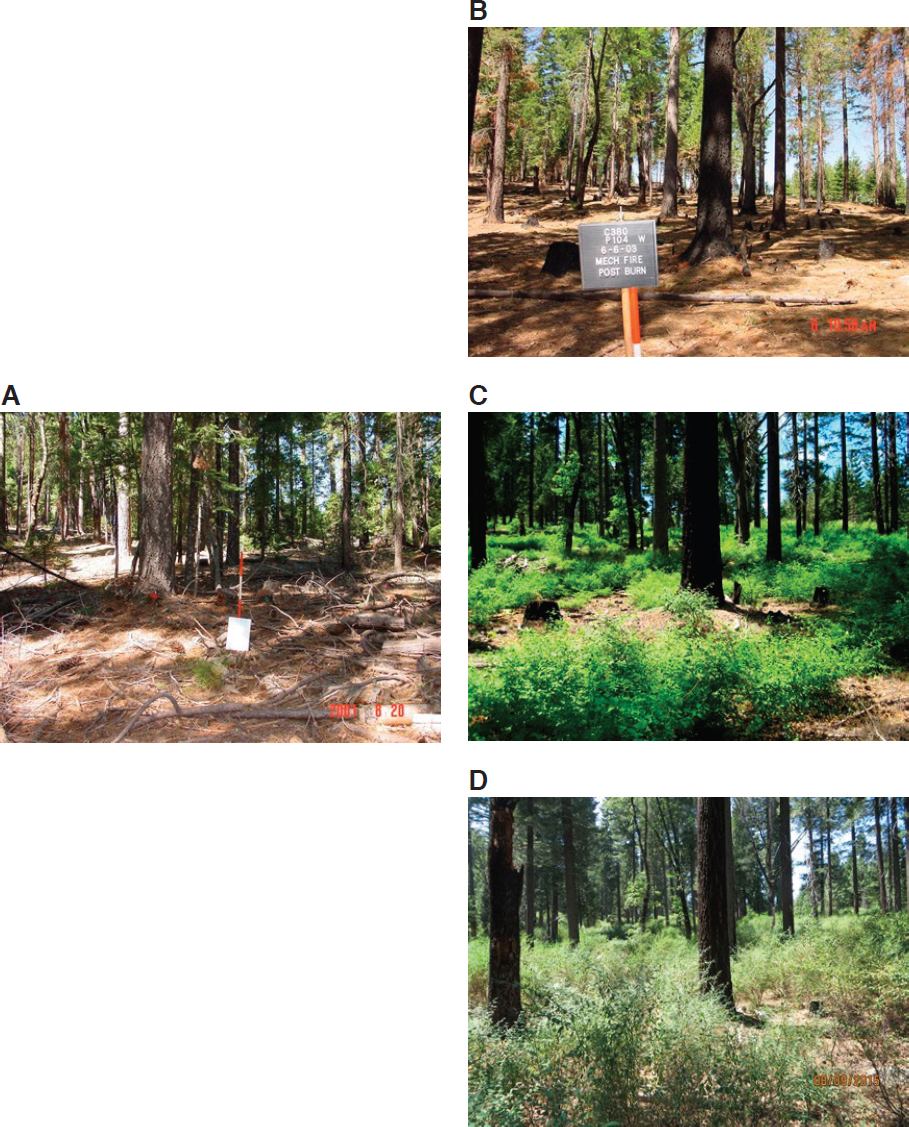

Figure 4-9 shows the response of a site in the central Sierra Nevada to prescribed burning, while Figure 4-10 displays the response of a different site in the same area to mechanical thinning followed by prescribed burning. In both series of images, shrub response is evident a few years after treatment (Figures 4-9C and 4-10C). Thirteen years after the initial treatment, the shrubs have grown larger (Figures 4-9D and 4-10D). These responses show that management is a constant conversation with the land. Prescribed burning has to be done continually rather than as a one-time occurrence.

Experiments involving fuel treatment demonstrate that, if ladder fuels and surface fuels can be reduced, fire hazards and fire effects can be lessened in frequent-fire adapted forests. Treatments can increase the vigor, resistance, and resilience of remaining trees to adapt to climate change. Research results also have found that there are few unintended negative ecological consequences from these fuel treatments (Stephens et al., 2012). The longevity of the treatments can span 5–25 years because response and efficacy depend on the system. In some places, lightning-ignited fire can be incorporated into a treatment plan, which is the case in Region 3 of the Forest Service.2 Research by Safford and colleagues and Martinson and Omi has determined that fuel treatments in frequent-fire adapted forests are effective when those forests are later burned by wildfires (Safford et al., 2012; Martinson and Omi, 2013); however, the use of fuel treatment is low compared to the area in need of treatment.

Lightning-ignited fires as a management tool have been used in Yosemite National Park since 1974. Collins and colleagues have shown that, when a fire intersected an area that had burned 9 years previously or earlier, the fire went out on its own in 90 percent of the cases examined (Collins and Stephens, 2007; Collins et al., 2009). Figure 4-11 is an image of one of these locations, in which lightning-ignited fire has created openings in the landscape and an opportunity for forest recovery. Figure 4-12 shows maps of the same area in 1969 and 2012. In 1969, fire had been suppressed on the land for almost 100 years (Figure 4-12A). Fire returned to the landscape in 1974 and after 40 years of using lightning-ignited fire as a management tool, there has been an increase in wet meadows, dry meadows, and shrublands and a decrease in forested area (Figure 4-12B). Compared to three watersheds where fire was suppressed, fewer trees have been killed by beetles during droughts in the area managed with fire, and stream water volume has been stable or increased. In areas with fire suppression, stream water volume has decreased since 1974.

___________________

2 Region 3 encompasses 8.3 million hectares (20.6 million acres) of land in Arizona, New Mexico, and the panhandles of Texas and Oklahoma.

NOTE: A, site in 2002, before treatment. B, site in 2003, after a prescribed fire. C, site in 2008, just before a second prescribed fire. D, site in 2016 after second prescribed fire.

SOURCE: Stephens, slide 7. Photos courtesy of Scott Stephens.

NOTE: A, site in 2002, before treatment. B, site in 2003, after mechanical thinning followed by a prescribed fire. C, site in 2008. D, site in 2016.

SOURCE: Stephens, slide 7. Photos courtesy of Scott Stephens.

NOTE: In the foreground, the forest has been changed to grassland. The forest in the background is regenerating.

SOURCE: Stephens, slide 10. Photo courtesy of Scott Stephens.

NOTE: A, study site in 1969, after 100 years of fire suppression. B, study site in 2012, after more than 40 years of lightning-ignited fire used as a management tool. Wet and dry meadows have increased 200 percent compared to 1969, and shrublands have increased 30 percent; forested area has decreased by 22 percent.

SOURCE: Stephens, slide 11; adapted from Boisramé et al., 2016.

In conclusion, Stephens acknowledged that frequent-fire adapted forests have changed greatly in the western United States. Though climate change has made the situation worse, it is not the main problem. Fuel treatment is needed, and in frequent-fire adapted systems, it is an option. The use of managed wildfire or prescribed fire can reduce stress on the system, increase tree resilience to stress, and facilitate adaptation of the system to climate change. Crown fire systems, which rely on infrequent, high-severity fires, have fewer management options available. Prescribed burning will not be used in these systems. Stephens noted that in some such systems in Australia, land managers are reseeding burned areas via airplanes because the systems are burning too frequently for the vegetation to re-establish after a fire. Such intervention is one management option. Another option is to let the vegetation on the land change in response to the frequent fires. Which approach to take is a difficult decision.

Some resources that would help address such difficult choices are land management funding that is not linked to fire suppression, longer employment for seasonal workers so they can use fire more on the landscape, and strategies for using lightning-ignited fire as a management tool more often throughout the country (Stephens et al., 2016). Stephens said forest resilience should be a standalone land management priority for the United States. Akin to the Clean Water Act, the Endangered Species Act, and the Clean Air Act, there should be a Forest Resiliency Act. The existing acts have the power to shape policy. Such ability is needed for forests, particularly as the next 3 decades are critical for reversing the current trajectory of forest resilience. Now is the opportune time for the Forest Service and the National Park Service to set a policy that leaves a legacy of better fire and forest management for the next generation.

MODERATED DISCUSSION

A participant noted that he is stationed in Sequoia National Park in California, which instituted prescribed burning in the early 1960s and burns about 2,000 hectares (5,000 acres) a year. The primary limitation on burning is air-quality concerns. Given the size of the park and the constraints posed by air quality, it is not possible to conduct enough prescribed burning to return historical fire regimes to that landscape. He asked the panel what the solution would be to this dilemma. Scott Stephens acknowledged that the parks in the Sierra Nevada are some of those leading the way in using fire on the landscape, but over the last 20 years, the use of burning has decreased. He suggested that the best course of action is to be strategic by prioritizing important areas for management, such as giant sequoia groves. Stephens thought managed lightning-ignited fire will have to be a larger piece of the equation when it comes to using burning as a management tool. With regard to the Clean Air Act requirement that Class I airsheds never have impaired visibility and the public health reasons in the act for limiting smoke, Stephens admitted that there is no way to have a fire-sustainable future without smoke in the air. From his point of view, this dilemma is why a congressional act prioritizing forest resilience is needed as a prime objective of federal policy; such an act may need to supersede other policies, including those carried out under the Endangered Species Act, the Clean Water Act, and the Clean Air Act, to ensure the long-term viability of forest ecosystems.

The questioner responded that the park managers are often prevented from using lightning-ignited fires as a substitute for prescribed burning because the Air Quality Board requires the fires to be extinguished if they are a threat to air quality. Stephens acknowledged this was the case and said that approach needs to change. In his opinion, federal leadership by Congress and the administration needs to revise the policy to help improve forest sustainability.

Another participant noted that two dominant themes of the panel’s presentations and the keynote addresses were the uncertainty of future fire regimes and the difficulty or even inability to direct change in those regimes. The concept of resilience was also discussed, and the participant wondered what the metric for resiliency was when considering forest management in the future. Krawchuk agreed that the question of how resilience is defined is interesting. The theory of resilience first emerged in the 1970s, but at that time the specter of changing climate was not on the horizon. The theory put forward the idea that a system should have the capacity to absorb disturbances or shocks and retain the same state, function, and feedbacks within the same basin of attraction as it had before the disturbance. That idea of resilience corresponds to historic ranges of variability in fire regimes, climate and species, and structures of ecosystems. However, the present trajectory of change in climate suggests that the future range of variability may make the historic basin of attraction irrelevant. Therefore, there are ongoing discussions to update the idea and the definition of resilience. The former definition of resilience needs to be put in the category of a basic resilience, and another layer needs to be added to capture adaptive resilience: that is, the resilient component is actually the capacity to transform and adapt under pressures that are novel and extreme, such as the stressors happening under climate change. Adaptive resilience, which embraces transformations and the potential to adapt by changing ecosystem state and species, is a concept applicable to forest management in the future.

Allen agreed with Krawchuk and said that the challenges for a land manager are those abrupt transformations of the system where it changes from something familiar to something novel with no legacy point of reference. Ecosystems are already adaptively responding, and it is up to managers and researchers to make sure that the systems can respond incrementally rather than abruptly. Krawchuk added that patience is needed because, even though abrupt changes are being seen, the window of observed time is relatively small. The adaptive capacities of systems are not always known and need to be explored. The question needs to be asked whether that long-term recovery is natural or has precedent or whether the abrupt transformation leads to a different state or function. Turner noted that, because trees take a long time to grow and because fires are infrequent events (though becoming more frequent), there need to be more empirical, observational studies or monitoring of the mechanisms that underpin the ability of the forest to recover, such as tree density, tree area, carbon, and ability to produce water. The results of those studies then need to be fed into models that can help anticipate the ranges of uncertainties and assess what those uncertainties are. This multipronged approach will contribute substantially toward understanding resilience.

A participant observed that the physics and ecology of fire science and management had been discussed, but the human dimension had not been raised. Humans are the problem and the solution. To ensure the sustainability of the system, should the human dimension be explored further to complement the work being done in the physical and ecological systems? Turner noted that the second panel had a social-science emphasis because the participant’s point was so important; the first panel had a natural-science focus. Stephens shared that he was optimistic because forests and their ecosystems are so important to humans. He agreed that the social and political issues were critical when it comes to forest resilience. Land managers, including the Forest Service and National Park Service, are trying to help forests become more resilient and they need to be empowered to continue to find solutions.

A viewer of the webcast asked Finney about the use of remote sensing in forest and fire management. Finney said that there are tremendous technological tools that are able to remotely measure different vegetation and fuel attributes. However, much of that information cannot be used to make active predictions because most models cannot ingest the data. Those models that can ingest the data are too slow for real-time predictions and can only be used for research. Nevertheless, these technologies are going to continue to advance. It is incumbent upon the fire behavior sciences to figure out how fire spreads so remote-sensing data can be used and more accurate tools can be developed for the many dimensions of fire management, including mitigation. This knowledge would help close the gap in understanding with regard to how fuels are related to fire behavior. Right now fuel models act as surrogates for real fuel descriptions. The models can inform firefighters and frontline personnel about how fire behaves and how their observations actually have physical meaning about things that could be dangerous or could be opportunities for suppression. However, the predictive ability of the models for these purposes is limited. If the predictive ability of the models could be improved by having a better understanding of the physics of fire, then other sources of error could be addressed. Such improvements in the predictive capabilities of models may in turn make people more comfortable living with fire and thereby make the use of fire easier in the different ways discussed in the panel’s presentations. If science is the means by which people can become more comfortable with fire as an ecological process, that would be a positive outcome, Finney concluded.

Another viewer of the webcast asked Allen about negative feedback loops. If there is more fire in a hotter, drier climate, presumably there will be no more fuel to burn eventually in some of these ecosystems. Allen said that modelers are looking at those feedbacks. However, the source of fuels changes, and there will continue to be fuels on the land for a long time, particularly in frequent-fire adapted systems where fire suppression has allowed woody fuel to build up for a century or more. Nonetheless, at some point in time, even these systems will experience some self-limiting characteristics related to fuel.

The director of the National Academies’ Board on Atmospheric Sciences and Climate (BASC) shared with the panel that BASC is turning its attention to how to move carbon out of the atmosphere and store it in natural ecosystems or geological formations. Much of the conversation at the workshop had focused on more frequent fire and more intentional burning, which of course release carbon into the air. The BASC director asked if the fire community is thinking about forest systems’ potential as places to store more carbon in the future. Krawchuk gave an initial response by returning to the idea of frequent fire in dry forests. When fire is frequent in these dry forests, a relatively small amount of carbon is burned off. Following the fire, photosynthesis takes hold and brings that carbon back into the terrestrial system. This pattern has no real net loss. However, fire exclusion and suppression in these dry forests have led to the establishment of ladder fuels that carry fire from the surface up into the canopies, which may result in more severe crown fires that consume whole trees and lose more biomass. Vegetation may not be able to regenerate following such intense fires, and the resulting reduction in photosynthesis can mean less carbon uptake by large trees, which act as big carbon-sequestering devices. Therefore, more frequent fire would avoid those tipping points that release large amounts of carbon. Even though there is a flux of carbon sequestration and release in a frequent-fire system, there is not necessarily a huge release of carbon. An exception would be tundra fire that burns down into long-established pools of carbon, but that is a different kind of system from the crown fires of the West.

Stephens added that Matt Hurteau of the University of New Mexico and Malcolm North of the Forest Service have looked at the sustainable carbon carrying capacity of a forest. The fire regime is really important to that capacity. For example, coastal forests of the Pacific Northwest, which are composed of species like Douglas fir, western red cedar, and redwood, experience few fires and can sequester a great deal of carbon. In fire-prone areas like the Sierra Nevada, above-ground carbon stocks have historically been low. In the 1911 data from the California Sierra Nevada that Stephens cited in his presentation, the amount of carbon per hectare was approximately 100 megagrams. That is a very low number compared to the carbon stocks of today’s California Sierra Nevada mixed conifer forest. High carbon stocks will not work in a frequent-fire adapted ecosystem and will lead to tipping points, to which Krawchuk alluded. Carbon in forests is important, but the historical range of a regime’s ability to hold and sequester it needs to be considered. The ability of most of the areas of the western United States to hold carbon is probably lower than the amount of carbon that is being held in these regimes today. Continuing to hold the carbon stocks of today in western forests will not be sustainable, in Stephens’ view.

A participant in the audience said that, with the climate changing and fuel building up over the last century, more fire in the foreseeable future is guaranteed. While more fire on the landscape is needed, prescribed crown fires are nearly impossible to conduct. Therefore, managers are dependent on acts of God to set crown fires in places where they are needed. In the view of the participant, it is irrational to wait for fires to occur because they may start at the wrong time when, instead, fires could be set at the right time, even though sometimes unintended negative consequences will occur. Setting such fires obviously has a social, human dimension, but it also has a modeling dimension. One of the challenges to pursuing such actions that the participant heard in the panel discussion was uncertainty in modeling. He asked Finney if he could expand on what the missing pieces in modeling are that would enable more accurate modeling of fire spread.

Finney responded first that crown fires are not impossible to ignite for prescribed-burning purposes. This approach is used in the Northern Rocky Mountains, and the Canadians are experts at starting such fires with the use of helicopters. The proscription against these kinds of burns is sociopolitical and cultural; technically, they can be performed successfully.

With regard to the modeling, Finney said there are many fine-scale physical unknowns. For example, how long do fuels burn? What is the burning rate of a given fuel complex? How do fuels ignite? How does slope affect wildland fire spread? Are the effects of slope different from those of wind? Finney said there is good evidence that slope and wind have different effects but that the difference is not well understood. The coefficients of the two are currently applied algebraically without a real physical explanation.
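As a minimal illustration of what applying the two coefficients "algebraically" can look like, the sketch below assumes the additive form used in Rothermel-type surface fire spread models, in which a no-wind, no-slope spread rate is multiplied by one plus a wind factor plus a slope factor. The discussion did not name a specific model; the function name, parameter names, and numerical values here are hypothetical and chosen only for illustration.

```python
def spread_rate(base_rate, wind_factor, slope_factor):
    """Rate of spread under an assumed additive wind/slope correction.

    base_rate    -- spread rate with no wind and no slope (any consistent unit)
    wind_factor  -- dimensionless wind coefficient (hypothetical value)
    slope_factor -- dimensionless slope coefficient (hypothetical value)
    """
    if base_rate < 0 or wind_factor < 0 or slope_factor < 0:
        raise ValueError("inputs must be non-negative")
    # Wind and slope enter as a plain sum; no interaction between them is modeled.
    return base_rate * (1.0 + wind_factor + slope_factor)


# Hypothetical values for illustration only (not measurements).
calm_flat = spread_rate(1.0, 0.0, 0.0)
windy_flat = spread_rate(1.0, 4.0, 0.0)
calm_slope = spread_rate(1.0, 0.0, 2.0)
windy_slope = spread_rate(1.0, 4.0, 2.0)

# Under the additive assumption, slope adds the same increment with or without wind.
slope_gain_calm = calm_slope - calm_flat
slope_gain_windy = windy_slope - windy_flat
```

In a form like this, wind and slope are interchangeable contributions to a single multiplier, which is one way to read Finney's point that the combination is a matter of arithmetic and does not explain how the two effects differ physically.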

A bigger question relevant to modeling, for which the answer is unknown, is whether large fires are fundamentally different from small fires or are simply small fires at a larger size. They appear to be different because they have characteristics at a macro-scale that make them partly an atmospheric phenomenon. The burning rate probably changes and is not just a function of the physical layout of the fuels and their loading by size class.

Therefore, Finney concluded, there are dozens of fundamental questions that need to be asked and answered before the answers can be built into models as reliable physical representations. Without this understanding, opportunities are being missed to have simple physical models that are fast and reliable. Whether the inputs can be gathered to model precisely the answers to such questions is yet another question. However, with the advance of technology, it certainly seems that input-gathering is not a current limitation.
