Proceedings of a Workshop

IN BRIEF

June 2017

Examining the Mistrust of Science

Proceedings of a Workshop—in Brief

The Government-University-Industry Research Roundtable held a meeting on February 28 and March 1, 2017, to explore trends in public opinion of science, examine potential sources of mistrust, and consider ways that cross-sector collaboration between government, universities, and industry may improve public trust in science and scientific institutions in the future.

The keynote address on February 28 was given by Shawn Otto, co-founder and producer of the U.S. Presidential Science Debates and author of The War on Science. “There seems to be an erosion of the standing and understanding of science and engineering among the public,” Otto said. “People seem much more inclined to reject facts and evidence today than in the recent past. Why could that be?” Otto began exploring that question after the candidates in the 2008 presidential election declined an invitation to debate science-driven policy issues and instead chose to debate faith and values.

“Wherever the people are well-informed, they can be trusted with their own government,” wrote Thomas Jefferson. Now, some 240 years later, science is so complex that it is difficult even for scientists and engineers to understand the science outside of their particular fields. Otto argued, “The question is, are people still well-enough informed to be trusted with their own government? Of the 535 members of Congress, only 11—less than 2 percent—have a professional background in science or engineering. By contrast, 218—41 percent—are lawyers. And lawyers approach a problem in a fundamentally different way than a scientist or engineer. An attorney will research both sides of a question, but only so that he or she can argue against the position that they do not support. A scientist will approach the question differently, not starting with a foregone conclusion and arguing towards it, but examining both sides of the evidence and trying to make a fair assessment.”

According to Otto, anti-science positions are now acceptable in public discourse: in Congress, state legislatures, and city councils; in popular culture; and in presidential politics. Refuting factually incorrect statements does not necessarily reshape public opinion in the way some expect it to. What is driving this change?

“Science is never partisan, but science is always political,” said Otto. “Science takes nothing on faith; it says, ‘show me the evidence and I’ll judge for myself.’ But the discoveries that science makes either confirm or challenge somebody’s cherished beliefs or vested economic or ideological interests. Science creates knowledge—knowledge is power, and that power is political.”


Otto reviewed the longer narrative of the intersection of science and politics in the United States. He said, “One hundred years ago, science was a source of national pride and a driver of economic growth and job creation. But the dropping of the atomic bomb in 1945 shifted perceptions of science, prompting public doubts about the risks versus the benefits of science. The launch of Sputnik in 1957 transformed that doubt into fear, which set off a space race and a science race.” This rebranded science as a source of American pride, and funding for the National Science Foundation and other federal granting agencies increased.

Otto continued, “With this new dedication of public funding, there was an accompanying inward transition of the scientific community. Scientists no longer engaged with the public the way they once did. Then the publication of Rachel Carson’s Silent Spring in 1962 introduced the idea that science was somehow poisoning us, which captured public imagination and transformed the public discussion about science into one of worry. The change in discourse gave birth to environmental science and the environmental movement. At that same time, advances in reproductive science caused rising discomfort among religious conservatives. The split around environmental and biological advances caused a realignment in U.S. politics. On the right, we see anti-science arguments by legacy industry and religion around issues such as climate change, evolution, reproductive technology, and vaccination; these are largely pro-corporate, anti-regulatory, anti-reproductive control positions. On the left, we see anti-science arguments about hidden dangers to health and the environment, with objections centering on issues like vaccines, GMOs, fluoridation, and EMF pollution—none of them supported by the evidence. These arguments are largely pro-choice, pro-regulation, and anti-corporate.”

Otto described the political schism even more distinctly: “On the right, the theme is: Liberal scientists with a socialist agenda want to control your life and limit your freedom. On the left, the theme is: Impersonal doctors, greedy corporations, and mechanistic scientists hide the real dangers to health, the environment, and our spirits. Most of this political discussion has gone on without the involvement of scientists, who have largely stayed quiet for the last two generations.” He outlined what he sees as three main “battlefields” of science: (1) the identity politics war on science, based on the postmodern argument that science is “just another way of knowing,” especially as it plays out in the journalistic idea that “there is no such thing as objectivity”; (2) the ideological war on science, in which fundamentalist religions, feeling challenged by advances in the biosciences, develop “alternative theories” and “alternative facts”; and (3) the industrial war on science, driven by corporate PR campaigns that work to create uncertainty about science and scientific conclusions, with the goal of forestalling legislation based on scientific evidence that is seen as a threat to companies’ bottom lines.

Otto lays out several battle plans for “winning” the war on science in his book, The War on Science: Who’s Waging It, Why It Matters, What We Can Do About It (Milkweed Editions, 2016). His suggestions include proposals that academics create a national center for science and self-government, that journalists strengthen efforts to hold the powerful accountable to evidence, and that granting agencies require and support public outreach by funding full-time science communicators in each lab. Finally, Otto believes, scientists need to speak out, using inclusive, accessible, and direct language.

Measuring Public Trust in Science

The first presentation on March 1 was offered by Cary Funk from the Pew Research Center, who spoke about Pew’s efforts to measure public trust in science. “The data are mixed,” she said. “There are both positive and negative findings, which leads to some ambiguity and uncertainty in how to interpret the results.”

A nationally representative survey conducted by Pew in 2016 shows that a majority of Americans say science as a whole has had a mostly positive effect on society, a finding consistent with other studies. Funk reported that scientists as an occupational group also score well on public trust: when people were asked how much different groups could be trusted to do what is best for society, medical scientists and scientists came in second, after the military, and fared better than K-12 principals, religious leaders, elected officials, and journalists. “Trust in the scientific and medical community has remained stable over time,” Funk said.

Pew also looked at public opinion across 22 different science-related issues and concluded that there is no single story about what people think about science across all issues. Some science issues are strongly driven by politics and ideology, some are strongly connected with religious beliefs, and others with gender.

As a follow-up, the researchers looked in depth at three areas of science:

- Climate, energy issues, and the environment. The survey showed beliefs about climate change are sharply divided by political party. Nearly every judgment about climate scientists’ understanding of climate change—how well scientists understand whether climate change is occurring, and how well they understand its causes—differs widely depending on political party and ideology. However, there is more agreement—and shared skepticism—about how well scientists know how to address climate change.1

- Food issues, especially organics and genetically modified (GM) foods. There are strong divisions in public beliefs about food, but these beliefs are not divided along political or religious lines. Overall, 55 percent of the population thought organic produce is better for your health than conventionally grown produce, while 39 percent said GM foods are worse for your health than other foods.2

- Vaccines, specifically the MMR vaccine. Overall, 88 percent of Americans said that the benefits of vaccines outweighed the risks. Two-thirds said that the risk of side effects is low. Again, there are no differences by political party or major differences by religion. Two groups—parents with children ages 0 to 4, and younger adults more generally—had comparatively more concern about the risks versus the benefits of vaccines.3

“Overall, there are divides in public opinion, but only one of these divides—climate change—is rooted in political party differences,” said Funk. Pew researchers also looked at how the public perceives scientific consensus across these three issues—estimating what share of scientists agree that vaccines are safe, that climate change is caused by human activity, and that GM food is safe—and compared these perceptions with scientists’ own perception of the consensus. The researchers found much more division among the members of the public in their understanding of the amount of consensus than among the scientists.

Examining Public Mistrust and the Polarization of Science

Dan Kahan of Yale Law School spoke next. He opened by describing research that he and his colleagues conducted using a pie chart, promoted by the American Association for the Advancement of Science (AAAS), showing that 97 percent of climate scientists agree that humans are causing climate change.4

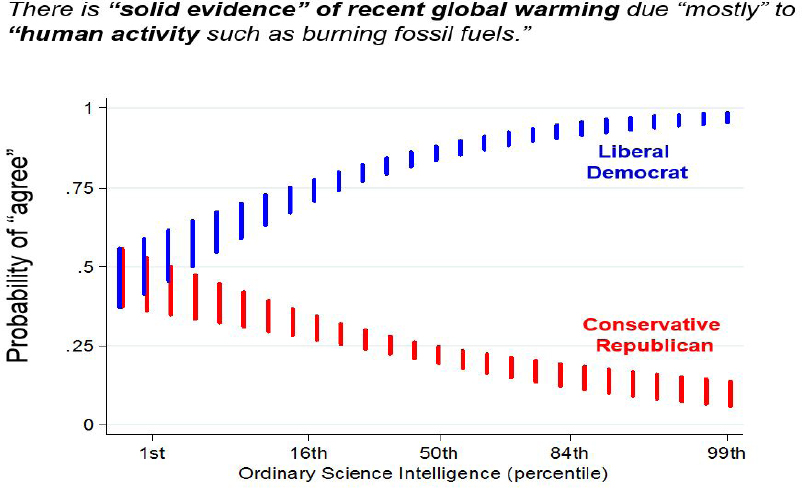

The researchers asked a large sample of people who saw the figure whether they believed that 97 percent of climate scientists agree humans are causing climate change. Fifty-two percent of respondents said yes and 48 percent said no, a breakdown comparable to the results of other surveys that ask people whether they believe humans are causing climate change. According to Kahan, these findings suggest that belief in scientists’ conclusions about climate change is divided along partisan lines. Conservative Republicans were far less likely than Liberal Democrats either to believe what AAAS said about the consensus or to believe in human-caused climate change (see Figure 1).

“Greater science knowledge among subjects led to even greater polarization,” said Kahan. “Among those who were left-leaning, the higher a person’s score on an assessment of science knowledge, the more likely he or she was to believe the AAAS statement. But among the right-leaning, the higher a person’s score on an assessment of science knowledge, the less likely they were to believe AAAS about the consensus. In other words, no one was convinced by AAAS’s pie chart unless they had already believed what the pie chart showed—which shows a lot of distrust of scientists.”

___________________

1 “The Politics of Climate,” Cary Funk and Brian Kennedy, Pew Research Center, October 2016.

2 “The New Food Fights: U.S. Public Divides Over Food Science,” Cary Funk and Brian Kennedy, Pew Research Center, December 2016.

3 “Vast Majority of Americans Say Benefits of Childhood Vaccines Outweigh Risks,” Cary Funk, Brian Kennedy, and Meg Hefferon, Pew Research Center, February 2017.

4 “What We Know” initiative, American Association for the Advancement of Science (AAAS), 2014. http://whatweknow.aaas.org/.

NOTES: [Data from CCP/Annenberg Public Policy Center, Jan. 5-19, 2016. N=2383. Nationally representative sample. “Liberal Democrat” and “Conservative Republican” reflect values for predictors set to those values on 5-point ideology & 7-point-identification items. Colored bars denote 0.95 CIs.]

SOURCE: Dan Kahan, Yale Law School.

Kahan added that there is far less ideological difference on vaccines, in terms of trust in what medical scientists say about the absence of a link between vaccines and autism.

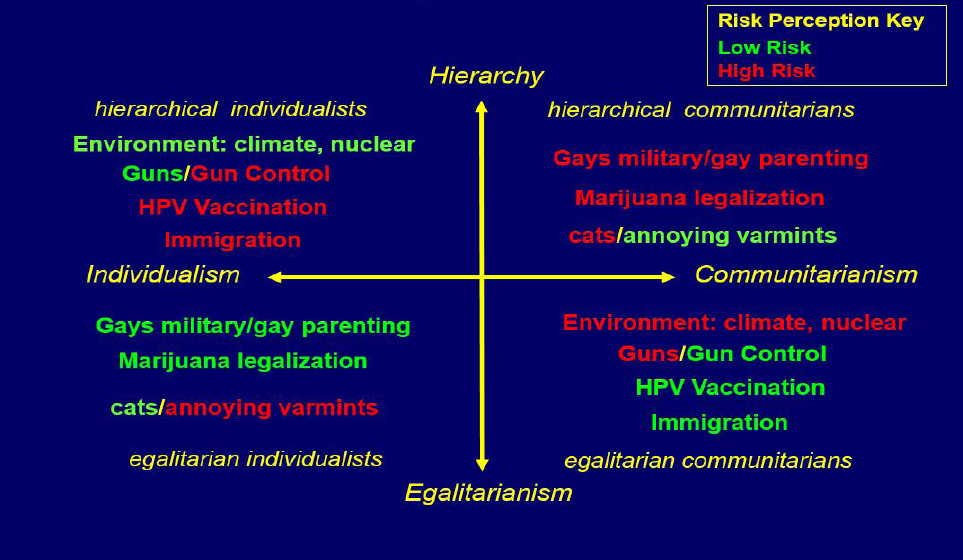

Kahan also explained the results of a study that examined how people’s values about how society should be organized affect their perception of scientists. Kahan and his colleagues asked study subjects whether three highly credentialed, accomplished scientists were experts on global warming, nuclear power, and gun control. They told half the subjects that the featured scientist took a “high-risk” position on the issue (for example, “climate change is happening, humans are causing it, it is serious”) and the other half that the scientist took the “low-risk” position (“there’s too much uncertainty, let’s do nothing and wait and see”). Kahan charted the issues on a grid that plots preferences for individualism versus communitarianism on one axis and for hierarchical versus egalitarian social structures on the perpendicular axis (see Figure 2).

The study found that a person with egalitarian and communitarian leanings was more likely to view a scientist as an expert if the scientist took a high-risk position on climate change, whereas a hierarchical individualist was less likely to do so. Whenever the scientist’s position did not align with the respondent’s own beliefs and cultural group, the scientist was not seen as an expert, regardless of his or her credentials.

“I do not think there is a war against science being waged by the public,” Kahan continued. “Disagreements stem from considerations particular to the issues. There is something about climate change that promotes this controversy, which is not there with vaccines or many other issues. A principal source of conflict over decision-relevant science is that scientific facts get entangled in antagonistic social meanings. Facts get transformed into badges of cultural identity, and people make cultural judgments and inferences about you based on whether you hold certain views. You could be ostracized, for example, if what you say and believe about climate change is out of line with your cultural group’s position.”

SOURCE: Presentation by Dan Kahan, Yale Law School, to GUIRR, March 1, 2017.

According to Kahan, when policy-relevant facts become entangled in antagonistic social meanings, citizens do not actually lose trust in scientists; rather, they lose the practical ability to recognize what scientists know. None of the groups think that what they believe is opposed to the scientific consensus; it is important to them to be on the side of the scientists. “Ending polarization over these issues demands that institutions and norms protect the science communication environment from antagonistic social meanings and keep that environment pristine, so that people can get the benefits of what scientists know.”

The next presentation was given by Gordon Gauchat of the University of Wisconsin-Milwaukee, who noted that people have limited time and energy to spend thinking about scientific knowledge. “Unlike interest groups who are engaged in these issues, most people do not really have strong attitudes about science or science issues, unless those issues are useful for making distinctions about cultural identities. People are good at drawing social cartographies—figuring out who are allies and who are adversaries. The question that remains unanswered is whether science and scientists have become intertwined with these broader cultural identities, leading to the polarization of science.”

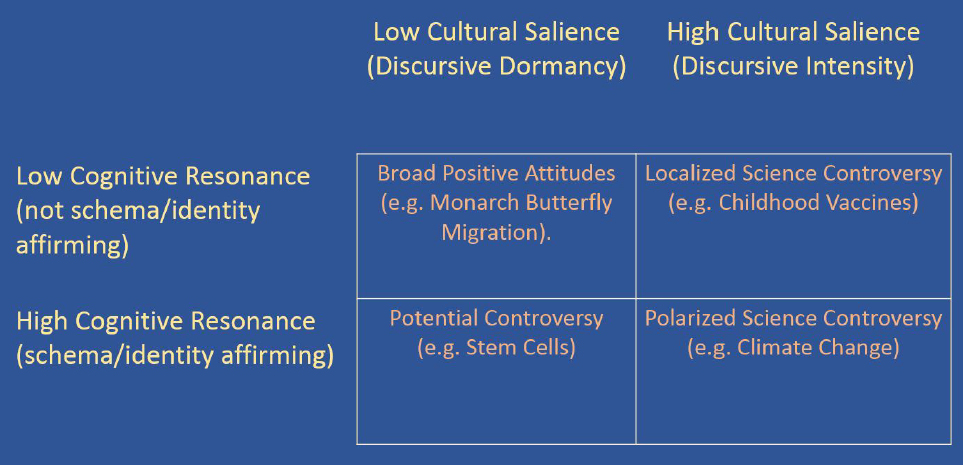

“We know that science has become increasingly relevant to public policy, so interest groups present these issues in public discourse in order to affect policy,” Gauchat said. “Whether or not an issue becomes politicized and polarizing depends on (1) whether there are interest groups with resources trying to create a controversy, and (2) whether the issue matches some sort of cognitive schema (a tool used in psychology and cognitive science to represent the relationships between categories of thought patterns and information) that people use to understand their social world. When those two things happen simultaneously, we should worry” (see Figure 3).

“It is possible for general attitudes toward science to fall into a politicized, polarized situation,” said Gauchat. “Political identity is an ever-more prominent way for people to map the social world. Face-to-face networks are changing, and people are moving around more, so perhaps political identity is filling in some of the social gaps. Also, information is presented to people on a global scale—about subjects people have no immediate contact with—so they need a way to make sense of all of this.”

SOURCE: Presentation by Gordon Gauchat, University of Wisconsin-Milwaukee, to GUIRR, March 1, 2017.

“In gauging very broad attitudes toward science being positive or negative (after accounting for measurement error and controlling for things like education, income, race, and gender), the strongest association among all indicators is political left-right ideology,” said Gauchat. “So the case is not quite closed on the question of general attitudes toward science.”

Gauchat called for more attention to be paid to the types of schemas people use to map the world, as those schemas are often the cause of polarization. “We cannot separate out the practice of science from its relationship to governance and regulation; the public is going to see them as deeply intertwined when they care about an issue.”

Science Communication and New Media

The next panel explored science communication and new media. The first presentation was delivered by Rick Borchelt of the U.S. Department of Energy. “Whether or not people love scientists is not the problem that matters,” said Borchelt. “The problem that matters is: How do we guarantee better policymaking? Answering that requires a fundamental understanding of politics, and not of science. Policy and science are two different things—generating policy is a democratic action involving the amelioration of a number of different agendas and viewpoints, and scientists cannot always rule that discussion. The problem is on the policy-making side; we need to figure out how to better integrate science into the political process. Studying science does not help you do that, but studying politics does.”

Borchelt described three types of trust, starting with compounded trust, which arises when science is placed in proximity to what the public sees as a less-accomplished actor. Borchelt clarified, “If a quote in a news release is attributed to a university research scientist, the credibility of the news release is very high. If you attribute the same quote to a government scientist, expect it to drop about 20 points in credibility; for an industry scientist, expect to drop below 50 percent in credibility. It is the same quote, but the identity of the actor affects how people respond to the quote and to the news release content.”

Appropriated trust occurs when someone who is not a scientist takes on the mantle of science and invokes research he or she has read. “Trust always flows to the lowest level, so a person who talks about science attaches his or her credibility level to that science, not the other way around. Because of this, a good PR person will connect media and others to the actual scientists.”

Amplified trust occurs when consensus makes trust strong. “When consensus is perceived to weaken, so, too, does trust in the evidence. A similar thing happens with the embargo system, which drives all news stories on a study or subject to come out at once; the message is amplified because it is repeated by a lot of parties at once. But the embargo system is diminishing; new media is fragmenting and disaggregating the messages coming out of the sciences.”

Susan Matthews from Slate discussed two recent batches of news stories as examples of the kind of articles driving public whiplash and confusion about science. One set of articles was based on the experience of a single patient who had passed kidney stones after taking a roller coaster ride, and a subsequent experiment by his urologist, who took a 3D-printed kidney on a roller coaster to test the idea and saw the same result. The media reported on this experiment with the main message, “If you have a kidney stone, go on a roller coaster, and it will cure you.”

Another group of articles reported on power posing, following a researcher’s suggestion that standing tall before meetings raised testosterone levels, which prompted an alpha-male response in the brain and made the poser more confident. This theory has since been soundly debunked, but at the time, a typical headline about this research was: “This simple power pose can change your life and career.”

“While such stories may engage the public in reading about science, and help scientists get support for their work, whether the stories work in terms of telling readers something true about the world is murkier,” said Matthews. She explained how Slate covered these two stories, focusing its coverage on debunking the misinformation circulated in earlier news articles.

Matthews closed by explaining what scientists can do to get better information out to the public: (1) Pitch stories to editors. “The best story to write is not one on your research and the study you just published. The best stories are when you are sick of the media reporting on something wrongly, and you want to help them set the record straight.” (2) Be a good and patient source. “Tell a journalist if you believe that the story they are chasing is not the true story; or, if they do not have a good understanding of the full body of research, give them the information they need to see the bigger picture.” (3) Fix the system. “Right now, many journalists in online media have incentives to churn out articles that will get clicks to drive ad revenue. It is the PR people’s job to get good PR for their institutions. It is the researchers’ job to do good research and have it seen by the press and to get more grants and tenure. The entire system is incentivized to reward behavior whose end result is not necessarily conveying what is true about the world. We need to change the incentives for science coverage by the media.”

Joe Palca of National Public Radio (NPR) spoke next, and explained how his work as a science journalist has evolved during his career and what continues to motivate him. “I went into science journalism because I thought it was important for the public to understand something about science, given the decisions and political judgments they needed to make in their lives,” said Palca. “I wanted to help the public understand science so they could make decisions in a conscious way, and that is what I believed for a long time. But now, my belief in that mission has changed, mostly based on Dan Kahan’s work. I think that the information deficit model—‘If they just understood, they will get it, and we will not have to worry about anything’—is not the case. This information deficit model is dead; people are interested in information, but it is not what they are using to make decisions.”

Palca noted that the legacy media are changing. In his view, this leaves a vacuum that may offer a particular opportunity for young scientists to take responsibility for communicating their work to the public. He said in closing, “Instead of one journalist reaching a million people, we need 1,000 science communicators reaching 1,000 people.” He is trying to assemble a group of about 200 graduate students in the sciences who like the idea of communicating with the public and are willing to talk to people one-on-one, outside of traditional academic spaces.

Organizing Science to Regain Trust in Today’s Political Climate

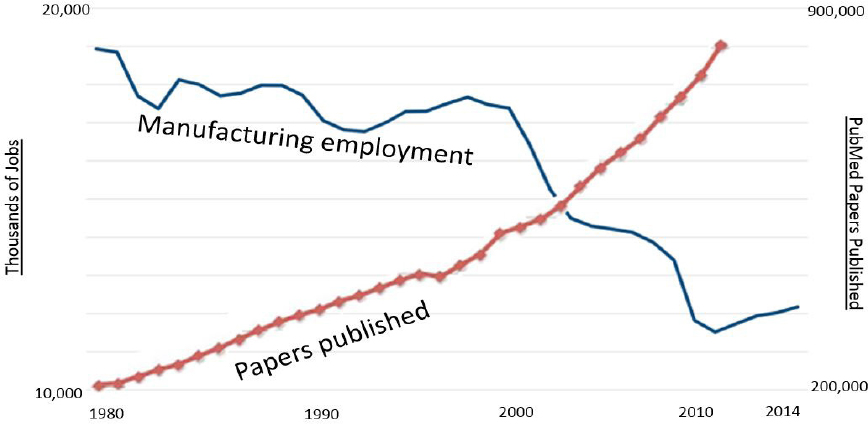

The next presentation was offered by Dan Sarewitz of Arizona State University, who explored the question of whether science should be trusted. He showed a graph depicting the decline of manufacturing jobs juxtaposed against the increase in scientific peer-reviewed articles published over the past 40 years (see Figure 4).

SOURCE: Presentation by Dan Sarewitz, Arizona State University, to GUIRR, March 1, 2017.

He acknowledged that it was simply a playful correlation, but said there is something more to that juxtaposition. According to a survey conducted a few years ago on how views of technological risk differ between scientists and the public, scientists are not especially concerned about the implications of new technologies for jobs, but the non-scientist public is. New waves of transformational technologies supported by scientific and engineering breakthroughs may create new opportunities for growth, but they also disrupt job markets regionally and nationally.

“The connection between science and jobs is part of the canon of why we support science,” Sarewitz said. “The claim that investments in science are connected to jobs and well-being is one of the justifications for public spending on science in the postwar era. Vannevar Bush in his seminal 1945 report Science: The Endless Frontier argued that investments in basic research would pay off in terms of national defense, health, and jobs. And a lot of that happened, though not necessarily in the idealized way that Bush envisioned.”

“Now, of course, we are racked with discomfort, uncertainty, and political turmoil around the questions of what will create wealth and jobs and well-being in the future, and what will allow the benefits of these gains to be broadly distributed. If we are truly interested in maintaining the goodwill of the public, how do we keep delivering on the promise Vannevar Bush made about the connection between public investments in science and jobs and well-being?”

An article Sarewitz wrote for Nature immediately after the 2016 election explored the question: What might a science policy strategy that addresses the economic disenfranchisement and deindustrialization of parts of the country look like? According to Sarewitz, “This question is important for us to think about if we want to continue making claims about the connection between science and public welfare.”

Sarewitz made a plea to the scientific community to revive a more sophisticated form of the foundational political debate around science policy that happened between 1945 and 1950 over the creation of the National Science Foundation. The debate—between Vannevar Bush and Harley Kilgore, a populist Democrat from West Virginia—was over how public institutions ought to understand their investments in science and technology.

“For Bush it was about the free play of intellects to work on subjects of their own choice; Kilgore did not disagree with that, but he also thought it should be socially purposeful research aimed to address major problems such as areas of entrenched poverty. Bush won decisively in terms of the way we think about science today—that curiosity and intellectual autonomy will lead to solutions to society’s problems.”

“Informed by 30-40 years of knowledge and theories and case studies and data about how innovation really works and how it connects to the creation of wealth and well-being and jobs, I think we need to revisit this debate,” Sarewitz argued. “In particular, how do we organize knowledge creation and its applications in a regional sense, so that the benefits are not just felt by certain parts of the country?” As examples, he pointed to Manufacturing USA, which has hubs that bring together the private sector and universities and municipalities to think about how to foster innovation, and the Defense Department’s environmental science and technology program, which showed how problems of environment and problems of defense could be brought together in counterintuitive ways.

“If we are really going to take seriously the issue of science contributing to quality of life in a country that’s highly divided, we are going to need to be much more creative in terms of the type of institutional arrangements that we embrace,” said Sarewitz. “If we do not take that seriously, then the trust issue may raise its head in a way that will be very difficult for us to come back from.”

Back to Basics: The Role of Science in Society

The day’s final presentation was given by Arati Prabhakar, former director of the Defense Advanced Research Projects Agency (DARPA), who spoke about how her experience at DARPA, at the National Institute of Standards and Technology (NIST), and in Silicon Valley has shaped her ideas concerning the future of science. “The frameworks we have for answering big questions and thinking about technology are still rooted in the middle of the last century,” she said. “It is time to recast those questions for the years ahead, including the fundamental question: what is the role of science and technology in society?”

Like Sarewitz, Prabhakar also referenced Vannevar Bush’s Science: The Endless Frontier, and was struck by how much has changed in the 70 years since its publication. According to Prabhakar, “Much of the rationale Bush laid out was based on the idea that the United States had relied on Europe for far too long and needed to develop its own capacity. What followed was many decades of the United States having a preeminent role in science and technology.”

“But while we are still very strong, we are no longer alone,” she said. “The pace of technology development has also changed dramatically, as has its distributed nature. So has where funding comes from; over many decades, the share provided by public and private sectors has shifted. In earlier years, the preponderance of the R&D budget was provided by the federal government; now, two-thirds of the funding comes from the private sector.”

Given these shifts, as well as shifting societal needs and opportunities, Prabhakar offered some questions for discussion.

- How are science and technology linked to job creation? “One major reason for public sector investment in science is to create jobs and benefit the economy. Much of public investment in R&D is predicated on the idea that science and technology will go in one side of a black box and growth of good jobs for Americans comes out the other side. There is evidence that this happened for a period of time, but is this connection still working?”

- Is the silver-bullet model of defense innovation producing the same benefits? National security and defense have historically been huge drivers of science and technology innovation. “Traditionally we thought about defense technologies from the perspective of silver bullets—that by working hard for a period of time you could create an advanced technology that would give the United States an advantage for decades—which worked for stealth and precision strike and other technologies. In today’s globalized world and at today’s pace of technology innovation, is that silver-bullet model—the advanced tech that holds everyone else at bay for decades—still the right one?”

- Do the measurements of innovative success reflect the kind of innovation system we want? “Is the debate about reproducibility in the biomedical community really about the reproducibility of research results, or is it about the fact that an enormous number of publications simply never get used? If the only purpose they serve is tenure and keeping track of people’s professional progress, are they becoming unanchored from public purpose? Does measuring success by these metrics reflect the values of our national research enterprise?”

- What is the right level of R&D funding? “Bush articulated the intrinsic value of having greater knowledge about the world. If that is the sole rationale for public investment, should the sciences be funded at the same level as the arts, which exist to enhance the human experience? Factoring in inflation and the growth in GDP, we are now funding public R&D at 10 to 100 times the level Bush envisioned. Is it time to take a fresh look at the entire R&D system?”

Raising these questions is important, but Prabhakar cautioned that some elements of our strategy must not change. One is broad exploratory research without immediate ties to an obviously known problem. Another is the set of important implicit and explicit partnerships between the public and private sectors. She closed, “Ultimately, the most important thing that has not changed since Vannevar Bush’s era is that the fruits of science and technology, while not an unalloyed good, are forces that advance not only our society and our country, but also humanity.”

DISCLAIMER: This Proceedings of a Workshop—in Brief was prepared by Sara Frueh as a factual record of what occurred at the meeting. The statements made are those of the author or individual meeting participants and do not necessarily represent the views of all meeting participants, the planning committee, or the National Academies of Sciences, Engineering, and Medicine.

REVIEWERS: To ensure that it meets institutional standards for quality and objectivity, this Proceedings of a Workshop—in Brief was reviewed by Jeffrey Alexander, RTI International; Holly Falk-Krzesinski, Elsevier; and Sarah Rovito, Association of Public and Land-grant Universities. The review comments and draft manuscript remain confidential to protect the integrity of the process.

PLANNING COMMITTEE: Bradley W. Fenwick (Chair), Elsevier, Inc.; Rachel E. Levinson, Arizona State University; Robert Powell, University of California, Davis Health System.

STAFF: Susan Sauer Sloan, Director, GUIRR; Megan Nicholson, Associate Program Officer; Claudette Baylor-Fleming, Administrative Coordinator; Cynthia Getner, Financial Associate.

SPONSORS: This workshop was supported by the Government-University-Industry Research Roundtable Membership, National Institutes of Health, and the United States Department of Agriculture.

For more information, visit http://sites.nationalacademies.org/PGA/guirr/PGA_177700.

Suggested citation: National Academies of Sciences, Engineering, and Medicine. 2017. Examining the Mistrust of Science: Proceedings of a Workshop—in Brief. Washington, DC: The National Academies Press. doi: https://doi.org/10.17226/24819.

Government-University-Industry Research Roundtable

Policy and Global Affairs

Copyright 2017 by the National Academy of Sciences. All rights reserved.