4

Strategies to Prepare for and Mitigate Large-Area, Long-Duration Blackouts

INTRODUCTION

This chapter focuses on strategies that can help to avoid, prepare for, and reduce the likelihood, magnitude, and duration of large-area, long-duration outages.1 Although this report is predominantly concerned with large-scale outages, many of the preventative approaches described in this chapter also decrease the likelihood of small localized outages and can help limit the spread and impact of small disruptions before major recovery efforts (see Chapter 6) are required.

This chapter concentrates on two broad aspects of improving grid resilience, considering both physical and cyber impairments. The first, planning and design, describes actions to enhance resilience that can be taken well before a potentially severe physical or cyber event occurs. The second, operations, describes how the grid is operated and strategies to enhance resilience during a severe event. Certainly there is overlap between these two, and the dividing line can blur as the planning time horizon moves closer to the real-time world of operations.

PLANNING AND DESIGN

The electric utility industry has a long history of planning, and the present high levels of reliability attest to its success in this area. However, the majority of this planning and design work has been directed toward increasing system reliability, while focusing on designing the system for optimal operations during normal conditions and creating the ability to respond to events similar to those that have been previously encountered by grid operators. Planning and design for resilience is different, with challenges that touch on essentially all aspects of the electric grid.

A resilient design requires a holistic consideration of both the resilience of the individual components that comprise modern electric grids and the resilience of the system as a whole. There is, of course, overlap between the two: system resilience can be enhanced by improved component resilience. However, improved resilience also involves consideration of the system as a whole, including not just the electric infrastructure itself, but also the interdependent infrastructures such as natural gas infrastructure, support infrastructure for the supply of other key inputs, and the commercial communications systems used in operating the grid. Last, improved resilience requires regulatory consideration of how upgrades will be funded.

Component Hardening and Physical Security

Creating reliable and secure components, investing in system hardening, and pursuing damage prevention activities are all strategies that improve the reliability of the grid and likewise play a role in preventing and mitigating the extent of large-area, long-duration outages. Utilities are generally aware of local hazards; however, these hazards may change over time, and utilities may not be aware of the compound vulnerabilities that become increasingly possible. Strategies used to address these hazards include appropriate design standards, siting methods, construction, maintenance, inspection, and operating practices. For example, a transmission line traversing high mountains must be designed for heavy ice loading, which may not be a design consideration for infrastructure located in desert environments. Design considerations for generation facilities, substations, transmission lines, and distribution lines frequently include environmental conditions such as extreme heat, cold, ice, and floods among other known threats. Utilities have less experience in design and hardening for uncommon threats such as geomagnetic disturbance (GMD) or electromagnetic pulse (EMP); nonetheless, these have been the focus of increasing attention and strategies to reduce system vulnerability.

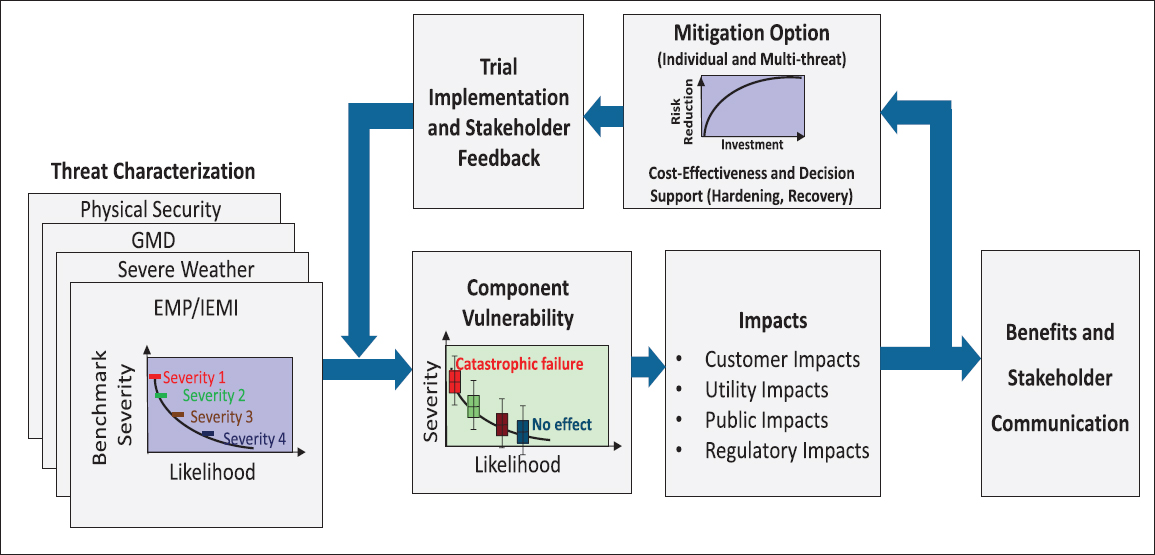

Utility investment in system hardening is typically informed by a risk-based cost-performance optimization that strives to be economically efficient by investing in mitigation strategies with the greatest reduction in risk at the lowest cost (Figure 4.1). In principle, an infinite amount of money could be spent hardening and upgrading the system with costs passed on to ratepayers or taken from shareholder returns. However, utilities and their regulators (or boards) are typically conservative in these investments. All mitigation strategies have cost-performance trade-offs, and it may be difficult to estimate the actual reduction in risk or improvement in resilience associated with a specific action. In most cases, an electricity system that is designed, constructed, and operated solely on the basis of economic efficiency to meet standard reliability criteria will not be sufficiently resilient. If some comprehensive quantitative metric of resilience becomes available, it should be combined with reliability metrics to select a socially optimal level of investment. In the meantime, decision makers must employ heuristic procedures to choose a level of additional investment they believe will achieve a socially adequate level of system redundancy, flexibility, and adaptability.

___________________

1 Such events overlap with what the North American Electric Reliability Corporation (NERC) calls a “severe event,” defined as an “emergency situation so catastrophic that complete restoration of electric service is not possible” (NERC, 2012a).

Finding: Design choices based on economic efficiency using only classical reliability metrics are typically insufficient for guiding investment in hardening and mitigation strategies targeted toward resilience. Such choices will typically result in too little attention to system resilience. If adequate metrics for resilience are developed, they could be employed to achieve socially optimal designs. Until then, decision makers may employ heuristic procedures to choose the level of additional investment they believe will achieve socially adequate levels of system redundancy, flexibility, and adaptability.
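The risk-based cost-performance ranking described above can be illustrated with a minimal sketch. It assumes each mitigation option has a known cost and a known reduction in expected annual loss, which the text notes is difficult to estimate in practice. The option names, dollar figures, and the `prioritize` helper are hypothetical and chosen only for illustration. The greedy ranking is itself a heuristic, not an optimal portfolio selection.

```python
from dataclasses import dataclass


@dataclass
class Mitigation:
    name: str
    cost: float            # hypothetical capital cost, $ millions
    risk_reduction: float  # hypothetical cut in expected annual loss, $ millions/yr


def prioritize(options, budget):
    """Greedy heuristic: fund options in order of risk reduction per dollar."""
    ranked = sorted(options, key=lambda m: m.risk_reduction / m.cost, reverse=True)
    chosen, spent = [], 0.0
    for m in ranked:
        if spent + m.cost <= budget:  # skip anything that no longer fits
            chosen.append(m)
            spent += m.cost
    return chosen, spent


# Illustrative numbers only; none of these values come from the report.
options = [
    Mitigation("vegetation management", 2.0, 1.5),
    Mitigation("substation flood wall", 5.0, 2.0),
    Mitigation("selective undergrounding", 20.0, 3.0),
    Mitigation("dead-end structures", 4.0, 1.0),
]
chosen, spent = prioritize(options, budget=10.0)
for m in chosen:
    print(f"{m.name}: {m.risk_reduction / m.cost:.2f} risk reduction per $")
```

A ranking of this kind captures only the risks that have been quantified. Rare, high-impact events that are missing from the loss estimates would not influence the result, which is one way to read the finding that classical reliability metrics tend to underweight resilience.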

Hardening and mitigation strategies can improve electricity grid reliability and resilience, and utilities routinely employ many techniques when deemed cost appropriate. Common examples are described in the following paragraphs.

Vegetation Management

Many outages, particularly those in the distribution system, are caused by trees and vegetation that encroach on the right-of-way of power lines. Overhead transmission lines are not directly insulated and instead require minimum separation distances for air to provide insulation. If trees or objects are allowed to get too close and draw an arc, short circuits of the energized conductor can result. When they are heavily loaded, transmission line conductors heat up, expand, and sag lower into the right-of-way, which increases the likelihood of a fault at times of peak transmission loading. Therefore, inadequate vegetation management in transmission line rights-of-way is a common cause of blackouts. On the lower-voltage distribution system, separation requirements are much smaller, and line sag is less of a consideration. However, during high wind or icy conditions, falling trees and limbs can either create a short circuit or tear down the wires themselves. This can be extremely hazardous when the energized wires are in close proximity to people. So while there are different vegetation management practices for transmission (clearing vegetation below the wires) and distribution (clearing vegetation from around and above the wires), vegetation management is a key factor that influences the reliability of the transmission and distribution (T&D) system. Following the widely publicized blackout of August 14, 2003, new national standards for vegetation management of transmission lines were implemented. However, the vegetation management practices for distribution utilities vary dramatically, influenced by a variety of factors including geography, public sentiment, and regulatory encouragement.

NOTE: GMD, geomagnetic disturbance; EMP, electromagnetic pulse; IEMI, intentional electromagnetic interference.

SOURCE: Courtesy of the Electric Power Research Institute. Graphic reproduced by permission from the Electric Power Research Institute from presentation by Rich Lordan to the NCSL-NARUC Energy Risk & Critical Infrastructure Protection Workshop, Transmission Resiliency & Security: Response to High Impact Low Frequency Threats. EPRI, Palo Alto, Calif.: 2016.

Undergrounding

Undergrounding of T&D lines is often more expensive than building aboveground infrastructure. Outside of dense urban environments, T&D assets are typically not installed underground unless land constraints, aesthetics, or other community concerns justify the cost. Undergrounding protects against some threats to the resilience of the electric grid, such as severe storms—a leading cause of outages—but it does not address all threats (e.g., seismic or flooding) and may even make recovery more challenging. Furthermore, undergrounding may be impractical in some areas, based on geologic or other constraints (e.g., areas with a high water table). Therefore, the decision of whether or not to underground T&D assets varies considerably based on local factors; while undergrounding may have resilience benefits in some circumstances, it does not offer a universal resilience benefit.

Reinforcement of Poles and Towers

Building the T&D network to withstand greater physical stresses can help prevent or mitigate the catastrophic effects of major events. Structurally reinforcing towers and poles (referred to as robustness) is more common in areas where heavy wind or ice accumulations are possible, but the degree to which they are reinforced presents a cost trade-off with clear resilience implications.

Dead-End Structures

To minimize cost, transmission towers are often designed to support only the weight of the lines, with lateral support provided by the lines themselves, which are connected to adjacent towers. Thus, if one tower is compromised, it can potentially create a domino effect whereby multiple towers fail. To limit this, utilities install dead-end structures with sufficient strength to stop such a domino effect. However, there is a cost trade-off associated with how often such structures should be installed (e.g., one dead-end structure every 4 miles versus one every 10 miles).

Water Protection

Flooding is often a greater concern for substations and generation plants than transmission and distribution lines, and storm surge is particularly challenging for some coastal assets. When siting new facilities, it is possible to avoid low lying and flood prone areas. There are, however, many legacy facilities located in high hazard areas. Given that much of the population lives in coastal areas, it is impossible to address this risk completely through siting alone. Common techniques include installing dikes and/or levees, if land permits, or elevating system components above flood levels, which can be expensive when retrofitting legacy facilities.

Emerging Strategies for Geomagnetic Disturbance and Electromagnetic Pulse

There are various electromagnetic threats to the power system, including GMD (naturally occurring) and EMP (man-made). Both of these threats have resilience considerations at the component level and from a system-wide perspective. While they have different mechanisms of coupling to the grid and inducing damage, they are similar in that they can damage high-value assets, such as transformers. The EMP threat is unique in that it can directly incapacitate digital equipment such as microprocessors and integrated circuits that are not military hardened. NERC has new planning requirements for mitigating GMD (NERC, 2016a), and various commissions (e.g., the Commission to Assess the Threat to the United States from Electromagnetic Pulse [EMP] Attack2) have explored the degree to which it is appropriate to harden civilian infrastructure to address the EMP threat.

Physical Security

The immense size and exposed nature of electricity infrastructure makes complete physical protection from attacks impossible; thus, there is a spectrum of physical security practices employed across the grid. Utilities selectively protect critical system components, and NERC standard CIP-014-2 (NERC, 2014a) is enforced on the transmission system. Distribution systems are outside the scope of NERC jurisdiction. Because many generation facilities are staffed, they are relatively well protected. Additional federal requirements apply to protecting nuclear and other key assets, such as federally owned dams. Other assets essential to the operation of the system, such as control centers, can resemble bunkers and are well guarded. Many substations are

___________________

2 Reports from the Commission to Assess the Threat to the United States from Electromagnetic Pulse (EMP) Attack can be found at http://www.empcommission.org, accessed August 2, 2017.

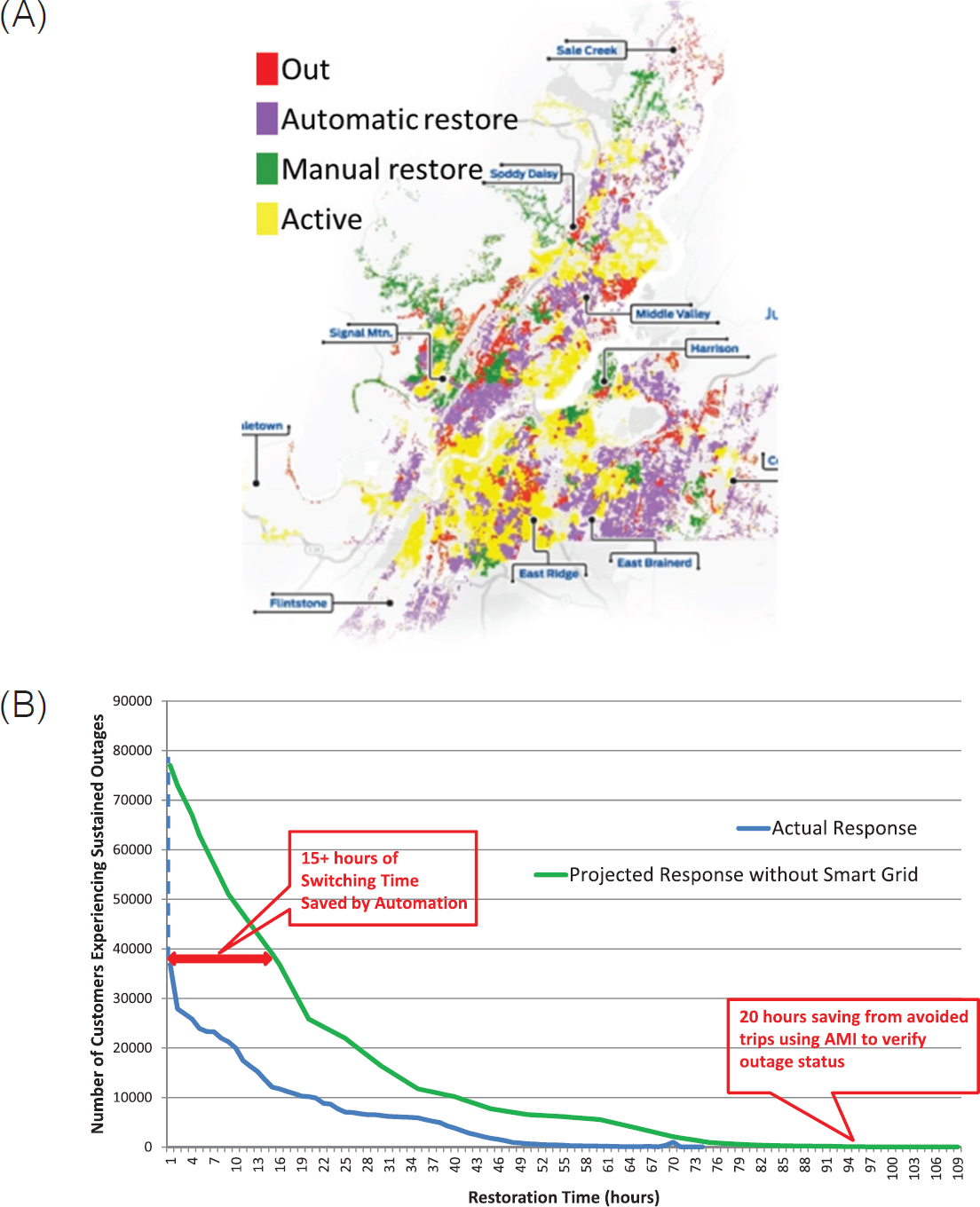

NOTE: AMI, advanced metering infrastructure.

SOURCE: Glass (2016).

less protected and have only surveillance, locks, and other deterrents. However, historical events such as the Metcalf incident (see Box 3.1) and a recent “white hat” break-in and hack of a utility shared on YouTube call attention to the limitations of these strategies. Alternative strategies include redesigning substation layout to minimize exposure, deploying barriers, protecting information about the location of critical components, and improving adoption of best practices and standards (ICF, 2016). Examples of these practices learned from the Metcalf incident include greater emphasis on outside-the-fence measures, including camera coverage, lighting, and vegetation clearing.

Distribution System Resilience

As noted in Chapter 2, the wires portion of the electric grid is usually divided into two parts: the high-voltage transmission grid and the lower-voltage distribution system. The transmission system is usually networked, so that any particular location in the system will have at least two incident transmission lines. The advantage of a networked system is that loss of any particular line would not result in a power outage. In contrast, the typical distribution system is radial (i.e., there is just a single supply), although networked distribution systems are often used in some urban areas (NASEM, 2016a). Most aspects of resilience to severe events ultimately involve the transmission system; however, improved distribution system resilience can play an important role.

There is wide variation in the level of technological sophistication in distribution systems. The most advanced distribution utilities have dedicated fiber-optic communications networks, are moving away from the traditional radial feeder design toward more networked architectures, and have sectionalizing switches that allow isolation of damaged components. In response to damage on a distribution circuit, these systems automatically reconfigure the distribution network to minimize the number of customers affected. In one notable example, shown in Figure 4.2 and detailed in Box 4.1, the Chattanooga Electric Power Board (EPB) installed significant distribution automation technology with a $111 million grant from the Department of Energy (DOE) through its Smart Grid Investment Grant program (authorized by the 2009 American Recovery and Reinvestment Act). The sophisticated and extensive project entailed installing a dedicated fiber-optics communications system, smart distribution switches, advanced metering infrastructure, and other equipment to automate restoration (DOE, 2011). It decreased restoration times for EPB’s customers, increased savings to EPB, and demonstrated possibilities for other utilities to emulate. However, pursuing a closed-loop fiber-optic system may be a challenge in other utility service areas that are larger geographically and in terms of population. While fiber-optic communication offers an advantage, it is not required to integrate the other technologies used at EPB. However, the deployment of a fiber-optic system lays the foundation for technologies that result in very high data exchange rates, such as phasor measurement units (PMUs), and offers the ability to provide broadband access to the community.
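The automatic reconfiguration described above can be sketched as a small graph problem: open the sectionalizing switches around the damaged section, then close a normally open tie switch to pick up healthy sections from a neighboring feeder. The sketch below is a simplified illustration, not a description of EPB's system. The section names, switch layout, and the `isolate_and_restore` helper are hypothetical, and feeder capacity limits and protection coordination are ignored.

```python
from collections import deque


def energized(sections, switches, sources):
    """Sections reachable from any source through closed switches (BFS)."""
    adj = {s: [] for s in sections}
    for (a, b), closed in switches.items():
        if closed:
            adj[a].append(b)
            adj[b].append(a)
    seen, queue = set(sources), deque(sources)
    while queue:
        node = queue.popleft()
        for nxt in adj[node]:
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen


def isolate_and_restore(sections, switches, sources, faulted):
    """Open switches bordering the faulted section, then close tie switches
    that connect a live section to a healthy de-energized one."""
    state = dict(switches)
    for a, b in state:
        if faulted in (a, b):
            state[(a, b)] = False  # isolate the damaged section
    changed = True
    while changed:
        changed = False
        live = energized(sections, state, sources)
        for (a, b), closed in state.items():
            if closed or faulted in (a, b):
                continue
            if (a in live) != (b in live):  # exactly one side is live
                state[(a, b)] = True
                changed = True
                break
    return state, energized(sections, state, sources)


# Hypothetical two-feeder layout: S1-A-B-C and S2-D, with a normally open tie C-D.
sections = ["S1", "A", "B", "C", "S2", "D"]
switches = {("S1", "A"): True, ("A", "B"): True, ("B", "C"): True,
            ("S2", "D"): True, ("C", "D"): False}
state, live = isolate_and_restore(sections, switches, ["S1", "S2"], faulted="B")
out_of_service = set(sections) - live
print(sorted(out_of_service))
```

In this toy layout only the faulted section B stays out of service. Without the tie switch, section C would also lose supply until B is repaired. Closing a tie only when exactly one side is live keeps the network radial after switching.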

A distribution fault anticipation application based on “waveform analytics” (Wischkaemper et al., 2014, 2015) is another example of a technology that could be applied today. The key idea behind this approach is to utilize fast sensing of the distribution voltages and currents to detect precursor waveforms, which indicate that a component on a distribution circuit will soon fail. This is in contrast to the traditional approach of waiting for the component to fail and cause an outage before doing repairs. Examples of problems that can be detected by such pre-fault waveform analysis include cracked bushings, pre-failure of a capacitor vacuum switch, fault-induced conductor slap (in which a fault current in the distribution circuit induces magnetic forces in another location, causing the conductors to slap together), and pre-failure of clamps and switches.

Finding: While many distribution automation technologies are available that would enhance system resilience, their cost of deployment remains a barrier, particularly in light of challenges in monetizing the benefits of such installations.

Recommendation 4.1: Building on ongoing industry efforts to enhance system resilience, the Department of Energy and utility regulators should support a modest grant program that encourages utility investment in innovative solutions that demonstrate resilience enhancement. These projects should be selected to reduce barrier(s) to entry by improving

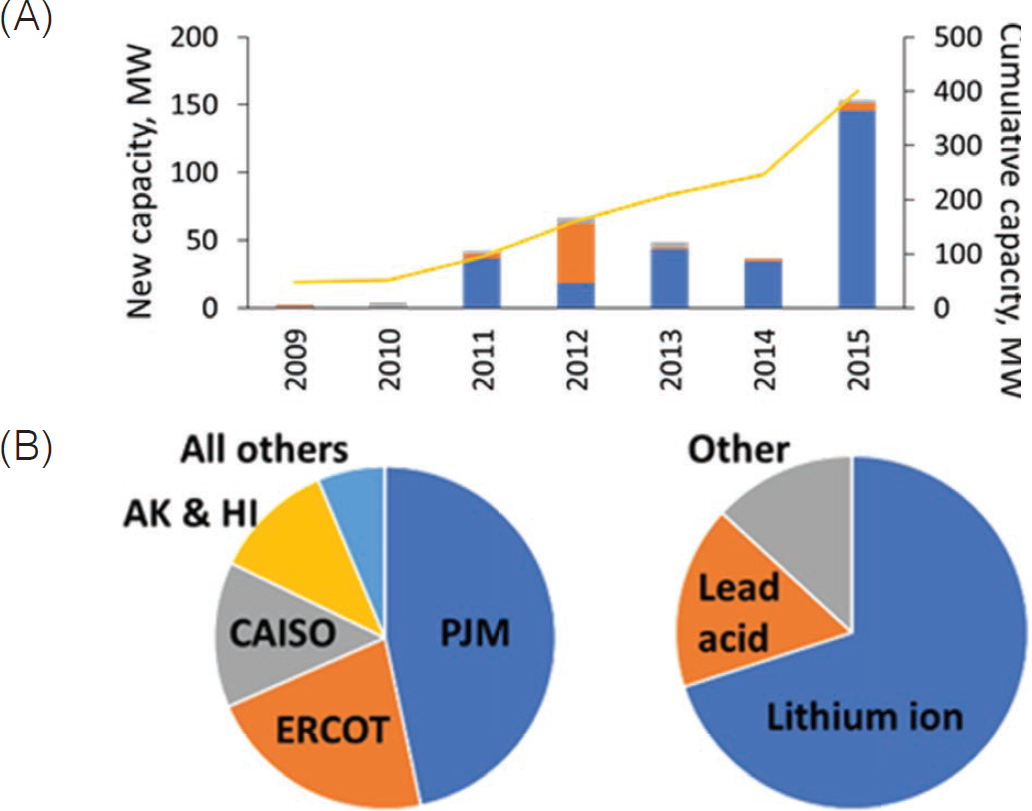

NOTE: CAISO, California Independent System Operator; ERCOT, Electric Reliability Council of Texas.

SOURCE: Data from Hart and Sarkissian (2016).

regulator and utility confidence, thereby promoting wider adoption in the marketplace.

Utility-Scale Battery Storage

Utility-scale battery storage is a relatively new tool available to operators to manage power system stability, which can potentially help prevent or mitigate the extent of outages. Of course even large batteries can only supply power for periods of hours, but such systems have value in other ways. They can be used to dispatch large amounts of power for frequency regulation, potentially preventing propagation of system disturbances, and provide additional flexibility for managing stability in lieu of demand response or load shedding. Installations of large utility-scale batteries (as opposed to behind-the-meter batteries) have increased significantly in several regions of the United States over the past 5 years. The DOE Global Energy Storage Database has information on more than 200 utility-scale battery projects in the United States, with more than 400 MW installed or approved capacity by the end of 2015 (Figure 4.3) (Hart and Sarkissian, 2016). This data set may underestimate such storage capacity.3 Other areas leading installation are in the Electric Reliability Council of Texas (ERCOT) and in California, driven largely by state policies (NREL, 2014). The small (relative to the scale of the three North American interconnections) Railbelt Electric System in Alaska was an early adopter (2003) of utility-scale battery energy storage, in part owing to instability challenges associated with operating a small, low-inertia “islanded” grid. Most utility-scale batteries on the grid employ lithium-ion chemistry and are used primarily for power conditioning and, to a lesser extent, for peak load management. Lithium-ion chemistry using existing electrolytes is not ideal for bulk storage of electricity from large-scale, variable renewable generation sources, but alternative battery chemistries have yet to reach the cost, performance, and manufacturing scale to impact utility operations.

___________________

3 The committee believes there is approximately 400 MW capacity installed in the PJM service territory alone.

Distributed Energy Resources

Distributed energy resources (DERs)—including distributed generation from photovoltaics, diesel generators, small natural gas turbines, battery storage, and demand response—have the potential to help prevent the occurrence of large-area, long-duration outages as well as to provide local power to critical services during an outage. In California, for example, storage aggregators are contracting with utilities to provide tens of MW of storage capacity—alongside 70 MW of utility-scale storage—to help manage local resource adequacy and reliability following the closure of the Aliso Canyon facility (see Box 4.2). However, the reliability and resilience benefits of DERs to the bulk power system vary significantly, based on their technical characteristics and capacities as well as their location and local grid characteristics. Historically, DER adoption has been driven by environmental considerations and consumer preferences; only recently has resilience become an explicit design consideration. The greatest resilience benefits can be realized through coordinated planning and upgrading of T&D systems, as well as by providing operators the ability to monitor and control the operating characteristics of DERs in real time and at scale. This may require changes to technical standards, regulations, and contractual agreements.

Strategically placed DERs (that are visible to and controllable by utilities) not only provide local generation at the end of vulnerable transmission lines, but also can be operated to relieve congestion and potentially avoid the need for new transmission infrastructure. Thus, some of the early applications of DERs for enhanced resilience were motivated by local system concerns—in locations with constraints on transmission expansion or at the end of lines that are known to be problematic.

Inverter Standards for Increased Visibility and Control

At current levels of installation (relatively low except in certain areas such as Hawaii), DERs are not likely to be used explicitly for the purpose of preventing or mitigating large-scale outages. Nonetheless, as DER installations continue to grow, it may become possible to coordinate their dispatch to help prevent outages (i.e., maintain system stability) and to expedite restoration (as described in Chapter 6). However, realizing these system benefits would require that system operators—whether distribution utilities or independent parties—have visibility and an appropriate level of control over the majority of DERs in a region.

This will require changes in interconnection standards, notably regarding inverters that are the interface between many types of DERs and the distribution system. In the past, these standards, which are under revision as of this writing, have required that DERs disconnect from the grid under fault conditions. This is undesirable behavior because it can jeopardize system stability under significant DER penetration levels. In the revised standards (IEEE, 2017), inverters will be required to ride through grid events, and they will have the ability to provide voltage and frequency regulation. Future inverters will provide updated information on DER performance (e.g., generation level, state of charge) to operators, who could in turn actively utilize these resources in running the grid (e.g., when implementing adaptive islanding or intelligent load shedding schemes).

A non-exhaustive list of advanced inverter functionalities that could help prevent or mitigate outages, if they can be leveraged at scale, includes the following:

- Frequency-watt function. Adjusts real power output based on service frequency and can aid in frequency regulation during an event.

- Volt-var and volt-watt function. Adjusts reactive and/or real power output based on service voltage; this is necessary to maintain distribution feeder voltages within acceptable bounds when DER penetration is high, but it could also be used for transmission-level objectives.

- Low/high voltage and frequency ride-through. Defines voltage and frequency ranges for the inverter to remain online during a disturbance, which becomes a key feature at high DER penetration levels.

- DER settings for multiple grid configurations. Enables a system operator to provide a DER with alternate settings, which may be needed when the local grid configuration changes (e.g., during islanding or circuit switching).
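The first three functions in the list above can be sketched as simple control curves. The sketch below is illustrative only: the deadband, droop, curve breakpoints, and ride-through ranges are assumed values chosen for the example, not settings taken from IEEE (2017) or any utility requirement. The function names are hypothetical, and quantities are in per unit of rated values except frequency, which is in Hz.

```python
def frequency_watt(p_available, freq_hz, f_nom=60.0, deadband_hz=0.1, droop=0.05):
    """Reduce real power linearly once frequency exceeds the deadband.

    droop is the per-unit frequency rise that would remove 100% of rated power.
    Only over-frequency response is modeled here.
    """
    over = freq_hz - (f_nom + deadband_hz)
    if over <= 0:
        return p_available
    reduction = over / (droop * f_nom)  # per unit of rated power
    return max(0.0, p_available - reduction)


def volt_var(v_pu, points=((0.92, 0.44), (0.98, 0.0), (1.02, 0.0), (1.08, -0.44))):
    """Piecewise-linear reactive power setpoint (positive = injecting vars)."""
    if v_pu <= points[0][0]:
        return points[0][1]
    if v_pu >= points[-1][0]:
        return points[-1][1]
    for (v0, q0), (v1, q1) in zip(points, points[1:]):
        if v0 <= v_pu <= v1:
            return q0 + (q1 - q0) * (v_pu - v0) / (v1 - v0)


def within_ride_through_window(v_pu, freq_hz,
                               v_range=(0.88, 1.10), f_range=(58.8, 61.2)):
    """True if the inverter should stay online (time limits are ignored)."""
    return (v_range[0] <= v_pu <= v_range[1]
            and f_range[0] <= freq_hz <= f_range[1])


print(round(frequency_watt(1.0, 60.4), 3))  # output curtailed during over-frequency
print(round(volt_var(1.05), 3))             # absorbing vars when voltage is high
print(within_ride_through_window(0.95, 59.5))
```

Actual ride-through requirements also specify how long an inverter must remain connected at each voltage and frequency level, which this sketch omits. The fourth function in the list, alternate settings for multiple grid configurations, would amount to swapping the parameter sets passed to these functions when the operator signals a change in configuration.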

Finding: DERs have a largely untapped potential to improve the resilience of the electric power system but do not contribute to this inherently. Rather, resilience implications must be explicitly considered during planning and design decisions. In addition, the possibility exists to further utilize DER capabilities during the operational stage.

Recommendation 4.2: The Department of Energy and the National Science Foundation, in coordination with state agencies and international organizations, should initiate research, development, and demonstration activities to explore the extent to which distributed energy resources could be used to prevent large-area outages. Such programs should focus on the technical, legal, and contractual challenges to providing system operators with visibility and control over distributed energy resources in both normal and emergency conditions. This involves interoperability requirements and standards for integration with distribution management systems, which are ideally coordinated at the national and international levels.

Interconnected Electric Grid Modeling and Simulation

From the start of the power industry in the 1880s, modeling and simulation have played a crucial role, with much expertise gained over this time period. Over the past 60 years or so, much of this expertise has been embedded in software of increasing sophistication, with power-flow, contingency-analysis, security-constrained optimal power-flow, transient-stability, and short-circuit analysis among the key modeling packages (NASEM, 2016b). Modeling and simulation occur on time frames ranging from real time, in the case of operations, to looking ahead for multiple decades when planning high-voltage transmission line additions.

While the tools are well established for these traditional applications, enhancing resilience presents some unique challenges. First, multidimensional modeling is needed because severe events are likely to affect not just the electric grid, but also other infrastructures. Second, in order to enhance resilience, simulations should be specifically designed to consider rare events that severely stress the grid. Many rare high-impact events will stress the power grid in new and often unexpected ways; as a consequence, most will also likely stress the existing power system modeling software. The degree of power system impact often requires detailed modeling of physical and/or cyber systems associated with the initiating event. For example, correctly modeling the impacts of large earthquakes requires coupled modeling between the power grid and seismic simulations (Veeramany et al., 2016). This requires interdisciplinary collaboration and research between power engineers and people from a potentially wide variety of different disciplines. On the cyber side, for example, one must be able to correctly model the occurrence, nature, and impact of a large-scale distributed cyber attack like the one in Ukraine in 2015.

Because such events are rare, there is typically little or no historical information to accurately quantify or characterize the risk: some of the more extreme events could be considered extreme manifestations of more common occurrences (NASEM, 2016b). Thus, a large-scale attack could be considered a more severe manifestation of the more regular disturbances (such as those due to the weather). However, others would be more novel. As an example, consider the modeling and simulation work being done to study the impact of GMD on the power grid. GMDs, which are caused by coronal mass ejections from the sun, cause low frequency (<< 0.1 Hz) variations in the earth’s magnetic field. The changing magnetic field can then induce electric fields on the earth’s surface that cause quasi-direct current geomagnetically induced currents to flow in the high-voltage transmission system, potentially causing saturation in the high-voltage transformers. A moderate GMD, with a peak electric field estimated to be about 2 V/km, caused a blackout for the entire province of Québec, Canada, in 1989 (Boteler, 1994), while much larger GMD events occurred in North America in 1859 and 1921.

As noted by Albertson et al. (1973), the potential for GMD to interfere with power grid operations has been known at least since the early 1940s. However, power grid GMD assessment is still an active area of research and development; much of that work has occurred in the past few years through interdisciplinary research focusing not just on the power grid, but also on the sun, the earth’s upper atmosphere, space weather hazards, and the earth’s geophysical properties. The assumptions on modeling the driving electric fields in software have evolved from a uniform electric field (NERC, 2012b); to scaled uniform direction electric fields, based on ground conductivity regions (based on one-dimensional earth models) (NERC, 2016a); to electric fields of varying magnitude and direction, based on three-dimensional earth models using recent National Science Foundation Earthscope results (Bedrosian and Love, 2015). Over the past few years, GMD analysis has been integrated into commercial power system planning tools including the power flow (Overbye et al., 2012) and transient stability analysis software (Hutchins and Overbye, 2016).
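Under the simplest of these assumptions, a uniform electric field, the voltage induced along a transmission line is the dot product of the field and the straight-line vector between the line's endpoints. The sketch below works through that calculation for a hypothetical 100 km line using the roughly 2 V/km field cited for the 1989 event. The line length, orientation, and the single lumped loop resistance are illustrative assumptions, and the function names are hypothetical. A real study would model the full network of line, transformer winding, and substation grounding resistances.

```python
import math


def induced_voltage(e_north, e_east, length_km, azimuth_deg):
    """Voltage (V) induced along a straight line by a uniform field in V/km.

    azimuth_deg is the line direction, measured clockwise from geographic north.
    """
    az = math.radians(azimuth_deg)
    l_north = length_km * math.cos(az)  # northward extent of the line, km
    l_east = length_km * math.sin(az)   # eastward extent of the line, km
    return e_north * l_north + e_east * l_east


def quasi_dc_current(voltage, loop_resistance_ohm):
    """Quasi-dc current (A) through one lumped loop resistance (assumed value)."""
    return voltage / loop_resistance_ohm


# Eastward field of 2 V/km acting on a hypothetical 100 km east-west line.
v_east_west = induced_voltage(e_north=0.0, e_east=2.0, length_km=100.0, azimuth_deg=90.0)
# The same field acting on a north-south line of equal length.
v_north_south = induced_voltage(e_north=0.0, e_east=2.0, length_km=100.0, azimuth_deg=0.0)
# Assumed 4-ohm total loop resistance, for illustration only.
gic = quasi_dc_current(v_east_west, loop_resistance_ohm=4.0)
print(round(v_east_west, 1), round(v_north_south, 1), round(gic, 1))
```

The comparison between the two orientations shows why line direction relative to the field matters, and why the move from uniform fields to fields of varying magnitude and direction changes which lines and transformers are assessed as most exposed.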

Determining the magnitudes of the severe events to model can be challenging since there is often little historical record. This was highlighted in 2016 by the Federal Energy Regulatory Commission (FERC) in its Order 830,4 which directed NERC to modify its Standard TPL-007-1 GMD benchmark event so as not to be solely based on spatially averaged data. The challenges of using measurements of the earth’s magnetic field variation over about 25 years to estimate the magnitude of a 100-year GMD are illustrated by Rivera and Backhaus (2015). Determining the scenarios to consider for human-caused severe events, such as a combined cyber and physical attack, is even more challenging.

Finding: Enhancing power grid resilience requires being able to accurately simulate the impact of a wide variety of severe physical events and malicious cyber attacks on the power grid. Usually these simulations will require models of either physical systems alone or coupled physical and cyber infrastructures. There is a need both for basic research on the nature of these simulations and applied work to develop adequate simulations to model these severe events and malicious cyber attacks.

Recommendation 4.3: The National Science Foundation should continue to expand support for research looking at the interdisciplinary modeling and mitigation of power grid severe events. The Department of Energy should continue to support research to develop the methods needed to simulate these events.

A key driver for the research and development of simulation tools for improved resilience is access to realistic models of large-scale electric grids and their associated supporting infrastructures, especially communications. Some of this information was publicly available in the 1990s, but, as a result of the Patriot Act of 2001, the U.S. electric power grid is now considered critical infrastructure, and access to data has become much more restricted. While some access to power grid modeling data is available under non-disclosure agreements, these restrictions greatly hinder the exchange of the models and results needed for other qualified researchers to reproduce the results. This need is particularly acute for resilience studies, in which models need to be shared among researchers in a variety of fields for interdisciplinary work.

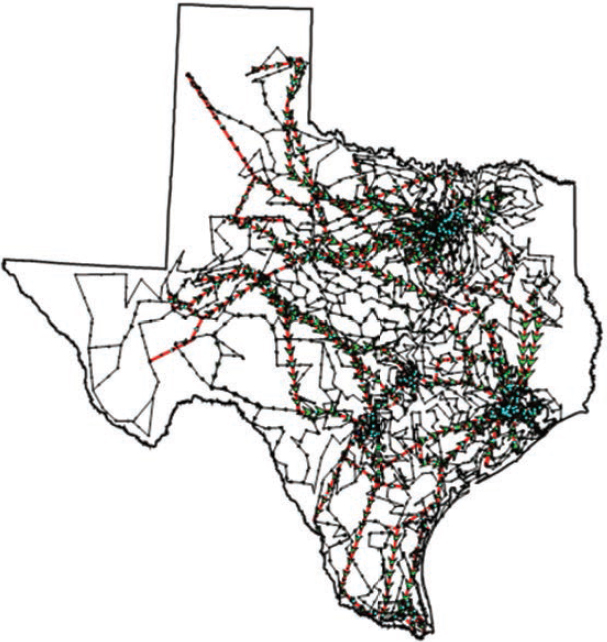

A solution that protects critical infrastructure information is to create entirely synthetic models that mimic the complexity of the actual grid but contain no confidential information about the actual grid. Such models are now starting to appear, driven in part by the DOE Advanced Research Projects Agency-Energy Grid Data program (ARPA-E, 2016), which is focused on developing realistic, open-access power grid models primarily for use in the development of optimal power flow algorithms. A quite useful characteristic of such synthetic models would be to include realistic geographic coordinates in order to allow the coupling between the power grid and other infrastructures or the actual geography. Birchfield et al. (2016) suggest using an electric load distribution that matches the actual population in a geographic footprint, public data on the actual generator locations, and algorithms to create an entirely synthetic transmission grid. As an example, Figure 4.4 shows a 2000-bus entirely synthetic network sited geographically in Texas. The embedding of geographic coordinates with the existing Institute of Electrical and Electronics Engineers’ 145-bus test system is used by Veeramany et al. (2016) to present a multi-hazard risk-assessment framework for study of power grid earthquake vulnerabilities.

While there has been some progress in creating synthetic models for the physical side of the electric grid, there has been very little progress in creating realistic models for the communications that support grid operations, both to represent its complexity and extent and to represent its coupling with the physical portion of the grid. Such models are necessary to understand the overall resilience of the power grid. Without such models, it is impossible to understand the impact of a cyber attack on the physical portion of the grid and hence its ability to deliver power despite a cyber attack.

___________________

4 156 FERC ¶ 61,215.

SOURCE: © 1969 IEEE. Reprinted, with permission, from Power Systems, IEEE Transactions on Grid Structural Characteristics as Validation Criteria for Synthetic Networks.

Finding: A key objective for research and development of simulation tools for improved resilience is shareable access to realistic models of large-scale electric grids, considering both the grid’s physical and cyber infrastructure and, equally important, the coupling between the two infrastructure sides. Because the U.S. power grid is considered critical infrastructure, such models are not broadly available to the power systems research community. Therefore, there is a need to develop synthetic models of the power grid physical and cyber infrastructure that match the size and complexity of the actual grid but contain no confidential information and hence can be fully publicly available.

Recommendation 4.4: The Department of Energy should support and expand its research and development on the creation of synthetic power grid physical and cyber infrastructure models. These models should have geographic coordinates and appropriate cyber and physical model detail to represent the severe events needed to develop algorithms to model and enhance resilience.

Interconnected Electric Grid Planning

Planning for resilience requires providing sufficient redundancy in generation, transmission, and distribution capacity. Current reliability standards issued by NERC (that are mandatory for operators of the bulk electricity system) require that the transmission system have enough redundant paths to withstand an outage of one major line or other important component (NERC, 2005). In most cases, the transmission system can continue operating with the loss of several transmission lines. At the distribution level, some state public utility commissions provide performance-based incentives that encourage distribution utilities to improve reliability metrics such as SAIDI and SAIFI, although these measures do not typically include outages associated with major events. Although NERC standards have largely been effective in addressing credible contingencies and have been recently expanded to include consideration of extreme events,5 designing the grid to ride through catastrophic events such as major storms and cyber attacks pushes their limit. Furthermore, designing and building the system to withstand such major events is expensive, and while the electricity system is designed to be economically efficient (subject to reliability-based constraints such as adequacy requirements in design and operational contingency requirements in operation), additional analyses and changes in planning, operational, and regulatory criteria may be needed to build incentives to design, plan, and operate the system to consider resilience in a cost-effective manner. Pushed too far, traditional strategies to make the system more robust can become cost-prohibitive, so planning and designing for graceful degradation and rapid recovery has become increasingly important for utilities.

___________________

5 NERC TPL-001-4 requires studies to be performed to assess the impact of the extreme events; if the analysis concludes there is a cascading outage caused by the occurrence of extreme events, an evaluation of possible actions designed to reduce the likelihood or mitigate the consequences of the event(s) must be conducted (NERC, 2005).

With respect to transmission system level generation planning, the reliability standard followed in North America is a loss of load probability (LOLP) of 1 day in 10 years—enough generation capacity available to satisfy the load demand 99.97 percent of the time. If one can predict the maximum yearly load demand over many years, and good statistics of the central generator outage rates are available, one can calculate the schedule and amount of new generation capacity construction to meet this level of reliability.
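The arithmetic behind this criterion can be illustrated with a minimal sketch. It assumes a fixed load and statistically independent generator outages, and the unit sizes and forced outage rates in the usage lines are hypothetical values chosen only for illustration. Real planning studies use load duration curves and far larger fleets.

```python
from itertools import product


def availability_from_criterion(days_lost: float, years: float) -> float:
    """Fraction of days on which load is met for a 'days lost in N years' target."""
    return 1.0 - days_lost / (years * 365.0)


def loss_of_load_probability(units, load_mw: float) -> float:
    """Probability that available capacity falls short of a fixed load.

    units: list of (capacity_mw, forced_outage_rate) pairs, assumed independent.
    Every up/down combination is enumerated, so this only suits small examples.
    """
    lolp = 0.0
    for states in product((True, False), repeat=len(units)):
        probability = 1.0
        capacity = 0.0
        for (cap, outage_rate), is_up in zip(units, states):
            probability *= (1.0 - outage_rate) if is_up else outage_rate
            capacity += cap if is_up else 0.0
        if capacity < load_mw:
            lolp += probability
    return lolp


# 1 day in 10 years corresponds to roughly 99.97 percent availability.
print(f"{availability_from_criterion(1, 10):.2%}")

# Hypothetical fleet: three 100 MW units, each with a 5 percent forced outage rate.
fleet = [(100.0, 0.05)] * 3
print(loss_of_load_probability(fleet, load_mw=200.0))
```

In this toy fleet the shortfall probability is far above the 1-day-in-10-years target, which is the kind of result that would trigger additional capacity in a planning study.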

As growing amounts of intermittent solar power are added to distribution systems, the central plant generator models used in traditional generation planning studies may be inadequate. Availability statistics for these resources have been either unavailable or inadequate because the technologies are still evolving. If the availability of demand curtailment, which is functionally equivalent to generation availability, is also considered, yet another model is required, because curtailment depends on factors other than weather. Finally, the addition of storage requires models that are even more complicated, as storage can behave as either load or generation with its own optimal charge/discharge schedule.

Although the generation planning criterion of the LOLP being 1 day in 10 years assures that the available generation capacity exceeds the load demand, the process ignores whether the transmission grid can move the generation to the load centers. The transmission planning process assures this by running power flow and transient stability studies on scenarios of extreme loading of the transmission grid. The planning criterion is that the system would operate normally (i.e., without voltage and loading violations) even if one major piece of equipment (e.g., line, transformer, generator) is lost for any reason; this is known as the “N-1” criterion.6 Note that this is a worst-case deterministic criterion, not a probabilistic criterion like LOLP; this is because no one has yet found a workable stochastic calculation that can compute the probability of meeting all the operational constraints of the grid.
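A deterministic N-1 screen of this kind can be sketched on a toy network. The example below is an illustration only: it uses a linearized (DC) power flow rather than the full power flow and transient stability studies described above, checks line loading but not voltage, and considers line outages only. The three-bus network, reactances, injections, and 120 MW limits are all hypothetical.

```python
def solve_linear(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("network is split into islands")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        s = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - s) / m[r][r]
    return x


def dc_flows(n_bus, lines, injections, slack=0):
    """DC power flow. lines: {(i, j): reactance}; returns {(i, j): flow i->j}."""
    keep = [b for b in range(n_bus) if b != slack]
    idx = {b: k for k, b in enumerate(keep)}
    bmat = [[0.0] * len(keep) for _ in keep]
    for (i, j), x in lines.items():
        y = 1.0 / x
        for a, b in ((i, j), (j, i)):
            if a != slack:
                bmat[idx[a]][idx[a]] += y
                if b != slack:
                    bmat[idx[a]][idx[b]] -= y
    theta = solve_linear(bmat, [injections[b] for b in keep])
    angle = {slack: 0.0, **{b: theta[idx[b]] for b in keep}}
    # Injections are in MW, so angles are scaled and flows come out in MW.
    return {(i, j): (angle[i] - angle[j]) / x for (i, j), x in lines.items()}


def n_minus_1_violations(n_bus, lines, limits, injections):
    """List (outaged_line, overloaded_line, flow_mw) over all single-line outages."""
    violations = []
    for out in lines:
        remaining = {k: v for k, v in lines.items() if k != out}
        flows = dc_flows(n_bus, remaining, injections)
        for line, flow in flows.items():
            if abs(flow) > limits[line] + 1e-9:
                violations.append((out, line, flow))
    return violations


# Hypothetical triangle network: generation at bus 0, load at buses 1 and 2.
lines = {(0, 1): 0.1, (0, 2): 0.1, (1, 2): 0.1}
limits = {line: 120.0 for line in lines}
injections = {0: 150.0, 1: -50.0, 2: -100.0}
for out, line, flow in n_minus_1_violations(3, lines, limits, injections):
    print(f"outage of {out}: line {line} carries {flow:.1f} MW")
```

The screen is purely pass/fail for each contingency, which is what makes the criterion deterministic: no outage probabilities enter the calculation.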

These generation planning requirements work well for scenarios where there are a few central generator stations, but if meeting the generation reliability requires the availability of the DERs on the distribution side (including demand and storage management), then it is not enough to run studies on only the transmission system. On the other hand, modeling the vast numbers of distribution feeders in the contingency analysis studies would increase the model sizes by at least one order of magnitude. Even though this may not pose a challenge to the new generation of computers, it does pose a huge challenge to the present capabilities of gathering, validating, exchanging, and securing the model data.

The decision to invest in new generation, transmission, and distribution is more impacted by cost considerations where reliability objectives are otherwise being met. The least cost consideration must take into account not just the capital cost, but also the operational cost over the lifetime of the generation, transmission, or distribution. This cost optimization process has to include the operational scenarios over several decades, resulting in a dynamic optimization.

A major procedural hurdle has been the fact that generation (and even transmission, which is regulated) can be built by third parties whose optimal decision may or may not coincide with the optimal decision for the whole system. This multi-party decision making has essentially made the process much more difficult, and there is concern that the present decision making is too fragmented to guarantee the needed robustness of the future grid.

It is difficult enough to include all of the control and protection that is part of the grid today, but the use of distributed generation, demand response, and storage will require much more control and protection. Moreover, the rapid deployment of better measurement (advanced metering infrastructure, distribution management systems, and phasor measurement units) and communication (fiber optics) technologies is enabling a new class of control and protection that is not yet embedded into commercial-grade simulation packages.

Architectural Strategies to Reduce the Criticality of Components

A reliable system includes reliable components and a system architecture design that reduces the criticality of individual components needed to maintain grid functionality. A redundant and diverse architecture can enhance resilience of the system by reducing the dependencies on single components and how they contribute to the overall system objectives. Considerations of cascading failures, fault tolerant and secure system design, and mutual dependencies are important to develop resilient architectures. While many design characteristics of the modern power grid employ these concepts, it is important to improve resilient architecture design principles to enhance the capability of the system and to have a high degree of operational autonomy under off-normal conditions.

Historically, one of the primary means of achieving system resilience in the event of accidental component failure is through redundancy. This approach has been adopted by the electricity industry since its inception and has served the customers well. For particularly important components or subsystems, this redundancy can also include diversity of design so as to prevent common mode failures or deliberate attacks from compromising both the primary and secondary components. Both redundancy and diversity in design are often employed in communication networks.

___________________

6 The N-1 criterion, referring to surviving the loss of the single largest component, is shorthand for a more complex set of NERC standards that specify the analysis of various categories of “credible contingencies” and acceptable system responses.

In addition, there is a need to design systems with insights provided by simulation of cascading failure sequences, so that technical or procedural countermeasures to thwart cascading failure scenarios can be applied. This preemptive analysis (and configuring the system to avoid conditions where cascading failure is a credible outcome) is particularly important because the speed of cascading failure sequences can often exceed the capability of automatic control responses, especially when the wide-area nature of the grid, and inherent communication delays, are taken into account.

One approach to resilient system design is to install controls that respond appropriately to limit the consequences of, or even stop, a cascading failure sequence, regardless of the specific scenario that initiated the event. Thus, the system remains resilient even if events occur that were not envisioned or that lie beyond the design basis of the system. Under-frequency load shedding is a notable example of this type of control. It operates when the system is in distress, and the resulting action of this control serves to help bring the system back into equilibrium. This design is elegant in that it is always appropriate to shed load when the system is experiencing a prolonged low frequency condition and that these controls can be autonomous and isolated, making them very secure and robust. Therefore, the presence of this type of control helps to enhance resilience, independent of the specific scenario or sequence of events that led up to its activation. Future implementation of under-frequency load shedding schemes will need to take into account the number of DERs on distribution feeders. These schemes may need to rely on intelligent load shedding instead of disconnecting entire distribution feeders.

Intelligent Load Shedding

Automatic under-frequency load shedding is a common strategy designed into systems, which maintains the stability of the grid when there is an unanticipated loss of generation. Load shedding events typically impact entire circuits, with all customers on the circuit losing power (NERC, 2015). However, with increasing deployment of advanced metering infrastructure (AMI) and sectionalizing switches on distribution systems, opportunities exist to significantly improve the precision and reduce unwanted outages associated with load shedding events. In the near future, it may be possible for utilities to disconnect specific meters on a distribution circuit as opposed to disconnecting the entire circuit at the substation. Some AMI provide greater granularity in control, allowing fractional supply as opposed to only full or no supply. Load shedding could be made even more selective with the installation of “smart” circuit breakers within customer facilities that would disconnect specific circuits within a residence or facility, based on providing appropriate financial incentives to customers. This could be done automatically, as a function of parameters like frequency, or it could be done under a systems optimization controller, but these different levels of functionality have differing levels of communication requirements.
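The difference between circuit-level and meter-level shedding can be made concrete with a small sketch. Everything here is an assumption for illustration: the frequency thresholds, the cumulative shed fractions, the priority ranking, and the meter data are hypothetical, and a real scheme would also have to account for communication delays and DER output behind each meter.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Meter:
    meter_id: str
    load_kw: float
    priority: int  # hypothetical ranking: higher means more critical


# Hypothetical stages: (frequency threshold in Hz, cumulative fraction to shed).
STAGES = [(59.3, 0.10), (59.0, 0.20), (58.7, 0.30)]


def shed_fraction(frequency_hz: float) -> float:
    """Cumulative fraction of circuit load to shed at the measured frequency."""
    fraction = 0.0
    for threshold, cumulative in STAGES:
        if frequency_hz <= threshold:
            fraction = cumulative
    return fraction


def select_meters(meters, frequency_hz: float):
    """Disconnect the least critical meters first until the target is met.

    Returns (list of meter ids to disconnect, total kW shed).
    """
    target_kw = shed_fraction(frequency_hz) * sum(m.load_kw for m in meters)
    chosen, shed_kw = [], 0.0
    # Lowest priority first; among equals, larger loads first to limit switching.
    for m in sorted(meters, key=lambda m: (m.priority, -m.load_kw)):
        if shed_kw >= target_kw:
            break
        chosen.append(m.meter_id)
        shed_kw += m.load_kw
    return chosen, shed_kw


circuit = [
    Meter("hospital", 400.0, 3),
    Meter("home-A", 100.0, 1),
    Meter("home-B", 150.0, 1),
    Meter("shop", 350.0, 2),
]
print(select_meters(circuit, 59.2))
print(select_meters(circuit, 58.9))
```

With conventional shedding the whole 1,000 kW circuit, including the hospital, would be dropped at the substation. Here only enough low-priority load is disconnected to meet the stage target.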

Recommendation 4.5: The Department of Energy, working with the utility industry, should develop use cases and perform research on strategies for intelligent load shedding based on advanced metering infrastructure and customer technologies like smart circuit breakers. These strategies should be supported by appropriate system studies, laboratory testing with local measurements, and field trials to demonstrate efficacy.

Adaptive Islanding

The process of “islanding” the grid—that is, where the interconnection breaks up or separates into smaller, potentially asynchronous portions—can result in significant outages if the islanding is the result of an uncontrolled cascading failure. However, there are opportunities to pre-plan and manage the islanding process such that outages impact significantly fewer customers. Adaptive islanding can preserve the benefits of large-scale interconnected system operations during normal conditions while reducing the risk of failures propagating across the grid during abnormal or emergency conditions.

Under normal system conditions, the track record of system protection is excellent. But performance during off-normal conditions is less predictable. When a cascading failure progresses through a power system, the individual tripping of transmission lines will often result in the formation of islands. The stability of an island post-disturbance depends predominantly on the balance of generation and load within the area and the ability to maintain that balance during the sequence of events leading up to, during, and after island formation. Generator protection might act to trip unit(s) to prevent damaging transients. The nature of these transients and their severity, and the ability of the remaining generation to match the load within the island, will determine whether the island will be stable. Other emergency controls, such as automatic under-frequency load shedding, are useful to help preserve the stability of an island as it is being formed. The goal of under-frequency load shedding is preventing the loss of generation from under-speed protection. Losing generation due to over-speed protection is less consequential because high frequency is the result of too much generation in the first place. Usually, one good indicator of whether an island will survive or fail is whether that region of the system was a net exporter or a net importer of power prior to the disturbance. It is easier for generation to throttle down than to throttle up, although under-frequency load shedding schemes can also be used to maintain stability within the island.

Wide-area protection schemes have been developed to limit the consequences of an uncontrolled cascading failure (NERC, 2013). These remedial action schemes provide fast-acting control to preserve system stability in response to predefined contingencies. One such scheme deliberately separates the western power system into two islands by remotely disconnecting lines in the eastern portion of the system if key transmission paths in the western portion of the system become de-energized.

Adaptive islanding is an idea that has been under development for several years (You et al., 2004). The concept is predefining how to break apart the system in response to system events, by matching clusters of load and generation. The goal is to reduce the size of power system blackouts, and minimizing generation loss is a key element of this strategy. This can be accomplished through more aggressive use of fast-acting demand response to preserve the generation-load balance in each of the islands. The technology has progressed to the point where this is becoming a viable approach.
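The idea of matching clusters of load and generation can be sketched as a comparison of candidate island boundaries. This is a simplified illustration rather than the method of You et al. (2004): it considers only the static generation-load balance, ignores network constraints and dynamics, and assumes that surplus generation can be throttled down while a deficit must be covered by load shedding or demand response. The bus data and candidate partitions are hypothetical.

```python
def island_surplus(buses, members):
    """Generation minus load (MW) for the buses in one island.

    buses: {bus_name: (generation_mw, load_mw)}
    """
    generation = sum(buses[b][0] for b in members)
    load = sum(buses[b][1] for b in members)
    return generation - load


def evaluate_partition(buses, partition):
    """Return (total MW of load to shed, per-island surplus) for a partition.

    partition: {island_name: [bus_name, ...]}
    Islands with a deficit must shed load; islands with a surplus curtail output.
    """
    surplus = {name: island_surplus(buses, members)
               for name, members in partition.items()}
    load_shed = sum(-s for s in surplus.values() if s < 0)
    return load_shed, surplus


def best_partition(buses, candidates):
    """Pick the predefined partition that requires the least load shedding."""
    return min(candidates, key=lambda p: evaluate_partition(buses, p)[0])


# Hypothetical four-bus system: (generation MW, load MW) at each bus.
buses = {"A": (500.0, 200.0), "B": (100.0, 300.0),
         "C": (0.0, 150.0), "D": (300.0, 250.0)}
candidates = [
    {"west": ["A", "B"], "east": ["C", "D"]},
    {"north": ["A", "C"], "south": ["B", "D"]},
]
choice = best_partition(buses, candidates)
print(choice, evaluate_partition(buses, choice))
```

The deficit reported for each island is the amount that fast-acting demand response would need to cover to preserve the generation-load balance after separation.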

Finding: The electricity system, and associated supporting infrastructure, is susceptible to widespread uncontrolled cascading failure, based on the interconnected and interdependent nature of the networks.

Recommendation 4.6: The Department of Energy should initiate and support ongoing research programs to develop and demonstrate techniques for degraded operation of electricity infrastructure, including supporting infrastructure and cyber monitoring and control systems, where key subsystems are designed and operated to sustain critical functionality. This includes fault-tolerant control system architectures, cyber resilience approaches, distribution system interface with distributed energy resources, supply chain survivability, intelligent load shedding, and adaptive islanding schemes.

Vulnerability Due to Interdependent Infrastructures

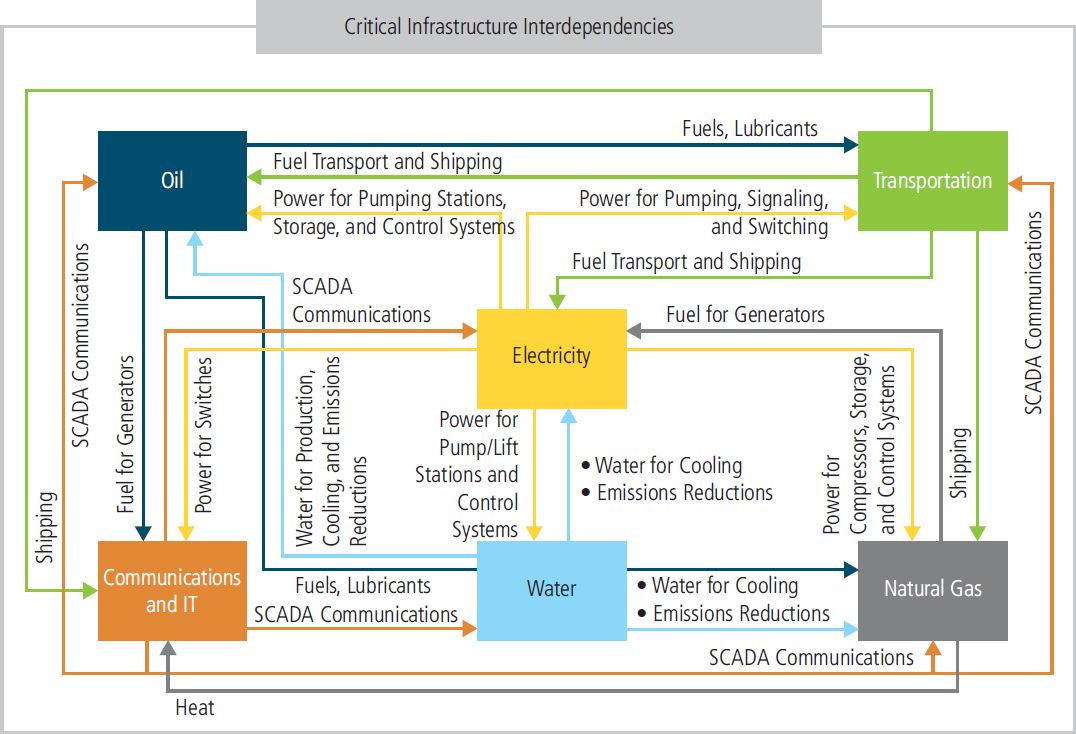

A reliable electric grid is crucial to modern society in part because it is crucial to so many other critical infrastructures, as described in Chapter 2. However, the dependency goes both ways, as the reliable operation of the grid depends on the performance of multiple supporting infrastructures. Outages can be caused by disruptions to natural gas production and delivery, commercial communications infrastructure, and transportation systems, among other critical infrastructures (Figure 4.5) (Rinaldi et al., 2001).

Natural Gas Infrastructure

As described in Chapter 2, the fraction of generation provided by natural gas (both large central generating plants and small customer-owned generators powered by internal combustion motors or microturbines) has grown substantially over the past few years. This not only exposes the industry to potential price volatility and supply chain vulnerability, but also raises the question of how utilities could restore electricity service if a major disruption to natural gas delivery occurred (e.g., one or more critical pipelines are destroyed). To date, no such disruption has resulted in large electricity outages, and the minor events that have occurred fall on the scale of reliability operations that were handled relatively easily by the industry. The January 2014 Polar Vortex and the natural gas leak and subsequent closing of the Aliso Canyon natural gas storage facility have already impacted utility planning and system design to be more cognizant of this critical interdependency (Box 4.2). These studies suggest that resilience can be enhanced through a diverse fuel portfolio, where a single interruption is less likely to impact a significant number of generators that cannot be overcome by reserve assets.

Finding: Constraints in natural gas infrastructure have resulted in shedding of electric load, and the growing interdependency of the two systems poses a vulnerability that could lead to a large-area, long-duration blackout.

Recommendation 4.7: The Federal Energy Regulatory Commission and the North American Energy Standards Board, in conjunction with industry stakeholders, should further prioritize their efforts to improve awareness, communications, coordination, and planning between the natural gas and electric industries. Such efforts should be extended to consider explicitly what recovery strategies should be employed in the case of failed interdependent infrastructure. Fuel diversity, dual fuel capability, and local storage should be explicitly addressed as part of these resilience strategies.

Commercial Communications Infrastructure

Another example of coupled infrastructure is telecommunications. While many utilities utilize their own dedicated telecommunication assets to support critical communication and automation functions, there is a substantial dependency on communications and internet-based technologies that facilitate the daily operation of the modern electricity system, including coordination among personnel, managing markets and financial structures, as well as supporting automation and control technology. With growing deployment of smart grid technologies and automated controls, this dependency may continue to increase. In the event of loss of external communications networks, many utility operations may be compromised, requiring greater reliance on manual operation and assessment of the state of damage. As an example, with the failure of multiple communications systems, it may be difficult to coordinate the activities of repair crews in the field with operational decisions, thus increasing the hazards for workers and slowing the restoration.

NOTE: SCADA, supervisory control and data acquisition.

SOURCE: DOE (2017).

Design for Cyber Resilience

The electric power system has become increasingly reliant on its cyber infrastructure, including computers, communication networks, other control system electronics, smart meters, and other distribution-side cyber assets, in order to achieve its purpose of delivering electricity to the consumer. A compromise of the power grid control system or other portions of the grid cyber infrastructure itself can have serious consequences ranging from a simple disruption of service to permanent damage to hardware that can have long-lasting effects on the performance of the system. Any consideration of improved power grid resilience requires a consideration of improving the resilience of the grid’s cyber infrastructure.

Over the past decade, much attention has rightly been placed on grid cybersecurity, but much less has been placed on grid cyber resilience. In particular, there has been significant research and investment in technologies and practices to prevent cyber attacks. Some of the many methods include the following: (1) identifying and apprehending cyber criminals, (2) defending the perimeter of a network with firewalls and “white listing” and “black listing” certain communications sources, (3) practicing good cyber “hygiene” (e.g., protecting passwords and using two-factor authentication), (4) searching for and removing suspect pernicious code continuously, and (5) designing control systems with safer architecture (for example, segmenting systems to slow or prevent the spread of malware). The sources of guidance on protection as a mechanism to achieve grid cybersecurity are numerous (DOE, 2015); one good source of reference materials specific to industrial control systems can be found at the Department of Homeland Security’s Industrial Control System Cyber Emergency Response Team website.7 Another good source of information is the Energy Sector Control Systems Working Group’s Roadmap to Achieve Energy Delivery Systems Cyber Security (ESCSWG, 2011). Furthermore, strategies to achieve power grid cybersecurity are documented in the National Institute of Standards and Technology Internal/Interagency Report 7628 Guidelines for Smart Grid Cyber Security (NISTIR, 2010). A good source of basic information is Security and Privacy Controls for Federal Information Systems and Organizations (NIST, 2013), which, although nominally applying to federal information technology systems, has some guidance that can be useful in protecting grid cyber infrastructure.

___________________

7 The website for the Industrial Control System Cyber Emergency Response Team is https://ics-cert.us-cert.gov/Standards-and-References, accessed July 4, 2017.

It is now, however, becoming apparent that protection alone as a mechanism to achieve cybersecurity is insufficient and can never be made perfect. Cyber criminals are difficult to apprehend, and there are nearly 81,000 vulnerabilities in the National Institute of Standards and Technology (NIST) National Vulnerability Database, making it challenging to use safe code (NVD, 2016). An experiment conducted by the National Rural Electric Cooperative Association and N-Dimension in April 2014 determined that a typical small utility is probed or attacked every 3 seconds around the clock. Given the relentless attacks and the challenges of prevention, successful cyber penetrations are inevitable, and there is evidence of increases in the rate of penetration in the past year, particularly ransomware attacks.

Fortunately, the successful attacks to date have largely been concentrated on utility business systems as opposed to monitoring and control systems (termed operational technology [OT] systems), in part because there are fewer attack surfaces, fewer users with more limited privileges, greater use of encryption, and more use of analog technology. However, there is a substantial and growing risk of a successful breach of OT systems, and the potential impacts of such a breach could be significant. Serious risks are posed by further integration of OT systems with utility business systems, despite the potential for significant value and increased efficiency. Furthermore, the lure of the power of Internet protocols and cloud-based services threatens some of the practices that have historically protected the grid. Cloud-based services provide the potential for better reliability, resilience, and security versus on-premises computing, particularly for smaller utilities. For example, major commercial clouds, like the Amazon cloud, have a very high level of around-the-clock monitoring by a well-provisioned security operations center, better than that operated by some utilities. The cloud does, however, present another attack surface. Utilities that choose to use the cloud must explicitly consider the security of the cloud and how to secure the communications bi-directionally.

Given that protection cannot be made perfect, and the risk is growing, cyber resilience, in addition to more classical cyber protection approaches, is critically important. Cyber resilience aims to protect, using established cybersecurity techniques, the best one can but acknowledges that that protection can never be perfect and requires monitoring, detection, and response to provide continuous delivery of electrical service. While some work done under the cybersecurity nomenclature can support cyber resilience (e.g., intrusion detection and response), the majority of the work to date has been focused on preventing the occurrence of successful attacks, rather than detecting and responding to partially successful attacks that occur.

Cyber resilience has a strong operational component (mechanisms must be provided to monitor, detect, and respond to attacks that occur), but it also has important design-time considerations. In particular, architectures that are resilient to cyber attacks are needed to support cyber resilience. Work during the past decade has resulted in “cybersecurity architectures” for the power grid cyber infrastructure, such as those described by NIST (2015), but there has been much less work done to define “cyber resilience architectures.” Some preliminary discussion of such an architecture can be found in MITRE’s Cyber Resiliency Engineering Framework (Bodeau and Graubart, 2011) and in NIST’s Guidelines for Smart Grid Cyber Security (NISTIR, 2010), among other places.

Generally speaking, a cyber resilience architecture should implement a strategy for tolerating cyber attacks and other impairments by monitoring the system and dynamically responding to perceived impairments to achieve resilience goals. The resilience goals for the cyber infrastructure require a clear understanding of the interaction between the cyber and physical portions of the power grid as well as how impairments on either (cyber or physical) side could impact the other side. By their nature, such goals are inherently system-specific but should balance the desire to minimize the amount of time a system is compromised and maximize the services provided by the system. Often, instead of taking the system off-line once an attack is detected, a cyber resilience architecture attempts to heal the system while providing critical cyber and physical services. Based on the resilience goals, cyber resilience architectures typically employ sensors to monitor the state of the system on all levels of abstraction. The data from multiple levels are then fused to create higher-level views of the system. Those views aid in detecting attacks and other cyber and physical impairments, as well as in identifying failure to deliver critical services. A response engine, often with human input, determines the best course of action. The goal, after perhaps multiple responses, is complete recovery (i.e., restoring the cyber system to a fully operational state).

Further work to define cyber resilience architectures that protect against, detect, respond to, and recover from cyber attacks is critically needed. Equally important, and just as challenging, is work to validate that proposed cyber resilience architectures achieve cyber resilience and cybersecurity requirements (see Recommendation 4.10).

Regulatory and Institutional Opportunities

As described in Chapter 2, utilities seek and regularly receive regulatory approval for routine preventative maintenance activities such as vegetation management and hardening investments. While FERC regulates generation and interstate transmission, individual states are responsible for approving investments in local transmission and the distribution system. There is wide variety in public utility commission (PUC) approval of utility investment across the United States and even among geographically similar Gulf states (Carey, 2014). States along the hurricane-prone southeastern coast are more likely to allow alternative mechanisms to finance such investments, including the addition of “riders” to customer bills, securitization and issuance of bonds, and creation of reserve accounts that utilities can use as a form of self-insurance (EEI, 2014).

In addition to approving investments in hardening and preventative strategies, several states, such as California, Florida, and Connecticut, require utilities to regularly submit and update emergency preparedness plans, which often require input and coordination from city and county officials. Others provide performance-based incentives or penalties (for example, based on improvements to reliability measures such as SAIDI and SAIFI, although most reporting standards do not include large-area, long-duration outages when calculating these metrics) to encourage best practices in the absence of standards on distribution systems. Other states impose penalties for inadequate levels of service or performance during storm events and recovery. Funding of grid modernization investments likewise varies across states, with some regulatory commissions, such as those in California and Massachusetts, researching and investing significantly in advanced communications and automation technologies. In the absence of regulatory approval, there is a critical opportunity for continuing federal grants (e.g., the Smart Grid Investment Grant provided to Chattanooga Electric Power Board) to further demonstrate the viability of such technologies and promote wider adoption across states.

In response to large outages such as those that resulted from Superstorm Sandy and other high-profile storms, state PUCs and, to a lesser extent, state legislatures across the country have considered investments in system hardening and implementing assorted grid modernization strategies with the goal of preventing or mitigating the impact of future large outages (Box 4.3).8 Historically, such crises often provide the opportunity to focus attention and resources on costly robustness and resilience enhancements in a system that may be optimized economically without systematic consideration of the value of avoiding or responding quickly to these extreme events. Nonetheless, regulators’ and the industry’s efforts are more often reactive than proactive, and a focus on near-term cost-benefit optimization may not have resulted in investments that provide cost-effective benefits from a more resilient power grid. Thus, the committee expects that successfully funding cost-effective investments in resilience will require novel approaches, as described in Chapter 7, and proper metrics, as described in Recommendation 2.1.

___________________

8 A more complete review of state regulatory actions related to robustness and resilience is provided by EEI (2014).

OPERATIONS

Much can be done in the area of real-time electric grid operations to enhance physical and cyber resilience. With the advent of smart grid devices, the electric grid is becoming more intelligent, with more sensing and embedded controls. These devices and the automatic control they provide are certainly beneficial, but they also make the grid more complex. Moreover, any consideration of power system operations needs to recognize that human operators are still very much “in the loop” and will continue to be so for many years. Therefore, strategies to enhance operational resilience need to include tools to enhance the capabilities of the operators and engineers running the system.

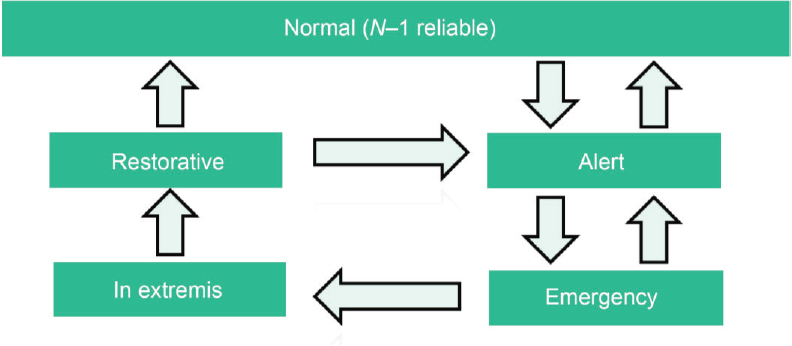

To understand operations, it is useful to consider the different power system operating states shown in Figure 4.6. By far the majority of the time is spent in the normal state, in which the system is able to withstand the loss of any single element (the N-1 reliability criterion). This is the state in which people have the most experience; hence, many of the tools used in the control center are focused on normal operations. More rarely, the system moves into alert, emergency, and restorative situations. However, such situations are encountered often enough that there is good historical experience; control room personnel train for them and, for the most part, have adequate tools for dealing with them.

Enhancing grid resilience requires that more attention be given to the alert, emergency, in extremis, and restorative stages of these operating states. In these stages, the previously interconnected grid may be broken into a number of electrical islands, and the operation of these islands may need to be performed by entities that are not normally responsible for grid operations (NERC, 2012a).
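The five operating states can be made concrete with a small classification sketch. It assumes the commonly cited formulation in which a state is determined by three yes/no conditions: whether all load is being served, whether equipment operating limits are respected, and whether the system would survive a single contingency (N-1). This formulation and the function and parameter names are assumptions introduced here for illustration; they are not reproduced from Figure 4.6.

```python
from enum import Enum


class OperatingState(Enum):
    NORMAL = "normal"
    ALERT = "alert"
    EMERGENCY = "emergency"
    IN_EXTREMIS = "in extremis"
    RESTORATIVE = "restorative"


def classify(load_served: bool, limits_respected: bool, n_minus_1_secure: bool) -> OperatingState:
    """Map three illustrative conditions onto an operating state.

    load_served:       all customer demand is currently being met
    limits_respected:  no equipment is outside its operating limits
    n_minus_1_secure:  the system would withstand any single outage
    """
    if load_served and limits_respected:
        # Everything is within limits; the only question is the security margin.
        return OperatingState.NORMAL if n_minus_1_secure else OperatingState.ALERT
    if load_served:
        # Load is still served, but some limits are violated.
        return OperatingState.EMERGENCY
    if limits_respected:
        # Load has been lost, but what remains is operating within limits.
        return OperatingState.RESTORATIVE
    # Load has been lost and limits are violated.
    return OperatingState.IN_EXTREMIS


if __name__ == "__main__":
    print(classify(True, True, False).value)
    print(classify(False, False, False).value)
```

Under this assumed formulation, the security margin distinguishes only the normal and alert states; once limits are violated or load is lost, the third argument no longer affects the result.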

Sometimes, threats such as hurricanes can be identified with sufficient warning time to allow system operators to preemptively position the system to be more robust and able to respond to emerging conditions. This often involves curtailing any avoidable outages that might be caused by maintenance or other activities, deploying additional reserves to the extent possible, and even powering down certain critical components to minimize potential damage. This strategy is often less expensive than the hardening strategies discussed previously. All major events are managed by operators in the control center, and their skills and training, as well as their tools and supporting technologies, are critical factors in how effectively the event will be managed.

Wide-Area Monitoring and Control

As the power grid becomes more complex and is operated closer to reliability limits, the need for greater remote control increases. Fortunately, the technologies needed for such “wide-area control,” principally sensors and communications, are becoming cheaper and more powerful. The increasing use of high-speed wide-area measurements, including synchrophasors that measure currents and voltages 30–60 times a second and communicate them to distant computers, allows the design of controls that can use input data from different parts of the system and send control signals to equipment in different locations. The combination of PMUs, distribution automation, dedicated fiber-optic cable communications infrastructure, and affordable computing will likely lead to increasing reliance on artificial intelligence in the power system. Additionally, remedial action schemes9 are increasingly being deployed to increase the throughput of the grid, while minimizing the risk of cascading failures, by appropriately tripping loads and generators after an event on the system. The measurements for these automatic relays can often be hundreds of miles apart. These automated systems are able to sense and take action in real time, and can be thought of as a stepping stone to wider application of artificial intelligence and machine learning applied to the power grid.

SOURCE: © 1978 IEEE. Reprinted, with permission, from IEEE Spectrum Operating under Stress and Strain [electrical power systems control under emergency conditions].

Although such wide-area controls are appearing all over the world, the design, simulation, on-line testing, and cyber protection of such controls are expensive and time-consuming. Moreover, the architecture of the power grid and its overlaid control system has a direct impact on the design of such controls. For example, how centralized or decentralized a control scheme should be is constrained by where the measurements are, the communication paths that bring these measurements to the controller, and which equipment is available for the controller to act on. Such controllers are in their evolutionary stages, so they should be designed not just for economic and reliability benefits, but also for resilience.

Often the term smart grid is used in reference to electronic meters and sensors. However, it also encompasses the wide-area monitoring and control considered here. That is, smart grids could include automatic sectionalizing, smart islanding to prevent cascading failures, the ability to operate these islands in a degraded state, and supercomputing resources to support system operators. For example, during the August 14, 2003, blackout, there was almost an hour of opportunity to intervene before the cascading event initiated (USCPSOTF, 2004). With better operational intelligence, a preventative shedding of approximately 2,000 MW load in the Cleveland area would have prevented the cascading failure that affected an estimated 50 million people.

During a major event such as Hurricane Katrina or Superstorm Sandy, thousands of alarms can overwhelm the system operator. Artificial intelligence could help quickly prioritize these alarms that come in over the supervisory control and data acquisition (SCADA)/energy management systems (EMS) and provide the operator with suggestions for the most important alarms to focus on, the root cause(s) of the event, and the most important actions to prevent further degradation and start restoration. The inherent complexity that power system operators have to face every day used to be addressed through detailed procedures. Today, with the system growing in complexity, the assistance of artificial intelligence and improved man–machine interfaces for system operators is likely to enhance both reliability and resilience. Under this scenario, all historical events and previous operators’ experiences could be accumulated by a system such as IBM’s Watson to prioritize alarms and suggest appropriate action.
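The alarm-triage idea can be sketched in a few lines. The example below is a simple rule-based stand-in, not artificial intelligence: it ranks alarms by a hand-picked score and reports the substation with the most alarms as a crude hint at where to look first. The `Alarm` fields, the severity weights, and the sample data are all hypothetical; a production SCADA/EMS alarm processor would use far richer information.

```python
from collections import Counter
from dataclasses import dataclass

# Hypothetical weights; a real system would tune or learn these.
SEVERITY_WEIGHT = {"info": 1, "warning": 3, "critical": 10}


@dataclass(frozen=True)
class Alarm:
    substation: str
    kind: str
    severity: str      # one of the SEVERITY_WEIGHT keys
    voltage_kv: float  # voltage level of the affected equipment


def score(alarm: Alarm) -> float:
    """Higher severity and higher voltage level both raise the priority."""
    return SEVERITY_WEIGHT[alarm.severity] * alarm.voltage_kv


def prioritize(alarms, top_n=3):
    """Return the top_n alarms, highest score first."""
    return sorted(alarms, key=score, reverse=True)[:top_n]


def busiest_substation(alarms):
    """Substation with the most alarms, as a rough pointer toward a root cause."""
    return Counter(a.substation for a in alarms).most_common(1)[0][0]


# Illustrative data only.
SAMPLE_ALARMS = [
    Alarm("Alpha", "breaker_trip", "critical", 345.0),
    Alarm("Alpha", "low_voltage", "warning", 345.0),
    Alarm("Bravo", "comm_loss", "info", 138.0),
    Alarm("Bravo", "breaker_trip", "critical", 138.0),
    Alarm("Alpha", "overload", "warning", 138.0),
]

if __name__ == "__main__":
    for alarm in prioritize(SAMPLE_ALARMS, top_n=2):
        print(alarm.substation, alarm.kind, score(alarm))
    print("Most alarms at:", busiest_substation(SAMPLE_ALARMS))
```

A learning-based system of the kind envisioned above would replace the fixed weights with patterns drawn from historical events and operator experience, but the operator-facing output, a short ranked list plus a suggested focus, would be similar.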

As DERs and smart inverters become more and more common in the distribution system, electricity system operators need to assess whether artificial intelligence combined with closed-loop fiber-optic broadband communication can improve the reliability and resilience for distribution customers. As more DERs are connected with smart inverters, the distribution system can break into smaller microgrids that can island and maintain service to critical load. In addition to distributed generation, demand side resources (customer loads) with inverters and power electronics can improve both reliability and resilience.

The Chattanooga EPB has demonstrated this by installing fiber-optic communication and automatic sectionalizing switches. Its communication system brought fiber optics to every home, with smart meters that provide both billing information and operational data such as voltage, reactive power (volt-amperes reactive), and current. The communication system alone does not improve resilience, but by combining it with automated switches and voltage control devices, EPB has greatly improved both the reliability and the resilience of its distribution system.
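The benefit of automatic sectionalizing can be illustrated with a generic sketch for a single radial feeder. It is not a description of EPB's actual scheme, which is not detailed here. The feeder is modeled as an ordered list of sections starting at the substation; the function name, the `has_tie` parameter (whether a normally open tie switch at the far end can back-feed from a neighboring feeder), and the section labels are assumptions made for illustration.

```python
def isolate_and_restore(sections, faulted, has_tie=True):
    """Return (restored, out_of_service) section lists after a fault.

    sections: section names ordered from the substation outward
    faulted:  name of the section where the fault occurred
    has_tie:  True if a tie switch at the far end can back-feed
              the sections downstream of the fault
    """
    i = sections.index(faulted)
    upstream = sections[:i]        # re-energized from the substation
    downstream = sections[i + 1:]  # reachable only through the tie switch
    if has_tie:
        return upstream + downstream, [faulted]
    return upstream, [faulted] + downstream


FEEDER = ["S1", "S2", "S3", "S4"]

if __name__ == "__main__":
    print(isolate_and_restore(FEEDER, "S2", has_tie=True))
    print(isolate_and_restore(FEEDER, "S2", has_tie=False))
```

With switches on both sides of the faulted section and a tie to a neighboring feeder, only the faulted section stays out of service; without the tie, everything beyond the fault waits for repair.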

Finding: New automation systems promise to enable better monitoring and control of the grid. The design of such large-scale, wide-area controllers should be done with cyber resilience in mind. Such controllers should tolerate accidental failures and malicious attacks that occur, providing degraded functionality even during recovery from such attacks, and not be a hindrance during catastrophic events or the recovery afterwards. Flexibility of the controller may be achieved with the proper centralized/decentralized design, where the centralized control may provide the best benefits during normal operation. When the grid is broken up after a catastrophic event, however, the decentralized portion may still be able to operate the various parts.

Physical and Cyber Situation Awareness

Bulk electric grids are some of the world’s largest and most complex machines, and disturbances (cyber or physical) can rapidly propagate through their systems. Hence, normal operations can quickly change, demanding quick responses by the human operators or preprogrammed automation. Resilient operation requires physical and cyber “situation awareness,” defined as “the perception of critical elements in the environment, the comprehension of their meaning, and the projection of their status into the future” (Wickens et al., 2013), so that unfavorable changes of physical or cyber state that occur can be addressed (either by human or automated means) quickly enough to prevent a catastrophic event.

In the power industry, the term “situation awareness” was popularized by the August 14, 2003, Blackout Final Report in which “inadequate situational awareness at FirstEnergy” was noted as the second of the four root causes of the event (USCPSOTF, 2004). The importance of system understanding was also highlighted in the first and fourth causes of the event: “FirstEnergy (FE) and ECAR (East Central Area Reliability Council) failed to assess and understand the inadequacies of FirstEnergy’s system, particularly with respect to voltage instability and the vulnerability of the Cleveland-Akron area, and FE did not operate its system with appropriate voltage criteria. . . . [T]he interconnected grid’s reliability organizations [failed] to provide effective real-time diagnostic support” (USCPSOTF, 2004). Had operators had an accurate estimate of the “true state” of the grid, they could have taken appropriate actions to halt the propagation of effects that led to the widespread blackout. Thus real-time determination of the combined physical and cyber state of the grid is needed to achieve resilience.

___________________

9 A scheme designed to detect predetermined system conditions and automatically take corrective actions that may include, but are not limited to, adjusting or tripping generation, tripping load, or reconfiguring a system (NERC, 2014c).

Whether operator action can prevent a blackout depends on the time frame and severity of the event (Overbye and Weber, 2015). Some large-scale blackouts cannot be prevented by operator action; earthquakes are examples of unanticipated events that can cause severe damage within seconds. Cyber attacks also have the potential to spread extremely quickly. Conversely, slow-moving weather systems such as hurricanes or ice storms give operators ample time to act, yet the resulting blackouts still cannot be fully prevented. As an example, an ice storm in January 1998 resulted in the collapse of more than 770 transmission towers, causing a large-scale blackout in Canada (Hauer and Dagle, 1999), and Superstorm Sandy caused 8.5 million customer power outages in 2012 (Abi-Samra et al., 2014). The same might be true of pandemics, which would severely limit the human resources available for response (NERC, 2010).