8

Man vs. Machine or Man + Machine?

In this workshop session, Mary Cummings (steering committee member) focused her presentation on the following comments from the steering committee:

[A] major issue is [h]ow to allocate roles and functions between humans and computers in order to design systems that leverage the symbiotic strengths of humans and computers. Such collaborative systems should allow humans the ability to harness the raw computational and search power of computers, but also allow them the latitude to apply inductive reasoning for potentially creative, out-of-the-box thinking. Successful systems of the future will be those that combine the human and computer as a team instead of simply replacing humans with automation.

Cummings began with a discussion of human supervisory control, the situation in which a human is trying to execute a task and that process is mediated by a computer at some point. She then cautioned against treating numbered levels of automation as literal design targets: “As an engineering professor I find levels of automation to be a very frustrating topic for my students, because my students are very literal, and when you say levels of automation, like many other literal people in the world that you’re talking to, they think okay then, if you tell me there’s a level five I’m going to design to level five. That’s the human tendency to think of these things. So I know that when I’m talking in this audience you understand that when we say levels of automation we really mean it probably means maybe for a function or a task, for any one mission you’re going to have several different levels of automation, and even depending on the phase of flight. You understand that, but most people do not understand that.”

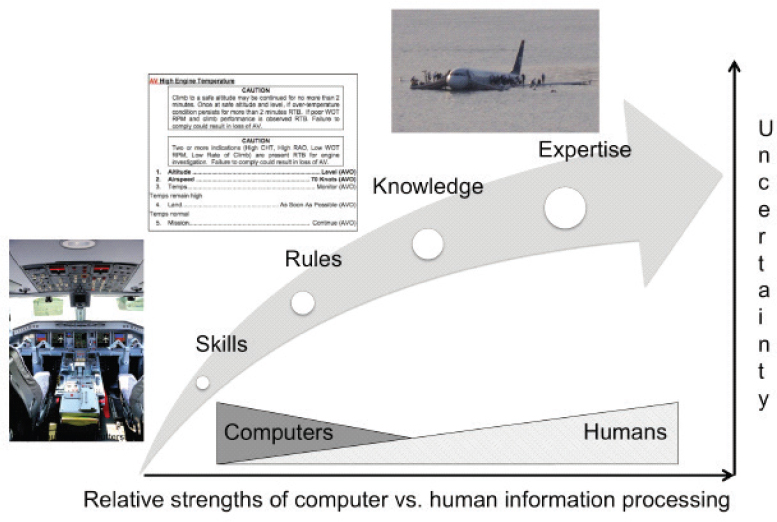

Rather, Cummings said, consider the diagram shown in Figure 8-1, known as an SRKE diagram: skills, rules, knowledge, expertise. The process starts with basic skills (S); as those basics are mastered, cognitive resources are freed to advance to rules (R); the next step is knowledge (K); and the final step is expertise (E). Assuming the availability of good sensors (which provide the inputs to skilled behavior), automating skills can be straightforward. Moving along the axis to tasks that depend on rules, then knowledge, and, finally, expertise, automation becomes more and more challenging, particularly for tasks that depend on expertise in high-uncertainty conditions.

NOTE: This figure is an expanded version of the figure shown at the workshop.

SOURCE: Adapted from Cummings, M.L. (2018). Adaptation of Human Licensing Examinations to the Certification of Autonomous Systems. Durham, NC: Duke University.

Cummings then discussed supervisory control in contrast with manual control. She explained that under supervisory control a human tries to execute a task (in this case, flying an Army unmanned aerial vehicle), but the task is mediated by a computer somewhere in the process. With manual control, the operator interacts directly with the task. The results of a recent limited experiment indicated that supervisory control was beneficial under normal circumstances, but when uncertainty grows (as with a simulated accident), supervisory control can slow things down, though performance is no worse than with manual control, she reported. Supervisory control will help reduce cognitive workloads, she said, but in the face of uncertainty, there need to be ways to help people work through a situation.

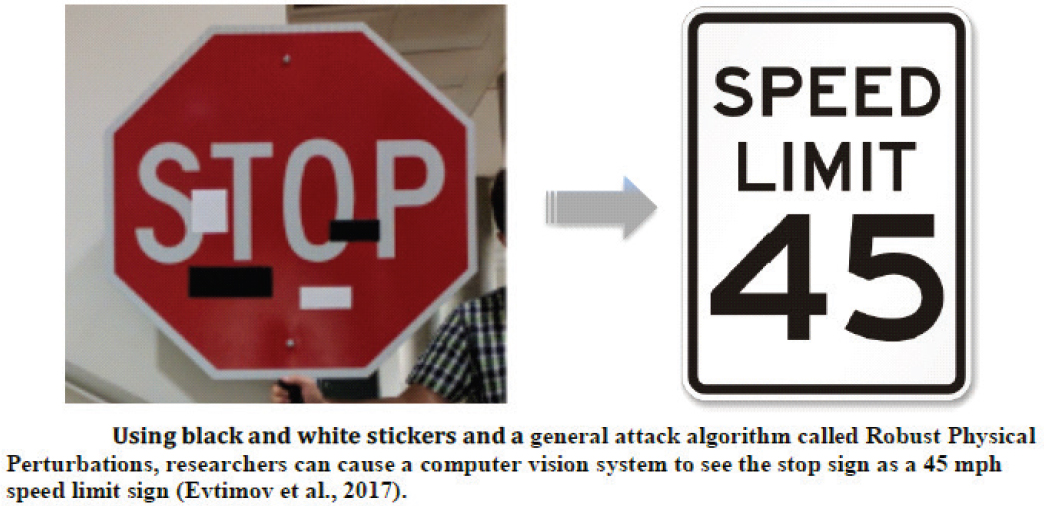

Cummings then turned to the strengths and weaknesses of artificial intelligence (AI)-enabled sensing, focusing on object identification. A great deal of work has been done, but it is critical to understand that what the sensor-computer combination “sees” is not what the human perceives and interprets. She gave several examples in which AI-enabled object perception could be manipulated into providing an incorrect interpretation, and others in which otherwise correct interpretation capabilities could be interfered with to produce incorrect outputs. In simpler terms, she showed how AI systems can be fooled. The point of her presentation was to emphasize that the capabilities are still too “brittle” to be depended upon for certifiably human-safe systems.

Using an automobile example, Cummings described how changing a few elements on an image of a stop sign using pieces of tape can make it look like a speed-limit sign to a computer-vision system: see Figure 8-2. Even a one-pixel change can convert the image to something else, a “huge weakness,” she said. Potential deficiencies like these are a warning to aviation officials should “brittle” AI techniques become embedded in future aviation-related systems.

Cummings observed that the strengths and weaknesses in visual recognition apply to both humans and automation techniques, which raises the question of how and why a human should be replaced with a robot. One option is to use robots throughout and bring in a human only when something breaks. Tasks that are at the lower end of the SRKE axis can be fully automated, so people need to be brought in only when a problem arises. She offered the possible example of future cellphone factories. But when uncertainty is likely to occur, humans must be in the loop. She mentioned an approach of the Aurora Flight Sciences company, which would offload the “dull” work to a robot but bring the human in when there is maximum uncertainty.

SOURCE: Adapted from Evtimov, I., Eykholt, K., Fernandes, E., Kohno, T., Li, B., Prakash, A., Rahmati, A., and Song, D. (2017). Robust Physical-World Attacks on Deep Learning Models. Ann Arbor: University of Michigan. Available: https://arxiv.org/pdf/1707.08945.pdf [February 2018].

Looking to the future, Cummings said that the business case for human-robot collaboration rests on automation being seen as a way to reduce costs. But robots will break, she noted, and businesses are not considering the future need to maintain them (i.e., keep them operating and repair them when necessary). She expressed concern that human factors research is being marginalized by academia; it is not seen as real engineering. But human factors know-how will be necessary for the future workforce, which will be changing through reeducation and retraining: today’s cab drivers may become tomorrow’s robot maintainers. She said people will continue to be pushed up the SRKE axis, with robots at the lower end and humans at the upper end.
