3

Harmonization Frameworks

OVERVIEW

In session 1, moderated by Peter Clifton, three speakers offered a sketch of the harmonization work completed since the 2005 initiative. This chapter summarizes these presentations and the discussion that followed, with strategic points highlighted here and in Box 3-1.

First, Clifton described consulting work he was involved with in Australia regarding which existing (2005–2006) nutrient reference values (NRVs) to review (i.e., all or some) and how the review process should occur. The existing NRV process had followed the Institute of Medicine (IOM) recommendations almost completely, Clifton recalled, but the process had generated considerable confusion because of its lack of transparency. Among other recommendations for future NRV revisions, the consultative group called for greater transparency in the decision-making process, including clear justification for the inclusion of experts on committees and clear documentation of the process of determining nutrient values.

Next, Amanda MacFarlane discussed the challenges of developing Dietary Reference Intakes (DRIs) based on chronic disease endpoints, as opposed to traditional nutrient deficiency (or excess) endpoints. She explained that risk assessment is at the heart of the DRI framework, but that several critical assumptions of this approach, such as causality, do not always fit for chronic disease endpoints. Establishing causality relies heavily on randomized controlled trials (RCTs), she said, yet most data comparing nutrient intake and chronic disease endpoints come from observational studies. This difference in the nature of the evidence that is available in the scientific literature is “not good or bad,” MacFarlane said, “it just is what it is.”

Continuing the focus on chronic disease endpoints, Janet King highlighted major findings from a recently published National Academies of Sciences, Engineering, and Medicine report, Guiding Principles for Developing Dietary Reference Intakes Based on Chronic Disease (NASEM, 2017a). A major difference between traditional and chronic disease DRIs, she pointed out, is that the latter are not warranted unless sufficient evidence exists, whereas traditional DRIs affect everyone. Recommendations in the National Academies report (2017a) covered how to select and judge chronic disease evidence (e.g., how to extrapolate intake–response data from one population to another); how chronic disease DRIs should be structured (e.g., as ranges, rather than single numbers); and the DRI process itself (e.g., continue to use the current DRI process, but, as a first step, conduct a thorough evidence-based systematic review of the nutrient and associated chronic disease risk). King remarked that, as a first test of these guiding principles, a National Academies review of chronic disease endpoints in DRIs for sodium and potassium was already under way.

FRAMEWORK CONSULTATION PROCESS: KEY ISSUES AND CONCLUSIONS1

Peter Clifton entered this field through his involvement with two consultations of the NRV framework in Australia, which itself was the end result of the 2005–2006 NRV review process.2 The NRV framework adopted IOM recommendations almost completely, with what Clifton described as a whole array of new concepts being introduced. These included the estimated average requirement (EAR), the recommended dietary intake (RDI), and the adequate intake (AI), as well as the acceptable macronutrient distribution range (AMDR). The introduction of the AMDR in particular caused a lot of controversy and, according to Clifton, the AMDR has not been used. Additionally, upper limits (ULs) were introduced for all nutrients and all age groups, again mostly as per the IOM recommendations. The introduction of the ULs caused controversy as well, he recalled, particularly with the regulatory agencies, as many of the limits were perceived to be set relatively arbitrarily and relatively low. But the major problem with the 2005–2006 NRV process, Clifton emphasized, was lack of transparency. Many people who contributed ideas about what the NRVs should be received no feedback, the reasons the 2005–2006 NRV review committee chose particular values were never recorded, and details about the process provided on the website were very limited. Clifton went on to describe these and other problems in more detail, actions taken since the consultations, and challenges with folate and vitamin D in particular.

Consultations and Recommendations

A main question addressed in the first consultation that Clifton was involved with (i.e., three public meetings in 2011 with targeted invitees from across Australia and New Zealand) was whether all of the NRVs should be reviewed or if only some of them should be reviewed and, if the latter, how the reviews should occur. The consultants found that stakeholders3 felt there would be more opportunity for more detailed investigations and more substantive values (i.e., there would be more confidence in the values) if only a couple of nutrients were selectively reviewed.

___________________

1 This section summarizes information presented by Peter Clifton, Ph.D., professor of nutrition, University of South Australia, Adelaide, Australia.

2 The 2005–2006 NRV review process led to publication of Nutrient Reference Values for Australia and New Zealand in 2006. A PDF of that publication, plus updates since then, is available on the Australian Government’s National Health and Medical Research Council website at https://www.nhmrc.gov.au/guidelines-publications/n35-n36-n37 (accessed December 14, 2017).

3 Both here and in the Chapter 4 summary of Clifton’s second presentation, “stakeholder” refers to the individuals consulted, as per the descriptions of the consultations in Australian Government Department of Health (2015).

Additionally, Clifton continued, stakeholders wanted more details on the NRV process, including how evidence is assessed, and more information on the purpose and use of NRVs.

Stakeholders also expressed ongoing confusion about inadequacy assessments of both individual and group intakes, including which particular NRV should be used and how it should be interpreted. For example, what is an acceptable level of intake below the EAR? Is 10 percent acceptable (i.e., 10 percent of the population with intakes below the EAR)? Clifton explained that, because people’s dietary intakes are diverse, there will always be a percentage of the population below the EAR.
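The question of what share of a group falls below the EAR can be made concrete by counting the individuals whose usual intake is under that value. The following is a minimal sketch of that idea only; the function name, the intake values, and the EAR used here are hypothetical and do not come from the consultation.

```python
def fraction_below_ear(usual_intakes, ear):
    """Return the share of a group whose usual intake is below the EAR."""
    if not usual_intakes:
        raise ValueError("usual_intakes must not be empty")
    below = sum(1 for intake in usual_intakes if intake < ear)
    return below / len(usual_intakes)


# Hypothetical usual intakes (µg/day) for ten people and a hypothetical EAR.
intakes = [250, 310, 330, 345, 360, 400, 420, 480, 510, 600]
share = fraction_below_ear(intakes, ear=320)
print(f"{share:.0%} of the group is below the EAR")
```

Because intakes vary from person to person, this share is rarely zero, which is the point Clifton made; the sketch says nothing about what share should be considered acceptable.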

In addition to detailed descriptions of the EAR, stakeholders wanted detailed descriptions of the RDI and AI, and a detailed handbook on how to establish an EAR.

While stakeholders felt that the terminology that had been changed in 2005–2006 should not be changed again, as they had become accustomed to and had accepted that change, they felt that there were errors in scaling and extrapolation across the various age groups that needed to be corrected and that there needed to be more consistency in the application of scaling (i.e., across nutrients).

As Clifton had previously alluded, stakeholders also reported that the ULs caused problems with Food Standards Australia New Zealand, Australia’s food regulatory agency, and that the ULs needed to be justified, not just borrowed from the IOM recommendations.

Finally, stakeholders requested a framework to guide expert working groups in how they should approach NRVs in the future. Clifton said that he had envisioned a limited handbook, but the framework ended up being a very detailed 80-page document (Australian Government Department of Health, 2015).

Following this first consultation, a series of recommendations were issued in 2012. The first was that there should be an immediate review of the chronic disease and macronutrient section of the 2006 NRVs. Many of the people who had been consulted felt there were many wrong statements in this section and that it needed more rigor. This review has not happened yet, Clifton noted. It was recommended that less comprehensive reviews be conducted for several nutrients as funding and time permit, namely B12, choline and pyridoxine, zinc, fluoride ULs, selenium ULs, energy, protein, and chloride. Additionally, it was recommended that a steering committee be established to oversee the review process and to act as an expert reference or advisory group and that a technical working group or consultant be engaged to develop a methodological framework.

In the second consultation, in 2012, Clifton emphasized, a major request on the part of those interviewed was again greater transparency in the decision-making process. Stakeholders wanted clear justification for inclusion of experts on a committee, details for accepting or rejecting certain evidence, and clear documentation of all decisions and assumptions. Additionally, there was a call for development of robust methodologies to construct recommendations, particularly for nutrients with gaps in the data for specific population groups. It was suggested that in cases where there is a gap in the data, rather than doing a “guesstimate” and coming up with something not very robust, it should be communicated that no data are available and that a recommendation cannot be made.

Methodological Framework for the Review of Nutrient Reference Values

Because a methodological framework was needed to guide future reviews of NRVs, a committee was formed to develop this framework,4 Clifton continued. The framework committee recommended that the first step of any NRV review should be to justify why that particular nutrient was chosen for review and what the issues are around it. For example, is there new evidence? Or are there new politics or policies (e.g., fortification) that justify a review? Then, after clearly defining the question, expert working groups need to identify which particular NRV(s) will be examined (e.g., UL, EAR), which age group will be considered (e.g., infants, children, adults), and whether the focus of the review will be nutritional deficiency or chronic disease prevention. Regarding the latter, Clifton remarked that the people consulted certainly wanted a chronic disease prevention component included in the NRV process, but separate from nutrient deficiency diseases. Additionally, the framework committee recommended the following:

- Clearly define and justify endpoints relevant for the assessment of nutrient deficiency and chronic disease prevention in advance of gathering the evidence.

- Derive recommendations for prevention of nutrient deficiency diseases using either the factorial or dose–response approach.

- Derive recommendations for chronic disease prevention using whatever evidence is available, including from both observational and intervention studies.

- Incorporate as much data as possible into meta-analyses or meta-regressions.

Clifton described the toxicologist on the framework committee as someone who was very strongly interested in harmful effects and who thought that the ULs that had been set during the 2005–2006 NRV process were very arbitrary and had implied precision where precision did not really exist. Thus, the committee suggested a UL only where there is good evidence of an adverse effect. Otherwise, a “provisional UL” should be assigned where there is probably an adverse effect, but it is unclear what the value of the UL should be, or a “not determined” or “not required” UL should be assigned when there is no evidence of hazard or it is very unlikely that a hazard could occur. The latter designations are quite different from the current IOM model, Clifton remarked, and have not been adopted yet for any of the nutrients under review.

___________________

4 The framework is described in Australian Government Department of Health (2015).

Lack of Harmonization Within Countries: Folate as an Example

Clifton explained that his interest in harmonization included harmonization within countries, not just among different expert working groups looking at the same nutrient. He cited folate as a good example of a nutrient whose recommended intakes have varied not only among countries, but within countries over time.

In Australia, the recommended intake was 330 micrograms (µg) free folic acid per day (for men and women) in 1977. Then it went up to 400 µg in 1980, then down to 200 µg of dietary folate equivalents (DFEs) in 1991. That was a very dramatic reduction, Clifton said, as 200 µg of DFE is essentially 100 µg of free folic acid. Then, in 1998, it went back up to 400 µg DFE. These changes occurred even though the criterion being used was the same, which was a red blood cell (RBC) folate level greater than 305 nanomoles per liter (nmol/L). Similar changes have occurred over time in the United States, with the recommended level being reduced from 400 µg DFE in 1980 to 180 µg DFE in 1989. Again, Clifton said, this was a very dramatic reduction. The recommendation for pregnancy was reduced as well, from 800 µg DFE in 1980 to 400 µg DFE in 1989. Then, in 1998, the IOM recommended dietary allowance went back up to 400 µg DFE, as it did in Australia. The United Kingdom (UK) reference nutrient intake, however, has been set at 200 µg and the European Food Safety Authority population reference intake for folate at 330 µg.
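The change of unit in 1991 is easier to follow with the conversion written out. The sketch below assumes the 2:1 ratio Clifton described, in which 1 µg of free folic acid counts as 2 µg DFE; the function names are hypothetical, and other conversion factors are used in some contexts.

```python
# Assumption: 1 µg free folic acid = 2 µg DFE, the ratio described in the text.
DFE_PER_UG_FOLIC_ACID = 2.0


def dfe_to_folic_acid(dfe_ug):
    """Convert µg DFE to the equivalent µg of free folic acid."""
    return dfe_ug / DFE_PER_UG_FOLIC_ACID


def folic_acid_to_dfe(folic_acid_ug):
    """Convert µg of free folic acid to µg DFE."""
    return folic_acid_ug * DFE_PER_UG_FOLIC_ACID


# Australian recommendations quoted in the text, re-expressed as free folic acid.
for year, dfe in [(1991, 200), (1998, 400)]:
    print(year, dfe, "µg DFE ->", dfe_to_folic_acid(dfe), "µg free folic acid")
```

Under this assumption, the 1991 value of 200 µg DFE corresponds to 100 µg of free folic acid, which is why the drop from the 1980 figure was so large.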

Clifton reiterated that all of these values rely on the same biochemical indicator. Thus, it was not that the criteria differed that led to the variation in values, rather that the data were interpreted in what he described as “an un-harmonized kind of way.”

Given these changes over time, Clifton raised the question: How should future expert working groups examine folate? How should they evaluate the data, apart from looking at all of it, especially given that data on deficiencies are very limited? Food folate is hard to measure, so there is not really any accurate estimate of intake. That said, Clifton noted, it appears that the intake in Australia might be between 600 and 800 µg/day, which is well above the RDI. And folate assays of serum, particularly RBC folate, provide mixed results and, thus, their reliability is limited.

Because few EAR studies actually achieve a 50 percent adequacy level, rather than picking one or two of these studies and coming up with a guesstimate, Clifton suggested that many studies with different endpoints need to be integrated into a meta-regression to determine a best estimate. He was unaware of any meta-regression for folate. Added to the challenge is that the true coefficient of variation (CV) is unknown. The 10 percent CV that is being used is what he described as a “wild guesstimate” and may be far removed from actual reality. He suspected the actual CV might be a lot higher. Compared to the EAR, the RDI is a much simpler concept and may be an easier figure to derive, as it covers essentially the whole population.
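To see why the assumed CV matters, it helps to write out the usual convention, assumed here, that the RDI sits two standard deviations above the EAR, so that RDI = EAR × (1 + 2 × CV). The sketch below uses a hypothetical EAR of 320 µg DFE/day purely for illustration and compares the 10 percent CV with larger values; the function name is also hypothetical.

```python
def rdi_from_ear(ear, cv):
    """RDI as the EAR plus two standard deviations, where SD = cv * EAR."""
    if cv < 0:
        raise ValueError("cv must be non-negative")
    return ear * (1 + 2 * cv)


ear = 320  # hypothetical EAR in µg DFE/day, for illustration only
for cv in (0.10, 0.15, 0.20):
    print(f"CV {cv:.0%}: RDI = {rdi_from_ear(ear, cv):.0f} µg DFE/day")
```

If the true CV were 20 percent rather than 10 percent, the same EAR would imply an RDI of about 448 rather than 384 µg DFE/day in this example, which shows how strongly the derived figure depends on a quantity Clifton described as a guess.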

Whether one should worry about folate in Western populations is difficult to know, Clifton opined. It is known that there is a folate-sensitive population with respect to neural tube defects, but that is a very small, select population. Discussing whether folate deficiency is common in the general population, Clifton mentioned a study of inpatients and outpatients at the Royal Prince Alfred Hospital in Sydney, Australia. Of 21,000 samples, 3.4 percent of the sampled population had low RBC folate levels (i.e., below 340 nmol/L). That sampling was conducted in April 2009, before folate fortification. After fortification, in 2010, the percentage of people with low RBC folate levels fell to 0.5 percent (0.16 percent in women of childbearing age). Thus, fortification changed the folate status of the population to virtually completely folate replete. Clifton concluded, “So it would appear that whatever the population are eating now is certainly adequate.”

However, he continued, there is a bit of a mismatch between measured indicators of folate status and intake indicators. Even in this virtually folate-replete population, an estimated 10 percent of intakes are below the EAR. “People are worried about this,” he said.

In answer to his question, a future folate NRV expert working group will need to question all of the assumptions about how all of these figures were derived and check the data upon which the figures are based. A lot of the data are historical, he noted. Additionally, the difference between free folic acid found in supplements and the EAR/RDI DFEs is “totally unrecognized,” he said. DFEs (i.e., food folate) are half as effective as folic acid or food fortified with folic acid.

In Clifton’s opinion, the up-and-down nature of the folate recommendations over the years reflects either confusion about the endpoints or confusion about the data upon which the adequacy levels have been derived. Thus, regarding international harmonization of NRV folate review methodology, he emphasized the importance of agreement around which endpoints and which health markers to use, noting that there may be some new epigenetic markers of folate sufficiency. Other NRVs face similar issues. For example, recent changes in calcium and vitamin D recommendations similarly reflect confusion and a lack of clarity around appropriate endpoints.

Vitamin D

Vitamin D deficiency is epidemic across Australia, with millions of tests conducted yearly and millions of people being prescribed vitamin D. Thus, it is costing the country a large amount of money. Yet, Clifton remarked, it is very difficult to know whether this testing and prescribing is appropriate or inappropriate, that is, whether it is of value or harm. In the United Kingdom, in 2016, a reference nutrient intake of 10 µg/day was established for all individuals above the age of 4 years. The aim, or endpoint, was to achieve a serum level for 25(OH)D of 25 nmol/L for 97.5 percent of the population, which Clifton said could be considered a very clear, firm, well-established endpoint. However, in Australia, using approximately the same endpoint of 27.5 nmol/L, the AI has been set at 5 µg/day for all individuals up to the age of 50 years, 10 µg/day for individuals between the ages of 50 and 70 years, and 15 µg/day for individuals over the age of 70 years. Thus, although the two countries use approximately the same endpoint, they have issued different recommendations. He noted that it is difficult to know the extent to which either set of values takes into account sunshine synthesis of vitamin D. The U.S. values are different, with a recommendation of 15 µg/day for individuals up to the age of 70 years and 20 µg/day for individuals over the age of 70 years, but the endpoint is also different: a serum 25(OH)D level equal to or greater than 50 nmol/L.

In Clifton’s opinion, vitamin D “would probably be the real challenge for harmonization,” as it will require either persuading all countries to change their endpoints to align with the U.S. endpoint, probably in the absence of any evidence or in the presence of only a small amount of evidence, or persuading the National Academies to lower its intake recommendations.

ENDPOINTS: DIFFERENCES WHEN CONSIDERING DEFICIENCY VERSUS CHRONIC DISEASE5

In the 1990s, it was decided that Canada and the United States would move forward with a harmonized approach for setting nutrient reference values. The DRI framework was the basis of that approach, Amanda MacFarlane began. Prior to establishment of the DRI framework, both countries set their values independently, and their focus was on adequacy and prevention of deficiencies in the two populations. But with the DRI framework, there was an added focus, first, to ensure safe ULs of intake in addition to adequacy. These values were derived from data on apparently healthy populations, thus serving apparently healthy populations. However, in the 1980s, there was a growing recognition that nutrition has an effect on chronic disease. Thus, a second focus was added in the new framework: There should also be consideration of chronic disease risk reduction where sufficient data for efficacy and safety existed. This latter focus ended up posing more challenges than people had anticipated at the time, MacFarlane noted.

___________________

5 This section summarizes information presented by Amanda MacFarlane, Ph.D., research scientist, Health Canada, Ottawa, Ontario.

At the heart of the DRI framework is a risk-assessment approach. The first step, MacFarlane explained, is to demonstrate that there is a causal relationship between the intake of a particular nutrient and the endpoint of interest (e.g., disease of deficiency), then conduct a literature review and identify and select an indicator(s) of the endpoint that would be acceptable for setting a DRI. Once the causal relationship is determined, the next step is to establish the intake–response relationship, that is, find data showing that there are changes in the endpoint of interest as nutrient intakes increase. Once an intake–response model is established, then the DRI can be set. Other parts of the assessment include intake assessments of the population of interest and implications and special concerns for particularly susceptible or vulnerable populations. But the main part of the risk assessment, MacFarlane emphasized, is identifying the causal relationship and modeling the intake–response relationship.

There are a number of assumptions inherent to this risk-assessment approach to setting DRIs, including

- the essentiality of the substance;

- evidence of causality and an intake–response relationship;

- that there is a threshold for adequacy and a threshold, or an assumed threshold, for adverse effects at the high end of intakes;

- that the relevant population is known;

- that there are biomarkers on the causal pathway between the intake of a particular nutrient and the disease of interest; and

- that there is evidence that dictates the absolute nature of the risk for the disease of deficiency.

Between 1997 and 2005, DRI values generally were set to achieve adequate intakes and, when the data allowed, to prevent adverse effects from excessive intakes. But it was determined over and over again, MacFarlane recalled, that when these same assumptions were applied to a nutrient–chronic disease relationship, they did not always fit. “It was kind of like fitting a square peg into a round hole,” she said. In the few cases where a nutrient–chronic disease relationship could be demonstrated, only an AI value was set. MacFarlane then went on to highlight in detail the limitations of several of these assumptions when applied to chronic disease endpoints.

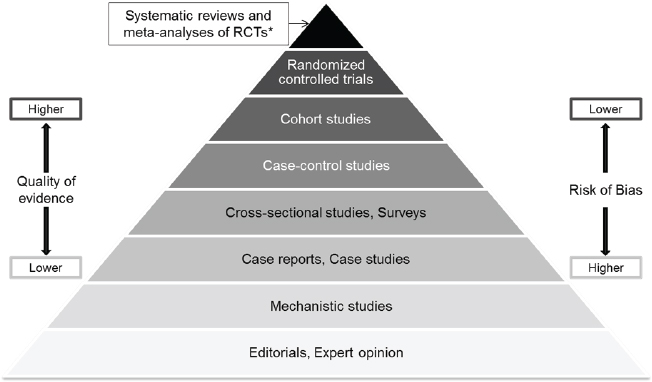

Evidence of Causality: Deficiency Versus Chronic Disease Endpoints

Again, MacFarlane continued, one of the assumptions of the DRI approach is that there is a causal relationship between nutrient intake and an endpoint. When establishing causality or an intake–response relationship, normally the gold standard evidence is from an RCT and, ideally, from systematic reviews and meta-analyses of many RCTs (see Figure 3-1). Although establishing causality relies heavily on RCTs, it can be supported by evidence from intervention trials, metabolic/balance studies, and depletion/repletion studies, MacFarlane remarked. If these same study types are used to model an intake–response relationship as well, they need to include at least three doses. “I think it’s fair to say,” she said, “in terms of nutrition studies, a lot of this evidence is somewhat limiting. But this is what you need, and this is what was used primarily for setting [the 1997–2005] EAR values based on deficiency endpoints.”

The challenge for chronic disease endpoints is that the nature of the evidence available in the literature differs significantly. Most available evidence is associational, such as the cohort studies, case-control studies, and cross-sectional studies and surveys mentioned in Figure 3-1. “It’s not bad or good. It just is what it is,” MacFarlane said. The question, she said, is, “Can you establish a causal relationship or dose–response relationship in the absence of clinical trials?” Additionally, observational studies have a number of inherent biases and potential errors associated with each study type, such as confounding or selection bias and the limitations associated with self-reported intake data upon which these studies often rely.

NOTES: RCT = randomized controlled trial; * Meta-analyses and systematic reviews of observational studies and mechanistic studies are also possible.

SOURCES: Presented by Amanda MacFarlane, HMD Workshop, Rome, Italy, September 21, 2017 (Yetley et al., 2016, by permission of Oxford University Press).

Biomarkers on the Causal Pathway: Deficiency Versus Chronic Disease Endpoints

MacFarlane noted that another assumption of the DRI framework is that there are biomarkers that are clearly on the causal pathway and that directly relate the intake status of a particular nutrient to the endpoint or disease of interest. She explained that examples of biomarkers on the causal pathway include serum folate, serum 25(OH)D (vitamin D status), and serum ferritin. Having these biomarkers ensures a higher level of certainty when establishing a causal relationship between the intake of a particular nutrient and a disease. Ideally, MacFarlane added, the clinical outcome itself is directly observable, which is often the case with diseases of deficiency. Having this, plus an indicator of exposure directly related to that endpoint, makes it much more straightforward to demonstrate causality.

But, she said, when considering chronic disease risk, the situation is more complicated. First, chronic diseases have long pathological processes, making it difficult to demonstrate that an exposure is related to a particular clinical outcome. Additionally, sometimes a validated surrogate outcome has to be used instead of an actual clinical outcome as a predictor of the clinical outcome. There are also a number of nonvalidated intermediate outcomes that are possible predictors of the clinical outcome, but they have an even higher level of uncertainty. Either way, MacFarlane said, when the clinical outcome cannot be measured and, instead, one has to rely on either a surrogate or nonvalidated intermediate outcome, there is a higher level of uncertainty around the relationship between nutrient intake and chronic disease. A further issue is that currently there are very few validated surrogate outcomes, MacFarlane noted.

Data Available for Estimating Intake–Response Relationships: Deficiency Versus Chronic Disease Endpoints

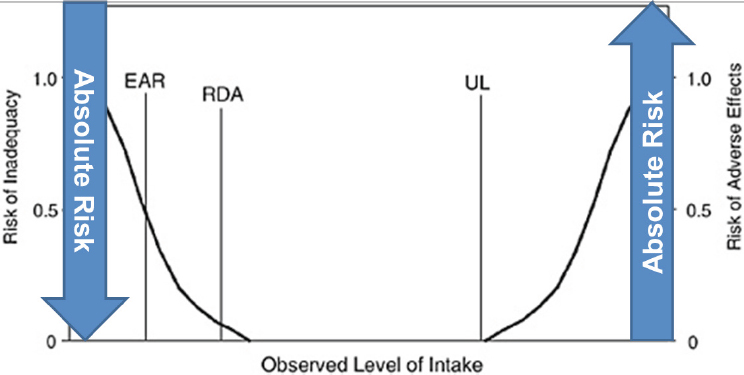

Another assumption of the DRI framework is that there are absolute risks that affect all persons and all life-stage groups such that, as intake levels increase, the absolute risk of deficiency decreases (see Figure 3-2). Additionally, as also shown in Figure 3-2, there is an inflection point above which intake is associated with an increasing risk for an adverse effect. Thus, MacFarlane explained, depending on intake level, one can assume a risk of deficiency or adverse effects anywhere from zero to 100 percent. She used an example from the 2011 vitamin D DRI report to illustrate. For all persons and all life stages (i.e., both younger and older age groups), as serum 25(OH)D levels (a biomarker of vitamin D status) increase, the risk of bone problems caused by vitamin D deficiency decreases (IOM, 2011a).

NOTE: EAR = estimated average requirement; RDA = recommended dietary allowance; UL = upper limit.

SOURCES: Presented by Amanda MacFarlane, HMD Workshop, Rome, Italy, September 21, 2017. From IOM, 1994.

However, this assumption does not apply when considering chronic disease risk. Not all persons are at risk of a chronic disease and not all life-stage groups are equally at risk of a chronic disease, MacFarlane explained. For example, the prevalence of diagnosed diabetes in Canada varies with age. Among the younger age groups, it is almost zero, and then it increases as age increases but only to a maximum prevalence of about 25 percent. In fact, often, less than 50 percent of a population is affected by any given chronic disease. So, again, an assumption of the DRI framework, in this case that every person of every life stage is affected by the same risk, does not apply when considering chronic disease.

Another difference with chronic disease risk, she added, is that it is often defined as relative risk, rather than absolute risk. In other words, there is no one who is at either zero or 100 percent risk. People are at either higher or lower risk compared to a baseline, or background, disease risk (Yetley et al., 2016). In the literature, changes in relative risk with changes in intake are often 10–20 percent, MacFarlane noted.
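The distinction between relative and absolute risk can be illustrated with simple arithmetic. The sketch below assumes a hypothetical baseline risk and a hypothetical relative risk; neither number comes from the presentation, and the function name is illustrative.

```python
def absolute_risk(baseline_risk, relative_risk):
    """Absolute risk implied by applying a relative risk to a baseline risk."""
    if not 0 <= baseline_risk <= 1:
        raise ValueError("baseline_risk must be between 0 and 1")
    return baseline_risk * relative_risk


baseline = 0.10  # hypothetical: 10 in 100 people develop the disease
rr = 0.85        # hypothetical: relative risk 15 percent below baseline
risk = absolute_risk(baseline, rr)
reduction = baseline - risk
print(f"absolute risk: {risk:.3f}, absolute reduction: {reduction:.3f}")
```

In this example, a 15 percent change in relative risk moves the absolute risk by only 1.5 percentage points, because most of the population was never going to develop the disease in the first place.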

Thresholds for Adequacy and Upper Intake: Deficiency Versus Chronic Disease Endpoints

Yet another assumption that applies to deficiency endpoints is that there is an inflection point between inadequate and adequate intake levels. However, nutrient–chronic disease relationships often do not have inflection points. The relationship is often linear, with the greatest effect (i.e., change in risk) occurring at the tail(s) of the intake distribution (i.e., highest and/or lowest intakes have the largest effect). As an example, MacFarlane described the association between fiber intake and coronary heart disease, where the relative risk of coronary heart disease decreases with increasing fiber intake (Threapleton et al., 2013). “If it lacks an inflection point,” MacFarlane asked, “where are you supposed to set that EAR value?”

Similarly, it is assumed that there is a threshold for upper intake as well. But again, that is not always the case with a relationship between a particular nutrient and a chronic disease endpoint. For example, with saturated fat and low-density lipoprotein (LDL) cholesterol, as intake increases, LDL cholesterol increases linearly (IOM, 2002).

Interval Between Beneficial and Harmful Intakes: Deficiency Versus Chronic Disease Endpoints

The last assumption that MacFarlane described was that there is an interval between beneficial and harmful intakes. Again, like the other assumptions, this is not always the case with the relationship between intakes of a particular nutrient and a chronic disease. For example, the relationship between sodium and blood pressure is, again, an apparent linear relationship, with blood pressure continuing to increase as intake increases (IOM, 2005). “So where do you set a UL for a nutrient like this?” MacFarlane asked. At the time an AI for sodium was set (1.5 grams/day), it was based on adequacy for other nutrients and sweat losses, and the UL (2.3 grams/day) was based on the next higher dose in trials. MacFarlane said, “So, again, they did what they could with the data they had, but the chronic disease endpoints really challenged the DRI framework.”

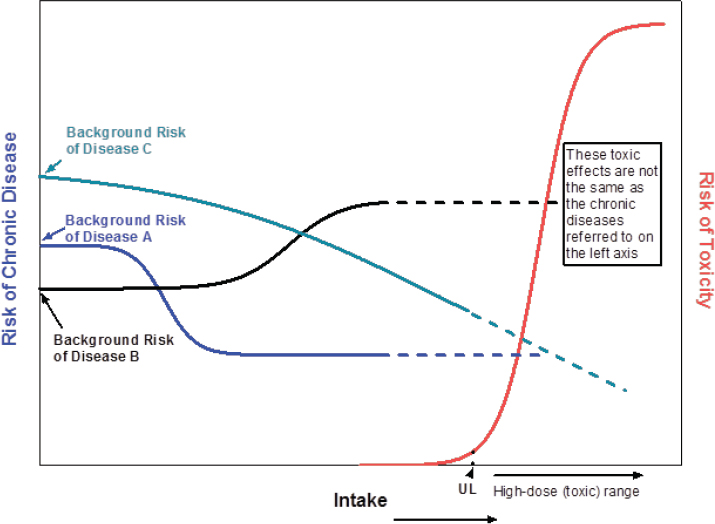

Additionally, because a single nutrient or single food substance potentially can be related to more than one chronic disease, there is also the potential for overlap of benefit and harm when considering the relationship between intake and the risk of more than one chronic disease. This is because the risk relationships between the nutrient and the different diseases could be different (Yetley et al., 2016). For example, each of the three theoretical chronic diseases (A, B, and C) illustrated in Figure 3-3 has a different background risk. With increasing intake, the risks of disease A and disease C decrease, but the risk of disease B increases. “So what do you do with this kind of information?” MacFarlane asked. “Where would you set a DRI value for this kind of nutrient?”

Synopsis of the DRI Framework Approach

The DRI approach works well for estimating adequate intakes and adverse effects for essential nutrients, but it has been more challenging when using chronic disease endpoints, MacFarlane recapped. Chronic diseases are complex and can be influenced by many factors other than nutrients, including other food substances. Additionally, the available evidence relating nutrient intakes with a chronic disease can differ significantly from the evidence that is available for establishing essentiality or toxicity of a given nutrient.

SOURCES: Presented by Amanda MacFarlane and Janet King, HMD Workshop, Rome, Italy, September 21, 2017 (Yetley et al., 2016, by permission of Oxford University Press).

GUIDING PRINCIPLES FOR DEVELOPING DIETARY REFERENCE INTAKES BASED ON CHRONIC DISEASE: HIGHLIGHTS OF THE CONSENSUS REPORT6

A National Academies consensus report titled Guiding Principles for Developing Dietary Reference Intakes Based on Chronic Disease (NASEM, 2017a) was published in August 2017. The paradigm that the consensus committee used for its work was based on the “Options Report” (Yetley et al., 2016) and was similar to the paradigm the workshop planning committee was asked to follow, Janet King began. She noted that both she and Patrick Stover served on both the 2017 National Academies committee and the Options Report committee, thus ensuring that the historical development of concepts was not lost. King provided an overview of the 2017 National Academies committee’s conversion of the concepts in Yetley et al. (2016) into guiding principles for how to develop DRIs for chronic disease.

The “Options Report”: Differences Between Traditional DRIs and DRIs for Chronic Disease

The charge of the Options Report committee was to address options for dealing with the challenges encountered when establishing DRIs for chronic disease endpoints and to provide future guidance to DRI committees for judging nutrients and other food substances and chronic disease risk.

The first thing the committee had to do, King recalled, was think about how DRIs for the traditional nutrient recommendations differ from DRIs for chronic disease. The committee determined that a major difference is that traditional DRIs are for essential nutrients that are needed to prevent deficiencies. In other words, traditional DRIs affect everyone. If an individual’s intake is inadequate, that individual will develop a nutrient deficiency. Because nutrient deficiencies are caused by single missing nutrients, they can be prevented through nutritional intervention, that is, by providing the missing nutrient. Chronic disease DRIs, in contrast, are not warranted unless there is sufficient evidence. That is, because the risk of acquiring a chronic disease because of dietary factors varies from individual to individual, there needs to be strong evidence that the risk occurs in the population of concern. Because chronic diseases are related to many risk factors in addition to diet and nutrition, including both genetic and environmental factors, nutritional interventions will only partly ameliorate the risk. King said, “The two parallel sets of DRIs are quite different in terms of their concepts.”

___________________

6 This section summarizes information presented by Janet King, Ph.D., senior scientist, Children’s Hospital Oakland Research Institute, Oakland, California.

King used one of the same figures that MacFarlane used in her presentation (see Figure 3-3) to emphasize that the relationship between nutrient intake and the risk for a chronic disease differs depending on which nutrient and which chronic disease are being addressed. For example, there may be a slight decrease in risk as intake increases (e.g., disease C in Figure 3-3), or there may be a sharp decline in risk that then levels off as intake continues to increase (e.g., disease A in Figure 3-3), or the risk may increase as intake increases (e.g., disease B in Figure 3-3). “It’s a complex situation,” King said.
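The three curve shapes King described can be sketched numerically. The following is a minimal illustration only: the functional forms, coefficients, and intake units are hypothetical and are not taken from Figure 3-3 or from Yetley et al. (2016). It simply shows how one intake level can sit on a falling risk curve for one disease and a rising curve for another.

```python
import math

# Hypothetical intake-risk curves; shapes follow the verbal description only.
def risk_disease_a(intake):
    """Sharp decline in risk that levels off (assumed exponential decay)."""
    return 0.10 + 0.30 * math.exp(-intake / 2.0)

def risk_disease_b(intake):
    """Risk that rises as intake increases (assumed linear form)."""
    return 0.05 + 0.02 * intake

def risk_disease_c(intake):
    """Slight decrease in risk as intake increases (assumed shallow line)."""
    return 0.30 - 0.005 * intake

# Arbitrary intake units; each disease starts from a different background risk.
for intake in (0, 2, 4, 6, 8, 10):
    print(
        f"intake={intake:>2}  "
        f"A={risk_disease_a(intake):.3f}  "
        f"B={risk_disease_b(intake):.3f}  "
        f"C={risk_disease_c(intake):.3f}"
    )
```

Under these assumed curves, raising intake lowers the risk of diseases A and C while raising the risk of disease B, which is the overlap of benefit and harm that makes a single reference value hard to choose.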

Selecting and Judging the Chronic Disease Evidence

The National Academies consensus committee made two sets of primary recommendations: (1) how to determine whether specific levels of nutrients and other food substances can ameliorate chronic disease risk; and (2) how to develop DRIs based on chronic disease outcomes (NASEM, 2017a).

In its deliberations, the committee first had to figure out how to select and judge the evidence for a relationship between nutrition and chronic disease, King explained. It concluded that this involves, first, identifying the presence of a chronic disease by using either acceptable diagnostic criteria or a surrogate biomarker of the disease. For example, with diabetes, one can determine if an individual has diabetes either by measuring blood glucose and insulin levels in the individual or by determining the level of hemoglobin A1c, a surrogate marker for the risk of diabetes. “So there are different ways that these analyses can be carried out,” King said.

Next, the level of confidence of the relationship between the nutrients and other food substances and the chronic disease needs to be determined. The committee made two recommendations:

- Use the Grading of Recommendations Assessment, Development and Evaluation (GRADE) evidence review system for that analysis, which is the same system being used at the World Health Organization (WHO) for evaluating the relationship between nutrient intakes and chronic diseases; and

- Base any recommendation for an intake level of a nutrient or other food substance that reduces the risk of chronic disease on at least a moderate level of certainty, as determined by the GRADE system evaluation of the data.

“I think that may be a rather rigorous criteria to reach,” King said, given that most of what she has seen in the literature has a lower level of confidence. “As we go forward and try to apply these guiding principles,” she said, “we may find that we need to go back and rethink some of them.”

Additionally, outcome indicators of the intake–response relationship need to be selected. In its report, the committee recommended using a single outcome indicator on the causal pathway, rather than multiple indicators. Again, using diabetes as an example, King suggested using only the hemoglobin A1c biomarker, which is on the causal pathway, and not also including levels of glucose or insulin.

Finally, King reported, regarding how to extrapolate the intake–response data, the committee decided that this can only be done when data are extrapolated to similar populations with similar risks for the chronic disease. These data cannot be extrapolated widely to the whole population.

Structuring Chronic Disease DRIs

According to King, in its 2017 report the consensus committee decided that a DRI for chronic disease risk should be a range, rather than a single number, because the risk for a chronic disease can be spread over a range of intakes.

Additionally, the committee decided that if an increased chronic disease risk occurs only above the traditional UL, then there is no need to develop a DRI for chronic disease, because avoiding intakes above the UL will avoid that risk for chronic disease. So the UL is the cut point, King said. “If you don’t see a risk for any disease unless the intake exceeds the UL, you don’t need to worry about setting a DRI for that chronic disease.”

Finally, there needs to be explicit and transparent descriptions of the health risks and benefits, especially when the benefits and harms of different chronic diseases overlap. “That concept needs to be very clearly laid out so individuals know how to apply the chronic disease DRIs,” she said.

Recommendations Regarding the Process

The committee recommended continuing to use the current DRI process. Interestingly, King said, when they first started this work, she thought most of the committee was expecting to have to set up a totally different process for developing dietary recommendations for chronic disease. But as they delved into their deliberations, they began to see more clearly how the process could be incorporated into the structure already in place for developing nutrient recommendations.

The first step, King explained, is for the Agency for Healthcare Research and Quality to commission a thorough systematic review of the causal relationship between a nutrient and chronic disease. After that systematic review has been completed, then a specific nutrient-focused DRI committee will be assembled to determine if the existing evidence is sufficient to support developing chronic disease DRIs along with the traditional adequacy and toxicity DRIs. It is now recognized, she noted, that there may be a need for additional committee members who are more familiar with the disease process than with nutrient metabolism. Or, it may be necessary to appoint a subcommittee of individuals to this parent committee that includes the expertise needed to evaluate the disease process and translate that into a dietary recommendation.

This process will be applied for the first time with sodium and potassium, King said. The two nutrients will be reviewed separately, King clarified, not together or as a ratio. She clarified further that the review will determine whether there should be chronic disease DRIs for sodium and potassium along with traditional nutrient recommendations.

The first step will be a systematic review to determine if there is a causal relationship between sodium and a chronic disease. Then, after the systematic review has been completed, a DRI committee will be appointed, possibly with the assistance of a subcommittee, to judge the evidence using the GRADE system and to determine if the relationship between sodium and a single chronic disease outcome is causal in populations similar to those described in the evidence review. The recommendation for chronic disease endpoints was that a DRI committee would establish an intake range, not a single number, where the sodium-related chronic disease risk is minimal. If the sodium-related chronic disease risk occurs only above the traditional sodium UL, then no chronic disease DRI will be set. This will be the first test to “see if these guiding principles that were developed can be applied effectively,” King concluded.
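The decision sequence King outlined can be summarized as a small rule set. The sketch below is an illustration under stated assumptions, not the committee's procedure: the class name, field names, and function name are hypothetical, and the intake range is treated as an input that a DRI committee would already have judged from the evidence.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

# GRADE certainty ratings, ordered from weakest to strongest.
GRADE_LEVELS = ["very low", "low", "moderate", "high"]

@dataclass
class EvidenceSummary:
    """Hypothetical summary handed to a DRI committee after the systematic review."""
    grade_certainty: str                               # one of GRADE_LEVELS
    risk_only_above_ul: bool                           # risk appears only above the traditional UL
    minimal_risk_range: Optional[Tuple[float, float]]  # (low, high) intake range, if identified

def chronic_disease_dri(evidence: EvidenceSummary) -> Optional[Tuple[float, float]]:
    """Return an intake range, or None when no chronic disease DRI would be set."""
    # Rule 1: at least a moderate level of GRADE certainty is required.
    if GRADE_LEVELS.index(evidence.grade_certainty) < GRADE_LEVELS.index("moderate"):
        return None
    # Rule 2: if risk occurs only above the traditional UL, the UL already covers it.
    if evidence.risk_only_above_ul:
        return None
    # Rule 3: the DRI is expressed as a range, not a single number.
    return evidence.minimal_risk_range

# Example with made-up values (grams/day); not results from any review.
example = EvidenceSummary("moderate", False, (1.5, 2.3))
print(chronic_disease_dri(example))
```

Keeping the traditional values outside this function mirrors the point that chronic disease DRIs are set in addition to, not instead of, the existing adequacy and toxicity values.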

DISCUSSION

Following King’s presentation, she, Clifton, and MacFarlane participated in an open discussion with the audience, as summarized here.

Chronic Disease DRI: A Range, Rather Than a Single Number

Ann Prentice asked about the concept of a chronic disease DRI range, as opposed to a single number, and how it will be interpreted by policy makers in their guidelines for the public. King replied that it will be similar to what is already being done with the dietary guidelines, which are often expressed as ranges and are not nearly as precise as the traditional DRIs aim to be. In King’s opinion, it is wise not to have specific numbers, because variation even within a population at risk is fairly significant. Indeed, this was one of the major reasons that the 2017 National Academies consensus committee recommended using a range approach, rather than relying on single numbers.

Body Mass Index Issues for Chronic Disease DRIs

Lindsay Allen asked how body mass index (BMI) will be handled when developing chronic disease DRIs. King emphasized that the focus of the chronic disease evaluations will be on specific indicators, and she doubted that BMI would be such an indicator. However, she said that BMI “will likely track with the indicators that we are going to be evaluating.” For example, people with BMIs above the normal range likely will have elevated blood pressure or elevated levels of hemoglobin A1c. But the DRI range will not be corrected for BMI.

Chronic Disease DRIs: Existing Conditions

Mary L’Abbé asked about preexisting conditions and whether dose–response curves might be different for individuals with preexisting conditions (e.g., individuals with hypertension versus without). “How would the model handle that? Could it conceivably come up with two different answers?” she asked. King replied that she was hoping that the GRADE system will be able to eliminate a number of relationships that might exist, such as that one. “I thought we were being rigorous when we said we were going to use the GRADE system,” she said, “and also when we said we wanted it to have at least a moderate level of certainty.”

Why Hypertension?

Clifton asked why hypertension was chosen as the endpoint for the first chronic disease DRI review (i.e., for sodium and potassium), rather than a hard endpoint such as a heart attack or stroke. He opined that most people will accept that an increased intake will elevate your blood pressure. However, he pointed out, hypertension is just an intermediate health outcome, one that is affected by many different factors. “The real argument is,” he said, “does that matter in terms of hard endpoints?”

“We recognize that, and we actually spent a fair amount of time thinking that through,” King responded. The committee decided that using those clinical endpoints is not going to be very helpful in terms of setting up a relationship with nutrient intakes, so they recommended looking for a surrogate marker instead. Clifton pressed his point further: Surrogate markers may not relate to the hard endpoints. “That’s the real crux of the question,” he said. King agreed that this will be a challenge that DRI committees will need to deal with when they develop their surrogate markers.

Patrick Stover, who, along with King, also served on the 2017 National Academies committee, added that there was also a lot of discussion about what the right endpoints are and that, whenever possible, they should be “person-important” outcomes, that is, they should be clinically meaningful outcomes. It is when those are not available, he said, that “you have to start walking back.”

MacFarlane clarified that chronic disease DRIs are different values than deficiency DRIs. So the traditional EAR and UL are maintained, with the chronic disease DRIs being in addition to those other values. Her understanding of the guiding principles was that if the data are not sufficient or not available then chronic disease values would not be set.

A Harmonized Framework for Low- and Middle-Income Countries

Catherine Leclercq asked if a framework was going to be developed for countries that will not be able to develop standards from primary data, that is, for low- and middle-income countries that will need to derive intake values from the United States, Canada, Australia, WHO, or elsewhere. King replied that chronic disease issues vary drastically from country to country, particularly when comparing low- and middle-income countries to higher-income countries. In her opinion, it has to be done within countries by experts in those countries who have the data and understand the data. MacFarlane added, “That’s the beauty of the guiding principles.” They are not specific values that are being set, but rather principles that can be applied at a country or region level.

Evidence from Observational Studies Versus RCTs: Chronic Disease Endpoints

George Wells commented on the evidence pyramid that MacFarlane showed during her presentation (see Figure 3-1), with RCTs on top and observational studies in the lower tiers. He asked how much evidence would be taken from RCTs versus observational studies when dealing with chronic disease endpoints. MacFarlane reiterated her expectation that more evidence will be coming from observational studies than from RCTs, given how few nutrition RCTs relate to particular nutrients and chronic disease endpoints. The challenge comes from the complexity of the development of chronic diseases over not just years, but decades, and the difficulty in establishing an exposure relationship with those diseases. She suggested that future studies may need to focus on at-risk populations of individuals who have not yet developed clinical outcomes but who can be identified on the basis of validated surrogate endpoints.

The GRADE System

Wells commented on the number of different ways that GRADE can evaluate risk of bias or quality and asked if the 2017 National Academies committee had decided on the GRADE system input yet. King was not part of the 2017 National Academies GRADE subcommittee and was unable to answer the question, but she commented on the considerable analysis and detail addressed when deliberating about how GRADE should be set up, how it should be used, what endpoints should be considered, and other related factors.

Folate: Assessment of Indicators

Ruth Charrondiere expressed fascination with Clifton’s data showing that among approximately 21,000 people (i.e., patients at the Royal Prince Alfred Hospital in Sydney) only 3 percent were folate deficient. She wondered if there were also any data showing what these same individuals’ folate intakes were prior to hospitalization so their RBC folate levels measured at the hospital could be correlated with folate intake estimates. She suspected that more than 3 percent of the general Australian population would show up as folate deficient based on folate intake estimates.

Clifton replied that, while it was a different population, the average folate intake measured in a later survey was around 700 µg/day, which he said was “well and truly above the RDI.” Even so, within that population, 10 percent were below the EAR. But that is to be expected in a population where young people do not consume fruits and vegetables. “That’s the reality,” he said. “It is quite possible to have a high intake and a certain percentage below the EAR, because of the nature of people’s dietary intake.” In his opinion, the Australian population is replete even without fortification. He clarified that fortification is not being done for folate sufficiency; rather, it is a targeted intervention across the population to achieve a reduction in neural tube defects. It has, he said, “nothing to do with folate endpoints in red cells.”
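Clifton's point that a high average intake can coexist with a share of the population below the EAR follows from the spread of the intake distribution. The sketch below is illustrative only. It assumes intakes are lognormally distributed, uses the mean of about 700 that Clifton cited, and uses a purely hypothetical EAR of 320 in the same units; neither the distribution nor the EAR value comes from the workshop.

```python
import math
from statistics import NormalDist

def fraction_below(cutoff, mean_intake, sigma_log):
    """Share of an assumed lognormal intake distribution that falls below `cutoff`.

    `sigma_log` is the standard deviation of log-intake; `mean_intake` is the
    arithmetic mean, so the log-scale mean is ln(mean) - sigma_log**2 / 2.
    """
    mu_log = math.log(mean_intake) - sigma_log ** 2 / 2.0
    return NormalDist(mu_log, sigma_log).cdf(math.log(cutoff))

mean_intake = 700.0   # average intake cited in the discussion
assumed_ear = 320.0   # hypothetical EAR, same units as the mean

# Wider spread in intakes puts more people below the cutoff at the same mean.
for sigma_log in (0.3, 0.5, 0.7):
    share = fraction_below(assumed_ear, mean_intake, sigma_log)
    print(f"sigma_log={sigma_log}: {share:.1%} below the assumed EAR")
```

With these assumptions, a moderate spread (a log-scale standard deviation near 0.5) already places roughly one person in ten below the cutoff even though the mean is more than twice the assumed EAR.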

MacFarlane underscored the need to decide, when assessing indicators being used to set nutrient reference values (for any nutrient), what the gold standard is. For folate, the microbiological assay is “really the gold standard,” she said. That was the method that WHO used to establish cutoffs for both deficiency and for prevention of neural tube defects. Hospitals usually use protein binding assays, which are very different. In the absence of conversion factors between estimates derived from a gold standard versus a regular platform, she urged serious consideration about whether data should be used or not.

Reality Check: Are Chronic Disease DRIs Achievable with Healthy Food Intake?

Charrondiere also asked if there would be what she referred to as a “reality check” when chronic disease DRIs are set. That is, will there be an additional step to check to see that the DRIs are achievable with a healthy food intake? If the recommended levels can only be achieved through fortification, she said, “there might be something wrong in our whole methodology.”

Clifton responded that, at least with folate, the normal diet in Australia is quite adequate. He reiterated that fortification was done for a totally different purpose, not for normalization of the diet per se. MacFarlane added that in Canada, in the 1970s, which was the last time a biomarker analysis of folate was conducted, about 25 percent of the population was demonstrating deficiency. With fortification, now there is less than 1 percent deficiency. More generally, she agreed that a reality check is important, but suggested that the DRIs already do that to a degree. Usually when an EAR is set, an intake assessment of the population is conducted to see where the population is. When the values are different than what intakes indicate, she suspected that some of the difference relates to what she called “that disconnect between intake and status biomarkers.” She suggested, “Perhaps we need more research to establish what those true relationships are between intake and actual status.”