5

Evolution and Evaluation

The variety of programmatic and curricular approaches described in the previous chapters points to numerous alternatives for development of data science learning in undergraduate programs. There are many different modalities for institutions to offer in data science education, as there have been in computer science and information science. Data science programs will continue to evolve within institutions and across the United States as driving factors, including student perception of career opportunities and funding for training programs from industry and government, modify student demand and associated institutional response.1 As befits a new and evolving discipline, pilot programs designed to address different needs will arise at different types of institutions.

Evaluation of a program relies upon assessing student learning and gauging how well the program meets market needs. Evaluations can be used to test what works for an institution, given relevant constraints, and suggest when these pilot programs might be generalized for other contexts. It would be beneficial to use data science to continuously evaluate and evolve data science education (see Gertler et al., 2016). In particular, it would be useful to examine the impacts that different drivers of undergraduate programs have on the development of programs and their evolution. In this way, the programs would not evolve stochastically based upon particularities of the histories and personnel associated with the programs, but rather they would innovate based upon data and the drivers at the institution.

___________________

1 See, for example, the Institute of Education Sciences What Works Clearinghouse, https://ies.ed.gov/ncee/wwc/, accessed February 20, 2018.

The continued evolution of programs would benefit from an efficient means to collect, compare, and share evaluation data. Such capabilities could be established through collaborative efforts across a number of the professional societies that operate in the data science space or possibly from subsocieties that cross the variety of disciplinary boundaries in data science as well as the variety of application domains. Such collaborations could enhance the effectiveness and efficiency of educational programs by assisting in propagating methods for success. Eventually, formal schemes for standards, and possibly accreditation, could emerge from the application of data science methods to the variety of programs that have evolved.

EVOLUTION

There are multiple pathways to developing programs in data science, each with its own challenges and opportunities. While other domains have started by implementing undergraduate programs and expanding upward to include master’s degree programs, or with doctoral programs and expanding downward, data science has taken an unusual path to date, as many master’s degree programs have begun to be offered before undergraduate or doctoral programs. This institutional structure is perhaps driven by the rapidly arising industry demand for data scientists. This starting point influences how competencies are introduced into the curriculum. However, as Chapter 3 made clear, today different schools are developing multiple pathways from multiple starting points. This flexibility is beneficial, as the multitude of educational pathways enables students to be equipped to fill various future data science positions.

A 2015 National Science Board study described the importance of building a better understanding of the pathways taken by individuals to become part of the science, technology, engineering, and mathematics (STEM) workforce. It pointed out the need to “monitor and assess the condition of workforce pathways” as well as to “address roadblocks to the participation of groups traditionally underrepresented in STEM” (National Science Board, 2015). Reaching both of those goals requires building a model of the watershed of STEM-trained individuals and empirically assessing demand for STEM-trained employees (Metcalf, 2010).

In this section, the committee considers how data science programs might evolve. That evolution will be driven by a broad range of factors, including the starting point, the students who are the target of the program, and the institution that is developing that program. This section focuses on the maturation process and on ensuring program success.

The Maturation Process: Key Drivers of Change

Once started, programs will continue to evolve differently, based on the constraints of personnel and resources at the institution, student perceptions of careers in the area, and external influences such as industry and government partnerships. The long-term goal of any undergraduate educational program in data science is to produce successful data science students. But what constitutes success? Obtaining a degree? Gaining satisfactory employment (i.e., a job that is monetarily or intellectually rewarding)? Securing a place in graduate school? The answers to these questions will be driven by the institution as well as by the evolution of the workforce and the economy. If jobs are the students’ goals, and the institution sees itself as preparing them for the job market, then industry’s needs will be paramount, and undergraduate programs will need to evolve to meet those needs as the needs themselves evolve. Likewise, the availability of graduate programs (and their evolution over time) can also change the content and learning outcomes of undergraduate programs, which strive to ensure that their students have the right background to be admitted to these graduate programs.

Many subtler influences based on educational style may also be expected. Some institutions will tend to stress an understanding of theoretical foundations; other programs will focus on hands-on experience. The size of the school and popularity of the program will impact the types of opportunities available and the pedagogical style that may be appropriate. Over time, any of these may change, resulting in changes to individual courses and overall programs.

Because data science programs in general are new, comprehensive data on general expectations for the undergraduates who enter them are scarce. Many programs that exist today were started as professional master’s degree programs. Starting at the master’s level presupposes knowledge and classes, usually with a specific disciplinary focus. There are also multiple possible pathways to data science education at the doctoral level, such as a Ph.D. in data science or a Ph.D. in a domain area with a specialization in data science. Many students entering such programs may not have the necessary prerequisites, so it is important for institutions to consider how to upskill and thus prepare them quickly.

Programs certainly will have more tactical intermediate goals, such as simply attracting more students to the program, broadening participation, or increasing the understanding of data science on campus. Another goal might be increasing experiential learning in data science through coursework or internships. Progress toward these goals can be measured and serve to pave the way to success—the percentage of various populations can be tracked, and delivery methods, classroom practices, or course content can be modified to attract a more diverse population as needed.

For example, with support from the National Science Foundation, the College Board developed a new advanced placement course, Computer Science Principles. This course is specifically designed to engage more high school students in computer science, and thus better prepare them for future study of STEM disciplines, by demonstrating the power of computer science to solve real-world problems through the use of a variety of computing tools and languages (NSF, 2014). Following the development of this new advanced placement course, pilot programs began at universities across the country (e.g., University of Washington2 and North Carolina State University) to develop and offer a new introductory computer science curriculum, The Beauty and Joy of Computing. This curriculum is targeted toward non-computer science majors and centers around programming (using Snap!) and creating (UC Berkeley, 2018). Although such initiatives can be beneficial for students in affluent communities whose schools have the resources to offer advanced placement curricula, students from high schools without advanced placement curricula in mathematics or science disciplines will enter their undergraduate experience at a significant disadvantage relative to some of their peers, thus widening the knowledge gap that currently exists across student populations. It is crucial that funding organizations and curriculum developers continue to consider approaches that will best prepare all students for potential future work in data science. Other more widely accessible initiatives to attract diverse populations include efforts by Code.org to increase access to computer science in schools, especially for women and underrepresented minorities. The data collected from these and similar efforts, coupled with formal evaluation protocols (see the section “Evaluation,” later in this chapter), can also guide the program evolution until the learning outcomes required to achieve success, however defined by a given program, are achieved.
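As a minimal sketch of how progress toward a participation goal might be tracked, the following Python fragment computes each group's share of program enrollment by year. The record layout, the group labels, and the counts are hypothetical and invented purely for illustration; an institution would substitute its own enrollment data and categories.

```python
from collections import Counter, defaultdict

# Hypothetical enrollment records: (academic_year, self-reported group label).
# The labels and counts are invented for illustration only.
enrollments = [
    (2016, "group_a"), (2016, "group_a"), (2016, "group_a"), (2016, "group_b"),
    (2017, "group_a"), (2017, "group_a"), (2017, "group_b"), (2017, "group_b"),
]


def share_by_year(records):
    """Return {year: {group: fraction of that year's enrollment}}."""
    counts = defaultdict(Counter)
    for year, group in records:
        counts[year][group] += 1
    return {
        year: {group: n / sum(c.values()) for group, n in c.items()}
        for year, c in counts.items()
    }


shares = share_by_year(enrollments)
for year in sorted(shares):
    # Print each year's shares, rounded for readability.
    print(year, {g: round(s, 2) for g, s in sorted(shares[year].items())})
```

A year-over-year change in these shares could then be read alongside changes in delivery methods, classroom practices, or course content, although a change in share alone would not establish which modification caused it.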

Finding 5.1: The evolution of data science programs at a particular institution will depend on the particular institution’s pedagogical style and the students’ backgrounds and goals, as well as the requirements of the job market and graduate schools.

Pathways to Maturation

Given these various drivers, how will programs evolve? Again, there will be multiple pathways, but maturation will occur in both individual courses and at the program level. As pathways are developed, attention will be needed to ensure that faculty and students are upskilled, flexibility is preserved and supported, and efforts are sustained. As instructors rework individual classes based on outcomes and evaluation, it is likely that they will replace borrowed content from existing courses with original materials that fit together more naturally and better match personal educational styles or the culture of that institution or department. Materials will be tuned to meet the objectives and the abilities of the students. New units, emphases, or experiences may be added (e.g., to teach a broader range of skills).

___________________

2 The webpage for Computer Science Principles at the University of Washington is https://courses.cs.washington.edu/courses/cse120/, accessed March 29, 2018.

At the program level, there is likely to be an evolution in the courses over time, as well as in the responsibility or ownership of individual courses or even entire programs. Today, data science programs often originate in one existing department—frequently statistics, computer science, mathematics, or business. However, a program, although “owned” by one department, may well be composed of classes from multiple departments. Over time, that set of classes is likely to evolve as more custom data science classes are created. In some cases, an existing class from one department may be adopted as part of another department’s data science curriculum but may eventually be duplicated (perhaps with some tailoring) to be offered by both departments; alternatively, a course may be shifted from one department to another altogether.

Recommendation 5.1: Because these are early days for undergraduate data science education, academic institutions should be prepared to evolve programs over time. They should create and maintain the flexibility and incentives to facilitate the sharing of courses, materials, and faculty among departments and programs.

Ensuring Success

With the many ways that programs can evolve, academic and career advising are key components of a successful program. These are essential to sustain the viability of programs and pathways. Prior to launching a full-fledged program, advisors will need to be trained to understand the program content, anticipated pathways, and outcomes. This cadre of advisors will include not only those specific to the new program but also advisors for existing programs who will need to field new questions and be able to position the new program for success. How is data science different from statistics? It is not, for example, computer science with a focus on machine learning. It is not applied mathematics. It is a truly interdisciplinary activity that brings together domain scientists with computer scientists, information scientists, and statisticians. What is the best way for students to meet their goals? If they are unsure of their goals, how best can they explore the alternatives?

While institutions consider the many pathways available for their students, they will also need to consider who will develop and teach the general education and data science classes: teaching faculty, adjunct instructors, teaching assistants, or tenure track faculty. It is imperative that academic institutions have a balanced faculty with both domain knowledge and data science expertise so as to best deliver data science education that will prepare students for the varied and multidisciplinary workforce that lies ahead of them. As data science pedagogy teaches the appropriate skill sets, it may be wise for academic institutions to build up their graduate teaching assistantship programs so as to prepare more future data science educators. Both those who build the new curricula and those who teach it will need to be supported (i.e., motivated, trained, and rewarded) and upskilled in a variety of ways. More work will be needed to “teach the teachers” the multidisciplinary fundamentals of data science. Faculty could benefit from shared teaching materials (e.g., course notes, software, data, and case studies), as well as short courses and boot camps adapted to non-data scientists who will teach data science concepts. Course and curriculum builders could benefit from a roadmap on how to build curriculum and programs at all undergraduate levels, including what works (e.g., team teaching, student-initiated groups, teaching in context) and what does not.

Institutions that want to increase data science opportunities could consider the examples and lessons of interdisciplinary programs at other institutions, as well as how these may be able to provide guidance on how data science programs might evolve. Programs can benefit greatly from sharing ideas and data with each other, given the rapid emergence and evolution of this field. For example, the availability and usability (under a Creative Commons license) of the well-packaged curriculum and materials from the University of California, Berkeley, course Data 8: Foundations of Data Science (discussed in Chapter 3) has enabled several universities to quickly roll out similar courses to benefit their students.

Finding 5.2: There is a need for broadening the perspective of faculty who are trained in particular areas of data science to be knowledgeable of the breadth of approaches to data science so that they can more effectively educate students at all levels.

Recommendation 5.2: During the development of data science programs, institutions should provide support so that the faculty can become more cognizant of the varied aspects of data science through discussion, co-teaching, sharing of materials, short courses, and other forms of training.

Finding 5.3: The data science community would benefit from the creation of websites and journals that document and make available best practices, curricula, education research findings, and other materials related to undergraduate data science education.

EVALUATION

Regardless of what educational approach is taken, it is important that rigorous evaluation of the outcomes is conducted so that the field can evolve in response to data and evidence. This evaluation is needed both at the level of individual programs and at the broader national level. The former could enable individual schools to continue to evolve their programs to better serve their students. The latter could enable the community to learn what works across many programs and eventually to evolve toward a common set of pathways and curricula that optimize outcomes for their students. This could also foster the development of educational research for this highly interdisciplinary field to provide a basis for determining the effectiveness of alternative approaches.

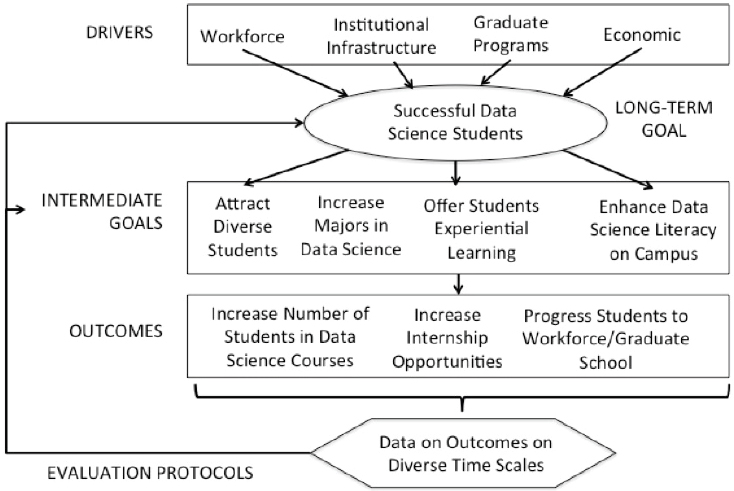

Figure 5.1 provides a simplified conceptual model that could underlie a process of evaluation at several hierarchical education levels. Obtaining and utilizing appropriate data at each step can help iteratively refine programs. Objectives, associated outcomes, metrics of success in reaching these outcomes, and the activities that impact these outcomes might be established at the level of a single program, at the level of an institution with multiple routes to success for data science students through various majors or minors, or at broader national scales.

Evaluation at the Level of an Individual Program

In developing data science pathways that align with their capabilities, academic institutions need to think about how these pathways can be evaluated. Discipline-based education research, which “investigates learning and teaching in a [science or engineering] discipline using a range of methods with deep grounding in the discipline’s priorities, worldview, knowledge, and practices . . . [and] is informed by and complementary to more general research on human learning and cognition” (NRC, 2012), can be used to inform both evaluation and revision of data science curricula to best meet student needs. Both computer science and statistics, for example, have robust education communities whose results may be leveraged to improve data science education. However, further interdisciplinary education research is essential, given the interdisciplinary nature of data science and the related challenges of developing and evaluating such programs. As data science programs evolve, it is reasonable to think about how these programs could be accredited. However, it is premature for this committee to prescribe if or how accreditation should be done.

Evaluation using administrative records3 has a long and distinguished history in education (Aaronson, Barrow, and Sander, 2007; see Box 5.1). Academic institutions with data science programs may consider matching their administrative records to U.S. Census Bureau or state wage record data (see Box 5.2) to track the outcomes of students trained in data science relative to other fields. The potential for low-cost evaluation is substantial. Administrative records cost pennies per record, versus several hundred dollars (at least) for high-quality survey data. Administrative data also offer the potential to study population-level data. Administrative data sets are of a scale that makes it possible to more precisely identify statistical relationships, detect rare events, create comparison groups, and examine the heterogeneous effects of educational policies and practice for different groups of individuals. This last opportunity is particularly important when trying to encourage the broad participation of underrepresented student populations. Administrative data are also near real time in nature; it is possible to identify issues that may arise with particular interventions and adjust accordingly rather than waiting long after the fact. Last, administrative data are often of higher quality than survey data. Rather than asking respondents to provide retrospective information, with obvious recall problems, the data exist within administrative systems. It is possible, through links to state wage record, Internal Revenue Service, or Census Bureau data, to track the earnings and employment outcomes of individuals longitudinally with much less attrition (and cost) than surveys.

___________________

3 Administrative records could include student transcripts (with student demographic data, academic performance indicators, and scholarship, graduation, and curriculum information), enrollment statistics, budgetary plans, and personnel demographic data.
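The record matching described above can be illustrated with a short Python sketch that links student records to wage records on a shared identifier and compares median earnings by field. Everything in it is an assumption made for illustration: the anonymized IDs, the field labels, and the earnings figures are invented, and real linkages to Census Bureau or state wage data involve privacy protections, approved access procedures, and fuzzier matching than a simple key lookup.

```python
from statistics import median

# Hypothetical administrative records keyed by an anonymized student ID.
students = {
    "s1": "data science",
    "s2": "data science",
    "s3": "other",
    "s4": "other",
    "s5": "data science",  # no wage record found (e.g., employed out of state)
}

# Hypothetical wage records: (student_id, annual_earnings). Values are invented.
wage_records = [
    ("s1", 70000), ("s2", 80000), ("s3", 50000), ("s4", 60000),
    ("s9", 40000),  # wage record with no matching student record
]


def median_earnings_by_field(students, wage_records):
    """Match wage records to students by ID; return (medians, match_rate)."""
    earnings = {}
    matched = set()
    for student_id, amount in wage_records:
        field = students.get(student_id)
        if field is None:
            continue  # skip wage records that match no student
        earnings.setdefault(field, []).append(amount)
        matched.add(student_id)
    medians = {field: median(values) for field, values in earnings.items()}
    return medians, len(matched) / len(students)


medians, match_rate = median_earnings_by_field(students, wage_records)
print(medians, match_rate)
```

Reporting the match rate alongside the medians is a deliberate choice in this sketch: unmatched graduates are not a random subset, so a comparison across fields is only as trustworthy as the coverage of the linked data.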

Finding 5.4: The evolution of undergraduate education in data science can be driven by data science. Exploiting administrative records, in conjunction with other data sources such as economic information and survey data, can enable effective transformation of programs to better serve their students.

Evaluation More Broadly

Unfortunately, data that would allow the understanding of outcomes from multiple programs (or even, in fact, of a single program from outside that institution) have not previously existed in a readily available form. For example, even within an institution there are regular laments about the lack of tracking data about its graduates. Access to data on the pathways that undergraduates or newly graduated students in data science follow would not only benefit an institution, relative to the evaluation criteria for its programs and institutional strategies, but also, when adequately shared, make it possible to evaluate broadly the impact of major national programs at the National Science Foundation and other agencies and private foundations (see Box 5.2).

Fortunately, new data have become available to permit, for the first time, the undertaking of rich analyses. The data also enable an entirely new approach to estimating the impact of labor market demand on career pathways (University of Texas System, 2016; AIR, 2018; Heckman, 2010). The advantage is that not only can these new data compare data science graduates to one another, but they can also compare the career pathways, successes, and failures of data science graduates to those of graduates in other fields. Such information can then be used to motivate further development and revision of the evolving data science curricula at the undergraduate level.

Empirical evaluation is now possible because of the integration of multiple disparate sources of data at many academic institutions—for example, the Ohio State University Ohio Education Research Center,4 which is a collaboration of six Ohio universities and four research organizations and has data on students from preschool through the workforce. Seven states—Arkansas, Colorado, Minnesota, Tennessee, Texas, Virginia, and Washington—have linked data so that students can see the earnings of graduates from institutions within these states (see Selingo, 2017).

___________________

4 The website for the Ohio Education Research Center is http://oerc.osu.edu, accessed March 29, 2018.

When integrated, these sources provide a unique data set for examining the educational, scholarly, and career trajectories of students while providing new insights into the mechanisms that shape outcomes for students from different backgrounds, races and ethnicities, and genders. These data include measures of individual characteristics ranging from undergraduate institution and standardized exam scores to race, ethnicity, and some information on financial status. From such data, it becomes possible to identify cohorts at various levels, to characterize networks that provide social support as well as access to role models, and to provide information and resources that could contribute to career success. These data can also illuminate both the labor market outcomes and shocks to demand that can drive outcomes for recent graduates. In the conceptual framework outlined earlier, students’ own characteristics and backgrounds are some of the most important determinants of outcomes either on their own or in combination with their courses, internships, and other educational experiences. These new data also permit the measurement of these important characteristics of students.

Finding 5.5: Data science methods applied both to individual programs and comparatively across programs can be used for both evaluation and evolution of data science program components. It is essential that both processes are sustained as new pathways emerge at institutions.

Recommendation 5.3: Academic institutions should ensure that programs are continuously evaluated and should work together to develop professional approaches to evaluation. This should include developing and sharing measurement and evaluation frameworks, data sets, and a culture of evolution guided by high-quality evaluation. Efforts should be made to establish relationships with sector-specific professional societies to help align education evaluation with market impacts.

ROLES FOR PROFESSIONAL SOCIETIES

There are several challenges facing educational programs in any emerging field, and data science is no exception. As programs emerge and evolve, they look for best practices, class materials, guidance on career paths for students, and a network or community with which to share ideas. Professional societies have a role to play in facilitating the community building and resource sharing that are needed.

Data science benefits from sharing of materials whenever possible. However, there has been pushback to material collection by some researchers. Many may not have had intellectual property rights to these materials and did not want to share materials that were not theirs; others have had concerns about loss of intellectual property. Still, it is important to learn from others’ attempts. It is also important to have a means to identify and fill gaps in a program’s resources (e.g., workflow, how to link together the tools and use them in real projects). Professional societies may play a major role in making materials available and in enabling educators to receive credit for sharing and material reuse. By valuing the high-quality application of data over the quantity of data that is collected, this effort on the part of professional societies could then incentivize even more data sharing. Professional societies can also play an important role in educating people about the process for sharing data by publishing best practices for increasing access to and awareness of useful, high-quality data sets.

Particularly for rapidly evolving fields such as data science, information about career paths (whether pursuing graduate education or moving into the workforce directly) may be significantly harder for students to obtain than it is for students in long-standing fields. For example, many STEM areas actively recruit undergraduates to attend professional society conferences at which they offer a wide range of career option workshops and presentations. Beyond formal meetings, professional societies host a wide array of information on their websites that informs students about career options. Students in data science fields, which lack such large and active professional societies, may find this information more difficult to obtain than their peers in other STEM disciplines do.

The data science community would be well served by having a steering body to help organize conferences, collect materials (e.g., for programs, evaluation5), provide models for industry interactions, and undertake other activities. Professional societies could explore their role in pushing industry to provide career paths for students in order to motivate student adoption of data science programs. STEM-minded students by and large can see the benefit in being trained as data scientists, especially in this competitive job environment.

Retention of talent, consequently, is a real challenge in most settings. New models may be needed to advance career progression, perhaps including rotational models that allow for work flexibility and expanded problem sets. A clearinghouse or professional society, perhaps with foundation support, could help to foster further connection among the variety of established programs. However, it may be too difficult for a single data science professional society to represent and be relevant to all data science communities. It is important to be cognizant and supportive of the many data scientists likely to be working in other fields. In addition to the need to accommodate the multidisciplinarity of data science, there is also a need to consider the business case for establishing new societies. It is unlikely that the revenue required for such an endeavor exists. A more structured collaboration of existing professional societies that work well together might be more effective; subsocieties devoted to data science elements may develop in any of these societies. These subsocieties could be closely connected to the educational opportunities for their members, fostering a sense of community and improved professional development, while also promoting connections among practicing data scientists in other fields. Whatever convening mechanisms are chosen, they could cover varied topics such as curriculum, on-ramping, continuing education, and bringing in multiple viewpoints, such as those from industry. Other opportunities include syndication, filtering, and editorial roles.

___________________

5 See, for example, the American Evaluation Association (http://www.eval.org/) or the Association for Public Policy Analysis and Management (http://www.appam.org/), accessed January 22, 2018.

Finding 5.6: As professional societies adapt to data science, improved coordination could offer new opportunities for additional collaboration and cross-pollination. A group or conference with bridging capabilities would be helpful. Professional societies may find it useful to collaborate to offer such training and networking opportunities to their joint communities.

Recommendation 5.4: Existing professional societies should coordinate to enable regular convening sessions on data science among their members. Peer review and discussion are essential to share ideas, best practices, and data.

REFERENCES

Aaronson, D., L. Barrow, and W. Sander. 2007. Teachers and student achievement in the Chicago public high schools. Journal of Labor Economics 25(1):95-135.

AIR (American Institutes for Research). 2018. “College Measures: Improving Higher Education Outcomes in the United States.” http://www.air.org/center/college-measures/. Accessed April 17, 2018.

Avvisati, F., M. Guragand, N. Guyon, and E. Maurin. 2014. Getting parents involved: A field experiment in deprived schools. Review of Economic Studies 81(1):57-83.

Behrman, J.R., S.W. Parker, P.E. Todd, and K.I. Wolpin. 2015. Aligning learning incentives of students and teachers: Results from a social experiment in Mexican high schools. Journal of Political Economy 123(2):325-364.

Buser, T., M. Niederle, and H. Oosterbeek. 2014. Gender, competitiveness, and career choices. Quarterly Journal of Economics 129(3):1409-1447.

Figlio, D., K. Karbownik, and K.G. Salvanes. 2016. Education research and administrative data. Handbook of the Economics of Education 5:75-138.

Figlio, D.N., and M.E. Lucas. 2004. Do high grading standards affect student performance? Journal of Public Economics 89:1815-1834.

Gertler, P.J., S. Martinez, P. Premand, L.B. Rawlings, and C.M.J. Vermeersch. 2016. Impact Evaluation in Practice. 2nd ed. Washington, D.C.: Inter-American Development Bank and World Bank.

Heckman, J.J. 2010. Building bridges between structural and program evaluation approaches to evaluating policy. Journal of Economic Literature 48(2):356-398.

Imberman, S.A., A.D. Kugler, and B.I. Sacerdote. 2012. Katrina’s children: Evidence on the structure of peer effects from hurricane evacuees. American Economic Review 102(5):2048-2082.

Jacob, B.A., and L. Lefgren. 2008. Can principals identify effective teachers? Evidence on subjective performance evaluation in education. Journal of Labor Economics 26(1):101-136.

Japec, L., F. Kreuter, M. Berg, P. Biemer, P. Decker, C. Lampe, J. Lane, C. O’Neil, and A. Usher. 2015. Big data in survey research: AAPOR Task Force report. Public Opinion Quarterly 79(4):839-880.

Lane, J., J. Owen-Smith, R. Rosen, and B. Weinberg. 2015. New linked data on research investments: Scientific workforce, productivity, and public value. Research Policy 44(9):1659-1671.

Machin, S., S. McNally, and O. Silva. 2007. New technology in schools: Is there a payoff? Economic Journal 117(522):1145-1167.

Metcalf, H. 2010. Stuck in the pipeline: A critical review of STEM workforce literature. InterActions: UCLA Journal of Education and Information Studies 6(2):1-20.

National Science Board. 2015. Revisiting the STEM Workforce. https://www.nsf.gov/nsb/publications/2015/nsb201510.pdf. Accessed January 23, 2018.

NRC (National Research Council). 2012. Discipline-Based Education Research: Understanding and Improving Learning in Undergraduate Science and Engineering. Washington, D.C.: The National Academies Press.

NSF (National Science Foundation). 2014. “College Board Launches New AP Computer Science Principles Course.” https://www.nsf.gov/news/news_summ.jsp?cntn_id=133571. Accessed February 13, 2018.

Pop-Eleches, C., and M. Urquiola. 2013. Going to a better school: Effects and behavioral responses. American Economic Review 103(4):1289-1324.

Selingo, J.J. 2017. Six myths about choosing a college major. New York Times, November 3. https://nyti.ms/2iYZN3r. Accessed January 22, 2018.

UC Berkeley (University of California, Berkeley). 2018. “The BJC Curriculum.” https://bjc.berkeley.edu/curriculum/. Accessed February 13, 2018.

University of Texas System. 2016. UT System partners with U.S. Census Bureau to provide salary and jobs data of UT graduates across the nation. Press release, September 22. https://www.utsystem.edu/news/2016/09/22/ut-system-partners-us-census-bureau-provide-salary-and-jobs-data-ut-graduates-across. Accessed February 13, 2018.