2

Modeling Frameworks That Fit the Defense Materials, Design, and Manufacturing Tradespace

SYSTEM DEVELOPMENT SPEED AND NATIONAL SECURITY

Paul Collopy, Professor and Chair of the Department of Industrial and Systems Engineering and Engineering Management, University of Alabama, Huntsville

Paul Collopy’s objective for his talk was to show how the Systems Engineering Research Center (SERC) is thinking about current systems engineering research and how that research can be improved in the future. Collopy believes that this improvement involves the materials and manufacturing communities in important ways. He shared that the SERC has made great progress in the past 6 years, producing papers, research, and outcomes of great interest to the broader community.

As a way to show just how slowly systems can develop, Collopy pointed out that the F-22 aircraft took 22 years to develop from concept to introduction. Delivering systems is not the real issue, Collopy clarified; the primary challenge is to find ways of injecting new technologies or new materials into existing systems and thereby improve capability. Collopy highlighted that agility is a necessary skill. The United States is already successful in developing new technologies; now people need to be motivated to implement changes.

To demonstrate this point, Collopy shared an example of a technology that worked well when injected into an existing system. The Joint Direct Attack Munition (JDAM), a guidance kit that transforms unguided bombs into smart bombs, is the best capability upgrade the Department of Defense (DoD) has had in 25 years, Collopy believes. The only equipment that changes is the tail kit: the bomb, ground handling equipment, and airplane all remain the same. By injecting new technology into an existing system, the process from operational, to concept demonstration, to deployment took only 5 years. The only way for similar technological integration to work across the institution is for systems to become more modular, Collopy asserted.

Collopy’s next example was a modular truck system manufactured by Scania. These trucks are made to order by injecting new technologies into existing systems. In this instance, it is crucial that everyone knows how the systems are put together; otherwise, innovators will be limited in the ways they can improve the systems. The security of the systems, then, will reside in the individual technologies.

Similar to Kristen Baldwin’s point about the need to move from a “stovepiped process” to “dynamic life cycle intelligence,” Collopy explained that the first step is to transform the supply chain. As an example, Collopy discussed the smartphone and its use of apps: consumers have a need, and an app responds to that need immediately. The app is a new technology injected into the phone, an already functioning system. Collopy explained that U.S. weapons systems would benefit from a similar process. An important caveat is that industry must first change the way it develops systems, and the Office of the Secretary of Defense/Systems Engineering is taking the lead on making this a reality, he said. Since the defense industry is shaped by the acquisition process, Collopy noted, DoD could write incentive contracts that motivate and reward industry to take desirable actions in light of DoD needs. This approach toward implementing change may prove more fruitful than DoD’s placing requirements upon industry.

Another crucial change is that modeling within systems must be people-focused. Collopy explained that engineers must learn to look at systems comprehensively in order to do this. Collopy highlighted that a modern airliner is indeed a complex engineered system (as opposed to a complicated system) because of the people involved (e.g., pilots in the cockpit and their cognition of advanced cockpits). Collopy emphasized that the connections between things are often much more important than the things themselves, and that is an important consideration in the future of modeling.

Collopy suggested that engineering designers focus on what material properties are needed to create something instead of thinking about choosing from a fixed set of qualified materials. Materials scientists characterize materials (and publish these characterizations). However, additive manufacturing complicates this process because it allows the creation of functionally graded materials that can change from one material on one side of a part to another material on the other side, Collopy explained. Because there is effectively an infinite number of materials in this one piece, it is difficult to characterize them. This is why, according to Collopy, parts themselves should be certified instead of the materials used to create the parts.

Collopy continued: 21st century engineers must think differently from 20th century engineers; instead of modeling the object alone, the system in which the object is embedded should be modeled. To illustrate this point, Collopy talked about how an engine is used to “fill a hole” in an airplane to “push it around”; the most crucial element in this scenario is the airplane (system), since the engine (object) would not be useful without a plane in which to place it.

Collopy returned to his focus on people1 as his presentation drew to a close and noted, for example, the importance of expanding the engineering curriculum to include new kinds of math (e.g., abstract algebra, category theory, group theory, graph theory, ring theory) that better characterize such system relationships. In addition, cost estimation has always been a challenge for big systems, and yet people keep trying to estimate cost. However, people within a program respond to estimated costs, thereby affecting the actual cost, so the estimation process should better incorporate people to be accurate. Materials manufacturing is both a cognitive issue and an atomic issue, and it is a system full of people, money, and organizations, which are all interesting things to model, Collopy concluded.

Discussion

Paul Kern presented the first question to Collopy. He stated that people have greater variability than materials, and yet systems are sometimes considered from the perspective of autonomous behaviors that lack people. Because people are usually involved in decisions, Kern asked, how should they be incorporated into systems engineering? Collopy agreed that this is an important issue and has been for the past 60 years; however, engineers lack sufficient training to understand and address social science problems. In order to truly improve systems engineering processes, Collopy asserted, social scientists with expertise in organizational behavior must be brought into the conversation; statistics alone will not solve this problem.

Johan de Kleer pointed out that while social scientists could provide helpful insights, their models are quite different from those used by engineers. What, then, he asked, is the best way to integrate the social science perspective into the engineering systems process? Collopy recommended that engineers first gain a better understanding of social science models. He then suggested participants read Norman Ralph Augustine’s Augustine’s Laws (1997),2 which discusses the inverse relationship between precision and accuracy. Many social effects do not require the precision that is customary for physical models.

Theresa Kotanchek asked whether these studies consider how people (both on the input and user sides) interface with data. She further questioned whether the user interfaces have what they need to solve the problems at hand and whether the data is truly being considered as a reusable asset. Kristen Baldwin expressed her hope that the integration of the taxonomy work with the pilot programs will help communities to understand how to better merge the data they have with the data they need. Simon Goerger agreed, noting that the Army could better identify, protect, and reuse current data to create better products through enhanced transitions, more efficient work processes, and better communication of perspectives. Systems engineers need to be able to better connect and communicate with those in acquisitions, business, and operations, Goerger observed.

___________________

1 The term “people” is here used in general to refer to those who work in design and manufacturing.

2 N.R. Augustine, 1997, Augustine’s Laws, American Institute of Aeronautics and Astronautics, Reston, Va.

Michael McGrath presented the final question of the session: What are supply chain interaction issues that should be improved? Collopy responded that the current contracts that link the levels in supply chains are primarily structured around protecting people as opposed to conveying information that could aid in decision making. All involved in the design process and the supply chain need to be informed of trade factors (e.g., weight and cost) and how they affect the outcome in order to develop successful products. According to Collopy, contracts, in their current state, do not allow this kind of communication of information.

ENGINEERING RESILIENT SYSTEMS: RELATIONSHIP TO MATERIALS, DESIGN, AND MANUFACTURING TRADESPACE

Simon Goerger, Director of the Institute for Systems Engineering Research, U.S. Army Engineer Research and Development Center (ERDC)

Simon Goerger opened his presentation with an explanation of the connection between the Engineered Resilient Systems (ERS) program and the work of the Office of the Secretary of Defense (OSD) regarding acquisition processes. ERS seeks to enhance the overall acquisition process for DoD while integrating three principles:

- Mitigate threats to national security,

- Enable new capabilities affordably, and

- Create technological surprise against the enemy.

Reliance 21 identifies 17 crosscutting science and technology (S&T) areas; the two primary communities of interest (COIs) in this discussion are ERS and Materials and Manufacturing Processes, and these two communities overlap in some areas.

Currently, Goerger explained, it takes 18 to 22 years for a major weapons system to go from initial concept to deployment on the battlefield and inclusion in the DoD infrastructure. By the time these systems become operational, they are outdated because the environment, enemy, materials, and technologies have already changed. ERS reinforces the importance of understanding the impacts of systems modifications without having to start from the beginning and create a new platform, Goerger noted. Advanced modeling can help from the beginning of the process: a tradespace3 can be generated, and that will create a Digital Thread for the data for the involved communities as they make decisions based on changing requirements, such as environmental impacts on material components. If this process with advanced modeling and a Digital Thread is instituted, Goerger suggested, problems may be addressed in 1 to 2 weeks instead of 6 to 18 months.

According to Goerger, high-performance computing can increase capabilities. Models generated from this process can then be used to develop solutions: mathematical optimization allows big data to be evaluated to better inform senior-level decision makers. With all of these new tools, Goerger explained, ERS tries to create an open architecture to bring diverse components together to enhance the acquisition process, as opposed to adding another layer to the acquisition process. One of the most important components of this process is the tradespace tools and analytics, which include generating and analyzing data, employing virtual prototyping, and evaluating how to enhance performance. Since engineering and materials are collaborative communities, Goerger noted, models also need to be able to consume information about materials, as well as communicate back to the materials community. Goerger reiterated that tradespace analytics, advanced modeling, and high-performance computing must integrate operation on the battlefield with data sharing back to the tradespace environments in order to make more sound decisions regarding issues from combat to cost.

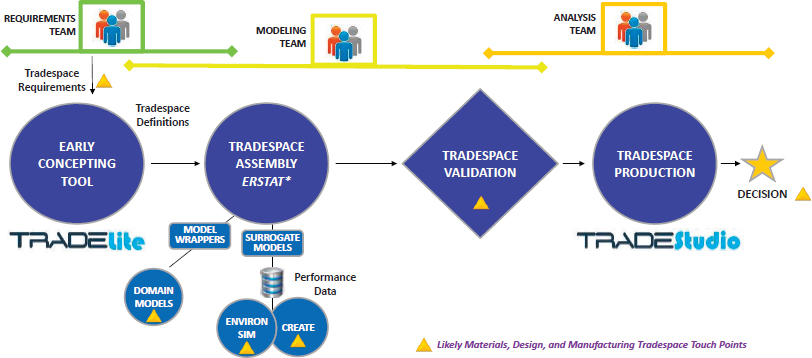

ERS uses an integrated workflow process that leverages the tools within the architecture to be useful for acquisition efforts. (See Figure 2.1.)

According to Goerger, ERS has aided programs at three organizations: the U.S. Naval Surface Warfare Center, Carderock Division (NSWCCD); the U.S. Army Aviation and Missile Research, Development, and Engineering Center; and the U.S. Air Force Life Cycle Management Center/Air Force Research Laboratory (AFRL)/Air Combat Command. Goerger elaborated on ERS’s work with Army rotorcraft: a new blade was designed for the CH-47 after the previous blade was losing 2,000 pounds of lift in the hover position. ERS helped assess the redesigned blade, made with lighter materials, using tradespace tools and the Computational Research and Engineering Acquisition Tools and Environments (CREATE) program, which includes tools designed by subject-matter experts and aligns with DoD’s High Performance Computing Modernization Program. The Army used the resulting analysis to inform developmental testing and shorten the transition to operational testing. In addition to physics-based modeling, the “trust factor” was an important part of this process because the Army had to trust the flight data in order to modify its operational test within a limited time frame, Goerger explained. These tools are operational, and they are continually being improved.

___________________

3 “Tradespace” refers to a collection of processes that are sourced from and used by multiple organizations or multiple departments within an organization.

ERS’s goals for 2016 included continuing to use open architecture to increase trust so that data can be shared more readily between communities. Goerger reiterated that this does not change who owns and controls the data; to protect intellectual property and security, only those who have the authority and the need will have access to the data. An additional goal for 2016 was to insert initial life cycle cost modeling into the decision-making process. This type of modeling is complicated and sensitive, Goerger explained, just like the sharing of performance data.

ERS also continues to refine its big data environment to include innovative techniques that will support increasingly complex data. In the ERS ship demonstrations Goerger referenced, data enabled them to go from 10 to 12 systems to 278,000 viable variants. Such data can then be binned and further tests can be run. The representative systems can then be run through simulations, but there remains a need to reduce the overall amount of data so that it can be used meaningfully and can be replicated, which is important to create trust in the capabilities of the design, Goerger noted.
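The enumerate, filter, bin, and reduce sequence Goerger described can be illustrated with a small sketch. It assumes a made-up ship design space (hull length, engine type, number of payload modules) and a toy performance and cost model; none of these names, numbers, or relations come from the ERS demonstrations, which reportedly produced 278,000 viable variants rather than the few dozen generated here.

```python
from collections import defaultdict
from itertools import product

# Hypothetical design choices; illustrative only, not ERS ship data.
HULL_LENGTHS_M = [120, 140, 160]
ENGINE_POWER_MW = {"diesel": 20, "hybrid": 28, "gas_turbine": 35}
PAYLOAD_MODULES = [0, 1, 2, 3]


def evaluate(length, engine, modules):
    """Score one variant with a toy performance and cost model."""
    power = ENGINE_POWER_MW[engine]
    displacement = 30 * length + 400 * modules      # tonnes (toy relation)
    speed_index = 1000 * power / displacement       # higher is faster (toy)
    cost = 0.5 * length + 2 * power + 10 * modules  # $M (toy relation)
    return {"length": length, "engine": engine, "modules": modules,
            "speed_index": speed_index, "cost": cost}


def generate_tradespace():
    """Enumerate every combination of the design choices."""
    return [evaluate(length, engine, modules)
            for length, engine, modules in product(
                HULL_LENGTHS_M, ENGINE_POWER_MW, PAYLOAD_MODULES)]


def bin_and_reduce(variants, min_speed=5.0, bin_width=25.0):
    """Keep viable variants, bin them by cost, and keep one per bin."""
    viable = [v for v in variants if v["speed_index"] >= min_speed]
    bins = defaultdict(list)
    for v in viable:
        bins[int(v["cost"] // bin_width)].append(v)
    # Representative: most payload modules, ties broken by speed.
    reps = {b: max(vs, key=lambda v: (v["modules"], v["speed_index"]))
            for b, vs in bins.items()}
    return viable, dict(bins), reps


variants = generate_tradespace()
viable, bins, reps = bin_and_reduce(variants)
print(len(variants), len(viable), len(reps))
```

Keeping one representative per cost bin is only one possible reduction rule; representatives could instead be chosen to span performance or risk, and those cases would then be the ones passed on to higher-fidelity simulation.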

Goerger explained that ERDC is one of the major environmental modelers for the United States (e.g., coastal regions, waterways, endangered species and landforms, environmental change). This work is important to the materials world because the diversity of environments impacts the consistency and durability of materials.

Goerger pointed out that knowledge management and strategy remain an important challenge. ERS continues to negotiate the varied connections and authoritative rights so that people have access to the information they need for varied systems. Information technology departments play a central role in establishing the Digital Thread. And even after policies are established, there is still an issue of trust about whether data is protected, relevant, and validated. ERS works with all of the military services and with members of industry (including BAE Systems, Raytheon Missile Systems, Lockheed Martin, Boeing, Kitware, and the National Defense Industrial Association) to advance these endeavors.

Goerger emphasized that ERS wants to ensure that information can be passed to industry meaningfully to improve understanding of initial requirements before platforms are created, and that industry data is passed back to the services and acquisition offices to increase understanding of relationships between parts and data. This can be done more efficiently with a standard language such as the Systems Modeling Language (SysML), an industry standard. Goerger pointed out that this is not the only option, but it is a place to start the conversation about components (e.g., weight, size, and speed) and their impact on life cycle costs.

Goerger also emphasized that material life and failure has been a significant challenge for ERS in the 2016-2018 time frame. It is a serious and costly problem if a system lasts only one year because of environmental factors or other systemic factors. This is why additive manufacturing plays an important role: it can be used in an operational environment to produce spare parts, as was also suggested by Paul Collopy. Modeling manufacturing presents another significant challenge over the next two years, Goerger noted: identifying and generating manufacturing processes and assembly operations that can predict manufacturing time and costs. Business models also play a key role here: understanding the relationships among the business model, the use and availability of materials, and manufacturing leads to a better understanding of the life cycle cost.

Discussion

McGrath posed the first question to Goerger: The tradespace clearly addresses cost, performance, reliability, and adaptability, but how does it address schedule? Timing is an important issue given that selecting new materials, and waiting for certification of those materials, will force a change in a schedule. McGrath also wondered if schedule is a part of the interface with the materials community. Goerger responded that since the tools in ERS are still under development, ERS has not yet determined how schedule will interface with the materials community. Traditionally, the acquisition community has addressed issues of schedule on its own. However, ERS recognizes that this is no longer a best practice. ERS has considered either leveraging Integrating Systems Engineering Framework capabilities that the U.S. Army Tank Automotive Research, Development, and Engineering Center has developed or creating new tools itself, based on the cost of Tradespace Tool Requirements COI.

Kern asked who the users of this model are and about the role of sharing with the competition, since there are incentives not to share materials. Last, he asked where the people are in this model. Goerger said that the user communities in the areas of engineering, budgeting, and acquisition are all able to share input and observe the outcomes of the capabilities. ERS is currently designing a tool that will allow all of these users simultaneous access. Goerger also discussed their plans to use prototype organizations for testing. Regarding the intellectual property (IP) issue, Goerger explained that the developer of the IP is responsible for protecting it; these tools simply create an environment within which to work. Kern followed up with a concern about the role of suppliers: even though suppliers own rights to materials, they do not seem to be included in the system. Goerger acknowledged that the architecture will need to be expanded in order to include the suppliers, and he planned to share this comment with his team for future planning purposes. Later in the discussion session, Goerger returned to this issue raised by Kern. He explained that ERS is trying to make the model meet the needs of the S&T community, the acquisition community, the operations and sustainment community, and the user community and present all of their unique perspectives and data within the tradespace. In order for this collaborative community to work, all must participate in data sharing. This will help senior-level decision makers because they will be able to consider platform levels and long-run portfolio levels (“family of systems”); ideally, within 10 years, they would be able to look at all of DoD’s capabilities that meet a particular requirement.

De Kleer next asked about how diagnosability fits into the ERS model. Goerger acknowledged that ERS plans to consider this in the 2019-2020 time frame, but they must first evaluate how data relates to other elements before they can designate research efforts to this issue.

McGrath drew the focus back to mission context and asked if it is treated as a set of constraints or as a set of tradable elements. Goerger said that ERS is trying to produce an initial connection with operational simulations prior to the analysis of alternatives (AoA) process. This will determine the operational effectiveness of systems based on a one-for-one exchange of systems for a limited set of missions using current doctrines and tactics, in order to identify initial constraints and inform the formal AoA efforts. Operational simulations are informing decisions and reducing the overall tradespace to a more meaningful area.

SYSTEM MODELING FRAMEWORK OR TRADE EXPLORATION FRAMEWORK? WHY NOT BOTH?

Steve Cornford, Senior Engineer, System Modeling and Analysis Program Office, NASA Jet Propulsion Laboratory

Steve Cornford opened his presentation by emphasizing the difficulties inherent in prediction. He then explained that NASA’s Jet Propulsion Laboratory (JPL) and DoD have two things in common: both build individual systems that have to work. Missions can often become irrelevant and change unexpectedly, and valuation is difficult. Similar problems existed with phase transition, Cornford noted; JPL quickly shifted into a component-based approach, which is difficult in new environments, such as within a multifunctional system. JPL has particular experience in making long-lasting products like the Voyager, which has been in space for 35 years. Supply chain, cost, and schedule are all important factors in product development because launch dates into deep space are relatively fixed.

Cornford introduced the idea of creating an ontology for modeling to increase flexibility, as opposed to using a traditional taxonomy. Cornford provided a Wikipedia definition of ontology for the workshop participants: “a formal naming and definition of the types, properties, and interrelationships of the entities that really or fundamentally exist for a particular domain of discourse.” He explained that having an ontology is similar to having a sentence with verbs and nouns, whereas a taxonomy would be compared to having a sentence containing only nouns. An ontology allows designers to talk about types of components, their properties, and their functions, as well as how the three relate to one another. Cornford highlighted that components perform functions, and even though functions do not have costs, components do. By understanding these relationships, computational verification and validation can be performed.
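The difference between a taxonomy (nouns only) and an ontology (nouns plus the verbs that relate them) can be sketched in a few lines of Python. The `Component` class, the `performs` relation, and the example parts below are hypothetical illustrations, not JPL's ontology, which would more typically be expressed in a dedicated ontology language such as OWL. The sketch reflects the two points Cornford made: cost lives on components rather than on functions, and explicit relations make simple computational checks possible.

```python
from dataclasses import dataclass, field


@dataclass
class Component:
    """A component (noun) with a cost property and a 'performs' relation (verb)."""
    name: str
    cost: float
    performs: set = field(default_factory=set)  # names of functions


def function_cost(function, components):
    """Functions carry no cost of their own; sum the costs of the components that perform them."""
    return sum(c.cost for c in components if function in c.performs)


def unperformed_functions(required, components):
    """A simple computational check: list required functions that no component performs."""
    performed = set()
    for c in components:
        performed |= c.performs
    return sorted(set(required) - performed)


design = [
    Component("solar_array", 40.0, {"generate_power"}),
    Component("battery", 15.0, {"store_power"}),
    Component("radio", 25.0, {"downlink_data", "receive_commands"}),
]
print(function_cost("downlink_data", design))  # 25.0
print(unperformed_functions(
    ["generate_power", "downlink_data", "point_antenna"], design))  # ['point_antenna']
```

Because a component that performs two functions is counted in full toward each, `function_cost` reports the cost of the components involved in a function rather than an allocation of that cost.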

Cornford offered details about JPL’s work with the Defense Advanced Research Projects Agency (DARPA). In 2010, DARPA released System F6, and the award under DARPA-BAA-11-01 stated that the goal “was to demonstrate the feasibility and benefits of disaggregated- or fractionated-space architectures.”4 According to Cornford, DARPA’s desire to perform “explicit trade-offs between adaptability and survivability, for example, and traditional design attributes, such as size, weight, power, cost, reliability, and performance” was of key interest to JPL. DARPA wanted the analysis to account for the following range of uncertainties:

- Supply chain delays,

- Technology development risk,

- Change in user needs,

- Program funding fluctuations,

- Orbital debris, and

- Technology obsolescence.

___________________

4 Defense Advanced Research Projects Agency, 2010, “NASA ARC Award: System F6-DARPA-BAA-11-01,” Aurora Flight Sciences Corporation, Manassas, Va.

During the DARPA program, JPL sought full-breadth, scalable modeling. They planned to look at launch failure, component failure, space weather, collision, and cybersecurity, Cornford noted. The motivation behind System F6 was to eliminate problems that surfaced when a single component could make an entire system obsolete. With the charge to analyze the fractionated system and to design and architect such a system, JPL joined forces with Phoenix Integration and used computer-generated models to evaluate designs automatically using Adaptable Systems Design and Analysis (ASDA). Since it is difficult to create a one-size-fits-all model for systems, Cornford explained, they aimed to optimize fidelity, cost, and schedule.

Cornford provided two additional relevant definitions to workshop participants: fractionation is the distribution of both functions and components across the system elements, while disaggregation is the distribution of only components across system elements. NASA’s A-Train, a constellation of satellites that travel one behind the other, is an example of disaggregation, Cornford said; even though all of the spacecraft are in the same orbit, they do not communicate with one another. Instead, each communicates with the ground, and humans then piece that information together to get hyperspectral information.
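One way to make these two definitions concrete is to record, for every component, which system element hosts it and which function it serves, and then ask whether any single function spans more than one element. The sketch below assumes that reading of the definitions; the `classify` function and the example architectures (two independent satellites, and a mothership that relays a daughter ship's data to Earth) are hypothetical.

```python
from collections import defaultdict


def classify(architecture):
    """Classify an architecture given (component, element, function) triples.

    Assumed reading of the definitions: if any single function relies on
    components hosted on more than one element, functions are distributed
    (fractionated); if components are spread over several elements but each
    function stays within one element, only components are distributed
    (disaggregated).
    """
    elements = {element for _, element, _ in architecture}
    if len(elements) <= 1:
        return "monolithic"
    elements_per_function = defaultdict(set)
    for _, element, function in architecture:
        elements_per_function[function].add(element)
    if any(len(hosts) > 1 for hosts in elements_per_function.values()):
        return "fractionated"
    return "disaggregated"


# Two independent satellites, each with its own sensor and downlink.
pair = [
    ("camera_a", "sat_a", "imaging_a"), ("radio_a", "sat_a", "downlink_a"),
    ("camera_b", "sat_b", "imaging_b"), ("radio_b", "sat_b", "downlink_b"),
]
# A daughter ship that relays its data to Earth through a mothership.
mother_daughter = [
    ("camera", "daughter", "imaging"),
    ("crosslink_tx", "daughter", "downlink"),
    ("crosslink_rx", "mother", "downlink"),
    ("radio", "mother", "downlink"),
]
print(classify(pair))             # disaggregated
print(classify(mother_daughter))  # fractionated
```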

With ASDA, JPL produced a realistic model. The model included stimuli and responses to measure adaptability (what one can do while still on the ground) and survivability (what one has to do because the craft has already left the ground). The model automatically generated, populated, and executed clusters and then could generate a populated tradespace, now called Data Explorer, Cornford explained. They created a mothership that had a downlink to talk to Earth. Cornford noted that every spacecraft has a downlink except the daughter ship, which cross-links to the mothership. Cornford explained that the goal is to launch only the pieces that need to be replaced, and doing this requires much thought about the production lines, supply chain, and learning curve, for example. Cornford noted that because of time and scope constraints, not all factors (e.g., space weather and cybersecurity) could be included in the model.

Cornford introduced the importance of understanding net present value when thinking about “uncertain future events” and making models to order. Economists use this concept to understand potential future costs using the present knowledge. Cornford’s group relied on this method to compute present strategic value, which

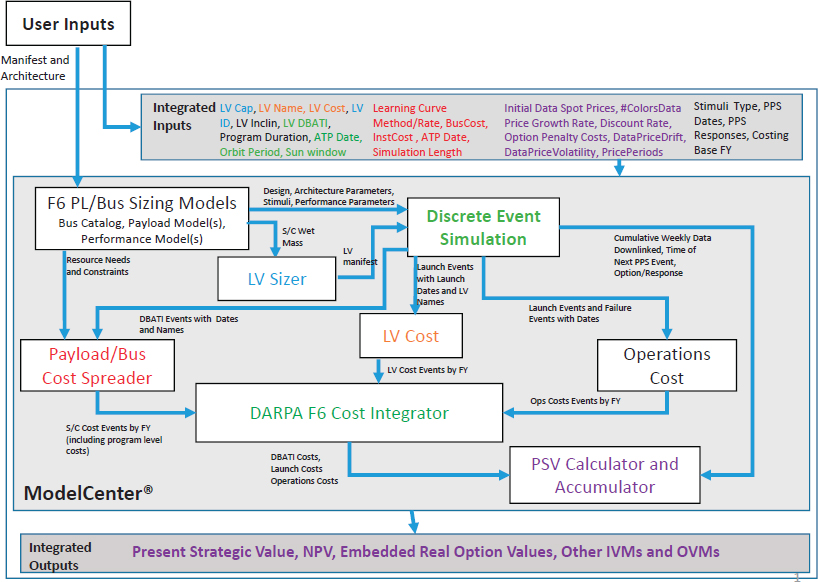

includes the value of the real option (i.e., something that can be changed to something else). Despite both limited time and funds, it created a full-breadth “Threads of Calculation Model,” which included a variety of costs, schedule, launch vehicle sizing, and varied performance catalogues. (See Figure 2.2.)
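The valuation idea can be sketched with a common textbook formulation, assumed here rather than taken from JPL's model: strategic value equals the ordinary net present value of committed cash flows plus the value of a real option, in this case the flexibility to defer an upgrade decision until an uncertainty is resolved. The function names, cash flows, probabilities, and 10 percent discount rate are all illustrative.

```python
def npv(cash_flows, rate):
    """Net present value: discount cash_flows[t] (t = 0, 1, 2, ...) to today."""
    return sum(cf / (1.0 + rate) ** t for t, cf in enumerate(cash_flows))


def option_value(scenarios, exercise_cost, rate, t=1):
    """Value of the flexibility to decide on an upgrade at time t.

    scenarios: (probability, benefit) pairs whose outcome is revealed at t.
    Without flexibility, the upgrade is committed to now only if its expected
    payoff is positive; with flexibility, it is exercised only in the
    scenarios where it pays off.
    """
    committed = sum(p * (b - exercise_cost) for p, b in scenarios)
    flexible = sum(p * max(b - exercise_cost, 0.0) for p, b in scenarios)
    return (flexible - max(committed, 0.0)) / (1.0 + rate) ** t


base = npv([-500, 200, 200, 200], rate=0.10)  # about -2.63
flex = option_value([(0.5, 150), (0.5, 50)], exercise_cost=100, rate=0.10)  # about 22.73
strategic_value = base + flex  # about 20.10
```

In this example, committing to the upgrade up front is worth nothing on average, yet the option to decide later is worth about 22.7 in present terms, enough to turn a slightly negative NPV positive.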

In this model, users set up a variety of constraints, and the model identified the processes that worked. The discrete event simulation within the model generates data on what events occurred as the systems were built and launched, and costs and operations are then gradually integrated, with a variety of factors considered. Cornford used a process similar to DoD’s, called Design, Build, Assemble, Test, Integrate, to distribute costs and schedule. He reiterated that JPL is fully supportive of and engaged in model-based systems engineering. Many graduate students entering the workforce are not even aware that they are already engaged in model-based systems engineering.
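A discrete event simulation advances a clock from one scheduled event to the next rather than in fixed time steps. The minimal Python sketch below assumes a single production line that builds modules in sequence and launch opportunities that recur every `launch_interval` months; the `simulate` function, module names, durations, and costs are hypothetical and are not drawn from JPL's Threads of Calculation Model.

```python
import heapq
import math


def simulate(modules, launch_interval, launch_cost):
    """Minimal discrete event simulation of building and launching modules.

    modules: (name, build_months, build_cost) built one after another on a
    single production line; each finished module flies on the next launch
    opportunity, which recurs every launch_interval months.
    """
    events = []  # priority queue of (time, sequence, kind, name, cost)
    seq = 0
    line_free_at = 0
    for name, build_months, build_cost in modules:
        line_free_at += build_months
        heapq.heappush(events, (line_free_at, seq, "built", name, build_cost))
        seq += 1

    log, total_cost = [], 0
    while events:
        time, _, kind, name, cost = heapq.heappop(events)
        total_cost += cost
        log.append((time, kind, name))
        if kind == "built":
            # Schedule the follow-on launch event at the next opportunity.
            launch_at = math.ceil(time / launch_interval) * launch_interval
            heapq.heappush(events, (launch_at, seq, "launched", name, launch_cost))
            seq += 1
    return log, total_cost


log, total = simulate(
    [("mothership", 12, 300), ("daughter-1", 6, 100), ("daughter-2", 6, 100)],
    launch_interval=6, launch_cost=50,
)
for entry in log:
    print(entry)
print("total cost:", total)  # total cost: 650
```

Each completed build schedules a follow-on launch event, so cost accrues in the order events occur; a fuller model would add failure events, competition for launch slots, and separate design, build, assemble, test, and integrate stages.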

Cornford described the benefits of using SysML, specifically that it allows users to create many relationships and constraints with formality. Cornford emphasized the notion that humans should do what they do well, such as develop new ideas, and computers should do what they do well, which includes repetition and accuracy. Consequently, Cornford mentioned, creating a large tradespace increases the quantity of things that can be explored, and computational approaches can be used to sort through some of this information. The tools that JPL uses work well for a variety of communities, including both engineers and decision makers. Because JPL relies on an ontology, multiple experts can look at the same model at the same time, and they can focus on the component that most concerns and interests them. This, in turn, leads to the ability to make changes to certain components much more quickly. Cornford also noted that this model will be successful only if it is scalable and customizable. DARPA added the constraint that the tool had to be open source, which presented challenges for JPL. To meet it, JPL initially populated the catalogues with generic data, and users could customize the model with their own data based on individual preferences, proprietary issues, or classification issues.

Cornford noted that his team never attempted to build a universal uncertainty model; instead, they opted for a user-focused, customizable model based on a mission’s particular needs, values, and tolerance for risk. ASDA has now been in existence for over 3 years and was just the start of JPL’s innovative work in this area. JPL plans to use model-based systems engineering exclusively for its next flagship mission, according to Cornford. This model can be examined for inconsistencies: approximately 50,000 consistency checks are performed per night, and a list of missing elements is then generated. Though improvement is needed on the programmatic side, the ontology demonstrates proof of correctness in the software. The model also allows for one authoritative data source and the creation of artifacts, including project documents. This eliminates the need to dictate tools to users and allows them to stay within their realm of comfort. This model can be transformed for a variety of platforms, and it enables collaborative design. Variables can be traded and the integration of legacy code enhances comprehension of that legacy code. Despite all of these advantages of model-based systems engineering, it requires discipline and rigor on the part of the user: large tradespaces can be overwhelming to explore, and the models may introduce new, previously unknown problems.

Cornford concluded his presentation by encouraging participants to try model-based systems engineering and to adopt full-breadth modeling because it offers four benefits: (1) it incorporates the programmatic and technical aspects, (2) it integrates implementation and operational risks, (3) it facilitates trades against all resources, and (4) it makes the compounded effects of changes observable across the system. Though model-based acquisition is difficult, models make it possible to identify key trade loops within the design using simulations, he said.

Discussion

Jesus de la Garza introduced the case of the Mars Climate Orbiter, which was lost during orbit insertion in 1999. The loss was attributed to a systems engineering failure: two different systems of units, metric and imperial, were used in the orbiter’s navigation calculations. De la Garza pointed out that newer models can discover such inconsistencies prior to launch. He asked if Cornford’s team had applied model-based systems engineering retrospectively. Cornford responded that they do not tend to use that method because it rarely generates new information. He also admitted that he is skeptical of trying to identify the root cause of any problem because very few problems relate back to a singular moment. Cornford shared anecdotes of two failed spacecraft. For the craft that failed because of the metric-imperial conversion, the mission control centers looked the same but used different units, making the training that operators received useless in half of the cases. This was a process control issue. When they found a software bug, it was immediately repaired, but since they were not tracking it, people just assumed there was a lag in the fix, even though it had been fixed months earlier. This is when they were alerted to the conversion issue. Problems would likely be caught by using the new systems, though the models will also identify additional problems, Cornford noted. Real-time integration is crucial in this field, where launch dates cannot be delayed.

Mike Yukish asked if the fractionated architecture and the modeling approach are complementary or orthogonal. Cornford responded that they are somewhat orthogonal, adding that within 10 years he suspects all system development processes will be based on model transformations.

Pamela Kobryn voiced her support for the notion of ontology and shared that the Air Force is moving in a similar direction. Kobryn asked how business is transformed when the odds of success change, and she also asked whether any evidence of the benefits exists. Cornford noted that documentation currently exists for pieces of the approach, but not yet for the whole. However, he explained that the approach is a significant advantage because it helps proposals get approved more quickly, which allows designers to continue developing tradespaces while competitors are still brainstorming. Cornford shared a related anecdote about 12 model-based systems engineering efforts at JPL that were using the same 6 DARPA-team people. The groups were allowed to develop each of the 12 efforts independently; however, all of the efforts eventually converged, unbeknownst to the team participants. The goal, though, is to achieve breadth (cost, schedule, performance) so that the benefits are apparent. Cornford acknowledged that though some papers have been published on this work, it is still too early to determine how well the process will work in all cases.

RECONFIGURABLE MANUFACTURING SYSTEMS: PRINCIPLES AND EXAMPLES

A. Galip Ulsoy, C.D. Mote, Jr. Distinguished University Professor of Mechanical Engineering and the William Clay Ford Professor of Manufacturing, University of Michigan

A. Galip Ulsoy opened his presentation with a definition of reconfigurable manufacturing: a manufacturing system that can be changed over time in response to environmental circumstances. Reconfigurable systems are common in everyday life: a convertible and a wrench are systems that the user reconfigures, and an automatic transmission is a self-reconfiguring system. Ulsoy next shared a quote often attributed to Charles Darwin that best illuminates the concept of reconfigurable systems: “It is not the strongest species that survive nor the most intelligent, but those that are most responsive to change.”5

Ulsoy noted that the two primary factors in manufacturing are cost and quality, and he added a third: responsiveness. Manufacturing should respond to changes in market demand, Ulsoy explained; for example, consumers’ car purchases often reflect the current price of gasoline. Such changing needs can disrupt the manufacturing process. In addition to consumer needs, there are also commercial needs to consider, especially in military manufacturing. In a traditional dedicated manufacturing system, the “ramp-up” period, though important for ensuring the quality and quantity of parts, is often lengthy. Ulsoy asserted that the primary goal should be to decrease lead time: products should be designed quickly, and their production systems should be designed simultaneously. Responsiveness is key to reducing ramp-up time, design time, and production time because the system should be easy to reconfigure to produce a new product or multiple products. Traditionally, Ulsoy explained, dedicated manufacturing systems produce high volumes with very limited variety at low cost and high quality. Dedicated systems also use minimal software, if any.

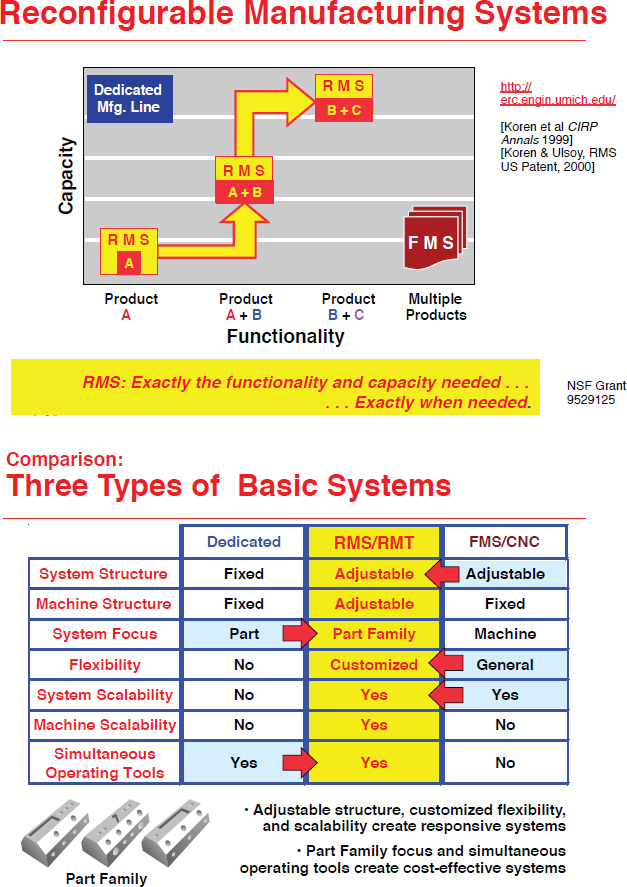

Flexible manufacturing systems, on the other hand, are more adjustable, so they typically trade lower volume (because of cost and quality concerns) for greater variety. Flexible manufacturing systems also include control software. Reconfigurable manufacturing systems (RMS) combine the advantages of dedicated and flexible manufacturing systems. According to Ulsoy, RMS allows even greater flexibility within the functionality-capacity space. In RMS, both the hardware and the software can be adjusted or reconfigured, which allows software to be added or removed at any time, for example. Ulsoy highlighted that reconfigurable manufacturing uses the ideas of model-based systems engineering in that individual parts within machines can be replaced easily. Not only do such systems allow for change, but they are also designed with structural change in mind. (See Figure 2.3.)

___________________

5 C. Darwin, 1859, On the Origin of Species, John Murray, London.

Based on consumer or commercial demand, one product can be produced, phased out, and replaced with a new product on the same manufacturing line because the system itself can be reconfigured. RMS is designed around the functionality needed for one part family, as opposed to one part, as in dedicated manufacturing systems. Designing the system to handle part variations leads to greater efficiency. Setting up RMS requires considerable work at the outset, including life cycle cost analysis to justify the additional initial costs and ensure future cost recovery, and this process can be complicated, Ulsoy noted. When changes are made, quality issues and errors can arise within the system, so it is important to develop a method to diagnose and control them. Using stream of variation theory and taking measurements during the process to detect and correct these errors will aid in the rapid ramp-up of systems.
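Stream of variation (SoV) analysis is commonly formulated as a linear state-space model in which a part's dimensional deviation state is carried from station to station, x_k = A_k x_{k-1} + w_k. The Python sketch below is a minimal illustration of that idea under stated assumptions, not Ulsoy's implementation: the two-component state (a positional offset and a tilt), the station matrices, the noise covariances, and the flagging tolerance are all hypothetical. It propagates the deviation covariance through the line and flags stations whose measured variance exceeds the prediction, which is one simple way in-process measurements could point to the station introducing an error.

```python
"""Minimal stream-of-variation (SoV) sketch.

Assumption: each station k maps the incoming deviation state x (here a
hypothetical [offset, tilt] pair) as x_k = A_k x_{k-1} + w_k, where w_k is
zero-mean station noise with covariance Q_k. The deviation covariance then
evolves as P_k = A_k P_{k-1} A_k^T + Q_k.
"""

Matrix = list[list[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Plain matrix product a @ b."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]


def transpose(a: Matrix) -> Matrix:
    return [list(row) for row in zip(*a)]


def add(a: Matrix, b: Matrix) -> Matrix:
    return [[x + y for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]


def propagate(p0: Matrix, stations: list[tuple[Matrix, Matrix]]) -> list[Matrix]:
    """Return the predicted deviation covariance after each (A_k, Q_k) station."""
    covariances = []
    p = p0
    for a, q in stations:
        p = add(matmul(matmul(a, p), transpose(a)), q)
        covariances.append(p)
    return covariances


def flag_stations(predicted: list[Matrix], measured_var: list[float],
                  component: int = 0, tol: float = 1.5) -> list[int]:
    """Indices of stations whose measured variance of one state component
    exceeds the SoV prediction by more than the factor `tol`."""
    return [k for k, (p, m) in enumerate(zip(predicted, measured_var))
            if m > tol * p[component][component]]


if __name__ == "__main__":
    p0 = [[0.01, 0.0], [0.0, 0.0004]]  # incoming-part covariance (hypothetical)
    stations = [
        ([[1.0, 0.5], [0.0, 1.0]], [[0.0025, 0.0], [0.0, 0.0001]]),  # tilt couples into offset
        ([[1.0, 0.0], [0.0, 1.0]], [[0.0025, 0.0], [0.0, 0.0001]]),  # pass-through station
    ]
    predicted = propagate(p0, stations)
    print([round(p[0][0], 6) for p in predicted])
    print(flag_stations(predicted, measured_var=[0.013, 0.030]))
```

A real SoV model would derive A_k from fixture and datum geometry; here the matrices are chosen only to show how variation accumulates and how a measurement can localize it.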

Ulsoy asserted that the mass production paradigm of the early 1900s, when Henry Ford focused on using interchangeable parts for automobiles to reduce cost to the consumer, is not so different from today’s manufacturing strategies. Ulsoy also described the 1960s as an influential era of modern manufacturing: the notion that cost and quality need not be mutually exclusive was inspired by changes in Japanese manufacturing. Management techniques improved over time, and flexibility improved with the increased use of computers in the 1980s. Computers enhanced flexible manufacturing because machines and tools could be computer controlled, allowing many types of parts to be produced on one system, thus lowering cost and increasing quality and variety.

The 21st century adds the component of responsiveness that Ulsoy introduced at the start of his presentation. Ulsoy deems knowledge the “enabler,” because the design, operation, and management of reconfigurable systems is a challenging endeavor that requires a certain level of expertise. Reconfiguration can occur at different levels (system, machine, component), and knowledge is needed to decide when and where to reconfigure. He noted that systems must be modular, have compatible interfaces, and work quickly and cheaply if they are to be reconfigurable. Recalibrating such a system is expensive, and customization and scalability are essential.

Ulsoy next provided an example of a system-level problem in plant capacity management: if a plant is producing one product on modular machines and market demand changes, the resulting production-volume problem can be solved by formulating it as a stochastic dynamic programming problem. In other words, Ulsoy explained, because there is uncertainty in precisely how and when the market will change, market demand should be modeled as stochastic. In contrast, the costs of machines, capital, and installation are known and can be included in a cost model. The result is a strategy for timing increases and decreases in plant output based on market demand. Ulsoy’s team partnered with Cummins to implement this model in a diesel engine machining line. An important lesson learned was that implementation delays must also be included in the model to produce an optimal result.
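The capacity-timing idea can be illustrated with a small finite-horizon stochastic dynamic program. In this Python sketch, which is an illustration under stated assumptions rather than the model Ulsoy's team built with Cummins, demand is a hypothetical Markov random walk over four levels, capacity is counted in machine modules, and the holding, shortage, and reconfiguration costs, the discount factor, and the horizon are all made-up values. A capacity change chosen in one period only takes effect in the next, which is one simple way to represent an implementation delay.

```python
"""Finite-horizon stochastic dynamic program for capacity timing (sketch).

All numbers are hypothetical. State: (period t, installed modules c, demand d).
Each period the plant pays holding cost on installed modules and a shortage
penalty on unmet demand, then chooses next period's capacity. The change only
takes effect next period (a one-period implementation delay).
"""
from functools import lru_cache

CAPACITIES = range(0, 5)  # installable machine modules
DEMANDS = (1, 2, 3, 4)    # demand levels, in units of one module's output
HOLD, SHORT = 1.0, 5.0    # cost per installed module, per unit of unmet demand
ADD, REMOVE = 4.0, 1.0    # reconfiguration cost per module added / removed
GAMMA = 0.95              # per-period discount factor (time value of money)
HORIZON = 12              # number of planning periods


def demand_transitions(d: int) -> dict[int, float]:
    """Random-walk demand: stay w.p. 0.6, step down/up w.p. 0.2 each (clamped at the ends)."""
    i = DEMANDS.index(d)
    probs: dict[int, float] = {}
    for j, p in ((i, 0.6), (i - 1, 0.2), (i + 1, 0.2)):
        nxt = DEMANDS[min(max(j, 0), len(DEMANDS) - 1)]
        probs[nxt] = probs.get(nxt, 0.0) + p
    return probs


def action_costs(t: int, c: int, d: int) -> dict[int, float]:
    """Reconfiguration cost plus discounted expected future cost for each next capacity."""
    costs = {}
    for c_next in CAPACITIES:
        change = ADD * max(c_next - c, 0) + REMOVE * max(c - c_next, 0)
        future = sum(p * value(t + 1, c_next, d2) for d2, p in demand_transitions(d).items())
        costs[c_next] = change + GAMMA * future
    return costs


@lru_cache(maxsize=None)
def value(t: int, c: int, d: int) -> float:
    """Minimum expected discounted cost from period t onward."""
    stage = HOLD * c + SHORT * max(d - c, 0)
    if t >= HORIZON - 1:
        return stage
    return stage + min(action_costs(t, c, d).values())


def policy(t: int, c: int, d: int) -> int:
    """Capacity to install for period t + 1 given the current state."""
    costs = action_costs(t, c, d)
    return min(costs, key=costs.get)


if __name__ == "__main__":
    for d in DEMANDS:
        print(f"demand {d}: from 2 modules, install {policy(0, 2, d)} next period")
```

Backward induction with memoization keeps the state space small here; a real study would estimate the demand transition probabilities and costs from plant and market data.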

Ulsoy then shared an example of a machine-level problem in which a plant wants to combine various axis modules in many ways quickly and efficiently. It is crucial that the module can convert between configurations without introducing errors into the system. Ulsoy presented a second example of a reconfigurable machine built around a part family: the full-scale archetype reconfigurable machine tool, a 3-axis computer numerically controlled (CNC) milling machine designed around a product family of cylinder heads. Ulsoy noted that chatter can be avoided through careful selection of operating speeds. He emphasized that, even when reconfigured, the machine can retain good performance and chatter-free operation because parameters such as depth of cut and spindle speed can be selected appropriately.
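The link between chatter and the choice of depth of cut can be made concrete with the classical regenerative-chatter stability limit for orthogonal cutting with a single-degree-of-freedom structure, b_lim = -1 / (2 K_s Re[G(ω)]), where K_s is the specific cutting force coefficient and G is the structure's frequency response. This textbook relation is a stand-in: the source does not say which chatter model Ulsoy's team used, and the stiffness, damping ratio, and cutting coefficient below are hypothetical. The sketch sweeps frequency to find the most negative real part of G and returns the depth of cut below which cutting is stable at every spindle speed; for this model the result also has the closed form 2kζ(1 + ζ)/K_s.

```python
"""Critical (spindle-speed-independent) depth of cut for regenerative chatter.

Assumptions: orthogonal cutting, single-degree-of-freedom structure with
stiffness k (N/m) and damping ratio zeta, specific cutting force Ks (N/m^2).
Stability limit: b_lim = -1 / (2 * Ks * Re[G]), evaluated where Re[G] < 0.
"""


def real_frf(r: float, k: float, zeta: float) -> float:
    """Real part of G = 1 / (k (1 - r^2 + 2j zeta r)), with r = omega / omega_n."""
    return (1.0 - r * r) / (k * ((1.0 - r * r) ** 2 + (2.0 * zeta * r) ** 2))


def critical_depth(k: float, zeta: float, ks: float,
                   r_max: float = 3.0, steps: int = 200_000) -> float:
    """Sweep r > 1 (where Re[G] < 0) and return the smallest stability limit b_lim (m)."""
    min_re = min(real_frf(1.0 + (r_max - 1.0) * i / steps, k, zeta)
                 for i in range(1, steps + 1))
    return -1.0 / (2.0 * ks * min_re)


def critical_depth_closed_form(k: float, zeta: float, ks: float) -> float:
    """Closed form for the SDOF case: min Re[G] = -1 / (4 k zeta (1 + zeta))."""
    return 2.0 * k * zeta * (1.0 + zeta) / ks


if __name__ == "__main__":
    k, zeta, ks = 2.0e7, 0.05, 2.0e9  # hypothetical structure and material values
    print(f"numerical:   {critical_depth(k, zeta, ks) * 1e3:.4f} mm")
    print(f"closed form: {critical_depth_closed_form(k, zeta, ks) * 1e3:.4f} mm")
```

Above this depth, stable cutting is still possible in the "lobes" at particular spindle speeds, which is why careful speed selection matters when a reconfigured machine changes its dynamics.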

Ulsoy introduced the stamping process as another example of a reconfigurable system. Floor panels for automobiles are produced using this method: the hydraulic cylinders under the die can be controlled individually to alter how the sheet metal is formed. A system such as this requires many controls, but it is tuned automatically. This drastically reduces the time it takes to produce parts, as well as the amount of scrap generated, Ulsoy explained. The process benefits from the open architecture approach discussed by previous speakers because functions can easily be added or deleted and vendors can be changed. As Paul Collopy mentioned earlier, this approach is most familiar from the applications added to smartphones.

Ulsoy highlighted many of the problems associated with designing electromechanical systems simultaneously: organization and optimization issues surface that can take much time to address. Ulsoy’s team is working on a control proxy function method to rectify such problems by creating a more easily controllable system.

Ulsoy noted that an additional objective for reconfigurable systems is that they have a “plug and play capability.” With this strategy, modules can be controlled to minimize retuning while maintaining good performance. Ulsoy used the example of plug-in hybrid electric vehicles to describe this phenomenon: for people who drive fewer miles than the all-electric range, a control system could be designed in which the battery characteristics are changed while performance is maintained and cost decreases. Ultimately, distributing some control into the module gives the same outcome.

Ulsoy pointed out that reconfigurable systems do not apply only to manufacturing; they also can be useful in computing and robotics. Modular robots are currently being designed with the capabilities of a natural gait and self-regulation. Ulsoy concluded by reiterating his central point: reconfigurable manufacturing allows designers to respond to environmental changes.

Discussion

De la Garza referred to Steve Cornford’s discussion of value assessment and asked Ulsoy how he quantifies the value of reconfiguration. Ulsoy responded that the time value of money is included in the cost function being minimized. Initial investments must be in line with those made while reconfiguring. Kern then asked whether any union representatives were involved in Ulsoy’s projects. Ulsoy responded that one project in particular, with Chrysler and General Motors, included union members, as well as a plant manager and a university team. Ulsoy noted that the unions seemed satisfied with the project because it allowed Chrysler and General Motors to compete better with global production capabilities.
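Ulsoy's answer about the time value of money can be illustrated with a present-value comparison, in which each cost incurred t years from now is divided by (1 + r)^t. In this Python sketch the discount rate and both cost streams (a dedicated line that must be replaced when the product changes in year 3, versus a reconfigurable line with a higher initial cost and a smaller reconfiguration cost) are hypothetical numbers chosen only to show the mechanics.

```python
"""Present-value comparison of two hypothetical capital plans."""


def present_value(costs: list[float], rate: float) -> float:
    """Discount a list of yearly costs (index 0 = today) at the given annual rate."""
    return sum(c / (1.0 + rate) ** t for t, c in enumerate(costs))


RATE = 0.08                             # hypothetical annual discount rate
DEDICATED = [10.0, 0.0, 0.0, 10.0]      # new dedicated line again in year 3 ($M, hypothetical)
RECONFIGURABLE = [13.0, 0.0, 0.0, 3.0]  # higher initial cost, cheaper reconfiguration ($M, hypothetical)

if __name__ == "__main__":
    for name, plan in (("dedicated", DEDICATED), ("reconfigurable", RECONFIGURABLE)):
        print(f"{name:>14}: PV = {present_value(plan, RATE):.2f} $M")
```

With these made-up numbers the reconfigurable plan has the lower present value (about 15.38 versus 17.94 $M), but a higher discount rate or a later product change would shrink that gap, which is why the comparison has to be made case by case.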

Yukish compared Ulsoy’s discussion of part families to a DARPA project that had similar constraints: the manufacturers intentionally restricted designers by providing only certain design tools for them to use to gather information. Ulsoy agreed that allowing manufacturers to generate design rules is useful. McGrath concluded the question-and-answer session with the observation that the human dimension once again plays a crucial role in systems manufacturing.

AIRCRAFT DIGITAL THREAD: AN EMERGING FRAMEWORK FOR LIFE CYCLE MANAGEMENT

Pamela Kobryn, Senior Aerospace Engineer, U.S. Air Force Research Laboratory

Pamela Kobryn provided an overview of the role of the Air Force Research Laboratory (AFRL), which develops science and technology for the Air Force in ways not always possible in the acquisition environment. She next reviewed the history of the two key concepts of her talk, Digital Thread and Digital Twin. In June 2013, the Air Force chief scientist released Global Horizons, which identified three ways in which manufacturing and materials could be improved: (1) advanced modeling and simulation; (2) virtual representation of systems; and (3) archived digital descriptions. However, Kobryn noted, because the Air Force’s current state of the art is inadequate to achieve this vision, AFRL plays a crucial role. The primary objective is to move from paper and digital artifacts to model-centric artifacts, and then eventually to digital surrogate artifacts.

Kobryn summarized the definition of Digital Thread from the Defense Acquisition University Glossary of Defense Acquisition Acronyms and Terms: an “analytical framework that seamlessly expedites the controlled interplay of authoritative technical data, software, information, and knowledge.”6 Kobryn clarified that this term is not associated only with manufacturing and engineering. The Digital Twin is an “integrated multiphysics, multiscale, probabilistic simulation of an as-built system enabled by Digital Thread.”7 The Digital Twin is similar to an avatar that can forecast performance under any environmental or operational circumstances. The Digital Twin, then, is a subset of the Digital Thread (i.e., a digital surrogate). Kobryn clarified that a Digital Twin is not a digital tool for configuration management, a three-dimensional (3D) model of an as-built system, or a model-based definition of an as-built system.

___________________

6 Defense Acquisition University, 2015, “DAU Glossary of Defense Acquisition Acronyms and Terms,” https://dap.dau.mil./glossary/Pages/Default.aspx, accessed December 31, 2015.

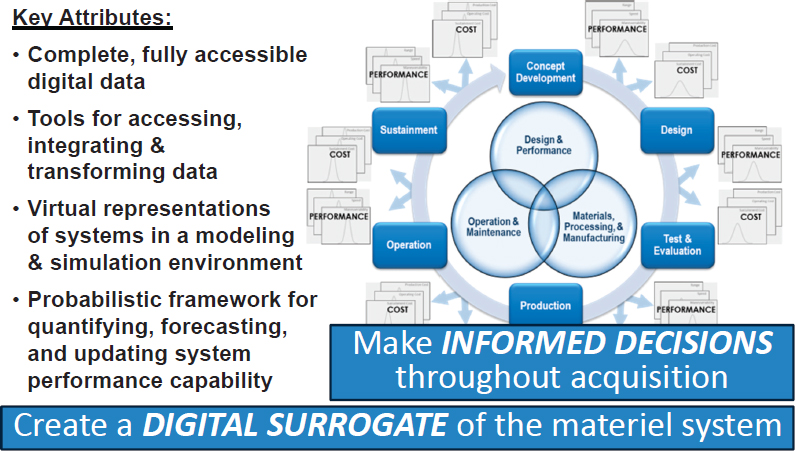

Kobryn then shared a “pinwheel” chart to demonstrate the attributes of the Digital Thread. (See Figure 2.4.)

According to Kobryn, the purpose of the Digital Thread is to improve decision making by connecting the realms of design and performance; operations and maintenance; and materials, processing, and manufacturing, which traditionally have operated independently. Then, through an analysis of performance and cost, a link is made into the life cycle stages of design; test and evaluation; production; operation; sustainment; and concept development. This allows the acquisition program to be designed along the way to increase the fidelity of cost and performance probability estimates. For this to work, Kobryn explained, data must be digital, accessible, and usable, and tools must be readily available to obtain, study, and understand the data. She noted that the system must also be capable of being represented virtually, and there should be a probabilistic framework, on which AFRL is currently most focused.

___________________

7 Ibid.

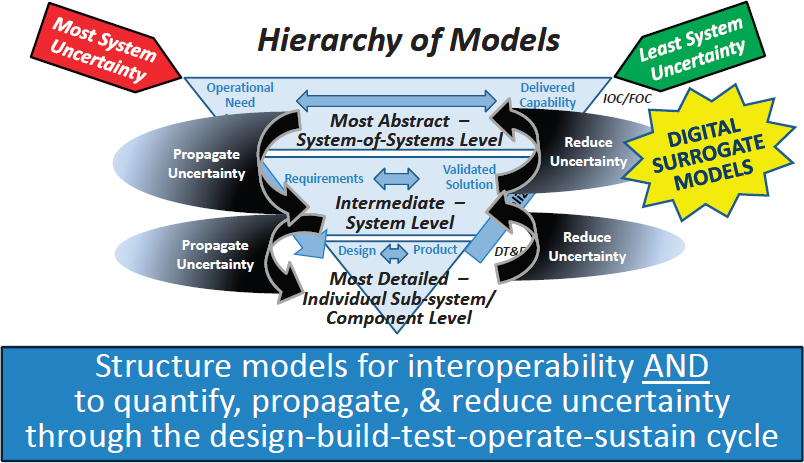

In 2000, DARPA had a program called Accelerated Insertion of Materials, in which they connected various material model levels from the bottom up. Today’s systems approach does modeling from the top down instead. The DoD hierarchy of models, using model-centric systems engineering with the Digital Thread, connects operational need, delivered capability, requirements, validated solutions, design, and product, Kobryn explained. Digital Surrogate models (i.e., digital representations of the Digital Thread) should be visible at every level. AFRL continues to work on this capability, Kobryn said, but the goal is to “structure models for interoperability and to quantify, propagate and reduce uncertainty through the design-build-test-operate-sustain cycle.” (See Figure 2.5.)

With its use cases, AFRL is trying to cover various points in the acquisition life cycle. Kobryn summarized the details of the Engineering and Manufacturing Development and Service Life Extension Program studies conducted with Boeing, Lockheed Martin, and Northrop Grumman. The studies demonstrated that the Digital Thread has value for both the U.S. Air Force and industry, especially for service life extensions, for both systems and structures. More detailed reports of these studies, Kobryn noted, are available through the Defense Technical Information Center.8

Kobryn began a discussion about the Airframe Digital Twin by making a comparison to human health care: AFRL wants to better manage aircraft life cycles, just as people want to better manage their health and prolong their lives with complete information, preventative screenings, and personalized treatments. Similarly, aircraft can undergo preventative maintenance and repairs to extend their life cycles. The four primary objectives in aircraft life cycle management are as follows: (1) use all available information; (2) use physics (fundamental properties instead of empirical models) for analyses; (3) use probabilistic methods to quantify risks; and (4) close the loop, after evaluating performance, and update the model.
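Objectives (3) and (4), quantifying risk probabilistically and then closing the loop by updating the model, can be illustrated with a simple Bayesian update. In this Python sketch, which is a generic example and not AFRL's Airframe Digital Twin framework, a model-predicted crack length at an inspection point is treated as a normal prior, a nondestructive evaluation (NDE) reading is treated as a noisy measurement of the true crack length, and the posterior is computed on a grid. The crack-length values, measurement noise, and critical size are hypothetical. The updated probability of exceeding the critical size is the kind of quantity that could inform a maintenance decision.

```python
"""Grid-based Bayesian update of a crack-length estimate (hypothetical numbers, mm)."""
import math


def normal_pdf(x: float, mu: float, sigma: float) -> float:
    return math.exp(-0.5 * ((x - mu) / sigma) ** 2) / (sigma * math.sqrt(2.0 * math.pi))


def normalize(weights: list[float]) -> list[float]:
    total = sum(weights)
    return [w / total for w in weights]


def prior(grid: list[float], mu: float, sigma: float) -> list[float]:
    """Model-predicted crack length, treated as a normal prior on the grid."""
    return normalize([normal_pdf(a, mu, sigma) for a in grid])


def update(grid: list[float], prior_probs: list[float], reading: float, noise: float) -> list[float]:
    """Posterior is proportional to prior times the likelihood of the NDE reading."""
    return normalize([p * normal_pdf(reading, a, noise) for a, p in zip(grid, prior_probs)])


def mean(grid: list[float], probs: list[float]) -> float:
    return sum(a * p for a, p in zip(grid, probs))


def prob_exceeds(grid: list[float], probs: list[float], a_crit: float) -> float:
    return sum(p for a, p in zip(grid, probs) if a > a_crit)


if __name__ == "__main__":
    grid = [i * 0.001 for i in range(5001)]  # 0 to 5 mm
    before = prior(grid, mu=2.0, sigma=0.5)   # hypothetical usage-based prediction
    after = update(grid, before, reading=1.5, noise=0.5)  # hypothetical NDE reading
    print(f"mean crack length: {mean(grid, before):.3f} -> {mean(grid, after):.3f} mm")
    print(f"P(a > 2.5 mm):     {prob_exceeds(grid, before, 2.5):.3f} -> "
          f"{prob_exceeds(grid, after, 2.5):.3f}")
```

A grid is used instead of a closed-form conjugate update so that the same code would still work if the prior came from a non-normal crack-growth simulation.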

Kobryn introduced AFRL’s Individual Aircraft Tracking Program, which aims to avoid catastrophic failure and part replacement by tracking aircraft usage and adjusting maintenance schedules. The Digital Thread can help modernize and improve this process through the development of an analysis framework for Individual Aircraft Tracking. This effort, called Airframe Digital Twin (ADT) Spiral 1, links actual usage data and nondestructive evaluation data through relevant probabilistic analysis. AFRL currently has contracts with General Electric and Northrop Grumman to work on ADT Spiral 1. Because ADT Spiral 1 includes a full-scale physical demonstration, there are many lessons to be learned. AFRL was able to reuse data and models from the F-15 fighter aircraft and will be performing full-scale testing. Kobryn noted that this is not without its challenges, since teams had preconceived notions about their preferred processes. The teams have learned that instead of trying to eliminate uncertainty, they should quantify and estimate it as they consider updates to the aircraft.

Kobryn pointed out that the Airframe Digital Twin is a multifaceted approach: the technology is useless if costs are not clear up front. Business cases and technology have to be integrated, which presents an additional challenge since the communication between experts in the two fields has historically been limited. Through the ADT program and through a workshop with Lockheed Martin, AFRL realized that feedback, interoperability, and accelerated timelines are keys to success. Kobryn concluded by emphasizing that Digital Thread and Digital Twin are synergistic and that multiple users of data and models for different purposes are essential. The most effective strategy is to begin with data and models that are already available and then, based on value, create new data and new models.

___________________

8 For more information about these studies, see http://www.dtic.mil/dtic/, accessed May 18, 2018.

Discussion

McGrath asked how AFRL would like to use the Integrated Computational Materials Engineering (ICME) data and models captured in the Digital Thread. Kobryn responded that ICME is simply “another tool in the toolbox” to produce data. Regarding the validation of ICME data, she said one must be able to trace where the data came from so that they can be used to update uncertainty. However, exactly where ICME fits will depend on the application. Haydn N.G. Wadley, University of Virginia, suggested that the “readiness” of systems is important and asked whether AFRL’s approaches can help determine how to maximize the number of platforms available at any given time. Kobryn said that this question is part of their long-term “what-if” vision; AFRL wants to provide decision makers with even better information about risks and maintenance, for example.

Collopy asked about the model certification effort versus the interoperability effort. Kobryn explained that AFRL’s primary focus is on model interoperability; however, certification is the first step toward achieving this goal. She noted that the Digital Thread is the enhanced combination of model-based enterprise and model-based systems engineering. Simon Goerger then asked whether the Airframe Digital Twin has been used for systems other than airframes. Kobryn said that this is happening with turbine engines, but that it is more difficult to translate into other realms because of the larger number of vendors, and thus the larger amount of data.

McGrath posed the final question of the session: he asked if this process is useful only for mechanical structures, or if it can be applied to electronics and software functionality of the aircraft as well. Kobryn responded that this can be used for the entire aircraft, though it will take time to develop the capability to look at the full system.

MATERIALS-BASED CHARACTERIZATION IN THE DIGITAL THREAD

Rosario A. Gerhardt, Goizueta Foundation Chair and Professor, School of Materials Science and Engineering, Georgia Institute of Technology

Rosario A. Gerhardt opened with an explanation that her presentation would be about an idea she has been working on for 30 years: using electromagnetic nondestructive methods to monitor the state of a material. She noted that materials respond differently depending on their composition, their microstructure, and the environment in which they are used. Gerhardt said that no single test can provide all of the desired information; instead, many connected tests are needed because the underlying material structure often affects its performance in the field.

Electromagnetic nondestructive testing draws on several complex techniques, according to Gerhardt: 4-point probe direct current (DC) resistivity; dielectric properties; alternating current (AC) conductivity; impedance spectroscopy; optical spectroscopy; scanning electron, tunneling electron, and atomic force microscopy; X-ray and neutron scattering; and multiphysics simulations. Impedance spectroscopy is the primary method used to obtain the AC properties. Gerhardt explained that “the permittivity is inversely proportional to the product of the impedance, which has a real and imaginary part, times the frequency.” She then mentioned that most of her work uses measurement ranges between microhertz and megahertz, which affects how quickly data can be acquired.
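The relationship Gerhardt described is usually written as ε*(ω) = ε′ − jε″ = 1 / (jωC₀Z*(ω)), where Z* is the measured complex impedance, ω = 2πf is the angular frequency, and C₀ is the capacitance of the empty measurement cell. The Python sketch below applies it to impedance values, assuming a parallel-plate cell (C₀ = ε₀A/d) with a hypothetical electrode area and sample thickness and a hypothetical lossy dielectric modeled as a resistor and capacitor in parallel; it is a generic conversion, not Gerhardt's analysis software.

```python
"""Convert a measured complex impedance to complex relative permittivity.

Assumes a parallel-plate cell with electrode area `area` (m^2) and sample
thickness `thickness` (m); eps* = eps' - j eps'' = 1 / (j * omega * C0 * Z).
"""
import math

EPS0 = 8.8541878128e-12  # vacuum permittivity, F/m


def empty_cell_capacitance(area: float, thickness: float) -> float:
    return EPS0 * area / thickness


def permittivity(z: complex, freq_hz: float, c0: float) -> complex:
    """Complex relative permittivity eps' - j eps'' from impedance z at freq_hz."""
    omega = 2.0 * math.pi * freq_hz
    return 1.0 / (1j * omega * c0 * z)


def parallel_rc_impedance(r: float, c: float, freq_hz: float) -> complex:
    """Impedance of a resistor and capacitor in parallel (a simple lossy dielectric)."""
    omega = 2.0 * math.pi * freq_hz
    return r / (1.0 + 1j * omega * r * c)


if __name__ == "__main__":
    c0 = empty_cell_capacitance(area=1e-4, thickness=1e-3)  # 1 cm^2, 1 mm (hypothetical)
    for f in (1e-3, 1.0, 1e3, 1e6):                         # mHz to MHz
        eps = permittivity(parallel_rc_impedance(1e9, 10 * c0, f), f, c0)
        print(f"{f:>8.0e} Hz: eps' = {eps.real:.3f}, eps'' = {-eps.imag:.3e}")
```

For the parallel RC sample, ε′ stays at C/C₀ = 10 while ε″ = 1/(ωRC₀) grows as frequency falls, which illustrates why low-frequency measurements can reveal conduction behavior that high-frequency data alone would miss.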

Gerhardt noted that low frequency AC measurements are important because all materials undergo electrical polarization. This approach has been used to study nickel alloys, ceramics, ceramic composites, polymers and their composites, semiconductors, and devices. Gerhardt explained that as the density, composition, or orientation of a material is changed, the electrical response can change by several orders of magnitude. In-plane properties are especially sensitive to contact dimensions.

Gerhardt next presented five cases. The first considered quantification of Ni₃Al precipitate nucleation and growth in nickel-based superalloys, which can create two-phase systems. The superalloy is brought to the solution treatment temperature and held there, quenched, and then reheated to the desired aging temperature so that the precipitates form. The purpose of this first case study was to monitor changes in the material’s precipitate population. Gerhardt noted that 4-point probe DC resistivity measurements were also collected and that small-angle scattering spectra were used to quantify the number and size of the scattering objects statistically.

She then explained that neutron-scattering tests are also useful for those who want to pair electrical testing with a way to quantify structure. Such information can also be obtained in other ways, such as through imaging methods; however, Gerhardt acknowledged, imaging methods are difficult to use with opaque materials. The second case Gerhardt described quantified porosity in thermal barrier coatings and other insulating materials, which can be understood by measuring the capacitance of the coating. The third case also addressed the impedance properties of insulating materials, but it included a water adsorption component that changed the dielectric properties and conductivity of the material. Gerhardt pointed out that water adsorption characteristics are important to consider when using porous materials in high-humidity environments, especially since the electrical behavior of materials shows a signature response as a function of frequency that depends on the environment to which the material is exposed. The fourth case discussed the contact effects of various conducting materials and demonstrated conductivity as a function of orientation, as well as of electrode type and size. The fifth and final case discussed the detection of different types of interfaces in insulator-conductor composite materials, which, Gerhardt affirmed, can be better understood with equivalent circuit and microstructural models.
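Equivalent circuit models of the kind Gerhardt mentioned often represent a composite as a series of parallel resistor-capacitor (RC) elements, for example one for the bulk material and one for the interfaces, each producing its own relaxation near f = 1/(2πRC) in the impedance spectrum. The Python sketch below computes such a two-element spectrum; the element values are hypothetical, and the circuit is one common choice, not necessarily the one Gerhardt's group uses for insulator-conductor composites.

```python
"""Impedance spectrum of two parallel-RC elements in series (bulk + interface)."""
import math


def rc_element(r: float, c: float, omega: float) -> complex:
    """Impedance of a resistor r in parallel with a capacitor c."""
    return r / (1.0 + 1j * omega * r * c)


def spectrum(elements: list[tuple[float, float]], freqs: list[float]) -> list[complex]:
    """Total impedance of the series combination at each frequency (Hz)."""
    return [sum(rc_element(r, c, 2.0 * math.pi * f) for r, c in elements) for f in freqs]


def relaxation_frequencies(elements: list[tuple[float, float]]) -> list[float]:
    """Characteristic frequency 1 / (2 pi R C) of each element, in Hz."""
    return [1.0 / (2.0 * math.pi * r * c) for r, c in elements]


if __name__ == "__main__":
    bulk, interface = (1e5, 1e-11), (1e7, 1e-9)      # ohms, farads (hypothetical)
    elements = [bulk, interface]
    freqs = [10.0 ** (k / 2) for k in range(-4, 15)]  # 0.01 Hz to 10 MHz
    for f, z in zip(freqs, spectrum(elements, freqs)):
        print(f"{f:>10.2e} Hz  Z' = {z.real:>12.4e}  -Z'' = {-z.imag:>12.4e}")
    print("relaxation peaks near", [f"{f:.3g} Hz" for f in relaxation_frequencies(elements)])
```

Because the two time constants differ by four orders of magnitude, the interface and bulk responses appear as separate features in the spectrum, which is what makes a wide frequency sweep useful for telling them apart.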

To conclude her presentation, Gerhardt reiterated that AC measurements over a wide range of frequencies are the most useful and that important data can be missed by examining only gigahertz-level properties. Further, based on the material development process used, properties of the same material can vary by many orders of magnitude. This is why it is essential to consider the properties in many directions and to use a wide variety of methods. Gerhardt recommended the following procedure:

- Establish a baseline electrical testing setup to obtain desired response for the materials system of interest;

- Develop master curves for the parameters of interest;

- Model the response using both equivalent circuits and electrical interface-based microstructural modeling;

- Corroborate models with complementary experimental characterization techniques to validate analysis;

- Model the expected changes, considering environmental factors; and

- Compare the observed changes with the predicted changes in order to develop new models.

Discussion

Kobryn asked about the impact of complex geometry on these techniques, specifically how changes in internal characteristics compare with changes in external geometry. Gerhardt said that her team has not examined the role of macroscopic object geometry in detail but that the technique is not limited by size. Gerhardt said that when surface changes occur, it is difficult to monitor the exposed portion of the material, and this needs to be accounted for in the simulations. Howard Last asked whether the equipment is easily transportable and whether measurements can be taken in the field. Gerhardt acknowledged that a more portable machine would be useful, though it is possible to move the current machines. Last then asked whether this could be used as a nondestructive test. Gerhardt said yes, but that how well it works depends on the material type, and that the main challenge is to separate out the effect of surface layers that may have altered the material response during use. McGrath pointed out that these data could be best captured in a Digital Thread, and he asked whether this is possible given the level of standardization of the testing techniques and environmental conditions. Gerhardt responded that it is possible, but that using data from various sources in the Digital Thread is challenging because most data are not analyzed appropriately or were obtained under different conditions or from differently processed materials.

Valerie Browning asked how this technique compares to AC susceptibility. Gerhardt explained that AC susceptibility works best for ferromagnetic and highly conductive materials, whereas her technique works with any material, regardless of whether it is magnetic. Browning disagreed and noted that materials do not need to be magnetic for AC susceptibility; rather, they just need to be somewhat conductive. Gerhardt responded that materials actually need to be highly conductive for AC susceptibility to work and to provide the type of information that can be obtained using dielectric and impedance spectroscopy, which can sense both insulating and conducting materials.

PANEL DISCUSSION: UNCERTAINTY AND CHANGE PROPAGATION IN MODELING

Participants:

Jay Martin, Research Associate, Pennsylvania State University Applied Research Laboratory

Saigopal Nelaturi, Palo Alto Research Center

Rosario A. Gerhardt, Goizueta Foundation Chair and Professor, School of Materials Science and Engineering, Georgia Institute of Technology

Pamela Kobryn, Senior Aerospace Engineer, U.S. Air Force Research Laboratory

Moderator:

Valerie Browning, Consultant, ValTech Solutions, LLC

Valerie Browning opened the panel discussion by introducing the topic of uncertainty and change propagation in modeling and reviewing relevant themes from previous speakers. Because DoD is shifting toward increasingly complex systems, it makes sense to discuss the challenges associated with quantifying, tracking, and managing uncertainty and change propagation in materials, components, and systems, Browning noted. However, it is also important to discuss the driving force behind this trend and to focus on the advantages and opportunities available to address these challenges. Browning recalled Baldwin’s focus on adaptable and reconfigurable systems and the influence of the “globalization of technology” on them. DoD’s “third offset strategy” includes new operational ideas that have the potential to extend current platforms and better compete against opponents with innovative technology. Browning reiterated that investments are primarily directed toward networking legacy platforms with smaller, less costly platforms as a way to extend those legacy platforms, but DoD plans to change its practices to make more data available for the development of new systems. Browning then summarized Cornford’s presentation by recognizing that there have been advances in the ASDA to model fractionated and disaggregated systems. To summarize Kobryn’s talk, Browning noted that the Digital Thread creates interfaces between model layers.

With these three speakers’ themes in mind, Browning encouraged the panelists to focus on the advances as well as the challenges of these emerging technologies. Browning outlined the subtopics for the panel discussion: Rosario Gerhardt would focus on uncertainty in materials systems; Saigopal Nelaturi would discuss uncertainty in manufacturing and systems; Pamela Kobryn would focus on uncertainty in the life cycle; and Jay Martin would discuss a methodology for networks of simpler models.

Gerhardt opened the discussion by emphasizing that well-characterized materials have specific properties that connect directly to the structure of the system. When samples are well made with repeatable characteristics, their properties can be reproduced and therefore used as the initial input into an application or design. The information and uncertainty about these properties can then be carried into the system model. She explained that those who take a systems approach will first need to lay out the framework of what they want to monitor so that the materials properties collected are relevant.

Nelaturi noted that all manufacturing is subject to error; uncertainty is unavoidable and has traditionally been defined using a computer-aided design model. Additive manufacturing, however, uses different combinations of materials (with their respective uncertain properties) to make new, more complex shapes. He added that the way one makes a part during additive manufacturing affects the material’s properties, which in turn affects uncertainty. This leads to both challenges and opportunities in the way that systems are designed because one can no longer assume that parts and design models follow an existing function. When constructing new models using additive manufacturing, Nelaturi said that one must conduct simulations on as-built parts, keeping in mind uncertainty in materials and manufacturing.

Kobryn suggested that the enormous volume of readily accessible data makes data-driven decision making a realistic approach, opening possibilities for the materials genome and for integrating data with the design system. Data can be used less rigorously to begin the process, she said, but must be used rigorously within the design system. She commented that additive manufacturing shows great promise for advancing development but poses challenges for design engineers who prefer homogeneity.

Kobryn asserted that a paradigm shift is under way: engineers should not only predict answers with a measure of confidence but also be able to link those answers to the cost of making a change. The fundamental question moving forward is how to use all of these tools and link them together intelligently to give the engineer a bigger design space.

Martin noted that, during conceptualization, technologies and tactics must be traded off rapidly, keeping in mind their impacts on performance and cost. Modeling and simulation are the best ways to balance these needs and make informed decisions. Essentially, decisions are best made using a network of simple models that can manage constraints and measure uncertainty. Martin commented that modeling must be adequate without becoming too computationally demanding.
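A minimal sketch of what a small network of simple models might look like, assuming two hypothetical component models chained together, with Monte Carlo sampling used to propagate input uncertainty and check a constraint. The models (`mass_model`, `range_model`), their coefficients, the input distributions, and the 2,200 range threshold are all invented for illustration; Martin did not describe a specific implementation.

```python
import random
import statistics

# Hypothetical simple model 1: part mass (kg) = density (kg/m^3) * volume (m^3).
def mass_model(density: float, volume: float) -> float:
    return density * volume

# Hypothetical simple model 2: a toy performance metric ("range") that falls as
# structural mass rises. Coefficients are placeholders, not a real vehicle model.
def range_model(mass: float, fuel_mass: float = 500.0, k: float = 4000.0) -> float:
    return k * fuel_mass / (mass + fuel_mass)

def propagate(n_samples: int = 10_000, min_range: float = 2200.0, seed: int = 1):
    """Monte Carlo propagation through the chained models.

    Returns the mean and standard deviation of the output metric and the
    fraction of samples that satisfy the constraint range >= min_range."""
    rng = random.Random(seed)
    ranges = []
    for _ in range(n_samples):
        density = rng.gauss(2700.0, 100.0)  # assumed materials uncertainty
        volume = rng.gauss(0.15, 0.01)      # assumed manufacturing uncertainty
        ranges.append(range_model(mass_model(density, volume)))
    feasible = sum(r >= min_range for r in ranges) / n_samples
    return statistics.mean(ranges), statistics.stdev(ranges), feasible

mean_range, spread, frac_ok = propagate()
print(f"mean={mean_range:.0f}  std={spread:.0f}  P(meets constraint)={frac_ok:.2f}")
```

Because each component model is cheap, thousands of samples run almost instantly, which reflects the balance Martin described between adequate modeling and computational cost.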

Nelaturi commended Gerhardt’s presentation for its emphasis on how modeling the materials can affect the design properties. Nelaturi said that multiscale models are essential for relating properties at both low and high levels. To achieve this, he said, design tools could be redesigned to incorporate new modeling and simulation capabilities. The additive manufacturing space is characterized by trial and error; modeling and simulation tools cannot yet quantify primary tools at multiple scales. Nelaturi explained that the paradigms are still evolving; for example, topology optimization is beginning to improve additive manufacturing, but it may not work for all design processes. He noted that there is an opportunity for product life cycle managers (for example, businesses using Digital Thread models) to move into additive manufacturing; the way materials are made has to be codified within the design process, which is an issue of uncertainty.

Kobryn responded to Nelaturi’s points by explaining that complexity (due to increased model fidelity) is expensive and is usually limited by the available time frame. Kobryn believes that work remains to identify, as Martin discussed, the right place in the system to add complexity. Design system abstraction will require much more collaboration than currently exists. Kobryn noted that communities have to expand their thinking in order to move forward, and the design community needs to be convinced that adding complexity is a good idea.

Wadley noted that original equipment manufacturers are expected to reduce their costs to automobile companies each year, a reduction projected by a learning curve. He wondered whether bringing the learning curve to the front of the design process is worth the effort required for a single part. Kobryn shared Wadley’s concerns and reiterated that just because you can do something does not mean you should. She said that the connection Martin made is critical to understanding this problem and that people who use their product data will be far ahead of competitors.
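The learning curve Wadley mentioned is commonly modeled with Wright's law, in which unit cost falls by a fixed fraction each time cumulative production doubles. A minimal sketch follows; the 90 percent learning rate and the first-unit cost of 100 are illustrative assumptions, not figures from the workshop.

```python
import math

def unit_cost(n: int, first_unit_cost: float, learning_rate: float) -> float:
    """Wright's learning curve: cost of the n-th unit produced.

    Each doubling of cumulative output multiplies unit cost by learning_rate
    (0.9 means a "90 percent" curve)."""
    b = math.log2(learning_rate)  # negative when learning_rate < 1
    return first_unit_cost * n ** b

def average_cost(n_units: int, first_unit_cost: float, learning_rate: float) -> float:
    """Average cost per unit over the first n_units units."""
    total = sum(unit_cost(k, first_unit_cost, learning_rate)
                for k in range(1, n_units + 1))
    return total / n_units

# Hypothetical first-unit cost of 100 on an assumed 90 percent curve.
for n in (1, 10, 1000):
    print(n, round(average_cost(n, 100.0, 0.9), 1))
```

For a single part, the average cost is simply the first-unit cost, so there is no production run over which front-loaded design effort can be amortized; this is one way to frame Wadley's question.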

McGrath raised the challenge of certifying parts created using additive manufacturing. He argued that it does not make sense to make, for example, 19 parts for certification when only one part is wanted. McGrath asked how Bayesian methods, in which all probability is conditional, inform this conversation about modeling, especially when not all data are taken into account. Kobryn responded that although the cultural barriers are high, they are not insurmountable. Because the weapons system is aging and the budget is limited, engineers are currently more open to a new approach based on Bayesian methods.
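One way Bayesian methods could bear on McGrath's certification question is a beta-binomial model: prior evidence about a part's probability of passing a qualification test is encoded as a Beta distribution, and each pass/fail result updates it in closed form. The sketch below uses only the standard library; the prior parameters, the test counts, and the 0.9 threshold are hypothetical.

```python
import math

def beta_pdf(x: float, a: float, b: float) -> float:
    """Density of a Beta(a, b) distribution at x, for 0 < x < 1."""
    log_norm = math.lgamma(a + b) - math.lgamma(a) - math.lgamma(b)
    return math.exp(log_norm + (a - 1) * math.log(x) + (b - 1) * math.log1p(-x))

def update(prior_a: float, prior_b: float, passes: int, failures: int):
    """Conjugate update: Beta prior + pass/fail test results -> Beta posterior."""
    return prior_a + passes, prior_b + failures

def prob_exceeds(a: float, b: float, threshold: float, steps: int = 20_000) -> float:
    """P(pass probability > threshold) under Beta(a, b), by the midpoint rule."""
    width = (1.0 - threshold) / steps
    return width * sum(beta_pdf(threshold + (i + 0.5) * width, a, b)
                       for i in range(steps))

# Three hypothetical passing tests with no prior knowledge (uniform Beta(1, 1))...
flat = update(1, 1, passes=3, failures=0)
# ...versus a hypothetical informative prior standing in for legacy data.
informed = update(18, 1, passes=3, failures=0)
print(prob_exceeds(*flat, 0.9), prob_exceeds(*informed, 0.9))
```

Under these assumptions the flat prior gives roughly a 34 percent chance that the pass probability exceeds 0.9, while the informative prior gives roughly 89 percent. Prior evidence substitutes for some physical tests, which is the appeal of the approach, and it is also why the choice of prior has to be defensible.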

Cornford commented that when distributions have tails that overlap somewhat, the margin can be shrunk so that each tail stays within the boundary. He presented this example to show efforts to improve decision making and asked whether Kobryn’s work considers the same ideas. Kobryn affirmed that they are starting this process and said she would like to see quantified margins and uncertainties in all analyses to improve decision making.
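Cornford's point about overlapping tails is often framed as stress-strength interference: when demand (load) and capability (strength) are each uncertain, the overlap of their distributions sets the probability of failure, and the margin M between their means can be judged against the combined uncertainty U, as in the M/U ratio used in quantified margins and uncertainties (QMU). A minimal sketch, assuming independent normal distributions and defining U as one standard deviation of the difference (QMU practice varies in how U is defined); all numbers are hypothetical.

```python
import math

def normal_cdf(z: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

def margin_and_uncertainty(mu_strength, sd_strength, mu_load, sd_load):
    """Stress-strength interference for independent normal strength and load.

    Returns the margin M, the combined uncertainty U (taken here as the standard
    deviation of strength - load), the ratio M/U, and P(strength < load)."""
    margin = mu_strength - mu_load
    uncertainty = math.hypot(sd_strength, sd_load)
    ratio = margin / uncertainty
    return margin, uncertainty, ratio, normal_cdf(-ratio)

# Hypothetical values in arbitrary stress units.
print(margin_and_uncertainty(500.0, 30.0, 350.0, 40.0))  # M/U = 3
print(margin_and_uncertainty(425.0, 15.0, 350.0, 20.0))  # same M/U, half the margin
```

The second call illustrates the trade: better-characterized distributions (smaller standard deviations) let the margin shrink by half while the ratio, and hence the failure probability, stays the same.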

Martin advocated keeping models as simple as possible but acknowledged that higher-fidelity analyses are often necessary. He explained that the conceptual focus should be on eliminating bad designs rather than choosing the “best” design. The goal, then, is to determine through modeling whether one candidate design carries more uncertainty than another or whether the two are comparable. Simplifying the process in this way reduces both time and cost.
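The idea of eliminating bad designs rather than picking a single best one can be sketched as interval dominance: each candidate gets a low and a high estimate of a higher-is-better metric, a candidate is dropped only when some other candidate's worst case beats its best case, and candidates whose intervals overlap are kept as comparable. The design names and intervals below are hypothetical, and interval dominance is one simple screening rule among several.

```python
from typing import Dict, List, Tuple

Interval = Tuple[float, float]  # (low, high) estimate of a higher-is-better metric

def surviving_designs(designs: Dict[str, Interval]) -> List[str]:
    """Drop any design whose best case is worse than some other design's
    worst case; keep every design whose interval still overlaps the leaders."""
    survivors = []
    for name, (low, high) in designs.items():
        dominated = any(other_low > high
                        for other, (other_low, _) in designs.items()
                        if other != name)
        if not dominated:
            survivors.append(name)
    return survivors

# Hypothetical candidates with uncertain performance estimates.
candidates = {"A": (0.70, 0.90), "B": (0.60, 0.75), "C": (0.40, 0.55)}
print(surviving_designs(candidates))
```

Here C is eliminated because even its best case falls below A's and B's worst cases, while A and B overlap and would both move on to higher-fidelity analysis.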

Kobryn and Martin noted that engineers often have limited training in statistics, and in Bayesian methods in particular, and that this gap in education poses a challenge. McGrath recommended including statistics in the undergraduate engineering curriculum, stressing that this cultural change is necessary if uncertainty analysis is to become standard practice. Martin noted that the same gap affects high-level decision makers who are presented with uncertainty analyses. To address this issue, Penn State has begun to use a two-dimensional color-coded chart to present information, but this approach leaves out much crucial information that is available in a multidimensional tradespace.

Cornford noted that engineers are sometimes right not to trust math models; for example, the Voyager spacecraft outlived its predicted life by 15 years. He asked how this notion should factor into the conversation, particularly with respect to propagating epistemic uncertainty. Martin acknowledged that this is a challenge and that Bayesian methods can help. Kobryn added that this is reliability-based design as opposed to a reliable system. She explained that the uncertainty quantification process is rigorous but is not an exact science; it allows the user to insert a large margin of error at the start and update it as more information becomes available. There are many options, but all depend on the nature of the decision, and allowing for trial and error is crucial. At this point in the discussion, Kern referenced the book To Engineer Is Human,9 which emphasizes that failure is essential to learning. Martin agreed with this sentiment.

Kern then asked whether components, rather than materials, should be qualified, which would be a fundamental change in the way things have been done. Gerhardt said that coupon testing to predict material failure is insufficient because components have uncommon shapes and varied properties. Her solution is to do both: qualify the material and the component. Matthew Begley suggested that the process itself needs to be qualified. He noted that the development time needed to validate processes should be considered, as should the way people think about properties. Nelaturi agreed that the properties of parts should be characterized. If numerical methods are then used to analyze additively manufactured parts, those parts can be validated computationally. Wadley compared a part made by additive manufacturing with one made by traditional machining and asked whether the additively manufactured part would have a shorter life because of existing cracks and their effect on fatigue life. Kobryn said that robust design may help overcome additive manufacturing challenges. A more pressing issue in additive manufacturing is collecting enough data through low-risk applications to understand reliability. She suggested that the best way to approach this problem is to acknowledge ahead of time that the risk is unknown and that anomalies will affect the design.

___________________

9 H. Petroski, 1992, To Engineer Is Human: The Role of Failure in Successful Design, Vintage, New York.

McGrath said that DARPA launched an Open Manufacturing Program with industry to “lower the cost and speed the delivery of high-quality manufactured goods with predictable performance.”10 Much work is being done in this realm, he said, because time must be spent on the design of experiments to capture parameters. Charles Fisher, NSWCCD, mentioned that some companies are working on computational simulations (e.g., 3Dsim and Cube), but it can take time to see their results. Yukish said it can take 2 years to decide whether a particular product is good. Kobryn noted that it is also a matter of the level of risk a person is willing to accept. One has to prove that something works even if it carries a large uncertainty, and remove as much uncertainty as possible elsewhere to accommodate it. The business case for implementing a product rests on its largest source of uncertainty, but that is exactly where one would not want to start in deployment. Kern said that following this logic would have prevented the Lockheed SR-71 Blackbird from being built, but Kobryn reminded him that risk tolerance has changed over time. Now, she said, it is necessary to balance local risk against global risk. Martin highlighted a new DARPA program, Enabling Quantification of Uncertainty in Physical Systems (EQUiPS),11 created by Fariba Fahroo, that studies uncertainty and high-fidelity models. The DARPA team plans to look at stochastic finite elements. Yukish commented that uncertainty does not always show up in models, and Fisher wondered whether Yukish’s experience accounted for human factors. Martin said that even when presented with the right information, people can still make the wrong decisions. De la Garza asked whether anyone had insight into the 1986 Challenger decision-making process. Martin said this was a cultural issue of people being uncomfortable challenging decisions, but Cornford countered that it was also an issue of data growing faster than information. Martin noted that this is the appropriate time to analyze the data to identify the cause of the problem: there may be correlated or direct effects.

___________________

10 Defense Advanced Research Projects Agency, 2015, “Open Manufacturing,” http://www.darpa.mil/program/open-manufacturing, accessed March 18, 2018.

11 For more information about EQUiPS, see http://www.darpa.mil/program/equips, accessed May 18, 2018.

McGrath then returned to the earlier discussion of the human factor in decision making. He asked how the human factor affects engineering and how to avoid unintended consequences when humans are involved in risk assessment. Martin noted that people can introduce intentional biases, especially in cost estimation, to make deals that work in their favor. De Kleer suggested jokingly that the decision makers should be modeled. Martin then introduced game theory as a way to counter human biases. Yukish said there are inefficiencies in the design process because some project developers hold back margins. Cornford said that contractors who bid low then overcharge for changes, but if the changes are not requested, they ultimately go bankrupt.

Kotanchek noted that, to combat this problem, data exist to identify the individuals who present accurate estimates, and their bids should be given preference. The credibility issue erodes the ability to bring the best programs forward and increases the risk of losing relationships with good suppliers, Kotanchek explained. Martin agreed that, essentially, people who do honest work are penalized. A participant described this as a leadership issue, but Kobryn said that it is also a policy and expertise problem: sometimes the proposal structure limits the type of information that can be shared and evaluated during the bidding process. Kotanchek responded that leadership and policy should go hand in hand and that this community must start challenging these behaviors if change is to occur. Martin noted, though, that the industry base is already small and lacks competitors, so programs have a responsibility to keep suppliers afloat. Cornford described this problem as a cultural issue, citing an article from the Harvard Business Review12 that said no one changes because programs have always overrun by 15-20 percent. Kobryn reminded the audience not to confuse the DoD acquisition process with the consumer product process and highlighted the need to better understand risk.

McGrath mentioned an internal Naval Air Systems Command analysis revealing that its acquisition programs were typically 50 percent over budget and 50 percent over schedule. The analysis also showed that programs that made incremental improvements were in better shape. He asked whether modeling could be used to remind program managers that incremental improvement is easier to achieve. Martin highlighted the success of the automakers: new models have more problems than long-standing models with incremental updates. Kobryn introduced a new focus for the Air Force: prototyping. Systems need to be built and operated in order to better understand the developmental stage and associated risks. Goerger said that the Air Force’s most successful systems came from taking a platform that worked and continually injecting new technologies into it. Goerger pointed out that it is important not to make comparisons to civilian systems in this discussion. It is also important to consider how entities like the Air Force will remain able to compete with their adversaries. Kern noted that the real issue will come when an adversary builds an unmanned aerial vehicle using 3D printing.

___________________

12 A. Gallo, 2015, A refresher on cost of capital, Harvard Business Review, April 30, https://hbr.org/2015/04/a-refresher-on-cost-of-capital.