3

Changing the Design Paradigm

LINKING DESIGN AND MANUFACTURING THROUGH TOPOLOGY OPTIMIZATION

James Guest, Associate Professor,

Department of Civil Engineering, Johns Hopkins University

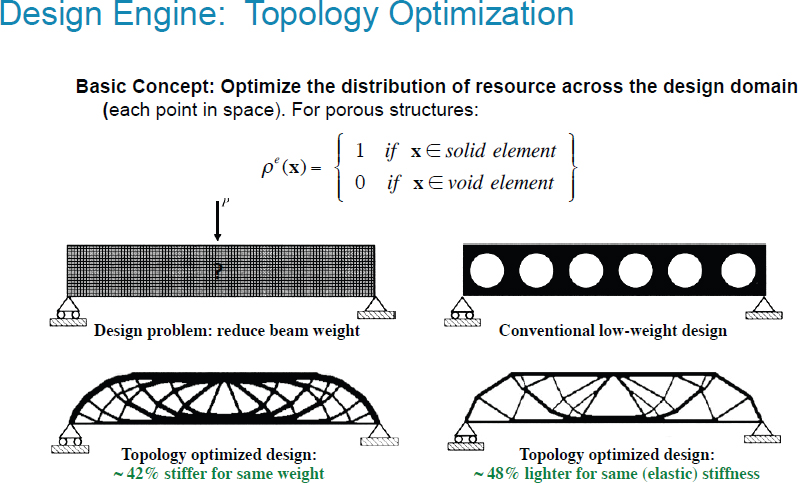

James Guest opened his presentation with an explanation of Johns Hopkins’s approach to design: it is important to rethink both the design of components and systems and the design process itself. Guest’s research group focuses on topology optimization as a design tool that is grounded in mathematical algorithms. Topology optimization was first introduced about 25 years ago; however, it has only recently been used for engineering design applications. He emphasized that physics-based models are part of the optimization formulation and that design decisions are driven through mathematical programs to avoid random searching. Guest next provided an example of topology optimization to maximize stiffness and reduce mass in a beam. (See Figure 3.1.)

Instead of relying on the traditional designs that are used primarily for their ease of manufacture, Guest explained, topology optimization “starts with a blank slate”; although the manufacturing process may become more complex, the resulting structure performs better and uses resources more efficiently. Guest next provided an example of designing a force inverter, utilizing topology optimization to find and manipulate the load path to maximize negative displacement.

Guest next introduced the example of a passive valve in a fluidic diode design, where the objective is to maximize diodicity (the ratio of the pressure drop for flow from left to right to the pressure drop for flow from right to left). Traditionally, a valve with moving parts might be inserted, but such solutions are difficult to miniaturize, and topology optimization offers a better solution, Guest explained. As in the force inverter example, the process begins with a uniform initial guess. The optimized passive valve creates an obstacle that, because it is well shaped, manages the flow effectively, making it significantly easier to flow in one direction than in the other.
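The diodicity objective can be written as a one-line calculation. The sketch below is only an illustration of the ratio as defined in the text; the function name, argument names, and pressure values are hypothetical and do not come from the presentation.

```python
def diodicity(dp_left_to_right: float, dp_right_to_left: float) -> float:
    """Ratio of the two pressure drops, in the order given in the text.

    Both arguments are pressure drops in the same units (hypothetical inputs).
    """
    if dp_left_to_right < 0 or dp_right_to_left <= 0:
        raise ValueError("pressure drops must be positive")
    return dp_left_to_right / dp_right_to_left


# Hypothetical values: the drop is three times larger in one direction.
ratio = diodicity(300.0, 100.0)
```

A ratio of 1 would mean the device resists flow equally in both directions; the optimizer pushes the ratio as far from 1 as the design envelope allows.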

Guest shared his “vision of design” in which there is an envelope for a part, and there are demands and performance needs on that envelope. As the envelope changes, so too does the topology. Guest then presented a refined definition of topology optimization: “a free-form approach to optimizing size, shape, and connectivity of a structure [or system] using mechanics-integrated mathematical programming.” He elaborated that there are two sets of constraints with optimization problems: (1) analysis-based design constraints (i.e., mechanics and performance metrics); and (2) topological-based design constraints (i.e., manufacturing and functional).
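One common way to write such a problem, offered here as a generic sketch rather than the formulation Guest presented, is the maximum-stiffness (minimum-compliance) problem with a limit on material volume. All symbols are assumptions introduced for illustration: element densities $\boldsymbol{\rho}$, stiffness matrix $\mathbf{K}$, load vector $\mathbf{f}$, displacements $\mathbf{u}$, element volumes $v_e$, volume limit $V^{*}$, and a set $\mathcal{M}$ of manufacturable layouts.

```latex
\begin{aligned}
\min_{\boldsymbol{\rho}} \quad & c(\boldsymbol{\rho}) = \mathbf{f}^{\mathsf{T}} \mathbf{u}(\boldsymbol{\rho}) \\
\text{subject to} \quad & \mathbf{K}(\boldsymbol{\rho})\,\mathbf{u} = \mathbf{f}, \\
& \sum_{e} \rho_e v_e \le V^{*}, \\
& 0 \le \rho_e \le 1, \qquad \boldsymbol{\rho} \in \mathcal{M}.
\end{aligned}
```

In this reading, the equilibrium equation and the volume limit play the role of the analysis-based constraints, and membership in $\mathcal{M}$ stands in for the topological (manufacturing and functional) constraints.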

Guest reiterated that topology optimization is based on gradient-based algorithms, not random searches, and it is imperative for the algorithm to consider an engineer’s design environment in order to get meaningful solutions. In fact, Guest explained, rigorous optimization will reveal the following errors in the formulation of the problem or the model itself:

- Manufacturability,

- Sensitivity to uncertainties,

- Reliance on linear mechanics,

- Dependence on single physics, and

- Lack of system-level design.

To combat these problems that could lead to a need for redesign, it is essential to maintain physical meaning and mathematical rigor in the model. Additionally, Guest noted, the parameters must be directly related to manufacturing processes from the start.

Guest then shifted to the issue of manufacturability, which has been the most significant barrier in topology optimization. To overcome such barriers, Guest suggested using projection methods. With this process, design variables are projected onto the physical design space based on the manufacturing process. In other words, a blueprint for the design (and the part) is created and analyzed. Guest returned to his initial beam example to highlight the trade-off between complexity and performance that is considered in topology optimization. For example, basic solutions can lead to reduced performance, but higher performance can lead to increased manufacturing costs. Although current tools allow for optimization within certain manufacturing conditions, they do not currently incorporate manufacturing costs directly.
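As a minimal sketch of what a projection step can look like, the code below maps design variables onto physical densities on a one-dimensional grid using a regularized Heaviside function applied to a distance-weighted average. This is one published style of projection and is assumed here for illustration; the radius `r_min`, the sharpness `beta`, the grid, and the function name are hypothetical, and the summary does not specify which projection Guest's group uses for a given manufacturing process.

```python
import numpy as np


def project_density(design_vars, coords, r_min, beta):
    """Project design variables onto physical densities (1D illustration).

    Each physical density depends on all design variables within r_min,
    so any feature that appears is at least roughly 2 * r_min wide.
    """
    design_vars = np.asarray(design_vars, dtype=float)
    coords = np.asarray(coords, dtype=float)
    # Distance from every physical point (rows) to every design point (columns).
    dist = np.abs(coords[:, None] - coords[None, :])
    # Linear weights that fall to zero at distance r_min.
    weights = np.maximum(0.0, r_min - dist)
    mu = weights @ design_vars / weights.sum(axis=1)
    # Regularized Heaviside: maps mu = 0 to 0 and mu = 1 to 1.
    return 1.0 - np.exp(-beta * mu) + mu * np.exp(-beta)


# Hypothetical example: one active design variable in the middle of 11 points.
coords = np.arange(11.0)
design = np.zeros(11)
design[5] = 1.0
rho = project_density(design, coords, r_min=2.5, beta=50.0)
```

With these assumed values, the single active design variable produces a solid feature about five grid points wide, which is how a projection radius can encode a minimum feature size tied to a manufacturing process.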

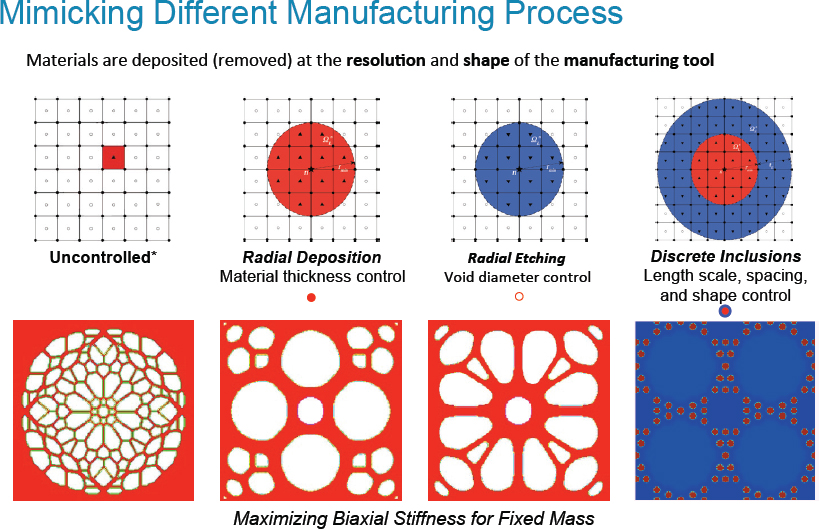

Guest noted that topology optimization offers additive manufacturing an increased level of complexity. Additive manufacturing allows for multiple material solutions to be created based on topology optimization, though it remains important to consider design and associated manufacturing constraints. For example, with topology optimization, unnecessary parts can be removed, costs can be decreased, and materials used can be reduced if appropriate constraints are integrated into the design algorithm. (See Figure 3.2.)

Guest moved from this discussion at the component level to a discussion of material architecture and topology optimization through the use of existing material chemistries. Currently, designers pass a particular material through the topology optimization engine, with a particular objective in mind, and end up with an optimized material architecture that is dependent on the base material. Eventually, Guest surmised, designers would like to develop algorithms that will actually choose between materials. Guest noted that the issue of cost and performance trade-offs resurfaces in these scenarios with additive manufacturing. However, the improvements offered may outweigh the increased cost.

Guest revealed that, in a recent Defense Advanced Research Projects Agency (DARPA) project, topology optimization was also being used in conjunction with additive manufacturing to develop multiple patterns for three-dimensional (3D) weaving that would result in increased permeability while preserving strength. The designs are developed by integrating and varying the manufacturing constraints during the optimization process. Materials that have been developed through this process have been characterized, which then allows the designers to provide feedback to the algorithm to reduce future uncertainty. Guest explained that topology optimization can also be used for problems with nonlinear properties, though this is more challenging. Guest concluded his presentation by repeating his ultimate visions for topology optimization: the algorithm should be able to create a structure from scratch and the optimizer should factor in cost models. Challenges that remain are in choosing appropriate manufacturing spaces and incorporating varied materials into one design engine. A long-term goal, currently in development, is to incorporate manufacturing flaws directly into the algorithms to increase robustness of designs or insensitivity to flaws. Ultimately, Guest hopes that topology optimization will focus on design through the scales as well.

Discussion

Saigopal Nelaturi asked Guest if topology optimization is scalable; he also asked Guest to elaborate on the topic of 3D topology optimization, as well as how high-performance computing is used in the process. Guest affirmed that most of his laboratory’s material design projects are 3D. He mentioned that finite element analysis governs the cost of this process, which usually scales well. Michael McGrath asked about robustness. He wondered about the level of sensitivity to variation when something is optimized based on a predicted load. Guest responded that load drives engineering design. He noted that it is easier to incorporate multiple deterministic load cases than probabilistic loads, but that this is an area in which they are making progress. Steve Cornford asked if a parameter could be used to estimate manufacturing time. James Guest stated that this is a nonconvergence issue because as the finite element mesh is refined, the solutions become finer and finer. Guest acknowledged that a perimeter constraint was developed about 20 years ago to prevent this. Guest hopes that this idea can be joined to a tool path and to costs in the future.

EXTREME SCALE SIMULATION OF COMPLEX MATERIAL RESPONSE

Raul Radovitzky, Professor, Department of Aeronautics and Astronautics,

Massachusetts Institute of Technology

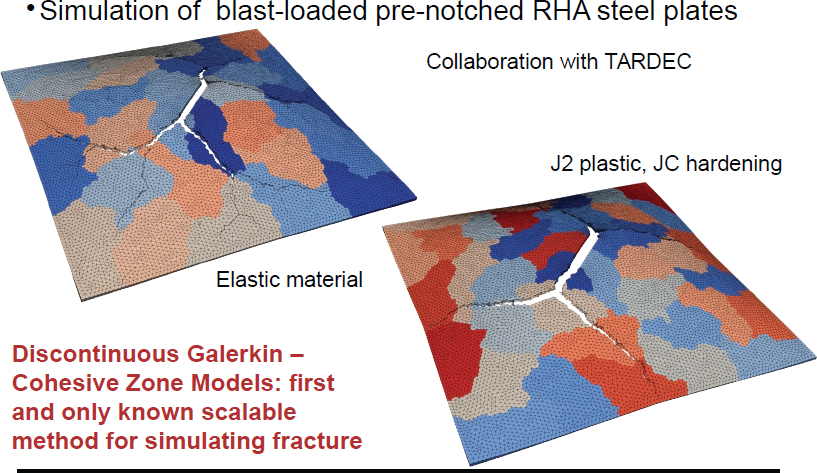

Raul Radovitzky opened his presentation on modeling material failure by showing two images of a crack; he then asked the audience to determine which was a model and which was an experiment. Although this is an incredibly difficult task, he showed that it is possible, in modeling fracture, to do better than legacy codes can.

Radovitzky reminded participants that modeling is not a new concept: the Boeing 777 was the first aircraft designed completely computationally, and it began commercial service in 1995. What is new, he explained, is the ability to model damage. Design is dictated by failure. Because some things are out of our reach (e.g., rock formations thousands of feet beneath the ground), models similar to the hydraulic fracturing simulator allow for exploration at minimal cost. While the study of rock fracture is important, Radovitzky said, what is even more valuable is a study of the surrounding material that remains undamaged, which is a challenge to model.

Currently, the legacy approach is based on continuum damage models, which means that damage is modeled as a process of degradation of material properties, making it difficult to predict things like stress intensity factors, fragmentation, crack extent, and crack propagation. Although fracture can be modeled at a basic level, he noted, the nature of the fracture is difficult to understand. In the damage zone, the mathematical equations change character, for example, from hyperbolic to elliptic. This is a problem because wave speeds then become imaginary and are thus ignored. Other drawbacks of the legacy approach include extreme mesh sensitivity and lack of convergence: it is difficult to get the undamaged zone in front of the crack in the model correct.
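The change of character can be illustrated with the textbook one-dimensional bar, where the wave speed is the square root of the tangent modulus divided by the density. The sketch below is that standard illustration, not Radovitzky's model; the function name and the material values are hypothetical.

```python
import cmath


def wave_speed(tangent_modulus: float, density: float) -> complex:
    """1D bar wave speed c = sqrt(E_t / rho), returned as a complex number."""
    return cmath.sqrt(tangent_modulus / density)


# Hypothetical intact material: positive tangent modulus, real wave speed.
intact = wave_speed(200e9, 8000.0)

# Hypothetical softening (damaged) material: negative tangent modulus,
# so the wave speed is purely imaginary and the equations lose hyperbolicity.
softening = wave_speed(-50e9, 8000.0)
```

Once the tangent modulus turns negative in a damage zone, no real wave speed exists there, which is one way to see why continuum damage models become mesh sensitive.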

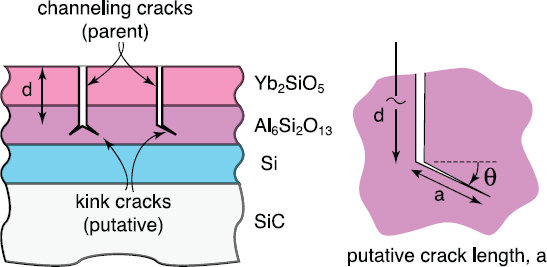

Fracture mechanics is well understood, according to Radovitzky; however, it does not scale well three-dimensionally. The question remains, is it possible to capture 3D conical and radiant cracks computationally using two dimensions? Radovitzky’s team is working on this and the simulations that result are promising. (See Figure 3.3.)

Radovitzky next discussed the issue of validation for models of crack propagation. Validation was attempted with micro X-ray computer tomography, and though the results appeared qualitatively sound, defined metrics were not used, which decreases the validity. The question that arises is whether there are emerging metrics that can be used to define validation. This could increase experimental repeatability. Though Radovitzky’s team has done parametric analysis, for example, there is still work to be done to improve validation methods.

An additional challenge, Radovitzky explained, is the preservation of intact pieces in simulations, since the numerical methods used to shatter things are easier to develop. Simulations can capture radiant cracks, ring cracks, and conical cracks. The simulations, Radovitzky continued, can also reveal the effects on the cracks from the boundaries, if the right conditions are arranged at the start.

The next issue Radovitzky discussed was the trade-off between convergence and scalability when developing models. Radovitzky used the example of a simple plate impact to demonstrate the complexity of this issue: he explained that one would need to figure out where to insert the billion degrees of freedom that are needed in order to obtain converging crack lengths. This is possible with the help of supercomputers. In terms of scalability, Radovitzky noted, the code remains fairly stable up to 20 billion degrees of freedom. However, scalability is in jeopardy when the problem becomes too small.

There are still deficiencies in the crack propagation models, however. Radovitzky highlighted this with a one-dimensional simulation of wave propagation. Because many systems rely on stress wave management, higher order methods are often needed so that modeling is possible. Radovitzky’s team has begun using a higher order for crack propagation.

Most of the digital approaches explored in Radovitzky’s talk happen at the coupon component level; however, he has begun exploring modeling for larger structures. Often, small cracks in one section of a larger structure cause problems throughout the structure. Radovitzky referred to the 1988 accident involving Aloha Airlines Flight 243, a Boeing 737-200. He described the simulation performed by his student, Brandon Talamini. Though this work has not yet been formally validated, the simulation showed that small cracks do have widespread implications and that there is indeed a future for modeling structures that are thought difficult to model.

Radovitzky also emphasized the importance of emerging technologies in complex models for complex systems like human tissue and biomechanics, as well as emerging technologies in producing stable nanocrystalline aluminum alloys that perform similarly to steel. Radovitzky also discussed his and colleague Chris Schuh’s work with shape memory alloys, creating materials like ductile ceramics that have a world record in energy dissipation.

In summary, Radovitzky explained that there are still two interconnected challenges in modeling fracture: (1) modeling is a multiscale problem; and (2) failure is three-dimensional. He said that mainstream production codes need to be of a higher order so that they can be adapted to deal with complex phenomena, and the platform needs to be expanded to allow the incorporation of new ideas to model fracture.

Discussion

Haydn Wadley asked Radovitzky to walk the participants through the Boeing 737-200 simulation. Radovitzky noted that the shear effects of the discontinuous Galerkin framework cannot be ignored. This model demonstrated that a shear deformable field needed to be incorporated into the shell framework. The formulation is based on the resulting bending and shear membrane. Failure, then, was initiated by the cabin under pressure, causing the cracks to propagate. A participant noted that there were experiments of a Boeing wing that show similar waves to this simulation. John H. Beatty asked about additional parameters needed to run discontinuous Galerkin models. Radovitzky responded that there are no additional parameters: the only parameters are the constitutive models. He also noted that it is important for the models to focus more on damage and code infrastructure. McGrath then referenced a previous National Materials and Manufacturing Board/Board on Army Science and Technology study of protection materials of which Radovitzky was a part.¹ McGrath asked what parameters are needed for modeling purposes since there are two evolving communities, mechanical and numerical. Radovitzky asserted that these communities are not incompatible and that the intricate details of physics have a place in mathematical modeling. Software is what allows these two communities to integrate.

DESIGN WITH SELF-AWARENESS

Johan de Kleer, Palo Alto Research Center (PARC)

Johan de Kleer opened his presentation by providing an overview of his experiences with qualitative physics, DARPA’s Adaptive Vehicle Make program, and Xerox product manufacturing and design. De Kleer explained his frustration that current design tools cannot keep up with new materials, platforms, and systems, which prevents the realization of possibilities. New ways of thinking about design, including unique representations and algorithms, are needed to move the field forward.

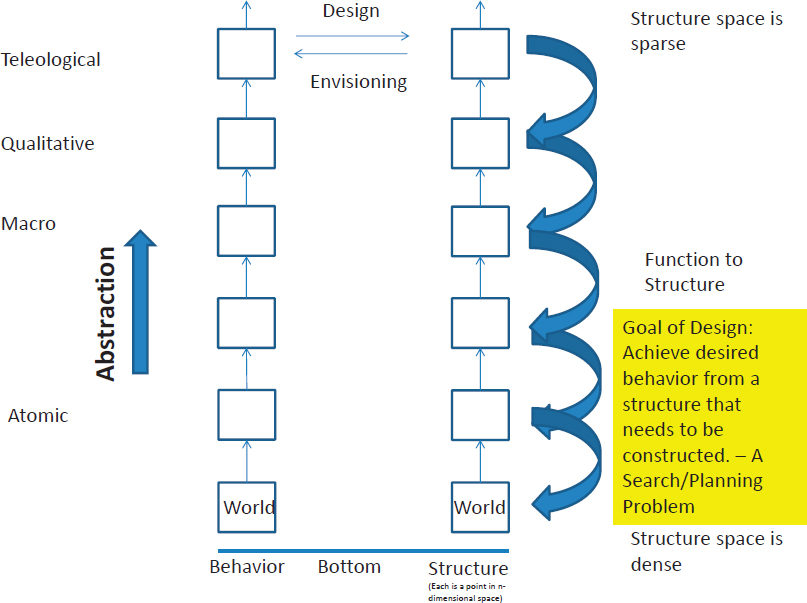

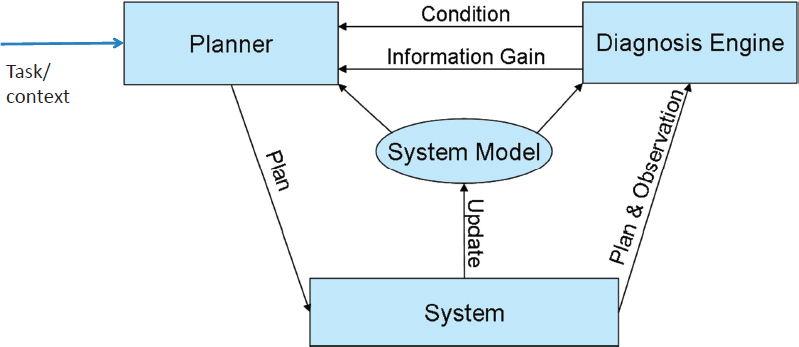

De Kleer defined the goal of design as “achieving a desired behavior from a structure that needs to be constructed.” This, he noted, is an inverse problem. (See Figure 3.4.)

De Kleer noted that designers should be working at a high level of abstraction, which enables them to limit the search space and to identify the best design. Design should also be defined, de Kleer explained, as a search and a planning problem. He noted that in order for this paradigm to work, qualitative and teleological languages that do not yet exist are needed. The mathematics for this paradigm is also still in development, primarily using category theory: this will help ensure that a design crafted at one level will still work at the next level.

___________________

1 National Research Council, 2011, Opportunities in Protection Materials Science and Technology for Future Army Applications, The National Academies Press, Washington, D.C.

De Kleer presented three types of designers: insane, sane, and analogical. The insane designer completely exhausts the search space without receiving any feedback and continues to try various structures until finding one that exhibits the desired behavior. The sane designer instead works backward after a design fails to identify the problem and then decreases the search space. The analogical designer uses concepts from known systems to create new systems. Qualitative physics tries to capture the envisioning and reasoning that engineers do at a high level of abstraction in design, de Kleer explained. Qualitative reasoning, then, is used for system design by taking the derivatives of quantities and defining them against thresholds. In other words, qualitative reasoning allows the designer to think at multiple levels of abstraction and to discover whether or not a function can be realized, to find undesirable interactions, and to focus redesign. Envisionment—simulation—reveals all possible behaviors for the parameters provided.
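The difference between the first two designers can be sketched on a toy problem. In the code below, the component options, the failure rules, and the `evaluate` function are all hypothetical and exist only for illustration: the "insane" designer tests candidates one after another, while the "sane" designer records which choices were to blame for each failure and skips every later candidate that repeats them.

```python
from itertools import product

# Hypothetical design space: one choice per slot.
OPTIONS = {
    "motor": ["small", "large"],
    "gear": ["low", "high"],
    "battery": ["6V", "12V", "24V"],
}


def evaluate(design):
    """Hypothetical test: None if the design works, else the choices to blame."""
    if design["motor"] == "small":
        return {"motor": "small"}
    if design["battery"] == "6V":
        return {"battery": "6V"}
    return None


def all_designs():
    names = list(OPTIONS)
    for values in product(*(OPTIONS[n] for n in names)):
        yield dict(zip(names, values))


def insane_designer():
    """Generate and test with no feedback; count the tests performed."""
    tests = 0
    for design in all_designs():
        tests += 1
        if evaluate(design) is None:
            return design, tests
    return None, tests


def sane_designer():
    """Learn from each failure and prune candidates that repeat it."""
    tests, conflicts = 0, []
    for design in all_designs():
        # Skip any candidate that contains a combination already known to fail.
        if any(c.items() <= design.items() for c in conflicts):
            continue
        tests += 1
        blame = evaluate(design)
        if blame is None:
            return design, tests
        conflicts.append(blame)
    return None, tests
```

On this toy space both designers reach the same working design, but the sane designer needs 3 tests where the insane designer needs 8, because one failure rules out every design that uses the small motor.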

De Kleer provided an example of this at the macro level from his work with Xerox on the one billion dollar iGen3 research and development project. The task at hand was to make the printer’s program work for all possible configurations. The solution was to build a model of the many components using the planning domain definition language. According to de Kleer, this demonstrates that model-based engineering, by designing at a high level of abstraction and envisioning the outcome, can be successful. Such models can then simply be rewritten when new problems arise.

De Kleer continued with a definition of self-awareness: “the capacity for introspection and the ability to recognize oneself as an individual separate from the environment and other individuals.” As it relates to design, de Kleer noted, introspection is simply looking at what you are doing. A self-aware system, then, is able to maintain its own models that can examine and make adjustments to achieve the desired outcomes. Xerox allocated $20 million for work on a new printer that would be both more reliable and more cost effective than earlier printers. The printer they designed had numerous individual modules for various tasks: they created a self-aware machine capable of functioning without all of its components. This allows a customer to configure the machine in a way that works best based on individual needs. For example, if the paper jams or a component fails, the printer will continue to work automatically by rerouting print jobs. It is important to note that the software used was the same, but it was configured in real time. De Kleer shared a visual of this self-aware architecture. (See Figure 3.5.) The planner commands the system and the true model updates itself.
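The rerouting behavior can be sketched as a path search over a model of the machine's modules. The module names, the link table, and the `plan_route` function below are hypothetical; the sketch uses a plain breadth-first search rather than the planner de Kleer described, and it only illustrates how the same software can produce a different plan when the model reports a failed module.

```python
from collections import deque

# Hypothetical module graph: which module can hand a sheet to which.
LINKS = {
    "feeder": ["engine_a", "engine_b"],
    "engine_a": ["finisher"],
    "engine_b": ["finisher"],
    "finisher": [],
}


def plan_route(links, start, goal, failed=frozenset()):
    """Breadth-first search for a module path that avoids failed modules."""
    if start in failed or goal in failed:
        return None
    queue = deque([[start]])
    seen = {start}
    while queue:
        path = queue.popleft()
        if path[-1] == goal:
            return path
        for nxt in links[path[-1]]:
            if nxt not in failed and nxt not in seen:
                seen.add(nxt)
                queue.append(path + [nxt])
    return None


normal = plan_route(LINKS, "feeder", "finisher")
rerouted = plan_route(LINKS, "feeder", "finisher", failed={"engine_a"})
```

With every module healthy the route goes through `engine_a`; once `engine_a` is marked as failed, the same call returns the route through `engine_b`, and it returns `None` only when no working path remains.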

The purpose of the “diagnosis engine” here is to estimate parameters, since the designer has only “partial observability.” Additionally, de Kleer noted, the human being still plays an important role in creating the initial goals for the system, and though the model can update itself, the model cannot change the goals.

De Kleer acknowledged that these new systems generate new design questions: What kinds of design tools are needed? How homogeneous should the system be? What are the different types of modules? If abstraction boundaries are removed, will resilience, adaptability, and robustness increase?

De Kleer also spoke about the vision for 2035. Systems can continuously be transformed by “automating the science of flexible design to enable real-time reconfiguration of form that can accomplish previously unknown functions.” In this type of cognitive-physical system, the planner essentially becomes the designer, which concerns some members of the design community because the systems software can be more complicated than the design software or the systems software may even contain the design software.

As he concluded his presentation, de Kleer noted that black box abstractions can actually get in the way if one wants to look far into the future, and while they can be good for people, they can be bad for computers.

Discussion

Cornford cautioned that a computer may create a nonintuitive solution that may be undesirable, and de Kleer agreed with this statement. McGrath asked how this concept is fundamentally different from the idea of topology optimization (in which the model is given a goal and it provides a solution) that was presented by James Guest. De Kleer highlighted that self-aware systems are going to use formal language. An additional difference de Kleer identified is the emphasis on math in the self-aware systems. James Guest interjected that the “insane designer” in topology optimization is the enumeration: the space for topology optimization cannot possibly be fully explored in its entirety. Overall, Guest compared topology optimization to de Kleer’s vision of the “sane designer” because it involves formal sensitivity analysis for design changes and physics-based language. De Kleer noted that designing languages is a difficult process.

Cornford asked how simulated annealing fits into this discussion. De Kleer said this would fall somewhere between the “sane” and “insane” designer definitions. Making such a distinction between “sane” and “insane” forces people to separate enumeration and smart control and to avoid optimization at the beginning. Beatty asked how to make future aerospace designs adaptable to future needs, noting that modular design allows for adaptability but is expensive and may create loopholes in the system. He also asked how compositionality and modularity in manufacturing can be balanced, and he asked if it is possible to design interfacial design frameworks. De Kleer acknowledged that these are important questions to consider that require more attention. De Kleer also acknowledged the importance of compositionality and noted that PARC is working on this issue. Beatty then asked, if the starter in an engine were changed, for example, would the whole engine need to be recertified? De Kleer noted that replacing a component with different properties still guarantees that the module is going to work, since module descriptions are written in logic, which implies certification is unnecessary.

INTEGRATING DESIGN, DEVELOPMENT, AND MANUFACTURE OF HIGH-TEMPERATURE MATERIAL SYSTEMS

Matthew R. Begley, Professor of Mechanical Engineering and Materials,

University of California, Santa Barbara

Matthew Begley opened his presentation by emphasizing that many of the points made during the previous day’s sessions about systems engineering and system design should also be applied to the materials world. He outlined three key points for his presentation: (1) defining material system design as component design; (2) identifying the value of virtual simulation for developing materials; and (3) embracing 3D printing of composites. Begley noted that building a finite element code may not be the best design strategy; new design tools are needed instead. Additionally, simulations should be done before experiments are done. Begley noted that the application should be used to design the material manufacturing approach.

As an example of material system design, Begley discussed a turbine application. Traditionally, when the operating temperature is increased, efficiency is gained and savings are increased. The design for the operating temperature, Begley asserted, relies on human involvement. While the thermal barrier coatings on superalloys have functioned well, those (and other systems with failure mechanisms) can be improved through other complex material systems such as ceramic matrix composites. Once the failures are better understood, tools can be built to better design materials.

Material system design also allows greater exploration to calculate the likelihood of failure. (See Figure 3.6.)

The code that is created should predict what one would expect and what one observes. Furthermore, Begley explained, the new tools that are built specifically for material systems should allow designers to evaluate the space quickly, preserving the good and eliminating the bad. This would be far more efficient than the current process, which involves materials personnel and mechanics personnel going back and forth with trial and error.

Begley pointed out that his models are linear elastic, unlike the more sophisticated models presented by Raul Radovitzky, which makes this process doable. The only drawback to this new tool is that one can end up with more data than it is possible to analyze without a data-mining specialist.
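A rough sketch of what fast, linear elastic screening of a design space can look like is given below. It is not Begley's tool: it assumes a thin coating on a thick substrate, a thermal mismatch stress of E Δα ΔT / (1 − ν), and a delamination energy release rate of Z σ² h / Ē with an assumed dimensionless factor Z. Every material value, the toughness threshold, and both function names are hypothetical.

```python
import numpy as np


def delamination_energy(thickness, modulus=50e9, poisson=0.2,
                        delta_alpha=3e-6, delta_t=1000.0, z=0.5):
    """Energy release rate (J/m^2) for a stressed coating, linear elastic.

    All defaults are hypothetical values chosen for illustration.
    """
    # Equi-biaxial thermal mismatch stress in the coating (Pa).
    stress = modulus * delta_alpha * delta_t / (1.0 - poisson)
    plane_strain_modulus = modulus / (1.0 - poisson**2)
    h = np.asarray(thickness, dtype=float)
    return z * stress**2 * h / plane_strain_modulus


def screen_coatings(thicknesses, toughness=50.0, **material):
    """Keep designs whose driving force stays below the assumed toughness."""
    return delamination_energy(thicknesses, **material) < toughness


# Hypothetical sweep over coating thickness from 50 to 300 micrometers.
candidates = np.linspace(50e-6, 300e-6, 6)
keep = screen_coatings(candidates)
```

Because each evaluation is a closed-form expression, thousands of candidate designs can be screened at once, which is the kind of quick elimination of poor designs described above; the surviving designs would still need more detailed analysis.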

Begley next introduced the importance of virtual testing in the advancement of material system development. He explained that material scientists are using composite theory to make a synthetic version of nacre, a strong composite material. Others are also investigating ways to develop artificial nacre. These conversations illuminate the different ways that micromechanics can be used for system design.

Begley pointed out that even though it is possible to make such micromechanical models, they do not predict the engineering properties, like fracture toughness, of the microstructures. This is where virtual simulation comes in: they built a code with particular assumptions and did a graphics processing unit parallelization of Monte Carlo minimization (which allows thousands of function evaluations at a time) to predict fracture toughness. Ultimately, Begley and his team realized that in order to make synthetic materials, it is necessary to make two-phase materials.
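The idea of evaluating thousands of trial states at a time can be sketched with a batched random search. The code below is a stand-in, not the team's code: it uses NumPy vectorization on the CPU in place of a graphics processing unit, a simple greedy acceptance rule in place of whatever Monte Carlo scheme was actually used, and a hypothetical quadratic energy in place of a micromechanical model.

```python
import numpy as np


def batched_monte_carlo_minimize(energy, x0, n_steps=200, batch=1000,
                                 step=0.1, seed=0):
    """Greedy batched random search: keep the best of `batch` trials per step.

    `energy` must accept an array of shape (batch, n) and return shape (batch,).
    """
    rng = np.random.default_rng(seed)
    best_x = np.asarray(x0, dtype=float)
    best_e = float(energy(best_x[None, :])[0])
    for _ in range(n_steps):
        # Perturb the current best state in many random directions at once.
        trials = best_x + step * rng.standard_normal((batch, best_x.size))
        e = energy(trials)  # one vectorized call evaluates the whole batch
        i = int(np.argmin(e))
        if e[i] < best_e:
            best_x, best_e = trials[i], float(e[i])
    return best_x, best_e


def quadratic_energy(states):
    """Hypothetical energy with its minimum at (1, 1, 1)."""
    return np.sum((states - 1.0) ** 2, axis=1)


x_min, e_min = batched_monte_carlo_minimize(quadratic_energy, [0.0, 0.0, 0.0])
```

The design point is that the energy function is called once per step on the whole batch, so the cost of a step is dominated by a single parallel evaluation rather than by a thousand sequential ones.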

Begley introduced the concept of 3D printing as his final point. He noted that they have taken epoxy and added silicon carbide in a distributed or localized way. Begley then explained that specialized software is needed to address the specific needs that arise when envisioning these types of complex material systems, which contain gradients of material concentrations. However, successful design for material systems relies on the intersection of design theory, systems engineering, computer science, mechanical engineering, and materials science. Begley reminded participants that although virtual simulations can reduce time in the development cycle, choices must be made carefully and thoughtfully.

Discussion

Valerie Browning posed the following question: Since so much modeling looks at structural properties, how challenging is it to overcome the stovepipe mentality and focus on ultimate system performance between the structural and functional? Begley noted that, just as Guest presented during his talk, the tools to do this do exist, so it is possible; multifunctional exploration simply needs to be done more often. He noted that this is consistent with the intersection of systems engineering and materials science. Wadley asked if, given the recent focus on cellular structures and the ability to track acoustic wave propagation, Abaqus does electromagnetic calculations as a way to measure things like radar and topology. Begley responded that it is difficult to adapt a commercial code; instead, the same result can be reached more efficiently by writing and inserting the needed capability. He also noted that developing smaller codes could be useful to help engineers eliminate poor designs in the future. Rosario Gerhardt added that COMSOL² multiphysics works well for electromagnetic properties, and this could be a way for the two communities (physics and mechanical engineering) to work together, as long as the users of the framework first understand what they are doing in order for it to be useful. Begley noted that one must consider time when contemplating the use of COMSOL multiphysics.

McGrath revisited the workshop focus: 21st century paradigm change. He asked Begley to explain the nature of his paradigm change and to elaborate on exactly what he would need for it to work. Begley noted that while experiments are viewed as being expensive and worthy of dedicated staff support, a cultural shift is needed before simulations are viewed with the same importance and supported equally. Theresa Kotanchek said that in industry, both sides participate and enhance the understanding of what needs more exploration and the trajectory of the research. This model of work is necessary for progress; the alternative, working independently, is both costly and time consuming, Kotanchek explained. Begley agreed with Kotanchek and noted that this has to occur from the start of a project in all arenas, not just in academia.

___________________

2 COMSOL multiphysics is a finite element analysis, solver, and simulation software package for various physics and engineering applications.

EXPEDITIONARY MANUFACTURING: OPPORTUNITIES AND CHALLENGES

Kyu Cho, Research Area Lead of Manufacturing and Sustainment

Science & Technology (or Materials and Manufacturing Science Division),

Weapons and Materials Research Directorate, ARL

Kyu Cho provided a brief overview of the Weapons and Materials Research Directorate at ARL, which focuses on developing material and manufacturing processes for lethality and protection (i.e., armor) needs. Cho noted that the Army’s current systems are quite heavy. Moving forward, Cho explained, there needs to be an adjustment in the methods used to protect soldiers. The soldiers themselves need to be more agile, and the program and the acquisition process would also benefit from increased agility. Cho emphasized that there is little money available to make such changes: they must achieve maximum performance and limited weight at a controllable cost and on a short schedule in order to improve protection for soldiers. The life cycle of the Army’s major weapon systems, Cho pointed out, is typically 75 years or longer.

Cho asserted that the United States is investing in manufacturing because it recognizes its importance. The acquisition system dictates the relevant environment, and so the technology must also be relevant. Cho noted that the Army is moving in the right direction with its platform by developing relevant toolsets and methodology. The Army’s largest investments have been in manufacturing science and ManTech³ in order to prepare for the 2040 operational vision: by this time, Cho noted, the acquisition time frame should be reduced.

Cho suggested that high-fidelity integrated process design and process control tools are needed at all length scales, likely using a multiscale, atom-to-system-level parallel computing concept. If such tools are available, then more research can be done in less time, and the right materials and manufacturing investments can be made in the most useful and affordable protection for soldiers. Cho emphasized the need to integrate and assemble various materials and manufacturing technologies in order to improve soldier protection, for example. Cho’s current research focuses on protecting both mounted and dismounted soldiers with lighter armor systems that can survive in many cycles. However, because the Army represents a relatively small market, complex multimaterial manufacturing processes can introduce challenges associated with using expensive high technology in producing low-demand products. His Materials and Manufacturing Science Division executes integrated research in the following areas: emerging materials, soldier materials, vehicle materials, lethality materials, and manufacturing and sustainment of science and technology.

___________________

3 For more information about ManTech, see https://www.dodmantech.com/ManTechPrograms/Army, accessed May 18, 2018.

Cho noted that the Army’s vision focuses on current and future readiness through expeditionary4 manufacturing: “research to enable the soldier to outfit expeditionary, lighter, survivable, lethal, adaptive materiel systems anywhere, anytime, on demand, and by design sustaining expeditionary missions for the operational Army of 2040.” In order to provide material “on demand” in the field, manufacturing itself must become highly efficient and mobile, according to Cho. This is not possible without the development of new physics-based crosscutting tools and the integration of precisely controlled thermodynamics, kinetics, solidification, and so on into the mobile manufacturing processes. ARL’s mission for the Army’s science and technology strategy is to “Discover, innovate, and transition.”

Cho next described manufacturability as a multifaceted issue. Process design, control, visualization, reconfigurability, and multifunctionality are key features of the Army’s long-term strategy for enabling limitless manufacturability. Cho noted that there has been a paradigm shift in the Army: it is now developing a research portfolio that addresses near-, mid-, and long-term goals. As part of the Army’s vision, Cho highlighted that additive manufacturing can be one of the manufacturing techniques used in adaptive manufacturing for the Army’s pervasive applications. For example, a technology readiness level (TRL)5 of 9 is almost a fully mature level, and cold spray is one of the Army’s many additive manufacturing processes used at that level to repair and maintain structures.

Another unique additive technique in which the Army is investing is called ultrasonic additive manufacturing, which can soften materials by applying an acoustic field. Varied physics-based modeling techniques that can predict component structures and properties are used to improve performance at the lowest cost and in the shortest manufacturing cycle. Cho shared a secondary example of possible solutions for the vulnerable underbelly of the combat vehicle to show that traditional processes and infrastructure take far too long to get equipment to those who need it. Another important goal, Cho noted, is to enable design to direct manufacturing. In order for this to become a reality, the Army has to push the original equipment manufacturers to innovate. Big manufacturing data analytics also play an important role in advancing the Army’s vision of predicting whether or not the design is manufacturable at an affordable cost. The Army recognizes that it needs help from academia, industry, and defense laboratories, for example, in the form of an “open campus” for collaboration in order to achieve all of these goals.

___________________

4 “Expeditionary” means adaptive manufacturing systems anywhere, anytime, on demand, and by design sustaining expeditionary missions.

5 For a description of the technology readiness levels, see http://www.acq.osd.mil/chieftechnologist/publications/docs/TRA2011.pdf, updated May 13, 2011.

Discussion

Beatty asked if Cho could talk more about process modeling. Cho explained that there are a lot of proprietary designs of secondary processes that may not be freely used because almost all of them are the works of private industry; the infrastructure exists, but it is difficult to utilize. Cho noted that the Army should invest in process modeling. Cornford noted that the Jet Propulsion Laboratory created the TRL system, but it does not sufficiently address the users’ needs. Cho added that research communities are not routinely using the technology readiness levels to advance science. McGrath said that there is still value added; for example, TRL provided a vocabulary for communication among diverse communities.

PANEL DISCUSSION: HIGH LEVERAGE ON COST, SCHEDULE, PERFORMANCE, AND ADAPTABILITY METRICS

Participants:

- Steve McKnight, Vice President for the National Capital Region, Virginia Polytechnic Institute and State University (Virginia Tech)
- Theresa Kotanchek, Chief Executive Officer, Evolved Analytics, LLC
- Ray O. Johnson, Consultant

Moderator: Denise Swink, Independent Consultant

Denise Swink introduced the panelists and presented the objective for the panel discussion: to reinforce the technology leverage aspects previously discussed and to highlight best practices for and potential improvements in workforce, education, and value chain.

Ray Johnson highlighted the important connection among advanced technology manufacturing, materials science, production, and efficiency. He also noted that chemistry now has a place in materials science, which allows design engineers to do, and ultimately to impact, more than they ever have before. Advanced manufacturing that includes rapid prototyping is another revolutionary aspect of the field; Lockheed Martin integrated this technology as a way to adopt a systems engineering approach of balancing size, weight, cost, and power challenges.

Johnson next noted that cost, scheduling, and performance management benefits should be looked at separately from operational performance benefits in the task statement for a project. Lockheed Martin also used the “digital tapestry” that digitized the supply chain. Although Lockheed Martin’s advanced manufacturing teams are coming up with ideas for original equipment manufacturers, they have limited control over how these new capabilities are used. Johnson commented that there is a desire to understand the connection between the large amount of funds allocated for advanced manufacturing and the role of materials science. Johnson emphasized the importance of developing integrated multifunction components; he used integrated electronics as an example. He also suggested that design engineers need to be taught to design without manufacturing constraints, and that the design tools in place have to foster this. Johnson said that we must find new ways to certify and qualify components through public-private partnerships. Regarding workforce development, Johnson noted the positives and negatives of retirement turnover: while tacit knowledge is lost, the incoming generation of engineers can enable new capabilities because they have been educated in new tools and techniques.

Steve McKnight reinforced the theme from the first day of the workshop that people (as users, creators, developers, and translators) play a crucial role in complex systems. There is an opportunity, he explained, to have a new type of workforce that is comfortable with the new tools; however, the traditional pedagogical approaches and curricular standards will need to change first to engage this generation. One approach is to expand extracurricular opportunities within the field, like Virginia Tech does with its annual challenge for students to manufacture unmanned aerial vehicles, based on a particular mission profile, using additive manufacturing for all but the motor and controllers. McKnight described the program as a great success, with over 40 teams competing last year using topology optimization. Because this is extracurricular and cross-disciplinary, students gain cultural awareness in addition to experience working with diverse teams to achieve a goal and discovering new tools. McKnight credits the teams for the variety and quality of their designs, which reinforces the notion that humans are central to the decision-making process.

McKnight asserted that because government and defense agencies are not viewed as “innovative,” they have a difficult time recruiting people from this new generation to their workforce; this is a challenge that must be addressed, he said. He also discussed the current organization of funding agencies as a barrier to progress. Directed programs that emphasize integration and collaboration between communities are essential, especially for an agency like the Department of Defense (DoD), in order to address future needs and overcome traditional stovepipes created by humans that impede scientific discovery. According to McKnight, cultural issues are more pressing than any technological issues right now.

Theresa Kotanchek first provided an overview of Making Value for America, a recently released National Academy of Engineering report.6 The report presents three fundamental issues in the transformation of businesses: globalization, advanced technology digitalization, and reengineering and redesign of business operations. Kotanchek also shared that funding for start-ups has decreased in the United States. This is particularly problematic for materials and manufacturing-based initiatives, as those are traditionally capital-intensive start-ups. These issues influenced the discussions that appeared in the report:

- Education. Because the marketplace is changing, and the skills needed for this marketplace are changing, more than 50 percent of the workforce is at risk. For example, the manufacturing workforce is now designed for those with advanced degrees. University, agency, and industry partnerships can help address these workforce changes.

- Collaboration. Entrepreneurs, investors, academics, industry leaders, and laborers need to work together to establish best practices in the creation of robust business models, for example. College students, additionally, need to be taught these skills prior to entering the workforce. For example, lean manufacturing still has not been implemented effectively in many companies.

- Inclusion and diversity. Some of the best talent emerging from U.S. universities is pursuing work outside the United States because of increased opportunities abroad. Within the science and technology sector, women are particularly underrepresented.

___________________

6 National Academy of Engineering, 2015, Making Value for America: Embracing the Future of Manufacturing, Technology, and Work, The National Academies Press, Washington, D.C.

Kotanchek also highlighted the role of data in this conversation. She explained that organizations might collect and store a large quantity of data, but they do not analyze it in a useful manner. For example, data are managed properly as an asset only 5 percent of the time, according to Kotanchek. DoD especially could benefit from greater attention to data management.

Jesus de la Garza commented on the educational dimensions of the conversation. He compared the inherent interdisciplinarity of life to the need for interdisciplinarity in science and engineering. He said that this can be achieved using a T model7 for student development: students are given a solid basic understanding of the discipline, and then they can feel comfortable branching into other disciplines. This will allow these students flexibility within the workforce to interface with those in other fields. Swink added that there is a lack of widespread knowledge about industry-sponsored consortia that exist at universities (e.g., there are 15 at Virginia Tech). She emphasized that these institutes should be leveraged and involved in the emerging connection between materials and manufacturing. This can be done fairly quickly, according to Swink. McKnight noted that Virginia Tech is large enough to attract and support these many partnerships. He introduced an initiative between Virginia Tech and Gallup to measure students’ well-being post-graduation based on their college experiences. The study revealed that there are certain attributes connected to success: (1) internship; (2) multisemester project; (3) mentorship; and (4) involved faculty. These attributes, all symbolic of T-shaped learning, can also be used to develop relevant and enriching industrial partnerships. Kotanchek voiced her support of undergraduate and graduate project-based learning as an essential component to eventual workforce success. Johnson noted that the national security enterprise is constantly changing, which makes it difficult to predict future challenges. He posed two questions to the panel and the participants: (1) How will we be able to respond to these challenges from a talent perspective? (2) How will we attract recent graduates to positions in government agencies that are eliminating pensions, reducing employee benefits, and requiring U.S. citizenship?

___________________

7 For more information about the T model for student learning, see http://groupcvc.com/service-thinking/t-shaped-professionals/.

McGrath shifted the topic to issues relating to the supply chain by referencing Johnson’s previous point about the digital tapestry. In a best-case scenario, the digital tapestry would extend to the bottom of the chain and provide information about material models. The actual situation, however, includes a proprietary global supply chain. There also exist external constraints (e.g., the desire to purchase American-made products or to use secure components). The customer, then, has the chance to construct a supply chain different from the one discussed. The result is a lack of fit in the supply chain. McGrath posed the following question to the panel: What does the materials community need to be thinking about in the context of supply chains?

Johnson agreed that the digital tapestry is a new idea that is still in the development stage. He noted that the terms and conditions are flowing down, and that it is possible to make demands, but that the supply chain is still fragile. His hope is that the benefits (e.g., cybersecurity) to the suppliers are great enough to encourage their participation. A survey of the Lockheed Martin supply chain revealed that 75 percent did not follow security procedures, so there is evidence that this is a needed change. Though it will take time before this system is fully integrated, Johnson acknowledged, the Office of the Secretary of Defense could now begin standardizing within the ecosystem. Swink noted that it is important to stop perpetuating the idea of a linear supply chain; instead, the term “value network” should be used. This conceptual change would impact the ecosystem by encouraging dialogue and connection among the involved communities. Kotanchek supported this ideological change: value networks are important, especially for the defense system that is predominantly electronic, as a way to ensure that all perspectives are brought to the table and a broad perspective is used to make decisions. Johnson responded to McGrath’s earlier question about the role of materials in the supply chain. He noted that materials development can work with the supply chain, helping people better understand and characterize new materials, which is most useful for the end user. The more descriptive the statistics that go into the process, the better the outcome for design engineers. McGrath wondered if the risk for using a new material is higher for a large supplier or for a small supplier. Johnson responded that the large supplier has a strong incentive to adopt new materials and meet competitive performance needs, so its risk might be lower.

Browning said that the gap between the amount of data produced and the global storage will near 80 percent by 2020, which means that new data centers will likely need to be created. She asked if there is a concern about the cost and security of generating and storing more data. Kotanchek responded that there are, at least, advances in data storage methods. However, these statistics should force people to think carefully about the amount of data truly needed, she said. In response to Browning’s question, Johnson said that he does not think data storage should be a concern. The concern should be, he said, the advances in security, especially in a world of highly connected people. Swink shared an anecdote about General Mills, which captures over 50 million data points per day, a figure that is actually lower than in the past. The focus, then, should be on making better decisions about data collection and developing clearer parameters for data ownership. McGrath interjected that Jim Wetzel, the smart manufacturing leader at General Mills, spoke about the process used to determine when to make a box of Cheerios, for example. Swink explained that this is referred to as the “ecosystem of stuff.” Instead of making single-point solutions, they are trying to get their systems to work together. Such types of decisions can be analogous to those made by DoD.

Cornford noted that the top 10 jobs in the marketplace did not exist 10 years ago. He asked how we ensure that people know what they are getting and what they need. Kotanchek said that all planning at Dow is done with a “just-in-time” approach: They examine the drivers from the consumer marketplace, the best mode of delivery, the benefits, and the risk, for example.

McGrath asked McKnight to share more of Virginia Tech’s experiences with cybersecurity and manufacturing. McKnight revealed that there are a number of vectors and a number of threats. There are currently teams of students working on projects both to create and to solve problems related to threats to the digital system. Open source approaches such as the digital tapestry can create a challenge in terms of threat level and in future use if they are not trusted sources. McKnight noted that if we stop trusting things from the industrial base, this can compromise progress. And, as systems get more and more sophisticated, it will become increasingly difficult to detect changes. This is where the collaborative value network can play an important role in addressing the diversity of cyber challenges in materials and manufacturing, McKnight explained. Swink commented that perimeter security is challenging to maintain and that a system is typically infected for over 260 days before users realize they have been hacked. Though manufacturers are hesitant to use Cloud-based data storage, it can detect attacks sooner and offer immediate protection. Kotanchek said that many companies are reintegrating as they see opportunities for efficiencies in and control of cybersecurity instead of relying on third parties.

To bring the workshop to a close, McGrath asked if there were any further comments that participants wanted to make. Charles Ward noted that the workshop’s presentations were primarily high level and that the conversation frequently returned to traditional ways of describing materials. He asked how the paradigm shift is actually made, how the move toward model material certification happens, and how the model-based definition of materials is actually adopted. McGrath said that this paradigm shift definitely should be considered by this community. De Kleer said that most advances in technology are in materials and that there is a disconnect between materials sciences and the physical design world. Gerhardt added that the reason for this is that there has been too much emphasis on the chemistry and the structure of materials in the research community; the way things are made controls everything. A link needs to be made, she explained, so that materials are looked at in a more complex way. Begley said the lack of communication goes both ways: the materials community often does not appreciate the trade-offs that go into the material selection. McGrath added that the notion of cultural change is still central to this issue. Begley noted that Ward’s ideas about the model for materials do address the cultural problem. Kotanchek added that the reality of delivering multiple functionalities needs to be a part of this discussion. Beatty said that success can be measured when the line between the two communities disappears. Howard Last noted that an actionable item is to better combine simulation modeling with experimentation to move testing forward in a shorter time period. Radovitzky noted that this is being done in small pockets across the country by groups like the ARL; for example, Beatty’s program should be run across the agencies. De Kleer commented that security is limited in many commercial applications. The primary security threat in this instance is people. Because of this, de Kleer wondered if there is a way to model the people themselves (i.e., human behavior) to address this issue. McGrath thanked participants for their contributions and adjourned the workshop.