2

Broadening Access to the Results of Scientific Research

SUMMARY POINTS

- The concept of open science, as it has emerged over the past several decades, is tightly linked with traditional scientific values and norms. At the same time, the digital revolution makes possible a restructuring of research practices and institutions built around the openness of publications, data, code, and other research products.

- Open science is motivated by a number of actual and anticipated benefits. They include the availability of the results of publicly funded research to the public, as well as more reliable and efficient research. Openness also enables researchers to address entirely new questions and work across national and disciplinary boundaries. Open science supports expanded access to the research process itself through citizen science activities.

- Despite the advantages and motivations for open science, significant barriers and limitations remain. These barriers and limitations include aspects of research culture and incentives that work against open science, insufficient infrastructure, resource constraints, disciplinary differences, policy and legal constraints, and lack of awareness.

ORIGINS AND SIGNIFICANCE OF OPEN SCIENCE

The concept of open science, sometimes also referred to as “open scholarship,” is an ambitious goal that aims to ensure the availability and usability of (1) scholarly publications, (2) the data that result from scholarly research, and (3) the methodology, including code or algorithms, that was used to generate those data. The first of these is often known as open access. Since the term open access is sometimes used in other contexts, this report will use the term open publication instead. Ensuring the availability and usability of data resulting from research is known as open data. Ensuring the availability and usability of methods, in the case of computational work, is known as open code, and it is related to the concept of open source software.

Open science typically refers to the entire process of conducting science and harkens back to the original precepts underpinning the conduct and goals of the scientific enterprise (Storer, 1966; Borgman, 2010; Neylon, 2017). Openness has been seen as a “norm” of science: “The substantive findings of science are a product of social collaboration and are assigned to the community…. The institutional conception of science as part of the public domain is linked with the imperative for the communication of findings” (Merton, 1942). In addition, openness facilitates realization of the scientific norm that results are critically examined before they are accepted (Merton, 1942). The digital revolution of the past several decades has vastly increased the possibilities of openness and lowered the costs:

Shifting from ink on paper to digital text suddenly allows us to make perfect copies of our work. Shifting from isolated computers to a globe-spanning network of connected computers suddenly allows us to share perfect copies of our work with a worldwide audience at essentially no cost. About thirty years ago this kind of free global sharing became something new under the sun. Before that, it would have sounded like a quixotic dream (Suber, 2012).

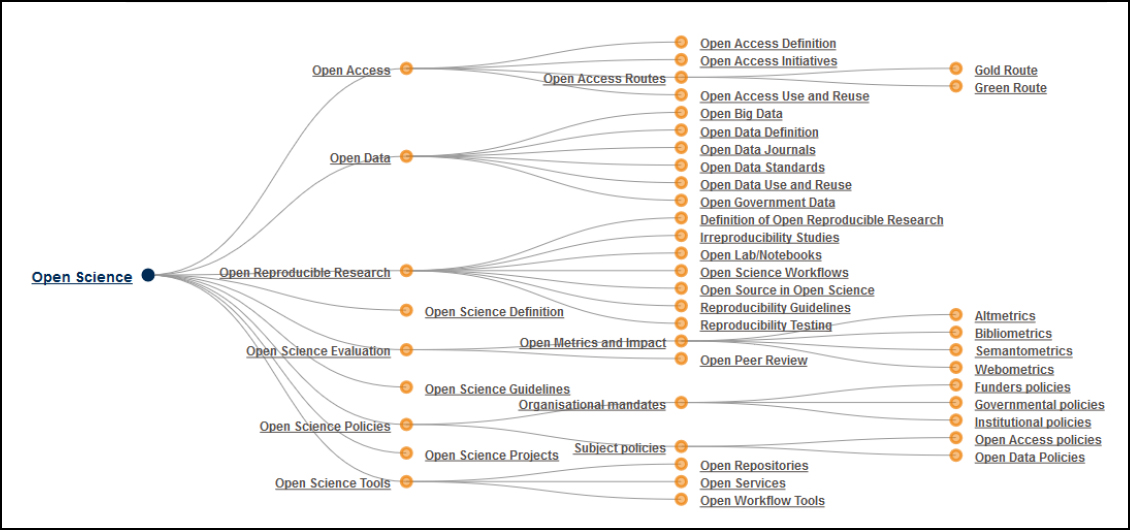

More recently, the InterAcademy Council and the National Academies of Sciences, Engineering, and Medicine have reaffirmed openness as a core value of science (IAC-IAP, 2012; NASEM, 2017b). The European FOSTER (Facilitate Open Science Training for European Research) group has argued that open science is a concept that applies to the “whole research cycle, fostering sharing and collaboration as early as possible thus entailing a systemic change to the way science and research is done” (FOSTER, 2018; Figure 2-1).

The contemporary focus on openness in science has evolved in the context of the public Internet and the communication opportunities it has afforded, as well as the broadening of the scientific enterprise to include many new institutions worldwide. Distinct, but interrelated, motivations also include: the taxpayer’s right to the results of publicly funded research; the ability of any member of society to scrutinize, evaluate, challenge and reproduce scientific claims; and the opportunity for anyone, including private citizens, to build directly on the scientific investigations of others. The motivations, benefits, and challenges of open science will be explored in more detail below. These factors all influence how open science is perceived, defined, implemented, and promoted (Royal Society, 2012; Fecher and Friesike, 2014; Pomerantz and Peek, 2016; Tennant et al., 2016).

Open publication is the most developed aspect of open science and has become more widely implemented over the past decade. Open publication refers to free and unrestricted access to publications with the only restriction on use being that proper attribution and credit needs to be given to the original creator of the work, as originally advocated by the Budapest Open Access Initiative, 2002, see

Box 2-1).1 Further, publications are to be deposited in “an appropriate standard electronic format” in at least one archive maintained by a reputable institution “that seeks to enable open access, unrestricted distribution, interoperability, and long-term archiving” (Open Access Max-Planck-Gesellschaft, 2003).

In the years since the first open access or open publication definition was put forward, open journals have emerged and traditional journals have, in some cases, revised their relevant policies. In an attempt to delineate the variation in interpretation of openness by journal publishers, the Public Library of Science (PLOS), Scholarly Publishing and Academic Resources Coalition (SPARC), and Open Access Scholarly Publishers Association (OASPA) have published the guide HowOpenIsIt? (Table 2-1). The guide assesses the spectrum of policies and approaches from fully open to closed along multiple dimensions. It suggests that fully open publication means that all articles in the journal are freely available to readers immediately upon publication. Immediate availability of articles at no cost to the reader beyond that required to access the Internet is known as gold open access. Other aspects of fully open publication in the realm of articles include generous reuse rights; the author holding copyright with no restrictions; the author being able to post any version to any repository or website with no delay; journals making copies of all articles automatically and immediately available in a trusted repository; and the full text of articles and supporting data being accessible via an application program interface (API) (SPARC et al., 2014). Less open approaches to publication include green open access, in which authors are able to self-archive a version of the article in an open access repository when access to the final published version requires a subscription to the journal.

___________________

1 The Bethesda Statement on Open Access Publishing is a related statement with a focus on the biomedical research community (Bethesda Statement, 2003).

TABLE 2-1 HowOpenIsIt?

| ACCESS | READER RIGHTS | REUSE RIGHTS | COPYRIGHTS | AUTHOR POSTING RIGHTS | AUTOMATIC POSTING | MACHINE READABILITY | ACCESS |

|---|---|---|---|---|---|---|---|

| Free readership rights to all articles immediately upon publication | Generous reuse & remixing rights (e.g., CC BY license) | Author holds copyright with no restrictions | Author may post any version to any repository or website with no delay | Journals make copies of all articles automatically available in trusted third-party repositories (e.g., PubMed Central, Open Aire, institutional immediately upon publication) | Article full text, metadata, supporting data (including format and semantic markup) & citations may be accessed via API, with instructions publicly posted | ||

| Free readership rights to all articles after an embargo of no more than 6 months | Reuse, remixing, & further building upon the work subject to certain restrictions & conditions (e.g., CC BY-NC & CC BY-SA licenses) | Author retains/publisher grants broad rights, including author reuse (e.g., of figures in presentations/teaching, creation of derivatives) and authorization rights (for other to use) | Author may post some version (determined by publisher) to any repository or website with no delay | Journals make copies of all articles automatically available in trusted third-party repositories (e.g., PubMed Central, OpenAire, institutional) witin 6 months | Article full text, metadata, & citations may be accessed via API, with instructions publicly posted | ||

| Free readership rights to all articles after an embargo greater than 6 months | Reuse (no remixing or further building upon the work) subject to certain resturctions and conditions (e.g., CC BY-ND license) | _____________ | Author may post some version (determined by publisher) to any repository or website with some delay (determined by the publisher) | Journals make copies of all articles automatically available in trusted third-party repositories (e.g., PubMed Central, OpenAire, institutional) witin 12 months | Article full text, metadata, & dations may be crawled without special permission or registration, with instructions publicly posted | ||

| Free and immediate readership rights to some, but not all, articles (including "hybrid" models) | Some reuse rights beyond fair use for some, but not all, articles (including "hybrid models") | Author retains/publisher grants limited rights for author reuse (e.g., of figured in presentations/teaching, creation of derivatives) | Author may post some version (determined by publisher) to certain repositories or websites, with or without delays | Journals make copies of some, but not all, articles automatically available in trusted third-party repositories (e.g., PubMed Central, OpenAire, institutional) within 12 months | Article full text, metadata & citations may be crawled with permission, with instructions publicly posted | ||

| Subscription, membership, pay-per-view, or other fees required to read all articles | No reuse rights beyond fair use/dealing or other limitations or exceptions to copyright (All Rights Reserved) | Publisher holds copyrights, with no author reuse beyond fair use | Author may not deposit any versions to any repositories or websites at any time | No automatic posting in third-party repositories | No full text articles available for crawling |

SOURCE: SPARC, PLOS, and OASPA. 2014. Online. Available at https://www.plos.org/files/HowOpenIsIt_English.pdf. Licensed under CC BY.

Note that copyright holder consent is a key requirement for making a publication openly available. Licenses designed to allow authors to retain copyright to their work have been developed by the Creative Commons organization, which allows authors to choose from one of several licenses consistent with copyright law (Carroll, 2011, 2015). The retention of copyright by authors for the purpose of making publications openly available has been one of the most contentious issues surrounding open publication, since it goes against journal publishing practices that require authors to assign the copyright to their work to the journals through copyright transfer agreements as a condition for publication.

Beyond open publication, much recent activity has been dedicated to the concept of open data, such as the availability of the data that support the research results reported in an article. Increasingly, the openness of data is seen as being critical to the progress of science, stimulating innovation, enhancing reproducibility, and enabling new research questions. Combining datasets for new insights and mining data through sophisticated machine learning algorithms are made possible by the open availability of datasets (Hrynaszkiewicz and Cockerill, 2012; Tennant, 2016). The Open Data Handbook (2018) offers this definition for open data: “Open data is data that can be freely used, reused and redistributed by anyone – subject only, at most, to the requirement to attribute and share alike.” (Open Data Handbook, 2018). This implies that the data are available “in a convenient and modifiable form” such that there are no unnecessary technological obstacles to exercising licensed rights (Open Data Handbook, 2018).

The Panton Principles for Open Data in Science, among other points, emphasize that when publishing data, authors need to “make an explicit and robust statement” about their wishes regarding how their data can be used (Murray-Rust et al., 2010; Molloy, 2011). With a focus on data accessibility, stewardship, and reuse by humans as well as machines, the FAIR Guiding Principles were developed by an international group including individuals representing academia, industry, funding agencies, and publishers (Wilkinson et al., 2016; see Box 2-2).

It is important to note that FAIR data and open data are distinct but complementary concepts. FAIR data are not necessarily open, and open data are not necessarily FAIR. Data that are open and FAIR will maximize the impact of open science.

Finally, the concept of open code is fundamentally linked to open source software and the Open Source Initiative that was founded in 1998 (Open Source Initiative, 2018). Open source licenses allow users the right to modify software code and freely redistribute it. The licenses are motivated by a desire to share and improve code by participating in an engaged community of users and software developers. The recent focus on open code differs in that it has not been concerned solely with the collaborative nature of software development, but ties in with the broader goals of open science. With computation becoming an increasingly integral part of scientific research in many domains, the availability of data and computational methods for many research studies is critical to the evaluation, reproducibility, and extension of those studies. A workshop held at the American

Association for the Advancement of Science in early 2016 led to a set of recommendations to address this problem (Stodden et al., 2016). In order to allow for reproducibility, the group recommended that “data, code, and workflows should be available and cited” (Stodden et al., 2016).

The Transparency and Openness Promotion (TOP) Guidelines promulgated in 2015 are a set of recommended standards for adoption by journals to promote open practices, which encompass open data, research materials, and code (Nosek et al., 2015). The Guidelines are further described in Chapter 4.

MOTIVATIONS FOR OPEN SCIENCE

A vision of open science is unfolding in research communities across a wide range of scientific domains, driven by the expanding use of digital, easily shareable products of scientific research. These products range from publications to software used to produce results; from raw and/or processed data associated with research to digitized representations of physical artifacts. The rationale for opening the methods and outcomes of research is strong, multifold, and increasingly accepted by scientific, engineering, and biomedical investigators.

Published science has traditionally operated as a form of open or partially open commons or common-pool resource, subject to legal frameworks such as intellectual property rights and with a few exceptions such as those for proprietary research and research related to national security (Hess and Ostrom, 2003). Intellectual property issues are covered in Chapter 5. Researchers publish their work if they want to get credit and recognition, which sustains and advances their careers. Advances in information technology are greatly expanding the possibilities for using this resource. To the extent that science becomes more open and accessible, there should be more rapid and efficient progress in generating reliable knowledge. The more science is used, the more valuable it is. Individual researchers benefit as their own contributions become more widely known and recognized.

At the same time, there is a need to develop rules and norms to manage and cooperate in the use of this shared resource. What rules are needed to align the self-interests of the variety of stakeholders so that they contribute to the larger vision and realize the advantages of open science? Are specific efforts needed to ensure that the open science enterprise remains sustainable—that efforts to feed and replenish the commons run ahead of efforts to exploit it? What does sustainability mean in different national and disciplinary contexts? The economic analysis of open source software provides some insight on how communities can come together to create and sustain shared resources (Lerner and Triole, 2000).

This section describes the motivations for open science as well as the benefits: both those that are being realized today and those that can currently be anticipated. Chapter 3 includes more detailed descriptions of approaches to open science that are being taken in several different disciplines and their benefits. These benefits include enhancing the ability of the general public to access knowledge generated through publicly supported research, strengthening the reliability and efficiency of research, enabling researchers to address new questions, including those that cross disciplinary boundaries, and allowing a broader group of scientists to participate in the research enterprise on a global basis. The following section describes various barriers and limitations to wide implementation of open science.

Certainly, given the fact that the research enterprise as a whole is some distance from fully realizing open science, and since many of the benefits have yet to be realized, they are difficult to quantify. To that extent, this discussion is forward-looking. Many important transformations and innovations in the history of

science, and in history more broadly, have been opposed at first because of difficulty in quantifying or even imagining the benefits. For example, much of the biomedical research community was strongly opposed to the Human Genome Project when it was first proposed, believing that it diverted resources from more valuable investigator-driven work (Palca, 1992). The project and its impact look much different in hindsight. Today’s advances in biomedical research, and many other fields such as archaeology, would not be imaginable without genomic mapping and analysis.

While there are undeniably significant costs associated with implementing policies and practices that support open science, realizing the benefits discussed in this section translates into a higher return on the investment of financial and human resources in research activity. Likewise, downstream societal benefits of research such as improved medical treatments and economically valuable technological advances can be realized more quickly and efficiently.

Ensuring the Reliability of Knowledge and Facilitating the Reproducibility of Results

Ensuring the reliability of knowledge and reported results constitutes the heart of science and the scientific method. Experimental research progresses by testing and refining hypotheses and building understanding based on the accumulated evidence. Throughout the history of science, there are examples of widely-accepted hypotheses being superseded or overturned due to failures to reproduce or replicate findings. Recent concerns about reproducibility and replicability in science emerged first in fields such as biomedical research and social psychology, but have become a broader issue in science (Economist, 2013).

In recent years, a number of efforts to reproduce or replicate published results have been undertaken. Several efforts in biomedical research found rates of reproducibility of fifty percent or lower (Begley and Ellis, 2012; Prinz et al., 2012). In 2015, the Open Science Collaborative attempted to replicate100 psychological studies published in leading journals (Nosek, 2015). Although 97 percent of the original studies had statistically significant results, OSC researchers were only able to replicate 39 percent of the findings. Camerer et al. (2016) replicated 18 laboratory experiments in economics and confirmed over 60 percent of the published findings. However, Chang and Li (2015) could only replicate half of the results in published economics journals using author-provided code and data because many journal data archives did not have the code and data.

John Ioannidis has highlighted issues such as underpowered studies, flexibility in study design and analysis, and publishing bias that favors articles reporting positive results as causes of irreproducibility (Ioannidis, 2005). Other causes include the use of underperforming computational tools in data analysis and cross contamination or misidentification of cell lines in biological research (Offord, 2018; Huang et al., 2017). Outright fabrication or falsification of data is also a cause of lack of reproducibility. Although there is not enough information available to estimate the percentage of published work that is fabricated or falsified,

there has been a steady stream of high-profile cases from countries around the world, and several examples of researchers in fields such as anesthesiology who have built entire careers on fraudulent work spanning 100 or more articles (NASEM, 2017b). While some level of irreproducibility is normal in research, the inability to replicate a very high percentage of scientific findings undermines the credibility of science (Wykstra, 2017).

How does open science relate to concerns about reproducibility? Certainly, open science in the form of open publication, open data, and open code supports the ability of researchers to confirm and reproduce findings. Ensuring openness and access facilitates better quality research through prevention of mistakes and more rapid and efficient discovery and correction of mistakes that do occur. Once it becomes common practice for significant and relevant portions of digital representations of scientific results to be open and shared, one can anticipate more care and attention will be paid to the process of preparing and producing the results—including their documentation—so that others can follow the process in more depth than was possible previously. Expectations and requirements for openness also allow for a more rapid discovery of fabrication and falsification of data, serving as deterrents to misconduct (NASEM, 2017b). In short, open science strengthens the self-correcting mechanisms inherent in the research enterprise.

Greater transparency is a major focus of those working to increase reproducibility and replicability in science (e.g., Munafò et al., 2017). The Reproducibility Initiative, launched in 2012 by Science Exchange, PLOS, Figshare and Mendeley, identifies and rewards high-quality reproducible research through validation of critical research findings (Science Exchange, 2018). Recent concerns over reproducibility have served to reinforce and catalyze progress toward open science in the form of new policies and practices adopted by research funders, research institutions, and publishers, as will be explored in more detail below.

Yet open science is not the only factor or solution to addressing the reproducibility issue, and open science will not automatically solve whatever problems there are. It should also be noted that some have questioned whether reproducibility is a significant issue for science (Fanelli, 2018). As this report was being completed in 2018, the National Academies of Sciences, Engineering, and Medicine was undertaking a study on reproducibility and replicability of research, that “will draw conclusions and make recommendations for improving rigor and transparency in scientific and engineering research and will identify and highlight compelling examples of good practices” (NASEM, 2018b).

Faster, More Creative, and More Efficient Knowledge Creation

In addition to improving the reliability and reproducibility of research, open science can aid the advance of knowledge in several other ways. First, open science can accelerate progress by making research more efficient. When scientific results are made openly available in digital form, they enable faster, deeper, and broader dissemination of the results to other researchers. Wider sharing and collaboration allows research communities to quickly access results and underlying

information, which, in turn, stimulates more, and more rapid, scientific discovery. New networking tools hold out the possibility of marshalling large collaborations of researchers who will be able to tackle problems more quickly and effectively than what is feasible today (Nielsen, 2011). When data resulting from clinical research on humans and on animals is reused, it maximizes the value of the contributions made by those research subjects to the advance of knowledge. It is important to note that sharing and reuse of data vary widely between disciplines. As will be explored in more detail in Chapter 3, significant data resources have been created in genomics and astronomy that demonstrate the value and logic of data sharing and reuse. In other domains, particularly those where the culture of sharing and reuse has not taken hold, benefits are not being realized (Wallis et al., 2013).

Second, open science enables researchers to ask and address entirely new sorts of questions. Semantically linked, machine-readable data can be analyzed by computers in order to reveal relationships within and between systems that would be impossible to discover otherwise (Science International, 2015). The potential for data from different disciplines being linked in this way and queried to understand complex phenomena and systems is particularly exciting. Increasingly, addressing complex problems of interest in science and society requires a multitude of methods and scientific results from different communities. This interdisciplinary work will be greatly aided by open, searchable, digital results that are made more available across communities. Without such interdisciplinary exchanges, modern problem-solving is hindered by leaving knowledge to be in effect locked inside a particular community—even when most members of a given scientific society have free access to journals and digital artifacts in a particular field. Furthermore, as search engines are able to go beyond keywords to follow scientific arguments from one paper and even community to another, interdisciplinary science has the potential to be highly accelerated.

While the above discussion implies that many benefits of this sort of work will be reaped in the future, as open science practices become more widespread, some examples can be seen today. What is needed to address complex problems is the ability to find and integrate results not only within communities, but also across communities—without paywalls or subscription barriers. Utilizing advanced machine learning tools in analyzing datasets or literature, for example, will facilitate new insights and discoveries. Further, digital platforms for extending and repurposing scientific results and connecting them across multiple communities, as well as sophisticated search engines that can follow scientific arguments from one result to another, will need to be developed and made available. Making data available under FAIR principles is critical to facilitating this acceleration in knowledge creation. For example, when data, software, algorithms, and other digital artifacts of the scientific process are made available and interoperable, they can more easily be reprocessed, modified, extended, or used for other purposes. For example, fields such as ecology and epidemiology combine disparate data from multiple sources to analyze phenomena such as oil spills and the spread of disease (Pasquetto et al., 2017).

What evidence is there that open science will deliver these benefits? Economists have studied the knowledge production process at a broad level and largely concluded that open science promotes knowledge discovery and better science. For example, Mukherjee and Stern (2009) developed an overlapping generations model that elucidates the tradeoff between secrecy and disclosure. Secrecy yields private returns whereas the private and social returns to disclosure and the benefits of open science depend on the use of scientific discovery by subsequent generations. The model shows that open science is associated with a higher level of social welfare. Another study examined the relationship between the innovative performance of biotechnology firms and their activity in academic publishing, and found that open science strategies had a positive impact on innovation (Jong and Slavova, 2014).

Economists have also studied the returns to open science in the context of publications and patents. Publications promote open science whereas intellectual property rights assigned by patents exchange public disclosure of an invention for the right of the inventor to exclusively exploit the invention for a limited time. (Chapter 5 further explores intellectual property issues related to open science.) Researchers have examined whether there is a trade-off between patenting inventions and publishing results, and found that these research activities are complements instead of substitutes (Stephan et al., 2007; Fabrizio and DiMinin, 2008; Azoulay et al., 2009). However, Murray and Stern (2007) and Fehder et al. (2014) identified publication-patent pairs and examined the impact of patenting on subsequent research. Publications appear before the patent is granted, and citations to the publication could potentially change once intellectual property rights were assigned. They found that papers were less likely to be cited after the patent was assigned, suggesting that patenting may close off inquiry and reduce knowledge creation in areas related to the patented invention. Aghion et al. (2010, 2016) studied the impact of NIH agreements that increased academics’ access to patented, genetically engineered mice. They found that increased openness, measured by access to mice, prompted entry by new researchers and increased the diversity of research topics. They concluded that intellectual property rights decrease research interest and diversity. Williams (2013) examined the effect of Celera’s patents on human genes on subsequent research and innovation. She found that patenting reduced research and innovation related to the patented genes by between 20 to 30 percent. The topic of how proprietary concerns may act as a barrier to openness is discussed below.

Researchers have also examined the impact of online access and open publication of scholarship on the number of citations. Online access to articles via subscription reduces search costs and likely increases citations, but the citation impact may be conflated with the quality of the journal. Evans and Reimer (2009) found that open publication increased citations to multidisciplinary journals by 20 percent. However, McCabe and Snyder (2015) showed that this estimated increase resulted from a specification error and disappeared when time effects were included in the model. They concluded that the citation benefit of open publication

in the previous literature was attributable to omitted variable bias from not controlling for journal quality. McCabe and Snyder (2015) found that JSTOR (an article repository) increased citations to economics and business journals by about 10 percent, but Elsevier’s Science Direct appeared to provide no citation boost. Both JSTOR and Science Direct provide online access but are subscription-based, not open. McCabe and Snyder (2014) found that open publication increased citations to science journals by about 8 percent.

Eysenbach (2006) demonstrates that open articles have higher citations in PNAS than subscription access articles. Gaule and Maystre (2011) revisited this question and found no significant citation effect. Davis et al. (2008) and Davis (2010, 2011) conducted an experiment where submissions to 11 American Physiological Society journals were randomly assigned to open publication or subscription access. They found that open articles were more likely to be downloaded but received the same number of citations as subscription access articles one and three years after publication. McCabe (2013) concluded that the citation impact of open publication may have been overestimated by open access supporters. On the other hand, Wagner (2014) summarized a large, annotated bibliography on the topic with the conclusion that open access articles have a persistent citation advantage that varies by discipline.

How can we reconcile the findings of Aghion et al. (2010) and Williams (2013) which show that intellectual property rights were associated with less diversity in science, with the conclusions of Davis et al. (2008) and McCabe and Snyder (2015), which found limited impact of online and open publication on citations? First, genetically engineered mice and genetic tests patented by Celera are high-impact scientific discoveries. Limiting access to these discoveries closed down some productive avenues of inquiry. However, not all published articles are of the same quality. McCabe and Snyder (2013, 2014) found that open publication increased citations to the highest quality articles and decreased citations to the least-cited articles.

Expanding Access to Knowledge and to the Research Enterprise

Open science also expands access to knowledge and to the research process itself. One important justification for expanded access is the public support for a large portion of the research activity that leads to reported results. The federal government invested $121 billion in research and development (R&D) spending in fiscal year 2015. About $34 billion of the total is allocated to university R&D, resulting in datasets, publications, and other outputs (Rosenbloom et al., 2015; Edwards, 2017; NSB, 2018). Federal spending on intramural research totaled about $36 billion in 2015 (NSB, 2018). Over the past several decades, the belief that knowledge whose creation has been supported by the public should be accessible to the public has gained considerable ground. For example, disease advocacy organizations and consumer groups played an important role in support of NIH’s policy of requiring that publications based on NIH-funded work be made available to the public following an embargo period (Albert, 2006). As will be explored

in more detail below, support for open science is growing among researchers, although attitudes are ambiguous (Odell et al., 2017). In 1997, the National Research Council recommended that:

Full and open access to scientific data should be adopted as the international norm for the exchange of scientific data derived from publicly funded research. The public-good interests in the full and open access to and use of scientific data need to be balanced against legitimate concerns for the protection of national security, individual privacy, and intellectual property (NRC, 1997).

The proposition that research data created through public funding should be publicly accessible as a default position has been advocated as an international standard. According to Science International, “if this social revolution in science is to be achieved, it is not only a matter of making data that underpin a scientific claim intelligently open, but also of having a default position of openness for publicly funded data in general” (Science International, 2015).

The strongest early practical rationale for this position came from biomedical research; the idea was that the public should be able to see and utilize the latest research relevant to promoting health and curing disease. This rationale spurred policy makers to support the development of the National Library of Medicine’s PubMed interface to MEDLINE, NLM’s database of citations to the literature, in the 1990s and to PubMed Central, NLM’s full text article repository, in the 2000s (Varmus, 2009). Knowledge of biomedical research has helped communities facing health crises, such as AIDS activists, to better pursue their goals (NASEM, 2016). Health literacy and broader science literacy can help individuals, communities, and entire societies to benefit from research in areas such as popular epidemiology and participatory environmental monitoring (NASEM, 2016).

Open science may also contribute to a democratization of knowledge and a better informed citizenry (Arza and Fressoli, 2017). The proposition that scientific knowledge is a global public good raises an international dimension to this particular benefit of open science (NRC, 1997; Science International, 2015). Expanded international use of publicly-funded research may deliver positive benefits without disadvantaging the researchers who originally performed it or the national government that supported it. Developing country researchers are often enthusiastic users of open science resources (Swan, 2012). An estimated 80 percent of active journals in Latin America are open access (Science International, 2015). There are several open data initiatives in Africa, including the African Open Science Platform, which aims to “promote the development and coordination of data policies, data training and data infrastructure” across the continent (CODATA, 2016).

It may also be the case that the impacts of data-enabled science and technology on individuals and societies are so profound and potentially disruptive that deeper engagement with society is necessary both in solving existing problems

and legitimating emerging technologies (NASEM, 2017a). One-way communication of science to society is not enough. In many domains, science needs actively to engage with other societal actors as knowledge partners in jointly framing questions and jointly seeking solutions. The unprecedented ubiquity and diversity in modes of modern digital communication lend themselves to this task.

An additional reason for supporting broader access to scientific knowledge and the research process is that this access may speed scientific progress. The involvement of the broader public in the research enterprise, which is also called citizen science, has become more prominent in recent years, largely due to the progress of digital technologies and open science practices (Smith et al., 2017). For example, Zooniverse is a citizen science web portal that hosts projects in which volunteers assist professional researchers (zooniverse.org). There are many examples of citizen contributions to research in areas such as data gathering and environmental monitoring (Arza and Fressoli, 2017).

Although the benefits of open science are increasingly being realized and recognized, there are significant barriers to a research enterprise and environment where access to research products is routinely expected. These barriers as well as approaches to overcoming them will be discussed in the next section.

BARRIERS TO OPEN SCIENCE

Some barriers to open access to research products may be addressed through the development of new tools and institutions. While some barriers can only be lowered through thoughtful changes in the policies and practices of research enterprise stakeholders, others are interrelated in complex ways. Some barriers are more relevant to one component of open science than to others (i.e., open publications, open data, or open code). This section will provide an overview of the major barriers, including information on how difficult change is likely to be.

Economic Barriers

Some of the most challenging barriers to open science are the incentives of market participants and the structure of the market for scholarly communication, particularly in the area of open publication. The scientific article, which is peer reviewed and compiled with other articles within a journal, is the traditional approach to disseminating new research. Scientific journals emerged during the 17th century (Fyfe et al., 2015). Traditionally, journals have been distributed to institutions (e.g., university libraries) and individuals via subscription. Since World War II, there has been a global expansion of research activity, leading to rapid growth in the number of articles published.

Publishers perform many important functions as a key component of the research enterprise. These functions include organizing the peer review process, developing and implementing policies in areas such as responsible conduct of research; addressing authorship problems; performing an array of technical tasks such as format migrations; and managing relations with authors, vendors, and the

media (Anderson, 2016). Journal publishers also maintain the information technology infrastructure that supports and controls access to content as well as the development of new infrastructure and platforms. Publishers of scientific journals have included a range of for profit and nonprofit entities, many of the latter being scientific societies. Robert Maxwell’s UK-based Pergamon Press worked to make journal publishing a profitable business starting in the 1950s by launching new journals and recruiting top scientists to edit and contribute to them (Buranyi, 2017). Pergamon and other commercial publishers also took on the task of publishing the journals owned by some scientific societies. Profits increased with the number of journals, as libraries would simply add new journals requested by faculty to their subscription lists. From the 1970s on, scientists began to pay more attention to the prestige and visibility of the journals in which they published. The advent of the journal impact factor, described in more detail below, contributed to this focus on prestige. Publishing in a “high-impact” journal came to be seen as essential to career progress in many fields (Buranyi, 2017). Annual subscription prices rose as well.

The 1990s brought a wave of consolidation among scientific publishers, as Netherlands-based Elsevier acquired Pergamon, leaving it in control of over 1,000 journals (Buranyi, 2017). Further increases in subscription prices and the advent of “big deal” agreements between publishers and libraries followed in the late 1990s. Under these agreements, publishers agree to provide online access to a bundle of their journals, including all back issues, priced at a discount to the sum of the individual journal subscriptions (Bergstrom et al., 2014). Despite paying lower per journal prices, total outlays by libraries increased to the point where this has been called the “serials crisis” (Panitch and Michalak, 2005). In 2015, Larivière et al. found that the five most prolific publishers, including Reed-Elsevier, Taylor & Francis, Wiley-Blackwell, Springer, and Sage, control over one-half of all the scientific journal market, and that the profit margins of these companies have been in the range of 25 to 40 percent in recent years (Larivière et al., 2015). According to one economist who studies the industry, this situation “demonstrates a lack of competitive pressure in this industry, leading to so high profit levels of the leading publishers that they have not yet felt a strong need to change the way they operate” (Björk, 2017a).

Unlike some other intellectual property-based businesses such as recorded music, the incumbent firms in commercial scientific publishing have been able to navigate technological and other changes while maintaining a profitable business model based largely on subscription revenue. In contrast to music or other parts of commercial publishing, where firms pay creators for content, authors of research articles are not paid by the publishers. Research is supported by public and private funders and by the performing institutions.

Nonprofit publishers also occupy an important place in the scholarly communications ecosystem. The most prominent of these are scientific society publishers, although university presses and other nonprofit organizations, such as the Public Library of Science (PLOS, described in more detail in Chapter 3), also participate. Publishing has long been a core activity of many societies. The size

and relative importance of society publishers varies considerably by discipline and according to the specific society in question. For example, the American Chemical Society publishes 50 peer-reviewed journals and is one of the top five publishers of articles in chemistry (ACS, 2018; Larivière et al., 2015). By contrast, in the social and behavioral sciences, society publishers play a smaller role in overall scholarly communication than in disciplines such as physics and chemistry (Larivière et al., 2015).

Society publishers undertake publishing activities as part of their overall mission of providing service to their members and disciplines. They have traditionally used a business model centered on subscription income. For some societies, publishing operations generate a surplus that they use to subsidize other activities, such as education programs or meetings (Collins et al., 2013). Available information indicates that there is a considerable variation among disciplines and individual societies regarding the size of the surplus (if any) generated by publishing and the extent of the society’s dependence on that income. For example, in 2011 subscriptions and manuscript charges accounted for 53 percent of the revenues of the Ecological Society of America and journal publication accounted for 43 percent of expenses, with society revenue and expenses each totaling over $6 million (Collins et al., 2013).

Over the past several decades, as technological change has transformed scientific publishing and for-profit publishers have increased their overall share, society publishers have faced the challenge of investing in digital production and distribution systems and responding to changes in markets and author preferences. For example, in the life sciences, where the number of journals offered by for-profit publishers has increased rapidly, some society journals have faced increased competition for manuscripts. Whereas 20 years ago an author whose manuscript was rejected by, say, Nature might then submit it to a society journal, today the author is more likely to submit to Nature Microbiology or another disciplinary journal offered by a for-profit publisher (Schloss, et al., 2017). Some societies have entered into partnerships with for-profit publishers, in which the company performs most non-editorial functions and includes the society’s journals in its own subscription bundles, paying the society a fee in return. The American Geophysical Union’s partnership with Wiley-Blackwell is a good example (AGU, 2012).

Competition from self-publication and open science have not seriously affected the market share of commercial and nonprofit publishers of high-prestige journals. Exploring the incentives of stakeholders gives some insight into why this may be the case:

- Researchers: Researchers have the incentive to maximize the visibility of each scientific discovery. These incentives are reinforced by the academic promotion and tenure processes at universities and by funders. Promotion and tenure requirements incentivize researchers to maximize the prestige of the journal in which their papers are published. Funders also require proposals to include publications, and journal impact factors are used as

-

proxies for the quality of science (Ginther et al., 2018). Researchers both consume and produce scholarship. Researchers prefer to read and cite high-quality work (McCabe, 2013). Researchers have no market power when it comes to publishing their research, and they prefer to publish work in a widely read journal. Researchers provide free labor to journals in addition to production of research articles in the form of editing and peer review (Bergstrom, 2001). Researchers also do not typically bear the costs of subscribing to journals if they are affiliated with an institution. Finally, researchers may bear the cost of open publication through article processing charges, while publishing an article in a traditional subscription journal is generally without cost to the researcher. Of course, researchers who are working at institutions that cannot afford subscription fees and cannot themselves afford to pay the article processing charges levied by open publication journals do not enjoy legal access to the system. To reduce the knowledge gap across the globe, Research4Life, a public-private partnership of international organizations, universities, and 175 international publishes, provides developing countries with affordable access to research and scholarly information (Research4Life, 2018).

- Universities: Universities seek to maximize the visibility and productivity of their faculty. Because university administrators and tenure review committees may not be subject matter experts, they rely on signals of quality for their research faculty. These include the number of publications, the prestige of the journals where faculty publish, and their success in research funding. All of these outcomes are linked to scholarly publication. Universities also purchase journals for their students and faculty at fees increasing faster than the rate of inflation, especially from commercial publishers (Bergstrom et al., 2014).

- Research funders: Federal research funders are held accountable by Congress. The peer review process is designed to allocate funding to the “best” science. Past accomplishments in terms of the prestige of publishing venues are used to forecast whether the current research proposal is of sufficient quality to be funded. Thus, research funders also use journal publications as proxies for quality (Ginther et al., 2018).

- Scientific societies and other nonprofit publishers: Scientific societies promote the scholarship of their disciplines for their members. They typically publish journals, and journal revenues may in turn support the activities of the association (Willinsky, 2004). Other nonprofit publishers such as university presses also seek to maximize the readership of their journals and cover their costs via subscription fees. Publishers pursuing open access business models are discussed in more detail in Chapter 3.

- Commercial publishers: Typically, publishers bundle journal subscriptions as a way of cross-subsidizing lesser journals by including high profile journals in the bundle.

Given these incentive structures, it becomes easier to understand the market structure of scholarly publication. Economists have studied the scholarly communication market structure in order to understand why for-profit publishers continue to have market-pricing power in the face of competition from self-publication and open access journals. Furthermore, while there are significant “first copy” costs, the marginal cost of providing online access to journal content is essentially zero. This situation persists because many of the incentives of researchers, universities, and funders create a powerful motivation to leave the current system in place: when the contribution of an idea is difficult to measure, institutions use signals of quality (e.g., citations, prestige of the journal) to infer quality (Bergstrom, 2001).

Varian (1994) argued that marginal cost pricing is not profit-maximizing for information goods such as scholarly publications. Thus, publishers have an incentive to engage in first-degree price discrimination, where they sell the same bundle of journals at different prices to different consumers. Bergstrom et al. (2014) examined the prices paid by public university libraries for “big deal” journal bundles from commercial and nonprofit publishers. They found significant price discrimination by commercial publishers by the research-intensiveness of the university, and a lesser amount of price discrimination by nonprofit publishers.

The “big deal” pricing strategies of journal publishers have played a major role in shaping the market for research journals. First, publishers recognized that demand for the journals was inelastic and priced subscriptions to maximize rents. Second, the shift from a physical journal to online access meant that libraries effectively “rented” access to the current journal as well as the older volumes of the journal. “Big deal” bundle pricing may have also made it difficult for new journals to enter the market given that university library budgets were being squeezed (McCabe 2013). McCabe (2013) argued that the cost pressures on libraries associated with “big deal” pricing led to the open access business model. This business model shifts the costs from subscribers (university libraries) onto the researchers. The Public Library of Science (PLOS, the largest and most highly cited open access journal publisher) charges publication fees ranging from $1,595 for PLOS ONE to $3,000 for PLOS Biology (PLOS, 2018). McCabe, Snyder and Fagin (2013) argue that the current pricing structure of open access journals may dissuade publication. The higher publication fees distort the market, leading to fewer submissions and potentially reducing the volume of publications. Further, Poynder (2018) argues that national open access “big deals” of the type that publishers conclude with higher education bodies in some European countries allow publishers to protect their market positions. These agreements combine subscription fees with discounts on the APCs paid to the journals by researchers at institutions covered by the agreement. One important aspect of these and other large subscription agreements is that they generally include non-disclosure agreements, so that purchasing organizations are not able to discern the prices that others are paying.

In response to competition from open access journals, some subscription-based publishers are offering a hybrid open access model, where authors can pay

a publication fee and the article is freely available. Mueller-Langer and Watt (2014) examined the impact of hybrid open access (HOA) pilot agreements between commercial publishers and the University of California system, the Universities of Hong Kong and Goettingen, all universities in the Netherlands, and the Max Planck Institutes. They found that HOA has no significant impact on citations after controlling for institution quality and citations to preprint versions of the article.

Society publishers are also responding to these trends. As discussed above, the size and importance of publishing activities varies by discipline and society. Societies have adopted new policies and expressed varying perspectives on trends in scholarly communication and open publication in particular. Some societies with large publishing operations have adapted their approaches to the movement toward open publication. For example, ACS offers a range of HOA (hybrid open access) options for authors, with the APC to be charged varying according to the license desired, the length of the embargo period to be followed, whether ACS is responsible for depositing the final published article in a designated repository or whether the author is responsible for depositing the accepted manuscript, and so forth (ACS, 2018). ACS has also launched its own open access journal and a preprint service.

Society publishers have expressed a range of perspectives in their public statements and policy positions as well. They are generally supportive of open publication in principle, but are skeptical about the imposition of funder mandates that require gold open access at the time of publication, or green open access with embargo periods of less than one year (Collins et al., 2013). The American Physical Society “supports the principles of Open Access to the maximum extent possible that allows the Society to maintain peer-reviewed high-quality journals, secure archiving, and the Society’s long-term financial stability, to the benefit of the scientific enterprise” (APS, 2009).

It is important to remember that scholarly communications involves real costs, and that the current state of the subscription journals market is the result of choices made by publishers, institutions, researchers, and funders over many years. Some experts argue that moving away from traditional publishers operating on a subscription model would entail forgoing the benefits of significant investments in digital infrastructure that publishers are making, and would constitute a short-sighted “race to the bottom” (Anderson, 2018). As noted above, journal revenues play an important role in supporting the programs and activities of scientific societies that advance individual disciplines and science as a whole. Some pathways to open publication, such as mandates that specify immediate gold open access or eliminate embargo periods for green open access, would be problematic for many societies and their ability to sustain their professional infrastructure.

Yet the issue is complex. Some might question why research library budgets that have been under considerable pressure should be expected to generate surplus funds to support the professional activities of societies. Others are more skeptical about the ultimate value provided by commercial publishers in particular, given

their large profit margins (discussed above), arguing that they benefit from publishing research that is funded by other sources, and that writing, reviewing, and some portion of editing tasks are performed by volunteers (Conley and Wooders, 2009). Publishing journals as a profit-maximizing business is certainly as legitimate as it is for other distributors of digital content based on intellectual property protections. The research enterprise and its stakeholders are responsible for the future of scholarly communication. Chapters 5 and 6 will cover the issues and choices facing the research enterprise in moving forward.

Academic Culture and Misaligned Incentives

One important set of barriers to open science springs from the fact that many of the benefits redound to research communities and the broader research enterprise itself, yet researchers are recognized and rewarded largely based on their individual production and accomplishments. The culture of open science is seen as being about advancing the public interest—when research products are broadly available and discoverable, they benefit more people and drive more innovation than when they are not. Research also has some characteristics of a public good in economic terms, in that use by one individual does not reduce availability to others. However, researchers can be excluded from using publications and other research products.

Getting Scooped

Barriers related to culture and incentives operate at several levels. At one level, researchers might be concerned about being “scooped” by other researchers if data are shared openly and reused by others before the researchers who generated them are able to fully exploit them in multiple publications (EC, 2018b). In some fields and disciplines, particularly those where acquiring data involves considerable effort or expense, such as collecting specimens from remote areas, or undertaking epidemiological studies that require a number of complicated steps, delays in sharing data underlying the first publications may be an accepted practice (Pearce and Smith, 2011). Whether or not the risk of being scooped is overstated, some adjustments in rewards and expectations may be necessary to address this concern in the fields where it exists in order to facilitate more rapid and complete data sharing. For example, institutions and disciplines might work to ensure that the first person to share research outputs receives appropriate credit, and that researchers who generate valuable and widely reused datasets receive proper attribution. Ultimately, the solution to ensuring that data are shared quickly and lessening the perceived need for delays motivated by career interests is ensuring that those who create valuable data are recognized and rewarded, but restructuring reward systems is not straightforward or easy. The rationale that sharing data quickly will deliver public health benefits and perhaps even save lives may not win out over the desire to hold data closely in order to ensure that one’s postdocs and graduate students are able to author publishable work based on this data. Note

also that the same rules should apply to all as efforts are made to appropriately reward data creation and sharing. If some researchers practice open science and others do not, the ones who do not may enjoy competitive advantage. When funders and other stakeholders require openness of publications and data as a consequence of receiving funding, a more level playing field can be created.

Exposure of Errors

Another concern that might make researchers reluctant to share data and methods is that such sharing would expose their errors to the community. New research workflows in which reporting results and sharing research products takes place within a process where community review helps to uncover error will improve the reliability of results, as described above. Preregistration of studies can help to uncover mistakes in analytical approaches before data are collected. Journals such as PeerJ and Open Science, the latter published by the Royal Society, have instituted open peer review, another mechanism aimed at improving the quality of research (McKiernan et al., 2016). It may take time for research communities to transition to open practices that enable wider review and scrutiny of research. Psychology is a current encouraging example. Concerns about reproducibility led many inside and outside the field to critically examine practices and standards, and new open practices such as preregistration and replication studies are being tried and refined (Winerman, 2017).

At the same time, some experts have raised concerns in recent years about the nature of scientific disputes in the context of changing standards related to transparency or reproducibility. The rise of blogs, social media, and venues for post-publication comment and review has greatly expanded opportunities to correct, criticize, raise questions, and make accusations against researchers, often anonymously (NASEM, 2017b). Disciplines where standards and practices are being reexamined, such as psychology, have seen intense disputes over the validity of widely heralded results as well as over the tone and personal nature of the critiques. While some prominent leaders in the discipline have identified the harsh nature of criticism itself as a significant issue, others argue that raising concerns over tone diverts attention and focus away from the substance of critiques (Singal, 2016). It is important for errors or misconduct to be identified and corrected; it is also important that small errors or legitimate differences in analytical choices not be cast as malfeasance. In order to maximize the value of greater openness and transparency, disciplines and the research enterprise itself may need to devote some attention to developing new norms around the pursuit of accuracy and related issues (Gelman, 2018).

Career Considerations

In addition to concerns arising from relatively short-term potential impacts of sharing specific research products, longer-term career considerations may also explain reluctance on the part of some researchers to adopt open practices.

Achieving the vision of open science requires scientists to make results publicly accessible and to engage in sharing data with the community as an expected practice. Researchers are motivated by the possibility of gaining career advancement, support, and recognition for their work in addition to curiosity and the desire to advance their fields (EC, 2017b). Career prospects in science are increasingly challenging especially for early-career researchers because of the scarcity of permanent academic positions and the difficulty of getting funded (Stephan, 2012a). Individual researchers may not perceive that taking the steps necessary to make their own work accessible will be in their best interests. Data sharing requires a focus on data preparation and infrastructure for stewardship, preservation, and broad use. In the absence of clear requirements to do so, scientists who take the time to make sure that software is robust, data are sufficiently described, and data stewardship and preservation meet good practice and community standards may not be rewarded by higher education institutions (e.g., through promotion and tenure or infrastructure support) or recognized within their disciplines. Preparing data and code for deposit involves considerable time costs. Researchers may suffer if they prioritize their open science work that benefits the community at the expense of publishing more journal articles.

Some aspects of current research evaluation practices may contribute to concerns about how openness and open practices affect the career prospects of researchers. The most salient issue is the importance of bibliometric indicators such as the Journal Impact Factor (JIF) in evaluating research and researchers (Declaration of Open Research Assessment, DORA, 2013; Casadevall and Fang, 2015). Developed in the 1960s by the Institute for Scientific Information (and now a product of Clarivate Analytics), JIF measures the yearly average number of citations to recent articles in a particular journal (Cross, 2009). The ability to digitally index articles, which allows JIF and other indicators to be automatically tracked and calculated, has enabled the development and wide use of JIF and other bibliometric indicators.

The use of bibliometric indicators in research evaluation affects researcher rewards and incentives both directly (in hiring or promotion) and indirectly (as a factor in funding or publication decisions). It is widely perceived around the world that the JIF of the journals that researchers have published in plays an outsized role in hiring and promotion decisions in research institutions (Abbott et al., 2010; Casadevall and Fang, 2015). JIF was not developed as a tool for evaluating research or researchers, and there are numerous reasons why using it in this way is inappropriate. These reasons include: (1) citation distributions within journals are highly skewed, meaning that JIF may not accurately track the citation profile of individual articles; (2) there are wide differences between fields in typical citation patterns, so researchers in fields where influential articles may take several years to be heavily cited are disadvantaged; (3) JIF and other indicators can be gamed by journal editors, research institutions, and individual researchers; and (4) JIF is not transparent, as the data and methodologies underlying it are proprietary (DORA, 2013; Wilsdon et al., 2017).

Some experts argue that the misuse of JIF and other bibliometric indicators may even cause broader harm to researchers and to the research enterprise itself. The contention is that apparent imbalances within some parts of the science and engineering workforce and low rates of success in research funding proposals to U.S. federal agencies have helped to create an environment of hypercompetition that discourages risk taking, shortchanges quality control, and dissuades researchers from sharing (Alberts et al., 2014; Fang and Casadevall, 2015; NASEM, 2017b; Stephan, 2012b). Such hypercompetition may directly discourage open practices such as sharing data and other research products if researchers are primarily concerned with maintaining an advantage. Vale and Hyman (2016) argue the heightened competition between scientists in high-profile journals has strained the peer-review system; however, “the need for a system of validation has only become more pronounced as the volume of scientific work has increased” (p. 4). Researchers in a hypercompetitive environment might also prioritize publishing their work in journals with the highest possible JIFs, regardless of whether publication in such journals is consistent with making research products available under open principles. No researcher’s career has been harmed by publishing in high-impact journals.

Countervailing Factors and Efforts to Address Barriers Related to Culture and Incentives

All of the barriers to open science discussed above related to culture and incentives are likely higher and more challenging for early career researchers than they are for their senior colleagues (Eveleth, 2014; The Guardian, 2018). Although some of these barriers may take considerable time and effort to address, there are some encouraging signs of positive change. First, the potential negative effects of open practices on careers, including anxieties about being “scooped,” may be shrinking over time as advantages become more apparent. As discussed above, open publication may confer an advantage in terms of citations (Hitchcock, 2018; Wang et al., 2015). This merits continued study. There is also evidence that media coverage and social media discussion of openly published research is greater than that for traditionally published work (Wang et al., 2015). Further, there are indications that JIFs of indexed open access journals may be increasing compared with those of traditional, subscription journals (McKiernan et al., 2017). Moreover, more subscription journals are allowing authors to deposit preprints or postprints that are openly available (sometimes in response to funder mandates) or offering an open publication option for purchase by the author. The benefits and downsides of these options are discussed in more detail below.

In addition to encouraging progress toward open practices within the context of conventional reward and incentive systems, the participants in the research enterprise can also take steps to change cultures and incentive systems in ways that explicitly encourage and reward open practices. For example, a number of prizes and funding programs launched in recent years have recognized and supported open

science (McKiernan et al., 2017). Funder, institutional, and publisher policies mandating open policies also contribute to changing culture and incentives.

New efforts to publicly track the extent to which researchers follow open practices are also being developed. One well-known example is the initiative led by the Center for Open Science (COS) and several journals to assign badges to accompany published articles where authors have shared data or materials, or preregistered their studies (COS, 2018a). While this initiative has yielded encouraging results, further work is necessary to separate the impact of badges from other editorial changes supportive of open practices introduced at the same time, and to confirm other results of introducing badges (Kidwell et al., 2016; Bastian, 2017). At a broader level, funder and journal openness mandates may generate data that can be utilized by community compilation and reporting efforts aimed at improving transparency. For example, FDAAA Trials Tracker is a website launched in 2018 that gathers information on compliance with U.S. Food and Drug Administration requirements that all clinical trial results be reported and makes the information available in an accessible format (FDAAA Trials Tracker, 2018). Box 2-3 describes additional requirements related to open access to clinical studies.

Another approach is to modify researcher evaluation criteria and tools in order to avoid discouraging open practices or even to explicitly reward them. Preventing the misuse of JIF and other bibliometric indicators in the evaluation of research and researchers is one possible approach. The 2013 San Francisco Declaration on Research Assessment is one prominent effort that has gained many signatories among institutions, funders, and journals (DORA, 2013). The 2015 Leiden Manifesto for Research Metrics is a parallel effort (Hicks et al., 2015). Both of these statements emphasize the importance of expert judgement in the evaluation process.

Efforts are also ongoing to take advantage of the capabilities of information technologies and the explosion of online interactions to develop new measures of research impact that would address some of the negative aspects of the JIF and enable a broader consideration of the value of articles and other research products. Taken together, these new measures have been labeled alternative metrics or altmetrics. For example, efforts are underway to develop substantially new citation-based indicators based on transparent metric calculations that are open to scientifically based oversight (Hutchins et al., 2016). Others are developing metrics that go beyond citation-based indicators, incorporating information on downloads, mentions on social media, and other online reader behavior (NISO, 2014; Howard, 2013). Developing new indicators to evaluate research and researchers and facilitating their use will require a better understanding of technical and institutional prerequisites to their use—such as standards for digital author identifiers—and how these might be put in place. Indeed, the open science movement itself can provide the impetus to the improvement and wide use of high-quality metrics, and these metrics can play an important role in recognizing and rewarding open practices (Wilsdon et al., 2017).

Finally, broader efforts are underway to rethink research evaluation practices and develop new approaches that place less emphasis on JIF and other bibliometric indicators and more emphasis on other contributions of researchers, including adherence to open practices. For example, the Peer Reviewers’ Openness Initiative proposes that peer reviewers commit to withholding comprehensive review of submissions where data or materials are not openly available (Morey et al., 2016). A 2017 European Commission (EC) report describes a new approach to evaluating researchers and their career contributions where open practices are

central (EC, 2017b). Some experts advocate a fundamental rethinking of approaches to peer review characterized by openness, with scholarly communications organized around network or library concepts rather than fixed journal articles (Kriegeskorte et al., 2012; Kennison and Norberg, 2015).

Privacy and Security Concerns

Privacy Concerns

As described above, open science is critical for addressing the reproducibility challenge in scientific research while facilitating future research that validates or builds on previous results. An unintended and potentially harmful consequence of publicly sharing research data, however, is the possible effect on privacy. Researchers have long recognized the privacy implications of publicly sharing research data, especially when such data involve human subjects, such as patients in a clinical trial. The tension between privacy protection and scientific openness is longstanding. For example, many studies in the area of public health pertain to health care records and medical history, which makes it extremely difficult, if not impossible, to maintain patient privacy while openly sharing all the information necessary to reproduce or replicate a published study (O’Neill et al., 2016).

Traditionally, researchers rely on anonymization, or “de-identification,” methods to strike a balance between open data and human subject privacy. The idea is that once all personally identifiable information has been removed from a published dataset, an individual would no longer be associated with any record in the dataset. Participants in research studies expect that the data collected about them will be handled with care and that, unless they have given explicit consent to have their personal information shared, their data will be safeguarded. The federal government has provided specific guidance through its HIPAA legislation, which provides standards for the electronic exchange, privacy, and security of health information.2 The intent of the legislation is to safeguard personally identifiable information, known as PII. HIPAA’s “safe harbor” defines 18 specific attributes (e.g., name, phone number, medical record number) as “protected health information” in need of suppression (CDC, 2003).

In recent years, however, it has become clear that even anonymized data can reveal private information about the human subjects. The key challenge here is that even attributes that are not labeled as personally identifiable may still contain sensitive information that associates an individual, and that by linking those data to other publicly available resources, individuals can be reidentified. (Sweeney, 1997, 2002, 2003, 2009; Malin and Sweeney, 2001). In a case study of a state-released dataset containing 2.8 million hospital records, investigators showed that even after removing from the dataset all information except the pro-

___________________

2 The Health Insurance Portability and Accountability Act of 1996 (HIPAA), Public Law 104-191. See https://www.hhs.gov/hipaa/for-professionals/privacy/laws-regulations.

cedures received by a patient, the percentage of patients with a unique set of procedures is still 42.8 percent; in other words, as the investigators state, “an adversary would have about a 42.8 percent chance of linking the anesthesia record to the hospital database, thereby discovering the patient’s sensitive information.” (O’Neill et al., 2016)

In August 2016, after AOL Research released 20 million search queries issued by its users (with no user identifier or personal information attached), a reporter from The New York Times was still able to locate an individual from the anonymized search records by cross referencing the contents of the queries with phonebook listings (Barbaro and Zeller, 2006). Similarly, researchers were able to re-identify individuals in an anonymized version of Netflix’s movie preference database for a contest that challenged researchers to try to improve its recommendation engine. By comparing rental dates and ratings in the Netflix database with reviews posted on the Internet Movie Database, the researchers were able to discover individuals’ entire rental histories, potentially revealing sensitive information about them (Narayanan and Shmatikov, 2008). As a result of this re-identification, a class-action lawsuit was filed against Netflix, and, as part of the settlement, Netflix cancelled a second planned contest.