Proceedings of a Workshop

IN BRIEF

May 2018

Understanding Pathways to a Paradigm Shift in Toxicity Testing and Decision-Making

Proceedings of a Workshop—in Brief

Advances in new tools and tests of chemical toxicity—from high throughput, cell-based, in vitro studies to tissue chips to environment-wide association studies—have led to a new understanding about the effects of chemical exposures in humans. These new approaches are faster, less expensive, and increasingly more relevant to human exposures than legacy animal toxicity testing approaches. Additionally, the passage of the Frank R. Lautenberg Chemical Safety for the 21st Century Act1 (Lautenberg Act), which amends the Toxic Substances Control Act (TSCA), has encouraged opportunities for industry and government agencies to use data from emerging toxicity testing approaches, particularly in risk assessment and analysis contexts. However, many questions remain about whether and how to make the paradigm shift away from traditional approaches and toward using new data streams as the basis for the wide array of research, policy, and regulatory decisions facing the environmental health field.

On November 20–21, 2017, the National Academies of Sciences, Engineering, and Medicine (the National Academies) Standing Committee on Emerging Science for Environmental Health Decisions held a 2-day workshop to explore key factors that influence how scientists, policy makers, risk assessors, and regulators incorporate new science into their decisions. In the lead-up to the workshop, members of the planning committee considered whether regulatory reform was sufficient to encourage the environmental health community to adopt emerging toxicity testing approaches and what other steps may be necessary to support a paradigm shift away from legacy animal testing toward the use of novel testing approaches. The committee noted that the process for determining when and how to use data from emerging approaches to assess the toxicity of environmental exposures varies within institutions, among institutions, and among countries. This workshop aimed to raise awareness about the questions and trade-offs that need to be addressed in order to facilitate a systematic use of data from new and emerging approaches to toxicity testing to maximize confidence and public health protection. The committee also wanted to learn from the social sciences about decision-making processes and what is required to build confidence during times of change. Other goals envisioned by the planning committee included identifying and inventorying stakeholder concerns regarding the paradigm shift from traditional toxicity methods to the vast array of newly available methods.

The audience of the workshop represented various regulatory agencies, state and federal public health experts, researchers from private industry as well as academic and government laboratories, and members of the public. This workshop was also attended by environmental health decision-makers from other countries, such as Canada and European Union countries. Workshop participants discussed empirical social science evidence on issues such as perceptions on the type and quality of data that are “sufficient” for different types of decisions and the associated trust in decisions influenced by new types of data. Case studies from different decision contexts were used to investigate key considerations and questions about what builds confidence in new scientific approaches among members of the environmental health community. This workshop allowed for the participants to engage in detailed discussions, exchange ideas, and identify the limitations and opportunities in building the confidence needed for a paradigm shift toward the use of new tools and technologies for decision-making and toxicity testing. Participants and presenters explored the use of new toxicity approaches, the level of confidence needed to use new approaches, and issues in achieving acceptance. Topics that were covered included the use of new tools for data poor chemicals currently in use, screening of alternative (less toxic) ingredients, and evaluating new chemicals for toxicity. This Proceedings of a Workshop—in Brief summarizes the discussions that took place at the workshop, with emphasis on the comments from invited speakers.

___________________

1 See https://www.congress.gov/114/plaws/publ182/PLAW-114publ182.pdf.

In his opening remarks, John Bucher from the National Toxicology Program at the National Institute of Environmental Health Sciences (NIEHS) challenged the participants to view the paradigm shift from a holistic perspective in considering what a strategic roadmap to accomplish the paradigm shift would look like. Bucher indicated that this will most likely require decision-makers to move away from their comfort zones and to accept new data streams that are different from currently accepted practices. He asked participants to consider how to convince decision-makers to familiarize themselves with new data types and move toward incorporating them in their decision-making process. He also suggested the need to identify when new data streams are useful for screening and prioritization before proceeding with more extensive toxicity testing. In addition, Bucher raised the importance of remembering the value of “old data” as a way to balance the discussion and ensure a safe transition from old data streams to novel data streams.

SCIENCE AND PRACTICE OF DECISION-MAKING UNDER SHIFTING PARADIGMS

Decision Context

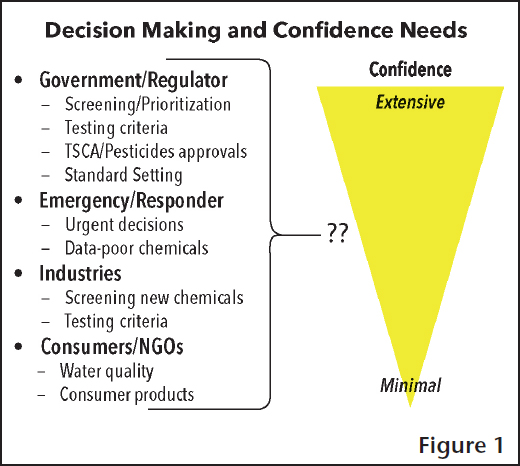

Gary Ginsberg from the Connecticut Department of Public Health started the scientific presentations addressing how new technologies need to be considered in the appropriate decision context and in conjunction with the problem formulation. “Scientists need to be aware of what [the] science needs to do,” he said in introducing the concept of applying new scientific developments to a decision context. Specifically, what level of confidence is needed in the data to engender confidence in the decision-making process that data will inform? Figure 1 presents several decision-making scenarios, raising the question of where on the confidence continuum, from minimal to extensive, it is best to place the associated scenarios. Ginsberg discussed social and psychological factors and how they affect confidence levels in data, as well as the types of decisions data can inform (e.g., qualitative or quantitative). He asked questions such as what are the expectations of decision-makers, what does it take to be convinced that these novel data types can be used in decision-making, and are there case studies of prior success with new data streams?

For example, Ginsberg suggested that during emergency response situations (such as a chemical spill or a natural disaster) many decisions may be better informed by advanced toxicology methods. He also mentioned that there is a current opportunity with the changes to TSCA to lead the way in the utilization of new and emerging toxicity data streams.

Proof, Presumptions, and Defaults

Carl Cranor, University of California, Riverside, used standards of proof, presumptions, and defaults as models for how evidence may be used in decision-making and explored how these models can be used in the environmental health field. The specific standard of evidence needed for decision-making is dependent on the decision context and potential outcome. He stated that legal standards of proof (e.g., beyond a reasonable doubt) generally do not apply to toxicity testing and environmental decision-making. However, standards such as “balance of evidence” and “clear and convincing (or highly probable) evidence” are more typically used in the scientific realm and seem most appropriate for decision-makers to adopt. It is critical when evaluating data from 21st-century science to establish levels for the evidentiary bar that are appropriate to the decision context, or specifically fit for purpose, stated Cranor. Setting the evidence bar too low for new substances entering the market may result in excess risk to human health, just as setting the bar too high for removing substances already in the market may also leave susceptible populations inadequately protected.

Cranor also stressed that the selection and use of defaults in regulatory science are particularly important. Defaults are the values used when the actual value is not available or practical to obtain. He stated that a report from the National Academies previously addressed defaults including recommending that defaults should be consistent with evolving science, have a clear standard “to justify the plausibility of the default for a wide array of circumstances,” have clear scientific standards for identifying when a departure from the default is appropriate, and additionally, how to “determine when evidence supporting an alternative assumption is robust enough” to depart from the default.2

The default of toxicological presumption3 may be used to address the toxicity of chemicals without available toxicity data by using toxicity equivalence factors (TEFs). The best known example is the use of toxicity data from 2,3,7,8-tetrachlorodibenzo-p-dioxin (TCDD) to address the relative toxicity of related chlorinated and brominated dibenzo-p-dioxins, dibenzofurans, and planar biphenyls.4 Cranor indicated that this methodology appeared promising for addressing chemicals with little or no available toxicity data.
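As a rough illustration of how such factors are typically applied, the sketch below computes a TCDD toxic equivalent (TEQ) for a mixture as the sum of each compound's concentration multiplied by its factor. It was not presented at the workshop. The function name, the sample concentrations, and the short factor table are assumptions made for illustration only; the factor values are intended to mirror commonly cited values and should be checked against the EPA guidance cited in footnote 4 before any real use.

```python
# Illustrative toxicity equivalence factors relative to TCDD (assumed values;
# verify against the cited EPA guidance before relying on them).
ILLUSTRATIVE_TEFS = {
    "2,3,7,8-TCDD": 1.0,  # reference compound, factor defined as 1
    "2,3,7,8-TCDF": 0.1,
    "PCB 126": 0.1,
}


def toxic_equivalent(concentrations, tefs):
    """Return the TEQ: the sum of concentration * factor over all compounds.

    Raises KeyError if a compound has no factor, so that data gaps are
    surfaced instead of being silently treated as zero toxicity.
    """
    missing = [name for name in concentrations if name not in tefs]
    if missing:
        raise KeyError(f"No factor available for: {missing}")
    return sum(conc * tefs[name] for name, conc in concentrations.items())


# Hypothetical sample concentrations in pg/g (made-up numbers).
sample = {"2,3,7,8-TCDD": 2.0, "2,3,7,8-TCDF": 10.0, "PCB 126": 5.0}
teq = toxic_equivalent(sample, ILLUSTRATIVE_TEFS)
print(f"TEQ = {teq:.2f} pg TCDD-equivalents/g")
```

Raising an error for a compound without a factor, rather than skipping it, is a deliberate choice in this sketch: it keeps a missing value from being read as evidence of no toxicity.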

In the discussion that ensued, Rick Becker from the American Chemistry Council reminded participants that decision-makers need to take into account exposure as well as toxicity, along with considering endogenous levels (e.g., formaldehyde) when making decisions about safe levels. John Balbus from NIEHS questioned Cranor on what a values-based process versus a science-based process would look like in evaluating the toxicity of chemicals. Cranor indicated that a values-based process may look at protecting children, rather than the general population, when the resultant disease will be evident for their lifetime.

Building and Sustaining Trust

Branden Johnson with Decision Research discussed the importance of building and sustaining trust in all aspects of environmental health decision-making. Johnson outlined communication strategies that build trust and generate solutions that could be better accepted. Among these strategies, he listed understanding the perspectives, concerns, and knowledge level of the interested stakeholders; opening up two-way risk communication; discussing and displaying vulnerabilities; and combining analysis with deliberation. Effective communication includes detailed plans for goals, audiences, messages, and methods.

Additionally, Johnson stated that building trust is not the only way to move toward a paradigm shift. He explained that when there are common goals and a willingness to cooperate, trust can come later in the process. By focusing on the behavior or actions needed to maintain cooperation, positive outcomes are achievable in the absence of trust. He suggested that success may be gained in the absence of trust through building strategic alliances and cooperation with and among the regulated community, regulators, and stakeholders.

IMPACTS OF POLICY CHANGE ON POPULATION HEALTH OUTCOMES

Mark Hayward of The University of Texas at Austin reminded participants to consider the many intended and unintended consequences of policy changes. He presented a review of how public policy and a changing America are shaping health outcomes, focusing on the rapidly changing relationship between education (a key marker of socioeconomic inequality) and adult mortality. Institutional forces, such as technological and public policy changes at federal and state levels, and the emergence of changes in institutional policy, shape trends and inequality in population health. Hayward used three major periods of technological and institutional changes (technological innovation and the rise of the New Deal in the 1930s, Great Society and the culmination of the New Deal in the mid-1960s, and New Federalism and the federal devolution of power back to the states in the 1980s) and the change in adult mortality data during those periods to illustrate this point. In the ensuing discussion, past examples of changes to policy that improved population health and reduced disparities (e.g., development of mammography, antiviral drugs, and targeted cancer therapies) were compared to those that have led to declines in population health and rising disparities (e.g., obesity epidemic and rapid rise in Internet use).

CASE STUDIES ON THE USE OF NEW DATA IN DECISION-MAKING

A number of speakers were asked to prepare presentations that explored the adoption and use of novel testing methods for various applications. These presentations helped to illustrate and provide context for the process, opportunities, and challenges associated with shifting from a traditional testing paradigm toward novel testing methods. The presentations serve as case studies, and each highlights a different set of conditions, challenges, and strategies for the use of novel testing assays.

___________________

2 National Research Council. 2009. Science and Decisions: Advancing Risk Assessment. Washington, DC: The National Academies Press. https://doi.org/10.17226/12209.

3 More commonly referred to as read-across.

4 See https://archive.epa.gov/raf/web/pdf/tefs-for-dioxin-epa-00-r-10-005-final.pdf.

Regulatory Decision-Making—Endocrine Disruptor Screening Program

Lynn Goldman from The George Washington University Milken Institute School of Public Health introduced the Federal Food, Drug, and Cosmetic Act,5 which was amended in 1996 to require the Environmental Protection Agency (EPA) to “develop a screening program, using appropriate validated test systems and other scientifically relevant information, to determine whether certain substances may have an effect in humans that is similar to an effect produced by a naturally occurring estrogen.” This gave EPA the statutory authority to create what is now known as the Endocrine Disruptor Screening Program (EDSP). This program uses a two-tier approach: chemicals are first screened (tier one) for potential interactions with hormonal systems (mainly estrogen, androgen, or thyroid), and those that show an apparent interaction are then further characterized with more testing (tier two). Goldman eloquently summarized the activities of the preceding 21 years as “3 advisory committees, 7 policy statements, tier one and tier two tests, 67 [chemicals] referred for screening, 15 [chemicals] off the market, 18 [chemicals] went into tier two.” She cautioned participants to remember that the apparently limited progress reflects the complexity of the task before EPA and that this is the first screening and testing regime to be put into place.

Stanley Barone, Jr., from EPA’s Office of Chemical Safety and Pollution Prevention (OCSPP) detailed research by EPA’s Office of Research and Development and its National Center for Computational Toxicology (NCCT) that created the potential for high throughput techniques to replace some of the EDSP required assays. The EDSP Tier 1 Screening Battery in 2013 included the in vivo (rat) uterotrophic assay. EPA used an estrogen receptor agonist area under the curve (AUC) model, developed using high throughput screening data, to demonstrate excellent performance against in vivo (uterotrophic) reference chemicals. He explained that in 2015 EPA acknowledged a link between AUC value and bioactivity, showing that high throughput screening data could be used to identify substances with potential estrogen receptor bioactivity.6 Barone suggested that the use of computational tools and models serves to rapidly screen chemicals for endocrine bioactivity, contributes to the weight of evidence screening level determination of a chemical’s potential bioactivity, provides alternative data for specific endpoints in the EDSP Tier 1 battery, and screens thousands of chemicals in a short period of time. The estrogen agonist bioactive model is the most mature of the endocrine pathways of interest to EPA, though work is under way to use a similar approach for the androgen and thyroid pathways. Barone indicated the EDSP program is currently exploring using the adverse outcome pathway (AOP) approach to be able to move farther and faster in addressing a large volume of chemicals. Barone was also careful to point out the limitations of alternative methods and explain the efforts to overcome some of these technical limitations.

Jim Jones from the Consumer Specialty Products Association, and formerly Assistant Administrator for EPA’s OCSPP, addressed the non-science factors associated with integrating 21st-century science into regulatory decision-making and the decision to rely on non-animal studies in the EDSP. His comments focused primarily on the period following the amendment to the Federal Food, Drug, and Cosmetic Act in 1996 and the agency response. Jones discussed how the agency pivoted away from a framework that was created with major time investments by various groups that relied on the use of medium throughput assays and toward the framework that incorporates the high throughput assays discussed by Goldman and Barone. Also discussed was the importance of managing dialog between program offices, the difficulty of applying techniques developed for screening to risk assessment, and ensuring that the process was “fit for purpose.” This meant stressing the importance of understanding “what is the problem that you’re trying to solve and that will help inform the level of rigor that you need to bring to the science.” By having a focus on developing the science needed to bring these high throughput assays to use for screening, Jones acknowledged that the added time needed to achieve this could impact EPA’s ability to meet its statutory deadlines and that there was a need to balance concerns and priorities.

Aquatic Monitoring of Emerging Contaminants

Tara Sabo-Attwood from the University of Florida presented two case studies addressing aquatic monitoring of emerging contaminants. The first monitored samples throughout a wastewater treatment plant (WWTP) pipeline to assess the efficacy of the treatment process, and the second linked in vitro responses to in vivo effects. Sabo-Attwood said that these two case studies were used for a comprehensive workflow for organic micropollutant identification and targeted analysis in water reuse on Kiawah Island, South Carolina, to show how the assays may be integrated into monitoring programs.

She explained that the WWTP pipeline samples were obtained and analyzed using a battery of testing approaches that included the induction of xenobiotic metabolism, endocrine disruption, reactive modes of action (genotoxicity), adaptive stress response (oxidative stress), and cytotoxicity and systemic response. The work used this battery of assays to demonstrate the different types of data that can be obtained by these novel approaches and used to guide tier frameworks for monitoring water quality.

___________________

5 See https://www.epa.gov/endocrine-disruption/endocrine-disruptor-screening-program-federal-register-notices.

6 See https://www.federalregister.gov/documents/2015/06/19/2015-15182/use-of-high-throughput-assays-and-computational-tools-endocrine-disruptor-screening-program-notice.

The second case study Sabo-Attwood presented linked in vitro responses to in vivo effects to establish quantitative linkages between in vitro assays and in vivo traditional endpoints of adversity, with a focus on existing AOP knowledge. Using a fish model, activation of estrogen receptors and subsequent induction of egg transcripts (gene expression changes) and sex ratios were examined. This case study demonstrated how in vitro assays could provide information below the threshold of where in vivo effects are observable.

Product Development and Market Decisions

Meredith Williams from the California EPA Department of Toxic Substances Control (DTSC) presented the framework DTSC uses for the development of safer consumer products. It selects combinations of products and chemicals that may be harmful for consumers and asks manufacturers to evaluate alternatives. These alternatives have the same or similar functionality or are engineered to avoid the need of the particular chemical additive of concern. Williams explained that the alternatives analysis is accomplished in two stages. The first stage includes identification of relevant factors and initial screening that is accomplished in 180 days. The second stage takes 1 year and includes an in-depth analysis that addresses the life cycle of the alternative agent(s) under consideration.

Product-chemical combinations are selected based on “potential exposure to the candidate chemicals in the product and the potential for exposures to contribute to or cause significant or widespread adverse impacts,” explained Williams. The Stochastic Human Exposure and Dose Simulation High Throughput (SHEDS-HT)7 model and product intake fraction modeling are used to evaluate exposure potential. Chemicals under consideration include flame retardants, antimicrobials, per- and polyfluoroalkyl substances (PFASs), and azo and benzidine dyes and fragrances.

Williams elaborated that the alternatives analysis includes chemical hazard assessment and exposure assessment along with life cycle analysis. The alternatives analysis is not a traditional risk assessment. Methods utilized in the analysis include high throughput exposure modeling, mixtures and cumulative exposures, assessment of chemical groups, quantitative structure activity relationship (QSAR) models, and data mining. This process uses a precautionary approach to incentivize innovation in the search for safer alternatives while shifting the toxicity testing responsibility to manufacturers. She concluded by saying that California EPA’s goal is for transparent and science-based decision-making.

INCORPORATION OF ALTERNATIVE TOXICITY TESTING METHODS

United States

Anna Lowit from EPA spoke about her involvement on the Interagency Coordinating Committee on the Validation of Alternative Methods (ICCVAM). She explained how in 2000, Congress passed the ICCVAM Authorization Act and established the committee comprising “16 federal regulatory and research agencies that require, use, generate, or disseminate toxicological and safety testing information.”8 The ICCVAM released a strategic roadmap9 in early 2018 titled Establishing New Approaches to Evaluate the Safety of Chemicals and Medical Products in the United States. This roadmap helps agencies identify consensus goals and coordinate key activities required to achieve those goals. It also provides a framework to support the planning and coordination of technology development by facilitating communication and collaboration “within and between government agencies, stakeholders, and international partners.”

In order to make progress on the incorporation of 21st-century science, federal agencies and stakeholders should continue to collaborate through the ICCVAM “to build a new framework to enable development, establish confidence in, and ensure utilization of new approaches to toxicity testing that improve human health relevance and reduce or eliminate the need for testing in animals,” explained Lowit. Current ICCVAM projects include addressing acute toxicity, skin sensitization, ocular and dermal irritation, reference chemicals, developmental and reproductive toxicology, in vitro to in vivo extrapolation, and read-across assessments of toxicity.

European Union

Maurice Whelan from the European Commission Joint Research Centre detailed for the workshop participants the related activities going on in Europe. He discussed the drivers motivating the shift away from the heavy reliance on animal testing. The European Commission Directive 2010/63/EU on the protection of animals used for scientific purposes10 announced the “goal of full replacement of procedures on live animals for scientific and educational purposes as soon as it is scientifically possible to do so.” Whelan explained how European regulatory science is leading the way on this effort and progress is occurring through collaboration with many organizations outside of the European Union. Validation of alternative methods is a major focus of current efforts and an essential part of gaining acceptance and uptake. Whelan detailed the factors that go into determining the strength of the data and knowledge in addition to validation, such as qualitative and quantitative concordance, explanatory power, internal coherence, and external consistency to name a few.11 He noted that establishing scientific credibility is as important as the result in proceeding from research to method development, validation, demonstration, acceptance, and finally application. Whelan predicted that a paradigm shift in toxicology will center on problem formulation and redefining toxicological hazard.

___________________

7 See https://www.epa.gov/chemical-research/stochastic-human-exposure-and-dose-simulation-sheds-estimate-human-exposure.

8 See https://www.gpo.gov/fdsys/pkg/FR-2016-08-19/pdf/2016-19774.pdf.

9 See https://ntp.niehs.nih.gov/iccvam/docs/roadmap/iccvam_strategicroadmap_january2018_document_508.pdf.

BREAKOUT DISCUSSIONS

The breakout sessions enabled workshop participants to engage in detailed discussion and idea exchange addressing the limitations and opportunities in building confidence to facilitate a paradigm shift toward using new tools and technologies for toxicity testing. A number of participants remarked that increased trust and expansion of use would likely stem from repeated success in using the new toxicity testing and decision-making tools. The following describes the report out from the individual groups and should be taken as the perspectives of individuals and not necessarily the agreement of the respective breakout groups.

Water Monitoring

The water monitoring breakout groups explored how the new toxicology data streams contribute to environmental and public health decisions concerning various types of water (e.g., industrial wastewater discharges, WWTP effluents, river water, recycled water, or drinking water for public supplies). Limitations, acceptance, and confidence issues of novel tools were explored. Among those concerns was how to determine which in vitro endpoints could use follow-up animal studies for both targeted and untargeted screening. Another limitation identified was that bioassay data do not equate to traditional dose-response functions and therefore are not able to be used in traditional risk assessments.

Product Development

The product development breakout groups explored product development from a consumer perspective. They discussed issues such as improved confidence and acceptance by the general population as well as the regulatory community. Novel data streams for better decision-making that encompass social, economic, and health protective perspectives would probably have greater acceptance by the general population, regulators, and the regulated community. The reports from these groups stated that transparency in decision-making will build trust only if the information/data are understandable to the public. Improved confidence will come with repeated success and, conversely, problems in initial trials and implementation will cause a loss of confidence that may not be recoverable. These groups also discussed some of the limitations of the novel approaches for testing chemicals, outlining the challenges associated with translating doses, exposures, and the relevance of particular models compared to humans.

Public Health Emergencies

The public health emergency breakout groups identified the availability of short-term toxicology data and general preparedness as keys for sound decision-making in public health emergencies. Due to the time-sensitive nature of an emergency, these situations often require decision-making with imperfect or missing information. Additionally, consideration of the emotional state of the audience is important when delivering messages. Discussions focused on recent public health emergencies (e.g., World Trade Center demolition, Deepwater Horizon oil spill, and Elk River chemical spill) and how new toxicity testing data were used to improve responses, build confidence in the steps taken, and rapidly assess the situation. These examples may help create strategies, developed before an emergency begins, for deploying new types of data in public health emergencies.

___________________

10 See https://eur-lex.europa.eu/legal-content/EN/TXT/?uri=celex%3A32010L0063.

11 Patterson, E. A., and M. P. Whelan. 2017. A framework to establish credibility of computational models in biology. Progress in Biophysics and Molecular Biology 129:13–19.

TSCA Implementation

The TSCA implementation groups identified the importance of training and bringing toxicologists and risk assessors up to date on new methods in order to achieve the provisions in the Lautenberg Act encouraging the reduction of vertebrate testing. Defining equal or better scientific reliability, identifying gaps in current efforts, focusing problem formulation on users of the new testing methods and subsequent data, ensuring transparency for all stakeholders, and addressing consumer concerns about maintaining and improving public health were also discussed.

FINDING A PATH FORWARD

Melissa Perry from The George Washington University summarized the workshop as an example of epistemological philosophy, the study of efforts to gain understanding or knowledge of the nature and scope of human knowledge. More simply put, the workshop illustrated the continuous pursuit of the truth represented by the scientific endeavor. Perry said that the continuous development of assays, experiments, instruments, and analyses along with their validation and acceptance is key to developing pathways to a paradigm shift in toxicity testing and decision-making. Acceptance of the use of new testing methods will center on societal influences, including those in the legal, commercial, and political arenas. Perry concluded by recapping the key messages she heard during the workshop to build confidence in the paradigm shift toward using new toxicity testing for decision-making. Seeking acceptance of a paradigm shift through consensus methods would include participation of all stakeholders in a transparent manner, including engagement, communication, Federal Register notices, public comments, scientific conferences, and public forums. Consensus decision-making, while ideal, cannot be achieved without good relationships or without careful deliberations.

Planning Committee on Understanding Pathways to a Paradigm Shift in Toxicity Testing and Decision-Making: Stanley Barone, Environmental Protection Agency; John Bucher, National Toxicology Program/National Institute of Environmental Health Sciences; Kevin Elliott, Michigan State University; Kristi Pullen Fedinick, Natural Resources Defense Council; Gary Ginsberg, Connecticut Department of Public Health; Patrick McMullen, ScitoVation; Jennifer McPartland, Environmental Defense Fund; Heather Patisaul, North Carolina State University; Melissa Perry, The George Washington University; John Vandenberg, Environmental Protection Agency.

Disclaimer: This Proceedings of a Workshop—in Brief was prepared by Julie Fitzpatrick as a factual summary of what occurred at the workshop. The planning committee’s role was limited to planning the workshop. The statements made are those of the rapporteur or individual meeting participants and do not necessarily represent the views of all meeting participants, the planning committee, or the National Academies of Sciences, Engineering, and Medicine.

Reviewers: To ensure that it meets institutional standards for quality and objectivity, this Proceedings of a Workshop—in Brief was reviewed by Ann Bostrom, University of Washington; Alissa Cordner, Whitman College; Gary Ginsberg, Connecticut Department of Public Health; and Samantha Jones, Environmental Protection Agency.

Sponsor: This workshop was supported by the National Institute of Environmental Health Sciences.

About the Standing Committee on Emerging Science for Environmental Health Decisions

The Standing Committee on Emerging Science for Environmental Health Decisions is sponsored by the National Institute of Environmental Health Sciences to examine, explore, and consider issues on the use of emerging science for environmental health decisions. The Standing Committee’s workshops provide a public venue for communication among government, industry, environmental groups, and the academic community about scientific advances in methods and approaches that can be used in the identification, quantification, and control of environmental impacts on human health. Presentations and proceedings such as this one are made broadly available, including at http://nas-sites.org/emergingscience.

Suggested citation: National Academies of Sciences, Engineering, and Medicine. 2018. Understanding Pathways to a Paradigm Shift in Toxicity Testing and Decision-Making: Proceedings of a Workshop—in Brief. Washington, DC: The National Academies Press. doi: https://doi.org/10.17226/25135.

Division on Earth and Life Studies

Copyright 2018 by the National Academy of Sciences. All rights reserved.