Neurotechnologies, both old and new, are making it possible to introduce neuroscientific evidence into the legal system, including in the courtroom and for administrative decisions, said Joshua Sanes. This chapter provides a snapshot of how neuroimaging technologies, among others, have been used to detect deception, identify pain, and decode neuronal activity in the brain, and discusses the role of genetics in predicting human behavior and decision making.

STATE OF THE ART OF TECHNOLOGIES RELEVANT TO THE LEGAL SYSTEM

Riding a wave of expanded interest in the development of neuroscience technology, the BRAIN Initiative and other international projects have generated technologies that enable peering into the living human brain to look for evidence of mental status and other capabilities, said Sanes. These technologies include measures of structure, neural activity and connectivity, molecular composition, and genomic variation. The explosion in their capabilities has been facilitated by increased computational ability, artificial intelligence, machine learning, and the development of large databases, he added.

In the future, these technologies may make it possible to predict the dangerousness of individuals or their likelihood of recidivism, assess volition and intent, determine competence to stand trial, reveal biological mitigating factors that might explain criminal behavior, distinguish chronic pain from malingering, recover lost memories, or distinguish between real and false recovered memories, said Sanes. They also offer the potential to optimize treatment and reduce recidivism, he added. Sanes predicted that advances in technology will be rapid and shocking: not necessarily more reliable, but more compelling.

Yet Joshua Buckholtz suggested that there is relatively little overlap among three things: what the law wants neuroscience to do, or thinks neuroscience could do better than what is done now; what people, including some scientists, have claimed neuroscience can do; and what neuroscience can actually do, now or in the future. Moreover, he said, no one knows what falls into that intersection because no systematic evaluation of the overlap has been conducted; thus, there has been no consensus framework for aligning legal goals, social expectations, and neuroscientific methods.

To create such a framework, Buckholtz cited three domains of engagement between neuroscience and law: revealing mental states, determining the capacity for self-control, and predicting future behavior. In each of these domains, brain imaging offers some promise, although Buckholtz cautioned that expectations should be modest. Mental states that might be elucidated by brain imaging techniques such as fMRI could enable identifying levels of intent, demonstrating bias in a witness or juror, quantifying suffering, or detecting lies, said Buckholtz. The use of fMRI to detect deception is discussed in greater detail below.

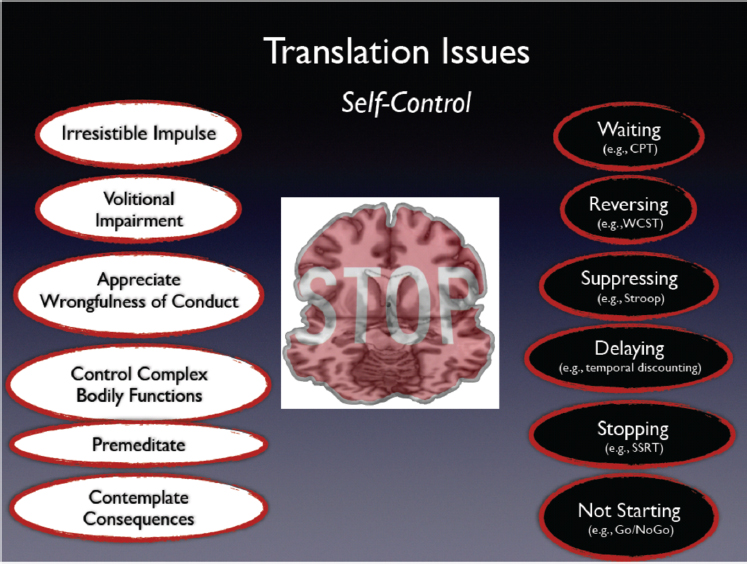

At the intersection of law and neuroscience, the capacity for self-control involves three elements: (1) measuring maturity, (2) detecting impairments, and (3) validating the presence of legally relevant disease states, said Buckholtz. Neuroscientific evidence in this domain is typically aimed at reducing responsibility by introducing biology as a mitigating factor, he said, but there has been no coherent mapping between the legal and scientific constructs of self-control (see Figure 2-1). To explain the gap between the legal and scientific constructs, Buckholtz offered a hypothetical example of a man charged with a violent crime who performs poorly on tests that measure response inhibition, action cancellation, impulsive choice, and intentional control. But to conclude that performance on these tests reflects a mental defect that caused a substantial inability for a man to conform his behavior to requirements of the law is impossible at this time because the law’s language is incompatible with measurements of neuroscience, said Buckholtz. To a cognitive neuroscientist, constructs like volitional capacity or irresistible impulse are meaningless, said Buckholtz; conversely, standard cognitive neuroscience terms such as “action cancellation” and “response inhibition” mean nothing to people making legal policy. If policy makers want to take advantage of neuroscientific knowledge, they need to engage in some hard thinking about whether any of the self-control domains that neuroscientists can access affect legal liability, he said.

NOTE: CPT = continuous performance task; SSRT = stop-signal reaction time; WCST = Wisconsin Card Sorting Test.

SOURCE: Presented by Buckholtz, March 6, 2018.

Predicting future behavior such as violence risk is particularly fraught, said Buckholtz, with a high potential for misuse of science to determine treatment response in parole and civil commitment hearings. Genetic studies, for example, have shown links between certain genotypes and violent behavior (Tiihonen et al., 2015).

DETECTING DECEPTION WITH NEUROIMAGING

Lie detection using a polygraph, which is generally not admissible in court, is a neuroscience technology that looks at the autonomic nervous system to deduce whether a person is telling the truth or lying, said Sanes. The original lie detection technologies relied on peripheral measures of autonomic (sympathetic and parasympathetic) activity such as skin conductance, pulse, and breathing rate. Newer technologies assess central nervous system activity and are therefore more powerful, he said, yet they still have significant inferential issues.

For example, science focuses on aggregating data across groups of individuals to make generalized inferences, whereas the law focuses on making a determination about one specific individual. This problem, known as group to individual or G2i, is discussed in more detail in Chapter 4. According to Buckholtz, group or population-level data are only relevant to the law to the extent that they bolster or weaken the evidence provided in an individual case. To illustrate, he described a hypothetical study using fMRI to compare the brain activity of people lying versus telling the truth about a set of facts. If the differences are statistically significant, they can be used to make a generalizable inference about lying, for instance that lying recruits a network involving specific brain regions. However, for some people, brain activity in those regions stays the same or is even lower in the lying condition. Relying on this brain-based measure to judge an individual can thus lead to erroneous conclusions, said Buckholtz (Buckholtz and Faigman, 2014).
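The gap between a group-level finding and an individual-level conclusion can be made concrete with a small calculation. The sketch below is illustrative only and is not drawn from the workshop: it assumes that each person's lying-minus-truth activation difference follows a normal distribution, and the mean, spread, and sample size are hypothetical values chosen for the example.

```python
from math import sqrt
from statistics import NormalDist


def group_vs_individual(mean_effect, sd_effect, n_subjects):
    """Compare a group-level test with the share of individuals who buck the trend.

    mean_effect, sd_effect: assumed mean and standard deviation of the
        per-person (lying minus truth) activation difference.
    n_subjects: assumed number of people scanned.
    Returns (z, frac_opposite), where z is the test statistic for the group
    mean and frac_opposite is the expected fraction of individuals whose
    activation does not increase when lying.
    """
    # Group level: the standard error shrinks as more people are scanned.
    z = mean_effect / (sd_effect / sqrt(n_subjects))
    # Individual level: share of the assumed distribution at or below zero.
    frac_opposite = NormalDist(mean_effect, sd_effect).cdf(0.0)
    return z, frac_opposite


# Hypothetical values: average effect of 0.5 units, spread of 1.0, 50 subjects.
z, frac_opposite = group_vs_individual(0.5, 1.0, 50)
print(f"group z = {z:.2f}, individuals with no increase = {frac_opposite:.0%}")
```

Under these assumed values the group difference is clearly significant (z of about 3.5), yet roughly 31 percent of individuals would show no increase, or a decrease, when lying.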

He added that reverse inference can lead to equally erroneous conclusions. Reverse inference relies on the following logic: If X brain network is activated during some psychological state or behavior Y, one might infer that whenever network X is active, that state or behavior must be occurring. However, even though it might be possible to identify a brain network that is reliably activated when a person is lying, one cannot assume that this network is activated only when the person is lying.
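Bayes' rule makes the weakness of reverse inference explicit. The following sketch uses hypothetical probabilities (none come from the workshop or from any study) to compute how likely it is that a person is lying given that the network is active.

```python
def posterior_given_activation(p_active_if_state, p_active_otherwise, prior_state):
    """Bayes' rule: P(state | network active).

    p_active_if_state: assumed probability the network is active when the
        state (for example, lying) is present.
    p_active_otherwise: assumed probability the network is active when the
        state is absent.
    prior_state: assumed base rate of the state.
    """
    evidence = (p_active_if_state * prior_state
                + p_active_otherwise * (1.0 - prior_state))
    return p_active_if_state * prior_state / evidence


# Hypothetical values: the network is active in 90% of lies, but also in 30%
# of truthful answers, and 20% of all statements are lies.
p_lying = posterior_given_activation(0.9, 0.3, 0.2)
print(f"P(lying | network active) = {p_lying:.2f}")
```

With these assumed numbers the network is active in nearly every lie, yet an active network indicates lying less than half the time (about 0.43), because the same network is also active during many truthful answers.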

Another problem with detecting lies is that deception is not unitary. Complex behaviors such as lying have complex causes and, because motivation varies depending on circumstances, different instances of lying likely involve different patterns of brain activity, said Buckholtz. Ecological validity also limits the reliability of neuroscientific measures of deception, he said. For example, lying under stressful conditions such as a police interrogation is very different from lying in the sterile environment of the scanner.

Stuart Hoffman, senior scientific advisor for brain injury at the Department of Veterans Affairs, added that among incarcerated felons, there is also a high rate of traumatic brain injury associated with a decoupling of the regulation of blood flow in the brain. Because blood flow is what fMRI measures, the ability to detect deception in individuals with brain injury could be compromised. Profound childhood trauma and social deprivation can also imprint the brain, said Buckholtz. Indeed, criminal behavior is multifactorial and cannot be reduced to a single brain imaging measure, he said. For example, in a study by Aharoni and colleagues, low activity within the anterior cingulate cortex (ACC) correlated with earlier rearrest (Aharoni et al., 2013). But Poldrack and colleagues showed that the predictive power of the ACC, while statistically significant, is small and impacted by a range of cognitive processes and behavioral dispositions (Poldrack et al., 2009). Although some of these factors, such as risk of anxiety and schizophrenia, may in aggregate affect risk of antisocial behavior, they are not isometric with crime risk, said Buckholtz.

Given the expense and potentially prejudicial nature of neuroscientific evidence, Buckholtz emphasized the need for rigorous evaluation of the incremental validity of brain imaging data over behavior itself or actuarial measures.

IDENTIFYING PAIN THROUGH NEUROIMAGING

Pain intersects with legal issues in cases related to determining qualifications for disability, workers’ compensation, or insurance benefits, as well as in determining the size of those awards and as evidence in tort claims, said Tor Wager, professor of psychology and neuroscience at the University of Colorado Boulder. Yet, determining the presence, severity, and causes of pain has proven difficult and is complicated by personal predispositions and emotional responses to the experience of pain as well as by gender and racial health disparities in the treatment of pain (IOM, 2011).1

Neuroscience can illuminate plausible mechanisms, said Wager, and while much is known about the neurophysiology and pharmacology of pain, much less is known about how pain is represented as a physiological response that could be assessed as a biomarker or mental experience. Although the gold standard for reporting pain remains a subjective numerical rating scale, neuroimaging and other neurologically based technologies such as electroencephalography (EEG) or magnetoencephalography (MEG) have been invoked in legal settings as potentially more objective measures of pain. More recently, fMRI and other measures have been used to assess patterns of brain activity as potential biomarkers linked to self-reports of pain. These technologies will augment rather than replace pain reports and provide insight into the mechanisms underlying pain, said Wager.

___________________

1 For more information, go to https://painconsortium.nih.gov/sites/default/files/NINDS-Pain-Infographic_508C.pdf (accessed June 18, 2018).

However, Wager noted that whether imaging scans are admissible as scientific evidence is determined in large part by adherence to the Frye and Daubert criteria and Rule 702 of the Federal Rules of Evidence2 (see Box 2-1).

Wager cited a case in which a man was burned by a glob of molten asphalt, developed chronic pain, and sued his employer for damages. Joy Hirsch, then a professor of neuroscience, radiology, and psychology at Columbia University, presented expert testimony, including data that she said showed that patterns of fMRI activity in the man’s somatosensory cortex were consistent with claims of pain. An expert representing the defendant countered that Hirsch’s testimony did not meet the Frye standard. However, the judge ruled in favor of allowing Hirsch’s testimony, saying it would be based on “generally accepted scientific principles and inductive reasoning from her own research” (Davis, 2016). The employer settled the case and it did not go to trial.

Wager referred to this case as a “cautionary tale.” He cited “the problem of specificity,” noting that even very small areas of the brain (a single voxel on a scan, for example) represent multiple neural populations with different functional properties that can be activated across many different tasks (Logothetis, 2008). For pain, the positive predictive value of these measures is quite low, he said, but can be improved. Working with a Presidential Task Force of the International Association for the Study of Pain, Wager, Hank Greely, and others recommended a consensus set of standard criteria for establishing biomarkers of chronic pain (Davis et al., 2017) (see Box 2-2).

___________________

2 For more information, go to https://www.rulesofevidence.org/article-vii/rule-702 (accessed April 26, 2018).

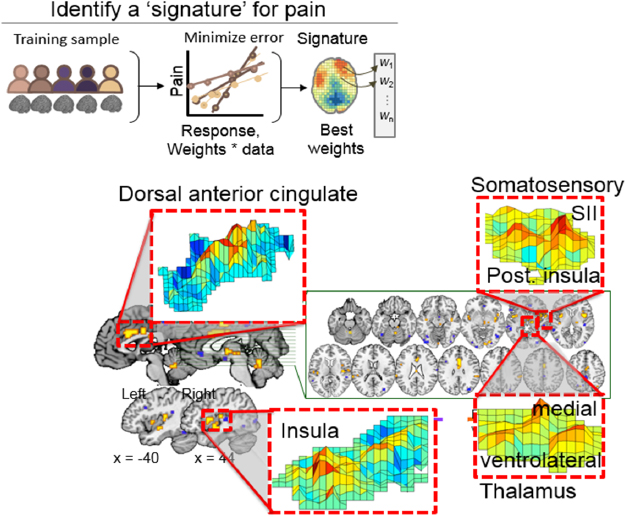

Wager and colleagues have developed a framework for biomarker development that uses machine learning or multivariate pattern-recognition techniques to optimize patterns that are predictive of clinical outcomes such as the pain experience; generalize the pattern across multiple populations, scanners, and labs; and characterize the properties of the pattern when applied to conditions that might be confused with the targeted outcome (Woo et al., 2017). To apply this framework to pain, they developed an fMRI-based neurological pain signature (NPS) based on scans done in individuals exposed to different intensities of painful heat (Wager et al., 2013). These scans showed activity in several brain regions known to be related to pain: the cingulate cortex, insula, medial and ventrolateral thalamus, somatosensory cortex, and posterior insula (see Figure 2-2). By collaborating with other investigators worldwide, sharing data, and prospectively testing hundreds of individuals across many different conditions, Wager and colleagues have demonstrated that NPS is sensitive and generalizable across multiple types of evoked pain and multiple testing sites (Zunhammer et al., 2017).

In terms of specificity, NPS has been tested in different ways and shown to differentiate between physical and non-physical pain, such as that experienced as a result of social rejection (Woo et al., 2014), exposure to aversive images (Chang et al., 2015), or vicarious experience of other people’s pain (Krishnan et al., 2016), even though these experiences activate some, but not all, of the brain regions activated by physical pain. Wager said his team has also shown that NPS is sensitive to drug treatment, but insensitive to placebo effects (Zunhammer et al., 2018).

For use in legal settings, further validation of NPS and other brain signatures in development as biomarkers of pain will require additional testing for sensitivity, specificity, and generalizability as well as more extensive tests of the effects of countermeasures such as distraction, said Wager. Large data resources and biobanks will be essential in this regard, he said.

SOURCES: Presented by Wager, March 6, 2018; Wager et al., 2013.

“BRAIN READING”

Neuroimaging techniques such as fMRI and EEG enable scientists to measure and quantify patterns of brain activation, and then use those patterns to decode or reconstruct stimuli perceived by an observer. Jack Gallant, Chancellor’s Professor of Psychology at the University of California, Berkeley, has been exploring the use of fMRI to decode conscious and unconscious information from the brain. His research suggests that this could potentially be used as a form of lie detection that, by comparison to traditional lie detectors, makes more nuanced kinds of judgment beyond whether someone is lying or not. So-called brain reading might also make it possible to recover memories or other kinds of information such as emotional state or subconscious desires or goals, according to Gallant.

Brain reading involves assessing brain activity in response to various types of stimuli (visual, semantic, emotional, etc.) and then mapping the systematic relationship between those stimuli and brain activity, said Gallant. Creating these maps is extremely complicated, he said, given that the brain contains some 500 distinct brain areas and brain structures, organized into a giant, highly interconnected network. Moreover, brain activity is not only distributed spatially but also evolves over time (Breakspear, 2017), yet no current non-invasive technology can measure human brain activity at high resolution in both space and time. EEG and MEG, for example, have good temporal but poor spatial resolution, while fMRI and functional near-infrared spectroscopy (fNIRS) have good spatial but poor temporal resolution. fMRI signals are captured on a time scale of about 2 seconds, so everything a person thinks, says, perceives, or feels during those 2 seconds is captured in a single measurement, discarding most of the information generated.
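The cost of a roughly 2-second sampling window can be illustrated with a toy signal. This sketch is a deliberate simplification: it treats each measurement as a plain average over the window and ignores the hemodynamic response that shapes real fMRI signals. The sampling rate and signal values are hypothetical.

```python
def window_averages(samples, samples_per_window):
    """Average consecutive, non-overlapping windows of a sampled signal."""
    last_start = len(samples) - samples_per_window
    return [
        sum(samples[start:start + samples_per_window]) / samples_per_window
        for start in range(0, last_start + 1, samples_per_window)
    ]


SAMPLE_RATE_HZ = 10      # assumed rate of the underlying toy signal
WINDOW_SECONDS = 2       # measurement window, as described in the text
SAMPLES_PER_WINDOW = SAMPLE_RATE_HZ * WINDOW_SECONDS

# Six seconds of a signal that flips sign every 0.1 second (fast activity)...
fast_signal = [1.0, -1.0] * 30
# ...and six seconds of a signal that flips sign every 2 seconds (slow activity).
slow_signal = [1.0] * 20 + [-1.0] * 20 + [1.0] * 20

fast_measured = window_averages(fast_signal, SAMPLES_PER_WINDOW)
slow_measured = window_averages(slow_signal, SAMPLES_PER_WINDOW)
print("fast:", fast_measured)
print("slow:", slow_measured)
```

In this toy case the slow signal survives windowing, while the fast alternation averages out to zero in every window, which is the sense in which most of the information generated within a measurement is discarded.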

Gallant and colleagues have built a “brain viewer” that combines fMRI with machine learning methods to make complicated, detailed, 3D functional maps of information encoded in the brain during semantic or visual tasks.3 Every brain location represents multiple related kinds of information, and every type of thought is represented in a network of regions across the brain, said Gallant. So, for example, if one thinks about a dog, more than a dozen different brain locations represent different aspects of the concept of dog: how dogs look and sound, memories of previous dogs one has encountered, emotional reactions to dogs such as fear, and so on.

___________________

3 The interactive 3D semantic map can be viewed at http://gallantlab.org/huth2016 (accessed April 26, 2018).

Once these encoding models of brain activity in association with specific kinds of conceptual information have been generated, they can be converted into decoding models that are beginning to allow investigators to recover what a person experienced by measuring brain activity, said Gallant. For example, his lab has been able to reconstruct the physical structure and decode contents of photographs and movies presented to subjects during fMRI scans (Huth et al., 2016; Nishimoto et al., 2011; Stansbury et al., 2013). Gallant noted that decoded fMRI signals are fairly representative of the image presented, although not sufficiently accurate or precise to be used as evidence in court.
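One simple way to turn an encoding model into a decoder is identification: predict the activity each candidate stimulus should evoke, then pick the candidate whose prediction best matches the measurement. The sketch below is a toy version of that idea, not the method used by Gallant's lab. The weights, feature vectors, labels, and noise values are all hypothetical, and real models are fit to measured data rather than specified by hand.

```python
# Hypothetical encoding weights: each row maps three stimulus features
# to the predicted response of one voxel.
WEIGHTS = [
    [1.0, 0.0, 0.5],
    [0.0, 1.0, 0.5],
    [0.5, 0.5, 0.0],
    [1.0, -1.0, 0.0],
]

# Hypothetical feature vectors for three candidate stimuli.
CANDIDATES = {
    "dog": [1.0, 0.0, 1.0],
    "house": [0.0, 1.0, 0.0],
    "face": [1.0, 1.0, 0.0],
}


def predict_activity(features):
    """Encoding direction: stimulus features -> predicted voxel responses."""
    return [sum(w * f for w, f in zip(row, features)) for row in WEIGHTS]


def decode(measured, candidates):
    """Decoding direction: return the candidate whose predicted activity
    has the smallest squared error against the measured activity."""
    def squared_error(label):
        predicted = predict_activity(candidates[label])
        return sum((m - p) ** 2 for m, p in zip(measured, predicted))
    return min(candidates, key=squared_error)


# Simulate a noisy measurement taken while the subject views the "dog" stimulus.
noise = [0.1, -0.1, 0.05, -0.05]
measured = [p + n for p, n in zip(predict_activity(CANDIDATES["dog"]), noise)]
decoded_label = decode(measured, CANDIDATES)
print(decoded_label)
```

Identification of this kind can only choose among candidates supplied in advance; reconstructing an arbitrary image or thought is a harder problem, consistent with Gallant's caution about accuracy.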

Adrian Nestor, assistant professor of psychology at the University of Toronto, Scarborough, has also been working with fMRI and EEG to decode patterns of brain activation. Nestor and other investigators have, for example, demonstrated the ability to decode faces from brain activity (Cowen et al., 2014; Nestor et al., 2016). While Nestor agreed that EEG has poor spatial resolution, his research suggests that information from EEG data can be extracted to reconstruct meaningful visual stimuli. The appeal of EEG is that it is widely available, portable, and much cheaper than fMRI, he said. The approach used by Nestor and colleagues is to determine the neural correlates of facial identification by extracting and aggregating spatiotemporal information from components of EEG signals obtained from healthy volunteers in response to viewing face images, then using pattern analysis to reconstruct images. Because EEG signals are intrinsically noisy, a proof-of-concept study required collecting large amounts of data from 13 individuals, each presented with 54 faces multiple times over multiple sessions, and developing a procedure to map specific components of the EEG signal onto specific facial features. The results of this study indicated that EEG indeed supports facial image reconstruction, although the perceptual quality of the reconstructions was poor, according to Nestor (Nemrodov et al., 2018).

Nestor noted that the appearance of an individual as captured by a photograph can vary dramatically due to lighting, facial hair, makeup, etc. One advantage of his approach is that it reconstructs images based on facial structure such as the distance between the corner of an eyebrow and the tip of the nose. These facial landmarks are more consistent for a given individual than other characteristics that affect appearance such as those mentioned above. His lab has also shown that optimal reconstruction is obtained using information extracted from EEG signals captured between 50 and 600 milliseconds after stimulus onset (Nemrodov et al., 2016).

He concluded that while facial image reconstruction from EEG signals is feasible, additional studies will need to be conducted with a more heterogeneous group of subjects, including older adults and individuals with various types of deficits. Nestor also suggested that it may be possible to apply the approach to memory-based rather than perception-based reconstruction, and to reconstruct signals other than faces, such as auditorily presented words, although he acknowledged that these techniques will require substantial advances in hardware optimization and statistical methodology. This research may have implications in the legal system in the future, said Nestor, when identifying a person of interest, or establishing a mode of neural-based communication with someone who is incapable of verbal communication.

Gallant also urged caution in terms of expectations about whether and when these approaches might be ready for use in the legal system. With current technologies, Gallant said, visual imagery can be decoded from the brain only about one-third as well as an actual visual experience (Naselaris et al., 2015). However, Gallant predicted that as neuroscience progresses, advances in the ability to measure brain activity will inevitably be translated into better methods for decoding the brain. His lab has been working on systems to decode speech and social information, while others have attempted to decode dreams, he said. Networks involved in processing visual information are fairly well understood, which greatly facilitates the decoding of visual information; decoding language, by contrast, is limited by the poor temporal resolution of fMRI. Decoding emotional information is even more difficult because emotions are stored in brain structures whose activity is more difficult to measure. Even information stored in long-term memory is potentially recoverable. However, the way that long-term memories are stored in the brain is not understood, so decoding long-term memories would require that they be accessed and moved into working memory, said Gallant.

The ability to decode the brain is primarily limited by the quality of brain measurements, although progress in this area is rapid, thanks to research funding programs such as the BRAIN Initiative. Gallant predicted that the next generation of fMRI will markedly improve spatial resolution and the quality of spatial maps, but still will not address the time issues. The accuracy of brain models has also advanced in recent years due to advances in computer power and machine learning technologies, he said. He was less sanguine about the potential for other technologies such as fNIRS, focused ultrasound, photoacoustic approaches, and microwave radar to substantially advance the field of human brain decoding in the near future, although they have worked well in rodents, whose thinner skulls allow deeper penetration into the brain.

Gallant stated that current methods of non-invasive brain measurement lack the precision and accuracy to provide evidence for use in legal settings. With the possible exception of detecting lies under very restricted circumstances, he said information decoded from the brain is no more reliable than taking testimony from a person. He noted, however, that improvements in another imaging technology, diffusion MRI, which detects anomalies in structural connectivity in the brain, may enable better associations between brain damage and criminal behavior (Waller et al., 2017).

GENETIC CONTRIBUTIONS TO BEHAVIOR PREDICTION

DNA has entered the realm of the law primarily based on the idea that genetic variation gives each individual a unique “genetic barcode.” However, Benjamin Neale, assistant professor in the Analytic and Translational Genetics Unit at Massachusetts General Hospital, noted that genetic variation also influences mental illnesses such as schizophrenia and bipolar disorder and may also contribute to behavioral characteristics such as the propensity for violence. Technological advances over the past two decades have vastly improved the ability of scientists to capture all the genetic variations in a population and then, through genome-wide association studies (GWASs), to ask whether individual variants are more common in a group of individuals with a certain trait. The key to the successful identification of risk variants is to collect very large samples, said Neale.

Neale described a 2011 study by the Psychiatric Genomics Consortium (PGC) as an example of how genetic data may be used to predict the risk of schizophrenia. This example is not intended to conflate propensity for violence with schizophrenia, but merely to illustrate the potential predictive power of genetic studies. In this study of more than 9,000 individuals with schizophrenia and more than 12,000 controls, variants at five new genetic loci were identified that are associated with schizophrenia (Schizophrenia Psychiatric Genome-Wide Association Study Consortium, 2011). Neale noted that carrying these variants does not guarantee someone will develop schizophrenia, but rather each influences the risk for schizophrenia in the population as a subtle nudge. By 2013, with more than 35,000 cases and nearly 47,000 controls, PGC had identified 97 genome-wide significant sites. Each of these sites individually increases the risk of developing schizophrenia by only a tiny percentage, but they can be aggregated into a polygenic risk score to create a predictor (International Schizophrenia Consortium et al., 2009; Schizophrenia Working Group of the Psychiatric Genomics Consortium, 2014). Neale said that with this approach, polygenic scores with an odds ratio of around 20 can be constructed contrasting the highest and lowest risk groups. However, he cautioned that the absolute risk is only about 3 percent, even in those people with the highest risk scores.
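A short calculation shows how a large odds ratio can coexist with a small absolute risk. The sketch below takes the two figures Neale cited (an odds ratio of about 20 and an absolute risk of about 3 percent in the highest-risk group) and derives the implied risk in the lowest-risk group. Treating the cited figures as exact is an assumption made for illustration.

```python
def risk_to_odds(risk):
    """Convert a probability (0 to 1) into odds."""
    return risk / (1.0 - risk)


def odds_to_risk(odds):
    """Convert odds back into a probability (0 to 1)."""
    return odds / (1.0 + odds)


TOP_GROUP_RISK = 0.03   # about 3 percent absolute risk, highest-score group
ODDS_RATIO = 20.0       # highest-score group versus lowest-score group

# An odds ratio compares odds, not probabilities, so convert before dividing.
low_group_odds = risk_to_odds(TOP_GROUP_RISK) / ODDS_RATIO
low_group_risk = odds_to_risk(low_group_odds)

print(f"lowest-group risk:   {low_group_risk:.2%}")
print(f"highest-group risk:  {TOP_GROUP_RISK:.0%}")
print(f"unaffected in highest group: {1.0 - TOP_GROUP_RISK:.0%}")
```

On these assumptions, the lowest-risk group has an absolute risk of roughly 0.15 percent, so even a twentyfold difference in odds leaves about 97 percent of the highest-risk group unaffected.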

A similar approach has been used to build a polygenic risk score for heart attack. Importantly, said Neale, the cardiology community has incorporated traditional epidemiological risk factors such as age, sex, cholesterol levels, and smoking status into these risk scores to come up with a composite score that does a much better job of predicting heart attack risk. Similarly, in the context of adjudicating the degree to which someone has a tendency toward violence, Neale advocated examining not only genetics but behavior as well, rather than treating these two factors in isolation.

Neale also cited substantial pitfalls with using genetic risk predictors. Most GWASs have been conducted in populations of European ancestry, yet a recent study showed that these scores translate poorly to different ancestral populations (Martin et al., 2017). Even as these predictions improve with the aggregation of more data, they will always remain probabilistic, said Neale. In nearly all circumstances, genes are not fate, he said, and with complex behavioral phenomena such as violence or criminality, environment plays an essential role. A purely genetic explanation for an individual’s phenotypic presentation is thus highly unlikely. Even if a risk score had a 90 percent chance of predicting a certain trait or behavior, Neale said that it should not have undue influence over the evaluation of the individual. Genes can help build a story, he said, but should not be considered de facto or declarative in terms of evidence.

Another problem with associations of genetics and behavior is the failure to robustly replicate studies, said Neale. He cited the case of the low-activity variant of the monoamine oxidase A gene (MAOA-L), the so-called “warrior gene” genotype, which has been linked to aggression and antisocial behavior (Dorfman et al., 2014) and has been cited in at least 11 legal cases, usually in the context of sentencing rather than determining guilt (McSwiggan et al., 2017). However, a meta-analysis of studies demonstrated that the contribution of this gene to aggression was weak and heterogeneous (Ficks and Waldman, 2014). Many studies have also cited a gene–environment interaction in which MAOA-L gene carriers who experienced childhood trauma showed more antisocial behavior, although these studies also have not been consistently replicated (McSwiggan et al., 2017). Indeed, according to Buckholtz, the biggest predictor of antisocial behavior, swamping other predictors by far, is early life maltreatment. Neale noted that the field of genetics is rapidly evolving, and as a result, even many commonly accepted associations between genes and behavior have proven unreliable.
