9

Effective Implementation: Partners and Capacities

Large-scale implementation requires more than a well-researched program design based on clear evidence for the efficacy of both core components and the specific practices through which those components take effect, thoughtfully adapted to meet the needs of diverse communities. The process of implementing an intervention at a scale that maximizes its impact also requires the system capacity—organizational infrastructure, resources, and abilities—to deliver it to a broad population and sustain the effort. That is, it requires not only effective methods and tools that can affect the behaviors and actions of people and organizations and thereby bring about change, but also an engineering process that produces the capacities required to sustainably support the use of those methods and tools in local settings.

In this chapter, we explore primary elements of this process, drawing on the now voluminous body of implementation models, frameworks, and strategies in implementation science (Tabak et al., 2012; Waltz et al., 2015). We look first at an integrated model of the overall functioning of the system, synthesizing key features and foundational concepts from across many of the more applied implementation science models. We then explore the roles of key partners involved in this model: the “co-creation” partners who each play a part in developing the capacities needed to make programs and interventions work and sustain them at scale. Next we focus on some key elements of effective overall system capacities for scale-up: collaboration, including leadership and implementation teams, community coalitions, and learning collaboratives; workforce development systems; systems to monitor and improve quality and outcomes; and communications and media systems for disseminating science-based information within communities beyond direct intervention services alone.

A MODEL OF SYSTEM FUNCTIONING

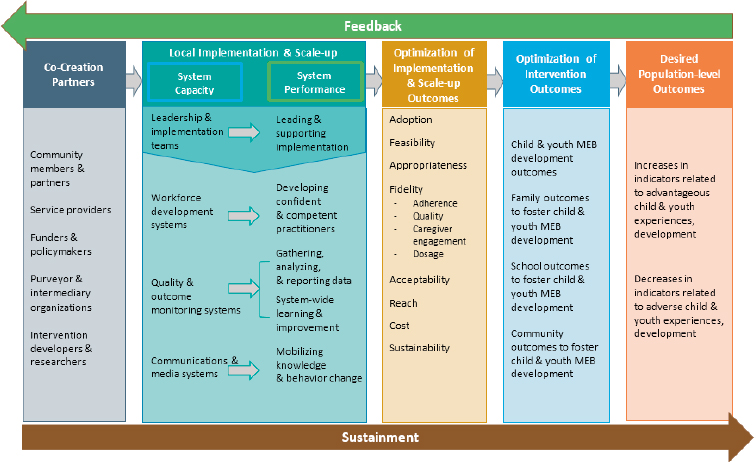

Researchers have created models of how partners work together to develop the capacity for successful implementation of a program at scale and the key functions involved. Figure 9-1 depicts a model of essential partners, capacities, and processes needed to achieve sustained benefits at a population level, in effect a theory of change. Co-creation partners¹ (Metz, 2015), shown at the left of the figure, work together, each contributing in a different way but collectively supporting the development of a system with the capacity to implement and scale the intervention, which has the elements shown in the green band. The system is optimized to pursue key implementation outcomes as it is first put into practice and then adapted (center band); information is collected about initial results and developments on the ground related to feasibility, fidelity, cost, how the intervention is received by participants, and the like (Proctor et al., 2011). As this system becomes operational, attention focuses on outcomes at the individual, family, school, and community levels, and further modifications are made to optimize these outcomes (band second from right), with the objective of ultimately effecting robust improvement that is evident in population-level indicators, represented in the band on the right.

SOURCE: Adapted from Aldridge, Boothroyd, Veazey, et al. (2016) and Chinman et al. (2016).

___________________

1 “Co-creation” is a term also used in a business context to refer to strategies for blending ideas and contributions from varied partners interested in a shared goal.

If successful, this process can contribute to the establishment of learning-based partnerships and shared accountability for strategies and outcomes. Figure 9-1 highlights the importance of each of the partners and each link in the process, but it is important also to emphasize that the scaling system is a feedback loop, as indicated by the arrows in the figure representing support and feedback. At each stage of the process, practitioners and researchers collect data and other kinds of feedback about results, unexpected difficulties, and the ideas and experiences of practitioners and program participants. This information is used continuously (but especially in the early stages) to refine the design of the intervention and plans for implementation, develop effective adaptations for diverse community needs, improve intervention and implementation as scaling continues, and sustain the intervention and the scaling process as needs and challenges develop.

CO-CREATION PARTNERS

Researchers have used the term “co-creation” to describe situations in which partners are closely involved in both identifying the problem to be solved and developing its solution, and in which their involvement is both coordinated and aligned with program goals (Metz, 2015; Metz, Albers, and Albers, 2014; Pfitzer, Bockstette, and Stamp, 2013; Voorberg, Bekkers, and Tummers, 2015). Although the literature on co-creation partners includes field and case studies that characterize the contributions of the various partners and how they coordinate, little evidence has emerged thus far about the direct outcomes of co-creation. Nevertheless, the importance of each of these groups of partners is clear.

Community Members

As co-creation partners, community members are those who can be expected to benefit broadly from the implementation or scaling of an effective intervention, including individuals and families who would benefit directly from participation and other community stakeholders who might benefit indirectly from the improved outcomes for individuals and families. Apart from participating in the program, community members may help spread information about it or provide tangible and intangible supports (International Association for Public Participation, 2014). They also may help shape the political and policy climate to support the program’s scaling. From case studies of the implementation of a practice model in county child welfare environments, Boothroyd and colleagues (2017) identified five key functions that community members may perform in the development of system capacity for program implementation and scale-up: (1) relationship building, (2) addressing system barriers, (3) establishing culturally relevant supports and services, (4) meaningful involvement in implementation, and (5) ongoing communication and feedback for continuous improvement.

Community members may also be partners in research that supports effective program implementation (Deverka et al., 2012; Graham et al., 2016; Lavallee et al., 2012). While seeking community input can slow the change process and may add a layer of complexity, it is key to true collaboration (Barnes and Schmitz, 2016; Boothroyd et al., 2017). Researchers have drawn lessons from efforts to engage community members. For example, D’Angelo and colleagues (2017) examine the implementation of a policy in Washington State designed to increase the availability of and access to mental, emotional, and behavioral health-related practices and describe successful strategies for planning, education, financing, restructuring, and quality management. Walker and colleagues (2015) explore the use of a statewide Tribal Gathering for multiphased engagement of tribal communities in the planning of behavioral interventions for youth. And Sanders and Kirby (2012) use examples from large trials of Triple P (Positive Parenting Program) to highlight community engagement strategies, means of enhancing program fit with community needs and preferences, and ways to increase population reach. Nevertheless, more research is needed to clarify effective strategies for engagement and their outcomes.

Service Providers

Service providers are leaders, managers, supervisors, and practitioners who have a stake in the adoption, implementation, and outcomes of a program, and they play at least two key roles. First, practitioners and their direct supervisors have a distinct perspective on program fit, delivery, and reception. They may be able to point to gaps in the program, its organization, and the system that supports its implementation that are more difficult for leaders and external partners to see, thus creating unique opportunities to advance desired outcomes strategically during planning and improvement processes. Second, the readiness of service providers to change and act when a new program is implemented is critical to its success, although “readiness” is not simple to assess and should perhaps be viewed as a process rather than a state (Dymnicki et al., 2014).

While researchers are only beginning to look in detail at what readiness entails, some have suggested that it is a combination of willingness and ability in the context of a program that fits the context and community well (Dymnicki et al., 2014; Flaspohler et al., 2012; Horner, Blitz, and Ross, 2014; Scaccia et al., 2015; Weiner, 2009). It is important to note further that, while the concept of readiness for implementation may have primary relevance to service providers, the concept may also be meaningful across all co-creation partners.

Additional work has also pointed to the importance of strong organizational leadership; communication; and openness to trying new policies, procedures, and programs, as well as to the value of careful site selection (Chilenski et al., 2015; Romney, Israel, and Zlatevski, 2014). Additional research on factors, strategies, and outcomes related to organizational readiness is clearly needed.

Funders and Policy Makers

Funders are individuals and organizations, whether public or private, that provide financial support for a program’s implementation or scale-up, while policy makers set legislative or administrative policy related to factors known to contribute to mental, emotional, and behavioral (MEB) well-being (e.g., the availability of effective services, the community environment). Both groups are key to making the environment hospitable for a sustainable program (Chapter 10 reviews recent developments in funding and policy making at the federal, state, and local levels).

Powell and colleagues (2016) analyze efforts in Philadelphia to transform a behavioral health system. These authors suggest that policy strategies at the service provider, state agency, political, and social levels show promise. They see little benefit from strict mandates that might force top-down approaches, finding instead that developing broad political support by engaging multiple stakeholders is the approach most likely to succeed. This perspective is supported by a study of policy makers’ perspectives on the implementation of an evidence-based program, SafeCare, in two state child welfare systems (Willging et al., 2015). This study showed that SafeCare was sustained where policy makers had strong partnerships with service providers and academic institutions. Policy makers who participated in this study also pointed to robust planning and collaborative problem solving by all stakeholders involved as elements in program success. Also aligned with this perspective is work demonstrating the value of networking in helping all partners gain access to information, resources, and tools for decision making (Armstrong et al., 2013; Tricco et al., 2016). The important role of legislative staff members in advancing mental health–related policy has also been noted (Purtle, Brownson, and Proctor, 2017).

Purveyors and Intermediary Organizations

Purveyors are people who provide training and technical assistance for implementers or supporters of a program, usually through a close relationship to the program’s developer (Fixsen et al., 2005). Their interactions with service providers may include both formal interactions addressing such matters as program guidelines, adherence, training, and supervision, and informal interactions addressing personal and professional issues outside the scope of primary work efforts (Palinkas et al., 2009). Purveyors also may collect evaluation data, as well as local and clinical knowledge, from the service providers with whom they work as part of effective adaptation of the program to the local context. Among the factors that promote effective interactions between service providers and purveyors are accessibility, mutual respect, a shared language, and a willingness to engage in negotiation and compromise (see, e.g., McWilliam et al., 2016; Schoenwald and Henggeler, 2003; Webster-Stratton, Reid, and Marsenich, 2014).

Whereas purveyors typically represent a single program, intermediaries (organized centers or partnerships developed to support state and local agencies) support a wide range of programs (Mettrick et al., 2015). Often housed within universities or nonprofit organizations, they take direction from state and local governments and complement state and local efforts to use research evidence to improve child, family, and community outcomes. Their functions may include providing support in the identification of promising programs and service delivery models; conducting research, evaluation, and data linking; supporting partnership engagement and collaboration; assisting in workforce development activities, including training; and providing expertise in policy and financing (Mettrick et al., 2015).

A study of two centers that play the intermediary role—the Evidence-based Prevention and Intervention Support Center at Penn State University’s Prevention Research Center and the Center for Effective Practice at the Child Health and Development Institute of Connecticut—highlights ingredients that appear to promote successful interactions between such groups and the other stakeholders with whom they interact (Bumbarger and Campbell, 2012; Franks, 2010; Rhoades et al., 2012). This work points to the importance of, for example, attending to the immediate needs of practitioners and policy makers, ensuring clear communication and recommendations by using media common to and accessible to these audiences, balancing research and science with local expertise and wisdom, and establishing mutually reinforcing activities and shared objectives among partners.

Intervention Developers and Researchers

Individuals and organizations that conduct the research needed to generate or improve the design of a program clearly play a critical role, as do those that carry out the continued work necessary to increase the program’s utility and support its implementation and scale-up. Progress in methods for consistently identifying a program’s core components (discussed in Chapter 8) should allow developers, program purveyors, and intermediaries to support service providers with feasible and valid fidelity assessment and adaptation processes.

The value of partnerships between researchers and other stakeholders is also garnering increased attention. One key benefit of such collaboration is in the translation of field evidence to ongoing program improvements that support more efficient implementation processes, better implementation outcomes, and stronger program outcomes (Chambers, Glasgow, and Stange, 2013). An ongoing process of development, evaluation, and refinement allows for the ultimate achievement of effective programs, as long as that process is supported by shared access to data obtained through program and implementation monitoring (discussed below) (Chambers and Azrin, 2013). This approach is illustrated by the Plan-Do-Study-Act cycle (Taylor et al., 2014) (see Box 9-1). It is also of benefit in guiding the necessary iterative actions of other co-creation partners, particularly those closer to the ground level of implementation efforts. We discuss monitoring and related issues more fully in Chapter 11.

KEY ELEMENTS OF CAPACITY FOR SCALE-UP

Several key elements support effective implementation of a program at scale, including collaboration, workforce development systems, quality and outcome monitoring systems, and communications and media systems.

Collaboration

Collaboration is needed at multiple levels, including both within and among leadership and implementation teams and broader community coalitions. In some cases, these collaborations have been augmented by the use of learning collaboratives.

Leadership and Implementation Teams

Local leadership and implementation teams design and lead an organization-wide strategy for bringing about a targeted change (Higgins, Weiner, and Young, 2012). They act as internal change agents, ensuring that core components of a program are carried out and that it is implemented with fidelity (Aldridge, Boothroyd, Fleming, et al., 2016). Researchers who have examined implementation frameworks suggest that to be effective, leadership and implementation teams need to include individuals who have decision-making authority within the organization or community, some form of oversight over front-line practitioners’ delivery of a program, and the capacity to both engage others (secure buy-in) and foster a supportive climate for the program (Meyers, Durlak, and Wandersman, 2012).

Implementation teams are part of effective blended strategies for implementation, such as the strategies of Communities That Care (CTC) and Promoting School-Community-University Partnerships to Enhance Resilience (PROSPER) (see Chapter 8). However, research to date has focused more on the factors that influence the functioning of such teams than on their specific effects on the implementation process (Feinberg et al., 2007; Perkins et al., 2011). An exception is a randomized controlled trial of a program now known as Treatment Foster Care Oregon, which targets adolescents with behavioral and other problems. This study showed that although community development teams did not lead to higher rates of or faster implementation, they were associated with greater program reach and more thorough completion of stage-based implementation activities relative to implementation efforts that did not use such teams (Brown et al., 2014). A study of Triple P service organizations found that leadership team capacity was associated with greater organizational implementation capacity and predicted agency sustainment of program delivery (Aldridge, Murray, et al., 2016).

Researchers have also focused on specific aspects of leadership in the context of implementation and pointed to various reasons for its importance. For example, two studies of transformational leadership strategies (those that are motivational and promote innovation and change) compared with other strategies, such as those focused on bidirectional relationships between leaders and followers, found that the former strategies tend to foster a sense that new programs are attainable and reduce perceptions that the program imposes burdens, as well as to promote favorable attitudes toward a new program or practice (Aarons and Sommerfeld, 2012; Brimhall et al., 2016). Strategies often associated with transformational leadership styles include recruiting and selecting staff members receptive to change, offering support and requesting feedback during the implementation process, and ensuring opportunities for hands-on learning experiences (Guerrero et al., 2016).

Other observational studies have identified features of system leadership that contribute to successful, sustained program implementation. These include setting a project mission and vision, planning for program sustainment early and often, setting realistic program plans, and having alternative strategies for program survival (Aarons et al., 2016). Qualitative studies have pointed to other roles played by leadership teams, such as championing the program and marketing it to stakeholders; institutionalizing the program through a combination of funding, contracting, and improvement plans; and fostering multilevel collaborations among state, county, and community stakeholders (Aarons et al., 2016).

Community Coalitions

Successful leadership and implementation teams bring together individuals and groups within organizations and single-system environments and, depending on the scale of the program, across organizations and system environments. For large-scale programs, links across communities are needed. A community coalition is a relatively formal alliance of local organizations and individuals that have engaged to address a community issue collectively.² It serves as a hub for integrating and coordinating efforts, facilitating communication, and mutually reinforcing activities (Billioux, Conway, and Alley, 2017; Hanleybrown, Kania, and Kramer, 2012). The development of a community coalition is therefore a strategy for linking leadership, implementation teams, and other system partners in cross-sector community environments (Hawkins, Catalano, and Arthur, 2002; Spoth and Greenberg, 2011).

Researchers have examined the effectiveness of community coalitions developed for varied purposes. For example, a meta-analysis of studies of 58 community coalition-driven interventions to reduce health disparities among racial and ethnic minority populations yielded several conclusions (Anderson et al., 2015). It showed that coalitions focused on broad health and social care system-level strategies had modest but positive effects, as did coalitions that used lay community health outreach workers or group-based health education led by professional staff. More inconsistent results were found for coalitions that focused on more targeted system-level changes, such as improvements in housing, green spaces, neighborhood safety, and regulatory processes, as well as coalitions that used group-based health education led by peers.

Several studies have investigated factors associated with the success or sustainability of such coalitions and provided evidence for the value of a number of process and structural elements: community readiness; training and fidelity to the coalition process; the presence and formalization of rules; staff competence, focus, cohesion, and enthusiasm; effective board functioning; skilled, capable, and shared leadership models; membership diversity, engagement, and cohesion; member agency collaboration; diversity and leveraging of funding sources; and increases in coalition capacity, data resources, and funding resources (Brown et al., 2015; Brown, Feinberg, and Greenberg, 2010; Feinberg, Bontempo, and Greenberg, 2008; Feinberg et al., 2002; Feinberg, Greenberg, and Osgood, 2004; Gomez, Greenberg, and Feinberg, 2005; Johnson et al., 2017; Kegler and Swan, 2011; Zakocs and Edwards, 2006; Zakocs and Guckenburg, 2007).

___________________

2 See https://www.med.upenn.edu/hbhe4/part4-ch15-community-coalition-actiontheory.shtml for information about Community Coalition Action Theory.

One study focused specifically on the impact of community coalitions on outcomes for youth. A study of coalitions funded through the federally sponsored Strategic Prevention Framework State Incentive Grant showed that internal organization and structure, community connections and outreach, and funding from multiple sources each predicted reductions in one or more outcomes related to underage drinking that were sustained through young adulthood, especially for males (Flewelling and Hanley, 2016; Oesterle et al., 2018).

Learning Collaboratives

Learning collaboratives populated by independent programs with similar goals have been efficient mechanisms for program implementation and improvement over time. Used successfully in health care (Margolis, Peterson, and Seid, 2013), they serve not only as vehicles for joint planning, but also as laboratories for implementing, testing, and improving programs across a spectrum of sites. Sharing of learning within such collaboratives has considerable potential not only to accelerate the development and dissemination of effective programs, but also to support testing of outcomes in multiple sites. Because implementation is a time-consuming and expensive process, novel approaches to effective and efficient scaling of programs will become increasingly important. As with any effort of this complexity, strong leadership, sharing among participants, and infrastructure are key ingredients in success.

Workforce Development Systems

The effectiveness of any program to foster healthy mental, emotional, and behavioral (MEB) development will depend on a well-trained workforce. However, both shortages in the numbers of individuals interested in this work and insufficient professional development for the existing workforce have been documented in health care, early childhood education, K–12 education, and community-based programs (such as home visiting) (Institute of Medicine and National Research Council, 2015). Documentation of the rising prevalence of adverse early childhood experiences, disadvantageous social determinants of behavioral health, and increasing health and educational disparities has focused the attention of policy makers and leaders on the need to strengthen this workforce.

A substantial body of evidence from fields including industrial and organizational psychology points to methods for identifying the attributes needed for particular roles; recruiting, selecting, and retaining workers likely to be successful; and providing continuous opportunities for both formal and informal learning (see, e.g., National Academies of Sciences, Engineering, and Medicine, 2017, 2018b). Education researchers and others have also contributed to a substantial body of work on both preparation and professional development for teachers (see, e.g., Institute of Medicine and National Research Council, 2012, 2015; National Research Council, 2010). We do not review these and other ways of strengthening the early childhood workforce (see, e.g., Institute of Medicine and National Research Council, 2012, 2015; National Research Council, 2010) here, but we note several areas in which researchers have focused on challenges for the MEB health–related workforce (see also Boat, Land, and Leslie, 2017).

The demands on those who work in MEB health-related settings are significant. Effective staffing of child-focused programs and sites requires a pool of individuals who can reflect in their daily activities the delicate balance between fidelity to core program components and the flexibility to meet the needs of those they serve. Staff in such programs frequently work with distressed children and families, and are called upon to bring compassion, patience, and a wide range of skills and strategies to such challenges as helping families provide supportive environments for children. These individuals must also be comfortable with and skilled at working in teams. At the same time, programs associated with fostering MEB health will increasingly integrate research into daily activities, and workers must contribute to ongoing data gathering and be responsive to the resulting need for modifications of programs and practices. Thus, these workers are called on to engage in quality improvement and to work collaboratively in interdisciplinary settings and across sectors of the landscape of programs that serve children and families and promote MEB health.

Program developers frequently specify criteria for recruitment and selection of workers based on the professional qualifications and experience determined to be necessary for staff who can deliver core components of the program design. For example, intensive family interventions that integrate cognitive-behavioral approaches may require practitioners with related training, experience, and possibly certification. However, for a program that relies on more straightforward behavioral practices shown to be effective in a variety of service settings, individuals with less formal training may be able to deliver the intervention reliably and effectively (Embry and Biglan, 2008). These individuals, including peer counselors, parenting counselors, and community health workers, come to the job with varied training, credentialing, and licensing; in many cases they are deeply culturally connected with the communities in which they work, and work at lower salaries than workers with more substantial credentials (Boat et al., 2016).

While many program purveyors provide training and materials as a foundational learning experience for new practitioners, it is important that training be well aligned with the core program components, not only to enhance practitioners’ understanding of the essentials of program delivery but also to increase the efficiency of training processes (not spending an undue amount of time on peripheral or nonessential intervention ingredients). Despite the importance of foundational training in evidence-based practices, such basic training strategies as workshops, reading of treatment manuals, and brief supervision have not been shown to produce adequate training outcomes for practitioners or clients (Beidas and Kendall, 2010; Herschell et al., 2010). Other work has also supported the importance of active learning experiences for training practitioners in evidence-based practices (e.g., Beidas and Kendall, 2010). Other elements of effective training include experiential learning in a multidisciplinary and well-supervised model setting, with frequent evaluation and two-way feedback.

Ongoing coaching and supervision of practitioners and other individuals also play an important role in maintaining an effective workforce, and researchers have examined features that enhance the effectiveness of these activities. For example, Nadeem and colleagues (2013) identify as particularly valuable continuing training close to the time when new skills are to be put into practice, the application of new skills to cases, a focus on skill building and mastery, problem solving related to implementation barriers, adaptation of treatments to meet circumstantial needs, planning for how to sustain the trained skills, and promotion of engagement and accountability. Worker-specific collection of performance data and feedback can be a helpful improvement tool.

The impact of coaching on the actual use of program practices has been demonstrated in varied contexts, including K–12 teaching and medical health coaching³ (see, e.g., Kraft and Blazar, 2018; Kresser, 2017; National Academies of Sciences, Engineering, and Medicine, 2017, 2018b). A theme in this work is that coaching and training can be effective if they incorporate such key features as targeting specific skills practitioners need and helping them link those skills to direct applications. For example, the authors of a meta-analysis of the impact of coaching on teachers found that training alone, even when it included integrated demonstrations, practice opportunities, and feedback, resulted in little applied transfer of innovative practices (Joyce and Showers, 2002). However, when ongoing coaching was provided within classroom environments, large gains were seen in the use and application of practices. Similarly, a study of training workshops for mental health care providers showed that feedback, consultation, and coaching provided as follow-up on material presented in the workshop were essential for improving adoption of the new practices, the development and retention of skills, and outcomes for clients (Herschell et al., 2010). A study of the implementation of new practices in community-based mental health and social service settings reinforces the finding that supportive coaching environments and systematic quality feedback are associated with favorable outcomes even beyond those related to fidelity, such as reduced practitioner turnover and no increase in practitioner burnout (Aarons, Fettes, et al., 2009; Aarons, Sommerfeld, et al., 2009). Thus, while these approaches require time and expertise, they appear to have clear benefits for effective implementation.

___________________

3 A medical coach supplements care given by the physician by providing patient education and supporting patients in adhering to prescribed care or treatments; see, e.g., https://www.ama-assn.org/practice-management/payment-delivery-models/why-yourmedical-practice-needs-health-coach.

Quality and Outcome Monitoring Systems

The collection of information about quality and outcomes is vital to the continuous improvement that fuels effective implementation, and can be done in a variety of ways. Effective monitoring requires a multipurpose data infrastructure that includes systems for monitoring implementation quality and intervention and community-level outcomes, as well as the integration of other sources of relevant data. Quality monitoring systems collect data on implementation or scale-up, including the elements of fidelity (see Chapter 8) and other implementation outcomes as noted in Figure 9-1 and discussed by Proctor and colleagues (2011). Monitoring an array of implementation outcomes can ensure that quality benchmarks are being met and gives early indication of the extent to which intervention and community-level outcomes should be expected. For example, if intervention fidelity is low, it may be useful to increase practitioner supports during program training or coaching or to refine practitioner recruitment and selection criteria. Likewise, if reach is low, community leaders and implementation teams may seek to increase program adoption through the community, train more practitioners, or involve the support of community members and partners to address access problems or stigmas that may be associated with seeking support. Similar strategies could be used to address warning signs in other implementation outcomes.

Intervention and community-level outcome monitoring is a communitywide assessment process for monitoring the well-being of children and youth; its purpose is to identify trends or flag issues across different geographic areas and to contribute to assessments of the progress of programs and practices designed to bring about change. Researchers can use the data collected through such an infrastructure to continually improve a program or practice over time as they learn how the intervention functions in diverse contexts (Chambers, Glasgow, and Stange, 2013). The data can also provide feedback to practitioners and other stakeholders, who can use it to strengthen their contributions to the implementation process.
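The quality-monitoring logic described in this section (comparing implementation outcomes such as fidelity and reach against benchmarks and responding to shortfalls) can be illustrated with a minimal Python sketch. Everything specific here is an assumption made for illustration: the `OutcomeReading` structure, the benchmark values, the observed values, and the wording of the suggested responses, which only paraphrase the options mentioned in the text. It is not drawn from any actual monitoring system.

```python
from dataclasses import dataclass


@dataclass
class OutcomeReading:
    name: str         # implementation outcome, e.g. "fidelity" or "reach"
    value: float      # observed proportion between 0.0 and 1.0 (hypothetical)
    benchmark: float  # locally agreed quality benchmark (hypothetical)


# Hypothetical responses that paraphrase the options named in the text.
SUGGESTED_RESPONSES = {
    "fidelity": "increase practitioner supports in training or coaching; "
                "revisit recruitment and selection criteria",
    "reach": "broaden program adoption, train more practitioners, or work "
             "with community partners on access problems and stigma",
}


def flag_warning_signs(readings):
    """Return (name, shortfall, suggestion) for each outcome below its benchmark."""
    flags = []
    for r in readings:
        if r.value < r.benchmark:
            suggestion = SUGGESTED_RESPONSES.get(
                r.name, "review with the implementation team"
            )
            flags.append((r.name, round(r.benchmark - r.value, 2), suggestion))
    return flags


# Invented example data for one reporting period.
readings = [
    OutcomeReading("fidelity", 0.62, 0.80),
    OutcomeReading("reach", 0.45, 0.40),
    OutcomeReading("acceptability", 0.70, 0.75),
]

for name, shortfall, suggestion in flag_warning_signs(readings):
    print(f"{name}: {shortfall} below benchmark -> {suggestion}")
```

In practice, the choice of outcomes, benchmarks, and responses would be made by the leadership and implementation teams; the sketch shows only how routine data could be turned into early warning signs.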

Community outcome monitoring systems are a critical element of a public health approach to promotion of child well-being and prevention of MEB problems.4 Such public health surveillance systems, which typically collect information about aspects of the health and well-being of children and adolescents, provide local assessment and monitoring data that can be used to prioritize needs, select evidence-based programs, and monitor program results. They identify the existence of problems, their effects, trends in their incidence, and the results of interventions (Rivara and Johnston, 2013). Such systems are also critical to implementation research, allowing scholars to pinpoint problems to be addressed, provide a basis for sound choices of interventions, and assess program impacts (Spoth et al., 2013). The Society for Prevention Research has described the key features of successful community monitoring systems (see Box 9-2). Spoth and colleagues (2013) also highlight the importance of using repeated assessments to monitor progress toward goals once a plan for implementing promotion and prevention programming is in place. The ideal may be to collect data and provide real-time feedback to participants continually so that implementation considerations and improvement opportunities remain closely connected.

___________________

4 For more information on community monitoring systems, see https://www.preventionresearch.org/advocacy/community-monitoring-systems.

It is essential that both relevant MEB outcomes and the prevalence of risk and protective factors be included in these measures, and that local-level data be collected to capture variations in the incidence of MEB problems and the presence of risk and protective factors across communities and neighborhoods (Fagan, Hawkins, and Catalano, 2008). Local variations in need and risk can be quite marked, as demonstrated by data from the Communities That Care Youth Survey (Arthur et al., 2007; Briney et al., 2012; Fagan, Hawkins, and Catalano, 2008). For example, a study comparing two high school populations from the same city showed that elevated risk factors at one high school included poor family management, parental attitudes favorable toward substance use, friends’ use of drugs, and prevalence of favorable attitudes toward drug use (Briney et al., 2012). Elevated risk factors at the other high school indicated a need for programs to reduce community disorganization, academic failure, and interaction with antisocial peers.
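The kind of local comparison described above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the scoring method of the Communities That Care Youth Survey: the function name `elevated_risk_factors`, the 0.10 margin, the reference values, and the school-level proportions are all invented, and the survey's own scales and cut points are not reproduced here.

```python
def elevated_risk_factors(school_scores, reference_scores, margin=0.10):
    """Return risk factors whose prevalence exceeds the reference value
    by more than `margin`, ordered from largest gap to smallest."""
    gaps = {
        factor: score - reference_scores[factor]
        for factor, score in school_scores.items()
        if factor in reference_scores
    }
    elevated = [factor for factor, gap in gaps.items() if gap > margin]
    return sorted(elevated, key=lambda factor: gaps[factor], reverse=True)


# Hypothetical proportions of students above a risk threshold.
reference = {
    "poor family management": 0.30,
    "academic failure": 0.35,
    "friends' use of drugs": 0.25,
    "community disorganization": 0.30,
}
school_a = {
    "poor family management": 0.48,
    "academic failure": 0.33,
    "friends' use of drugs": 0.41,
    "community disorganization": 0.31,
}
school_b = {
    "poor family management": 0.29,
    "academic failure": 0.52,
    "friends' use of drugs": 0.27,
    "community disorganization": 0.50,
}

print("School A:", elevated_risk_factors(school_a, reference))
print("School B:", elevated_risk_factors(school_b, reference))
```

With these invented numbers, the two schools show different elevated risk factors even though they share a reference population, which is the point the example in the text makes about matching programs to local profiles.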

Once a monitoring system is in place, periodic reassessment will identify changes in levels of MEB outcomes and risk and protective factors, which can in turn be used to assess progress in reducing problems and provide early warning of emerging problems related to, for example, an economic downturn, rapid population growth, or other influences. Selection of the survey instrument, procedures for administering the survey and scoring the data, and training for all stakeholders who will use the data are all critical to the utility of a community monitoring system. Surveys that have been or could be used for this purpose include

- the Communities That Care Youth Survey (Beyers et al., 2004; Bond et al., 2005; Catalano et al., 2012; Fagan, Hawkins, and Catalano, 2008; Fleming et al., 2019; Glaser et al., 2005; Hemphill et al., 2011; Oesterle et al., 2012);

- Monitoring the Future (Johnston et al., 2010);

- the Centers for Disease Control and Prevention’s Youth Risk Behavior Survey;

- the European School Survey Project on Alcohol and Other Drugs (Hibell et al., 2009);

- the Global Student Health Survey (World Health Organization, n.d.); and

- the Early Development Index (Catalano et al., 2012; Janus and Offord, 2007).

We note also that a considerable amount of data is routinely collected as children and youth go about their lives, and much of these data have potential utility for researching and monitoring MEB health and development. Electronic data capture systems are used in health care, schools, and other child care and services settings. The opportunity to use big data techniques5 for observational research has not yet been well utilized for MEB health–related research, but use of these techniques is likely to increase in this context (Van Poucke et al., 2016). See Chapter 11 for further discussion of quality and outcome monitoring.

Communications and Media Systems

The presence of digital media in daily life, particularly among children and youth, provides the opportunity to include targeted outreach as part of almost any kind of large-scale MEB health program. Existing research suggests that mass media could contribute to efforts both to strengthen prosocial behavior and to prevent MEB problems in families and schools.

A 2010 review of studies on the impact of media campaigns designed to affect public health showed that mass media (both radio and television) can have a significant impact on a wide variety of health behaviors (Wakefield, Loken, and Hornik, 2010). The authors found evidence that such campaigns may both influence individuals’ decisions about their behavior and have indirect effects, for example, through the influence of people directly exposed to the campaign on others not exposed to it, or by increasing general support for both norms and public policies. Campaigns appear to have increased effectiveness when products and services to support health behavior change are concurrently available through community-based programs and, more broadly, policies are in place to support changes in targeted health behaviors. Media campaigns targeting smoking have been studied especially thoroughly, and have been shown to both promote quitting and discourage young people from starting the habit, but effects have been found in other areas as well.6 The Triple P system of interventions has also developed universal media-based strategies. The premise of these strategies is that media have the potential to influence aspects of child and adolescent development by directly affecting young people or by influencing parents’ behavior and shaping norms and public policies (Sanders and Prinz, 2008). More recently, researchers have focused on the potential value of communicating through existing, or natural, community, social, and professional networks (Palinkas et al., 2011; Valente et al., 2015). (See Chapter 10 for discussion of technology-based developments.)

___________________

5 While there is no one best definition of the term “big data,” it is generally used to refer to extremely large sets of digital data that cannot be digested without advanced analytic techniques (National Academies of Sciences, Engineering, and Medicine, 2019, pp. 2–10).

SUMMARY

By 2009, researchers had identified many approaches that can be effective in improving MEB health and development and began to focus on the challenges of implementing those approaches at scales broad enough to benefit large populations. In the past decade, researchers have learned more about what goes into effective adaptation of programs, tracking of fidelity, and other elements of this complex process. There is more to learn about how to support and sustain implementation systems, but it is clear that successful implementation of an MEB health promotion or prevention program at a population scale is a complex endeavor that depends on the involvement of multiple partners to create system capacity:

- Community members build relationships, support culturally relevant adaptation, provide communication and feedback, and partner with researchers and service providers.

- Service providers execute the program strategies and provide feedback on program fit, delivery, and reception.

- Funders provide or help secure sustained resources.

- Policy makers secure resources as well as political and community support, and act in partnership with local service providers and researchers.

- Purveyors and intermediary organizations oversee program delivery, provide expertise, collect evaluation data and feedback from practitioners and clients, and collaborate with local service agencies, community members, and researchers.

- Researchers generate and improve the program design to meet community needs, analyze data and collaborate with service providers and purveyors to fine-tune the program and address problems, monitor program fidelity, conduct evaluations, and analyze results.

These stakeholders work together to develop and operate the complex system that makes implementation possible.

___________________

6 Behaviors for which effects were found included physical activity, nutrition, cardiovascular disease prevention, birth rate reduction, HIV infection reduction, cervical cancer screening, breast cancer screening, immunization, diarrheal disease, organ donation, seat belt use, and reduction of drunk driving. However, for some behaviors, such as promoting parenting strategies for reducing drug use, media campaigns were not effective (Wakefield, Loken, and Hornik, 2010).

Key elements that strengthen organizational infrastructure for the implementation system include

- leadership and implementation teams (including their collaboration and coordination within community coalitions);

- workforce development systems;

- quality and outcome monitoring systems; and

- communications and media systems.

- trends in risk and protective factors and other influences on MEB development, and other relevant data;

- learning through evaluation and improvement; and

- multiple methods of communication to publicize program objectives and share them with stakeholders and the community at large.

REFERENCES

Aarons, G.A., and Sommerfeld, D.H. (2012). Leadership, innovation climate, and attitudes toward evidence-based practice during a statewide implementation. Journal of the American Academy of Child & Adolescent Psychiatry, 51(4), 423–431.

Aarons, G.A., Fettes, D.L., Flores, L.E., Jr., and Sommerfeld, D.H. (2009). Evidence-based practice implementation and staff emotional exhaustion in children’s services. Behaviour Research and Therapy, 47(11), 954–960.

Aarons, G.A., Green, A.E., Trott, E., Willging, C.E., Torres, E.M., Ehrhart, M.G., and Roesch, S.C. (2016). The roles of system and organizational leadership in system-wide evidence-based intervention sustainment: A mixed-method study. Administration and Policy in Mental Health, 43(6), 991–1008.

Aarons, G.A., Sommerfeld, D.H., Hecht, D.B., Silovsky, J.F., and Chaffin, M.J. (2009). The impact of evidence-based practice implementation and fidelity monitoring on staff turnover: Evidence for a protective effect. Journal of Consulting and Clinical Psychology, 77(2), 270–280.

Aldridge, W.A., II, Boothroyd, R.I., Fleming, W.O., Lofts Jarboe, K., Morrow, J., Ritchie, G.F., and Sebian, J. (2016). Transforming community prevention systems for sustained impact: Embedding active implementation and scaling functions. Translational Behavioral Medicine, 6(1), 135–144.

Aldridge, W.A., II, Boothroyd, R.I., Veazey, C.A., Powell, B.J., Murray, D.W., and Prinz, R.J. (2016). Ensuring active implementation support for North Carolina counties scaling the Triple P system of interventions. Chapel Hill: Frank Porter Graham Child Development Institute, University of North Carolina at Chapel Hill.

Aldridge, W.A., II, Murray, D.W., Prinz, R.J., and Veazey, C.A. (2016). Final report and recommendations: The Triple P implementation evaluation, Cabarrus and Mecklenburg counties, NC. Chapel Hill: University of North Carolina at Chapel Hill. Available: https://ictp.fpg.unc.edu/sites/ictp.fpg.unc.edu/files/resources/TPIE%20Final%20Report_Jan2016_1.pdf.

Anderson, L.M., Adeney, K.L., Shinn, C., Safranek, S., Buckner-Brown, J., and Krause, L.K. (2015). Community coalition-driven interventions to reduce health disparities among racial and ethnic minority populations. Cochrane Database of Systematic Reviews, 2015(6), CD009905.

Armstrong, R., Waters, E., Dobbins, M., Anderson, L., Moore, L., Petticrew, M., Clark, R., Pettman, T.L., Burns, C., Moodie, M., Conning, R., and Swinburn, B. (2013). Knowledge translation strategies to improve the use of evidence in public health decision making in local government: Intervention design and implementation plan. Implementation Science, 8(1), 121. doi:10.1186/1748-5908-8-121.

Arthur, M.W., Briney, J.S., Hawkins, J.D., Abbott, R.D., Brooke-Weiss, B.L., and Catalano, R.F. (2007). Measuring risk and protection in communities using the Communities That Care Youth Survey. Evaluation and Program Planning, 30(2), 197–211.

Barnes, M., and Schmitz, P. (2016). Community engagement matters (now more than ever). Stanford Social Innovation Review, 16. Available: https://ssir.org/articles/entry/community_engagement_matters_now_more_than_ever.

Beidas, R.S., and Kendall, P.C. (2010). Training therapists in evidence-based practice: A critical review of studies from a systems-contextual perspective. Clinical Psychology: Science and Practice, 17(1), 1–30. doi:10.1111/j.1468-2850.2009.01187.x.

Beyers, J.M., Toumbourou, J.W., Catalano, R.F., Arthur, M.W., and Hawkins, J.D. (2004). A cross-national comparison of risk and protective factors for adolescent substance use: The United States and Australia. Journal of Adolescent Health, 35(1), 3–16.

Billioux, A., Conway, P.H., and Alley, D.E. (2017). Addressing population health: Integrators in the accountable health communities model. Journal of the American Medical Association, 318(19), 1865–1866.

Boat, T.F., Land, M.L., Jr., and Leslie, L.K. (2017). Health care workforce development to enhance mental and behavioral health of children and youths. JAMA Pediatrics, 171(11), 1031–1032.

Boat, T.F., Land, M.L., Leslie, L.K., Hoagwood, K.E., Hawkins-Walsh, E., McCabe, M.A., Fraser, M.W., de Saxe Zerden, L., Lombardi, B.M., Fritz, G.K., Frogner, B.K., Hawkins, J.D., and Sweeney, M. (2016). Workforce development to enhance the cognitive, affective, and behavioral health of children and youth: Opportunities and barriers in child health care training. Discussion Paper, National Academy of Medicine, Washington, DC. doi:10.31478/201611b.

Bond, L., Toumbourou, J., Thomas, L., Catalano, R., and Patton, G. (2005). Individual, family, school, and community risk and protective factors for depressive symptoms in adolescents: A comparison of risk profiles for substance use and depressive symptoms. Prevention Science, 6(2), 73–88.

Boothroyd, R.I., Flint, A.Y., Lapiz, A.M., Lyons, S., Jarboe, K.L., and Aldridge, W.A., II. (2017). Active involved community partnerships: Co-creating implementation infrastructure for getting to and sustaining social impact. Translational Behavioral Medicine, 7(3), 467–477.

Brimhall, K.C., Fenwick, K., Farahnak, L.R., Hurlburt, M.S., Roesch, S.C., and Aarons, G.A. (2016). Leadership, organizational climate, and perceived burden of evidence-based practice in mental health services. Administration and Policy in Mental Health, 43(5), 629–639.

Briney, J.S., Brown, E.C., Hawkins, J.D., and Arthur, M.W. (2012). Predictive validity of established cut points for risk and protective factor scales from the Communities That Care Youth Survey. Journal of Primary Prevention, 33(5–6), 249–258.

Brown, C.H., Chamberlain, P., Saldana, L., Padgett, C., Wang, W., and Cruden, G. (2014). Evaluation of two implementation strategies in 51 child county public service systems in two states: Results of a cluster randomized head-to-head implementation trial. Implementation Science, 9(1), 134.

Brown, L.D., Feinberg, M.E., and Greenberg, M.T. (2010). Determinants of community coalition ability to support evidence-based programs. Prevention Science, 11(3), 287–297.

Brown, L.D., Feinberg, M.E., Shapiro, V.B., and Greenberg, M.T. (2015). Reciprocal relations between coalition functioning and the provision of implementation support. Prevention Science, 16(1), 101–109.

Bumbarger, B.K., and Campbell, E.M. (2012). A state agency–university partnership for translational research and the dissemination of evidence-based prevention and intervention. Administration and Policy in Mental Health, 39(4), 268–277.

Catalano, R.F., Fagan, A.A., Gavin, L.E., Greenberg, M.T., Irwin, C.E., Jr., Ross, D.A., and Shek, D.T. (2012). Worldwide application of prevention science in adolescent health. The Lancet, 379(9826), 1653–1664.

Chambers, D.A., and Azrin, S.T. (2013). Research and services partnerships: Partnership: A fundamental component of dissemination and implementation research. Psychiatric Services, 64(6), 509–511.

Chambers, D.A., Glasgow, R.E., and Stange, K.C. (2013). The dynamic sustainability framework: Addressing the paradox of sustainment amid ongoing change. Implementation Science, 8(1), 117.

Chilenski, S.M., Olson, J.R., Schulte, J.A., Perkins, D.F., and Spoth, R. (2015). A multi-level examination of how the organizational context relates to readiness to implement prevention and evidence-based programming in community settings. Evaluation and Program Planning, 48, 63–74.

Chinman, M., Acosta, J.D., Ebener, P.A., Sigel, C., and Keith, J. (2016). Getting to Outcomes® guide for teen pregnancy prevention. Available: https://www.rand.org/content/dam/rand/pubs/tools/TL100/TL199/RAND_TL199.pdf.

D’Angelo, G., Pullmann, M.D., and Lyon, A.R. (2017). Community engagement strategies for implementation of a policy supporting evidence-based practices: A case study of Washington state. Administration and Policy in Mental Health and Mental Health Services Research, 44(1), 6–15.

Deverka, P.A., Lavallee, D.C., Desai, P.J., Esmail, L.C., Ramsey, S.D., Veenstra, D.L., and Tunis, S.R. (2012). Stakeholder participation in comparative effectiveness research: Defining a framework for effective engagement. Journal of Comparative Effectiveness Research, 1(2), 181–194.

Dymnicki, A.B., Wandersman, A., Osher, D., Grigorescu, V., and Huang, L. (2014). Willing, able → ready: Basics and policy implications of readiness as a key component for implementation of evidence-based interventions. Washington, DC: Office of the Assistant Secretary for Planning and Evaluation, Office of Human Services Policy, U.S. Department of Health and Human Services.

Embry, D.D., and Biglan, A. (2008). Evidence-based kernels: Fundamental units of behavioral influence. Clinical Child and Family Psychology Review, 11(3), 75–113.

Fagan, A.A., Hawkins, J.D., and Catalano, R.F. (2008). Using community epidemiologic data to improve social settings: The Communities That Care prevention system. In M. Shinn and H. Yoshikawa (Eds.), Toward positive youth development: Transforming schools and community programs (pp. 292–312). New York: Oxford University Press. doi:10.1093/acprof.oso/9780195327892.003.0016.

Feinberg, M.E., Bontempo, D.E., and Greenberg, M.T. (2008). Predictors and level of sustainability of community prevention coalitions. American Journal of Preventive Medicine, 34(6), 495–501.

Feinberg, M.E., Chilenski, S.M., Greenberg, M.T., Spoth, R.L., and Redmond, C. (2007). Community and team member factors that influence the operations phase of local prevention teams: The PROSPER project. Prevention Science, 8(3), 214–226.

Feinberg, M.E., Greenberg, M.T., and Osgood, D.W. (2004). Readiness, functioning, and perceived effectiveness in community prevention coalitions: A study of Communities That Care. American Journal of Community Psychology, 33(3–4), 163–176.

Feinberg, M.E., Greenberg, M.T., Osgood, D.W., Anderson, A., and Babinski, L. (2002). The effects of training community leaders in prevention science: Communities That Care in Pennsylvania. Evaluation and Program Planning, 25(3), 245–259.

Fixsen, D.L., Naoom, S.F., Blase, K.A., Friedman, R.M., and Wallace, F. (2005). Implementation research: A synthesis of the literature. (Publication No. 231). Tampa: University of South Florida, Louis de la Parte Florida Mental Health Institute, National Implementation Research Network.

Flaspohler, P.D., Meehan, C., Maras, M.A., and Keller, K.E. (2012). Ready, willing, and able: Developing a support system to promote implementation of school-based prevention programs. American Journal of Community Psychology, 50(3–4), 428–444.

Fleming, C.M., Eisenberg, N., Catalano, R.F., Kosterman, R., Cambron, C., Hawkins, J.D., Hobbs, T., Berman, I., Fleming, T., and Watrous, J. (2019). Optimizing assessment of risk and protection for diverse adolescent outcomes: Do risk and protective factors for delinquency and substance use also predict risky sexual behavior? Prevention Science, 20(5), 788–799. doi:10.1007/s11121-019-0987-9.

Flewelling, R.L., and Hanley, S.M. (2016). Assessing community coalition capacity and its association with underage drinking prevention effectiveness in the context of the SPF SIG. Prevention Science, 17(7), 830–840.

Franks, R. (2010). Role of the intermediary organization in promoting and disseminating best practices for children and youth: The Connecticut Center for Effective Practice. Emotional & Behavioral Disorders in Youth, 10(4), 87–93.

Glaser, R.R., Lee Van Horn, M., Arthur, M.W., Hawkins, J.D., and Catalano, R.F. (2005). Measurement properties of the Communities That Care® Youth Survey across demographic groups. Journal of Quantitative Criminology, 21(1), 73–102.

Gomez, B.J., Greenberg, M.T., and Feinberg, M.E. (2005). Sustainability of community coalitions: An evaluation of Communities That Care. Prevention Science, 6(3), 199–202.

Graham, P.W., Kim, M.M., Clinton-Sherrod, A.M., Yaros, A., Richmond, A.N., Jackson, M., and Corbie-Smith, G. (2016). What is the role of culture, diversity, and community engagement in transdisciplinary translational science? Translational Behavioral Medicine: Practice, Policy, Research, 6(1), 115–124.

Guerrero, E.G., Padwa, H., Fenwick, K., Harris, L.M., and Aarons, G.A. (2016). Identifying and ranking implicit leadership strategies to promote evidence-based practice implementation in addiction health services. Implementation Science, 11, 69.

Hanleybrown, F., Kania, J., and Kramer, M.R. (2012). Channeling change: Making collective impact work. Available: https://www.iowacollegeaid.gov/sites/default/files/D.%20Channeling_Change_Article%202.pdf.

Hawkins, J.D., Catalano, R.F., and Arthur, M.W. (2002). Promoting science-based prevention in communities. Addictive Behaviors, 27(6), 951–976.

Hemphill, S.A., Heerde, J.A., Herrenkohl, T.I., Patton, G.C., Toumbourou, J.W., and Catalano, R.F. (2011). Risk and protective factors for adolescent substance use in Washington state, the United States and Victoria, Australia: A longitudinal study. The Journal of Adolescent Health: Official Publication of the Society for Adolescent Medicine, 49(3), 312–320.

Herschell, A.D., Kolko, D.J., Baumann, B.L., and Davis, A.C. (2010). The role of therapist training in the implementation of psychosocial treatments: A review and critique with recommendations. Clinical Psychology Review, 30(4), 448–466.

Hibell, B., Guttormsson, U., Ahlstrom, S., Balakireva, O., Bjarnason, T., Kokkevi, A., and Kraus, L. (2009). The 2007 ESPAD report: Substance use among students in 35 European countries. Stockholm, Sweden: Swedish Council for Information on Alcohol and Other Drugs.

Higgins, M.C., Weiner, J., and Young, L. (2012). Implementation teams: A new lever for organizational change. Journal of Organizational Behavior, 33(3), 366–388.

Horner, R.H., Blitz, C., and Ross, S.W. (2014). The importance of contextual fit when implementing evidence-based interventions. Washington, DC: Office of the Assistant Secretary for Planning and Evaluation, Office of Human Services Policy, U.S. Department of Health and Human Services.

Institute of Medicine and National Research Council. (2012). The early childhood care and education workforce: Challenges and opportunities: A workshop report. Washington, DC: The National Academies Press. doi:10.17226/13238.

Institute of Medicine and National Research Council. (2015). Transforming the workforce for children birth through age 8: A unifying foundation. Washington, DC: The National Academies Press. doi:10.17226/19401.

International Association for Public Participation. (2014). IAP2’s Public Participation Spectrum. Available: https://cdn.ymaws.com/www.iap2.org/resource/resmgr/foundations_course/IAP2_P2_Spectrum_FINAL.pdf.

Janus, M., and Offord, D.R. (2007). Development and psychometric properties of the early development instrument (EDI): A measure of children’s school readiness. Canadian Journal of Behavioural Science, 39(1), 1–22.

Johnson, K., Collins, D., Shamblen, S., Kenworthy, T., and Wandersman, A. (2017). Long-term sustainability of evidence-based prevention interventions and community coalitions survival: A five and one-half year follow-up study. Prevention Science, 18(5), 610–621.

Johnston, L., O’Malley, P.M., Bachman, J.G., and Schulenberg, J.E. (2010). Monitoring the future: National survey results on drug use, 1975–2009 (Vol. II). Available: http://monitoringthefuture.org/pubs/monographs/vol2_2009.pdf.

Joyce, B.R., and Showers, B. (2002). Student achievement through staff development (3rd edition). Alexandria, VA: Association for Supervision and Curriculum Development.

Kegler, M.C., and Swan, D.W. (2011). An initial attempt at operationalizing and testing the community coalition action theory. Health Education & Behavior: The Official Publication of the Society for Public Health Education, 38(3), 261–270.

Kraft, M.A., and Blazar, D. (2018). Taking teacher coaching to scale. EdNext, 18(4). Available: https://www.educationnext.org/taking-teacher-coachingto-scale-can-personalized-training-become-standard-practice.

Kresser, C. (2017). The importance of health coaches in combating chronic disease. Available: https://kresserinstitute.com/importance-health-coachescombating-chronic-disease.

Lavallee, D.C., Williams, C.J., Tambor, E.S., and Deverka, P.A. (2012). Stakeholder engagement in comparative effectiveness research: How will we measure success? Journal of Comparative Effectiveness Research, 1(5), 397–407.

Margolis, P.A., Peterson, L.E., and Seid, M. (2013). Collaborative Chronic Care Networks (C3Ns) to transform chronic illness care. Pediatrics, 131(Suppl. 4), S219–S223.

McWilliam, J., Brown, J., Sanders, M.R., and Jones, L. (2016). The Triple P implementation framework: The role of purveyors in the implementation and sustainability of evidence-based programs. Prevention Science, 17(5), 636–645.

Mettrick, J., Harburger, D.S., Kanary, P.J., Lieman, R.B., and Zabel, M. (2015). Building cross-system implementation centers: A roadmap for state and local child serving agencies in developing centers of excellence (COE). Baltimore, MD: University of Maryland, The Institute for Innovation and Implementation.

Metz, A. (2015). Implementation brief: The potential of co-creation in implementation science. National Implementation Research Network. Available: https://nirn.fpg.unc.edu/sites/nirn.fpg.unc.edu/files/resources/NIRN-Metz-ImplementationBreif-CoCreation.pdf.

Metz, A., and Albers, B. (2014). What does it take? How federal initiatives can support the implementation of evidence-based programs to improve outcomes for adolescents. Journal of Adolescent Health, 54(Suppl. 3), S92–S96.

Meyers, D.C., Durlak, J.A., and Wandersman, A. (2012). The quality implementation framework: A synthesis of critical steps in the implementation process. American Journal of Community Psychology, 50(3–4), 462–480.

Mrazek, P.B., Biglan, A., and Hawkins, J.D. (2004). Community-monitoring systems: Tracking and improving the well-being of America’s children and adolescents. Falls Church, VA: Society for Prevention Research.

Nadeem, E., Gleacher, A., and Beidas, R.S. (2013). Consultation as an implementation strategy for evidence-based practices across multiple contexts: Unpacking the black box. Administration and Policy in Mental Health and Mental Health Services Research, 40(6), 439–450. doi:10.1007/s10488-013-0502-8.

National Academies of Sciences, Engineering, and Medicine. (2017). Building America’s skilled technical workforce. Washington, DC: The National Academies Press. doi:10.17226/23472.

National Academies of Sciences, Engineering, and Medicine. (2018). Workforce development and intelligence analysis for national security purposes: Proceedings of a workshop. Washington, DC: The National Academies Press. doi:10.17226/25117.

National Academies of Sciences, Engineering, and Medicine. (2019). A decadal survey of the social and behavioral sciences: A research agenda for advancing intelligence analysis. Washington, DC: The National Academies Press. doi:10.17226/25335.

National Research Council. (2010). Preparing teachers: Building evidence for sound policy. Washington, DC: The National Academies Press. doi:10.17226/12882.

Oesterle, S., Hawkins, J.D., Steketee, M., Jonkman, H., Brown, E.C., Moll, M., and Haggerty, K.P. (2012). A cross-national comparison of risk and protective factors for adolescent drug use and delinquency in the United States and the Netherlands. Journal of Drug Issues, 42(4), 337–357.

Oesterle, S., Kuklinski, M.R., Hawkins, J.D., Skinner, M.L., Guttmannova, K., and Rhew, I.C. (2018). Long-term effects of the Communities That Care trial on substance use, antisocial behavior, and violence through age 21 years. American Journal of Public Health, 108(5), 659–665.

Palinkas, L.A., Aarons, G.A., Chorpita, B.F., Hoagwood, K., Landsverk, J., and Weisz, J.R. (2009). Cultural exchange and the implementation of evidence-based practices: Two case studies. Research on Social Work Practice, 19(5), 602–612.

Palinkas, L.A., Holloway, I.W., Rice, E., Fuentes, D., Wu, Q., and Chamberlain, P. (2011). Social networks and implementation of evidence-based practices in public youth-serving systems: A mixed-methods study. Implementation Science, 6(1), 113.

Perkins, D.F., Feinberg, M.E., Greenberg, M.T., Johnson, L.E., Chilenski, S.M., Mincemoyer, C.C., and Spoth, R.L. (2011). Team factors that predict to sustainability indicators for community-based prevention teams. Evaluation and Program Planning, 34(3), 283–291.

Pfitzer, M.W., Bockstette, V., and Stamp, M. (2013). Innovating for shared value. Harvard Business Review, September 2013. Available: https://hbr.org/2013/09/innovating-for-shared-value.

Powell, B.J., Beidas, R.S., Rubin, R.M., Stewart, R.E., Wolk, C.B., Matlin, S.L., Weaver, S., Hurford, M.O., Evans, A.C., Hadley, T.R., and Mandell, D.S. (2016). Applying the policy ecology framework to Philadelphia’s behavioral health transformation efforts. Administration and Policy in Mental Health, 43(6), 909–926.

Proctor, E., Silmere, H., Raghavan, R., Hovmand, P., Aarons, G., Bunger, A., Griffey, R., and Hensley, M. (2011). Outcomes for implementation research: Conceptual distinctions, measurement challenges, and research agenda. Administration and Policy in Mental Health and Mental Health Services Research, 38(2), 65–76.

Purtle, J., Brownson, R.C., and Proctor, E.K. (2017). Infusing science into politics and policy: The importance of legislators as an audience in mental health policy dissemination research. Administration and Policy in Mental Health and Mental Health Services Research, 44(2), 160–163.

Rhoades, K.A., Leve, L.D., Harold, G.T., Mannering, A.M., Neiderhiser, J.M., Shaw, D.S., Natsuaki, M.N., and Reiss, D. (2012). Marital hostility and child sleep problems: Direct and indirect associations via hostile parenting. Journal of Family Psychology, 26(4), 488–498.

Rivara, F.P., and Johnston, B. (2013). Effective primary prevention programs in public health and their applicability to the prevention of child maltreatment. Child Welfare, 92(2), 119–139.

Romney, S., Israel, N., and Zlatevski, D. (2014). Exploration-stage implementation variation: Its effect on the cost-effectiveness of an evidence-based parenting program. Journal of Psychology, 222(1), 37–48.

Sanders, M.R., and Kirby, J.N. (2012). Consumer engagement and the development, evaluation, and dissemination of evidence-based parenting programs. Behavior Therapy, 43(2), 236–250.

Sanders, M.R., and Prinz, R. (2008). Using the mass media as a population level strategy to strengthen parenting skills. Journal of Clinical Child & Adolescent Psychology, 37(3), 609–621. doi:10.1080/15374410802148103.

Scaccia, J.P., Cook, B.S., Lamont, A., Wandersman, A., Castellow, J., Katz, J., and Beidas, R.S. (2015). A practical implementation science heuristic for organizational readiness: R = mc(2). Journal of Community Psychology, 43(4), 484–501.

Schoenwald, S.K., and Henggeler, S.W. (2003). Introductory comments. Cognitive and Behavioral Practice, 10(4), 275–277.

Spoth, R., and Greenberg, M. (2011). Impact challenges in community science-with-practice: Lessons from PROSPER on transformative practitioner–scientist partnerships and prevention infrastructure development. American Journal of Community Psychology, 48(1–2), 106–119.

Spoth, R., Rohrbach, L.A., Greenberg, M., Leaf, P., Brown, C.H., Fagan, A., Catalano, R.F., Pentz, M.A., Sloboda, Z., and Hawkins, J.D. (2013). Addressing core challenges for the next generation of type 2 translation research and systems: The translation science to population impact (TSci Impact) framework. Prevention Science, 14(4), 319–351. doi:10.1007/s11121-012-0362-6.

Tabak, R.G., Khoong, E.C., Chambers, D.A., and Brownson, R.C. (2012). Bridging research and practice: Models for dissemination and implementation research. American Journal of Preventive Medicine, 43(3), 337–350. doi:10.1016/j.amepre.2012.05.024.

Taylor, M.J., McNicholas, C., Nicolay, C., Darzi, A., Bell, D., and Reed, J.E. (2014). Systematic review of the application of the plan-do-study-act method to improve quality in healthcare. BMJ Quality & Safety, 23(4), 290. doi:10.1136/bmjqs-2013-001862.

Tricco, A.C., Cardoso, R., Thomas, S.M., Motiwala, S., Sullivan, S., Kealey, M.R., Hemmelgarn, B., Ouimet, M., Hillmer, M.P., Perrier, L., Shepperd, S., and Straus, S.E. (2016). Barriers and facilitators to uptake of systematic reviews by policy makers and health care managers: A scoping review. Implementation Science, 11, 4.

Valente, T.W., Palinkas, L.A., Czaja, S., Chu, K.H., and Brown, C.H. (2015). Social network analysis for program implementation. PLoS One, 10(6), e0131712.

Van Poucke, S., Thomeer, M., Heath, J., and Vukicevic, M. (2016). Are randomized controlled trials the (g)old standard? From clinical intelligence to prescriptive analytics. Journal of Medical Internet Research, 18(7), e185.

Voorberg, W.H., Bekkers, V.J.J.M., and Tummers, L.G. (2015). A systematic review of co-creation and co-production: Embarking on the social innovation journey. Public Management Review, 17(9), 1333–1357.

Wakefield, M.A., Loken, B., and Hornik, R.C. (2010). Use of mass media campaigns to change health behaviour. The Lancet, 376(9748), 1261–1271.

Walker, S.C., Whitener, R., Trupin, E.W., and Migliarini, N. (2015). American Indian perspectives on evidence-based practice implementation: Results from a statewide tribal mental health gathering. Administration and Policy in Mental Health and Mental Health Services Research, 42(1), 29–39.

Waltz, T.J., Powell, B.J., Matthieu, M.M., Damschroder, L.J., Chinman, M.J., Smith, J.L., Proctor, E.K., and Kirchner, J.E. (2015). Use of concept mapping to characterize relationships among implementation strategies and assess their feasibility and importance: Results from the Expert Recommendations for Implementing Change (ERIC) study. Implementation Science, 10(1), 109. doi:10.1186/s13012-015-0295-0.

Webster-Stratton, C.H., Reid, M.J., and Marsenich, L. (2014). Improving therapist fidelity during implementation of evidence-based practices: Incredible years program. Psychiatric Services, 65(6), 789–795.

Weiner, B.J. (2009). A theory of organizational readiness for change. Implementation Science, 4, 67.

Willging, C.E., Green, A.E., Gunderson, L., Chaffin, M., and Aarons, G.A. (2015). From a “perfect storm” to “smooth sailing”: Policymaker perspectives on implementation and sustainment of an evidence-based practice in two states. Child Maltreatment, 20(1), 24–36.

World Health Organization. (n.d.). Global School-based Student Health Survey (GSHS). Available: https://www.who.int/ncds/surveillance/gshs/en.

Zakocs, R., and Guckenburg, S. (2007). What coalition factors foster community capacity? Lessons learned from the fighting back initiative. Health Education & Behavior, 34(2), 354–375.

Zakocs, R.C., and Edwards, E.M. (2006). What explains community coalition effectiveness?: A review of the literature. American Journal of Preventive Medicine, 30(4), 351–361.
