Appendix A

The Role of Assessment in Supporting Science Investigation and Engineering Design

A reader of this report may notice the absence of a chapter titled Assessment. This was a deliberate choice by the committee. It was made first in recognition of the contribution of the report Developing Assessments for the Next Generation Science Standards (National Research Council, 2014), and second in light of the importance of integrating assessment seamlessly into the vision of science investigation and engineering design articulated throughout this report. Table A-1 guides the reader to the places in the report most relevant to assessment. The next section gives an overview of three empirically supported ideas for assessment systems that provide strong evidence of student learning in science investigation and engineering design. We then present worked examples of the design and enactment of classroom assessment (Kang, Thompson, and Windschitl, 2014) to illustrate how this approach can support science investigation and engineering design. Finally, the last section gives an example of how classroom discourse can serve as assessment (Coffey et al., 2011). This approach can also be applied to the assessment of engineering design (Alemdar et al., 2017; Purzer, 2018).

EMPIRICALLY SUPPORTED IDEAS FOR ASSESSMENT SYSTEMS

- The Assessment Triangle (National Research Council, 2001) identifies three components of an assessment system that, when aligned, provide strong evidence of student learning: the learning goals (cognition), the tasks (observation), and the system of interpretation, including the coding rubric (interpretation). As learning goals have shifted to Framework-inspired (National Research Council, 2014) three-dimensional (3D) learning, modifications to assessment tasks and interpretation systems that maintain this alignment must be considered.

- Classroom-based investigation and design assessment systems have the following characteristics, whether they are used for formative or summative purposes:

  - Students’ performance on the tasks reveals evidence of progress in 3D learning along a continuum between expected beginning and ending points relative to the learning expectations.

  - The coding rubric and system of interpretation provide evidence of students’ progress across a range of student abilities (Gotwals and Songer, 2013).

  - The tasks and coding rubric provide a range of opportunities for students to demonstrate 3D learning with and without guidance, such as scaffolds (e.g., Kang, Thompson, and Windschitl, 2014; Songer, Kelcey, and Gotwals, 2009).

  - The coding rubric and system of interpretation are specific enough to guide teachers either in choosing next instructional steps (formative) or in determining the amount and rate of progress in 3D learning (summative) (National Research Council, 2014).

- Research studies show that three-dimensional assessment tasks in a short-answer and/or scaffold-rich format can provide stronger evidence of 3D learning than multiple-choice items. For example, a study of 1,885 sixth graders in 22 Detroit Public Schools classrooms evaluated the relative amount of information about 3D learning revealed by embedded, multiple-choice (called standardized), and 3D learning (called complex) tasks administered with an 8-week unit on ecology and biodiversity designed to foster 3D learning. The embedded assessment tasks revealed both the largest amount of information and the greatest range of information across student abilities (Songer, Kelcey, and Gotwals, 2009). A similar study showed that 3D assessment systems gave students at a range of ability levels opportunities to demonstrate both successes and challenges in 3D learning along a unit learning progression (Gotwals and Songer, 2013).

TABLE A-1 Where Is Assessment in This Volume?

| Chapter | Subject | Focus | Pages |
|---|---|---|---|
| 4 | How Students Engage with Investigation and Design | Communicate reasoning to self and others | 97–98 |
| 5 | How Teachers Support Investigation and Design | Embedded assessment | 127–129 |
| | | Features come together for investigation and design | 131–138 |
| 6 | Instructional Resources for Supporting Investigation and Design | Assessment and communicating reasoning to self and others | 162–163 |
| 7 | Preparing and Supporting Teachers to Facilitate Investigations | Equity and inclusion | 205 |
| 10 | Conclusions and Recommendations | Conclusion #5 | 270 |
| | | Conclusion #7 | 271–272 |
| | | Recommendation #2 | 275–276 |
| | | Research questions | 278 |

WORKED EXAMPLES OF THE DESIGN AND ENACTMENT OF CLASSROOM ASSESSMENT

Kang and colleagues (2014) described five types of scaffolding in formative assessment tasks. These scaffolds appear to benefit student learning: they help students make their ideas explicit and guide them as they develop higher-quality explanations. Examples of each scaffolding type, drawn from Kang, Thompson, and Windschitl (2014), are shared here:

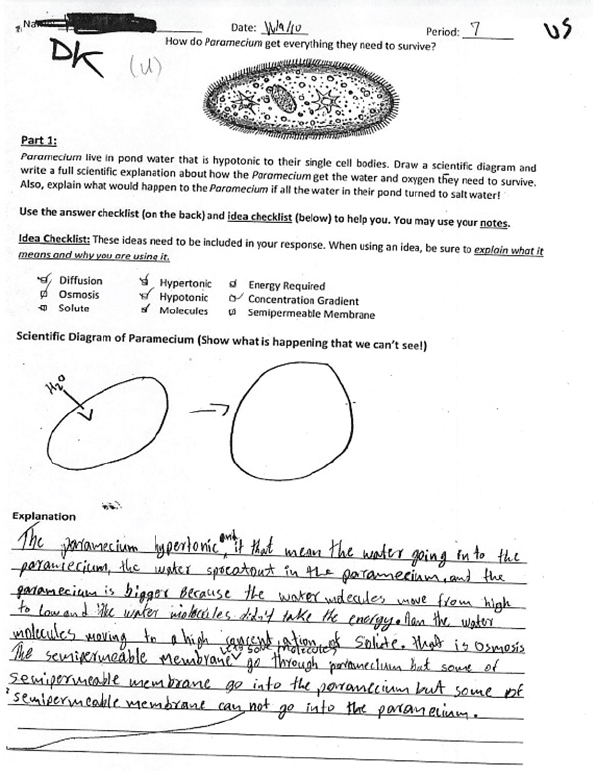

1. Allowing students to draw in combination with writing to explain focal phenomena

When students were asked to draw the unobservable underlying mechanisms that caused an observable phenomenon or event, they engaged in the scientific practice of modeling and in more challenging intellectual work. The example in Figure A-1, taken from a 9th-grade biology classroom (p. 679), asks students to show how a paramecium gets everything it needs to survive.

SOURCE: Kang et al. (2014)

2. Asking a question with a contextualized phenomenon

Contextualized phenomena also help students provide better explanations. That is, rather than asking students to explain a generic event or scientific idea, these tasks ask students to place the idea in context. An example is provided below (p. 679):

A skater girl is flying down the big hill on 102nd (right in front of Steve Cox Memorial Park, where that cabin is, behind McLendon’s Hardware) when she realizes that some jerk has built a huge brick wall across the road. She knows that she won’t be able to stop in time. What should she do to minimize, or decrease, her injuries? Explain why this is the best option for the skater girl.

3. Providing sentence frames

Teachers used both focusing and connecting sentence frames to draw students’ attention to focal phenomena and to lead them into explanations. Some sentence frames helped students get started with an explanation (e.g., “What I saw was _______________” . . . “I know this because _______________”), whereas higher-quality connecting sentence frames helped students connect evidence and reasoning more deeply to construct scientific explanations. These included starters such as “Evidence for _________ comes from the [activity on] _______________ because ______________.”

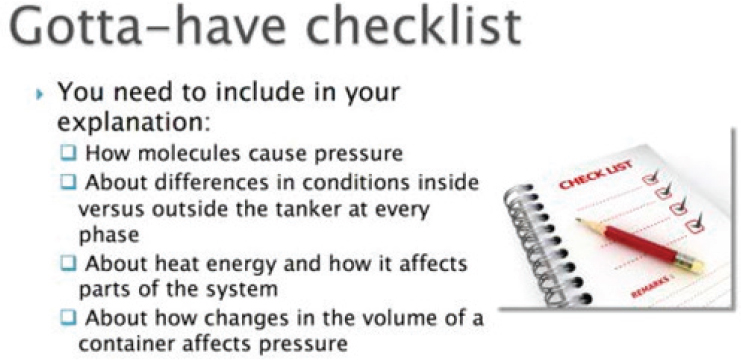

4. Scaffolding by providing students with a checklist

An additional form of scaffolding is a checklist. A “simple checklist” provides students with a word bank to use when creating an explanation or a model, whereas an “explanation checklist” prompts students to provide information about aspects of a model or explanation or about relationships among ideas (see Figure A-2).

SOURCE: Kang et al. (2014)

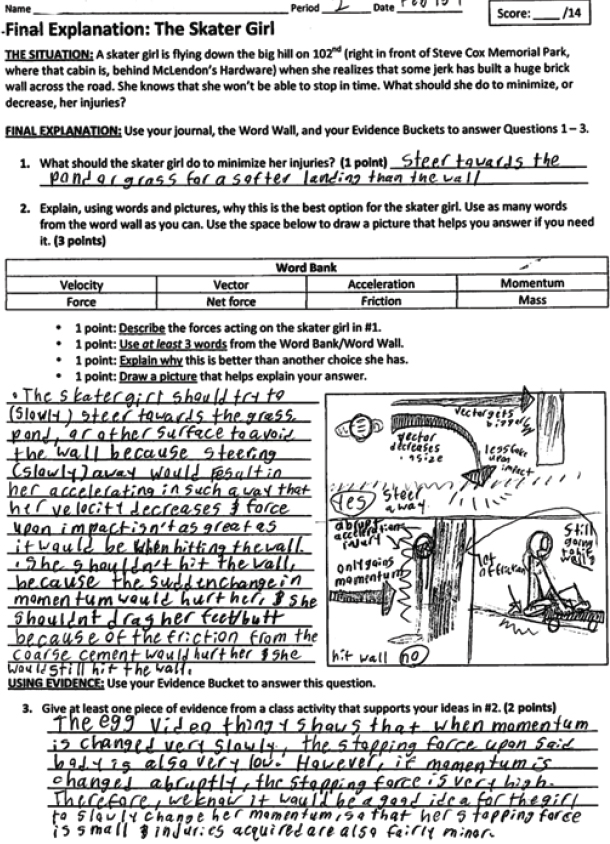

5. Scaffolding by providing a rubric

Consistent with studies in other disciplinary areas (e.g., Andrade, Du, and Mycek, 2010), Kang, Thompson, and Windschitl (2014) found that embedding a rubric in a task explicitly provided students with criteria that helped raise the quality of their explanations. The “skater girl” assessment (see Figure A-3) illustrates how a rubric that awards points for higher-quality work can be embedded in a task, making clear how the teacher will evaluate students’ work (p. 680).

SOURCE: Kang et al. (2014)

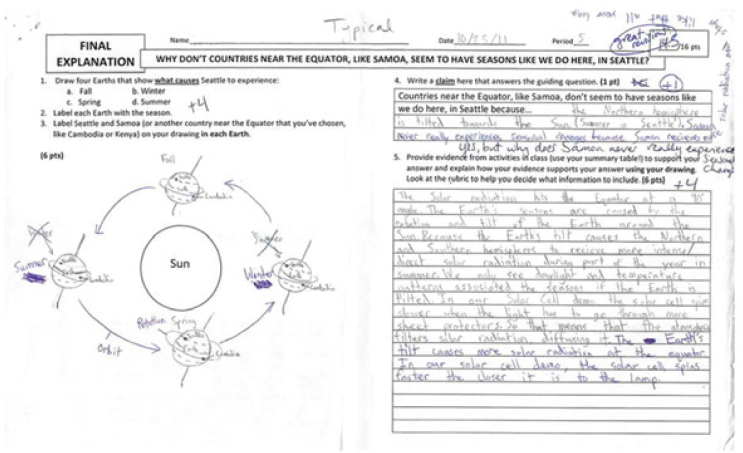

Combining multiple scaffolds in one assessment

These forms of scaffolding can be combined in a single assessment. The task in Figure A-4, for example, combines a contextualized phenomenon, a sentence frame, and drawing plus writing (Kang et al., 2014, Figure 5, p. 692).

SOURCE: Kang et al. (2014)

INFORMAL ASSESSMENT THROUGH CLASSROOM DISCOURSE: THE EXAMPLE OF TERRY’S CLASSROOM DISCUSSION

Assessment does not require a formal instrument; it can also occur through classroom discourse. Box A-1 provides an example of how this was done in a high school chemistry course.

REFERENCES

Alemdar, M., Lingle, J.A., Wind, S.A., and Moore, R.A. (2017). Developing an engineering design process assessment using think-aloud interviews. International Journal of Engineering Education, 33(1), 441–452.

Andrade, H., Du, Y., and Mycek, K. (2010). Rubric-referenced self-assessment and middle school students’ writing. Assessment in Education: Principles, Policy & Practice, 17, 199–214.

Coffey, J.E., Hammer, D., Levin, D.M., and Grant, T. (2011). The missing disciplinary substance of formative assessment. Journal of Research in Science Teaching, 48(10), 1109–1136.

Gotwals, A.W., and Songer, N.B. (2013). Validity evidence for learning progression-based assessment items that fuse core disciplinary ideas and science practices. Journal of Research in Science Teaching, 50(5), 597–626.

Kang, H., Thompson, J., and Windschitl, M. (2014). Creating opportunities for students to show what they know: The role of scaffolding in assessment tasks. Science Education, 98(4), 674–704.

National Research Council. (2001). Knowing What Students Know: The Science and Design of Educational Assessment. Washington, DC: The National Academies Press.

National Research Council. (2014). Developing Assessments for the Next Generation Science Standards. Washington, DC: The National Academies Press.

Purzer, S. (2018). Engineering Approaches to Problem Solving and Design in Secondary School Science: Teachers as Design Coaches. Paper commissioned for the Committee on Science Investigations and Engineering Design for Grades 6–12. Board on Science Education, Division of Behavioral and Social Sciences and Education. National Academies of Sciences, Engineering, and Medicine. Available: http://www.nas.edu/Science-Investigation-and-Design [October 2018].

Songer, N.B., Kelcey, B., and Gotwals, A.W. (2009). When and how does complex reasoning occur? Empirically driven development of a learning progression focused on complex reasoning about biodiversity. Journal of Research in Science Teaching, 46(6), 610–631.