CONTINUOUS MANUFACTURING FOR THE MODERNIZATION OF PHARMACEUTICAL PRODUCTION: PROCEEDINGS OF A WORKSHOP

INTRODUCTION

On July 30-31, 2018, the National Academies of Sciences, Engineering, and Medicine held a workshop titled Continuous Manufacturing for the Modernization of Pharmaceutical Production. The workshop was convened under the auspices of the Board on Chemical Sciences and Technology and was sponsored by the U.S. Food and Drug Administration (FDA) and the Biomedical Advanced Research and Development Authority (BARDA). This workshop¹ discussed the business and regulatory concerns associated with adopting continuous manufacturing techniques to produce biologics such as enzymes, monoclonal antibodies, and vaccines (see Box 1, Statement of Task). The Statement of Task mentioned discussing upstream challenges of small molecules; however, the planning committee determined that the discussions would be richer if focused solely on biologics. The workshop also discussed specific challenges for integration across the manufacturing system, including upstream and downstream processes, analytical techniques, and drug product development. The workshop addressed these challenges broadly across the biologics domain but focused particularly on drug categories of greatest FDA and industrial interest such as monoclonal antibodies and vaccines. The summary below describes the individual talks given at the workshop; tabular summaries of points made across themes are provided in Appendix C.

Public–Private Partnerships to Help Realize the Promise of Continuous Manufacturing

Kelvin Lee, of the University of Delaware and Director of the National Institute for Innovation in Manufacturing Biopharmaceuticals (NIIMBL), noted that continuous manufacturing is foundational to the production of iron, steel, paper, oil, cement, glass, synthetic fibers, electricity, clean water, and cars. It holds similar promise for the production of pharmaceuticals, including a lower cost of production with increased output to meet growing demand; the potential for higher and more reproducible quality; and superior productivity that uses equipment and space more efficiently. He added that while the demand for biopharmaceuticals is relatively small compared to the products listed above, the cost of manufacturing therapeutic proteins is high, and industry, patients, and developing nations would benefit from lowering that cost.

___________________

1 This proceedings was prepared by the workshop rapporteur as a factual summary of what occurred at the workshop. The planning committee’s role was limited to planning and convening the workshop. The views contained in the proceedings are those of individual workshop participants and do not necessarily represent the views of all workshop participants, the planning committee, or the National Academies of Sciences, Engineering, and Medicine.

Mature processes—the production of iron or glass, for example—can implement continuous approaches more quickly because the chemical reactions are well-defined, the critical quality attributes (CQAs) are well characterized, and approaches to controlling the manufacturing process have been thoroughly tested. Continuous manufacturing processes are starting to emerge for small molecule drugs for the same reasons. The challenge with large molecule drugs, such as monoclonal antibodies, is that the biochemical process by which cells produce antibodies is not as well defined, and though there is a reasonable understanding of CQAs, the ability to control the production process is limited. These challenges are even more substantial for some biologics such as vaccines, cell-based therapies, and gene therapies. While there may not be business drivers in the near-term to explore producing these therapeutics via continuous manufacturing, continuous upstream processing of some biologics has become an accepted part of their production.

The challenges to adopting continuous manufacturing methods today include technological issues, quality and regulatory concerns, economic shortcomings, and perceived and actual risks. Regarding risk, Lee said that the robustness of continuous manufacturing methods as applied to therapeutic proteins is not well characterized, largely because of a lack of analytic and process control methods. Logistical concerns, such as the need for custom equipment and demands on the supply chain, can also increase the risk of transitioning from a bulk to a continuous manufacturing process. Given the pharmaceutical industry’s aversion to risk, related to the large percentage of revenues reinvested in research and development, the high rate of failure at every stage of product development, and the years needed to bring a product to the market, industry’s cautious approach to continuous manufacturing should not be surprising (Jacoby et al., 2015).

Going forward, while many regulatory agencies have expressed strong support for continuous manufacturing, adopting continuous processes for purifying an already marketed biologic product would mean going first, which is not the preference of most pharmaceutical companies. In addition, risk assessment likely overrides any cost-benefit calculation given that the revenue from a biologic is many multiples of the cost of goods. Industry’s hesitancy is also related to its unwillingness to confront regulatory uncertainties and necessary changes to established quality systems associated with batch production capacity. Finally, said Lee, continuous manufacturing requires a high level of process understanding and control and highly robust and reliable methods for at-line process analytics and real-time monitoring that are still being developed. His solution to lessening the risk of developing the needed technologies and answering regulatory questions is to do so in consortia and public–private partnerships.

NIIMBL,² with $70 million in funding from the National Institute of Standards and Technology (NIST) and more than $180 million in commitments from its member partners, is an example of such a public–private partnership. Part of NIIMBL’s mission, explained Lee, is to accelerate innovation in biopharmaceutical manufacturing and support the development of standards that enable more efficient and rapid manufacturing capabilities by bringing together partners from industry, academia, and regulatory agencies to solve common problems. NIIMBL is part of the Manufacturing USA Network, which was formed to address the market failure of insufficient industry research and development in the so-called advanced manufacturing “valley of death” between when technology is produced in the laboratory and when it is produced in a representative manufacturing environment, corresponding to Manufacturing Readiness Levels 4 through 7. By addressing this “missing middle,” the network aims to reduce risk and accelerate the adoption of new manufacturing technologies and to simultaneously address any regulatory issues that may be associated with those new technologies.

The pharmaceutical industry, argued Lee, requires further industrialization in manufacturing technology if it is to meet variable market needs for lot size and demand, address speed to market, improve flexibility, and enable patient access to emerging therapies through the development of new automated, small-scale manufacturing platforms that are integrated with robust product and process measurement capabilities. Other than NIIMBL, there has been no coordinated effort to ensure that biomanufacturing keeps pace with an increasingly diversified product pipeline, to support the biomanufacturing supply chain in the United States, and to develop the knowledge base needed to mitigate the risk of adopting new processing technology in a highly regulated industry.

The Future of Access to Medical Countermeasures

Rick Bright from BARDA explained that his agency is interested in continuous manufacturing as a means of rapidly producing medical countermeasures to evolving and increasing natural and human-made health security threats. Established in 2006, BARDA’s mission is to build unique public–private partnerships to bridge the manufacturing “valley of death” that Lee described. Congress has empowered BARDA with flexible and nimble authorities to work with industry in ways that were challenging for other federal entities. BARDA, for example, has the authority to fund projects for multiple years to create a strong commitment to its industry partners to address the most difficult challenges. It can also make direct hires of industry experts who can work hand in hand with its more than 200 industry partners on development and production of medical countermeasures. Evidence that this model works includes the 38 BARDA-supported FDA licensures and approvals for 35 different medical countermeasures, the addition of 14 products to the Strategic National Stockpile including 8 new FDA licensures, the 10-fold expansion of domestic influenza vaccine production capacity, and accelerated antibacterial product development to address critical vulnerabilities raised by antimicrobial resistance.

___________________

2 See https://niimbl.force.com/s (accessed September 6, 2018).

At the end of the day, said Bright, BARDA and its partners are addressing patient access to medical countermeasures in a timely manner to protect the health of Americans when a threat occurs. These efforts include tackling manufacturing challenges and working with FDA on regulatory issues to create a mechanism to allow the nation to mount a rapid response to any threat that comes its way. They also include strengthening the U.S. manufacturing base to address the fact that commercial manufacturing, for many reasons, has moved offshore. BARDA’s ultimate goals are to be proactive, create a distributed manufacturing and delivery system, eliminate drug shortages, and limit the need to manufacture and stockpile medical countermeasures.

Bright noted that FDA has been a leader in the initiative to create the desired state in which every American has access to medical countermeasures when needed. Toward that end, BARDA and FDA established a continuous manufacturing partnership in 2015 to help drive this field. FDA is focused on developing the regulatory science to address operational and technical challenges, while BARDA focuses on evaluating continuous manufacturing processes, improving process efficiency, and ensuring the sustainability of medical countermeasure manufacturing. Ensuring drug access for all, said Bright, requires a continued strong partnership with FDA to address regulatory challenges as new technologies emerge. He encouraged everyone to work with FDA proactively.

Through the multi-agency National Biopharmaceutical Manufacturing Partnership, BARDA and the U.S. Department of Defense (DoD), with industry and academic partners, have built four new manufacturing facilities, each with the capacity to produce drugs and vaccines. Capacity is one thing, said Bright, but response capability is a different matter, so BARDA and DoD are in the process of building a unified, U.S. whole-of-government pharmaceutical manufacturing capability that would not only manufacture and support the development of new drugs and vaccines but also serve as a rapid turn-on switch providing surge capacity for the nation.

BARDA’s newest initiative, empowered by the 21st Century Cures Act, is its Division of Research, Innovation, and Ventures (DRIVe), which has the mission of accelerating the research, development, and availability of transformative countermeasures. BARDA is using DRIVe to transform the way government works with industry to make it easier—and faster—to work with the federal government. For example, DRIVe intends to approve meritorious proposals within 30 days of receiving the proposal. More importantly, said Bright, DRIVe will focus on everything it can to increase timely access to medical countermeasures, including earlier detection of threats and earlier notification of individuals who have been exposed to a biological threat so that those who are exposed to an agent, such as pandemic influenza, can perhaps get treatment before symptoms appear and the infectious agent is spread from person to person.

Toward that end, DRIVe aims to create a “pharmacy on demand” that makes medicines readily available to everyone through emerging technologies, perhaps via a delivery service or a booth that dispenses medications via a smartphone eScript app.

FDA’s Interest in Continuous Manufacturing

Janet Woodcock from FDA noted that biopharmaceutical manufacturing is an undervalued component of the biopharmaceutical industry and the nation’s drug supply. As the nation deals with shortages of some drugs and issues with storage capacity, lack of surge capacity, and natural disasters such as hurricanes, the health care sector is concerned over its inability to care for its patients because the manufacturing sector has not been able to respond to these challenging circumstances. In addition, the global supply chain that helps reduce the cost of production has also increased the vulnerability of the nation’s drug supplies.

As a result of these and other factors, FDA has long been interested in furthering the science of pharmaceutical manufacturing, starting in the small molecule space. In the early 2000s, FDA started its Pharmaceutical Manufacturing for the 21st Century initiative to bring the challenges of drug manufacturing to light. One response to this initiative from industry was that FDA would be resistant to changing manufacturing technologies, and Woodcock admitted there was some truth to that sentiment. This prompted FDA to adopt the role of advocate for change rather than be an obstacle to change. FDA began encouraging companies to adopt on-line monitoring, which required equipment manufacturers to develop new instrumentation. It also worked to harmonize international regulations of small molecule manufacturing to address barriers to continuous manufacturing.

Continuous manufacturing of both small and large molecules, said Woodcock, can dramatically shorten the time that it takes to scale up manufacturing for newly approved drugs. At a time when FDA is approving drugs designated as breakthrough products after an abbreviated clinical trial process, companies can have less time to develop commercial scale processes, which puts a premium on approaches that can scale quickly. FDA is now overseeing the development of continuous processes in the small molecule space, as well as model-based control strategies that would lead to real-time release of products, and it expects a handful of regulatory submissions over the coming year. The agency is also overseeing work being done on the continuous synthesis of biopolymers such as therapeutic DNA and RNA molecules. In summary, continuous manufacturing is gradually seeping into the small molecule space as commercial opportunities emerge, and the agency is prepared to handle and approve those applications.

In the biologics space, continuous manufacturing is more advanced at the upstream end of the process, and progress going forward requires integration with continuous downstream processing. Here, Woodcock noted, the barriers to adoption are more about the business case than technical challenges, though there are technical issues to address. The value proposition for business includes reducing the number of steps involved in manufacturing and the need for human handling during intermediate stages of production, improved safety, shortened processing time, smaller equipment and facility needs, more flexible operations, reduced capital expenditures, decreased environmental footprint, feasibility of manufacturing small batches economically, on-line monitoring for better quality control, and real-time release of final product.

One challenge, according to Woodcock, is to develop advanced control strategies and incorporate real-time data strategies for CQAs. Others are to integrate downstream unit operations in an effective manner that satisfies purity requirements and for the community to agree on real-time release methods. The real barriers, though, are questions that industry has about return on investment in continuous manufacturing and the established fixed infrastructure. Regulatory uncertainty is another barrier even though FDA is actively promoting this transition. An audience member suggested that FDA could hold webinars with international regulators and industry to clarify where other nations stand on these technologies.

FDA’s current approach to push this initiative, said Woodcock, is to collaborate with NIIMBL, BARDA, NIST, the Defense Advanced Research Projects Agency (DARPA), academia, and industry. When the initiative started, there was little academic work in this field, but FDA has been able to nurture academic research through a grant program on continuous manufacturing. Internally, FDA’s emerging technology team has been working inside the agency to educate the regulators. In terms of next steps, FDA hopes that this workshop will move the field forward, and Woodcock stated that the agency is ready to work with any group that is developing continuous bioprocessing, even before the process is fully finished. She suspects that it is possible in the biologics space that some of the special concerns—anti-terrorism concerns, outbreaks, and shortages—will stimulate adoption of continuous bioprocessing approaches that will then diffuse into more standard bioprocessing.

Twenty years from now, biological product manufacturing is not likely to look like it does today. The field will advance, said Woodcock, and the outlines of where the field should go are already visible. The question is how fast this evolution will occur, and FDA believes that by working together, collaborating, and taking incremental steps, that future can arrive faster.

BUSINESS CASE FOR CONTINUOUS MANUFACTURING

Transforming Biopharmaceutical Production Through the Deployment of Next-Generation Biomanufacturing

Art Hewig from Amgen said that biomanufacturing has been evolutionary when compared to other industries, and that a changing business landscape requires agility, flexibility, modularity, and dematerialization, that is, reducing the size of biomanufacturing networks. Continuous manufacturing can support this transition. He noted that the business case for adopting continuous manufacturing depends on the specific company and how that company operates. Making the business case, he said, “begins with understanding your business and your portfolio mix as it exists and as you see it coming, and where your manufacturing network is at, and what you think your overall plan for the future is.” Intensification, or shrinking the manufacturing footprint, is an important aspect of a business case, but Hewig cautioned companies to make sure that a redesigned continuous manufacturing process actually occupies a smaller footprint when all is said and done.

The biopharmaceutical landscape itself is changing in terms of its focus on patient needs, its move to flexible drug discovery and development, and its expanding global presence. As a result, product mix is becoming more heterogeneous with the development of more targeted products beyond monoclonal antibodies, demand uncertainty has increased, and the volume of product needed is dropping. Overall, this requires balancing the use of existing facilities and the investment made in those facilities against the addition of new capabilities to lower costs and increase flexibility and speed.

Hewig said that productivity improvements in fed-batch manufacturing processes have plateaued after three decades of commercial production of therapeutic proteins. As a result, companies have been turning to new technologies such as perfusion processes and high cell density processes to start shrinking the footprint from a productivity standpoint. Today, a highly productive 2,000 liter perfusion system can match the productivity of a 15,000 liter fed-batch bioreactor, which at that size creates the possibility of having single-use, disposable bioreactors. He noted that single use solutions also include mixing and chromatography systems, creating the opportunity to update the biomanufacturing paradigm to include off-the-shelf, deployable, and scalable technologies.
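As a rough illustration of the comparison above, the implied volumetric intensification can be worked out from the two reactor sizes Hewig cited. This is a minimal sketch in Python; the function name is hypothetical, and it assumes that matching productivity means equal total output over the same period, which the talk did not specify.

```python
def intensification_factor(fed_batch_volume_l: float, perfusion_volume_l: float) -> float:
    """Ratio of reactor volumes that deliver the same total output.

    Assumption (not stated in the talk): both reactors produce the same
    amount of product over the same period.
    """
    return fed_batch_volume_l / perfusion_volume_l


# Reactor sizes cited in the talk: 15,000 L fed-batch vs. 2,000 L perfusion.
factor = intensification_factor(15_000, 2_000)
print(f"Implied volumetric productivity gain: {factor:.1f}x")
```

Under that assumption the perfusion system is about 7.5 times more productive per liter of reactor volume, which is what brings it into the size range where single-use bioreactors become an option.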

Amgen, he explained, started its efforts to revamp its manufacturing processes in 2010, and it expects this effort to take years to complete given the need to develop new processes and technologies and have them approved by regulatory authorities. This effort is paying off, though, and the company’s next generation manufacturing facility in Singapore, which is 80 percent smaller than its older facilities, was approved for commercial manufacturing. The company is building a duplicate facility in Rhode Island.

The next evolution of biomanufacturing, Hewig predicted, will involve additional process intensification and integration that will result in further shrinking of the manufacturing footprint. This will allow companies to take a modular approach to scale out their production capacity to meet increasing demand. It will also enable companies to work at a commercial production scale earlier in the development process and avoid the uncertainties of moving from a pilot scale to a commercial scale reactor.

Turning to the business case, shrinking the manufacturing footprint results in a significant reduction in capital investment and the time needed to deploy a new process. It also enables miniaturization and intensification of process workflows. At the same time, the cost structure shifts from fixed to variable, and it allows for better targeting of investment based on market demand and product mix. Technology transfer becomes less risky with the ability to scale out instead of scale up. He estimated that continuous manufacturing could reduce capital expenditures from $1 billion per facility to hundreds of millions to eventually tens of millions and the time for construction from years to months. From a business viewpoint, this allows a company to delay deploying a new facility in the face of the uncertainty associated with introducing a new product to the market. This is a huge advantage over the current situation of having to decide to invest billions of dollars in building production capacity based on an assumption that a new drug will be a blockbuster long before it has even finished clinical trials. In his view, a hybrid network of conventional and flexible plants will create a responsive and efficient supply chain for therapeutic proteins.

In the end, the business case for continuous manufacturing is not a simple yes or no answer, said Hewig, and how it provides the greatest value will depend on the specific needs of each company (Pollock et al., 2017; Walther et al., 2015). Important considerations include a company’s product portfolio, market demands, the existing manufacturing network, and the manufacturing business model. Continuous manufacturing does offer several opportunities, including the possibility of identifying a “best” approach to flexible mass output and developing improved single-use systems. Challenges include the need to balance labor costs and automation and integrate the different components of a continuous process, develop new control strategies, and understand the costs of analytical testing associated with continuous manufacturing.

Continuous Manufacturing for Large Molecule Drugs

Mauricio Futran from Janssen Pharmaceuticals reiterated that there is no single business case for continuous manufacturing of large molecules. Asset utilization, process flexibility, quality efficiency gains, and time to market are all possible drivers for deciding to use continuous manufacturing. In addition, the ability to monitor product quality during production, rather than at the end of a batch process, can improve resource and process efficiency. Futran added that a company should not implement a continuous process for the sake of having a continuous process; it should first ask what problems it is trying to solve. The hurdle to implementing continuous processes is lower when known unit operations are transformed from batch to continuous than when starting from scratch.

From his perspective as a chemical engineer, Futran said the biggest benefit of continuous manufacturing is that all operations can be run simultaneously in a single room, enabling continuous monitoring of all production steps, something that is lost in batch production. A one-room situation for large molecule production could raise questions about contamination that could spread downstream, however, and the promised improvements in quality control efficiency and process robustness depend on developing in-line assays and methods for fully characterizing the entire process. Continuous manufacturing can enable more agile supply planning and response. It may also reduce the material needed for development and technology transfer.

Additional considerations include the need to integrate continuous manufacturing with modern supply chain capacity management, which involves working with external contract manufacturers and dealing with starts and stops in the production process. In his experience with continuous manufacturing of small molecule drugs, startups and stops are difficult, take time, and carry significant risk, and managing them with the living systems used to produce large molecules will be even more challenging. The ability to measure critical attributes for control is necessary, he added, and developing the appropriate methods will be challenging for a continuous bioreactor given that living systems are often not operating at a steady state.

Despite these challenges, continuous manufacturing of large molecules offers many benefits for the company, patients, and regulators. Enhanced quality control efficiency has the potential to reduce regulatory review timelines. Development times can be faster, improving speed to market, while continuous manufacturing of large molecules may provide benefits in terms of the ability to better manage demand fluctuations and the supply chain.

Continuous Processing Beyond Financials

Franqui Jimenez from Sanofi explained that there are two general approaches to continuous manufacturing: a fully integrated process that physically connects every step from media generation through purification, and a hybrid process that is integrated through the capture phase, with the product then feeding into subsequent batch purification processes. In both cases, the process is considered continuous if it comprises integrated, continuous unit operations with zero or minimal hold volume between them and balances mass and flow throughout the process (Konstantinov and Cooney, 2015).

When developing a continuous manufacturing process, it is important to consider that steady state conditions are expected to lead to consistent product quality, which could reduce or replace lot release testing. Development packages, however, require increased understanding of failure modes, which can be intensified in continuous manufacturing, as well as safe production stop points and the linkages between operations. Continuous processing, said Jimenez, produces large material volumes quickly, which increases the availability of material for characterization. On the other hand, moving to a continuous process may require more complex analytical capabilities with respect to instrumentation and technology. Additionally, it is expected that companies will need staff capable of responding to production issues associated with these advanced technologies, stated Jimenez.

Regarding operational considerations, Jimenez said that continuous processes require improved process controls, including the need for on-line, in-line, and at-line measurements, to maintain a steady state in the bioreactor. This is needed to lower the complexity of the process and reduce shop floor instructions. In addition, the manufacturing facility must be capable of running continuously for longer periods, requiring both more durable instrumentation and increased reliance on engineering controls to prevent contamination. In his opinion, the most prudent approach to developing a continuous process is to develop it in islands of continuous processes that can then be linked together as the understanding of those processes grows.

On a final note, Jimenez discussed the challenge that continuous manufacturing presents for regulation in terms of process verification. For example, the current paradigm requires running five batches to demonstrate process consistency, but this would become a demanding requirement, with little gain, for a continuous process that may run for 60 days. Sanofi has been arguing that the increased process knowledge required for a continuous production scheme and the fact that process development occurs at the commercial scale offer the opportunity to create a new paradigm for approving a manufacturing process. Jimenez described an example of what this new paradigm could look like: a process performance qualification run followed by two continuous process verification runs.

In a panel discussion following the three talks, moderated by Gintaras V. Reklaitis from Purdue University, the session speakers noted that moving existing approved products to continuous processes and establishing comparability between the processes are not trivial challenges. The three speakers agreed that there are fewer drivers to implement a fully continuous immobilized cell bioreactor than to implement a continuous perfusion bioreactor only, and that it is best to implement continuous processes in stages, not all at once. Hewig said that Amgen learned the benefits of single-use facilities from the Singapore facility because modifications can be made in response to changes in the supply chains. In response to a question about what drove change at companies, Hewig said that Amgen did not want to build another stainless facility, so it embraced single use. Jimenez said that at Sanofi, Konstantin had to champion and invest in the technology.

UPSTREAM PROCESSING

Fitting a Continuous Process into Existing Facilities

Daisie Ogawa from Boehringer Ingelheim reported on a collaborative project between Boehringer Ingelheim and Pfizer. For 3 years, the two companies have been partnering to research and develop radically cheaper and more rapidly produced clinical material. These speed and cost improvements allow research and development to explore additional clinical options and enable faster proof of concept with a clear path to commercialization. A major task for this project has been to develop an integrated skid based on single-use technology that includes a single-use bioreactor, elution stream chamber, and continuous viral inactivation up to the single mixer, which then feeds into a batch process viral reduction filter. The integrated pieces run continuously with no air gaps, she explained.

Perfusion cell culture, explained Ogawa, must still address two limitations relative to the fed-batch bioreactor in their facility: the unacceptably long run times to produce comparable amounts of material—30 to 90 days compared to 14 days—and the extremely large volumes of media required for a perfusion cell culture system. To address these limitations, she and her colleagues first worked to intensify the seed train. Traditional batch “N-1” seeding requires 7 to 10 days just to ramp up production, but using a perfusion N-1 stage produced a 10-fold higher N-stage seeding density, reducing the time to reach the production phase by approximately 5 days.

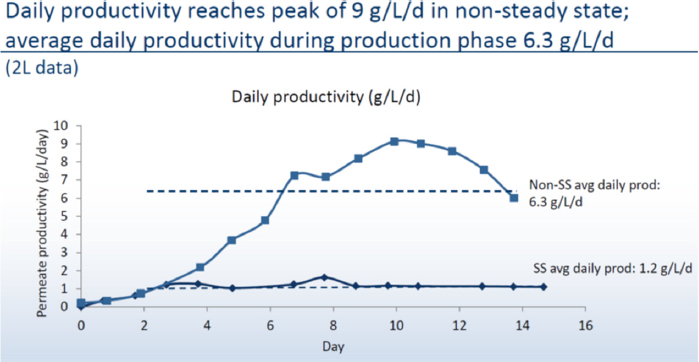

To shorten the production time further, Ogawa’s team shifted to a non-steady state perfusion culture for which they do not control cell density. The challenge then became supplying adequate nutrients while maintaining a low perfusion rate. The solution was to use concentrated media and a diluent, feed them to the cells based on osmolality demands, and balance that with waste flushing. She noted that the logistical challenges of media preparation, storage, and transport are reduced substantially using concentrated feeds. For example, perfusing a 1,000-liter bioreactor over a 14-day process at two vessel volumes per day would take approximately 30,000 liters of media prepared at the usual concentration, but only 7,000 liters using concentrated feeds. This change reduces costs and allows for preparing the media onsite and, more importantly, generates high viable cell densities and 5-fold higher daily average productivity relative to a steady state system. Compared to a fed-batch process, the non-steady state bioreactor produced material equivalent to a 76 gram/liter fed-batch reactor, or greater than 10-fold higher productivity than a fed-batch system. This intensified process scaled successfully from a 2-liter reactor to a 100-liter reactor (see Figure 1).
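The media-volume comparison in this example follows from simple arithmetic, sketched here in Python. The function name is hypothetical, and the calculation assumes a constant perfusion rate over the whole run; the 7,000-liter figure for concentrated feeds is taken directly from the talk rather than derived.

```python
def perfusion_media_liters(working_volume_l: float,
                           vessel_volumes_per_day: float,
                           run_days: int) -> float:
    """Total media perfused, assuming a constant perfusion rate for the whole run."""
    return working_volume_l * vessel_volumes_per_day * run_days


# Figures from the talk: 1,000 L bioreactor, 2 vessel volumes per day, 14 days.
standard_media_l = perfusion_media_liters(1_000, 2, 14)

# Volume reported (not derived here) when concentrated feeds are used.
concentrated_media_l = 7_000

reduction = standard_media_l / concentrated_media_l
print(f"Media at usual concentration: {standard_media_l:,} L")
print(f"Reduction with concentrated feeds: {reduction:.0f}x")
```

The computed 28,000 liters is consistent with the "approximately 30,000 liters" quoted, and it implies roughly a fourfold reduction in the volume of media to prepare, store, and transport.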

Non-steady state perfusion using media concentrates produces more product per liter of media consumed than steady state perfusion. The resulting process looks more like a fed-batch system in that it operates over 14 days, and even though media usage is higher, it is still at a scale that it can be produced in the same 12-kiloliter facility with minimal capital expenditures. The challenge now, said Ogawa, is that the amount of material produced by this intensified process exceeds the purification train’s maximum capacity. What Boehringer Ingelheim envisions is building a new facility using the integrated SKID with a 100-liter bioreactor to produce enough material for toxicology testing and a 1,000-liter bioreactor for commercial-scale production that would be capable of producing 1,200 kilograms of material per year.

In summary, Ogawa said that a non-steady state perfusion system using concentrated media feeds could fit into a commercial 12-kiloliter facility with little capital investment. In addition, this intensified, highly productive perfusion process allows one facility to produce multiple products, which is expected to translate into faster and less expensive production of biologics. Moreover, the integrated SKID platform can achieve commercial-scale production using 500 to 1,000-liter bioreactors, which is expected to lead to faster, more efficient, and less expensive production.

Intensification of a Multi-Product Perfusion Platform

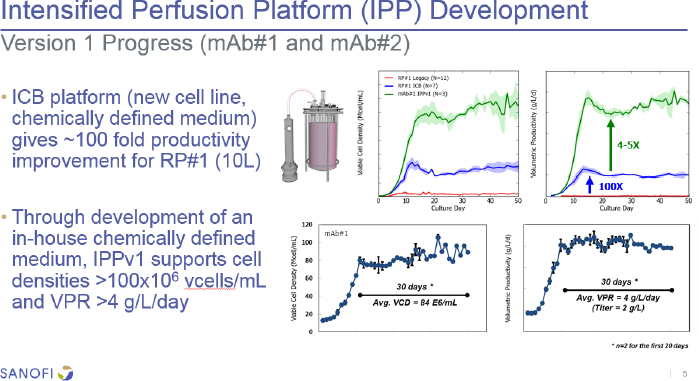

Shawn Barrett from Sanofi explained that his company is moving from its legacy microcarrier Chinese Hamster Ovary (CHO) culture system with a batch downstream processing system to a single-use system with a suspension CHO culture and continuous capture to reduce cost of goods and process footprint and increase robustness and flexibility. Going forward, Barrett’s upstream technology development team is working on perfusion intensification beyond integrated continuous biomanufacturing. The aim is to increase volumetric productivity, leverage in-house medium formulations, and reduce cell-specific perfusion rate to minimize media flow through the system, while maintaining desirable product quality attributes, process robustness over extended run times, and consistent cell densities in a system that can be scaled to support intensified processes. The technology development focus, he added, is on monoclonal antibodies and monoclonal antibody-like products that are in the company’s early pipeline with the intent of minimizing or eliminating the need for process optimization in the early stage of development. The idea, explained Barrett, is to take a new cell line, put it in a perfusion bioreactor, and then go right to scale up.

The first version of Sanofi’s integrated continuous biomanufacturing perfusion system (see Figure 2), using a new cell line and chemically defined medium, yielded a 100-fold improvement in productivity over the legacy process. Further development has produced as much as a 5-fold improvement at the 10-liter scale with other biologics. There were some issues when using different cell lines, resulting in unexpected growth arrest, lower cell viability, and a 40 to 60 percent decline in productivity. The latest platform version uses a new perfusion supplement and additional concentrates as needed. He noted that all clones used in the intensified perfusion platform have been generated from a fed-batch clone screening workflow that the company would prefer not to change. The new version has now demonstrated with eight different clones that it can produce perfusion titers anywhere from half to equivalent to those obtained with a fed-batch system, with a specific productivity boost of 30 to 60 percent. Barrett said the current focus is on confirming scale-up readiness in 500- to 1,000-liter single-use bioreactors (SUBs). One SUB vendor did not have enough gas mass transfer capacity and another was not a preferred vendor, so the team has been working with its preferred vendor to modify the oxygen transfer rates to better support the cell densities they want to achieve.

Modeling the cost of goods for a generic monoclonal antibody produced using intensified perfusion integrated with continuous capture showed that a 2,000-liter perfusion system had the potential to produce a dramatic reduction in cost of goods. He noted that with increasing plant capacity, a 2.5-fold increase in titer could decrease cost of goods by approximately 50 percent.

Drivers, Challenges, and Implementation Solutions for Continuous Perfusion Manufacturing

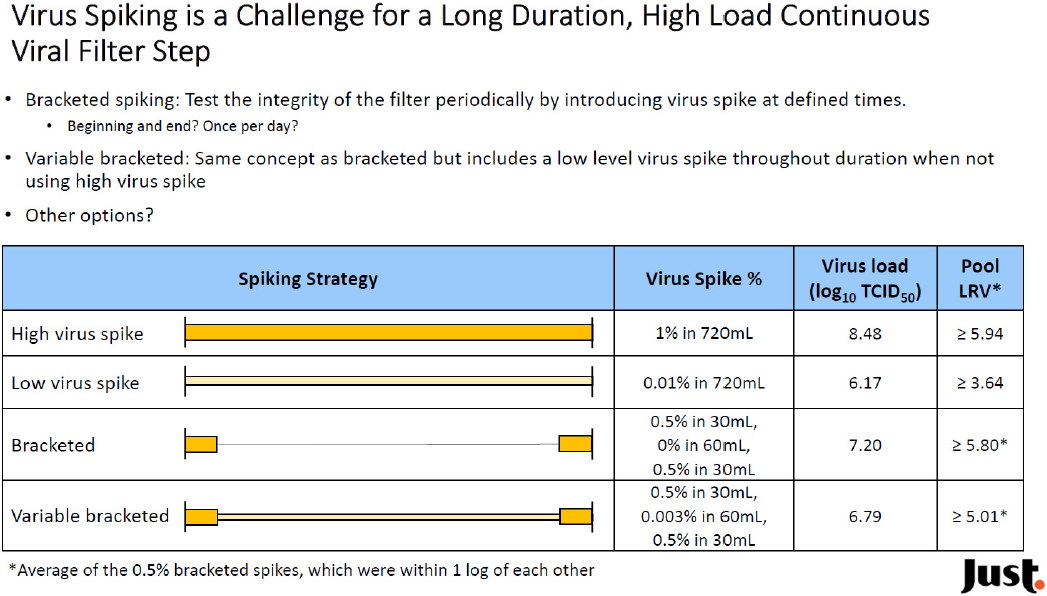

Eva Gefroh from Just Biotherapeutics explained that her company’s goal is to design and apply innovative technologies to expand global access to breakthrough biotherapeutics dramatically by reducing the cost of these products by at least a factor of 10. The company aspires to develop technology to reduce the time to an investigational new drug application for a monoclonal antibody to less than 10 months, produce more than 10 new molecules per year, and reduce the cost of goods to under $10 per gram, which has proven to be difficult. It also wants to enable smaller, more reconfigurable facilities that can be built at lower cost and on a much faster timeline.

To meet that goal, Just Biotherapeutics is designing a semi-continuous process with continuous capture chromatography and batch downstream processing for final purification and virus removal, similar to the approach Barrett discussed. The idea is to deploy this system in a small footprint facility containing modular, reconfigurable cleanroom pods that can be built and validated within 18 months. Modeling studies suggest that a high-productivity perfusion process can produce upward of 1,000 kilograms of product per year, with increasing volumetric productivity playing a large role in driving down the cost of goods manufactured. Flexible manufacturing, said Gefroh, is fundamental to managing demand uncertainty and driving toward a low cost of goods. It also enables a startup company such as hers to build a relatively low cost facility—she estimated the cost to be $60 million to $80 million—that can be modified and expanded as needed.

The activities that she and her colleagues have been undertaking to develop the company’s continuous perfusion process platform include attaining low cell-specific perfusion rates, maintaining high cell density at a steady state through media development and cell bleed, developing the perfusion filter, and addressing the sensitivity of cells to shear conditions and pluronic concentration. They are also addressing the challenges that come with scale-up from a 2-liter bioreactor to the 500-liter scale, including carbon dioxide stripping, vent filter sizing, shear in the perfusion loop, oxygen availability, and agitation rate.

Handling the complexity of media usage is also a big development area. For example, at a 1,000-liter scale, media and buffer preparation would have to be scheduled in separate pods at close to full capacity. To reduce this demand, the company is developing proprietary, in-house produced media, working to reduce perfusion rates, and preparing media concentrates that can be stored at room temperature to reduce the high cost of refrigerated storage. Gefroh explained that a concentrated medium that is stable at room temperature would create the potential for outsourcing liquid media production to an outside vendor, which would reduce solution preparation areas and associated labor costs. Challenges in creating media concentrates include limited solubility at high concentrations, filterability, and the number of feed solutions required to have the desired solubility. Once those issues are solved, strategies for delivering the concentrated media to the bioreactor need to be worked out to ensure a consistent and accurate feed. So far, results with a continuous perfusion process using concentrated media at the 500-liter scale have shown this approach to be feasible with respect to productivity over 21 days.

DOWNSTREAM PROCESSING

Practical Considerations for Adoption

Lindsay Arnold from MedImmune discussed her company’s efforts to develop downstream processing for monoclonal antibody production on the assumption that it can operate regardless of the upstream system that feeds into it. She noted that the continuous downstream process—comprising multi-column chromatography for continuous Protein A capture, low pH virus inactivation in a packed column, a filter train, multi-column chromatography for continuous polishing, and single-pass buffer exchange and final concentration—has been run with continuous upstream manufacturing in a fully integrated system for 2 weeks.

The pilot downstream facility, she explained, uses a 50-liter perfusion bioreactor and can handle up to a 200-liter perfusion bioreactor, and it processes 200 to 300 liters of fed-batch material over 2 to 3 days. Each of the five components of the downstream system is currently automated individually, but the goal is to develop a fully integrated control system for all five units. Arnold explained that the primary capture stage is agnostic to the equipment in the upstream supply chain, but the company has developed its own approach to low pH virus inactivation using in-line titration followed by a static mixer. Residence time, she said, is achieved in a packed agarose column. The company is taking a plug-and-play approach for the filter train and wants to work with a vendor to supply the entire filtration-anion exchange membrane-virus filtration combination.

One of MedImmune’s challenges in moving this system from the pilot scale to commercial production is that the company and its parent AstraZeneca currently have excess production capacity, which means that any changes become cost prohibitive given the existing installed capital base. Low utilization, said Arnold, does not push innovation. The strategy, then, is to take a modular, one-unit-at-a-time approach and introduce continuous processing in places where batch processing comes up short in terms of productivity and efficiency, thereby gaining experience and building a knowledge base for when the company needs to expand its production capabilities and build a new facility.

Given the opportunity for a large cost-of-goods savings in the initial capture step, Arnold and her team have been evaluating scale-up opportunities for this unit. Going forward, she is focusing on identifying other places where continuous bioprocessing can increase productivity and reduce cost of goods, where it offers opportunities for enhancing process control, and where a particular molecule or therapeutic modality comes with specific processing needs, such as with labile molecules, cell and gene therapies, and non-monoclonal antibody modalities that would drive adoption of single-use systems.

In summary, MedImmune is not likely to adopt fully continuous biologics manufacturing in a 5-year period, but is likely to adopt it in a step-wise fashion with multi-column chromatography as a first step. The business cases, said Arnold, will vary based on the particular sets of drivers that are important to different companies, such as safety, cash flow, capital reduction, space constraints, and capital improvement, and will vary between clinical and commercial settings. “The best we can do right now is prepare an almanac of technologies so that when there is a crisis, we can be there,” she said.

What Can Be Learned from the Chemical Industry

Andrew Zydney from The Pennsylvania State University reiterated Lee’s statement in the day’s first presentation that many industries have converted from batch to continuous processing. The chemical industry, for example, made this transition nearly one century ago, which provided significant increases in productivity, reductions in pollution, improved product quality, and enhanced process safety. He explained that the chemical industry used multiple strategies to develop continuous processes, many of which are based on countercurrent staging. Countercurrent staging, he added, can also be used to develop continuous bioprocesses. Examples include diafiltration for buffer exchange and formulation, purification using continuous chromatography, and capture via continuous precipitation.

Conventional diafiltration, which is used to replace buffer from an upstream chromatography step with another buffer, is typically the final step in the formulation of a drug substance. As practiced today, a batch process requires multiple pump passes through the diafiltration membrane unit and uses a large amount of buffer. Zydney has been developing a continuous, countercurrent staged system that uses in-line dilution instead of a mixer. Diafiltration buffer is added to the product stream and membrane modules are used to remove buffer. Countercurrent staging, he said, significantly enhances buffer exchange by effectively re-using the buffer multiple times. Tests have shown that impurity removal remains constant throughout the process in a true steady state operation, and that the entire product “sees” the exact same process conditions, which offers the potential for improved product quality. He noted that he could design systems to produce a desired level of impurity removal simply by changing the number of stages and the flow rate of the diafiltration buffer relative to that of the feed buffer. A three-stage system, for example, can easily achieve a 1,000-fold removal of impurities and 99.9 percent buffer exchange, similar to what would be achieved in a batch system that uses more buffer.
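For context on the buffer demand of the batch baseline, the sketch below uses the textbook idealization of constant-volume batch diafiltration, in which an impurity that passes freely through the membrane decays as C/C0 = exp(-N) after N diavolumes of buffer. This is an assumption-laden reference calculation (perfect mixing, no membrane retention of the impurity), not a model of the countercurrent staged system described in the talk, and the function names are hypothetical.

```python
import math


def percent_buffer_exchange(fold_removal):
    """Percent of the original buffer species removed for a given fold removal."""
    return 100.0 * (1.0 - 1.0 / fold_removal)


def batch_diavolumes_needed(fold_removal):
    """Diavolumes needed in ideal constant-volume batch diafiltration.

    Assumption: the impurity passes freely through the membrane and the
    retentate is perfectly mixed, so C/C0 = exp(-N).
    """
    return math.log(fold_removal)


fold = 1_000
print(f"{fold}-fold removal = {percent_buffer_exchange(fold):.1f} percent exchange")
print(f"Ideal batch diafiltration needs about {batch_diavolumes_needed(fold):.1f} diavolumes")
```

The first function simply confirms that a 1,000-fold removal and a 99.9 percent buffer exchange are the same statement. The second gives the idealized batch requirement of roughly seven diavolumes for that level of exchange.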

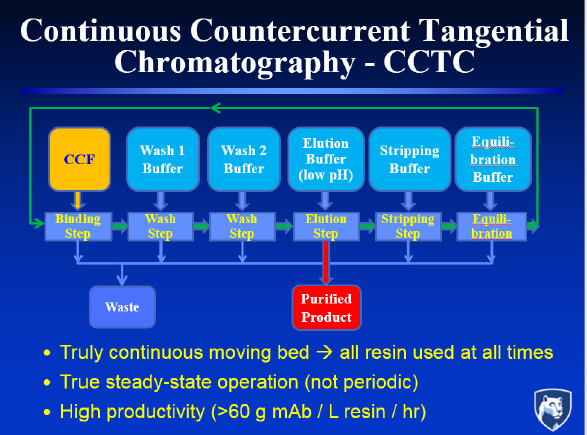

Countercurrent staging can also be used in the chromatography step. In this case, it replaces a series of columns producing a cyclical rather than steady state response with a system that flows the chromatography resin as a slurry through a series of static mixers and hollow fiber membrane modules (see Figure 3). In this approach, which Zydney calls continuous countercurrent tangential chromatography, binding, washing, elution, and stripping are performed directly on the slurry, with the hollow fiber membranes and static mixers controlling separation and residence time. Countercurrent staging, he added, makes more efficient use of the buffer than would ever be achieved by mixing the slurry with the elution buffer, and it achieves purity levels that are “reasonably competitive” with multi-stage batch column configuration.

Continuous staged precipitation offers the opportunity to provide a low-cost method of product purification by using targeted precipitating agents such as metal chelators, volume exclusion agents, solvents, and affinity ligands. Continuous staged precipitation is the workhorse of the plasma fractionation industry, but it was never considered viable for most biotherapeutics when titers were in the range of 0.1 gram per liter. Now that titers are pushing 10 to 20 grams per liter, it can be practical as an early capture step, replacing chromatography. Impurities would be removed using a countercurrent staged operation requiring small amounts of wash buffer.

Turning Concept into Reality

Mark Brower from Merck noted that the pipeline of biologics is evolving, which means that a new production platform must be flexible to be able to handle a variety of different types of products. He and his colleagues have been looking at various design concepts around single-use facilities that would afford the ability to respond to different product demand profiles and rely on various supply partners for the single-use components. The overriding philosophy would be to build out the facility as needed and at the right time.

To date, Brower’s team has demonstrated that it can scale a continuous chromatography system and get consistent production of high-quality material, and it is now working to run the system over an extended period. The company has also built a pilot lab to test new system designs and learn how the different unit operations function. The lab includes every step of production from the bioreactor through final purification, all connected in a single-use, automated, closed system that is agnostic to the equipment within the supply chain. Two people, not including those doing media and buffer preparation, can run and monitor the entire system and change out consumables. The system includes process analytical tools that enable operators to intervene if the system deviates from the desired parameters. These tools also generate data that power multivariate modeling for process control and improvement.

The challenges Brower wants to address include validating the system, particularly during startup and shutdown. Run-time evaluation will look at real-time data to identify deviations and responses to those deviations. He is also concerned about how the organization deals with this new technology and how it responds to changes in the current development paradigm. There are also a number of technical challenges, including optimizing facility design for different operations, residence time distribution modeling, developing a control strategy for microbial agents, viral safety validation, demonstrating robust, process-scale good manufacturing practice (GMP) systems, developing single-use sensors and consumables, and demonstrating connectivity using plug-and-play automation solutions.

PRODUCT MANUFACTURING

Drying Technologies to Stabilize Labile Molecules

Satoshi Ohtake from Pfizer reiterated Woodcock’s message that FDA is encouraging industry to adopt novel technologies. As other speakers noted, there are both benefits and risks to adopting continuous manufacturing for biologics, and one question Pfizer has been asking is whether it should be looking at integrated, continuous manufacturing from start to finish or if it should consider piecemeal continuous manufacturing, which are two different value propositions. Toward that end, the company has been asking what Ohtake called a very simple set of questions:

- Is it feasible or necessary to implement continuous manufacturing?

- What are the available technologies and what is their readiness for implementation?

- When should these new technologies be implemented—at the time of new product introduction, as a part of life-cycle management, or as part of general technology development?

- How can it overcome the inevitable hurdles that come with new technology implementation?

- Who will take ownership of development and implementation?

Turning to the subject of drying process technologies, Ohtake said there is a great deal of knowledge to glean from other industries, including the food industry. The two approaches that have been applied to drug manufacturing are batch process freeze-drying and continuous process spray drying. Freeze-drying, or lyophilization, is the current gold standard and the bottom line is that it works and there are many applications for this technology. Freeze-drying, however, is expensive and time consuming, and there are issues with heterogeneity and scaling. With spray drying, product flow in equals product flow out, so scaling is not a problem.

One company in the food processing space is using microwaves instead of applied heat in the lyophilization process, and the company recently announced that it was collaborating with an equipment supplier to manufacture and deploy a continuous lyophilization system for use in pharmaceutical production. Another technology under development is spray freeze-drying, which generates frozen microspheres by dispersing substrate liquid using high-frequency nozzles into single droplets that then fall through a cooling zone and congeal into frozen spheres, which are then moved into a rotary vacuum dryer. This approach produces particles with a narrow size distribution that are large enough not to be affected by static and that can be filled into vials more easily. This instrument has been scaled from 1 to 2 liters per day to more than 100 liters per day. The one drawback is that the instrument’s footprint is large. Ohtake noted that rotary vacuum drying is a batch process, so it would be necessary to couple droplet formation to multiple drying units to create a semi-continuous process. A third company is developing a continuous spray freeze-drying technology that replaces the rotary vacuum dryer with a vibrating, agitated drying chamber. Academic researchers, he said, are also developing continuous freeze-drying processes, one of which uses spin-freezing to create a thin layer on the inside wall of a drug vial filled with liquid product. The result is product coating the walls of the vial.

Ohtake said an important challenge for implementation is a lack of precedent, that is, nobody wants to go first when approaching regulators. The onus to work with regulators cannot be on the technology companies because they do not have the resources to do so. This needs to be a collaborative effort between industry and technology companies. Today, he said in summary, promising technologies are available, and FDA is supportive of innovative processing technologies. What is limiting progress is a lack of clarity on whether these technologies can be approved as platforms independent of a particular product that everyone can then use, or if the technology needs to be approved with a specific product. Also challenging is the prospect of introducing new technology as part of life-cycle management for an existing product, as it would require changing the product license in every country in which it has been approved.

Solutions to Continuous Biomanufacturing Challenges

William Whitford from GE Healthcare said that there is an ongoing revolution in digital manufacturing brought on by the explosion in monitoring, analytics, artificial intelligence, automation, and advances in robotics. This revolution is enabling continual quality verification, product quality-based control of processes, and real-time release testing. Digital biomanufacturing consists of many disciplines (Whitford, 2017; Whitford and Julien, 2007), and one of the challenges for implementing it is that there are few people who are proficient in all of the component fields and the expertise often remains siloed, he said.

There are many information technology enablers of digital biomanufacturing, including adaptive systems employing artificial intelligence, the availability of big data and the effective management of large and complex data sets, and software suitable for FDA regulation. Whitford explained that digital biomanufacturing features include support for real-time prediction, analysis, and control of CQAs and critical process parameters. As a resident source of data, it also supports continuous optimization of process parameters and assists in the development and control of process intensification initiatives. Some of the performance goals for digital biomanufacturing include having a self-aware, continuously adaptive, autonomous plant monitored by remote experts. Such systems, said Whitford, are being implemented in other industries and are being considered for piecemeal adoption in biomanufacturing. Digital biomanufacturing should also support business continuity with incident control, management, and reporting capabilities, and in-line or on-line, real-time, orthogonal process monitoring and adaptive control. In short, digital biomanufacturing has the potential to support enterprise-level manufacturing intelligence in the form of reporting analytics for quality assurance and quality control support; monitoring analytics for process control, development, and optimization; and predictive analytics for scheduling, supply chain optimization, and optimal harvesting of product.

Digital biomanufacturing also fits well with next generation quality-by-design initiatives. One enabler of such efforts is multi-attribute methods of analysis, such as quadrupole Dalton mass spectroscopy, that can replace multiple traditional assays and identify process impact on multiple CQAs. Multi-attribute methods, said Whitford, are regulator friendly, cost-effective, and support both advanced process control and reporting on multiple product attributes in near real time.

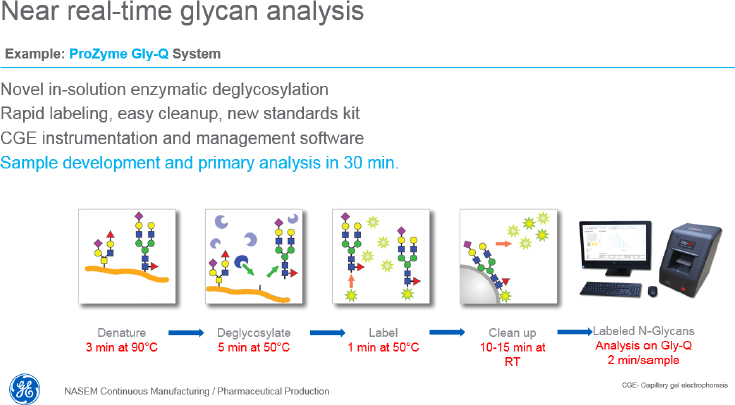

Regarding monitoring solutions, Whitford said that automated at-line sampling technologies are commercially available, including on-line manifolds ready for in-line sensors, automatic at-line analytics, and hands-off, contamination-free sampling. In fact, three companies now support cell-free sampling for multiplexed analysis from bioreactors and various downstream processes. Also available, he said, are various monitoring technologies for making specific types of continuous, real-time measurements. Single-use, adaptable in situ Raman probes, for example, can simultaneously measure glutamate, lactate, glucose, glutamine, ammonium ion, osmolality, and viable and total cell density. One company has reported that its technology can provide glycan analysis within 30 minutes (see Figure 4), while another uses nanofluidic chips to assay thousands of single cells in parallel and provide a point analysis of modal cellular characteristics rather than an average of all cells in a reactor. Whitford said he is excited by the possibility of using field-effect biosensing technology based on graphene to monitor discrete local changes in reactor conditions in real time.

At a larger scale, predictive control products that support multiplexed analysis, often in real or near real time, are commercially available. This type of technology is part of what GE Healthcare is working toward with its bioreactor digital twin project, a digital, mixed model, in silico representation of the bioreactor. Each implementation of the bioreactor digital twin would be specific for each bioreactor, updated with data from sister reactors as well as from the individual bioreactor. He noted that the continued advances in computing power open a wealth of possibilities for the future of digital biomanufacturing that cannot even be imagined.

Advanced process monitoring, using new sources of data and new analytical techniques, will lead to changes in process development and control. For example, being able to measure glycoform production in near-real time offers new approaches to using changes in product attributes for process control functions rather than using a representative value, such as pH or glucose levels. In addition, being able to monitor many divergent process parameters in both upstream and downstream processes offers the possibility of using this mass of data to control processes more efficiently.

Opportunities for Low-Cost Vaccine Manufacturing

Historically, said Tarit Mukhopadhyay from University College London, continuous manufacturing was rarely considered for vaccines because of the small doses needed for vaccine efficacy, and when it was used, yields were low, requiring long production times to produce the needed material. However, a problem the vaccine industry faces is that vaccine manufacturing processes that were developed more than 50 years ago are largely unprofitable, which has led to many companies dropping out of the vaccine business. He noted that the Global Vaccine Action Plan, which the World Health Assembly endorsed in 2012 to increase global coverage for vital immunizations by 2020, is failing largely because of the unprofitability of vaccine manufacturing. In addition, because those vaccine manufacturers are operating at full capacity, any production glitch has an outsized effect on global availability of the affected vaccine.

The Bill & Melinda Gates Foundation is tackling this problem with its Innovations in Vaccine Manufacturing for Global Markets Grand Challenge, which seeks innovative approaches to producing low-cost vaccines with platforms suitable for 40 million doses annually at a target production cost of less than $0.15 per dose. The caveat to this challenge, said Mukhopadhyay, is that the core cost reduction cannot be realized primarily through economies of scale. His group, in collaboration with researchers from the Massachusetts Institute of Technology (MIT) and the University of Kansas, is tackling this challenge for a novel recombinant protein rotavirus vaccine.

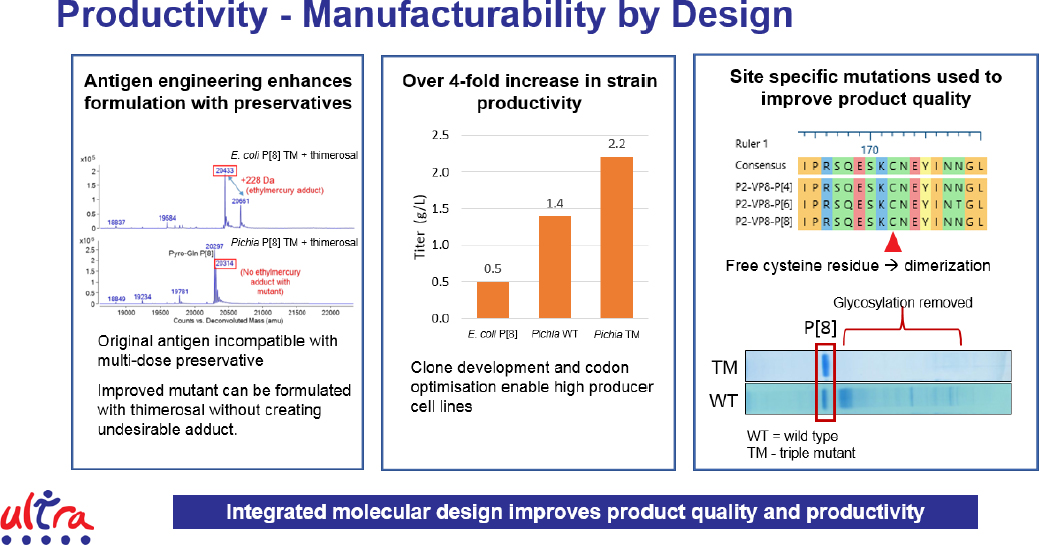

To break the economies of scale model for vaccine manufacturing, it is necessary to realize cost savings through strain engineering and molecular design to improve product titer and quality, cut material and labor costs through simplified and intensified processes, and reduce facility-related costs and the overall footprint of the production process. Mukhopadhyay and his collaborators learned the first step was to engineer the antigen and select more productive cell lines, leading to a 4-fold increase in strain productivity (see Figure 5). They have also redesigned the antigen to remove glycosylation sites, producing a higher quality product. This approach of integrated molecular design improves product quality and productivity.

Turning to the manufacturing process itself, the team took advantage of the fact that engineered cells secrete the desired antigen, eliminating the need to harvest the cells, lyse them, and collect the product. This enabled the team to use a 50-liter perfusion reactor and couple it directly to the downstream processes, thereby reducing the labor component and the need for repetitive quality control testing, creating a highly intensified process through fill and finish. Overall, process yields are high enough to enable the entire process to fit inside a refrigerator-sized unit complete with automated process control. What this means, said Mukhopadhyay, is if three refrigerator-sized units can meet production needs, the process footprint can be 50-fold smaller and capital expenditures can be 15-fold less, reducing the barrier for entry for new companies.

Modeling shows that a fully integrated process can not only meet the target for cost of goods, but also actually come in at an estimated 22 percent below that target. In a multi-product facility, cost of goods would be an estimated 36 percent less than the targeted $0.15 per dose. The benefits of moving to such an integrated process, however, could extend beyond profitability. Currently, vaccines are manufactured in centralized facilities requiring long distribution routes that lead to stagnant coverage and missed immunization opportunities. A distributed manufacturing network, based on an integrated and intensified process, would likely improve local and regional supply that could be tailored to meet demand and democratize vaccine supply. A distributed network would also increase manufacturing capacity overall and greatly increase the availability of vaccines to respond to emerging epidemics.
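To put the modeled savings in per-dose terms, the short sketch below applies the two percentage reductions to the Grand Challenge target of $0.15 per dose cited earlier in the talk. It is plain arithmetic on the quoted figures, not a reproduction of the underlying cost model, and the function name is hypothetical.

```python
TARGET_COST_PER_DOSE_USD = 0.15  # Grand Challenge target cited in the talk


def cost_below_target(target_usd, percent_below):
    """Per-dose cost that sits a given percentage below the target."""
    return target_usd * (1.0 - percent_below / 100.0)


integrated_cost = cost_below_target(TARGET_COST_PER_DOSE_USD, 22)
multi_product_cost = cost_below_target(TARGET_COST_PER_DOSE_USD, 36)

print(f"Fully integrated process: ${integrated_cost:.3f} per dose")
print(f"Multi-product facility:   ${multi_product_cost:.3f} per dose")
```

On these figures, the fully integrated process would come in near $0.12 per dose and the multi-product facility near $0.10 per dose.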

In closing, Mukhopadhyay said that applying the principles of continuous manufacturing to vaccines opens up new possibilities for increasing capacity and affordability. Realizing this promise, however, will require regulatory engagement to approve reduced quality control testing and real-time release based on the use of multi-attribute testing and improved in-process analytics. It will also require advocacy and regulatory support for a licensed product.

INTEGRATION

A Case Study: Biologically Derived Medicines on Demand

Christopher Love from MIT noted that integration is more than just connecting parts together, but rather involves syncing technology with the biology to be implemented in a continuous process. To him, mapping out the integration process before attempting to do it is imperative. The project his group has been working on is part of DARPA’s Biologically Derived Medicines on Demand project, which envisions a different supply chain in which raw materials are transported around the world and the final product is made in a multi-product facility near the site of intended use. This approach would be useful for producing drugs to treat rare and orphan diseases, responding rapidly to outbreaks, and expanding global access to medicines.

Love defined integration as the act of combining or adding parts to make a unified whole in a way that blends or fuses the parts together so that the whole is greater than the sum of the parts, and his approach has been to embrace the entire design cycle at once. While he did not go into the details of the technological advances that his team made as part of this project, he noted that their development was driven by the notion of integrating the biology with the hardware (Matthews et al., 2017; Timmick et al., 2018). The result is a process for developing new molecules that in the best case takes approximately 12 weeks. This platform uses yeast as the production vehicle with a new type of media, developed using RNA sequencing and metabolomics, that is better defined, less expensive, and performs better than existing media. One advantage of working with yeast is that there are only 150 proteins in its secretome, an order of magnitude fewer than for CHO cells. Given that tractable number, it was possible to build a database of how a number of different resins interacted with each of these proteins, as well as drug products, under different conditions. This database enabled Love and his colleagues to create in silico algorithms for designing continuous, multi-column processes that work together in a flow-through sequence for any new molecule. Love’s group also built a hardware system that enabled testing at scale and would be suitable for manufacturing, reducing technology transfer issues.

To date, his team has built three benchtop systems, all operating with similar specifications. Each system comprises three unit procedures: upstream, downstream, and formulation. One system produced clinical-grade G-CSF comparable to the FDA-approved product. He noted that his team used extensive gene sequencing, which is affordable because of the small size of the yeast genome, to characterize what is happening in the yeast cells during production and used that information to make the yeast strain fit for purpose.

In short, said Love, deep biological knowledge has accelerated process development. This approach has enabled him to think about plug-and-play process development for new molecules, including human growth hormone, interferon-α2b, and rotavirus-specific nanobodies. For this last project, it took him and his team approximately four weeks from having the DNA sequence of the nanobody to producing six grams of product suitable for preclinical studies. Typically, the process takes 12 to 16 weeks from having a DNA sequence for the product to having material suitable for toxicology studies.

All of this information is building on itself, said Love, enabling his team to shorten development time and to understand what the production organism needs from a design perspective to maximize production. Integrating biological knowledge, he said, facilitates quality by design and has enabled his group to build a refrigerator-sized unit capable of producing up to 1 kilogram of product per year, which is equivalent to between 10 and 100 million doses of vaccine, 1 to 10 million doses of a cytokine, or 100 to 1,000 doses of a monoclonal antibody. His team has tested a single-use prototype, which currently sits at technology readiness level 5. In closing, Love said that integrated thinking is essential for achieving holistic designs and solutions, dematerializing and intensifying processes, and providing the agility to meet rapidly changing demand.
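The dose equivalents quoted for the 1-kilogram annual output imply a mass of product per dose for each product class. The Python sketch below back-calculates those masses from the figures in the text; the per-dose values it prints are derived here for illustration and were not stated by the speaker.

```python
ANNUAL_OUTPUT_G = 1000.0  # 1 kilogram of product per year, from the text

# Dose ranges quoted in the text, as (fewest doses, most doses) per kilogram.
DOSES_PER_KG = {
    "vaccine": (10_000_000, 100_000_000),
    "cytokine": (1_000_000, 10_000_000),
    "monoclonal antibody": (100, 1_000),
}


def implied_mass_per_dose_g(output_g: float, doses: int) -> float:
    """Mass of product per dose (grams) if `output_g` is split into `doses` doses."""
    return output_g / doses


implied = {}
for product, (fewest, most) in DOSES_PER_KG.items():
    # Fewer doses from the same mass means a larger dose, and vice versa.
    largest = implied_mass_per_dose_g(ANNUAL_OUTPUT_G, fewest)
    smallest = implied_mass_per_dose_g(ANNUAL_OUTPUT_G, most)
    implied[product] = (smallest, largest)
    print(f"{product}: {smallest:.2e} g to {largest:.2e} g per dose")
```

Read this way, the quoted ranges correspond to roughly 10 to 100 micrograms per vaccine dose, 100 micrograms to 1 milligram per cytokine dose, and 1 to 10 grams per monoclonal antibody dose.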

Strategy for Implementing Real-Time Release Testing

Richard Braatz from MIT recalled that an MIT-Novartis project several years ago had designed a plant-wide control system from first principles, built the fully integrated, end-to-end system, and showed that it reduced production costs by approximately 50 percent and met all purity specifications (Lakerveld et al., 2015; Mascia et al., 2013). That kind of system is continuing to move forward, he said.

Achieving fully automated process development requires a much greater understanding and optimization of each unit operation, as well as exploitation of process intensification, said Braatz. Fully automated high-throughput microscale technology can enable fast, continuous process research and development by generating a large amount of data to inform development of larger-scale systems. In addition, plug-and-play modules with integrated control and monitoring and corresponding in silico models will facilitate system deployment, as will dynamic models of unit operations for plant-wide simulation and control design. Smart data analytics and model-based control systems will help optimize operations, including startup, changeover, and shutdown. Addressing the design of control systems based on a virtual production facility, Braatz said that such a facility would ideally be built from first principles when possible, with each model fit for its purpose: the highest-complexity models available for inventing and optimizing process designs, and lower-complexity models for process control design and quality monitoring (Lu et al., 2015).

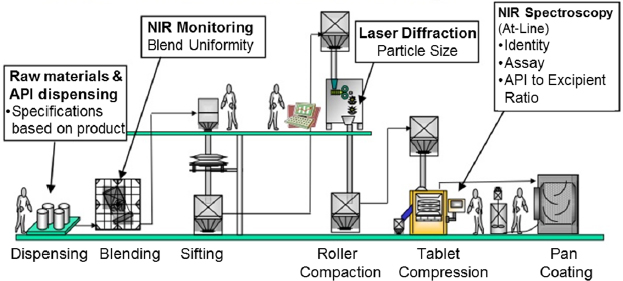

Real-time release testing, said Braatz, requires being able to evaluate and ensure the quality of in-process and/or final drug product based on process data, which typically include a valid combination of measured material attributes and process controls (see Figure 6). Real-time release testing has the potential, he said, to increase quality assurance, increase yield by lowering rejection rates, and reduce cycle times. The key to enabling real-time release testing is the availability of integrated sensor technologies, mathematical models, and control strategies.

Braatz has utilized four strategies for ensuring that a particular CQA specification is satisfied (Jiang et al., 2017; Lee et al., 2015; Myerson et al., 2015), starting with direct measurement of CQAs. If that is not possible, the second strategy is to predict CQAs based on a first principles model powered by measurements of related variables and running in parallel with operations. The third strategy predicts CQAs based on a semi-empirical model, such as a response surface map or a partial least-squares model, powered by measurements of other variables. The fourth strategy is to operate the critical process parameters so that they fall within a design space, that is, a specified set of parameters shown in offline studies to provide assurance. The first three are applicable to closed-loop feedback control strategies, and the fourth is used for an open-loop strategy.
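The four strategies can be read as a fallback sequence, which the following Python sketch illustrates. It is a minimal illustration, not the speaker's implementation: the `Capabilities` class, the function names, the strict 1-to-4 fallback order beyond the first step, and the numeric design-space limits are all assumptions introduced here.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Dict, Tuple


class Strategy(Enum):
    DIRECT_MEASUREMENT = 1      # measure the CQA itself
    FIRST_PRINCIPLES_MODEL = 2  # predict the CQA from a mechanistic model
    SEMI_EMPIRICAL_MODEL = 3    # predict the CQA from, e.g., a PLS model
    DESIGN_SPACE = 4            # keep process parameters in a validated region


# Per the text, strategies 1-3 suit closed-loop feedback control.
CLOSED_LOOP = {
    Strategy.DIRECT_MEASUREMENT,
    Strategy.FIRST_PRINCIPLES_MODEL,
    Strategy.SEMI_EMPIRICAL_MODEL,
}


@dataclass
class Capabilities:
    """Hypothetical description of what is available for one CQA."""
    cqa_sensor: bool
    first_principles_model: bool
    semi_empirical_model: bool


def choose_strategy(cap: Capabilities) -> Strategy:
    """Pick the first available strategy (assumed fallback order 1 to 4)."""
    if cap.cqa_sensor:
        return Strategy.DIRECT_MEASUREMENT
    if cap.first_principles_model:
        return Strategy.FIRST_PRINCIPLES_MODEL
    if cap.semi_empirical_model:
        return Strategy.SEMI_EMPIRICAL_MODEL
    return Strategy.DESIGN_SPACE


def within_design_space(
    cpps: Dict[str, float], design_space: Dict[str, Tuple[float, float]]
) -> bool:
    """Strategy 4 check: every critical process parameter lies inside its limits."""
    return all(low <= cpps[name] <= high for name, (low, high) in design_space.items())


# Example: no CQA sensor or mechanistic model, but a semi-empirical model exists.
cap = Capabilities(cqa_sensor=False, first_principles_model=False, semi_empirical_model=True)
chosen = choose_strategy(cap)
print(chosen.name, "closed-loop" if chosen in CLOSED_LOOP else "open-loop")

# Made-up design-space limits, for illustration only.
design_space = {"temperature_C": (20.0, 25.0), "pH": (6.8, 7.2)}
print(within_design_space({"temperature_C": 22.5, "pH": 7.0}, design_space))
```

In practice, different CQAs in the same plant could end up on different strategies, so a selection of this kind would be made per attribute rather than once for the whole process.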

He noted that strategies to assure on-specification product have already been demonstrated for an end-to-end plant as far back as 2012 (Lakerveld et al., 2015). Braatz and his colleagues built first principles dynamic models for each unit operation, validated the models, and then placed them into a simulation to design unit operation and plant-wide controls. The first time the controllers were turned on for the real system, the resulting small molecule was on specification, he said.
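The workflow described here, in which a dynamic model is built first and controllers are designed against it in simulation before being switched on in the plant, can be illustrated with a toy example. The Python sketch below is not one of the models from the cited work: it assumes a single hypothetical first-order unit operation with made-up gain and time constant, and it compares a proportional-only controller with a proportional-integral (PI) controller in simulation.

```python
def simulate_pi(kp: float, ki: float, setpoint: float = 1.0,
                gain: float = 2.0, tau: float = 5.0,
                dt: float = 0.01, t_end: float = 60.0) -> float:
    """Euler simulation of tau * dy/dt = -y + gain * u under PI control.

    Returns the process output y at time t_end. The model parameters
    `gain` and `tau` are illustrative assumptions, not plant data.
    """
    y = 0.0         # process output (e.g., a concentration), starting at zero
    integral = 0.0  # running integral of the control error
    for _ in range(int(t_end / dt)):
        error = setpoint - y
        integral += error * dt
        u = kp * error + ki * integral    # PI control law
        y += dt * (-y + gain * u) / tau   # first-order process dynamics
    return y


# Candidate controller designs evaluated on the model before any plant trial.
p_only = simulate_pi(kp=1.0, ki=0.0)
pi = simulate_pi(kp=1.0, ki=0.5)

print(f"P-only final output: {p_only:.3f} (setpoint 1.0)")
print(f"PI final output:     {pi:.3f} (setpoint 1.0)")
```

In this toy case the simulation shows that proportional-only control settles with a steady-state offset from the setpoint, while adding integral action removes it. That is the kind of design decision a validated model allows an engineer to make before the controllers are turned on for the real system.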

Advancements Toward Real-Time Release

Doug Richardson from Merck spoke about how he and his colleagues are addressing adaptive process control and process analytical technology to learn more about these processes in the near term, with the eventual goal of realizing real-time product release. Merck, he said, has a long history of developing and using process analytical technologies, which include both the analyzers and the system in which they fit. This process comprises four principles:

- Measure process parameters and CQAs in a timely manner.

- Model the process using information about the process conditions and product attributes to increase process understanding.

- Use the improved process understanding to apply an appropriate control strategy to maintain the process in a state of control and product attributes within specifications.

- Use improved process understanding to optimize process operation to ensure consistent attainment of CQAs and realize process efficiency improvements.

Richardson added that the earlier process analytical technologies are added to a process under development, the more information they will generate to improve process development and ease the transition into manufacturing.

Richardson explained the differences between at-line, on-line, and in-line process analytical technologies. At-line refers to the case where samples are collected manually and the analyzer is located next to the process. On-line systems are connected directly to the process and collect and automatically analyze samples, which are never returned to the process. In-line process analytical technology systems, the desired setup, are incorporated into the flow of the process and produce continuous data without sampling using capacitance, light scattering, spectroscopy, on-line liquid chromatography, and other types of sensors. Technology requirements for in-line systems include fast acquisition frequency, no required recalibration, an operation time of 2 weeks to 60 days, sterilizability, and the ability to monitor CQAs. He noted that his group has tested Raman devices, light-scattering technologies for real-time molecular mass measurements (Patel et al., 2018), and on-line ion exchange technologies to assess charge variants (Patel et al., 2017).

Today, it takes approximately 30 days to run the dozens of assays required for quality control leading to product release. To disrupt the quality-control process, Richardson and his team have been investigating peptide mapping mass spectrometry as a multi-attribute analytical tool (Rogers et al., 2015). This approach uses a streamlined mass spectrometry appropriate for quality control that has the potential for automated sample preparation and automated data processes. Currently, his group is using this tool to develop cell lines and to monitor in-process quality attributes. He noted that multi-attribute mass spectrometry performs comparably to individual assays and represents an orthogonal method for in-process control, release, and stability assessment. It is not a high-throughput approach, but it is high-value, sensitive, and selective. Richardson added that this multi-attribute method might also be able to provide a link to clinical data to help identify early in development those CQAs that are important for drug performance and perhaps streamline what needs to be tested for product release.

In his opinion, there is no path to real-time release without process analytical technologies, and mass spectrometry will likely play a role in getting to real-time release. He explained that the adoption path will be to measure one attribute at a time and add additional attributes with experience, and that even without the ability to measure everything, multi-attribute mass spectrometry has the potential to reduce the number of required assays for quality control. There are several technical challenges to address before process analytical technologies can enable real-time release, including the development of robust, single-use sensors, advanced data analytics and process modeling, and robust aseptic sampling and clarification.

In a panel discussion with all the session speakers, Charles Cooney, the discussion moderator, summarized some themes from the session: (1) integration is the start of the design, (2) innovate by choice not chance, (3) automation is not the end point; build from the start, (4) real-time release testing is an overarching strategy for integration, (5) modularity is important (of sensors and unit ops); plug and play, and (6) measure the minimum number of attributes; only test the essence of what is needed, not all of the attributes that it is possible to test.

REGULATORY AND QUALITY ASPECTS OF CONTINUOUS MANUFACTURING

Key Aspects of Regulation

Moheb Nasr from Nasr Pharma Regulatory Consulting recapped discussions held during two international symposia on continuous manufacturing that occurred in 2014 and 2016 at MIT. The outcome of the first symposium, which focused primarily on small molecule production, was a white paper on the regulatory and quality considerations for continuous manufacturing that established a regulatory baseline for continuous manufacturing (Allison et al., 2015). The second symposium, which split the discussion equally between small molecules and biologics, supported the need for a harmonized International Council for Harmonization of Technical Requirements for Pharmaceuticals for Human Use (ICH) guideline on technical and regulatory aspects. A second white paper resulted (Nasr et al., 2017). A third symposium took place in London on October 3-4, 2018, with a focus on case studies, business cases, and supply chain impact in both the small molecule and biologics spaces.

The current regulatory environment, said Nasr, supports advancing innovation and the scientific and technical foundation on which regulations are based. Nasr noted that regulatory authorities within ICH, as well as an increasing number of non-ICH regulators, are encouraging industry to adopt new technologies. Recent ICH guidelines, in fact, emphasize science- and risk-based approaches for quality assurance. He added that the regulatory expectation of assurance of reliable and predictable quality is much the same for both batch and continuous manufacturing and that a proposed ICH guideline, called ICH Q13, would establish appropriate standards to facilitate harmonized global implementation of continuous manufacturing.