In this session of the first workshop, presenters and workshop participants discussed the structures in various institutions that incentivize the maintenance of the current data generation process, identified disincentives and barriers to the incorporation of real-world evidence (RWE), and considered ways in which incentives could be better aligned. Presenters included researchers, product developers, and data aggregator and analytics companies.

THE USE OF REAL-WORLD EVIDENCE AND REAL-WORLD DATA IN PRODUCT DEVELOPMENT

Elliott Levy, senior vice president of global development at Amgen Inc., began by challenging the notion that there are perverse incentives that encourage companies to develop drugs in ways that are unnecessarily time consuming and costly. Levy said there is not “resistance from above” to the use of real-world data (RWD) and RWE; to the contrary, the pharmaceutical industry is acutely aware of the need to transform the drug development system in ways that acknowledge the revolution of big data and the potential for RWE and RWD. Drug developers, said Levy, are already making extensive use of RWD and big data for internal decision making along the entire process of drug development and marketing. Although traditional clinical trials still play a big role, the evidence from these trials and from RWE are “complementary sources of insight.” RWD can help to improve the pragmatism and relevance of clinical trials, for example, by adding patient-reported outcomes, by making the intervention more similar to real-life clinical interventions, or by removing unnecessary exclusion criteria. RWE and clinical trials are currently playing complementary roles, he said, but the next step would be for RWE to actually replace clinical trial evidence. This would be a dramatic change to the process of product development, and would address the fundamental challenge of drug development today—the extraordinary cost.

There are some very significant barriers to replacing randomized controlled trials (RCTs) with RWE, said Levy, as described in the following paragraphs.

Lack of Knowledge and Awareness

Levy noted that many of the people working in product development are those “who are steeped in the randomized clinical trial model, who have benefited from the prestige and scientific cachet of the randomized clinical trial, and who have deep faith in it and, to some degree, distrust for non-interventional methods.” The physician scientists who lead product teams are accustomed to and comfortable with working with traditional forms of evidence generation, and simply are not aware of the potential uses and benefits of RWE.

Talents and Capabilities

Few people working in product development have experience conducting observational or non-interventional research, said Levy. Those who do, he said, are usually situated in groups (e.g., safety surveillance) that do not contribute to drug development.

Systems and Processes

Drug development organizations have been optimized to generate evidence from randomized interventional trials, and there are simply no systems or processes in place to efficiently and effectively implement alternative trial designs.

These barriers present a significant challenge for replacing RCTs with RWE, said Levy. However, the “good news” is that all of these barriers can be addressed. While the topic area—RWE and RWD—is new, the barriers are not. “These are classic challenges that organizations face as they try to develop new ways of working or launching new products,” he said. Addressing these barriers will require senior leaders to demonstrate commitment to the adoption of RWE, investment in training of team members, and a willingness to examine and change organizational structures.

Brian Bradbury, executive director in the Center for Observational Research at Amgen Inc., offered his perspective as an epidemiologist within a product development organization. Bradbury said that although he and his team have conducted RWE studies on the effectiveness and/or safety of a therapeutic intervention, motivating colleagues within his organization who are less familiar with non-interventional studies to embrace RWE-based approaches is a challenge. Bradbury identified a number of existing obstacles to the adoption of RWE-based approaches in drug development organizations:

- Lack of understanding of non-interventional research methodology;

- Inability to distinguish between higher and lower quality RWE;

- Mistrust of data that were captured outside of traditional RCTs; and

- No clear regulatory pathway for RWE-based approaches.

These barriers, said Bradbury, discourage product teams from pursuing RWE-based approaches. In particular, he said, the lack of a clear regulatory pathway means there is less willingness to mobilize resources and develop capabilities for an approach that may not be acceptable to regulators.

MAKING CHOICES ABOUT RESEARCH DESIGN

Clinical researchers have choices about how to design their research, said Daniel Ford. Different research designs have advantages and disadvantages, and these must be weighed against each other when determining what path to take. Johns Hopkins has a resource called the “Research Studio,” said Ford, which provides researchers with expert advice on how to choose the appropriate methodology to answer a research question. Ford explained some of the considerations that might come into play when a researcher is deciding between a database observational study and a traditional RCT.

Traditional RCTs, said Ford, are what people have “grown up” with, and so they feel more comfortable with them. Although RCTs require a commitment to recruitment and data collection, and are highly regulated, the analysis is fairly straightforward and the conclusions can have a high impact. If a researcher wishes to publish in a high-impact journal, a standard RCT is the “short ticket” to get there, Ford said. Database observational studies, on the other hand, are seen as riskier by many researchers, he said. It is more difficult to investigate biological pathways and causation with database studies, and analysis of the data depends on highly complex statistics. Ford said the complexity of analysis often means that researchers will need to find and rely on a colleague who is an expert in the area. In addition, the data in real-world sources tend to be less precise than data from clinical trials, and sometimes do not meet the needs of researchers. For example, electronic health records (EHRs) often have incomplete or missing data points, or the information is not readily accessible (e.g., test results are in PDF format). However, database observational studies are considerably less regulated and take a relatively short time to complete.

These challenges of observational database studies, said Ford, can dissuade researchers from choosing this pathway. Ford noted that younger investigators can sometimes be hesitant to “rock the boat” by choosing the riskier path before establishing themselves with more traditional research methods. One particular challenge to using RWD for research is that the phenomena that can be studied are limited to what is available (e.g., the data in the EHR); this can be difficult for some researchers to accept, said Ford. To bridge the divide between RCTs and RWE, said Ford, researchers need to consider hybrid research approaches such as pragmatic trials or cluster randomized designs. These approaches, too, have challenges: they require engagement of the health system and health providers, incorporation of disparate real-world data sources, and analysis that is usually more complicated than that of an RCT. However, these approaches can offer the rigor of an RCT with the cost and time savings of RWE. Clinical researchers need support, training, and tools to choose appropriate methodologies and to feel comfortable working with RWE and hybrid methods, concluded Ford.

ACCELERATING EVIDENCE GENERATION THROUGH DEFRAGMENTATION

One of the biggest barriers to the adoption of RWE and RWD, said Marcus Wilson, president of HealthCore, Inc., is the fact that there is a “natural gravitational pull” back to the old, traditional ways of doing things—in this case, RCTs. Unfortunately, RCTs have limitations, said Wilson. RCTs cannot generate clarity about how the product will perform in the real world. Differences between the patients studied in the trials and the patients using the product in the real world may include age, race, ethnicity, gender, comorbidities, concomitant drugs, lifestyle variances, and varying levels of compliance. RCTs also leave us with a lack of precision, said Wilson, which is a hindrance to effective clinical support. Clinical trials can indicate if a product works, and if a product is safe, but they cannot explain precisely for whom the product is effective or safe, he said. Individual patient decisions are still based on inferences and intuition, rather than on precise evidence. Data on how a product performs in the real world—and on how a patient’s phenotype matters for efficacy and safety—are not collected until the product is already on the market, which means that many decisions are being made without this evidence.

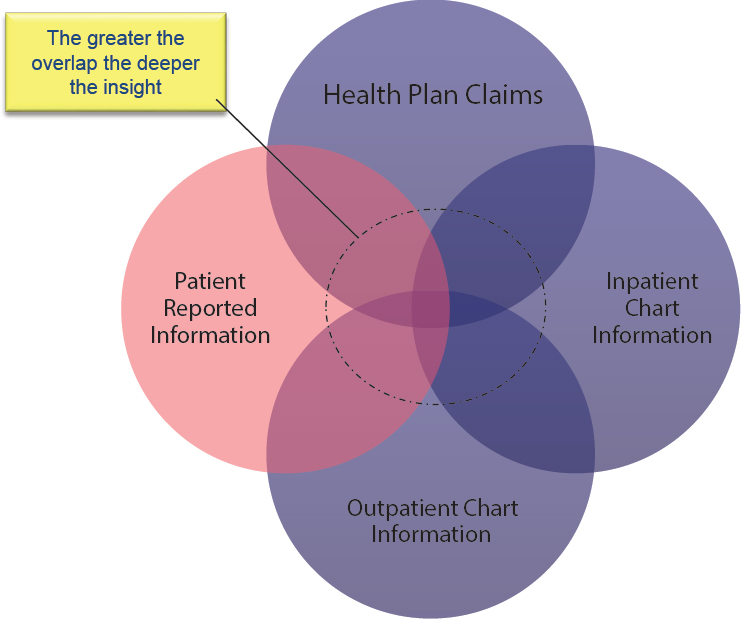

SOURCE: Wilson presentation, September 19, 2017.

Collecting these types of RWE that can support decision making is challenging, said Wilson, because patient data are highly fragmented. Most RWD, no matter the sources, are fairly limited in value on their own. Once the fragmented data are integrated together, however, one can “begin to see things you couldn’t see before” (see Figure 4-1). Wilson said that while there is a great deal of attention on “big data,” the availability of “deep data” is more relevant. Unfortunately, stakeholders are often reluctant to share or integrate their data due to a long history of a fragmented system, competing priorities and agendas of institutions, and a lack of trust among stakeholders.

Wilson gave an example of his experience attempting to integrate data from different stakeholders. The California Integrated Data Exchange (Cal INDEX),1 which launched in 2014, was a nonprofit organization seeking to develop a next-generation health information exchange. The idea, said Wilson, was to collect data from multiple health providers and health insurers across California, and these data could be accessed by providers in order to administer the highest quality care possible. Wilson said that in initial talks with California providers, 32 of the largest health care systems expressed a strong interest in participating. However, in the following 3 years, only one provider actually signed up. The biggest challenge, he said, was trust. Data sharing among stakeholders, even if it is beneficial to all parties, requires trust and collaboration, and this remains a huge barrier, he said. Wilson closed by identifying some of the core principles of defragmentation:

- Patient privacy and data security: This is essential to defragmentation because patients will only share data if they are confident that their information will remain private and secure.

- Avoid data misadventuring: RWD are often generated for a specific purpose, and can only be understood in context. Because of this, it is risky to simply pull RWD into research without understanding where they came from and how they can be used.

- Protect business interests of data sources: Much of the distrust among business stakeholders stems from a fear that the data they share will be used to their disadvantage. These stakeholders may be incentivized to share data if they are convinced of the benefits of an active learning health system. For these stakeholders, “it becomes a wonderful trade-off” to share their data in exchange for a richer and more useful system of data.

- Accelerate progress from RWD to RWE: RWD are not generally created with research in mind, so more work needs to be done to understand how and for what purpose RWD should be used, and stakeholders should work collaboratively to improve the data sources.

___________________

1 Cal INDEX eventually merged with another health information exchange organization and the project is now known as Manifest MedEx.

EVIDENCE HIERARCHIES

A major barrier to the adoption of RWE, said Hui Cao, executive director, Center of Excellence for RWE, Global Medical Affairs, Novartis, is a “fixed mindset” introduced in medical school that evidence from RCTs is the best possible evidence. There are “evidence hierarchies,” said Cao, that place RCTs at the top, and put RWE at level 2 or even lower. Researchers and providers are comfortable with RCTs, and they understand the benefits and limitations. However, Cao suggested that in order to truly make change, these hierarchies need to be revisited. Many years ago, the tools and methods for generating RWE did not exist, said Cao, but now there is a better understanding of what can be derived from the real world. The hierarchies of evidence should be adjusted accordingly, she said. Wilson said some of the reluctance of researchers to embrace RWE stems from a lack of trust in the data source and the methods used to assess the data. Different types of RWE, and different sources of RWD, vary in quality and should be judged accordingly. Not every data source, he said, is fit for every purpose: there is a need for more rigor in vetting of RWD sources, as well as the methods used to assess RWD. Cao responded that while she agreed that RWD should be scrutinized carefully, evidence from RCTs needs to be scrutinized as well. Some other workshop participants discussed additional barriers to using RWD and RWE in research (see Box 4-1).

OPPORTUNITIES TO INTEGRATE REAL-WORLD DATA AND REAL-WORLD EVIDENCE IN RESEARCH

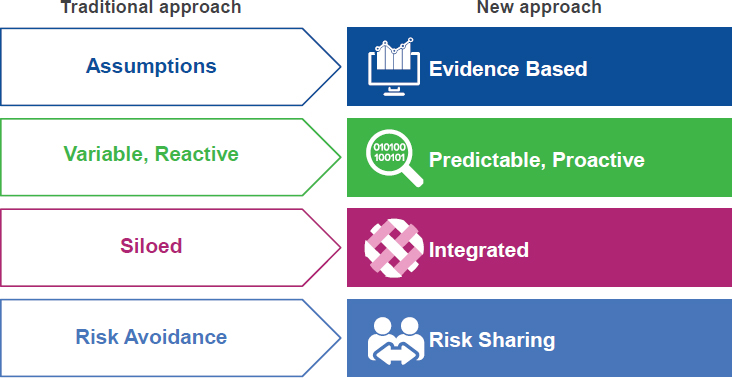

SOURCE: Doyle presentation, September 19, 2017.

There is an ongoing transformation in the health research world, said John Doyle, senior vice president and general manager, Real-World and Analytics Solutions at IQVIA (formerly QuintilesIMS), with trends toward growing costs, shrinking reimbursements, and more personalized medicine. This transformation is leading to an increased demand and need for real-world data and evidence, he said. For example, patients and payers are demanding proof of value of new interventions compared with standard of care, and the move to precision medicine requires generating evidence for smaller and more diverse subgroups. The traditional approach to health research, said Doyle, involved a systematic, methodical process of solving a problem for a single, isolated stakeholder. The new approach, by contrast, seeks to design studies in ways that can align the needs and requirements of multiple stakeholders and solve problems in a more integrated, evidence-based way with the use of RWD and RWE (see Figure 4-2).

All stages of clinical research have challenges and barriers, said Doyle, but the use of RWD can begin to address some of these barriers. For example, in the study design and planning phase, RWD can be used to validate protocol feasibility. Alternative and more efficient pathways to patient recruitment and enrollment can be achieved through the use of RWD. During the data collection and analysis phase, automated tools can be used for real-time tracking, analysis, and reporting. Researchers have already realized some of the advantages of the use of RWD. Anecdotally, Doyle reported that recruitment times are being reduced and recruitment rates are increasing, start-up timelines are compressed, and the cost of evidence generation has been reduced.

Using RWD and RWE in clinical research, said Doyle, addresses one simple but often overlooked fact: “Real-world patients are fundamentally different than clinical trial patients.” No matter how well a clinical trial is conducted, questions remain about how the findings will extend to more diverse patient populations, how the lack of perfect adherence will affect outcomes, or what the longer term outcomes will be. “We need to bridge that gap with real-world evidence,” said Doyle.

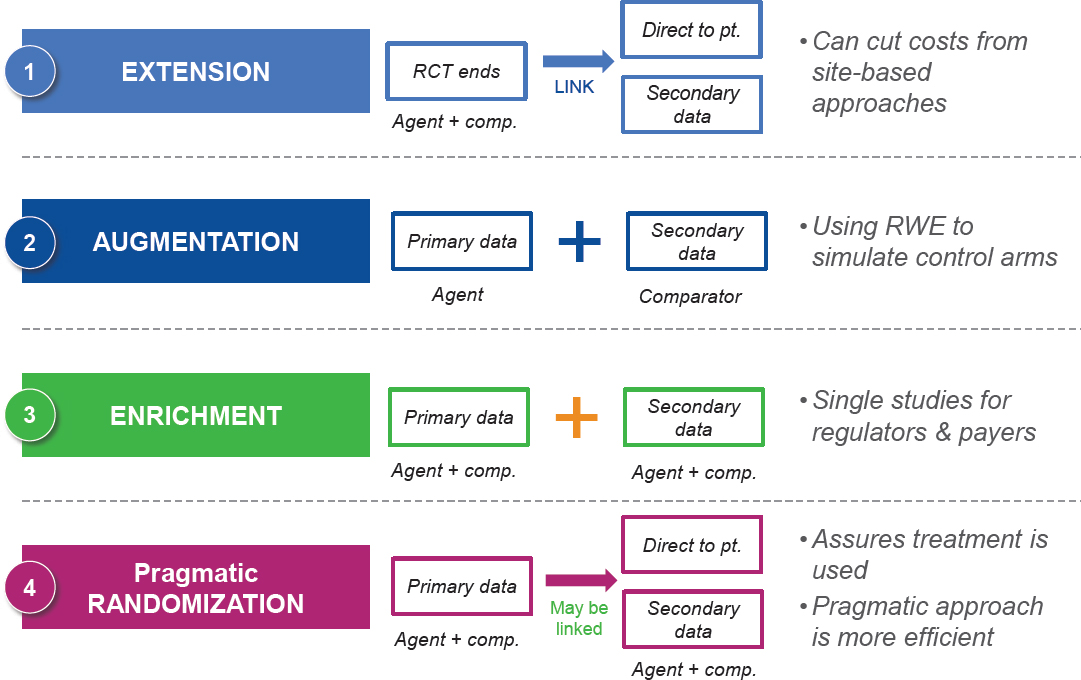

SOURCE: Doyle presentation, September 19, 2017.

Doyle discussed several “mosaic methodologies” in which traditional RCT components are blended with newer RWE components (see Figure 4-3). The first methodology is called “extension.” This approach starts with a traditional RCT and patients consent to link their data from other sources, such as EHRs or Fitbits. This allows a researcher to conduct an initial RCT and then collect follow-up data that can be linked. The second approach is “augmentation,” in which RWE is used as control data for a single-arm trial. “Enrichment,” the third approach, is similar, but combines primary data collected by patients and practitioners with secondary data from EHRs and other sources (e.g., registries). Finally, pragmatic design is a method in which study participants are randomized, initial data are collected, and follow-up is conducted through the collection of RWD. This method is “the best of both worlds,” said Doyle, because it uses randomization to address the threats to internal validity, while also providing RWD for generalizability.

Doyle closed with a story about how integrating RWD into clinical research can lead to stronger, more sustainable research design. QuintilesIMS was building an evidence platform for lung cancer, and had designed it with input from multiple experts, including oncologists, epidemiologists, and patient advocates. After developing a dozen protocols, the designers decided to “hit the pause button” and test the design with patients and physicians in the real world. The designers found that although there was a lot of agreement with what they had already developed, there were a few places where they had prioritized outcomes that did not matter to patients while completely missing other outcomes that did matter. This was an “eye-opening” experience, said Doyle.

DISCUSSION

One of the biggest barriers to adopting RWE and RWD, said Anna McCollister-Slipp, chief advocate for participatory research at Scripps Translational Institute, founder of VitalCrowd, and co-founder of Galileo Analytics, is a “lack of a sense of urgency.” Researchers, funders, reviewers, institutions, and other stakeholders are “stuck in a paradigm” that they are accustomed to, and they are unable or unwilling to break away from traditions and explore new and alternative sources of evidence. McCollister-Slipp said there are real consequences to overreliance on RCTs, and it is past time to change the traditional paradigm. One way to break down this barrier, she said, would be to invite other perspectives into the decision-making process from people who are not usually involved, for example, patients and people from other fields of study. Currently, the involvement of patients and communities in medical research is limited mostly to participating as subjects and consulting on Institutional Review Board requirements and ethical guidelines. Instead, patients and communities should be involved from the beginning stages of research, she said, and help to guide the design process. McCollister-Slipp urged workshop participants to think big: “We have got to stop tinkering at the edges of the way we do things. . . . We need to think very holistically about how we can . . . truly disrupt this process and create change.”

Levy noted that randomization is still vitally important for determining if a product works, particularly in disease areas with small effect sizes. Rory Collins agreed and said the method of randomization in general is often conflated with the way in which RCTs are actually conducted. Rather than changing the methodology—that is, not using randomization—“we should use the methodology differently,” said Collins. Michael Horberg added that not every question can be answered with an RCT, just as not every question can be answered with RWE. The research question, the clinical context, and the decision to be made all influence how a trial is designed, and what the sources of data are. RWD are not simple and cheap alternatives to RCTs, said Bradbury; the data are complex and need to be appropriate for the research question.

A primary goal for the research community, said John Graham, is to understand and accept that there is a role for RCTs, prospective observational studies, retrospective observational studies, and many other methodologies that span the spectrum. The wealth of different data sources and methodologies can come together to give the full answer to the research question. Researchers need to start, he said, with the patients’ needs, and develop the appropriate methodologies and data sources to find the answers.