2

Applying the Guiding Principles Report

This chapter describes how the committee applied the recommendations from the Guiding Principles for Developing Dietary Reference Intakes Based on Chronic Disease (Guiding Principles Report) (NASEM, 2017a) to its review of the Dietary Reference Intakes (DRIs) for potassium and sodium. The committee’s interpretation of the Guiding Principles Report described in this chapter sets the stage for the evidence review, methodological details, and rationale presented for DRIs based on chronic disease for potassium and sodium (see Chapters 6 and 10, respectively).¹ This chapter also describes the committee’s approach to the new DRI category in the context of the DRIs for adequacy and toxicity for potassium and sodium.

BACKGROUND

Historically, undernutrition and nutritional deficiencies were prevalent in the population, contributing to high rates of diet-related disease. Although the standardization of food fortification and enrichment along with dietary guidance to the public contributed to reducing the prevalence of nutrition deficiencies, there was a subsequent rise in the prevalence of obesity and related chronic diseases. As the public health burden in the United States and Canada shifted toward risk of chronic disease, nutrition science has increasingly focused on the effect of dietary determinants, including nutrients and other food components, as potential modifiers of chronic disease risk.

___________________

1 Throughout this report, DRIs based on chronic disease is used when broadly describing the category, such as when referring to the guidance in the Guiding Principles Report (NASEM, 2017a). This aligns with the committee’s use of the phrases DRIs for adequacy, which broadly refers to the Estimated Average Requirement, Recommended Dietary Allowance, and Adequate Intake, and DRIs for toxicity, which refers to the Tolerable Upper Intake Level.

The public health significance of chronic disease warrants concerted efforts to understand the relationships between diet and chronic disease risk, but such efforts must navigate methodological challenges. Understanding dietary determinants of chronic disease often requires different kinds of conceptual approaches and evidence than are needed for the evaluation of nutrient deficiencies and toxicities. Dietary intake patterns are multidimensional, dynamic, and change over the course of a lifespan. Chronic diseases are complex, multifaceted, and develop over time. These complexities make identifying the relationship between nutrient intake and chronic disease difficult, especially when longitudinal data are limited or unavailable. Additionally, the extended time between exposure and outcome often precludes the use of randomized controlled trials to establish a causal relationship.

From their inception, the DRIs were intended to consider chronic disease risk (IOM, 1994), but available evidence on chronic disease outcomes was typically too limited to inform the derivation of specific reference values. Furthermore, the DRI process lacked a mechanism for evaluating the evidence for causal and intake–response relationships between nutrient intake and chronic disease risk, two components of the DRI organizing framework (see Chapter 1, Box 1-2). As described in Chapter 1, efforts to overcome these challenges ultimately led to the Guiding Principles Report (NASEM, 2017a), which expanded the DRI model to include a new DRI category based on chronic disease.

THE COMMITTEE’S INTERPRETATION OF THE GUIDING PRINCIPLES REPORT

The Guiding Principles Report provides recommendations on methodological approaches to establishing DRIs based on chronic disease (see Box 2-1). Pursuant to its task (see Chapter 1, Box 1-1), the committee applied those recommendations in the context of the available evidence on potassium and sodium. The following sections summarize the committee’s interpretation of the recommendations in the Guiding Principles Report that were central to its approach to the new DRI category, and they describe a few instances in which the committee considered it important and necessary to adapt some of the guidance. The committee notes that adaptations made for potassium and sodium do not invalidate potential applications of the concepts articulated in the Guiding Principles Report to future DRI reviews.

Nomenclature and Conceptual Underpinnings

Guidance from the Guiding Principles Report

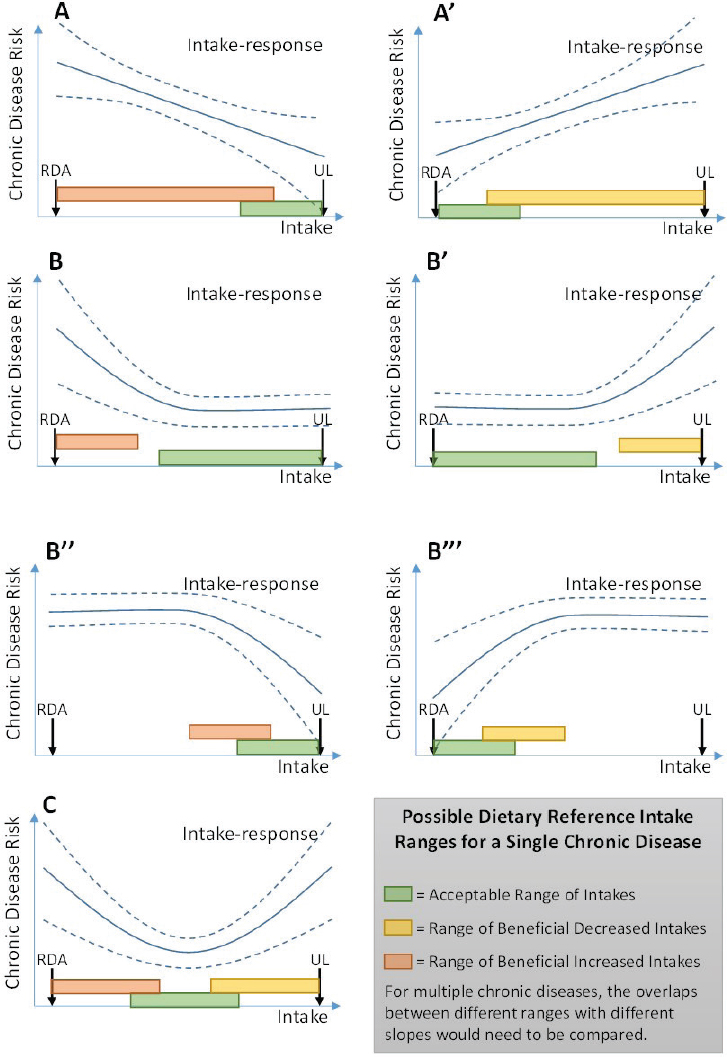

Nutrient deficiency diseases from inadequate intake and adverse effects from excess intake are well established for many essential nutrients. These relationships are based on the concept of a threshold effect. Intake of an essential nutrient below a certain threshold inevitably leads to deficiencies. For some nutrients, intakes above a certain upper threshold increase risk for adverse effects. As described above, the relationship between intake of a nutrient and risk of chronic disease is more complex; it is unlikely that there is a threshold intake level for which zero risk of chronic disease exists. The Guiding Principles Report presented a conceptual diagram of potential intake–response relationships that show variable types of relationships including curvilinear, linear, or U-shaped curves (see Figure 2-1). The different possible intake–response relationships set chronic disease risk apart from the threshold concepts of adequacy and toxicity.

The Guiding Principles Report described possible complications in translating the evidence for a chronic disease intake–response relationship into a reference value. For instance, such relationships are often continuous, and benefits of increasing or decreasing intakes could exist across a broad range of intakes. Another potential challenge is that individuals within a population may have different baseline risks for chronic disease, owing to factors other than dietary intake (e.g., genetics, other environmental exposures). Such considerations were anticipated to hinder DRI committees’ ability to identify a single value to characterize the complexities of the intake–response relationship. The Guiding Principles Report therefore described three possible ways to define a DRI based on chronic disease (see Table 2-1).

SOURCE: NASEM, 2017a.

Committee’s Application of the Guiding Principles Report

Although the scope of its work was limited to potassium and sodium, the committee was mindful that its application of the Guiding Principles Report might have implications for future DRI reviews, particularly in assessing nonessential nutrients and food substances. One such consideration was the nomenclature the committee used for the new DRI category. In an effort to promote consistency with future DRI reviews, the committee sought to use terminology that would be broadly applicable, yet sufficiently descriptive. The committee acknowledges, however, that the nomenclature used in this report may be reevaluated in future DRI reviews.

The committee considered the use of distinct terms to describe the different types of intake–response relationships that could exist within a new DRI category based on chronic disease (see Figure 2-1 and Table 2-1). However, introducing multiple names and acronyms may be confusing for DRI users. For example, unique terminology for each intake–response relationship has the potential to subdivide the new DRI category in a way that may make it difficult for users to understand the relationship between the DRI values and chronic disease risk. By contrast, a single DRI category that allows flexibility in characterizing the different types of intake–response relationships for chronic disease risk would provide greater simplicity and conceptual unity. The committee determined that, in the context of this DRI review, the three terms and descriptions presented in the Guiding Principles Report (see Figure 2-1 and Table 2-1) should be consolidated into a single DRI category called the Chronic Disease Risk Reduction Intake (CDRR).

TABLE 2-1 Three Possible DRIs Based on Chronic Disease, as Identified in the Guiding Principles Report

| Possible DRI for Single Chronic Disease Relationshipᵃ | Description | Region of Intake–Response |
|---|---|---|
| Acceptable Range of Intakes | Range of usual intakes of a food substance without increased risk of chronic disease | Region where slope is flat, outside of which there is increased risk of chronic disease, deficiency, or toxicity |
| Range of Beneficial Increased Intakes | Range of usual intakes of a food substance where increasing intake can reduce risk of chronic disease | Region where slope is negative, outside of which slope is non-negative, or there is increased risk of deficiency or toxicity |
| Range of Beneficial Decreased Intakes | Range of usual intakes of a food substance where decreasing intake can reduce risk of chronic disease | Region where slope is positive, outside of which slope is non-positive, or there is increased risk of deficiency or toxicity |

ᵃ In each case, defining the region of the intake–response relationship corresponding to the DRI requires judgment as to what “slope” is small or large enough, and at what confidence level to consider flat, negative, or positive.

SOURCE: Adapted from NASEM, 2017a.
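The footnote to Table 2-1 notes that assigning a region of the intake–response curve to one of the three categories depends on a judgment about how large a slope must be, and at what confidence level, to count as flat, negative, or positive. The following Python sketch shows one way such a judgment could be made explicit. It is an illustration only and is not drawn from the Guiding Principles Report or from the committee's analyses; the function name `classify_region`, the use of a confidence interval for the slope, and the `tolerance` margin are all assumptions introduced here.

```python
def classify_region(slope_lower, slope_upper, tolerance):
    """Classify a region of an intake-response curve from a slope interval.

    slope_lower, slope_upper: bounds of a confidence interval for the slope
        (change in chronic disease risk per unit increase in intake).
    tolerance: hypothetical margin within which a slope is treated as flat;
        choosing it is the judgment call described in the table footnote.
    """
    if slope_lower > slope_upper:
        raise ValueError("slope_lower must not exceed slope_upper")
    if tolerance < 0:
        raise ValueError("tolerance must be non-negative")

    if slope_upper < -tolerance:
        # Whole interval is negative: risk falls as intake rises.
        return "Range of Beneficial Increased Intakes"
    if slope_lower > tolerance:
        # Whole interval is positive: risk rises as intake rises.
        return "Range of Beneficial Decreased Intakes"
    if slope_lower >= -tolerance and slope_upper <= tolerance:
        # Whole interval lies within the flat margin.
        return "Acceptable Range of Intakes"
    # Interval straddles the margin: the data do not settle the question.
    return "indeterminate"
```

Under these assumptions, a wide interval that spans both negative and positive slopes is returned as indeterminate rather than forced into a category, which mirrors the point that the classification depends on the confidence level chosen.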

The committee considered several options for the new DRI category before selecting the CDRR. Because the DRIs comprise a set of different reference value categories, labeling the new category itself the chronic disease DRIs or DRI based on chronic disease had the potential of dividing the DRIs into “the adequacy and toxicity DRIs” and “the chronic disease DRIs.” Such a distinction would appear to counter the Guiding Principles Report recommendation that a single DRI committee be convened to establish the adequacy, toxicity, and chronic disease reference values for a specific nutrient (see Box 2-1, Guiding Principles Report Recommendation 10). Accordingly, the committee considered it important to use nomenclature that positioned the new category as one of several DRI categories. The committee also considered how to align the nomenclature with the naming convention used for the other DRI categories, which include descriptions such as “level,” “requirement,” and others. Although the Guiding Principles Report conceptualized this new category to be expressed as a range, the committee’s experience suggested that there may be circumstances in which a range may not be a sufficiently clear, effective, or appropriate expression of the CDRR. One option considered was using “target” or “goal,” but such descriptions had the potential to convey a threshold between risk and no risk for chronic disease. Ultimately, the committee determined that “intake” was sufficiently descriptive and would likely be broadly adaptable to different scenarios. The omission of the “I” in the acronym for this category is for simplicity, similar to the abbreviation of UL (for Tolerable Upper Intake Level).

Although its approach to reviewing the evidence to establish DRIs based on chronic disease and deriving the sodium CDRRs was conceptually aligned with the Guiding Principles Report, the committee further considered issues of implementation and clarity of communication in the expression of values. The sodium CDRR values established in this report were informed by the shape and strength of evidence² for the intake–response relationship over the studied range of intakes. Defining the upper end of the range for sodium posed challenges. Had the committee established the sodium CDRR as a range and required moderate strength of evidence for an intake–response relationship to do so, the upper bound would be a sodium intake level that is exceeded by a portion of the population (see Chapter 11, Tables 11-4 and 11-6). Such a range would be subject to possible misinterpretation. First, it could be incorrectly viewed as a desirable range of intakes (akin to the concept of the Acceptable Macronutrient Distribution Range), rather than a range of intakes over which reductions in sodium intake are expected to reduce chronic disease risk. Second, it could be incorrectly interpreted as suggesting that high intakes are not associated with chronic disease risks, whereas intakes above this range are likely to pose a continuing risk.

The committee was further challenged by the lack of evidence suitable for deriving a sodium UL based on toxicological adverse effects. As shown in Figure 2-1, the Guiding Principles Report had conceptualized the UL as the intake level above which the CDRR would not need to be characterized, because the potential for toxicological risk would be increasing. Without a UL for sodium, this principle could not be applied. This situation fit the scenario anticipated by the Guiding Principles Report in which a lower strength of evidence of the intake–response relationship could be used to support a DRI based on chronic disease (see Box 2-1, Guiding Principles Report Recommendation 8). Thus, the committee extrapolated the intake–response relationship for sodium and chronic disease risk above the intake range where the strength of evidence was at least moderate. As detailed in Chapter 10, the committee expressed the sodium CDRR as the lowest level of intake for which there was sufficient evidence to characterize chronic disease risk reduction (see Box 2-2).

___________________

2 For consistency throughout this report and in alignment with the terminology used in the AHRQ Systematic Review, the committee uses the term strength of the evidence instead of quality of the evidence or certainty of the evidence when describing the grading of the evidence used to derive DRIs based on chronic disease.

Strength of the Evidence

Guidance from the Guiding Principles Report

One of the general assumptions underpinning the DRI model is that available data are often insufficient to draw conclusions, and scientific judgment and transparent documentation must be used when assessing scientific uncertainties (Taylor, 2008). Another general assumption is that failure to derive a reference value is often not a viable public health option (Taylor, 2008). In the case of essential nutrients, there is an obligation for a DRI committee to determine DRIs for adequacy (i.e., Estimated Average Requirements [EARs] and Recommended Dietary Allowances [RDAs], or Adequate Intakes [AIs] when an EAR and an RDA cannot be derived). Accordingly, a DRI committee uses the best available evidence to do so. Similarly, if there is evidence of adverse effects from high levels of intake, a DRI committee uses its expert judgment and best available evidence to determine a level of intake after which risk increases to establish a UL. In contrast, the Guiding Principles Report described the new DRI category as being established only when the body of evidence on the relationship between a nutrient and chronic disease risk is sufficient and when an intake–response relationship can be characterized. The conceptual distinction between these DRI categories is summarized in Table 2-2.

TABLE 2-2 Conceptual Distinction Between DRIs for Adequacy and Toxicity and DRIs Based on Chronic Disease

| DRIs for Adequacy and Toxicity | DRIs Based on Chronic Disease |
|---|---|
| Needed because deficiencies (of essential nutrients) and toxicities: | Are not warranted unless sufficient evidence exists because: |

SOURCE: Adapted from NASEM, 2017b.

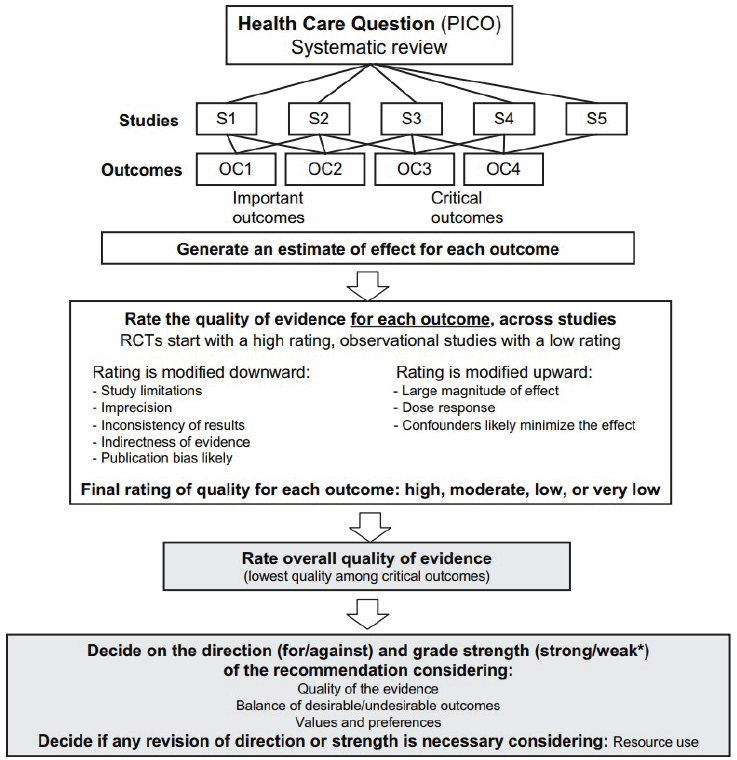

Recognizing the potential for misinterpretation from the results of individual studies, various tools have been developed to assess the strength of scientific evidence that examines a specific health-related question. These tools support a more objective and transparent process, although expert interpretation and judgment are still needed. To determine the strength of the body of evidence for a relationship between intake and chronic disease risk, the Guiding Principles Report (NASEM, 2017a) recommended using the Grading of Recommendations Assessment, Development and Evaluation (GRADE) system. GRADE, which was developed in the health care context (see Figure 2-2), rates a body of evidence by assessing five domains that may reduce the strength of the evidence and three domains that may increase the strength (see Box 2-3). This assessment leads to one of four ratings—high, moderate, low, or very low—to describe the certainty in how close the estimated effect is to the true effect (Balshem et al., 2011). The Guiding Principles Report recommended a GRADE rating of at least moderate strength for both the causal relationship and the intake–response relationship for the DRI based on chronic disease to be established, although it was also noted that “when a food substance increases chronic disease risk, the level of certainty considered acceptable might be lower” (NASEM, 2017a, p. 220).

Committee’s Application of the Guiding Principles Report

The DRI organizing framework guides DRI committees to establish reference values based on the strength of the evidence. The DRI organizing framework provides flexibility to accommodate different evidentiary scenarios that DRI committees may encounter and allows committees to factor in public health ramifications. To that end, the processes for assessing the strength of evidence and integrating such an assessment into the decision-making process for the DRIs for adequacy and the DRIs for toxicity are not yet standardized. Recommendations in the Guiding Principles Report introduce a more formal strength-of-evidence assessment to the DRI process, specifically for informing decision making related to DRIs based on chronic disease. The fundamental conceptual differences outlined in Table 2-2 call for a more standardized approach to assessing and applying strength of evidence to the derivation of DRIs based on chronic disease compared to the other DRI categories.

NOTE: GRADE = Grading of Recommendations Assessment, Development and Evaluation; OC = outcomes; PICO = population, intervention, comparator, and outcome; RCT = randomized controlled trial; S = studies.

*Also labeled “conditional” or “discretionary.”

SOURCE: Reprinted from Guyatt et al., 2011a, with permission from Elsevier.

In its application of the Guiding Principles Report guidance, the committee explored the body of evidence provided in the Agency for Healthcare Research and Quality systematic review, Sodium and Potassium Intake: Effects on Chronic Disease Outcomes and Risks (AHRQ Systematic Review) (Newberry et al., 2018). Although the tool that was used in the AHRQ Systematic Review was not GRADE, it is conceptually similar (Berkman et al., 2013). One of the noted differences is terminology. For example, the AHRQ Systematic Review referred to the assessment of the body of evidence as “strength of the evidence,” whereas GRADE refers to “quality (or certainty) of the evidence.” Furthermore, where GRADE uses the ratings of high, moderate, low, and very low, the AHRQ Systematic Review used high, moderate, low, and insufficient. Given that the two approaches are similar, the committee elected to use the AHRQ Systematic Review terminology throughout this report.

To effectively use the strength of evidence ratings in the AHRQ Systematic Review, the committee first evaluated the methodological approaches taken (see Appendix C). From this evaluation, the committee identified two components of the strength-of-evidence assessment that merited further consideration: risk of bias and inconsistency. Risk of bias was considered an important domain requiring further investigation because of its use in determining the validity of individual study results. The domain of inconsistency assesses the comparability of results in a body of evidence. The committee found that the AHRQ Systematic Review did not thoroughly investigate and explain causes of heterogeneity in the results when high levels of inconsistency were found; in some cases, such an investigation was needed to interpret the results of meta-analyses. Accordingly, the committee also investigated the domain of inconsistency. These additional analyses informed and clarified the committee’s approach.

Risk of bias

Before the strength of a body of evidence can be determined, the individual studies are assessed. The quality of an individual study can vary depending on its specific design features and conduct. To account for this in rating the strength of evidence, consideration is given to risk of bias (see Box 2-4).

The AHRQ Systematic Review assessed risk of bias for all studies meeting the inclusion criteria. The committee reviewed the risk-of-bias criteria for both randomized controlled trials and observational studies (for the risk-of-bias criteria, see Appendix C, Annex C-1). One of the risk-of-bias domains was considered by the committee to be of particular importance—methods of potassium and sodium intake assessment. The method used to assess potassium and sodium intake can affect the strength of diet–indicator relationships, the strength of intake–response relationships, and the estimation of usual intake distribution for a population (see Chapter 3). The committee reviewed this and the other domains and concurred with the tools that the AHRQ Systematic Review used to assess risk of bias.

The committee also considered the inclusion of observational studies in determining the strength of the evidence for the relationship between potassium or sodium intake and each indicator selected for establishing a CDRR. According to GRADE (Guyatt et al., 2011b), although evidence that relies only on observational studies can be upgraded in rating, such evidence is generally classified as low strength of evidence because observational studies have an inherently weaker design for evaluating evidence on causal effects. Only when there is a large effect size, an intake–response relationship is observed, or plausible residual confounding increases confidence in the estimates can the strength of evidence be upgraded. In addition, in the case of sodium, the majority of the observational studies were rated as high risk of bias, mainly because of the biases in the sodium intake ascertainment methods used in observational study designs (for strengths and weaknesses of these methods, see Chapter 3). For these reasons, the committee primarily relied on randomized controlled trials to inform its decision making regarding establishing DRIs based on chronic disease for potassium and sodium. The committee considered observational studies rated as having low risk of bias to supplement the decisions from randomized controlled trials, particularly when randomized controlled trial data were few or unavailable.

Inconsistency

Meta-analyses use statistical methods to compare results from different studies, as a means to identify a consistent pattern across studies. Heterogeneity across the studies in a meta-analysis can arise for a variety of reasons, including variability in the participant characteristics, interventions, and outcomes evaluated; there can also be trial-level variability in study design and risk of bias. As described in Box 2-5, unexplained heterogeneity can affect the interpretation of results in meta-analyses. As such, the strength-of-evidence domain of inconsistency, which characterizes heterogeneity, can play an important role in synthesizing and interpreting a body of evidence.

The AHRQ Systematic Review performed meta-analyses for key questions and subquestions when randomized controlled trials were available, but it did not explore the potential sources of heterogeneity. Recognizing the importance of explaining the inconsistencies in order to have confidence in the meta-analysis results, the committee carried out subgroup analyses and meta-regression analyses in instances in which heterogeneity was judged to be high. Details of the committee’s analyses to explore unexplained heterogeneity are described in Chapters 6 and 10.
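Heterogeneity of the kind described above is commonly quantified with Cochran's Q and the I² statistic, which expresses the share of variability across studies that is not attributable to chance. This chapter does not state which measure or cutoff the committee used to judge heterogeneity as high, so the following Python sketch is a generic illustration rather than a reproduction of the committee's or the AHRQ Systematic Review's analysis. The function name `i_squared` and the example values are hypothetical.

```python
def i_squared(effects, variances):
    """Return (Q, I2) for study effect estimates and their within-study variances.

    Q is Cochran's heterogeneity statistic under inverse-variance weighting;
    I2 is the percentage of total variability attributed to heterogeneity.
    """
    if len(effects) != len(variances) or len(effects) < 2:
        raise ValueError("need at least two studies with matching variances")
    if any(v <= 0 for v in variances):
        raise ValueError("variances must be positive")

    weights = [1.0 / v for v in variances]  # inverse-variance weights
    pooled = sum(w * e for w, e in zip(weights, effects)) / sum(weights)
    q = sum(w * (e - pooled) ** 2 for w, e in zip(weights, effects))
    df = len(effects) - 1
    # I2 is truncated at zero when Q is smaller than its degrees of freedom.
    i2 = max(0.0, (q - df) / q) * 100.0 if q > 0 else 0.0
    return q, i2


# Hypothetical effect estimates from two studies with equal precision.
q, i2 = i_squared([1.0, 3.0], [1.0, 1.0])
print(q, i2)  # 2.0 50.0
```

A high I² signals that the pooled estimate summarizes studies that disagree more than chance would explain, which is the situation in which subgroup analyses or meta-regression are typically used to look for the sources of the disagreement.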

Use of strength-of-evidence rating

Pursuant to the guidance provided in the Guiding Principles Report, the committee determined that it would establish a DRI based on chronic disease if there was at least moderate strength of evidence for both a causal and an intake–response relationship between potassium or sodium intake and chronic disease risk. In this approach, situations can arise in which there is moderate or high strength of evidence of a causal relationship between intake of a nutrient and a chronic disease indicator, but insufficient or low strength of evidence of an intake–response relationship. Pursuant to the guidance in the Guiding Principles Report, a DRI based on chronic disease would generally not be established in this case because of limitations in the evidence. The lack of a DRI based on chronic disease, however, does not necessarily mean that no benefit exists; rather, there is a lack of evidence of sufficient strength to characterize the intake–response relationship and thereby establish a DRI based on chronic disease.
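The decision rule described above can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not the committee's procedure: the rating labels follow the AHRQ Systematic Review terminology used in this report, while the names `RATINGS`, `meets_threshold`, and `can_establish_cdrr`, and the adjustable `minimum` argument (included to reflect the Guiding Principles Report's note that a lower level of certainty might be acceptable in some cases), are hypothetical.

```python
# Ratings ordered from weakest to strongest (AHRQ Systematic Review terms).
RATINGS = ["insufficient", "low", "moderate", "high"]


def meets_threshold(rating, minimum="moderate"):
    """Return True if `rating` is at least as strong as `minimum`.

    Raises ValueError if either label is not one of RATINGS.
    """
    return RATINGS.index(rating) >= RATINGS.index(minimum)


def can_establish_cdrr(causal_rating, intake_response_rating, minimum="moderate"):
    """Apply the two-part rule: both relationships must meet the minimum rating."""
    return (meets_threshold(causal_rating, minimum)
            and meets_threshold(intake_response_rating, minimum))


# Strong causal evidence alone is not enough under the default rule.
print(can_establish_cdrr("high", "low"))           # False
print(can_establish_cdrr("moderate", "moderate"))  # True
```

The sketch captures only the threshold logic; in practice the ratings themselves rest on expert judgment, and a result of `False` indicates insufficient evidence to characterize the relationship, not evidence that no benefit exists.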

The committee primarily used the strength-of-evidence grades provided in the AHRQ Systematic Review for causal relationships. In select instances in which the committee explored unexplained heterogeneity, the strength-of-evidence grading was reassessed. The AHRQ Systematic Review did not conduct intake–response analyses. Accordingly, for chronic disease indicators with moderate strength of evidence selected to inform the derivation of the CDRRs, the committee sought to characterize the intake–response relationship; details of the committee’s additional analyses are provided in Chapters 6 and 10.

Qualified Surrogate Markers

Guidance from the Guiding Principles Report

A surrogate marker is “a biomarker that is intended to substitute for a clinical endpoint” (Biomarkers Definitions Working Group, 2001, p. 91) by accurately predicting the effect of a measured intervention on an unmeasured clinical outcome. Surrogate markers are particularly useful when evaluating the effect of interventions on chronic disease relationships for which a long duration and large sample sizes are needed to evaluate chronic disease outcomes but are not feasible.

The Guiding Principles Report recommended that if evidence on the relationship between intake and a qualified surrogate marker is to be used in establishing the DRI based on chronic disease, it ideally would be used as supporting evidence (NASEM, 2017a) (see Box 2-1, Guiding Principles Report Recommendation 2). Qualifying a surrogate marker involves “assessment of available evidence on associations between the biomarker and disease states, including data showing effects of interventions on both the biomarker and clinical outcomes” (IOM, 2010, p. 2). The Guiding Principles Report further recommended that “qualification of surrogate markers must be specific to each nutrient or other food substance, although some surrogates will be applicable to more than one causal pathway” (NASEM, 2017a, p. 8). This suggests that, for a DRI committee to use a qualified surrogate marker for the purposes of informing a DRI based on chronic disease, fit for purpose needs to be demonstrated. This type of evaluation stemmed from the recognition that caution is needed when generalizing surrogate marker qualification status from one context to another (IOM, 2010; Yetley et al., 2017).

Committee’s Application of the Guiding Principles Report

A 2010 Institute of Medicine report developed a conceptual framework for qualifying surrogate markers for specific uses (IOM, 2010). The two key components of the qualification framework are (1) an objective and rigorous evaluation of the available evidence, and (2) a scientific judgment that the potential surrogate marker is fit for the purpose for which it is intended (e.g., for setting DRIs for the apparently healthy population within a dietary context).

The guidance described in the Guiding Principles Report (NASEM, 2017a) and the framework for surrogate markers (IOM, 2010) provided a conceptual foundation that the committee used in reviewing the evidence in support of establishing DRIs based on chronic disease for potassium and sodium. For the committee to consider whether a biomarker was a qualified surrogate marker and use it as supporting evidence to establish CDRRs, a moderate strength of evidence for both a causal relationship and an intake–response relationship between potassium or sodium intake and the biomarker was deemed necessary.

In its application of this guidance, the committee encountered two different scenarios for blood pressure. The details of the evidence and the committee’s decision making are presented in Chapters 6 and 10, but because the two scenarios exemplify the concepts related to the use of qualified surrogate markers and fit for purpose, a brief description of the difference is provided here. For potassium, there was evidence of a significant reduction in both systolic and diastolic blood pressure with potassium supplementation. However, an intake–response relationship could not be discerned. Furthermore, there was insufficient evidence of an effect of potassium intake on cardiovascular disease outcomes. This lack of evidence prevented the committee from considering blood pressure a qualified surrogate marker for cardiovascular disease in the context of potassium intake and from using the evidence to support the derivation of potassium CDRR values. In contrast, the evidence on blood pressure in the context of sodium intake was more robust, and the committee was able to consider blood pressure as a qualified surrogate marker for hypertension and cardiovascular disease (details of this assessment are provided in Chapter 10, Annex 10-2).

Balancing Benefits and Harms

Guidance from the Guiding Principles Report

The Guiding Principles Report states, “deficiency, toxicity, and multiple chronic diseases need to be considered when balancing benefits and harms” (NASEM, 2017a, p. 227). For example, when making decisions about establishing an adequate intake level, a DRI committee may need to evaluate evidence as to whether nutrient intakes below the AI might increase the risk of a chronic disease. That is, DRI committees need to consider whether the benefits associated with the AI might be adversely affected by harms associated with a chronic disease at or below this intake level. Conversely, DRI committees would need to consider whether intakes above a UL might confer benefits that need to be balanced against the harms associated with intakes at this level.

Committee’s Application of the Guiding Principles Report

The information gathered by the committee contained evidence on different indicators related to potassium and sodium intakes that might result in benefits and harms. In deriving the DRIs for adequacy and toxicity, the committee made an effort to consider all types of benefits and harms, including potential chronic disease effects, and to be transparent about its rationale for the decisions made for each DRI category for both nutrients.

THE CHRONIC DISEASE RISK REDUCTION INTAKE IN CONTEXT OF THE OTHER DRI CATEGORIES

In its review of the evidence and application of the guidance in the Guiding Principles Report, the committee considered the conceptual interrelationships among the DRI categories. The following sections briefly summarize how the committee applied its collective expert judgment to make the distinction between the CDRR and the other DRI categories for potassium and sodium. It was beyond its scope to determine how future DRI committees can systematically make such decisions moving forward. The committee acknowledges the challenges that the CDRR might present for DRI users as they attempt to interpret it in the context of the DRI model that existed prior to the Guiding Principles Report. The need for additional guidance on the expanded DRI model, for both DRI committees and DRI users, is described as a future direction in Chapter 12.

The CDRR and the DRIs for Adequacy

The committee interpreted the CDRR as distinct from the DRIs for adequacy (i.e., EAR and RDA, or AI). In its approach, the committee attempted to make a delineation between the evidence it reviewed for establishing the DRIs for adequacy (which ultimately remained AIs for both potassium and sodium) and the evidence it reviewed for establishing the CDRRs.

AIs are established when evidence is insufficient to establish EARs and RDAs. The AI is “a recommended average daily nutrient intake level based on observed or experimentally determined approximations or estimates of nutrient intake by a group (or groups) of apparently healthy people who are assumed to be maintaining an adequate nutritional state” (IOM, 2006, p. 11). An adequate nutritional state is defined in various ways, including normal growth, maintenance of normal plasma levels of nutrients, and other features of general health (IOM, 2006). Before DRIs based on chronic disease were included in the DRI model, evidence on chronic disease–related indicators had been considered, and in some cases used to inform the derivation of an AI. The AI for total fiber, for instance, was established based on evidence of its relationship to coronary heart disease (IOM, 2002/2005).

The expanded DRI model allows for a more nuanced characterization of the relationship between nutrient intake and chronic disease risk reduction. Although an important step forward, the expansion of the DRI model created challenges, particularly once the committee determined there was insufficient evidence to establish EARs and RDAs for potassium and sodium. For instance, in the 2005 DRI Report, the “adequate nutritional state” for potassium encompassed indicators that the committee considered in the context of establishing a CDRR. The approach taken to the evidence in support of establishing the potassium DRIs for adequacy is therefore markedly different from that taken in the 2005 DRI Report.

Despite the conceptual delineation, the review of the evidence indicators was context specific. For instance, the committee reviewed evidence of potential harmful health effects of a range of sodium intakes that was likely to extend below an AI. The range of potential harmful health effects included indicators related to chronic disease. In this context, the evidence was reviewed to ensure that the selected AI values did not potentially lead to detrimental effects. This use differs from using such evidence as an indicator to establish the sodium CDRRs.

In the case of sodium, failure to identify chronic disease risk reduction at intakes below the CDRR reflects a lack of evidence rather than a lack of effect. This distinction is important from a practical perspective. In the past, the range of intakes between the RDA or AI and the UL has often been characterized as “safe and adequate.” The committee cautions against interpreting the gap between the sodium AI and CDRR in such a manner, because intake levels below the sodium CDRR do not reflect a known absence of chronic disease risk. Moreover, as discussed in Chapter 10, there is evidence of benefits with respect to blood pressure with reducing intakes below the CDRR, but the evidence alone was not of sufficient strength to support chronic disease risk reduction.

The CDRR and the UL

The UL is “the highest average daily nutrient intake level likely to pose no risk of adverse health effects for nearly all people in a particular group” (IOM, 2006, p. 11). The Guiding Principles Report recommended that the UL be retained in the expanded DRI model, but that it characterize toxicological risk (NASEM, 2017a). This recommendation narrows what would qualify as an adverse effect for a UL.

In the expanded DRI model, consideration of both a UL and the CDRR is necessary because the two have distinct meanings and each is valuable. The UL connotes an intake level above which toxicological risk increases with increasing intakes. For sodium, the CDRR reflects the lowest level of intake for which there was sufficient strength of evidence to characterize a chronic disease risk reduction. According to the Guiding Principles Report, if increases in chronic disease risk only occur at intakes greater than the UL, then no CDRR would be necessary.

SUMMARY

The Guiding Principles Report served as a foundation as the committee considered the evidence to support DRIs based on chronic disease for potassium and sodium. As the first to implement an expanded DRI model, the committee recognized opportunities to adapt some of the guidance, particularly related to nomenclature, to ensure concepts were clearly and concisely conveyed. The committee followed the guidance on using strength-of-evidence grading in its decision making regarding the potassium and sodium CDRRs. To do so, it relied on evidence in the AHRQ Systematic Review. The committee concurred with the risk-of-bias tool that was used in the AHRQ Systematic Review and expanded the strength-of-evidence assessment to explore unexplained heterogeneity. To satisfy the criteria for establishing a CDRR (moderate strength of evidence for both a causal and an intake–response relationship), the committee also conducted intake–response analyses for selected indicators. The committee assessed whether select biomarkers with at least moderate strength of evidence for a causal and an intake–response relationship could serve as qualified surrogate markers and be used as evidence to support the derivation of a CDRR, in the context of potassium and sodium intake. The committee also considered benefits and harms in its derivation of the potassium and sodium DRIs. The committee interpreted the Guiding Principles Report as creating a new DRI category, termed in this report the Chronic Disease Risk Reduction Intake (CDRR), which is distinct from the AI and UL. In moving from the previous DRI model to an expanded model, the committee needed to consider conceptual interrelationships among the DRI categories.

REFERENCES

Balshem, H., M. Helfand, H. J. Schunemann, A. D. Oxman, R. Kunz, J. Brozek, G. E. Vist, Y. Falck-Ytter, J. Meerpohl, S. Norris, and G. H. Guyatt. 2011. GRADE guidelines: 3. Rating the quality of evidence. Journal of Clinical Epidemiology 64(4):401-406.

Berkman, N. D., K. N. Lohr, M. Ansari, M. McDonagh, E. Balk, E. Whitlock, J. Reston, E. Bass, M. Butler, G. Gartlehner, L. Hartling, R. Kane, M. McPheeters, L. Morgan, S. C. Morton, M. Viswanathan, P. Sista, and S. Chang. 2013. Grading the strength of a body of evidence when assessing health care interventions for the Effective Health Care Program of the Agency for Healthcare Research and Quality: An update. In Methods Guide for Effectiveness and Comparative Effectiveness Reviews. Rockville, MD: Agency for Healthcare Research and Quality.

Biomarkers Definitions Working Group. 2001. Biomarkers and surrogate endpoints: Preferred definitions and conceptual framework. Clinical Pharmacology and Therapeutics 69(3):89-95.

Guyatt, G., A. D. Oxman, E. A. Akl, R. Kunz, G. Vist, J. Brozek, S. Norris, Y. Falck-Ytter, P. Glasziou, H. DeBeer, R. Jaeschke, D. Rind, J. Meerpohl, P. Dahm, and H. J. Schunemann. 2011a. GRADE guidelines: 1. Introduction-GRADE evidence profiles and summary of findings tables. Journal of Clinical Epidemiology 64(4):383-394.

Guyatt, G., A. D. Oxman, S. Sultan, P. Glasziou, E. A. Akl, P. Alonso-Coello, D. Atkins, R. Kunz, J. Brozek, V. Montori, R. Jaeschke, D. Rind, P. Dahm, J. Meerpohl, G. Vist, E. Berliner, S. Norris, Y. Falck-Ytter, M. H. Murad, and H. J. Schunemann. 2011b. GRADE guidelines: 9. Rating up the quality of evidence. Journal of Clinical Epidemiology 64(12):1311-1316.

Higgins, J. P. T., and S. Green. 2011. Cochrane handbook for systematic reviews of interventions: Version 5.1.0. http://handbook.cochrane.org (accessed December 16, 2018).

Higgins, J. P. T., S. G. Thompson, J. J. Deeks, and D. G. Altman. 2003. Measuring inconsistency in meta-analyses. BMJ 327(7414):557-560.

IOM (Institute of Medicine). 1994. How should the Recommended Dietary Allowances be revised? Washington, DC: National Academy Press.

IOM. 2002/2005. Dietary Reference Intakes for energy, carbohydrate, fiber, fat, fatty acids, cholesterol, protein, and amino acids. Washington, DC: The National Academies Press.

IOM. 2006. Dietary Reference Intakes: The essential guide to nutrient requirements. Washington, DC: The National Academies Press.

IOM. 2010. Evaluation of biomarkers and surrogate endpoints in chronic disease. Washington, DC: The National Academies Press.

NASEM (National Academies of Sciences, Engineering, and Medicine). 2017a. Guiding principles for developing Dietary Reference Intakes based on chronic disease. Washington, DC: The National Academies Press.

NASEM. 2017b. Guiding principles for developing Dietary Reference Intakes based on chronic disease—Highlights from the consensus report. https://www.nap.edu/resource/24828/GuidingPrinciplesforDRIs-ReleaseSlides.pdf (accessed January 28, 2019).

Newberry, S. J., M. Chung, C. A. M. Anderson, C. Chen, Z. Fu, A. Tang, N. Zhao, M. Booth, J. Marks, S. Hollands, A. Motala, J. K. Larkin, R. Shanman, and S. Hempel. 2018. Sodium and potassium intake: Effects on chronic disease outcomes and risks. Rockville, MD: Agency for Healthcare Research and Quality.

Schunemann, H., J. Brozek, G. Guyatt, and A. D. Oxman. 2013. Introduction to GRADE handbook. https://gdt.gradepro.org/app/handbook/handbook.html (accessed January 16, 2019).

Sterne, J. A., M. A. Hernan, B. C. Reeves, J. Savovic, N. D. Berkman, M. Viswanathan, D. Henry, D. G. Altman, M. T. Ansari, I. Boutron, J. R. Carpenter, A. W. Chan, R. Churchill, J. J. Deeks, A. Hrobjartsson, J. Kirkham, P. Juni, Y. K. Loke, T. D. Pigott, C. R. Ramsay, D. Regidor, H. R. Rothstein, L. Sandhu, P. L. Santaguida, H. J. Schunemann, B. Shea, I. Shrier, P. Tugwell, L. Turner, J. C. Valentine, H. Waddington, E. Waters, G. A. Wells, P. F. Whiting, and J. P. Higgins. 2016. ROBINS-I: A tool for assessing risk of bias in non-randomised studies of interventions. BMJ 355:i4919.

Taylor, C. L. 2008. Framework for DRI development: Components “known” and components “to be explored.” https://www.nal.usda.gov/sites/default/files/fnic_uploads/Framework_DRI_Development.pdf (accessed April 9, 2019).

Yetley, E. A., A. J. MacFarlane, L. S. Greene-Finestone, C. Garza, J. D. Ard, S. A. Atkinson, D. M. Bier, A. L. Carriquiry, W. R. Harlan, D. Hattis, J. C. King, D. Krewski, D. L. O’Connor, R. L. Prentice, J. V. Rodricks, and G. A. Wells. 2017. Options for basing Dietary Reference Intakes (DRIs) on chronic disease endpoints: Report from a joint US-/Canadian-sponsored working group. American Journal of Clinical Nutrition 105(1):249S-285S.