2

Evaluation of Existing Resilience Measurement Efforts

The community resilience movement has made significant advances over the past decade. Initiatives explicitly intended to build resilience have been promoted by organizations such as the Rockefeller Foundation, The Nature Conservancy, and the Z Zurich Foundation; by government agencies such as the National Oceanic and Atmospheric Administration, the National Institute of Standards and Technology (NIST), the Federal Emergency Management Agency, and the Department of Homeland Security; and by private-sector companies such as Kaiser Permanente, IBM, and Citibank. Significant amounts of money, time, and human resources have been invested in resilience: conceptually, analytically, and in practice.

But despite the growth of and investment in resilience efforts, resilience science and measurement still lag behind resilience practice, and key questions remain. What is the current state of the science in community resilience measurement? How well do the designs of measurement efforts match the practical needs and demands of end users? How well has resilience measurement been translated into action at local to national scales? Has measuring resilience made a difference? If the concept of community resilience can and should be measured, as indicated in the National Research Council’s report Disaster Resilience: A National Imperative (2012), then basic measurement efforts are needed that are useful, usable, and used (Aitsi-Selmi, Blanchard, and Murray, 2016; Wall, McNie, and Garfin, 2017).

Charges 1, 2, and 3 of the Statement of Task (see Box 1-2) call for an examination of resilience measurement scholarship and practice under way and a description of the similarities and differences among these efforts and the methodologies they use for data collection and analysis. This chapter addresses these charges through an assessment of 33 community resilience measurement efforts, policies, and programs designed to capture various aspects of resilience (see Box 2-1 for Chapter 2’s findings).

ASSESSMENT OF CURRENT RESILIENCE MEASUREMENT EFFORTS

The committee reviewed dozens of measurement efforts and relied on its expertise—representing diverse academic disciplines (sciences, engineering, human health) and community resilience experience (researchers, funders, practitioners)—and the resilience literature to narrow the list to 33 efforts. These 33 efforts are meant to represent a diverse sample of currently available community resilience tools and frameworks. The committee sought to examine resilience measurement efforts that specifically purport to assess community resilience or provide guidance to resilience assessment. The committee was not charged with finding specific resilience measures, metrics, or indicators, nor was it charged with either identifying the best or most applicable measurement efforts for communities or providing a comprehensive list of resilience measurement tools and frameworks. Rather, the committee was charged with documenting the similarities and differences among these different approaches and describing methodologies used for quantitative and qualitative data collection and analysis.

The committee identified dozens of measurement efforts through literature reviews and committee members’ professional knowledge about the current state of practice (e.g., Beccari, 2016; Cutter, 2016b; NRC, 2012; ODI and RMEL CoP, 2016; Ostadtaghizadeh et al., 2015; Sharifi, 2016). Several overviews of current efforts exist in the literature (e.g., Cutter, 2016b; NIST, 2016; ODI and RMEL CoP, 2016) that describe the basic measurement elements (e.g., purpose, target categories, scale, and/or type of measurement). Because few of these overviews offer detailed comparative analyses, communities find it difficult to distinguish among the different measurement efforts or to identify which measurement tool might best suit their individual purposes.

Resilience measurement efforts vary widely and include (1) defining and explaining (or operationalizing) a specific resilience construct, indicator, or community capital; (2) promoting checklists or scorecards that centrally assemble indicators or subjects associated with community resilience; (3) providing processes and guidance to communities on indicators or subjects that could be measured locally; or (4) encouraging communities to use specific databases, analytical methods, or tools for measurement. The intent and design of a measurement effort determine whether it offers a holistic accounting of community resilience for any context, addresses a specific instance (past, present, or future) or case (place), or explores community conditions. Virtually all resilience measurement efforts that the committee considered attempt to describe the current resilience of a community (often as an exploratory diagnostic) rather than predict what the future resilience of a community could be if it took certain actions in the present. None of the measurement efforts captures the full variety of operational variables for community resilience.

The committee assessed community resilience measurement efforts to describe the current state of scholarship and practice, explicitly examining published efforts that are intended to serve as measurements of community resilience. Of the dozens of resilience measurement efforts considered, the committee selected 33 whose methodological development and operational definitions of resilience are publicly available (see Table 2-1; Appendix C provides a brief description of each effort). These 33 measurement efforts include those that focus on data collection, analysis, and interpretation, and those that attempt to measure resilience both before and after events. Because these efforts have not undergone validity testing (see “Construct Operationalization, Reliability, Validity” below), neither scientific rigor of the measurement itself nor its implementation was considered as a selection criterion. Efforts that are intended to measure specific resilience-related concepts—such as social vulnerability or a specific environmental hazard—were excluded since such efforts are not designed to capture the holism of resilience, as encompassed by the six community capitals.

The committee systematically evaluated each of these measurement efforts, paying particular attention to those with a real or potential application in the United States and a focus at the community scale, with a noted bias toward geographic communities at a metropolitan or regional scale or smaller. This bias is intentional. As indicated in the National Research Council report Disaster Resilience: A National Imperative (NRC, 2012), resilience efforts should provide actionable evidence for policies, programs, and funding allocations for communities (where regional, county, and city or township governments are the primary unit of policy) and serve as vehicles for community engagement and awareness. Therefore, the committee focused its review on measurement efforts that could be applied at this level of geography.

Building on previous reviews of peer-reviewed, professional, and “grey” literature, as well as on global and U.S.-based resilience-explicit interventions, the committee assessed the 33 resilience measurement efforts according to three categories: content (what is being measured), use (the intended or actual use of the tool or framework), and status (the current state of its development or implementation). In addition, the committee noted thematic, aspirational aspects of the efforts. These include being adaptable to the unique characteristics of communities and across multiple capitals; scalable for community size and needs; quantifiable; easy to use; and/or integrated across diverse stakeholders and decision makers. The degree to which the various measurement efforts embody these characteristics is discussed below within the context of each measurement’s content, use, and status.

TABLE 2-1 The Resilience Measurement Efforts Reviewed by the Committee (listed alphabetically)

| Resilience Measurement Effort | Source |
|---|---|
| Alliance for National and Community Resilience Benchmarking System | The International Code Council |
| Baseline Resilience Indicators for Communities (BRIC) | Cutter, Burton, and Emrich, 2010; Cutter, Ash, and Emrich, 2014; Cutter and Derakhshan, 2018 |
| Characteristics of a Disaster-Resilient Community | Twigg, 2007, 2009 |
| City Resilience Index (CRI, also referred to as the City Resilience Framework or CRF) | Arup |
| Climate Resilience Screening Index (CRSI) | Environmental Protection Agency |
| Climate Risk and Adaptation Framework and Taxonomy (CRAFT) | Arup (C40, Bloomberg Philanthropies) |
| Coastal Resilience Decision Support System | The Nature Conservancy |
| Coastal Resilience Index | Mississippi-Alabama Sea Grant Consortium and NOAA Coastal Storm Program |
| Community Assessment of Resilience Tool (CART) | National Consortium for the Study of Terrorism and Responses to Terrorism (START) |
| Community Disaster Resilience Index (CDRI) | Texas A&M |
| Community Resilience Indicators and National-Level Measures | Federal Emergency Management Agency |
| Community Resilience Manual | The Canadian Centre for Community Renewal |
| Community Resilience Planning Guide | National Institute of Standards and Technology |
| Community Resilience System (CRS) | The Community and Regional Resilience Institute (CARRI) |
| Community Resilience: Conceptual Framework and Measurement | U.S. Agency for International Development |
| Community-Based Resilience Analysis (CoBRA) | United Nations Development Programme Drylands Development Centre |
| Conjoint Community Resilience Assessment Measure (CCRAM) | Ben-Gurion University of the Negev’s Prepared Center for Emergency Response Research and Tel-Hai Academic College |
| Disaster Resilient Scorecard | IBM and AECOM |
| Disaster Resilient Scorecard for Cities | The United Nations International Strategy for Disaster Risk Reduction, IBM, and AECOM |
| Earthquake Recovery Model | SPUR (San Francisco Planning + Urban Research Association), 2008 |
| Evaluating Urban Resilience to Climate Change | Environmental Protection Agency |
| Flood Resilience Measurement Framework | Z Zurich Foundation |
| Framework for Community Resilience | International Federation of the Red Cross |
| Indicators of Disaster Risk and Risk Management | Inter-American Development Bank |
| National Health Security Preparedness Index | Centers for Disease Control and Prevention and the Robert Wood Johnson Foundation |
| PEOPLES Framework | National Institute of Standards and Technology |
| Resilience Capacity Index (RCI) | Foster, 2011; OPDR, 2012 |
| Resilience Index Measurement and Analysis (RIMA) | United Nations Food and Agriculture Organization |
| Resilience Inference Measurement (RIM) | Lam et al., 2016 |
| Resilience Measurement Index (RMI) | The Infrastructure Assurance Center at Argonne National Laboratory |
| Resilience Scorecard | Berke et al., 2015 |
| Resilience United States (ResilUS) | Miles and Chang, 2011 |
| Rural Resilience Index (RRI) | Cox and Hamlen, 2014 |

Content: What the Measurement Effort Is Measuring

The committee analyzed what each measurement effort attempts to capture by examining it across eight content subjects (see Table 2-2). These subjects range from the focus of the measurement effort (e.g., event types, geographic context, disaster stage) to attributes of the effort itself (e.g., unit of analysis, type, approach, capitals, structure).

Each of the content subjects in Table 2-2 is described below.

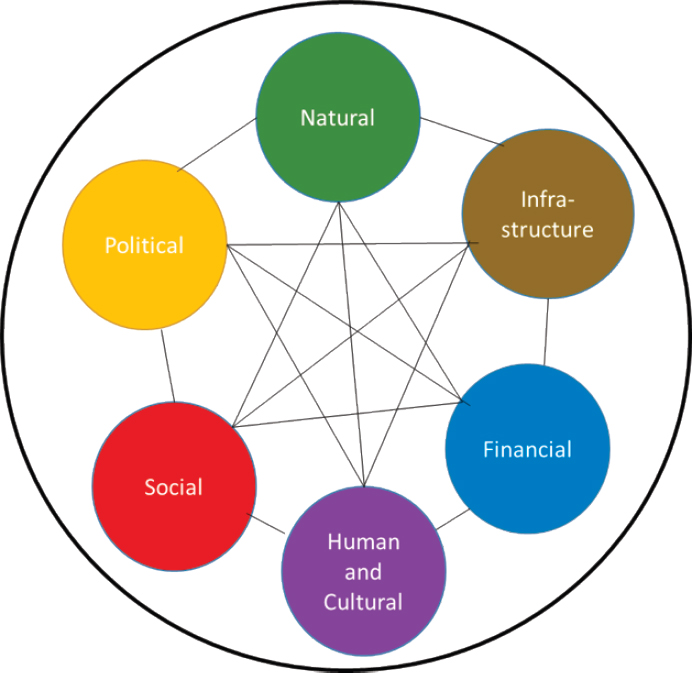

Capitals: Does the measurement effort capture the multiple dimensions or capitals of resilience?

The types of shocks and stressors and the purpose of the measurement influence which of the community capitals are incorporated into the measurement effort. A resilience measurement effort may capture one, several, or all of the six capitals. In the early phase of resilience measurement development, the focus was on natural hazards, and consequently, the physical aspects of resilience were often prioritized (Aldrich, 2012; Aldrich and Meyer, 2015). However, the committee’s review of the resilience literature highlighted the importance of social capital and cohesion in responding to adverse events, of economic and financial preparation for (and impacts from) these events, and of the political will to invest in both mitigation and recovery. In general, resilience measurement efforts are increasingly integrating the multiple dimensions of resilience. Moreover, recently developed efforts that do not consider all of the community capitals (for example, Argonne National Laboratory’s Resilience Measurement Index and NIST’s Community Resilience Planning Guide, which focus on the built environment) acknowledge that there are other components of resilience phenomena and activities that their efforts do not address.

Despite this increased awareness of the multidimensional nature of resilience, many efforts still do not integrate the various dimensions. Typically, this exclusion does not reflect a rejection of the broader multidimensional definition of resilience. Rather, it reflects the need to operationalize resilience for a specific purpose, such as a building department looking at measures of resilience for the built environment only. A few efforts focus on one capital but also reflect on its relationship to others (e.g., the NIST Community Resilience Planning Guide and the National Health Security Preparedness Index). Collectively, the measurement efforts assessed by the committee have produced a rich set of variables and data collection opportunities that could inform new integrative approaches to measuring across resilience dimensions. For example, in the Environmental Protection Agency’s Climate Resilience Screening Index, The Nature Conservancy’s Coastal Resilience Decision Support System, and Argonne National Laboratory’s Resilience Measurement Index, the indicators for environmental conditions and critical infrastructure projections are directly connected to the relevant literature in those fields and are simultaneously relevant to community resilience.

TABLE 2-2 Characteristics Used to Assess the Content of Current Resilience Measurement Efforts

| Content Subject | Relevant Characteristics |
|---|---|
| Capitals (Tierney, 2006) | Natural; Built; Financial/economic; Human/cultural; Social; Political/institutional |
| Adverse event (Choularton et al., 2015) | Acute or chronic shocks or stressors; Natural shocks or technological shocks; Universality/shock neutrality or singular shocks |
| Context (Moench, 2014) | Urban; Rural; Coastal; Inland; Universal; Site-specific |
| Disaster event stage (MacAskill and Guthrie, 2014) | Mitigation and preparedness; Relief and response; Recovery; Long-term community planning or nontemporal |
| Object of analysis (Cutter, 2016b; Sherrieb, Norris, and Galea, 2010) | Asset-based; Capacity-based; Combination of assets and capacities |
| Community unit of analysis (Sirotnik, 1980) | Administrative boundaries below municipal borders (neighborhood); Municipal boundaries (city-wide); Administrative boundaries above municipal borders (regional); Nonadministrative or user-defined community; Sum or average of smaller units in community (households, buildings, etc.); Administrative or environmental unit equal to the community (city government, watershed, etc.) |
| Data type, source, quality (Olsen, 2011) | Qualitative; Quantitative; Mixed-methods; Primary data; Secondary data; Community data collection; Self-reported; Independently assessed and reliable; Representative; Anecdotal; Frequent data collection; Single data collection (to date) |
| Construct operationalization, reliability, validity (Shadish, Cook, and Campbell, 2002) | Aggregation; Un-aggregated; Single metric output; Reliability testing of individual variables; Validity testing with comparable measures |

In general, resilience measurement efforts provide an incomplete view of community resilience by assuming that measurement of only one or two of the capitals is the equivalent of measuring overall community resilience—in other words, they ignore the foundational premise that community resilience is multidimensional (see Figure 2-1).
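The coverage gap described here can be made concrete. Below is a minimal sketch that assumes an effort's indicators have already been tagged by capital. The tagging step, the `capital_coverage` helper, and the example effort are all hypothetical. The sketch reports the share of the six Table 2-2 capitals an effort touches and lists the ones it leaves out.

```python
# Hypothetical sketch: how many of the six community capitals (Table 2-2) does an
# effort's indicator set touch? The example effort below is invented for illustration.
CAPITALS = frozenset({
    "natural",
    "built",
    "financial/economic",
    "human/cultural",
    "social",
    "political/institutional",
})


def capital_coverage(covered: set[str]) -> tuple[float, set[str]]:
    """Return the share of the six capitals covered and the set left uncovered."""
    unknown = covered - CAPITALS
    if unknown:
        raise ValueError(f"not one of the six capitals: {sorted(unknown)}")
    missing = set(CAPITALS - covered)
    return len(covered) / len(CAPITALS), missing


# A hypothetical effort whose indicators all describe the built environment.
share, missing = capital_coverage({"built"})
print(f"{share:.2f} of capitals covered; missing: {sorted(missing)}")
```

A share this low does not make such an effort wrong for its purpose. It does flag that treating its output as a measure of overall community resilience would overreach.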

Finding 2.1. Few of the measurement efforts consider all of the six commonly used community dimensions or capitals. Gaps in coverage of all six community capitals limit a resilience measurement effort’s robustness in measuring the holistic nature of community resilience.

SOURCE: Measuring Community Resilience Committee.

Adverse Event: Does the measurement effort focus on one kind or a variety of adverse events?

Disaster Resilience: A National Imperative (NRC, 2012) defines resilience in relation to “adverse events.” A resilience measurement effort may be agnostic to the shocks and stressors to which a community is exposed (universal) or it may explicitly focus on a singular shock or stressor or a select combination (singular). For example, a resilience measurement effort may be designed to capture a system’s ability to bounce back from flooding (a natural, acute adverse event), homelessness (a nonnatural, chronic adverse event), the effects of climate change (multiple natural, chronic, and acute adverse events), or dam failure (a nonnatural, acute adverse event).

The concept of an adverse event is implicit in all resilience measurement efforts but is not necessarily represented explicitly by specific variables or indicators. For example, some efforts that are not hazard-specific, as well as pre-hazard preparedness measurement efforts, include general indicators of public emergency management capacity. These indicators tend to measure only whether such a function exists in a local government, such as the presence or absence of an approved disaster recovery plan, rather than the quality of the plan or the capacity to implement it.

Approximately half of the community resilience measurement efforts reviewed do not specify an adverse event type. Of those that do, the largest subset focuses broadly on natural hazards, often related to climate change. For example, a measurement effort may assess specific contributors to resilience against numerous effects of climate change (e.g., increases in severe weather events, sea level rise) or focus on a single effect (e.g., sea level rise). A handful of the efforts reviewed address only a specific hazard type (e.g., the San Francisco Bay Area Planning and Urban Research Association’s (SPUR’s) Earthquake Recovery Model and the Zurich Flood Resilience Measurement Framework). Many, such as the City Resilience Index and the Resilience Capacity Index, propose an even broader definition of shock that includes social and economic adversity.

Context: Does the measurement effort apply to an entire subset of geographic conditions or to site-specific exposure profiles?

Every community is characterized by a unique set of social, environmental, cultural, economic, and institutional conditions. A resilience measurement effort may apply a universal set of measurements to all communities within a region, country, etc.; may be tailored to a specific type of community (urban, rural, coastal, etc.); and/or may use a unique set of characteristics applicable only to select communities. While most efforts do not specify the geographic locations or contextual categories to which they should be applied, several are explicitly intended for a specific geographic area or community size. For example, the Rural Resilience Index is exclusively focused on rural communities, the City Resilience Index is most relevant to urban areas, and the Earthquake Recovery Model is (not surprisingly) relevant to earthquake-prone areas. Many of these place-specific efforts use the same dimensions as non-contextual ones and differ only in how indicators are operationalized and in the types of data used or proposed for use.

Disaster Event Stage: Does the measurement effort apply to one stage of a disaster cycle or to an ongoing condition or capacity?

The definition of resilience from Disaster Resilience: A National Imperative (NRC, 2012) includes all periods before, during, and after an adverse event. Using a resilience measurement effort to identify which areas in a community are performing well or poorly is useful for general community planning purposes. Alternatively, the use of a measurement effort may be more nuanced, such as tracking and capturing changes over space and time with regard to post-disaster recovery, assessing progress in mitigating adverse outcomes, or examining the effectiveness of resilience project investments.

An ongoing debate in resilience literature and practice is whether resilience should be operationalized as (1) the ongoing condition that precedes an adverse event and enables a community to bounce back or (2) the bouncing back itself (Cutter, 2016b; Patel et al., 2017). Most of the resilience literature argues for the former but does not necessarily dismiss the latter as an outcome of interest.

Most community resilience measurement efforts approach resilience as a general condition that fluctuates with time and in relation to an adverse event. A handful of the efforts considered in this report focus solely on post-disaster recovery measures (e.g., the Conjoint Community Resilience Assessment Measure, the Earthquake Recovery Model, and Resilience United States). The differences in approach are helpful for advancing the field in the long term, since change in a pre-disaster capacity measurement should correlate with expected disaster losses and post-disaster recovery times and qualities.

Object of Analysis: Does the measurement effort focus on a community’s assets or its capacities?

The purpose and utility of a resilience measurement effort influence the number and choice of variables or indicators and the information these convey. Some efforts rely on variables and indicators that count what currently exists within a community, as opposed to what could be. Asset-based indicators capture information on what assets or resources exist within a community. Capability- or capacity-focused indicators communicate a community’s ability to be resilient in the face of a shock, independent of the absolute number of assets.

The committee noted that the balance between asset-based and capacity-focused indicators is potentially a useful way to distinguish among measurement efforts. The community resilience measurement efforts reviewed for this report typically combined both in some fashion. However, they tended to rely more on asset-based indicators (e.g., Baseline Resilience Indicators for Communities and the Zurich Flood Resilience Measurement Framework) because asset data are more readily available and because the literature on capacity measurement is more nascent. A few of these measurement efforts are capacity-based, such as the Community Assessment of Resilience Tool, the Community Resilience Indicators and National-Level Measures, and the Resilience Capacity Index.

Community Unit of Analysis: Does the scale of the measurement effort correspond to the definition of the unit of analysis—“community”—that is being measured?

A “community,” defined as a subnational and substate geography (see Chapter 1), was the unit of analysis for all the resilience measurement efforts the committee reviewed. The unit of analysis used to define a community is generally an administrative boundary (e.g., zip codes, census tracts or blocks, cities, counties, metropolitan statistical areas). The measurement efforts reviewed by the committee vary somewhat in the specific geographic boundary they use. For example, Baseline Resilience Indicators for Communities measures resilience at the county level, whereas the City Resilience Index does so at the city level. The City Resilience Index does, however, slip significantly into regional, state, and national data sources where an indicator does not apply at the city scale or where city-scale data are not available. In theory, these measurement efforts could be applied to other units of analysis used to define a community.

The measurement efforts reviewed use a variety of community-level data sources drawn from different units of analysis within a community, such as parcel boundaries, topographic groups, households, or political districts. Many operationalized resilience measurement efforts define communities using a combination of institutional capacities (e.g., government functions) measured at a single community unit and smaller units (e.g., household counts) within a community.1 More holistic measurement efforts typically use many kinds of data sources. But the more data sources are used (e.g., environmental, health, and demographic data), the greater the chance that they are measured on different spatial and temporal scales. This can make it difficult to combine them into an effective measure for the unit of analysis that defines the community.
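One recurring step when smaller units are combined into the community unit is deciding how each variable rolls up. Below is a minimal sketch under stated assumptions. The `SmallUnit` fields, the `roll_up` helper, and the tract figures are hypothetical, and every unit is assumed to have a positive population. Counts are summed, while rates are weighted by population rather than simply averaged.

```python
from dataclasses import dataclass


@dataclass
class SmallUnit:
    """A unit nested inside a community, e.g., a census tract (hypothetical fields)."""
    community: str
    population: int                  # assumed positive for every unit
    households_without_vehicle: int  # a count: summed when rolling up
    pct_insured: float               # a rate: population-weighted when rolling up


def roll_up(units: list[SmallUnit]) -> dict[str, dict[str, float]]:
    """Aggregate small-unit data to the community unit of analysis."""
    totals: dict[str, dict[str, float]] = {}
    for u in units:
        t = totals.setdefault(
            u.community,
            {"population": 0, "households_without_vehicle": 0, "weighted_insured": 0.0},
        )
        t["population"] += u.population
        t["households_without_vehicle"] += u.households_without_vehicle
        t["weighted_insured"] += u.pct_insured * u.population
    for t in totals.values():
        # Weighting by population keeps a small tract from counting as much as a large one.
        t["pct_insured"] = t.pop("weighted_insured") / t["population"]
    return totals


tracts = [
    SmallUnit("County A", 1_000, 50, 90.0),
    SmallUnit("County A", 3_000, 300, 70.0),
]
print(roll_up(tracts))
```

Here the population-weighted insured rate for County A is 75.0. A plain average of the two tract rates would give 80.0 and overstate coverage, because the larger tract has the lower rate.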

Data Type, Source, and Quality: What types of data are collected to conduct the measurement?

Are the variables and indicators quantitatively or qualitatively measured? What are the data sources for the measurement effort’s variables? What is the quality of those data? How often are the data collected? The analytical nature of the data within a community resilience measurement effort often dictates whether any group or composite measure is comparable across communities. The data format used in a resilience measurement may be qualitative (e.g., present/absent, complete/incomplete, high/low) or quantitative. Quantitative data range from absolute values (e.g., number of doctors) to standardized (e.g., percentages) or normalized values. Occasionally, a mixed-methods approach employs both qualitative and quantitative data. Depending on the measurement effort’s purpose, both types of data and resultant indicators are useful, providing a richness of information along with the potential for comparing communities over time.

___________________

1 There are instances in which operationalizing various units becomes problematic. For example, nonwhite households generally have less access to resilience-supporting services and resources; this is typically operationalized with a count of the number or proportion of nonwhite households in a community. However, such a count may actually serve as a proxy variable for structural racism or white privilege. That underlying process is more difficult to measure adequately but nonetheless influences unequal access to resilience services and resources (Gee and Ford, 2011; Pulido, 2000).
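The difference between absolute, standardized, and normalized values can be shown with a minimal sketch. The community figures below are hypothetical. Min-max rescaling and z-scores are two common normalizations, not necessarily the ones any reviewed effort uses. The sketch converts doctor counts to a per-capita rate and then normalizes that rate across the comparison set.

```python
import statistics


def per_capita(count: float, population: float, per: float = 10_000) -> float:
    """Standardize an absolute count into a rate, e.g., doctors per 10,000 residents."""
    return count * per / population


def min_max(values: list[float]) -> list[float]:
    """Rescale to [0, 1] relative to the communities in this comparison set."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]  # no variation to rescale
    return [(v - lo) / (hi - lo) for v in values]


def z_scores(values: list[float]) -> list[float]:
    """Express each value as standard deviations from the comparison-set mean."""
    mu = statistics.mean(values)
    sd = statistics.pstdev(values)
    if sd == 0:
        return [0.0 for _ in values]
    return [(v - mu) / sd for v in values]


# Hypothetical communities: (number of doctors, population).
communities = {"A": (20, 10_000), "B": (150, 50_000), "C": (40, 40_000)}
rates = [per_capita(d, p) for d, p in communities.values()]
print(rates)  # doctors per 10,000 residents
print(min_max(rates))
print(z_scores(rates))
```

Community C has twice as many doctors as A in absolute terms but half the rate. Both normalized scores are relative to the communities in the comparison set, so adding or removing a community changes every score.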

Establishing the quality of data used in resilience measurement is important because variations in how data are collected may prevent comparison across different processes, capabilities, organizations, sectors, etc. within the same community over a long period of time (Carmines and Zeller, 1979). For example, some data may be collected at regular intervals (e.g., quarterly, annually, biannually), while other data may be collected as part of a one-time effort (e.g., to define resilience goals or priorities, or to provide a proof of concept).

The measurement efforts reviewed by the committee employed quantitative data, qualitative data, or a combination of both. Approximately half of the efforts rely solely on quantitative data, which requires availability of and access to large quantitative data sets. For example, Baseline Resilience Indicators for Communities and the Community Disaster Resilience Index focus on quantification to produce a comparable set of dimensional measures. The Coastal Resilience Index, on the other hand, relies on expert judgments based on qualitative data. The City Resilience Index combines quantitative and qualitative indicators. For hybrid composite measures, test-retest activities must be conducted to ensure that the data, proposed quantification scales, and indicators are reliable. The National Health Security Preparedness Index is a good example of test-retest activities and subsequent adjustments to a measurement effort.

Among the measurement efforts, there are generally two approaches to sources of data. The most common approach is a reliance solely on national data sources such as the U.S. Census Bureau’s American Community Survey or Federal Emergency Management Agency flood maps, despite their known limitations. This is the case with Baseline Resilience Indicators for Communities, Community-Based Resilience Analysis, Resilience Capacity Index, Resilience United States, and the Resilience Measurement Index, reflecting their quantitative and comparative approaches to measuring communities’ resilience. To some extent it is also the case with The Nature Conservancy’s effort, which also overlays environmental data from a variety of nationally credible sources. National data are limited by the low frequency of national collection and by geography. Desired data often do not exist at the most appropriate level of granularity, as when the available data are at the county level but neighborhood-level data would better reflect the underlying resilience indicators. The second approach combines national and local data sources, often with qualitatively derived local data from focus groups, interviews, and expert review; the City Resilience Index and the Zurich Flood Resilience Measurement Framework favor this approach. For qualitatively derived primary data, there is no clear test-retest protocol, triangulation, or other analytic technique used to ensure the quality of the data. Such techniques are needed to establish the validity of qualitative data.

Construct Operationalization, Reliability, Validity: How does the measurement effort place individual variables and their indicators into a holistic or aggregate structure?

The configuration of a resilience measurement effort is closely tied to its purpose and utility. For example, a resilience measurement effort may be a simple list of characteristics with little or no post-processing of the collected data (quantitative or qualitative). Certain components or data inputs may be combined and aggregated to synthesize information at different levels (e.g., capitals or objects of analysis). Or, all input data sets and measurement levels may be aggregated to produce a single, numeric output, referred to as an index.
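The three configurations can be sketched in a few lines. This example assumes indicators already rescaled to [0, 1] and grouped by capital. The indicator names, values, and equal weighting are hypothetical, and the reviewed efforts use a range of different aggregation schemes. The raw dictionary is the un-aggregated list, `subindices` is the partial aggregation by capital, and `composite` collapses everything into a single index.

```python
from statistics import mean
from typing import Optional

# Hypothetical indicator values for one community, already rescaled to [0, 1] and
# grouped by capital. Names, values, and the grouping are illustrative only.
indicators: dict[str, dict[str, float]] = {
    "social": {"civic_org_density": 0.6, "volunteer_rate": 0.4},
    "built": {"housing_condition": 0.7},
    "financial/economic": {"employment_rate": 0.9, "household_savings": 0.5},
}


def subindices(by_capital: dict[str, dict[str, float]]) -> dict[str, float]:
    """Partial aggregation: average the indicators within each capital."""
    return {cap: mean(list(vals.values())) for cap, vals in by_capital.items()}


def composite(subs: dict[str, float], weights: Optional[dict[str, float]] = None) -> float:
    """Full aggregation: collapse the subindices into one index via a weighted mean."""
    weights = weights or {cap: 1.0 for cap in subs}  # equal weights unless told otherwise
    total = sum(weights[cap] for cap in subs)
    return sum(subs[cap] * weights[cap] for cap in subs) / total


subs = subindices(indicators)
print(subs)             # one number per capital; still shows where the gaps are
print(composite(subs))  # a single index; the capital-level detail is no longer visible
```

The weighting is a substantive choice rather than a technicality. Doubling the weight on the social capital moves this community's index from about 0.63 to 0.60, and a single index hides which capital drove the change.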

Box 2-2 illustrates known procedures and steps for constructing indexes of various types to help researchers understand the inherent limitations and/or biases in their tools. As Box 2-2 indicates, an important methodological step to ensure a robust measurement effort is validity testing. Such testing confirms that the resilience measurement is gauging resilience and not some other characteristic such as sustainability, economic productivity, inclusivity, equity, or vulnerability. Two common ways to assess validity are convergent and predictive validity. Convergent validity looks at whether individual variables correlate with other constructs that measure similar phenomena; predictive validity looks at whether individual variables can predict those other constructs. The measure should correlate highly with other measures typically associated with community-scale resilience, such as recovery times and costs. It should also predict outcomes affected by resilience, such as hazard losses.
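A first-pass validity check correlates index scores with an outcome that resilience should affect. Below is a minimal sketch using hypothetical data: pre-event index scores and post-event recovery times for five communities. Five points are far too few for real inference. A genuine predictive-validity study would draw outcomes from actual events, use many more communities, and report uncertainty. The sketch shows only the logic of checking the expected direction of the relationship.

```python
import math


def pearson(x: list[float], y: list[float]) -> float:
    """Pearson correlation coefficient between two equal-length lists."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need two equal-length lists with at least two values")
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


# Hypothetical data for five communities: index scores measured before an event,
# and recovery times (months) observed after it.
pre_event_index = [0.82, 0.65, 0.71, 0.40, 0.55]
recovery_months = [6, 14, 10, 30, 18]

r = pearson(pre_event_index, recovery_months)
# Higher resilience should mean faster recovery, so the expected sign is negative;
# an r near zero (or positive) would suggest the index tracks something else.
print(f"r = {r:.2f}")
```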

Another key step in building a robust measurement effort is reliability testing. A fundamental point of measurement is to ensure that identical units score in identical ways across different time points and survey modalities (Carmines and Zeller, 1979). In other words, the reliability of a resilience measurement effort depends on users’ ability to reproduce the same results for the same community. Thus, an important step in structuring a measurement effort is test-retest assessment of each indicator’s data. The reliability of a measurement effort’s output also depends on its internal consistency, that is, how each contributing variable “hangs” with the others, and whether and how it ultimately contributes to the overarching construct of community resilience. It is important that the various indicators are coherent and individually contribute to the intended measurement, irrespective of whether a single resilience dimension (e.g., economic) is being measured or a composite resilience measure is being generated. The degree to which inferences can be made from measured observations (also known as construct validity) is especially difficult to establish when measuring things that do not have natural units of measurement. Even in those cases, a range of uncertainty is possible, either because the measurement is predictive rather than actual (such as ranges of climate change effects) or because the tests from which measurements are drawn are not reliable (from inadequate survey instruments, for example). Generally, most resilience measurement efforts do not adequately consider test-retest reliability assessment in data collection or fully disclose confidence intervals for their observations.
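Internal consistency is often summarized with Cronbach’s alpha, computed from the variance of each indicator and the variance of the summed score. The sketch below assumes indicators already normalized to a common scale and oriented so that higher means more resilient; the data are hypothetical. Test-retest reliability, by contrast, would compare scores for the same communities from two rounds of data collection, for example by correlating them.

```python
from statistics import variance

def cronbach_alpha(rows):
    """Cronbach's alpha for a table of scores.

    rows: one list per community, each holding k indicator scores
    (k >= 2, same indicator order in every row).
    """
    k = len(rows[0])
    if k < 2:
        raise ValueError("alpha needs at least two indicators")
    columns = list(zip(*rows))  # one tuple of values per indicator
    item_variance = sum(variance(col) for col in columns)
    total_variance = variance([sum(row) for row in rows])
    if total_variance == 0:
        raise ValueError("summed scores do not vary; alpha is undefined")
    return (k / (k - 1)) * (1 - item_variance / total_variance)

# Hypothetical normalized scores: 5 communities x 3 indicators.
scores = [
    [0.2, 0.3, 0.1],
    [0.4, 0.5, 0.4],
    [0.5, 0.4, 0.6],
    [0.7, 0.8, 0.6],
    [0.9, 0.8, 0.9],
]
print(f"alpha = {cronbach_alpha(scores):.2f}")
```

Values near 1 indicate that the indicators move together; a low value would suggest that some indicators are not measuring the same underlying construct.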

Almost all the efforts the committee assessed relied on an inductive selection of indicators to start their exploration; however, only a handful have since revisited and revised their selection of indicators. Of those that have, only four have performed any internal consistency analysis that is publicly available: Baseline Resilience Indicators for Communities, the Community Disaster Resilience Index, Conjoint Community Resilience Assessment Measure, and the Resilience Inference Measurement. The committee found few community resilience measurement efforts with documented reliability and validity analyses (though, admittedly, methodological rigor is not always an explicit goal of their development).

None of the operationalized measurement efforts has undergone the complete methodological testing for composite statistics that is typical in other fields of measurement, such as psychometrics or medical prognostic indicators. One effort, Resilience United States, was tested against a single actual hazard event for its predictive value, but because the effort focuses on post-disaster recovery, this was more of a reliability test than a validity test. The lack of validity testing likely reflects the nascent stage of operationalized efforts more than any shortfall in their developers’ attempts at measurement rigor.

Use: The Intended or Actual Use of the Measurement Effort

Because of the individualized application of many of the resilience measurement efforts examined for this report, the committee analyzed the underlying purpose of each and assessed whether the intended purpose directly shaped the usefulness, transferability, and applicability of the measurement effort itself. The use subject areas include the intended purpose of the effort, the process employed, and the ease of use (see Table 2-3).

Purpose of the Measurement Product: What is the intent of the measurement effort and how does that influence the quality, reliability, and complexity of its use?

Understanding why each of the reviewed measurement efforts was created, and its intended and actual applications, provides critical insight into how the measurement effort was operationalized. In a few cases, the output of these efforts is a single number or qualitative value for a community that is meant to describe a current state or prescribe a desired one. This is especially true of efforts produced within scholarly circles without actual application, and of those designed for reflection and engagement within a community. For other measurement efforts that have been operationalized, such as the Baseline Resilience Indicators for Communities and The Nature Conservancy’s effort, the output is a comparison of these values between communities for the purposes of self-assessment and local action.

Many diagnostic measurement efforts are intended to be used for national or state decision making or investment prioritization. While there has been movement toward this purpose, such as the Federal Emergency Management Agency’s 2017 push toward a National Risk Index² that relies on some of the underlying measurement efforts that evolved into those reviewed here, as well as on the varied efforts of the Environmental Protection Agency and U.S. Agency for International Development, the committee found only a few examples of the direct application of resilience measurement efforts to decision making. In at least one case (The Nature Conservancy), the measurement effort was employed because the decision to invest resources or activities in a specific community had already been made. Some of the measurement efforts used for community visioning exercises (e.g., the City Resilience Index) have led to local policy and program changes, or at least to activities that address some of the challenges identified in the visioning process (e.g., Zurich Flood Resilience Measurement Framework; also see Box 2-3). The committee, therefore, could not assess at this point in time the effectiveness or impact of the resilience measurement efforts on those resilience interventions or on variations of investments (such as efficacy, efficiency, and equity).

TABLE 2-3 Characteristics Used to Assess the Use of Current Resilience Measurement Efforts

| Use Subject | Relevant Characteristics |
|---|---|
| Purpose of measurement: Effort’s product | Prescriptive; Descriptive; Diagnostic; Evaluative |
| Purpose of measurement: Effort’s process | Community engagement; Scholarly inquiry; Investment decisions or prioritization; Mandate as part of a program or initiative |
| Effort’s complexity | Resources needed (e.g., knowledge, software, staff); Ease of use, accessibility of product; Practicality of implementation |

Purpose of the Measurement Process: What is the intention of the process in which the measurement is created and applied, if any?

In several instances, the exercise of producing or applying a measurement effort has an implicit purpose that is just as significant as the end product. For example, a measurement effort that seeks to gather input from community residents (bottom-up measurement efforts, discussed below) could satisfy the need for engagement as well as integrate that input into top-down efforts by private investors or higher-governance entities such as states or nations that may need the resulting measurement for prioritization or decision making. Sometimes, the process of measuring is more important than the outcome—particularly if it is designed to elicit some sort of action, policy, or program in support of community resilience.

Many of the resilience measurement efforts the committee examined have been used in community engagement activities by local governments or civil sector entities. In some cases, such as the National Oceanic and Atmospheric Administration’s Coastal Resilience Index and the NIST Community Resilience Planning Guide, the efforts are intended as community engagement tools, ostensibly to raise awareness of resilience and provide guidance for decision making. In others, the efforts engage citizens in selecting and measuring predetermined indicators (e.g., Zurich Flood Resilience Measurement Framework and the City Resilience Index). However, for a handful of measurement efforts that fall under the top-down development process (e.g., Baseline Resilience Indicators for Communities and the Resilience Inference Measurement), the purpose of the measurement effort’s output is to inform local actors and other stakeholders about resilience conditions and suggest opportunities for change. A few other top-down efforts (e.g., the United Nations Office for Disaster Risk Reduction’s Disaster Resilience Scorecard for Cities) are mainly procedural checklists for conducting resilience-related activities or embarking on resilience conversations, which ultimately mark the beginning of the measurement process.

___________________

2 For information about the National Risk Index: http://riskindex.atkinsatg.com.

In addition to measuring resilience, a resilience measurement process may serve scholarly inquiry; for example, the Zurich Flood Resilience Measurement Framework was beta tested in several countries to help the developers identify the best indicators of flood resilience and improve the framework. A resilience measurement may also be mandated as part of a program or initiative.

Complexity: Is the measurement effort feasible and does it result in actionable information?

The entire process of collecting and analyzing data and synthesizing the information to generate an assessment of resilience in or across communities can be resource-intensive, inaccessible to the citizens or policy makers within the communities, and/or impractical, thereby limiting its usefulness.

The number and quality of indicators, and the tabulations built on them, can make a resilience measurement effort complex: challenging to communicate, to undertake, and to implement. This creates a measurement conundrum: user-friendly efforts are wanted, but simple methodologies do not (yet) exist to produce them unless dimensions of resilience are forgone or the applicability is very narrow. Most of the measurement efforts the committee examined have retained their complexity in order to remain true to the goal of measuring resilience, employing data transformation techniques that are incomprehensible to the end user. Other measurement efforts (e.g., the Resilience Capacity Index) that have traded complexity for fewer, more accessibly explained indicators have, in contrast, lost a significant amount of empirically defined reliability and validity.

Status: Current State of Development or Implementation of the Measurement Effort

Because resilience measurement is still in its infancy, most of the efforts that have been developed and implemented to date have had limited application and impact. To document the status of various efforts, the committee relied on current documentation and professional knowledge of each effort’s theoretical and practical evolution. The committee also evaluated the level of community engagement both in the effort’s development and with regard to its communication at a community level, since this has been an underlying motivator for many measurement efforts. Table 2-4 lists the three subject areas that were explored.

TABLE 2-4 Characteristics Used to Compare the Status of Current Resilience Measurement Efforts

| Status Subject | Relevant Characteristics |
|---|---|
| Development stage of the effort | Inception; Peer-reviewed; Implementation case studies (similar context); Implementation case studies (different context); Informational stage only; Action or policy stage |
| Frequency of effort’s application (to date) | One-time; Intervals |
| Creation and use process | Co-development; Top-down (government, scholar, or organizationally led); Bottom-up (community level) |

Development Stage: Where is the measurement effort along the development and use life cycle?

Developing a theoretical construct for measuring resilience, whether overall or for a single application, is a process that includes the initial design phase (inception), scientific review and testing (peer review), and implementation in contexts similar to (e.g., developing countries, cities, coastal areas) or different from the one for which the resilience measurement was originally designed. The committee considered the overall rate of production of new resilience measurement efforts and the status of existing ones.

The number of resilience measurement efforts continues to grow, but the rate of growth appears to have tapered in recent years, and there have been surprisingly few applied efforts to measure community resilience. Most efforts continue to focus on varying definitions of resilience, schematic frameworks, and potential indicators, rather than on operationalizing definitions, collecting and testing data, or conducting exploratory testing of the reliability and validity (internal and external) of the effort’s output. These efforts are not without merit and, in some cases, the developers had no intention of moving beyond exploration or beyond a specific dimension of resilience. Notable examples of these early-stage efforts are the NIST Community Resilience Planning Guide, the Resilience Scorecard, and the Resilience Inference Measurement. Efforts that have advanced the field into implementation include Baseline Resilience Indicators for Communities, the City Resilience Index, Community-Based Resilience Analysis, the Community Disaster Resilience Index, the Climate Resilience Screening Index, the Disaster Resilience Scorecard for Cities, and the Zurich Flood Resilience Measurement Framework.

Replication and Frequency: Has the effort been applied more than once?

Among the resilience measurement efforts that have been piloted in communities, only a few have been applied more than once in the same community or in more than one community. As of early 2019, the City Resilience Index, the Disaster Resilience Scorecard, and the Zurich Flood Resilience Measurement Framework have been or are currently being piloted in more than one community, ranging from a few to more than 100 communities, although the results of these pilots have not been widely publicized. Part of the reason for the low frequency of application is that the underlying data for many efforts (such as Baseline Resilience Indicators for Communities) are themselves not updated frequently. Comparative efforts such as Baseline Resilience Indicators for Communities, Conjoint Community Resilience Assessment Measure, and Resilience Inference Measurement have been updated two to four times with newer data. Another common reason for the lack of frequent and repeated measurement is the lack of resources for conducting a measurement effort beyond a preliminary assessment or diagnostic, especially for efforts that rely on qualitative data.

Creation and Use Process: Has the effort relied on a top-down or a bottom-up process in its development and/or dissemination?

The design of a resilience measurement effort may be the result of a co-creation process between a community or communities and resilience experts in the consulting, government, and/or academic sectors, or may be developed by just one side—either from the bottom-up (the design principles emerge from the community) or from the top-down (experts act in isolation).

Among the resilience measurement efforts that have been used more than once (either in the same communities or in multiple communities), only a few have been updated or refined to improve their usability and/or usefulness. Some efforts initially designed as community engagement tools have not been implemented as operationalized measurement efforts, but a few have moved to the implementation phase, especially those that rely on qualitative data. For example, the City Resilience Index and the Zurich Flood Resilience Measurement Framework have used a hybrid bottom-up and top-down strategy, using predetermined indicators to elicit responses from citizenry and/or leadership. The Zurich Flood Resilience Measurement Framework has been implemented in dozens of communities (twice in each community) across the world as of early 2018, and its developers have used the data and results from these implementation efforts to validate and update the framework. Most measurement efforts developed through more formal top-down approaches have been replicated in the same communities or updated, but without any significant community-level input.

EXAMPLES OF COMMUNITY RESILIENCE MEASUREMENT

Two examples of communities measuring resilience are highlighted in Boxes 2-3 and 2-4. It is worth noting that both of these measurement efforts were underwritten or undertaken with an influx of intellectual support or financial resources (e.g., from NIST, Z Zurich Foundation, Resilient America Program). Box 2-3 highlights the implementation of the Zurich Flood Resilience Measurement Framework in Cedar Rapids, Iowa, and Charleston, South Carolina, to assess their flood resilience. The Zurich framework, which has been tested in more than 100 communities around the world, considers resilience across five community capitals and draws on multiple data sources. Box 2-4 highlights the use of the NIST Community Resilience Planning Guide (2016) in Boulder County, Colorado. The NIST guide has been implemented in six communities. Both of these measurement activities included data collection and rubrics for measuring resilience.

REMAINING GAPS, CHALLENGES, AND OPPORTUNITIES TO IMPROVE MEASUREMENT

Based on its review of 33 measurement efforts detailed in Table 2-1 (and Appendix C), the committee identified a number of limitations and challenges in current resilience measurement science and practice. These challenges can be grouped into three areas: measurement intent, methodological rigor, and application and practicality.

Intent of the Measurement Activity

- In many cases, the resilience measurement effort is a scholarly pursuit meant to contribute to the literature on the drivers of resilience rather than to provide practical guidance for communities.

- Some efforts are techniques that facilitate community engagement and visioning exercises undertaken after a disaster has struck. The process of trying to define resilience may be more important than the accuracy of the measurement effort’s outcome itself.

- The drive to measure is often dictated by policy makers who are motivated to direct governmental funds to specific geographic areas, populations, or activities rather than provide consistent and frequent assessments of all potential factors that could contribute to resilience.

Methodological Rigor

- Comparative studies that evaluate the methodological approaches, intent, and audience of resilience measurement efforts are lacking. In the absence of actual use cases and an assessment of the applicability and reliability of measurement efforts, the tradeoff between more holistic measurement efforts and those limited to specific places or capitals (which reduce complexity and make measurement more manageable) is currently unknown.

- The connection between input data and measurement design is largely a black box lacking empirical justification for the selection of input data (beyond pointing at existing literature) and design choices for the measurement effort itself. This paucity of evidentiary, objective information makes it extremely difficult for end users to judge the reliability of a measurement effort, identify differences between them, and/or select one that would aid in decision making.

- There is a lack of research identifying the most relevant indicators based on capitals, purpose, context, etc., as well as their measurement and aggregation.

- There is an extensive reliance among the resilience measurement efforts on secondary data, which limits innovation or creativity in terms of more suitable or more accurate data sets.

Application and Practicality

- Although simplicity should be preferred (as it supports end user interpretation, acceptance, and understanding), it is difficult to achieve due to the multidimensionality of resilience.

- Generally, measurements, especially those relying heavily on aggregating, averaging, or categorizing data, are not designed for repeat assessments capable of capturing change over time (e.g., semi-annually, annually, or biennially).

- Local and practical needs (e.g., operational accountability and transparency tools) outpace the existing knowledge base of resilience measurements. The committee’s site visits (Chapter 3) revealed a lack of resilience metrics at the very local or project-specific planning level (e.g., for cost-benefit analyses) with dynamic measures able to forecast, or at least estimate, the resilience impact of mitigation projects.
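One way aggregation can undercut repeat assessment is when indicators are rescaled relative to the communities measured in each round, for example with min-max normalization recomputed every year. The hypothetical sketch below has every community improve by the same amount between two rounds: relative rescaling returns identical scores, while rescaling against fixed reference bounds (assumed here to be 0 to 100) preserves the improvement. It illustrates the general issue and is not a description of any specific effort’s method.

```python
def rescale(values, lo, hi):
    """Linearly map values from the interval [lo, hi] onto [0, 1]."""
    return [(v - lo) / (hi - lo) for v in values]

# Hypothetical raw indicator values for three communities in two rounds;
# each community improves by 10 points.
round_1 = [30, 50, 70]
round_2 = [40, 60, 80]

# Relative rescaling: bounds recomputed from each round's own data.
relative_1 = rescale(round_1, min(round_1), max(round_1))
relative_2 = rescale(round_2, min(round_2), max(round_2))

# Fixed rescaling: the same (assumed) reference bounds in every round.
fixed_1 = rescale(round_1, 0, 100)
fixed_2 = rescale(round_2, 0, 100)

print("relative:", relative_1, relative_2)  # identical: the change is invisible
print("fixed:   ", fixed_1, fixed_2)        # every score is higher in round 2
```

Fixed bounds have their own cost (they must be chosen and justified in advance), but some stable reference is needed if scores are meant to track change over time.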

The goals of resilience measurement efforts are sometimes at odds with how they are applied and what they were intended to accomplish. As discussed in later chapters, a few efforts are designed to identify opportunities for increased investment (such as the NIST Community Resilience Program and Baseline Resilience Indicators for Communities), but they do not necessarily link the general resilience conditions they attempt to measure to the specific interventions in which investment might occur. Furthermore, the availability of too many efforts with untested accuracy, reliability, and validity might make the process of measurement seem insurmountable, and resilience practitioners might be confused about which measurement effort is best for them.

Finding 2.2. Resilience measurement science and practice are not mature enough to clearly articulate which resilience measurement approach is best or works best in practice.

CONCLUSION

The current state of resilience measurement is not developed enough to reveal a single best measurement approach, scientifically or in practice. Current efforts are generally not usable by communities because they are too difficult, costly, or cumbersome to use or because data are not available at the requisite spatial scale. Existing efforts assess capacity, are capital- or sector-specific, and do not address policy or programmatic needs such as targeting for resource allocation, investments, or disaster relief. Many are theoretical or conceptual exercises, and relatively few have been implemented, replicated, or adapted for application. Few resilience measurement efforts are driven by local needs and co-developed with communities, leading to a disconnect between what the effort’s developers think is relevant and useful knowledge and what the community desires or can use. In other words, the science of resilience measurement has not been put to the test in the practice of implementing strategies to enhance resilience at the community level.

There is a presumption that every resilience measurement effort captures resilience, but this has not been validated. Common methods used in formal resilience measurement include limited quantitative methods to produce an index; qualitative assessments to produce a scorecard or ranking; narratives based on guided qualitative questions to determine capacities and capabilities; and geospatial representations to illustrate intersections of attributes and capacities to compare places. Few efforts follow established indexing guidelines, such as testing for sensitivity and internal consistency and following standard data procedures (e.g., weighting, normalization, missing value replacement).
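Two of the indexing procedures named above, missing value replacement and sensitivity testing of weights, can be sketched briefly. The example assumes two hypothetical indicators already normalized to 0 to 1, fills a missing value with the indicator’s mean (one simple replacement strategy among several), and then compares community rankings under two arbitrary weighting schemes. If the rankings shift substantially, as they do with these made-up numbers, the index is sensitive to a choice its developers would need to justify.

```python
def impute_mean(column):
    """Replace missing values (None) with the mean of the observed values."""
    observed = [v for v in column if v is not None]
    mean = sum(observed) / len(observed)
    return [mean if v is None else v for v in column]

def weighted_scores(rows, weights):
    """Weighted sum of indicator values for each community."""
    return [sum(w * x for w, x in zip(weights, row)) for row in rows]

def ranks(scores):
    """Rank communities from 1 (highest score) to n (lowest)."""
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    result = [0] * len(scores)
    for position, i in enumerate(order, start=1):
        result[i] = position
    return result

# Hypothetical normalized indicators for communities A-D; D lacks indicator 1.
indicator_1 = impute_mean([0.9, 0.3, 0.6, None])
indicator_2 = [0.2, 0.9, 0.4, 0.7]
rows = list(zip(indicator_1, indicator_2))

equal = ranks(weighted_scores(rows, [0.5, 0.5]))
skewed = ranks(weighted_scores(rows, [0.8, 0.2]))
changed = sum(1 for a, b in zip(equal, skewed) if a != b)
print("equal weights: ", equal)
print("skewed weights:", skewed)
print(f"{changed} of {len(rows)} communities change rank")
```

A fuller sensitivity analysis would vary weights, normalization, and imputation choices systematically and report how stable the resulting rankings are.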

Most community resilience measurement approaches lack temporal sensitivity to monitor change over time. The majority of the existing efforts are single-use applications and have not been applied repeatedly in the same place at different points in time, thus making it difficult to gauge a measurement’s ability to track changes over time. Without the ability to capture change over time, measurement efforts lose value as decision-making tools. Furthermore, most of the current efforts are descriptive, not predictive, and do not provide clear guidance about what actions to take to improve resilience. Communities want to design resilience efforts that respond specifically to future predicted challenges. However, a major research challenge persists: how to predict or project a community’s future resilience to enable that community to design resilience actions in the present that will address those projected resilience challenges in the future (see Chapter 5).
