Proceedings of a Workshop

IN BRIEF

August 2019

Performance Management and Financing of Facility Engineering Programs at the Veterans Health Administration

Proceedings of a Workshop—in Brief

The National Academies of Sciences, Engineering, and Medicine convened a workshop on May 8-9, 2019, to gather data on performance management and financing associated with the complex and diverse physical plants that support a wide variety of Veterans Health Administration (VHA) facilities. This workshop was the fourth in a series undertaken to assist the larger effort by an ad hoc committee of the National Academies for the Veterans Administration (VA) to prepare a resource planning and staffing methodology guidebook for VHA Facility Management (Engineering) Programs.

Committee Co-Chair James Smith, a retired U.S. Ambassador, opened the first day by welcoming attendees. He then briefly explained the status of the larger study effort and how this particular workshop fit in. He stressed that this was an information-gathering session and that the committee was in the process of assembling materials to be used in making findings, conclusions, and recommendations. He said that an interim report was with the editors, that comments or probing questions by committee members should not be interpreted as positions of the committee or the National Academies and may not be indicative of their personal views, and that the final report must go through a rigorous review process before publication. The attendees were then introduced and the presentations began.

CURRENT VHA PERFORMANCE MEASUREMENT

Steven Broskey is a compliance engineer with the VHA Office of Capital Asset Management Engineering and Support, which is sponsoring the larger study. Broskey began by thanking the National Academies and the committee for their work on the study and for the preparation of this workshop. He thought it would be helpful to present the performance measures that his organization currently uses on a national level, one set of measures dealing with operations and maintenance (O&M) and a second set addressing management of capital assets. The O&M-related system provides quarterly self-reported, critical facility inventory and work-order completion data along with completion-rate data for critical planned maintenance (commonly called preventive maintenance) of utilities, which is required to be 100%. On the chart, critical system was defined as having “direct support to safety or quality of care, treatment, or services to patients . . . loss or interruption would have a direct impact to VA’s mission, including support of emergency operations.” But discussion revealed some issues here, on one hand indicating that there currently was not full standardization of “critical” because the specifics are up to each facility, yet on the other hand a view that standardization is forced by the VA’s Joint Commission accreditation process (Joint Commission on Accreditation of Healthcare Organizations, or JCAHO). During the session, there was much attention to standardization. For example, regarding the work-order accounting system, VA representative Oleh Kowalskyj said, “There is no real definition of what is a work order and what is not,


whether it’s a mini project or whether it’s an hour’s worth of work or 300 hours’ worth of work, it can all be listed as one work order and put in as such.” After some discussion, committee member Robert Anselmi concluded by urging that use of such data for true analytics requires a good system and policy, standardizing all of it, and making sure staff enters data in a consistent way. Moving to capital assets, Broskey indicated that metrics should represent success relative to other facilities. He illustrated the current metrics of facility condition assessment (FCA), non-recurring maintenance execution, and minor construction execution, and described some future updates for those metrics, such as all obligations to be included in the FCA, not just active projects. On construction, Broskey summed his measure of capital by saying, “Are we putting the money where it needs to go for patient care? How do you measure that, a whole different question? But in theory that’s what it’s about, providing the best possible facility for the veteran.” Smith, suggesting that something was missing, said, “My problem on the engineering side is that we equate the success of the engineering organization almost exclusively on inspection data. That just tells you if you’ve got the things done. But nowhere are we assessing the health of the organization.”

MEASURING AND VALUING RISK AT THE VA

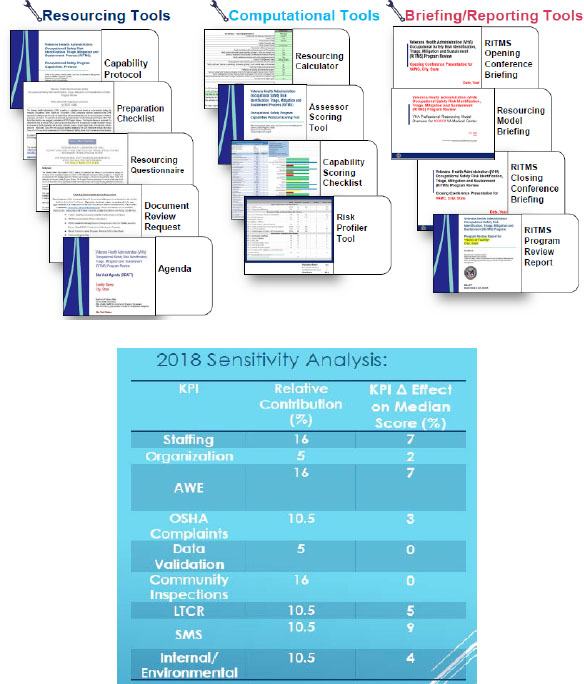

Doug Dulaney, a VHA director for occupational safety, health, and the Green Environmental Management System (GEMS), described VHA’s Occupational Safety Risk Identification, Triage, Mitigation, and Sustainment (RiTMS) process, including the risk assessment model, pilot results, structure and function, and future direction. The model was developed to (1) target facilities needing assistance or risking untoward employee safety events, (2) be easily adapted to model risk in other business lines throughout VHA, and (3) be used to create administration-wide risk profiles for facilities so they can better deploy resources and support in advance of serious situations. The model assigns a risk score (1-100, with a higher score meaning lower risk) based on evaluation of selected data sets coupled with a site visit that evaluates 53 key performance indicators (KPIs) in a single specified business line. The model’s data comes from over nine different data sets (including HAIG Survey, SAFE Database ALL VISN, CPTrack, and Triennial Audit Reports, among others), coupled with a site visit that evaluates the 53 different KPIs. The model does not indicate what will go wrong at a facility (or when), tell how to fix an at-risk facility, or explain deployment of resources. Model information focuses attention to collections of sites, and low-scoring facilities can receive visit teams. Illustrating how these risk levels can be used, Dulaney explained his pitch to facility directors: “How much risk are you willing to take if the mean average TMS score in the country is 77 and you’re pulling in about a 60? Is that a level of risk you’re willing to take? If it is, I’ll go back to the office and help somebody else. If it isn’t, I’ll send a team out there, help you identify your at-risk area, and start pushing resources to you.” He said that some facilities with above average scores, like 80, still wanted site visits because 80 is too low. 
Figure 1 summarizes the many tools in the model and the sensitivity analysis. Roughly half the sensitivity is in three KPIs, at 16 percent each, but the point was made that staffing is the most important of those three. Future directions for the model include continued site visits, targeting support as needed, briefings, data visualization (Tableau Software), and annual updates. To date, this process has been well received by VHA senior leadership and in the field. Committee member Brian Yolitz commented at the end that this process is an effectiveness or risk assessment with the ability to move the center of mass up, so that everybody gets better, reducing relative risk. Dulaney responded by saying, “I can tell you from the first data set I ran on this model, it has gone up six or seven points as a median.”
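The triage step Dulaney described, comparing each facility's score with the national mean and offering site visits to low scorers, can be sketched in a few lines of Python. This is an illustration only: the facility names and the function `triage` are hypothetical, and only the national mean of 77 and the example scores of 60 and 80 come from the discussion. The actual RiTMS model combines many data sets and 53 KPIs that are not reproduced here.

```python
# Illustrative sketch; facility names and the third score are invented.
# 77 is the national mean quoted in the presentation.
# A higher score means lower risk.
NATIONAL_MEAN = 77

def triage(scores, benchmark=NATIONAL_MEAN):
    """Return (facility, score) pairs below the benchmark, lowest score first."""
    below = [(name, score) for name, score in scores.items() if score < benchmark]
    return sorted(below, key=lambda item: item[1])

hypothetical_scores = {"Facility A": 60, "Facility B": 80, "Facility C": 74}
for name, score in triage(hypothetical_scores):
    print(f"{name}: score {score}, {NATIONAL_MEAN - score} points below the mean")
```

As the presentation noted, a facility above the mean may still request a visit, so a fixed cutoff like this one would be a starting point for a conversation rather than a decision rule.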

DISCUSSION OF MEASURING PERFORMANCE

Committee Co-Chair Smith introduced committee member Brian Yolitz to lead the discussion. Yolitz told a story about an Air Force maintenance-shop chief who, when asked how he knew things were going really well, said, “Well, I’ve been around this for 27 years.” Believing there was a need to get beyond the “gut” into the data, Yolitz appealed to the group to discuss performance measures. An immediate response by Anselmi, referring to the earlier comment by Alvarez, was, “Are we doing the right thing is pretty important.” A bit later he followed with, “So whatever performance measures we come up with

FIGURE 1 Risk Identification, Triage, Mitigation, and Sustainment (RiTMS) site-visit documents, scoring tools, and sensitivities. NOTE: AWE, Annual Workplace Evaluation; LTCR, Lost Time Claims Rate; OSHA, Occupational Safety and Health Administration; SMS, Safety Management System. SOURCE: Doug Dulaney, Veterans Health Administration, presentation to the workshop, May 8-9, 2019.

we’re going to have to make sure that what’s being done is right and being done the way it should be done.” Related to that thought, committee member Robert Goodman remarked, “In primary care it is generally accepted that for every provider you have to have about 3.3 to 3.5 support staff . . . the ratio is actually more important; that gets you the right number of people, but that’s different from ensuring that they’re doing the right things.” Smith added the following thoughts about data input: “You’ve got to have people and time dedicated to input, [which] has to be relevant and important to the individual inputting the data. . . . If that person sees value to his or her organization by inputting the data, then it’s much more likely to be valid input.” Goodman introduced a somewhat different track by referring to population shifts that drive lowered workloads and asking about the VA headquarters’ investment strategy. He said, “It would be cheaper to just buy the care, much cheaper to just go out and buy it from a local hospital. And I think that, if there was ever a time to start with key measures, what would the strategic things be from the VA headquarters as to where they want to do investments?” A Western Michigan University website was displayed that demonstrated costs as part of performance measures. The graphic described a range of different metrics, such as total annual maintenance cost per student, a “bang for the buck” measure at the university.1 Additional discussion turned to satisfaction surveys of patients and employees, but participants questioned how these might mesh with other performance measures. For instance, Alvarez said, “If I skew the numbers, I can have incredible customer service numbers by work-order turnaround, where you, my customer, thinks I’m doing a horrible job.” NASA representative Kim Toufectis observed that the statistician George Box said that all models are wrong, but some are useful.
Toufectis then said, “What you’re going to be measuring is a second indicator at best to what you really want. . . . You’re going to deal with the army of the available data and try to extract knowledge from it that helps you decide if this is well correlated with organizational success or operational success, and that is always going to be an imperfect process.” Another participant disagreed in some respects, indicating a need to involve input from subject-matter experts all through the process of determining what the things are that would make sense to measure, which ones can be measured accurately and reliably, which ones do we already measure, and including Dulaney’s presentation; the goal has to be expert input on top of whatever mathematical models that are put together. Final discussion centered on notions like (1) measuring things that engineers do not necessarily think are important but yet are very important to the organization; (2) it matters what things cost, so showing cost of the engineering-facilities function per patient visit, over 141 sites, would be interesting; (3) an inpatient-satisfaction model drove huge change in terms of how nurses interacted with patients, resulting in a huge difference in quality of care; and (4) if either side of who will be stakeholder of the data metric is ignored, then something is missing.

IMPACTFUL DASHBOARDS FOR FACILITIES PERFORMANCE METRICS

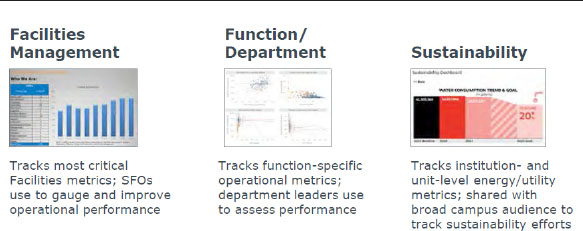

Lisa Berglund, a senior analyst at EAB Global, a company that provides advice to university leaders, gave a presentation focused on dashboards that could provide useful yet succinct performance information to VHA leaders at various levels. Starting with a story about the Oakland Athletics’ success after recruiting inexpensive and undervalued players based on key metrics, she described struggles that facilities have with translating reams of data into actionable insights. She discussed the use of dashboards by private companies across all sectors of the industry. Illustrating with Ohio State University, she showed that implementing dashboards saved both time and money in response to work-order fulfillment. Figure 2 shows three types of dashboards—facilities management (~15-20 metrics), department functioning (~8-12 metrics), and sustainability (~6-30 metrics)—the last being most prevalent among universities. In building effective dashboards, she discussed the challenges of which metrics to elevate (the KPIs),

___________________

1 See Western Michigan University, “Measuring Our Performance,” https://wmich.edu/facilities/maintenance/performance and “Maintenance Total Cost Per Student,” https://wmich.edu/sites/default/files/attachments/u670/2019/Maint.%20Total%20Cost%20Per%20Student.pdf.

displaying them in a compelling way, and changing targets and triggers. For the KPIs, she showed a screening process, mapping to strategic objectives, use of leading rather than lagging indicators, elevating “hot-seat” metrics, ensuring balance, and a split of 20 percent volume indicators with 80 percent relative indicators. She also highlighted the importance of gauging performance through customer-service metrics, employing user-friendly layouts and formats, and setting principled targets and action triggers. She closed by displaying a handout for creating a dashboard starter list. Her presentation was very well received. At the end, there was a brief discussion about an ISO (International Organization for Standardization) standard for facility management.

TENANT SATISFACTION SURVEY

Beginning the afternoon of Day 1, Rob Lacey, a program manager at the General Services Administration (GSA), described GSA’s Tenant Satisfaction Survey (TSS). Lacey gave a snapshot of GSA’s portfolio and described major features and uses of the TSS. GSA’s activities cover over 9,000 tenants occupying 1,646 owned assets (184 million sq. ft.) and 7,100 leased assets (185 million sq. ft.). However, the TSS is sent to the entire federal inventory, both GSA and non-GSA managed locations. Discussion indicated that the response rate last year was about 16 percent with a bit over 200,000 responders from the entire federal government. Discussion also indicated that some VA employees do receive the survey, but Anselmi said, “I have never seen one 40 years working in a hospital,” and Alvarez replied, “No,” when Goodman asked if anyone from engineering in the VA sees any results. Most of GSA’s space is for offices (78 percent), with another 16 percent for courthouses and warehouses, and the remainder for land ports of entry, laboratories, and other uses. Lacey described eight categories of questions, each with 2 to 4 individual questions, for a total of 22 TSS questions. The purposes of the questions are to determine satisfaction of federal tenants with building services, provide actionable feedback to both GSA and other land-holding agencies, and identify and analyze trends year over year. No new questions are planned at this time; the primary focus has been on drop-down options that allow survey takers to expand on their responses. He explained that GSA uses survey data to determine annual action-planning strategies, and action plans are monitored and tracked in a sales-force action-planning tool. The TSS provides tactical information to GSA’s office of facilities management, and action plans have been developed based on survey feedback.
GSA also uses TSS scores in other areas (annual reporting to the Office of Management and Budget, internal KPIs, and correlation analysis with other GSA data sets). Lacey noted that the TSS dashboard includes links to applicable NCMMS (National Computerized Maintenance Management System) data, and

FIGURE 2 Illustrative dashboards. NOTE: SFO, senior facilities officer. SOURCE: Lisa Berglund, EAB Global, presentation to the workshop, May 8-9, 2019.

analysis is performed against budgets to determine cause and effect, such as whether spending more on a building equals higher scores. He also discussed a short bounce-back survey that is sent to a tenant when GSA completes a work request; this process has several benefits, such as providing prompt feedback on tenant experience, raising awareness of unsatisfactory work, and pointing out areas for improvement, both on a daily basis and monthly in a collective fashion to higher management. There was discussion on bounce-back specifics, such as dealing with bathroom conditions and, more broadly, limits of what is considered a work order. Toufectis complimented GSA for sharing its data, including raw responses, with any agency that wants to look, and he said he could connect most responses to individual buildings with reasonable confidence. Smith said, “It’s a great briefing, but I don’t have a good sense of what the application is to VHA yet.” He asked Broskey if he could task someone to connect with GSA to find out what agency information they had and see if there is any value to it. Broskey agreed to make every effort to ascertain where that information went and what was done with it.
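The kind of spend-versus-score comparison Lacey mentioned can be sketched as a simple correlation. The example below is a minimal illustration, not GSA's method: the spending figures, the survey scores, their scale, and the function name `pearson` are all hypothetical.

```python
from math import sqrt

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)

# Hypothetical buildings: annual spend ($/sq. ft.) and a survey score
# on an assumed 1-to-5 scale.
spend = [4.0, 5.5, 6.0, 7.5, 9.0]
score = [3.1, 3.4, 3.3, 3.9, 4.2]
print(f"correlation = {pearson(spend, score):.2f}")
```

A coefficient near 1 would suggest that higher spending and higher scores move together. On its own it shows association only, so judging cause and effect would need further analysis.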

MEASURING PERFORMANCE AT NASA THROUGH USER SURVEYS

Kim Toufectis, a master-planning program manager, said that he had worked with NASA operations researcher Bill Brodt on this workshop presentation, but Brodt could not attend. Toufectis focused on measuring asset performance through occupant surveys. His main points were built on three learning objectives: a true “total cost of ownership” approach to facility stewardship involves mission and people factors; actionable science-based research is needed (and available); and facility professionals need compelling stories supported by credible internal data for management to pay attention. He emphasized that stewardship goals involve people, and he referred to facilities industry research on health and building codes (getting better but lagging). He also noted some federal research on indoor air quality, a tool on sustainable facilities, a health-in-buildings roundtable, and a “cool/sustainable” NASA facility. However, he said that only 45 NASA buildings meet federal sustainable building criteria, and most NASA assets are obsolete. Re-framing his data in people terms, he showed a graph in which most workers were in marginal, fair, poor, or terrible conditions. Toufectis indicated a need to dig deeper than possible by using ordinary building data, such as capacity, age, etc., and ask the question, “Do the people doing NASA’s work see their individual workplaces supporting/constraining them?” Looking beyond NASA, he pointed to the GSA TSS as “timely, tied to research, lets us compare with peers, and free!” He then posed a value proposition as “facilities readiness enables workforce satisfaction, which in turn enables organizational productivity” and observed that degraded buildings certainly cost more to run, but he asked if occupants judge them the same way. 
Using data, he offered insights related to building and workspace conditions—for example, striving for sustainable buildings has a positive impact on occupants, and relying on many older NASA buildings may already impede occupant productivity. He said that NASA’s Marshall Space Flight Center evaluates, for its 20-year facilities master plan, each asset in five dimensions, one of which is “how occupants perceive the facility” (the other four dimensions are mission related, such as supporting agency goals, center roles, core products; importance to those products; readiness; and facility impact on agency goals). This final point returned Toufectis to his opening learning objectives, which included people factors as part of a true total-cost-of-ownership measure. A couple of questions at the end dealt with building age and conditions relative to occupants’ satisfaction. Yolitz, for instance, asked about correlation of satisfaction with the way buildings were built 40 or 50 years ago versus those built 60 or 70 years ago, which seem to have better bones, are often more iconic, and have more of an attachment compared to later buildings where the metric was how fast one can build it and how cheaply. Toufectis responded that, while he could not rule it out, each NASA building boom was related to a war; they were all built quickly. In sum, he doubted a correlation like that could be found in NASA’s population.

FINANCIAL MANAGEMENT FOR FACILITY MANAGERS

Turning to finance, the final Day 1 presentation was given by Richie Stever, director of O&M at the University of Maryland Medical Center. National Academies staff member Cameron Oskvig announced that Stever was voted Facility Executive of the Year for 2019. Stever began by describing the not-for-profit Center’s three-fold mission: deliver superior health care, train next-generation health professionals, and discover ways to improve health outcomes worldwide. There are two campuses a mile apart: a downtown 800-bed academic medical center (2.5 million sq. ft. total [2.2 million sq. ft. hospital only], $18 million/year total budget [$14 million for energy], 41 full-time equivalents [FTEs], no boilers), and a 200-bed community teaching hospital (0.8 million sq. ft. total [0.35 million sq. ft. hospital only], $8 million/year total budget [$3 million/year for energy], 21 FTEs, boilers). These dollar and FTE data are important because, in response to a question by VHA representative Mike Reed, Stever said he is graded on both. After showing the facilities organization chart, he discussed two types of FTE health-care-facilities benchmarks: (1) from the IFMA (International Facility Management Association) for years 2010 and 2013 and (2) from a database called Action OI, which his organization now uses. Based on the latter benchmark, he was being asked by the finance people to reduce FTEs to reach a chosen benchmark at the 75th percentile level: a 0.5 FTE reduction downtown and a 2.82 FTE reduction at the facility with fewest FTEs. On the larger reduction, Stever said he was struggling, and his reaction to the finance office was, “That’s people.” Amidst a flurry of participant questions and comments about benchmarks and his situation, Stever said he was still learning about this and trying to figure out how to adjust to hit the targets. He showed graphs indicating his FTE loads during normal and off-normal hours, as expected for 24/7 responsibilities.
Looking to the future, he showed labor-force projections to year 2024 that had declines in the 16 to 24 years age category and increases in the 55+ years age category, meaning fewer entry-level (lower-wage) candidates and more older but experienced (higher-wage) workers. Regarding his own department, Stever said 28 percent were nearing retirement and average tenure was 24 years, leading to an unprecedented increase in turnover, departure of most leaders, loss of institutional knowledge, no succession plan or career ladder, and high wage demands by highly skilled candidates. His final chart showed unemployment rates and earnings by educational attainment; only the bottom categories (lowest education and lowest wage) indicated potential availability for employment. A group of nuggets from the discussions follows, based on Stever’s responses to numerous inquiries: the hospital is experiencing an $80 million/year deficit on capital repairs, making it harder to operate a building; he expects two outcomes from his team, (1) performance on any inspection and (2) how well the equipment is running (work-order productivity and equipment uptime); his workers can close out work orders without a data-entry person; if he cuts FTEs, he still must do the work and spend money, likely 2 to 3 times the amount for an in-house person, to purchase the service (note: he pays just salaries, not overhead paid by the university); he contracts out big services, such as water treatment, elevators, etc.; finding engineers has proven difficult, so he is looking to outsource more boiler technology (no engineer problem at the downtown campus because energy is bought, but it costs more); and an issue is how to bring in people to start transferring institutional knowledge because it takes about 5 years to learn all that is involved, so he focuses on apprentices in the 16 to 24 years range (not costly, not stuck in old ways) who have no degrees but are becoming licensed journeymen.
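To put the reported campus figures on a common footing, the short Python sketch below derives a few normalized indicators from the numbers Stever presented. The choice of indicators, the function name `ratios`, and the use of total (rather than hospital-only) square footage and total budget are assumptions made for illustration; they are not benchmarks from IFMA or Action OI.

```python
# Figures as reported in the presentation; "fte_cut" is the reduction
# requested to reach the 75th percentile benchmark.
campuses = {
    "downtown": {"sq_ft": 2_500_000, "budget": 18_000_000, "ftes": 41, "fte_cut": 0.5},
    "community": {"sq_ft": 800_000, "budget": 8_000_000, "ftes": 21, "fte_cut": 2.82},
}

def ratios(c):
    """Derive simple normalized indicators from one campus record."""
    return {
        "budget_per_sq_ft": c["budget"] / c["sq_ft"],
        "sq_ft_per_fte": c["sq_ft"] / c["ftes"],
        "fte_cut_pct": 100 * c["fte_cut"] / c["ftes"],
    }

for name, c in campuses.items():
    r = ratios(c)
    print(f"{name}: ${r['budget_per_sq_ft']:.2f}/sq. ft., "
          f"{r['sq_ft_per_fte']:,.0f} sq. ft./FTE, "
          f"requested cut {r['fte_cut_pct']:.1f}% of FTEs")
```

On these assumptions, the 2.82 FTE reduction amounts to roughly 13 percent of the smaller campus's staff, compared with about 1 percent downtown, which helps explain why Stever singled out the larger reduction.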

MEASURING PERFORMANCE OF FACILITIES AND ANALYSIS OF THEIR MAINTENANCE

On Day 2, returning to performance management, Richard Iofolla, a retired VHA chief engineer, described his two conclusions up front. First, there are too many variables to be able to set up a clean equation into which one can just plug numbers to determine how many FTEs are needed; a lot of assumptions are necessary. So, if someone came to him with a million-square-foot facility and asked how many FTEs are needed, he could not answer. Second, measures of performance need to be developed that are not affected by available resources. Essentially, he meant that one cannot use a measure of performance that depends on how many resources one has, because that is what one is trying to figure out. He next offered an equation for determining cost: (units of work) × (cost per unit of work) / (efficiency) = appropriate cost; each term of this equation is a variable. The equation can be solved by assuming efficiency to be a constant (such as 100 percent for a properly functioning department) and by making cost per unit of work a constant through management decisions on which functions are done in house and which are contracted. He noted that resources needed cannot be determined until methods of accomplishing the

work are first determined (“I can’t tell the hospital director how many FTE he needs if he doesn’t already know how he’s going to do the work.”). Iofolla observed that the first two terms in the equation can be defined at either the macro level or micro level, and efficiency can be defined as how well measures of performance are met. He then pointed out that cost per unit of work could be determined by comparing to contracted costs or average costs for similar work performed at similar facilities that meet Joint Commission and other requirements, thus creating a comparison that can only be determined to plus or minus a percentage, a range rather than an exact number. As his presentation continued, there was much discussion intertwined with Iofolla’s moving ahead to illustrate certain points in line with comments. For example, he called for corrective-maintenance wrench time as part of the work-order system, but not corrective-maintenance completion percentages or turn-around time; he also discussed customer feedback and, in general, advocated management by walking around (not typically reported but a very good way of measuring employee performance and customer satisfaction). In addition, he stressed the importance of a good administrative assistant (frees up time of supervisors and technical staff) and addressed other issues impacting costs, staffing with generalists versus specialists, the area maintenance plan, and facility layout, age, and functions. Iofolla closed by repeating his opening conclusions. Some of the back-and-forth dialog during the presentation is illustrated by the following exchanges. Regarding defining terms at either the macro or micro level, Gransberg explained that, with highway departments nationally, they found that the more variables one adds to the model, the more noise it adds and the less accurate it becomes. He said, “We call it bottom up and top down. . . 
your top-down model really has to be limited to where’s the money, where’s the real value at the end.” Relative to Smith’s concerns about being only “one deep” in certain functions and accounting for that with modeling, Iofolla responded that one has to have redundancy or cross-training, and if there is no one to train, it is critical to set up short-turn-around service contracts, which means bouncing back and forth between FTE and contract costs. Gransberg declared, “The thing I’m really enjoying about this presentation is that he’s talking about the assumptions. We never challenge the assumptions. . . . At the end of the day, and he’s spot on, we have to be talking about ranges for answers, not discrete things. . . the problem with the bottom-up estimating approach, bottom-up models, is as engineers we believe we can solve any problem you give us.” In a later response Iofolla said, “I don’t believe there’s a way to specifically say it’s going to take a plumber 2 hours to install a sink; it’s always going to come back to doing a comparison to what other people are doing. . . you need to compare to facilities that are doing a good job.” There was much discussion about data entry (its accuracy, skills to do it, keeping up with preventive maintenance), old versus upgraded work-order accounting systems and standardization (elaborated by Ed Litvin, VHA representative), and Smith’s stated need to “change the culture” to avoid corrupted data. Iofolla also discussed experiences with private hospitals as compared to the VA; for example, he said, “In the VA the Chief Engineer touches almost everything that goes on at that facility. He’s a member of just about every committee.” Of private hospitals, he said more simply, “My job was to be sure that the toilets didn’t overflow.”
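Iofolla's cost relation and the emphasis on ranges rather than exact answers can be illustrated with a minimal Python sketch. The function names, the sample inputs, and the way the plus-or-minus tolerance is applied to the result are assumptions for illustration; the presentation gave only the general form of the equation.

```python
def appropriate_cost(units_of_work, cost_per_unit, efficiency=1.0):
    """Iofolla's relation: (units of work) x (cost per unit) / (efficiency)."""
    if efficiency <= 0:
        raise ValueError("efficiency must be positive")
    return units_of_work * cost_per_unit / efficiency

def cost_range(units_of_work, cost_per_unit, tolerance, efficiency=1.0):
    """Low and high estimates when cost per unit is known only to +/- tolerance."""
    base = appropriate_cost(units_of_work, cost_per_unit, efficiency)
    return base * (1 - tolerance), base * (1 + tolerance)

# Hypothetical inputs: 10,000 work units at $50 each, known to +/- 15 percent,
# with efficiency held at 100 percent as in the presentation's example.
low, high = cost_range(10_000, 50.0, 0.15)
print(f"estimated cost between ${low:,.0f} and ${high:,.0f}")
```

Holding efficiency constant and fixing cost per unit through in-house versus contract decisions is what makes the relation solvable; the tolerance reflects the point that a comparison with similar facilities yields a range, not a single number.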

STAFFING FOR ALTERNATIVE CONTRACTING METHODS

Douglas Gransberg, Iowa State University and a business leader in construction engineering, first referenced a 2007 study on design-build (DB) contracting (Keston Institute for Public Policy, University of Southern California), which found—most importantly—that DB requires a more competent and experienced workforce. He then turned to the NCHRP (National Cooperative Highway Research Program) 518 report, which addressed and bench-marked staffing for alternative contracting methods (ACMs) to note that state departments of transportation (DOTs) have a professional and technical workforce crisis due to increased availability of funding for national infrastructure construction projects and public agencies having to compete with private industry for qualified personnel. Gransberg discussed a range of circumstances associated with ACM implementation. At this point, it is interesting to highlight Gransberg’s in-depth elaborations throughout his presentation, either relative to particular charts or in response to the few participant inputs. He colorfully described what a public agency has to deal with now, such as the current generation not seeing loyalty to a VA or Corps of Engineers as a big deal; engineering schools producing too few entry-level engineers, less qualified than in the past; losing mid-level engineers (ages

30 to 40 years) to a $30,000- to $40,000-per-year private-sector pay raise; project sizes getting ridiculous ($200 million/year used to be a big deal, and now the Georgia DOT is doing $11 billion); schedule changes due to inability to spend so much money as fast as planned; and on bidding he said, “I believe that the low bid is nothing more than getting the worst contractor that you could possibly find, and getting a facility that’s as cheap as possible . . . why are we building the facility? It’s not to get it cheap, it’s so that it will work for 50 years.” Against the backdrop of his experiences in accelerating project delivery, and not as an evangelist for any particular ACM type, Gransberg discussed key findings of NCHRP 518; for example, there is no preferred or optimum organization structure used by DOTs for implementing ACM; the fast-paced and collaborative nature of DB projects requires higher-level management and decision-making skills that place DOT engineers earlier in leadership positions; multi-disciplinary teams (finance, legal, environmental, public relations, and dedicated program managers) are needed; across ACM types (DB, CM/GC [construction manager/general contractor], and P3 [public-private partnerships]) critical staff skills are leadership and coordination ability, risk identification and analysis, strong partnership and team-building, knowing project delivery and procurement procedures as well as construction-contract administration, and ability to analyze constructability reviews and project phasing; and most out-sourced work is due to lack of qualification and ability of in-house staff.
After going through extensive lists of ACM staffing lessons learned for different ACM types, including the overall importance of identifying qualified, experienced, flexible, and responsive personnel dedicated to the fast ACM pace, Gransberg summarized by saying that ACM staffing issues are complex; using ACM means accelerated schedules, reduced agency planning and design input, need for experienced agency project personnel, increased ability to tolerate risk, and a paradigm shift in procurement culture (construction-centric development and delivery plus changing from minimize cost and time to maximize cost and schedule certainty). Gransberg closed with quotes, including these 2010 words from Federal Highway Administrator Victor Mendez, “It’s imperative we pursue better, faster, and smarter ways of doing business.”

A DISCUSSION OF RESOURCE MANAGEMENT AND FINANCE

Committee member Robert Goodman opened with several observations and said that he was not speaking for the committee. Goodman believes that, relative to peer portions of the VHA, such as physicians, nurses, and bio-med specialists, the data available to and used by facilities personnel as a central system are relatively poor. He noted that values and uses of such data for physicians, nurses, and bio-med specialists have grown over time, leaving facilities personnel behind their peers and leading to the critical question of whether there will be a push to improve the quality of facilities-related data. He offered that, with no useful VHA-headquarters facility measures and no “burning platform” to get a response, the question becomes how one can get a scoring methodology that will elicit behavior changes or maybe recognition of a risk level; the FCA will not do it, and the current VERA (Veterans Equitable Resource Allocation) model, which is focused on patient care, does not account for facility uniqueness. Goodman then raised the issue of what the follow-on is to ensure that the facility is also going to be cared for. He also raised the lack of standardization, later elaborating with, “There’s very little standardization out there.” Turning to finance, he noted different kinds of health care (government-run, not-for-profit, and for-profit) and how strategic goals, such as those involving efficiency, effectiveness, and safe and efficacious care, can be established and linked in with the financial and business-development elements. Directing a question at the VA in general, he asked, “Because you’re a huge system, and it’s nationwide, do you have the ability to set what the strategic goals are in terms of what your facility construction requirements are going to be for renovation, for whatever?
What’s the strategy, and how do you link in with the finance folks, and how do you link in with the people that are doing business development, because the VA population is changing dramatically?” He referred to projections that VA’s current 20 million population will drop to about 12 million in 30 years, a net loss of about 250,000 eligible beneficiaries a year, and they are leaving the Northeast, California, and the Rust Belt, all of which raise issues about investment strategy for facilities. He referred to VHA’s unique patients, such as those suffering spinal cord injuries, amputations, and invisible wounds; VHA’s unique facility needs, such as much ambulatory care, boilers, and fire stations; and VHA’s overall infrastructure scope (5,900+ buildings [2,100+ “historic”], ~153 million sq. ft. and 16,400 acres, plus 1,665 leases of 18.6 million sq. ft.). Against the dual backdrop of demographic shifts and uniqueness, and the fact that the VA has responsibilities, many of which no private institution would want to deal with (“There is no money in that, but you [VA] do it because there’s a congressional mandate and the population that’s being served requires that.”), Goodman focused on a range of strategic financing issues and concerns associated with VHA, VISN (Veterans Integrated Service Networks), and VAMCs as they relate to the health-care elements of facilities affected. After observing that whatever model emerges would probably be used differently by different portions of the organization, and from a financing perspective, questioning what management metrics would be used, he opened to questions. For example, Alvarez made the point that the engineer cares about where things are headed in the future, but he has to maintain it until the door closes. The dialog then drifted to maintaining parts of the space and divestment versus demolition. Anselmi challenged poor-data claims, which he believed were overemphasized by the work-order focus. He said, “VA does have huge databases that have a huge amount of really good data.” Goodman responded that the question that keeps returning is how many people are needed, and that is not the same question; the database reflects what happened but not necessarily what needs to be done. Moving through further exchanges about data and dashboards, at the close Smith returned to effectiveness and efficiency, observing that the more creeping privatization happens, the more the conversation swings to efficiency and to subjects that will be driven by policy considerations, not facilities engineering.

WRAP-UP DISCUSSION

Committee Co-Chair Smith recalled the value of the past 8 months and of this meeting, which coalesced a lot of thinking. He commended Broskey for setting the tone about critical systems and spawning discussion of standardization. He then quickly reviewed other key elements of the meeting: the risk assessment process, education performance measures, satisfaction surveys and need for VHA follow-up, the financial presentation that dealt mainly with hospital benchmarking, discussions with Iofolla (bottom-up, top-down, micro versus macro, “don’t count beans but whether people can do the jobs and how you can quantify that,” “don’t let perfect be the enemy of good enough”), and explanations of different contracting options (atrophy of mid-level managers, moves to private sector, non-competitive government salaries, effects on VHA engineering as older generations leave). He then turned to others.

Yolitz said, “We’ve got to make sure that we’re measuring not just for measurement’s sake but that there is some value.” Mentioning other key factors (leading-lagging balance, assured data integrity, etc.), he intended to put all of this in a note for the committee. Yolitz also supported continued challenging of understandings and assumptions and checking biases.

Delany moved next to a question about identifying proper staffing levels, a step that led to a long conversation with Broskey and others about performance measures, historical demand, satisfaction surveys, knowing success, micro versus macro modeling, and being wary of self-reported data and very simple ratios.

Reed was intrigued by the risk presentation, noted that it will take a few years to mature, but thought performance metrics can be presented credibly to limit or know the risk level, perhaps a “burning platform” that medical center directors would want to pay attention to. He believed much groundwork may be needed to present the “burning platform” and what should be done first. Smith agreed about tempering expectations; the result would take a half-dozen years, “a long row to plow.”

Alvarez echoed Reed’s comments and turned toward human-factors work because what is measured, and how, has to resonate at all levels. He then noted the success of comparisons and lack of success by calculating from a micro basis.

Anselmi accepted the uniqueness of this risk-process presentation, but said it was not the first time risk was talked about, because Toufectis had been emphasizing risk from the beginning. Anselmi believed telling a center director that X people are needed because of a standard would result in nothing happening, but if the director could be shown a certain amount of risk is being accepted, and asked if that is acceptable, the case is a lot more persuasive. He then described the Portland discussion with O&M folks about exercising valves (“Oh no, we don’t have time for that.”), and then when he told the director (an ex-nuclear submariner who believed exercising valves was very important) that it was not being done, the response was, “We’re doing everything. My chief engineer takes care of everything, everything is perfect.” Anselmi summed up by urging that, in addition to coming up with some broad staffing standards, combining with a risk analysis would be very useful to explore. Smith endorsed the point of asking executives what risk they are willing to accept.

Litvin appreciated discussions on performance metrics and the idea of a “burning platform.” He then related those to experiences with his current leader involving daily healthcare-operations briefings and key questions, “What facilities do I have out there across VHA that are out of operation, or not at normal operation, and why, and when are they scheduled to be back online?” The typical questions Litvin gets are, “What is the cause, is it because we’re deferring maintenance, are our facilities old and we don’t have enough funding to maintain it like we should?” Litvin believed bringing all these things together in the committee’s report could help identify key metrics. Smith agreed and, referring back to the risk presentation, observed that if done right it could provide a lead assessment on problems.

Goodman pointed to the VA’s scale and scope: remarkable visibility at the patient level on millions of eligible patients, and 5,900 buildings. Inside a human, ~21 systems are managed through health-care metrics; buildings have the same thing. Next, referencing VA healthcare-data systems and risk-mitigation framework, he suggested thinking about what happens when serious health-care events occur, such as inspections, questioning why things went wrong, distributing the findings to aid prevention in the future, etc., and then using that same risk-mitigation framework to look at buildings, for example, operating rooms (OR) going down. He then mentioned a case of a real Army OR, which was going down because the facilities’ O&M work wasn’t being done the right way. He urged using the above methodology to think differently about how to get in front of O&M problems.

A participant urged considering human factors so the committee’s results, such as the importance of correct work-order processing, are meaningful not just to directors but down to actual workers and unions. Committee member Eugene Hubbard (offsite), mentioning risk and hospital-finance briefings plus this wrap-up, agreed with a lot of these comments. Gransberg and Iofolla had no comments. Smith thanked everyone and adjourned the meeting.

DISCLAIMER: This Proceedings of a Workshop—In Brief has been prepared by Norman Haller as a factual summary of what occurred at the meeting. He was assisted by Daniel Talmage and Kelly Arrington. The committee’s role was limited to planning the event. The statements made are those of the individual workshop participants and do not necessarily represent the views of all participants, the planning committee, or the National Academies. This Proceedings of a Workshop—In Brief was reviewed in draft form by Doug Gransberg, Gransberg & Associates, Inc.; David Nash, Dave Nash & Associates International, LLC; and Kim Toufectis, National Aeronautics and Space Administration, to ensure that it meets institutional standards for quality and objectivity. The review comments and draft manuscript remain confidential to protect the integrity of the process. All images are courtesy of workshop participants.

PLANNING COMMITTEE: Committee Co-Chair, James B. Smith, U.S. Ambassador (retired); Robert Anselmi, VA Hospital Engineer (retired); Robert Goodman, Principal, The Innova Group; and Brian Yolitz, Associate Vice Chancellor for Facilities, Minnesota State College and University System.

STAFF: Cameron Oskvig, Director, Board on Infrastructure and the Constructed Environment; Daniel Talmage, Program Officer, Board on Human-Systems Integration; Kelly Arrington, Senior Project Assistant, Board on Human-Systems Integration.

SPONSORS: This workshop was supported by the Veterans Health Administration.

Suggested citation: National Academies of Sciences, Engineering, and Medicine. 2019. Performance Management and Financing of Facility Engineering Programs at the Veterans Health Administration: Proceedings of a Workshop—in Brief. Washington, DC: The National Academies Press. doi: https://doi.org/10.17226/25451.

Division on Engineering and Physical Sciences; Division of Behavioral and Social Sciences and Education

Copyright 2019 by the National Academy of Sciences. All rights reserved.