3

Quantum Computing Systems

Rudy Wojtecki, IBM, introduced three speakers who were invited to address quantum computing systems: Pat Gumann, IBM Research; Ravi Pillarisetty, Intel; and Irfan Siddiqi, University of California, Berkeley. Stephen Rossnagel, University of Virginia, moderated a short Q&A that followed.

A SYSTEM OVERVIEW OF QUANTUM COMPUTING

Pat Gumann, IBM Research

Gumann offered insights about quantum technology, the current state of quantum computing (in particular, his work on IBM’s superconducting quantum computing platform), and the continued need for fundamental research into various fields of science and engineering—microwave electronics, low-temperature physics, quantum-limited amplifiers, and quantum mechanics. He outlined two main tasks for the quantum field: understanding fundamental research and scaling the technology into a functional, useful system.

Evolution and the Current State of Quantum Computing

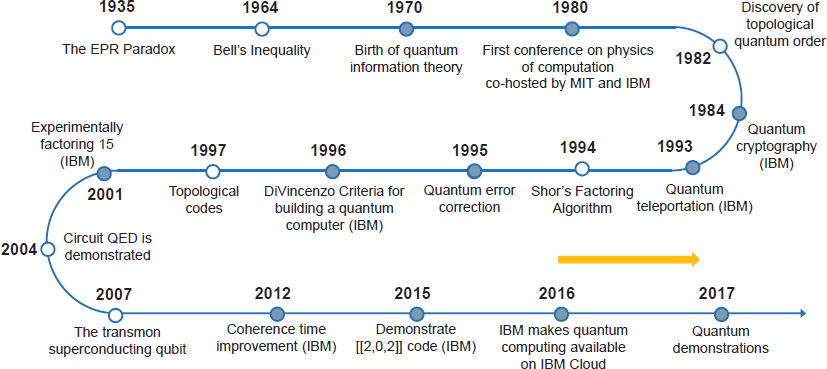

Gumann traced the development of quantum computing back to the 1935 discovery of the Einstein-Podolsky-Rosen (EPR) paradox1 (see Figure 3.1), with important advances also made in the 1970s, 1980s, and 1990s. In 2007, the superconducting transmon qubit was created at Yale University, and in 2016, IBM made the unconventional decision to put its 5-qubit quantum computer in the cloud, allowing academics and other researchers to experiment with the technology and enabling the first quantum computing demonstrations in 2017.

___________________

1 The EPR paradox (or the Einstein-Podolsky-Rosen paradox) is a thought experiment intended to demonstrate an inherent paradox in the early formulations of quantum theory. It is among the best-known examples of quantum entanglement.

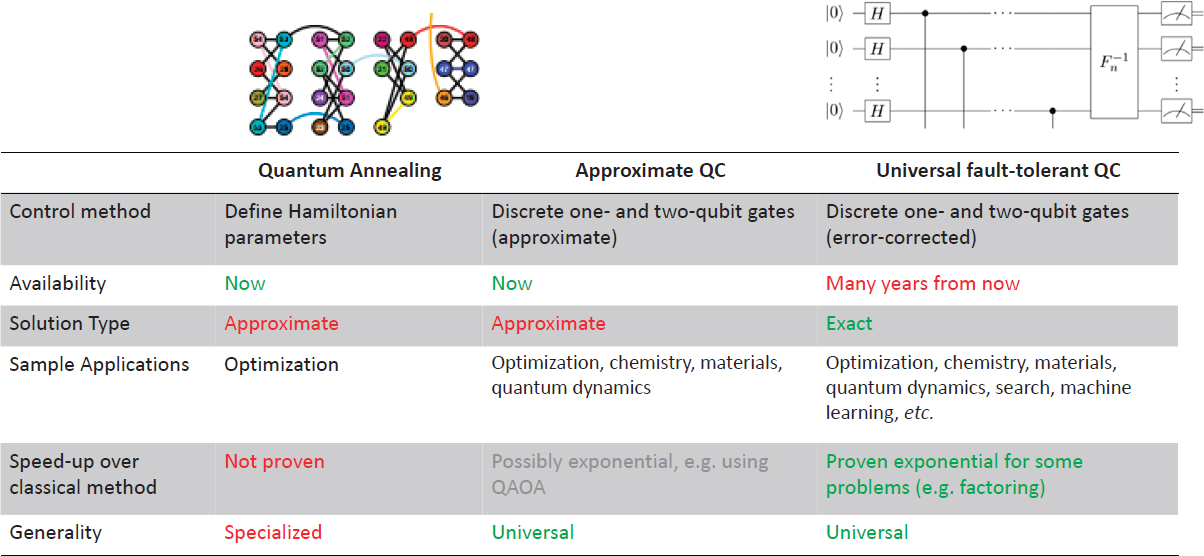

There are three quantum computing paradigms: quantum annealing, approximate quantum computing, and universal fault-tolerant quantum computing (see Figure 3.2). They differ in their control method, solution type, speed, and generality, and there is general agreement that fault-tolerant quantum computing, which is currently only at the conception stage, is the end goal. A universal fault-tolerant quantum computer could potentially perform tasks such as molecular structure and materials science simulations, integer factoring, search using Grover’s algorithm, and solving linear systems of equations using the Harrow-Hassidim-Lloyd (HHL) algorithm more efficiently than classical computing, Gumann said.

Quantum computing is in its noisy intermediate-scale quantum (NISQ) era. Today’s quantum computers can run only short-depth circuits, limited by coherence, and heuristic applications with possible (but not provable) speedups. Considerable work is still required to improve their performance toward the universal quantum computer; current key challenges for IBM’s systems include improving coherence (materials science and thermalization), reducing frequency crowding, and minimizing errors, Gumann said.

IBM’s computers on the cloud are not yet fault tolerant. Nevertheless, researchers can take advantage of them for testing certain hypotheses. So far, IBM has released three generations of quantum computers: first, the Quantum Experience 5-qubit and 14-qubit public devices; second, the commercial 20-qubit machines (Quantum Volume 8); and most recently, the “System One,” another 20-qubit commercial quantum computer (Quantum Volume 16). All of these are based on superconducting circuits hosted in a dilution refrigerator to maintain millikelvin (mK) temperatures. In addition to the refrigerator, the hardware includes microwave I/O lines, cryogenic electronics, field-programmable gate array (FPGA)-based room-temperature electronics, and a quantum processor with qubit chips mounted on the ~20 mK temperature stage. The architecture of the processing unit includes quantum-limited amplifiers as well as radiation and magnetic shielding.2 Gumann stressed that the right software is as important as the right hardware and impacts computation time.

IBM’s superconducting quantum computers combine single-junction fixed-frequency transmons (which have reduced noise sensitivity) and superconducting readout resonators to measure states of the qubit. Many of the components that IBM uses, such as the room-temperature electronics, are available commercially. However, there are crucial gaps in what is available, especially in cryogenic technology. As much as IBM would like to address those gaps, doing so is not feasible given the range of other issues that need to be addressed. Advancing and scaling quantum technology will therefore require strong collaboration between industry and universities, including partnerships with capable manufacturers and vendors, Gumann said.

The Need for Fundamental Research

Getting quantum to scale is very important, but there is still a need for a fundamental understanding of quantum science, Gumann said, in order to understand and overcome current constraints and enable scaling. For example, it is important to control the qubit state, which requires manufacturing advancements for room-temperature electronics, cryogenic components, and two-dimensional (2D) superconducting qubit circuits. Gumann stressed that quantum computing should be viewed as a new market for these products. In addition, the difficulty of controlling and observing quantum systems without causing interference must be overcome, and qubit coherence times need to improve. Gumann stressed that investments in quantum research should be kept broad, not focused exclusively on one narrow area.

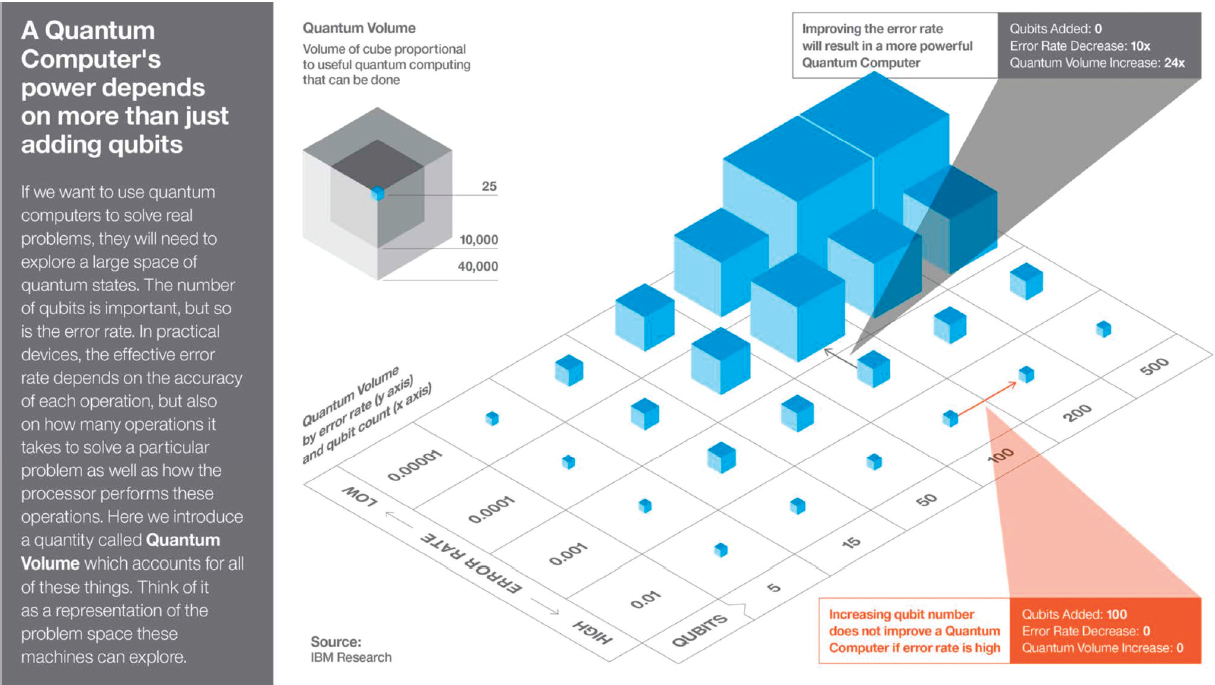

A new set of metrics would also help, he suggested, both within a quantum device and for overall system performance. Toward that end, IBM developed a new metric, “quantum volume” (see Figure 3.3), a procedure for assessing the performance of a whole quantum system that depends on the number of qubits, error rates, connectivity, gate set, and compiler efficiency. Improving all of these together will improve quantum computing performance more effectively and lead to better performing quantum systems, Gumann said.

___________________

2 A.D. Corcoles, J.M. Chow, J.M. Gambetta, C. Rigetti, G.A. Keefe, M.B. Rothwell, M.B. Ketchen, and M. Steffen, 2011, Protecting superconducting qubits from radiation, Applied Physics Letters 99:181906, https://aip.scitation.org/doi/10.1063/1.3658630.
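As an illustration of how a single system-level number can combine qubit count and achievable circuit depth, the sketch below computes quantum volume using the commonly published form log2(QV) = max over n of min(n, d(n)), where d(n) is the largest circuit depth that n qubits can run reliably. This formula was not part of the talk as summarized here; the function name `quantum_volume` and the depth values are hypothetical and are not IBM measurements.

```python
from typing import Mapping


def quantum_volume(achievable_depth: Mapping[int, int]) -> int:
    """Return 2**max_n min(n, d(n)).

    achievable_depth maps a circuit width n (number of qubits used) to the
    largest depth d(n) that the device can run reliably at that width.
    """
    if not achievable_depth:
        raise ValueError("need at least one (width, depth) measurement")
    # The best balance between width and depth sets log2 of the metric.
    log2_qv = max(min(width, depth) for width, depth in achievable_depth.items())
    return 2 ** log2_qv


# Hypothetical values for a 20-qubit device (assumed for illustration only).
depth_by_width = {2: 6, 3: 5, 4: 4, 5: 3, 20: 1}
print(quantum_volume(depth_by_width))
```

With these assumed values, the best balance of width and depth is reached at 4 qubits, so the result is 16 even though the device has 20 qubits. This is one way to see why qubit count alone is a poor indicator of system performance.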

Concluding, Gumann stressed that it is essential to continue to explore and build various architectures for quantum systems. In this NISQ era, benchmarks may differ because there are still multiple problems to solve, but well-defined, universal metrics are needed. Device-level metrics and tests are needed to test noise, and model algorithms and device-independent metrics, like quantum volume, are needed to test overall performance. To accomplish all of these objectives, he urged continued support for the overall quantum technology ecosystem.

SPIN QUBITS DEVICE INTEGRATION

Ravi Pillarisetty, Intel

Pillarisetty discussed the challenges within quantum computing and Intel’s efforts to overcome them by applying its transistor expertise to semiconductor-based spin qubits.

Quantum computing has the potential to provide exponential computational speedup, with broad applications across the economy, including logistics, image processing, pharmacology, and cryptography. Intel is actively working on every part of the quantum computing stack, with a particular focus on building a scalable system rather than meeting arbitrary milestones with brute force. Pillarisetty summarized three challenges inherent in applying Intel’s transistor technology to quantum computing: the process innovations needed at the 300 mm wafer level, the creation of high-volume testing, and interconnects and arrays that can be created at scale.

Spin Qubits and Quantum Dots

Like IBM, Intel is investigating superconducting qubit-based quantum computing. In addition, Intel is attempting to leverage its expertise in transistors and semiconductor manufacturing to create spin qubits, gated semiconducting nanostructures that can be used to independently control and interact with individual electrons. Spin qubits offer significant promise, Pillarisetty said, although a great deal of work is still needed. Spin qubits have unique process challenges, such as with barrier gate interfaces and thermal SiO2. To enable qubit manufacturing, new innovations are needed for etches, cleans, thin films, and polish. To pave the way toward creating qubits at scale, Intel is developing customized test chips: full reticles to process entire 300 mm wafers with more than 10,000 different qubit test structures. This makes it possible to collect a high volume of statistical data across various designs and approaches. This process is an essential ingredient in getting to high-volume research and development (R&D), Pillarisetty said.

Quantum dots are the bridge between traditional electronics and spin qubits, and they face many process challenges similar to those that Intel has tackled during its development of transistors. Moving to a 300 mm qubit flow is not easy. While universities may create working models, large-scale fabrication introduces many challenges. Just as it took 9 years to go from initial 300 mm research to actual manufacturing of trigate transistors, Pillarisetty posited, there will be a long path, requiring new supply chains, to move from basic quantum research to scalable manufacturing.

Intel’s quantum dot gate yield is nearly 100 percent, enabling statistical data collection and scaling up to larger arrays. Intel researchers use transistor metrics to characterize the interface quality of the various gates in the quantum dot device. At T = 1.5 K, Intel substrate plus thermal oxide produces clean data on the academic flow, but shows noise on the 300 mm integrated flow, which Pillarisetty said is not surprising given that the transistor metrics indicate a nonoptimized barrier gate interface.

Intel sees a clear developmental path from transistors to quantum dots to spin qubits. Spin qubits are the most complex, however, with more masks, layers, and process steps and greater testing complexity. Substrate development is also important, and Intel has created partnerships with vendors who can supply high-quality, high-volume precursors for 300 mm silicon-28 epitaxial growth.

Testing Innovations Needed

Quantum advancements require testing innovations, Pillarisetty stressed. There is currently no high-volume testing ecosystem, creating a bottleneck where it takes several days to get feedback on a single device—far too slow for industry R&D. A major reason is the system’s cryogenic requirements. These low temperatures increase measurement time and decrease the amount of data collected. Intel is pushing its vendors to create automated testing ecosystems to enable screening and data correlation at room temperature, 1.5 K, and 10 mK. Intel is also driving development of cryogenic e-test automation at T = 1.5 K, which will be the first of its kind. In addition, Intel researchers have demonstrated their first proof-of-concept data for cryoprobing.

Beyond the device level is the interconnection level, where brute force will not work for millions of qubits. According to Pillarisetty, one of the most overlooked areas in quantum computing is the need to reshape Rent’s rule, which relates the number of external signal connections to a logic block to the number of logic gates in that block, and to understand its impact on scaling.

Pillarisetty concluded by pointing to “Franke’s rule,” a way of optimizing the quantum Rent exponent “p” by reducing the number of wires coming out of the refrigerator through cryogenic control, reducing the number of I/Os per chip by multiplexing on the chip (which unfortunately generates heat) or gate selector schemes (which require high uniformity), and reducing the number of wires per gate.3
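To make the scaling concern concrete, the following sketch evaluates the classical form of Rent's rule, T = t · g^p, where T is the number of external connections, g is the number of internal elements (logic gates, or qubits in the quantum analogue), t is the number of connections per element, and p is the Rent exponent. The values of t and p and the function name `rent_terminals` are illustrative assumptions, not figures from the presentation or from Franke et al.

```python
def rent_terminals(elements: int, t: float, p: float) -> float:
    """Classical Rent's rule: external connections T = t * elements**p."""
    if elements < 1:
        raise ValueError("elements must be a positive integer")
    if not 0.0 <= p <= 1.0:
        raise ValueError("the Rent exponent p is expected to lie in [0, 1]")
    return t * elements ** p


# Assumed values for illustration: one million qubits, three wires per qubit.
qubits = 1_000_000
wires_per_qubit = 3.0
for exponent in (1.0, 0.75, 0.5):
    wires = rent_terminals(qubits, wires_per_qubit, exponent)
    print(f"p = {exponent:.2f}: about {wires:,.0f} external connections")
```

Under these assumptions, p = 1 corresponds to wiring every qubit out individually, while lowering p through approaches such as multiplexing or cryogenic control reduces the external connection count by orders of magnitude.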

After Pillarisetty’s presentation, a participant asked how a silicon–silicon dioxide interface could be improved. Pillarisetty acknowledged how important that is to any gated device, and noted that there are many opportunities for innovation in this space, especially in the thermal processing step. Another participant asked how Intel is addressing the question of how conventional high-volume electrical characterization relates to quantum computing coherence, and Pillarisetty responded that his team is extrapolating from 1 K measurements back to room temperature. Last, another participant asked if it was possible to ensure that each qubit is the same. Pillarisetty answered that his team is trying to make the system clean enough to support consistency while establishing how much variation can be handled during scale-up. It is important to remember that the goal is not just for individual devices but also for systems-level scaling, he concluded.

SUPERCONDUCTING DEVICES: PACKING AND UNPACKING QUANTUM INFORMATION

Irfan Siddiqi, University of California, Berkeley

In the past 20 years, quantum science has moved from fundamentals to manufacturing and applications, and it is now possible to engineer individual quanta for quantum information systems. Siddiqi outlined the differences between quantum materials and quantum devices, and detailed the two strategies needed to make them both a reality: packing, and then unpacking, quantum information.

Quantum Materials Versus Quantum Devices

Quantum materials focus on assisting nature to assemble complex structures. In this context, quantum coherence is preserved through structural perfection and symmetry, collectively creating emergent phenomena that could be harnessed for engineering applications.

Engineered quantum devices at this time, in contrast, do not have a natural lattice structure that permits fast information exchange without potentially opening up decoherence channels. Introducing asymmetry, surfaces, and interfaces to enable read–write access may, on one hand, cause decoherence as information leaks out of the system, but it also permits the use of active control to dynamically stabilize a quantum array. This is a valuable resource for the quantum systems engineer.

___________________

3 D.P. Franke, J.S. Clarke, L.M.K. Vandersypen, and M. Veldhorst, 2019, Rent’s rule and extensibility in quantum computing, Microprocessors and Microsystems 67(6/2019):1-7, https://doi.org/10.1016/j.micpro.2019.02.006.

Packing Quantum Information onto Chips

First, quantum information must be packed into small coherent objects: chips. In order to do that, engineers must understand high-coherence device structure and geometry, working with low-loss materials that can be designed for tolerance, connectivity, and scalability. The quest to understand quantum coherence began more than 10 years ago,4 and researchers can now run well-controlled experiments in the current era of 10 to 100 minimalist qubits, Siddiqi said. However, there are many other, more flexible qubit designs to explore, including tunable, topological circuits; non-s-wave materials; and novel tunnel barriers, which could have better noise properties or compatibility with fault tolerance.

While there are applications for current achievements, to get to the 100- or 1,000-qubit control level, additional fundamental research is necessary. For example, researchers must learn how to suppress quantum jitter, remove cross talk at all levels, and eliminate dissipation on the chip, which causes coherence loss. It is also critical to understand how surface and interface characteristics, such as the existence of defects or water, create variation in quantum coherence. Design uniformity can also affect performance, depending on which algorithm is being run. Process benchmarks and rapid testing are also needed to measure coherence time and understand how it can be applied. Siddiqi shared the details of a chip that his laboratory is able to process cleanly, and noted that his team is also working on methods to shuttle information between different chips and layers.
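One simple form that a coherence benchmark could take is an estimate of the energy relaxation time T1 from the decay of the excited-state population, assuming a single exponential decay P(t) = A · exp(-t / T1). The sketch below is not Siddiqi's method; the function name `fit_t1` is hypothetical, and the data are synthetic and noiseless, generated only to show the fitting step.

```python
import math
from typing import Sequence


def fit_t1(times: Sequence[float], populations: Sequence[float]) -> float:
    """Estimate T1 by a least-squares line fit to ln P(t) = ln A - t / T1.

    Returns T1 in the same units as `times`. All populations must be > 0.
    """
    if len(times) != len(populations) or len(times) < 2:
        raise ValueError("need at least two matching (time, population) points")
    if any(p <= 0 for p in populations):
        raise ValueError("populations must be positive to take logarithms")
    log_pop = [math.log(p) for p in populations]
    n = len(times)
    mean_t = sum(times) / n
    mean_y = sum(log_pop) / n
    sxx = sum((t - mean_t) ** 2 for t in times)
    sxy = sum((t - mean_t) * (y - mean_y) for t, y in zip(times, log_pop))
    slope = sxy / sxx  # equals -1 / T1 for an ideal exponential decay
    return -1.0 / slope


# Synthetic, noiseless data with an assumed T1 of 50 microseconds.
delays_us = [10.0 * k for k in range(11)]
excited_population = [math.exp(-t / 50.0) for t in delays_us]
print(f"estimated T1 = {fit_t1(delays_us, excited_population):.1f} us")
```

Real measurements include noise and readout offsets, so a practical benchmark would likely use a nonlinear fit with an offset term and report uncertainty; the log-linear fit is used here only because it keeps the example short.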

Unpacking Quantum Information

The second task is to unpack quantum information—that is, to produce a structure with long coherence that can be read out. This task has several challenges to overcome. One limitation engineers face is the “tyranny of wires,” Siddiqi said, underscoring the importance of reducing the number and complexity of wires, filters, circulators, directional couplers, and other such components.

An additional challenge is a lack of standardization, which leads to “magic” recipes that produce widely varying results and are difficult to re-create. Often, researchers work with altered commercial components that are not rated for quantum use. Standardization and characterization of certain components would benefit the field, and also help determine whether current amplifiers, devices, and other classical electronics could be used in quantum, Siddiqi said.

___________________

4 Y. Nakamura, Yu.A. Pashkin, and J.S. Tsai, 1999, Coherent control of macroscopic quantum states in a single-Cooper-pair box, Nature 398:786-788, https://doi.org/10.1038/19718.

In addition, prototype controls from accelerator technologies could be very promising for intermediate-level scaling. They have a low cost per channel, and there has been some success with on-board signal processing. Siddiqi also emphasized the need for a better understanding of quantum signals, conversion, and scaling and a need for optimizing cryogenic data processing.

Envisioning the Path Forward

Far more information can be processed with quantum computing than with classical computing. In an ideal world, quantum information is packed in, quantum “magic” happens, and an answer is given. One major drawback, however, is that it may not always be possible to check to make sure that quantum systems are working properly. To address this issue, Siddiqi suggested exploring the use of machine learning (ML) to run quantum-assisted classical routines that process large quantities of data.

It is also possible that quantum filters could be used to continuously monitor qubits and train neural networks with tomographic validation, pushing beyond the Markov approximation and resolving non-Markovian qubit dynamics, Siddiqi noted. However, classical cross talk and active cancellation also need to be accounted for.

Closing, Siddiqi noted that there are key materials, design, and manufacturing challenges that must be surmounted to enable quantum-compatible devices and applications. For functioning high-coherence circuits, low-loss substrates and source materials are needed, and several new processes and tools need to be created. Quantum signal processing hardware requires high-coherence compatible cryogenic high-noise reduction filters, diplexers, circulators, and amplifiers; scalable digital and analog microwave technologies; and signal multiplexing, modulation hardware, and quantum–classical signal converters. Last, a flexible hardware stack that allows for hierarchical operations, fast feedback, and active quantum control is needed.

In response to a question, Siddiqi stated that better chip designs would use more junctions for increased functionality, use materials with highly sophisticated inductances, add error correction, or bring in fault tolerance. Researchers are actively pursuing all of these avenues, he noted.

A participant asked how perfection is possible when every edge of a chip matters. Siddiqi answered that many people are studying this problem, and he credited the Intelligence Advanced Research Projects Activity (IARPA) with funding research in this domain. Aluminum is the workhorse, but it does have flaws, he noted. Another participant asked if magnesium diboride or another metal could be used, making it possible to raise the operating temperature. Siddiqi replied that single metals work well, so the advantage of compounds is not obvious. Operating temperature is important, but one needs to cool to freeze out other noise sources.

In response to a question about ML, Siddiqi said that ML brings utility, functionality, and speed to quantum, and so it is a legitimate pursuit. ML is also able to provide insights into noise processes, but it is a classical computing concept and therefore not truly quantum yet, which means that, blindly applied, it could also slow down the system.