4

Hazard Identification

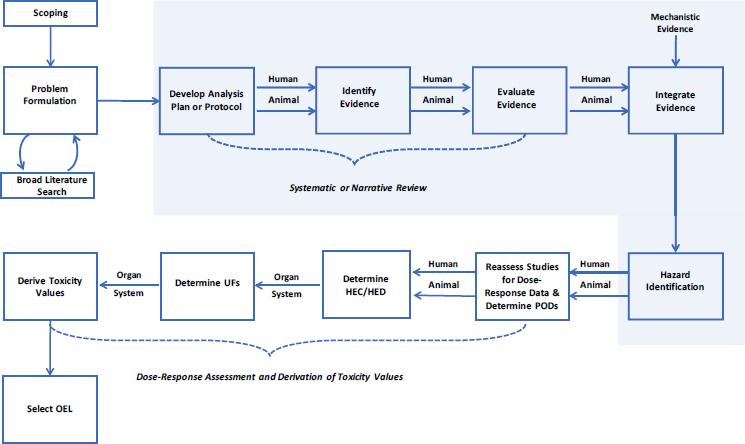

Hazard identification generally relies on an evaluation of the entire body of available scientific literature on a chemical, and different approaches have been used to perform this step in support of developing exposure guidelines (see Chapter 2). In Trichloroethylene: Occupational Exposure Level for the Department of Defense, the U.S. Department of Defense (DOD) “developed a systematic review of the recent literature [on trichloroethylene (TCE)]” (Sussan et al. 2019, p. 1) to use in conjunction with older literature identified in an authoritative review (EPA 2011). Figure 4-1 illustrates how systematic reviews and integration of evidence are used to draw hazard conclusions. This chapter provides a discussion of DOD’s methods for conducting these steps.

SYSTEMATIC REVIEW

In the Institute of Medicine (IOM) report Finding What Works in Health Care: Standards for Systematic Reviews, a systematic review is defined as “a scientific investigation that focuses on a specific question and uses explicit, prespecified scientific methods to identify, select, assess, and summarize the findings of similar but separate studies” (IOM 2011, p. 1). The IOM methodological standards detail elements that are essential to producing a scientifically valid, transparent, and reproducible systematic review. The 21 standards (with 82 elements) address the entire systematic review process, from the development of an appropriate review team to completing the final report. Many of these elements are also in the tools that have been developed to assess systematic reviews, including ROBIS (Whiting et al. 2016) and AMSTAR-2 (Shea et al. 2017), as well as in the guidelines for reporting systematic reviews (i.e., PRISMA [Moher et al. 2009]). The committee discusses DOD’s methods for conducting the systematic review of TCE below, including how they relate to the IOM standards.

Develop a Protocol

A protocol is a detailed plan, developed before initiating the review, that describes the scope and methods of the systematic review (IOM Standard 2.6). For instance, the protocol for the “identify evidence” step would entail documenting the search terms, search strategy, inclusion/exclusion criteria, and procedures for study selection. The protocol is a critical component of a systematic review because it minimizes author bias, allows for feedback at the early stages of the review, facilitates reproducibility, replication, and future updates, and increases transparency and scientific rigor.

Past reports on systematic review by the National Academies of Sciences, Engineering, and Medicine have highlighted the critical importance of a peer-reviewed and pre-published protocol prior to undertaking the review (NRC 2011, 2014; NASEM 2017, 2018). The IOM (2011) standards provide guidance as to what details need to be included in a protocol, as do current standards for reporting protocols (e.g., PRISMA-P). Although systematic review methodologies as applied in the environmental health field are relatively novel (Woodruff and Sutton 2014; NTP 2019), many examples of published protocols exist (e.g., NTP 2013a,b; NASEM 2017). Prospective registers, such as PROSPERO (https://www.crd.york.ac.uk/prospero), currently accept environmental health-related protocols for registration (e.g., Lam et al. 2015a,b). Furthermore, scientific journals are also now accepting systematic review protocols for peer-reviewed publication or requiring them in support of publishing the results of systematic reviews (e.g., Mandrioli et al. 2018; Wikoff and Miller 2018).

No mention of a protocol is made in DOD’s draft report (Sussan et al. 2019), and the methods described were insufficient for understanding all of the steps that were performed. This led to a lack of clarity as to whether a particular step was performed but not discussed in DOD’s draft report, whether the step was omitted, what decisions were made before performing the review, and what decisions were made or changed during the course of the review. To address this, a protocol that includes detailed descriptions of the review methods should be developed, peer-reviewed, and made publicly available prior to the start of the review (IOM Standards 2.6, 2.7, and 2.8).

Identify Evidence

Utilizing a systematic review approach sets the expectation that the resulting assessment will provide a complete picture of relevant evidence about the question of interest. However, a systematic review is only as good as the scientific evidence on which it is based. Systematic reviews rely on comprehensive, objective, transparent, and reproducible literature searches that support the identification of all potentially relevant literature. In contrast to narrative literature reviews, systematic reviews allow for reproducibility of the search, which is critical not only to ensure the independent reproducibility of the search results, but also to support updates to the systematic review. Furthermore, systematic reviews strive to minimize reporting bias by searching a variety of sources, not just published literature databases (NRC 2014). Lastly, the screening process identifies relevant literature in a transparent manner with clearly defined inclusion/exclusion criteria that reduces reliance on subjective judgment to select studies. These areas are discussed in more detail below.

Searching for Evidence

The IOM (2011) provides several standards about how to conduct the search and how to report the search results for a systematic review (IOM Standards 3.1 and 3.2). For instance, the IOM standards recommend the use of a trained systematic review librarian or information specialist to plan the search strategy and develop database-specific search terms. Given the wide range of bibliographic databases, information sources, and grey literature available, a librarian’s specialized training and skills help in navigating these resources: casting a wide net to capture all relevant literature while maintaining enough specificity to limit the amount of irrelevant material captured.

The DOD literature search relied on a 2011 assessment of the toxicity of TCE conducted by the U.S. Environmental Protection Agency (EPA) Integrated Risk Information System program as a starting point. The EPA (2011) assessment was not a systematic review, but rather a comprehensive narrative review that included approximately 2,000 studies published through 2010. DOD conducted a new literature search spanning January 2010 to November 2017. It is not clear from the DOD report exactly how the synthesis or results from the EPA review were used. Several critical limitations related to the identification of evidence in the DOD review include:

- The EPA (2011) assessment only reported specific search terms for cancer outcomes, which included “‘trichloroethylene cancer epidemiology’ and ancillary terms, ‘degreasers,’ ‘aircraft, aerospace or aircraft maintenance workers,’ ‘metal workers,’ and ‘electronic workers,’ ‘trichloroethylene and cohort,’ or ‘trichloroethylene and case-control’” to search PubMed, Embase, and TOXNET. DOD reported using a broader set of search terms but these were not explicitly defined. For example, PubMed was searched using the terms “Trichloroethylene [All Fields] OR Trichloroethene [All Fields],” Embase using “a similar search” (which was not described), and TOXNET using the terms “trichloroethylene, including synonyms and CAS No.” The rationale for deviating from EPA’s original search terms for DOD’s updated search was not provided and doing so raises concern regarding the similarities in the evidence retrieved post-2010 compared to the existing evidence retrieved in the original EPA search. Current standards (IOM Standard 2.6.4) require a description of the search strategy, including search terms, for identifying relevant evidence.

- DOD’s search terms offer some transparency but still raise questions as to the exact nature of each search within the three databases, limiting the ability of an independent third party to reproduce the search. For instance, describing the search in Embase as “a similar search” to that in PubMed indicates that the same search terms were used, but it is unclear whether this was the case. The TOXNET search describes using “synonyms” for TCE but does not provide a list of exact synonyms that were used. Lastly, a targeted literature search was reported for in vitro and in silico studies as supporting coherence and plausibility of the included in vivo evidence, but no databases or search terms were provided, limiting the transparency of this aspect of the literature search. Current standards for systematic reviews (IOM Standard 3.4) and for reporting reviews (PRISMA Checklist Item 8) require inclusion of the detailed search strategies.

- An additional literature search (on January 23, 2019) and literature screening were conducted and identified 7 human and 11 animal studies. However, these 18 studies were only listed in the report (Appendix G in the DOD report) and not incorporated into the review. Although the effort to incorporate newer studies is commendable, listing newer studies without any assessment or integration into the existing body of relevant literature appears minimally useful. Instead, it would be more appropriate to clearly state within a pre-published protocol the stopping criteria for the review (such as a date after which no new studies would be considered) or the procedures for how updates are to be conducted and newer studies integrated into the review. Current standards for systematic review (IOM Standard 3.1.7), including guidance from Cochrane (Higgins and Green 2011), require periodic updates.

- Several other search strategies commonly implemented in systematic reviews were not a part of the DOD process, such as searching grey literature databases or other sources of unpublished information (IOM Standard 3.2.1), reviewing citation lists of included studies (IOM Standard 3.1.6), or hand-searching selected journals and conference abstracts (IOM Standard 3.2.4).
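The reproducibility concerns raised in the list above can be made concrete with a small sketch. The Python below is illustrative only: the `SearchRecord` structure and `missing_fields` helper are hypothetical names introduced here, not part of any DOD, EPA, or IOM tool. It records each database search as structured data and flags what an independent third party would still need in order to rerun it. The PubMed query and the date range are taken from the text above; applying the same date range to every database is an assumption, and the Embase and TOXNET queries are left empty because the exact strings were not reported.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class SearchRecord:
    """One database search, documented well enough to be rerun (hypothetical structure)."""
    database: str
    query: Optional[str]       # exact query string as entered; None if not reported
    date_from: Optional[str]   # year-month, e.g. "2010-01"
    date_to: Optional[str]


def missing_fields(record: SearchRecord) -> List[str]:
    """Return the names of fields a third party would need but cannot find."""
    return [name for name in ("query", "date_from", "date_to")
            if getattr(record, name) is None]


# Assumption: the January 2010 to November 2017 window applied to all three databases.
searches = [
    SearchRecord("PubMed",
                 "Trichloroethylene [All Fields] OR Trichloroethene [All Fields]",
                 "2010-01", "2017-11"),
    SearchRecord("Embase", None, "2010-01", "2017-11"),   # described only as "a similar search"
    SearchRecord("TOXNET", None, "2010-01", "2017-11"),   # synonyms not listed
]

for record in searches:
    gaps = missing_fields(record)
    status = "reproducible as documented" if not gaps else "missing: " + ", ".join(gaps)
    print(f"{record.database}: {status}")
```

A record of this kind, completed for every source and included in the protocol and final report, is one way to meet the expectation that detailed search strategies be reported.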

Selection of Evidence

It is crucial that the selection of eligible studies is based on prespecified criteria in a manner that limits potential for bias (IOM Standard 3.3). Screening studies is typically a labor-intensive process involving at least two screeners and requires careful judgments and meticulous documentation about eligibility. Use of a software or database program to track the inclusion/exclusion process aids in resolving conflicts between screeners, documentation of the process, and efficiency of the screening task. A recent review of software tools for literature screening in systematic reviews is available (van der Mierden et al. 2019). Furthermore, several tools¹ incorporate semi-automated machine learning functionality that uses natural language processing to evaluate studies as they are screened to prioritize unscreened studies based on their predicted relevance. This may reduce the time and resources required for screening references, particularly when the number of studies is on the order of tens of thousands.

Several critical limitations were present in the screening methods described in the DOD draft report. First, the eligibility criteria were not explicitly stated and were not pre-specified in a protocol. Second, how DOD determined the subset of older studies (in EPA [2011] and other expert reviews) to include in its evaluation was unclear. A standard flow chart of literature search and screening results should document how a final subset of “included” studies in a systematic review was determined (PRISMA flow diagram). The number of included studies should be specified, and the references provided. Excluded studies should also be specified and accompanied by the reasons for exclusion (IOM Standards 3.4 and 5.1). DOD’s report does not provide a complete set of information to determine the studies that were included in the systematic review. Figure 1 of the DOD report is a flow chart that documents the number of references cited in the report. The literature search and screening of the newer literature on TCE is documented with the specific numbers of studies (58 human studies and 43 animal studies) and a reference list is provided in an appendix. The number of older studies identified by previous expert reviews and targeted searches is recorded as “>400” studies, and these are added with the new studies to indicate that DOD’s toxicity summary includes more than 500 references. How the more than 400 older studies were selected is not described. Furthermore, how 56 animal studies were selected for critical evaluation was not described. In contrast, special attention is given to evaluating the evidence on congenital heart defects, with particular emphasis on reasons to exclude a study (see Chapter 6 for more details). The lack of transparency and inconsistency with standard reporting practices limits the ability to determine the appropriateness of the results from this review or to reproduce and/or update it.
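As a minimal sketch of the documentation described above, the Python below reconciles the decisions of two independent screeners and produces the tallies that a PRISMA-style flow diagram would report. All reference IDs, decisions, and exclusion reasons are hypothetical, and the `reconcile` function is an illustration rather than the method of any particular screening tool. It assumes both screeners reviewed the same set of references.

```python
from collections import Counter

# Hypothetical decisions keyed by reference ID.
# Each decision is ("include", None) or ("exclude", reason).
screener_a = {
    "ref-001": ("include", None),
    "ref-002": ("exclude", "no TCE exposure"),
    "ref-003": ("include", None),
    "ref-004": ("exclude", "not original research"),
}
screener_b = {
    "ref-001": ("include", None),
    "ref-002": ("exclude", "no TCE exposure"),
    "ref-003": ("exclude", "not original research"),
    "ref-004": ("exclude", "not original research"),
}


def reconcile(a, b):
    """Split references into agreed includes, agreed excludes, and conflicts."""
    included, excluded, conflicts = [], {}, []
    for ref_id in sorted(a):
        decision_a, decision_b = a[ref_id], b[ref_id]
        if decision_a[0] != decision_b[0]:
            conflicts.append(ref_id)           # needs discussion or a third reviewer
        elif decision_a[0] == "include":
            included.append(ref_id)
        else:
            # Both excluded; this sketch keeps screener A's documented reason.
            excluded[ref_id] = decision_a[1]
    return included, excluded, conflicts


included, excluded, conflicts = reconcile(screener_a, screener_b)
print("screened:", len(screener_a))
print("included:", included)
print("excluded by reason:", dict(Counter(excluded.values())))
print("conflicts to resolve:", conflicts)
```

Because every reference ends up in exactly one of the three groups, the counts can be checked against the total screened, and the list of excluded references with reasons is available for reporting.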

___________________

1 Tools include Abstracker (https://www.brown.edu/public-health/cesh/resources/software); Distiller AI (https://www.evidencepartners.com/distiller-ai); and SWIFT-Active Screener (https://www.sciome.com/swift-activescreener).

Evaluate Evidence

In a systematic review, the individual studies are critically appraised using pre-defined criteria (IOM Standard 3.6) and then the body of evidence (all of the included studies for a particular question and outcome) is synthesized (qualitatively or quantitatively) (IOM Standard 4.2, 4.3) and evaluated (IOM Standard 4.1) to draw a conclusion and specify a level of confidence in that conclusion.

Critically Appraise Individual Studies

DOD created a custom approach that it described as a quantitative weight-of-evidence (WOE) scoring system to evaluate the quality, relevance, and risk of bias of individual studies. The result is a numerical score of overall study applicability (see Tables 3-5 in Sussan et al. [2019]). The committee found that the study applicability tool had several problematic limitations. For instance, the tool conflates appraisal of individual studies and considerations of the body of evidence to evaluate individual animal studies. Aspects of risk-of-bias assessment of individual studies are included in the tool, but DOD’s approach is based primarily on the qualitative considerations defined by Bradford Hill (1965), which address the confidence in an association when considering a body of evidence. For instance, constructs such as coherence or consistency (called “reproducibility” in the DOD draft report) are considered when evaluating a body of evidence, not an individual study. Furthermore, inappropriate concepts are included in the DOD study applicability tool. For instance, DOD’s tool includes statistical significance as a criterion for assessing a study. Statistical significance is not a measure of association or strength of association and should not be used to evaluate studies. DOD’s scoring tool also integrated the relevance of certain studies to be utilized for dose-response assessment and derivation of toxicity values, which is not appropriate to include as part of a systematic review for hazard identification.

The DOD study applicability tool has nine domains that are evaluated using a point system to achieve a study “score.” Detailed criteria for determining the number of points in each domain are described. Two independent reviewers individually judged each of the 56 selected studies for a possible score of up to 100 points per study based on the criteria, and then a consensus score of the reviewers was developed. However, the use of scores does not follow current standards of practice for the conduct of systematic reviews (Higgins and Green 2011). Use of scores can obscure important differences in study quality and combines aspects of risk of bias, quality of reporting, and, in the case of the DOD approach, certainty in the body of evidence. Furthermore, the reliance on scores to indicate quality is problematic, as it has been shown empirically (Jüni et al. 1999; Herbison et al. 2006) and theoretically (Greenland and O’Rourke 2001) that the relationship between scores and effect or association is inconsistent and unpredictable. DOD’s draft report mentioned that a workshop that included federal stakeholders was held in February 2018 that focused on the agency’s proposed scoring approach. What stakeholder input was provided and how it was used in developing the scoring tool was not discussed.
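The point that summary scores can obscure differences in study quality can be illustrated with a small, entirely hypothetical example. The domain names and point values in the Python sketch below are invented for illustration and are not taken from the DOD tool. Two studies receive the same total even though one earned no points in two domains.

```python
# Hypothetical domain-level points for two studies (not the DOD tool's domains).
study_x = {"randomization": 0, "blinding": 0, "exposure characterization": 20,
           "outcome assessment": 20, "reporting": 20}
study_y = {"randomization": 15, "blinding": 15, "exposure characterization": 10,
           "outcome assessment": 10, "reporting": 10}

total_x = sum(study_x.values())
total_y = sum(study_y.values())

# Domains in which a study earned no points; a single summary score hides these.
zero_domains_x = [domain for domain, points in study_x.items() if points == 0]
zero_domains_y = [domain for domain, points in study_y.items() if points == 0]

print("totals:", total_x, total_y)
print("study X domains with no points:", zero_domains_x)
print("study Y domains with no points:", zero_domains_y)
```

Reporting the judgment for each domain separately, rather than the sum, keeps such differences visible to the reader.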

DOD’s scoring tool “was designed to score in vivo controlled animals studies; hence, data from epidemiological studies were considered separately” (Sussan et al. 2019, p. 44). A review of the scores in Appendix D of the DOD draft report suggests that the tool was applied only to studies of noncancer outcomes in animals. No explanation was provided for why it was not applied to cancer studies in animals. A risk of bias or quality assessment of the epidemiological literature also was not performed. Why this line of evidence was not evaluated for quality, relevance, and risk of bias of individual studies was unclear, particularly since the epidemiological studies are used by DOD to determine potential cancer risks at the proposed occupational exposure level.

Synthesis of Evidence

All systematic reviews include a qualitative synthesis (IOM Standard 4.2) and some, when there is an adequate number of similar studies, include a quantitative analysis (i.e., meta-analysis) (IOM Standards 4.3 and 4.4). It appears that DOD only performed qualitative syntheses. Ideally, a qualitative synthesis provides details about the methods and characteristics of the included studies, including a discussion about the influence of these characteristics on study findings and patterns of results across studies. In the DOD draft report, the use of summary scores obscures the judgments about the strengths and limitations of study design and execution. Missing from the DOD report were evidence tables that could provide many of the study-level details needed to interpret the results.

Certainty of Evidence

Following the best practices for a systematic review, an assessment of the certainty of evidence is made after evidence synthesis (IOM Standard 4.1). This step involves rating the certainty that an association observed in the body of evidence (across the studies addressing a particular question and outcome, within a line of evidence [e.g., human, animal]) contains the true association, within bounds of reason. The “association” here may refer to an apparent adverse health effect from exposure, a beneficial effect, or no association. “Certainty” is also sometimes called confidence or strength of the evidence.

Certainty of evidence includes risk of bias or study limitations, as well as the domains of precision, consistency, directness or applicability, and reporting bias across the body of evidence. DOD’s approach included some, but not all, of these aspects, and inappropriately applied them to individual studies. There are several frameworks for assessment of certainty of evidence, including those that have been applied in the environmental field (Guyatt et al. 2011; Woodruff and Sutton 2014; NTP 2019). NTP (2019) provides an explanation of its approach for rating certainty (called “confidence” in its guidance for systematic reviews) as a modified application of the GRADE (Grading of Recommendations Assessment, Development and Evaluation) framework. Most existing guidance focuses on evidence from human and animal studies, with very little guidance for assessing the certainty of mechanistic data. An applied example can be seen in a National Academies (2017) report on endocrine active chemicals.

DOD did not explicitly assess the certainty of evidence. Instead, as noted earlier, features and characteristics that would affect certainty for a body of evidence (e.g., consistency, coherence) were included in its scoring on a study-by-study basis. By doing so, the DOD scoring conflated risk-of-bias and quality evaluation of individual studies with assessment of certainty for a body of evidence. In addition, certain concepts were inappropriately included in the DOD scoring tool, such as the biological significance of the effect (importance of the effect for human health). The importance of the effect does not have any bearing on the level of certainty of the apparent effect, and consideration of the importance would more appropriately be applied at the outset when defining the scope of the systematic review (by only including important effects in the review) or after hazard identification when selecting studies for dose-response assessment.

An assessment of certainty may have been useful in DOD’s evaluation of the evidence on congenital heart defects. Separation of an overall rating of the certainty of evidence from the animal studies on congenital heart defects from risk-of-bias considerations would provide more transparency in DOD’s conclusions regarding this body of evidence (see Chapter 6 for more detail on this topic). Similarly, in the evaluation of immune studies, a preponderance of animal studies with an ingestion exposure route (i.e., indirectness of evidence for the inhalation pathway) may have resulted in downgraded certainty if accompanied by evidence of unexplained route-specific effects.
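To show how a certainty rating applies to a body of evidence rather than to an individual study, the following Python sketch steps a rating down one level for each domain in which a serious concern is identified. It is a deliberate simplification loosely modeled on GRADE-style downgrading and is not the algorithm of GRADE, NTP, or any other framework; the four level names, the starting level, and the one-level-per-domain rule are all assumptions made for illustration. The domain names follow those listed earlier in this section.

```python
LEVELS = ["very low", "low", "moderate", "high"]   # assumed ordering, lowest to highest
DOMAINS = ("risk of bias", "precision", "consistency", "directness", "reporting bias")


def rate_certainty(initial: str, concerns: dict) -> str:
    """Step down one level for each domain flagged as a serious concern."""
    unknown = set(concerns) - set(DOMAINS)
    if unknown:
        raise ValueError(f"unrecognized domains: {sorted(unknown)}")
    downgrades = sum(1 for domain in DOMAINS if concerns.get(domain, False))
    index = max(LEVELS.index(initial) - downgrades, 0)   # never below the lowest level
    return LEVELS[index]


# Hypothetical body of animal evidence dominated by ingestion studies, judged
# indirect for an inhalation question; the starting level is illustrative.
rating = rate_certainty("high", {"directness": True})
print(rating)
```

The input to such a rating is a set of judgments about all included studies for one question and outcome within one line of evidence, which is why these constructs do not belong in a study-by-study score.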

INTEGRATE EVIDENCE

In a toxicological assessment, evidence from human studies and evidence from animal studies are first considered as separate lines of evidence. After the systematic reviews are complete, the lines of evidence are integrated to address a particular question about an outcome and its association with a particular chemical. Mechanistic data are considered at this stage to inform the biological plausibility of conclusions. Several approaches to evidence integration have been developed, including those specific to toxicological assessments (Woodruff and Sutton 2014; NTP 2019).

In the DOD assessment, no separate synthesis and determination of certainty of evidence was conducted for animal and human studies. It was not clear how mechanistic evidence was identified or assessed. Furthermore, Figure 2 in the DOD draft report illustrates that the three evidence streams were to be considered but it is not clear from this figure, or accompanying text, how or if evidence integration was conducted in making any conclusions about hazard.

FINDINGS

Although the DOD draft report asserts that a systematic review of the newer TCE literature was performed, the committee found that only selected elements of systematic review were followed. If DOD’s intent is to perform a credible systematic review, the committee suggests following one of the established methods (e.g., Woodruff and Sutton 2014; NTP 2019) and the following specific recommendations:

- Preparation of a systematic review protocol that details the pre-defined methods and criteria, which is peer-reviewed and publicly posted before the review is undertaken;

- Documentation of how studies from each evidence stream (human, animal, and mechanistic) are identified, assessed, and synthesized;

- Use of a trained librarian or information specialist to develop tailored search terms and to design a strategic approach to searching the literature;

- Use of several approaches to identifying relevant literature, including searching the reference lists of included studies, grey literature and other sources of unpublished information, and hand-searching selected journals and conference abstracts;

- Pre-specifying the criteria that will be used to include or exclude studies;

- Documentation of excluded references and their reasons for exclusion;

- Assessment of risk of bias and quality of individual studies and then, separately, determination of certainty in the body of evidence;

- Exclusion of statistical significance as a criterion of study quality;

- Avoidance of numeric scores for evaluating studies;

- Provide details about what studies were selected for assessment and included in synthesis;

- Conduct separate evidence synthesis and determinations about the certainty of the evidence for each stream of evidence; and

- Describe how different streams of evidence are integrated.

These elements are also relevant to other hazard-identification approaches, and can be used as a guide to ensure consistency and transparency in approaches with less formal structures.

DOD was inconsistent in its approach to evaluating the data on TCE. A formal process for evaluating study quality and applicability was applied only to animal studies of noncancer end points. Qualitative narrative reviews of the animal cancer data and the epidemiological studies were conducted, but it is unclear how these different lines of evidence were used to draw hazard conclusions. The committee recommends that all studies used to inform the hazard identification be evaluated for quality using approaches appropriate for the types of studies (e.g., animal, epidemiological) or justification provided for using different approaches.

REFERENCES

Bove, F.J. 1996. Public drinking water contamination and birthweight, prematurity, fetal deaths, and birth defects. Toxicol. Ind. Health 12(2):255-266.

Bove, F.J., M.C. Fulcomer, J.B. Klotz, J. Esmart, E.M. Dufficy, and J.E. Savrin. 1995. Public drinking water contamination and birth outcomes. Am. J. Epidemiol. 141(9): 850-862.

Bradford Hill, A. 1965. The environment and disease: Association or causation? Proc. R. Soc. Med. 58(5):295-300.

EPA (U.S. Environmental Protection Agency). 2011. Toxicological Review of Trichloroethylene (CAS No. 79-01-6) in Support of Summary Information on the Integrated Risk Information System (IRIS), September 11, 2011. EPA/635/R-09/011F. Washington, DC: EPA [online]. Available: https://www.epa.gov/iris/supporting-documents-trichloroethylene [accessed April 24, 2019].

Greenland, S., and K. O’Rourke. 2001. On the bias produced by quality scores in meta-analysis, and a hierarchical view of proposed solutions. Biostatistics 2(4):463-471.

Guyatt, G.H., A.D. Oxman, V. Montori, G. Vist, R. Kunz, J. Brozek, P. Alonso-Coello, B. Djulbegovic, D. Atkins, Y. Falck-Ytter, and J.W. Williams, Jr. 2011. GRADE guidelines: 5. Rating the quality of evidence—publication bias. J. Clin. Epidemiol. 64(12):1277-1282.

Herbison, P., J. Hay-Smith, and W.J. Gillespie. 2006. Adjustment of meta-analyses on the basis of quality scores should be abandoned. J. Clin. Epidemiol. 59(12):1249-1256.

Higgins, J.P.T., and S. Green (eds). 2011. Cochrane Handbook for Systematic Reviews of Interventions Version 5.1.0 [updated March 2011]. The Cochrane Collaboration [online]. Available: https://training.cochrane.org/handbook [accessed July 3, 2019].

IOM (Institute of Medicine). 2011. Finding What Works in Health Care: Standards for Systematic Reviews. Washington, DC: The National Academies Press.

Jüni, P., A. Witschi, R. Bloch, and M. Egger. 1999. The hazards of scoring the quality of clinical trials for meta-analysis. JAMA 282:1054-1060.

Lagakos, S.W., B.J. Wessen, and M. Zelen. 1986. An analysis of contaminated well water and health effects in Woburn, Massachusetts. J. Am. Stat. Assoc. 81(395):583-596.

Lam, J., P. Sutton, A. Halladay, L. Davidson, C. Lawler, C. Newschaffer, A. Kalkbrenner, G. Windham, N. Daniels, S. Sen, and T. Woodruff. 2015a. Applying the Navigation Guide Systematic Review Methodology. Case Study #4: Association Between Developmental Exposures to Ambient Air Pollution and Autism. PROSPERO 2015 CRD42015017890 [online]. Available: http://www.crd.york.ac.uk/PROSPERO/display_record.php?ID=CRD42015017890 [accessed July 1, 2019].

Lam, J., P. Sutton, J. McPartland, L. Davidson, N. Daniels, S. Sen, D. Axelrad, B. Lanphear, D. Bellinger, and T. Woodruff. 2015b. Applying the Navigation Guide Systematic Review Methodology. Case Study #5: Association Between Developmental Exposures to PBDEs and Human Neurodevelopment. PROSPERO 2015 CRD42015019753 [online]. Available: http://www.crd.york.ac.uk/PROSPERO/display_record.php?ID=CRD42015019753 [accessed July 1, 2019].

Mandrioli, D., V. Schlünssen, B. Adam, R.A. Cohen, C. Colosio, W. Chen, A. Fischer, L. Godderis, T. Goeen, I.D. Ivanov, and N. Leppink. 2018. WHO/ILO work-related burden of disease and injury: Protocol for systematic reviews of occupational exposure to dusts and/or fibres and of the effect of occupational exposure to dusts and/or fibres on pneumoconiosis. Environ. Int. 119:174-185.

Moher, D., A. Liberati, J. Tetzlaff, D.G. Altman, and The PRISMA Group. 2009. Preferred reporting items for systematic reviews and meta-analyses: The PRISMA statement. PLoS Med. 6(7):e1000097.

NASEM (National Academies of Sciences, Engineering, and Medicine). 2017. Application of Systematic Review Methods in an Overall Strategy for Evaluating Low-Dose Toxicity from Endocrine Active Chemicals. Washington, DC: The National Academies Press.

NASEM. 2018. Progress Toward Transforming the Integrated Risk Information System (IRIS) Program: A 2018 Evaluation. Washington, DC: The National Academies Press.

NRC (National Research Council). 2011. Review of the Environmental Protection Agency’s Draft IRIS Assessment of Formaldehyde. Washington, DC: The National Academies Press.

NRC. 2014. Review of EPA’s Integrated Risk Information System (IRIS) Process. Washington, DC: The National Academies Press.

NTP (National Toxicology Program). 2013a. Draft Protocol for Systematic Review to Evaluate the Evidence for an Association Between bisphenol A (BPA) Exposure and Obesity. Research Triangle Park, NC: Office of Health Assessment and Translation, Division, National Toxicology Program, National Institute of Environmental Health Sciences. April 9, 2013 [online]. Available: https://ntp.niehs.nih.gov/ntp/ohat/evaluationprocess/bpaprotocoldraft.pdf [accessed July 1, 2019].

NTP. 2013b. Draft Protocol for Systematic Review to Evaluate the Evidence for an Association Between perfluorooctanoic acid (PFOA) or perfluorooctane sulfonate (PFOS) Exposure and Immunotoxicity. Research Triangle Park, NC: Office of Health Assessment and Translation, Division, National Toxicology Program, National Institute of Environmental Health Sciences. April 9, 2013 [online]. Available: https://ntp.niehs.nih.gov/ntp/ohat/evaluationprocess/pfos_pfoa_immuneprotocoldraft.pdf [accessed July 1, 2019].

NTP. 2019. Handbook for Conducting a Literature-Based Health Assessment Using OHAT Approach for Systematic Review and Evidence Integration. Research Triangle Park, NC: Office of Health Assessment and Translation, Division, National Toxicology Program, National Institute of Environmental Health Sciences. March 4, 2019 [online]. Available: https://ntp.niehs.nih.gov/pubhealth/hat/review/index-2.html [accessed July 3, 2019].

Ruckart, P.Z., F.J. Bove, and M. Maslia. 2013. Evaluation of exposure to contaminated drinking water and specific birth defects and childhood cancers at Marine Corps Base Camp Lejeune, North Carolina: A case-control study. Environ. Health 12:104.

Shea, B.J., B.C. Reeves, G. Wells, M. Thuku, C. Hamel, J. Moran, D. Moher, P. Tugwell, V. Welch, E. Kristjansson, and D.A. Henry. 2017. AMSTAR2: A critical appraisal tool for systematic reviews that include randomized or non-randomized studies of healthcare interventions. BMJ 358:j4008.

Sussan, T.E., G.J. Leach, T.R. Covington, J.M. Gearhart, and M.S. Johnson. 2019. Trichloroethylene: Occupational Exposure Level for the Department of Defense. January 2019. U.S. Army Public Health Center, Aberdeen Proving Ground, MD.

van der Mierden, S., K. Tsaioun, A. Bleich, and C.H.C. Leenaars. 2019. Software tools for literature screening in systematic reviews in biomedical research. ALTEX 36(3):508-517.

Whiting, P., J. Savović, J.P.T. Higgins, D.M. Caldwell, B.C. Reeves, B. Shea, P. Davies, J. Kleijnen, R. Churchill, and The ROBIS Group. 2016. ROBIS: A new tool to assess risk of bias in systematic reviews was developed. J. Clin. Epidemiol. 69:225-234.

Wikoff, D.S., and G.W. Miller. 2018. Systematic reviews in toxicology (editorial). Toxicol. Sci. 163(2):335-337.

Woodruff, T.J., and P. Sutton. 2014. The Navigation Guide systematic review methodology: A rigorous and transparent method for translating environmental health science into better health outcomes. Environ. Health Perspect. 122(10):1007-1014.