Proceedings of a Workshop

IN BRIEF

January 2020

Recognizing and Evaluating Science Teaching in Higher Education

Proceedings of a Workshop—in Brief

A fundamental goal of undergraduate education is to provide all students, regardless of ethnicity, gender, or age, with an excellent educational experience that will prepare them for success post-graduation. Evidence-based instructional practices have been linked to positive student outcomes, including retention in college, improved academic and cognitive skills, and improved student performance by groups historically underrepresented in science, technology, engineering, and mathematics (STEM) fields.2 However, as stated by Gabriela Weaver (University of Massachusetts, Amherst) in her opening comments, the shift toward implementation of research-based pedagogy has proven difficult. Teaching evaluations offer one potential lever for driving adoption of evidence-based instructional approaches in undergraduate STEM education. The strategies used to recognize excellence in teaching can drive the behavior of faculty, according to Weaver. For this reason, it is critically important that those evaluation strategies are consistent with an institution’s objectives, both in terms of quality teaching and in support of the overarching goal of student success.

In many undergraduate institutions, including community colleges, student ratings are employed as a primary tool for the assessment of teaching, and student ratings data are often used for promotion and tenure decisions. As noted by Ann Austin (cochair of the Roundtable, Michigan State University), broad concerns have emerged in recent years regarding the validity of student ratings as a measure of effective teaching.3 Student ratings often do not reflect the use of evidence-based instructional approaches and, according to Andrea Follmer Greenhoot (University of Kansas), can even negatively correlate with the use of these instructional strategies.4 Additionally, Greenhoot stated, student ratings can be biased against underrepresented groups5 and, according to several workshop participants, these ratings also fail to capture the full array of behind-the-scenes work that goes into teaching. It is widely recognized that additional forms of teacher evaluation would provide a more complete picture of faculty work and, as a result, could encourage improvements in undergraduate STEM education.

___________

1 See https://sites.nationalacademies.org/DBASSE/BOSE/recognition-and-evaluation-of-teaching-in-higher-education/index.htm.

2 National Research Council. (2012). Discipline-Based Education Research: Understanding and Improving Learning in Undergraduate Science and Engineering. Washington, DC: The National Academies Press. See also Freeman, S., Eddy, S.L., McDonough, M., et al. (2014). Active learning increases student performance in science, engineering, and mathematics. Proceedings of the National Academy of Sciences, 111(23), 8410–8415.

The Roundtable on Systemic Change in Undergraduate STEM Education, convened by the National Academies of Sciences, Engineering, and Medicine, works to address and coordinate efforts to improve undergraduate STEM education, including improving teaching and learning. In this context, the roundtable conducted a workshop titled Recognizing and Evaluating Science Teaching in Higher Education to begin to frame the national conversation around effective ways to evaluate undergraduate teaching, particularly in STEM areas, that can help drive adoption of evidence-based instructional approaches. The goal of the workshop was to identify the questions, challenges, and levers for change that may be useful to consider in order to implement improvements in the teaching evaluation process, with the core mission of improving instruction and contributing to students’ success. To accomplish this goal, the workshop included members from a variety of efforts active in national conversations around improving undergraduate education, including the Association of American Universities (AAU), Accelerating Systemic Change Network, and Transforming Higher Education—Multidimensional Evaluation of Teaching (TEval) project.

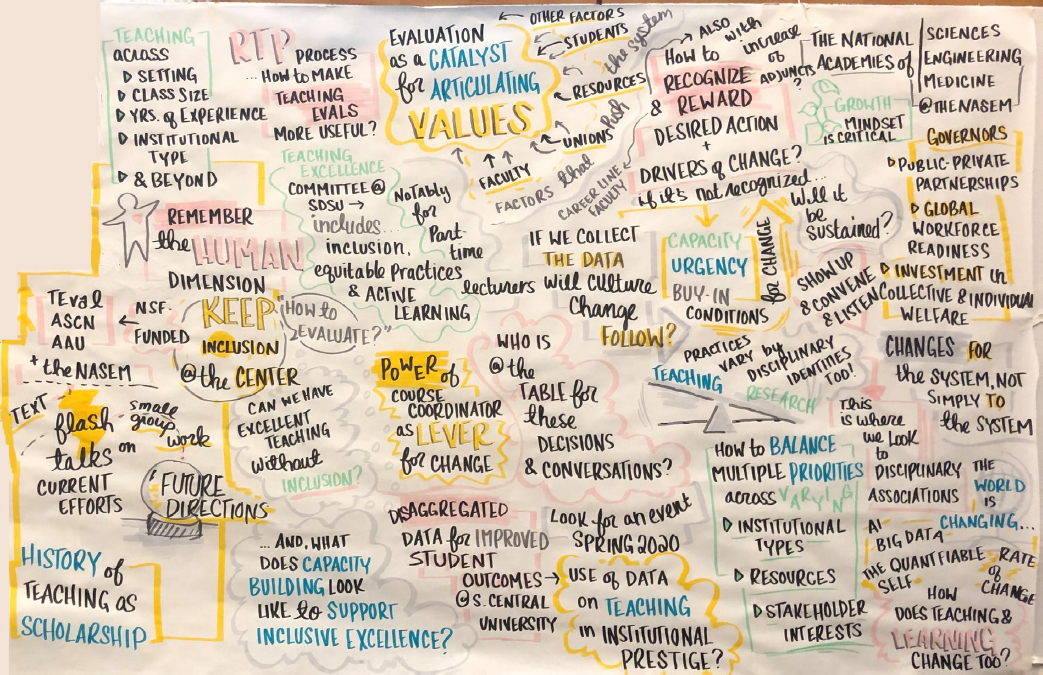

Austin noted that improving the way teaching is currently evaluated involves more than identifying better evaluation measures; it requires systemic change at the institutionwide level, because “teaching evaluation [is] actually embedded in bigger conversations…about the place of teaching and the mission of higher education at all of our institutions.” More specifically, according to Jim Fairweather (Michigan State University), broad institutional structures that devalue teaching in favor of research must be addressed, because institutional policies and departmental norms, along with the strong financial incentive of publication, influence the way faculty view teaching responsibilities. As such, the broad changes in institutional culture necessary to support improvements in the teaching evaluation process feed into the larger mission of the roundtable, which aims to collectively move the needle toward effective, systems-level change in several areas of undergraduate STEM education. The discussions at the workshop focused on historical perspectives on teaching evaluation, current initiatives to improve the evaluation process, ways to support systemic change, and future directions that institutions may wish to consider as they begin reforming their evaluation systems. (Figure 1 graphically presents some of the comments on the system raised at the workshop.)

EVALUATION OF TEACHING: PAST TO PRESENT

A panel of scholars specializing in teaching and learning practices in higher education discussed historical perspectives on the way teaching is viewed within institutions, research efforts undertaken to improve teaching evaluation and the lessons learned from these efforts, and the focus of the current national conversation around the evaluation of teaching.

Jim Fairweather provided a historical perspective on teaching and how faculty approach the teaching profession. He described the traditional Germanic model of teaching as a “calling,” a view that can lead to a focus on the individual teacher as the locus of potential change. This idea that dedication to teaching is internally and individually motivated, according to Fairweather, largely ignores the influence that institutional policies and practices can have on faculty behavior or on the quality of teaching. In addition, many institutions have emphasized the value of research over teaching in their reward systems, which include hiring, salary, and promotion and tenure. These reward systems, according to Fairweather’s research, strongly affect faculty’s commitment to teaching. Fairweather noted a generally inverse relationship between the amount of time faculty spend on research and the amount they spend on teaching. In Fairweather’s words, “academic institutions, through cultural norms and rewards, play an active role in how faculty view the importance and relative value of teaching.” Therefore, Fairweather stated, “active engagement by institutional leaders is a prerequisite for teaching reforms to succeed.”

___________

3 Boring, A., Ottoboni, K., and Stark, P.B. (2016). Student evaluations of teaching (mostly) do not measure teaching effectiveness. ScienceOpen Research. DOI: 10.14293/S2199-1006.1.SOREDU.AETBZC.v1. See also Uttl, B., White, C.A., and Wong Gonzalez, D. (2017). Meta-analysis of faculty’s teaching effectiveness: Student evaluation of teaching ratings and student learning are not related. Studies in Educational Evaluation, 54(1), 22–42.

4 Deslauriers, L., McCarty, L.S., Miller, K., Callaghan, K., and Kestin, G. (2019). Measuring actual learning versus feeling of learning in response to being actively engaged in the classroom. Proceedings of the National Academy of Sciences, 116(39), 19251–19257.

5 MacNell, L., Driscoll, A., and Hunt, A.N. (2015). What’s in a name: Exposing gender bias in student ratings of teaching. Innovative Higher Education, 40(4), 291–303. See also Basow, S.A., and Martin, L.J. (2012). Bias in student evaluations. Pgs. 40–49 in Effective Evaluation of Teaching: A Guide for Faculty and Administrators, edited by Mary E. Kite. Washington, DC: Society for the Teaching of Psychology. Available: http://teachpsych.org/ebooks/evals2012/index.php. See also Spooren, P., Brockx, B., and Mortelmans, D. (2013). On the validity of student evaluation of teaching: The state of the art. Review of Educational Research, 83(4), 598–642.

Mary Huber (Bay View Alliance) described the ongoing shift toward a view of teaching as scholarship and the challenges of implementing new evaluation methods that support this view. Huber suggested that the scholarship of teaching and learning “has contributed to moving the discussion of the evaluation of teaching from questions of student satisfaction alone to include a focus on the quality of the intellectual work in teaching.” This shift, according to Huber, has expanded the definition of excellent teaching. However, she explained, issues persist around incorporating this intellectual work into new systems of evaluation and considering such work when making decisions about promotion and tenure. These issues affect both the individuals performing and receiving evaluations and those on promotion and tenure committees, Huber stated; they include learning about new approaches to evaluation, finding the extra time that some evaluation approaches require, and persuading departments and institutions to support the work.

Greenhoot presented the limitations of student ratings as a method of evaluation and the challenges faced in creating new evaluation practices based on current definitions of effective teaching. She remarked that, at many institutions, proposed improvements often do not result in meaningful, systemic changes to the teaching evaluation process. She noted research indicating that overreliance on student evaluations still exists, despite institutional policies and guidance from national organizations caution-

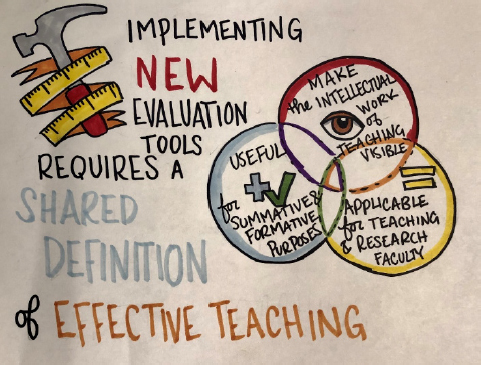

ing against reliance on these ratings.6 She stressed that putting improved evaluation tools into broad practice is predicated on a shared definition of effective teaching, which may be a useful discussion point for workshop participants. New approaches to evaluation based on a shared definition of effective teaching, continued Greenhoot, should make the intellectual work of teaching visible, should be useful for both summative and formative purposes, and should be applicable for teaching-stream faculty as well as those with research responsibilities (see Figure 2).

In the discussion that followed the panel presentations, several participants highlighted differences between teaching and research and the implications for evaluation. Sierra Dawson (University of Oregon) noted that faculty members often exhibit “an underlying fear of being evaluated in teaching that does not show up in their research.” Paula Lemons (University of Georgia) suggested that this difference might exist because research is frequently performed in community, with many opportunities for feedback, while teaching often remains an individual activity. Another difference between research and teaching that impacts the evaluation process, according to Huber, is that of attribution. She asserted that, while the work of researchers is consistently acknowledged through the publication process, widespread adoption of new teaching practices often occurs with no attribution to the original developer. This lack of a systematic attribution process for intellectual developments in teaching, continued Huber, can make it difficult for teachers to demonstrate the influence of their work.

Discussion also revolved around the degree to which review systems for teaching and research might be shared. Mark Lee (Spelman College) asked how the peer review process used in research might also be used to establish a standard of quality in teaching. Several participants described challenges around the use of peer review to evaluate teaching. Carl Wieman (Stanford University) stated that peer review in research is based on a consensus of quality, which, in his opinion, does not currently exist for teaching. Barbara Schaal (Washington University in St. Louis) noted that classroom teaching practices are the most difficult aspect of teaching to peer review and that statistically reliable measures of student learning that reflect quality teaching practices must be identified. Jose Herrera (Mercy College) expressed concern over the use of student learning as a proxy for effective teaching, because measures of student learning can vary according to class size and level. As an additional challenge, Greenhoot noted that although institutions willingly support time spent performing peer review of research, many are less supportive of the time necessary to implement peer review of teaching.

Lee summarized a number of potential approaches to effective evaluation of teaching raised by various panelists and participants. He noted the importance of valid and reliable measures, clear methodology, and identification of important stakeholders, among others. Further, Lee emphasized that excellent teaching often happens in community, and changes to the evaluation process provide an opportunity to influence institutional culture in a way that can remove existing biases and increase inclusion.

PRESENT-DAY INITIATIVES TO IMPROVE TEACHING EVALUATION

To highlight current work on recognizing and evaluating science teaching at various types of institutions, 14 individuals who are leading efforts on their campuses each gave 3-minute presentations describing useful ideas or methods. (A full list of flash talks with descriptions of each initiative can be found on the workshop’s Webpage.7) Following the talks, workshop participants met in breakout groups to reflect on the promising approaches and key challenges, levers, and opportunities linked to these approaches. A designated reporter from each group shared the main discussion points with the larger group upon reconvening.

___________

6 Dennin, M., Schultz, Z.D., Feig, A., Finkelstein, N., Follmer Greenhoot, A., Hildreth, M., et al. (2017). Aligning practice to policies: Changing the culture to recognize and reward teaching at research institutions. CBE—Life Sciences Education, 16(5), 1–8.

7 See https://sites.nationalacademies.org/DBASSE/BOSE/recognition-and-evaluation-of-teaching-in-higher-education/index.htm.

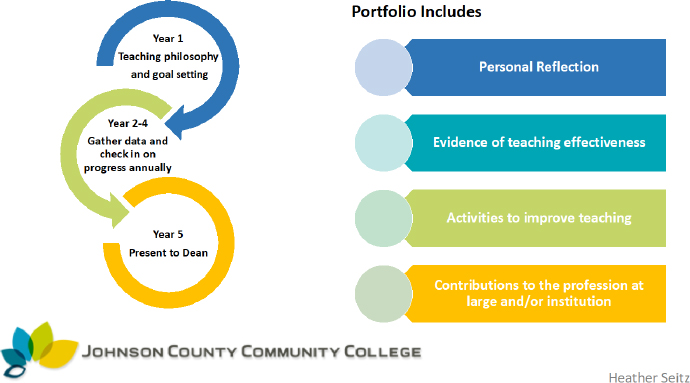

SOURCE: Presentation by Heather Seitz, September 11, 2019.

Using a Suite of Measures

Many of the initiatives highlighted the importance of structuring teacher evaluations as a suite of assessment strategies, as opposed to reliance on a single measure. In several examples, student evaluations were not eliminated but were instead included as one of several measures. Specifically, Dawson explained that evaluation data for the University of Oregon’s Continuous Improvement and Evaluation of Teaching System are drawn not only from students, but also from faculty peers and faculty self-reflections. The multiuniversity TEval project, as described in a flash talk video, also uses student, peer, and faculty data for evaluations.8 Doune MacDonald (University of Queensland) shared that her institution uses various methods of evaluation to make a broad case for a teacher’s effectiveness, but is “not ready to give up [the student voice], which is important in our teaching.” To include the student voice while attempting to reduce the level of importance of student evaluations in promotion and tenure decisions, Sara Marcketti (Iowa State University) remarked that a task force at her institution recommended renaming student evaluations as “student ratings of teaching.” As an example of the role faculty members can play in their own review processes, Heather Seitz (Johnson County Community College) described a 5-year portfolio project at her institution, in which the final portfolio contains the faculty member’s teaching philosophies, goals, activities to improve teaching, data gathered in support of the goals, and personal reflections on the work (see Figure 3). Loretta Brancaccio-Taras (Kingsborough Community College) also mentioned portfolios as a possible replacement for scholarly publications, which are currently a required component of the evaluation process at her institution.

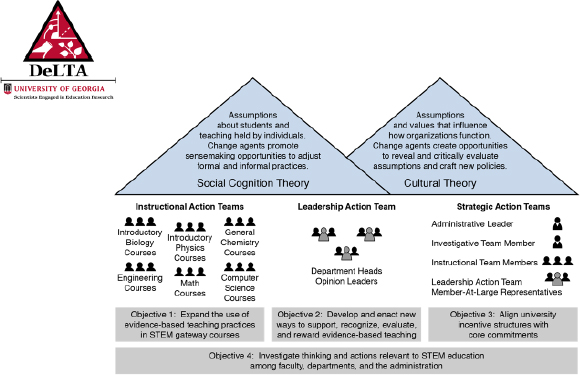

Adopting Shared Governance Models

Several breakout groups noted a shared-governance model as a second promising idea that surfaced from multiple presentations. In a shared-governance approach, disciplines and departments at various institutions are allowed a degree of autonomy in the creation and implementation of new methods of teaching evaluation. Lemons described the Department and Leadership Teams for Action (DeLTA) project at the University of Georgia, which involves faculty working at the course level to broaden the use of evidence-based teaching practices, together with leadership action teams that work opportunistically on ongoing department efforts (see Figure 4). Ginger Clark (University of Southern California) highlighted the involvement of faculty in the creation of a definition of teaching excellence that applies to most USC faculty. Ingrid Novodvorsky (University of Arizona) described the implementation of a protocol for peer review of teaching that includes a suite of teaching observation tools that departments can customize to reflect the aspects of teaching of primary value in each discipline.

Building Support Across Campus

An additional promising approach highlighted during the breakout sessions is to bring people from across an institution together to collectively support efforts for change and achieve systemwide agreement. As an example, Dawson described how the faculty senate, provost, unit heads, and union at the University of Oregon all worked together to achieve alignment and consensus across disciplines, resulting in an addendum to their collective bargaining agreement outlining the definition and standards of teaching excellence. The TEval project video,9 shown with the flash talks, illustrated the creation of communities of change within and across participating universities to promote information sharing, problem solving, and community building. Flower Darby (Northern Arizona University) stressed the importance of faculty working together in community and pointed to teaching circles at her institution as an example. Seitz highlighted the importance of collective support of change initiatives, describing how the lack of alignment between faculty and administration at Johnson County Community College presented a barrier to the effective implementation of faculty portfolios as a method of evaluation.

___________

8 TEval is an NSF-funded project that seeks to advance educational practices and models of more effective teaching evaluation at universities. In addition to developing tools and practices for improved evaluation, they work to advance understanding of the institutional change process for the adoption and integration of new approaches. The overall project encourages the use of evaluation, incentive, and reward processes as a lever to promote greater use of evidence-based teaching within universities as complex systems. More information can be found at https://teval.net.

SOURCE: Presentation by Paula Lemons, September 11, 2019.

Summary of Session

Emily Miller (Association of American Universities) summarized the session, stating “in all of these efforts, there was a real consideration of how to value, assess, and reward faculty for the full range of teaching activities and the intellectual work that goes into them.” Fairweather noted that the initiatives presented in the flash talks demonstrated that each institution can develop appropriate evaluation methods and outcome measures based on institution-specific considerations. Several workshop participants mentioned the importance of valuing incremental progress over perfection, or “bootstrapping,” to create lasting, systemwide change. Susan Singer (Rollins College) explained that, in the process of improving teaching evaluation, the Associated Colleges of the South are working iteratively with faculty, tenure and promotion committees, and department chairs. Weaver emphasized the broader need for incremental progress to achieve the overarching goal of changing the way excellent teaching is valued and rewarded in higher education, a point Fairweather echoed. Fairweather also suggested encouraging each institution to consider whether its goal is “to have an optimal assessment process for teaching that is equivalent to peer review research, or to improve what’s going on at your current institution? And if it is the latter, how much?”

Weaver emphasized that, in order to ultimately drive teaching behavior in ways that will positively impact student learning, new evaluation tools must feed into forms of institutional or departmental recognition that both reinforce the use of research-based teaching methods and reward the practice of effective teaching. She said, “We may not be weighing teaching very heavily in merit and promotion because we do not feel that we have reliable measures, and because teaching is not weighted heavily, we are not willing to put the time into evaluating teaching because, in the end, we are not sure it is actually going to be taken seriously. So we just have to jump in and start to chip away at that.”

SUPPORTING SYSTEMIC CHANGE IN TEACHING EVALUATION

A panel of leaders who study and implement systemic change in undergraduate education highlighted some key components to improve teaching evaluation, including the choice of appropriate measures; the value of effective communication; and the institutional, disciplinary, and national actors that can be leveraged by institutions to encourage the change process.

Carl Wieman stressed that valid measures of teaching effectiveness are a necessary first step toward promoting systemic change around the way teaching is evaluated. While many discussions of teaching evaluation focus on improving the teaching process or elevating the importance of teaching within institutions, Wieman observed that little attention has been paid to establishing the basic criteria for a successful evaluation system. The first requirement, according to Wieman, is consistency: the same defined criteria must be applied across courses and departments. He also emphasized that an effective evaluation method must be fair and unbiased, supported by data that help all stakeholders perceive the method as valid. An effective evaluation system must also allow for comparison of teaching across departments and institutions because, according to Wieman, the behavior of universities is strongly driven by rankings and comparisons. Wieman described how he developed the Teaching Practices Inventory to provide an objective, reliable form of evaluation based on the full set of instructional practices used, augmented by the Classroom Observation Protocol for Undergraduate STEM (COPUS) to characterize the ways that students and faculty spend their time in the classroom. The evaluation does not judge the quality with which the various practices are used, only the extent to which effective research-based practices are used. He also concluded that consistent peer observations are almost impossible to obtain.

___________

9 The video can be found at https://teval.net.

Sierra Dawson emphasized that organizational change is best achieved when multiple components of an institution work together. At the University of Oregon, she explained, the faculty senate initiated the conversation around improving evaluations, but the ensuing collaboration between faculty and administration led to the success of the initiative. In Dawson’s view, specific actions can encourage the institutionwide collaborations needed to bring about large-scale change. For example, campus visits by members of respected professional organizations or by other well-known outside experts who support the proposed changes can capture the attention of multiple groups on campus and draw them into conversation, which can propel the change initiative forward. Dawson also discussed the importance of identifying and leveraging the interests or concerns of various stakeholders, including the faculty union, to establish them as partners in the change initiative. She encouraged workshop participants to consider ways to build such collaborations at their institutions and to engage “early and often” with different stakeholders. The arc of human connections, according to Dawson, was key to implementing institutionwide changes to the evaluation process at the University of Oregon.

Susan Musante (Howard Hughes Medical Institute [HHMI]) described the role that funders can play in promoting systemic change at undergraduate institutions by promoting campuswide conversations around how to transform the student learning experience. When funding organizations issue initiatives, like HHMI’s Inclusive Excellence Initiative, even the initial process of considering whether to submit a proposal can draw people together and stimulate important discussions that might not otherwise occur. Musante noted two elements of effective systemic change that can emerge from such discussions. First, change moves beyond a small team of champions to include “a truly fundamental shift in the way [an] institution does business.” Second, individuals initiating change develop a common understanding of an institution’s historical, cultural, and political context, including commonly accepted ideas around how and why change happens. Musante stressed that understanding how these institution-specific contextual factors could play into the possible success or failure of a change initiative is a critical indicator of an institution’s readiness to engage in systemic change efforts. In terms of improving the way teaching is currently evaluated, Musante stated that HHMI’s Inclusive Excellence Initiative is “encouraging schools to take on the challenge of developing, implementing, and assessing approaches to measure the extent to which an instructor’s teaching is effective and inclusive.” She emphasized her view that teaching cannot truly be described as excellent if it is not inclusive.

Toby Smith (Association of American Universities) highlighted the role that disciplinary societies and professional organizations can play in promoting change. Smith suggested that an important point of leverage AAU has in common with other higher education associations is the power to assemble groups of people and stimulate conversations, through top-down, middle-out, or bottom-up approaches. As an example of a top-down approach, respected professional associations or societies can convene other influential organizations, as well as institutional leaders who can then be encouraged to implement change initiatives on their own campuses. As an example of a middle-out approach, Smith suggested that professional organizations could ask provosts to convene department chairs to discuss a change initiative, which can help to disseminate an understanding of the importance of change. As an example of a bottom-up approach, the legitimacy provided by a respected organization’s backing of a change initiative can empower faculty who support the initiative to call it to the attention of department chairs.

Another point of leverage shared by professional organizations, according to Smith, is their ability to foster improvements in undergraduate teaching by making membership contingent on excellent teaching in addition to excellent research. He further noted that promotion of best practices by disciplinary societies and professional organizations can foster “coopetition,” a collaborative competitiveness to keep pace with peer institutions. Because of the strong identification that many faculty members feel with their disciplines, respected societies can influence how effective teaching is both defined and evaluated. Last, Smith mentioned that professional organizations can often encourage funding agencies to contribute resources toward improvements in undergraduate education.

Following the presentations, Michael Dennin (University of California, Irvine) raised the example of youth sports, in which a national-level organization defines professional standards for coaching that are subsequently implemented at the local level. In order to achieve successful systemic change around the use of effective teaching practices, Dennin asserted, a similar process should occur, in which statements on professional teaching are issued from a recognized national authority. Smith also acknowledged the role that national-level guidelines could play in improving the evaluation of teaching, given the existing evidence describing effective teaching.

Several workshop participants remarked on the importance of addressing the human aspects of a change initiative, including fear and vulnerability that faculty often feel around evaluations. Darby asked the panel about approaches to confront these human factors. Musante suggested the creation of groups or communities in which people can learn from and support each other during the change process. Dawson observed that the fear and vulnerability that exist around the evaluation of teaching can often be traced back to issues of an individual’s identity as a good teacher. According to Dawson, these fears can be addressed through thoughtful communication with all groups involved, as well as through one-on-one conversations, even though these efforts take considerable time. Wieman also noted that communication around change is vital, stating that although not everyone will agree on the change to be implemented, “everybody has to understand what’s being done and why it is being done, so that the discussions and disagreements are at least focused on the real issues.”

LOOKING FORWARD

Following the panel, participants broke into small groups to discuss potential next steps for recognizing and evaluating effective teaching. Key ideas that emerged from the breakout sessions included establishing a definition of excellent teaching and the associated expectations for faculty members, identifying the appropriate methods and measures to be used for evaluation, championing a commitment to diversity and inclusivity, and recognizing institution-specific factors that can contribute to long-term, systemic change.

Definitions and Expectations

Throughout the flash talks and panel discussions, several participants commented on the benefit of a definition of excellent teaching as a prerequisite for establishing effective evaluation methods. Dennin and Smith suggested that a common framework for identifying teaching excellence is necessary at the national level. Wieman stressed the importance of applying the same criteria across courses and departments. However, several other participants voiced a desire for context-dependent flexibility in the evaluation of teaching. Specifically, as noted by multiple participants, the mission of the institution, the discipline being taught, the type of faculty appointment (part- or full-time, tenure-stream or nontenure-stream), the career stage of the teacher, the type of teaching being performed (classroom or online), and the class size (large, required, entry-level class or smaller, elective upper-level class) might impact the evaluation process. Several participants, including Darby, Austin, and Singer, remarked on the benefit of considering related nonclassroom activities, such as mentoring, service work, and curriculum development, in the evaluation process. Weaver summed up the challenges inherent in establishing a definition of excellent teaching, asking, “Do we need to be to the point where all of the academics in the nation, or internationally, have come to an agreement on exactly what defines excellence in teaching, or do we have enough to make a start at changing the approaches to evaluation that currently exist?”

Methods and Measures

Several participants said that selecting appropriate evaluation methods and reliable outcome measures is of primary importance in creating an effective evaluation process. Although the larger aim of improving the evaluation process is to promote student learning, Fairweather noted that “assessment of teaching and [assessment] of student learning are not the same” and, therefore, it is important to clearly identify the target of evaluation, be it student learning or something else, before selecting valid measurement methods. Several participants, including Marcketti and Fairweather, noted challenges around the use of student learning as a proxy for effective teaching. One such challenge, according to Fairweather, is that “much student learning is longitudinal. It doesn’t show up in a class, it shows up down the road.” Fairweather further noted that, if proxies related to student learning outcomes are used for evaluation, each institution must decide which measures to use, including whether to employ mean assessment results or measures of student improvement, and whether “gateway” courses (courses agreed upon as difficult to teach) will receive greater weight in the evaluation process.

Wieman maintained that documenting the use of certain high-impact teaching practices is an alternative to using measures of student learning when evaluating teaching. However, he noted that a simple inventory of the practices used during teaching does not provide feedback on their effectiveness. Huber and Greenhoot also pointed out that an effective evaluation system systematically measures the intellectual work involved in planning instruction, in addition to the behavior observed in the classroom.

Flash talk presentations illustrated examples of institutions employing a suite of measures, including student ratings, portfolios, peer observations, and personal reflections. According to various participants, this combination of methods can provide a broader assessment of teaching than any single measure. A few participants further noted that the choice of appropriate measures of teaching effectiveness may depend on the overall purpose or function of the evaluation. Specifically, Stephanie Fabritius (Associated Colleges of the South) reported that her breakout group discussed the possibility of using different evaluation techniques for formative purposes, such as continuous improvement, than for summative purposes, such as promotion and tenure.

Diversity and Inclusion

The importance of addressing challenges around honoring diversity, establishing and maintaining inclusion, and avoiding bias in evaluation methods was repeatedly highlighted by several workshop participants. Concepts of diversity and inclusion, said Singer, “need to be much more at the center, and a driving factor in our conversations…about assessing teaching effectiveness.” Musante noted that, despite many efforts to close it, the opportunity gap for students from groups historically underrepresented in STEM fields has not narrowed, indicating that a “systems-level change-of-course” is needed. Musante reiterated the view that true excellence in teaching cannot exist without inclusion, and Mica Estrada (University of California, San Francisco) argued that the process of improving teaching evaluation is a good opportunity to redefine excellent teaching to include learning experiences that are inclusive of all students. Miller affirmed that efficacious teaching practices “create a sense of belonging for students in the classroom, and a welcoming space.” Further, Estrada asserted, efforts to improve the evaluation of teaching are opportunities for cultural transformation on campuses, through which existing biases supported by current evaluation methods, such as those against people of color or women in certain disciplines, can be eliminated. Establishing inclusive teaching and eliminating bias in evaluation methods, continued Estrada, requires inclusion throughout the entire change process, including diversity among the committees deciding what constitutes excellent teaching, among the students in any pilot group, and among administrators.

Implementation and Organizational Change

Austin noted that work around improving teaching assessment is essentially an organizational change challenge, and that treating it as such allows efforts to evolve from a “coalition of the willing” to long-lasting, systems-level change within institutions. Throughout the flash talks and panel discussions, participants discussed various practices important for advancing systemic change within and across institutions, including the use of multiple levers for change, clear and effective communication during all stages of the change process, and the identification and engagement of key stakeholders.

Several participants noted the importance of identifying and utilizing various external levers for change, including professional organizations and disciplinary societies that support the change initiative. These organizations, according to Miller, can both provide legitimacy to change efforts and convene groups of stakeholders, which can stimulate conversations within and between campuses. Dennin reported that his breakout group discussed peer rankings and a desire to stay competitive with peer institutions as a leverage point. Musante referred to her earlier presentation about the several ways that funding organizations can be levers for change. However, as expressed by Estrada, levers for systemic change may differ between institutions, so it is important for each to ask, “Who has pressure? Who has leverage? Who has influence that would help your institution be enthused about making change?”

To implement successful organizational-level change, several participants noted the importance of identifying the key on-campus collaborators and determining how to engage those individuals or groups. Involving the entire range of stakeholders, addressing their concerns, and meeting their goals can create a community of support around a change initiative that can help to ensure its institution-wide adoption, according to Dawson. Collaborative partners may vary by institution but, as noted by the designated reporters from several breakout groups, can include both STEM and non-STEM faculty, department chairs, students, union representatives, parents, campus centers for teaching and learning, campus governance, community business leaders, policy makers, accreditors, funders, and disciplinary societies. According to Austin, in order to implement systemic change, “we need to be able to work together effectively and think beyond our individual purview of teaching.”

Another significant aspect of successful organization-level change discussed by multiple participants was effective communication throughout all stages of the change process. According to Dawson, when changes in the evaluation of teaching are first considered, communication should focus on building community and collaboration. To gain the support of faculty members, it is helpful for early conversations to address the human elements of fear and vulnerability that surround the evaluation process. As noted earlier, Weaver and Fairweather described how changes to the way that teaching is valued are frequently incremental and that quick progress should not be expected. Several participants pointed out that, as the change process moves forward, effective communication can also involve providing appropriate faculty development for evaluators, administrators, and the faculty members under review. Specifically, noted Dennin, Huber, and Dawson, individuals overseeing promotion and tenure may need guidance on how to use new evaluation methods and assessment tools for administrative decisions and on how to ensure that expectations and evaluation criteria are clear to all. As presented in several flash talks, a “toolkit” of resources, including evaluation tools, supporting research, and metrics, can help guide both faculty and evaluators through the new evaluation process and provide them with practical alternatives to their current methods.

While the important steps in the process of improving the evaluation of teaching and many of the associated challenges may cut across the entire sector of higher education, Austin stated they play out differently in different institutional contexts. Box 1 pulls from the flash talks, panel presentations, and discussions to present important questions that institutions of all types could ask as they consider addressing issues around the way science teaching is recognized and evaluated on their campuses.

FINAL REMARKS AND REFLECTIONS

In closing, Austin reminded participants that the overarching goal is for the reform of teaching evaluation to fit within a larger national commitment to student learning. In comments and summative remarks throughout the workshop, multiple participants noted that currently accepted methods of teaching evaluation sit within the broader context of institutional culture, and that work to improve the evaluation of teaching can act as a lever for cultural change within institutions. For example, the flash talks and subsequent discussions highlighted the varying emphasis placed on teaching, and the balance struck between teaching and research, at different types of institutions. Austin noted that reform of teaching evaluation may involve a change in the way some institutions value teaching. Estrada stressed the importance of using the cultural change necessary for successful evaluation reform as a lever for eliminating long-held biases against certain groups and for promoting inclusivity and diversity. She cautioned, however, that the change process requires inclusion at every step. Fairweather warned against attempting to implement changes to an evaluation system without underlying systemic change in institutional culture, noting that, if following mandated guidelines for excellence in teaching does not ultimately appear to be valued in tenure and promotion decisions, faculty will be unlikely to support a new system. Austin summed up the magnitude of this task in her final remarks: “[Teaching evaluation] is not only an activity…[instead], a number of us would like to see it as an avenue into the bigger issue of what kind of teaching and learning experiences are necessary for the context that we’re in as a country and in the global environment. That’s a big challenge for all of us.”

PLANNING COMMITTEE FOR WORKSHOP ON RECOGNITION AND EVALUATION OF SCIENCE TEACHING IN HIGHER EDUCATION

Ann E. Austin (Chair), Michigan State University; Christine Broussard, University of La Verne; Noah Finkelstein, University of Colorado Boulder; Andrea Follmer Greenhoot, University of Kansas; Mark Lee, Spelman College; Emily Miller, Association of American Universities; Barbara Schaal, Washington University in St. Louis; and Gabriela Weaver, University of Massachusetts.

DISCLAIMER: This Proceedings of a Workshop—in Brief was prepared by Susan J. Debad, rapporteur, as a factual summary of what occurred at the workshop. The statements made are those of the rapporteur or individual meeting participants and do not necessarily represent the views of all meeting participants; the planning committee; the Roundtable on Systemic Change in Undergraduate STEM Education; or the National Academies of Sciences, Engineering, and Medicine. The planning committee was responsible only for organizing the public session, identifying the topics, and choosing speakers.

REVIEWERS: To ensure that it meets institutional standards for quality and objectivity, this Proceedings of a Workshop—in Brief was reviewed by Diane O’Dowd, University of California, Irvine, and Emily Miller, Association of American Universities. Kirsten Sampson Snyder, National Academies of Sciences, Engineering, and Medicine, served as review coordinator.

SPONSORS: The workshop was supported by the National Science Foundation via a subaward from the University of Massachusetts, Amherst. Financial support was also provided by the Association of American Universities.

Suggested citation: National Academies of Sciences, Engineering, and Medicine. (2020). Recognizing and Evaluating Science Teaching in Higher Education: Proceedings of a Workshop—in Brief. Washington, DC: The National Academies Press. https://doi.org/10.17226/25685.

Division of Behavioral and Social Sciences and Education

Copyright 2020 by the National Academy of Sciences. All rights reserved.