Summary

The U.S. Department of State needs Foreign Service officers who are proficient in the local languages of the countries where its embassies are located. To ensure that the department’s workforce has the requisite level of language proficiency, its Foreign Service Institute (FSI) provides intensive language instruction to Foreign Service officers and formally assesses their language proficiency before they take on an assignment that requires the use of a language other than English. The State Department uses the results of the FSI assessment to make decisions related to certification, job placement, promotion, retention, and pay.

To help FSI keep pace with current developments in language assessment, the agency asked the National Academies of Sciences, Engineering, and Medicine to conduct a review of the strengths and weaknesses of some key assessment¹ approaches that are available for assessing language proficiency² that FSI could apply in its context. FSI requested a report that provides considerations about relevant assessment approaches without making specific recommendations about the approaches the agency should adopt and without evaluating the agency’s current testing program. This request included an examination of important technical characteristics of different assessment approaches. The National Academies formed the Committee on Foreign Language Assessment for the U.S. Foreign Service Institute to conduct the review.

___________________

¹ Although in the testing field “assessment” generally suggests a broader range of approaches than “test,” in the FSI context both terms are applicable, and they are used interchangeably throughout this report.

² This report uses the term “language proficiency” to refer specifically to second and foreign language proficiency, which is sometimes referred to in the research literature as “SFL” or “L2” proficiency. The report does not address the assessment of language proficiency of native speakers (e.g., as in an assessment of the reading or writing proficiency of U.S. high school students in English) except in the case of native speakers of languages other than English who need to certify their language proficiency in FSI’s testing program.

Specific choices of individual assessment methods and task types have to be understood and justified in the context of the specific ways that test scores are interpreted and used, rather than in the abstract: more is required than simply choosing an oral interview or a computer-adaptive reading test. The desirable technical characteristics of an assessment result from an iterative process that shapes key design and implementation decisions while considering evidence about how well those decisions fit the specific context in which the assessment will be used. The committee calls this view a “principled approach” to assessment.

USING A PRINCIPLED APPROACH TO DEVELOP LANGUAGE ASSESSMENTS

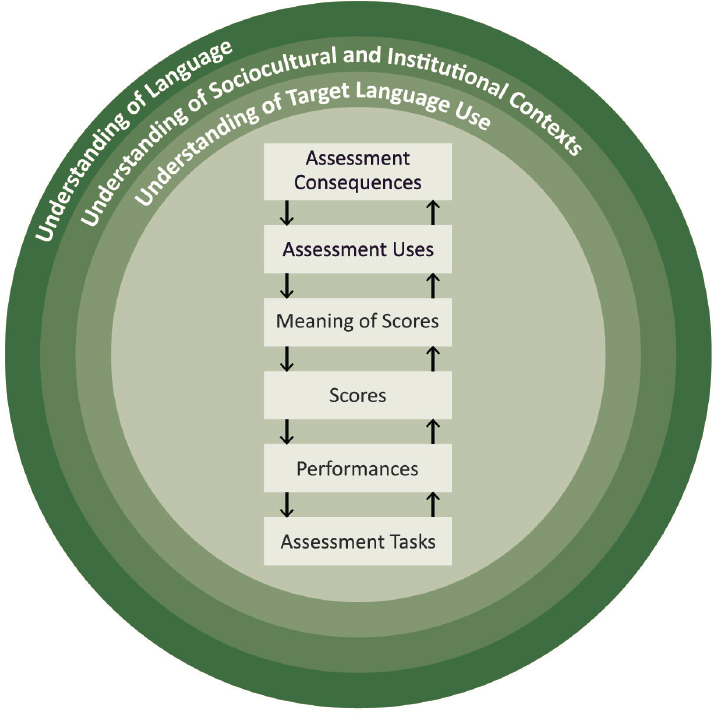

The considerations involved in developing and validating language assessments and the ways they relate to each other are shown in Figure S-1. The assessment and its use are in the center of the figure, with the boxes and arrows describing the processes of test development and validation. Surrounding the assessment and its use are the foundational considerations that guide language test development and validation: the understanding of language, the contexts influencing the assessment, and the target language use that is the focus of the assessment.

A principled approach to language test development explicitly takes all these factors into account, using evidence about them to develop and validate a test. In particular, a principled approach considers evidence in two complementary ways: (1) evidence that is collected as part of the test about the test takers to support inferences about their language proficiency, and (2) evidence that is collected about the test and its context to evaluate the validity of its use and improve the test over time.

FOUNDATIONAL CONSIDERATIONS

One key aspect of a principled approach to developing language assessments involves the understanding of how the target language is used in real life and how that use motivates the assessment of language proficiency. This understanding is crucial not only for initial test development, but also for evaluating the validity of the interpretations and uses of test results and for improving a test over time. There are a number of techniques for analyzing language use in a domain that could be used to refine FSI’s current understanding of language use in the Foreign Service context.

Research in applied linguistics over the past few decades has led to a nuanced understanding of second and foreign language proficiency that goes well beyond a traditional focus on grammar and vocabulary. This newer perspective highlights the value of the expression of meanings implied in a given context, multiple varieties of any given language, the increasing use of multiple languages in a single conversation or context, and the recognition that communication in real-world settings typically uses multiple language skills in combination, frequently together with nonlinguistic modalities, such as graphics and new technologies.

Many of these more recent perspectives on language proficiency are relevant to the language needs of Foreign Service officers, who need to use the local language to participate in meetings and negotiations, understand broadcasts and print media, socialize informally, make formal presentations, and communicate using social media. The challenges presented by this complex range of Foreign Service tasks are reflected in the current FSI test and its long history of development.

THE CURRENT FSI TEST

FSI’s current test is given to several thousand State Department employees each year. It is given in 60 to 80 languages, with two-thirds of the tests in the five most widely used languages (Arabic, French, Mandarin Chinese, Russian, and Spanish). The assessment involves a set of verbal exchanges between the test taker and two evaluators: a “tester,” who speaks the target language of the assessment and interacts with the test taker only in the target language, and an “examiner,” who does not necessarily speak the target language and interacts with the test taker only in English.

The test includes two parts: a speaking test and a reading test. The speaking test involves (1) conversations between the test taker and the tester about several different topics in the target language; (2) a brief introductory statement by the test taker to the tester, with follow-up questions; and (3) the test-taker’s interview of the tester about a specific topic, which is reported to the examiner in English. The reading test involves reading several types of material in the target language—short passages for gist and longer passages in depth—and reporting back to the examiner in English, responding to follow-up questions from the examiner or the tester as requested.

The tester and the examiner jointly determine the test-taker’s scores in speaking and reading through a deliberative, consensus-based procedure, considering and awarding points for five factors: comprehension, ability to organize thoughts, grammar, vocabulary, and fluency. The final reported scores are based on the proficiency levels defined by the Interagency Language Roundtable (ILR), a group that coordinates second and foreign language training and testing across the federal government. The ILR level scores are linked to personnel policies, including certification, job placement, retention in the Foreign Service, and pay.

POSSIBLE CHANGES TO THE FSI TEST

The committee considered possible changes to the FSI test that might be motivated in response to particular goals for improving the test. Such goals might arise from an evaluation of the validity of the interpretations and uses of the test, guided by a principled approach, which suggests particular ways the current test should be strengthened. Table S-1 summarizes changes that the committee considered for the FSI test in terms of some potential goals for strengthening the current test. Given its charge, the committee specifically focused on possible changes that would address goals for improvement related to the construct assessed by the test, and the reliability and fairness of its scores. In addition, the committee noted potential instructional and practical considerations related to these possible changes.

TABLE S-1 Possible Changes to the FSI Test to Meet Potential Goals

| Possible Change | Potential Test Construct, Reliability and Fairness Considerations | Potential Instructional and Practical Considerations |
|---|---|---|
| Using Multiple Measures | | |
| Scoring Listening on the Speaking Test | | |
| Adding Target-Language Writing as a Response Mode for Some Reading or Listening Tasks | | |
| Adding Paired or Group Oral Tests | | |
| Using Recorded Listening Tasks That Use a Range of Language Varieties and Unscripted Texts | | |
| Incorporating Language Supports (such as dictionary and translation apps) | | |
| Adding a Scenario-Based Assessment | | |
| Incorporating Portfolios of Work Samples | | |
| Adding Computer-Administered Tests Using Short Tasks in Reading and Listening | | |
| Using Automated Assessment of Speaking | | |
| Providing Transparent Scoring Criteria | | |
| Using Additional Scorers | | |
| Providing More Detailed Score Reports | | |

CONSIDERATIONS IN EVALUATING VALIDITY

Evaluating the validity of the interpretation and use of test scores is central to a principled approach to test development and use. Such evaluations consider many different aspects of the test, its use, and its context.

Several kinds of evidence could be key parts of an evaluation of the validity of using FSI’s current test:

- comparisons of the specific language-related tasks carried out by Foreign Service officers with the specific language tasks on the FSI test;

- comparisons of the features of effective language use by Foreign Service officers in the field with the criteria that are used to score the FSI test;

- comparisons of the beliefs that test users have about the meaning of different FSI test scores with the actual proficiency of Foreign Service officers who receive those scores; and

- comparisons of the proficiency of Foreign Service officers in using the local languages to carry out typical tasks with the importance of those tasks to the job.

Because the FSI test is a “high-stakes” test (one that is used to make consequential decisions about individual test takers), it is especially important that it adhere to well-recognized professional test standards. One key aspect of professional standards is careful and systematic documentation of the design, administration, and scoring of a test, a good practice that helps ensure the validity, reliability, and fairness of the interpretations and decisions supported by a testing program.

BALANCING EVALUATION AND THE IMPLEMENTATION OF NEW APPROACHES

At the heart of FSI’s choice about how to strengthen its testing program lies a decision about the balance between (1) conducting an evaluation to understand how the current program is working and to identify changes that might be made in light of a principled approach to assessment design, and (2) starting to implement possible changes. Both are necessary for test improvement, but given limited time and resources, how much emphasis should FSI place on each?

Two questions can help address this tradeoff:

- Does the FSI testing program have evidence related to the four example comparisons listed above?

- Does the program incorporate the best practices recommended by various professional standards?

If the answer to either of these questions is “no,” it makes sense to place more weight on the evaluation side to better understand how the current program is working. If the answer to both questions is “yes,” there is probably sufficient evidence to place more weight on the implementation side.
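The two-question rule above can be written out as a small decision function. This is only an illustrative sketch: the function name, parameter names, and return labels are hypothetical and are not part of the report or of any FSI procedure, and in practice each answer is likely to be a matter of degree rather than a strict yes or no.

```python
def suggested_emphasis(has_validity_evidence: bool,
                       follows_professional_standards: bool) -> str:
    """Return the side the two-question rule suggests weighting more heavily.

    has_validity_evidence: hypothetical flag for whether the program has
        evidence related to the four example comparisons.
    follows_professional_standards: hypothetical flag for whether the program
        incorporates the best practices recommended by professional standards.
    """
    # Both answers must be "yes" before more weight shifts to implementation.
    if has_validity_evidence and follows_professional_standards:
        return "implementation"
    # A "no" to either question points toward more evaluation first.
    return "evaluation"


if __name__ == "__main__":
    for evidence in (True, False):
        for standards in (True, False):
            print(evidence, standards, suggested_emphasis(evidence, standards))
```

The function returns only which side to weight more heavily, not an exclusive choice, because the text treats both evaluation and implementation as necessary.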

On the evaluation side, one important consideration is the institutional structure that supports research at FSI and provides an environment that allows continuous improvement. Many assessment programs incorporate regular input from researchers into the operation of their program, either from technical advisory groups or from visiting researchers and interns. Both of these routes allow assessment programs to receive new ideas from experts who understand the testing program and can provide tailored advice.

On the implementation side, options for making changes may be constrained by two long-standing FSI policies:

- Assessing all languages with the same approach: the desire for comparability that underlies this policy is understandable, but what is essential is the comparability of results from the test, not the comparability of the testing processes.

- The use of the ILR framework: the ILR framework is useful for coordinating personnel policies across government agencies, but that does not mean it has to be used for all aspects of the FSI test.

These two policies may be more flexible than it might seem, so FSI may have substantially more opportunity for innovation and continuous improvement in its testing program than has been generally assumed.

Complicated choices will need to be made about how to use a principled approach to assessment, select which language assessment options to explore, and set the balance between evaluation and implementation. In requesting this report, FSI has clearly chosen a forward-looking strategy. Using this report as a starting point and thinking deliberatively about these complicated choices, FSI could enhance its assessment practices by improving its understanding of the test construct and how it is assessed; the reliability of the test scores and the fairness of their use; the potential beneficial influence of the test on instruction; and the understanding, usefulness, and acceptance of the assessment across the State Department community.