2

Modeling the Electric System: Approaches and Challenges

The workshop was bookended by two keynote addresses. John Weyant, Stanford University and Energy Modeling Forum (EMF), offered a conceptual overview of why models are used, their limitations and challenges, and future opportunities. John Grosh, Lawrence Livermore National Laboratory (LLNL), described several large-scale efforts to integrate models across domains and sectors to address anticipated needs for informing electric system design and event response planning.

MODELING THE ELECTRICITY SUBSECTOR FOR ENERGY POLICY AND STRATEGY ANALYSIS

John Weyant, Stanford University and Energy Modeling Forum

Weyant began the workshop with an introduction to the use of modeling in the electric subsector. While he recognized that models present many challenges, he stressed that they are well worth the effort when created and used appropriately. Most often, he asserted, the downsides of modeling stem not from the models themselves, but from the ways they are used.

The Value of Modeling

Modeling any complex process is difficult; the outputs of various models often differ dramatically and are rarely precise. Electric system modeling in particular has always been especially difficult, but the challenge has become much greater over the past 30 years in the context of rapid changes in the technologies, institutions, and regulations that shape our electric systems. Modelers struggle to keep up with these developments while attempting to understand a future that is likely to have little resemblance to the past.

Despite these daunting challenges, modeling is worth doing, Weyant asserted, because it can provide context for anticipating the costs, market dynamics, environmental impacts, and distributional impacts of different technologies or policies. For example, models can help researchers understand how emerging issues, such as increased use of electric vehicles or growing wildfire risks from downed power lines, may impact capacity planning, production cost, load flow, and other key factors.

Weyant noted that there is some disagreement regarding the relative importance of modeling versus experience in the ability to respond to circumstances that are in a constant state of change. The two can be bridged, he suggested, by incorporating expert opinions across scientific disciplines to understand the growing number of complex interactions within and across sectors. He stressed that even inaccurate forecasts can be useful for exploring possible futures, gathering information, and exchanging ideas.

Modeling Challenges

Weyant highlighted several key challenges involved in the creation and application of models. Chief among them is the difficulty of matching the right model with the right problem. “These studies can tell us about likely cost-market dynamics, environmental impacts, and distributable impacts—but choosing and designing the right model for a given question remains an ongoing challenge,” Weyant said. “There’s little consensus for when details can be ignored, and the cost of building a new model sometimes encourages the use of suboptimal existing models.”

When it comes to creating models, a pervasive challenge is the large number of variables involved. Modeling electric power systems can require accounting for a wide range of power sources, local geographies, and climate considerations; multiple and overlapping jurisdictions, such as federal, state, or local governments; multiple stakeholder interests, such as producers, customers, and regulators; and the pervasive uncertainties endemic to system operation, such as policy changes and unexpected outages. New risks, such as cybersecurity threats, are constantly emerging, further complicating the picture.

The application of models in the context of decision making poses additional challenges. Use cases, which Weyant described as “a specific problem that a number of stakeholders have consistent interest in or well-known differences of opinion about,” are often poorly defined. Addressing the smaller-scale dynamics necessary to understand what models mean for very different geographic regions is another common challenge. Last, there is a disconnect between the experts who develop the models and the decision makers who rely on the information that models produce. Challenges related to characterizing uncertainty and communicating about model outcomes can lead to misunderstandings and ineffective model use.

“The models could be used in a totally useless way … but they also, I think, could be used quite productively,” Weyant said. “Often, it’s not the models themselves, it’s how they’re used that tends to be the constraint on them being more used and useful.”

Making Better Models

Weyant expressed his view that this multifaceted challenge is an optimization problem that can be solved by making better models, with the goals of minimizing costs, maximizing revenue, and matching supply with demand, while understanding constraints and possible market pivots or evolutions. For example, the increasing impact of multisector, dynamic interactions on the electric system necessitates the inclusion of as many interactions as possible in new models. However, he allowed that a model with certain exclusions could still be useful as long as its users are aware of the parameters and exclusions. If a model for the western United States does not account for wildfires or drought, for example, another researcher could add those event possibilities as needed.

Weyant highlighted several example models and frameworks. One useful model is the Electric Power Research Institute (EPRI) in-house energy-economic model, U.S. Regional Economy, Greenhouse Gas, and Energy (US-REGEN), whose outputs include generation, capacity, prices, end-use mix, emissions, air quality, and water. It also supports model-to-model comparisons that provide deeper insight into outcomes, such as emissions from different generation methods.

Another useful model is EMF’s Program on Coupled Human and Earth Systems (PCHES). This multisector framework can be valuable for considering a wide range of factors, such as water, land, and socioeconomic conditions, to understand how events might drive electric system demand. PCHES’s strengths include its fine-scale climate data translation and use of large-scale Earth systems information, although it falls short in its ability to address administrative and bureaucratic complexities and to characterize uncertainty, Weyant noted.

Wrapping up, Weyant suggested that future models could be improved by implementing machine learning and data assimilation techniques, incorporating ideas from behavioral and institutional economics, and taking advantage of increased data generation and computing power to create broad-based model diagnostics.

Discussion

Morgan moderated a question-and-answer period that focused on understanding uncertainties, human behavior, and how political realities influence the use of models.

Dealing with Uncertainties

A participant asked Weyant to expand on how modeling could be better used to understand the impacts of disruptive technologies and events, which are by definition difficult to predict. Weyant replied that it is important for researchers to think as broadly as possible, perhaps collaborating with futurists alongside more conventional technology analyses. He emphasized that the difficulty of modeling extreme and unpredictable events means that this modeling deserves more effort, not less. Building on this point, Morgan stressed the importance of thinking long term—on the scale of decades rather than years—and incorporating multiple perspectives in order to foresee so-called black swan events that are in fact becoming less and less rare.

Asked how modelers could better account for uncertainty, Weyant replied that it is important to carefully frame the problem at the outset, understand what information is required, and then design or apply a model to answer the question, rather than applying models to questions that decision makers are not in fact asking or forcing a question into a model that is ill-suited to answering it. Another attendee stressed the importance of including investor-owned utilities (IOUs) in the modeling process, noting that the California electricity shortage in the early 2000s resulted from the state government ignoring key uncertainties, partly because IOUs were not appropriately included in discussions.

Human Behavior

Morgan raised the importance of institutional or behavioral dimensions for creating change. Acknowledging that it can be difficult for institutions to act on a model’s suggestions, Weyant said that it is important to study the human dimension in order to understand why.

Political Realities

Politics also has an effect on how models are used. A participant asked Weyant to expand on revenue recycling, particularly in an era of frequent political and media controversies. Weyant pointed to EMF’s attempt to model for clean power in a politically neutral way, which created productive outcomes but was also subject to political and institutional negotiations. He suggested that other experts could further elaborate about the interaction between modeling and politics.

THE GRID MODERNIZATION LABORATORY CONSORTIUM AND THE NORTH AMERICAN ENERGY RESILIENCE MODEL

John Grosh, Lawrence Livermore National Laboratory

Grosh closed the workshop with a reflection on modeling needs and opportunities. He shared examples of several emerging tools and detailed a large-scale effort to better understand how an extreme event would affect the grid and its interdependencies.

Next-Generation Tools

The U.S. Department of Energy is driving the creation of next-generation tools to accomplish three goals: (1) creating cross-domain simulations that can account for the world’s increasing interdependencies; (2) minimizing solution times so that research innovations can become real-world tools quickly; and (3) planning for unpredictable events such as natural disasters or cyberattacks to help decision makers understand their consequences. While these tools will take years to mature, work is well under way.

Creating these tools requires collaboration and shared goal setting. To increase both, leaders from multiple national laboratories created the Grid Modernization Laboratory Consortium (GMLC) and launched the Grid Modernization Initiative (GMI) to focus on six grid modernization objectives. Most relevant to this workshop is the objective of designing and planning next-generation tools to address evolving needs.

This objective has three components: (1) building a software framework to enable coupling and cross-grid modeling; (2) incorporating uncertainty and system dynamics into the tools for accurate modeling; and (3) creating computational innovations to enable enormous performance improvements. The GMLC will address the capability gaps, partner with industry to refine the tools, and transition them into practice on the grid. Each tool will be carefully measured through assessments of how it impacts the economy, how it improves reliability and resilience, and how it can be enhanced via computational technologies and high-performance computing (HPC).

Achievements to Date

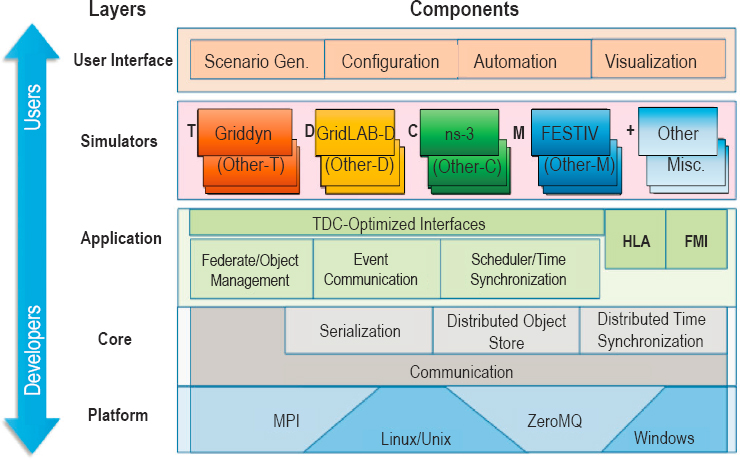

The GMLC has initiated multiple planning and design artifacts, including software, data sets, and algorithms. The first artifact Grosh described, HELICS, is an open source co-simulation platform that enables the coupling of multiple simulations in order to combine models for different elements such as transmission, distribution, communications, and the economy (Figure 2.1). This platform, which merges multiple base technologies created at several U.S. Department of Energy laboratories (Pacific Northwest National Laboratory [PNNL], Lawrence Livermore National Laboratory [LLNL], and National Renewable Energy Laboratory [NREL]) in order to run them together as a unified simulation, is an important advancement to help planners understand complex emerging issues such as distributed energy resources (DERs) integration and two-way power flows, Grosh said.
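As a rough illustration of the general co-simulation idea described above, the following minimal Python sketch couples two toy models that advance in lockstep and exchange values at every time step. It does not use the HELICS API. The class names, the load and voltage formulas, and all numbers are hypothetical and were chosen only to make the exchange pattern visible.

```python
"""Toy co-simulation loop: two models advance in lockstep and swap values.

This is NOT the HELICS API. All names, formulas, and numbers are hypothetical.
"""


class DistributionModel:
    """Hypothetical feeder whose load responds to the supply voltage."""

    def __init__(self, base_load_mw):
        self.base_load_mw = base_load_mw

    def step(self, voltage_pu):
        # Constant-impedance load: demand scales with voltage squared.
        return self.base_load_mw * voltage_pu ** 2


class TransmissionModel:
    """Hypothetical bulk system whose bus voltage sags as load rises."""

    def __init__(self, sag_per_mw):
        self.sag_per_mw = sag_per_mw

    def step(self, load_mw):
        return 1.0 - self.sag_per_mw * load_mw


def cosimulate(transmission, distribution, steps):
    """Advance both models together, exchanging values every time step."""
    voltage_pu = 1.0  # initial value published by the transmission side
    history = []
    for t in range(steps):
        load_mw = distribution.step(voltage_pu)  # distribution reads voltage
        voltage_pu = transmission.step(load_mw)  # transmission reads load
        history.append((t, load_mw, voltage_pu))
    return history


if __name__ == "__main__":
    trace = cosimulate(TransmissionModel(0.001), DistributionModel(50.0), 5)
    for t, load_mw, voltage_pu in trace:
        print(f"t={t} load={load_mw:.3f} MW voltage={voltage_pu:.4f} pu")
```

Each model stays a separate object with its own internal logic, and only the coupling loop knows about both. A platform of the kind Grosh described would presumably replace this simple loop with time synchronization and message passing across independently developed simulators.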

The second artifact is multiscale production cost modeling (PCM) to predict generation dispatch, commitment, and costs. PCM tools are used widely to perform economic assessments for renewable integration, transmission, and generation planning. Fully detailed regional models are so computationally intensive that they require simplification, which reduces accuracy. Researchers at NREL and Sandia National Laboratories have made significant advances in reducing time-to-solution by dividing the modeling so that it can be run in parallel on different high-performance computers. For a high-resolution Eastern Interconnect model, NREL reduced the run time from a year of desktop computer time to about 1 week on a high-performance computing system. Historically, the electric grid community has been resistant to using HPC systems, Grosh said, although he noted that this has changed over the past decade with the introduction of commercial (e.g., PLEXOS) and open source (e.g., Prescient) tools.
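The idea of dividing a production cost run so that pieces can be solved in parallel can be sketched with a toy example. The Python code below is only an illustration under strong assumptions: it uses a simple merit-order dispatch with no unit commitment or network constraints, a hypothetical three-generator fleet, and a thread pool standing in for separate HPC nodes. It is not the method used by NREL or Sandia.

```python
"""Toy production cost model: hourly merit-order dispatch, run in chunks.

A sketch only: real production cost models also solve unit commitment and
network constraints. Generator data and demand values are hypothetical.
"""
from concurrent.futures import ThreadPoolExecutor

# Hypothetical fleet: (name, capacity in MW, marginal cost in $/MWh)
GENERATORS = [("wind", 40.0, 0.0), ("coal", 100.0, 25.0), ("gas", 80.0, 40.0)]


def dispatch_hour(demand_mw, generators=GENERATORS):
    """Fill demand from the cheapest generators first; return (dispatch, cost)."""
    remaining = demand_mw
    dispatch, cost = {}, 0.0
    for name, capacity_mw, marginal_cost in sorted(generators, key=lambda g: g[2]):
        output_mw = min(capacity_mw, remaining)
        dispatch[name] = output_mw
        cost += output_mw * marginal_cost
        remaining -= output_mw
    if remaining > 1e-9:
        raise ValueError(f"unserved demand of {remaining:.1f} MW")
    return dispatch, cost


def cost_of_chunk(hourly_demand_mw):
    """Total production cost for one contiguous block of hours."""
    return sum(dispatch_hour(d)[1] for d in hourly_demand_mw)


def total_cost_parallel(hourly_demand_mw, chunk_hours=2, workers=2):
    """Split the horizon into chunks and evaluate the chunks concurrently."""
    chunks = [hourly_demand_mw[i:i + chunk_hours]
              for i in range(0, len(hourly_demand_mw), chunk_hours)]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return sum(pool.map(cost_of_chunk, chunks))


if __name__ == "__main__":
    demand = [60.0, 120.0, 180.0, 90.0]
    print(total_cost_parallel(demand))
```

In this toy case the hours are independent, so splitting the horizon changes nothing in the answer. In a detailed model, commitment decisions link adjacent hours, which is one reason that decomposing the problem without losing accuracy is a research challenge rather than a bookkeeping step.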

The third artifact, extreme event modeling, is intended to better incorporate stochastics and uncertainty. It combines multiple components to model cascading effects and perform contingency analyses that help inform defensive actions. Improvements to this tool, such as accelerating dynamic electromechanical simulations, reduced output time from weeks to minutes at very low cost, demonstrating that existing systems can be reworked to enhance analysis without requiring development of a high-cost system from whole cloth.
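The notions of contingency analysis and cascading effects can be made concrete with a deliberately simplified Python sketch. It assumes a hypothetical set of parallel lines that share a fixed flow equally, which ignores the physics of real power flow, and it trips any line loaded above its capacity until the system settles. The line names and ratings are invented for illustration and do not represent the GMLC tool.

```python
"""Toy N-1 contingency screen with cascading overloads.

Assumes parallel lines share the total flow equally, which is a gross
simplification of real power flow. All names and ratings are hypothetical.
"""


def simulate_cascade(capacities_mw, total_flow_mw, initial_outage):
    """Return the set of lines still in service after the cascade settles."""
    in_service = set(capacities_mw) - {initial_outage}
    while in_service:
        share_mw = total_flow_mw / len(in_service)
        overloaded = {line for line in in_service
                      if share_mw > capacities_mw[line]}
        if not overloaded:
            break  # every remaining line can carry its share
        in_service -= overloaded  # overloaded lines trip, flow is re-shared
    return in_service


def n_minus_1_screen(capacities_mw, total_flow_mw):
    """Try each single-line outage and report which lines survive."""
    return {line: sorted(simulate_cascade(capacities_mw, total_flow_mw, line))
            for line in capacities_mw}


if __name__ == "__main__":
    ratings = {"A": 60.0, "B": 45.0, "C": 40.0}
    for outage, survivors in n_minus_1_screen(ratings, 90.0).items():
        status = ", ".join(survivors) if survivors else "total loss"
        print(f"outage of {outage}: surviving lines = {status}")
```

With these hypothetical ratings, losing the weakest line is harmless, while losing either of the stronger lines overloads the rest in sequence. Screening every single outage in this way is the basic pattern behind identifying which contingencies warrant defensive action.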

Grosh also briefly described an effort to create a unified grid math library to integrate optimization of dynamics and uncertainty. While he said that the idea addresses key needs in grid modeling, he noted that the transition into practice has been difficult due to the cost of reengineering existing software and the lack of a clear business case for such enhancements.

The North American Energy Resilience Model

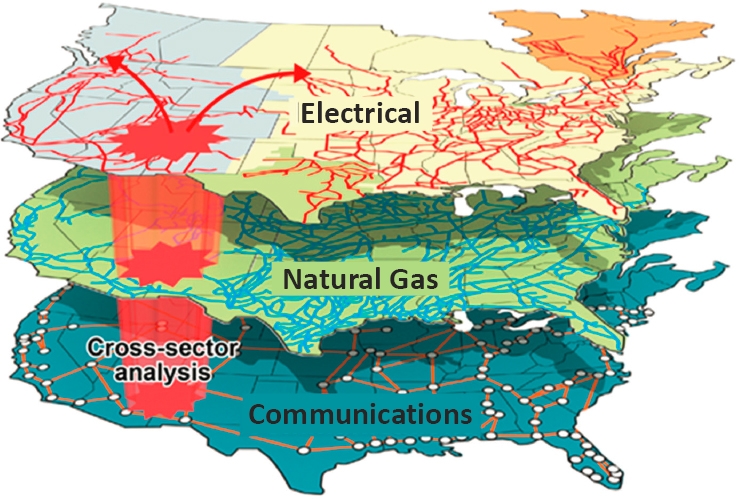

Grosh then discussed the North American Energy Resilience Model (NAERM), which was created in response to the devastation of Puerto Rico’s electric grid after Hurricane Maria, an unprecedented event that exceeded utility, regulatory, and industry boundaries. The project’s goal is to develop a modeling and simulation system to understand energy, communications, and natural gas system interdependencies; rapidly predict national consequences of an extreme event; and model responses to create solutions (Figure 2.2).

NAERM will ingest threat and hazard information into an integrated modeling environment that can include multiple data streams. When completed, it will be able to launch models, archive data sets, manage all computing and security, and enable analyses such as predicting outages or impacts, performing economic and resiliency assessments, proposing operations options, and creating near-real-time situational awareness, Grosh said.

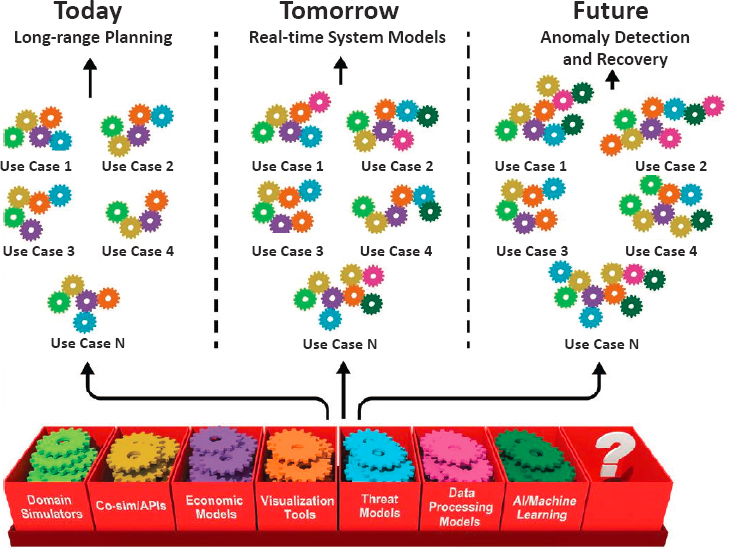

NAERM is not one monolithic piece of software, but rather a toolbox of different elements that can be assembled in relation to the threat being addressed (Figure 2.3). “As we face these new challenges in grid modeling … we need a flexible strategy, [to] be able to assemble modeling solutions,” Grosh said. To that end, NAERM aims to allow users to stack different models and codes together and manage a sequence of workflows and models in multiple ways to account for a wide range of scenarios, from a slow-moving hurricane to a sudden high-frequency electromagnetic pulse.
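The toolbox approach, in which models are stacked into a workflow suited to a given threat, can be sketched in a few lines of Python. The stage functions, field names, and formulas below are hypothetical placeholders and are not drawn from NAERM. The point is only that each stage reads a shared state and adds its results, so stages can be assembled in different combinations.

```python
"""Toy workflow assembled from interchangeable model stages.

Every stage takes a state dictionary and returns an updated copy.
Stage names, fields, and formulas are hypothetical, not NAERM components.
"""


def hurricane_hazard(state):
    """Hypothetical hazard model: line damage grows with wind speed."""
    wind_mph = state["wind_speed_mph"]
    damage = min(1.0, max(0.0, (wind_mph - 75.0) / 100.0))
    return {**state, "line_damage_fraction": damage}


def electric_outage(state):
    """Hypothetical outage model: customers lost scale with line damage."""
    customers_out = round(state["customers"] * state["line_damage_fraction"])
    return {**state, "customers_out": customers_out}


def gas_interdependency(state):
    """Hypothetical coupling: grid damage reduces gas delivery."""
    delivery = 1.0 - 0.5 * state["line_damage_fraction"]
    return {**state, "gas_delivery_fraction": delivery}


def run_workflow(stages, state):
    """Run the chosen stages in order, passing the state along."""
    for stage in stages:
        state = stage(state)
    return state


if __name__ == "__main__":
    scenario = {"wind_speed_mph": 125.0, "customers": 1000}
    workflow = [hurricane_hazard, electric_outage, gas_interdependency]
    print(run_workflow(workflow, scenario))
```

A different threat would swap in a different hazard stage while reusing the outage and interdependency stages, which is the flexibility the toolbox framing is meant to provide.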

Concluding, Grosh identified future needs and opportunities. He urged a close examination of approaches to modeling uncertainty and error; suggested that a yearly conference be held to facilitate dynamic exchange of ideas among vendors, users, and researchers; and stressed the importance of effectively extending and connecting software to create truly integrated systems.

Discussion

Following Grosh’s remarks, Jeffery Dagle, PNNL, moderated a short question-and-answer period. A participant asked Grosh to reflect on lessons learned about the power system since beginning the work he described. Grosh replied that he was impressed with the richness of the field’s tools and capabilities. Jason Fuller, PNNL, added that from his perspective, disentangling modeling limitations from systems limitations has posed an interesting challenge. Another participant shared that he was glad to learn that the relationship between gas disruption and electricity was more robust than he had thought.

J.P. Watson, LLNL, asked if previously unknown vulnerabilities had been surfaced through these modeling approaches, especially in the context of attacker-defender models. Grosh assented and said that he was surprised to learn how much work is needed to align current models with today’s threat environment. He added that while progress is being made on anticipating and modeling the impacts of major disruptions, capabilities for modeling response and recovery approaches are far less mature.

Another participant asked how realistic it is to believe that different software can be effectively integrated, especially considering that some are proprietary while others are open source. Grosh answered that the NAERM team is developing an open architecture to integrate commercial software. The team is working with software vendors to develop interfaces and utilities to connect these tools into the NAERM framework. For advanced NAERM features, the team is preparing a roadmap to address critical changes such as extending planning models to incorporate protection systems. Software vendors have largely been cooperative, he said. Getting the required data sets, on the other hand, has proved more challenging.

Another participant asked if healthcare infrastructure was being included in NAERM. Grosh replied that that was outside the project’s scope and would be addressed by other agencies.