9

Reflections on the Democratization of Emerging Technologies at Scale

Margaret Kosal, Georgia Institute of Technology

Margaret Kosal is an associate professor at the Georgia Institute of Technology, where, among other activities, she directs the Sam Nunn Security Fellows Program.

She began her talk by noting that, in general, scientists' and policymakers' ability to predict and anticipate the next misuse of technology has historically been limited by a lack of creativity and by a narrow focus on complex, hard technologies with large impact. Yet, she argued, the slow, trivial mechanisms that non-state actors use to sow confusion and impede responses continue to surprise us. For example, she noted that mace spray was used to render people helpless during the 9/11 terrorist attacks, even though it was the use of box cutters that was widely reported. She warned those watching for the next big thing that they continue to ignore easy, slow, non-disruptive techniques "at your own risk."

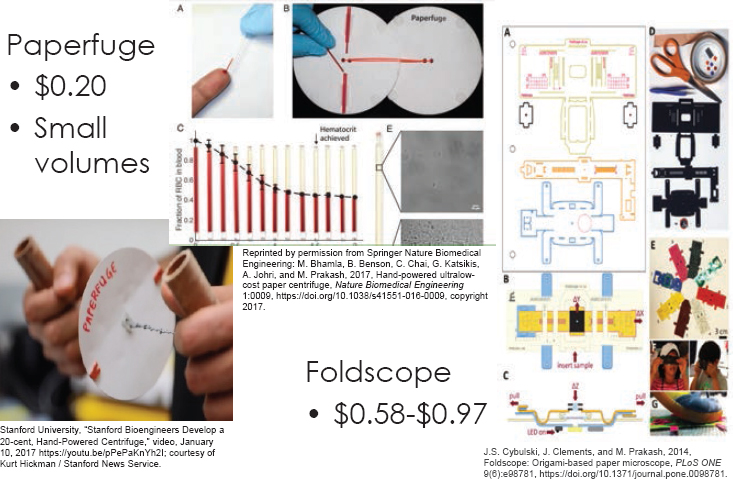

Kosal used the example of frugal science to highlight her point. Frugal science, defined by G.M. Whitesides as "designed to generate knowledge (and technology based on that knowledge) with cost as an integral part of the subject," is promoted as a good thing by academics.1 Kosal acknowledged that frugal science has led to improvements in the developing world, citing the development of paper-based microfluidic products (see Figure 9.1). She noted that, in frugal science applications, one does not need stringent standards or controls for success. Standards for success are lower, she explained, because the effect has already been established and is now being used in real-world situations, under non-ideal conditions. Kosal advised that frugal science that helps the broader population should be supported, but that when the ability to perform certain technical tasks becomes inexpensive, lowering the barriers to proliferation, one needs to watch for unintended uses that could harm the public. She also highlighted the tension between legitimate uses and uses intended to do harm.

Kosal's presentation also addressed the tertiary effects of restricting access to certain technologies. The example she highlighted was the Iraqi government's reaction to evidence of chlorine-coupled vehicle-borne improvised explosive devices (VBIEDs) in Mosul from late 2006 to 2007. Although most of the VBIED casualties were caused by shrapnel, the addition of chlorine caught everyone's attention. In response to the perceived threat,2 the Iraqi government restricted the use of chlorine, which was mainly used to make drinking water safe. The unintended (or tertiary) consequence, she noted, was many deaths from cholera outbreaks caused by unsafe drinking water.

One way to think creatively, Kosal said, is to work with students. The Sam Nunn Security Program, which she directs, is designed to have Ph.D. students from a range of disciplines explore national security issues for an academic year. She presented results from one of her students, who explored examples of frugal science and their impact on chemical, biological, radiological, nuclear, and explosive (CBRNE) threats, using matrices to rank threats and capabilities in the near and far term.

___________________

1 G.M. Whitesides, "The Frugal Way," The Economist, November 17, 2011.

2 National Counterterrorism Center, 2007 Report on Terrorism, April 30, 2008, https://www.hsdl.org/?view&did=781179.

When new and emerging technologies are explored, along with the threats that can emerge as new knowledge becomes democratized, the state that developed a technology has an initial advantage; over time, however, non-state actors and other disruptors learn how to adapt the technology to their purposes, Kosal explained. She pointed out that some ideas become threats in part because they are advertised as such. For example, she observed, the United States identified biological weapons as a threat because of their ease of conversion and use, and Al Qaeda took notice.

In conclusion, she said, "Emerging technologies can potentially improve the capabilities of terrorists or proliferating states." However, she explained, we do not want to imperil our own economies by limiting science. Kosal stated that basic research is the strongest economic asset of the United States and the envy of the world. She believes that the social sciences could be used to identify and predict threats better than is currently done.

A workshop participant asked whether the tacit knowledge required for some of the complex technologies mentioned could be easily obtained via YouTube or Google searches. Kosal pointed to work by Professor Kathleen Vogel of the University of Maryland, who has explored this issue and shown that, even with YouTube, complex science is still difficult to do.

Mallory Stewart, a planning committee member, noted that democracy and the open sharing of information and knowledge are fundamental to this issue, and that autocratic regimes that control information may have an easier time addressing it. She asked, "The rise in the ability to falsify information through deep fakes, through spoofing, through all sorts of issues that we face more significantly in the United States when you rely on the spread of information through a free press than maybe a society such as Russia and China face: has this topic been explored?"

Kosal replied with a three-part answer:

- Part 1. “This issue of authoritarian regimes exploiting our technologies has been looked at and is well recognized, particularly in cyber. For example, Iran, an authoritarian regime, is very good at using cyber against its own people. So what do we do about it? What do we do about wanting to value our democracy?”

- Part 2. With deep fakes and false information, the challenge is less about democracy than about trust in government and institutions. She observed that the younger generations, Gen Z and Millennials, are not fooled by fake content online, but that people over 65 are the most gullible because they fundamentally trust the government and institutions.

- Part 3. Kosal asserted that we need to increase science diplomacy and continue to support our basic research. U.S. science is respected across the world, and this is an opportunity to expand influence.

Jackson noted that natural causes are responsible for the most catastrophic events in recorded history. Therefore, he said, efforts to use frugal science for good, democratizing technologies so that they can improve areas such as public health, interact with this discussion in a really interesting way. Kosal agreed that this needs to be examined much more seriously than it has been historically. It is difficult to get funding for this work, she noted, but the Sam Nunn Security Program is fortunate to be able to do some of it.

Robert Dynes, a planning committee member, recalled that during the Cold War, we as a nation pulled together to develop new and better nuclear weapons. The community was unified in its concerns and brought together intelligence, policy, and the entire national security apparatus, and there was mutual respect for everybody's role. But, he said, we have lost that. Scientific diplomacy, or "techies talking to techies," will not fix this larger problem, he asserted. Kosal noted that this issue highlights the value of meetings such as this workshop, which leverage the convening power of the National Academies.

Stewart responded to Dynes's comment, noting that the communities that worked together during the Cold War have become stovepiped within the U.S. government, separating expertise, policy making, and operational capacity from one another. Authoritarian regimes have fewer people involved, she said, so coordination may be easier and experts may have more direct access to decision makers. Alan MacDougall added that it is worth remembering that some nations whose total population is smaller than the workforce of the U.S. federal government do a very good job of being modern societies with modern national defense establishments.