Summary¹

Exposures to industrial chemicals in food, water, air, and consumer products can cause harm to human health and the environment. Risk assessment is a key public policy tool to inform decision making to protect public health and ecological receptors² from unsafe environmental exposures to chemicals. In recent years, there has been a trend to apply systematic review for gathering evidence within the risk assessment process to increase transparency, objectivity, and reproducibility. Consequently, the U.S. Environmental Protection Agency (EPA) has been adopting systematic review within its risk assessment processes since the 2011 National Research Council review of the Integrated Risk Information System (IRIS) Program’s formaldehyde assessment. In 2016, when the Frank R. Lautenberg Chemical Safety for the 21st Century Act (“Lautenberg Act”) (Pub L No. 114-182) was signed to overhaul the Toxic Substances Control Act (TSCA) after 40 years, many stakeholders called for the adoption of systematic review within these important risk assessments.

The Lautenberg Act provides EPA’s Office of Pollution Prevention and Toxics (OPPT) with increased authority to regulate chemicals existing before the original 1976 TSCA was amended. To exert this new authority, EPA needed to promulgate rules to implement the new requirements and responsibilities under the law. The conduct of risk evaluations is governed by the Procedures for Chemical Risk Evaluation Under the Amended Toxic Substances Control Act, often referred to as the “Risk Evaluation Rule” (40 CFR Part 702, 82 FR 33726). The statute requires EPA to establish by rule a process for risk evaluation and requires that each risk evaluation contain a scope or problem formulation, a hazard assessment, an exposure assessment, a risk characterization, and a determination of unreasonable risk.³ The new authority came with a timetable that imposed tight deadlines on OPPT as it assembled teams, promulgated rules, and drafted the guidance documents and operating procedures that prescribe how OPPT exerts its new authority. The Lautenberg Act also set strict statutory deadlines of 9 to 12 months for chemical prioritization, the process to determine which chemicals should undergo risk evaluations. The first 10 high-priority chemicals then underwent risk evaluations, which were to be completed within 3 years of initiation. An additional 20 were to follow.

Within the Risk Evaluation Rule, EPA chose only to define terms used within the statute, including “best available science” and “weight of the scientific evidence.” The term “systematic review” appears within the definition of weight of the scientific evidence; EPA chose to leave a reference to systematic review in the preamble of the Risk Evaluation Rule but not to codify a definition. Furthermore, EPA states that it will use a systematic review approach not only for the hazard assessment but also throughout the risk evaluation process.

As defined by the 2011 Institute of Medicine report Finding What Works in Health Care: Standards for Systematic Reviews, systematic review is “a scientific investigation that focuses on a specific question and uses explicit, prespecified scientific methods to identify, select, assess, and summarize the findings of similar but separate studies.” Systematic review has become the foundation for assessing evidence to be used for decision making in a variety of health contexts, including health care and public health.

___________________

1 This Summary does not include references. Citations for the findings presented in the Summary appear in the subsequent chapters.

2 Ecological receptors include any living organisms other than humans, the habitat that supports such organisms, or natural resources that could be adversely affected by environmental contaminations resulting from a release at or migration from a site.

3 In TSCA, the risk assessments are termed risk evaluations because they contain the risk determination, an element that is traditionally outside the risk assessment process.

Well-conducted systematic reviews methodically identify, select, assess, and synthesize the relevant body of research, and clarify what is known and not known about the potential benefits and harms of the exposure being researched.

In 2018, after beginning the first 10 chemical risk evaluations under the Lautenberg Act, OPPT released the document Application of Systematic Review in TSCA Risk Evaluations to guide the agency’s selection and review of studies. OPPT did not directly draw on existing methods, such as those being used and developed by the European Food Safety Authority, the Office of Health Assessment and Translation (OHAT) of the National Toxicology Program, the Navigation Guide, the Texas Commission on Environmental Quality, the World Health Organization, and the International Labour Organization, for occupational exposures. These methods, however, apply systematic review only to the hazard assessment portion of a risk assessment. Instead, OPPT developed a new approach that applies systematic review to the hazard assessment, the exposure assessment, data on physical and chemical properties, and other components for which systematic review is not generally applied. The approach taken by OPPT is presumably based on the definition of weight of evidence (WOE) in the Risk Evaluation Rule and the decision by OPPT to apply a systematic review method for evaluation of the evidence streams outside those included in the hazard assessment.

THE COMMITTEE’S APPROACH

EPA requested that the National Academies of Sciences, Engineering, and Medicine convene a committee to review EPA’s 2018 guidance document on Application of Systematic Review in TSCA [Toxic Substances Control Act] Risk Evaluations and associated materials (see Box S-1; the full Statement of Task is included in Chapter 1). The committee considered public comments on the document, EPA’s responses to public comments, and enhancements to the systematic review process reflected in documentation of the first 10 chemical risk evaluations. The committee was also asked to make recommendations for enhancements to EPA’s 2018 guidance document.

SYSTEMATIC REVIEW

Over the past decade, several approaches to applying systematic review for assessing evidence on risks of environmental agents have been elaborated, drawing on methods developed in other fields. These approaches to systematic review for chemical risk assessments have been typically applied to research questions about hazards to humans or ecosystems.

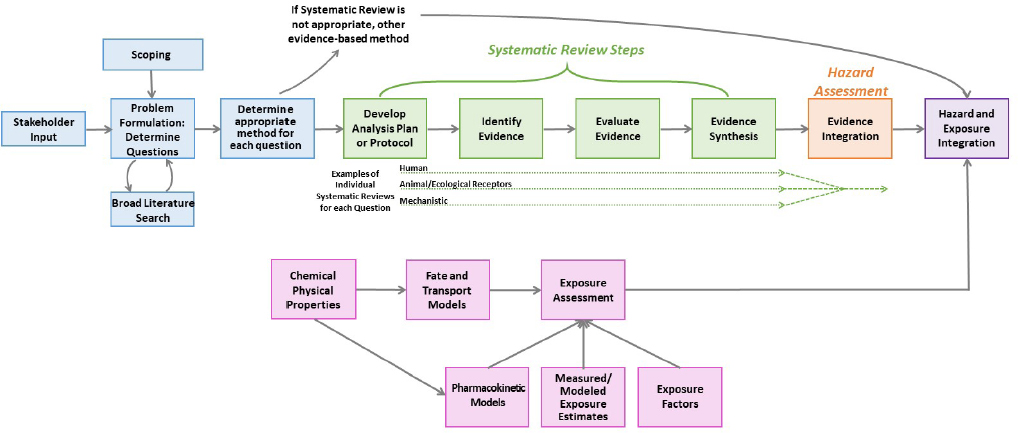

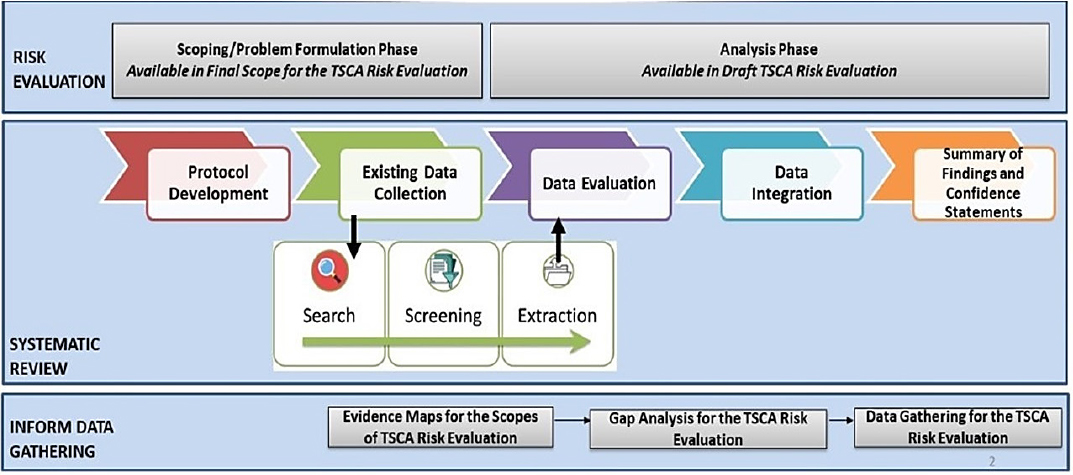

Figure S-1 provides a schema for how systematic review can be conducted to inform hazard assessment and make risk determinations. Figure S-2 illustrates the OPPT approach to systematic review within TSCA risk evaluations, which differs to an extent from the generic approach in Figure S-1 in that OPPT applies systematic review to all elements of the risk evaluation. Prior to the conduct of a systematic review, planning and problem formulation should take place. The planning and problem formulation step should include stakeholder engagement, broad literature searching to map the evidence on the topic, and identification of the most important questions and the best approach for answering such questions. The research questions and the approach should inform the first step of the systematic review, the development of the protocol. Each research question entails, in a sense, a separate systematic review; thus, the protocol should contain a Population (including animal or plant species), Exposure, Comparator, and Outcome (PECO) statement, the inclusion and exclusion criteria, and the plans for the synthesis of the data for each research question. Evidence identification, which includes searching and screening the literature and finding the reports as prescribed in the protocol, is the next step. Evidence evaluation follows. This step includes evaluation of the internal validity (i.e., Is the study biased?) of the individual studies, usually by using an accepted and evaluated risk-of-bias assessment tool appropriate to the design of the studies considered. Evidence synthesis should consist of a qualitative evaluation of the evidence, which can be complemented by a quantitative pooling of the data or a meta-analysis. The synthesis is completed by evaluation of the confidence in the overall body of evidence for a given data stream (e.g., human, animal or ecological receptors, or mechanistic) and its endpoints. 
This synthesis of the various, specific streams of evidence is followed by hazard assessment, which integrates the multiple evidence streams (human, animal or ecological receptor, and mechanistic). Questions about human and ecological exposures could also be evaluated with systematic review, but systematic review tools for gathering and evaluating exposure data are not well developed. Exposure and hazard data are integrated to characterize risk (see Figure S-1).
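The protocol elements described above can be made concrete with a small data structure. The following Python sketch is illustrative only: the class names, field names, and the placeholder "chemical X" questions are assumptions introduced here and do not come from the OPPT guidance or from any existing systematic review tool. It records one PECO statement, the eligibility criteria, and a synthesis plan per research question, and reports which elements are still missing before the review starts.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class PECO:
    """Population, Exposure, Comparator, Outcome for one research question."""
    population: str
    exposure: str
    comparator: str
    outcome: str


@dataclass
class ReviewQuestion:
    """One research question; each is treated as its own systematic review."""
    question: str
    peco: PECO
    inclusion_criteria: list[str]
    exclusion_criteria: list[str]
    synthesis_plan: str


@dataclass
class Protocol:
    """Hypothetical container for a prespecified review protocol."""
    title: str
    questions: list[ReviewQuestion] = field(default_factory=list)

    def missing_elements(self) -> list[str]:
        """List protocol elements that are empty for any research question."""
        problems = []
        for i, q in enumerate(self.questions, start=1):
            for name in ("population", "exposure", "comparator", "outcome"):
                if not getattr(q.peco, name).strip():
                    problems.append(f"question {i}: PECO {name}")
            if not q.inclusion_criteria:
                problems.append(f"question {i}: inclusion criteria")
            if not q.exclusion_criteria:
                problems.append(f"question {i}: exclusion criteria")
            if not q.synthesis_plan.strip():
                problems.append(f"question {i}: synthesis plan")
        return problems


# Placeholder content: "chemical X" and both questions are invented examples.
protocol = Protocol(
    title="Hazard assessment for chemical X (illustrative)",
    questions=[
        ReviewQuestion(
            question="Does exposure to chemical X affect liver function in adults?",
            peco=PECO("adult humans", "chemical X", "unexposed or lower exposure",
                      "liver function markers"),
            inclusion_criteria=["original research", "exposure measured or estimated"],
            exclusion_criteria=["no comparison group"],
            synthesis_plan="narrative synthesis; meta-analysis if studies are comparable",
        ),
        ReviewQuestion(
            question="Does chemical X affect reproduction in aquatic invertebrates?",
            peco=PECO("aquatic invertebrates", "chemical X", "control water",
                      "reproductive output"),
            inclusion_criteria=["laboratory or field studies"],
            exclusion_criteria=[],   # not yet specified
            synthesis_plan="",       # not yet specified
        ),
    ],
)

for problem in protocol.missing_elements():
    print("Missing:", problem)
```

In this sketch the second question would be flagged as lacking exclusion criteria and a synthesis plan, which is the kind of gap a documented protocol is intended to expose before evidence identification begins.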

Systematic review approaches have been widely used to assemble the evidence needed to assess hazards to human health and ecological receptors. Yet the use of systematic review to collect, evaluate, and synthesize the evidence streams that contribute to the exposure assessment of human and ecological receptors is not established, and there is very little precedent for applying systematic review to these streams of evidence. Within the agency, the guidance that dictates how exposure, fate and transport, and physical-chemical property data should be assembled for decisions about risks to human health and ecological receptors is contained in the Guidelines for Human Exposure Assessment, the Guidelines for Ecological Risk Assessment, and the operating procedures for the use of the ECOTOXicology (ECOTOX) knowledgebase.

Figure S-2 illustrates OPPT’s approach to systematic review, which differs to an extent from the above description and includes the systematic review as part of the broader process of risk evaluation. The OPPT process has problem formulation and scoping occurring somewhat in parallel with the protocol development and data collection process for both the hazard assessment and the exposure assessment. Within this step, OPPT uses a variety of software tools and approaches to conduct broad searching and to map the available evidence. OPPT uses exhaustive search strategies that include major scientific databases, backward searching for studies in previous chemical risk assessments, additional gray literature sources, studies submitted under TSCA, and studies identified in peer review. OPPT then screens the titles and abstracts against its list of needs for the evaluation (although it was unclear whether the PECO statements are always used or whether a list of data needs is used, as for the trichloroethylene [TCE] evaluation). Next, it conducts a full-text screening of the papers to determine relevance prior to extraction for evaluation.

To evaluate the evidence, OPPT has developed an extensive de novo critical appraisal tool, termed TSCA’s “fit-for-purpose evaluation framework,” which is applied to human, animal or ecological receptors, mechanistic, exposure, fate, and chemical–physical property studies. OPPT has stated that the evaluation strategies were developed after review of various qualitative and quantitative scoring systems. The critical appraisals for different types of studies use different domains, and within each domain there are several metrics or questions.

After evaluation, OPPT merges the step of evidence synthesis within a stream with the step of evidence integration across streams, which makes it difficult to determine the general approach followed at this point. In the 2018 guidance document, OPPT notes that the evidence integration step has three phases.

The planning phase involves developing a strategy for analyzing and summarizing data across studies within each evidence stream and a strategy for weighing and integrating evidence across those streams. The execution phase involves the implementation of the strategies developed in the planning phase and the development of WOE conclusions. The third phase involves a check on the quality of the data used. Ultimately, this leads to a summary of findings and confidence statements.

Due to the decision to apply systematic review beyond the evidence streams of hazard assessment, OPPT developed approaches to apply systematic review to data types such as exposure, fate and transport, and chemical and physical properties.

CRITIQUING THE OPPT APPROACH

Looking at the core review elements of the Statement of Task, which address whether the TSCA approach to systematic review is “comprehensive, workable, objective, and transparent,” the committee finds that the approach presented by OPPT could be broadly improved to better meet these characteristics for the major review steps. The committee notes that its review was complicated by the challenge posed by inadequate documentation, itself an indication of failing at being comprehensive, workable, objective, and transparent. This discussion provides an overview of the critique of OPPT’s approach within Chapter 2, which also provides recommendations that would improve these aspects of OPPT’s approach.

Comprehensive

The committee found that the OPPT approach was not comprehensive at each step. The approach to problem formulation and protocol development did not result in refined research questions or a documented protocol for how the review should be conducted. This failing had implications for the number of studies identified and evaluated and resulted in challenges to integration across evidence streams. While the OPPT approach for identifying the evidence is comprehensive in regard to searching for literature in many databases, it is less clear how comprehensive the searches are for data that support models for ecological assessment and human health exposure assessment. In the TCE evaluation, for example, the hydrology data and product use information were both decades old.

The OPPT approach also does not give guidance on how physiologically based pharmacokinetic models will be evaluated, and, while it describes how the exposure models will be scored, little seems to be done to actually evaluate the models. With regard to synthesis, the approach does not contain elements important to addressing the research question. Finally, the WOE determination or evidence integration process was detailed for only one specific example, and it is uncertain whether this process represents a limited use for a specific endpoint for TCE or represents a method that will be used (in its current form) for future risk assessments. The committee finds that OPPT has merged aspects of important elements of evidence-based methods: evaluating individual studies, evaluating a body of evidence (i.e., strength or certainty of evidence for a conclusion), and evaluating level of confidence in a recommendation or determination of causation. In addition, the synthesis of the evidence within a specific data stream is not differentiated from integration of the evidence across the data streams. This condensation of steps makes it difficult to assess the extent to which the procedures used are comprehensive.

Workable

Considering whether the OPPT approach is workable, the report notes several concerns at each step. The current approach taken to problem formulation and protocol development is adding to a laborious process for searching, screening, and evaluating the literature. Completing a scoping review prior to the development of the PECO statements could narrow the search to appropriate studies and help in selecting the appropriate evidence-based methodology for the review. The broad PECO statements led to inclusion and exclusion criteria that allowed inclusion of studies that may not be relevant. The broad searches for exposure data may have provided some efficiency, as it would be difficult to conduct the searches for chemicals that have many exposure scenarios relating to the conditions of use. Although OPPT is using a number of validated artificial intelligence–based tools to help make the process of screening hundreds of references more efficient, their use requires that precise and explicit inclusion and exclusion criteria are used consistently by all reviewers.

The evidence evaluation step includes items that do not assess risk of bias, most notably relevance. Relevance should be handled prior to study evaluation. Later, relevance can also be addressed in the evaluation of the body of evidence. The use of numerical scoring in critical appraisal does not follow standards for the conduct of systematic reviews and no justification is provided for the weighting of the specific metrics within the domains to create the overall quality score, making it hard to determine if the weights are appropriate.

Lastly, without a clear, documented approach to evidence synthesis and to integration, the risk evaluation process becomes unworkable because staff have to decide on approaches for these critical steps for each new evaluation rather than relying on a protocol or guidance.

Objective

The committee found the OPPT approach to be lacking objectivity at each step, beginning with the absence of a defined approach for documenting how the problem formulation and protocol are developed. Further examples include inclusion and exclusion criteria that are too broad to identify the evidence; inherent subjectivity within the metrics that make up the evaluation score for study quality, with no evidence provided that the metrics had been validated or tested for reliability and with a single reviewer allowed to override them; and the lack of a consistent approach for documenting the objectives or methods for synthesis and evidence integration. The committee found that many of these concerns were related to the absence of a protocol a priori or the combination of the traditionally discrete and distinct steps of a systematic review. For example, OPPT does not clearly distinguish among scoping, problem formulation, and protocol development and merges the steps of evidence synthesis and integration.

Another problematic element of the TSCA evaluation framework is that the studies that are scored unacceptable are excluded from further analyses. Any fatal flaws in the methodology or conduct that preclude including a study should be used as eligibility criteria during the screening process. Once a study is determined to be eligible, the study should be included in the synthesis and the risk-of-bias assessment, with its limitations accounted for in any qualitative or quantitative synthesis. Given the large number of metrics scored for these data types, the possibility that a single unsatisfactory rating could completely nullify the use of a particular study from synthesis is problematic as it may lead to a biased review. Statistical power and statistical significance are not markers of risk of bias or quality. Statistical significance is not a measure of association or strength of association and should not be used to evaluate studies. In fact, combining multiple small, low-powered but similar studies in a synthesis is one of the benefits of systematic review.
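The point about combining small studies can be illustrated with a worked calculation. The sketch below uses inverse-variance fixed-effect pooling, which is one common meta-analytic choice and is not presented here as the method used by OPPT or prescribed by the committee. The four log relative risks and their standard errors are hypothetical numbers chosen for illustration, not data from any TSCA risk evaluation.

```python
import math

Z95 = 1.96  # normal quantile for a two-sided 95% confidence interval


def confidence_interval(estimate, std_error):
    """Return the 95% confidence interval around an effect estimate."""
    half_width = Z95 * std_error
    return estimate - half_width, estimate + half_width


def pool_fixed_effect(estimates, std_errors):
    """Inverse-variance fixed-effect pooling of per-study estimates."""
    weights = [1.0 / se ** 2 for se in std_errors]
    total = sum(weights)
    pooled = sum(w * e for w, e in zip(weights, estimates)) / total
    return pooled, math.sqrt(1.0 / total)


# Hypothetical log relative risks from four small, similar studies.
log_rr = [0.30, 0.25, 0.35, 0.28]
std_err = [0.20, 0.18, 0.22, 0.19]

individual_cis = [confidence_interval(e, s) for e, s in zip(log_rr, std_err)]
pooled, pooled_se = pool_fixed_effect(log_rr, std_err)
pooled_ci = confidence_interval(pooled, pooled_se)

for e, (low, high) in zip(log_rr, individual_cis):
    print(f"study estimate {e:.2f}, 95% CI ({low:.2f}, {high:.2f})")
print(f"pooled estimate {pooled:.2f}, 95% CI ({pooled_ci[0]:.2f}, {pooled_ci[1]:.2f})")
```

With these invented inputs, every individual interval spans zero, while the pooled interval is narrower than any single study's and lies above zero. Screening out each study for low power or lack of statistical significance would have discarded exactly the information that makes the pooled estimate precise.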

Transparent

The committee found that transparency of the entire risk evaluation process is compromised across all of its elements. Neither clear questions nor protocols have been developed for the systematic reviews. Consequently, the review process is not documented from its start, and clarity is lacking when the review is finished and published. Overall, the committee found that the lack of information and details about the specific processes used for the identification of evidence reduced confidence in the findings. The OPPT processes and practices are not consistent with the standard of practice for systematic review.

Information about the search process was scattered across multiple documents within the docket for TCE and 1-bromopropane (1-BP), making the identification of details laborious and time consuming. The use of numeric scores for the evaluation of the studies obscures key differences between studies and prevents users of the reviews from making their own determinations about important strengths and limitations in study methods. OPPT reported to the committee that it generally follows standard practice by using two independent reviewers, but it was unclear how discrepancies between the two reviewers were handled. Furthermore, the committee noted that there were changes in study scores from one document to another. Evaluation of evidence synthesis was also complicated by the merger of evidence synthesis (within an evidence stream) and evidence integration (across multiple evidence streams) into a concurrent process, further reducing transparency and consistency. Confusing terminology used in the various documents (and sometimes even varying usage within one document), the lack of information presented to describe the process, and the lack of documentation to explain deviations from the processes that were documented all contributed to the lack of transparency.

GENERAL FINDINGS

The committee finds that the process outlined in the 2018 guidance document, and as elaborated and applied in the example evaluations, does not meet the criteria of “comprehensive, workable, objective, and transparent.” The committee’s evaluation was made difficult by the incomplete and hard-to-follow documentation of many details of the process—adequacy of documentation is requisite for achieving transparency, objectivity, and replicability. In the committee’s judgment, the specific and general problems in TSCA risk evaluations are partially due to the decision to develop a largely de novo approach, rather than starting with the foundation offered by approaches that were extant in 2016. OPPT was further challenged by the statutory schedule for completing assessments.

As a general finding, the committee judged that the systematic reviews within the draft risk evaluations considered did not meet the standards of systematic review methodology. Given that systematic review is well established for application to the hazard stream of evidence, the committee reviewed the hazard component of the Draft Risk Evaluation for Trichloroethylene, applying a tool used to assess bias in systematic reviews (AMSTAR-2). The appraisal process was unnecessarily complicated due to insufficient and unclear documentation. Despite this barrier to applying the AMSTAR-2 instrument, the committee found that the TCE hazard assessment did not perform positively on the vast majority of AMSTAR-2 questions.

Another crosscutting finding relates to terminology and specifically to the interchangeable use of the terms “weight of evidence” and “systematic review.” The Risk Evaluation Rule (40 CFR Part 702) specifies that weight of the scientific evidence “means a systematic review method.” However, the language may not in and of itself require a systematic review. The Draft Risk Evaluation for Trichloroethylene refers to a “weight of the evidence analysis” for an individual outcome involving successive determinations: first, considering individual lines of evidence; and second, integrating these disparate lines of evidence. Throughout the report, the committee comments on the consequences of the definition of WOE in the Risk Evaluation Rule and the conflation of “weight of the scientific evidence” with “a systematic review method” as necessitated by the Risk Evaluation Rule. The committee understands that the definition of WOE within the Risk Evaluation Rule is difficult to change but suggests that OPPT adopt a different specific term to be used during the evidence integration step, such as “strength of evidence” or “certainty of evidence” as utilized in the Grading of Recommendations Assessment, Development and Evaluation process.

Regardless of terminology, a narrative description should be provided that describes the basis for the determination of the strength of evidence during the evidence integration step for all applicable data streams. We urge the use of standard descriptors for the strength of evidence as with the Integrated Science Assessments for the National Ambient Air Quality Standards.

OVERALL RECOMMENDATIONS

The committee was in strong consensus that the processes used by OPPT do not meet the evaluation criteria specified in the Statement of Task (i.e., comprehensive, workable, objective, and transparent). The committee recognizes that OPPT faced substantial challenges in implementing review methods on the schedule required by the Lautenberg Act. Those challenges have not yet been successfully met. The committee makes a number of specific recommendations in Chapter 2 as to how to improve the methods for assessments, both in general and with reference to particular elements of the evaluation process.

The general recommendations are summarized as follows:

- The OPPT approach to systematic review does not adequately meet the state of the practice. The committee suggests that OPPT comprehensively reevaluate its approach to systematic review methods, addressing the comments and recommendations provided in Chapter 2.

- With regard to hazard assessment for human and ecological receptors, the committee comments that OPPT should step back from the approach that it has taken and consider components of the OHAT, IRIS, and Navigation Guide methods that could be incorporated directly and specifically into hazard assessment.

- The committee finds OPPT’s use of systematic review for the evidence streams to which systematic review has not previously been adapted to be particularly unsuccessful. Given these novel applications of systematic review, the committee suggests that OPPT should elaborate plans for continuing the refinement of methods, ideally in collaboration with internal and external stakeholders. The committee also suggests that OPPT evaluate how the existing OHAT, IRIS, and Navigation Guide methods could be modified for the other evidence streams. In addition, OPPT should use existing guidance within the agency such as the Guidelines for Human Exposure Assessment, the Guidelines for Ecological Risk Assessment, and the operating procedures for the use of the ECOTOXicology knowledgebase. Following these existing guidelines would improve transparency of the assessments.

- The committee recommends that a handbook for TSCA review and evidence integration methodology be put together that details the steps in the process. Throughout this report, the committee points to problems of documentation. The committee believes that the effort of developing and publicly vetting a handbook will pay off in the long run by making the process more straightforward, transparent, and easier to follow.

There is an ongoing cross-sector effort to develop and validate new tools and approaches for exposure, environmental health, and other new areas of application of systematic review. The committee strongly recommends that OPPT staff engage in these efforts. The approaches used for TSCA evaluation would benefit from the substantial external expertise available as well as additional transparency and acceptance by the different stakeholders and society in general as these tools are developed. The refinements recommended by this committee would boost the ability of actions taken under the Lautenberg Act to advance the mission of EPA: “to protect human health and the environment.”