The Inconvenient Public: Behavioral Research Approaches to Reducing Product Liability Risks

BARUCH FISCHHOFF AND JON F. MERZ

There would not be any product liability suits if there were not any people involved with engineered systems. Unfortunately, people are everywhere, and they sometimes make mistakes—as consumers, operators, and patients. They misunderstand instructions, overlook warning labels, and employ equipment for inappropriate purposes.

In many cases, they realize that any ensuing misfortune is clearly their own fault, as when they have been drinking or using illicit drugs. Often, though, their natural response is to blame someone else for what went wrong. In psychological terms, there are both cognitive and motivational reasons for this tendency. Cognitively, injured parties see themselves as having been doing something that seemed sensible at the time, and not looking for trouble. As a result, any accident comes as a surprise. If it was to be avoided, then someone else needed to provide the missing expertise and protection. Motivationally, no one wants to feel responsible for an accident. That just adds insult to injury, as well as forfeiting the chance for emotional and financial redress.

Of course, the natural targets for such blame are those who created and distributed the product or equipment involved in an accident. They could have improved the design to prevent accidents. They should have done more to ensure that the product would not fail in expected use. They could have provided better warnings and instructions in how to use the product. They could have sacrificed profits or forgone sales, rather than let users bear (what now seem to have been) unacceptable risks.

It is equally natural for producers and distributors to shift the blame back to the user. Cognitively, the wisdom of hindsight makes it obvious to them what the user should have done or seen in order to avoid an accident. They remember all the care that was taken in the design process. They see no ambiguity in the instructions and accompanying warnings. They would not have dreamed of using the system or product in the way that led to the accident. Motivationally, no one wants to be responsible for another's misfortune, even where there are no financial consequences. No one is in the business of hurting people.

To the extent that this description is accurate, it depicts an unhappy situation. Accidents keep happening, while each side blames the other. At the extreme, injured users may translate their grievances into lawsuits, while the producers and distributors fume about the irresponsible public. There is, of course, a steady supply of lawyers, politicians, and pundits ready to fan these frustrations. In the short run, it can be reinforcing to hear about the other party's venality or incompetence. In the long run, though, such sweeping claims merely reinforce prejudices and obscure the opportunities for progress.

In this light, technical innovation is threatened not just by an unthinking public, but also by an unthinking attitude toward the public. Few people in the technical community have any significant training in the behavioral sciences. As a result, it is hard for them to make sense of the behavior that they see or to devise creative improvements in design. They may have been drawn to engineering because it promised greater predictability than did dealing with fallible people. They may be reluctant to acknowledge the limits of their expertise or to include new kinds of expertise in already complex design processes.

This paper will analyze the opportunities for incorporating scientific knowledge about one aspect of human behavior in the product design and management process: how people understand the risks of the products they use. It will look at both quantitative understanding, regarding the magnitude of risks and benefits, and qualitative understanding, regarding how risks are created and controlled.

Quantitative understanding is essential if people are to realize what risks they are taking, decide whether those risks are justified by the accompanying benefits, and confer informed consent for bearing them. Qualitative understanding is essential to using products in ways that achieve minimal risk levels, to recognizing when things are going wrong, and to responding to surprises.

After presenting some of the empirical and analytical procedures for assessing and improving these kinds of understanding, this paper will consider the extent of their possible contribution to product safety and innovation. Its goal is to encourage attention to these issues in the product stewardship process.

ARENAS FOR RISK PERCEPTIONS

Although technical experts have the luxury of specializing in the management of particular risks, members of the general public do not. They face too many risks in their lives to acquire detailed knowledge of more than a minute portion of those risks. Their risks include affairs of the heart, ballot box, and pocketbook, as well as matters of health and safety. Even in matters of physical welfare and survival, the list of concerns can be very long. Table 1 provides an illustrative list of situations in which the risk perceptions of individuals have consequences.

TABLE 1 Arenas for Risk Perception

| Arena | Situations |
|---|---|
| Workplace | On-the-job safety; right-to-know laws; workers' compensation |
| Neighborhood | Rumors; emergency response; community right-to-know; siting |
| Courts | Informed consent; risk-utility analysis; psychological stress |
| Regulation | Agenda setting; safety standards; local initiatives |
| Industry | Innovation; public relations; insurance; product differentiation (by safety) |

The enormous range of risks creates both challenges and opportunities for the manufacturers and distributors of potentially hazardous products. On the one hand, they must fight to divert a portion of the public's scarce attention to the potential risks of their products. In so doing, they may imperil their financial security by diverting attention from the benefits of those products or by making their products seem riskier than other (possibly competing) products whose risks are presented less diligently. On the other hand, they can rely on users having considerable experience with related processes, as well as a repertoire of cognitive and physical skills acquired in a wide variety of situations. Indeed, new product introductions can be particularly complicated when the target audience lacks relevant experience. Introductions may be quicker in the short run, but more expensive in the long run, when that audience puts too much faith in its existing knowledge and skills.

SOURCES FOR UNDERSTANDING THE PUBLIC

Just as it is sensible for laypeople confronted by a new product to look for familiar experiences and general knowledge, its producers might do the same when anticipating the response of that public. Responsible firms can be trusted to examine the specific experiences that members of the public have with their own and competitors' existing products. They may not, however, find their way to the general research literature on risk-related behavior. The next section of this paper is intended to improve access to that literature by summarizing general patterns and providing representative references. It draws primarily on the research literature in human judgment and decision making and its subspecialty focused on the perceptions of technological hazards. These fields are, roughly, 35 and 20 years old, respectively. Their roots are in the literatures on attitude change, clinical judgment, and human factors, each of which received a major push as part of the U.S. effort during World War II, as well as the much older fields of experimental psychology and decision theory.

These literatures provide substantive results that can be tentatively extrapolated to predict or explain people's responses to new products. For example, many studies have found that people are relatively insensitive to the extent of their own knowledge (Fischhoff et al., 1977; Wallsten and Budescu, 1983). The most common result is overconfidence, for example, being correct on only 80 percent of those occasions when one is absolutely certain of being correct. The generality of these findings (in those settings that have been studied) suggests that people might have undue confidence in their beliefs about new products and about how the attendant risks can be controlled. If this seems like a reasonable and worrisome hypothesis, then the research literature might be consulted for procedures able to improve people's judgment. For example, telling people that overconfidence is common seems to have little effect, whereas presenting people with personalized feedback regarding the appropriateness of their own confidence can make a positive difference (Fischhoff, 1982).
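The idea of overconfidence can be made concrete with a small calibration check. The following is a minimal sketch, not the procedure used in the cited studies: the function name `calibration_table` and the sample data are hypothetical. It groups judgments by stated confidence and compares each group's stated confidence with the proportion of judgments that proved correct.

```python
from collections import defaultdict


def calibration_table(judgments):
    """Group (stated_confidence, was_correct) pairs by stated confidence.

    Returns {confidence: (n, proportion_correct)}. For a well-calibrated
    judge, proportion_correct is close to confidence in every group.
    """
    groups = defaultdict(list)
    for confidence, was_correct in judgments:
        groups[confidence].append(1 if was_correct else 0)
    return {c: (len(hits), sum(hits) / len(hits)) for c, hits in sorted(groups.items())}


# Hypothetical data: ten answers given with "absolute certainty" (1.0),
# of which only eight turn out to be correct.
sample = [(1.0, True)] * 8 + [(1.0, False)] * 2
table = calibration_table(sample)
n, hit_rate = table[1.0]
overconfidence = 1.0 - hit_rate  # stated confidence minus observed accuracy
print(f"n={n}, proportion correct={hit_rate:.2f}, overconfidence={overconfidence:.2f}")
```

Personalized feedback of the kind mentioned above would amount to showing each person his or her own table, rather than a general statement that overconfidence is common.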

If one wanted to test these or other hypotheses, then the existing research also provides well-understood methodologies for conducting studies specific to particular risks. Ascertaining people's beliefs and values is a craft having as many nuances as does assessing their physiological functions or conducting measurements in the natural or biological sciences. For example, two formally equivalent ways of asking people to estimate how large a risk is can produce estimates that vary by several orders of magnitude (Fischhoff and MacGregor, 1983). A study that used one method might make it seem as though people underestimate the risk, whereas a study using the other method would produce apparent overestimates. As in other sciences, such measurement artifacts are sometimes predicted on the basis of general theories (Poulton, 1968, 1982), whereas in other cases they are discovered by trial and error. Exploiting this experience offers the opportunity to avoid the mistaken interpretations, and perhaps even mistaken policies, that such experimental artifacts can produce.

The applications of these methods to people's perceptions of technological hazards have seldom produced results challenging the overall conclusions from the general literature on judgment and decision making. These studies have, however, provided important elaborations, for example, showing just what people believe about particular risks, just how confident they are in those beliefs, or just how far they trust risk information coming from particular sources. They have also drawn attention to general issues with particular significance for consumer products and workplace processes, such as how people evaluate the trustworthiness of risk information (Baum et al., 1983; Johnson and Tversky, 1983; Richardson et al., 1987; Weinstein, 1987).

These detailed, systematic empirical studies stand in stark contrast to the casual observations that dominate many discussions of the public's behavior. Perhaps surprisingly, even scientists, who would hesitate to make any statements about topics within their own areas of competence without a firm research base, are willing to make strong statements about the public on the basis of anecdotal evidence. Unfortunately, immediate appearances can be deceiving, as when salient examples of public behavior are not particularly representative. And, as mentioned, even systematic observations can be misleading if not undertaken with a full understanding of the relevant methodology. An unfounded belief in having understood the public is a serious barrier to acquiring a genuine understanding.

The limits to casual observation might be seen in the coexistence of conflicting claims about the public, often associated with conflicting recommendations regarding how to deal with it. For example, advocates of deregulation frequently describe members of the public as understanding risks so well that they can readily fend for themselves in an unfettered marketplace. This confidence in the public is usually shared by those who advocate extensive public participation in risk management, through such avenues as hearings and information campaigns (Magat and Viscusi, 1992). Quite the opposite conclusion about public competence underlies proposals to leave risk management to technical experts or to force people to adopt risk-management practices that are "for their own good." Examples here include seatbelts, crash helmets, and dietary restrictions. Given the political and safety implications of these conflicting perceptions about the public, laypeople's behavior would seem to merit careful study. Good, hard evidence could provide guidance for managing risks, resolving conflicts between the public and technical experts, supplying the information that the public needs for better understanding, and creating technologies whose risks are acceptable to the public (Viscusi, 1992). The following section provides a summary of conclusions that can be drawn from studies of risk perception, as well as from the general research literature regarding judgment and decision making.

WHAT IS KNOWN

People Simplify

Most substantive decisions require people to deal with more nuances and details than they can readily handle at any one time. People have to juggle a multitude of facts and values when deciding, for example, whether to change jobs, trust merchants, or protest a toxic landfill. To cope with the overload, people simplify. Rather than attempting to think their way through to comprehensive, analytical solutions to decision-making problems, people try to rely on habit, tradition, the advice of neighbors or the media, and on general rules of thumb, such as "nothing ventured, nothing gained." Rather than consider the extent to which human behavior varies from situation to situation, people describe other people in terms of all-encompassing personality traits, such as being honest, happy, or risk seeking (Nisbett and Ross, 1980). Rather than think precisely about the probabilities of future events, people rely on vague quantifiers, such as "likely" or "not worth worrying about," terms that are used differently in different contexts and by different people (Beyth-Marom, 1982).

The same desire for simplicity can be observed when people press risk managers to categorize technologies, foods, or drugs as "safe" or "unsafe," rather than to treat safety as a continuous variable. It can be seen when people demand convincing proof from scientists who can provide only tentative findings. It can be seen when people attempt to divide the participants in risk disputes into good guys and bad guys, rather than viewing them as people who, like themselves, have complex and interacting motives. Although such simplifications help people to cope with life's complexities, they can also obscure the fact that most risk decisions involve gambling with people's health, safety, and economic well-being in arenas with diverse actors and shifting alliances.

Once People's Minds Are Made Up, It Is Hard to Change Them

People are quite adept at maintaining faith in their current beliefs unless confronted with concentrated and overwhelming evidence to the contrary. Although it is tempting to attribute this steadfastness to pure stubbornness, psychological research suggests that some more complex and benign processes are at work (Nisbett and Ross, 1980).

One psychological process that helps people to maintain their current beliefs is feeling little need to look actively for contrary evidence. Why look if one does not expect that evidence to be very substantial or persuasive? For example, how many environmentalists read the Wall Street Journal and how many industrialists read the Sierra Club's Bulletin to learn something about risks (as opposed to reading these publications to anticipate the tactics of the opposing side)? A second contributing thought process is the tendency to exploit the uncertainty surrounding apparently contradictory information in order to interpret it as being consistent with existing beliefs (Gilovich, 1993). In risk debates, a stylized expression of this proficiency is finding just enough problems with contrary evidence to reject that evidence as inconclusive.

A third thought process that contributes to maintaining current beliefs can be found in people's reluctance to recognize when information is ambiguous. For example, the incident at Three Mile Island would have strengthened the resolve of any antinuclear activist who asked only, "How likely is such an accident, given a fundamentally unsafe technology?" It would equally have strengthened the resolve of any pronuclear activist who asked only, "How likely is the containment of such an incident, given a fundamentally safe technology?" Although a very significant event, Three Mile Island may not have revealed very much about the riskiness of nuclear technology as a whole. Nonetheless, it helped the opposing sides to polarize their views. Similar polarization followed the accident at Chernobyl, with opponents pointing to the consequences of a nuclear accident, which they see as coming with any commitment to nuclear power, and proponents pointing to the unique features of that particular accident, which are unlikely to be repeated elsewhere, especially considering the precautions instituted in its wake (Krohn and Weingart, 1987).

People Remember What They See

Fortunately, given their need to simplify, people are good at observing those events that come to their attention and that they are motivated to understand (Hasher and Zacks, 1984; Peterson and Beach, 1967). As a result, if the appropriate facts reach people in a responsible and comprehensible form before their minds are made up, there is a decent chance that their first impression will be the correct one. For example, most people's primary sources of information about risks are what they see in the news media and observe in their everyday lives. Consequently, people's estimates of the principal causes of death are strongly related to the number of people they know who have suffered those misfortunes and the amount of media coverage devoted to them (Lichtenstein et al., 1978).

Unfortunately, it is impossible for most people to gain first-hand knowledge of many hazardous technologies. Rather, what laypeople see are the outward manifestations of the risk-management process, such as hearings before regulatory bodies or statements by scientists to the news media. In many cases, these outward signs are not very reassuring. Often, they reveal acrimonious disputes between supposedly reputable experts, accusations that scientific findings have been distorted to suit their sponsors, and confident assertions that are disproven by subsequent research (MacLean, 1987; Rothman and Lichter, 1987).

Although unattractive, these aspects of the risk-management process can provide the public with potentially useful clues to how well technologies are understood and managed by industry and regulatory agencies. Presumably, people evaluate these clues just as they evaluate the conflicting claims of advertisers and politicians. It should not be surprising, therefore, that the public sometimes comes to conclusions that differ from what risk managers hope or expect. For example, it was reasonable to conclude that saccharin is an extremely potent carcinogen after seeing the enormous scientific attention that it generated some years back. Yet, much of the controversy actually concerned how to deal with a food that was strongly suspected of being a weak carcinogen. In some cases, the public may have a better overview of the proceedings than the scientists and risk managers mired in them, realizing perhaps that neither side knows as much as it claims.

People Cannot Readily Detect Omissions in the Evidence They Receive

Unfortunately, not all problems with information about risk are as readily observable as blatant lies or unreasonable scientific hubris. Often the information that reaches the public is true, but only part of the truth. Detecting such systematic omissions proves to be difficult (Tversky and Kahneman, 1973). For example, most young people know relatively few people suffering from the diseases of old age, nor are they likely to see those maladies cited as the cause of death in newspaper obituaries. As a result, young people tend to underestimate the frequency of these causes of death, while most people overestimate the frequency of vividly reported causes, such as murder, accidents, and tornadoes (Lichtenstein et al., 1978).

Laypeople are even more vulnerable when they have no way of knowing about information that has not been disseminated. In principle, for example, one could always ask physicians if they have neglected to mention any side effects of the drugs they prescribe. Likewise, people could ask merchants whether there are any special precautions for using a new power tool, just as they could ask proponents of a hazardous facility if their risk assessments have considered all forms of operator error and sabotage. In practice, however, these questions about omissions are rarely asked. It takes an unusual turn of mind and personal presence to recognize one's own ignorance and insist that it be addressed.

As a result of this insensitivity to omissions, people's risk perceptions can be manipulated in the short run by selective presentation. Not only will people not know what they have not been told, but they will not even feel how much has been left out (Fischhoff et al., 1978). What happens in the long run depends on whether the unmentioned risks are revealed by experience or by other sources of information. When deliberate omissions are detected, the responsible party is likely to lose all credibility. Once a shadow of doubt has fallen, it is hard to erase.

People May Disagree More about What Risk Is Than about How Large It Is

Given this mixture of strengths and weaknesses in the psychological processes that generate people's risk perceptions, there is no simple answer to the question, How much do people know and understand? The answer depends on the risks and on the opportunities that people have to learn about them.

One obstacle to determining what people know about specific risks is disagreement about the definition of "risk" (Crouch and Wilson, 1981; Fischhoff et al., 1983; Fischhoff et al., 1984; Slovic et al., 1979). The opportunities for disagreement can be seen in the varied definitions used by different risk managers. For some, the natural unit of risk is an increase in probability of death; for others, it is reduced life expectancy; for still others, it is the probability of death per unit of exposure, where "exposure" itself may be variously defined.

The choice of definition is often arbitrary, reflecting the way in which a particular group of risk managers habitually collects and analyzes data. The choice, however, is never trivial. Each definition of risk makes a distinct political statement regarding what society should value when it judges the acceptability of risks. For example, "reduced life expectancy" puts a premium on deaths among the young, which would be absent in a measure that simply counted the expected number of premature deaths. A measure of risk could also give special weight to individuals who can make a special contribution to society, to individuals who were not consulted (or even born) when a risk-management policy was enacted, or to individuals who do not benefit from the technology generating the risk.
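A small worked example may help show how the choice of definition changes the ranking of hazards. The sketch below is illustrative only: the two hazards, their death rates, the ages at death, and the assumed life expectancy of 75 years are all hypothetical. It compares a simple count of expected deaths with a life-years-lost measure for two hazards that kill the same number of people.

```python
def expected_deaths(population, deaths_per_million):
    """Expected number of deaths per year in the exposed population."""
    return population * deaths_per_million / 1_000_000


def life_years_lost(population, deaths_per_million, mean_age_at_death, life_expectancy):
    """Expected life-years lost per year: deaths weighted by years forgone."""
    years_per_death = max(life_expectancy - mean_age_at_death, 0)
    return expected_deaths(population, deaths_per_million) * years_per_death


# Hypothetical hazards with identical death rates but different victims.
LIFE_EXPECTANCY = 75  # assumed, for illustration only
hazards = {
    "A": {"population": 2_000_000, "deaths_per_million": 10, "mean_age_at_death": 25},
    "B": {"population": 2_000_000, "deaths_per_million": 10, "mean_age_at_death": 70},
}

results = {}
for name, h in hazards.items():
    deaths = expected_deaths(h["population"], h["deaths_per_million"])
    years = life_years_lost(
        h["population"], h["deaths_per_million"], h["mean_age_at_death"], LIFE_EXPECTANCY
    )
    results[name] = (deaths, years)
    print(f"Hazard {name}: expected deaths={deaths:.0f}, life-years lost={years:.0f}")
```

Under the death-count definition the two hypothetical hazards are equally risky; under the life-years definition, hazard A is ten times as risky as hazard B. Neither answer is wrong; they embody different value judgments.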

If laypeople and risk managers use the term "risk" differently, then they can agree on the facts about a specific technology but still disagree about its degree of riskiness. Some years ago, the idea circulated in the nuclear power industry that the public cared much more about multiple deaths from large accidents than about equivalent numbers of casualties resulting from a series of small accidents. If this assumption were valid, the industry would be strongly motivated to remove the threat of such large accidents. If removing the threat proved impossible, then the industry could argue that a death is a death and that, in formulating social policy, it is totals that matter, not whether deaths occur singly or collectively.

There were never any empirical studies to determine whether this was really how the public defined risk. Subsequent studies, though, have suggested that what bothers people about catastrophic accidents is the perception that a technology capable of producing such accidents cannot be very well understood or controlled (Slovic et al., 1984). From an ethical point of view, worrying about the uncertainties surrounding a new and complex technology, such as nuclear power, is different from caring about whether a fixed number of lives is lost in one large accident rather than in many small accidents.

People Have Difficulty Detecting Inconsistencies in Risk Disputes

Despite their frequent intensity, risk debates are typically conducted at a distance (Krimsky and Plough, 1988; Mazur, 1973; Nelkin, 1978). The disputing parties operate within self-contained communities and talk principally to one another. Opponents are seen primarily through their writing or their posturing at public events. Thus, there is little opportunity for the sort of subtle probing needed to discover basic differences in how the protagonists think about important issues, such as the meaning of key terms or the credibility of expert testimony. As a result, it is easy to misdiagnose one another's beliefs and concerns.

The opportunities for misunderstanding increase when the circumstances of the debate restrict candor. For example, some critics of nuclear power actually believe that the technology can be operated with reasonable safety. However, they oppose it because they believe that its costs and benefits are distributed inequitably. Although they might like to discuss these issues, public hearings about risk and safety often provide these critics with their only forum for venting their concern. If they oppose the technology, then they are forced to do so on safety grounds, even if this means misrepresenting their perceptions of the actual risk. Although this may be a reasonable strategy for pursuing their ultimate goals, it makes them look unreasonable to observers who hold opposing views of nuclear power.

Individuals also have difficulty detecting inconsistencies in their own beliefs or realizing how simple reformulations would change their perspectives on issues. For example, most people would prefer a gamble with a 25 percent chance of losing $200 (and a 75 percent chance of losing nothing) to a sure loss of $50. However, most of the same people would also buy a $50 insurance policy to protect against such a loss. What they will do depends on whether the $50 is described as a "sure loss" or as an "insurance premium." In such cases, one cannot predict how people will respond to an issue without knowing how they will perceive it, which depends, in turn, on how it will be presented to them by merchandisers, politicians, or the media (Fischhoff, 1991; Fischhoff et al., 1980; Turner and Martin, 1984; Tversky and Kahneman, 1981).
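The arithmetic behind this example is worth making explicit: the gamble and the sure loss have the same expected value, so the reversal in preference reflects the framing and not the stakes. The following minimal sketch checks this; the function name `expected_loss` is introduced here for illustration.

```python
def expected_loss(outcomes):
    """Expected loss of a prospect given as (probability, loss) pairs.

    Raises ValueError if the probabilities do not sum to 1.
    """
    total_probability = sum(p for p, _ in outcomes)
    if abs(total_probability - 1.0) > 1e-9:
        raise ValueError("probabilities must sum to 1")
    return sum(p * loss for p, loss in outcomes)


# The two options described in the text.
gamble = [(0.25, 200), (0.75, 0)]  # 25% chance of losing $200, else nothing
sure_loss = [(1.0, 50)]            # certain loss (or insurance premium) of $50

gamble_value = expected_loss(gamble)
sure_value = expected_loss(sure_loss)
print(f"gamble: ${gamble_value:.2f}, sure loss: ${sure_value:.2f}")
```

Because both options cost $50 on average, a preference for the gamble in one framing and for the premium in the other cannot be explained by expected value alone.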

Thus, people's insensitivity to the nuances of how risk issues are presented exposes them to manipulation. For example, a risk might seem much worse when described in relative terms, such as doubling their risk, than in absolute terms, as in increasing that risk from one in a million to one in a half million. Although both representations of the risk might be honest, their impacts would be quite different. Perhaps the only fair approach is to present the risk from both perspectives, letting recipients determine which one, or hybrid, best represents their world view.
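The two descriptions in this example can be computed side by side. The sketch below is one possible way to present both perspectives together; the function name `describe_risk_change` is hypothetical, and exact fractions are used only to avoid rounding noise.

```python
from fractions import Fraction


def describe_risk_change(baseline, elevated):
    """Return the same change in risk in relative and in absolute terms."""
    return {
        "relative_risk": elevated / baseline,      # ratio of the two risks
        "absolute_increase": elevated - baseline,  # added probability
    }


# The example from the text: one in a million rising to one in a half million.
baseline = Fraction(1, 1_000_000)
elevated = Fraction(1, 500_000)

change = describe_risk_change(baseline, elevated)
print(f"relative framing: risk multiplied by {change['relative_risk']}")
print(f"absolute framing: risk increased by {change['absolute_increase']}")
```

The relative framing reports a doubling, while the absolute framing reports one additional chance in a million; both are accurate descriptions of the same change.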

Experts Are People, Too

Obviously, experts have more substantive knowledge than laypeople. Often, however, the practical demands of risk management force experts to make educated guesses about critical facts, taking them far beyond the limits of their data. In such situations, debates about risk are often conflicts between competing sets of risk perceptions, those of the public and those of the experts. As a result, one must ask how good those expert judgments are. Do experts, like laypeople, tend to exaggerate the extent of their own knowledge? Are experts more sensitive than others to systematic omissions in the evidence that they receive? Do they, too, tend to oversimplify policy issues?

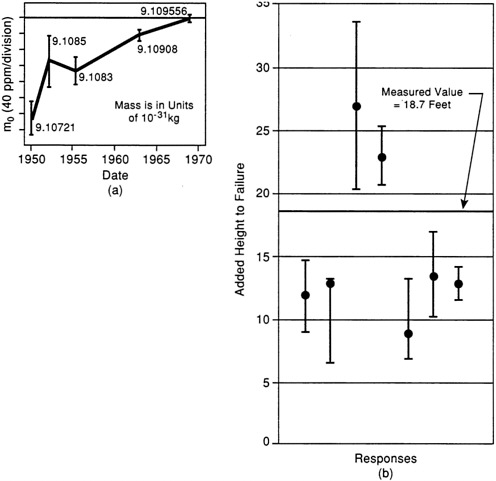

Available studies suggest that when experts must rely on judgment, their thought processes often resemble those of laypeople (Fischhoff, 1989; Kahneman et al., 1982; Mahoney, 1979; Shlyakhter et al., 1994). For example, Figure 1 displays two cases of overconfidence in the judgments of senior

FIGURE 1 Two examples of overconfidence in expert judgment. Overconfidence is represented by the failure of error bars to contain the true value. (a) Subjective estimates by senior scientists of the rest mass of the electron. (b) Subjective estimates by civil engineers of the height at which a test embankment would fail. In each example, the range of values provided by the experts failed to contain the true value as it was later determined. SOURCE: Fischhoff and Svenson (1988).

scientists in their attempts to determine ranges of possible values (or confidence intervals) for topics within their areas of expertise (Henrion and Fischhoff, 1986; Hynes and Vanmarcke, 1976). Anecdotal evidence of the limits to expert judgment can be found in many cases where fail-safe systems have gone seriously awry or where confidently advanced theories have been proved wrong. For example, DDT came into widespread and uncontrolled use before the scientific community had seriously considered the possibility of side effects. Medical procedures, such as using DES to prevent miscarriages, sometimes produce unpleasant surprises after being judged safe enough to be used widely (Berendes and Lee, 1993; Grimes, 1993). The accident sequences at Three Mile Island and Chernobyl seem to have been out of the range of possibility, given the theories of human behavior underlying the design of those reactors (Aftermath of Chernobyl, 1986; Hohenemser et al., 1986; Reason, 1990). Of course, science progresses by absorbing lessons that prompt it to discard incorrect theories. However,

society cannot make rational decisions about hazardous technologies without knowing how much confidence to place in them and in the scientific theories on which they are based.
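Overconfidence in interval estimates of the kind shown in Figure 1 can be summarized by counting how often stated ranges contain the value later determined. The sketch below is illustrative only: the intervals and true values are invented and are not the data behind Figure 1, and the function name `interval_hit_rate` is hypothetical.

```python
def interval_hit_rate(intervals, true_values):
    """Fraction of (low, high) intervals that contain the matching true value."""
    if len(intervals) != len(true_values):
        raise ValueError("need exactly one true value per interval")
    hits = sum(
        1 for (low, high), truth in zip(intervals, true_values) if low <= truth <= high
    )
    return hits / len(intervals)


# Hypothetical data: five ranges that experts described as 90% intervals,
# paired with the values that were later measured.
stated_intervals = [(10, 12), (4.0, 4.5), (95, 105), (0.2, 0.3), (30, 40)]
measured_values = [13, 4.2, 120, 0.5, 33]

hit_rate = interval_hit_rate(stated_intervals, measured_values)
nominal_coverage = 0.90
print(f"nominal coverage={nominal_coverage:.0%}, observed coverage={hit_rate:.0%}")
```

In this invented example only two of five nominally 90 percent intervals contain the measured value; a gap of that kind between nominal and observed coverage is what is meant by intervals that are too narrow.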

In the academic community, vigorous peer review offers technical experts some institutional protection against the limitations of their own judgments. Unfortunately, the exigencies of risk management, including time pressures and resource constraints, often strip away these protections, making risk assessment something of a quasi science (like much cost/benefit analysis, opinion polling, or evaluation research), bearing more of the rights than the responsibilities of a proper discipline.

On the basis of psychological theory, one would trust experts' opinions most where they have had the conditions needed to learn how to make good judgments. These conditions include prompt, unambiguous feedback that rewards them for candid judgment and not, for example, for exuding confidence. Weather forecasters do have these conditions, and the result is a remarkable ability to assess the extent of their own knowledge (Murphy and Winkler, 1984). It rains almost exactly 70 percent of the time when they are 70 percent confident that it will. Unfortunately, such conditions are rare. When feedback is delayed, as with predictions of the carcinogenicity of chemicals having long latencies, learning may be very difficult. Further problems arise when expert predictions are ambiguous or the lessons of subsequent experience are hard to unravel. Psychological theory also suggests that learning is likely to be fairly local. Thus, one might question toxicologists' judgments about social policy just as much as social policymakers' judgments about toxicology (Cranor, 1993).

APPLYING BEHAVIORAL RESEARCH

The Interface of Products, People, and Law

Manufacturers have a legal obligation to produce products that are "duly safe" (Wade, 1973). Unfortunately, the law fails to specify clearly how much safety is required. One source of uncertainty is the lack of detailed feedback from the courts (Saks, 1992), making it difficult for citizens or scholars to discern general patterns (Eisenberg and Henderson, 1993; Henderson, 1991; Merz, 1991a). The news media may compound problems by disproportionately reporting cases with unusual fact situations or particularly large punitive damage awards. Much less attention is devoted to the remittitur of that award or subsequent settlement or reversal on appeal (Nelkin, 1984; Rustad, 1992).

Even if more detailed information were available regarding litigated cases, that information might be of limited usefulness because of systematic biases in the choices of cases for trial. Lawsuits arise from a very small proportion of claims that are, in turn, a small subset of the injuries associated with a product (Hensler et al., 1991; Localio et al., 1991). Thus, manufacturers receive meager signals from the courts regarding how to manage their affairs (Huber and Litan, 1991). Perhaps the best they can do to anticipate legal problems is compare the attributes of their product with those of existing products. When they share features with products that have proven problematic, they should make extraordinary efforts at each stage of the design, testing, production, marketing, and post-marketing process (Weinstein et al., 1978).

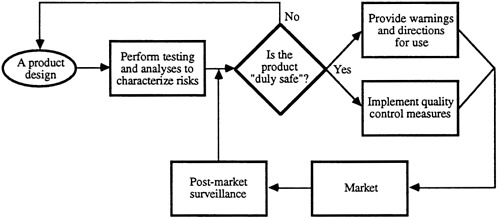

Product liability may be viewed as attempting to regulate these various aspects of a manufacturer's design, production, and marketing behaviors. To explore this aspect of the law, it is useful to draw an analogy to the FDA licensing process as a prototype for product development, production, and marketing, because this process is the one our society uses to ensure that new drugs are acceptably safe.1 Figure 2 conceptualizes that process to highlight the interaction between product liability law and manufacturers' product decisions. This figure presents a discrete set of steps and a key decision. All of the steps must be taken adequately and the decision must be answered correctly in order to shield a manufacturer from liability for any injuries caused by the resulting products. We depict the process as a loop, because the obligation to collect information and to act on it transcends any one sale, leading to a continuous process of learning (e.g., how the product is actually used or misused by consumers) and modification of the marketing process.2 Liability may result from departures from the "expected" norms for the management of product-related information (e.g., regarding the risks and benefits of the products) and

FIGURE 2 Simplified view of a generalized product marketing process based on the Food and Drug Administration licensing process.

decision making. Absent regulatory oversight, we do not propose that following the process in itself is a defense, but, rather, that following this process may assist manufacturers in placing into the stream of commerce products that are "duly safe."

In detail, the steps in this process are as follows:

- Testing and analysis to characterize risks: performing reasonable research and testing to ascertain the risks and benefits of alternative designs arising from foreseeable uses of the product, considering (a) who the target consumers and users of the products are and whether these users are particularly susceptible to injury from the product, (b) the potential for misuse and abuse of the product, (c) the nature of the injuries caused and the base rate of similar injuries in the user population attributable to similar products or nonattributable to any known causes, (d) the state of scientific knowledge about the risks, and (e) uncertainties in the above factors.

- Determining whether the product is duly safe: making design choices to ensure that the products are "duly safe" by balancing the risks and benefits of alternative designs, expressly considering (a) the state of the art, (b) the reasonable availability and practicability of modifications and safety enhancements, (c) regulatory and industrial standards applicable to product safety, and (d) features of competing products.

- Providing warnings and directions for use: providing warnings of any remaining latent and nonobvious risks so that consumers can make informed choices about product purchase and use, and providing adequate directions to enable them to use the products properly, taking into account (a) the obviousness of the risks, (b) whether a warning is feasible (i.e., whether users are children, the risk is to a third party, or there is no practicable way to place a useful warning on the product), and (c) labeling standards, if any.

- Quality control measures: implementing adequate production techniques to assure that products meet design specifications, and instituting quality control mechanisms to reduce the chance of release of any products with undetected flaws, taking into account the probability and nature of any injuries that may be associated with such flaws and the marginal costs of reducing those risks.

- Marketing: marketing the product in a responsible manner, ensuring that advertising and promotion techniques do not constitute warranties, misrepresent the product, or target especially susceptible users for whom the product design, instructions, or warnings are inadequate.

- Post-market surveillance: monitoring product performance in the marketplace and modifying product design, production, and warnings, recalling products for correction, or pulling the product off the market in response to user feedback (Lamken, 1989).

Many of the decisions that manufacturers must make in the process outlined above are extremely difficult. To help inform these decisions, we first link the process with product liability law, then identify what the law requires at each step of the process. Product liability encompasses several distinct doctrines, each providing an independent basis on which liability can be predicated. Each doctrine places different obligations on a manufacturer and seller. Each offers different opportunities for using an understanding of how consumers perceive products in order to reduce their product liability exposure. The first doctrine is that the way a product is represented to consumers may give rise to warranties, whether intended or unintended. Painting an unduly glowing picture of a product may increase sales. However, it may also mislead consumers into thinking that there are differential quality and safety features, which could, in turn, lead reasonable consumers to lower their guard inappropriately. The law obliges the seller to ensure that seller and buyer have the same product in mind at the time of a sales transaction.

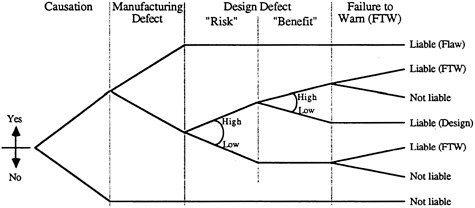

Second, manufacturers will be liable for injuries caused by a "defective" product (American Law Institute, 1965). A product can be deemed defective if it has a manufacturing defect or flaw, if its design is defective, or if the warnings and instructions provided with the product are defective. Thus, an injured consumer has several tiers of alleged defectiveness on which to base a claim for damages, and manufacturers must run a gauntlet to avoid liability. Figure 3 depicts the three bases of a plaintiff's claim for injuries caused by an allegedly defective product, each of which provides an independent and sufficient basis for liability.

At the most basic level, sellers will be liable only for injuries caused by

FIGURE 3 A logically structured schema of product liability law.

their products. The causal inquiry may be extremely complex because of uncertain exposures, confounding causes and base rates of injury in the population at risk, and limited scientific knowledge about causal relationships (Brennan, 1988). In the figure, causation is presented deterministically to reflect the strong dependence of liability on it.

Moving across the figure to the right, the diagram depicts strict liability for manufacturing defects or flaws. Manufacturers are held strictly liable for products that deviate from the intended design or from expected or normal production (Henderson, 1973). The producer must make a trade-off between the real costs of the quality controls needed to avoid such manufacturing flaws and the potential costs of injury and liability from substandard products.

The third basis of liability is design defect, meaning either that the benefits of the product did not justify the risks or that the design failed to meet consumers' reasonable expectations for that product (Henderson, 1979). In either case, the manufacturer must decide how safe a product must be to justify its benefits as well as the utility that would be lost were the product made safer. Liability will usually turn on the availability and feasibility of alternative product designs, which could have made the product safer with no or little loss of consumer utility. Understanding how people use different products and perceive risks and benefits is a key to making good design decisions (Chapanis, 1959). It is also important for a manufacturer to be aware of technological developments as they define the state of the art, to keep abreast of the design standards set by competitors, and to comply with industry and regulatory requirements. Deviations from expected norms, as embodied in industry practices and applicable standards, may provide strong evidence of substandard design. Justifications for any such deviations must be embodied explicitly in analysis, testing, and managerial decision making (Twerski et al., 1980).

The fourth basis for product liability arises from inadequate directions for the proper use of a product or from failure to provide warnings of known but nonobvious risks associated with its use (Henderson and Twerski, 1990). Regardless of the utility of the low-risk product, any known nonobvious or latent risk must be disclosed to avoid potential liability. This obligation may extend to providing subsequently acquired information to consumers after the sale, or even to product redesign, recall, and modification. The duty to disclose is not absolute, however, because consumers are generally charged with knowing open and notorious hazards, although exactly what such hazards are may be a question of interpretation. Here, too, better understanding of consumers' perceptions, as well as their interpretations of warnings and instructions when provided, will lead to better and more defensible products.

The following sections discuss several general strategies for exploiting the research described above in order to meet these conditions. Each successive option affords an increasingly central role to concern about the public in the product-stewardship process. The analysis begins with the reactive approach of attempting to predict the extent of problems arising from the way a product is used and ends with the proactive approach of basing product design on concern about risk to users.

Predicting Product-Use Problems

The least that manufacturers can do is to face up to their problems. A systematic analysis of the likelihood and severity of incidents is essential to any reasoned allocation of resources in product management. It can help determine how much to spend on different kinds of design, testing, training, and warning. It may even identify cases where a product is too risky to manufacture, considering the direct costs of compensating those who are injured and the indirect costs of diminished reputation. Such analyses must be performed during the initial design and testing of a product and continue through product marketing and post-market surveillance. The goal is to ensure that products are marketed to consumers without creating unwarranted and unwanted representations of the quality and functionality of the product.

As many of the other chapters in this volume indicate, many manufacturers have procedures in place for making such determinations. Behavioral research might contribute to these processes in several ways. One is identifying circumstances that call for analysis. For example, many products are in constant development, as manufacturers generate new styles or exploit advances in materials or fabrication techniques. In addition to these changes over time, many products are simultaneously manufactured in a variety of related forms to suit the needs of different market niches.

A careful analysis might reveal surprises in how these alternative versions are used. At times, even cosmetic changes can affect the way a product is used, such as by suggesting less need for caution or a broader range of applications, not all of which were intended. At other times, meaningful changes may go unnoticed, leading to negative transfer, the interference of old habits with new situations.3 At still other times, users may simply need time to master the quirks of the new version of a familiar product. Until they do, they are exposed to "predictable surprises."

Ironically, these transitional problems can be exacerbated by the natural desire for design improvement. A system may be in constant flux, so that no configuration is ever really mastered. Its rate of problems and near problems may be too low to allow for learning. Training exercises may be unrealistic, or telegraph their punches, or evoke abnormal levels of vigilance.

Automatic controls, such as aircraft landing systems, may "deskill" users, depriving them of a feeling for how the system operates and decreasing their ability to respond when human intervention is needed. Of course, many products suffer from persistent problems rather than frustrating near-perfection (National Research Council, 1993).

Assuming that problems seem possible, one must estimate their probability and severity, as critical inputs to deciding whether to pursue a project or invest in redesign. With a stable system, one can learn a great deal from properly conducted statistical analyses. Those estimates become less reliable when things (the product, the users, the uses, the usage environment) change. In such cases, the impact of those changes must be assessed.

The most elaborate assessments are applications of probabilistic risk assessment (PRA) to estimate the reliability of systems with human components. These procedures attempt to reduce complex, novel systems to components whose reliability can be estimated on the basis of historical data. Although these analyses are standard practice in many settings, they must be interpreted cautiously, especially when used to estimate the magnitude of risks and not just to clarify the relationships among system components. Even if ample historical data are available at the component level, identifying the relevant components and relationships requires the exercise of judgment. Further judgment is needed to adapt general data to specific circumstances, to assess the uncertainty in the estimates, and to determine the definitiveness of the analysis. A good deal is known about the imperfections in these processes and the procedures for making the best use of expert judgment. They build, in part, on research showing the judgmental problems encountered by technical experts when they run out of data and rely on educated intuition. Unfortunately, not all analyses follow the best procedures (Morgan and Henrion, 1991).

Done right, a good PRA can be much better than no analysis at all. Done uncritically, it can create an illusion of understanding. Done with the aim of demonstrating (rather than estimating or improving) safety, it can be a tool of deception and self-deception. Even the best and best-understood estimates will be useful only if there is a legal and logical decision-making scheme for using them. They may be worthless if management cannot face an unpleasant message. They may be injurious if a firm is penalized for thinking explicitly about risk issues, as in some interpretations of the Ford Pinto case.
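The decomposition that PRA relies on can be illustrated with a toy calculation. The sketch below is only an illustration under stated assumptions, not a method from the sources cited: the component names, the probabilities, and the assumption that component failures are independent are all hypothetical. The independence assumption in particular is exactly the kind of judgment that the text says must be examined critically.

```python
def p_and(*probs):
    """Probability that all of several independent events occur."""
    result = 1.0
    for p in probs:
        result *= p
    return result


def p_or(*probs):
    """Probability that at least one of several independent events occurs."""
    none_occur = 1.0
    for p in probs:
        none_occur *= 1.0 - p
    return 1.0 - none_occur


def system_failure(p_auto_fail, p_operator_miss, p_structural):
    """Toy fault tree: the system fails if the automatic control fails AND
    the operator does not recover, OR if a structural component fails."""
    unrecovered_control_failure = p_and(p_auto_fail, p_operator_miss)
    return p_or(unrecovered_control_failure, p_structural)


# Hypothetical per-use probabilities, chosen only for illustration.
P_AUTO_FAIL = 1e-3       # automatic control fails
P_STRUCTURAL = 1e-5      # structural component fails
P_MISS_PRACTICED = 0.1   # practiced operator fails to intervene
P_MISS_DESKILLED = 0.5   # "deskilled" operator fails to intervene

baseline = system_failure(P_AUTO_FAIL, P_MISS_PRACTICED, P_STRUCTURAL)
deskilled = system_failure(P_AUTO_FAIL, P_MISS_DESKILLED, P_STRUCTURAL)
print(f"practiced operator: {baseline:.2e}")
print(f"deskilled operator: {deskilled:.2e}")
```

In this toy tree the human-reliability term dominates the result, so the estimate is only as good as the judgment behind that one number. That sensitivity is one reason to treat such figures as aids to understanding system structure rather than as demonstrations of safety.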

Warning Users about Potential Risks

The least that one can do when a product poses nonnegligible risks is to inform possible users, allowing them to determine whether the benefits justify those risks. In some cases, doing so will allow producers to claim that users have provided informed consent, thereby assuming responsibility for any adverse consequences.

Selection. The first step in designing warnings is to select the information that they should contain. In many communications, that choice seems fairly arbitrary, reflecting some technical expert or communication specialist's notion of "what people ought to know." Poorly chosen information can have several negative consequences, including both wasting recipients' time and being seen to waste it. It exposes recipients to being judged unduly harshly if they are uninterested in information that, to them, seems irrelevant. In its fine and important report, Confronting AIDS, the Institute of Medicine (1986) despaired after a survey showed that only 41 percent of the U.S. public knew that AIDS was caused by a virus. Yet, one might ask what role that information could play in any practical decision, as well as what those people who answered correctly meant by "a virus."

One logically defensible criterion is to use value-of-information analysis to identify those messages that ought to have the greatest impact on pending decisions. Such information would resolve major uncertainties for recipients regarding the probability of incurring significant outcomes, as the result of taking different actions. Doing so requires taking seriously the details of potential users' lives, looking hard at the options they face and the goals they seek.

Merz (1991b; Merz et al., 1993) applied value-of-information analysis to a well-specified medical decision, whether to undergo carotid endarterectomy. Both this procedure, which involves scraping out an artery that leads to the head, and its alternatives have a variety of possible positive and negative effects. These effects have been the topic of extensive research, which has provided quantitative risk estimates of varying precision. Merz created a population of hypothetical patients, who varied in their physical condition and relative preferences for different health states. He found that knowing about a few, but only a few, of the possible side effects would change the preferred decision for a significant portion of patients. He argued that communications focused on these few side effects would make better use of patients' attention than laundry lists of undifferentiated possibilities. He also argued that his procedure could provide an objective criterion for identifying the information that must be transmitted to ensure that patients were giving a truly informed consent.

Although laborious, such analyses offer a possibility of closure that is unlikely with the traditional ad hoc procedures of relying on professional judgment or conventional practice.
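The logic of value-of-information analysis can be sketched in a few lines. The following Python example is a simplified illustration, not a reconstruction of the model in Merz (1991b): the two actions, the outcome probabilities, and the utilities are hypothetical, and the function names are invented for this sketch. It computes the expected value of perfect information about one uncertainty, that is, how much a decision maker's expected utility would improve if the uncertainty were resolved before choosing.

```python
def expected_utility(action, p_states, utility):
    """Expected utility of an action over uncertain states."""
    return sum(p * utility[action][state] for state, p in p_states.items())


def value_of_perfect_information(p_states, utility):
    """Expected gain from learning the true state before choosing an action."""
    best_without = max(expected_utility(a, p_states, utility) for a in utility)
    best_with = sum(
        p * max(utility[a][state] for a in utility)
        for state, p in p_states.items()
    )
    return best_with - best_without


# Hypothetical patient facing two options; utilities are on a 0-1 scale.
# A serious complication would reverse the preferred choice.
serious = {"complication": 0.1, "no_complication": 0.9}
utility_serious = {
    "operate": {"complication": 0.2, "no_complication": 0.95},
    "wait": {"complication": 0.8, "no_complication": 0.8},
}

# A minor side effect leaves "operate" preferred whichever state occurs.
minor = {"headache": 0.3, "no_headache": 0.7}
utility_minor = {
    "operate": {"headache": 0.93, "no_headache": 0.95},
    "wait": {"headache": 0.8, "no_headache": 0.8},
}

evpi_serious = value_of_perfect_information(serious, utility_serious)
evpi_minor = value_of_perfect_information(minor, utility_minor)
print(f"serious complication: {evpi_serious:.3f}")
print(f"minor side effect:    {evpi_minor:.3f}")
```

With these hypothetical numbers, information about the serious complication has positive value because it could change the choice, while information about the minor side effect has none. Repeating the calculation across a population of patients with different conditions and preferences is, in outline, how one could identify the few items most worth communicating.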

Presentation. Once information has been selected, it must be presented in a comprehensible way. Many of the principles of presentation are well studied and established. For example, research has shown that comprehension improves when text has a clear structure and, especially, when that structure conforms to recipients' intuitive representations; that critical information is more likely to be remembered when it appears at the highest level of a clear hierarchy; and that readers benefit from "adjunct aids," such as highlighting, previews (showing what to expect), and summaries. Such aids can even be better than full text for understanding, retaining, and being able to look up information (e.g., Ericsson, 1988; Garnham, 1987; Kintsch, 1986; Reder, 1985; Schriver, 1989).

As suggested by the research reviewed earlier, information about the magnitude of risks poses some particular challenges to communicators. The units, orders of magnitude, and even the very idea of quantitative risk estimates may be foreign to many recipients. Under those circumstances, it may seem appealing to provide no more than a general indication of risk levels. However, that situation may also produce the greatest variability in the magnitudes attributed to such verbal quantifiers. The very fact that risks are mentioned may suggest that they are relatively severe, even though that act reflects no more than the caution of a particular producer or the idiosyncrasies of a particular legislative or regulatory process (which mandated labeling).

Although there may be no substitute for providing explicit quantitative information, there is also no guaranteed way to do it effectively. For the time being, we must resign ourselves to an imperfect process in which producers gradually learn how to communicate and users gradually learn how to understand. Fortunately, many decisions are relatively insensitive to the precision of perceived risk estimates (von Winterfeldt and Edwards, 1986). As a result, imperfect communication may still allow people to identify the courses of action in their own best interests. Recipients can always choose to ignore quantitative information or to convert it to some intuitive qualitative equivalent (Beyth-Marom, 1982; Wallsten et al., 1986). In the domain of weather forecasting, studies have found that people appreciate the quantitative information in probability of precipitation forecasts, although they are sometimes unsure about exactly what event is being forecast (Krzysztofowicz, 1983; Murphy and Brown, 1983; Murphy et al., 1980).

Intuitively recognizing the difficulty that people may have with understanding small risks, technical experts have often sought to provide perspective by embedding a focal risk in a list of equivalent risks. Those lists might show the doses of a variety of activities that produce one chance in a million of premature death, for example, hours of canoeing, teaspoonfuls of peanut butter, years living near the boundary of a nuclear power plant, or the loss of life expectancy from various states and activities (Cohen and Lee, 1979; Wilson, 1979). Assuming that recipients had accurate feelings for the likelihood of some of the items in the list, this strategy might be useful.

Unfortunately, risk comparisons are often formulated with transparently rhetorical purpose, attempting to encourage recipients' acceptance of the focal risk—"if you like peanut butter, and accept its risks from aflatoxin, then you should love nuclear power." By failing to consider the other factors entering into others' decisions, for example, the respective benefits from peanut butter and nuclear power, such comparisons have no logical force (Fischhoff et al., 1981; Fischhoff et al., 1984). They can, however, alienate recipients, who prefer to make their own decisions. To date, there is no clear demonstration of risk comparisons being an effective communication technique (Covello et al., 1988; Roth et al., 1990).

Improving the Usage of Existing Products

Telling people about residual risks is, in effect, an admission of engineering failure. It says, "this is the best that we could do, see if you want to live with it." To some extent, that failure may be inevitable. Engineers cannot do it all, and must rely on responsible use of their products. Indeed, in many situations, the product user is in the best position to assess and minimize the risks. However, to fulfill their obligation, engineers must provide users with the information that they need for successful operation. Providing estimates of the magnitude of potential risks (discussed in the previous section) is a part of that story, insofar as it helps users decide how seriously to take safety issues.

Additional steps range from general warnings ("poisonous"), to specific warnings ("use in a well-ventilated area"), to detailed instructions, to training courses of various length. The challenge in designing such materials and procedures is to achieve an acceptable level of understanding at minimal cost in time, money, and effort to producer and user. The required understanding might be defined as whatever is needed to achieve that announced safety level, assuming responsible use.

Training is a heavily studied topic, another beneficiary of the demands of World War II and the Cold War. A producer who did not exploit its potential would arguably bear some responsibility for whatever misfortunes followed. However, it could not be expected to eliminate all risks. Many modern products are so complex and used in such diverse circumstances that it is impossible to anticipate all contingencies or to train users to the desired proficiency. One response to this reality has been to shift the focus of training from what to do to what is happening. It attempts to provide users with accurate mental models of how the product works, so that they can anticipate the results of their actions and diagnose potentially problematic circumstances. Doing so requires a user-centered, rather than a product-centered approach. It might be advantageous also in situations that less obviously strain the limits of traditional training (Laughery, 1993; Norman, 1988; Reason, 1990).

The state of the art for written instructions seems rather more primitive. As with informing users about the magnitude of risks, the selection and organization of operational information often seem somewhat arbitrary. To take an example that we have studied intensively, the U.S. Environmental Protection Agency (EPA) invested heavily in the development, evaluation, and dissemination of a Citizen's Guide to Radon. Yet, despite this laudably broad and deep effort, the resulting brochure uses a question-and-answer format, with little attempt to summarize or impose a general structure. It relies on an untested risk-comparison scale, which, for example, uses relatively similar risks, but presents them on a logarithmic scale. It does not explicitly confront a misconception that could undermine the value of other correct beliefs about radon: because radon is radioactive, it can contaminate permanently, making it infeasible to remediate should a problem be found (hence not even worth testing).

That misconception was identified in a series of open-ended interviews intended to characterize laypeople's mental models of this risk and confirmed in studies using structured questionnaires (Bostrom, 1990; Bostrom et al., 1992). This set of procedures was used to examine lay understanding of a variety of risks, including those from electromagnetic fields, Lyme disease, lead poisoning, and nuclear energy sources in space (Fischhoff et al., 1993; Morgan et al., 1992). Leventhal and his colleagues have used similar approaches in studying adherence to medical regimens such as routine screening, hypertension drugs, and diets (Leventhal and Cameron, 1987). Yet other investigators have looked at lay conceptualizations of such diverse processes as macroeconomics, physics, computers, and climate change (Carroll and Olson, 1988; Chi et al., 1981; Jungermann et al., 1988; Kempton, 1991; MacGregor, 1989; Voss et al., 1983). Typically, these studies find a mixture of accurate beliefs, on which instructions can build; misconceptions, which need to be eliminated; peripheral beliefs, which need to be placed in proper perspective; and vague beliefs, which need to be sharpened before they can be used, or judged for accuracy. Such procedures provide one of the only ways of identifying beliefs that would not have occurred to technical experts.

Open-ended procedures also provide one of the few ways of identifying discrepancies in how terms are used by people from different linguistic communities. Consider, for example, the common, simple warning, "Don't drink and drive." Recipients could hear, but not get, the message if they guessed wrong about what was meant in terms of the kind and amount of "drinking" and "driving," not to mention any special pleading about how the general message applied to them personally (Svenson, 1981). Quadrel (1990) asked adolescents to estimate risks using deliberately vague questions, such as, "What is the probability of getting into an accident if you drink and drive?" She found that even teens with poor education were quite sensitive to imprecisions in how risks were described. There was also considerable variability in how her subjects "filled in the blanks," in the sense of supplying missing details. As a result, they ended up answering different questions even when looking at the same words. As mentioned, earlier studies found that the disagreements between experts and laypeople about the magnitude of risks are due, in part, to disagreements about the definition of "risk" (Slovic et al., 1979; Vlek and Stallen, 1980).

Effective risk communications can help people to reduce their health risks or to get greater benefits in return for those risks that they do take. Ineffective communications not only fail to do so but also incur opportunity costs, in the sense of occupying the place (in recipients' lives and society's functions) that could be taken up by more effective communications. Even worse, misdirected communications can prompt wrong decisions by omitting key information or failing to contradict misconceptions, create confusion by prompting inappropriate assumptions or by emphasizing irrelevant information, and provoke conflict by eroding recipients' faith in the communicator. By causing undue alarm or complacency, poor communications can have greater public health impact than the risks that they attempt to describe. It may be no more acceptable to release an untested communication than an untested drug. Because communicators' intuitions about recipients' risk perceptions cannot be trusted, there is no substitute for empirical validation (Fischhoff, 1987; Fischhoff et al., 1983; National Research Council, 1989; Rohrmann, 1990; Slovic, 1987). Failing to develop and test messages systematically raises legal, ethical, and management questions.

Improving Product Design

Instructing users in how to avoid potential problems leads, in effect, to teaching them how to make the best of an imperfect situation. A more satisfying response is designing user problems away. That means treating users as a resource, rather than as a source of difficulties. Understanding their problems might mean gaining market share, as well as avoiding litigation. Even an explicit commitment to safety and operability can mean something in the marketplace. Delivering on that commitment could be worth even more.

Industries, companies, and even divisions differ greatly in their commitment to the human factors engineering needed to achieve operability. For example, on the same plane, one might find fancy cockpit displays, clumsy tray tables, and metal coffee pots that induce carpal tunnel syndrome.

Inattention to these issues may reflect a lack of interest in the users (pilots may matter more than flight attendants) or simply the pecking order in design departments. An examination of the skills appearing in a firm's organizational chart provides one indication of how seriously it takes human factors, and of how well it is positioned to exploit the opportunities.

A second indicator of a firm's ability to improve its design is its reluctance to attribute mishaps to human (or user or operator) error. The demands of product liability suits may force a firm to make and defend such claims. However, making improvements requires sharing responsibility. The sort of user-centered, or mental-models, procedure described earlier provides one place to start that process. It means trying to bring designs closer to users' expectations, rather than vice versa (as discussed in the previous section).

One common source of discouragement is sometimes called the theory of "risk homeostasis" (Wilde, 1982). It holds that users frustrate safety improvements by finding ways to use products more aggressively. The evidence supporting this hypothesis is mixed (Slovic and Fischhoff, 1983). Were it true, it might suggest that users are so irrational that there is no point in trying to improve safety. An alternative interpretation would be that users are responding rationally, trying to get more benefit from a product, at the price of forfeiting the increase in safety. If users understand the risks and benefits involved, then they have, arguably, given informed consent for whatever happens. Their desire for greater benefit suggests a design opportunity: providing that benefit without sacrificing safety.

CONCLUSION

The suggestions in the preceding sections dealt with strategies that are within the control of a product's manufacturer. They are, in effect, proposals for improved product stewardship. How effective each proposal is depends, in part, on how skillfully it is implemented and, in part, on how good it conceivably could be. The limits to performance depend, in turn, partly on the strategy. A warning label cannot do as much as a user-centered redesign, although it may be the most cost-effective response.

Those limits also depend on the environment and how it rewards or punishes different strategies. For example, there is no incentive to sweat the details of message design if presenting a laundry list of potential problems is construed as ensuring informed consent. There is a disincentive for doing so if changing how risks are described can be construed as admitting the inadequacy of previous descriptions, which may be in litigation. There may be a disincentive for creating safer designs if that, too, can be construed as an admission of previous failure, or if stricter demands are made of new products. Firms may be penalized for rigorous testing if they can later be accused of releasing products with imperfections that they themselves have documented.

In addition to removing such obstacles to considering behavioral issues, incentives are needed to take them seriously. Generally speaking, firms should get credit for vigorously studying the behavior of potential users, for having behavioral specialists involved throughout the design process, for evaluating the residual risks of their products, for communicating both the extent and the sources of those risks to users, and for measuring how successfully those messages have gotten across.

REFERENCES

Aftermath of Chernobyl. 1986. Groundswell 9:1–7.

American Law Institute. 1965. Restatement (Second) of the Law of Torts.

Baum, A., R. Gatchel, and M. Schaeffer. 1983. Emotional, behavioral and physiological effects of chronic stress at Three Mile Island. Journal of Consulting and Clinical Psychology 12:349–359.

Berendes, H. W., and Y. J. Lee. 1993. Suspended judgment: The 1953 clinical trial of diethylstilbestrol during pregnancy: Could it have stopped DES use? Controlled Clinical Trials 14:179–182.

Beyth-Marom, R. 1982. How probable is probable? Journal of Forecasting 1:257–269.

Bostrom, A. 1990. A Mental Models Approach to Exploring Perceptions of Hazardous Processes. Ph.D. dissertation. School of Urban and Public Affairs, Carnegie Mellon University.

Bostrom, A., B. Fischhoff, and M. G. Morgan. 1992. Characterizing mental models of hazardous processes: A methodology and an application to radon. Journal of Social Issues 48(4):85–100.

Brennan, T. A. 1988. Causal chains and statistical links: The role of scientific uncertainty in hazardous substance litigation. Cornell Law Review 73:469–533.

Carroll, J. M., and J. R. Olson. 1988. Mental models in human-computer interaction. Pp. 45–65 in Handbook of Human Computer Interaction, M. Helander, ed. Amsterdam: Elsevier.

Chapanis, A. 1959. Research Methods in Human Engineering. Baltimore, Maryland: Johns Hopkins University Press.

Chi, M. T., P. J. Feltovich, and R. Glaser. 1981. Categorization and representation of physics problems by experts and novices. Cognitive Science 5:121–152.

Cohen, B., and I. S. Lee. 1979. A catalog of risks. Health Physics 36:707–722.

Covello, V. T., P. M. Sandman, and P. Slovic. 1988. Risk Communication, Risk Statistics, and Risk Comparisons: A Manual for Plant Managers. Washington, D.C.: Chemical Manufacturers Association.

Cranor, C. F. 1993. Regulating Toxic Substances: A Philosophy of Science and the Law. New York: Oxford University Press.

Crouch, E.A.C., and R. Wilson. 1981. Risk/Benefit Analysis. Cambridge, Mass.: Ballinger.

Eisenberg, T., and J. A. Henderson, Jr. 1993. Products liability cases on appeal: An empirical study. Justice System Journal 16:117–138.

Ericsson, K. A. 1988. Concurrent verbal reports on text comprehension: A review. Text 8(4):295–325.

Fischhoff, B. 1982. Debiasing. Pp. 422–444 in Judgment Under Uncertainty: Heuristics and Biases, D. Kahneman, P. Slovic, and A. Tversky, eds. New York: Cambridge University Press.

Fischhoff, B. 1987. Treating the public with risk communications: A public health perspective. Science, Technology, and Human Values 12:13–19.

Fischhoff, B. 1989. Eliciting knowledge for analytical representation. IEEE Transactions on Systems, Man and Cybernetics 19:448–461.

Fischhoff, B. 1991. Value elicitation: Is there anything in there? American Psychologist 46:835–847.

Fischhoff, B., A. Bostrom, and M. J. Quadrel. 1993. Risk perception and communication. Annual Review of Public Health 14:183–203.

Fischhoff, B., S. Lichtenstein, P. Slovic, S. L. Derby, and R. L. Keeney. 1981. Acceptable Risk. New York: Cambridge University Press.

Fischhoff, B., and D. MacGregor. 1983. Judged lethality: How much people seem to know depends upon how they are asked. Risk Analysis 3:229–236.

Fischhoff, B., P. Slovic, and S. Lichtenstein. 1977. Knowing with certainty: The appropriateness of extreme confidence. Journal of Experimental Psychology: Human Perception and Performance 20:159–183.

Fischhoff, B., P. Slovic, and S. Lichtenstein. 1978. Fault trees: Sensitivity of assessed failure probabilities to problem representation. Journal of Experimental Psychology: Human Perception and Performance 4:330–344.

Fischhoff, B., P. Slovic, and S. Lichtenstein. 1980. Knowing what you want: Measuring labile values. Pp. 117–141 in Cognitive Processes in Choice and Decision Behavior, T. Wallsten, ed. Hillsdale, N.J.: Lawrence Erlbaum Associates.

Fischhoff, B., P. Slovic, and S. Lichtenstein. 1983. The "public" vs. the "experts": Perceived vs. actual disagreement about the risks of nuclear power. Pp. 235–249 in Analysis of Actual vs. Perceived Risks, V. Covello, G. Flamm, J. Rodericks, and R. Tardiff, eds. New York: Plenum.

Fischhoff, B., and O. Svenson. 1988. Perceived risk of radionuclides: Understanding public understanding. Pp. 453–471 in Radionuclides in the Food Chain, J. H. Harley, G. D. Schmidt, and G. Silini, eds. Berlin: Springer-Verlag.

Fischhoff, B., S. Watson, and C. Hope. 1984. Defining risk. Policy Sciences 17:123–139.

Fitts, P. M., and M. I. Posner. 1967. Human Performance. Belmont, Calif.: Brooks/Cole.

Garnham, A. 1987. Mental Models as Representations of Discourse and Text. New York: Halsted Press.

Gilovich, T. 1993. How We Know What Isn't So. New York: Free Press.

Grimes, D. A. 1993. Technology follies: The uncritical acceptance of medical innovation. Journal of the American Medical Association 269:3030–3033.

Hanan, A. 1992. Pushing the environmental regulatory focus a step back: Controlling the introduction of new chemicals under the Toxic Substances Control Act. American Journal of Law and Medicine 18:395–421.

Hasher, L., and R. T. Zacks. 1984. Automatic and effortful processes in memory. Journal of Experimental Psychology: General 108:356–388.

Henderson, J. A., Jr. 1973. Judicial review of manufacturer's conscious design choices: The limits of adjudication. Columbia Law Review 73:1531–1578.

Henderson, J. A., Jr. 1979. Renewed judicial controversy over defective design: Toward the preservation of an emerging consensus. Minnesota Law Review 63:773–807.

Henderson, J. A., Jr. 1991. Judicial reliance on public policy: An empirical analysis of products liability decisions. George Washington Law Review 59:1570–1613.

Henderson, J. A., Jr., and A. D. Twerski. 1990. Doctrinal collapse in products liability: The empty shell of failure to warn. New York University Law Review 65:265–327.

Henrion, M., and B. Fischhoff. 1986. Assessing uncertainty in physical constants. American Journal of Physics 54:791–798.

Hensler, D. R., M. S. Marquis, A. F. Abrahamse, S. H. Berry, P. A. Ebener, E. G. Lewis, E. A. Lind, R. J. MacCoun, W. G. Manning, J. A. Rogowski, and M. E. Vaiana. 1991. Compensation for Accidental Injuries in the United States. Santa Monica, Calif.: RAND.

Hohenemser, C., M. Deicher, A. Ernst, H. Hofsass, G. Lindner, and E. Recknagel. 1986. Chernobyl: An early report. Environment 28:6–43.

Huber, P. W., and R. E. Litan, eds. 1991. The Liability Maze: The Impact of Liability Law on Safety and Innovation. Washington, D.C.: Brookings Institution.

Hynes, M., and E. Vanmarcke. 1976. Reliability of Embankment Performance Predictions. Proceedings of the ASCE Engineering Mechanics Division Specialty Conference. Waterloo, Ontario: University of Waterloo Press.

Institute of Medicine. 1986. Confronting AIDS: Directions for Public Health, Health Care, and Research. Washington, D.C.: National Academy Press.

Johnson, E. J., and A. Tversky. 1983. Affect, generalization and the perception of risk. Journal of Personality and Social Psychology 45:20–31.

Jungermann, H., R. Schutz, and M. Thuring. 1988. Mental models in risk assessment: Informing people about drugs. Risk Analysis 8:147–159.

Kahneman, D., P. Slovic, and A. Tversky, eds. 1982. Judgment Under Uncertainty: Heuristics and Biases. New York: Cambridge University Press.

Kempton, W. 1991. Lay perspectives on global climate change. Global Environmental Change 1:183–208.

Kintsch, W. 1986. Learning from text. Cognition and Instruction 3:87–108.

Krimsky, S., and A. Plough. 1988. Environmental Hazards. Dover, Mass.: Auburn House.

Krohn, W., and P. Weingart. 1987. Commentary: Nuclear power as a social experiment—European political "fall out" from the Chernobyl meltdown. Science, Technology, & Human Values 12:52–58.

Krzysztofowicz, R. 1983. Why should a forecaster and a decision maker use Bayes Theorem? Water Resources Research 19:327–336.

Lamken, J. A. 1989. Note, efficient accident prevention as a continuing obligation: The duty to recall defective products. Stanford Law Review 42:103–162.

Laughery, K. R. 1993. Everybody knows—or do they? Ergonomics in Design (July):8–13.

Leventhal, H., and L. Cameron. 1987. Behavioral theories and the problem of compliance. Patient Education and Counseling 10:117–138.

Lichtenstein, S., P. Slovic, B. Fischhoff, M. Layman, and B. Combs. 1978. Judged frequency of lethal events. Journal of Experimental Psychology: Human Learning and Memory 4:551–578.

Localio, A. R., A. G. Lawthers, T. A. Brennan, N. M. Laird, L. E. Hebert, L. M. Peterson, J. P. Newhouse, P. C. Weiler, and H. H. Hiatt. 1991. Relation between malpractice claims and adverse events due to negligence. New England Journal of Medicine 325:245–251.

MacGregor, D. G. 1989. Inferences about product risks: A mental modeling approach to evaluating warnings. Journal of Products Liability 12:75–91.

MacLean, D. 1987. Understanding the nuclear power controversy. In Scientific Controversies: Case Studies in the Resolution and Closure of Disputes in Science and Technology, H. T. Engelhardt, Jr. and A. L. Caplan, eds. Cambridge: Cambridge University Press.

Magat, W. A., and W. K. Viscusi. 1992. Informational Approaches to Regulation. Cambridge, Mass.: MIT Press.

Mahoney, M. J. 1979. Psychology of the scientist: An evaluative review. Social Studies of Science 9:349–375.

Mazur, A. 1973. Disputes between experts. Minerva 11:243–262.

Merz, J. F. 1991a. An empirical analysis of the medical informed consent doctrine: Search for a "standard" of disclosure. Risk 2:27–76.

Merz, J. F. 1991b. Toward a Standard of Disclosure for Medical Informed Consent: Development and Demonstration of a Decision-Analytic Methodology. Ph.D. dissertation. Carnegie Mellon University.

Merz, J. F., B. Fischhoff, D. J. Mazur, and P. S. Fischbeck. 1993. A decision-analytic approach to developing standards of disclosure for medical informed consent. Journal of Products and Toxics Liabilities 15:191–215.

Morgan, M. G., B. Fischhoff, A. Bostrom, L. Lave, and C. J. Atman. 1992. Communicating risk to the public. Environmental Science and Technology 26:2048–2056.

Morgan, M. G., and M. Henrion. 1991. Uncertainty. New York: Cambridge University Press.

Murphy, A. H., and B. G. Brown. 1983. Forecast terminology: Composition and interpretation of public weather forecasts. Bulletin of the American Meteorological Society 64:13–22.

Murphy, A. H., S. Lichtenstein, B. Fischhoff, and R. L. Winkler. 1980. Misinterpretations of precipitation probability forecasts. Bulletin of the American Meteorological Society 61:695–701.

Murphy, A., and R. Winkler. 1984. Probability of precipitation forecasts: A review. Journal of the American Statistical Association 79:391–400.

National Research Council. 1989. Improving Risk Communication. Washington, D.C.: National Academy Press.

National Research Council. 1993. Workload Transition: Implications for Individual and Team Performance, B. M. Huey and C. D. Wickens, eds. Washington, D.C.: National Academy Press.

Nelkin, D., ed. 1978. Controversy: Politics of Technical Decisions. Beverly Hills, Calif.: Sage.

Nelkin, D. 1984. Science in the Streets: Report of the Twentieth Century Fund Task Force on the Communication of Scientific Risk. New York: Priority Press.

Nisbett, R. E., and L. Ross. 1980. Human Inference: Strategies and Shortcomings of Social Judgment. Englewood Cliffs, N.J.: Prentice Hall.

Norman, D. A. 1988. The Psychology of Everyday Things. New York: Basic Books.

Peterson, C. R., and L. R. Beach. 1967. Man as an intuitive statistician. Psychological Bulletin 68:29–46.

Poulton, E. C. 1968. The new psychophysics: Six models for magnitude estimation. Psychological Bulletin 69:1–19.

Poulton, E. C. 1982. Biases in quantitative judgments. Applied Ergonomics 13:31–42.

Quadrel, M. J. 1990. Elicitation of Adolescents' Risk Perceptions: Qualitative and Quantitative Dimensions. Ph.D. dissertation. Carnegie Mellon University.

Reason, J. 1990. Human Error. New York: Cambridge University Press.