3

Effective Training with Simulation: The Instructional Design Process

Many current marine education and training approaches are outgrowths of an established profession with strong, traditional professional development practices. These practices reflect an approach to instruction in which instructors often served as both teacher and mentor, and ship's officers were trained to be generalists.

The use of ship-bridge simulators is becoming an accepted method of training in the international marine industry. Yet even as more simulators are being used, their use has not dovetailed smoothly into comprehensive training programs. Many simulator-based training courses were developed ad hoc, often designed to individual requirements of a shipping company or training establishment.

Among the experts the committee consulted, there was strong evidence that they had thought seriously about training needs and had organized their programs to address those needs. There were also diverse opinions on the exact character of those training needs. In some instances it appeared that training emphasis was guided by equipment capabilities such as simulators. The committee concluded that professional training in the maritime industry could be improved by effective advancement and systematic application of instructional design concepts.

DEVELOPING AN EFFECTIVE TRAINING PROGRAM

A simulator does not train; it is the way the simulator is used that yields the benefit. "It is easy to be impressed by the latest, largest full-mission simulator, but what is more important than the technology is how educational methodology is applied and whether it increases training effectiveness significantly, incrementally, or at all" (Drown and Mercer, 1995).

Trainees taking part in most simulator-based training courses can be divided loosely into two groups:

- unlicensed cadets who work through a series of structured courses; and

- fully qualified, licensed mariners who take stand-alone courses for updating, refreshing, and refining skills.

Although instructional design theories can be applied to both groups, the training programs and corresponding instructional processes will vary by trainee level.

An effective training program addresses the student's training needs with respect to knowledge, skills, and abilities. It exploits all media, from personal computer-based training to limited-task and full-mission simulators, and applies the appropriate training tool to the specific level of training. For example, it would not be necessary to use a full-mission simulator for early instruction in rules-of-the-road training. Rather, a systematic approach to training promotes convergence toward full-mission expertise by developing basic modules of skills in several steps. This approach encourages the assembly of ever-larger skills modules until the trainee can exploit training on a full-mission simulator.

The instructional process is central to the overall focus of this report. Instructional design is a relatively new process. It has not advanced to the point where this committee can provide a complete vision of how it should be implemented. The committee can, however, provide guidance.

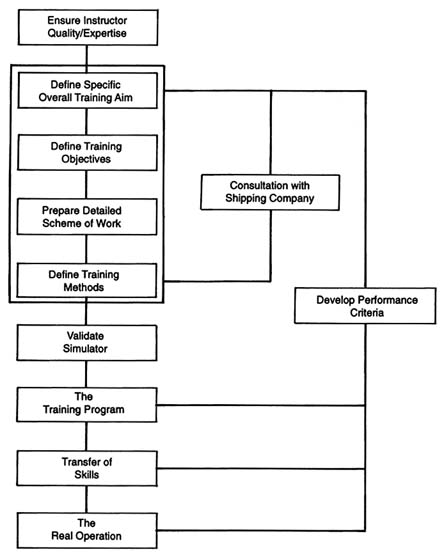

Instructional design is an iterative process whereby training managers continually test innovations and improve training. It is an incremental approach that involves inserting new pieces developed by the instructional design process into existing training programs, assessing results, and then revising the program as necessary. This systematic application yields simulator-based training programs with clearly defined objectives, carefully designed training and evaluation scenarios, and qualified instructors. Figure 3-1 illustrates the iterative nature of this training process.

There are several stages to implementing the instructional design process (see Box 3-1). A vital first stage is determining training needs. This stage is important because current national and international licensing and certification requirements and guidelines focus primarily on knowledge rather than skills and abilities needed to effectively apply knowledge (Froese, 1988). Training needs can be developed by identifying gaps or missing elements between the trainee's required and actual knowledge, skills, and abilities.
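The gap-based definition of training needs described above can be illustrated with a small sketch. The 0 to 5 proficiency scale, the KSA names, and the function `training_gaps` are hypothetical and chosen only for illustration; the report does not prescribe any scoring scheme.

```python
def training_gaps(required, actual):
    """Return the KSAs where the actual level falls short of the required level.

    Both arguments map a KSA name to a numeric proficiency level
    (a hypothetical 0-5 scale). A KSA missing from `actual` counts as level 0.
    """
    gaps = {}
    for ksa, needed in required.items():
        shortfall = needed - actual.get(ksa, 0)
        if shortfall > 0:
            gaps[ksa] = shortfall
    return gaps


# Hypothetical example values, not drawn from the report.
required = {"rules of the road": 4, "radar plotting": 3, "bridge communication": 4}
actual = {"rules of the road": 4, "radar plotting": 1}

needs = training_gaps(required, actual)
```

In this sketch, a KSA that already meets its required level produces no entry, so the result lists only the items a course would need to address.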

The second stage is to determine specific training objectives (i.e., goals). Objectives identify each attitude, skill, and block of knowledge the trainee should have on successfully completing the course (Drown and Mercer, 1995). Once the course has started, these objectives should be clearly stated to orient the trainee. Development of training objectives should also include developing performance measures for determining whether or to what degree trainees have achieved the training objectives. These important elements of instructional design have not been well addressed and have sometimes even been neglected in simulator-based courses.

Box 3-1 Elements of Instructional Design Process

The third stage is to determine the training methods to be used. This stage includes an assessment of whether simulation use is relevant to achieving the training objectives. Assuming simulation is to be used, two things must be determined:

- the level of simulation (i.e., level of realism with respect to simulator components [see Chapter 4]) required to achieve training objectives (Hays and Singer, 1989) and

- the type of simulator—full-mission, multi-task, limited task, or special task (see Box 2-1)—that will be of most value.

The development of the training approach should also consider factors such as:

- the total training program of which the simulator-based training is part (e.g., cadet training toward a first license),

- trainee experience,

- type of training media,

- instructor's qualifications and experience, and

- cost benefit and effectiveness of the training program.

Once it has been determined that a simulator-based course is relevant to training needs, it is necessary to develop a detailed course outline. Finally, there needs to be a validation of the simulator, the simulation, and the curriculum to ensure relevance and suitability.

Anecdotal evidence, as well as experience and observations of the committee, suggests that differences in instructional techniques can result in a significant range of material that can be covered. The way material is covered also affects the relative value of the learning experience. These factors may be affected by simulator features and fidelity; however, limitations in these areas can be minimized or offset to a large extent for certain instructional objectives. For example, committee members found that, for bridge team and bridge resource management training, creative instructional design can be used to compensate for limitations in simulator capabilities.

According to a group of mariner instructors, many of whom met with the committee, the practical considerations shown in Box 3-2 are particularly relevant when structuring a mariner training program. These observations generally correspond with the results of human performance research.

APPLYING INSTRUCTIONAL DESIGN

Defining Training Needs and Objectives

The Trainee Population

There is great diversity in the professional backgrounds and maturity of trainees. Group members may range from entry-level personnel to mates and masters to marine pilots and shore-based management officials. This diversity can affect the development of effective training programs because of the possible range of training needs: entry-level training; license upgrades and renewals; refresher training; and familiarization training for specific ports, routes, vessels, or vessel types. For these reasons, simulator-based courses will be most useful when developed systematically.

Job-Task Analysis

The systematic definition of training needs requires a detailed understanding of tasks and subtasks necessary to perform the function to be trained. Traditionally, professional development aboard commercial ships and tugs has relied predominantly on "modeling the expert" for complex cognitive tasks: the person undergoing training watches and imitates the performance of senior professionals. This modeling is generally accomplished through on-the-job observation and hands-on experience aboard vessels. In the absence of comprehensive instructional design and attention to instructional abilities of the expert who is being modeled, this approach may have important limitations with respect to the quality of the learning experience.

Box 3-2 Training Insights from Mariner Instructors

There are two obvious difficulties in using direct modeling for complex cognitive tasks. First, the rationale for the performance of the tasks is not only opaque to observers, but may also be implicit for the experts: they may not be able to describe their own thought processes or the rationale for them, even though they can perform the tasks. Second, in order to properly coach a novice, an expert may have to formulate an accurate mental model of the novice's understanding of the task (sometimes called the student model). But a novice's understanding of a task is not always obvious to the expert. These two key problems… suggest that there might be a limitation on the extent to which direct modeling of complex cognitive skills can be done (NRC, 1991).

The instructional design process offers an alternative to on-the-job or "modeling-the-expert" training methods. Without a more fully developed basis for quantifying actual training needs, the use of simulators in professional development and marine licensing will continue to be based on perceived needs and professional estimates. To apply instructional design, it is necessary to have detailed, relevant task and subtask analyses.1 As noted in Chapter 1, although there is a significant amount of literature on job-task analysis, much of that material is either dated and needs to be updated or has only limited application to the marine industry and to mariners' training needs. The task analyses needed to define training needs in instructional design should be detailed and include descriptions of steps required to complete identified subtasks.

Applying Job-Task and Performance Analysis

Instructional objectives, curricula, and, to some extent, instructional approaches are designed to satisfy perceived needs and expectations of training sponsors. Perhaps as a result, the instructional quality of the simulator experience at all ship-bridge simulator facilities is generally reported to be good by companies that have supported simulator-based training and mariners who have attended courses using ship-bridge simulators. However, because the committee could not find evidence of programs designed to measure and analyze resulting performance, it could not determine whether these courses are achieving optimal effects in improving mariner preparation and performance.

To determine whether a training program meets its defined objectives, it is necessary to develop a system to measure and analyze resulting performance. A systematic approach to marine professional development necessitates improving understanding of job performance. This improved understanding could be accomplished by expanding existing job-task analyses to include dimensions that are generally missing with respect to behavioral elements and specific steps needed to execute each subtask. It is important to recognize that not all tasks contribute in the same way to overall performance of functions and duties of the job.

There is little current task analysis work in the marine industry. One example of recent work (Sanquist et al., 1994) is a study done by Battelle Seattle Research Center under contract to the U.S. Coast Guard (USCG) to develop a systematic approach for determining the effects of new automation on mariner qualification and training. The program was undertaken in connection with the agency's responsibilities in marine licensing and its goal to "determine the minimum standards of experience, physical ability, and knowledge to qualify individuals for each type of license or seaman's documentation." Although the report, Cognitive Analysis of Navigation Tasks: A Tool for Training and Assessment and Equipment Design (Sanquist et al., 1994), is targeted to automated systems aboard ships, the results should provide task analyses at a level that is detailed enough to effectively apply instructional design to relevant training program development.

The following descriptions of the process are summarized from the report.

To maintain the safety of our waterways, the U.S. Coast Guard needs to assess how a given automated system changes ship-board tasks and the knowledge and skills required of the crew.… Four different, but complementary, methods are being developed.

- Task analysis. A task analysis technique has been devised that breaks down a ship-board function, such as collision avoidance, into a sequence of tasks… the current approach synthesizes various existing methods and adapts them to the marine operating environment.

- Cognitive analysis. This method looks at the mental demands (such as remembering other vessel positions, detecting a new contact on the radar, etc.) placed on the mariner while performing a given task. The cognitive analysis identifies the types of knowledge, skills, and abilities (KSAs) required to perform a task and highlights differences in mental demands as a result of automation.

Cognitive analysis is applied to an operator function model (OFM) for task analysis. OFM provides a task analysis that is independent of the automation, i.e., OFM defines the (1) tasks, (2) information needed to perform the tasks, and (3) decisions that direct the sequence of tasks, regardless of whether they are performed by the mariner or the equipment.

- Skills assessment. This method evaluates the impact of automation. It takes the results of the task and cognitive analyses and determines the types of training required to instill the needed KSAs for performing the ship-board tasks. These will be compared with current training courses to highlight any new training needs.

- Comprehensive assessment. This method addresses the large number of problems resulting from an operator's misunderstanding of the capabilities and limitations of an automated system. For example, when the radar signal-to-noise ratio is poor, the ARPA [automatic radar plotting aids] may "swap" the labels of adjacent targets. If the mariner is not aware of this limitation, he may be navigating under false assumptions about the position of neighboring vessels, increasing the chances of a casualty. Comprehensive assessment will identify misconceptions about automated systems that could then be remedied through training or equipment redesign.
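The three elements that the operator function model is said to define (tasks, the information needed to perform them, and the decisions that direct the sequence of tasks) can be pictured as a simple data structure. This is a sketch only: the class name `Task`, its fields, the helper `walk`, and the collision-avoidance steps below are assumptions made for illustration and are not taken from the Sanquist et al. report.

```python
from dataclasses import dataclass, field


@dataclass
class Task:
    name: str
    # Information needed to perform the task, whoever (or whatever) performs it.
    information_needed: list = field(default_factory=list)
    # Maps a decision outcome to the name of the next task; empty ends the sequence.
    next_task: dict = field(default_factory=dict)


def walk(tasks, start, outcomes):
    """Follow the task sequence from `start`, using one decision outcome per step."""
    path = [start]
    current = tasks[start]
    for outcome in outcomes:
        if outcome not in current.next_task:
            break  # no transition defined for this outcome
        current = tasks[current.next_task[outcome]]
        path.append(current.name)
    return path


# Hypothetical breakdown of a collision avoidance function.
tasks = {
    "detect contact": Task("detect contact", ["radar picture"],
                           {"contact found": "assess risk"}),
    "assess risk": Task("assess risk", ["contact bearing", "contact range"],
                        {"risk exists": "maneuver", "no risk": "detect contact"}),
    "maneuver": Task("maneuver", ["own ship heading"], {}),
}

path = walk(tasks, "detect contact", ["contact found", "risk exists"])
```

Because the structure records only tasks, information, and decisions, it says nothing about whether a mariner or the equipment performs each step, which is the sense in which the model is described as independent of the automation.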

The USCG intends to use this methodology to highlight necessary training and licensing changes. In licensing, for example, the agency's analysis found that use of automatic radar plotting aids in performing the collision avoidance function eliminated nearly all computational requirements on the mariner. Yet application of the cognitive analysis technique to a sample set of questions from the radar observer certification test found that approximately 75 percent of the questions tested computational skills. Given these results, the report concluded "it would appear that there needs to be a shift in emphasis from computational to interpretive questions on the radar observer certification exam."

DETERMINING TRAINING METHODS

Selecting the Training Media

Mere possession of a ship simulator or other training device and the presence of licensed mariners as instructors do not guarantee the effective and credible use of simulation or effective learning. The presence of specialized features, such as physical motion platforms and high-fidelity graphic images, does not in itself guarantee a relevant and meaningful training experience. To be effective, simulator resources must be matched to instructional objectives. If simulation is relevant to training objectives, then the type (see discussion in Chapter 2) and level (see discussion in Chapter 4) of simulation, including fidelity requirements, need to be determined.

The way a simulator is treated is important in creating a perception of reality among trainees. If the instructional staff treats a simulator as if it were a ship and the simulator environment as real operating conditions, then the trainees are more likely to treat the experience as "real." In creating the illusion of reality for a limited-task or higher-level simulator, attention needs to be given to accuracy requirements for the mathematical models that drive the simulation (Appendix D). Creation of the training environment is discussed in greater detail in Chapter 4.

No quantitative research was identified by the committee that would establish the relative merits of different approaches to marine training and the types of training offered. There is, however, a research basis that supports the application of different levels of simulation to achieve certain training objectives for cadets, mates, masters, and pilots (Hammell et al., 1980, 1981a, 1981b, 1985; Gynther et al., 1982a, 1982b, 1985). The guides from this research are either preliminary or dated as a result of recent changes in manning and automation. Nevertheless, the guides remain a principal reference and could be used as a starting point for instructional design. The committee observed that few, if any, facilities appear to be using these materials for this purpose.

Mariner instructors reported to the committee that the degree to which a participant is familiar with the training media affects the media's relative value with respect to individual learning. Media familiarity also influences individual performance during a simulation. Just as in real life, a mariner becomes more confident in his or her operating performance of a ship-bridge simulator, manned-model, or radar simulator as his or her familiarity with operating characteristics and specific operating conditions increases. The instructional and training value of all marine simulator-based training media is also affected by the nature and form of instruction, including the operational training scenarios (see discussion of types of scenarios in Chapter 4).

Defining the Training Program

Curricula

A complete ship-bridge simulator-based training course curriculum will typically include information on the following:

- overall course objectives,

- characterization of course participants and numbers,

- characterization of participant educational and professional backgrounds,

- course structure,

- course timetable,

- individual simulation exercise planning sessions,

- individual simulator exercises and their content,

- individual exercise objectives,

- instructions to staff on the methodology of simulation exercises and subsequent debriefings, and

- method of evaluating participants, as applicable.

Appendix E contains outlines of two simulator-based training courses.

Exercise Scenarios

Scenario Design. Once a simulator-based training program and its objectives have been defined, exercise scenarios should be developed. The following factors should be taken into account when designing these scenarios:

- type of simulator (e.g., special task, full-mission);

- geographical database;

- mathematical model ship type and, to the degree relevant to training objectives, the model's fidelity with respect to ship maneuverability in restricted shallow water with small underkeel clearances;

- type and structure of exercise scenario required to achieve the exercise objectives;

- exercise length;

- method of briefing and debriefing;

- cost effectiveness;

- level of fidelity and accuracy needed to support training objectives (e.g., quality, field of view, cues in the visual scene, and accuracy of trajectory prediction); and

- validation requirements.

Scenario creation is crucial to optimizing the training value of individual exercises. Simply creating a realistic scenario does not necessarily result in operating conditions that will evoke desired student responses, create an effective illusion of reality, or create real life pressures (Edmonds, 1994).

Developing situations intended to challenge or test trainees is sometimes accomplished through scenarios involving role playing. In one possible situation, assignments could be reversed, with seniors placed in subordinate positions and junior personnel in senior positions. The objective is to create a pressure situation in which it becomes apparent to participants that improved interpersonal dynamics and communications are needed to reduce the potential for human and organizational error. This form of role playing seems to work. It should, however, be very carefully debriefed to avoid any negative impact on the confidence of junior personnel whose performance may have resulted in a failed solution during the exercise.

In some bridge team management training, positional shifts in roles can be used to show junior officers the tasks of a master and to remind senior deck officers of the difficulties and limitations of watchkeeping. In the case of pilot training, the pilot and master roles can be reversed to increase the pilot's sensitivity to the master's concerns when the pilot is controlling ship movement.

It also is possible to stimulate lessons through more subtle, yet equally effective, means. For example, a delegation from the committee participated in a simulation involving a crew change, a watch relief, two ports unfamiliar to the new watch officers, and a transit speed that was excessive for the situation but not readily apparent. As the scenario unfolded, bridge team members created enough pressures and problems for themselves without any assistance from the instructional staff. The need for more effective passage planning and improved communications among bridge team members was no less apparent than it might have been in a situation artificially influenced by role reversals or problems inserted by the instructor.

Scenario Validation. Once designed, an exercise scenario must be validated. Validation is necessary to avoid variations in the scenarios that could adversely affect training objectives or provide inaccurate information or insights and therefore contribute to human error during real operations. Care must be taken to ensure that relevant cues are present. In cases where individuals are being prepared for shiphandling and piloting on specific waterways or vessels, higher levels of visual scene fidelity and trajectory prediction accuracy are indicated. These factors are especially important in operating conditions involving restricted shallow water with small underkeel clearances.

It can be an advantage for instructors to visit and familiarize themselves with the real geographical area they are simulating. A visit and local knowledge also help instructors incorporate appropriate visual cues and local operating procedures, particularly if the instructor may have to play the role of a local pilot. Alternatively, a mariner with prior experience in the simulated area could serve as a design consultant.

The instructor must be satisfied that the exercise can be concluded in a way that is relevant to exercise objectives and that the scenario can achieve exercise objectives en route. The operational result may be successful or unsuccessful, as long as training objectives are satisfied. Only then can the scenario be used with confidence to effectively satisfy training objectives.

There is no standard methodology for validating exercise scenarios. The instructor or instructional staff generally perform this function subjectively, sometimes in coordination and consultation with representatives of organizations sponsoring the training.

Use of a Control and Monitoring Station

Once an exercise is validated and is part of the training course, it must be correctly controlled within the objective parameters. During an exercise, the instructor has to control and monitor environmental conditions and other vessel traffic and initiate or respond to internal and external communications. Depending on the complexity of the scenario, the instructor may also directly control pilot boats and tugs and initiate equipment failures, or he or she may delegate some control to the simulator operator. More important, the instructor must monitor trainee actions and performance, noting mistakes, omissions, and any other relevant information for subsequent discussion and analysis at the debriefing.

Exercises using a ship-bridge simulator (limited task to full mission) are about navigating and handling a ship—not about driving a simulator. The more the trainee "thinks ship," the more he or she will benefit. The instructor's actions must be aimed at making the training environment as realistic as possible. The training process may be helped if the instructor feels like he or she is aboard ship, not just in a simulator control station. This situation is particularly important if the instructor also performs a dual role as master in the bridge watchkeeping course for cadets and junior officers.

When an exercise commences, the vessel's passage and safety are in the hands of the master and bridge team and will be successfully or unsuccessfully concluded by their efforts alone. The instructor is often not present on the bridge during a normal exercise. The exception is when trainees will benefit more by the instructor's presence as an advisor or teacher.

While a simulator exercise is running, the instructor must ensure that the exercise objectives are followed. Input must be consistent with the course objectives. It is all too easy for an inexperienced instructor to "inject some excitement" into the proceedings. This action may destroy planned objectives and, at worst, cause trainees to believe they are in a "simulator versus the students" scenario. If this occurs, all realism and training effectiveness will be lost. Incidents must not be input unless they are planned. In any case, trainees cause occasional incidents themselves through human error.

Complex scenarios, particularly those involving shepherding using tugs, may so overload the instructor that effective performance monitoring of trainees is precluded. In such situations, two or more instructors may be needed to run the exercise, each with specifically allocated functions.

Effectively observing students in a simulated maritime environment is a skill in itself and is central to conducting an effective debriefing. The seagoing experience of a senior mariner is essential to this role and further emphasizes the need for highly qualified and motivated instructors and assessors.

Duration of the Training Program

The committee found no studies of the optimum length of simulator training time or of the optimum balance among lecture, simulator operation, and review of performance in maritime training. Conceptually, the duration of the course needs to be synchronized with the curricula and learning patterns to support overall training objectives. This approach may or may not be cost effective. Most existing simulator-based training courses last between one and two working weeks. This duration may owe as much to the commercial constraints on existing simulator-based training as to the requirements of the training itself. Other factors that may affect simulator training time include:

- the relatively high front-end cost of intensive simulator-based training compared with the low direct cost of on-the-job learning,

- the limited resources that shipping companies have available for training, and

- the unwillingness of some prospective trainees to devote personal time to training.

Short courses typically compress course content, which may be a disadvantage from the perspective of learning and transfer effectiveness. Although mariners can be exposed to training scenarios that might take years to experience during actual operations, compressed courses provide little opportunity to contemplate results of individual training sessions.

This lack of time to reflect may be especially significant for individuals who have limited nautical experience, such as cadets, or are unaccustomed to simulator-based training media. Conducting training with the same content and actual training time but over a somewhat longer period provides time for students to contemplate results and plan for subsequent training.

An alternative might be to divide course content equivalent to five days of actual training time into training modules performed one day per week over five weeks or more. This approach has been used successfully at the U.S. Merchant Marine Academy to progressively improve cadet bridge team and watchkeeping performance (Appendix F).

Debriefing Techniques

The final and particularly important part of each training session is the debriefing, which takes place once the simulator exercise is concluded, successfully or unsuccessfully. At this point in the program, lessons of the actual exercise are reinforced, and the trainee is reminded of the exercise objectives and informed of any additional objectives that were not previously divulged.

The simulator can be an effective tool in the debriefing. The ability to record and play back a scenario and to analyze the actions and judgments of the bridge team can assist in assessing team and individual performance.

The debriefing can be led by the instructor or by the trainees themselves (usually with an appointed observer leading), with the instructor acting as a "facilitator." A debriefing of a bridge watchstanding course for cadets or junior officers, for example, is better led by the instructor, whose experience and firm hand will keep the session "on course." A bridge team management course, on the other hand, would probably benefit from the facilitator approach because of the general experience and interactions of a given group. Deciding which method to use should be based on the trainees' level, experience, and cultural and ethnic background, and on the course type. Language limitations may also have to be taken into account.

To apply the debriefing method with a facilitator, one or more trainees are delegated prior to the simulator exercise to observe the actions of their colleagues throughout the exercise. This observer will open the debriefing by critically examining and commenting on two questions: what went right and what could be improved?

The role of the facilitator or instructor is to allow students, through their discussions, to discover why some things went right and others went wrong. The observer's comments can be recorded for further discussion by group members. The facilitator must be free to criticize and focus attention on lessons learned. Each member of the student team may then be asked to comment before the facilitator or instructor summarizes the session. A decision follows and conclusions are drawn.

Using such a technique for debriefing means that the instructor provides little direct advice during the session. Criticisms are made by group peers and are thus often more readily accepted (even between junior and senior officers). Trainees control the discussion and maintain their own defined reference boundaries. This method encourages trainees to draw their own conclusions and assess their own performance, strengths, and weaknesses. Experience has demonstrated that this approach is most effective if debriefing rules are established at the beginning of the course.

The relationship between instructor and trainees should be a relationship between professionals. Debriefings are vital. If they are too short, unimaginative, or instructor-dominated, little will be gained from the exercise. Trainees must have the liberty to express misgivings and admit failure without fear of penalty or ridicule. They should be encouraged to perceive where they could have performed better to learn from the experience.

Advantages of group discussion include:

- discussion stimulates critical thought,

- trainees learn to substantiate their statements, and

- trainees learn to systematize their thoughts.

During group discussions, the facilitator must avoid:

- discussions that become too time consuming,

- misdirection of group discussions,

- session domination by a few trainees,

- social tensions, and

- animosity among participants.

TRANSFER AND RETENTION OF TRAINING

Transferability of Simulator Training

As discussed in Chapter 2, use of simulators for training is based on the assumption of transfer (i.e., skills and knowledge learned in the classroom can be applied effectively to relevant situations outside the classroom). One unresolved question is a quantitative assessment of the transferability of simulator training to the real world. Historically, training effectiveness evaluations of simulators have been developed within the commercial air carrier industry. Studies undertaken in the late 1940s by Williams and associates (Flexman et al., 1972) established the effectiveness of flight simulators for training pilots to fly light, single-engine aircraft. The methodologies developed for these evaluations have been used to demonstrate the effectiveness of simulators for the instruction of a variety of flight skills (Povenmire and Roscoe, 1971, 1973; Waag, 1981; Lintern et al., 1989, 1990).

These methodologies have been adapted to assess training effectiveness from specific simulator features. While some studies support the notion that higher levels of fidelity add to training effectiveness (Lintern et al., 1987; 1990; Hays and Singer, 1989), others do not (Waag, 1981; Hays and Singer, 1989; Lintern et al., 1989; Lintern and Koonce, 1992). For example, research has failed to support the belief in the commercial air carrier community that motion systems add to the training effectiveness of a simulator. Despite the widespread acceptance of motion systems, the scientific evidence is inconsistent.

It is difficult to determine the validity and degree of equivalency between simulator training and shipboard experience without an evaluation of transfer. The issue is whether it can be determined that skills learned in a simulator can be employed aboard ship. The most systematic way to test the application of this training to shipboard performance would be to compare the shipboard performance of simulator-trained individuals (as a group) with the performance of a group whose only difference is the lack of simulator training. Logistically, these studies are difficult to execute within the commercial air carrier industry and may be even more difficult to execute in the marine industry, which lacks systematic organizational structure.
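The group comparison described above can be made concrete with two measures commonly used in the flight-training transfer literature: percent transfer and the transfer effectiveness ratio. The sketch below is illustrative only and is not a method prescribed by the committee. The function names, the use of "hours to reach a shipboard performance criterion" as the measure, and all numbers are hypothetical assumptions.

```python
def mean(values):
    """Arithmetic mean of a non-empty list of numbers."""
    return sum(values) / len(values)


def percent_transfer(control_hours, trained_hours):
    """Percent savings in shipboard hours-to-criterion for the
    simulator-trained group relative to the control group."""
    yc = mean(control_hours)   # control group: no simulator training
    yx = mean(trained_hours)   # group that trained in the simulator first
    return 100.0 * (yc - yx) / yc


def transfer_effectiveness_ratio(control_hours, trained_hours, simulator_hours):
    """Shipboard hours saved per hour spent in the simulator."""
    return (mean(control_hours) - mean(trained_hours)) / simulator_hours


# Hypothetical data: shipboard hours each trainee needed to reach criterion.
control = [20, 24, 22, 18]   # assumed values, mean 21 hours
trained = [14, 16, 15, 15]   # assumed values, mean 15 hours
sim_hours = 8                # assumed simulator time per trained trainee

print(round(percent_transfer(control, trained), 1))
print(transfer_effectiveness_ratio(control, trained, sim_hours))
```

A ratio below 1.0 in this sketch would mean that each simulator hour saves less than one shipboard hour; whether that is worthwhile depends on the relative cost and risk of the two settings. Such measures are meaningful only if the two groups are otherwise comparable, which is the logistical difficulty noted above.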

Available research generally supports the proposition that there is a meaningful transfer of knowledge and skills developed through simulator-based training to actual operations (Kayten et al., 1982; Multer et al., 1983; D'Amico et al., 1985; Hammell et al., 1985; Miller et al., 1985; O'Hara and Saxe, 1985; Froese, 1988; Douwsma, 1993). If there is a concern, it is the lack of reinforcement of newly learned skills in the traditional workplace. Failure to reinforce skills on board ship is a contributing cause in the failure to transfer knowledge from simulators to real life.

Evidence of Transfer Effectiveness

Systematic Studies

An early study conducted at the Computer Aided Operations Research Facility (CAORF), Kings Point, New York, reported that on entering a particular harbor, students who had received simulator training significantly outperformed students with the same background and experience, but with no simulator training (Miller et al., 1985). However, the methodology employed was elementary, and the results are not conclusive.

Appendix F includes a committee-developed case study of the U.S. Merchant Marine Academy cadet watchkeeping course that has used the CAORF simulator since the early 1980s. The case study strongly indicates that watchstanding knowledge, skills, and abilities can be significantly improved using marine simulation, and that this training carries over to watchstanding aboard ship.

Anecdotal Evidence

Simulators are used in a growing number of training programs. In addition to their long-standing use at some maritime academies,2 and a number of private and union facilities, simulators have been widely used in the commercial air carrier industry, are increasingly used in the nuclear power industry, and are used in medical training and a variety of other areas.

Since objective evaluation of training effectiveness for any specific use is the exception rather than the rule, the committee believes that widespread use of simulators for training and the accompanying belief in their effectiveness constitutes anecdotal evidence of training effectiveness. Indeed, one reason offered for the steady improvement in airline safety since the 1970s has been use of advanced simulators to train pilots for situations too dangerous to try in the air. In the commercial air carrier industry, for example, it is believed that simulator-based training has considerable value. This belief is bolstered by the observation that airline pilots who transition into a new role in the cockpit via simulator-based training (with no formal in-the-air training for that role) are competent.

The general opinion of mariners who have taken simulator-based courses and the shipping companies who sponsored them is that those courses are effective, if not optimal. Shipping companies are using simulators more frequently. In the absence of requirements, they would not be doing so if they thought the training was not cost effective.

Some of the lessons in these training courses, however, may not be completely or uniformly applied in the real world. Learning transfer may fall short because shipboard organization and operating practices have not, in many instances, been restructured to facilitate the introduction and use of these concepts.

MEASURING TRAINING PROGRAM EFFECTIVENESS

The Need for Training Program Evaluation

Anecdotal evidence can, however, be suspect, and apparent effectiveness based on usage patterns and opinions can be misleading. Successful on-the-job performance of those who have undergone simulator-based training could be due to factors unrelated to the training itself.

One element of the instructional design process is continual analysis and improvement of the training program. There is always a concern about the effectiveness of a new or even existing training program. Essentially, the issue is whether trainees learn what is necessary for on-the-job performance. Belief in the training effectiveness is generally based on whether trainees pass the course and perform successfully on the job. There are, however, a number of uncertainties in this sort of evaluation. There is, for example, the question of short-term versus long-term effects, an issue of particular concern for intensive courses of short duration.

In cases where evaluations are conducted within the structure of a training program, the course material may be only marginally relevant. Recent evidence suggests that many technical training programs teach marginally relevant skills, and graduates of those courses have to be retrained when they are placed in an operational environment (Lave and Wenger, 1991). It is not uncommon to hear complaints that new graduates from a training course do not have the necessary operational skills and must be retrained on the job (Lave and Wenger, 1991; Hutchins, 1992). Thus, even when graduates are successful, it is possible that they acquired essential skills on the job, as is typically the case in marine operations.

Satisfactory performance on an examination within a course structure does not ensure that the training was effective. Tests are invariably oriented toward the material taught in the course and may be no more relevant than the course itself. In addition, formal testing may fail to capture subtle but critical aspects of operational skills. Typically, formal tests examine those aspects that are easy to frame and evaluate by standard grading methods. In complex and diverse tasks, formal testing rarely succeeds in evaluating the depth of knowledge and skill needed for operational performance of a multiplicity of tasks while under stress.

Strategy for Evaluating Training Programs

Training program effectiveness should be evaluated as a part of the instructional design process. There are systems for evaluating programs, but many of these are flawed or are not properly applied. The committee developed the following procedures to guide training program evaluation.

A strategy to evaluate the effectiveness of training programs should be relevant, comprehensive, and consistent. To be relevant, the strategy should assess skills that are central to the job, especially those that are difficult to teach and difficult to acquire. To be comprehensive, the strategy must permit evaluation of the quality of all essential skills and detection of critical omissions. One method is to evaluate the performance of individuals who have completed the course. The aim would be to ensure that ongoing programs realize essential goals and to evaluate whether modifying the training would result in desired enhancements for on-the-job performance both immediately following training and in the long term (NRC, 1991).

These goals might best be met by assigning experienced practitioners to evaluate bridge performance. These evaluators should be carefully selected and trained, remain independent of the conduct of training (to avoid "ownership" in the training product or interpersonal relationships that could influence their evaluations), and be experienced enough to qualitatively judge the effectiveness of operational performance. In addition, they must remain familiar with current practice and, ideally, should periodically cycle through line operations, as is required by most commercial air carrier operators.

It is probably not desirable for the evaluators to be totally independent from organizational goals and policy. They must perform their duties according to shared goals and values established by management. They would also, however, have higher-level goals, such as safety and production. The assumption is that experienced practitioners can recognize how well such goals are being satisfied and are sensitive to tradeoffs that are sometimes essential in the pursuit of diverse goals. Nevertheless, evaluators need to be advised of organizational goals so they will consistently evaluate and logically communicate deficiencies to management and the training department.

The evaluation process would need to be minimally intrusive; it should not markedly change behavior from what it would be in the absence of the ongoing evaluation. Although most individuals perform more conscientiously under evaluation conditions, it would be impractical (and probably unethical) to conceal from a bridge team that they are being evaluated. A poorly trained crew is, however, unlikely to be able to perform at a high standard only while they are being evaluated.

The training program evaluation strategy outlined above corresponds in some respects to procedures currently in use at some simulator facilities. Nevertheless, it should be emphasized that this strategy incorporates several key features, the omission of any one of which will jeopardize the process. Evaluation within a course lacks the required independence.

Positive but unsolicited testimony about the quality of training from those who supervise graduates or from graduates themselves is not sufficiently systematic and may not be very reliable. Although statutory authorities sometimes have an on-the-job evaluation process, their evaluators are rarely as experienced with the actual job as is desirable, are rarely current in practice, and are not necessarily sensitive to all competing work goals.

The difficulty of achieving comprehensive and operationally relevant evaluation of those who have graduated from training programs should not be minimized. However, these features are essential if training programs are to be evaluated effectively. The results of training program evaluations should be used to make periodic program improvements.

SIMULATOR-BASED TRAINING INSTRUCTORS

The role and qualifications of marine simulator instructors evoke considerable discussion and debate. Some people in the marine simulator field believe the instructor is the most important training element; others believe the trainee is the most important part of the simulation because beneficial changes in trainee behavior and performance are the desired product. A third view is that the simulator and the simulation produced are particularly important.

The view taken in this report is that although all design components are important to an effective course of instruction, the relative quality of simulator-based training depends more on the instructor's capabilities than those of the simulator or the role of the trainee. The instructor is of primary importance because it is the instructor's role to ensure that all of the instructional objectives are met.

In developing a training program, the instructional design process requires consideration of the following factors:

- curricula requirements;

- instructor recruitment or selection to meet curricula requirements;

- instructor professional credentials and their maintenance;

- instructional capabilities, including their development and maintenance; and

- instructor capability to operate simulator resources and integrate them into effective learning programs.

Instructor Knowledge and Expertise

As a practical matter, the instructor's subject-matter expertise is essential to instructional design. The instructor's tasks, however, are multifaceted. Many of the instructor's tasks (Box 3-3) are in addition to and lie outside of the mariner's nautical expertise.

There are limited opportunities for mariners to undertake instructional roles aboard modern ships. One possible exception is in piloting, where apprentices are developed under the supervision of marine pilots as a normal practice (NRC, 1994)3. Although some mariners develop good instructional capabilities during their seagoing service, effective application of the instructional design process requires specialized skills that must be developed or refined separately through specialized programs.

The need for specialized skills is even more important in the use of ship-bridge simulators. As an instructional tool, simulation has evolved to a level of technical and instructional sophistication that often requires multidisciplinary expertise and technical support. In such cases, the instructor needs to be capable of working as a member or leader of an instruction team.

The Instructor's Role

In conducting training, the instructor lectures, role-plays, and facilitates. He or she is the intermediary for:

- creating a synergism among student, curricula, and simulator; and

- making the simulation believable and meaningful.

Objectivity

Although watchkeeping courses have not been mandatory for certification of officers in charge of a navigation watch, some national agencies (including the USCG) grant remission of sea time after completion of such courses. In these cases, the instructor, by virtue of the instructional role and student evaluations, is involved in the award of a completion certificate. The instructor has either moral or official responsibility, or both, for ensuring that each trainee's performance has been satisfactory. At the same time, care must be exercised to ensure that interpersonal relationships do not influence performance evaluations and that the award of credit toward a certification requirement does not unduly influence training program integrity.

BOX 3-3 Instructional Tasks

Flexibility and Sensitivity

The instructor must be capable of adjusting to trainees' different professional experiences. Experienced trainees are already professionals in their fields and should be treated accordingly. In these cases, the instructor's role as a facilitator takes on more importance than it does with less-experienced deck officers or cadets.

Currency

The main demand on the instructor who teaches professional courses is one of stimulating the trainee to rethink his or her own performance objectively and constructively. The instructor must not only know the subject-matter thoroughly, but be up to date on recent events, such as marine accidents and their proximate and underlying causes and developments in equipment and operating practices worldwide.

Instructor Qualifications

Professional Credentials

There is a strongly held position among maritime instructors that all simulation instructors should possess the highest seagoing qualification awarded by a flag state, which is commonly understood to mean a master's license with no restrictions or an unlimited master's license. In principle, the marine license ensures subject-matter expertise and the institutional consideration of nautical credibility.

Some people believe that knowledge of the course content and proficiency in instructional skills are paramount, and that possession of a senior marine license alone guarantees neither relevant and recent content knowledge nor instructional skills. Instructors without formal instructional skills training are most likely applying instructional knowledge rooted in informal on-the-job and apprenticeship approaches to professional development. Although the insights that accompany this background are important for discerning and conveying the subtleties of marine operations, practical experience does not by itself prepare an individual to apply modern learning concepts.

The Need for Guidelines or Standards

Instructor qualification must consider both instructional and institutional factors. From an instructional perspective, the instructor must possess the right content knowledge as well as instructional skills. From an institutional perspective, the instructor must be credible to trainees and sponsors. In addition, if marine licensing is involved, the appropriate form and level of instructor qualification is also important to the licensing authorities.

The rapid evolution of simulator capabilities, from desktop computer-aided instruction and presentations to full-mission ship-bridge simulators, suggests that there should be more formal standards for qualification of simulator instructors. With a few notable exceptions (discussed below) there are no professional, industry, or national guidelines, standards, or requirements for certifying instructors, whether through professional organizations, the marine industry, education, training programs, or government agencies. Nor is there a specific professional code of ethics for instructors involved in mariner training. Generally, the instructional capabilities of instructors are determined by employers through job interviews and review of professional credentials.

BOX 3-4 Samples of Instructor Training Programs

Maritime Academy Simulator Committee (MASC): Draft "Train-the-Instructor" Course

In pursuit of one of its goals as a committee, MASC has been working to develop standards for simulator instructors. MASC considers it mandatory that all simulator instructors be required to attend a course that covers the following subjects:

MASC is currently refining the course structure and curricula.

Boxes 3-4, 3-5, and 3-6 summarize the focus of three different "train-the-trainer" programs. Box 3-4 summarizes an effort by the Maritime Academy Simulator Committee to develop a training program for simulator instructors at U.S. merchant marine academies. Box 3-5 gives samples of extensive courses at the Southampton Institute, Warsash Maritime Centre in the United Kingdom, for training instructors who teach on a full-mission ship-bridge simulator. Box 3-6 summarizes a government-required training program in the Netherlands.

In the United States, a de facto certification of instructors occurs through the USCG's administration of course approvals for training programs used, in part or in whole, to satisfy certain federal marine licensing requirements. The agency has established criteria regarding instructor qualifications that must be met to receive course approvals. Evidence of training in instructional techniques is required, and a simulator facility must notify the agency of any changes in instructors, including the credentials of the individual who will be teaching the course in cases where some sea-time equivalency or licenses are awarded.

In December 1994, the USCG began examining an internal proposal to establish a formal certification requirement that it would administer. The proposal envisioned three categories of certification: certified maritime instructor, designated simulator examiner, and designated practical examiner.

The proposal also featured a requirement to use licensed mariners or individuals with comparable experience as instructors. The USCG tasked its Merchant Personnel Advisory Committee to review this proposal as an initial step in determining whether to seek implementation authority and resources. The agency's interest in formal instructor certification is driven by its interest in encouraging quality training programs, concerns over instructor competency, and interest in using training and marine simulation actively within the marine licensing process.

Teaching Methods

Interdisciplinary Instructional Teams

The instructor needs a wide range of maritime skills. Realistically, it is difficult to find all these skills and qualifications in one individual. Additional training can overcome deficiencies, and distributing instructional skills across the entire staff can enable a training establishment to match the required specialization to a particular training need.

A full instructional team would consist of subject-matter experts supplemented by individuals with specialized instructional and technical capabilities in the application of simulation and the setup and operation of computer-based simulators and manned models. In practice, the members of a simulator facility's staff are routinely called on at appropriate times during the course of instruction to support simulations through role playing, to observe student performance, and to provide specialized instruction or technical support. This practice satisfies multidisciplinary needs within the limits of the staffs' resident expertise.

BOX 3-5 Samples of Instructor Training Programs

The Southampton Institute, Warsash Maritime Centre, United Kingdom

Full-Mission Ship-Bridge Simulator

Key elements of the Maritime Simulation Instructor Training Program include the following:

Sometimes specialized support is obtained from parties external to the simulator facility. For example, few facilities maintain a hydrodynamicist on staff unless the facility is also involved with channel design. There may be occasions when an expert in a nonmaritime field may have to be brought in to assist on a simulator-based course. The best example is that of bridge resource management training, where psychologists and specialists in human factors and stress and fatigue can contribute greatly to course content and presentation. The importance of highly qualified, trained, and motivated senior mariners as instructors cannot be overstated.

BOX 3-6 Samples of Instructor Training Programs

MarineSafety International, Rotterdam

The Netherlands government requires instructors to complete a formal course of instruction to prepare them for service at the new MarineSafety International Rotterdam simulator facility. Originally developed by FlightSafety International for commercial air carrier simulator instructors, the course was adapted to the marine simulator field. The one-week course was developed by the facility and was based on the parent company's earlier development of flight simulator instructor training. The course consists of 17 hours of classroom instruction in varying formats plus 2 days of training in the use of the facility's simulators. The classroom segment of the course includes lessons on:

Lead Instructors

Use of Lead Instructors. Use of lead instructors has been possible because of small class sizes and the individual lesson content. As a practical matter, the content of each exercise or drill that can be effectively overseen by a single instructor more or less coincides with the level of detail and interaction that trainees can accommodate. On the other hand, the use of a single instructor rather than a dedicated, multidisciplinary instructional team is often influenced by cost. If a facility can afford only a single instructor or a small instructional staff, then the emphasis will be on nautical credibility rather than on staff instructional skills and proficiency. These factors may or may not result in optimization of either instruction or learning, depending on all factors present in a given simulation.

Criteria for Ideal Instructor. The committee believes that the ideal lead instructor should have the following skills and qualifications:

- possession of an unlimited master's license or other high-level qualification for specialized training—for example, a license as a marine pilot for pilot training;

- command experience;

- demonstrated effective teaching and communication skills;

- knowledge of the simulator capabilities;

- expert shiphandling skills;

- strong analytical capabilities; and

- current general knowledge of the industry and of the trainees' sector in particular.

Many trainees attending simulator courses are either serving masters in command or senior officers. It is desirable for nautical credibility that the lead instructor's professional qualification be at least the same as the highest qualification for which the trainees are being trained or examined. Perhaps more important, however, the instructor should possess appropriate subject-matter expertise (i.e., if the course is in pilotage, the instructor should be an expert in pilotage). Command experience would be an advantage and is desirable, but is not absolutely necessary.

Many U.S. training establishments provide training for deck officers and vessel operators other than, or in addition to, masters and pilots. For example, some facilities provide training for coastwise tug and barge operations, and one provides training for operators of inland tug and barge flotillas. In these cases, the highest relevant mariner qualification is important. In addition to establishing credibility, the instructor and trainees must be able to comfortably relate to each other.

FINDINGS

Summary of Findings

The current approaches to training and professional development in the marine industry are based on a tradition of "modeling-the-expert" and on-the-job training. Many courses that currently use simulation in their curricula have followed the approach of "inserting" the simulation into the training program rather than following a more structured approach to course development.

Systematic application of the instructional design process offers a strong model for structuring new courses and continuously improving existing ones. The primary elements of the instructional design process include:

- determining training needs, including characterizing the trainee population and analyzing job-tasks and subtasks;

- determining specific training objectives, including performance measures to determine whether or to what degree the objectives have been met;

- determining training methods to be used, including assessing whether simulation is appropriate to the training objectives;

- developing a detailed course curriculum, including designing exercise scenarios (if simulation is used), determining the duration of the training program, and selecting debriefing techniques; and

- validating the simulator, the simulation, and the curriculum.

Instructional design is an evolving concept. Application of the process should include periodic evaluation of the success or failure of course elements, periodic assessment of the program's overall effectiveness, and regular innovative modifications, as appropriate.

Another issue of concern in the mariner training process is transfer and retention of the training. Use of simulators in training is based on subjective observation and anecdotal evidence that the training system is effective. Very little recent quantitative research has been conducted to determine whether or how effectively simulator training transfers to the work environment.

One of the most critical elements in the application of instructional design is the effectiveness of the instructor. It is the instructor's responsibility to ensure that all training objectives are met. The instructor must possess both content knowledge and instructional skills, especially if he or she is responsible for teaching in a simulator environment. Standards or guidelines defining instructor qualifications are necessary to ensure instructional effectiveness.

Research Needs

In the course of its investigation of the uses of simulators in training and the instructional design process, the committee identified a number of areas where existing research and analysis did not provide sufficient information for the committee to extend its own analysis.

Among the most significant areas identified was the need to update and expand relevant task and subtask analyses for application to the mariner's training needs. For the instructional design process to be effective, the course design should include the definition of training needs based on the steps required to complete identified tasks and subtasks for specific functions. This analysis should include dimensions that have been missing with respect to behavioral elements and specific steps needed to execute each subtask.

This analysis is important for several reasons. First, not all tasks contribute in the same way to overall performance of functions and duties of the job. Second, task analysis is necessary in training course design and performance evaluation. Third, a clear understanding of the skills and abilities required for job performance is necessary for effective performance evaluation (Chapter 5). Fourth, task descriptions and related performance criteria are necessary to design an effective licensing program (Chapter 5).

Other areas for research identified by the committee include:

- the need for a standard methodology for validating exercise scenarios;

- the need for guidelines or standards for qualifying or certifying training instructors;

- research on the optimum sequencing of simulator training;

- the effect of course duration (i.e., short courses that typically compress course content versus courses spread over weeks or months) on learning and transfer effectiveness by different categories of the training population;

- as a subset of the study of course duration, an investigation of whether the effects differ in classroom versus simulator-based training for different categories of the training population; and

- a study on whether skills learned in a simulator can be employed aboard a ship. The study might employ a method such as comparing the shipboard performances of simulator-trained individuals to shipboard performances of a similar group with no simulator training.

REFERENCES

D'Amico, A.D., W.C. Miller, and C. Saxe. 1985. A Preliminary Evaluation of Transfer of Simulator Training to the Real-World. Report No. CAORF 50-8126-02. Kings Point, New York: Computer Aided Operations Research Facility, National Maritime Research Center.

Douwsma, D.G. 1993. Using frameworks to produce cost-effective simulator training. Pp. 97–101 in MARSIM '93, International Conference on Maritime Simulation and Ship Maneuverability, St. Johns, Newfoundland, Canada, September 26–October 2.

Drown, D.F., and R.M. Mercer. 1995. Applying marine simulation to improve mariner professional development. Pp. 597–608 in Proceedings of Ports '95. New York: American Society of Civil Engineers.

Edmonds, D. 1994. Weighing the pros and cons of simulator training, computer-based training, and computer testing and assessment. Paper presented at IIR International Human Factors in Shipping Week 1994: Strategies for Achieving Effective Maritime Manning and Training, London, England, October 4.

Flexman, R.E., S.N. Roscoe, A.C. Williams, Jr., and B.H. Williges. 1972. Studies in pilot training: the anatomy of transfer. Aviation Research Monographs 2(1). Champaign: Aviation Research Laboratory, University of Illinois.

Froese, J. 1988. Can simulators be used to identify and specify training needs? Fifth International Conference on Maritime Education and Training. Sydney, Nova Scotia, Canada: The International Maritime Lecturers Association.

Gynther, J.W., T.J. Hammell, J.A. Grasso, and V.M. Pittsley. 1982a. Simulators for Mariner Training and Licensing: Functional Specification and Training Program Guidelines for a Maritime Cadet Simulator. Report Nos. CAORF 50-8004-02 and USCG-D-8-83. Kings Point, New York: Computer Aided Operations Research Facility, National Maritime Research Center.

Gynther, J.W., T.J. Hammell, J.A. Grasso, and V.M. Pittsley. 1982b. Simulators for Mariner Training and Licensing: Guidelines for Deck Officer Training Systems. Report Nos. CAORF 50-8004-03 and USCG-D-7-83. Kings Point, New York: Computer Aided Operations Research Facility, National Maritime Research Center.

Gynther, J.W., T.J. Hammell, and V.M. Pittsley. 1985. Guidelines for Simulator-Based Marine Pilot Training Programs. Report Nos. CAORF 50-8313-02 and USCG-D-25-85. Kings Point, New York: Computer Aided Operations Research Facility, National Maritime Research Center.

Hammell, T.J., K.E. Williams, J.A. Grasso, and W. Evans. 1980. Simulators for Mariner Training and Licensing. Phase 1: The Role of Simulators in the Mariner Training and Licensing Process (2 volumes). Report Nos. CAORF 50-7810-01 and USCG-D-12-80. Kings Point, New York: Computer Aided Operations Research Facility, National Maritime Research Center.

Hammell, T.J., J.W. Gynther, J.A. Grasso, and M.E. Gaffney. 1981a. Simulators for Mariner Training and Licensing. Phase 2: Investigation of Simulator-Based Training for Maritime Cadets. Report Nos. CAORF 50-7915-01 and USCG-D-06-82. Kings Point, New York: Computer Aided Operations Research Facility, National Maritime Research Center.

Hammell, T.J., J.W. Gynther, J.A. Grasso, and M.E. Gaffney. 1981b. Simulators for Mariner Training and Licensing. Phase 2: Investigation of Simulator Characteristics for Training Senior Mariners. Report Nos. CAORF 50-7915-02 and USCG-D-08-82. Kings Point, New York: Computer Aided Operations Research Facility, National Maritime Research Center.

Hammell, T.J., J.W. Gynther, and V.M. Pittsley. 1985. Experimental Evaluation of Simulator-Based Training for Marine Pilots. Report Nos. CAORF 50-8318-03 and USCG-D-26-85. Kings Point, New York: Computer Aided Operations Research Facility, National Maritime Research Center.

Hays, R.T., and M.J. Singer. 1989. Simulation Fidelity in Training System Design: Bridging the Gap Between Reality and Training. New York: Springer-Verlag.

Hutchins, E. 1992. Learning to navigate. In Understanding Practice. S. Chaiklin and J. Lave, eds. New York: Cambridge University Press.

Kayten, P., W.M. Korsoh, W.C. Miller, E.J. Kaufman, K.E. Williams, and T.C. King, Jr. 1982. Assessment of Simulator-Based Training for the Enhancement of Cadet Watch Officer. Kings Point, New York: National Maritime Research Center.

Lave, J., and E. Wenger. 1991. Situated Learning: Legitimate Peripheral Participation. New York: Cambridge University Press.

Lintern, G., and J.M. Koonce. 1992. Visual augmentation and scene detail effects in flight training. International Journal of Aviation Psychology 2:281–301.

Lintern, G., K.E. Thomley-Yates, B.E. Nelson, and S.N. Roscoe. 1987. Content, variety, and augmentation of simulated visual scenes for teaching air-to-ground attack. Human Factors 29(1):45–51.

Lintern, G., D.J. Sheppard, D.L. Parker, K.E. Yates, and M.D. Nolan. 1989. Simulator design and instructional features for air-to-ground attack: a transfer study. Human Factors 31(1):87–99.

Lintern, G., S.N. Roscoe, and J.E. Sivier. 1990. Display principles, control dynamics, and environmental factors in pilot training and transfer. Human Factors 32:299–317.

Miller, W.C., C. Saxe, and A.D. D'Amico. 1985. A Preliminary Evaluation of Transfer of Simulator Training to the Real-World. Report No. CAORF 50-8126-02. Kings Point, New York: Computer Aided Operations Research Facility, National Maritime Research Center.

Multer, J., A.D. D'Amico, K. Williams, and C. Saxe. 1983. Efficiency of Simulation in the Acquisition of Shiphandling Knowledge as a Function of Previous Experience. Report No. CAORF 52-8102-02. Kings Point, New York: Computer Aided Operations Research Facility, National Maritime Research Center.

NRC (National Research Council). 1991. In the Mind's Eye: Enhancing Human Performance. D. Druckman and R.A. Bjork, eds. Committee on Techniques for the Enhancement of Human Performance, Commission on Behavioral and Social Sciences and Education. Washington, D.C.: National Academy Press.

NRC (National Research Council). 1994. Minding the Helm: Marine Navigation and Piloting. Committee on Advances in Navigation and Piloting. Marine Board. Washington, D.C.: National Academy Press.

O'Hara, J.M., and C. Saxe. 1985. The Development, Retention, and Retraining of Deck Officer Watchstanding Skills in Maritime Cadets. Report No. CAORF 56-8418-01. Kings Point, New York: Computer Aided Operations Research Facility, National Maritime Research Center.

Povenmire, H.K., and S.N. Roscoe. 1971. An evaluation of ground-based flight trainers in routine primary flight training. Human Factors 13:109–116.

Povenmire, H.K., and S.N. Roscoe. 1973. Incremental transfer effectiveness of a ground-based general aviation trainer. Human Factors 15:534–542.

Sanquist, T.F., J.D. Lee, and A.M. Rothblum. 1994. Cognitive Analysis of Navigation Tasks: A Tool for Training Assessment and Equipment Design. Report USCG-D-19-94. Washington, D.C.: U.S. Department of Transportation.

Waag, W.L. 1981. Training Effectiveness of Visual and Motion Simulation. AFHRL-TR-79-72. Air Force Human Resources Laboratory, Brooks Air Force Base, Texas. Moffett Field, California: NASA Ames Research Center.