3

A Model for the Development of Tolerable Upper Intake Levels

BACKGROUND

The Tolerable Upper Intake Level (UL) refers to the highest level of daily nutrient intake that is likely to pose no risk of adverse health effects to almost all individuals in the general population. As intake increases above the UL, the risk of adverse effects increases. The term tolerable is chosen because it connotes a level of intake that can, with high probability, be tolerated biologically by individuals; it does not imply acceptability of that level in any other sense. The setting of a UL does not indicate that nutrient intakes greater than the Recommended Dietary Allowance (RDA) or Adequate Intake (AI) are recommended as being beneficial to an individual. Many individuals are self-medicating with nutrients for curative or treatment purposes. It is beyond the scope of this report to address the possible therapeutic benefits of higher nutrient intakes that may offset the risk of adverse effects. The UL is not meant to apply to individuals who are treated with the nutrient under medical supervision. It is designed to be applied to almost all individuals in the general healthy population.

The term adverse effect is defined as any significant alteration in the structure or function of the human organism (Klaassen et al., 1986) or any impairment of a physiologically important function, in accordance with the definition set by the joint World Health Organization, Food and Agriculture Organization of the United Nations, and International Atomic Energy Agency Expert Consultation in Trace Elements in Human Nutrition and Health (WHO, 1996). In the case of nutrients, it is exceedingly important to consider the possibility that the intake of one nutrient may alter in detrimental ways the health benefits conferred by another nutrient. Any such alteration (referred to as an adverse nutrient-nutrient interaction) is considered an adverse health effect. When evidence for such adverse interactions is available, it is considered in establishing a nutrient’s UL.

As is true for all chemical agents, adverse health effects can result if the intake of nutrients from a combination of food, water, nutrient supplements, and pharmacological agents is excessive. Some lower level of nutrient intake will ordinarily pose no likelihood (or risk) of adverse health effects in normal individuals even if the level is above that associated with any benefit. It is not possible to identify a single risk-free intake level for a nutrient that can be applied with certainty to all members of a population. However, it is possible to develop intake levels that are likely to pose no risk of adverse health effects to most members of the general population, including sensitive individuals. For some nutrients these intake levels may still pose a risk to subpopulations with extreme or distinct vulnerabilities.

A MODEL FOR THE DERIVATION OF TOLERABLE UPPER INTAKE LEVELS

The development of a mathematical model for deriving the Tolerable Upper Intake Level (UL) was rejected for reasons described elsewhere (IOM, 1997). Instead, the model for the derivation of ULs consists of a set of scientific factors that always should be considered explicitly. The framework under which these factors are organized is called risk assessment. Risk assessment (NRC, 1983, 1994) is a systematic means of evaluating the probability of occurrence of adverse health effects in humans from excess exposure to an environmental agent (in this case, a nutrient) (FAO/WHO, 1995; Health Canada, 1993). The hallmark of risk assessment is the requirement to be explicit in all the evaluations and judgments that must be made to document conclusions.

RISK ASSESSMENT AND FOOD SAFETY

Basic Concepts

Risk assessment is a scientific undertaking having as its objective a characterization of the nature and likelihood of harm resulting from human exposure to agents in the environment. The characterization of risk typically contains both qualitative and quantitative information and includes a discussion of the scientific uncertainties in that information. In the present context the agents of interest are nutrients and the environmental media are food, water, and nonfood sources such as nutrient supplements and pharmacological preparations.

Performing a risk assessment results in a characterization of the relationship between exposure to an agent and the likelihood that adverse health effects will occur in members of exposed populations. Scientific uncertainties are an inherent part of the risk assessment process and are discussed below. Deciding whether the magnitude of exposure is acceptable or tolerable in specific circumstances is not a component of risk assessment; this activity falls within the domain of risk management. Risk management decisions depend on the results of risk assessments but may also involve the public health significance of the risk, the technical feasibility of achieving various degrees of risk control, and the economic and social costs of this control. Because there is no single, scientifically definable distinction between safe and unsafe exposures, risk management necessarily incorporates components of sound, practical decision making that are not addressed by the risk assessment process (NRC, 1983, 1994).

A risk assessment requires that information be organized in rather specific ways but does not require any specific scientific evaluation methods. Rather, risk assessors must evaluate scientific information using what they judge to be appropriate methods; must make explicit the basis for their judgments about the uncertainties in risk estimates; and, when appropriate, must include alternative scientifically plausible interpretations of the available data (NRC, 1994; OTA, 1993).

Risk assessment is subject to two types of scientific uncertainties: those related to data and those associated with inferences that are required when directly applicable data are not available (NRC, 1994). Data uncertainties arise during the evaluation of information obtained from the epidemiological and toxicological studies of nutrient intake levels that are the basis for risk assessments. Examples of inferences include the use of data from experimental animals to estimate responses in humans and the selection of uncertainty factors to estimate inter- and intraspecies variabilities in response to toxic substances. Uncertainties arise whenever estimates of adverse health effects in humans are based on extrapolations of data obtained under dissimilar conditions (e.g., from experimental animal studies). Options for dealing with uncertainties are discussed below and in detail in Appendix J.

Steps in the Risk Assessment Process

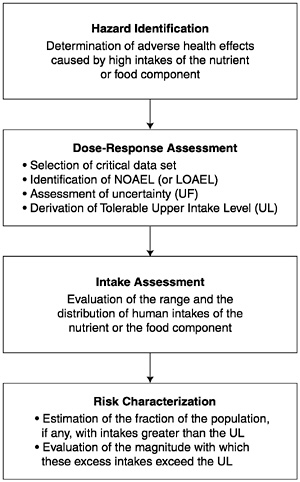

In this report the organization of risk assessment is based on a model proposed by the NRC (1983, 1994) that is widely used in public health and regulatory decision making. The steps of risk assessment as applied to nutrients are as follows (see also Figure 3-1):

- Step 1. Hazard identification involves the collection, organization, and evaluation of all information pertaining to the adverse effects of a given nutrient. It concludes with a summary of the evidence concerning the capacity of the nutrient to cause one or more types of toxicity in humans.

- Step 2. Dose-response assessment determines the relationship between nutrient intake (dose) and adverse effect (in terms of incidence and severity). This step concludes with an estimate of the Tolerable Upper Intake Level (UL), the highest level of daily nutrient intake that is likely to pose no risk of adverse health effects to almost all individuals in the general population. Different ULs may be developed for various life stage groups.

- Step 3. Intake assessment evaluates the distribution of usual total daily nutrient intakes for members of the general population. In cases where the UL pertains only to supplement use, the assessment is directed at intake from supplements only. It does not depend on step 1 or 2.

- Step 4. Risk characterization combines the conclusions from steps 1 and 2 with those from step 3 to determine the risk. The risk is generally expressed as the fraction of the exposed population, if any, having nutrient intakes (step 3) in excess of the estimated UL (steps 1 and 2). If possible, characterization also covers the magnitude of any such excesses. Scientific uncertainties associated with both the UL and the intake estimates are described so that risk managers understand the degree of scientific confidence they can place in the risk assessment.

The risk assessment contains no discussion of recommendations for reducing risk; these are the focus of risk management.
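As a rough illustration of step 4, the following minimal Python sketch expresses risk as the fraction of a population whose usual intake exceeds a UL, together with the magnitude of any excess. The function name `characterize_risk`, the `RiskSummary` fields, and all intake values are hypothetical and chosen only for illustration; they are not taken from this report.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class RiskSummary:
    fraction_above_ul: float  # share of individuals whose usual intake exceeds the UL
    mean_excess: float        # average amount above the UL among those who exceed it
    max_excess: float         # largest amount above the UL


def characterize_risk(usual_intakes: Sequence[float], ul: float) -> RiskSummary:
    """Summarize how many intakes exceed the UL and by how much (hypothetical helper)."""
    if not usual_intakes:
        raise ValueError("usual_intakes must not be empty")
    excesses = [intake - ul for intake in usual_intakes if intake > ul]
    fraction = len(excesses) / len(usual_intakes)
    mean_excess = sum(excesses) / len(excesses) if excesses else 0.0
    max_excess = max(excesses) if excesses else 0.0
    return RiskSummary(fraction, mean_excess, max_excess)


# Hypothetical usual daily intakes (mg/day) and a hypothetical UL of 35 mg/day.
intakes_mg = [12.0, 18.0, 25.0, 31.0, 40.0, 22.0, 50.0, 15.0]
summary = characterize_risk(intakes_mg, ul=35.0)
print(summary)
```

The sketch reports only descriptive quantities; it makes no statement about whether the resulting level of risk is acceptable, which remains a risk management question.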

FIGURE 3-1 Risk assessment model for nutrient toxicity.

Thresholds

A principal feature of the risk assessment process for noncarcinogens is the long-standing acceptance that no risk of adverse effects is expected unless a threshold dose (or intake) is exceeded. The adverse effects that may be caused by a nutrient almost certainly occur only when the threshold dose is exceeded (NRC, 1994; WHO, 1996). The critical issues concern the methods used to identify the approximate threshold of toxicity for a large and diverse human population. Because most nutrients are not considered to be carcinogenic in humans, the approach to carcinogenic risk assessment (EPA, 1996) is not discussed here.

Thresholds vary among members of the general population (NRC, 1994). For any given adverse effect, if the distribution of thresholds in the population could be quantitatively identified, it would be possible to establish ULs by defining some point in the lower tail of the distribution of thresholds that would protect some specified fraction of the population. However, data are not sufficient to allow identification of the distribution of thresholds for the B vitamins or choline. The method for identifying thresholds for the general population described here is designed to ensure that almost all members of the population will be protected, but it is not based on an analysis of the theoretical distribution of thresholds. By using the model to derive the threshold, however, there is considerable confidence that the threshold, which becomes the UL for nutrients, lies very near the low end of the theoretical distribution and is the end representing the most sensitive members of the population. For some nutrients there may be subpopulations that are not included in the general distribution because of extreme or distinct vulnerabilities to toxicity. Such distinct groups, whose conditions warrant medical supervision, may not be protected by the UL.
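The idea of choosing a point in the lower tail of a threshold distribution can be sketched as follows. This is purely hypothetical: as noted above, such distributions are not available for the B vitamins or choline, and the function name `lower_tail_level`, the `protected_percent` parameter, and the threshold values are assumptions made for illustration.

```python
from typing import Sequence


def lower_tail_level(thresholds: Sequence[float], protected_percent: int) -> float:
    """Pick an intake level from the lower tail of individual thresholds.

    An individual counts as protected when the chosen level does not
    exceed that individual's threshold.
    """
    if not thresholds:
        raise ValueError("thresholds must not be empty")
    if not 0 < protected_percent <= 100:
        raise ValueError("protected_percent must be in (0, 100]")
    ordered = sorted(thresholds)
    n = len(ordered)
    # Smallest number of individuals that must be protected (integer ceiling).
    must_protect = -(-n * protected_percent // 100)
    # Skip the (n - must_protect) most sensitive individuals.
    return ordered[n - must_protect]


# Hypothetical individual thresholds (mg/day); real data of this kind are lacking.
thresholds_mg = [60, 45, 80, 120, 95, 70, 150, 55, 110, 65]
level_90 = lower_tail_level(thresholds_mg, protected_percent=90)
level_100 = lower_tail_level(thresholds_mg, protected_percent=100)
print(level_90, level_100)
```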

When possible, the UL is based on a no-observed-adverse-effect level (NOAEL), which is the highest intake (or experimental oral dose) of a nutrient at which no adverse effects have been observed in the individuals studied. If there are no adequate data demonstrating a NOAEL, then a lowest-observed-adverse-effect level (LOAEL) may be used. A LOAEL is the lowest intake (or experimental oral dose) at which an adverse effect has been identified. The derivation of a UL from a NOAEL (or LOAEL) involves a series of choices about what factors should be used to deal with uncertainties. Uncertainty factors are applied in an attempt to deal both with gaps in data and with incomplete knowledge regarding the inferences required (e.g., the expected variability in response within the human population). The problems of both data and inference uncertainties arise in all steps of the risk assessment. A discussion of options available for dealing with these uncertainties is presented below and in greater detail in Appendix J.
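The two definitions can be illustrated with a minimal Python sketch. It assumes, beyond what the text states, that study results are reduced to (dose, adverse effect observed) pairs and that the NOAEL is taken only from doses below the LOAEL; the function name `find_noael_loael` and the doses are hypothetical.

```python
from typing import Optional, Sequence, Tuple


def find_noael_loael(
    observations: Sequence[Tuple[float, bool]],
) -> Tuple[Optional[float], Optional[float]]:
    """Return (NOAEL, LOAEL) from (dose, adverse_effect_observed) pairs.

    LOAEL: lowest dose at which an adverse effect was observed.
    NOAEL: highest dose with no adverse effect, restricted here (by
    assumption) to doses below the LOAEL.
    """
    effect_doses = [dose for dose, adverse in observations if adverse]
    loael = min(effect_doses) if effect_doses else None
    no_effect_doses = [
        dose
        for dose, adverse in observations
        if not adverse and (loael is None or dose < loael)
    ]
    noael = max(no_effect_doses) if no_effect_doses else None
    return noael, loael


# Hypothetical dose groups (mg/day) from a single study.
study = [(10.0, False), (25.0, False), (50.0, True), (100.0, True)]
print(find_noael_loael(study))

# A study in which every dose produced an effect yields only a LOAEL.
print(find_noael_loael([(50.0, True), (100.0, True)]))
```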

A UL is not in itself a description of human risk. It is derived by application of the hazard identification and dose-response evaluation steps (steps 1 and 2) of the risk assessment model. To determine whether populations are at risk requires an intake assessment (step 3, evaluation of their intakes of the nutrient) and a determination of the fractions of those populations, if any, whose intakes exceed the UL. In the intake assessment and risk characterization steps (steps 3 and 4; described in the respective nutrient chapters), the distribution of actual intakes for the population will be used as a basis for determining whether and to what extent the population is at risk.

APPLICATION OF THE RISK ASSESSMENT MODEL TO NUTRIENTS

This section provides guidance for applying the risk assessment framework (the model) to the derivation of Tolerable Upper Intake Levels (ULs) for nutrients.

Special Problems Associated with Substances Required for Human Nutrition

In the application of accepted standards for risk assessment of environmental chemicals to risk assessment of nutrients, a fundamental difference between the two categories must be recognized: within a certain range of intakes, nutrients are essential for human well-being and usually for life itself. Nonetheless, they may share with other chemicals the production of adverse effects at excessive exposures. Because the consumption of balanced diets is consistent with the development and survival of humankind over many millennia, there is less need for the large uncertainty factors that have been used for the risk assessment of nonessential chemicals. In addition, if data on the adverse effects of nutrients are available primarily from studies in human populations, there will be less uncertainty than is associated with the types of data available on nonessential chemicals.

There is no evidence to suggest that nutrients consumed at recommended intakes (the Recommended Dietary Allowance [RDA] or Adequate Intake [AI]) present a risk of adverse effects to the general population. It is clear, however, that the addition of nutrients to a diet through the ingestion of large amounts of highly fortified food, nonfood sources such as supplements, or both may (at some level) pose a risk of adverse health effects. The UL is the highest level of daily nutrient intake that is likely to pose no risk of adverse health effects to almost all individuals in the general population. As intake increases above the UL, the risk of adverse effects increases.

If adverse effects have been associated with total intake from all sources, ULs are based on total intake of a nutrient from food, water, and supplements. For cases in which adverse effects have been associated with intake only from supplements and food fortificants, the UL is based on intake from those sources only rather than on total intake. The effects of nutrients from fortified foods or supplements may differ from those of naturally occurring constituents of foods because of the chemical form of the nutrient, the timing of the intake and amount consumed in a single bolus dose, the matrix supplied by the food, and the relation of the nutrient to the other constituents of the diet. Nutrient requirements and food intake are related to the metabolizing body mass, which is also at least an indirect measure of the space in which the nutrients are distributed. This relation between food intake and space of distribution supports homeostasis, which maintains nutrient concentrations in that space within a range compatible with health. However, excessive intake of a single nutrient from supplements or fortificants may compromise this homeostatic mechanism. Such elevations alone may pose risk of adverse effects, and imbalances among the vitamins or other nutrients may also be possible. Thus, assessment of risk from high nutrient intake includes the form and pattern of consumption, when applicable.

Consideration of Variability in Sensitivity

This risk assessment model must consider variability in the sensitivity of individuals to adverse effects of nutrients. Physiological changes and common conditions associated with growth and maturation that occur during an individual’s lifespan may influence sensitivity to nutrient toxicity. For example, sensitivity increases with declines in lean body mass and with declines in renal and liver function that occur with aging; sensitivity changes in direct relation to intestinal absorption or intestinal synthesis of nutrients; in the newborn infant sensitivity is also increased because of rapid brain growth and limited ability to secrete or biotransform toxicants; and sensitivity increases with decreases in the rate of metabolism of nutrients. During pregnancy the increase in total body water and glomerular filtration results in lower blood levels of water-soluble vitamins for a given dose and therefore in reduced susceptibility to potential adverse effects. However, this effect may be offset by active placental transfer to the unborn fetus, accumulation of certain nutrients in the amniotic fluid, and rapid development of the fetal brain. There are no data to suggest increased or reduced susceptibility to adverse effects from high intake of B vitamins and choline during lactation. For the B vitamins and choline, different ULs are developed for some life stage groups, but the ULs for adults apply equally to pregnant and lactating women. For the B vitamins and choline, the ULs for infants were judged not determinable because of the lack of data on adverse effects in this age group and concern about the infant’s ability to handle excess amounts of nutrients. Even within relatively homogeneous life stage groups, there is a range of sensitivities to toxic effects. The model described below accounts for normally expected variability in sensitivity but excludes subpopulations with extreme and distinct vulnerabilities. Such subpopulations consist of individuals needing medical supervision; they are better served through the use of public health screening, product labeling, or other individualized health care strategies. The decision to treat identifiable vulnerable subgroups as distinct (not protected by the UL) is a matter of judgment and is discussed in individual nutrient chapters, as applicable.

Bioavailability

In the context of toxicity, the bioavailability of an ingested nutrient can be defined as its accessibility to normal metabolic and physiological processes. Bioavailability influences a nutrient’s beneficial effects at physiological levels of intake and also may affect the nature and severity of toxicity due to excessive intakes. The concentration and chemical form of the nutrient, the nutrition and health of the individual, and excretory losses all affect bioavailability. Bioavailability data for specific nutrients must be considered and incorporated into the risk assessment process.

Certain B vitamins may be less readily absorbed when part of a meal than when taken separately. Supplemental forms of vitamins require special consideration if they have higher bioavailability and therefore may present a higher risk of producing adverse effects than does food (e.g., see Chapter 8).

Nutrient-Nutrient Interactions

A diverse array of adverse health effects can occur as a result of the interaction of nutrients. The potential risk of adverse nutrient-nutrient interactions increases when there is an imbalance in the intake of two or more nutrients. Excessive intake of one nutrient may interfere with absorption, excretion, transport, storage, function, or metabolism of a second nutrient. Possible adverse nutrient-nutrient interactions are considered as a part of setting a UL. Nutrient-nutrient interactions may be considered either as a critical endpoint on which to base a UL or as supportive evidence for a UL based on another endpoint.

STEPS IN THE DEVELOPMENT OF THE TOLERABLE UPPER INTAKE LEVEL

Hazard Identification

Based on a thorough review of the scientific literature, the hazard identification step outlines the adverse health effects that have been demonstrated to be caused by the nutrient. The primary types of data used as background for identifying nutrient hazards in humans are as follows:

- Human studies. Human data provide the most relevant kind of information for hazard identification and, when they are of sufficient quality and extent, are given greatest weight. However, the number of controlled human toxicity studies conducted in a clinical setting is very limited for ethical reasons. Such studies are generally most useful for identifying very mild (and ordinarily reversible) adverse effects. Observational studies that focus on well-defined populations with clear exposures to a range of nutrient intake levels are useful for establishing a relationship between exposure and effect. Observational data in the form of case reports or anecdotal evidence are used for developing hypotheses that can lead to knowledge of causal associations. Sometimes a series of case reports, if it shows a clear and distinct pattern of effects, may be reasonably convincing on the question of causality.

- Animal studies. Most of the available data used in regulatory risk assessments come from controlled laboratory experiments in animals, usually mammalian species other than humans (e.g., rodents). Such data are used in part because human data on nonessential chemicals are generally very limited. Because well-conducted animal studies can be controlled, establishing causal relationships is generally not difficult. However, cross-species differences make the usefulness of animal data for establishing Tolerable Upper Intake Levels (ULs) problematic (see below).

Six key issues that are addressed in the data evaluation of human and animal studies are the following (see Box 3-1):

BOX 3-1 Development of Tolerable Upper Intake Levels (ULs): Components of Hazard Identification; Components of Dose-Response Assessment

- Evidence of adverse effects in humans. The hazard identification step involves the examination of human, animal, and in vitro published evidence addressing the likelihood of a nutrient eliciting an adverse effect in humans. Decisions regarding which observed effects are adverse are based on scientific judgments. Although toxicologists generally regard any demonstrable structural or functional alteration as representing an adverse effect, some alterations may be considered to be of little or self-limiting biological importance. As noted earlier, adverse nutrient-nutrient interactions are considered in the definition of an adverse effect.

- Causality. As outlined in Chapter 2, the criteria of Hill (1971) are considered in judging the causal significance of an exposure-effect association indicated by epidemiological studies.

- Relevance of experimental data. Consideration of the following issues can be useful in assessing the relevance of experimental data:

  - Animal data. Animal data may be of limited utility in judging the toxicity of nutrients because of highly variable interspecies differences in nutrient requirements. Nevertheless, relevant animal data are considered in the hazard identification and dose-response assessment steps where applicable.

  - Route of exposure. Data derived from studies involving oral exposure (rather than parenteral exposure) are most useful for evaluating nutrients. Data derived from studies involving parenteral routes of exposure may be considered relevant if the adverse effects are systemic and data are available to permit extrapolation between routes. (The terms route of exposure and route of intake refer to how a substance enters the body, for example, by ingestion, injection, or dermal absorption. These terms should not be confused with form of intake, which refers to the medium or vehicle used, e.g., supplements, food, and drinking water.)

  - Duration of exposure. Consideration needs to be given to the relevance of the exposure scenario (e.g., chronic daily dietary exposure versus short-term bolus doses) to dietary intakes by human populations.

- Mechanisms of toxic action. Knowledge of molecular and cellular events underlying the production of toxicity can assist in dealing with the problems of extrapolation between species and from high to low doses. It may also aid in understanding whether the mechanisms associated with toxicity are those associated with deficiency. In the case of the B vitamins, knowledge of the biochemical sequence of events resulting from toxicity and deficiency is still incomplete, and it is not yet possible to state with certainty the extent to which these sequences share a common pathway.

- Quality and completeness of the database. The scientific quality and quantity of the database are evaluated. Human or animal data are reviewed for suggestions that the substances have the potential to produce additional adverse health effects. If suggestions are found, additional studies may be recommended.

- Identification of distinct and highly sensitive subpopulations. The ULs are based on protecting the most sensitive members of the general population from adverse effects of high nutrient intake. For some nutrients, however, there may be distinct subgroups that have extreme sensitivities that do not fall within the range of sensitivities expected for the general population. The UL for the general population may not be protective for these subgroups. As indicated earlier, the extent to which a distinct subpopulation will be included in the derivation of a UL for the general population is an area of judgment to be addressed on a case-by-case basis.

Dose-Response Assessment

The process for deriving the UL is described in this section and outlined in Box 3-1. It includes selection of the critical data set, identification of a critical endpoint with its NOAEL (or LOAEL), and assessment of uncertainty.

Data Selection

The data evaluation process results in the selection of the most appropriate or critical data sets for deriving the UL. Selecting the critical data set includes the following considerations:

- Human data are preferable to animal data.

- In the absence of appropriate human data, information from an animal species with biological responses most like those of humans is most valuable.

- If it is not possible to identify such a species or to select such data, data from the most sensitive animal species, strain, and gender combination are given the greatest emphasis.

- The route of exposure that most resembles the route of expected human intake is preferable. This includes considering the digestive state (e.g., fed or fasted) of the subjects or experimental animals. Where this is not possible, the differences in route of exposure are noted as a source of uncertainty.

- The critical data set defines a dose-response relationship between intake and the extent of the toxic response known to be most relevant to humans. Data on bioavailability are considered and adjustments in expressions of dose-response are made to determine whether any apparent differences in response can be explained.

- The critical data set documents the route of exposure and the magnitude and duration of the intake. Furthermore, the critical data set documents the intake that does not produce adverse effects (the NOAEL), as well as the intake producing toxicity.

Identification of NOAEL (or LOAEL) and Critical Endpoint

A nutrient can produce more than one toxic effect (or endpoint), even within the same species or in studies using the same or different exposure durations. The NOAELs (and LOAELs) for these effects will differ. The critical endpoint used in this report is the adverse biological effect exhibiting the lowest NOAEL (i.e., the most sensitive indicator of a nutrient’s toxicity). The derivation of a UL based on the most sensitive endpoint will ensure protection against all other adverse effects.
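Selecting the critical endpoint amounts to taking the minimum over the endpoint-specific NOAELs. The minimal Python sketch below shows this; the function name `critical_endpoint`, the endpoint labels, and the NOAEL values are hypothetical and do not describe any particular nutrient.

```python
from typing import Dict, Tuple


def critical_endpoint(noaels_by_endpoint: Dict[str, float]) -> Tuple[str, float]:
    """Return the endpoint with the lowest NOAEL and that NOAEL."""
    if not noaels_by_endpoint:
        raise ValueError("at least one endpoint is required")
    name = min(noaels_by_endpoint, key=noaels_by_endpoint.get)
    return name, noaels_by_endpoint[name]


# Hypothetical NOAELs (mg/day) for three adverse effects of one nutrient.
noaels = {"endpoint A": 200.0, "endpoint B": 75.0, "endpoint C": 500.0}
endpoint, noael = critical_endpoint(noaels)
print(endpoint, noael)
```

Because every other endpoint has a higher NOAEL, a level derived from the selected endpoint also lies below the NOAELs of the remaining effects.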

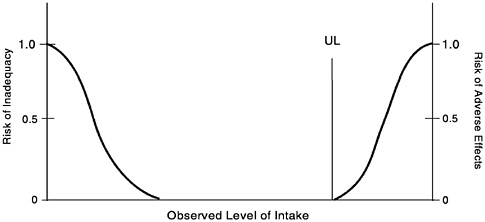

For some nutrients there may be inadequate data on which to develop a UL. The lack of reports of adverse effects after excess intake of a nutrient does not mean that adverse effects do not occur. As the intake of any nutrient increases, a point (see Figure 3-2) is reached at which intake begins to pose a risk. Above this point,

FIGURE 3-2 Theoretical description of health effects of a nutrient as a function of level of intake. The Tolerable Upper Intake Level (UL) is the highest level of daily nutrient intake that is likely to pose no risk of adverse health effects for almost all individuals in the general population. At intakes above the UL, the risk of adverse effects increases.

increased intake increases the risk of adverse effects. For some nutrients, and for various reasons, data are not adequate to identify the point where intake begins to pose a risk or even to estimate its location.

Because adverse effects are almost certain to occur for any nutrient at some level of intake, it should be assumed that such effects may occur for nutrients for which a scientifically documentable UL cannot now be derived. Until a UL is set or an alternative approach to identifying protective limits is developed, intakes greater than the Recommended Dietary Allowance (RDA) or Adequate Intake (AI) should be viewed with caution.

Uncertainty Assessment

Several judgments must be made regarding the uncertainties and thus the uncertainty factor (UF) associated with extrapolating from the observed data to the general population (see Appendix J). Applying a UF to a NOAEL (or LOAEL) results in a value for the derived UL that is less than the experimentally derived NOAEL unless the UF is 1.0. The larger the uncertainty, the larger the UF and the smaller the UL. This is consistent with the ultimate goal of the risk assessment: to provide an estimate of a level of intake that will protect the health of the healthy population (Mertz et al., 1994).

Although several reports describe the underlying basis for UFs (Dourson and Stara, 1983; Zielhuis and van der Kreek, 1979), the strength of the evidence supporting the use of a specific UF will vary. Because the imprecision of these UFs is a major limitation of risk assessment approaches, considerable leeway must be allowed for the application of scientific judgment in making the final determination. Because the data on nutrient toxicity may not be subject to the same uncertainties as are data on nonessential chemical agents, the UFs for nutrients are typically less than 10. They are lower with higher-quality data and when the adverse effects are extremely mild and reversible.

In general, when determining a UF, the following potential sources of uncertainty are considered:

- Interindividual variation in sensitivity. Small UFs (close to 1) are used if it is judged that little population variability is expected for the adverse effect, and larger factors (close to 10) are used if variability is expected to be great (NRC, 1994).

- Extrapolation from experimental animals to humans. A UF is generally applied to the NOAEL to account for the uncertainty in extrapolating animal data to humans. Larger UFs (close to 10) may be used if it is believed that the animal responses will underpredict average human responses (NRC, 1994).

- LOAEL instead of NOAEL. If a NOAEL is not available, a UF may be applied to account for the uncertainty in deriving a UL from the LOAEL. The size of the UF involves scientific judgment based on the severity and incidence of the observed effect at the LOAEL and the steepness (slope) of the dose response.

- Subchronic NOAEL to predict chronic NOAEL. When data are lacking on chronic exposures, scientific judgment is necessary to determine whether chronic exposure is likely to lead to adverse effects at lower intakes than those producing effects after subchronic exposures (exposures of shorter duration).

Derivation of a UL

The UL is derived by dividing the NOAEL (or LOAEL) by a single UF that incorporates all relevant uncertainties. For infants, ULs were not determined for any of the B vitamins or choline because of the lack of data on adverse effects in this age group and concern regarding infants’ possible lack of ability to handle excess amounts. Thus, caution is warranted; food should be the source of intake by infants.
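The division of a NOAEL (or LOAEL) by a single UF can be written as a short Python sketch. The function name `derive_ul` and the numbers are hypothetical, and the check that the UF is at least 1 is an assumption inferred from the statement that the UL is less than the NOAEL unless the UF is 1.0.

```python
def derive_ul(reference_level: float, uncertainty_factor: float) -> float:
    """Divide a NOAEL (or LOAEL) by a single composite uncertainty factor."""
    if reference_level <= 0:
        raise ValueError("the NOAEL or LOAEL must be positive")
    if uncertainty_factor < 1.0:
        # Assumption: a UF below 1 would place the UL above the observed level.
        raise ValueError("the uncertainty factor must be at least 1.0")
    return reference_level / uncertainty_factor


# Hypothetical NOAEL of 50 mg/day with two hypothetical uncertainty factors.
print(derive_ul(50.0, 1.0))  # a UF of 1.0 leaves the NOAEL unchanged
print(derive_ul(50.0, 2.0))  # a larger UF gives a smaller UL
```

The choice of the UF itself is a matter of scientific judgment and is not something a calculation of this kind can supply.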

ULs for niacin, vitamin B6, folate, and choline in children and adolescents were determined by extrapolating from the UL for adults based on body weight differences by using the formula

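$$\mathrm{UL}_{\text{child}} = \mathrm{UL}_{\text{adult}} \times \left(\frac{\mathrm{Weight}_{\text{child}}}{\mathrm{Weight}_{\text{adult}}}\right)^{0.75}.$$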

See Chapter 2 for related information about extrapolation.

With the use of data from Table 1-2 (Chapter 1), the reference weight for males ages 19 through 30 years was used for adults and the reference weights for female children and adolescents were used in the formula above to obtain the UL for each age group. The use of these reference weights yields a conservative UL to protect the sensitive individuals in each age group.
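The body weight extrapolation in the formula above can be sketched in Python as follows. The function name `extrapolate_child_ul` is hypothetical, and the adult UL and the two weights are illustrative placeholders rather than values from Table 1-2.

```python
def extrapolate_child_ul(
    ul_adult: float,
    weight_child_kg: float,
    weight_adult_kg: float,
    exponent: float = 0.75,
) -> float:
    """Scale an adult UL to a child by the body weight ratio raised to 0.75."""
    if weight_child_kg <= 0 or weight_adult_kg <= 0:
        raise ValueError("weights must be positive")
    return ul_adult * (weight_child_kg / weight_adult_kg) ** exponent


# Hypothetical inputs: adult UL of 100 mg/day, child 20 kg, adult 80 kg.
ul_child = extrapolate_child_ul(100.0, weight_child_kg=20.0, weight_adult_kg=80.0)
print(round(ul_child, 1))  # about 35.4 mg/day
```

For a fixed adult UL, a larger adult reference weight or a smaller child reference weight lowers the ratio and therefore the extrapolated UL, which is the sense in which the choice of reference weights described above is conservative.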

The derivation of a UL involves the use of scientific judgment to select the appropriate NOAEL (or LOAEL) and UF. The risk assessment requires explicit consideration and discussion of all choices made, both regarding the data used and the uncertainties accounted for. These considerations are discussed in the chapters on nutrients. Because of lack of suitable data, ULs could not be set for infants or for thiamin, riboflavin, vitamin B12, pantothenic acid, or biotin.

Characterization of the Estimate and Special Considerations

ULs are derived for various life stage groups by using relevant databases, NOAELs and LOAELs, and UFs. Where no data exist for NOAELs or LOAELs for the group under consideration, extrapolations from data in other age groups and/or animal data are made on the basis of known differences in body size, physiology, metabolism, absorption, and excretion of the nutrient.

If the data review reveals the existence of subpopulations having distinct and exceptional sensitivities to a nutrient’s toxicity, these subpopulations are considered under the heading “Special Considerations.”

REFERENCES

Dourson ML, Stara JF. 1983. Regulatory history and experimental support of uncertainty (safety) factors. Regul Toxicol Pharmacol 3:224–238.

EPA (U.S. Environmental Protection Agency). 1996. Proposed guidelines for carcinogen risk assessment; Notice. Fed Regist 61:17960–18011.

FAO/WHO (Food and Agriculture Organization of the United Nations/World Health Organization). 1995. The Application of Risk Analysis to Food Standard Issues. Recommendations to the Codex Alimentarius Commission (ALINORM 95/9, Appendix 5). Geneva: World Health Organization.

Health Canada. 1993. Health Risk Determination—The Challenge of Health Protection. Ottawa: Health Canada, Health Protection Branch.

Hill AB. 1971. Principles of Medical Statistics, 9th ed. New York: Oxford University Press.

IOM (Institute of Medicine). 1997. Dietary Reference Intakes for Calcium, Phosphorus, Magnesium, Vitamin D, and Fluoride. Washington, DC: National Academy Press.

Klaassen CD, Amdur MO, Doull J. 1986. Casarett and Doull’s Toxicology: The Basic Science of Poisons, 3rd ed. New York: Macmillan.

Mertz W, Abernathy CO, Olin SS. 1994. Risk Assessment of Essential Elements. Washington, DC: ILSI Press.

NRC (National Research Council). 1983. Risk Assessment in the Federal Government: Managing the Process. Washington, DC: National Academy Press.

NRC (National Research Council). 1994. Science and Judgment in Risk Assessment. Washington, DC: National Academy Press.

OTA (Office of Technology Assessment). 1993. Researching Health Risks. Washington, DC: OTA.

WHO (World Health Organization). 1996. Trace Elements in Human Nutrition and Health. Prepared in collaboration with the Food and Agriculture Organization of the United Nations and the International Atomic Energy Agency. Geneva: WHO.

Zielhuis RL, van der Kreek FW. 1979. The use of a safety factor in setting health-based permissible levels for occupational exposure. Int Arch Occup Environ Health 42:191–201.