2

Use of Probabilistic Methods

INTRODUCTION

This chapter is intended to serve two distinct purposes. First, it provides a general background on the objectives of a probabilistic analysis, the benefits that can be realized from such an approach, and the manner in which it can be applied to practical geotechnical engineering problems. The second purpose is to respond to two of the questions raised in Chapter 1. Question 1, which deals with factors inhibiting the use of probability theory, and Question 2, which deals with potential applications and benefits, are addressed in this chapter.¹ The remaining questions are considered in subsequent chapters.

BACKGROUND

Uncertainties of many types pervade the practice of geotechnical engineering. Included are uncertainties due to the variable nature of soil and rock properties and other in situ conditions, uncertainties about the reliability of design and construction methods, and uncertainties about the costs and benefits of proposed design strategies. Probability theory is a mathematical tool that can be used to formally include such uncertainties in an engineering design and to assess their implications on performance. As an example, its use in this context may result in a statement about the likelihood (probability) that a particular design will be successful or, conversely, about its likelihood of failure.

Probability theory has a wide range of potential applications in engineering practice. The following situations, which are open to probabilistic treatment, should give the reader a better understanding of the depth and breadth of such applications.

- Alternative strategies can be evaluated using probability theory, whereas with conventional methods it might not be possible. One example would be to compare designs by comparing probabilities of failure. To do so with conventional methods would involve comparing factors of safety for different modes of failure. The shortcoming of the conventional approach arises from the fact that the same value of factor of safety for different modes of failure may correspond to significantly different values of failure probability. Hence comparing factors of safety between different failure modes is analogous to comparing apples and oranges.

1 An attempt has been made in this chapter to minimize mathematics and jargon; Appendix C is included in this report for a fuller overview of basic concepts and uses of probability.

- Where alternative courses of action are cast in terms of likely ranges of cost and benefit, probability theory can provide an avenue for better communication between the geotechnical engineer and the layman (e.g., owners, regulators, and the public). For instance, one may not necessarily adopt the most conservative, worst-case scenario as is commonly done in a deterministic approach. There may be a more economical alternative with a risk that is acceptable to the owner or regulator due to a small likelihood of an undesirable consequence.

- At a given stage in evaluating a particular problem, one may wish to know whether the remaining uncertainties can be reduced appreciably by further site exploration. Additionally, the question may arise as to how sensitive the solution is to uncertainties in construction imperfections or whether spending more to upgrade a part of the design is likely to produce a worthwhile benefit.

- By adopting a probabilistic approach, a construction quality assurance (CQA) program can be designed to achieve a target level of system performance. Measured data from a CQA program can be used to reassess the performance reliability of the system by taking advantage of the site-specific information. Cost-effectiveness of CQA programs can be compared in terms of probability of achieving program goals.
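The first situation above, in which equal factors of safety can correspond to quite different failure probabilities, can be illustrated with a short sketch. It assumes, purely for illustration, that the factor of safety for each failure mode is normally distributed and that failure means a factor of safety below 1.0. The mean value of 1.5 and the two coefficients of variation are hypothetical and do not come from this chapter.

```python
import math


def normal_cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def failure_probability(mean_fs: float, cov_fs: float) -> float:
    """P(FS < 1), assuming FS is normally distributed (illustrative assumption)."""
    sigma = mean_fs * cov_fs            # standard deviation of the factor of safety
    beta = (mean_fs - 1.0) / sigma      # reliability index: distance to FS = 1 in std devs
    return normal_cdf(-beta)


# Hypothetical failure modes with the same mean factor of safety
# but different levels of uncertainty (coefficient of variation).
MEAN_FS = 1.5
pf_mode_a = failure_probability(MEAN_FS, cov_fs=0.10)
pf_mode_b = failure_probability(MEAN_FS, cov_fs=0.30)

print(f"mode A: FS = {MEAN_FS}, COV = 0.10, P(failure) = {pf_mode_a:.2e}")
print(f"mode B: FS = {MEAN_FS}, COV = 0.30, P(failure) = {pf_mode_b:.2e}")
```

Under these assumptions the two modes share a factor of safety of 1.5 yet differ in failure probability by more than two orders of magnitude, which is the sense in which comparing factors of safety across modes can mislead.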

In many instances, the inputs required for probabilistic analyses (e.g., statistics such as the means and standard deviations of uncertain quantities) are themselves highly uncertain and can only be established approximately through the reasoned judgment of trained professionals. Even so, the discipline and logic of these analyses provide a useful approach to a solution. Further, this framework can be used to evaluate the sensitivities to uncertainties, and hence the implications, of professional judgments. This type of understanding can be helpful in making decisions.

It is also important to point out that probabilistic methods should not be viewed as a panacea. There are a number of problems for which they are not likely to be effective. One such set comprises problems where the appropriate approach is evident, for example, in establishing suitable bearing pressures for spread footings on compacted fill. In this case, methods based on experience normally are fully adequate.

Another group of problems for which probabilistic methods may not be effective are those involving highly variable or chaotic conditions such as extremely erratic depths to rock in some residual soils. Such problems represent situations where the engineer may be unable to assess accurately enough for design decisions the probability distributions of important variables, such as depth to rock at a specific site, before some key decisions are made. In this case, it is preferable to determine the depth to rock at the site in advance of construction or to adopt a type of foundation that can accommodate variable depths of soil.

A third type of problem involves specific projects where predictions (either deterministic or probabilistic) based on current information cannot provide the required accuracy, because suitable models are not available or the failure cost is very large. An example is Terzaghi's treatment of potential seepage layers at the Cheakamus Dam, British Columbia, where trenches were cut to find and seal potential seepage layers, and drainage galleries and observation wells were installed to intercept and detect seepage (Terzaghi, 1960).

Even in these situations, probabilistic analysis—or at least probabilistic thinking—can be of value. In the case of highly variable depths to rock, it may be possible to estimate, for costing purposes, the probability distribution concerning quantity of rock to be removed from a foundation excavation. It may be noted that B.C. Hydro has recently embarked upon a program of applying risk analysis to dams such as Cheakamus to guide efforts regarding maintenance and upgrading of these facilities (Nielson et al., 1994).

Some geotechnical engineers have incorrect perceptions that (1) a large body of data is needed in order to be able to apply probabilistic methods to geotechnical problems, and (2) probabilistic and deterministic methods are mutually exclusive in their use. Neither is true. The lack of a large data set does not preclude the use of probability theory. Probability theory can be used to evaluate the uncertainties involved in working with meager information. In fact, it can help to identify the optimal type of information to be acquired if reduction of uncertainties is desired. Probabilistic methods and deterministic methods complement each other in solving geotechnical problems.

Probability is not a substitute for traditional design approaches such as comprehensive site investigations, laboratory test programs, mechanistic analyses, or engineering judgment; rather, it should be used to complement these more traditional geotechnical engineering tools. Probabilistic methods are most effective when used to organize and quantify pertinent uncertainties for engineering designs and decisions.

FACTORS THAT HAVE INHIBITED USE

The first question (Question 1) addressed by workshop participants and subsequently by the committee concerned determination of the factors that have inhibited the use of probability methods in geotechnical practice. In addressing the fact that probability has not been widely used in geotechnical engineering, Whitman (1984, p. 145) stated:

Recent years have seen rapidly growing research into applied probability and increased interest in applications to geotechnical engineering practice. Unfortunately, probability still remains a mystery to many engineers, partly because of a language barrier and partly from lack of examples showing how the methodology can be used in the decision-making process.

To understand whether probability methods have potential for more-widespread use in geotechnical engineering, it is important to understand whether their current seemingly low level of use is attributable to rational choice, bias, or ignorance. This issue was discussed at length at the workshop, and a better understanding of the influential factors emerged from the discussion.

Probability theory is being used in some areas of geotechnical engineering. Where regulations require it, for example, in many types of geo-environmental problems, probability theory has been adopted and used effectively. Probability theory has also been used in connection with economic analysis of alternative courses of action, where it provides a useful basis for decision making, and in geotechnical engineering for offshore structures, where a significant effort has been made to assess the uncertainties and biases in offshore pile design methods and to use the information to improve these practices.

However, in conventional geotechnical practice, such as foundation engineering and embankment dam design, probabilistic methods have not seen extensive use. In these older, more conventional areas of geotechnical engineering, well-developed, effective, and successful methods are available that embody the lessons learned from decades of professional practice. Many geotechnical engineers practicing in these traditional areas apparently have seen little need to change from using methods that have served them well to new and largely untried methods with questionable potential benefit.

These thoughts were expressed at the workshop in the following words by Ralph Peck:

We see geotechnical engineering as developing into two somewhat different entities: one part still dealing with traditional problems such as foundations, dams, and slope stability, and another part dealing with earthquake problems; natural slopes; and, most recently, environmental geotechnics. Practitioners in the first part have not readily adopted reliability theory, largely because the traditional methods have been generally successful, and engineers are comfortable with them. In contrast, practitioners in environmental geotechnics and to some extent in offshore engineering require newer, more stringent assessments of reliability that call for a different approach. Therefore, we may expect reliability methods to be adopted increasingly rapidly in these areas as confidence is developed. It is not surprising that those engineers working in environmental and offshore problems should be more receptive to new approaches, and it should not be surprising that there may be spillback into the more traditional areas.

Many of the examples of the applications of probabilistic methods that are described in the literature appear to some engineers to be oversimplified. The lack of a substantial number of case histories in conventional application areas is another factor that may have inhibited the use of probability. As noted by Christian et al. (1992, p. 1073):

The established methods for performing probabilistic analysis of slope stability deal primarily with uncertainty in the analyst's knowledge of the properties of the materials and the loading conditions. They do not address inadequacies in spillway capacity, failure to prevent or detect internal erosion, or errors in construction, and unless special attention is devoted to the problem, they seldom consider that an inadequate field exploration program might miss a critical geological detail. Nevertheless, these are among the major causes of failure of slopes and embankments. Clearly any probability of failure that is to be used in benefit-cost analysis of probabilistic risk assessment must be based on more than the uncertainty in known geotechnical properties.

Christian et al. may have exaggerated the narrowness of the earlier applications that have concentrated primarily on the variation of soil strengths. Nevertheless, this statement underscores the importance of including all potential failure mechanisms, such as internal erosion, construction and human errors, and hydraulic performances, in a comprehensive system risk analysis of the stability of an embankment. In fact, recent applications have already begun to address some of these issues. As a greater number of significant case histories become available to the profession, understanding of, and credibility in, the method will be expected to increase.

Widespread use of probabilistic methods is also inhibited by the fact that most geotechnical engineers have not been educated in the use of these methods. Currently, most civil engineering curricula do not include extensive coverage of probability theory, especially within the context of engineering. Instruction in formal applications to geotechnical problems is rare. Furthermore, there is a lack of mechanisms for educators and practitioners to learn to use the methods.

There appears to be no single reason why probabilistic methods have not been more readily adopted and more widely used. Where regulations require it, or where the benefits are clear, probability theory has been adopted readily in geotechnical engineering. Where the benefits of change are not clear, probability theory has not been widely used. In effect, the choice not to adopt probability theory has been, in some instances, because of the feeling that it does not address the real problems and, in other instances, because of the philosophy “if it ain't broke, don't fix it.” Wider use of probabilistic methods is expected as geotechnical engineers become more proficient in the methods and as more significant applications become available. Nevertheless, care must be exercised not to press for use of probabilistic methods where they are inappropriate.

AREAS THAT HAVE POTENTIAL FOR WIDESPREAD USE

The second question identified in Chapter 1 is “In what areas of geotechnical engineering does probability theory have potential for more-widespread use and application? What might be the attendant benefits for the profession, its clients, and the public?”

The committee decided to address this question by presenting a series of actual case studies in which probability theory has been applied or misapplied. The following nine examples illustrate the range of applications where probabilistic analysis can be brought to bear on geotechnical problems. Each example shows a different application and describes, in a problem-oriented context, how the use of probabilistic methods was advantageous (or inappropriate); what information was required and how it was applied; and how the results of the analysis were useful.

The first four examples illustrate the use of probability theory in making decisions on alternative courses of action. Examples 1 and 2 concern decisions on remedial measures for existing facilities. Example 3 involves exploration strategies and design options for new construction. Example 4 involves environmental cleanup decisions. Example 5 illustrates how probabilistic analysis can be used to plan an efficient site-investigation program. Example 6 compares the reliability between two design approaches and demonstrates setting design criteria. Example 7 shows how the probabilistic method can be used to establish quality-control guidelines. Example 8 shows how the probabilistic method can be used to predict the skirt penetration resistances of an offshore gravity platform during installation. And Example 9 illustrates the dangers of an overreliance on probability theory as a substitute for engineering judgment.

From these examples, it is evident that there are substantial potential benefits from using probabilistic methods in geotechnical practice. They are as follows:

- Probabilistic methods are very useful as a basis for making economic decisions. In areas such as dam rehabilitation, landslide hazard mitigation, environmental remediation, and infrastructure rehabilitation, effective allocation of available funds relies on evaluating the tradeoff between benefits and risks resulting from various uncertainties. Probability methods provide a quantitative basis through which the relative contribution of risk can be systematically analyzed. In this way, decisions can be made more rationally and justified more logically.

- Geotechnical engineers are increasingly asked to tackle many types of non-traditional problems for which there is little or no experience to provide guidance. Examples include the burgeoning involvement of geotechnical engineers in geo-environmental engineering, the use of geosynthetics in many geotechnical applications, and designs of foundations for deep-water offshore structures. In these situations, where close precedents are not available, the geotechnical engineer's experience and judgment are stretched to the limit. It is very helpful in these circumstances to use probabilistic methods, which can provide a systematic means of assessing the reliability of engineering analyses.

- As the public takes on a more active role in issues related to technological advances, society is demanding more-explicit assessments of risk. Some regulatory agencies, such as the Environmental Protection Agency and the Nuclear Regulatory Commission, require formal risk assessments for licensing. To work effectively with the public and these regulatory agencies, geotechnical engineers must have some knowledge of probability theory and reliability methodologies, as well as traditional geotechnical expertise. Probabilistic methods can be used to evaluate risks on the basis of available data, current technology, and engineering judgment.

Example 1: Probability of Levee Failure

This example, described by Duncan and Houston (1983), concerns a study performed to predict how many levee failures will occur in the California Delta region in the period from 1977 to 2016 and the benefits that would accrue from various possible mitigative measures. The example illustrates the use of probability theory to assess the likelihood of failure in a system of levees that is so long (1,770 km) that it is infeasible to base the assessment on detailed stability analyses. Historic failure rates, together with simplified stability analyses, were used to predict future rates of failure.

The California Delta, about 40 km northeast of San Francisco, includes 45 islands used principally for farming. The “floors” of the islands, 3 m to 6 m below sea level, are surrounded by levees to protect them from flooding. The island floors continue to subside as peat soil is lost by oxidation and wind erosion. As the island floors have subsided over a period of 80 years, the levees have been built up gradually, and the water head they resist has continued to increase. Over this period, 70 levee failures have occurred. As the water head that is resisted by the levees continues to increase in the future, the likelihood of failure will also increase, unless mitigative measures are taken.

The main difficulty in the analysis performed by Duncan and Houston was that detailed geotechnical information was not available, and could not be obtained at reasonable cost, for the full 1,770-km length of the levees. What appeared likely to be critical sections of the levees were selected by Duncan and Houston using judgment, based on topographic information, field inspection, and experience. Stability analyses were performed for these sections using assumed strength parameters and pore pressures. The analyses modeled the failure mechanism observed in the field—sliding on a nearly horizontal plane, with a wedge of levee driven inland by the external water pressure. The calculated minimum factors of safety for each island were termed “factor of safety indices” because of their semiquantitative nature.

Duncan and Houston (1983) found that it was possible to correlate values of factor of safety index with historical failure rates. To do this effectively, they had to account for two very important factors. First, failures prior to 1950 appeared to be due to overtopping rather than instability. For this reason, only failures that occurred after 1950 were included in the correlation. Second, failures proved to be more frequent where the depths of peat beneath the levees were great. An empirical adjustment was included to account for this important observed effect of peat depth.

Using the relationship that was established between factor of safety index and historical probability of failure, Duncan and Houston estimated the future probability of failure, considering the effects of (1) increased water head due to continued wind erosion of the island floors and (2) various mitigative measures. Because the results were expressed in terms of probability of failure, they provided a useful basis for estimating the future costs of taking no action and the benefits of various possible remedial measures. The results of the study were used by the Sacramento District of the U.S. Army Corps of Engineers to evaluate probable costs and benefits of upgrading the levees.

Example 2: Seismic Hazard Analysis of an Earth Dam

The Mormon Island Auxiliary Dam (MIAD) studied by Sykora et al. (1991) is part of the Folsom Dam project, which is located on the American River about 32 km upstream of Sacramento, California. The Folsom Dam project was designed and constructed by the U.S. Army Corps of Engineers between 1948 and 1956 and is now owned and operated by the U.S. Bureau of Reclamation. MIAD is a zoned, rolled-fill embankment with a central clay core. It was constructed across an old channel of the American River that contained auriferous gravels. In the deepest portion of the channel, a zone about 244 m wide, the 18-m-thick gravels have been dredged for their gold content and then redeposited in the channel in a very loose condition. Hence, this section is susceptible to liquefaction during an earthquake of significant magnitude.

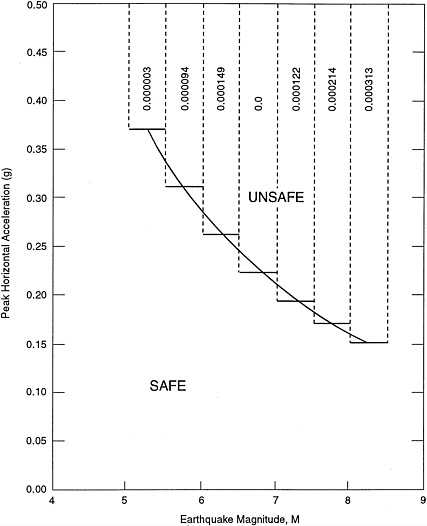

According to the results of extensive laboratory/field tests and analytical studies conducted by the Corps of Engineers (summarized by Hynes-Griffin, 1987), an earthquake of magnitude 6.5 with a peak acceleration of 0.25 g at the rock outcrop near MIAD would initiate liquefaction of sufficient extent to develop critical failure surfaces passing through the core of the dam and potentially lead to loss of the reservoir. For an earthquake of smaller magnitude, for example 5.25, a higher peak acceleration of 0.37 g would be required to initiate liquefaction. The region above the curve shown in Figure 2-1 represents those combinations of magnitude and peak acceleration of an earthquake that would induce liquefaction.

The Bureau of Reclamation wanted to know if any remedial actions were needed to improve the seismic safety of the dam. More urgently, if such improvements were needed, they wanted to know if any interim safety actions were necessary until the remedial actions were completed. To address these concerns, it was necessary to obtain a measure of the risk to the existing dam by estimating the average time until the next earthquake that is large enough to induce liquefaction (also known as the return period of the triggering earthquake). A seismic hazard analysis was performed using standard methodology (McGuire, 1976) and the respective seismic-source-zone information for the site. Because liquefaction is affected by earthquake magnitude, the probability of a triggering earthquake associated with each interval of earthquake magnitude was calculated as shown in Figure 2-1. The annual probability of exceeding triggering levels of peak acceleration and magnitude, which is the sum of the probabilities for the various magnitudes, was found to be 0.0009. The return period of (i.e., average time until) the triggering earthquake is simply the reciprocal of 0.0009, or about 1,100 years. Since liquefaction resistance is also a function of the confining stress, the effect of varying storage level with time was also considered in the risk analysis. A reliability analysis on the basis of the historical distribution of pool levels showed that the net effect of pool variation was to increase the return period to about 1,500 years. Although the uncertainties considered in this study pertain only to those of the seismic parameters, conservative estimates were used for the other input values, including soil parameters. Moreover, sensitivity analyses were also performed to examine the effect of different input assumptions on the estimated return period.

Figure 2-1 Probabilities of occurrence for different ranges in magnitude at combinations of peak accelerations and earthquake magnitude causing liquefaction of dredged foundation gravel. Source: Sykora et al., 1991.

Although the seismic risk study performed for this dam was simple, the information obtained on the annual risk of a triggering earthquake and on the average time to the next triggering earthquake was useful to the Bureau of Reclamation in addressing its earlier concerns. Some remedial actions are required to assure the long-term safety of the dam, but the likelihood of a triggering earthquake event occurring when the pool is sufficiently high to cause damage is remote during the short exposure period while remedial actions are designed and constructed. Therefore, interim safety actions were not considered necessary.
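The arithmetic behind this conclusion can be sketched in a few lines. The annual probability of 0.0009 and the adjusted return period of about 1,500 years come from the text; the five-year interim exposure period is a hypothetical value chosen only for illustration, and the calculation assumes that years are independent with a constant annual probability.

```python
def return_period(annual_probability: float) -> float:
    """Average recurrence time (years) for an event with the given annual probability."""
    return 1.0 / annual_probability


def prob_at_least_one(annual_probability: float, years: int) -> float:
    """Probability of one or more events in `years`, assuming independent years."""
    return 1.0 - (1.0 - annual_probability) ** years


# Value reported in the text for the triggering earthquake.
annual_p_trigger = 0.0009
rp_trigger = return_period(annual_p_trigger)

# Return period after accounting for pool-level variation (from the text).
rp_with_pool = 1500.0
annual_p_with_pool = 1.0 / rp_with_pool

# Hypothetical interim period while remedial works are designed and built.
exposure_years = 5
p_interim = prob_at_least_one(annual_p_with_pool, exposure_years)

print(f"return period of triggering earthquake: {rp_trigger:.0f} years")
print(f"chance of damaging event in {exposure_years} years: {p_interim:.4f}")
```

For a short exposure period the result is close to the number of years multiplied by the annual probability, which is why the interim risk is small even though long-term remediation is still warranted.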

Example 3: Risk Analysis for Dam Design in Karst Terrain

This case history concerns the use of probability methods during the early phase of dam design, in which design options are being compared and exploration strategies planned (Vick, 1992). At this early stage, few data are usually available and judgment-based decisions are very important. Probabilistic methods were found to provide an effective vehicle for communicating geotechnical judgments, risk, and alternative courses of action to the project owner.

The project site is located in central Florida in a region of karst terrain. Expansion of a mining operation required the construction of a dike 10 m high and 1,200 m long to retain fresh water. Although sinkholes were not revealed by conventional exploration and geophysical surveys, their potential in the dike foundation and the resulting risk due to dike failure from sinkhole collapse were clear. An indication of this potential was provided by slime ponds in an adjacent area that contained tailings from rock washing operations. Periodic drops in fluid level of as much as 1 m in these impoundments provided evidence of sinkhole activity. No sinkhole-related damage or failure of the dikes had ever occurred despite the total dike length of 13 km. The dilemma was whether to proceed with construction of the proposed dike in the face of risks posed by the known presence of sinkholes in the area or to carry out a costly program of intensive sinkhole exploration and mitigation in spite of the entirely satisfactory performance of the existing tailings-pond dikes. It was unlikely that this dilemma could have been resolved with great confidence relying only on unquantified engineering judgment. Hence, the geotechnical engineer elected to use a probability estimate to characterize sinkhole uncertainties in order to elicit the owner's informed involvement in the decision. Details of the geologic conditions and probabilistic formulation have been presented by Vick and Bromwell (1989).

From a model based on geologic mapping and regional information, the probability of sinkhole occurrence underneath the proposed dike was estimated to be 0.45. Also, the frequency of observed sinkhole collapses from records maintained in the area allowed a conditional probability of sinkhole collapse of 0.08 to be estimated. However, the performance of existing tailings-pond dikes suggested that even if sinkhole collapse were to occur, dike breach would not necessarily result. Failure in this case would require the joint occurrence of three sequential events, namely, dike-fill cracking, piping, and breaching of the dike. Likelihoods of these component events were estimated based on the engineer's judgment. The diagram in Figure 2-2 shows how the probabilities of these component events, estimated from either statistical data or subjective judgment, can be combined to estimate the probability of uncontrolled reservoir release due to foundation sinkholes as about 0.01. This probability estimate was judged to be reasonable when it was compared with dam failure frequencies from other causes.
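The way such a chain of sequential events combines can be sketched as a product of conditional probabilities. The values 0.45, 0.08, and the overall result of about 0.01 are taken from the text. The individual probabilities for cracking, piping, and breaching are not given in this chapter, so the three values below are hypothetical placeholders, not the values used in the study.

```python
from math import prod

# From the text.
p_sinkhole_present = 0.45          # sinkhole exists beneath the dike
p_collapse_given_sinkhole = 0.08   # collapse, given a sinkhole is present
p_release_reported = 0.01          # approximate overall result reported

# Hypothetical placeholders for the three judgment-based events
# (each conditional on the preceding event having occurred).
p_cracking_given_collapse = 0.7
p_piping_given_cracking = 0.6
p_breach_given_piping = 0.7

# Each branch of the event tree is conditional on the one before it,
# so the probability of the full failure sequence is the product.
chain = [
    p_sinkhole_present,
    p_collapse_given_sinkhole,
    p_cracking_given_collapse,
    p_piping_given_cracking,
    p_breach_given_piping,
]
p_release = prod(chain)

# Combined probability of cracking, piping, and breaching that is implied
# by the three figures quoted in the text.
implied_combined = p_release_reported / (p_sinkhole_present * p_collapse_given_sinkhole)

print(f"release probability with placeholder values: {p_release:.4f}")
print(f"implied P(crack, pipe, breach | collapse):   {implied_combined:.2f}")
```

The last line shows that the reported figures imply a combined conditional probability of roughly 0.28 for the three judgment-based events; how that total was divided among them is not stated here.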

This probabilistic formulation of geologic uncertainty was extended to a formal decision analysis by estimating failure consequences and identifying alternative measures to reduce either consequences or failure likelihood. The alternatives considered included (1) performing additional exploration, (2) implementing a warning system, and (3) constructing a secondary dike. This analysis concluded that constructing an inexpensive secondary dike of mine waste to retain floodwaters that might be released by dike breach had the lowest expected cost of the alternatives evaluated. Even so, the owner elected to accept the risks associated with constructing the dike without implementing any supplementary risk-reduction measures. The owner perceived that the incremental risk added by the relatively short new dike would not materially increase the risk exposure already present from the existing tailings ponds. Upon subsequent dike construction, difficulties in reservoir filling were encountered. These difficulties, while not unanticipated, required time-consuming sinkhole treatment. Nevertheless, performance of the dike proved to be satisfactory on first filling and thereafter.

The outcome of this case history was favorable insofar as probabilistic characterization helped to address conflicting inferences that could not likely have been resolved by informal processes alone. In addition, geologic uncertainties and risk were effectively communicated by the geotechnical engineer to the owner. This allowed the owner to become involved in an important part of the design process; and by being willing to accept project risks, the owner has avoided the cost of risk-reducing contingencies that might otherwise have been adopted.

Example 4: Selecting Pumping Rates for an Extraction Well

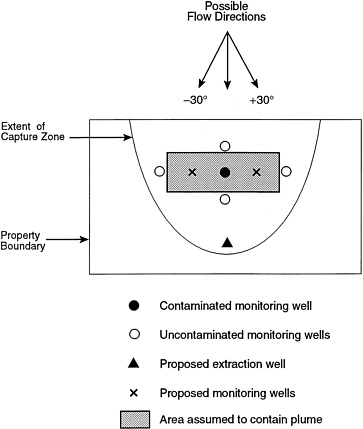

This example illustrates the use of the decision-analysis approach to select a pumping rate for an extraction well that is intended to capture a small plume of contaminated groundwater (Massmann et al., 1991). The contamination is a result of an accidental spill of petroleum products at an unidentified site. To define the scope of the contamination, five groundwater monitoring wells were installed, as shown in Figure 2-3. Since only one of the wells indicated contamination, the size and shape of the area containing the contamination plume has been conservatively assumed as shown. One remedial action alternative considered was to install a single extraction well at the location shown to capture the plume before it leaves the property boundary.

Figure 2-2 Scenario for uncontrolled reservoir release. Source: Vick, 1992.

From the perspective of the owner, the extraction system could be considered to have failed if contamination leaves the property. The effectiveness of the proposed extraction system was uncertain because of uncertainties in the direction and magnitude of the regional groundwater flow at the site. As the designed pumping rate at the extraction well increased, the risk associated with the plume escaping the site would decrease. Higher pumping rates would also result in increased pumping costs and, more importantly, the additional cost of treating more extracted water. In view of the uncertainties, one option for the owner was to adopt a high pumping rate by means of a conservative (but excessively costly) design in order to minimize the risk of failure. Alternatively, the optimal pumping rate might be obtained by a proper tradeoff between these costs and risks. A decision-analysis approach was applied with the objective of minimizing the overall cost, which is the sum of (1) the cost of installing the pumping system and its associated operating and treatment costs and (2) the expected consequence that is the cost of failure weighted by the probability of failure.

Figure 2-3 Plan view for groundwater extraction problem. Source: Massmann et al., 1991.

The primary source of uncertainty affecting the performance of the extraction well was the uncertainty in the direction and magnitude of the regional groundwater flow. The direction is known within plus or minus 30 degrees, and the magnitude is assumed to range from 100 to 200 m per year. The relative likelihoods of the actual direction and magnitude over these respective ranges are subjectively estimated from topographic and hydrogeologic characteristics of the site. A flow-simulation program with inputs that reflect the site characteristics was used to determine if failure occurred for each combination of groundwater-flow direction and magnitude as a function of the pumping rate. The total probability of failure for the system was calculated by summing the probabilities of those combinations that yielded failures.
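The summation in the last step can be sketched in Python as follows. This is only an illustration under stated assumptions: the discrete weights assigned to direction and magnitude are placeholders for the subjective likelihoods, the two variables are treated as independent, and `plume_escapes` is a made-up stand-in for the flow-simulation program. None of these come from Massmann et al. (1991).

```python
from itertools import product

# Ranges follow the text (+/-30 degrees, 100 to 200 m/yr); the weights are
# HYPOTHETICAL placeholders for the subjectively estimated likelihoods.
direction_pmf = {-30: 0.1, -15: 0.2, 0: 0.4, 15: 0.2, 30: 0.1}  # degrees off expected direction
magnitude_pmf = {100: 0.25, 150: 0.50, 200: 0.25}               # regional flow, m/yr


def plume_escapes(direction_deg, magnitude_m_yr, pumping_rate_m3_hr):
    """HYPOTHETICAL stand-in for the flow simulation (not the study's model).

    Returns True when the plume is assumed to leave the property, using an
    arbitrary linear capture margin chosen only to make the example run.
    """
    capture_margin = (pumping_rate_m3_hr
                      - 0.14 * magnitude_m_yr
                      - 0.35 * abs(direction_deg))
    return capture_margin < 0


def probability_of_failure(pumping_rate_m3_hr):
    """Sum the joint probabilities of all combinations that yield failure."""
    total = 0.0
    for (d, p_d), (m, p_m) in product(direction_pmf.items(), magnitude_pmf.items()):
        if plume_escapes(d, m, pumping_rate_m3_hr):
            total += p_d * p_m  # independence of direction and magnitude assumed
    return total


for rate in (20, 30, 40):
    print(f"{rate} m3/hr: probability of failure = {probability_of_failure(rate):.3f}")
```

With these placeholder inputs the computed failure probability falls as the pumping rate rises, which is the qualitative behavior described above. The values reported for the actual site depend on the site-specific simulation and likelihoods.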

In evaluating the overall cost for a given extraction rate, difficulties arose in estimating the failure cost. To overcome this, a range of possible failure costs was used in the analysis to evaluate how these costs might affect the decision. Table 2-1 presents the results for the three alternative pumping rates and for two failure costs: $250,000 and $500,000. These costs could be estimated with the help of the owners by considering costs of litigation, fines, additional remedial activities, consultants' fees, and the like. In both cases, the preferred alternative based on minimum overall cost was to pump at the higher rate of 40 m³/hr. Hence the preferred higher pumping rate is robust with respect to failure costs since these costs did not affect the owner's decision.

One strategy that the owner might consider in such a case would be to obtain a more reliable estimate of the extent of the contaminant plume by placing two additional wells at the locations shown in Figure 2-3. If the plume was smaller than originally assumed, then perhaps a smaller pumping rate would suffice. To study the benefit of a smaller plume area, the decision analysis was repeated assuming a potential plume area one-third the size of the original area. As shown in Table 2-1, the preferred alternative for the range of failure cost is now shifted from 40 m³/hr to 30 m³/hr, which represents a less expensive design for the owner. The saving in the overall cost may be calculated by the difference between $106,200 and $84,400, which is $21,800 for the case of failure cost equal to $500,000. Similarly, the savings for the lower failure cost of $250,000 is $17,550. These savings estimates assume that the engineer believes strongly that the plume would be only one-third the size of the original area. The expected savings would be less if it is believed that a larger plume is also likely. Nevertheless, this information was helpful to the owner in deciding if the cost associated with the two additional wells is justified relative to the expected savings.

Table 2-1 Overall Costs, Assuming Large and Small Plumes

| | Case I | Case II | Case III |
|---|---|---|---|
| Pumping rate | 20 m³/hr | 30 m³/hr | 40 m³/hr |
| Total installation, operation, treatment cost | $68,700 | $84,400 | $97,700 |
| (a) Large plume (size shown in Figure 2-3) | | | |
| Probability of failure | 0.484 | 0.133 | 0.017 |
| Risk for Cf = $500,000 | $242,000 | $66,500 | $8,500 |
| Overall cost for Cf = $500,000 | $310,700 | $150,900 | $106,200* |
| Risk for Cf = $250,000 | $121,000 | $33,250 | $4,250 |
| Overall cost for Cf = $250,000 | $189,700 | $117,650 | $101,950* |
| (b) Small plume (one-third the size shown in Figure 2-3) | | | |
| Probability of failure | 0.084 | 0.000 | 0.000 |
| Risk for Cf = $500,000 | $42,000 | $0 | $0 |
| Overall cost for Cf = $500,000 | $110,700 | $84,400* | $97,700 |
| Risk for Cf = $250,000 | $21,000 | $0 | $0 |
| Overall cost for Cf = $250,000 | $89,700 | $84,400* | $97,700 |

An asterisk (*) denotes the preferred alternative. Cf = cost of failure. Source: Massmann et al., 1991.
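The arithmetic behind Table 2-1 can be reproduced with a short Python sketch. The costs and failure probabilities are copied from the table; the function and variable names are illustrative only and are not taken from Massmann et al. (1991).

```python
# System cost (installation, operation, treatment) per pumping rate in m3/hr.
system_cost = {20: 68_700, 30: 84_400, 40: 97_700}

# Probability of failure per plume-size assumption and pumping rate.
p_fail = {
    "large": {20: 0.484, 30: 0.133, 40: 0.017},
    "small": {20: 0.084, 30: 0.000, 40: 0.000},
}


def overall_cost(rate, plume, failure_cost):
    """System cost plus risk, where risk = probability of failure x failure cost."""
    return system_cost[rate] + p_fail[plume][rate] * failure_cost


def preferred_rate(plume, failure_cost):
    """Pumping rate with the minimum overall cost."""
    return min(system_cost, key=lambda rate: overall_cost(rate, plume, failure_cost))


for failure_cost in (500_000, 250_000):
    best_large = preferred_rate("large", failure_cost)
    best_small = preferred_rate("small", failure_cost)
    saving = (overall_cost(best_large, "large", failure_cost)
              - overall_cost(best_small, "small", failure_cost))
    print(f"Cf = ${failure_cost:,}: large plume -> {best_large} m3/hr, "
          f"small plume -> {best_small} m3/hr, saving = ${saving:,.0f}")
```

The saving computed this way presumes that the plume is known to be small. If a large plume remained possible, each overall cost would need to be weighted by the probability of the corresponding plume size.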

Example 5: Site Investigation Strategy

Engineers often encounter the problem of selecting the soil exploration plan and tests that would be most effective in defining the site characteristics of the project. An example described by Wu et al. (1989) shows how a probabilistic approach can logically quantify and combine the individual sources of uncertainty and then compare the effectiveness of several soil exploration alternatives in terms of the implied level of uncertainty.

The site considered is the location of the Brent B-G drilling platform in the North Sea, where the soil is primarily stratified stiff clay, silt, and sand. The stability of the platform is affected by the type of soil material and its strength along potential slip surfaces. In spite of information from five boreholes and cone penetration tests (CPTs) at the site, uncertainty existed with respect to the accurate determination of soil material and its strength throughout the site. For simplicity, the geology was idealized as a series of horizontal layers; each layer was composed of either clay or sand. The material type (sand or clay) in each layer was estimated from classification based on examination of borehole samples or cone penetration records. Obviously, the actual material type at any individual point of each layer could deviate from that estimated. To assess this uncertainty in the material type, the probability of encountering clay at a given point can be estimated from the sampling observations and the number of boreholes and CPTs, as well as from their locations. With the construction of a set of contours depicting the probability of encountering clay over the site, the effect of this mapping error on the uncertainty of the predicted soil strength along a potential slip surface can be assessed. This component of uncertainty can be conveniently represented by the coefficient of variation (c.o.v.), Ω1, as tabulated in column 2 of Table 2-2. As stated in Appendix C, the c.o.v. is a dimensionless parameter describing the amount of variation about a given predicted value.

Uncertainties about the properties of sand were estimated by analyzing the various models used to correlate penetration resistance with angle of internal friction. During this initial phase of site exploration, the strength of the clay was estimated from cone penetration resistance and results of triaxial compression tests. The model error for the strength which was estimated using cone penetration resistance was evaluated from calibration tests with the internal friction angle of sand (Wu et al., 1987), while that from triaxial compression tests was estimated using the experience of Hoeg and Tang (1977).

The c.o.v. that denotes this component of uncertainty resulting from calibration of test results is listed as “Ω2” in column 3 of Table 2-2. Lastly, the analytical model used to determine the stability performance for given soil-strength values can be subject to error. This additional component of uncertainty is denoted by the c.o.v., “Ω3”, in column 4 of Table 2-2. The total uncertainty of the soil resistance defining the stability performance can be denoted by another c.o.v., which is the square root of the sum of squares of the three component c.o.v.s (see Appendix C), as shown in the last column of Table 2-2 under case (a).

The options for additional site exploration considered in this case include: (1) use additional boreholes and CPTs, (2) use the ADP (active direct simple shear and passive test) method to estimate the undrained shear strength of the clays, and (3) perform load tests to infer the internal friction angle of the sand in addition to the use of ADP method for clay. The c.o.v. of the resistance for each of these options was similarly computed and is summarized under cases (b), (c), and (d) in Table 2-2. Comparison of the values in the last column indicates that, for this particular site, additional boreholes would not be effective in reducing the c.o.v. of the soil resistance against foundation instability. Greater reduction in the c.o.v. can be achieved by further investigation of the soil strength with load tests. Hence, uncertainty analysis such as this can identify the phases of site investigation that are most efficient in reducing the level of uncertainty in a given problem. The values of the c.o.v. in the table also show that the three sources contribute about equally to the overall c.o.v. Hence, no specific source can be identified as a dominant one, reduction of which would yield substantial reduction of the total c.o.v. Despite the reduction in error in the strength model with the performance of load tests, the reduction in the total c.o.v. of the soil resistance along a potential slip surface is small. Significant reduction in the total c.o.v. can be achieved only if errors from all three sources are reduced.

Table 2-2 Comparison of Uncertainties Between Exploration Programs at Brent B-G Platform in North Sea

| Exploration program | Mapping variability, Ω1 | Strength model, Ω2 | Analytical model, Ω3 | Total |
|---|---|---|---|---|
| (a) 5 boreholes and CPTs; φ' of sand from CPT; Su of clay from triaxial compression tests | 0.14 | 0.14 | 0.10 | 0.22 |
| (b) 8 boreholes and CPTs; φ' of sand from CPT; Su of clay from triaxial compression tests | 0.13 | 0.14 | 0.10 | 0.22 |
| (c) 5 boreholes and CPTs; φ' of sand from CPT; Su of clay from ADP method | 0.14 | 0.11 | 0.10 | 0.20 |
| (d) 5 boreholes and CPTs; φ' of sand from CPT and load tests; Su of clay from ADP method | 0.14 | 0.07 | 0.10 | 0.19 |

All entries are coefficients of variation. Source: Wu et al., 1989
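The totals in Table 2-2 follow from the root-sum-of-squares rule described above, which a short Python sketch can confirm. The component values are copied from the table; the names used in the code are illustrative.

```python
import math

# (mapping, strength model, analytical model) c.o.v. for each program in Table 2-2.
programs = {
    "(a)": (0.14, 0.14, 0.10),
    "(b)": (0.13, 0.14, 0.10),
    "(c)": (0.14, 0.11, 0.10),
    "(d)": (0.14, 0.07, 0.10),
}


def total_cov(components):
    """Square root of the sum of squares of the component c.o.v.s."""
    return math.sqrt(sum(c * c for c in components))


def variance_shares(components):
    """Fraction of the total variance contributed by each component."""
    total_variance = sum(c * c for c in components)
    return [c * c / total_variance for c in components]


for name, components in programs.items():
    shares = ", ".join(f"{s:.0%}" for s in variance_shares(components))
    print(f"{name}: total c.o.v. = {total_cov(components):.2f} (variance shares: {shares})")
```

Because the components add in quadrature, reducing a single component has a limited effect on the total while the other two are unchanged, which is the point made in the discussion of the table.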

Example 6: Selecting the Design Safety Factor for a Dike

Christian et al. (1992) described the use of probabilistic methods in comparing the uncertainties and reliabilities associated with two different dike design and construction procedures for the James Bay hydroelectric project in Canada. The results helped in the selection of an appropriate and consistent set of design safety factors.

Two types of designs were proposed for the approximately 50 km of dikes which are founded on soft and sensitive clays. They included (1) construction of the dike in a single stage, to a height of either 6 m or 12 m, and (2) two-stage construction, in which a berm 12 m high was initially constructed, then after 80 percent consolidation was completed in the underlying clay, the berm was built to a height of 23 m at the second stage. The single-stage construction involved many kilometers of dike, with a limited exploration program along the dike; the undrained shear strength was measured by field vane tests, and the stability was evaluated by the simplified Bishop method. On the other hand, since there was less experience with the two-stage construction, extensive exploration and instrumentation programs were to be launched even if only a limited length of dike was involved. For two-stage construction, sophisticated laboratory soil tests, such as consolidated-undrained shear tests, were performed, and the Morgenstern-Price method was used for stability analysis. Hence, the component sources of uncertainties and their relative contributions were different between the two designs. The components of uncertainties include data scatter, spatial variation, and systematic uncertainty (as described in Appendix C) of each soil parameter associated with each design.

After the components of uncertainties were individually estimated based on the available soil data, they were then propagated through the stability analysis using the standard first-order uncertainty analysis method to estimate the mean and the coefficient of variation of the factor of safety (see Eq. C-11 in Appendix C), as well as the associated reliability index. Some observations worth noting in this analysis are as follows:

-

The high dikes involved larger slip surfaces than the low dikes. When the random spatial variations of soil properties were averaged over the slip surface, the variance of that average decreased with the size of the slip surface. Hence the uncertainty due to random spatial variation was smaller for the higher dikes.

-

Uncertainty in the mean soil properties for the high dike was smaller than that for the low dike because of sophisticated strength testing and field monitoring for the high dike as compared with the low dike, which was designed using field vanes.

-

The slip surface in the field is actually curved (i.e., three-dimensional) instead of planar as assumed in the two-dimensional analysis. The effect of using the simpler two-dimensional model was accounted for by a random variable representing the model error. This component increased the c.o.v. obtained earlier for the two-dimensional model, which in turn reduced the reliability against sliding. However, the beneficial additional resistances at the two ends, because of curved slip surfaces, also increased the mean overall resistance, which in turn would increase the reliability against sliding. For the 6-m dike, whose factor of safety already had a fairly large c.o.v., the adverse effect of the added component was minimal; hence, its reliability actually increased over the two-dimensional case. On the other hand, the added component reduced the reliability of the 12-m dike from that of the two-dimensional case.

The results of the probabilistic study showed that the reliability level of the two-stage construction was higher. Hence, the required safety factor for design would be smaller than for single-stage construction alternatives if the same reliability were prescribed for all the alternatives. However, the selection of the design safety factor should also consider issues other than the reliability level. For instance, a major portion of the uncertainties in the soil resistance for the high dike was systematic in nature, whereas the uncertainties in the soil resistances for the lower dikes were dominated by the random spatial variation in soil properties. Thus, failure of the lower dike would likely involve a number of small failed 6-m or 12-m high sections scattered along the entire dike, whereas failure of the high dike would likely involve a single large continuous section. The consequence of failure is thus more serious in the latter case. Moreover, the profession has more experience with rapidly constructed low embankments than with the two-stage constructed embankments. Therefore, the target probability between the design alternatives should be different to reflect these considerations. A probability of slope-stability failure of about 0.001 seemed reasonable for design purposes and was consistent with past experience, for example, for the 12-m dike. However, the target probability for the 23-m dike would have to be reduced to 0.0001, whereas that for the 6-m dike could be 0.01, as the consequence of its failure might be only one-tenth as great as for the 12-m dike. These target probabilities yielded calculated factors of safety of 1.63, 1.53, and 1.43 for the 6-m, 12-m, and 23-m embankments, respectively. Because of the small range of the values, these results led to the recommendation that a common safety factor of 1.5 be adopted in this project for feasibility studies and for preliminary cost estimates of the dikes.
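For reference, a target failure probability can be converted to a reliability index with a few lines of Python. The sketch assumes that the factor of safety is normally distributed, so that β = −Φ⁻¹(pf). The text above does not state which distribution Christian et al. (1992) adopted, and the function name is illustrative.

```python
from statistics import NormalDist

# Target probabilities of failure quoted in the text for each dike height.
target_pf = {"6-m dike": 0.01, "12-m dike": 0.001, "23-m dike": 0.0001}


def reliability_index(p_failure):
    """Reliability index for a given failure probability (normal assumption)."""
    return -NormalDist().inv_cdf(p_failure)


for dike, pf in target_pf.items():
    print(f"{dike}: pf = {pf:g} -> beta = {reliability_index(pf):.2f}")
```

Each tenfold reduction in the target probability raises the required reliability index by well under one unit, which is consistent with the narrow range of safety factors reported for the three dike heights.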

Example 7: Quality Assurance of Geomembrane Liners

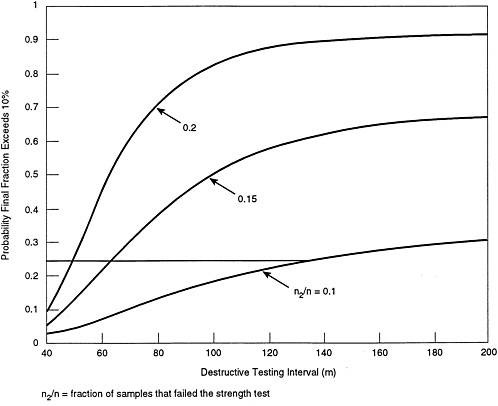

In landfills, geomembrane liners are often used, either alone or overlying compacted clay, to minimize leakage of waste materials to the environment. During installation, geomembrane defects can result from inadequate seams between geomembrane panels or from punctures and tears. In order to minimize defects in the final product, a construction quality assurance (CQA) program is usually implemented. Gilbert and Tang (1993) described how probability theory can be used to obtain a consistent CQA program.

To evaluate the integrity of the seam, samples for destructive testing are commonly required at a minimum frequency of 1 per 150 m of seam; if a given sample fails to meet the specified strength, the extent of the incompetent seam is determined by additional testing on either side of the initial sample location. Although all the incompetent seams that are detected are subsequently repaired, questions can be raised on the competency of the remaining seams, such as:

-

What fraction of the remaining seams is still incompetent? Is that amount acceptable? If not, can it be improved by sampling at more frequent intervals?

-

How reliable is the estimate of the remaining fraction of incompetent seam? How will that affect the reliability of the CQA program?

-

Can the site-specific CQA measurements be used to update the predicted performance of the given geomembrane liner?

In view of the uncertainties involved in these inferences, deterministic approaches are of limited use in measuring or quantifying quality. In their formulation, Gilbert and Tang first used a probabilistic model to describe the frequency of occurrence of the incompetent segments along the seams and the length of each incompetent segment. The parameters of these probabilistic models were then calibrated with the observed frequency and lengths of detected incompetent segments for the specific project. A probabilistic formulation was also used to assess the uncertainty in these calibrated parameters to account for the effect of sampling only at a limited number of locations. One could then estimate the probability of an undesirable event where the fraction of incompetent seams remaining in the system exceeded a given target value.

A landfill lining project requiring 10,000 m of seams can be used as a demonstration of the results of the probabilistic procedure. If a testing interval of 150 m was used for destructive testing, and nine of the required sixty-seven samples failed the strength tests (i.e., fraction of samples that failed the strength test = n2/n = 9/67 = 0.134), the fraction of incompetent seams after repair is estimated to average 0.12. The average fraction of incompetent seams after repair is smaller than that observed in the strength test because of the replacement of those incompetent segments that have been inspected. The standard deviation describing the uncertainty of the fraction about this average value of 0.12 is 0.05. A reasonable measure of quality that incorporates both the average value and the uncertainty of the fraction is the probability that the fraction exceeds some target fraction, for example, 0.1 or 10 percent. In this case, the probability that the fraction after repair exceeds 10 percent for the 150-m testing interval is 63 percent. On the other hand, for a more intensive testing program, such as one with a testing interval of 100 m, one would expect a smaller probability of exceeding the target 10 percent if the same fraction (equivalent to n2/n) of failed samples were observed. Indeed, the probability that the fraction after repair exceeds the target 10 percent is reduced to 50 percent for this testing interval.
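The way a mean and a standard deviation translate into an exceedance probability can be sketched in Python. The normal distribution used here is an assumption made only for illustration; the text does not state the distribution used by Gilbert and Tang (1993), so the result is close to, but not the same as, the 63 percent reported above.

```python
import math
from statistics import NormalDist

seam_length_m = 10_000
testing_interval_m = 150

# Number of destructive samples needed to cover the seams at this interval.
n_samples = math.ceil(seam_length_m / testing_interval_m)
observed_fail_fraction = 9 / n_samples

# Values quoted in the text for the fraction of incompetent seam after repair.
mean_fraction = 0.12
std_fraction = 0.05
target_fraction = 0.10


def prob_exceeds(target, mean, std):
    """P(fraction > target) under an ASSUMED normal distribution."""
    return 1.0 - NormalDist(mu=mean, sigma=std).cdf(target)


print(f"samples required: {n_samples}")
print(f"observed failing fraction: {observed_fail_fraction:.3f}")
print(f"P(fraction > {target_fraction:.0%}) = "
      f"{prob_exceeds(target_fraction, mean_fraction, std_fraction):.2f}")
```

Under the normal assumption the exceedance probability is about 0.66. A smaller standard deviation, as would be expected from a shorter testing interval, moves the probability toward 0 or 1 depending on whether the mean lies below or above the target.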

A plot of the probability that the final fraction exceeds 10 percent versus the destructive testing interval is shown in Figure 2-4 for several measured fractions of failing destructive tests. Prior to installation, this plot can be used to specify a testing interval to meet a selected design criterion. For example, if the design criterion is that the probability of exceedance should be less than 25 percent, the required testing interval is determined to be approximately 60 m for a typical installation that has an expected measured fraction of 15 percent (i.e., n2/n = 0.15).

Figure 2-4 CQA effectiveness measured by the probability that the fraction of incompetent seam exceeds a target level as a function of the destructive testing interval. Source: Gilbert and Tang, 1993. Reprinted from: Schuëller, G.I., M. Shinozuka & J.T.P. Yao (eds.), Structural safety & reliability: Proceedings of the 6th international conference on structural safety and reliability, ICOSSAR '93, Innsbruck, 9-13 August 1993. 2348 pp., 3 volumes, Hfl.375/US$210.00. Please order from: A.A. Balkema, Old Post Road, Brookfield, Vermont 05036 (telephone: 802-276-3162; telefax: 802-276-3837).

This plot can also be used to modify the testing program during installation once more information is obtained. For example, suppose that the measured failure rate is closer to 20 percent than to 15 percent as the project progresses. To maintain the same level of quality in the final product (i.e., a 25-percent probability that the final rate exceeds 10 percent), the testing interval should be decreased to 50 m. Likewise, the testing interval can be increased if the measured failure rate is less than 15 percent. Finally, once the installation is complete, the expected value and the coefficient of variation of the fraction of incompetent seams remaining after repair, as determined from the probabilistic procedure, can be used to reassess the seam strength and performance reliability of the given installation.

Another issue concerning the quality of geomembrane is the presence of defects (i.e., holes and slits) along the seams. These defects could induce excessive leakage from the waste containment system. To check for these defects, nondestructive testing (NDT) is also implemented in the CQA program. In general, the ability of an NDT procedure to detect small defects is limited. Thus, even though all defects that are detected by the NDT are subsequently repaired, there are questions about the effectiveness of a given NDT procedure in reducing defects remaining in the system. In particular, are there defects in the seams that were not detected? How many and how large are these defects? Should a more costly NDT procedure that has a higher detectability (i.e., larger probability of detecting a given defect) be adopted? Probabilistic methods can again be applied to develop graphs similar to those shown in Figure 2-4 for assisting CQA procedures in using NDT to achieve a desirable level of liner-leakage reliability (Gilbert and Tang, 1993).

The purpose of the NDT is to detect defects, whereas that of the destructive test is to detect strength incompetency. However, a liner containing a larger fraction of incompetent seams is more susceptible to defects. Hence, the information derived from the two tests can be interrelated. Those previous estimates of the final fraction of incompetent seams and the remaining number and sizes of defects can be further refined by incorporating results from both tests. Probabilistic methods would be a valuable tool in treating this problem.

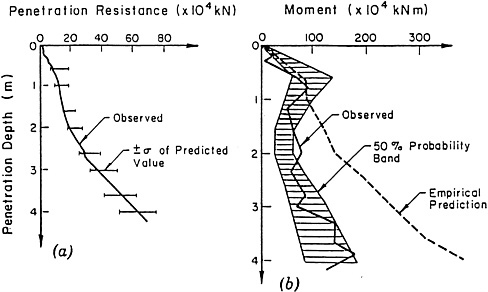

Example 8: Penetration Resistance of an Offshore Gravity Platform

Concrete gravity platforms have been used extensively for offshore oil exploration and production in the North Sea. These platforms are constructed in sheltered water near shore and then towed out to the site. The installation of a gravity platform requires penetration of “skirts” at the bottom of the platform into the seabed. Proper embedment of the skirts increases safety with respect to sliding failure and reduces the magnitudes of movement during storms. It is vital that the skirts penetrate to uniform depth all around a structure and that they achieve the necessary depth of penetration. The structure can be ballasted to achieve full and even skirt penetration, but there are limits to the magnitudes of the forces that can be applied. It is thus essential to predict the maximum possible resistance to skirt penetration with high reliability.

Borings and CPTs performed at several platform sites reveal that layers of dense sand or hard clay may be encountered at some locations, giving rise to locally very high resistance to penetration of the skirt system. Measurements of cone penetration resistance are available only at a few points in the vicinity of the proposed platform location. Even if cone penetration resistance values were known at all points, the penetration resistance could not be predicted with certainty, since the actual location of the platform can be 20 to 30 m from the planned locations.

A probability model was used by Tang (1979) to evaluate the individual sources of uncertainties and their effect on predicted penetration resistances. The CPT data recorded at discrete locations near a site were first converted to skirt penetration resistance values at those points. These values were then analyzed to yield an average penetration resistance at a given depth; the standard deviation was also estimated, to serve as a measure of the spatial variability of penetration resistance between points at the given depth. The resistance values were expected to be similar at adjacent locations, whereas they might be practically unrelated at locations that are distant from one another. The recorded cone penetration resistance values were analyzed to estimate this correlation of penetration resistance with distance. The model was then used to calculate the expected total penetration resistance, the possible unbalanced moment, and their standard deviations as functions of depth of skirt penetration. Besides accounting for the spatial variation of penetration resistance, these standard deviations included the uncertainty in correlation of skirt resistance with cone resistance, and the uncertainty due to the limited number of CPTs that were performed at the site.

The probability model was applied to the Brent D platform, where 31 CPTs were carried out within an area of 50,000 m². To describe the uncertainty of the prediction using the probability model, a band of one standard deviation of total penetration resistance (corresponding to about 68 percent probability) and the central 50 percent band of the unbalanced moment were developed. These are shown in Figures 2-5a and 2-5b. The figures show that there is good agreement between the predicted and observed values. The width of the probability bands depends on the accuracy of the correlation of skirt penetration resistance with cone penetration resistance values, the degree of spatial variation of the resistances, and the number of CPTs performed, and it would thus vary from site to site.

As an alternative to probability analysis, a commonly used empirical method to estimate the unbalanced moment assumes that one-half of the platform base will be subjected to 130 percent of the average skirt penetration resistance at a given depth whereas the other half will be subjected to only 70 percent. From this differential pressure on adjacent halves of the platform base, the unbalanced moment can be estimated as a function of penetration depth. The result is shown by the “empirical prediction” curve in Figure 2-5a. Using a 50 percent probability band provides a more realistic approach by accounting for the degree of inherent spatial variability at different depths, as shown in Figure 2-5b. The result was useful in choosing the design ballast capacity, so that a given level of reliability would be achieved with respect to satisfactory penetration of the platform.

Figure 2-5 Observed versus predicted: (a) penetration resistance; (b) unbalanced moment (Brent D). Source: Tang, 1979. Reprinted by permission of the American Society of Civil Engineers.

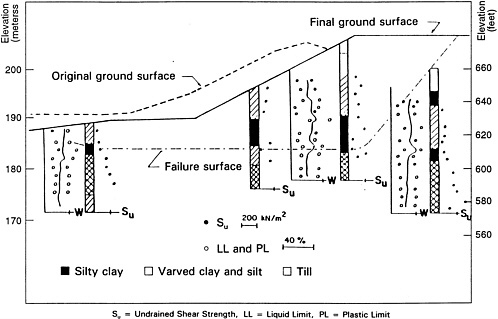

Example 9: Selecting Design Strength for Slopes in Glacial Lacustrine Soil

An example of excavation in glacial lacustrine soil, as described by Wu et al. (1975), is used to illustrate how probabilistic methods applied without good engineering judgment can yield erroneous results. It is well known that the composition and properties of glacial lacustrine soils are extremely complex. Figure 2-6 shows results of unconfined compression tests on samples taken from four boreholes at the site of an interchange along Interstate Highway 71 in Ohio. The problem that confronted the engineer in this case was how to select the undrained shear strength of the soil for the design of the slope, in view of the large variation in the measured strengths. This slope actually failed after construction, and the average shear strength at failure was about 970 psf (pounds per square foot). Given this hindsight, Wu et al. examined the validity of several hypotheses that were proposed by different engineers before construction.

The hypotheses represent different geotechnical engineers' best estimate of the strength, here represented by the mean strength, and the uncertainty associated with that estimate, here represented by the c.o.v. Hypothesis (1) assumes that the deposit consists of essentially one type of soil. Hence, all data can be combined to yield a mean strength of 1,620 psf for slope-stability analysis. The total uncertainty of the strength along a potential slip surface in this case was estimated to have a c.o.v. of 0.14 using the methodology described in Appendix C. This value of c.o.v. includes contributions from the insufficient number of soil samples, spatial variability of soil strength along the slip surface, and discrepancy between the measured strength and that mobilized in situ.

Figure 2-6 Soil information for section of I-71 studied. Source: Wu et al., 1975. Reprinted by permission of the American Society of Civil Engineers.

Hypothesis (2) considers the possible presence of slickensides in the weaker soil, which could initiate progressive failure. If slickensides are present in sufficient numbers, the mobilized shear strength may be close to the residual strength. One may infer from a study by Skempton (1964) that the mobilized shear strength in this case may be as low as 50 percent of that of intact clay. Hence, a correction factor, N, ranging between 0.5 and 0.9 may be used to reduce the measured strengths. The value of 0.9 at the high end represents materials with very few slickensides. A triangular distribution is used over the range of N between 0.5 and 0.9, with the highest likelihood at 0.5, to reflect the engineer's judgment that the site is more likely to contain many slickensides. In this case, the mean shear strength is 1,020 psf. The total c.o.v. of the mobilized shear strength is increased to 0.26 due to the additional uncertainty in the correction factor N.

Two other hypotheses assume a layer of weaker soil between elevation 186 m and 189 m as reflected by the low strengths of samples taken near elevation 186 m. In

Hypothesis (3), the measured strengths are divided into two populations, and the mean shear strength is estimated to be 1,380 psf for the assumed slip surface shown in Figure 2-6 Hypothesis (4) uses the lowest strength from each borehole to represent the weaker layer. A mean shear strength of 1,288 psf is obtained. The means and c.o.v.s of the four hypotheses are listed in Table 2-3 as “![]() ” and “Ω”, respectively.

” and “Ω”, respectively.

To choose the design shear strength using the probabilistic method, an acceptable failure probability is first chosen, here assumed to be 0.01, which corresponds to a reliability of 0.99 and a target reliability index β = 2.3. By assuming a lognormal distribution for the undrained shear strength, the design shear strength can be determined approximately by multiplying the mean strength by e^(−Ωβ). The design shear strengths for the four hypotheses are listed in Table 2-3 as “Sd”. For comparison, the ratios of design strength to mean strength, Sd/s̄, are also shown in Table 2-3. Even though the design undrained shear strength obtained for each hypothesis is based on the same level of reliability, the ratio Sd/s̄ differs, because it reflects the different degree of uncertainty (or c.o.v.) associated with each hypothesis. It should be pointed out that the main source of uncertainty in this case is associated with the soil strength along the sliding surface. Contributions from other sources, such as uncertainty in the weight of the soil, construction deficiencies, and human error, can also be included. These would increase the c.o.v. and in turn reduce the design strength. However, these other contributions are assumed to be negligible in this example.
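The calculation described above can be reproduced with a short script. The following is a minimal sketch, not the procedure used by Wu et al. (1975): it assumes the approximate lognormal relation Sd = s̄·e^(−Ωβ) stated in the text, takes the means and c.o.v.s from Table 2-3, and uses hypothetical names (`reliability_index`, `design_strength`, `HYPOTHESES`) chosen only for illustration. The standard normal quantile for a reliability of 0.99 is about 2.33, which the text rounds to β = 2.3; the rounded value is used here so that the results match the table.

```python
import math
from statistics import NormalDist


def reliability_index(failure_probability):
    """Standard normal quantile corresponding to the target reliability."""
    return NormalDist().inv_cdf(1.0 - failure_probability)


def design_strength(mean_strength, cov, beta):
    """Approximate lognormal design strength: mean * exp(-cov * beta)."""
    return mean_strength * math.exp(-cov * beta)


# (mean strength in psf, c.o.v.) for each hypothesis, as listed in Table 2-3.
HYPOTHESES = {
    1: (1620.0, 0.14),  # one statistically homogeneous deposit
    2: (1020.0, 0.26),  # presence of slickensides
    3: (1380.0, 0.16),  # presence of a weak layer
    4: (1288.0, 0.16),  # slip surface through the weakest material
}

beta_exact = reliability_index(0.01)  # about 2.33
BETA = 2.3                            # rounded value used in the text

design = {
    number: design_strength(mean, cov, BETA)
    for number, (mean, cov) in HYPOTHESES.items()
}

for number, (mean, cov) in HYPOTHESES.items():
    sd = design[number]
    print(f"Hypothesis ({number}): Sd = {sd:.0f} psf, Sd/mean = {sd / mean:.2f}")
```

Because the multiplier e^(−Ωβ) depends only on the c.o.v. once β is fixed, Hypotheses (3) and (4) share the same ratio even though their means differ.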

The results of using the four hypotheses can be seen by comparing the design strengths with the strength at failure, s = 970 psf. If a given hypothesis yields a design strength, Sd, larger than 970 psf, a design based on that hypothesis would result in failure. This is the case with Hypothesis (1). Hypotheses (2)–(4) would all result in a stable slope.

The four hypotheses are independent of the method, whether probabilistic or deterministic, used in design. Hypothesis (1), which can be considered as an incorrect assessment of the site conditions, would result in failure with either method. If a deterministic design is made with Hypothesis (1) and a conventional safety factor of 1.5 on the mean undrained shear strength of 1,620 psf, the design strength is 1,080 psf and failure would also result.
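The comparison with the strength at failure can be sketched in the same way. This is an illustrative check only: the design strengths are the Sd values from Table 2-3, the failure criterion is the one stated in the text (a design strength larger than 970 psf leads to failure), and the function names are hypothetical.

```python
STRENGTH_AT_FAILURE_PSF = 970.0


def slope_fails(design_strength_psf, strength_at_failure_psf=STRENGTH_AT_FAILURE_PSF):
    """A design fails if it relies on more strength than was available at failure."""
    return design_strength_psf > strength_at_failure_psf


def deterministic_design_strength(mean_strength_psf, safety_factor):
    """Conventional design strength: mean strength divided by the safety factor."""
    return mean_strength_psf / safety_factor


# Probabilistic design strengths Sd (psf) from Table 2-3, keyed by hypothesis number.
PROBABILISTIC_SD = {1: 1174.0, 2: 561.0, 3: 955.0, 4: 891.0}

outcomes = {number: slope_fails(sd) for number, sd in PROBABILISTIC_SD.items()}
for number, fails in outcomes.items():
    print(f"Hypothesis ({number}): {'failure' if fails else 'stable'}")

# Deterministic design with Hypothesis (1): safety factor 1.5 on the mean of 1,620 psf.
deterministic_h1 = deterministic_design_strength(1620.0, 1.5)
print(f"Deterministic, Hypothesis (1): Sd = {deterministic_h1:.0f} psf, "
      f"{'failure' if slope_fails(deterministic_h1) else 'stable'}")
```

Both design methods fail under Hypothesis (1), which is consistent with the point that the outcome is governed by the site characterization rather than by the choice of method.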

Table 2-3 Design Strengths for Four Hypotheses on Soil Conditions

| Hypothesis | Mean, s̄ (psf) | c.o.v., Ω | Sd (psf) | Sd/s̄ |
|---|---|---|---|---|
| (1) One statistically homogeneous deposit | 1620 | 0.14 | 1174 | 0.72 |
| (2) Presence of slickensides | 1020 | 0.26 | 561 | 0.55 |
| (3) Presence of a weak layer | 1380 | 0.16 | 955 | 0.69 |
| (4) Slip surface through the weakest material | 1288 | 0.16 | 891 | 0.69 |
| At failure | 970 | | | |

Source: Wu et al., 1975.

This example reemphasizes the importance of site characterization, a familiar theme among geotechnical engineers. An experienced engineer would recognize that weak materials or slickensides could be present in this soil deposit. The engineer may adopt any of the last three hypotheses and produce a successful design using either a deterministic or a probabilistic analysis. This shows that probabilistic analysis can reflect the judgment and reasoning behind each hypothesis, but it cannot replace the important role of judgment in choosing the correct hypothesis.

REFERENCES

Christian, J.T., C.C. Ladd, and G.B. Baecher. 1992. Reliability and probability in stability analysis. Pp. 1071–1111 in Stability and Performance of Slopes and Embankments—II, R.B. Seed and R.W. Boulanger, eds. Geotechnical Special Publication 31. New York: American Society of Civil Engineers.

Duncan, J.M., and W.N. Houston. 1983. Estimating failure probabilities for California levees. Journal of Geotechnical Engineering, American Society of Civil Engineers 109(2): 260–268.

Gilbert, R.B., and W.H. Tang. 1993. Quality assurance of geomembrane liners. Pp. 1985–1992 in Proceedings of the Sixth International Conference on Structural Safety and Reliability, ICOSSAR '93, Innsbruck, August 9–13, G.I. Schuëller, M. Shinozuka, and J.T.P. Yao, eds. Rotterdam: A.A. Balkema.

Hoeg, K., and W.H. Tang. 1977. Probabilistic considerations in the foundation engineering for offshore structures. Pp. 267–296 in Proceedings, 2nd International Conference on Structural Safety and Reliability, Munich, Germany. Dusseldorf, Germany: Werner-Verlag.

Hynes-Griffin, M.E. 1987. Seismic Stability Evaluation of Folsom Dam and Reservoir Project, Report 1: Summary Report. Technical Report GL-87-14. Vicksburg, Mississippi: U.S. Army Engineer Waterways Experiment Station.

Massmann, J., R.A. Freeze, L. Smith, T. Sperling, and B. James. 1991. Hydrogeologic decision analysis: 2. Applications to ground-water contamination. Ground Water 29(4): 536–548.

McGuire, R.K. 1976. FORTRAN computer program for seismic risk analysis. Open File Report 76-67. Denver, Colorado: U.S. Department of the Interior, Geological Survey.

Nielson, N., S. Nick, and D. Hartford. 1994. Risk analysis in British Columbia. International Water Power and Dam Construction 46(3): 35–40.

Skempton, A.W. 1964. Long term stability of slopes. Geotechnique 14(2): 77–101.

Sykora, D.W., J.P. Koester, and M.E. Hynes. 1991. Seismic hazard assessment of liquefaction potential at Mormon Island Auxiliary Dam, California. Pp. 247–267 in Proceedings, 23rd US-Japan Joint Panel Meeting on Wind and Seismic Effects, May 13–23, Tsukuba, Japan. NIST SP 820. Gaithersburg, Maryland: National Institute of Standards and Technology.

Tang, W.H. 1979. Probabilistic evaluation of penetration resistances. Journal of Geotechnical Engineering, American Society of Civil Engineers 105(GT10): 1173–1191.

Terzaghi, K. 1960. Report on the Proposed Storage Dam South of Lower Stillwater Lake on the Cheakamus River, B.C. From Theory to Practice in Soil Mechanics. New York: John Wiley & Sons.

Vick, S.G. 1992. Risk in geotechnical practice. Pp. 41–62 in Geotechnique and Natural Hazards. Richmond, British Columbia: BiTech Publishers, Ltd.

Vick, S.G., and L.F. Bromwell. 1989. Risk analysis for dam design in karst. Journal of Geotechnical Engineering, American Society of Civil Engineers 115(6): 819–835.

Whitman, R.V. 1984. Evaluating calculated risk in geotechnical engineering. Journal of Geotechnical Engineering, American Society of Civil Engineers 110(2): 145–188.

Wu, T.H., O. Kjekstad, I.M. Lee, and S. Lacasse. 1989. Reliability analysis of foundation stability for gravity platforms in the North Sea. Canadian Geotechnical Journal 26: 359–368.

Wu, T.H., I. Lee, J.C. Potter, and O. Kjekstad. 1987. Uncertainties in evaluation of strength of marine sand. Journal of Geotechnical Engineering, American Society of Civil Engineers 113(7): 719–738.

Wu, T.H., W.B. Thayer, and S.S. Lin. 1975. Stability of an embankment on clay. Journal of Geotechnical Engineering, American Society of Civil Engineers 101(GT9): 913–932.