2

Characterizing Exposures

Characterizing the potential or actual exposures of deployed troops to harmful agents is vital for determining the health risk of contamination, defining a level of protection if operation in contaminated areas is required, and providing medical treatment, if necessary. Characterizing exposures involves several processes: (1) detecting agents; (2) assessing and monitoring concentrations; (3) tracking time-specific locations of troops relative to these concentrations; and (4) understanding exposure pathways. Subsequent chapters treat these elements individually. However, none of these elements alone provides sufficient information for characterizing exposures in real time or for characterizing potential future exposures or past exposures. Moreover, the information must be linked in a way that provides useful input for decision makers.

Various methods have been developed for combining detection and monitoring data on agent concentrations with troop tracking data. These methods can be divided into two groups: (1) sampling strategies to detect an imminent threat (i.e., high-level exposures); and (2) sampling strategies to collect information on low-level exposures to single or multiple agents but not immediate/short-term life-threatening levels of toxic agents.

The following topics are addressed in the sections below: the need for exposure characterization; strategies for assessing exposure to harmful agents; the collection of environmental samples; the use of modeling, simulation, and decision trees; and needs, capabilities, and opportunities for the future. The final section contains key findings and recommendations for characterizing exposures.

NEED FOR EXPOSURE CHARACTERIZATION

Characterizations of exposure provide three different types of information:

- estimates of potential exposures: harmful agents likely to be present, weather patterns, and troop activities likely to bring troops in contact with agents

- estimates of actual exposures, or of exposures avoided, during deployment: monitoring of harmful agent concentrations in the deployment area, the number of troops threatened, and the implications of spatial and temporal changes of concentrations and troop locations

- assessments of exposure: a basis for understanding or predicting postdeployment health effects

Monitoring requires a network of instruments to detect and record concentrations, as well as to gather information on environmental factors, such as wind, that can affect the dispersion and concentration of the agent. Perhaps the best way to monitor the movement of an agent is with a combination of a monitoring network and dispersion simulations. But detailed information on space and time distributions of concentrations is not sufficient to characterize troop exposures. The location of the troops and the rate and direction of their movements with respect to the concentrations must also be known.

Although tracking every individual would be desirable, it may not be practical in the near future. Individuals could be tracked with GPS, but the amount of data could overload the data fusion process and equipment. Modeling and war games could be used to determine the feasibility of tracking every individual. DoD's current strategy is to track units by tracking representative samples of the individuals in that unit. If the unit has a high probability of being exposed, all members of the unit would be assumed to be at risk. If tracking and exposure information on individuals could be temporarily stored and then, at a later date, retrieved for historical purposes, this could alleviate the near-term problem of data overload and enable DoD to analyze the effects of low-level exposures to CB agents and other toxic agents on a given individual.

Exposure Information

The information required to characterize exposures includes data gained from monitoring (e.g., the nature, size, and location of the agent concentration); the tracking information on the location and previous exposures of troops; and time-activity data during the exposure.

The way these data are combined for tracking purposes will differ between short-term exposures that could pose an imminent threat and low-level exposures that could have long-term chronic health effects. Record keeping must start at the predeployment stage, with determinations of past and current exposures, health factors indicating susceptibility, and job-activity classifications. Different combinations of these data will be necessary to characterize exposure for individuals and groups.

Strategies for Characterizing Exposures

Strategies for characterizing exposures can be defined in terms of time scales: real-time, prospective, or retrospective. Real-time sampling strategies are used for determining exposures of deployed personnel (in various settings) to protect them against imminent threats. Sampling may be used in future analyses to determine the probability of an exposure that may have occurred in a recent, well-defined setting, as well as to evaluate factors that can explain observed levels of exposure. Prospective monitoring refers to sampling taken before the appearance of health effects. For example, consider exposure to benzene. Prospective sampling would identify who has been exposed to benzene prior to the appearance of health effects. Typically, the sampled population is then tracked to determine if an increase in the incidence of any disease correlates with the level of sampled benzene concentration. Retrospective sampling takes place after an exposure has occurred and is based on records or proxy indicators, which are used to determine the magnitude of the exposure. In the benzene example, retrospective sampling would be used to sample a group of people who already have a disease, such as leukemia, to determine which of them were exposed to benzene and at what levels.

The spatial scales in exposure characterization depend on whether one is tracking dispersed agents (e.g., in air or water) or nondispersed agents (e.g., in soil or food). Stand-off sampling is better suited for real-time assessments of potential threats, but stand-off sampling is often time consuming and not always reliable. Therefore, proximate samples are often collected at, or near, the point of contact.

Characterizing exposures that have chronic and latent adverse health effects from low-level (single or multiple) exposures presents many problems for strategists, policy makers, and health-care systems. These exposures add a new dimension to the requirements for operational planning and research. A study by the General Accounting Office indicated that DoD does not have a strategy for characterizing low-level exposures and that risk assessment standards need to be improved, including the standards for assessing multiple exposures (GAO, 1998).

Uncertainty, Variability, and Reliability

Current estimates of potential troop exposures to harmful agents are based on large amounts of data collected by different instruments and individuals. The data are so complex that in some cases models and simulations must be used to interpret the results. Because these data and models must be used to characterize many things (e.g., individual and group behaviors, engineered system performance, contaminant transport, human contact, and skin absorption) in a variety of geographical locations, often under less than ideal conditions, uncertainties and variabilities are "facts of life."

Uncertainty refers to error, bias, or lack of information that limits how well exposure factors (e.g., concentrations, locations, activities) can be measured. Characterizations of exposures are bound to include large uncertainties (e.g., errors, incomplete data) associated with the information collected and ultimately provided to the decision makers. Uncertainties in exposure tracking information result from high detection thresholds, false alarms, improper sampling, improper documentation, lost or incomplete records, miscalculations, and subjective interpretations of results. Variability refers to natural variations or heterogeneities in human populations and natural systems. Reliability refers to the overall precision and accuracy of an assessment and is related to both the uncertainties and variabilities in the components of the assessment.

The greater the uncertainties and variabilities in the exposure information, the lower the reliability. Although many factors can be quantified based on variance propagation techniques, uncertainties that are difficult to characterize cannot be reduced. Thus, exposure information should not be provided as single values but should be accompanied by some measure of reliability. In some cases, some uncertainties and variabilities can be resolved using decision trees and event trees (see Appendix A).
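One way to attach a reliability measure to a single value is first-order variance propagation. The sketch below assumes, purely for illustration, that an exposure estimate is the product of independent factors (here a concentration and a contact duration), so that squared relative standard deviations add. The function name and all numbers are hypothetical and are not taken from any DoD procedure.

```python
import math

def propagate_product(factors):
    """First-order variance propagation for a product of independent factors.

    factors: list of (mean, standard_deviation) tuples.
    Returns (mean, standard_deviation) of the product.
    """
    mean = 1.0
    relative_variance = 0.0
    for factor_mean, factor_sd in factors:
        if factor_mean == 0:
            raise ValueError("relative error is undefined for a zero mean")
        mean *= factor_mean
        # For independent multiplicative factors, squared relative errors add.
        relative_variance += (factor_sd / factor_mean) ** 2
    return mean, abs(mean) * math.sqrt(relative_variance)

# Hypothetical inputs: concentration in mg/m^3 and contact time in hours.
concentration = (2.0, 0.6)
duration = (4.0, 0.4)
exposure_mean, exposure_sd = propagate_product([concentration, duration])
print(f"exposure = {exposure_mean:.1f} +/- {exposure_sd:.1f} mg*h/m^3")
```

Reporting the pair (estimate, spread) rather than the estimate alone is the point of the exercise; uncertainties that cannot be expressed as a standard deviation still have to be described qualitatively.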

STRATEGIES FOR ASSESSING EXPOSURES DURING DEPLOYMENTS

The first priority in the DoD strategy for assessing exposures to CB and other harmful agents is to detect, monitor, and avoid life-threatening situations, particularly from CB agents. However, DoD recognizes that low-level exposures and multiple exposures to other hazards (e.g., TICs) during deployments must also be assessed. These assessments will require that DoD continue to modify its strategies for collecting exposure information.

A growing body of evidence in the public health field indicates that determining total exposure would greatly facilitate the identification, assessment, and management of health risks. To date, exposure assessments (see the Technical Annex to this chapter) have focused primarily on exposures to contaminants in specific media or occupational exposures to specific environmental pollutants (Krzyzanowski et al., 1990; Krzyzanowski, 1998; NRC, 1981a, 1991a; RIVM, 1989; U.S. Army, 1991; WHO, 1982a, 1983, 1989). But DoD now recognizes the need for a strategy of "total exposure assessment" (i.e., the cumulative effects of multiple contacts with harmful agents in multiple media) (GEO-CENTERS and Life Systems, 1997).

Detection and Monitoring Strategies

Chemical agent concentrations can be monitored by fixed-site monitors, portable monitors, or personal monitors. Fixed-site monitors measure chemical concentrations at specific fixed locations. Portable monitors track chemical concentrations at various locations, either as troops move around or according to a sampling strategy. Personal monitors track the concentrations to which individuals are exposed. Current DoD practice relies primarily on fixed-site monitoring by point or stand-off detection.

Fixed-Site Monitoring

Sampling strategies for monitoring civilian air pollution rely on a few stationary monitors for each area of interest (usually population centers near major point sources). Monitoring strategies have been generally limited to identifying common TICs, including particulate matter, lead and lead compounds, ozone, nitrogen dioxide, sulfur dioxide, and carbon monoxide (EPA, 1982, 1986a, 1992a, 1993, 1996a; WHO, 1982a). Military fixed-site monitoring networks are similar to their civilian counterparts (U.S. Army, 1991). During the Gulf War in 1991, an attempt was made to set up a monitoring network, essentially a garrison-based system for monitoring air, water, and soil; however, little monitoring was actually accomplished (Heller, 1998; U.S. Senate, 1992).

Limited monitoring of hazardous air pollutants (HAPs) and CB agents was conducted in the Persian Gulf area (U.S. Army, 1991), along with some other scattered monitoring (U.S. Senate, 1998). No source-specific monitoring was done of TICs, such as petroleum products, lubricants, cleansing solvents (including degreasers), off-gases from weapons discharges, outdoor and indoor nonoperational (combustion and other) sources, toxic waste dumping, stored toxic substances, or transported toxic substances. Although the military has CB defense plans and reconnaissance operations for field situations, as well as some emerging strategies (i.e., predeployment environmental sampling) for toxic agents, they were not used extensively prior to the Gulf War. Even during the Gulf War, they were used inconsistently and sporadically.

Multimedia Monitoring

Environmental media that can be monitored include air, water, food, and soil. A multimedia monitoring strategy is designed to assess the cumulative effect of exposures of a single individual to a single agent from multiple media. In general, DoD does only limited multimedia monitoring. For example, the military conducted some water and soil monitoring in the Persian Gulf area in connection with its air monitoring (Knechtges, 1998).

A few studies have examined biological aerosols (pollen, bacterial endotoxins, and mold), but infectious agents are monitored only in research studies and specialized indoor environments (e.g., hospitals). Indigenous sources of nonwarfare biological agents were not monitored during previous deployments because monitoring was not required and funding was not provided.

Using Statistics

Environmental monitoring protocols can be an essential component of research studies on health effects or exposure trends. These studies typically include statistical sampling methods, and in some cases, monitoring is stratified using probabilistic sampling methods (the type of stratification depends on the objectives of the study). DoD's CB agent reconnaissance operations also include sampling protocols designed to provide comprehensive area coverage. However, at this point, DoD uses few, if any, statistical sampling or stratification methods, which could facilitate the characterization of variations in exposures within a population.
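As an illustration of what a stratified probability sample could look like in this setting, the sketch below draws the same fraction of personnel at random from each job category, so that within-stratum variation in exposure can be estimated. The roster, the job categories, and the 25 percent sampling fraction are all hypothetical; nothing here describes an actual DoD protocol.

```python
import math
import random
from collections import defaultdict

def stratified_sample(roster, stratum_of, fraction, seed=0):
    """Draw a proportional random sample from each stratum of a roster.

    roster: list of records; stratum_of: function mapping a record to its
    stratum label; fraction: sampling fraction applied within every stratum.
    Returns a dict {stratum label: list of sampled records}.
    """
    rng = random.Random(seed)  # fixed seed so the draw is reproducible
    strata = defaultdict(list)
    for record in roster:
        strata[stratum_of(record)].append(record)
    sample = {}
    for label, members in strata.items():
        # At least one person per stratum, never more than the stratum holds.
        k = min(len(members), max(1, math.ceil(fraction * len(members))))
        sample[label] = rng.sample(members, k)
    return sample

# Hypothetical unit roster with three job-activity strata.
roster = (
    [{"id": f"INF-{i}", "job": "infantry"} for i in range(40)]
    + [{"id": f"MEC-{i}", "job": "mechanic"} for i in range(8)]
    + [{"id": f"MED-{i}", "job": "medic"} for i in range(12)]
)
sample = stratified_sample(roster, lambda r: r["job"], fraction=0.25, seed=1)
for job, people in sample.items():
    print(job, len(people))
```

Stratifying by job activity is only one possible choice; the appropriate strata depend on the objectives of the study, as noted above.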

At present, two probability-based statistical sampling protocols have been used in the U.S. Environmental Protection Agency's (EPA's) National Human Exposure Assessment Studies (NHEXAS) (Lebowitz, 1995; Pellizzari et al., 1995; Sexton et al., 1995a, 1995b) and the Total Exposure Assessment Methodology (TEAM) studies (Wallace, 1987a, 1987b, 1992). These studies were carefully designed to assess the relative magnitude and variation of exposures to commonly found TICs, such as benzene, lead, and pesticides. The NHEXAS studies include multimedia exposure assessments.

Using Monitoring Data with Exposure Models

Tracking exposures requires integrating monitoring data and time-activity data in a structured, time-dependent fashion. Computer models provide an automated process for combining, storing, and assessing the types of information that must be merged to characterize exposures. Modeling is particularly useful for interpreting environmental samples for low-dose assessments. Exposure characterization can also be improved by dispersion models (as is widely recognized by the military), models of chemical infiltrations of indoor environments, or models of indoor/outdoor ratios. An essential component of these models is accurate activity data for tracking individuals.
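A minimal sketch of this kind of merge is shown below. It assumes an hourly outdoor concentration record from a fixed-site monitor, an hourly time-activity log giving each person's microenvironment, and a fixed indoor/outdoor ratio per microenvironment. The ratios, place names, and concentrations are illustrative assumptions, not measured values.

```python
def personal_series(outdoor_by_hour, location_by_hour, io_ratio):
    """Estimate hourly personal concentrations from outdoor monitoring data.

    outdoor_by_hour: outdoor concentration for each hour.
    location_by_hour: microenvironment occupied during each hour.
    io_ratio: assumed indoor/outdoor concentration ratio per microenvironment.
    """
    if len(outdoor_by_hour) != len(location_by_hour):
        raise ValueError("monitoring and time-activity records must align")
    return [
        conc * io_ratio[place]
        for conc, place in zip(outdoor_by_hour, location_by_hour)
    ]

# Hypothetical four-hour record (concentrations in ug/m^3).
outdoor = [10.0, 20.0, 40.0, 20.0]
locations = ["outdoor", "tent", "tent", "vehicle"]
ratios = {"outdoor": 1.0, "tent": 0.5, "vehicle": 0.25}

hourly = personal_series(outdoor, locations, ratios)
cumulative = sum(hourly)  # each entry covers one hour, so units are ug*h/m^3
print(hourly, cumulative)
```

A fixed ratio ignores indoor sources and changes in ventilation, which is one reason accurate activity data and, where possible, proximate measurements matter.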

Simulations

Currently, DoD makes limited use of simulations or intelligent systems to interpret environmental samples (Knechtges, 1998). DoD is working with and developing a number of systems to simulate exposure patterns, but most of these systems are not currently available. Among these systems are the Army's Automated Nuclear, Biological and Chemical Information System (ANBACIS), the emerging Joint Warning and Reporting Network (JWARN) system, the Navy's Vapor, Liquid and Solid Tracking (VLSTRACK) model, the BIO 911 Advanced Concept Technology Demonstration (ACTD) simulation model for biological organisms, and the Joint Biological Remote Early Warning System (JBREWS) ACTD. A version of ANBACIS was used to reconstruct chemical exposures in the Gulf War (DoD, 1999b). To date, the military has not used these systems for prospective or real-time assessments, but that is the explicit goal of systems such as JWARN, which is being designed to integrate information from several detectors, monitors, and soldier-tracking devices with simulation models. Little information on how this will be done is available. Moreover, systems such as JWARN will only be used as tactical systems to monitor immediate threats. Currently there are no plans to apply them for documenting long-term health hazards (U.S. Army, 1994).

COLLECTION OF SAMPLES

Much more detailed sampling will be necessary for deployments abroad than for troops stationed in the United States, where emissions data for occupational and environmental settings are well characterized. In contrast, for most deployments abroad (with the exception of standard overseas locations where sources of harmful agents are already known and well characterized), harmful agents will have to be identified in real time and analyzed for their potential effects. Few or no industrial and/or agricultural emissions data are likely to be available for most deployments.

When environmental samples are used to characterize exposure, the accuracy of the characterization depends on the types of samples collected. No monitoring strategy can completely eliminate uncertainties about agent concentrations and provide a sufficient number of samples to characterize precise exposure variabilities among deployed troops. In many situations, only surrogate or remote samples are available. In other situations, proximate samples may be available but may not be representative of the groups or individuals for which exposure data are needed. Personal sampling and biomarkers have the potential to characterize the range of exposures experienced by individuals, but these methods also have inherent limitations.

Surrogate Samples

Surrogate exposure information is obtained by linking characteristics of each individual's environment, residence, and workplace, to historical or actual knowledge of concentrations in those locations or in similar or typical locations (Lebowitz et al., 1989). Assessments based on surrogate samples are likely to be more reliable than assessments based simply on general categories. Surrogate samples require careful calibration and are often more useful for retrospective analyses than for prospective assessments. Although surrogate samples would seem to be feasible, they have not been thoroughly tested in actual deployment settings. If surrogates are available, DoD would benefit from investigating their use for assessing CB and other harmful agents.

Stand-off Sampling

Stand-off sampling is frequently used in the environmental health field, sometimes in conjunction with dispersion modeling. As noted in Appendix D, stand-off sampling has been used for sampling both CB agents and industrial chemicals, mainly to monitor air pollution. However, measurements taken at a "safe" distance from the source of contamination are often unreliable as measures of personal or group exposures because they cannot directly measure microenvironmental contamination.

Proximate Sampling

Proximate sampling involves measuring concentrations from a location near (proximate to), but often different from, the location of the person. For example, indoor and outdoor exposures could be estimated from a single indoor monitor. Proximate sampling is very useful for evaluating total exposures in a logical way (Colome et al., 1982, 1992; EPA, 1996b; Krzyzanowski, 1998; Letz and Spengler, 1984; NRC, 1981b, 1985a; Quackenboss et al., 1991; Spengler et al., 1981; WHO, 1982a, 1982b). Personal gaseous monitors (discussed below) also can be used as proximate instruments. Monitoring (with data loggers) in locations where individuals and/or small groups are present provides information on exposures during the monitored time periods and can be used to build and calibrate models that estimate exposures in these locations at other times. The method depends both on the level of information required and on the feasibility of collecting detailed individual data and making microenvironmental and personal exposure measurements (Colome et al., 1982, 1992; Krzyzanowski, 1998; Lebowitz et al., 1989; NRC, 1981b; Quackenboss et al., 1991; Spengler et al., 1981). Temporal measurements can also be made for evaluations.

Proximate continuous monitoring (with data loggers) of various airborne pollutants can be done in the field (in garrisons and for support personnel), aboard ships, and in aircraft cockpits; the National Aeronautics and Space Administration uses some cockpit monitors (e.g., NRC, 1988, 1992). Some CB monitoring capabilities exist, and more are being developed. However, proximate chemical agent monitors did not seem to work well during the Gulf War. The problems were attributable as much to operational factors, however, as to the devices themselves (DOE, 1998; Knechtges, 1998).

Proximate (active or passive) monitors could have been used in some of the tents where kerosene space heaters, which emit excess amounts of particulate matter, nitrogen dioxide, sulfur dioxide, carbon monoxide, and hydrocarbons, were used during the Persian Gulf deployment. Instead, postdeployment studies with simulants were conducted (U.S. Senate, 1998).

Personal Sampling

The most direct approach to characterizing human exposures is personal exposure monitoring. Passive monitoring of atomic radiation has been used successfully for many decades in limited situations. However, active monitoring of toxic gases and particulate matter requires a good deal of effort (especially if a pump is involved) and is usually only practical for a limited number of subjects for short periods of time. Gaseous passive integrated monitors (such as the volatile organic compound [VOC] badges and the Palmes tubes for monitoring nitrogen dioxide) have been developed and appear to be more promising for widespread use in situations where the threat is not imminent. Personal exposure monitoring works well for VOCs, which can be generated indoors or diffuse in from outdoors (EPA, 1993; Lioy et al., 1991; Moschandreas and Gordon, 1991; Perry and Gee, 1993; Wallace et al., 1989; Wallace, 1992, 1993). Participants in the SBCCOM Man-in-Simulant Test (MIST) Program use the passive Natick Sampler to detect simulant vapors. The sampler is as thick as a common adhesive bandage and less than an inch square (NRC, 1997b). Continuous time-location monitors with data loggers have also been available for some time (Ott, 1995). GPS with data loggers (see Chapter 6) is another promising technology for linking data from field locations.

Biological Markers

Biomarkers are biological samples that can be used to assess current and past exposures and health effects of CB agents and other harmful agents. Biomarkers can be obtained from samples of blood, urine, or hair. The analyses of biomarkers for the agent of concern, its metabolites, enzymes induced, and/or adducts formed in endogenous proteins and/or deoxyribonucleic acid (DNA) can indicate the presence of agent or its metabolites in the body (Lippman, in press). To date, biological markers have not been useful for low-level exposures. Improved methods are increasing the number and sensitivity of useful biological markers, although they have been limited to higher exposures usually in occupational settings. Biomarkers are used for measuring lead, and the Centers for Disease Control and Prevention (CDC) is currently investigating their use for measuring classes of organophosphate pesticides. If successful, biomarkers could also be used for measuring other organophosphate chemicals, such as nerve agents.

Emerging sampling strategies are relying more on biomarkers; and less invasive biomarkers, such as urine, saliva, or hair, might eventually be used for monitoring exposures to a large number of harmful chemicals. Urinary biomarkers have worked very well for measuring the presence of metals, tobacco smoke, and some other pollutants. In the future, DoD may be able to evaluate more DNA adducts, possibly even after the exposure of embedded personal DNA worn by individuals as a monitor (Lebowitz, 1999).

Limited studies of biological samples were performed on U.S. troops in the Persian Gulf. Among these were two separate CDC studies of VOCs in the blood of Persian Gulf troops (U.S. Senate, 1998). Only tetrachloroethylene (PCE) was found to be higher than usual in a few individuals, and this was related to their degreasing activities. Also, the USAEHAKRAT program studied biomarkers in some troops before, during, and after their deployment from Germany to Kuwait (U.S. Army, 1991). Generally, metals either remained the same (e.g., nickel, vanadium) or were not detected (e.g., arsenic, mercury). Only lead increased in troops deployed in Kuwait (although the levels were still within normal limits). No substantial changes in VOCs were found, and most were within the range found by the National Center for Environmental Health in studies in the United States. Five VOCs were significantly lower in Kuwait (ethylbenzene, two xylenes, styrene, toluene); PCE was higher (U.S. Senate, 1998), as was acetone; benzene increased; chlorobenzene decreased; chloroform fluctuated, but increased only slightly. Polycyclic aromatic hydrocarbon (PAH) DNA adducts were higher in predeployment samples, implying that there were reduced exposures in Kuwait. A study of nine U.S. firefighters before deployment and within three weeks of their return from a six-week deployment showed levels of DNA adducts within the range reported by their laboratory for nonexposed groups.

MODELING, SIMULATIONS, AND DECISION ANALYSES

Modeling, simulations, and decision analysis can greatly improve interpretations of information obtained from CB detection equipment by providing a systematic and iterative process for assessing the value of improved or new information. To date, only limited modeling has been used to interpret chemical agent detection, and it is unclear how much DoD intends to use modeling, simulations, and decision analysis methods in deployment settings to identify and interpret information obtained from CB detection equipment. Although DoD acknowledges that these methods will be necessary for exposure and health hazard assessments (Heller, 1998), no systematic evaluation has been made of how they could be used in real time to anticipate acute exposures (especially imminent threats).

Exposure Modeling

Exposure models are being used to evaluate activities that would bring troops in contact with a contaminated medium in a specified microenvironment at a given location. To construct an exposure model, an individual or a population group is linked with a series of time-specific activities and with the geographic locations and microenvironments associated with those activities. In addition, a combination of detection and monitoring data and process models are used to define contaminant concentrations (and sometimes contact time) in each combination of location and microenvironment. An exposure model must represent peak exposure concentration, average exposure concentration, the number of times the concentration exceeds specified levels, and the cumulative intake or uptake during a series of exposures.
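The four quantities named above can be computed from a simple sequence of exposure periods, as the sketch below suggests. It assumes each period is a (duration, concentration) pair, counts an exceedance each time the concentration rises above the threshold, and converts concentration-time to inhaled intake with a constant breathing rate. The threshold, breathing rate, and data are hypothetical.

```python
def summarize_exposure(periods, threshold, inhalation_rate_m3_per_h):
    """Summarize a sequence of (duration_h, concentration_mg_per_m3) periods."""
    total_time = sum(duration for duration, _ in periods)
    if total_time <= 0:
        raise ValueError("total duration must be positive")
    # Concentration-time product, in mg*h/m^3.
    ct = sum(duration * conc for duration, conc in periods)

    # Count each excursion above the threshold once, however long it lasts.
    exceedances = 0
    above = False
    for _, conc in periods:
        if conc > threshold and not above:
            exceedances += 1
        above = conc > threshold

    return {
        "peak": max(conc for _, conc in periods),
        "average": ct / total_time,
        "exceedances": exceedances,
        "cumulative_intake_mg": ct * inhalation_rate_m3_per_h,
    }

# Hypothetical eight-hour sequence for one individual.
periods = [(2.0, 0.5), (1.0, 4.0), (1.0, 1.0), (0.5, 3.0), (3.5, 0.0)]
summary = summarize_exposure(periods, threshold=2.0,
                             inhalation_rate_m3_per_h=1.5)
print(summary)
```

Intake here covers inhalation only; dermal or ingestion pathways would need their own uptake factors.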

Exposure prediction models can take various forms. One commonly used approach is to estimate the average exposure at each location (for an individual or group) using the time budget (as collected or even predetermined by job) and integrated samplers in that location. Differences between the integrated average exposure estimate in a location and the actual exposure measured for an individual or group may be due to the uneven spatial distribution of the pollutant in the compartment, room, building, or geographic area. Differences can also result when the pollutant concentration is associated with the presence of the individual or group (e.g., the use of a stove or space heater, resuspension of particles on floors or soil, or cigarette smoking).

Follow-up questionnaires, as well as time-activity data, are used to evaluate reasons for variations to facilitate assessments of the time relationship between the presence of the sampled individual or group and the source. Based on the time spent in each sampled location, the average exposure received by the individual or group at a given location can be calculated directly. The ratio of this partial exposure component to the cumulative exposure calculated for that individual or group can then be compared with the estimates based on the integrated samplers to assess the magnitude of error. If these data are supplemented by portable, proximate, continuous sampling, the estimates are much more accurate.

Although continuous monitoring is required for acute, especially imminent, threat situations in the field, continuous monitoring on the ground will only be possible using reconnaissance vehicles, in an aircraft, on board a ship, or in a garrison situation. For long-term effects we must rely on integrated averages.

Time-weighted averages (TWAs) of personal or group exposures are typically based on the time and location information derived from the time-activity data, as well as on monitoring data. The TWA contains a discrete sequence of time periods, j, that are spent in a limited number of locations; each period has a unique duration, tj. For each time period, a concentration, cj, can be estimated from a passive or active integrated sampler or from continuous data (if available) for that location and time period. The TWA is calculated as follows:

TWA = Σ(tj cj)/Σ tj

for j = 1, . . . , number of time periods.
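The TWA calculation can be sketched in a few lines. The example assumes a time-activity diary of (location, hours) entries and one integrated-sampler concentration per location; the locations and concentrations are invented for illustration.

```python
def time_weighted_average(diary, concentration_by_location):
    """Compute TWA = sum(t_j * c_j) / sum(t_j) over the diary periods.

    diary: list of (location, hours) entries, one per time period j.
    concentration_by_location: concentration c_j assigned to each location.
    """
    total_time = sum(hours for _, hours in diary)
    if total_time <= 0:
        raise ValueError("diary must cover a positive amount of time")
    weighted = sum(
        hours * concentration_by_location[location]
        for location, hours in diary
    )
    return weighted / total_time

# Hypothetical 24-hour diary and sampler results (ug/m^3).
diary = [("tent", 8.0), ("motor pool", 4.0), ("outdoors", 12.0)]
concentrations = {"tent": 30.0, "motor pool": 90.0, "outdoors": 10.0}
twa = time_weighted_average(diary, concentrations)
print(twa)  # (8*30 + 4*90 + 12*10) / 24 = 30.0
```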

The calculated TWA can be compared with the integrated personal exposure measurement using an analysis of covariance procedure to assess the agreement between the estimated and measured exposure and to estimate the average pollutant concentrations in nonmeasured locations and their importance from the value and relative significance of the regression coefficients (Quackenboss et al., 1986; Spengler et al., 1985).

Models of Daily Intake

An alternative to exposure modeling frequently used for chemicals with long-term cumulative health effects (e.g., carcinogens) is a model of daily intake. A general EPA model states that the potential average daily intake dose, ADDpot, over an averaging time (AT), is given by:

ADDpot = [Ci/Ck] × [IUi/BW] × [(EF × ED)/AT] × Ck

where Ci is the contaminant concentration in the exposure media i; Ck is the concentration in environmental media k; IUi is the intake/uptake factor (per body weight [BW]) for exposure media i; EF is the exposure frequency (days/year) for this population; ED is the exposure duration (years); and AT is the averaging time for population exposure (days).
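A worked example may help make the terms concrete. The sketch below assumes the multiplicative form implied by these definitions: the environmental concentration Ck is scaled by the media ratio Ci/Ck, by the intake per unit body weight, and by the fraction of the averaging time during which exposure occurs (EF × ED / AT). The parameter names and values are hypothetical.

```python
import math

def average_daily_dose(ck, ci_over_ck, intake_per_bw, ef_days_per_year,
                       ed_years, at_days):
    """Potential average daily dose, in mg per kg body weight per day.

    ck: concentration in environmental medium k (e.g., mg/m^3 in ambient air)
    ci_over_ck: ratio of exposure-medium to environmental-medium concentration
    intake_per_bw: intake/uptake per unit body weight (e.g., m^3/kg-day)
    ef_days_per_year, ed_years: exposure frequency and duration
    at_days: averaging time
    """
    if at_days <= 0:
        raise ValueError("averaging time must be positive")
    exposure_fraction = (ef_days_per_year * ed_years) / at_days
    return ci_over_ck * intake_per_bw * exposure_fraction * ck

# Hypothetical six-month deployment averaged over one year.
dose = average_daily_dose(ck=0.02, ci_over_ck=0.5, intake_per_bw=0.25,
                          ef_days_per_year=180, ed_years=1, at_days=365)
print(f"{dose:.5f} mg/kg-day")
```

For a carcinogen the averaging time is usually a lifetime rather than the deployment period, which lowers the average daily dose for the same total intake.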

Models of daily intake link sources to exposure pathways. Establishing the human activity patterns associated with exposures is, thus, critical to these models.

Simulations

Simulations of CB and other toxic chemical releases and of their subsequent atmospheric dispersion are still being developed. Most current simulations deal primarily with air dispersion (Heller, 1998; U.S. Senate, 1998). Simulations for personal and group exposures must use monitoring data linked to time-location-activity data and the results of exposure modeling of different scenarios. These results could then be used to determine preventive measures, as well as to assess other scenarios, such as acute short-term vs. long-term exposures. In turn, these results could be stored for long-term retrospective health evaluations, as well as for determining short-term medical response.

NEEDS, CAPABILITIES, AND OPPORTUNITIES

DoD is currently devoting significant resources to improving its capabilities to anticipate life-threatening exposures. But DoD will also have to collect and store information on low-dose exposures to CB agents, TICs, environmental and occupational contaminants, and endemic biological organisms.

Different capabilities will be required to (1) anticipate life-threatening exposures, (2) monitor low-dose CB and other agent exposures, (3) monitor potential exposures to harmful microorganisms, and (4) maintain complete exposure records for all military personnel. Allocation of resources for these different capabilities should be based on the following factors:

- priorities among harmful agents and among multiple exposure pathways based on the dimensions of harm (e.g., severity of impacts, number of people affected, persistence of the harm) (see the Technical Annex to this chapter)

- strategies for dealing with uncertainties, including incomplete information, proxy indicators of exposure, reliability problems with equipment, and lack of real-time information

- the relative value of new equipment, increasing surveillance, and improving documentation

Tracking Strategies and Emerging Needs

For determining health effects, assessments of total exposures in microenvironments are much more meaningful than assessments based on stationary monitoring alone (Bertollini et al., 1995; Lebowitz, 1995; Pellizzari, 1991; Wallace, 1992). Total exposure assessments include measurements, or estimates, of contact with contaminants of concern through inhalation, ingestion, and dermal contact. The estimates of total exposure for deployed forces from this combination of data will probably be much higher than estimates based on either occupational or ambient pollutant concentrations (Bertollini et al., 1995; Corn, 1971; Moschandreas, 1981; NRC, 1981b, 1985a, 1985b, 1991b; Ott, 1995; Pirkle et al., 1995; Quackenboss et al., 1991; Sexton et al., 1992, 1995a, 1995b; Sexton and Ryan, 1988; Spengler et al., 1981, 1985; Wallace, 1992; WHO, 1982a, 1982b, 1983, 1989).

Real-Time Monitoring Strategies

Detecting imminent CB threats requires real-time monitoring strategies (e.g., Heller, 1998; JSMG, 1998; U.S. Army and U.S. Marine Corps, 1993). Determining CB agent concentrations before they reach troops is important for minimizing immediate casualties. A chemical stand-off system, with alarm, has been developed for the Fox reconnaissance vehicle. Also, stand-off monitoring may be simulated by models based on likely emissions from remote "imminent threat" sources (Resta, 1998).

The issue of low-level exposures must still be addressed. Because there are so many agents troops may be exposed to at low levels and so many troops that could be exposed, the low-level issue involves more than just technology and equipment. It also involves strategies for interpreting trends from measurements collected near the detection limit of the equipment and methods for using exposure data for only a fraction of the exposed population.

Continuous monitoring (with data loggers) of CB agents and other airborne toxicants can theoretically be performed in the field by reconnaissance units (also in field garrisons and by support personnel), on board ships, and inside aircraft. Sampling did not seem to work well in the Gulf War, but the sampling strategies used then were mostly haphazard, and no apparent effort was made to select the most likely sample locations or to sample media for future applications. In the future, an effort should be made to use data loggers with continuous time-location monitors and, if possible, GPS receivers.

Prospective Monitoring Strategies

Prospective monitoring strategies for acute high- and low-level surveillance monitoring for TICs have been defined, and strategies for the long-term investigation and surveillance of TICs are being developed. Because low-level monitoring is also needed for deployed personnel, these strategies could be adapted for low-level monitoring of CB agents (as they are for TICs), but the capabilities are currently even more limited than for higher levels (see Chapter 4).

Volatile Chemicals

Passive monitoring badges worn by a small number of individuals for 24- to 72-hour periods during days in the field can be used to monitor hazardous volatile chemicals (Coutant and Scott, 1982). Because of the burden associated with wearing and collecting these badges, only a small sample of deployed troops should be required to wear them. The badges could be similar to the Natick Sampler, which has been used in the MIST program to detect simulant. The permeable membrane in the Natick Sampler has also been tested successfully with a number of chemicals (NRC, 1997b). Badges could also be used as proximate monitors and as monitors for subgroups known to be sensitive to these toxic chemicals. New badges could be developed or the current badges used for monitoring chemical agents in the parts-per-million and even parts-per-billion range, a level of sensitivity adequate for many but not all chemical vapors (e.g., GB and VX). Inferential statistics could be used to test the impact of several exposure variables on personal exposures to airborne toxic agents. For instance, one could compare the personal exposure sampling results with exposure estimates based on the indirect method of combining area sampling with personal time budgets.

Aerosol and Particulate Matter

Potential health impacts of exposure to particulate matter are related to particle size. Small particles (less than 2.5 microns) are deposited deep in the lung and are potentially more damaging per unit mass than large particles. Monitoring for particulate matter is currently done with real-time (one hour) and 24-hour integrated personal, indoor, and ambient sampling techniques. The samples are then analyzed for total mass, chemical speciation (e.g., trace metals), and selected anions and cations to determine the emission sources, topography, meteorology and climate, and relationships between coarse and fine particulate distributions. Diaries or recorders for time-location and activity levels are used to index individuals (and groups) and to provide the results and individual calculations of particulate-matter dosimetry. Continuous monitors with data loggers could be placed on key individuals within a deployed group (e.g., a platoon) for a convenient (e.g., 3- to 14-day) sampling period to compile a real-time (one- or two-hour intervals, as well as cumulative) exposure file of daily time and activity data.

Summary

Prospective sampling could be used to evaluate acute and semiacute exposures of individuals and groups, either with data loggers or by electronic transfer of laboratory-analyzed data. The monitoring could be done with real-time or integrated samplers worn by individuals of concern or designated individuals within a platoon (or smaller unit), along with miniaturized GPS (with data loggers) and time-activity data loggers. The latter and any real-time monitoring on the loggers could be downloaded when convenient. As in epidemiological and occupational studies, these samples would be supplemented by predeployment and postdeployment questionnaires (for past and current exposure information) and biological samples, and the results would be entered into the electronic databases. Prospective sampling techniques are readily available for all standard chemical agents. Sampling techniques for biological agents are being developed (Ali et al., 1997; Lioy, 1999; U.S. Army SBCCOM, 1998).

Retrospective Monitoring Strategies

Estimates of prior exposures can be based on current monitoring, historical monitoring, and questionnaires. Retrospective sampling is more difficult to carry out than prospective sampling. Predeployment questionnaires, and all questionnaires asking about past exposures, are by their very nature retrospective and uncertain. The availability of modeling and simulation for retrospective exposure assessments is very limited. Some biomarkers could be used for short-term retrospective estimates.

Data Storage, Management, and Analyses

Agent monitoring data will have to be stored, managed, and analyzed. For this, the capacity and batteries of data recorders and loggers will have to be improved. Near-term downloading could be performed by the larger units; real-time acquisition, storage, and analyses could only be done in real-time, acute situations. DoD should begin working to meet the enormous challenges of collecting and storing large amounts of data. One way to reduce the demand for data acquisition and storage would be to rely more on statistical sampling schemes, simulations, and modeling, as long as the decrease in reliability associated with statistical sampling can be accounted for.

Use of Scenarios, Training, and Exercises

All aspects of the exposure characterization process must be integrated into the deployment plan and included in soldier training. Exercises that incorporate this information gathering would benefit both mission planners and troops. The first step for developing exercises would be to consider the range of exposure scenarios likely to be encountered. The scenarios should then be designed to capture the taxonomy of probable exposure situations (see Appendix A), including exposures to CB agents, TICs, and environmental and occupational contaminants.

The training exercises and/or scenario evaluation should be designed to help commanders and troops, as well as system developers (R&D groups), medical support groups, policy makers, and operations groups to clarify the issues related to the mission (see Table 2-1).

Making Exposure Assessment Operational

Exposure tracking will be useful only if it is integrated into all aspects of military operations. This means that policies must be linked to field activities at all levels of command. Specified individuals must be responsible for setting up detection and monitoring equipment, tracking troops, and assessing information collected from monitors and data storage systems. Even under low threat conditions, data collection should remain a priority up and down the chain-of-command.

TABLE 2-1 Questions To Be Answered by a CB Training Exercise

| Specific Group | Questions that Should Be Answered by a CB Training Exercise |
| --- | --- |
| Commanders | What to do? |
| Troops | How to do it? |
| R&D groups and Policy makers | What types of emerging detector and tracking technologies are available to assess exposure to the harmful agents and what impact will these technologies have on policy and training? |
| Medical support and Policy makers | What types of exposure information are needed? That is: exposure concentrations, exposure media (indoor air, ambient air, water, soil, food, etc.), duration, location, activity, etc. |
| R&D groups and Policy makers | How much information is needed? That is: which individuals (all, selected subgroups), which locations, what time intervals (days, hours, or minutes) should be represented. |
| R&D groups and Operations groups | How is the information collected and by whom, including the equipment used, the protocol for monitoring, data entry, quality control/assurance, limits of detection? How and to whom is the information transferred? |
| R&D groups | How is the information assessed before action is taken to prevent or limit exposure, including the use of simulation models to enhance measurements, issues of uncertainty and variability, likelihood and cost of false positives and false negatives? How, how much of, and where is the information stored? |

FINDINGS AND RECOMMENDATIONS

Finding. To date, exposure assessments for both civilian and military populations have focused primarily on exposures to contaminants in a specific medium (e.g., air, water, soil, food) or on exposures to specific environmental pollutants. DoD's current plans for monitoring CB agents would also be limited to a specific medium and would not be time-space specific, would not include time-activity records, and would not account for both short-term and long-term exposures. These factors would only be included in settings where deployed personnel were active (in garrisons or in the field).

Most of the sampling protocols included in CB agent reconnaissance operations are designed to provide comprehensive area coverage, rather than statistical sampling or stratification. Neither has DoD systematically evaluated how modeling, simulations, and decision analysis could be used in real time to anticipate acute exposures (especially imminent threats). DoD's current capabilities and strategies have not been structured for making optimum use of these tools.

Recommendation. The Department of Defense (DoD) should devote more resources to designing and employing both statistical sampling and sample stratification methods. Two useful examples of probability-based statistical sampling are the National Human Exposure Assessment Studies (NHEXAS) and Total Exposure Assessment Methodology (TEAM) studies. DoD should modify these sampling techniques to meet its needs and should evaluate how modeling, simulations, and decision analysis could be used in real time to anticipate acute exposures.

Finding. Personal passive monitoring of atomic radiation, in the form of dosimeters and radiation badges, has been successfully used for many decades. In some limited situations, small passive monitors have been used to detect chemicals. However, current technology limits personal monitoring of many toxic gases and particulate matter to the use of active monitoring, which is a complex process.

Recommendation. The Department of Defense should explore and evaluate the use of personal monitors for detecting chemical and biological agents, toxic industrial chemicals, and other harmful agents at low levels. If all personnel were equipped with monitors, probabilistic sampling could be used to select a subset of data for short-term, immediate use (e.g., to define the contaminated parts of the deployment area). The full data set could be used for long-term purposes (e.g., recording an individual's exposure to low-level toxic agents). Stratification of the subsets should be decided based on exposure attributes, such as location, unit assignment, and work assignment. If the logistics problems can be solved, every deployed person could ultimately wear a personal monitor.
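The probabilistic selection described in this recommendation could be sketched as a stratified random sample, in which a fixed fraction of monitored personnel is drawn from each stratum. This is a minimal illustration only: the roster, the `unit` attribute used as the stratum, the 10 percent fraction, and the function name are all hypothetical assumptions, not part of any DoD procedure.

```python
import random
from collections import defaultdict


def stratified_sample(personnel, stratum_key, fraction, seed=0):
    """Draw a random subset from each stratum (at least one person per stratum)."""
    rng = random.Random(seed)  # fixed seed so the draw is reproducible
    strata = defaultdict(list)
    for person in personnel:
        strata[person[stratum_key]].append(person)

    selected = []
    for members in strata.values():
        k = max(1, round(fraction * len(members)))
        selected.extend(rng.sample(members, k))
    return selected


# Hypothetical roster: 60 people in unit A, 30 in unit B, 10 in unit C.
personnel = [
    {"id": i, "unit": "A" if i < 60 else "B" if i < 90 else "C"}
    for i in range(100)
]
subset = stratified_sample(personnel, "unit", 0.1)
print(len(subset), "monitors selected for immediate analysis")
```

Data from the selected subset would support short-term decisions, while the records from all monitors would still be retained for long-term use. Stratifying by location or work assignment instead of unit only requires a different `stratum_key`.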

Finding. DoD is currently devoting significant resources to improving its capabilities for monitoring life-threatening exposures, but not significant exposures to other harmful agents. At this time, DoD recognizes the value of collecting and storing information on low-level exposures to CB agents, TICs, environmental and occupational contaminants, and endemic biological organisms, but has taken little action to do so. Different capabilities will be required for detecting life-threatening exposures, monitoring low-level exposures to CB and industrial agents, monitoring potential exposures to harmful microorganisms, and maintaining complete exposure records for all military personnel.

Recommendation. The Department of Defense should rank the threat levels of all known harmful agents and exposure pathways based on the dimensions of harm (e.g., health consequences, the number of personnel affected, the time to consequences). When assessing the need for and applications of new equipment, increased surveillance, and improved documentation, DoD should include these data, and, if applicable, use decision analysis methods (e.g., probabilistic decision trees) to make decisions and prepare operations orders.
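A probabilistic decision tree of the kind mentioned in this recommendation can be reduced, in its simplest form, to comparing the expected harm of each available action over the uncertain outcomes. The sketch below is purely illustrative: the two actions, the probability that an agent is present, and the harm scores (in arbitrary units) are hypothetical values chosen for the example, not data from this report.

```python
def expected_cost(outcomes):
    """Expected cost of one action; outcomes is a list of (probability, cost)."""
    total_probability = sum(p for p, _ in outcomes)
    if abs(total_probability - 1.0) > 1e-9:
        raise ValueError("outcome probabilities must sum to 1")
    return sum(p * cost for p, cost in outcomes)


# Hypothetical chance node: an agent is present with probability 0.25.
p_agent = 0.25

# Hypothetical decision node: each action lists (probability, harm score).
# Protective gear costs some performance either way; no gear is costly
# only if the agent is actually present.
actions = {
    "protect": [(p_agent, 12.0), (1 - p_agent, 8.0)],
    "no_protect": [(p_agent, 100.0), (1 - p_agent, 0.0)],
}

costs = {name: expected_cost(outcomes) for name, outcomes in actions.items()}
best_action = min(costs, key=costs.get)
print(costs, "->", best_action)
```

In practice the harm scores would come from a ranking of agents along the dimensions of harm, and the tree would have more branches (e.g., detector false positives and false negatives).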

Technical Annex

Exposure assessment is a key step in analyzing the links between contaminant sources and human health risks and, ultimately, in developing effective risk-management strategies. This annex describes the components of an exposure assessment and a "dimensions of harm scale," an approach to setting priorities among exposure assessment capabilities.

COMPONENTS OF AN EXPOSURE ASSESSMENT

The science of exposure assessment is related to toxicology and risk assessment, but in the last decade it has emerged as an independent discipline (EPA, 1992b; Lioy and Pellizzari, 1996; McKone and Daniels, 1991; NRC, 1991a, 1991b; Zartarian et al., 1997). Exposure is defined as the contact over a specified period of time of a chemical, physical, or biological substance with the visible exterior of the person, including the skin and openings into the body, such as the mouth and nostrils.

In the past, exposure assessments often relied implicitly on the assumption that exposures could be linked by simple parameters to observed concentrations in the air, water, or soil proximate to the exposed population. However, this is rarely the case. Total exposure assessments that include time and activity patterns and microenvironmental data have revealed that an exposure assessment is most valuable when it provides a comprehensive view of exposure pathways and identifies major sources of variability and uncertainty.

To assess human exposure exhaustively, investigators would have to measure or estimate the time spent by each person in the presence of each concentration of each contaminant in each exposure medium. However,

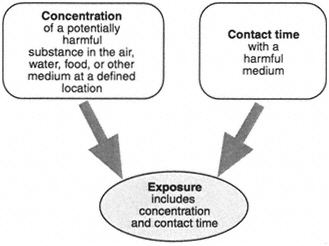

Figure 2-1 Links between concentration data and time-activity data.

in most cases, this is neither technically feasible nor even desirable. Even with precise exposure data, a determination of harm must be based both on exposure data and knowledge of an unsafe dose, which is typically available only at a population scale, not for individuals. For a specified contaminant, the most general way to define exposure is in terms of a concentration in a specified medium and the time that the person is in contact with that concentration. This concept is illustrated in Figure 2-1, which shows that an exposure characterization is based on both concentration information and time histories of the exposed population.

The standard approach to assessing exposure is to use the model equations proposed by Duan (1982). In this model, exposure is equal to the product of the concentration of the agent and the time of exposure. The sum of all exposures divided by the total time of exposure is the average exposure. This is shown in the following equation:

$$\xi_i = \frac{\sum_{j=1}^{J} c_j \, t_{ij}}{\sum_{j=1}^{J} t_{ij}}$$

where $\xi_i$ is the average exposure of person i; $c_j$ is the concentration that person i encounters in microenvironment j; and $t_{ij}$ is the time spent by person i in microenvironment j. J is the total number of microenvironments visited over the total time person i is exposed to CB agents. The successive times, $t_{ij}$, that person i spends in various microenvironments are referred to as the person's "activity budget."
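The average exposure described above, the sum of concentration times time over all microenvironments divided by the total time, can be computed directly from an activity budget. The sketch below is a minimal illustration; the microenvironment names, concentrations, and hours are hypothetical values, and the function name is an assumption made for this example.

```python
def average_exposure(activity_budget, concentrations):
    """Time-weighted average exposure for one person.

    activity_budget: time spent in each microenvironment (e.g., hours)
    concentrations:  agent concentration in each microenvironment (e.g., mg/m^3)
    """
    total_time = sum(activity_budget.values())
    if total_time <= 0:
        raise ValueError("activity budget must cover a positive total time")
    # Sum of (concentration x time) over all microenvironments visited
    weighted = sum(concentrations[env] * t for env, t in activity_budget.items())
    return weighted / total_time


# Hypothetical one-day activity budget (hours) and concentrations (mg/m^3).
concentrations = {"tent": 0.5, "vehicle": 2.0, "outdoors": 1.0}
budget = {"tent": 8.0, "vehicle": 4.0, "outdoors": 12.0}

xi = average_exposure(budget, concentrations)
print(f"average exposure = {xi:.2f} mg/m^3")
```

Because the time spent in each microenvironment acts as a weight, two people in the same area can have quite different average exposures if their activity budgets differ.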

When assessing human exposure, it is useful to focus on contact media, which include the envelope of air surrounding a human receptor; the water and food ingested; and the layer of soil, water, or other substances that contacts the skin surface, including inoculations. The magnitude and relative contribution of each exposure route and environmental pathway must be considered in an assessment of total human exposure to a potentially harmful agent to determine the best approach for reducing exposure.

Exposure assessments of deployed forces would require that the following steps be taken:

- Establish and target potentially harmful agents based on the dimensions of harm (discussed below) and on issues addressed in other studies (IOM, 1999a; NRC, 1999a, 1999b).

- Document and monitor geographic and temporal trends in exposures to the deployment population from CB agents through multiple media (e.g., air, water, soil), multiple pathways (e.g., indoor air, dust, food, water), and multiple routes (e.g., inhalation, ingestion, dermal uptake).

- Identify and gather critical data for linking exposure, dose, and health information in ways that enhance epidemiological studies, improve environmental surveillance, improve predictive models, and enhance risk assessment and risk management (NRC, 1994a).

- Assess contaminant transport in a consistent manner over a wide range of spatial and time scales, from minutes and hours to weeks and months, on local and regional scales.

- Account for interactions and coupling of media through detailed measurements and/or models.

DIMENSIONS OF HARM

Exposure assessment is a prerequisite for both risk assessment and risk management. Not every exposure necessarily causes harm or has a health effect. Controlling the exposure of human populations to CB contaminants using a risk-based approach requires both an accurate metric for the effects of contaminants on human health and a defensible process for determining which exposures will be measured and controlled (NRC, 1994a).

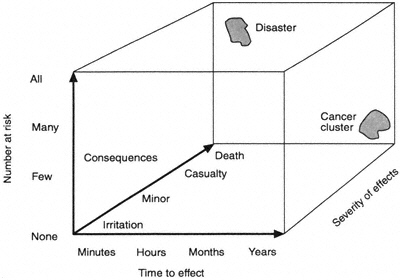

Assessment capabilities for exposures to harmful agents should be classified and prioritized before resources are allocated for reacting to potential threats and R&D projects are prioritized for new detection and monitoring technologies. A useful approach to setting these priorities

Figure 2-2 The dimensions-of-harm scale.

could be based on an index of hazard, such as the dimensions of harm developed for the Deployment Toxicology Research and Development Master Plan (Figure 2-2) (GEO-CENTERS and Life Systems, 1997). The dimensions of harm are measured along three scales—time to effect, number at risk, and severity of the consequences. Along the "Number at Risk" and "Consequences" axes, greater is a measure of importance. However, on the "Time to Consequences" axis, shorter (minutes) is generally more important than longer. For example, the effects of some agents, such as phosgene and mustard, are delayed, which may cause a delay in assuming a protective posture and thereby lead to increased morbidity and mortality.