7

The Role of Monitoring and Modeling

KEY POINTS IN CHAPTER 7

This chapter reviews monitoring and modeling and how each can best be used to increase understanding of coastal nutrient over-enrichment and develop management approaches. It finds:

- There is still great need for better technical information on status and trends in the marine environment to guide management and regulatory decisions, verify the efficacy of existing programs, and help shape national policy.

- Effective marine environmental monitoring programs must have clearly defined goals and objectives; a technical design based on an understanding of system linkages and processes; testable questions and hypotheses; peer review; methods that employ statistically valid observations and predictive models; and the means to translate data into information products tailored to the needs of their users, including decisionmakers and the public.

- There is no simple formula to ensure a successful monitoring program. Adequate resources (time, funding, and expertise) must be committed to the initial planning. The program should address all sources of variability and uncertainty, as well as cause and effect relationships. A successful monitoring program requires input from everyone who will use the data: scientists, managers, decisionmakers, and the public.

- Calibrated process models of estuarine water quality tend to be more useful forecasting (extrapolation) tools than simpler formulations, because they tend to include a greater representation of the physics, chemistry, and biology of the physical system being simulated.

- When model results are presented to managers, they should be accompanied by estimates of confidence levels.

- Agencies should develop standards for storing and manipulating hydrologic, hydraulic, water quality, and atmospheric deposition time series. This will make it easier to link models that may not have been developed for similar purposes.

- Managers are often concerned with the effects of nutrient loading on commercial and recreational fisheries and other higher trophic levels. These linkages are not always clear, and the use of modeling to understand cause and effect relationships is in its infancy. The lack of knowledge about the connections among nutrient loadings, phytoplankton community response, and higher trophic levels makes modeling difficult. New models are needed that use comparative ecosystem approaches to better understand key processes and their controls in estuaries.

In 1990, a major report on marine environmental monitoring (NRC 1990) concluded that: “There is a growing need for better technical information on the condition and changes in the condition of the marine environment to guide management and regulatory decisions, verify the efficacy of existing programs, and help shape national policy on marine environmental protection.” The situation has not improved dramatically in the decade since this statement was published.

Environmental monitoring involves the observation or measurement of an ecosystem variable to understand the nature of the system and changes over time. Monitoring can have other important uses beyond mere observation. For instance, compliance monitoring can trigger enforcement action. In research, monitoring is used to detect interrelationships between variables and scales of variability to improve understanding of complex processes. The data acquired during monitoring can be used to specify parameters needed to create useful models and to help calibrate, verify, and evaluate models.1 When planning a monitoring program, important decisions must be made before the first observation is made, including what to measure, where to measure, when, how long, at what frequency, and which techniques to use. How these decisions are made often reflects important underlying and frequently unstated assumptions concerning how the ecosystem functions.

Monitoring can play an important role in understanding and mitigating nutrient over-enrichment problems by helping pinpoint the nature and extent of problems. Because nutrient over-enrichment often results in local problems, the management responses, including monitoring programs, are typically local. This local emphasis influences the scales of measurements; resources available to the program; decisions about what, how, and when to monitor; and the comparability of monitoring results among programs.

One of the biggest challenges to effective monitoring is deciding how to allocate scarce resources. If the goal is to map a coastal characteristic with a given accuracy, then statistical techniques (e.g., Bretherton et al. 1976) provide methodologies to estimate the expected error associated with any given array of sensors. If, however, particular areas must be protected, for example a swimming beach or a fish farm, then monitoring efforts must be more focused.

Unfortunately, the spatial coherence scales of eutrophication and related processes are often very small in comparison to the body of water in which they occur, with the result that what constitutes a significant variation from normal can be difficult to determine. Also, the distribution of affected areas within a given system can be patchy. When resources to support monitoring are limited, decisions concerning where to monitor may favor economically or politically sensitive regions.

Typical monitoring programs are built around fixed devices and sampling schemes. To design an appropriate sampling scheme, an estimate of the important scales of variability must be made. Sampling does not need to take place at all of these scales, but, if a particular scale is not sampled, its effects must be averaged out of the record by the design of the measurement device. Otherwise, the resulting record would appear to have significant variability at scales where, in fact, it does not. Thus, through careful design, a program can conserve resources and sample only the important scales.

For example, both semi-diurnal and diurnal tidal variations often affect an estuary. Nonetheless, these scales may not be the dominant scales at which eutrophication or other adverse processes take place. By averaging over a tidal cycle, the important parameters may be sampled at a lower repetition rate and still retain all the important information. Additional savings may be obtained if that sampling need not occur throughout the year. In many locations, cold temperatures, reduced metabolic rates, reduced discharge, and increased wind stirring (during certain seasons) eliminate the potential development of hypoxic conditions. If monitoring such conditions is the program goal, the monitoring may be restricted or discontinued during these seasons.
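The effect of averaging over a tidal cycle can be sketched numerically. The example below is only an illustration under stated assumptions: the record is synthetic (a constant background plus a single semidiurnal oscillation with an approximate period of 12.42 hours), and the function names, amplitudes, and units are hypothetical. It compares one instantaneous reading per day with one tidal-cycle average per day.

```python
import math

M2_PERIOD_H = 12.42  # approximate period of the principal lunar semidiurnal tide, in hours

def signal(t_hours):
    """Synthetic record: constant background of 5.0 plus a tidal oscillation (hypothetical units)."""
    return 5.0 + 1.0 * math.sin(2.0 * math.pi * t_hours / M2_PERIOD_H)

def point_samples(n_days):
    """One instantaneous reading per day, always taken at the same clock time."""
    return [signal(24.0 * day) for day in range(n_days)]

def tidal_cycle_means(n_days, steps=200):
    """One value per day, each averaged over one full tidal cycle starting at the sampling time."""
    dt = M2_PERIOD_H / steps
    means = []
    for day in range(n_days):
        t0 = 24.0 * day
        means.append(sum(signal(t0 + k * dt) for k in range(steps)) / steps)
    return means

def spread(values):
    """Range (max minus min) of a series."""
    return max(values) - min(values)

raw = point_samples(30)
averaged = tidal_cycle_means(30)
print(f"range of daily point samples:  {spread(raw):.3f}")
print(f"range of tidal-cycle averages: {spread(averaged):.3f}")
```

In this sketch the daily point samples swing over nearly the full tidal range on a roughly two-week cycle, even though the background never changes. That slow swing is an artifact of sampling a 12.42-hour signal every 24 hours. The tidal-cycle averages stay essentially constant, so the lower sampling rate loses nothing about the background.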

Modeling and monitoring share a close interdependence. Modeling synthesizes the results of observational programs. As such, models provide important assistance for the development of monitoring arrays. Monitoring data, however, are necessary for the calibration, verification, and post-auditing (or evaluation) of models. They also provide the initial conditions, boundary conditions, and forcing functions for these models. Finally, they provide data for assimilation when the models are used in a predictive mode. In these cases, real data are blended with model output to keep the model from diverging too far from reality.

At least two kinds of data are necessary to run models accurately. For water quality models of receiving basins, the first category includes necessary model input parameters, such as inflows, input loads, wind vectors, hypsographic data, and tides. For watershed models, key data includes topography, precipitation, and land use characteristics. The second data category contains measured values that correspond to model output (e.g., flows, velocities, concentrations, ambient loads) for purposes of calibration, verification, and post-auditing.

An iterative process of modeling, verification through careful statistical comparison of model output with observations (Willmott et al. 1985), and model modification is necessary (e.g., Herring et al. 1999) to obtain results in which managers can have confidence. Useful models require close interaction among model developers, field scientists who monitor and describe the real world, and theoreticians who explain the observations. Once quantitative measures of a model’s ability to calculate the state of the system on certain space and time scales are specified, managers can determine whether the observed level of reliability is acceptable.
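As a rough illustration of what "quantitative measures" of model skill might look like, the sketch below computes three common statistics for paired observations and model output: mean bias, root-mean-square error, and the index of agreement d proposed by Willmott. The chapter does not say which statistics it has in mind, so this choice is an assumption, and the function name and the dissolved oxygen values are hypothetical.

```python
import math

def skill_metrics(observed, predicted):
    """Return mean bias, RMSE, and Willmott's index of agreement d for paired values."""
    if len(observed) != len(predicted) or not observed:
        raise ValueError("observed and predicted must be non-empty and the same length")
    n = len(observed)
    obs_mean = sum(observed) / n
    bias = sum(p - o for o, p in zip(observed, predicted)) / n
    squared_error = sum((p - o) ** 2 for o, p in zip(observed, predicted))
    rmse = math.sqrt(squared_error / n)
    # "Potential error": how far predictions and observations each sit from the observed mean.
    potential = sum((abs(p - obs_mean) + abs(o - obs_mean)) ** 2
                    for o, p in zip(observed, predicted))
    d = 1.0 - squared_error / potential if potential > 0 else float("nan")
    return {"bias": bias, "rmse": rmse, "d": d}

# Hypothetical bottom-water dissolved oxygen (mg/L): field observations vs. model output.
obs = [6.1, 5.4, 4.2, 3.0, 2.5, 3.8]
mod = [5.8, 5.6, 4.6, 3.5, 2.2, 3.9]
metrics = skill_metrics(obs, mod)
print({name: round(value, 3) for name, value in metrics.items()})
```

A value of d equal to 1 indicates perfect agreement, and smaller values indicate poorer agreement. Reporting such statistics for the space and time scales of interest gives managers a concrete basis for judging whether a model is reliable enough for its intended use.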

It can be argued that no model is truly able to predict, that is, to provide perfect estimates of future conditions. The term “predict” is used in this chapter to mean “forecast” or “estimate” for future or hypothetical conditions. The accuracy of such predictions will vary depending on the degree of integration of those who monitor with those who model. Prediction is the ultimate management use of models. While one can argue about the relative predictive skill of existing models, it is clear that prediction is an important goal justifying their development.

The detail and complexity of a model is often reflected in the amount of data required to initialize and run the model. Many mathematically simple models require extensive and expensive monitoring programs to provide data before they can produce accurate results. Thus, the level of model sophistication does not necessarily indicate savings in the resources that must be devoted to monitoring in order to produce accurate hindcasts or predictions.

Finally, mention should be made of the use of data assimilation. Numerical models have a tendency for their computation results to drift away from reality as they are run for longer and longer periods of time. One method used to correct this problem is to assimilate field data as they become available. If one observes a discrepancy between observations and model output, the model state is pulled back toward the observed state of the system being modeled. There exist many numerical techniques for achieving this goal. Meteorologists have used this approach for many years, and physical oceanographers and biological oceanographers are beginning to incorporate it into their models.
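One of the simplest assimilation techniques is "nudging," in which the model state is moved part of the way toward each new observation. The sketch below is a toy illustration, not a method taken from this chapter. The one-variable model, its rate constants, the observation interval, and the gain of 0.5 are all hypothetical. A "true" system is simulated with one decay rate, and a model with a deliberately wrong decay rate is run with and without assimilation.

```python
def run(decay_rate, steps, dt=0.1, x0=10.0, forcing=1.0, observations=None, gain=0.5):
    """Integrate dx/dt = forcing - decay_rate * x with forward Euler.

    If observations (a dict mapping step number to observed value) is given,
    the state is nudged toward each observation as it becomes available.
    """
    x = x0
    history = [x]
    for step in range(1, steps + 1):
        x += dt * (forcing - decay_rate * x)
        if observations is not None and step in observations:
            x += gain * (observations[step] - x)  # pull the state toward the observation
        history.append(x)
    return history

def mean_abs_error(model, reference):
    """Average absolute difference between two equally long series."""
    return sum(abs(m - r) for m, r in zip(model, reference)) / len(reference)

STEPS = 200
truth = run(decay_rate=0.20, steps=STEPS)                  # stands in for the real system
field_data = {s: truth[s] for s in range(10, STEPS + 1, 10)}  # error-free data every 10 steps
free_run = run(decay_rate=0.30, steps=STEPS)               # wrong rate, no assimilation
nudged_run = run(decay_rate=0.30, steps=STEPS, observations=field_data)

print(f"mean error without assimilation: {mean_abs_error(free_run, truth):.2f}")
print(f"mean error with nudging:         {mean_abs_error(nudged_run, truth):.2f}")
```

The nudged run stays closer to the "true" system than the free run, but it begins to drift again between observations because the underlying model error has not been corrected. Operational schemes also weight observations by their estimated uncertainty, which this sketch ignores.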

It must be remembered, however, that models are not a substitute for measurements. A properly calibrated and verified model can be useful for producing estimates of future conditions and guiding management, but field measurements, when available, are always superior to model computations.

INTRODUCTION TO MONITORING

Monitoring provides long-term data sets that can be used to verify or disprove existing theories developed from shorter, more focused data sets. Monitoring characterizes the scales of variability, in both space and time, thus allowing modification of sampling schemes to maximize the use of available resources. In particular, monitoring allows determination of long-term climatic scales of change, which can be mistaken for trends in shorter records.
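The risk of mistaking a slow natural cycle for a trend can be shown with a small numerical sketch. The series below is synthetic and purely hypothetical: an annual mean that follows a 20-year cycle with no long-term trend at all. A straight line is fitted by ordinary least squares, first to a five-year record and then to a 60-year record.

```python
import math

CYCLE_YEARS = 20.0  # hypothetical period of a slow natural (e.g., climatic) cycle

def annual_value(year):
    """Synthetic annual mean: a slow cycle around 10.0 with no underlying trend."""
    return 10.0 + 2.0 * math.sin(2.0 * math.pi * year / CYCLE_YEARS)

def ols_slope(x, y):
    """Slope of the ordinary least-squares line through the points (x, y)."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    return sxy / sxx

def fitted_trend(start_year, n_years):
    """Fitted trend (units per year) for a record of n_years beginning at start_year."""
    years = list(range(start_year, start_year + n_years))
    return ols_slope(years, [annual_value(y) for y in years])

short_record = fitted_trend(0, 5)   # five years on the rising limb of the cycle
long_record = fitted_trend(0, 60)   # three full cycles

print(f"apparent trend, 5-year record:  {short_record:+.3f} per year")
print(f"apparent trend, 60-year record: {long_record:+.3f} per year")
```

The short record shows a strong apparent upward trend that is entirely a product of where the record falls within the cycle. The long record, spanning several cycles, yields a fitted trend close to zero.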

Exploratory data analysis suggests that carefully manipulated data sets from monitoring programs, along with a fair share of serendipity, may result in new insights into functional relationships among variables of an ecosystem (Tukey 1977). While this is clearly an avenue of productive future research, the number of examples of such insight remains small.

Focused monitoring programs are generally established in response to, rather than in anticipation of, a problem. This means that baseline information can be missing from a monitored region. Once established, monitoring programs are useful for identifying events, but unless maintained for long periods, their utility for determining the existence of a trend is far less. In a similar sense, they are also useful for monitoring the effectiveness of remediation activities, if maintained for sufficiently long periods (i.e., periods longer than the natural scales of variability of the system).

Long-term monitoring programs are necessary to isolate subtle changes in the environment. Only through data gathering programs that are sufficiently interdisciplinary in their design will it be possible to develop and test hypotheses concerning the processes and impacts of eutrophication.

As pointed out by the 1990 National Research Council report Managing Troubled Waters: The Role of Marine Environmental Monitoring, monitoring is generally carried out to gather information about regulatory and permit compliance, model verification, or trends in important environmental or water quality parameters. These data can play an important role in: 1) defining the severity and extent of problems, 2) supporting integrated decisionmaking when coupled with research and predictive modeling, and 3) guiding the setting of priorities for management programs. Because establishing and maintaining targeted monitoring programs is expensive and complex, greater use of environmental data collected for a variety of purposes is gaining appeal. For example, data collected through the National Pollutant Discharge Elimination System permitting process (the process of permitting point source pollutant discharges in compliance with the Clean Water Act) have demonstrated great utility for developing and evaluating the effectiveness of regional stormwater management plans and for characterizing local stormwater discharges in diverse settings (Brush et al. 1994; Cooke et al. 1994). These efforts to use data derived for regulatory purposes can provide valuable insights into the impact of land use on the concentration of a variety of constituents and thus have implications for developing loading estimates and other watershed management applications. Efforts should be made to encourage greater accessibility to similar permitting data, including associated metadata, and compliance with accepted collection and analysis protocols.

Ever widening use of electronic storage and management of data sets and the greater accessibility provided by the internet hold great potential for reducing the cost of environmental monitoring by obtaining full value from data already being collected. Such a shift in philosophy, while already under way, would be facilitated if the basic guidelines and philosophies espoused herein are more fully integrated with established or contemplated regulatory monitoring plans. The committee believes that monitoring data are frequently not accessible to all who could benefit from their use (Chapter 2). Data management and the development of informational synthesis products should, therefore, be a major part of all monitoring programs—federal, state, and local. These data and syntheses should be available quickly to all users who could benefit from them at a reasonable cost. The internet offers a relatively simple, widely accessible route for distribution.

ELEMENTS OF AN EFFECTIVE MONITORING PROGRAM

Effective marine environmental monitoring programs must have the following features: clearly defined goals and objectives; a technical design that is based on an understanding of system linkages and processes; testable questions and hypotheses; peer review; methods that employ statistically valid observations and predictive models; and the means to translate data into information products tailored to the needs of their users, including decisionmakers and the public (NRC 1990).

Monitoring programs are costly undertakings and need to be carefully planned with specific goals in mind. They often are established in response to strong public pressure, leading to situations in which program managers are expected to perform good science in a situation driven not by scientifically justifiable design but by political expediency. Legal mandates may cause duplication of effort, leave gaps in the required data records, and monitor the wrong system measures. Under the best of conditions, only a limited number of measures can be monitored for a sustained period of time. If resources are inappropriately used, the situation worsens.

A related problem arises with poor sampling design. If the wrong questions are being asked, undersampling may result in not being able to sort out a weak anthropogenic signal from the natural variations in the environment. Alternatively, oversampling may result in wasted resources. Problems arise with sampling spatial scale, as well as temporal scale. Monitoring for regulatory compliance is often inappropriate for determining regional and national trends.

There is no simple formula that will ensure a successful monitoring program, but much has been written on the topic over the years. In planning a monitoring system, there must be implicit decisions about how monitoring information will be used to make decisions (Box 7-1). It is imperative that all stakeholders—public, managers, policymakers, and scientists—be involved in the plan’s development, understand the implications of the various options, and agree on what results can be expected at what times in the course of the program. It is important that everyone involved harbor realistic expectations. Natural systems are complex and highly variable in time and space. Risk-free decisionmaking is an impossible goal (NRC 1990).

Current monitoring programs generally do not provide integrated data across multiple natural resources at the different temporal and spatial scales needed to develop sound management policies (CENR 1997). A number of issues must be addressed in order to enhance the probability of success of future monitoring programs (NRC 1990; CENR 1997; Nowlin 1999).

First, it is imperative that adequate resources (time, funding, and expertise) be committed to the initial planning of a monitoring program if the probability of success is to be maximized. These resources must be used to design a program that incorporates all sources of variability and uncertainty, as well as the best scientific understanding of cause and effect relationships. Objectives and information needs must be defined before the program design decisions can be made rationally. Successful monitoring programs strive to gather the long time series data needed for trend detection, but at the same time must be flexible to allow reallocation of resources during the program. They need to use adaptive strategies for incorporation of future scientific and technological advances. Finally, expectations and goals must be carefully defined, clearly stated, and agreed to by all involved. These actions require input from everyone who will use the data: scientists, managers, policymakers, and the public. As is often the case, resource limitations may play a role in the monitoring program strategy. Effective priority setting must be based on a full understanding of the impact such decisions will have on the reliability of key model output or other information derived from the monitoring program.

BOX 7-1

Serious signs of environmental degradation, including much publicized episodes of oxygen deficiency in the Kattegat during the 1980s, led the Danish government to create the Action Plan Against Pollution of the Danish Aquatic Environment with Nutrients in 1987. The Action Plan called for total discharges of nitrogen and phosphorus from agriculture, individual industrial outfalls, and municipal sewage works to be reduced by 50 percent and 80 percent, respectively. Because of great uncertainty about the sources of discharges as well as about the effectiveness of intervention measures, three related programs were initiated: 1) a nationwide monitoring program, 2) a marine research program, and 3) a wastewater research program. Using $1.8 million, universities, consulting firms, the Danish environmental protection agencies, and local governments created a highly effective, joint effort that has resulted in a very broad and detailed understanding of coastal nutrient over-enrichment. The Danish Nationwide Monitoring Program was undertaken to: 1) describe the quality of the aquatic environment; 2) determine where, how, and why environmental changes occur; 3) assess the effectiveness of environmental programs; and 4) determine compliance with water quality objectives. Fundamental requirements of the program were to describe geographical variation and short- and long-term temporal variation so that impacts could be identified and defined with an acceptable degree of certainty. The scope of the monitoring program was extensive and included descriptions of oxygen concentrations, marine sediments, benthic fauna, benthic vegetation, zooplankton, phytoplankton, nutrient concentration and loading, atmospheric inputs, and hydrographic conditions. Monitoring of all estuaries, bays, and coastal waters was undertaken by county governments, while open marine waters were monitored by the National Environmental Research Institute.

The monitoring program incorporated data from several other national forestry, fishery, and meteorological institutes. Study results were stored in a centralized, systematic database designed to provide ready access to all potential end-users, from government officials to the public. Data and metadata are compiled and presented in a goal-oriented manner (Figure 7-1). A final component of the Action Plan was to evaluate the results of the Nationwide Monitoring Program after its completion, and then refine the program to better meet national nutrient pollution reduction goals. The evaluation resulted in several suggestions for modifying components of the monitoring program. One important conclusion was to better couple modeling and monitoring efforts. Choice of monitoring sites often can be guided by modeling, both with respect to data requirements for model development and with respect to sites identified by the model as being sensitive to nutrient over-enrichment. Another conclusion was that

Retrospective analyses of historical data and preliminary research are invaluable in steering the design of sampling strategies. Planners must clearly define what will be considered a meaningful change in the parameters selected for measurement, in light of expected levels of natural variability. At this point, based on existing understanding of how the ecosystem functions, selection of parameters to measure can be made. These decisions will necessarily be influenced by existing technology, signal to noise ratios in the environment, and the existence of surrogate variables.
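Once a "meaningful change" and the expected natural variability have been specified, a first estimate of the required sampling effort follows from a standard power calculation. The sketch below uses the textbook normal-approximation formula for comparing the means of two periods (for example, before and after a management action). It assumes independent, normally distributed samples with equal variance in both periods. Environmental records are usually autocorrelated, so the result is best read as a lower bound. The function name and the default significance and power levels are illustrative choices, not values from this chapter.

```python
import math
from statistics import NormalDist

def samples_per_period(meaningful_change, natural_sd, alpha=0.05, power=0.80):
    """Samples needed in each of two periods to detect a given change in the mean.

    Uses n = 2 * ((z_(1 - alpha/2) + z_power) * sd / change)^2 for a two-sided test,
    assuming independent, normally distributed samples with equal variance.
    """
    if meaningful_change <= 0 or natural_sd <= 0:
        raise ValueError("meaningful_change and natural_sd must be positive")
    z_alpha = NormalDist().inv_cdf(1.0 - alpha / 2.0)
    z_power = NormalDist().inv_cdf(power)
    n = 2.0 * ((z_alpha + z_power) * natural_sd / meaningful_change) ** 2
    return math.ceil(n)

# Hypothetical case: detect a 1.0 mg/L change against two levels of natural variability.
for sd in (1.0, 2.0):
    print(f"natural SD = {sd} mg/L -> {samples_per_period(1.0, sd)} samples per period")
```

Because the required number of samples grows with the square of the noise-to-signal ratio, doubling the natural variability roughly quadruples the sampling effort. This is one reason the choice of variable and the definition of a meaningful change need to be settled before the program begins.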

If the motivation for the monitoring program includes management and regulatory issues, the decision of what to monitor will depend on the final desired state of the system and preconceived ideas of how the system functions. Monitoring strategies for nutrients are often more complicated than the strategies commonly applied to other pollutants (e.g., toxins or carcinogens). For example, if a particular carcinogen is being regulated in order to maintain its concentration below a given level in an estuary, then this chemical constituent’s concentration is the variable targeted for measurement. Similarly, if an ambient nutrient concentration in a stream is to be used to help calculate the nutrient load that stream is contributing to a downstream receiving body, then such a concentration may be targeted for measurement. Unfortunately, this similarity does not hold for monitoring nutrient concentrations in the actual coastal receiving body, because the effects of nutrients on an ecosystem are complex and not necessarily measurable. For example, if an estuary is nitrogen limited, primary productivity may be stimulated by nitrogen loading. This increased primary productivity will then remove nitrogen from the water column at a high rate and tie it up in organic matter. Thus the ambient nitrogen concentration in the water column of the estuary may never rise significantly, or remain elevated long enough to be observed, even as eutrophication takes place. Consequently, the ambient concentration of a nutrient in receiving waters rarely reflects the degree to which the body has been affected by nutrient over-enrichment.
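The nitrogen-limited case can be illustrated with a minimal nutrient-phytoplankton box model. This is a textbook-style sketch, not a model drawn from this chapter, and every parameter value is hypothetical. Dissolved nitrogen N is supplied at a constant load and taken up by phytoplankton biomass B following a saturating (Michaelis-Menten) curve, and biomass is lost at a constant specific rate. The model is run to steady state for two loads that differ by a factor of four.

```python
def uptake(n, vmax=1.0, half_sat=1.0):
    """Specific nitrogen uptake rate (per day) as a saturating function of concentration."""
    return vmax * n / (half_sat + n)

def simulate(load, loss=0.2, days=200.0, dt=0.01, n0=1.0, b0=1.0):
    """Forward-Euler integration of
         dN/dt = load - uptake(N) * B
         dB/dt = uptake(N) * B - loss * B
    Returns (N, B) at the end of the run, in hypothetical nitrogen units.
    """
    n, b = n0, b0
    for _ in range(int(round(days / dt))):
        growth = uptake(n) * b
        n, b = n + dt * (load - growth), b + dt * (growth - loss * b)
    return n, b

n_low, b_low = simulate(load=1.0)
n_high, b_high = simulate(load=4.0)

print(f"load 1.0: ambient N = {n_low:.3f}, biomass = {b_low:.2f}")
print(f"load 4.0: ambient N = {n_high:.3f}, biomass = {b_high:.2f}")
```

In this idealized system the steady-state ambient nitrogen concentration is set by the balance between uptake and loss rates and does not depend on the load, while biomass scales directly with the load. A monitoring program that measured only ambient nitrogen would see no difference between the two cases, even though one carries four times the algal biomass.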

Monitoring is often targeted to study more characteristics than simply the eutrophication of a particular coastal waterbody. Often, monitoring programs focus broadly on health of the ecosystem. The decision frequently is made to monitor a variable that is believed to integrate the effects of numerous processes (Naiman et al. 1998). Such variables could be the areal extent of seagrass beds, the number of commercially harvestable oysters, or the number of waterfowl nesting in a region. The choice of which variables to monitor makes a statement, both about the values that the manager and advisors share and about their inherent beliefs concerning how the system functions. If eutrophication is a concern and the extent of seagrass beds is the observable monitored, there is an inherent belief that this characteristic parameter varies with the degree of nutrient enrichment of the system. The economic and ecological impacts of coastal eutrophication are often not demonstrable from the available scientific data (Chapter 4). Therefore, monitoring should include biological, physical, and chemical properties on all relevant time and space scales. Monitoring should be on scales appropriate to capture all variability and linkages between variables. Often, this will mean that biological and chemical measurements will need to be made on finer scales than is presently the norm.

The duration of any monitoring program is particularly important. Since the purpose of monitoring involves, among other things, the detection of trends, the length of monitoring must be sufficiently long to allow separation of naturally occurring trends from anthropogenically induced changes. Unfortunately, the political will to maintain long-term funding for monitoring programs is often lacking because such programs rarely (and were never intended to) produce major breakthroughs in understanding. The U.S. Geological Survey (USGS) stream monitoring program has provided excellent data on stream flow and nutrient content for many years. These long data sets allow monitoring of changes in runoff characteristics on decadal time scales and development of statistical models of discharge and load. But the gradual reduction of this network over recent years, primarily because of budget pressures, has had dramatic effects, reducing our capability to estimate flow. The data collected by USGS are invaluable, and continuation of this monitoring is essential. However, the USGS monitoring network was not designed specifically to assess inputs to coastal regions. The committee concludes that there are major missing pieces in the resultant data set that are needed to support the management of healthy coastal ecosystems; for instance, monitoring sites “below the fall line” (the transition point between lowland and upland portions of rivers, marked by waterfalls and other rocky stretches that limit navigability) are few and far between. Since many of our older, eastern cities arose at these transition points, the network is failing to cover areas down river containing significant population centers. An important aspect of any discussion of national monitoring should be expansion of the USGS monitoring program so that it better assesses nutrient inputs to estuaries and tracks how these change over time.

The distinction between monitoring and research can be vague. Few programs have been able to combine the need for multiple use measurements effectively, but careful evaluation of multiple tier measurements (NRC 1990; CENR 1997) will help alleviate these problems. A careful program of quality control must be established at the outset, and a data management structure to convert data into knowledge must be designed. This will include analysis, interpretation, and modeling and must be carefully attuned to the myriad sources of data and uses of the resultant knowledge. Finally, rapid dissemination of data and knowledge products to all users is essential.

The final step in design of any successful monitoring program should be establishment of a process of independent review and, if necessary, protocol modification. Such reviews are necessary for monitoring programs to take advantage of new measurement and analysis techniques, and they help determine if programs are effectively answering the questions for which they were designed.

Much of the preceding discussion can be applied equally to national, regional, and local monitoring programs. Although the national scale of nutrient over-enrichment of coastal waters in recent years has been documented (NOAA 1999a), the United States still lacks a coherent and consistent strategy for monitoring the effects of nutrient over-enrichment in coastal waters. This seriously constrains the ability to assess the effects and costs of eutrophication regionally or nationally. The National Oceanic and Atmospheric Administration (NOAA) National Estuarine Eutrophication Assessment (Bricker et al. 1999) is an admirable effort, but it is limited by the inconsistency of data collection. To avoid similar limitations in the future, a national monitoring strategy for the United States should be implemented by all relevant agencies. Such a national monitoring strategy should recognize regional differences and should be based on a classification scheme that reflects an understanding of the similarities and differences among estuaries, as discussed in Chapter 6. The development of such a classification scheme is a major research challenge.

DEVELOPING QUANTITATIVE MEASURES OF ESTUARINE CONDITIONS

The primary quantitative indicators of eutrophication may include variations in algal composition, elevated concentration of chlorophyll a, and an increase in the extinction coefficient for a given waterbody (which is adequately reflected by the depth to which light sufficient to support photosynthesis penetrates). Because algal composition changes are typically determined with expensive techniques such as microscopy or pigment analyses, this indicator is less useful for large-scale screening analyses. Secondary indicators of eutrophication include changes in the dissolved oxygen regime of the system; changes in the areal extent of seagrass beds; and incidence of harmful algal blooms or nuisance blooms and changes in their frequency, duration, and areal extent.

An assessment of the condition of the nation’s estuarine and coastal waters needs to be based on consistent, broad-scale monitoring. This requires a reduced suite of measures that are easy to obtain, easy to calibrate, and that do not require massive commitment of resources to cover the broadest geographic region. For mesoeutrophic (or less impacted) systems, annual measures in July or August, when symptoms could be expected to be most severe, should suffice to characterize worst-case conditions. For eutrophic or hypereutrophic systems, more frequent measurements are needed, at least twice per year. Light conditions, chlorophyll a, gross primary production and respiration from diurnal patterns in dissolved oxygen content, and mean dissolved oxygen content likely could all be obtained through month-long near-surface and near-bottom deployment of off-the-shelf sensors and digital recording packages.
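The idea of deriving gross primary production and respiration from the diurnal dissolved oxygen pattern can be sketched as follows. This is a deliberately simplified version of the classic open-water diel oxygen approach, under assumptions that would not hold in a real deployment: it ignores air-water gas exchange and water movement, and it assumes respiration is the same by day as by night. The function name and the synthetic 24-hour record are hypothetical.

```python
def diel_metabolism(do_readings, daylight_flags):
    """Estimate daily gross primary production (GPP) and respiration from hourly oxygen data.

    do_readings:    dissolved oxygen (mg/L) at hourly intervals over 24 hours (25 readings).
    daylight_flags: one boolean per hourly interval, True if the interval is in daylight.
    Returns (gpp, respiration) in mg O2 per liter per day.
    """
    if len(do_readings) != len(daylight_flags) + 1:
        raise ValueError("need exactly one more reading than intervals")
    rates = [later - earlier for earlier, later in zip(do_readings, do_readings[1:])]
    night = [r for r, lit in zip(rates, daylight_flags) if not lit]
    day = [r for r, lit in zip(rates, daylight_flags) if lit]
    if not night or not day:
        raise ValueError("record must include both daylight and dark intervals")
    resp_hourly = -sum(night) / len(night)          # mean oxygen decline in the dark
    gpp = sum(r + resp_hourly for r in day)         # daytime change corrected for respiration
    respiration = resp_hourly * len(rates)          # respiration applied to every interval
    return gpp, respiration

# Synthetic record: oxygen falls 0.2 mg/L per hour in the dark and rises
# 0.3 mg/L per hour in daylight (06:00 to 18:00).
daylight = [6 <= hour < 18 for hour in range(24)]
oxygen = [6.0]
for lit in daylight:
    oxygen.append(oxygen[-1] + (0.3 if lit else -0.2))

gpp, respiration = diel_metabolism(oxygen, daylight)
print(f"GPP = {gpp:.2f} mg O2/L/day, respiration = {respiration:.2f} mg O2/L/day")
```

For the synthetic record, production exceeds respiration, which is consistent with the net oxygen gain over the day. With real sensor data, the gas exchange term and sensor drift would need to be handled before such estimates could be trusted.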

Although federal, state, and local agencies conduct monitoring activities at thousands of sites nationwide, the efforts are not sufficiently coordinated to provide a comprehensive assessment of the trophic status of U.S. coastal ecosystems (see “The State of the Nation’s Ecosystems”; http://www.heinzctr.org/). In response to this shortfall in our assessment capability, the Environmental Monitoring Team, established by the Committee on Environment and Natural Resources, has recommended a three-tiered conceptual framework for integrating federal environmental monitoring activities. The first tier includes inventories and remote sensing; the second tier includes national and regional surveys; the third tier includes intensive monitoring, research, and modeling. Integration across tiers should provide the understanding that will enable evaluation of the status, trends, and future of the state of the environment. This three-tiered framework is a sound approach for gathering and integrating information, and can be applied to monitoring coastal systems.

A broad-scale assessment of the trophic status of the nation’s estuaries should be based on inventories and remote sensing at Tier I sites. Tier I sites should be representative of the 12 major types of coastal systems described in Chapter 6, the four major estuarine circulation types, and the seven biogeographic provinces. It would also be useful to sample both systems believed to be generally healthy and systems believed to have experienced nutrient-related problems.

No such consistent, coherent monitoring program now exists. One program, the Environmental Protection Agency’s (EPA) Environmental Monitoring and Assessment Program, is at a similar scale, but with broader (non-coastal) goals and sampling protocols. Local monitoring of these variables occurs, for example, at National Estuary Program sites, but the programs are subject to funding vagaries. The Committee on Environment and Natural Resources framework document recommends monitoring some 20 to 25 sites to identify national trends, far fewer than necessary to adequately represent the diversity of estuarine types, circulation types, and biogeographic provinces needed to fully characterize the status of coastal waters. Rather, up to 25 sites will be required as Tier III, or index sites, to conduct the temporally and spatially intensive monitoring and process-based research necessary to develop predictive models able to determine cause and effect relationships.

Monitoring to increase basic scientific understanding of the ecosystem and to validate process-based predictive models should be carried out at an intermediate number of Tier II sites. At these sites, basic data should be collected to determine the food web structure, including secondary producers, primary production structure, nutrient dynamics, hydrodynamics, and details about external nutrient loads. Estuaries within NOAA’s National Estuarine Research Reserve System (NERRS) and/or EPA’s National Estuary Program (NEP) might be appropriate Tier II sites. However, the current suite of sites under these two programs was not selected with nutrient over-enrichment or the variability of estuarine types in mind. If nutrients are the major interest driving study of the Tier II sites, many new sites will need to be added, and some of the current NERRS and NEP sites could probably be dropped.

This monitoring scheme is not without problems. In systems dominated by submerged aquatic vegetation, benthic macrophytes, or coral reefs, the proposed measures may be inadequate. To document nutrient impacts, it will be necessary to look for trends in parameters such as areal extent of submerged aquatic vegetation or changes in algal growth on corals and epiphytic growth on seagrasses. These measures tend to require intensive efforts, however, and thus are contrary to the idea of using simple, cheap measures wherever possible. When recording devices are placed in an estuary, one should be in the surface layer and one in the deep layer, but the location of other stations remains an open question. Decisions should be based on local knowledge of sensitive areas of the estuary.

DEVELOPING QUANTITATIVE MEASURES OF WATERSHED CONDITIONS

For a number of reasons, the development of watershed monitoring programs has proceeded for many years relatively independently of receiving water monitoring programs. The relative temporal stability of terrestrial distributions, when compared to estuarine and coastal distributions, has resulted in the development of map-based studies. While the development of quantitative measures of watershed condition has proceeded rapidly, empirical studies that test for significant relationships between watershed metrics and ecological condition (e.g., presence or abundance of species, water quality) are still few in number (Johnston et al. 1990). There is a clear need to identify the most important watershed metrics to monitor. In addition, it is essential to be aware of the assumptions and constraints that are implicit in the metrics, a problem that is not unique to watershed studies. For example, the selection of the land cover categories to be used in the analysis partially determines the results, and the spatial scale of the data (both the total extent of the area and the grid cell size) can strongly influence the numerical results (Turner et al. 1989a, b).

In agriculture-dominated watersheds, monitoring and management practices take on their own unique character. The long-term monitoring of various soil and water indicators is essential to document the status and trends of nutrient sources and coastal impacts. Monitoring is also essential to document any changes in nutrient inputs, system response to best management practice implementation, and impact of any land management changes. Effective monitoring strategies, however, must be spatially extensive as well as sufficiently frequent to detect real and statistically valid changes. Unfortunately, most monitoring programs must operate for several years before their outcomes and benefits become apparent. They are also costly and labor intensive. Overcoming these challenges, through documentation and education, is critical to the continued support of existing monitoring programs.

The long history of watershed monitoring in the United States provides examples of programs that have experienced varying degrees of success. These programs can be grouped into those that focus on nutrient sources and those that concentrate on coastal water impacts. It must be emphasized that the choice of both soil and water quality indicators will vary from situation to situation. Soil and water quality monitors will have to decide what they need to measure and how often (Sparrow et al. 2000).

Environmental concerns have forced many state and federal agencies to consider adopting standard soil phosphorus fertility tests as indicators of the potential for phosphorus release from soil and its transport in runoff. Environmental threshold levels range from two (Michigan) to four (Texas) times agronomic thresholds. In most cases, agencies proposing these thresholds plan to adopt a single threshold value for all regions under their jurisdiction. However, threshold soil phosphorus levels are too limited to be the sole criterion to guide manure management and phosphorus applications. For example, adjacent fields having similar soil test phosphorus levels but differing susceptibilities to surface runoff and erosion due to contrasting topography and management should not have similar soil phosphorus thresholds or management recommendations (Sharpley 1995; Pote et al. 1996). Therefore, environmental threshold soil phosphorus levels will have little value unless they are used with estimates of site-specific potential for surface runoff and erosion.

The intent of these soil phosphorus thresholds was to limit the land application of phosphorus, particularly in manures, biosolids, and other by-products. In all cases, the legislation was repealed because it directly related these thresholds to water quality degradation in a technically indefensible way. New legislation in various stages of development and adoption (i.e., Arkansas, Maryland, and Texas) will state that standards or threshold values will be based on the best science available and on soil-water relationships being developed (Lander et al. 1997; Simpson 1998). This course is also followed in the joint EPA-U.S. Department of Agriculture (USDA) strategy for sustainable nutrient management for animal feeding operations (USDA and EPA 1998b). This draft strategy proposes a variety of voluntary and regulatory approaches, whereby all animal feeding operations develop and implement comprehensive nutrient management plans by 2008. These plans deal with manure handling and storage, application of manure to the land, record keeping, feed management, integration with other conservation measures, and other options for manure use. The draft strategy is out for public comment, and will be revised and in place by the end of 2001 for poultry and swine operations and by 2002 for cattle and dairy facilities. This leaves scientists only two to three years to develop “the best science available” that includes technically defensible thresholds or indicators. Irrespective of how these thresholds are developed, a plan to monitor these indicators will be needed to establish baseline data and to document any changes in status as a result of land use changes and best management practice implementation.

In the United States, states are required to set their own water quality criteria, but so far only 22 states have quantitative standards and only Florida has adopted the federal EPA levels (Parry 1998). These standards include designated uses, water quality criteria to protect these uses, and an anti-degradation policy. Where water quality standards are not attained, even after best management practices have been implemented, response actions are defined through the total maximum daily load (TMDL) process of the 1998 Clean Water Act (EPA 1998b). Rather than just addressing constituent concentrations in streams and rivers, the TMDL process considers system discharge and thereby the total constituent load, as well as the designated use and potential impact on the receiving waterbody. This approach offers tremendous advantages over other approaches and can be expected to become widely implemented. Again, spatially and temporally extensive monitoring programs will be needed to assess compliance and remediation impacts.
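The distinction between concentration and load can be made concrete with a short calculation. The sketch below is only an illustration of the arithmetic (load equals concentration times discharge, with unit conversion); the function name and the example values are hypothetical and are not drawn from any regulatory procedure.

```python
SECONDS_PER_DAY = 86_400


def daily_load_kg(conc_mg_per_l, discharge_m3_per_s):
    """Constituent load in kg/day from concentration (mg/L) and discharge (m^3/s)."""
    # 1 mg/L is the same as 1 g/m^3, so the product below is in g/s.
    grams_per_second = conc_mg_per_l * discharge_m3_per_s
    return grams_per_second * SECONDS_PER_DAY / 1000.0


# Same concentration, tenfold difference in discharge (hypothetical values).
low_flow_load = daily_load_kg(2.0, 5.0)
high_flow_load = daily_load_kg(2.0, 50.0)
print(low_flow_load, high_flow_load)
```

Two streams with identical concentrations can therefore deliver very different loads to the receiving waterbody, which is why a load-based approach requires discharge data alongside concentration data.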

It would be useful to combine the two approaches, source monitoring and impact monitoring, to benefit from the best aspects of each. One approach to a soil and water quality index would be to integrate soil fertility measures and land management with a site’s potential to transport nutrients to water bodies in surface and subsurface runoff. This approach is being advocated by researchers and an increasing number of advisory personnel to address nutrient management and the risk of nutrient transport at multi-field or watershed scales (Lander et al. 1997; Maryland General Assembly 1998; USDA and EPA 1998b). In cooperation with research scientists, USDA’s Natural Resources Conservation Service has developed simple nutrient indexes as screening tools for use by field staff, watershed planners, and farmers to rank the vulnerability of fields as sources of nitrogen and phosphorus loss (Sharpley et al. 1998). The indexes account for and rank transport and source factors that control nitrogen and phosphorus loss in subsurface and surface runoff and identify sites where the risk of nutrient movement is expected to be higher than others. These indexes have been incorporated into state and national nutrient management planning strategies that address the impacts of animal feeding operations on water quality to help identify agricultural areas or practices that have the greatest potential to impair water resources (Simpson 1998; USDA and EPA 1998b).
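The general idea of a screening index that combines source and transport factors can be sketched as a weighted sum of ratings. The factor names, rating values, and weights below are invented purely for illustration and are not taken from any published index; the sketch only shows how two fields with the same soil test phosphorus can rank differently once transport factors are included.

```python
# Hypothetical factor weights; a real index would define its own factors and weights.
WEIGHTS = {
    "soil_test_p": 1.0,      # source factor
    "fertilizer_rate": 0.75, # source factor
    "erosion": 1.5,          # transport factor
    "runoff_class": 0.5,     # transport factor
}


def site_index(ratings, weights=WEIGHTS):
    """Weighted sum of factor ratings for one field (higher means more vulnerable)."""
    return sum(weights[name] * ratings[name] for name in weights)


def rank_fields(fields):
    """Return field names ordered from highest to lowest index value."""
    return sorted(fields, key=lambda name: site_index(fields[name]), reverse=True)


# Two hypothetical fields with identical source ratings but different transport ratings.
fields = {
    "flat_field": {"soil_test_p": 4, "fertilizer_rate": 2, "erosion": 1, "runoff_class": 1},
    "steep_field": {"soil_test_p": 4, "fertilizer_rate": 2, "erosion": 8, "runoff_class": 4},
}
print(rank_fields(fields))
```

Used this way, an index is a ranking tool for directing attention and monitoring effort, not a prediction of actual nutrient loss.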

Inherent differences exist in the geography and biology of agricultural regions, and the same applies to forests, wetlands, and water. Since water bodies reflect the lands they drain (Hunsacker and Levine 1995), an ecoregional framework that describes areas with similar patterns of naturally occurring biotic assemblages, based on characteristics such as land-surface form, soil, potential natural vegetation, and land use, was proposed by Omernik (1987) and later refined by EPA (1996). The ecoregion concept provides a geographic framework for efficient management of aquatic ecosystems and their components (Hughes 1985; Hughes et al. 1986; Hughes and Larsen 1988). For example, studies in Ohio (Larsen et al. 1986), Arkansas (Rohm et al. 1987), and Oregon (Hughes et al. 1987; Whittier et al. 1988) have shown that distributional patterns of fish communities approximate ecoregional boundaries as defined a priori by Omernik (1987). This, in turn, implies that similar water quality standards, criteria, and monitoring strategies are likely to be valid in a given ecoregion (EPA 1996).

CONTROLLING COSTS

As with all extensive monitoring programs, one of the main challenges is controlling the costs. Cost concerns apply not only to the farmer wanting to characterize his own property but also to the policymaker seeking to draw conclusions at a watershed, regional, or national scale.

Whatever the technology used to assess soil and water quality, a universal requirement will be locating the places where measurement occurs so that data can be integrated for assessment at larger scales. When working at small scales, such as on a particular farm developing a runoff management plan, great accuracy is not essential. But understanding cumulative inputs at larger scales requires more accuracy and consistency. Meeting this requirement is being made increasingly easy by the falling prices of Global Positioning System technology, which provides quick, precise location information.

One strategy to reduce monitoring costs is to recruit unpaid volunteers to do part of the work (Box 7-2). Such volunteers are often obtained by building relationships with local groups who care about the issues or locality (e.g., small environmental advocacy groups, school groups, or senior citizen groups). Working with these stakeholder groups can have important benefits. In the Chesapeake Bay area, for instance, stakeholder alliances have developed among state, federal, and local groups to work together to identify critical problems, focus resources, include watershed goals in planning, and implement effective strategies to safeguard soil and water resources (Chesapeake Bay Program 1995, 1998).

INTRODUCTION TO MODELING

One system is said to model another when the observable parameters in the first system vary in the same fashion as the observable parameters in the second. Models, therefore, may take many forms. They may be empirically derived statistical relationships plotted on a graph. They may be systems that have little in common with the system being modeled other than similar variations of, and relationships between, observables; a classic example from physics is the use of water flow through pipes to model the flow of electrons through an electrical circuit. Such system models are often called analogs. Models may be scaled approximations to the real system, such as the Marine Ecosystems Research Laboratory mesocosms in Narragansett Bay (Frithsen et al. 1985). Or they may be numerical models run on computers that are based either on first principles or empirical relationships. Each type of model has benefits and drawbacks.

Statistical models are empirical and are derived from observations. The relationships described must have a basis in our understanding of processes, if we are to have faith in the predictive capabilities of the model (Kinsman 1957). Furthermore, extrapolation from empirical data is known to be uncertain. Thus, these models are most judiciously used in the range of observational situations used to derive the model. Analog models are most useful for explaining processes in general ways to people lacking technical expertise, rather than in understanding or predicting information about the system being modeled, since they involve neither observations nor fundamental principles associated with the system’s behavior. Scaled models are useful for both prediction and understanding. One must, of course, always remain cognizant that system function may be scale dependent. Thus, problems similar to those encountered with the extrapolation of statistical models exist when one extrapolates the results of scaled experiments to full-sized natural systems. Numerical models are most useful when they are based on first principles. The ability to describe system functions in terms of mathematical equations often gives the impression that the underlying principles are fully understood, as might be the situation in basic physics. Unfortunately, empirical coefficients introduced into these equations often hide the degree of uncertainty concerning these principles.

BOX 7-2

Monitoring the many parameters important for understanding and managing watersheds is an intensive undertaking. Although many of these parameters require significant expertise and technology to obtain valid observations, some useful observations can be collected through techniques that can be learned by non-experts. Concerned and educated citizens represent a potentially large, and, until recently, virtually untapped reservoir of human resources that could be brought into action.

Involving citizens has many advantages beyond the obvious “free labor.” For instance, public involvement can instill a sense of stewardship for the local environment. These same citizens then become a resource to elected officials and decisionmakers by providing first-hand observations and informed opinions about what the public wants. Citizen participation can increase the range and impact of existing monitoring efforts. All agencies have limited budgets and staffs, and resources are generally directed first toward the most severe problems, while less degraded areas, equally in need of monitoring to keep watch that problems do not occur or worsen, are ignored by necessity. But volunteer efforts can address these other sites, providing valuable baseline and trend information on watershed conditions. Such information can give early warning when problems start to develop in new areas, so such problems can be addressed before they become severe. Local participants can be a critical resource for agency staff because they can bring an historical perspective and often know the landscape intimately. At times, public involvement in monitoring can help ensure data continuity, if volunteer efforts continue unabated when staff turnover within public agencies occurs during the course of a long-term monitoring activity.

Obviously, there are some potential disadvantages to public involvement in monitoring. For instance, it can be a challenge to maintain volunteer interest over long periods of time, which can lead to data gaps, and turnover in volunteer labor can lead to problems in data collection consistency (Ralph et al. 1994). Citizens participating as volunteers in monitoring activities must, of course, be trained, which requires resources and organization from the relevant agency. Even with basic training, volunteers are best used to take water samples and perform routine scientific tasks, and generally do not work with sophisticated equipment or participate in the collection of biological samples. However, there are a number of important monitoring activities well within the capabilities of average citizens, using basic equipment and not requiring specialized skills. These include:

Photographs. Historical and time-series photographs of sites in a watershed can provide important information to managers. People’s family photo albums often contain important images of past conditions, uses, and resources.

Water samples. Local volunteers can easily be trained to take periodic water samples, and volunteers can be linked in a network to provide wide coverage of a watershed. This is especially effective for monitoring easily observable parameters such as suspended sediment. This kind of activity needs to be coordinated by an appropriate regulatory agency, which would supply the sample bottles, provide training, process samples, and maintain a database of results.

Habitat measurements. Stream morphology is an integrative measurement of overall watershed condition, and pools are particularly sensitive to change. Volunteers can help make simple habitat measurements, such as counting the number of large pools or other features, and tracking such areas to monitor for change, particularly after large storm events.

Riparian area surveys. Volunteer help is especially valuable for one-time surveys to establish baseline conditions, because they can cover large areas. Because riparian areas are critical to watershed health, such surveys can be very valuable. Volunteers can be used to survey marked plots in riparian areas to identify and count trees and other species, and even return periodically to note changes in species composition and growth and mortality.

Volunteer participation in monitoring programs is not a replacement for professional expertise in all instances, but the value of getting local citizens involved may make the effort worth considering.

A subset of numerical and statistical models often encountered in watershed modeling uses empirical relationships, such as a runoff coefficient, coupled with event mean concentrations (i.e., a flow-weighted average concentration) to estimate constituent loads. Alternatively, export coefficients (e.g., kg ha−1 yr−1) might be employed. These models may or may not be linked to a geographical information system for land use data and are often implemented on spreadsheets, and therefore could loosely be called spreadsheet models. These models are especially sensitive to empirical coefficients that do not always correspond to known parameter ranges, and should be checked carefully to ensure that they reflect a reasonable physical reality.
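The two calculations described above can be written out in a few lines. The following is a minimal sketch, not a recommended model: the function names, the range check, and all numerical values are hypothetical and chosen only to show the arithmetic and the kind of plausibility check the text calls for.

```python
def load_from_runoff_kg(runoff_coeff, rainfall_m, area_ha, emc_mg_per_l):
    """Annual load (kg) = runoff volume (m^3) times event mean concentration (g/m^3)."""
    area_m2 = area_ha * 10_000.0
    runoff_volume_m3 = runoff_coeff * rainfall_m * area_m2
    # 1 mg/L equals 1 g/m^3; divide by 1000 to convert grams to kilograms.
    return runoff_volume_m3 * emc_mg_per_l / 1000.0


def load_from_export_kg(export_coeff_kg_ha_yr, area_ha):
    """Annual load (kg) from an export coefficient in kg per hectare per year."""
    return export_coeff_kg_ha_yr * area_ha


def check_range(name, value, low, high):
    """Raise an error if an empirical coefficient falls outside a stated plausible range."""
    if not low <= value <= high:
        raise ValueError(f"{name}={value} is outside the expected range [{low}, {high}]")
    return value


# Hypothetical 100-hectare catchment.
coeff = check_range("runoff_coeff", 0.3, 0.0, 1.0)
print(load_from_runoff_kg(coeff, rainfall_m=1.0, area_ha=100.0, emc_mg_per_l=2.0))
print(load_from_export_kg(5.0, area_ha=100.0))
```

Because the result scales directly with each coefficient, an implausible runoff coefficient or export coefficient passes straight through to the load estimate, which is why the range check matters more here than in a process-based model.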

Although each modeling approach involves a unique set of problems, some are more suited than others to a particular situation. Understanding involves the development of heuristic and conceptual models, followed by carefully developed analytical and/or numerical models. These may not need to involve the total complexity of the system being modeled, if interest is focused on a particular sub-process. Management decisions may not allow the luxury of complete knowledge of the system. Results from analogous situations elsewhere, statistical (correlation) models, and other simplified models may provide sufficient predictive skill even though they do not incorporate full understanding of the processes involved.

Because they yield clear numerical results with which to gauge progress, models have a strong appeal to policymakers and managers, an appeal that can sometimes bring false confidence and misconception (Boesch et al. 2000). Complex numerical models are gaining greater acceptance by managers. It has been said that while all models are wrong, some models are useful. It is the talent of the proficient and successful modeler to understand for what problems the model is useful and to be able to explain its limitations. Numerical models must be stable, consistent, convergent, and of known accuracy (Messinger and Arakawa 1976). Further credibility of model results can be achieved through a careful process of calibration, verification, and periodic post-auditing.

Skill assessment is a term used to describe the estimation of the improvement in predictability of future states of the system through use of the model, as opposed to some simpler scheme such as persistence. A whole field of study has grown up to facilitate this effort, focused primarily on numerical models (Lynch and Davies 1995). General guidance in evaluation of the goodness-of-fit of hydrologic and water quality models that produce time series of hydrographs and quality parameters is provided by Legates and McCabe (1999). Such efforts are a necessary but time-consuming and costly undertaking.
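One simple way to express skill relative to persistence is to compare the mean squared error of the model with that of a forecast that assumes the next value equals the current observation. The sketch below uses that formulation; it is one common choice among many, the function names are hypothetical, and it is not presented as the method of any study cited here.

```python
def mean_squared_error(predicted, observed):
    """Mean of squared differences between paired predictions and observations."""
    return sum((p - o) ** 2 for p, o in zip(predicted, observed)) / len(observed)


def skill_vs_persistence(model_pred, observed):
    """Skill score = 1 - MSE(model) / MSE(persistence).

    1.0 means a perfect model, 0.0 means no better than persistence, and
    negative values mean worse than persistence. The persistence forecast
    for time step t is the observation at step t - 1, so the first step is
    excluded from the comparison. Undefined if the observations never change.
    """
    target = observed[1:]
    persistence = observed[:-1]
    model = model_pred[1:]
    return 1.0 - mean_squared_error(model, target) / mean_squared_error(persistence, target)
```

A model that merely tracks the previous observation scores zero under this measure, which makes it a stricter test than a visual comparison of time series.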

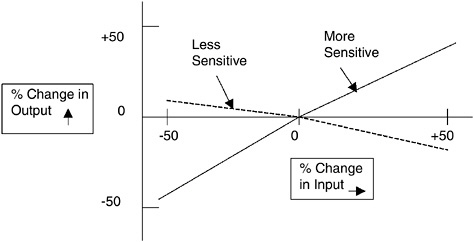

Assembling the types of data necessary for running and calibrating a model is typically expensive and time consuming. A sensitivity analysis can provide guidance for this effort. When first encountering a model, a user establishes a realistic set of input parameters and systematically varies the input parameters to examine the effect on output values, in the manner suggested in Figure 7-2. This often indicates the relative importance of model input parameters and indicates which parameters should receive the most management attention. In addition, sensitivity analysis may indicate a need for measurements corresponding to model output where they may be missing. The overall goal is to learn as much about the models and from the models as possible before investing large amounts of time and money in data collection and processing.
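A one-at-a-time sensitivity analysis of this kind can be sketched in a few lines. The toy runoff model, its parameter names, and the reference values below are hypothetical and exist only to demonstrate the procedure: perturb one input about a reference condition and report the relative change in output per relative change in input.

```python
def toy_runoff(area, rain, evap, coeff):
    """Hypothetical stand-in model: runoff proportional to area and net rainfall."""
    return coeff * area * (rain - evap)


def relative_sensitivity(model, reference, name, fraction=0.10):
    """Relative change in output divided by relative change in one input.

    Only the named input is perturbed; all others stay at the reference values.
    """
    base = model(**reference)
    perturbed = dict(reference)
    perturbed[name] = reference[name] * (1.0 + fraction)
    return ((model(**perturbed) - base) / base) / fraction


reference = {"area": 100.0, "rain": 1.0, "evap": 0.2, "coeff": 0.5}
for parameter in reference:
    print(parameter, relative_sensitivity(toy_runoff, reference, parameter))
```

In this toy case the output responds one-for-one to area but only weakly, and inversely, to evaporation, so effort spent refining the area estimate would be better rewarded than effort spent on evaporation. Results of this kind hold only near the chosen reference condition.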

In spite of the expense of data collection, modeling exercises without field data for calibration and verification, or simply for a reality check, usually have minimal credibility. In the absence of data for the watershed or receiving waterbody being studied, it should be demonstrated that the model satisfactorily represents the same physical processes on a similar watershed or waterbody. At worst, model output should be compared to theoretical solutions for simple problems. The modeler should have confidence that the processes are being represented in a way that assures realistic model estimates.

FIGURE 7-2 Example of sensitivity analysis: the relative change in an output variable from a model due to the relative change in an input variable to a model. The analysis is performed about a reference condition. An output variable (e.g., watershed runoff) is more “sensitive” to an input variable (e.g., watershed area) if the slope is steep, whereas a small slope illustrates relative insensitivity of an output variable (e.g., watershed runoff) to a change in an input variable (e.g., evaporation). Negative slope simply means an inverse relationship between an output and input variable; the magnitude of the slope is still the criterion that dictates whether an input variable is “sensitive” or not (unpublished figure by W. Huber).

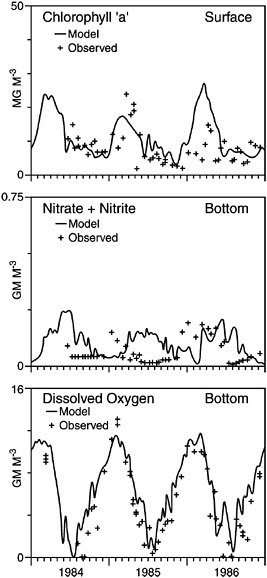

Numerical models solve equations, often differential equations, on a computer. The ability of these models to hindcast observations accurately is, too often, only qualitatively assessed. Certain notable exceptions, however, should be indicated. Radach and Lenhart (1995) and Varela et al. (1995) compare two years of North Sea nutrient concentration and phytoplankton data, with the annual cycle of the same parameters derived from the European Regional Seas Ecosystem Model (Baretta et al. 1995). Since the data were, in general, not used to tune the model parameters, the comparison represents a verification test of the model. Part of the Chesapeake Bay Program included a significant modeling effort. The model was calibrated using three years of data (Cerco and Cole 1993). Statistics that group data in large space-time bins for comparison indicate that this model generally reproduces calibration data to better than a 50 percent relative error and better than 20 percent for nitrogen and dissolved oxygen (Figure 7-3). The calibrated model was verified against a 30-year record with similar results for dissolved oxygen and chlorophyll (Cerco and Cole 1995). Other three-dimensional eutrophication models have been used successfully, with only moderate variations of the tunable free parameters (Hydroqual, Inc. 1995).
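To show what a binned comparison of this kind might look like, the sketch below groups paired observations and model values into bins and computes a relative error for each bin. The definition used here (sum of absolute differences divided by the sum of observations) is an assumption made for illustration and is not necessarily the statistic used in the studies cited above; the bin labels and values are hypothetical.

```python
from collections import defaultdict


def binned_relative_error(records):
    """Relative error per bin from (bin_label, observed, modeled) records.

    Assumed definition: sum of |modeled - observed| divided by sum of observed,
    computed separately within each space-time bin.
    """
    abs_error = defaultdict(float)
    observed_total = defaultdict(float)
    for bin_label, observed, modeled in records:
        abs_error[bin_label] += abs(modeled - observed)
        observed_total[bin_label] += observed
    return {label: abs_error[label] / observed_total[label] for label in abs_error}


# Hypothetical records: (bin, observed, modeled).
records = [
    ("spring", 10.0, 12.0),
    ("spring", 10.0, 8.0),
    ("summer", 4.0, 5.0),
]
print(binned_relative_error(records))
```

Aggregating over large bins tends to reduce the apparent error, because timing and location mismatches within a bin are not penalized as heavily as in a point-by-point comparison.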

DiToro et al. (2000) calibrated a eutrophication model to a series of mesocosm experiments (Frithsen et al. 1985; Box 7-3). Since the mesocosms are well-mixed systems, there is no need for a hydrodynamic variable and no errors need be attributed to problems associated with this variable. The probability distributions of the variables are, in general, well reproduced near the means, but outliers are poorly predicted. Furthermore, there are significant phase errors in the model predictions of certain variables. This implies that comparisons of means over long periods agree better than comparisons over shorter periods.

It should be stressed that, even when statistical or at least graphical comparison between observations and model output is made, the results are limited primarily to calibration. The cogent argument is often made that all available data should be used to calibrate a model to provide the best estimates of free parameters. Such an approach is clearly defensible if the model is to be used solely to forecast situations within the range of variability of parameter space sampled during calibration. It is far less clear that the unverified model will perform well when the boundary conditions, forcing functions, and loadings vary significantly from those used during the calibration phase. While such models are expected to be based on more fundamental assumptions than simple correlation, the tunable parameter values defined may still hide uncertainty concerning

FIGURE 7-3 Comparison of modeled and observed surface chlorophyll a, bottom nitrate+nitrite, and bottom dissolved oxygen for the years 1984-1986 at a main stem station in Chesapeake Bay between the mouths of the Patuxent and Potomac Rivers. The model results are from the CE-QUAL-ICM model. Solid, continuous lines show the model output; dots with lines represent observations (mean and observed variability). Note the difference in phase between modeled and observed bottom nitrate+nitrite, which occurs due to the inability of the model to capture large observed negative nitrate fluxes accurately. The bottom dissolved oxygen concentrations are well reproduced by the model (modified from Cerco and Cole 1993).

|

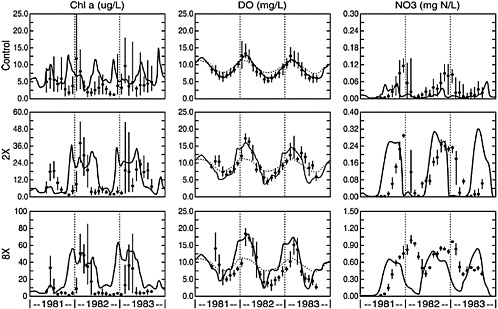

BOX 7-3 A series of mesocosm experiments were run at the University of Rhode Island Marine Ecosystems Research Laboratory in the early 1980s. Mesocosms, large (13.1 m3) continuously stirred reactors, were filled with water from Narragansett Bay and a layer of bay sediment placed at the bottom of the tanks. Water was replaced four times daily at a mean rate of 480 liters per day. NH3, PO4, and SiO2 were added in the molar ratios appropriate to sewage entering the upper bay. These nutrients were added at 1, 2, 4, 8, 16, and 32 times the areal average of nutrient addition to the bay. The six manipulated mesocosms and three control mesocosms were monitored for slightly more than two years. A modified version of a model called the Water Quality Analysis Simulation Program (Appendix D) was calibrated to the ensemble of experimental configurations. Thus, one set of calibration coefficients was used to model both natural and highly eutrophic conditions. For the set of conditions illustrated in Figure 7-4, the model reproduced observed variations of dissolved oxygen very well. Chlorophyll a concentrations tended to be over-predicted, although the magnitudes of the peaks were generally captured. Nitrate and silica were not well reproduced because of problems with the sediment flux model for these two parameters. Even in these cases, however, the magnitude and shape of the annual variation was well reproduced; the annual phasing was not. Interestingly, the model did not capture the ammonia sediment flux accurately during the initial year of the model run, indicating a phase lag before the model could track the observations. The model tended to underestimate the range of the observations, although the variability away from the extremes was well represented in a statistical sense. The discrepancies between the model and the observations were similar to those observed when the calibrated model is applied to a natural coastal setting. 
(Also, the calibration coefficients are similar between these natural settings and the mesocosm experiments, except when they relate to species-dependent phytoplankton parameters.) This suggests that the capabilities of existing coastal circulation models are generally sufficient as input to drive existing coastal eutrophication models. In an attempt to model the system’s recovery from excessive nutrient loading, the model was run for 15 years after nutrient loading ceased. Although loading was applied for less than 3 years, certain parameters of the system required the full 15 years to recover to pre-loading conditions. The implication for the needed long-term duration of monitoring programs is significant. |

processes and not be valid over all forcing and loading conditions. For example, a change in higher trophic-level community structure will alter grazing and, consequently, affect biomass, organic carbon flux to the bottom, and related processes.

Most of the successful examples mentioned above have been able to reproduce the range of observed variability in parameters during a seasonal cycle, as well as the important processes inferred from the field data. They are not as good at capturing the phasing of all important seasonal variations or producing large-scale space-time means that match observations. For example, they often fail to reproduce important small-scale blooms. In spite of the effort and resources expended on the Chesapeake Bay model, for instance, it has been suggested that “three caveats need to be appreciated in interpretations of the watershed-water quality models: (1) the model predictions are very sensitive to several uncertain assumptions, (2) the models calculate ‘average’ conditions in a variable world, and (3) the models assume immediate benefits of source reductions in the Bay’s tidal waters” (Boesch et al. 2000).

State-of-the-art models have difficulty reproducing the amplitudes and phases of observed nutrient cycles in large continental shelf domains (e.g., Radach and Lenhart 1995). Because of the reduced residence time of material, success is often greater in smaller estuarine systems. Particularly problematic are nonlinear processes, many of which are poorly understood even in estuarine situations. For instance, it has been suggested that the generation of hypoxic bottom waters over the shoal regions of the Chesapeake Bay may be reducing the rates of denitrification occurring near the seabed.

Simpler statistical and spreadsheet models are not without their problems, as well. Frequently, export coefficient models have been used in the management of estuaries to determine sources of inputs. These generally are very poor at dealing with atmospheric deposition of nitrogen onto the landscape, if they make any attempt at all. These export coefficient models seldom, if ever, have any independent verification.

The Long Island Sound nutrient input analysis illustrates the problems that can be encountered by applying export coefficient models in an area of high atmospheric deposition of nitrogen. The Long Island Sound study concludes that most of the nonpoint inputs of nitrogen to Long Island Sound come from natural sources not subject to human control (CDEP and NYSDEC 1998). The core approach for estimating nitrogen loads from nonpoint sources used in the Long Island Sound study is fairly simple: using a spreadsheet approach, land use in the watersheds is divided into three categories (urban/suburban, agriculture, and forest), and a nitrogen export from each land type is assumed. Similarly, an export value for nitrogen from an undisturbed “pre-colonial” landscape of forested land is assumed.

The problem with these estimates is that such export coefficients are poorly known, and can be greatly in error. For the Long Island Sound study, the pre-colonial export of nitrogen is assumed to be 920 kg N km⁻² yr⁻¹ (CDEP and NYSDEC 1998). This is a high value, not seen anywhere on Earth in undisturbed landscapes of the temperate zone (Howarth et al. 1996). The analysis of the International Scientific Committee on Problems of the Environment (SCOPE) Nitrogen Project concluded that for regions surrounding the North Atlantic Ocean (both in Europe and in North America, but excluding the tropics), it is likely that the flux of nitrogen from landscapes prior to human disturbance would be of the order of 133 kg N km⁻² yr⁻¹, and is unlikely to be greater than 230 kg N km⁻² yr⁻¹ (Howarth et al. 1996; Howarth 1998) (Chapter 5). Indeed, data from a century ago for the Connecticut River show fluxes into Long Island Sound of only a little more than 100 kg N km⁻² yr⁻¹ (Jaworski et al. 1997). Most of the watershed for Long Island Sound is forested (CDEP and NYSDEC 1998), but the forested landscape is more affected by human activity than is assumed by the Long Island Sound study. The high export of nitrogen from forests there probably reflects the high level of atmospheric deposition of nitrogen. As stated in the 1994 National Research Council report, “It is important that the watershed models under development be calibrated with accurate and detailed data from each region. It is equally essential that these calibrated regional watershed models be verified with other data. . . .” (NRC 1994).
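The sensitivity of a spreadsheet-style estimate to the assumed background coefficient can be illustrated with a minimal sketch. The land-use areas and present-day export coefficients below are invented for illustration and are not taken from the Long Island Sound study; only the two background values (920 and 133 kg N per square kilometer per year) come from the discussion above. The function names are likewise hypothetical.

```python
# All areas and present-day coefficients are HYPOTHETICAL illustration values,
# not data from the Long Island Sound study.
AREAS_KM2 = {"urban_suburban": 300.0, "agriculture": 100.0, "forest": 600.0}
EXPORT_KG_PER_KM2_YR = {"urban_suburban": 1500.0, "agriculture": 1200.0, "forest": 920.0}


def nonpoint_load(areas_km2, coeffs):
    """Total load (kg N/yr): sum of area times export coefficient per land-use class."""
    return sum(area * coeffs[land_use] for land_use, area in areas_km2.items())


def background_fraction(areas_km2, coeffs, precolonial_coeff):
    """Share of the present-day load attributed to an assumed pre-colonial export."""
    background = sum(areas_km2.values()) * precolonial_coeff
    return background / nonpoint_load(areas_km2, coeffs)


total_load = nonpoint_load(AREAS_KM2, EXPORT_KG_PER_KM2_YR)
# Background values quoted in the text: 920 (study assumption) and 133 (SCOPE estimate).
fraction_high = background_fraction(AREAS_KM2, EXPORT_KG_PER_KM2_YR, 920.0)
fraction_low = background_fraction(AREAS_KM2, EXPORT_KG_PER_KM2_YR, 133.0)

print(f"total load: {total_load:.0f} kg N/yr")
print(f"natural share with 920: {fraction_high:.2f}; with 133: {fraction_low:.2f}")
```

With these invented inputs, the share of the load attributed to natural background drops from about 82 percent to about 12 percent when only the assumed pre-colonial coefficient is changed, which is why independent verification of such coefficients matters.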

Several topics in the previous discussion highlight uncertainty in model computations. Uncertainty arises in connection with an imperfect representation of the physics, chemistry, and biology of the real world, caused by numerical approximations, inaccurate parameter estimates and data input, and errors in measurements of the state variables being computed. Whenever possible, this uncertainty should be represented in the model output (e.g., as a mean plus a standard deviation) or as confidence limits on the output of a time series of concentrations or flows. The tendency described earlier for decisionmakers to “believe” models because of their presumed deterministic nature and “exact” form of output must be tempered by responsible use of the models by engineers and scientists so that model computations or predictions are not over-sold or given more weight than they deserve. Above all, model users should determine that the model computations are reasonable in the sense of providing output that is physically realistic and based on input parameters that are within accepted ranges. When model results are presented to managers, they should be accompanied by estimates of confidence levels.
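One common way to attach a mean, a standard deviation, and confidence limits to model output is Monte Carlo sampling of uncertain inputs. The following is a minimal sketch under stated assumptions, not a method prescribed in this chapter: the "model" is a well-mixed box at steady state with first-order loss, C = W / (Q + kV), and the load, flow, volume, and loss-rate ranges are hypothetical.

```python
import random
import statistics


def steady_state_conc(load_g_per_day, flow_m3_per_day, volume_m3, k_per_day):
    """Well-mixed box at steady state with first-order loss: C = W / (Q + k V), g/m^3."""
    return load_g_per_day / (flow_m3_per_day + k_per_day * volume_m3)


def monte_carlo_summary(n_runs=5000, seed=1):
    """Sample uncertain inputs and summarize the spread of the computed concentration."""
    rng = random.Random(seed)
    results = []
    for _ in range(n_runs):
        load = rng.gauss(1.0e6, 1.0e5)   # hypothetical load, g/day
        k = rng.uniform(0.05, 0.15)      # hypothetical loss rate, 1/day
        results.append(steady_state_conc(load, 2.0e5, 5.0e6, k))
    results.sort()
    lower = results[int(0.025 * n_runs)]       # empirical 2.5th percentile
    upper = results[int(0.975 * n_runs) - 1]   # empirical 97.5th percentile
    return statistics.mean(results), statistics.stdev(results), (lower, upper)


mean_c, sd_c, (lower_c, upper_c) = monte_carlo_summary()
print(f"mean {mean_c:.2f} g/m^3, sd {sd_c:.2f}, 95% limits {lower_c:.2f} to {upper_c:.2f}")
```

Reporting the interval alongside the mean lets a manager see at a glance how much of the answer depends on poorly known parameters.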

Watershed models and estuarine and coastal models have developed independently because of the scales involved, the connectivity, and the dominant processes in each system. The following sections briefly describe the characteristics of each type. More detailed characterizations of specific models are presented in Appendix D.

WATERSHED MANAGEMENT MODELS

Models that simulate the runoff and water quality from watersheds are categorized in several ways, but for purposes of this brief review they are segregated into three groups:

-

Models that explicitly simulate watershed processes, albeit usually conceptually. These models typically involve the numerical solution of a set of governing differential and algebraic equations that are a mathematical representation of such processes as rainfall-runoff, build-up and wash-off of surface pollutants, sorption, decay, advection, and dispersion.

-

Models that rely on land use categorization (sometimes through linkage to a geographic information system evaluation) coupled with export coefficients or event mean concentrations. These models are sometimes called spreadsheet approaches, but they actually can be highly sophisticated. These models rarely, if ever, involve solution of a differential equation and almost always rely on simple empirical formulations, such as the use of a runoff coefficient for generation of rainfall runoff.

-

Statistical models involve regression or other techniques that relate water quality measures to characteristics of the watershed. These models range from purely heuristic regression equations (e.g., Driver and Tasker 1990) to relatively sophisticated derived-distribution approaches for estimation of the frequency distribution of loadings and concentrations (e.g., DiToro and Small 1984; Driscoll et al. 1989; Smith et al. 1997).

In addition to these categories, the simplest modeling techniques involve the use of constant concentrations applied to measured or simulated flows, or alternatively, export coefficients, in the form of mass/area-time. Constant concentrations are usually obtained from measurements based on land use and other parameters and are in the form of flow-weighted averages or event mean concentrations. EPA’s Nationwide Urban Runoff Program, for example, provides a good basis for these numbers for urban areas (EPA 1983), as well as storm event sampling from hundreds of cities around the United States that have submitted National Pollutant Discharge Elimination System permit applications for their stormwater and combined sewage. Unfortunately, much sampling data languishes in state agency or consultant files; a coordinated effort on the part of EPA is sorely needed to publish and analyze the tens of thousands of samples collected as part of the National Pollutant Discharge Elimination System permitting process. Export coefficients may be derived from event mean concentration values, if runoff volumes are known, and this is a common method for obtaining these somewhat less common parameters. Both event mean concentrations and export coefficients fit easily into spreadsheet formats for watershed loading estimates. An advantage of event mean concentrations is that they may be coupled with any hydrologic simulation model to produce loads.
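The arithmetic that links an event mean concentration to a load and then to an export coefficient can be sketched as follows. This is a minimal illustration assuming a simple runoff-coefficient estimate of runoff volume; the concentration, rainfall, runoff coefficient, and area are hypothetical values, and the function names are not from any particular model.

```python
def runoff_volume_m3(rainfall_m, runoff_coeff, area_km2):
    """Runoff volume from a runoff coefficient: V = C * P * A (area converted to m^2)."""
    return runoff_coeff * rainfall_m * area_km2 * 1.0e6


def load_kg(emc_mg_per_l, volume_m3):
    """Load = concentration x volume; 1 mg/L x 1 m^3 = 1 g = 1e-3 kg."""
    return emc_mg_per_l * volume_m3 * 1.0e-3


def export_coefficient(annual_load_kg, area_km2):
    """Export coefficient in mass per area per time (kg per km^2 per yr)."""
    return annual_load_kg / area_km2


# Hypothetical catchment: 10 km^2, 1.0 m/yr of rain, runoff coefficient 0.5, EMC 2.0 mg/L.
volume = runoff_volume_m3(rainfall_m=1.0, runoff_coeff=0.5, area_km2=10.0)
annual_load = load_kg(emc_mg_per_l=2.0, volume_m3=volume)
coeff = export_coefficient(annual_load, area_km2=10.0)
print(f"runoff {volume:.0f} m^3/yr, load {annual_load:.0f} kg/yr, export {coeff:.0f} kg/km^2/yr")
```

The same `load_kg` step applies unchanged if the runoff volume comes from a hydrologic simulation model rather than a runoff coefficient.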

The committee recognizes that, especially in the urban environment, there is no coordinated effort to maintain a database of samples collected under the National Pollutant Discharge Elimination System and similar nationwide monitoring efforts. Such a database would be of inestimable value for developing loading estimates to receiving waters. Consequently, as the agency responsible for implementing National Pollutant Discharge Elimination System legislation, EPA should develop and maintain a current nationwide database of urban and other surface runoff samples for use in nonpoint source water quality analyses and modeling. Additional effort should be made to analyze such information in a manner similar to that of Driver and Tasker (1990), for purposes of developing simplified relationships between concentrations, loads, and causative factors.

There are many ways to characterize watershed runoff models, such as transient versus steady-state and lumped versus distributed. Most hydrologic simulation models are transient models in the sense that they produce a hydrograph (flow versus time) that is based on a time series input of precipitation. An especially useful additional categorization of such models is whether they can generate a continuous (long-term) hydrograph (e.g., for a period of many years) or whether they are event models (e.g., just for one storm event). Continuous models (sometimes referred to as period-of-record models) may use a longer time step (often one hour to correspond to hourly precipitation data) and must rely on a statistical screening of very long time series of flows and quality parameters. The output may be the basis for a frequency analysis in the absence of long-term measured data from which design events may be selected for more detailed analysis (Bedient and Huber 1992). Continuous models are especially suited to planning and frequency-based analysis since the output time series is based on historic precipitation data and is representative of climatological extremes that influence the basin and its runoff and loadings. Event models typically use more detailed schemes (i.e., the level of detail in characterizing the watershed) and shorter time steps, and are executed for a single storm. The output hydrograph and pollutograph (concentration versus time) can be viewed graphically, and a model run in this mode is often used for detailed design (e.g., of a hydraulic structure or for a best management practice). Several watershed models can be run in either mode, and fast computers with extensive memory make the distinction between degree of schematization and time step less of an issue.
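As an illustration of the frequency analysis that a continuous simulation makes possible, the following minimal sketch extracts annual peak flows from a multi-year series and assigns each an empirical return period using the Weibull plotting position, T = (n + 1)/m, where m is the rank of the peak. The plotting position is one common choice and is an assumption here, not a method named in this chapter; the flow values are invented.

```python
def annual_maxima(flows_by_year):
    """Reduce a continuous record to one peak flow per year."""
    return {year: max(series) for year, series in flows_by_year.items()}


def return_periods(maxima):
    """Rank peaks from largest to smallest; Weibull plotting position T = (n + 1) / rank."""
    ranked = sorted(maxima.values(), reverse=True)
    n = len(ranked)
    return [(peak, (n + 1) / rank) for rank, peak in enumerate(ranked, start=1)]


# Hypothetical simulated flows (m^3/s), a few values per year for brevity.
simulated = {
    1991: [3.0, 10.0, 4.0],
    1992: [30.0, 5.0, 8.0],
    1993: [6.0, 20.0, 2.0],
    1994: [40.0, 12.0, 9.0],
}
table = return_periods(annual_maxima(simulated))
for peak, period in table:
    print(f"peak {peak:5.1f} m^3/s  return period {period:.2f} yr")
```

A design event could then be chosen from this table and rerun in event mode with a finer schematization and time step.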

Interest in the application of statistical models is growing. Such models can vary in complexity from simple regression models, such as those used in the International SCOPE Nitrogen Project, to more complex models such as the Spatially Referenced Regressions on Watersheds (SPARROW) model developed by USGS. This class of models represents useful tools for understanding the relative roles various sources may contribute to the overall nutrient load delivered to a receiving body from a complex or extremely large watershed where insufficient observational data are available to initialize or verify a process-based model.

ESTUARINE AND COASTAL MODELS

Development of process models for estuaries and open coastal systems is still in its infancy. While it is clear that transport by both advection and diffusion is important for controlling the final distribution of nutrients and carbon in a system, existing models generally uncouple the hydrodynamics from the biological and chemical kinetics. One justification for this is that the time step necessary to accurately describe the hydrodynamics of the system is much smaller than that assumed necessary to describe the biological and chemical processes. However, this assumption has not been carefully examined for the full extent of potentially important situations, and efforts are under way to examine its validity for shallow estuaries (e.g., Inoue et al. 1996). The solution techniques used in most existing hydrodynamic models encourage acceptance of this assumption.
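The time-step argument can be pictured with a minimal sketch in which transport is advanced with many short steps while the kinetics are applied once per longer step. This is only one simple reading of "uncoupling," assumed here for illustration; it is not the scheme of any model cited in this chapter. The grid, velocity, decay rate, and step sizes are hypothetical, and first-order decay stands in for the full biological and chemical kinetics.

```python
import math


def advect_upwind(conc, courant):
    """One explicit upwind step on a periodic 1-D grid (flow toward increasing index)."""
    return [c - courant * (c - conc[i - 1]) for i, c in enumerate(conc)]


def run_decoupled(conc, velocity, dx, dt_hydro, substeps, decay_rate, n_kinetic_steps):
    """Advance transport with short steps; apply kinetics once per long step."""
    courant = velocity * dt_hydro / dx
    if not 0.0 <= courant <= 1.0:
        raise ValueError("hydrodynamic time step violates the upwind stability limit")
    dt_kinetic = substeps * dt_hydro
    for _ in range(n_kinetic_steps):
        for _ in range(substeps):
            conc = advect_upwind(conc, courant)
        # First-order decay stands in for the kinetics module.
        conc = [c * math.exp(-decay_rate * dt_kinetic) for c in conc]
    return conc


# Hypothetical setup: 50 cells of 100 m, 0.5 m/s flow, 60 s transport step,
# kinetics every 60 transport steps (hourly), run for 24 hours.
initial = [1.0 if 10 <= i < 15 else 0.0 for i in range(50)]
final = run_decoupled(initial, velocity=0.5, dx=100.0, dt_hydro=60.0,
                      substeps=60, decay_rate=1.0e-5, n_kinetic_steps=24)
print(f"mass remaining: {sum(final) / sum(initial):.3f}")
```

Because the decay used here is linear, splitting the two processes introduces no error in total mass; with nonlinear kinetics the long kinetic step could matter, which is the open question the text raises for shallow estuaries.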

The number of published model studies of physical-biological interactions in the coastal zone is increasing. Generally, they can be divided into two groups: (1) qualitative process models designed to increase understanding of the interactions observed in nature (e.g., Chen et al. 1997), and (2) prognostic models designed to enhance management decisions. The former often limit the size of the state space simulated (i.e., the number of independent variables). For example, many models of the lower trophic levels model generic categories termed phytoplankton, zooplankton, and nutrients. This ignores the significant differences in the interactions between subgroups of these three categories (e.g., diatoms and flagellates respond differently to various nutrient loadings and different classes of zooplankters prefer different phytoplankters as food sources). This, though, is a two-edged sword as there is evidence that increasing the number of dependent variables being modeled promotes the development of wildly varying (chaotic) solutions (Nihoul 1998).
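A generic three-compartment model of the kind described above can be written in a few lines. The sketch below is a textbook-style closed nutrient-phytoplankton-zooplankton (NPZ) system with Michaelis-Menten uptake and linear grazing, integrated with forward Euler; it is not the formulation of any study cited here, and every parameter value and initial condition is hypothetical.

```python
def npz_step(n, p, z, dt, mu=1.0, k_n=0.5, g=1.0, a=0.3, m_p=0.05, m_z=0.1):
    """One forward-Euler step of a closed NPZ system (all pools in nitrogen units)."""
    uptake = mu * n / (k_n + n) * p   # Michaelis-Menten nutrient uptake
    grazing = g * p * z               # linear grazing of P by Z
    dn = -uptake + (1.0 - a) * grazing + m_p * p + m_z * z
    dp = uptake - grazing - m_p * p
    dz = a * grazing - m_z * z
    return n + dt * dn, p + dt * dp, z + dt * dz


def run_npz(n0, p0, z0, days, dt=0.05):
    """Integrate for the given number of days; returns the list of (N, P, Z) states."""
    state = (n0, p0, z0)
    history = [state]
    for _ in range(int(round(days / dt))):
        state = npz_step(*state, dt)
        history.append(state)
    return history


history = run_npz(n0=4.0, p0=0.5, z0=0.2, days=60.0)
n_end, p_end, z_end = history[-1]
print(f"day 60: N={n_end:.3f} P={p_end:.3f} Z={z_end:.3f} total={n_end + p_end + z_end:.3f}")
```

Splitting P into diatoms and flagellates, or Z into size classes, would add state variables and interaction terms to `npz_step`, which is where the trade-off between realism and erratic solutions noted above arises.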

The predictive models used as management tools often are limited in the level of sophistication used in developing the dynamics that force the final biological and chemical kinetics module. On the other hand, these biological and chemical kinetics are generally more sophisticated than those appearing in the process study models.