5

Professional Development

Teachers, teacher educators, professional-development specialists, and administrators may be most interested in this chapter.

Improvement by teachers of formative assessment practices will usually involve a significant change in the way they plan and carry out their teaching, so that attempts to force adoption of the same simple recipe by all teachers will not be effective. Success will depend on how each can work out his or her own way of implementing change. (Black, 1997)

Just as there is powerful evidence that formative assessment can improve students' learning and achievement, it is equally clear that sustained professional development for teachers is required if they are to improve this aspect of their teaching. Clear goals are necessary, along with well-understood criteria for high-quality student work. To accurately gauge student understanding requires that teachers engage in questioning and listen carefully to student responses. It means focusing on the students' own questions. It means figuring out what students comprehend by listening to them during their discussions about science. Teachers need to carefully consider written work and what they observe while students engage in projects and investigations. The teacher strives to fathom what the student is saying and what is implied about the student's knowledge in his or her statements, questions, work, and actions. Teachers need to listen in a way that goes well beyond an immediate right or wrong judgment.

Once the current level of understanding is ascertained, teachers need to use data drawn from conversations, observations, and prior student work to make informed decisions about how to help a student move toward the desired goals. They also need to facilitate and cultivate peer and self-assessment strategies among their students. Although this list is not complete, it does begin to show the scope of professional development that is required to achieve high-quality classroom assessment. Many teachers already engage smoothly and effectively in the processes associated with effective classroom assessment, but these practices need to be developed and enhanced in all classrooms and among all teachers.

FEATURES OF PROFESSIONAL DEVELOPMENT

Change in assessment practices that are closely linked to everyday teaching will not come about through occasional in-service days or special workshops. Teacher professional-development research (Loucks-Horsley, Hewson, Love, & Stiles, 1998) indicates that a “one-shot” teacher professional-development experience is not effective in almost any significant attempt to improve teaching practice. Because the kind of assessment discussed in this document is intimately associated with a teacher's fundamental approach to her responsibilities and not simply an add-on to current practice, professional development must permit the examination of basic questions about what it means to be a teacher. Professional development needs to become a continuous process (see Professional Development Standards, NRC, 1996), where teachers have opportunities to engage in professional growth throughout their careers.

Rooted in Practice

As Black's statement at the outset of this chapter suggests, widespread formative assessment will not come about solely through changes in policies nor solely by adopting specific programs. New techniques can help, but understanding the basis for the new techniques also is necessary if they are to be implemented in a manner consistent with their intent. Yet a teacher cannot successfully implement all of the changes overnight. Successful and lasting change takes time and deep examination. It becomes critical to root professional-development experiences in what teachers actually do. This approach also is consistent with what research says about teacher learning. A recent study by the NRC (1999a) asserts that teachers continue to learn about teaching in many ways. Primarily, the study states, “they learn from their own practice” (p. 179). Teachers develop repertoires of action that are shaped both by standards and by the knowledge that is gleaned in practice (Wenger, 1998).

Reflective Practice

The standards for assessment and teaching stress the importance of incorporating reflection into regular teaching practice. The Teaching Standards (NRC, 1996) state that teachers should “Use student data, observations of teaching, and interactions with colleagues to reflect on and improve teaching practice” (p. 42). Underlying many of the successful professional growth strategies is the use of data from a teacher's own classroom and experience. When teachers examine their own teaching, they begin to notice incidents and patterns that may otherwise have been overlooked. It is important that teachers allow feedback from their own practice to inform their future practice, including their beliefs and understandings involved in teaching. Reflection and inquiry into teaching, and the local and practical knowledge that results, are a start towards improved assessment in the classroom.

One form this inquiry into teaching practice could take is action research, research conducted by teachers for improvement of aspects of their teaching. This form of research is based on the principle that the practical reasoning of teachers is directed toward taking principled action in their own classrooms (Atkin, 1992). By making changes in their own professional activities, teachers learn about themselves and the improvements they desire. Their understanding is deepened when they discuss these experiences with peers who share similar values and who are trying to make similar changes (Atkin, 1994; Cochran-Smith & Lytle, 1999; Elliot, 1987; Hargreaves, 1998).

Collaborative

For teachers working in what is often considered a solitary culture, collaboration with peers is thus another feature of improving practices. This is supported by research findings that teachers learn through their interactions with other teachers (NRC, 1999a):

. . . research evidence indicates that the most successful teacher professional development activities are those that are extended over time and encourage the development of teachers' learning communities. These kinds of activities have been accomplished by creating opportunities for shared experiences and discourse around shared texts and data about student learning, and focus on shared decision making. (p. 192)

Deliberation among peers is a fundamental feature of professional development in any field. These deliberations can be formal or informal and also can occur among colleagues who teach the same grade level or across grades. The exact composition of the group is secondary to the common interest and goal of improved practice. Parallels do exist between what we know about teacher learning and our understanding of student learning. One such parallel is the importance of collecting information that can be used to inform teaching. Collaboration and cooperative groups help facilitate feedback; thus, opportunities that allow colleagues to observe attempts to implement new ideas, by visits to other classrooms and by watching videotape, should be built into professional-development experiences (NRC, 1999a). As well as finding out about effective practices, teachers can glean valuable lessons from sharing and discussing practices that are less than successful (NRC, 1999a). To paraphrase Thomas Edison: I didn't fail; I found out what doesn't work.

Multiple Entry Points

Because teachers have different professional needs, designers of professional-development programs usually try to provide multiple points of entry to the experience as well as to encourage multiple forms of follow-up. Furthermore, they are cognizant of the fact that change does not happen all at once. To facilitate long-term growth, professional-development experiences need to provide for, and foster as a desired skill, sustained reflection and deliberation.

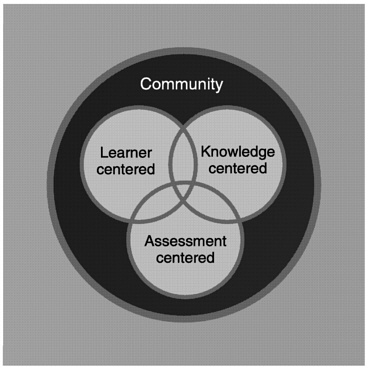

A major theme throughout this report is that formative assessment practices are, or ought to be, so deeply embedded in instructional practice that efforts to improve them open up a broad agenda of issues associated with curriculum, instruction, and assessment, and the interactions among all three. Figure 5-1 offers a graphic illustration of learning environments. The diagram illustrates that while assessment is a subject for study in and of itself, there will be overlaps with the areas of curriculum (knowledge) and instruction (the students), as well as an inescapable impact on the context in which the learning is taking place. Because of this close integration, examination of classroom assessment is a particularly fertile entry point for the study and improvement of a range of teachers' professional activities, all within an integrated context of content, teaching, and learning. It can give impetus and shape to teacher education at all levels, preservice and inservice.

FIGURE 5-1 Perspectives on learning environments.

SOURCE: NRC (1999a).

Discussions with groups of teachers focusing on assessment that goes on in their classrooms can quickly lead to some basic questions: What is really worth knowing? What is worth teaching? What counts as knowing? What is competence? What is excellence? How does a particular piece of work reflect what a student understands and is able to do? After conducting classroom assessment professional-development programs with teachers, staff members at TERC (Love, 1999) in Cambridge, Massachusetts, concluded:

When done well, a discussion by teachers of students' endeavors can lead to deeper understandings about individual students and can provide information about the quality of assignments, teaching strategies and classroom climate. Perhaps most important of all, it provides a rich professional learning opportunity for teachers. (p. 1)

AN AGENDA FOR ASSESSMENT-CENTERED PROFESSIONAL DEVELOPMENT

This section articulates an agenda necessary to enhance these professional perspectives and to improve these skills. There is no single and clear sequence in which the various issues, skills, and perspectives that are entailed might best be explored and understood in teacher development. A variety of components will be called into play, sooner or later, in any rich program of professional development that starts from a focus on formative assessment. The order in which they arise may well depend on the particular interests and starting points of the teachers involved.

Any comprehensive professional-development program associated with improved formative classroom assessment corresponds closely to the framework for formative assessment itself. That is to say, professional-development activities need to address establishing goals for student learning and performance, identifying a student's understanding, and articulating plans and pathways that help students move towards the set goals. In addition, assessment-centered professional-development activities need to attend to providing feedback to students, science subject matter, conceptions of learning, and supporting student involvement in assessment.

Establishing Goals

Clarity about the purposes and goals being pursued in and through the curriculum is essential. Learning how to establish these goals is an important step to improving assessment in one's classroom. In inquiry activities, for example, it is important to keep in mind both the development of the students' understandings and skills about the process of investigation and also the aim of developing concept understanding in relation to the phenomena being studied in that investigation. If skills of communication, or the development of the capacity to reflect on one's own thinking (metacognition), are aims of the curriculum, then these also have to be in a professional-development agenda.

Identifying Student Understanding

Implementing effective formative assessment requires that a teacher elicit information about the students' understandings as they approach any particular topic. This is particularly important since a student will likely interpret new material in the framework of her preexisting knowledge and understanding (first main principle from How People Learn, NRC, 1999a). Professional development that will lead to improved assessment must begin with the sensitivity to the need of the teacher to learn how to obtain information about a student's current level of understanding of the subject to be taught and learned.

A teacher influenced by the importance of probing students' current knowledge started his teaching of a new science topic with questions designed to elicit the existing understanding. He found that the class knew far more about energy than he had anticipated but lacked a coherent structure in which they could relate their various ideas. He thus abandoned the formal presentations of the whole menu of relevant knowledge that he had emphasized in previous years, and had intended to use again, and attempted instead to help them reorganize their existing understandings. He was able to incorporate student investigations into the unit that helped students challenge their ideas and apply concepts to everyday events. Overall, the work now took less time than before but was more ambitious in developing understanding of the concepts involved.

As this example demonstrates, teachers must develop and use means to elicit students' existing ideas and understandings. This may be achieved by direct questioning, whether orally, with individuals or in group discussions, or in writing. However, such questioning may be more evocative if it is indirect, if it is about relevant phenomena or situations that are put before students and about which they have to think in order to respond. The responses may then indicate how they interpret the concepts and skills that they possess and choose to bring to bear on the specific problem. For example, the teacher above could ask his students at the outset to try to define mechanical, kinetic, or potential energy, or he could provide the students with a scenario, and ask them to discuss the scenario in terms of the types of energy. How best to evaluate and to use the data that come from questioning is equally important to consider and is discussed in a later section.

The analysis here is not simply about a single starting point in a teaching plan. Curriculum also needs consideration. Content has to be considered in a meaningful way so that subgoals help lead to main goals. Box 5-1 provides an example of subgoals for inquiry science. Teachers may determine the subgoals with different levels of specificity. Some teachers may find dividing a concept into too fine a level of detail overly formal. It may deprive them of the flexibility to address the needs of individual students. Although many of these goals can be determined beforehand, they also may emerge and need to be reevaluated based on assessments occurring during the course of instruction. A check on one step or goal becomes part of the design for the next.

To best help students meet their learning goals, subgoals often have to be identified and articulated. Coming to understand a particular model requires well-organized knowledge of concepts and inquiry procedures, which often requires time and many “little steps” to reach the larger goal. With a solid understanding of science, the underlying structure of the discipline can help serve as the roadmap to guide a teacher in selecting and sequencing activities, assessments, and other interactions with students (NRC, 1999a).

Inquiry and the National Science Education Standards (NRC, 2000) elaborates on some of the more particular elements of inquiry as ability and understanding for the K-4, 5-8, 9-12 grade spans. Mastering the abilities and understandings associated with inquiry in particular is difficult and can seem elusive even for the most experienced teacher. Such detail would be useful for a teacher when articulating subgoals to support student inquiry in the classroom. Box 5-1 is an example of delineation of the fundamental abilities and understandings for inquiry at the 9-12 level. For further elaboration, the Standards (NRC, 1996) offer complete descriptions of scientific inquiry as abilities and understandings at the K-4, 5-8, and 9-12 levels.

Articulating a Plan

This process of organizing content into meaningful steps and activities is one of the most demanding aspects of teaching. The teacher needs both a clear idea about the structure of the concepts and skills involved and knowledge of the ways in which students may progress. If intermediary goals are too ambitious, the step towards growth may be too difficult, while if they are too slight, students may not be challenged. An appropriate subgoal is one that goes beyond what the student can learn without help but is within reach given a reasonable degree of teacher support. For more background on the theoretical roots presented here, see Vygotsky's discussion (1962) of the zone of proximal development. Teacher knowledge of common misconceptions and of tools available to promote conceptual reconstruction or to promote fluency with new skills can powerfully inform the process of structuring the curriculum.

Responding to Students—Feedback

Teachers also need ways to respond to the information they elicit from students. One necessary step is to be able to analyze and interpret students' responses to questions, or their actions in problem situations. In short, teachers need to use data from assessment in order to make appropriate inferences that form the basis of their feedback. This can require careful analysis to probe the meanings behind what students say, write, or do. Questions of good quality are those that evoke evidence relevant to critical points of understanding, but students may often respond in ways that may be hard to interpret. There are many studies that show that seemingly incorrect responses to questions are evidence of a misinterpretation of the question rather than of misunderstanding of the idea being questioned (NRC, 1981). Difficulties with language or in the contexts or purposes of a question are often the cause. Although such difficulties can undermine the validity of formal tests, they need not undermine formative work by the teacher, provided that follow-up questions are used to check, as will happen if question responses are shared and explored in discussion with the teacher or with peers.

Understanding of Subject Matter

A teacher's interpretation of a student's responses, questions, and actions will be related to that teacher's understanding of the concept or skill that is at issue. Thus a solid understanding of the subject matter being taught is essential. Performance criteria need to be based on authentic subject matter goals and on a depth of understanding of the subject matter. For formal tests, sound scoring requires careful rubrics (assessment tools that articulate criteria for differentiating between performance levels) that help the assessor to distinguish between the fully correct, the partially correct, and the incorrect response. Such rubrics are even more useful if the common ways in which answers can be partially correct are identified, inasmuch as each partially correct response requires a different kind of help from a teacher in helping a student to overcome particular obstacles. For an example of a rubric, see Table 4-3 in Chapter 4.

Similarly, less formal assessments also may benefit from a rubric-type tool for interpretation. For example, during a classroom discussion, a teacher can draw on her previous experience with a student's particular difficulty in order to formulate the most helpful oral response.

Exploring Conceptions of Learning

Underpinning such appropriate rubrics or frameworks will be the teacher's conception of how a student learns both generally and in the particular topic of study. A vision of learning will inform teachers' guidance to students. Addressing issues related to learning may sound formidable but all teachers already have such conceptions, even if they are incomplete and implicit. Ideas about learning are part of any teacher's pedagogic skills; making them explicit so that they can be shared and reflected upon with colleagues may refine these skills.

SUPPORTING STUDENT INVOLVEMENT IN ASSESSMENT

A central issue for an assessment-centered professional-development agenda is the development of self-reflection, or metacognition, among students. Evidence of the powers of metacognition can be evoked through a variety of activities: when students are asked to review what they have learned, compose their own test questions, justify to others how their work meets the goals of the learning, and assess the strengths and weaknesses of their own work or the work of their peers.

Attending to the ways in which students arrived at their results, as well as to the qualities of those results, bears upon and helps give guidance about the metacognitive aspect of students' development. Here, as elsewhere, a clear notion of the meaning and importance of the concept of metacognition has to be developed by the teachers and this notion has to be related to students' work in practice. Reflection and discussion with peers can help begin the examination of these notions.

A focus on student self-reflection raises a final issue. As argued in Chapter 3, an important task required by and promoted by good formative assessment is the cultivation of self-assessment and peer-assessment practices among students. The agenda for the development of the professional capabilities of teachers, or much of it, also can be viewed as an agenda for the development of the capabilities of students to become independent and lifelong learners. In particular, to share with students the goals, as perceived and pursued by their teachers, and to share the criteria of quality by which those teachers guide and assess their work, are essential to their growth as learners. For teachers, this implies a change of understanding of their role, a shift away from being seen as director or controller towards a model of guide or coach.

An Example

The following vignette highlights many issues previously discussed, offering an example of the sometimes serendipitous nature of assessment-centered professional development. In this case, teachers were working together over the course of a year to design summative assessments and scoring mechanisms and discussing the student work generated during larger scale summative assessment tasks administered at the state level in Delaware.

Vignette

The task of the Lead Teacher Assessment Committees seemed straightforward: develop end-of-unit assessments for the inquiry-based curricular modules being used in elementary schools across the state. After months of often frustrating efforts, it was not until the teachers recognized that they first needed to examine their values, beliefs, and assumptions about student learning, and, in turn, the design and purpose of the assessments they were being asked to develop, that any substantive progress was made. What emerged from this process was a self-created learning organization in which assessment became a force that would support and inform instructional decision making at multiple levels of Delaware's science education system. Just how this process evolved will be related through the professional-development experiences of a team of fifth-grade teachers charged with the responsibility of creating a performance-based assessment for an ecosystem module.

From the very beginning in 1992, Delaware's standards-based reform initiative included teachers in crucial roles, such as the development of the state science content standards and work with the framework commission. Within this 1997 reform context, elementary lead teachers from across the state began to collaborate on the development of end-of-unit performance assessments for the curricular modules used in their classrooms. Even though the teaching guides that accompanied the modules included assessments, many Delaware teachers felt that the majority of the assessment items did not elicit the kinds of responses needed to determine if their students really understood the major concepts central to the State Science Standards. Although the teachers were in agreement that the accompanying assessments were inadequate, there was very little agreement as to how best to proceed in developing alternative assessments. After days of discussion, the team of fifth-grade teachers decided to begin by constructing a concept map to ensure that there was consensus about which “big ideas” and processes from the ecosystem module they considered important enough to assess.

What happened next was a surprise not only to the teachers but also to the leaders facilitating the development process. As the concept map began to take shape, it became apparent that many of the teachers were confusing the skills their students needed to perform the ecosystem activities with the major concepts they needed to understand. Attempts to clarify the confusion led to a series of conversations in which the teachers realized that in their zeal to provide “hands-on” experiences, they had often taught scientific processes in isolation from, or at times to the exclusion of, scientific concepts. Consequently, observing the ecocolumn and constructing observation charts had taken precedence over students explaining the relationship between the living and nonliving components of the ecocolumn they were studying.

In efforts to determine the cause of the over-emphasis, the assessment-development team made some interesting discoveries. The teachers began to openly acknowledge that even though each of them had participated in 30 hours of professional development centered on the ecosystem module, they still did not feel comfortable with some important ecological concepts. This conversation was especially insightful for the leaders facilitating the development process, since they had assumed that 30 hours of professional development were adequate. At that point in the process, it became clear that once again the timetable for developing the assessments needed to be modified for the teachers to better understand the concepts they were being asked to teach and develop an assessment around.

Through this period of self-discovery, the teachers also began to realize, as they reviewed the teacher's guide, that their perceptions regarding what should be emphasized during the course of the unit had been strongly influenced by the wording and formatting of the guide. Because the titles of most of the ecosystem activities began with action verbs, such as “observing,” “adding,” and “setting up,” they had naturally inferred that their instructional focus should be process oriented. As the team of fifth-grade teachers became more confident about their content knowledge and more comfortable with their newly acquired role of being a “wise curricular consumer,” they were able to identify the major concepts from the Delaware Science Standards they did not feel had been made explicit enough in the ecosystem module. They also began to rethink how the investigative activities needed to be presented and taught to support and strengthen students' conceptual understandings. This rethinking automatically led to the kinds of assessment discussions they had been unable to engage in for weeks. With a new and collective understanding about the module's instructional goals, the challenge for the team of teachers became twofold: how to create a performance-based assessment that could be used to evaluate both a student's skill level and conceptual understandings and how not to reinforce the process/concept dichotomy they themselves had experienced.

Several other crucial lessons learned by the assessment-development team were the importance of using educational research to inform decision-making processes and the need to seek outside expertise to stretch the thinking of team members. One of the most challenging issues facing the team was ensuring that the assessment items under development could be used to evaluate a range of student capabilities, from making accurate observations to formulating well-reasoned explanations. The team's work included discussions about how items had to match the capability they were meant to assess. For example, if the teachers wanted to assess critical reasoning, they had to probe in areas that would elicit it.

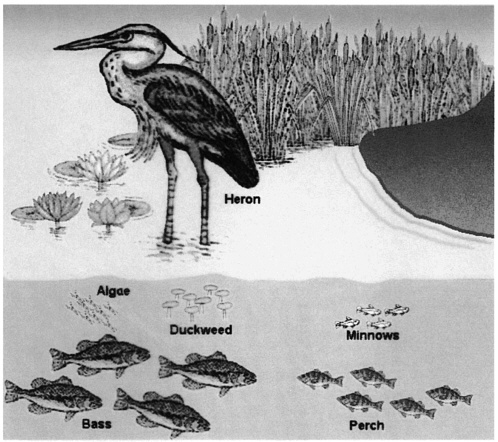

As Figure 5-2 shows, once a draft version of the ecosystem assessment was finally developed, the team began efforts to construct scoring criteria. Initially, attempts were made to develop very generic or holistic scoring rubrics, but the team soon realized that if these assessments were going to be used to inform teachers' instructional practices, generic rubrics were simply not diagnostic enough. The problem then became “so now what?” Leaders facilitating the team efforts once again realized the need to look beyond the expertise of the group for an alternative to generic rubrics so they brought in an assessment expert who was willing to work with the teachers in the process.

Bringing in an outside expert was good for the entire process. In conversations that ensued in the expert's presence, the teachers accepted that not all of the students got the answers wrong for the same reason. It also was through the expert's insistence that the team began to explicitly state the criteria for a complete response. This exercise would initiate some of the most interesting discussion that occurred during the entire development process. As the debates about the criteria got into full swing, the teachers recognized that, because they each had their own set of internalized criteria for evaluating student work, what was considered quality work in one of their classes was not necessarily considered quality work in another. Having to explicitly state the criteria became the impetus for very intense discussions regarding what counts as evidence of student learning and how good is good enough. These discussions would be revisited again and again and evidence of student learning would ultimately become the foundation for the decision-making assessment process.

Although the plan had always been to pilot draft versions of the ecosystem assessment so that student work could be used to modify both the instrument and scoring criteria, none of the team members anticipated how profoundly many of their assumptions about student learning would be challenged by critically examining and analyzing student work. Because so much time and effort had gone into the design of the assessment instrument and scoring rubrics, team members felt very confident that they had developed a quality product. The teachers naturally experienced a great sense of ownership regarding the assessment and although the process at times had been grueling they felt very proud of their efforts. When samples of student work from the pilot were returned for scoring and analysis and were not the quality anticipated, the first tendency was to blame the students. It took several rounds of discussions and more objective evaluations of student responses before the team was ready to admit that at least some of the problem was the way in which the items or rubrics had been designed. By examining student responses from across the state it was difficult to ignore converging evidence, which strongly suggested that some items were obviously confusing and therefore did not allow students the best opportunity to demonstrate their understandings. Box 5-2 presents two samples of student work to illustrate this point. The samples are typical student responses for the item that appeared on the piloted version, as shown in Figure 5-2.

After samples of student work generated by earlier versions of the assessment had been analyzed, it became apparent that the item itself was contributing to students' oversimplification of an important scientific concept: the interdependency of organisms within an ecosystem. In their efforts to reduce the complexity of the wetland assessment item, the lead teachers were inadvertently fostering a very linear model of interdependency and were actually setting the students up to respond incorrectly. The overwhelming state-wide response (that if the large-mouth bass disappeared, the heron would automatically die and nothing would happen to other organisms) forced the lead teachers not only to take a much closer look at the item but also to begin to question reasons for the prevalence of such a response.

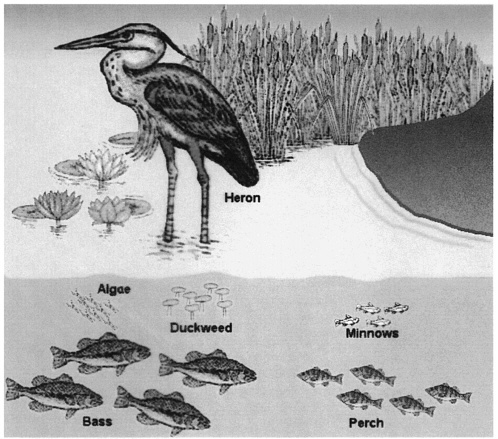

In the revised version shown in Figure 5-3, the item was rewritten to reinforce a web-like model of interdependency that more closely approximates what actually occurs in wetland ecosystems. Results from the subsequent field tests indicated that the modifications to the item were in part responsible for the more complete and accurate student responses seen in Box 5-3. In their responses to this item, more students mentioned how populations of organisms would be affected rather than how a single organism would be affected.

Several other factors contributed to an increase in student performance on this item. As the lead teachers began to focus on acquiring evidence of student understanding, they realized several important things about their instructional practice. For one, most of their instructional emphasis had focused exclusively on the student-built ecocolumns and not on creating a learning environment in which students were encouraged and challenged to extend their understanding beyond their own ecocolumn model to other local ecosystems. The responses to the item have prompted teachers to go beyond the kit and explore local habitats. Additionally, the conversations revealed that many of the teachers had themselves developed the linear interdependency model that their students subscribed to in their responses. Clarifying this particular content issue with teachers resulted in immediate and significant improvement in student responses. Teachers continue to work collaboratively to develop assessment items and rubrics aligned with their curriculum and to identify areas that would prove rich for further professional development.

Wetland Ecosystem

Look at the picture of the wetland ecosystem. You could find several food chains in this ecosystem. Here is one example.

Algae → Minnows → Perch → Large-Mouth Bass → Heron

If all the Large-Mouth Bass disappear, explain how the remaining organisms in the ecosystem would be affected.

FIGURE 5-2 First version of assessment item.

SOURCE: Adapted from Delaware Science Coalition (1999).

|

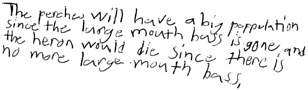

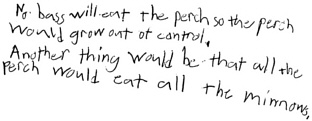

BOX 5-2 Student Work from Original Version Sample #1

Sample #2

SOURCE: Delaware Science Coalition (1999). |

As the Delaware experience indicates, groups of teachers and other experts coming together around student work can be a powerful experience. The “messiness” of this professional-development experience is in many ways its very strength. It also demonstrates a realistic view of the complexity of assessment-related discussions. Allowing the valuable conversations to emerge and run their course provided a richness that may not have been captured in a session with a strict agenda. Also, it certainly would not have happened in a single scoring session. The conversations about scoring student work, assessment criteria, or assessment designs quickly get to issues of content and questions of worth.

One important element highlighted in the Delaware experience was the discussion concerning the valid inferences that can be made from assessment data. All teachers must grapple with this issue as they use assessment data to inform teaching decisions. In this instance, asking critical questions such as, “What does this piece of evidence show?” and “What else do I need to find out?” led the teachers to identify the flaws in this experience.

As this case also conveys, efforts to use assessment as a cornerstone of teacher professional development can foster a deeper knowledge of science content. To design the assessment and score the students' work, teachers had to probe and extend their own understandings. Many of the teachers discovered that even they did not fully grasp all of the ecology concepts being taught and assessed. The teachers recognized that they were led by the wording and format of the curriculum guide rather than by their own understanding of the material. Identifying and addressing areas where teachers need additional support to better learn the content is very important, especially when one considers research showing that a lack of science-content knowledge limits a teacher's ability to give appropriate feedback, including identifying misconceptions (Tobin & Garnett, 1988). In her 1993 work, Deborah Ball demonstrated the importance of subject-matter knowledge in teaching students for understanding, which requires careful listening to what the students are saying and why.

Wetland Ecosystem

Look at the picture of the wetland ecosystem. You could find several food chains in this ecosystem. Here is one example.

Sun → Algae → Minnows → Perch → Large-Mouth Bass → Heron.

The heron is a bird that can eat many kinds of fish in the wetland. If all the Large-Mouth bass disappear, explain how the interdependency of two of the remaining organisms in the food chain may be affected.

FIGURE 5-3 Revised version of assessment item.

SOURCE: Adapted from Delaware Science Coalition (1999).

|

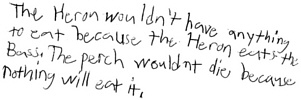

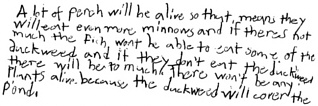

BOX 5-3 Student Work from Revised Version Sample #1

Sample #2

SOURCE: Delaware Science Coalition (1999). |

Numerous routes can be taken for professional development aimed at improving assessment and understanding of students. For example, Project Zero places student work at the center of its efforts, bringing teachers together to discuss and reflect on assessment practices and student work. Teachers involved in the project engage in a “collaborative review process” as they look critically and deeply at student work. They are urged to stick to the piece of work and not look for psychological and social factors that could prevent a student from producing strong work (NRC, 1999a).

In a 13-country study of 21 innovations in science, mathematics, and technology education, the researchers identified seven essential elements of programs that were successful in promoting changes in teachers (Black & Atkin, 1996). These elements are displayed in Box 5-4.

Attention to the assessment that occurs in their classrooms forces teachers to focus on some aspect of their practice. Assessment-centered professional development, regardless of the starting point, can be a powerful vehicle for teacher professional growth when it is performed collaboratively, with regular reflection, and based on knowledge gleaned in practice.

KEY POINTS

- Professional development becomes a lifelong process directed towards catalyzing professional growth.
- Assessment offers fertile ground for teacher professional development across a range of activities because of the close integration of assessment, curriculum, teaching and learning. There is no “best” place to start and no “best” way to proceed.
- Professional development should be rooted in real-world practice.
- Regular and sustained reflection and inquiry into teaching is a start towards improved daily assessment.
- Collaboration is necessary, as is support at the school and broader systems level.

BOX 5-4 Some Basic Features of Professional Development

SOURCE: Black and Atkin (1996).