5

Program Achievements

CHALLENGES TO IDENTIFYING ACHIEVEMENTS

There are four challenges to evaluating the National Science Foundation’s (NSF’s) program Grants for Vertical Integration of Research and Education in the Mathematical Sciences (VIGRE). The first arises because the goals of the program shifted somewhat as the program evolved from 1998 on, as noted in Chapter 3. For example, in his initial “Dear Colleague” letter, Donald Lewis, then Director of NSF’s Division of Mathematical Sciences (DMS), said: “We want to emphasize again that the purpose is to increase the quality and breadth of mathematical sciences education, not the size of graduate programs.”1 However, the 1999 request for proposals (RFP) included the explicit goal of increasing the number of U.S. citizens, nationals, and permanent residents who receive training for and subsequently pursue careers in the mathematical sciences.2 So it is not surprising that one of the claims of success the committee heard most often from funded programs was how much their graduate programs had grown. Similarly, outreach was originally described quite clearly as strictly optional, yet every successful program the committee encountered had a very strong outreach component. These two instances show why clear and consistent goals are necessary for effective evaluation and administration.

A second challenge to evaluation is the lack of consistent and comparable indicators of success. This challenge is twofold, with both a conceptual and a data dimension: there has never been a consistent list of indicators, and the data collected have not been consistent over the course of the VIGRE program.

1 “Dear Colleague” letter, September 10, 1997, from Donald Lewis, Director, Division of Mathematical Sciences, National Science Foundation, to the mathematical sciences community (hereafter cited as “Dear Colleague” letter, September 10, 1997). Available at http://www.nsf.gov/pubs/1997/nsf97170/nsf97170.htm. Accessed July 6, 2009.

2 From the program solicitation: “Grants for Vertical Integration of Research and Education in the Mathematical Sciences (VIGRE),” NSF 99-16, available at http://www.nsf.gov/pubs/1999/nsf9916/nsf9916.pdf. Accessed June 29, 2009.

A third challenge to evaluating the VIGRE program is the difficulty of comparing awardees’ achievements with those of a control group. One approach is chronological: treat the awardees themselves, before they received their VIGRE awards, as the control group; that is, make a before-and-after comparison. A second approach is spatial: compare the awardees with a group of similar departments or institutions that did not receive an award. Finally, the two approaches can be combined by comparing the two types of departments at two different points in time. Although data tracking the numbers and progress of undergraduate students, graduate students, and postdoctoral fellows at selected VIGRE and non-VIGRE departments could have been extracted from existing reports and other sources, a meaningful comparison would have faced three obstacles:

-

The departments that received awards are different from those that did not. In particular, the departments funded through the VIGRE program have tended to be at top institutions as ranked by NRC (1995). As noted in Table 5-1, 19 of the top 25 mathematics departments have received a VIGRE award. However, among those 25, 9 schools either never received a VIGRE grant (Massachusetts Institute of Technology [MIT]; Stanford University’s Department of Mathematics [although Stanford’s Department of Statistics received a grant]; California Institute of Technology; University of California, San Diego; University of Minnesota) or were judged by NSF to have failed in their VIGRE programs (University of California, Berkeley; Yale University; State University of New York, Stony Brook; Rutgers University). These top departments tend to be larger in both faculty and students; they support more research; and they may offer graduate students more opportunities for teaching or research, or offer more postdoctoral positions. Finding similar departments that did not receive a VIGRE award is difficult.

-

Additionally, because the VIGRE program is still relatively young, many of the awardees are still in their first 5-year grant, and so there is not much “after” history to compare with the performance of departments prior to the award. Only a few departments have 5 or more years of VIGRE experience, and none has reached the 10-year mark. Unfortunately, there was no requirement that VIGRE awardees collect data after the award ended, so few data have been accumulated about the longer-lasting effects of an award.

-

Finally, awardees tried to meet basic goals in somewhat different ways: all departments increased vertical integration, but they used different approaches to that general goal. Although this flexibility is a good thing, it also makes comparison difficult.

TABLE 5-1 VIGRE Grants Received Among 25 Top-Ranked Mathematics Departments Since the Inception of the VIGRE Program in 1998

The fourth challenge to an evaluation of the VIGRE program is determining causality: showing that the VIGRE program caused particular outcomes, rather than other factors that operated at the same time. Consider, for example, the fact that the number of postdoctorals in mathematical sciences departments rose substantially after the VIGRE program began. One reasonable explanation is that the VIGRE program created new postdoctoral appointments and is responsible for the increase. However, the labor market for new PhDs might also have changed substantively; for example, if fewer new doctorates can move directly into permanent employment, postdoctoral positions become more appealing as temporary employment. Of course, the VIGRE program could also be one of several factors explaining recent trends in the mathematical sciences. The difficult challenge is testing for the impact of VIGRE in isolation from these other forces.
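The combined design mentioned earlier, comparing awardees and non-awardees both before and during the award period, is what evaluators usually call a difference-in-differences comparison. It addresses part of the causality problem: a shock that hits all departments alike, such as a change in the job market for new PhDs, cancels out. The sketch below is a minimal illustration under stated assumptions. The postdoctoral counts are hypothetical and are not VIGRE data, and the estimate can be read as the award's effect only if both groups would have followed parallel trends without the award.

```python
from dataclasses import dataclass


@dataclass
class GroupMeans:
    """Average outcome for a group of departments before and during the award period."""
    before: float
    after: float

    def change(self) -> float:
        return self.after - self.before


def difference_in_differences(awardees: GroupMeans, comparison: GroupMeans) -> float:
    """Change among awardees minus change among comparable non-awardees.

    A shock that affects both groups equally (for example, a tighter job
    market that makes postdoctoral positions more attractive everywhere)
    shows up in both changes and cancels out. The result can be read as
    the award's effect only under the "parallel trends" assumption: absent
    the award, both groups would have changed by the same amount.
    """
    return awardees.change() - comparison.change()


# Hypothetical postdoctorals per department; illustrative numbers, NOT VIGRE data.
awardees = GroupMeans(before=4.0, after=9.0)
non_awardees = GroupMeans(before=3.0, after=5.0)

print("Naive before/after change:", awardees.change())  # 5.0
print("Difference-in-differences:", difference_in_differences(awardees, non_awardees))  # 3.0
```

In this made-up example, the naive before-and-after comparison would credit the award with the market-wide increase of 2 seen at non-awardees as well. The design cannot remove differences between the groups that themselves change over time. That is why the dissimilarity of awardee and non-awardee departments remains a problem even for a combined comparison.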

SOME EFFECTS OF VIGRE AWARDS

Given these challenges, the committee first looked at trends in the mathematical sciences since 1998; these data are presented in Appendix D. The committee judged these data insufficient to attribute the changes in the size and composition of the graduate student population in the mathematical sciences to VIGRE. It also considered quantitative data from departments that received a VIGRE award, either provided directly by the departments in response to a committee request for information or culled from data collected by NSF during the application process. The comparison therefore focuses on VIGRE awardees, contrasting the state of each department before the award with its state during the award period.

Summary of Four Cases

Anecdotal evidence suggests that the VIGRE grants have improved the situation for mathematical science departments that receive them. Cozzens (2008), for example, gives a number of illustrative examples of success by VIGRE awardees.

A second source of information was individuals from departments that had received VIGRE grants who spoke to the committee. Their presentations suggested that, although the VIGRE grants had produced many new or improved activities at the institutions and some notable successes, there were also challenges. Four cases are briefly summarized here. As noted earlier in this chapter, the diversity of characteristics among departments makes it difficult to draw general conclusions from individual examples.

University of California, Berkeley

The differing experiences of the Departments of Mathematics and Statistics at the University of California, Berkeley, illustrate very clearly the failures and successes of the VIGRE program. The Department of Mathematics, under the direction of then-chair Calvin Moore, was awarded a grant during the first year of the VIGRE program, but NSF terminated the grant in 2003 after only 3 years. Moore’s presentation “Reactions to VIGRE from the Trenches”3 records his responses to the NSF’s actions. Following are some of his observations:

NSF has … constructed a program that does not give sufficient recognition to the diversity of departments and institutional goals…. A second and related problem is that VIGRE guidelines call for changes in departmental programs, even when there is no reason for change or when some significant changes have already been made…. the VIGRE program … has become encrusted and weighed down with many specifics that go far beyond the original intent….

Moore also objected that the VIGRE grant was withdrawn without warning and, in particular, without any chance to rectify the perceived shortcomings.

By contrast, the VIGRE experience at Berkeley’s Department of Statistics has been far more positive. The Department of Statistics won an initial grant in 2002 and a renewal in 2007, for what will be a total of 10 years of support. The department’s recent activities include research assistantships, a seminar series, and a summer statistics camp for undergraduates; traineeships, travel support, and a summer “camp” used to introduce incoming graduate students to their new environment; and fellowships for postdoctorals, as well as an ongoing VIGRE seminar.4

University of Chicago

The University of Chicago VIGRE program, which began in 2000 and will continue through 2010, has been particularly successful.5 The largest VIGRE influence has been the new summer Research Experiences for Undergraduates (REU) program, which in 2008 will have 82 University of Chicago undergraduate participants mentored by 30 graduate students and taught by 9 faculty members. The undergraduates themselves will serve as counselors to approximately 100 Chicago-area high school students and 120 grade school teachers. This REU has become a central part of the mathematics undergraduate experience at Chicago, and its sustainability is an issue, as it now costs nearly $300,000 per summer. Other smaller-scale programs initiated with VIGRE funding include the following:

-

The warm-up program for entering graduate students is a 2-week program preceding the start of the school year, organized and run by graduate students for the benefit of incoming graduate students;

-

The directed reading program pairs graduate students with undergraduates for one-on-one mentoring; about 15 to 20 mentor-mentee pairs participate per quarter;

-

VIGRE course assistants are not just graders, but assistant teachers, holding independent office hours and meeting at least once a week with the graduate student teacher; some 25 undergraduates participate each year; and

-

Under the Young Scholars program, about 25 undergraduates each quarter serve as counselors to about 60 Chicago-area high school students every other Saturday morning.

The VIGRE grant has also provided substantial funding for graduate students and postdoctorates, giving both groups time off from teaching to focus on their research. The support of graduate students has helped cut the attrition rate, to the point that at most two or three students in each entering class drop out before earning a PhD.

University of California, Davis

The VIGRE program at the University of California, Davis, illustrates what can be done at a large state school.6 The Davis VIGRE grant is principally built around Research Focus Groups (RFGs), each consisting of undergraduates, graduate students, postdoctorates, and a faculty member, who get together to explore a specific research area. Each year there are four such groups, which conduct special topics courses, seminars, mini-workshops, and REU projects. Postdoctorates help co-organize these groups, undergraduate students work on projects, and the faculty member receives one-course teaching relief. The department also has formal mentoring structures for graduate students, undergraduate students, and postdoctoral fellows. Oral communication skills are fostered by presentations within the RFGs, and written skills are fostered as graduate students write up expository or instructional material, postdoctorates prepare grant applications, and RFG participants help students apply for mini-grants for travel, summer support, visitor support, and so on.

The recruitment and outreach efforts of the VIGRE RFGs are remarkable. Graduate students have created and run a program for high school and young undergraduate students in the Sacramento area. Outreach to the general public is done with math festivals, which put on a show of puzzles and math games. At one such festival, a PIXAR scientist came to talk about mathematics in the movies, drawing an audience of 400 people. Students are aggressively recruited from California programs that target talented high school students and minorities and from local high schools, which include a large Hispanic population. The department-wide nature of VIGRE is crucial; the committee is convinced that the culture has changed dramatically owing to the VIGRE-funded initiatives, becoming much livelier and more open, and that the curriculum has undergone major revision. Statistics show an increase in the number of graduate students (from 58 to 119 in 8 years) and of undergraduate majors (from 294 to 385 in 6 years).

University of Maryland

The Department of Mathematics at the University of Maryland, College Park, received a VIGRE grant in 2003, after four previous applications had been declined.7 The department has about 450 undergraduate majors (many pursuing other majors as well) and about 250 graduate students. The mathematics faculty of 65 is large, but there are few postdoctorals.

The centerpiece of VIGRE at the University of Maryland, College Park, is a system of Research Interaction Teams (RITs): small, informal groups led by faculty members and composed mainly of graduate students, often joined by postdoctorals or undergraduate students. The RITs were originally augmented by a minicourse series designed to draw undergraduate students into research. By the end of the second year of the grant, the pace seemed too intense, and some of the minicourses were replaced with panel discussions and special colloquia. The RITs, which were initiated on the basis of feedback about a rejected VIGRE proposal, continue and thrive; some semesters have seen as many as 20 active RITs. Several faculty members who had never worked with undergraduates have had successful mentoring experiences, some of which resulted in joint publications. Graduate student supervisors have also been instituted for the Undergraduate Math Club, whose members became more involved in the minicourse/panel discussion/colloquium series. These new activities were informal and broadly accessible.

The VIGRE project at the University of Maryland, College Park, was designed to change the culture of the department, but in several important ways progress has been disappointingly modest. The department’s many attempts to win a VIGRE grant led to a successful proposal that proved almost impossible to administer as written; the project became so complicated that it risked defeating the very goals VIGRE was meant to accomplish. Some unwieldy parts of the project were eliminated, and others were substantially modified as it became clear that their demands on the faculty and staff outweighed their benefits.

Another disappointment is the lack of permanent reform of the graduate program. This remains an ongoing problem because topics courses in areas basic to current research are not guaranteed to keep attracting the necessary enrollment. Also, students still take 6 or more years to complete their doctorates.

Approaches to Evaluating VIGRE Achievements

Final reports from awardees also present a positive picture of the impact of the grants. For example, summarizing their first VIGRE award, the leadership team at the University of Washington wrote:

VIGRE support has made a significant impact on the three departments involved in this endeavor:

-

The number of majors in the mathematical sciences has dramatically increased.

-

The graduate programs in the mathematical sciences have grown in both size and quality.

-

Undergraduate research projects, some with industry, have been initiated in all three departments.

-

Communication among our departments has improved substantially, particularly at the graduate level, and cross-departmental committees of VIGRE fellows and postdocs are helping to run the VIGRE program.

-

The VIGRE program has grown from one focusing only on applied aspects to one encompassing all aspects of the mathematical sciences.

-

Panel discussions on job interviews (for graduate students) and on graduate studies (for undergraduates) have been a success.

-

The curriculum has been reformed at both the undergraduate and the graduate level in all departments.

-

K-12 outreach activity has increased in all departments.8

8 Final Report from VIGRE Grant DMS-9810726, University of Washington, available at http://www.math.washington.edu/vigre/vigre-docs/VIGRE1FinalReport.pdf. Accessed June 26, 2009. Note that most awardees do not put their reports on their Web sites.

To evaluate the achievements of the VIGRE program, one can ask whether its individual elements are effective and compare the effectiveness of each element. For example, hypothetically, the mentoring program might be judged successful while another component fell short of the goals set by NSF. Alternatively, one might ask whether one awardee’s approach to a VIGRE component is better than that of other awardees. The committee considered all of these approaches, but it did not attempt to evaluate the experiments conducted by individual departments in applying for, administering, and conducting their VIGRE grants. More broadly, the committee would like to answer this question: Is the community better off overall; that is, are the mathematical sciences in the United States healthier with VIGRE than they would be without it? That difficult question is taken up in the next section.

VIGRE APPLICATIONS AND AWARDS

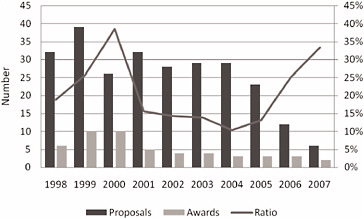

Figure 5-1 shows the annual number of proposals to the VIGRE program from 1998 through 2007 and the number and percentages of successful applications. Note the high success rate of about 40 percent in 2000, and just 4 years later the low rate of about 10 percent in 2004. The success rate has been rising recently, but the drop in the number of proposals is startling. (Recall that VIGRE is now part of EMSW21, so fewer VIGRE awards are made.)

As noted in Chapter 3, 52 departments at 39 different institutions have been awarded VIGRE grants over the lifetime of the program (excluding Louisiana State University, whose award was too recent to be included in this study).

Tables 5-2 and 5-3 reveal the patterns of applications from institutions that have never succeeded in receiving VIGRE funding. Table 5-2 lists the number of proposals considered in a given year’s competition that came from departments that never received an award.

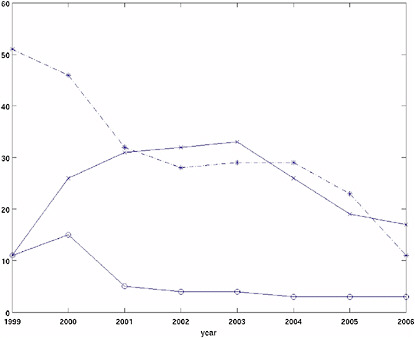

Figure 5-2 shows the number of VIGRE awards. It shows the number of VIGRE grants that were funded each year since the program’s inception, the number of VIGRE grants in operation each year, and the total number of submitted proposals each year.

A total of seven grants have been renewed for a second 5-year period, and nine grants have been terminated after 3 years.

In order to learn more about how the VIGRE program is perceived and how departments decide whether or not to invest in the effort to apply, the committee sent an e-mail to the 238 chairs of mathematics, applied mathematics, and statistics departments that have never received VIGRE funding.9 Responses were received from 122 of these departments. (The committee suspects that these responses might be more heavily weighted with feedback from departments that are unhappy with the VIGRE program, and thus the information should be interpreted with that in mind.) As the committee had suspected, many of the departments have never applied for a VIGRE award. Of those 122 departments, 47 said that they had applied for an award,10 and 75 said that they had not. Of those who had not, some gave no reason and some gave multiple reasons including the following:

-

Did not expect to receive an award (27),

-

Conditions of award are too burdensome (15),

FIGURE 5-1 Number of VIGRE proposals and new awards and percentage of successful applications. SOURCE: Data provided by the National Science Foundation.

TABLE 5-2 Number of Proposals from One or More Departments at an Institution That Never Received a VIGRE Award, by Year, 1999-2008

|

Year |

Number of Proposals |

|

1999-2000 |

23 |

|

2000-2001 |

21 |

|

2001-2002 |

24 |

|

2002-2003 |

24 |

|

2003-2004 |

25 |

|

2004-2005 |

19 |

|

2005-2006 |

6 |

|

2006-2007 |

5 |

|

2007-2008 |

1 |

|

SOURCE: Data provided by the National Science Foundation. |

|

TABLE 5-3 Number of Unfunded Proposals from Institutions, Among Those That Never Received a VIGRE Award

|

Number of Proposals |

Number of Departments |

|

1 |

22 |

|

2 |

18 |

|

3 |

13 |

|

4 |

7 |

|

5 or more |

4 |

|

SOURCE: Data provided by the National Science Foundation. |

|

FIGURE 5-2 Grants for the Vertical Integration of Research and Education in the Mathematical Sciences, 1999-2006: the number of awards made by year (bottom solid line), the number awards in operation each year (upper solid line), and the total number of submitted proposals by year (dotted line). SOURCE: Data provided by the National Science Foundation.

-

Insufficient interest within department (14),

-

Application process is too burdensome (12),

-

A VIGRE award is not the preferred way for our department to advance mathematics and/or statistics (12),

-

Our department already undertakes activities that would be pursued via a VIGRE award (10), and

-

Other (27 responses, ranging from “Not aware of the opportunity” to “We have the impression that NSF does not have that much interest in funding biostatistics departments. It is a lot of work to apply, so we haven’t.”).
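Because a department could cite several reasons for not applying, or none, the tallies above cannot be added up to a head count. Each is more naturally read as a share of the 75 departments that never applied. A minimal Python sketch of that arithmetic follows, using only the figures reported in the text. The short reason labels are paraphrases introduced here for readability.

```python
# Figures reported in the text for the committee's e-mail survey of
# departments that never received VIGRE funding.
SURVEYED = 238
RESPONDED = 122
APPLIED = 47
NEVER_APPLIED = 75

# Reasons given by the departments that never applied. One department
# could give several reasons (or none), so these need not sum to 75.
REASONS = {
    "Did not expect to receive an award": 27,
    "Conditions of award are too burdensome": 15,
    "Insufficient interest within department": 14,
    "Application process is too burdensome": 12,
    "VIGRE award is not the preferred way to advance the field": 12,
    "Department already undertakes VIGRE-type activities": 10,
    "Other": 27,
}


def percent(count: int, base: int) -> float:
    """Share of `base`, in percent, rounded to one decimal place."""
    return round(100 * count / base, 1)


response_rate = percent(RESPONDED, SURVEYED)
reason_shares = {reason: percent(n, NEVER_APPLIED) for reason, n in REASONS.items()}

assert APPLIED + NEVER_APPLIED == RESPONDED  # the two groups partition the respondents
print(f"Response rate: {response_rate}% of {SURVEYED} chairs")
print(f"Reasons cited: {sum(REASONS.values())} mentions from {NEVER_APPLIED} departments")
for reason, share in sorted(reason_shares.items(), key=lambda kv: -kv[1]):
    print(f"  {share:5.1f}%  {reason}")
```

The 117 mentions from 75 departments confirm that many respondents gave more than one reason. About a third (36 percent) said that they did not expect to receive an award.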

However, applying for a VIGRE grant, regardless of receiving an award, might have had some positive effects on a department. Some departments told the committee in response to its e-mail that they had made changes in preparation for applying, including changing or improving the curriculum and adding components of vertical integration.

The committee was unable to determine from available data any pattern in which departments were selected for pre-award site visits. Many of the top mathematics departments in the United States repeatedly applied for a VIGRE grant and were not selected for site visits, for reasons that remain unclear both to the proposers and to the committee. The only clear pattern the committee observed is that funding was always denied if a site-visit team reported that the department lacked a plan or that its faculty were unaware of one.

The American Mathematical Society classifies PhD-granting mathematics departments (other than those representing themselves as “applied mathematics” departments) in Groups I, II, and III according to the National Research Council’s ratings of departments (NRC, 1995). The highest group—Group I—is further broken down into Group I Public and Group I Private. Of the 48 Group I departments, 30 (60 percent) eventually received a VIGRE grant for at least 3 years; this breaks down further to 13 of the 23 Group I Private departments (52 percent) and 17 of the 25 Group I Public departments (68 percent). In Group II, which contains 56 departments, 7 VIGRE awards were made (12.5 percent), and no awards were made to Group III departments.

Thus, the large majority of VIGRE grants went to top-ranked mathematics departments. Even so, nearly half of the Group I Private and one-third of the Group I Public institutions never received VIGRE grants. Harvard University and Princeton University received 5-year grants in the early years of the program, as did the Department of Mathematics at the University of California, Berkeley, and Yale University, although the latter were both terminated after 3 years. The University of Chicago is the only Group I Private department whose VIGRE program was renewed for an additional 5 years. Committee members reported that colleagues in several Group I Private schools had said that their departments have applied without success, sometimes repeatedly, and some did not even receive site visits.

A pattern, evident in Table 5-4, is that VIGRE funding seems to be shifting more and more over time from top-ranked private schools to lower-ranked large state universities. Currently, the only one of the top-10 mathematics departments (according to the NRC [1995] rankings) that has an active VIGRE grant is the University of Chicago.
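The percentage columns in Table 5-4 are each department type's share of the grants active in a given year. Recomputing them from the counts is a quick consistency check. For 2003-2004, for instance, the counts (25 Group I grants of 39) give 64.10 percent, the same as for 2002-2003, whose counts are identical. A minimal Python sketch follows, assuming half-up rounding to two decimals to match the table's convention. Python's built-in `round` uses round-half-to-even, which would report 28.125 as 28.12 rather than the table's 28.13.

```python
from decimal import Decimal, ROUND_HALF_UP

# Active VIGRE grants by department type, transcribed from Table 5-4:
# year -> (Group I, Group II, applied mathematics/statistics)
ACTIVE_GRANTS = {
    "1999-2000": (10, 1, 7),
    "2000-2001": (22, 2, 10),
    "2001-2002": (26, 2, 11),
    "2002-2003": (25, 3, 11),
    "2003-2004": (25, 3, 11),
    "2004-2005": (25, 3, 13),
    "2005-2006": (20, 3, 9),
    "2006-2007": (13, 2, 8),
    "2007-2008": (9, 4, 8),
}

TWO_PLACES = Decimal("0.01")


def percent_share(count: int, total: int) -> Decimal:
    """Percentage of `total`, rounded half up to two decimals as in the table."""
    return (Decimal(100 * count) / Decimal(total)).quantize(TWO_PLACES, rounding=ROUND_HALF_UP)


def shares_by_year(grants: dict[str, tuple[int, int, int]]) -> dict[str, tuple[Decimal, ...]]:
    """Map each year to (Group I %, Group II %, applied math/statistics %)."""
    result = {}
    for year, counts in grants.items():
        total = sum(counts)  # equals the "Active Grants" column
        result[year] = tuple(percent_share(n, total) for n in counts)
    return result


for year, (g1, g2, applied) in shares_by_year(ACTIVE_GRANTS).items():
    print(f"{year}: Group I {g1}%, Group II {g2}%, applied/statistics {applied}%")
```

Run over all nine years, the sketch makes the trend described above visible as numbers. The Group I share falls from the mid-60s to 42.86 percent in 2007-2008, while the Group II share rises to 19.05 percent.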

According to the committee’s own data collection, 31 schools applied for 5-year renewals of their VIGRE grants, 7 did not, and 4 did not report back. There is no clear picture of why schools did not reapply, although 3 noted that they judged their chances of renewal to be small and a few cited the administrative burden. Of the 31 departments that applied for renewal, 9 reported to the committee that their reapplications were successful, 19 reported that they were unsuccessful, and 3 did not respond.

The committee’s e-mail polling collected many comments, some fairly angry, to the effect that NSF’s evaluation processes for VIGRE proposals were unfair and/or inconsistent. This quote, sent to the committee from the then-chair of a top mathematics department, expresses a common view: “I did not feel that scientific merit was ever a factor in the turndown but we were defeated by a combination of perceptions that … was an already very wealthy institution and what I can only describe as a combination of bureaucracy and the NSF staff caring more about doing things that made them look good than truly improved scientific outputs of infrastructure.”11 In particular, among VIGRE schools that failed in their renewal applications, 8 out of 13 were definitely dissatisfied with the evaluation process.

TABLE 5-4 VIGRE Awards by Department Type, 1999-2008

| Year | Active Grants | Group I Departments | Group II Departments | Applied Math/Statistics | Percent Group I Departments | Percent Applied Math/Statistics | Percent Group II Departments |
|---|---|---|---|---|---|---|---|
| 1999-2000 | 18 | 10 | 1 | 7 | 55.56 | 38.89 | 5.56 |
| 2000-2001 | 34 | 22 | 2 | 10 | 64.71 | 29.41 | 5.88 |
| 2001-2002 | 39 | 26 | 2 | 11 | 66.67 | 28.21 | 5.13 |
| 2002-2003 | 39 | 25 | 3 | 11 | 64.10 | 28.21 | 7.69 |
| 2003-2004 | 39 | 25 | 3 | 11 | 64.10 | 28.21 | 7.69 |
| 2004-2005 | 41 | 25 | 3 | 13 | 60.98 | 31.71 | 7.32 |
| 2005-2006 | 32 | 20 | 3 | 9 | 62.50 | 28.13 | 9.38 |
| 2006-2007 | 23 | 13 | 2 | 8 | 56.52 | 34.78 | 8.70 |
| 2007-2008 | 21 | 9 | 4 | 8 | 42.86 | 38.10 | 19.05 |

SOURCE: Award data from the National Science Foundation.

The DMS program manager for the VIGRE program, Henry Warchall, explained something of the NSF procedures in an e-mail to the committee:

I wanted also to mention that none of the recommendations for award or declination of VIGRE proposals were made by a single program director in isolation. The recommendations are always made in consultation at least with other program directors in DMS. The group arriving at the recommendation has varied over the years. Some years, the entire Division (with the exceptions of DMS staff members who had conflicts of interest) was involved in formulating the recommendations. Other times, the VIGRE Management Group or the Workforce program management team were responsible. The variation is due to variation in the administrative structure used to handle the VIGRE proposals. I’ll also mention that each VIGRE recommendation is further approved by the DMS Division Director (or designee) after it is formulated by the team of program directors who are involved. Thus, the VIGRE recommendations are by no means the work of a single NSF staff member.12

OUTCOMES AT AWARDEE DEPARTMENTS

As might be expected, departments granted VIGRE funding were more positive about the program than were other departments, but even among the former, comments about the overall success of VIGRE were not unanimous. The committee classified 7 of the open responses from this group as very positive, 5 as neutral, and 11 as negative. The primary complaints concerned the exclusion of foreign students from funding and the excessive demands on faculty time to administer and coordinate the many activities.

Among the 122 non-VIGRE institutions that responded to the committee’s e-mail request for information, only 5 declared that VIGRE is a good program. The committee classified 25 responses as neutral with respect to the program and another 25 as negative. A common comment, made by about 20 percent of all respondents, was that VIGRE was a way for “the rich [to] get richer.”13

In response to the committee’s request for information from VIGRE awardees, 24 responding departments reported that the quality and quantity of mathematics students have gone up in recent years, whereas 4 said that there has not been much change. Non-VIGRE departments saw things quite differently: 24 reported recent improvements, 12 reported recent deterioration, and 21 did not see much change in the past few years. Four of the responses could not be clearly classified.14

The committee asked those departments that had grants whether the VIGRE funding had in fact helped. Predictably, 18 asserted that it had helped a lot and just 5 said that it had helped little or not at all; there were also 5 fairly ambiguous responses.

The committee also asked departments about trends, with results shown in Table 5-5.

As shown in the table, almost all the VIGRE schools (31 in all) said that mentoring of students by postdoctorals or graduate students increased during the program, and just 2 said that there had not been much change. Concerning undergraduates, 28 VIGRE departments reported increased research experiences for undergraduates, and 4 reported an unchanged level. Summer mathematics programs and camps increased at 18 of the VIGRE departments and, surprisingly, stayed the same at 9. In summary, VIGRE schools nearly uniformly self-reported that the numbers and quality of students went up, whereas non-VIGRE departments reported much more mixed results. As expected, graduate traineeships and postdoctoral fellowships increased. Interdisciplinary research collaboration increased for about two-thirds of respondents. Outreach to K-12 students and teachers increased among about half the respondents and stayed about the same for the others.

The committee also noted an effect on faculty. It received many comments from VIGRE awardees that demands on faculty contributing to the VIGRE program were very great. Some VIGRE departments, in fact, paid faculty for some of their participation, while others did not. The following comment is from a Group I Public university: “It was extremely demanding to administer. Based on my own experience as the director of the program, it is easy for such activities to ‘burn out’ the faculty who are most involved. This is extremely counterproductive.”15 In response to the committee’s inquiry about this issue, NSF’s Henry Warchall wrote:

As far as I am aware, no VIGRE funds were allocated for academic-year faculty salary. I believe that in the beginning years of the VIGRE program, no faculty salary whatsoever was allocated in VIGRE awards. Later, as the first projects received 3rd-year site visit evaluations, the magnitude of the effort required to organize and conduct a successful VIGRE program in a large department became evident, and DMS began to grant requests in VIGRE (and in EMSW21 generally) for faculty summer salary for the purposes of administration only. For several years, it was the operational practice that the amount of faculty salary in a VIGRE award would not exceed 10% of the total budget. In recent years, that strict 10% cap on the amount of faculty salary was relaxed in principle, but faculty salary still constitutes a very small percentage of each EMSW21 award. I am not aware of any academic-year teaching reduction that was funded directly by a VIGRE award.16

The committee had no objective way of measuring the effect of the VIGRE program on curriculum change at departments that obtained VIGRE grants. As for non-VIGRE departments, of the 122 that replied to the committee’s questionnaire, 47 had applied for a VIGRE grant, 31 of them more than once. Of those 47, 21 said the process of applying had stimulated change in their departments, but only 5 explicitly mentioned curriculum review and/or change. Others mentioned vertically integrated seminars and mentoring.17

TABLE 5-5 Trends in Departments That Received a VIGRE Award

| Topic Reported On | Reported by Departments (no.) to Have Increased | Reported by Departments (no.) to Have Stayed About the Same | Reported by Departments (no.) to Have Decreased | Not Applicable |
|---|---|---|---|---|
| Mentoring of students by postdoctorals or graduates | 31 | 2 | 0 | 0 |
| Teaching collaborations with departments outside mathematics or statistics | 11 | 21 | 0 | 1 |
| Research collaborations with departments outside mathematics or statistics | 22 | 11 | 0 | 0 |
| Group activities that include undergraduates, graduates, postdoctorals, and faculty | 29 | 4 | 0 | 0 |
| Outreach to K-12 students | 16 | 16 | 0 | 0 |
| Outreach to K-12 teachers | 17 | 15 | 0 | 0 |
| Summer camps in mathematics/statistics | 18 | 9 | 0 | 6 |
| Postdoctoral fellowships | 26 | 6 | 1 | 0 |
| Graduate traineeships | 25 | 6 | 2 | 0 |
| Undergraduate research experiences | 28 | 4 | 0 | 0 |

SOURCE: Data provided by departments in response to committee survey dated November 15, 2007.
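The proportions cited in the discussion of Table 5-5 (such as "about two-thirds" and "half") can be checked directly against the table's counts. The following Python sketch is illustrative only: the counts are transcribed from Table 5-5, while the function name `share_increased` and the option of excluding "not applicable" responses from the denominator are assumptions of this sketch, not part of the committee's analysis.

```python
# Counts transcribed from Table 5-5 (VIGRE departments responding to the
# committee's survey of November 15, 2007).
# Tuple order: (increased, stayed about the same, decreased, not applicable).
TABLE_5_5 = {
    "Mentoring of students by postdoctorals or graduates": (31, 2, 0, 0),
    "Teaching collaborations with departments outside mathematics or statistics": (11, 21, 0, 1),
    "Research collaborations with departments outside mathematics or statistics": (22, 11, 0, 0),
    "Group activities that include undergraduates, graduates, postdoctorals, and faculty": (29, 4, 0, 0),
    "Outreach to K-12 students": (16, 16, 0, 0),
    "Outreach to K-12 teachers": (17, 15, 0, 0),
    "Summer camps in mathematics/statistics": (18, 9, 0, 6),
    "Postdoctoral fellowships": (26, 6, 1, 0),
    "Graduate traineeships": (25, 6, 2, 0),
    "Undergraduate research experiences": (28, 4, 0, 0),
}


def share_increased(counts, exclude_not_applicable=True):
    """Return the fraction of departments reporting an increase for one topic.

    Hypothetical helper: by default the denominator counts only departments
    for which the topic applied (increased + same + decreased); pass
    exclude_not_applicable=False to include "not applicable" responses too.
    """
    increased, same, decreased, not_applicable = counts
    total = increased + same + decreased
    if not exclude_not_applicable:
        total += not_applicable
    return increased / total


if __name__ == "__main__":
    for topic, counts in TABLE_5_5.items():
        responding = sum(counts)
        print(f"{topic}: {counts[0]} of {responding} reported an increase "
              f"({share_increased(counts):.0%} of those for whom it applied)")
```

Excluding "not applicable" responses matters only for rows such as summer camps, where 6 departments marked the topic as not applicable; note also that row totals range from 32 to 33.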

Most VIGRE schools reported in the committee’s survey that programs begun under the impetus of VIGRE that required continued funding (especially graduate and postdoctoral fellowships) would be discontinued, although many expected low-cost programs such as research-training groups and student-run seminars to continue. The following quote, from a Group I Public university, characterizes the responses on this subject: “My feelings about VIGRE are mixed: VIGRE encouraged and enabled us to do all sorts of wonderful things; this was nice while it lasted, but in the end we failed to get our university to support any of these programs after VIGRE ended, and the post-VIGRE financial situation was brutal.”18

DMS’s RFP already raises this issue: “A successful EMSW21 proposal must convince reviewers that the project … has a post-EMSW21 plan. The EMSW21 program is intended to help stimulate and implement permanent positive changes in education and training within the mathematical sciences in the U.S. Thus it is critical that an EMSW21 site adequately plan how to continue the pursuit of EMSW21 goals when funding terminates.”19 However, it is not clear that the proposal review process has put enough emphasis on the sustainability of proposed plans.

18 Ibid.

19 From the program solicitation: “Enhancing the Mathematical Sciences Workforce in the 21st Century (EMSW21),” NSF 05-595, available at http://www.nsf.gov/pubs/2005/nsf05595/nsf05595.pdf. Accessed June 29, 2009.

CONCLUSION

Although it is difficult to attribute changes in an institution’s mathematical sciences department (e.g., in enrollments or degrees awarded) to the VIGRE program rather than to other factors, the VIGRE program does appear to have produced a number of qualitative changes in the mathematics and statistics departments that have held a grant. These include greater integration of students and faculty, more opportunities for students, a more welcoming culture (at least in the sense of mentoring), and somewhat more interdisciplinarity and outreach.

Two further avenues of research would be (1) to quantify these changes and (2) to assess the effects of VIGRE funding beyond the departments that received a grant. For the first, some effort should be made to survey administrators, faculty, and students who are or were involved in the VIGRE program, as well as students who were not involved, to ascertain their views on the importance and impact of VIGRE funding. For the second, if NSF is to maximize the potential of VIGRE funding, at least some of its impact must extend beyond those who receive funding directly. One could ask, for example:

- What is the effect of VIGRE on the U.S. scientific workforce?
- What is the effect on the culture of mathematical sciences higher education?

Although the second question above seems unanswerable, the committee believes that the first would be answerable if, for instance, NSF tracked and surveyed mathematicians graduating from VIGRE programs. Such a survey could give some indication of the influence of these VIGRE graduates and of those trained by them.