2

A Framework for Building Patient Safety Defenses into Nurses’ Work Environments

BUILDING ON TO ERR IS HUMAN AND CROSSING THE QUALITY CHASM

As noted in Chapter 1, this study builds upon the findings of two prior Institute of Medicine (IOM) reports that address mechanisms for improving patient safety—To Err Is Human: Building a Safer Health System (IOM, 2000) and Crossing the Quality Chasm: A New Health System for the 21st Century (IOM, 2001). To Err Is Human identifies a national agenda for change, specifying actions that entities—primarily those external to organizations directly delivering health care (Congress, regulators, accreditors, public and private purchasers, health professional licensing bodies, and professional societies)—should take to better safeguard patients. The report additionally devotes a chapter and two recommendations to actions that health care organizations (HCOs)—those organizations employing health care workers to deliver direct patient care—should take to improve patient safety. The first recommendation calls for HCOs to establish “patient safety programs with defined executive responsibility” that:

- provide strong, clear and visible attention to safety;
- implement non-punitive systems for reporting and analyzing errors within their organizations;
- incorporate well understood safety principles, such as standardizing and simplifying equipment, supplies, and processes; and
- establish interdisciplinary team training programs for providers that incorporate proven methods of team training such as simulation. (IOM, 2000:156)

The second recommendation calls on HCOs to “implement proven medication safety practices” (IOM, 2000:157).

Crossing the Quality Chasm further addresses patient safety as one of six highlighted aims for U.S. health care: that it be safe, effective, patient-centered, timely, efficient, and equitable. To achieve these six aims, the report specifies actions that HCOs and other entities should take to improve all aspects of health care quality—not just patient safety. The report’s recommendations call on HCOs to (1) redesign care processes; (2) make effective use of information technologies; (3) manage clinical knowledge and skills; (4) develop effective teams; (5) coordinate care across patient conditions, services, and settings over time; and (6) incorporate performance and outcome measurements for improvement and accountability.

The authors of Crossing the Quality Chasm identify four different levels for intervening in the delivery of health care: (1) the experience of patients; (2) the functioning of small units of care delivery (“microsystems”), such as surgical teams or nursing units; (3) the functioning of organizations that house the microsystems; and (4) the environment of policy, payment, regulation, accreditation, and similar external factors that shape the context in which health care organizations deliver care. Whereas To Err Is Human speaks mainly to the fourth level, Crossing the Quality Chasm primarily addresses the first and second levels: how the experiences of patients and the work of microsystems of care, such as health care teams, nursing units, or individual health care workers delivering care to patients, should be changed (Berwick, 2002). Both reports direct less attention to the third level: the organizations (HCOs) that house the microsystems.

This report emphasizes this third level, the HCO. HCOs, by virtue of their employment of health care providers, establishment of work processes, and management of the resources used to deliver health care, are the primary developers of the structures and processes used by health care workers to deliver care. For purposes of this study, the committee defines these internal HCO structures and processes as the “work environment.” We recognize that organizations and factors external to HCOs also shape work environments, but note that these external elements have been addressed extensively in the two prior IOM reports.

This report, with its focus on HCOs and the work environments they contain, therefore complements the work of the two prior IOM reports in three ways:

- It provides greater detail on how HCOs can and should implement key recommendations of To Err Is Human and Crossing the Quality Chasm in such areas as cultures of safety and work design.
- It addresses aspects of the work environment that are critical to patient safety but are not addressed in either of the two prior reports, such as the adequacy of staffing levels and worker fatigue.
- It unifies the prior two IOM reports and this report into a framework that all HCOs can use to construct work environments more conducive to patient safety. This framework integrates the multiple, mutually reinforcing strategies that are needed within various components of the work environment to keep patients safe from the ever-present latent conditions and human errors that pose risks to patient safety (as described in Chapter 1).

THE NEED FOR BUNDLES OF MULTIPLE, MUTUALLY REINFORCING PATIENT SAFETY DEFENSES

Research from a variety of disciplines clearly documents that errors and adverse events, especially those that are difficult to correct, often result from multiple, interdependent factors that converge to impair the performance of organizations (Goodman, 2001; Perrow, 1984; Ramanujam, forthcoming). Errors and accidents often originate within multiple steps in work design and implementation (in fact, in all steps of a production process) and in several components simultaneously. Consequently, reducing error and increasing patient safety are not likely to be achieved by any single action; rather, a comprehensive approach, addressing all components of health care delivery within an organization, is required.

Evidence in support of this contention comes from health services and nursing research; behavioral and organizational research on work and workforce effectiveness; human factors analysis and engineering; studies of organizational disasters and their evolution; and studies of high-reliability organizations.1 For example, intensive study of individual disasters has yielded valuable information about the circumstances leading up to each catastrophic error. The combined knowledge obtained from multiple case studies yields a body of principles that, when applied, can reasonably be expected to reduce the occurrence of errors, their adverse consequences, or both (Reason, 1990). This approach is employed in the Joint Commission on Accreditation of Healthcare Organizations’ (JCAHO) analyses of the root causes of sentinel events.

Similarly, organizational research conducted by social scientists has provided a multilevel view of organizations by focusing on the complex levels of human organizing, including individuals, dyads, groups, networks, firms, and interfirm arrangements (House et al., 1995). This research has identified sets of practices and contextual factors that support or impede effective organizing. For example, the characteristics of world-class manufacturing systems have been identified not in terms of any one practice, but as bundles of mutually reinforcing practices (e.g., quality improvement structures; participative decision making; worker training; and access to unit or organization quality, financial, and managerial information) (MacDuffie, 1995). Such research has found that focusing on only one piece of the problem can backfire. Implementing a single practice, such as teamwork or new incentives, without supporting practices, such as access to pertinent information or education, may yield few practical consequences. In health care settings, for example, changes in work procedures without attention to their impact on staffing demand and existing workflow may actually reduce patient safety.

1 As noted in Chapter 1, high-reliability organizations are organizations in high-risk industries (e.g., nuclear power production) that have low accident rates.

Studies of high-reliability organizations also have identified multiple, related practices associated with the achievement of high levels of safety in production processes. These include ensuring ongoing vigilance of workers to detect unexpected sequences of events that pose the risk of errors; continually training workers to detect errors in the making and to respond to errors once they occur; incorporating personnel and equipment redundancy in work design; managing work flow, especially in interdependent work components; and practicing nonhierarchical decision making so that decisions are made at the point in the organization where expertise is greatest. That point is often where the action is to be implemented, which may be at lower levels of the organization’s hierarchy (Roberts, 1990; Roberts and Bea, 2001).

As discussed in Chapter 1 and reinforced by the above research, then, reducing errors and increasing patient safety require multiple, mutually reinforcing changes (bundles of changes that support each other in altering the context of worker behavior within a work environment), not isolated interventions or a single “silver bullet” (Ittner and MacDuffie, 1995; Pil and MacDuffie, 1996). These bundles of changes need to be applied throughout an organization’s production processes. Fortunately, an evidence-based model for applying error-defense strategies throughout organizational work processes has been developed. This framework, based on empirical research on organizational safety for health care and other industries, is described below.

AN EVIDENCE-BASED MODEL FOR SAFETY DEFENSES IN WORK ENVIRONMENTS

An old fable describes a group of blind men touching an elephant. Each describes the elephant differently: as “a massive wall,” “a thin cylindrical whip-like animal,” a “muscular, tubular creature,” or a “hard, rock-like being with sharp knife-like protuberances.” As the fable illustrates, all of these characterizations are correct, just incomplete when isolated from each other. Early in the course of this study, it became apparent that the work environment of nurses as related to patient safety is similarly multidimensional. The committee noted evidence that patient safety is threatened by inadequate staffing levels, long work hours, poor education and training, unsafe work practices, underutilization of information technology, and a variety of other work conditions. It also quickly became apparent that these are not competing, but complementary views of the threats to patient safety.

The complementarity of these threats to safety is validated by the work of James Reason, whose analyses and writings provided much of the evidence base used by the IOM committee that produced To Err Is Human. In his widely cited book, Human Error, Reason (1990) reviews the basic components of and contributors to any organization’s production processes and describes how errors arise in each. He notes that the concept of “production” is one on which there is wide agreement. All enterprises are involved in some form of production, whether the product is energy, chemical substances, the mass transport of people, or health care. Using the basic components of production, Reason develops a multifaceted model of organizational errors that has been used to analyze and develop error-defense strategies for health care settings (Meurier, 2000), as well as other lines of business (Helmreich, 2000).

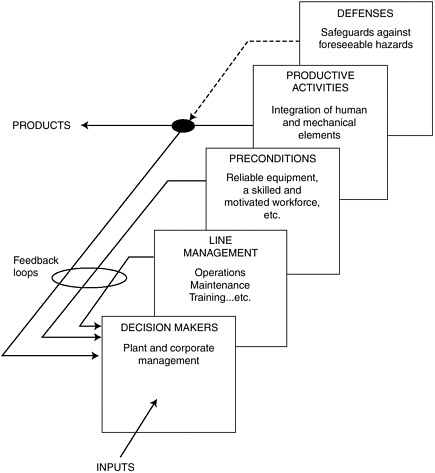

Figure 2-1 identifies the basic elements of any organization’s production process. In this model:

-

Decision makers are both the designers and high-level managers of the organization. They set the goals for the organization as a whole in response to inputs from the external environment. They also direct, at a strategic level, the means by which organizational goals should be met. A large part of their function is concerned with the allocation of finite resources—money, equipment, people, and time. Their aim is to deploy these resources to maximize both productivity and the welfare of the organization’s resources.

-

Line management consists of departmental specialists who implement the strategies of decision makers.

-

Preconditions include the necessary resources and environmental conditions for production, such as reliable and appropriate equipment, a skilled and knowledgeable workforce, an appropriate set of attitudes and motivators, work schedules, environmental conditions that permit efficient and safe operations, and codes of practice that give clear guidance to workers regarding desirable and undesirable performance.

FIGURE 2-1 The basic elements of production.

SOURCE: Reprinted with the permission of Cambridge University Press from Human Error by James Reason, copyright 1990.

-

Productive activities are the actual performance of humans and machines used to “deliver the right product at the right time.”

-

Defenses include structural and procedural safeguards to prevent foreseeable injury, damage, or costly outages.

Reason notes that each of the above elements of the production process is shaped by the fallible decisions and actions of humans, thereby creating the ever-present risk of error.2

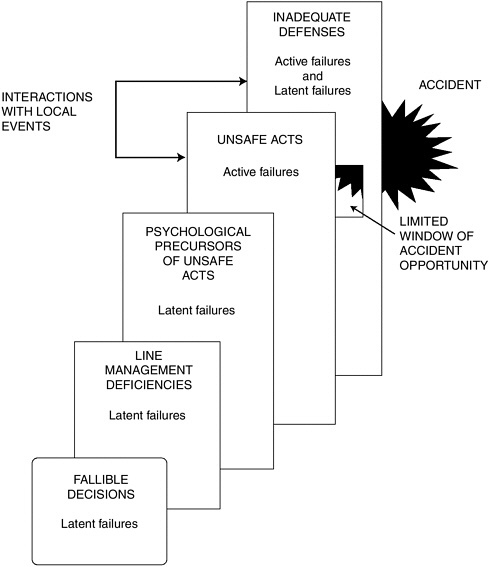

In Figure 2-2, Reason maps the human decisions that are made within the various production elements, and identifies the role played by each in creating latent error-producing conditions or active errors at “the sharp end” (see Chapter 1) both of which ultimately lead to accidents when organizational defenses are inadequate.

Using Reason’s model and the strong and convergent evidence obtained from studies of highly reliable organizations, research on work and work-

FIGURE 2-2 Human contributions to error within each production component.

SOURCE: Reprinted with the permission of Cambridge University Press from Human Error by James Reason, copyright 1990.

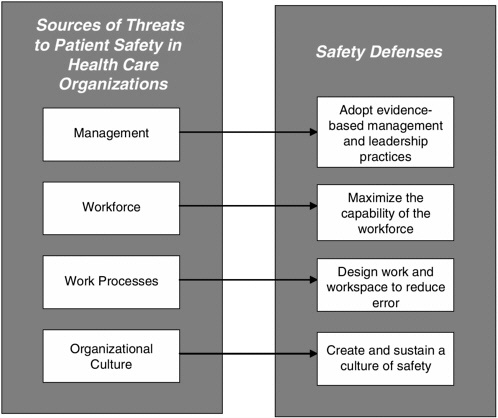

force effectiveness, health services research, and human factors analysis and engineering, the committee has sought to identify those evidence-based, mutually reinforcing practices essential to successful error reduction and patient safety within each of the four fundamental components of all organizations introduced in Chapter 1: (1) management and leadership, (2) workforce deployment, (3) work processes, and (4) organizational culture. These safety defenses are summarized in Figure 2-3. These interventions map to Reason’s schema and together constitute a framework for increasing patient safety through the modification of nurses’ work environments. The committee notes that this framework applies to all HCOs, and has made recommendations that, unless explicitly stated otherwise in the recommendation itself, also are intended to apply to all HCOs. As Figure 2-3 illustrates, these recommendations are aimed at creating work environments with built-in patient safety defenses that include (1) adopting transformational leadership and evidence-based management practices, (2) maximiz-

FIGURE 2-3 Basic work production components of all organizations and corresponding patient safety defenses.

ing the capability of the workforce, (3) designing work and workspace to defend against errors, and (4) creating and sustaining cultures of safety.

The evidence with respect to these practices, and recommendations for their application by HCOs, are discussed in Chapters 4 through 7, respectively. The implementation of these recommendations should take into account the unique features of health care that make it especially vulnerable both to the production of errors and to their escape from detection and remediation.

UNIQUE FEATURES OF HEALTH CARE THAT HAVE IMPLICATIONS FOR PATIENT SAFETY DEFENSES

In his more recent studies of patient safety, Reason has identified characteristics of the health care industry that distinguish it from other high-risk industries and make it more vulnerable to the production and effects of errors.3 These include the greater diversity and associated risks of actions undertaken in health care, the greater vulnerability of health care consumers, differences in the delivery of health care services in contrast to other human services, the uncertainty of the health care knowledge base, and the less explicit and open investigation of errors.

Diversity of Tasks and Tools

Much of the complexity of health care systems stems from the enormous diversity of the tasks to be performed and the tools to be used in performing them. By contrast, aviation, nuclear power generation, and railway systems are relatively homogeneous in terms of both their functions and the equipment they use. Transport systems move people and goods from point A to point B, mainly in a tightly scheduled fashion, while power-generating systems produce megawatts in as stable a manner as possible. Each domain has a very limited number of equipment types. In modern commercial aviation, for example, two manufacturers, Boeing and Airbus, supply the vast majority of aircraft. Activities and tools in health care are far less standardized: health care encompasses a large, complex set of tailored services, as opposed to a few standardized products.

Greater Risk Associated with Health Care Activities

Human performance in complex systems can be assigned to one of three categories: routine operations, coping with abnormal or emergency conditions, and maintenance-related tasks (inspection, repair, calibration, and testing) (Reason and Hobbs, 2003). In the commercial aviation and nuclear power production industries, pilots and nuclear power plant operators spend the greater part of their time performing routine control and monitoring activities (mostly the latter). Health care professionals, in contrast, are more often dealing with abnormal, person-specific conditions and performing maintenance-equivalent work. Both of these operational modes are considerably more error-provocative and risk-laden than routine control. Two factors in particular are important in shaping error probabilities and their consequences: (1) the amount of “hands-on” work and (2) its safety criticality (i.e., the degree of hazard associated with less-than-adequate performance). Error opportunity is a function of the amount of immediate human involvement. Both emergency conditions and maintenance-related activities involve more direct physical contact than do routine operations. And in both cases, the safety criticality of errors is high.

Vulnerability of the Consumers of Production

In health care, individuals (i.e., patients) are an integral part of the “production process” in addition to being the recipients of health care services. Unlike passengers or the consumers of electrical power, however, patients are, by definition, vulnerable people. They are sick, injured, old, or very young. In nursing homes, for example, nearly half of all residents have some type of dementia. This vulnerability makes them much less able to participate in their own care and more likely to be seriously harmed by unsafe acts. Moreover, even when they are receiving safe and appropriate care, some patients’ underlying physical condition can make that care ineffective. These poor outcomes are not the same as adverse events resulting from inappropriate and unsafe care.

Mode of Delivering Health Care

The processes and products of commercial transportation, nuclear power, and other industries often are delivered to end-users in a fairly impersonal “few-to-many” fashion; that is, few individuals are involved in transmitting the service to many individuals. In contrast, the delivery from the health care professional to the patient is mainly “one-to-one” or “few-to-one.” This makes health care delivery a very personal, face-to-face transaction. The individual characteristics of the professional are likely to play a greater part in service delivery than in these other domains. Whether the health care professional chooses to go the extra mile is likely to have a far greater impact in health care than elsewhere.

Uncertainty of the Knowledge Base

Compared with many other highly technical hazardous endeavors, health care activities—despite many advances—are inexact procedures based upon incomplete knowledge, and are performed in a rapidly changing world on an increasingly aging population. Uncertainty is large, and error margins are small. Health care professionals and their patients both possess incomplete medical knowledge. As one surgeon recently noted:

We look for medicine to be an orderly field of knowledge and procedure. But it is not. It is an imperfect science, an enterprise of constantly changing knowledge, uncertain information, fallible individuals, and at the same time lives on the line. There is science in what we do, yes, but also habit, intuition, and sometimes plain old guessing. The gap between what we know and what we aim for persists. And this gap complicates everything we do (Gawande, 2002:7).

Event Investigation

Accidents in non–health care domains, such as transportation, are newsworthy and publicly investigated, and the results are widely disseminated. In contrast, mishaps in health care, with some exceptions (e.g., radiological events), tend to be investigated quietly at the local level, and, until recently, findings were neither shared nor made available for public scrutiny.

Summary

In summary, health care institutions are complex systems, and their complexity includes features that are less often present in the kinds of hazardous high-tech systems that are often used as models for effective safety management. This does not mean that health care professionals cannot learn valuable safety lessons from these other domains; rather, HCOs, policy officials, nurses, and all parties working to increase patient safety need to be mindful of the distinctive features of health care delivery that make it even more susceptible to the production of errors.

REFERENCES

Berwick D. 2002. A user’s manual for the IOM’s “Quality Chasm” report. Health Affairs 21(3):80–90.

Gawande A. 2002. Complications: A Surgeon’s Notes on an Imperfect Science. New York, NY: Metropolitan Books, Henry Holt and Company.

Goodman P. 2001. Missing Organizational Linkages: Tools for Cross-Level Organizational Research. Thousand Oaks, CA: Sage Publications.

Helmreich R. 2000. On error management: Lessons from aviation. British Medical Journal 320:781–785.

House R, Rousseau D, Thomas-Hunt M. 1995. The meso-paradigm: A framework for integration of micro and macro organizational behavior. In: Research in Organizational Behavior (Vol. 17). Greenwich, CT: JAI Press. Pp. 71–114.

IOM (Institute of Medicine). 2000. To Err Is Human: Building a Safer Health System. Washington, DC: National Academy Press.

IOM. 2001. Crossing the Quality Chasm: A New Health System for the 21st Century. Washington, DC: National Academy Press.

Ittner C, MacDuffie J. 1995. Explaining plant-level differences in manufacturing overhead: Structural and executional cost drivers in the world auto industry. Production and Operations Management 4:312–334.

MacDuffie J. 1995. Human resource bundles and manufacturing performance: Organizational logic and flexible production systems in the world auto industry. Industrial and Labor Relations Review 48:197–221.

Meurier C. 2000. Understanding the nature of errors in nursing: Using a model to analyse critical incident reports of errors which had resulted in an adverse or potentially adverse event. Journal of Advanced Nursing 32(1):202–207.

Perrow C. 1984. Normal Accidents. New York, NY: Basic Books.

Pil F, MacDuffie J. 1996. The adoption of high-involvement work practices. Industrial Relations 35:423–455.

Ramanujam R. Forthcoming. The effects of discontinuous change on latent errors in organizations: The moderating role of risk. Academy of Management Journal.

Reason J. 1990. Human Error. Cambridge, UK: Cambridge University Press.

Reason J, Hobbs A. 2003. Managing Maintenance Error: A Practical Guide. Hampshire, UK: Ashgate.

Roberts K. 1990. Managing high reliability organizations. California Management Review Summer:101–113.

Roberts K, Bea R. 2001. When systems fail. Organizational Dynamics 29(3):179–191.