2

Reconceptualizing Admissions Policies and Practices

Health professions training programs’ admissions policies and practices vary widely from discipline to discipline and from institution to institution. These variations are reflected in differences in entrance requirements, discipline-specific criteria, and institutional mission. Almost all, however, rely upon a combination of quantitative information (e.g., high school and/or collegiate grade point average, particularly in science courses, and standardized admissions test data) and qualitative information (e.g., applicants’ personal characteristics, background, and motivation to enter health professions fields) to arrive at admissions decisions.

This chapter explores commonly used admissions policies and practices and their impact upon racial and ethnic diversity in graduate health professions training programs, with particular attention to the role of high-stakes, standardized, norm-referenced tests (e.g., the MCAT, DAT, GRE) in the admissions process. In addition, the chapter reviews data regarding minority applicants’ standardized test performance and explores how these tests are used in typical admissions processes. The benefits and limits of the use of these tests are also discussed. Alternative admissions models are then reviewed, and their implications for underrepresented minority (URM) applicant success in the admissions process are explored. Throughout this discussion, the committee attempts to reconcile two objectives that have often been viewed as competing concerns, but which, as outlined in the introductory chapter, can be seen as complementary. How can health professions training programs reconceptualize admissions policies and practices to reduce barriers to URM participation in health professions, while at the same time adopting admission practices that more accurately reflect the desired skills and attributes needed by future health professionals? In other words, how can diversity and quality goals coexist in admissions practices?

The chapter will begin with a brief description of the history, intent, and purposes of standardized tests used in higher education admissions. Though the discussion in this chapter is intended to provide general recommendations applicable to admissions policies for all health professions, it must be noted that admissions policies and practices vary considerably among the health profession disciplines studied here. Nursing education, for example, operates in a very different context for admissions decisions, with varying levels of “selectivity” depending on the type of degree program. Admission to many master’s degree and doctoral-level nursing programs is highly competitive, but admission to registered nurse (RN) education at the college or vocational institutional level is far less competitive than the post-graduate, doctoral educational settings of nursing, medicine, dentistry, and psychology. Many community college nursing education applicants are accepted if they meet minimum criteria for completing required courses, and in many states, community colleges are oversubscribed, and eligible applicants are put on a waiting list or go through a lottery process to matriculate. Even for baccalaureate-level nursing programs, the process is less competitive than for the doctoral health professions programs in other fields. Most of the baccalaureate nursing programs, for example, are at state colleges and not at “elite” universities.

STANDARDIZED TESTS AND HIGHER EDUCATION ADMISSIONS

A Brief Background on Standardized Tests

Historically, standardized admissions tests emerged from the early work of psychometric psychologists who attempted to quantify human intelligence through a variety of testing and assessment tools. In the late nineteenth and early twentieth centuries, these efforts were driven in large part by Darwinian theories of individual variation and natural selection (McGaghie, 2002) but were often accompanied by explicitly racist and eugenicist ideology regarding the racial superiority of European descendants and inferiority of non-Europeans. Many of the leading test developers, such as Alfred Binet, E.L. Thorndike, and others, saw the broad use of intelligence tests as not only an efficient means of distinguishing between individuals of differing intellectual ability, but also as a scientific means of verifying the intellectual superiority of Caucasians and inferiority of non-Caucasian racial groups, in accordance with laws of natural selection (Gould, 1996).

Early efforts to develop achievement tests that could be administered in large groups led to their widespread use, particularly by the Army, which during World War I sought a means to identify promising officer candidates. The Army’s Alpha and Beta tests served as early precursors of school-based intelligence and aptitude tests, which became broadly used in schools following the war. An early developer of the Army’s tests, Carl Brigham, was commissioned by the College Board to develop a test that could be administered to high school students, and in 1926 Brigham experimentally administered the first Scholastic Aptitude Test (SAT) to 10,000 students. This test, modeled on the Army Alpha test, was soon adopted by several Ivy League colleges, which sought an efficient means to screen applicants and “expand opportunities throughout the country for students who did not come from the upper class” (Calvin, 2000, p. 24). By the beginning of World War II, the SAT had become widely used by selective colleges and universities as part of the admissions process (National Research Council, 1999).

Following World War II, the demand for standardized admissions tests increased sharply as the number of applicants rose, fueled in part by expanded opportunities to attend college as a result of the GI Bill (Wightman, 2003). Standardized test scores were viewed as an efficient mechanism with which college admissions committees could assess the talent and skills of a growing applicant population (increasingly composed of individuals who were not part of the existing educated class) with which admissions officials were largely unfamiliar. Tests were therefore viewed by many, including college administrators, applicants, and the general public, as an opportunity to “open the doors of educational opportunity to a broad range of students … particularly … to the elite schools in the northeast” (Wightman, 2003, p. 2).

Health professions training programs also experienced needs for standardized tests to assist admissions decisions, given greater public demand for access to medical education and the wide range of academic preparation of applicants. In 1930 the first version of the Medical College Admissions Test (MCAT) (then called the Scholastic Aptitude Test for Medical School) was developed and implemented (Wightman, 2003). Its potential value in admissions was clear: given high rates of attrition among freshman medical students (chiefly for academic reasons), medical schools sought a means to predict success in medical education and avoid wasting student slots. With greater use of subsequent versions of the MCAT, national medical student attrition rates declined from 20 percent in 1925 to 7 percent in 1946 (McGaghie, 2002).

Admissions tests have evolved considerably since their early versions (the MCAT has undergone five substantive revisions; McGaghie, 2002), and in some cases reflect different purposes. The SAT, for example, was originally developed to assess general verbal and mathematical reasoning as a means of predicting applicants’ aptitude to do college-level work. The American College Test (ACT), which evolved primarily in the Midwestern United States, offered colleges a tool to assess students’ explicit content knowledge and their ability to apply this knowledge in college. The ACT was therefore not only an admissions tool, but also offered a means of assisting students in course placement and academic planning (National Research Council, 1999). More recently, the SAT II (Scholastic Achievement Test) has been developed to assess content knowledge in academic subjects.

The Benefits of Standardized Tests

Standardized admissions tests remain beneficial in assisting admissions decisions for at least three reasons. First, the educational experiences of applicants vary considerably, as the U.S. educational system emphasizes local control of educational standards, funding, and curricula. In addition, applicants often have different educational experiences and opportunities. Standardized tests offer an efficient means of comparison among students who have diverse educational backgrounds.

Second, standardized test data are often useful for higher education institutions that must sort through a large number of applications, often with limited resources and time. Standardized tests can be administered at low cost to students and to the institutions that use test data, and therefore offer efficiencies for admissions officials. Finally, standardized tests offer an opportunity for students to demonstrate their academic abilities, particularly in instances where students’ classroom performance is not indicative of their abilities, and help students to realistically assess their chances of gaining admission to the institution of their choice (National Research Council, 1999).

In this context, standardized admissions tests offer an efficient way to compare diverse applicants. Their appropriate use can be summarized as follows: tests are designed to sort applicant pools into broad categories, “those who are quite likely to succeed academically at a particular institution, those who are quite unlikely to do so, and those in the middle” (National Research Council, 1999, p. 24).

Common Misinterpretations and Misuses of Standardized Tests

Unfortunately, in some instances standardized tests have been employed beyond their original intent and current usefulness, and they are commonly misinterpreted, by both the general public and university officials, in ways that are inconsistent with their design and stated purpose. Two such misuses, as identified by the NRC (National Research Council, 1999), include:

The belief that admissions tests measure a “compelling distillation of academic merit.” Admissions tests do not measure the full range of abilities that are needed to succeed in higher education—nor were they designed to. Important attributes such as persistence, maturity, intellectual curiosity, creativity, the ability to work with others, and motivation are all associated with high academic achievement but are not measured by admissions tests. Instead, these attributes must be assessed by other means, such as applicants’ essays, letters of recommendation, and record of extracurricular and community activities. Admissions tests therefore provide only part of the information a university admissions officer might need to fully assess the likelihood of a student succeeding in higher education, or whether the student fits within the institution’s mission.

The belief that admissions tests provide a precise measure of student performance. As noted above, standardized admissions tests are best used to sort applicants into broad categories. In some instances, however, academic institutions and the general public have viewed test data as an indication of fine distinctions between individual applicants. This perception is not only untrue, but it also leads to contentious debate regarding the fairness of using test data to sort applicants whose test performance varies only minimally. Admissions tests, whether they measure aptitude or achievement, are imprecise estimates of how students might be expected to perform in specific educational contexts. Student performance on admissions tests often varies, and therefore test scores are best viewed as a point within a range of possible scores. Individual variation in test performance can occur without special preparation (e.g., intensive test preparation courses), and marked variation can occur following such preparation. “Given that a score is a point in a range on a measure of a limited domain,” the NRC notes, “the claim that a higher score should guarantee one student preference over another is not justifiable. Thus, schools that rely too heavily on scores to distinguish among applicants are extremely vulnerable to the charge of unfairness” (National Research Council, 1999, p. 24).

An additional misconception regarding standardized tests is brought about by the use of test data in popular publications to “rank” the quality of academic institutions. Several popular publications, including the U.S. News and World Report, publicize “rankings” of U.S. colleges and universities, using average admission test scores of entering classes as part of the criteria to assess institutional “selectivity.” The use of average admissions test scores in this fashion not only provides a misleading indicator of selectivity, it also results in pressure on some institutions to weigh test data more heavily in admissions, as a means of raising the average test scores of entering students. As noted above, almost all institutions (particularly selective private institutions) weigh test scores in concert with other information, such as prior grades, essays, and letters of recommendation. Other factors, such as whether the applicant’s parents are alumni of the institution, are also given weight at many institutions. Test scores alone therefore provide little information about the “selectivity” of an institution. The pressure on institutions to weigh test data more heavily is perhaps a natural result of having average test scores published and compared with other institutions, but having higher average test scores, as noted above, does not necessarily result in a class of students who are better prepared for or more likely to succeed in higher education.

How Effective Are Standardized Tests in Predicting Performance?

The predictive validity of standardized tests may be assessed by comparing test scores to at least two criteria of interest. The first, more common method is to assess how well test scores predict student academic performance following admission. In the case of health professions education, for example, students’ test performance may be used to predict cumulative grade point average at the end of the first or second year of training. A second, but no less important, criterion of interest is students’ subsequent clinical and professional performance, which may be assessed by comparing students’ pass/fail rates on professional licensure exams, clinical clerkship or internship grades, or other measures of professional performance. In general, studies indicate that standardized tests are better predictors of the former criterion, that is, academic performance, particularly in the early years of training (e.g., first- or second-year grades), than of the latter criterion (e.g., professional skill or competence).

When used appropriately, standardized test scores, assessed in conjunction with other data, have proven useful in assisting admissions committees to predict which students are likely to succeed in a given educational context, and which are not likely to succeed. Several decades of research demonstrate that undergraduate admissions tests (i.e., SAT or ACT) have an average correlation with first-year college grades that ranges from .45 to .55, indicating that such tests explain approximately 25 percent of the variance in predicted grades (National Research Council, 1999). The predictive power of standardized tests improves when used in conjunction with prior grades, and therefore most undergraduate admissions offices rely on a combination of high school grade point average and standardized test scores to assess applicants’ academic potential, although in many cases prior grades are a more powerful predictor of future academic performance than standardized tests (Bowen and Bok, 1998).
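The step from a correlation to a share of variance explained is simple arithmetic: the share is the square of the correlation coefficient. A minimal sketch in Python (the function name `variance_explained` is an illustrative choice, not drawn from any cited source) shows that correlations of .45 to .55 correspond to roughly 20 to 30 percent of variance, consistent with the approximate 25 percent figure cited above:

```python
def variance_explained(r: float) -> float:
    """Return the proportion of variance explained by a correlation r (r squared)."""
    if not -1.0 <= r <= 1.0:
        raise ValueError("a correlation coefficient must lie between -1 and 1")
    return r * r


# Correlation range reported for undergraduate admissions tests and first-year grades.
for r in (0.45, 0.50, 0.55):
    print(f"r = {r:.2f} -> {variance_explained(r):.1%} of variance explained")
```

By the same arithmetic, about three-quarters of the variation in first-year grades is left unexplained by test scores alone.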

Similarly, standardized tests used in graduate health professions training program admissions have moderately strong correlations with early academic performance in these settings. The MCAT, for example, has been found to have a moderately strong correlation (r = .59) with medical students’ first- and second-year grades, but this prediction improves when test scores are used in combination with undergraduate grade point average (Association of American Medical Colleges, 2000). The predictive power of both the MCAT and undergraduate grades declines slightly when third-year grades or cumulative medical school grades are the criterion. When grades and test scores are used in combination, both undergraduate grades and MCAT scores are strong predictors of student performance on the U.S. Medical Licensing Exam (USMLE) Steps I–III, with coefficients of .72 for Step I, .63 for Step II, and .65 for Step III (Association of American Medical Colleges, 2000).

As with the MCAT, the Graduate Record Examination (GRE) has modest value in predicting students’ academic performance in graduate school, particularly in the early years of graduate study. Yet the predictive power of the test falls precipitously as students advance in graduate training. Sternberg and Williams (1997) found that the median correlation of overall GRE scores with graduate psychology students’ first-year academic performance was .17, while the GRE Advanced test in psychology correlated more strongly with first-year performance (.37). Neither the GRE overall score nor the Advanced test in psychology, however, was a significant predictor of second-year performance among psychology graduate students, with correlation coefficients of .10 and .02, respectively (Sternberg and Williams, 1997).

Graduate test scores are generally less effective in predicting expected outcomes of graduate training, such as the clinical performance of physicians, or research and analytic abilities of doctoral-level psychologists. Silver and Hodgson (1997), for example, found that undergraduate grade point average and MCAT scores were only moderately predictive of students’ scores on the National Board of Medical Examiners (NBME) Part I (grades and MCAT scores predicted only about one-third of the variance in NBME scores). These same predictors, however, were unrelated to clinical performance, as measured by clerkship grades (Silver and Hodgson, 1997). Similarly, Sternberg and Williams (1997) found that the GRE was not a significant predictor of graduate psychology students’ analytical, creative, practical, research, and teaching abilities, as assessed by students’ primary advisors. The GRE Analytical scale, however, was moderately predictive of students’ dissertation scores (as assessed by dissertation committee members), analytic abilities, and creativity, with correlation coefficients ranging from .16 to .24 on these measures—but only for male students.

Given that standardized tests are good, but not entirely consistent or strong, predictors of students’ future academic or career performance, some scholars have sought to better understand how standardized tests can be supplemented and/or more appropriately used in the admissions process.

Robert Sternberg and his colleagues at Yale University’s PACE Center, for example, are developing an assessment instrument that, drawing on Sternberg’s theory of “successful intelligence,”1 provides a supplementary test of analytic skills, as well as practical and creative skills, to improve prediction of undergraduate students’ academic performance when used in conjunction with SAT scores (Sternberg et al., in review). In addition, some admissions committees have sought to better understand how standardized test data can be used as an initial “screen,” allowing committees to winnow applicants who are unlikely to succeed academically in health professions educational settings based on poor test performance, while retaining applicants for consideration whose scores suggest that they are able to manage the academic rigors of health professions education. Some admissions committees have identified a cut-off “range” of scores, below which students are unlikely to succeed academically (Garcia et al., 2003). In this model, applicants whose test scores fall above the cut-off range are retained for consideration, but other “qualitative” attributes of applicants are assigned greater weight in an attempt to identify students whose personal characteristics and diverse experiences are predictive of success as health professionals (see below for more discussion of model admissions practices).

Evaluating the Predictive Validity of Standardized Test Scores for Diverse Applicants

While standardized tests have been found to be a useful tool when used in conjunction with prior grades and other information in predicting applicants’ future (particularly short-term) academic performance, the predictive power of standardized tests has been found to vary by test takers’ race and ethnicity. URM students tend to perform more poorly relative to white students than would be predicted by standardized test scores. That is, URM students with the same standardized test scores as whites tend to receive lower grades than these white students, a phenomenon that is termed “underperformance” by some scholars (e.g., Bowen and Bok, 1998), or “overprediction” by others (e.g., Wightman, 2003). Overprediction occurs when test takers’ performance on the criterion of interest (typically first-year grades following admission) is lower, on average, than would be predicted by these individuals’ standardized test scores (Wightman, 2003).
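Overprediction can be made concrete as a residual calculation: fit one common prediction line to all students, then compare each group's actual grades with the grades that line predicts. The sketch below is illustrative only. The records are invented, the group labels are placeholders, and the helper names `fit_line` and `mean_residual_by_group` are hypothetical rather than taken from the studies cited.

```python
from collections import defaultdict


def fit_line(xs, ys):
    """Ordinary least-squares fit of y = intercept + slope * x."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def mean_residual_by_group(records):
    """Fit one common line to all students, then average (actual - predicted) per group."""
    slope, intercept = fit_line([r[1] for r in records], [r[2] for r in records])
    residuals = defaultdict(list)
    for group, score, grade in records:
        residuals[group].append(grade - (intercept + slope * score))
    return {group: sum(vals) / len(vals) for group, vals in residuals.items()}


# Hypothetical (group, test score, first-year GPA) records, invented for illustration only.
records = [
    ("group_1", 30, 3.4), ("group_1", 28, 3.3), ("group_1", 26, 3.1), ("group_1", 24, 2.9),
    ("group_2", 30, 3.2), ("group_2", 28, 3.0), ("group_2", 26, 2.9), ("group_2", 24, 2.7),
]

for group, value in mean_residual_by_group(records).items():
    print(group, round(value, 3))
```

A negative mean residual for a group means the common line overpredicts that group's grades; a positive mean residual means it underpredicts them.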

While the differential predictive validity of admissions tests has largely been assessed using undergraduate admissions test data and freshman grades, the phenomenon also extends to graduate health professions training settings. Koenig, Sireci, and Wiley (1998), for example, in a study of the relationship between medical students’ MCAT scores and cumulative medical school grade point average, found that whites’ MCAT scores tended to underpredict their performance (although the magnitude of this underprediction was small), while the test scores of African American, Hispanic, and Asian American students tended to overpredict their medical school performance (for the latter two minority groups, this overprediction was statistically significant).

The tendency of URM students to perform academically at lower levels than their standardized test scores would suggest does not, in itself, indicate that tests are systematically biased against minority students. Little is known about why tests tend to overpredict academic performance among minorities, and inferences about bias based on the differential validity of tests are difficult to draw unless the criteria (grades) are assumed to be unbiased (Wightman, 2003). The trend toward test overprediction among minorities, however, suggests that admissions committees must be aware of special considerations in evaluating minority applicants’ test performance and likely academic potential. Potential reasons for minority “underperformance” are explored below, following a review of group differences in standardized test performance.

Group Differences in Standardized Test Performance

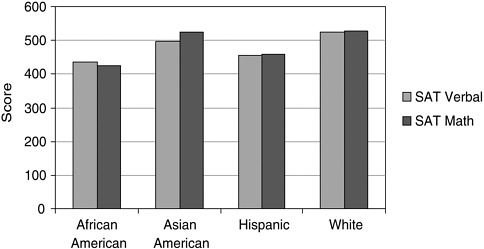

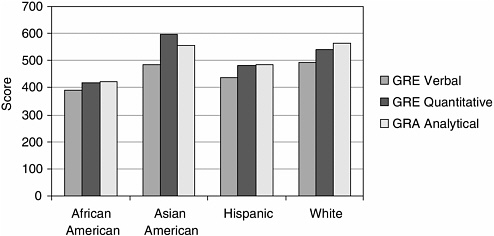

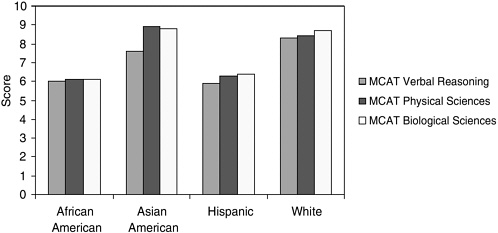

Underrepresented minority students, on average, perform poorly relative to whites and Asian Americans on standardized admissions tests. These differences are consistent across a range of tests, with the largest gaps found between white and African American test-takers, followed by gaps between whites and Hispanics. Asian-American test-takers’ mean test scores are similar to those of whites, with some exceptions. Group means for three commonly used admissions tests (SAT I, GRE, and MCAT) are displayed in Figures 2-1, 2-2, and 2-3.

Scholastic Aptitude Test

The College Board’s Scholastic Aptitude Test is the most commonly used undergraduate admissions test for selective public and private colleges. As such, it is the “gateway” examination for most students interested in pursuing health professions careers. SAT I verbal and math scores range from 200 to 800, with a standard deviation of 111 to 112. As shown in Figure 2-1, whites achieve, on average, the highest scores on both scales, with a mean SAT verbal score of 526 and a mean SAT math score of 528. African American test-takers score nearly a full standard deviation unit lower than whites on the SAT verbal and math scales (standardized difference2 [SD] = .83 and .92, respectively), while Hispanics score about three-fifths of a standard deviation unit lower than whites on both scales. Asian American test-takers perform nearly identically to whites on the SAT math scale, but about one-quarter standard deviation unit lower than whites on the SAT verbal scale (Camara and Schmidt, 1999).

FIGURE 2-1 Group means on Scholastic Aptitude Test (SAT) performance by ethnicity and race.

SOURCE: Camara and Schmidt, 1999.
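To give the standardized differences a concrete scale, they can be converted back to score points. The sketch below assumes that a standardized difference is the gap between two group means divided by the test's standard deviation (the usual definition; the function name `gap_in_points` is hypothetical) and uses the lower end, 111, of the standard deviation range reported for the SAT I:

```python
def gap_in_points(standardized_difference: float, standard_deviation: float) -> float:
    """Convert a standardized mean difference into raw score points."""
    return standardized_difference * standard_deviation


SAT_SD = 111  # the text reports 111 to 112; 111 is used here for illustration

print(round(gap_in_points(0.83, SAT_SD)))  # about 92 points
print(round(gap_in_points(0.92, SAT_SD)))  # about 102 points
```

On a 200-to-800 scale, standardized differences of .83 and .92 therefore correspond to gaps of roughly 90 to 100 points.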

Graduate Record Examination

The Graduate Record Examination Verbal, Quantitative, and Analytic scales range from 200 to 800, with a standard deviation of 108 for the Verbal scale and 127 for both the Quantitative and Analytic scales. The GRE is commonly used for admission to selective graduate (e.g., Ph.D.) programs in a range of academic fields, including most “scientist-practitioner” clinical psychology programs (many programs typically also require applicants to take a subject-area examination). Group differences in performance on the GRE are displayed in Figure 2-2. As with the SAT, white test-takers typically score higher than other racial and ethnic groups, with the exception of the GRE Quantitative, where Asian Americans score nearly a half-standard deviation unit (SD = .46) higher than whites. African Americans score, on average, one full standard deviation unit below whites on all three scales, while Hispanic test-takers score approximately half to three-fifths of a standard deviation unit below whites on all scales (ranging from .46 on the Quantitative scale to .62 on the Analytical scale; Camara and Schmidt, 1999).

FIGURE 2-2 Group means on Graduate Record Examinations (GRE) Performance by ethnicity and race.

SOURCE: Camara and Schmidt, 1999.

Medical College Admissions Test

The Medical College Admissions Test Verbal Reasoning, Physical Sciences, and Biological Sciences scales range from 1 to 15, with a standard deviation of 2.4 for all scales in 1998 (Camara and Schmidt, 1999). Group differences in performance on the MCAT are displayed in Figure 2-3. As is the case with the GRE and SAT, African American and Hispanic test-takers score lower than whites and Asian Americans, who tend to perform at similar levels on this test. African American and Hispanic test-takers score approximately 1 full standard deviation unit lower than white test-takers on all three scales; this difference ranges from .96 to 1.08 on all scales for African Americans and from .88 to 1.00 on all scales for Hispanics. Asian American test-takers score .29 of a standard deviation unit lower than whites on the Verbal Reasoning scale, perform slightly higher on the Physical Sciences (.21) scale, and score equivalently on the Biological Sciences scale (Camara and Schmidt, 1999).

FIGURE 2-3 Group means on Medical College Admissions Test (MCAT) performance by ethnicity and race.

SOURCE: Camara and Schmidt, 1999.

What Causes Group Differences in Standardized Test and Academic Performance?

The causes of the persistent gap in test performance between URM and non-URM students have been the subject of hotly contested debate, with explanations ranging from accusations of test bias to assertions of genetic differences in intellectual ability among racial and ethnic groups (e.g., Herrnstein and Murray, 1999). A growing plurality of social scientists, however, agree that historic and contemporary social and economic forces and discrimination play a powerful role in shaping differences in racial and ethnic groups’ educational opportunities and life experiences that affect test performance (American Sociological Association, 2003).

Bowen and Bok (1998), in their seminal research on the academic performance of African American and white students who attended selective U.S. colleges and universities, suggest two sets of factors that may contribute to poorer URM performance on standardized tests, as well as their tendency to perform at lower levels academically than non-URM students who achieve the same standardized test scores. The first set of factors refers to pre-college influences, such as the quality of prior academic preparation and family influences on educational preparation. The second set of factors emerges from experiences in higher education settings (and in this instance, health professions education institutions [HPEIs]).

Pre-College Influences on Educational Preparation

In the United States, educational opportunities are unevenly distributed by race and ethnicity. Racial and ethnic minority students are far more likely than white students to attend majority-minority schools, even when the former group of students is from middle- and upper-income families (Orfield, 2001). For example, more than 70 percent of African American students and 76 percent of Latino children attend schools that are majority non-white (American Sociological Association, 2003). Such schools tend to be characterized by fewer academic and financial resources, fewer credentialed teachers, fewer advanced placement courses, and higher dropout rates, even when located in neighborhoods similar to those of predominantly white schools (American Sociological Association, 2003). Students in higher-income, predominantly white schools, in contrast, are exposed to more rigorous coursework, take more courses, and have greater exposure to college preparatory and advanced placement coursework (Camara and Schmidt, 1999). Such students are also more likely to take test preparation courses outside of regular coursework. Even among racial and ethnic minority students who attend integrated schools, segregation within schools is common; African American and other URM students are more likely to be “tracked” into vocational or lower-level academic programs, which offer little or no college preparatory content (Camara and Schmidt, 1999).

To a great extent, school-based inequities reflect patterns of racial housing segregation and inequities among localities in school funding (American Sociological Association, 2003). African American and Hispanic students, particularly those in inner-city and rural communities, are more likely to live in neighborhoods characterized by high poverty rates and few local resources for schools. Not surprisingly, average per-pupil expenditures for these schools are in some cases one-half to two-thirds lower than per-pupil expenditures in some of the wealthiest public school districts, resulting in inequities in teaching resources, teacher pay and qualifications, and physical accommodations (Orfield, 2001). As Camara and Schmidt (1999) note:

The stark differences across [standardized test] assessments and other measures collectively illustrate the inequities minorities have suffered through inadequate academic preparation, poverty, and discrimination; years of tracking into dead-end educational programs; lack of advanced and rigorous courses in inner-city schools, or lack of access to such programs when available; threadbare facilities and overcrowding; teachers in critical need of professional development; less family support and experience in higher education; and low expectation (p. 13).

In addition to inequality of educational opportunities, differences in family influences may be associated with poorer URM academic performance. Bowen and Bok (1998) note that despite their efforts to account statistically for racial and ethnic differences in family socioeconomic background, students’ academic performance is likely to be affected by family attributes that are difficult to measure. “College grades,” they write, “may well be less affected by family income and parental education … than they are by the number of books at home, opportunities to travel, better secondary schooling, the nature of the conversation around the dinner table, and more generally, parental involvement in their children’s education” (Bowen and Bok, 1998, p. 80). Students from higher socioeconomic backgrounds, more often non-URM students, benefit disproportionately from these influences.

Experiences in Higher Education Institutions and HPEIs

In addition to educational and socioeconomic inequities between United States racial and ethnic groups, the poorer performance of minority students on standardized tests, and their lower academic performance than would be expected on the basis of these tests, may also be traced to experiences of URM students in higher education and HPEI settings. Bowen and Bok (1998) note that these explanations “range from … psychological theories to assertions about discrimination by faculty members, low motivation on the part of [URM] students, special problems of adjusting to predominantly white environments, and poorly conceived institutional policies that at best accept and at worse encourage lower academic aspirations by [URM] students” (Bowen and Bok, 1998, p. 81).

URM students, particularly those who are a visible yet small minority on college and HPEI campuses, may experience academic pressure or feelings of insecurity, especially in contexts where URM students have been historically excluded. Pressures to perform as well as non-URM students and to manage stress stemming from campus racial tensions have been found to be negatively associated with URM undergraduate students’ academic performance, after controlling for standardized test scores and prior academic performance in college (Smedley et al., 1993). Other researchers suggest that peer group influences, particularly within African American peer groups, may cause minority students to be less invested in academic performance and to “experience inordinate ambivalence and affective dissonance in regard to academic effort and success” (Fordham and Ogbu, 1986, p. 177).

Among the most extensively studied social and psychological factors affecting URM academic and test-taking performance is “stereotype threat.” Beginning with the work of Claude Steele and colleagues (Steele, 1997; Steele and Aronson, 1995), psychologists have found that African Americans and other groups whose intellectual abilities are stigmatized by widely held societal stereotypes (e.g., women undertaking mathematical problems) may respond to these stereotypes by performing poorly relative to their actual abilities. This tendency, termed stereotype threat, presents an additional emotional and cognitive burden for individuals who are members of the group to which the stereotype might apply. Stereotype threat exerts its influence in the form of performance-disruptive anxiety and apprehension about the possibility of confirming the stereotype’s validity in the eyes of others or for the individual affected by the stereotype. This threat applies to those who are aware of the stereotype and value high academic performance, as is the case for most African American students in higher education settings. African Americans and others affected by stereotype threat need not believe in the stereotype’s validity to be affected by its consequences, given the widespread nature of many stereotypes about minority intellectual ability.3 Rather, it is the students’ concern about disproving the stereotype that produces anxiety and affects academic performance (Aronson et al., 2001; Steele, 1997; Steele and Aronson, 1995).

The impact of stereotypes on URM students’ test performance has been demonstrated in a number of laboratory studies. Steele and Aronson (1995), for example, administered difficult GRE-type verbal questions to a sample of African American and white Stanford University undergraduates. In one condition, students were told that their performance on the test items was diagnostic of their intellectual abilities, while in another condition, students were told that their performance was nondiagnostic (i.e., the investigators were merely interested in how students solve problems, and their performance was unrelated to ability). After controlling for students’ initial skills (as measured by SAT verbal scores), the investigators found that African American students performed significantly worse than their white peers in the “diagnostic” condition, but equaled the performance of whites in the “nondiagnostic” condition. To further assess this phenomenon, the investigators asked students in both the diagnostic and nondiagnostic conditions to complete word fragments, some of which were symbolic of African American stereotypes and self-doubt. African American students in the diagnostic condition, who were expecting to take an ability-diagnostic test, completed more stereotyping word fragments and self-doubt words compared to African American students in the nondiagnostic condition or whites in either condition, indicating that the former group of students was more aware of and concerned about stereotypes of black academic performance. When the same students were asked to list their race on the exam, all of the African Americans in the nondiagnostic condition and whites in both conditions complied, whereas only 25 percent of African American students in the diagnostic condition did so, further indicating anxiety about test performance being viewed as confirming racial stereotypes (Steele, 1997).

What Are the Consequences of Heavy Reliance on Quantitative Measures in Admissions Processes?

Given that URM students tend to perform poorly relative to their white and Asian American peers on standardized tests, and that social and psychological factors may contribute to poorer test performance among URM students and other stigmatized groups, it is not surprising that admissions models that weigh quantitative data more heavily in admissions decisions often fail to achieve a racially and ethnically diverse class of students. Evidence, from both hypothetical analyses and policy changes in several states, suggests that absent admissions policies that allow for the consideration of applicants’ race or ethnicity, URM student participation in health professions will drop precipitously.

What Would Happen to URM Enrollment in Medical Schools Without Race-Conscious Admissions?

Were medical school admissions committees to drop any consideration of race or ethnicity in admissions, URM students would have difficulty gaining admission to U.S. medical schools. Cohen (2003) reports on an exercise, based on data from URM and non-URM applicants to medical schools in 2001, in which researchers used an algorithm to calculate the number of students who would gain admission based on undergraduate grades and MCAT scores. This analysis assumes that all other differences between URM and non-URM students are held constant, including industriousness, leadership, or other qualities that medical schools might consider in the admissions process. Using data from all applicants to 119 nonminority medical schools (excluding historically African American and Puerto Rican medical schools), this analysis revealed that only 513 URM students would have gained admission, a figure that is 70 percent lower than the actual number (1,697) of URM students who gained admission in 2001. These 513 students would have constituted approximately 3 percent of all medical students, a level that has not been seen since the early 1960s.
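The 70 percent figure follows directly from the two counts reported by Cohen (2003). A minimal sketch of the arithmetic is given here; the variable and function names are illustrative and do not come from the original analysis.

```python
# Counts reported in the text (Cohen, 2003); names are illustrative only.
actual_urm_admits = 1697     # URM students who gained admission in 2001
simulated_urm_admits = 513   # URM admits under the grades-and-MCAT-only exercise


def percent_reduction(actual: int, simulated: int) -> float:
    """Return the percentage by which `simulated` falls short of `actual`."""
    return (actual - simulated) / actual * 100


reduction = percent_reduction(actual_urm_admits, simulated_urm_admits)
print(f"Reduction in URM admits: {reduction:.1f} percent")
# prints: Reduction in URM admits: 69.8 percent
```

The result, roughly 69.8 percent, rounds to the 70 percent cited above.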

Effect of “Race-Neutral” Admissions on URM Participation in Higher Education in California, Texas, and Florida

In response to public referenda, judicial decisions, and lawsuits challenging affirmative action policies in 1995, 1996, and 1997 (notably, the Fifth Circuit Court of Appeals finding in Hopwood v. University of Texas, and the California Regents’ decision to ban race- or gender-based preferences in admissions), three states (California, Texas, and Florida) developed “race-neutral” undergraduate admissions policies that guaranteed admission to the state university system to applicants who graduated within the top tiers of their high school class. In Texas, the state legislature passed legislation that guaranteed admission to the state institution of choice for applicants who graduated in the top 10 percent of their high school class. Under this plan, qualifying applicants must complete an application for admission and provide standardized admission test scores, but test scores are not considered in admissions decisions. In California, the University of California (UC) Board of Regents approved a plan to confer eligibility for admission to the UC system to the top 12.5 percent of the state’s high school graduates, as well as the top 4 percent of graduates from each high school. The latter pool of students (termed “eligibility in the local context” [ELC]) includes several hundred students who are at the top of low-performing schools and thus would not qualify as being in the top 12.5 percent statewide. The vast majority of students in the top 4 percent of their class also qualify on statewide criteria, however. Because URMs are disproportionately from low-performing schools, the ELC program tends to increase the number of URMs eligible for admission. Students who clear the eligibility hurdle are admitted to the University of California, but not necessarily to the campus or major of their choice.
Similarly, Florida adopted a “percent plan” admissions policy when the state’s governor, in an attempt to preempt a statewide referendum similar to that passed in California, approved a plan to guarantee admission to the University of Florida system to the top 20 percent of the state’s high school graduates.
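The eligibility thresholds described above can be summarized in a short sketch. This is a simplified reading of the three plans as they are described in this chapter, not the states’ actual rules: the `Applicant` type, its field names, and the state codes are hypothetical, and real eligibility also depends on requirements (e.g., completed applications and coursework) that are not modeled here.

```python
from dataclasses import dataclass


@dataclass
class Applicant:
    """Hypothetical applicant record; field names are assumptions."""
    state: str               # "TX", "CA", or "FL"
    class_percentile: float  # rank within own high school (4.0 = top 4 percent)
    state_percentile: float  # rank among all graduates statewide


def guaranteed_eligible(a: Applicant) -> bool:
    """Apply the percent-plan thresholds as summarized in the text."""
    if a.state == "TX":
        # Top 10 percent of the applicant's own high school class.
        return a.class_percentile <= 10.0
    if a.state == "CA":
        # Top 12.5 percent statewide, or top 4 percent of own school (ELC).
        return a.state_percentile <= 12.5 or a.class_percentile <= 4.0
    if a.state == "FL":
        # Top 20 percent of the state's graduates, as the text describes it.
        return a.state_percentile <= 20.0
    raise ValueError(f"no percent plan modeled for state {a.state!r}")


# A top student at a low-performing California school: outside the statewide
# top 12.5 percent, but eligible through the local-context (ELC) pathway.
elc_student = Applicant(state="CA", class_percentile=3.0, state_percentile=15.0)
print(guaranteed_eligible(elc_student))
```

The California branch illustrates why the ELC pathway matters: a student ranked in the top 3 percent of a low-performing school qualifies even when the statewide criterion alone would exclude that student.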

Conservative critics of these plans have charged that percent plans are merely affirmative action plans under a different name, given that the plans’ proponents argue that the plans will maintain racial and ethnic diversity while eliminating the explicit consideration of race and ethnicity in the admissions process. In addition, these critics charge that guaranteeing admission to even the narrowest top percentage of high school graduates threatens to weaken state university admission standards, and that, because of the relatively poorer academic resources of majority-minority high schools, even top minority graduates who gain admission will be poorly prepared for the academic rigors of selective state universities (Horn and Flores, 2003). Progressive advocates are similarly dismayed by percentage plans. They charge that the plans are perversely dependent upon racial segregation in high schools (given that the racial and ethnic diversity that the plans’ proponents expect depends largely on the assumption that top graduates of majority-minority high schools will matriculate within the state university system); that the plans are likely to miss many high-achieving minority applicants who do not graduate within the top percentage of “automatic” admits; that a guarantee of admission only within state systems does not prevent the possibility that minority admits will be “clustered” in lower tiered schools within state systems; and that, for purposes of enhancing student diversity in public institutions, such plans are an inadequate substitute for the explicit consideration of applicants’ race and ethnicity (Horn and Flores, 2003).

Several major studies have been conducted to evaluate the impact of percentage plans on undergraduate student diversity in the three states (Tienda et al., 2003; Horn and Flores, 2003; Marin and Lee, 2003). Each concludes that while most students eligible for admission to state universities under the percentage plans would also have been admitted under previous admissions policies, percent plans have largely failed to restore minority enrollments to levels seen prior to the ban on the consideration of applicants’ race or ethnicity imposed in each state (Horn and Flores, 2003).

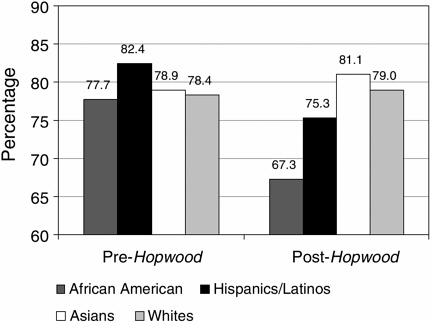

Texas’ “Top 10 Percent” Plan

Marta Tienda and colleagues (Tienda et al., 2003) evaluated trends in admission and enrollment of African American and Latino students at the University of Texas’ flagship public institutions, the University of Texas (UT) and Texas A&M University (A&M), before and after implementation of the “Top 10 Percent” plan, and found that while some minority students who graduated among the top 10 percent of their high school class gained admission to UT and A&M under this plan (students who might previously have been rejected because of low standardized test scores or poor essays) the plan has resulted in lower minority admissions and matriculation rates for African American and Latino students than during the pre-Hopwood era. Post-Hopwood, for example, African American students constituted only 2.4 percent of enrollees at A&M, a decline from 3.7 percent prior to the ruling. Hispanic student representation similarly declined, from 12.6 percent pre-Hopwood to 9.2 percent following the ruling. Similar, but less striking trends were observed at UT, where African American and Hispanic students declined from nearly 20 percent prior to the Hopwood ruling to less than 17 percent of the undergraduate population post-Hopwood. More importantly, the probability of admission to the two flagship institutions for Asian American and white students increased following implementation of the “Top 10 percent” plan, while this probability declined for African

FIGURE 2-4 Probability of admission pre- and post-Hopwood by race and Hispanic origin: Texas public flagship.

SOURCE: Tienda et al., 2003.

American and Hispanic students (see Figure 2-4). Tienda and colleagues note that while the decline in URM representation at Texas’ flagship institutions was not as dramatic as some critics had predicted (due in large part to the heavy weight placed on high school grades and class rank in UT undergraduate admissions prior to the Hopwood decision), the decline is particularly disturbing given the fact that Texas’ “minority” population will soon become a demographic majority (Tienda et al., 2003).

University of California

As with the Texas percent plan, the UC admissions plan guarantees admission to the state system to the states’ top high school graduates but differs from the Texas plan in that eligible applicants are not guaranteed admission to the campus of their choice. While data are incomplete because of California’s relatively recent (1999) move toward a “percent” admissions plan, preliminary data indicate that minority admission and matriculation in the UC system has declined and that this decline was sharpest shortly after the California Regents’ decision to eliminate the consideration of race and ethnicity in admissions. As shown in Figure 2-5, both African

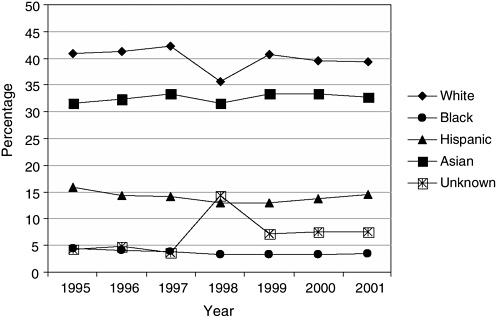

FIGURE 2-5 University of California system-wide Fall state resident freshman admissions offers, by race/ethnicity, 1995–2001.

SOURCE: UC Office of the President, Student Academic Services, OA&SA, REG004/006, January 2002, http://www.ucop.edu.news.studstaff.html.

American and Hispanic freshman admissions dropped significantly from 1995, when African Americans and Hispanics represented 4.4 percent and 15.8 percent of freshman admissions, respectively, to 1998, when African American admissions fell to 3.2 percent, while Hispanic admissions fell to 12.9 percent of the freshman class (Horn and Flores, 2003). By 2001 URM representation among freshmen in the UC system increased slightly to 3.4 percent African American and 14.6 percent Hispanic students, but these percentages are still below the levels attained prior to the Regents’ decision. The decline in URM representation was more profound at the state’s flagship institutions, the University of California at Los Angeles and the University of California at Berkeley. African American freshman admissions offers at UC Berkeley declined from 7.3 percent in 1995 to 3.2 percent in 1998 and 4.1 percent in 2001, and from 6.7 percent in 1995 to 3.3. percent in 2001 at UCLA. Similarly, Hispanic freshman admissions offers declined from 1995 (18.5 percent and 20.1 percent at Berkeley and UCLA, respectively) to 2001 (12.5 percent at Berkeley and 12.7 percent at UCLA), with these percentages dwindling to under half their 1995 levels in 1998 at both campuses (Horn and Flores, 2003).

In light of these data, Horn and Flores (2003) conclude that “ … in all three states, the gap between the racial distribution of college-freshman-age population and that of the applications, admissions, and enrollments to the states’ university systems and to their premier campuses is substantial and has grown even as the states have become more diverse…. [I]n California in particular, proportional representation of applied, admitted, and enrolled blacks and Hispanics on the flagship campuses has decreased since the end of race-conscious policies” (p. 50).

ALTERNATIVE ADMISSIONS MODELS

Given the barriers that “race-neutral” and heavily quantitatively weighted admissions policies pose to achieving racially and ethnically diverse classes, many leaders in the academic health professions have begun to reconceptualize admissions policies and practices in an attempt to enhance both the diversity and quality of admitted students. Increasingly, these needs are seen as linked. As Edwards, Elam, and Wagoner (2001) note:

Complex societal issues affect medical education and thus require new approaches from medical school admission officers. One of these issues—the recognition that the attributes of good doctors include character qualities such as compassion, altruism, respect, and integrity—has resulted in the recent focus on the greater use of qualitative variables, such as those just stated, for selected candidates … [t]he second and more contentious issue concerns the system used to admit white and minority applicants. Emphasizing character qualities of physicians in the admission criteria and selection process involves a paradigm shift that could serve to resolve both issues (p. 1207).

The trend toward emphasizing professionalism and “humanistic” factors is also reflected in recent efforts by licensing and accreditation bodies to assess these qualities among both trainees and training institutions (see also chapter on accreditation and diversity). The Liaison Committee on Medical Education, for example, is reviewing efforts by medical schools to teach professionalism and demonstrate the effectiveness of these efforts. Similarly, the NBME will require that examinees pass measures of professionalism, communication, and interpersonal skills, while the Accreditation Council on Graduate Medical Education and the American Board of Medical Specialties have defined several areas of professional competency that include among them communication and interpersonal skills and understanding and sensitivity to diversity. Specialty groups such as the American Board of Internal Medicine (ABIM) are adopting similar approaches; ABIM now requires physicians seeking board certification to demonstrate integrity, respect, and compassion in their relationships with patients and their families. These trends place greater responsibility and pressure on health professions training programs to screen applicants for humanistic attributes and enhance training to help students further develop these skills (Edwards et al., 2001).

Efforts to identify and assess important qualitative attributes of applicants to health professions training programs are not without challenges. As noted above, screening and interviewing applicants for admission to health profession training programs is a labor- and time-intensive, expensive process. Assessing applicants’ qualitative attributes often requires more of an admissions committee’s time and energy than simply reviewing quantitative data (e.g., grades and test scores), as committee members must glean this information from essays, letters of recommendation, and personal interviews. In addition, while most health professions training faculty agree on the core humanistic attributes that ideal applicants should possess (e.g., compassion, sensitivity, commitment to service), there is not always agreement on the full range of important attributes that should be assessed, or how these attributes should be assessed. Moreover, admissions committees often respond—sometimes consciously, while in other cases unconsciously—to external pressures that discourage the broader use of qualitative data in the application process. These include, as noted above, the pressure to admit applicants with higher test scores, given that some in the media and in health professions fields will misinterpret the average test scores of admitted students as an indication of institutional “selectivity,” and the fear that the institution will be less able to defend admissions decisions that consider qualitative data in the face of lawsuits filed on behalf of rejected applicants (Edwards et al., 2001).

Despite these challenges, many institutions, recognizing the need for the admissions “paradigm shift” advocated by Edwards and colleagues (Edwards et al., 2001), have begun to devise new admissions models that balance consideration of applicants’ quantitative and qualitative information in an effort to achieve both quality and diversity in assembling a student body.

Conforming Admissions Policies to the Institutional Mission

A fundamental paradigm shift in health professions training programs’ admissions policies and practices begins with an assessment of whether admissions processes and practices conform to the institutional mission. Examples of innovative admissions practices examined by the study committee suggest that this is an important first step that is not consistently addressed by many institutions. The Texas A&M College of Medicine, for example, began its reconsideration of its admissions policies and practices in the wake of the 1997 Hopwood decision with “a mindfulness of the vision and mission of the institution in assessing and selecting students” (Maldonado, 2001). Similarly, Stanford University’s School of Medicine restructured its admissions process by emphasizing the need for a “mission-oriented” review of each applicant, which emphasizes an assessment of applicants based on the institution’s mission statement and goals (e.g., categorizing and ranking applicants’ skills and attributes in a manner that reflects the institution’s educational goals; Garcia et al., 2003). Examples of how the institutional mission helped to shape new admissions practices at these schools are described below. In both cases, a review of the institutional mission suggested that medical student diversity is critically important to achieving these institutions’ goals of expanding research and service to underserved communities and improving the health of individuals in the region and nation.

Training and Composing Admissions Committees

Another important step toward a fundamental paradigm shift in health professions training programs’ admissions policies and practices involves an assessment of the admissions committee itself, including careful consideration of who should serve on the committee, how committee service will be rewarded, and how committee members should be trained.

Demographic Characteristics of Admissions Committees

Little empirical research sheds light on the demographic composition of admissions committees for health professions education programs. Anecdotal evidence suggests that the vast majority of health professions training programs tend to appoint senior faculty (who tend to be predominantly white and male) to admissions committees and occasionally include representation from one or more students. Rarely are communities affected by admissions decisions, including members of communities served by teaching hospitals and community-based training institutions, represented on admissions committees.

Kondo and Judd (2000), in one of the few empirical studies of the demographic composition of admissions committees, surveyed deans or directors of admission at 85 U.S. medical schools to assess the presence of women and racial and ethnic minorities on medical school admissions committees. The results confirmed many of the anecdotal observations noted above: medical school admissions committees were largely male (the overall ratio of men to women was 1.77 to 1.0) and predominantly white (on average, 16 percent of committee members were from URM groups, only half of the URM members were physicians, and over half of the schools surveyed reported that their admissions committees had no or only one URM physician). While 74 percent of committees had at least one medical student representative, medical students constituted only 15 percent of total admissions committee members. In addition, over nine of ten admissions committees operated on a volunteer basis (Kondo and Judd, 2000).

These data suggest that admissions committees have a great deal of room for improvement with regard to representation of diverse groups. This is not to suggest that racial and ethnic minorities or women should be expected to advocate for URM candidates, or that admissions committees should be “stacked” with individuals who might be more sympathetic to URM applicants. Rather, it suggests that admissions committees can improve on their ability to incorporate the perspectives of diverse groups that contribute to the educational experience on health professions education campuses. Racial and ethnic minority members of admissions committees might be better able to understand and contextualize the life circumstances and experiences of URM applicants and help other admissions committee members to understand a URM applicant’s abilities. Furthermore, racial and ethnic minority members of admissions committees may be well-positioned to assess URM applicants’ academic and nonacademic accomplishments, and commitment to service in racial and ethnic minority communities.

Unfortunately, because of the lack of URM faculty in many health professions training programs, the few that are present are often “pulled” in many directions and pressed into service on admissions committees and other important institutional committees. Kondo and Judd’s (2000) finding that service on the vast majority of medical school admissions committees is voluntary suggests that few rewards (e.g., consideration of committee service during promotion review) are provided for those faculty who serve in this capacity. And because minority faculty often face additional demands such as mentoring and recruiting URM students, service on institutional committees can present additional pressures that compete with teaching, research, and other work important for promotion. Service on important institutional committees, such as admissions committees, should be more appropriately rewarded to encourage the participation of URM faculty and staff.

Training of Admissions Committee Members

Another important component of fundamental change in the admission process involves training of admissions committee members. Several examples of innovative training programs are described below, including national training efforts of the Association of American Medical Colleges (AAMC). Common attributes of admissions committee training programs include skills and knowledge development in areas such as:

- How to assess qualitative attributes of applicants;
- How to interpret the academic and standardized test performance of URM students, whose performance may be influenced by a range of factors, such as poor prior academic training and psychosocial factors (e.g., stereotype threat);
- How to assess the academic and nonacademic achievements of URM students, whose life circumstances and experiences may differ from non-URM students; and
- Interviewing skills, to identify ways to better elicit and manage information from applicants.

These skills and areas of knowledge are increasingly important for admissions committee members, who, as a result of the Supreme Court decision in the Grutter v. Bollinger case, may be asked to provide comprehensive reviews of applicants’ files and reduce dependence upon standardized test data as a means of winnowing applications.

AAMC Expanded Minority Admissions Exercise

In 1970, the AAMC began exploring new approaches to medical school admissions that consider strategies to balance applicants’ “noncognitive” qualities with other traditional predictors of medical school performance (e.g., MCAT scores, undergraduate science curriculum, and grades) in an effort to increase minority admissions. Beginning with the Simulated Minority Admissions Exercise in 1970, AAMC developed a program of case studies and training for medical school admissions officers that is designed to enhance these officers’ understanding of the importance of “noncognitive” factors (such as altruism, leadership, commitment to service, and empathy), to foster an appreciation of cultural diversity in medical training, and to model interviewing skills (Cleveland, 2003). In its current iteration, the Expanded Minority Admissions Exercise (EMAE) uses case studies of actual medical school applicants and videotaped admissions interviews to help admissions officials compare their assessment of applicants with applicants’ actual admissions outcomes. Workshops are held at 28 medical schools and emphasize such strategies as:

- Reconceptualizing the initial review of applicant files to assess whether applicants have the academic skills to succeed in medical school (as assessed by MCAT scores and grades), while considering other relevant factors, such as educational and socioeconomic barriers that applicants have overcome;
- Evaluating candidates’ skills and experiences, if they meet basic academic requirements in the initial review, such as clinical and health-care experiences, volunteer activities, research experiences, work activities, and leadership; and
- Better assessing applicants’ personal qualities in the admissions interview.

A new edition of EMAE will focus on helping admissions committee members to evaluate personal characteristics more effectively and efficiently, to understand how admissions processes relate to the development of medical students’ cultural competency and care-giving skills, and to develop a greater appreciation of the different forms of “intelligence” (e.g., analytic, creative, and practical intelligence) that applicants may possess (Cleveland, 2003).

Examples of Admissions Practices That Attempt to Increase URM Admissions

Several HPEIs have developed innovative admissions strategies that utilize one or more of the concepts discussed above in an attempt to better assess attributes of applicants that are consistent with the institutional mission, as well as to increase URM admissions. Two such examples, drawing on admissions strategies at Texas A&M and Stanford Medical Schools, are offered here. These examples are not intended to suggest that these strategies represent the best efforts in the field, nor are they offered as models that must be replicated at other HPEIs. In addition, the description of these institutions’ efforts should not suggest that innovative admissions strategies are being developed only in medical schools. Rather, they reflect innovative adaptations of these unique institutions to particular circumstances and policy contexts: one, at a state institution that faced a court-mandated ban on the consideration of applicants’ race or ethnicity in the admissions process, and the other, at a prestigious, well-funded private institution that, like many other similar institutions, did not have an impressive record of inclusion of underrepresented groups. The effectiveness of these strategies in increasing URM admissions has not been rigorously evaluated; data presented below suggest that the institutions’ efforts are likely to result in increases in URM admits, but future research should more thoroughly assess the long-term effect of these strategies on URM admission rates. For other examples of admissions policies and practices that endeavor to increase racial and ethnic diversity across a range of health professions and types of institutions, see the contribution by Garcia, Nation, and Parker in this volume.

The Texas A&M Medical School Experience

Prior to the Supreme Court ruling in Grutter v. Bollinger, the decision by the U.S. Fifth Circuit Court in the Hopwood v. University of Texas suit created a mandate that all public higher education institutions in Texas (as well as other states in the Fifth Circuit) abandon the consideration of applicants’ race or ethnicity in the admissions process. In response, the Texas A&M Medical School, like other institutions in the state, attempted to develop a “race-neutral” admissions process that would allow the institution to continue to admit and enroll URM students. As a first step, the admissions committee considered the Health Sciences Center and College of Medicine’s (COM) mission and institutional goals to reassess the admissions process and, in particular, reconsider the weights placed on applicants’ MCAT scores, academic records, and personal and experiential qualities. The COM’s mission and institutional goals “became the philosophy by which the admissions committee guided and directed the admissions process” (Maldonado, 2001, p. 314). Texas A&M’s new admissions plan called for:

- “A mindfulness of the vision and mission of the institution in assessing and selecting students;

- A more inclusive approach to assessing cognitive abilities;

- A broad-minded scrutiny of applicant’s noncognitive characteristics at the pre-interview and interview phases of the evaluation process;

- Enhanced interview techniques;

- Improved protocol for admissions committee deliberations; and

- Frequent self-monitoring” (Maldonado, 2001, p. 314).

Subsequently, COM admissions officials decided to widen the pool of applicants to be interviewed by carefully analyzing the distribution of applicants’ academic scores (i.e., a combination of MCAT scores and undergraduate grade point average). A top tier of applicants with superior academic scores was identified, and these applicants were considered “automatic” interviews. These applicants, approximately 20 percent of the applicant pool, tended to be less racially and ethnically diverse, but also were less likely to enroll in the medical school if admitted (less than one in five accepted offers of admission). The admissions committee identified a middle group of applicants whose academic scores were considered acceptable (average MCAT scores and GPA of this group were 26 and 3.45, respectively) and indicative that the applicant could complete the medical school curriculum, if admitted. This middle pool included a broader representation of “underrepresented and disadvantaged” applicants, who tended to be more likely to accept offers of admission to the medical school than the top pool of applicants. Finally, the admissions committee identified a pool of applicants who were considered “high-risk” on the basis of academic scores. Applicants in this pool were not considered for interviews unless they self-identified as “disadvantaged” (Maldonado, 2001).

The Stanford University Model

The Stanford University School of Medicine has adopted a model of medical school admissions that reflects the institution’s mission, which includes recognition that racial and ethnic diversity in the medical school is essential to achieving the institution’s educational goals. This commitment to diversity begins with strong leadership from the university president and others who have articulated clear diversity goals and strongly support diversity as central to the institution’s mission (Garcia et al., 2003). The admissions process is driven by the institutional mission and includes:

- Training of admissions committee members, who are taught about the impact of “stereotype threat” on minority test performance and other contextual factors that are important for assessing minority applicants, such as the role of cultural and language barriers, family background, and the educational “distance traveled” (e.g., the impact of prior experiences of prejudice or discrimination, the greater likelihood that minority students must work during college to meet financial obligations);

- A “mission-oriented” file review, which emphasizes assessment of applicants based on the institution’s mission statement and goals (e.g., categorizing and ranking applicants’ skills and attributes in a manner that reflects the institution’s educational goals); and

- Developing partnerships with undergraduate faculty and advising staff of undergraduate colleges to support the admissions process.

Stanford’s admissions committee has developed extensive barometers for assessing applicants’ qualitative attributes. For example, candidates’ success as a role model for others is assessed by looking for evidence that the applicant has participated in activities that are visible and are intended to influence members of the community in a positive manner. Similarly, applicants’ commitment to service is assessed by evidence of community involvement, volunteer service, and other activities. Applicants’ degree of engagement in these activities is assessed by evidence of participation, particularly over a long period of time; assuming a leadership role; advocacy on behalf of the organization or issue; programmatic innovation; and, perhaps more importantly, evidence that the applicant has left a legacy or lasting impact in the community served.

MCAT data are considered early in the admissions process as an initial “screen” to identify applicants who are not likely to be able to manage the medical school curriculum. A range of scores has been identified as a “qualifying range,” based on the admissions committee’s prior experience and knowledge of the curriculum. Applicants who perform at or above the cut-off range are likely to possess the academic skills and background to pass the school’s core curricula. When the admissions committee makes final decisions, quantitative variables are not considered as “individual items of great importance” (Garcia et al., 2003), but rather, the committee attempts to answer two questions:

- “How will this candidate contribute to and benefit from the learning climate at [the] institution?; [and]

- Will accepting this candidate be in line with the mission and values of the school?” (Garcia et al., 2003).

Stanford has achieved significant success in recruiting and admitting URM students (over 20 percent of the medical students in 2002–2003 were African American, Hispanic, or Native American). Of these, nearly three in five (57 percent) are involved in scholarly research through the institution’s Medical Scholars Program, a rate that is comparable to participation in research among Stanford’s non-URM students. Stanford’s success with URM students is providing longer-term benefits: follow-up data reveal that 18 percent of the school’s URM graduates have a full-time career in academic medicine (Garcia et al., 2003).

The institution supports its admissions efforts by extending academic and social support to URM students and assesses its progress in attracting and retaining URM students. The Early Matriculation Program (EMP), in particular, offers URM and non-URM students a summer premedical curriculum that provides an early introduction to the culture of the medical school, builds student confidence and leadership skills, and promotes scholarship. Follow-up data reveal that EMP participants have a slightly higher than average rate of receipt of Stanford Medical Student Scholar awards, have a lower rate of attrition, and have published at slightly higher levels (22 percent, or more than one in five) than other Stanford Medical School students (Garcia et al., 2003).

SUMMARY AND RECOMMENDATIONS

This chapter has reviewed typical admissions policies and practices of health professions educational programs, with particular attention to the role of standardized tests in the admissions process. Standardized test scores are generally good predictors of subsequent academic performance, but they have been used—in some cases inappropriately—as a barometer of applicants’ academic “merit,” often to the detriment of URM students. URM students often score lower than their white or Asian American peers on a range of standardized tests, including the SAT, GRE, and MCAT. This disparity occurs for a variety of reasons, principally because of poorer educational opportunities afforded to African American, Latino, and American Indian/Alaska Native students. These students are more likely than non-URM students to attend racially and economically segregated, poorly funded schools that offer few (if any) advanced placement and college preparatory classes, have fewer credentialed teachers, and suffer from a climate of low expectations. Standardized test performance is variable and may be improved significantly through costly private test preparation classes. Moreover, even among those URM students who are invested in high academic performance, social and psychological factors, such as the pressure to perform above levels suggested by stereotypes of low minority academic ability, may serve to suppress their test performance.

When quantitative variables such as standardized test scores are weighted heavily in the admissions process, URM applicants, because of their generally poorer academic preparation and test performance, are less successful in gaining admission than non-URM applicants. Absent admissions practices that allow applicants’ race or ethnicity to be considered along with other personal characteristics of applicants, URM student participation in health professions education is likely to decline sharply. States that have implemented “percent solution” admissions strategies (i.e., where a top percentage of high school graduates are guaranteed admission to the state university system) have found that URM admissions have generally declined. In California, even when URM students gain admission, these students are not guaranteed a seat at the university of their choice, which may result in URM students disproportionately matriculating in “lower-tier” campuses within the University of California system.

These barriers to URM admission have led some organizations and institutions to reconceptualize their admissions policies and practices to place greater weight on applicants’ qualitative attributes, such as leadership, commitment to service, community orientation, experience with diverse groups, and other factors. This shift of emphasis to professional and “humanistic” factors is also consistent with a growing recognition in health professions fields that these attributes must receive greater attention in the admissions process to maintain professional quality, to ensure that future health professionals are prepared to address societal needs, and to maintain the public’s trust in the integrity and skill of health professionals. Anecdotal evidence suggests that this shift may also reduce barriers to admission of qualified URM applicants, thereby achieving the dual goals of improving both the quality and diversity of health professions students.

Recommendation 2-1: HPEIs should develop, disseminate, and utilize a clear statement of mission that recognizes the value of diversity in enhancing its mission and that of the relevant health care professions. The mission statement should identify the stakeholders (e.g., the community or public served) to whom the institution is accountable as well as (where applicable) state, regional, or national health workforce goals.

Recommendation 2-2: HPEIs should establish explicit policies regarding the value and importance the institution places on the teaching and provision of culturally competent care and the role of institutional diversity in achieving this goal.

Recommendation 2-3: Admissions should be based on a comprehensive review of each applicant. Admissions committees should determine which attributes of applicants best support the mission of the institution and assess these attributes as part of the admissions process. Such attributes include, but are not limited to, applicants’ race or ethnicity, socioeconomic background, cross-cultural experience, life choices, multilingual abilities, interpersonal skills, cultural competence, leadership qualities, barriers the applicant has overcome, and other attributes that reflect the institutional mission. Admissions models should balance quantitative data (i.e., prior grades and standardized test scores) with these qualitative characteristics.

Recommendation 2-4: Admissions committees should include voting representation from underrepresented groups, including, but not limited to, racial/ethnic minorities, and should reflect the geographic and socioeconomic diversity of the communities served by the institution. In addition, health professions education institutions should provide special incentives to faculty for participation on admissions committees (e.g., by providing additional weight or consideration for service during promotion review) and provide training for committee members on the importance of diversity efforts and means to improve diversity within the committee purview.

REFERENCES

American Sociological Association. 2003. Brief of the American Sociological Association et al., as Amicus Curiae in Support of Respondents. Merritt DJ, Lee BL, Attorneys for Amici Curiae. Washington, DC: American Sociological Association.

Aronson J, Fried CB, Good C. 2001. Reducing the effects of stereotype threat on African American college students by shaping theories of intelligence. Journal of Experimental Social Psychology 38(2):113–125.

Association of American Medical Colleges (AAMC). 2000. The predictive validity of the Medical College Admission Test. Contemporary Issues in Medical Education 3(2):1–2.

Bobo LD. 2001. Racial attitudes and relations at the close of the twentieth century. In: Smelser NJ, Wilson WJ, Mitchell F, eds. America Becoming: Racial Trends and Their Consequences. Vol. 1. Washington, DC: National Academy Press. Pp. 264–301.

Bowen WG, Bok D. 1998. The Shape of the River: Long-Term Consequences of Considering Race in College and University Admissions. Princeton, NJ: Princeton University Press.

Calvin A. 2000. Use of standardized tests in admissions in postsecondary institutions of higher education. Psychology, Public Policy, and Law 6(1):20–32.