4

Technology Issues

As described in Chapter 1, an election is not a single event but rather a process. It is thus helpful to consider the information technology (IT) of voting in two logically distinct categories: IT for voter registration and IT for voting.

4.1 INFORMATION TECHNOLOGY FOR VOTER REGISTRATION

Voter registration, too, is affected by information technology. Although the subject has received comparatively little attention in public debate, that is beginning to change. Voter registration is the gatekeeping process that seeks to ensure that only those eligible to vote are allowed to vote when they show up at the polls. Although much of the voter registration process unfolds before Election Day, the final step generally occurs on Election Day itself: citizens register to vote beforehand and, presuming that they vote at the polls, their voting credentials are checked on Election Day.

Voter registration is a complex process, as one might expect of a decentralized endeavor that involves millions of voters. Historically, voter registration has been a local function, and the primary function of election officials. However, under the Help America Vote Act of 2002 (HAVA), states are required to assume responsibilities that have previously been the province of individual local election jurisdictions. Specifically, HAVA calls for the states to create, for use in federal elections, a “single, uniform, official, centralized, interactive computerized statewide voter registration list defined, maintained, and administered at the State level,” containing registration information and a unique identifier for every registered voter in the state. This requirement applies to essentially all states; according to the Department of Justice, this requirement would not be satisfied by local election jurisdictions continuing to maintain their own nonuniform voter registration systems in which records are only periodically exchanged with the state. Rather, HAVA requires a true statewide system that is both uniform in each local election jurisdiction and administered at the state level.1

Once a voter registry has been established, two primary technology-related tasks for voter registrars are to keep ineligible individuals off the registration lists and to make sure that eligible ones who are on the lists stay on the lists. A third task—registering new voters—occurs on a regular basis as people come of age or move into a community and want to vote, and it normally spikes right before or during an election. However, registering new voters occurs on a “retail” case-by-case basis, in contrast to the purging function, which is necessarily done “wholesale.”

Purging tasks arise because individuals identified as eligible voters may lose their eligibility for a number of reasons. A list of such reasons from Florida is typical2—voters may lose eligibility due to felony convictions, civil court rulings of mental incapacity, death, and inactivity. In addition, a voter may cease to be properly registered, because his or her eligibility to vote in particular electoral contests can be affected by a change in residence or by redistricting that places his or her residence in a different voting district. Finally, an individual registered to vote in more than one local election jurisdiction, even if he or she is otherwise an eligible voter, may vote only in the location in which he or she is legally entitled to vote.

Because lists of registered voters contain millions of entries, the purging of a voter registration list must be at least partially automated. That is, a computer is required to compare a large volume of information received from other secondary sources (e.g., departments of vital statistics for death notices, law enforcement or corrections agencies for felony convictions, departments of tax collection or motor vehicles for recent addresses) against its own database of eligible voters to determine if a given individual continues to be eligible. Note also that states do not in general check across state boundaries to see if voters are registered in more than one state or if they have voted in two states on Election Day.

2 Florida Department of State, Florida Voter Registration System: Proposed System Design and Requirements, January 29, 2004. Available at http://election.dos.state.fl.us/hava/pdf/FVRSSysDesignReq.pdf.

Though this task sounds like a relatively simple one—just compare the lists3—it is enormously complicated by two facts: (1) the same individual may be represented on the different lists in different ways (John Jones and John X. Jones may refer to the same person, and he may have given the former name in registering to vote and the latter name in obtaining a driver’s license) and (2) the same name (e.g., John Jones) may refer to many different people. (This problem would be greatly ameliorated by the use of an identifier unique to the individual, such as a Social Security number, but for a variety of historical and legal reasons, the nation has chosen to eschew such use.)

Thus, there must be some specific criteria for determining whether or not different names refer to the same person. For example, to deal with the first fact above, one criterion might be this: If similar names have the same home address associated with them, the names refer to the same individual. Such a criterion thus requires a rule for determining “similarity” or a match. One such matching rule might be “if the first and last names are identical, consider the full name a match.” Under this approach, John Jones and John X. Jones would be deemed to be the same individual only if they share the same home address, but John Jones and Mary Jones would be deemed different individuals even if they shared the same home address. Suffixes on names, such as Jr. and Sr., can also cause problems in a similar manner.

Similarly, the second fact involving identical names might require a criterion such as, “If the name is associated with several different home addresses, there are as many different individuals as there are home addresses.” In this case, the matching criterion applies to home addresses, which are somewhat less ambiguous than names.4
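The two example criteria above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not a description of any actual registration system: the `Record` fields, the function names, and the choice to compare text case-insensitively are all hypothetical, and a real system would also need to normalize addresses and handle suffixes such as Jr. and Sr.

```python
from typing import NamedTuple


class Record(NamedTuple):
    """A hypothetical, simplified registration or license record."""
    first: str
    middle: str
    last: str
    address: str


def names_match(a: Record, b: Record) -> bool:
    """Example matching rule: identical first and last names (middle ignored)."""
    return (a.first.lower(), a.last.lower()) == (b.first.lower(), b.last.lower())


def same_person(a: Record, b: Record) -> bool:
    """First criterion: matching names at the same home address."""
    return names_match(a, b) and a.address.lower() == b.address.lower()


def count_individuals(records: list[Record]) -> int:
    """Second criterion: for records that share one name, count one
    individual per distinct home address."""
    return len({r.address.lower() for r in records})


john = Record("John", "", "Jones", "12 Elm St")
john_x = Record("John", "X.", "Jones", "12 Elm St")
mary = Record("Mary", "", "Jones", "12 Elm St")

print(same_person(john, john_x))  # True: same first and last name, same address
print(same_person(john, mary))    # False: first names differ
```

Even this small sketch shows where policy enters: ignoring the middle name and ignoring letter case are both choices that change which records are treated as the same person.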

The problem of determining whether names match is an algorithmic one. A simple and obvious algorithm calls for a perfect character-by-character match between names. But names in a database may be misspelled (e.g., due to typographical errors), and thus an algorithm that is relatively insensitive to such errors may be of more utility in determining a match. Names can be pronounced the same way but spelled differently and vice versa. One class of algorithms developed to handle such problems is Soundex algorithms.5 These algorithms are widely used today for applications involving name matching, and their applications include name matching in comparisons of voter registration databases with other databases.
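To make the idea concrete, the following is a minimal sketch of one common member of this family, usually called American Soundex. The text does not say which variant any particular election system uses, so the choice of variant here is an assumption. The code keeps the first letter, maps the remaining consonants to digits, collapses adjacent letters that share a digit, and pads or truncates the result to four characters.

```python
# Digit codes for consonants in American Soundex; vowels, H, W, and Y have none.
CODES = {}
for digit, letters in enumerate(["BFPV", "CGJKQSXZ", "DT", "L", "MN", "R"], start=1):
    for ch in letters:
        CODES[ch] = str(digit)


def soundex(name: str) -> str:
    """Return the four-character American Soundex code for a name."""
    letters = [c for c in name.upper() if c.isalpha()]
    if not letters:
        return ""
    result = letters[0]
    prev = CODES.get(letters[0], "")
    for ch in letters[1:]:
        if ch in "HW":
            continue  # H and W do not separate letters that share a code
        code = CODES.get(ch, "")
        if code and code != prev:
            result += code
        prev = code  # a vowel resets prev, so a repeated code is kept after it
    return (result + "000")[:4]


print(soundex("John"), soundex("Jahn"))      # J500 J500
print(soundex("Robert"), soundex("Rupert"))  # R163 R163
```

Because "John" and "Jahn" receive the same code, a typographical error of this kind would not prevent a match. The same property is what makes such algorithms prone to matching names that belong to different people.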

It is useful to distinguish between a “strong match” and a “weak match.” A strong match is one in which there is a very high probability that two data segments represent the same person. A weak match indicates that two data segments are similar, but additional information or research is necessary to determine if the two data segments represent the same person. In addition, there can be many legal ways to identify a citizen who is eligible to vote, which suggests that information in multiple databases can be used to determine eligibility.
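One way to picture the distinction is a function that labels a pair of records as a strong match, a weak match, or no match. The sketch below is an assumption-laden illustration: it uses the standard-library `difflib.SequenceMatcher` as a stand-in similarity measure, and the 0.8 threshold, the use of date of birth, and the function name are hypothetical rather than drawn from any actual system.

```python
from difflib import SequenceMatcher


def classify_match(name_a: str, name_b: str, dob_a: str, dob_b: str,
                   weak_threshold: float = 0.8) -> str:
    """Label a pair of records 'strong', 'weak', or 'none'.

    The rule and the threshold are illustrative assumptions:
    - strong: identical names and identical dates of birth
    - weak:   names merely similar; needs human follow-up
    """
    similarity = SequenceMatcher(None, name_a.lower(), name_b.lower()).ratio()
    if similarity == 1.0 and dob_a == dob_b:
        return "strong"
    if similarity >= weak_threshold:
        return "weak"
    return "none"


print(classify_match("John Jones", "John Jones", "1960-01-02", "1960-01-02"))  # strong
print(classify_match("John Jones", "Jahn Jones", "1960-01-02", "1960-01-02"))  # weak
print(classify_match("John Jones", "Mary Smith", "1960-01-02", "1975-03-04"))  # none
```

In a design like this, only strong matches would be candidates for automatic action, while weak matches would be routed to a person for additional research.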

Whatever the approach, it is important to recognize that there is a trade-off between false negatives and false positives. Any approach will identify some names as different when they do refer to the same individual (false negative) and other names as similar when they do not refer to the same individual (false positive).

Consider the significance of this problem for purging of a voter registration list. Any approach will incorrectly identify some registered voters as ineligible and thus improperly purge them (false positive) and will also fail to find ineligible voters who are not identified as such and thus remain on the list (false negative). For example, John Jones on the voter registration list and Jahn Jones on the convicted felon list may constitute a weak match, and without additional research, John Jones may be improperly removed from the voter registration list (a false positive). On the other hand, the names Sam Smith on the voter registration list and Sam X. Smith on the convicted felon list (with both names referring to the same person) may result in Sam Smith improperly remaining on the voter registration list (a false negative).

It is a fundamental reality that the rate of false positives and the rate of false negatives cannot be driven to zero simultaneously. The more demanding the criteria for a match, the fewer matches will be made. Conversely, the less demanding the match, the more matches will be made. For example, a requirement that names match (using all of the letters), addresses match, and dates of birth match is more demanding and will result in fewer matches than if the requirement is that only names and addresses match and only some of the letters and/or sounds in the name are used to determine a match. The choice of criteria for determining similarity is thus an important policy decision, even though it looks like a purely technical decision.
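The effect of more and less demanding criteria can be seen on a toy data set. Everything in the sketch below is made up for illustration: the records, the field names, and the two rules. The strict rule requires the full name, address, and date of birth to agree; the lenient rule uses only the first initial, the last name, and the address.

```python
# Hypothetical voter roll and hypothetical secondary list (all data invented).
voters = [
    {"name": "John Jones", "address": "12 Elm St", "dob": "1960-01-02"},
    {"name": "Sam Smith", "address": "9 Oak Ave", "dob": "1971-05-06"},
    {"name": "Ann Lee", "address": "3 Pine Rd", "dob": "1985-09-10"},
]
secondary = [
    {"name": "Jahn Jones", "address": "12 Elm St", "dob": "1960-01-02"},
    {"name": "Sam X. Smith", "address": "9 Oak Ave", "dob": "1971-05-06"},
    {"name": "Ann Lee", "address": "3 Pine Rd", "dob": "1985-09-10"},
]


def strict_key(rec):
    """Demanding rule: full name, address, and date of birth must all agree."""
    return (rec["name"].lower(), rec["address"].lower(), rec["dob"])


def lenient_key(rec):
    """Less demanding rule: first initial, last name, and address only."""
    parts = rec["name"].lower().split()
    return (parts[0][0], parts[-1], rec["address"].lower())


def matches(roll, other, key):
    """Names on the roll whose key also appears in the other list."""
    other_keys = {key(r) for r in other}
    return [v["name"] for v in roll if key(v) in other_keys]


print(matches(voters, secondary, strict_key))   # ['Ann Lee']
print(matches(voters, secondary, lenient_key))  # ['John Jones', 'Sam Smith', 'Ann Lee']
```

The strict rule flags one voter and the lenient rule flags three. Which of those flags are correct depends on facts the lists alone do not contain, which is why the choice between the rules is a policy decision.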

Furthermore, the considerations discussed above suggest that the presence or absence of human intervention in the purging process is important. That is, one should regard as very different a purging system that is fully automated and one that uses technology only to flag possible individuals for further attention by some responsible human decision maker. Because the human decision maker would use different criteria to render a decision (including the use of common sense and contextual factors), the rate of false positives would be reduced—and considerably so if the different criteria could be applied consistently.

In addition, the use of lists of inactive voters can provide some protection against false positives. A purge removes a voter from the voter registration list entirely, and thus this voter would either be denied the ability to vote or might be allowed to cast a provisional ballot. But if a voter who might otherwise have been purged is moved instead to an inactive voter list, the voter still remains on the rolls—and may vote in a subsequent election.

Finally, the purging of voter registration lists must itself be seen in a larger context, as such purging can be used as a political tool to manipulate the outcome of elections. One such use is to purge in local election jurisdictions chosen so that a purge would have differential effects on various voting blocs. Statewide management of voter registration lists reduces the possibility that decisions to purge are made locally, but there may be nothing in state law that in principle or in practice prevents state officials from ordering such purges for political reasons.

The issue above is important because there must be some criterion by which to determine if a purging is undertaken overaggressively or underaggressively. An overaggressive purge purges individuals who should be retained on the rolls. An underaggressive purge does not purge individuals who should not be retained on the rolls. Either type of purge can be undertaken for political reasons, depending on the demographics of those inappropriately retained on or purged from the rolls.

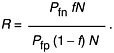

One approach to understanding the nature of a purge is to compare the rate at which eligible voters are inappropriately purged (E) with the rate at which ineligible voters are not purged (I). That is, define R as the ratio of I to E. Thus, R reflects the number of ineligible voters who are not purged for every eligible voter who is purged. Those who put a very high premium on eligible voters not being purged want E to be as low as possible, and thus tend to favor large R. Those who put a very high premium on purging the voter rolls of all ineligible voters want I to be as small as possible, and thus tend to favor small R.

In any event, given a certain fraction of ineligible voters in the voter registration database, the choice of R determines a great deal about the performance requirements of the purging process. As Box 4.1 illustrates, the choice of R fixes the relative effectiveness of the purging process in identifying eligible voters for retention compared with not identifying ineligible voters for purging.
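A short numerical sketch may help. It uses the quantities defined in Box 4.1 (Pfp, Pfn, and f) and assumes that I and E are counted as numbers of people, so that R = (f × Pfn) / ((1 − f) × Pfp) for a roll of any size N. The function names and all of the numbers below are hypothetical and chosen only to show the arithmetic.

```python
def purge_outcomes(n: int, f: float, p_fp: float, p_fn: float) -> dict:
    """Expected counts in each cell for a roll of n names.

    f    = fraction of the roll that is actually ineligible
    p_fp = probability an eligible voter is wrongly purged
    p_fn = probability an ineligible voter fails to be purged
    """
    eligible = (1 - f) * n
    ineligible = f * n
    return {
        "eligible_purged": eligible * p_fp,            # E
        "eligible_retained": eligible * (1 - p_fp),
        "ineligible_purged": ineligible * (1 - p_fn),
        "ineligible_retained": ineligible * p_fn,      # I
    }


def ratio_r(f: float, p_fp: float, p_fn: float) -> float:
    """R = I / E; the roll size n cancels out."""
    return (f * p_fn) / ((1 - f) * p_fp)


# Hypothetical inputs: 1,000,000 names, 2% ineligible,
# 0.1% of eligible voters wrongly purged, 10% of ineligible voters missed.
cells = purge_outcomes(1_000_000, f=0.02, p_fp=0.001, p_fn=0.10)
print(round(cells["eligible_purged"]))      # 980
print(round(cells["ineligible_retained"]))  # 2000
print(round(ratio_r(0.02, 0.001, 0.10), 2)) # 2.04
```

Under these assumed numbers, roughly two ineligible voters remain on the rolls for every eligible voter who is wrongly removed. Tightening the match criteria would lower Pfp and raise Pfn, pushing R upward.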

Note also that Election Day credential checking involves a similar set of considerations. A citizen presents his or her credentials at the polling place, and these credentials are checked against a listing of eligible voters. Again, the issue of similarity is relevant. If the eligibility credential is an excerpt from the voter registration database (e.g., a voter registration card), the possibilities for error are minimized. But if, instead, the requirement is to prove one’s identity with some other set of credentials, such as a driver’s license, a judgment of similarity must again be made. However, this time the criteria—which may or may not be the same as those used for purging voter registration lists—work in the opposite direction. A demanding similarity criterion will tend to exclude eligible voters, while a less demanding criterion will allow more ineligible individuals to vote (or at least result in more confusion between different individuals).

Against the backdrop of the discussion above, a number of important questions arise:

4-1. Are the relative priorities of election officials in the purging of voter registration databases acceptable? As noted above, purging databases can be conducted in an overaggressive manner or in an underaggressive manner. The politically correct response for public consumption is that it is equally important to purge the registration rolls of ineligible voters and to ensure that no eligible voters are purged, but of course in practice officials must choose the side on which they would prefer to err. An explicit statement of R—the number of ineligible voters who are not purged for every eligible voter who is purged—is thus a quantitative measure of the direction in which a given policy is leaning. (Of course, being able to make an estimate of R requires that data be collected that indicate the probability that an eligible voter on the voter registration rolls is wrongly purged, the probability that an ineligible voter on the voter registration rolls fails to be purged, and the fraction of the voter registration rolls that actually consists of ineligible voters.)

Box 4.1

Let $P_{fp}$ = the probability that an eligible voter on the voter registration (VR) rolls is wrongly purged. Let $P_{fn}$ = the probability that an ineligible voter on the VR rolls fails to be purged. Let $f$ = the fraction of the VR rolls that actually consists of ineligible voters. Each cell entry in the table below indicates the probability of the action taken given the status of an individual on the VR roll. In the ideal case (a perfect algorithm), the likelihood of purging an eligible individual is zero, as is the likelihood of not purging an ineligible individual.

| Status of individual | Purged | Not purged |
| --- | --- | --- |
| Eligible | 0 | 1 |
| Ineligible | 1 | 0 |

In the more realistic case, with nonzero $P_{fp}$ and $P_{fn}$, the probabilities are as follows:

| Status of individual | Purged | Not purged |
| --- | --- | --- |
| Eligible | $P_{fp}$ | $1 - P_{fp}$ |
| Ineligible | $1 - P_{fn}$ | $P_{fn}$ |

By definition, $f$ is the fraction of the database of size $N$ that consists of ineligible individuals. Based on the tables above, the cell entries below indicate the number of people who are eligible (ineligible) who are subsequently purged or not purged.

| Status of individual | Purged | Not purged |
| --- | --- | --- |
| Eligible | $(1 - f) N P_{fp}$ | $(1 - f) N (1 - P_{fp})$ |
| Ineligible | $f N (1 - P_{fn})$ | $f N P_{fn}$ |

If we define R as

$$R = \frac{\text{number of ineligible voters who are not purged}}{\text{number of eligible voters who are purged}},$$

then

$$R = \frac{f N P_{fn}}{(1 - f) N P_{fp}} = \frac{f}{1 - f} \cdot \frac{P_{fn}}{P_{fp}}.$$

4-2. What standards of accuracy should govern voter registration databases? In voting machines, a Federal Voting Systems Standard specifies a maximum error rate of 1 in 500,000 voting positions (e.g., 1 in every 2,000 punch card ballots with 250 voting positions on each card). What might be a comparable standard for the accuracy of a voter registration database, taking into account that people move frequently and die eventually?

4-3. How well do voter registration databases perform? How many people who think they are registered really are registered? How many people who are registered should be registered? The first question requires a general population survey that is linked to registration records (the American National Election Studies did this for many years). The second question requires a sample from the registration list followed up with diligent efforts to contact the people and the collection of information about them.

4-4. What is the impact on voter registration database maintenance of inaccuracies in secondary databases? The quality of databases other than those for voter registration affects maintenance of voter registration databases. In general, databases such as those of departments of motor vehicles (DMVs), departments of correction, and departments of vital statistics are not under the control of the state election officials. (Vital statistics are usually under the control of a county or municipality.) For example, if a DMV database is highly inaccurate in its recording of addresses, and a decision on voter eligibility depends on a match between the address on the voter registration database and that of the DMV, the probability of purging an eligible voter increases, all else being equal. A related point is the fact that database interoperability is in general a nontrivial technical task. The secondary databases needed for verification of voter registration are developed for entirely different purposes, and both the syntax and semantics of those databases are likely to be different from those of the voter registration databases.

Finally, these secondary databases are subject to state legislative control as well, and there is a wide range of options for how legislatures can affect their disposition and use in the voter registration process. For example, states could explicitly disclose these sources, so that a voter could be especially careful to ensure that he or she is not being misrepresented in such databases. States could mandate that secondary databases be managed with a higher level of care when they are used for purposes related to voter registration. Or states could mandate that in the interests of protecting voter privacy only certain types of data in these secondary databases would be available to the voter registration process. More generally, refining criteria for the various legal reasons for purges has been and will be on the agenda of many legislatures, and discretion based in local election jurisdictions about how to conduct purges will probably be subject to increased scrutiny.
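The interoperability point raised under question 4-4 (differing syntax and semantics across databases) can be illustrated with a small sketch. The two record layouts below are invented for the example: a DMV-style record that stores the name as "LAST, FIRST M" with a single address string, and a registration-style record with separate fields. Neither layout, nor any field name, is taken from a real system.

```python
def normalize_address(raw: str) -> str:
    """Lowercase, strip punctuation, and abbreviate a few street words.
    The abbreviation table is a tiny illustrative sample."""
    abbreviations = {"street": "st", "avenue": "ave", "road": "rd"}
    tokens = raw.lower().replace(".", "").replace(",", " ").split()
    return " ".join(abbreviations.get(t, t) for t in tokens)


def from_dmv(rec: dict) -> dict:
    """Convert a hypothetical DMV record ('LAST, FIRST M') to a common form."""
    last, _, rest = rec["NAME"].partition(",")
    given = rest.split()
    return {
        "first": given[0].lower() if given else "",
        "last": last.strip().lower(),
        "address": normalize_address(rec["ADDR"]),
    }


def from_vr(rec: dict) -> dict:
    """Convert a hypothetical voter registration record to the same form."""
    return {
        "first": rec["first_name"].lower(),
        "last": rec["last_name"].lower(),
        "address": normalize_address(f'{rec["street"]} {rec["city"]}'),
    }


dmv = {"NAME": "JONES, JOHN X", "ADDR": "12 Elm Street, Springfield"}
vr = {"first_name": "John", "last_name": "Jones",
      "street": "12 Elm St.", "city": "Springfield"}

print(from_dmv(dmv) == from_vr(vr))  # True
```

The two records agree only after both are mapped to a common form. Every such mapping rule (dropping the middle initial, abbreviating "Street") is another place where errors in a secondary database, or in the conversion itself, can affect a purge decision.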

4-5. Will individuals purged from voter registration lists be notified in enough time so that they can correct any errors made, and will they be provided with an easy and convenient process for correcting mistakes or making appeals? From the discussion above, it is clear that some number of eligible voters will be inappropriately purged in any large-scale operation. Given that the right to vote is a precious one, voters who may have been purged incorrectly should have the opportunity to correct such mistakes before they cast their votes.6

4-6. How can the public have confidence that software applications for voter registration are functioning appropriately? As the discussion in Section 4.2.1 indicates, software for voting systems is subject to a variety of certification and testing requirements that are intended to attest to its quality. But there are no such standards or requirements for software associated with voter registration. Voters who lack confidence in the operation of voter registration systems will be uncertain about their ability to vote on Election Day. Large numbers of such voters will almost surely result in reduced turnout.

4-7. How are privacy issues handled in a voter registration database? In many states, much of the information in a voter registration database is public information. HAVA directs states to coordinate those databases with drivers’ license databases of state DMVs and with the U.S. Social Security Administration. States may choose to coordinate with other databases as well, such as databases containing identification information for felons and death records. Much of the information in these other databases is not relevant to one’s eligibility. For example, one’s driving record is contained in a database of licensed drivers maintained by the state DMV. This database may be used to verify names and addresses for voter registration purposes (checking consistency, for example), but one’s driving record is not relevant for determination of voting eligibility. How do state laws, regulations, or guidelines limit the fields that constitute public information or the extent to which the interfacing agencies are permitted to retain personal data received from the other agencies during the matching process required for voter registration? How, if at all, is such nonrelevant information protected from inappropriate disclosure? How might such nonrelevant information be used to bias voter turnout for partisan purposes? (Indeed, much of the information contained in these databases is for sale by the states, and the purchasers of such information are often political parties.)

4-8. How can technology be used to mitigate negative aspects of a voter’s experience on Election Day? For example, in many large jurisdictions, check-in lines at polling places can be both long and uneven. One frequently heard reason for this phenomenon is that any given poll worker checking registration can only check certain last names (e.g., all those names starting with letters A through G). This restriction arises because the roll books containing lists of registered voters are broken up that way, and the poll workers have no flexibility on this point. However, information technology might be used to provide the same information to poll workers without the need for such a procedure.7

4-9. How should voter registration systems connect to electronic voting systems, if at all? Today, there is an “air gap” between voting, even if done electronically, and checking for voter registration, which is done manually. However, in the interests of efficiency and rapid movement through polling places, it is easy to see a persuasive argument for why these functions should be integrated. A voter could simply present an electronic registration card to a voting station and be allowed to cast a ballot. This arrangement might facilitate easy, vote-anywhere voting in thousands of locations across a state rather than in just one precinct location, and also early voting, in which a voter could vote at a central site. In both situations, a voter could have high assurance that he or she received the correct ballot form corresponding to his or her registration address. The most obvious argument against this arrangement is that it potentially compromises the secrecy of voting in a major way. Nevertheless, it is easy to imagine that both voter registration and voting might be integrated in packages of services offered by election service vendors.

4.2 INFORMATION TECHNOLOGY FOR VOTING

IT for balloting is what is usually meant by “electronic voting systems”—the systems described in Chapter 3. This section addresses security and usability issues. Usability can be characterized as functionality that facilitates a voting system’s accurate capture of a voter’s intent in casting a ballot and assures the voter that his or her ballot has been so captured. Furthermore, the voting system must record that ballot accurately until it is tabulated, even in the face of deliberate wrongdoing (security) or accidental error or mishap (reliability).

4.2.1 Approaching the Acquisition Process

In considering the purchase of any given voting system, an election official’s first step is often to consider systems that have been qualified under a process established by the Election Assistance Commission (EAC). Specifically, a vendor’s voting system is qualified if an Independent Testing Authority (ITA) asserts that the system in question meets or exceeds the Federal Election Commission’s 2002 Voting Systems Standards (Box 4.2).8 ITAs are designated by the National Association of State Election Directors, and a vendor pays an ITA for its work in qualifying a system.

Knowledge that a given voting system has been qualified according to a particular standard provides some degree of assurance that the system in question meets a minimum set of requirements. Nevertheless, the fact that a given voting system has been qualified may not be the only criterion that affects a decision maker’s procurement decision.9 This is because voting systems fit into a larger context that cannot be separated from an assessment of fitness for purpose. The election official is responsible for the conduct of an election with integrity, and the equipment used in the election is only one part of that election. Yet, the qualification process evaluates voting systems, making just such a separation. This is not the fault of the qualification process—it is simply a consequence of the fact that any testing process must necessarily set bounds on the scope of the evaluation.

Of particular significance is the fact that various jurisdictions have long-established policies, procedures, and practices that govern the conduct of elections. Introduction of new technology into established practices almost always results in some degree of conflict and difficulty, even when the authorities seek to adjust existing practices to accommodate the new technology. Technology may work properly only if certain procedures are followed by poll workers, for example, and any given set of standards may—or may not—presume that these procedures are followed.

Box 4.2

To address some of the difficulties of technology assessment for state and local election officials, the Election Assistance Commission (EAC) has responsibility, with assistance from the National Institute of Standards and Technology (NIST), for developing voluntary standards that help to provide assurance that conforming voting systems are accurate, reliable, and dependable. Initially approved by the Federal Election Commission (FEC) in 1990, with a revised edition released on April 30, 2002, these standards are again being revised as this report goes to press. The FEC 2002 Voting Systems Standards (VSS) cover functional capabilities required of a voting system—what a voting system is required to do—but not election procedures or report formats. The functional capabilities include (1) a set applicable to all parts of the election process, including security, accuracy, integrity, system auditability, election management system, vote tabulation, ballot counters, telecommunications, and data retention; (2) prevoting capabilities, used to prepare the voting system for voting, such as ballot preparation; (3) voting capabilities, such as the casting of ballots at the polling place by voters; (4) postvoting capabilities that are relevant after all votes have been cast, such as obtaining reports for individual voting machines, polling places, and precincts; and (5) maintenance, transportation, and storage capabilities relevant to voting system equipment.

In addition, the FEC 2002 VSS cover hardware standards for performance, physical characteristics, and design; software standards intended to ensure that the overall objectives of accuracy, logical correctness, privacy, system integrity, and reliability are achieved; telecommunications standards that govern the capability to transmit and receive data electronically (e.g., via modem); security standards intended to achieve acceptable levels of integrity, reliability, and inviolability in conforming systems; standards for quality assurance such as documentation of the software development process; and standards for configuration management of voting systems. In April 2005, the EAC’s Technical Guidelines Development Committee released a first draft of technical guidelines that add to the FEC 2002 VSS in the areas of security and transparency of voting systems, usability of voting systems, and core requirements and testing. After a period of comment, it is expected that the EAC will promulgate the augmented Voluntary Voting System Guidelines (VVSG)—Version 1 as the first round of a new set of standards. A second round of review for all of the VVSG is expected to follow, resulting in an integrated and forward-looking version of the VVSG that should be available in FY 2006.

Moreover, the qualification process may not be adequate for a particular jurisdiction’s needs. For example, an election official from a jurisdiction with a long history of fraud and corruption may perceive security issues in a different light than an administrator from another jurisdiction without such a history. For the former, a given set of security standards may be inadequate, but for the latter, the same set may be more than adequate.

An important technical point is that the voting stations deployed in a particular jurisdiction may not be identical. A great deal of hard-earned experience in the IT world suggests that a station running software version A may work perfectly with other stations running software version A, and a station running software version B may work perfectly with other stations running software version B, but that a station running software version A is unreliable when it connects to a station running software version B. Or, a station may be secure when in stand-alone operation but much less secure when connected to a network.

Similar points apply to hardware and software qualification. The same body of experience suggests that especially when custom hardware is involved (as it is for nearly all voting systems), it is the total package—software of a specific version running on hardware of a specific model—that must be evaluated. And, a small change to a qualified piece of software can in principle render it noncompliant with the relevant standards.

For such reasons, election officials may wish to go beyond the qualification process in their assessments of vendor offerings. The discussion below focuses on two areas of particular significance: security and usability/accessibility.

4.2.2 Security

4.2.2.1 Perspectives on Security

A very important requirement of any information technology deployed in a critical application is that it be secure and reliable. Security involves the system’s resistance to deliberate acts of fraud that cause the system to record votes differently from what was intended by the voters who cast them.10 Thus, a voting system must ensure that ballots are counted as cast and that the resulting vote counts are accurate, despite malicious hacker attacks or insiders hired or planted to alter election results. (The system must also be reliable—that is, resistant to unplanned events that render it unavailable for normal use by voters; such events include power failures, unanticipated input sequences that might cause the system to freeze, accidentally introduced software bugs, and potential administrative mishaps or errors. These are not security issues per se and are not addressed further in this report.)

Moreover, in the electoral context, the public must have reason to believe in the security of the system, even in the face of those inclined to challenge it. That is, even if a system is in fact robust against such problems, perceptions of a system’s security depend on people’s experience with those systems, media exposure, and public debate. With new technologies being frequently deployed, election officials may face the task of assuring the public that the new systems are in fact secure and reliable, even if no problems arise immediately. At the same time, the consequences of inaccuracy and/or system failure place election officials on the front line of responsibility that could ultimately affect the outcome of any election. This point is particularly relevant given the discussion in Chapter 2 about a polarized electorate.

Security issues in voting are among the most difficult that arise in the development of secure systems for any application. Systems to manage financial transactions, for example, must also be highly secure, and much of the experience and knowledge needed to develop secure systems for financial applications is directly relevant to the development of secure systems for voting. But these applications differ from voting applications in at least two important ways.

First is the need to protect a voter’s right to cast a secret ballot. Developing an audit procedure (and the technology to support audits) is enormously more difficult when the transactions of an individual must not be traceable to that individual. (Consider, for example, the difficulties in reconciling accounts if it were by design impossible to associate an individual with the amount of a specific transaction.)

Second, under many circumstances, the value of security in financial systems can be quantified as just another cost-benefit trade-off. For those instances in which it is possible to estimate the likelihood of a particular kind of security breach, it is possible to compare the cost of securing that breach to the expected loss if the breach is not secured. Such a cost-benefit analysis is difficult for voting applications, because there is no commonly accepted metric by which one can quantify the “value” of a vote. Thus, an advocate of one position might argue that the relevant point of comparison for the security of voting systems should be the nuclear command-and-control system, while another might argue that commercial banking security is the appropriate comparison.
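The cost-benefit comparison described above can be sketched in a few lines. This is a minimal illustration, not a method taken from this report: the function names and every number are assumptions chosen only to make the arithmetic visible.

```python
# Illustrative only: all names and figures here are hypothetical assumptions.

def expected_loss(probability_per_year: float, loss_if_breached: float) -> float:
    """Expected annual loss from one kind of breach: likelihood times damage."""
    return probability_per_year * loss_if_breached


def worth_securing(probability_per_year: float,
                   loss_if_breached: float,
                   annual_cost_of_control: float) -> bool:
    """A control pays for itself when it costs less than the loss it is expected to avert."""
    return annual_cost_of_control < expected_loss(probability_per_year, loss_if_breached)


# Hypothetical financial case: a 2 percent yearly chance of a 5,000,000 loss.
loss = expected_loss(0.02, 5_000_000)
cheap_control = worth_securing(0.02, 5_000_000, 40_000)
costly_control = worth_securing(0.02, 5_000_000, 150_000)
```

For a voting system the same calculation stalls at the second argument: there is no commonly accepted figure to supply for `loss_if_breached`, which is why the comparison is hard to carry over.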

Also, election systems must declare a winner even when the margin of victory is minuscule. When the vote is close, a very small number of votes can sway the election one way or another. Thus, in closely contested races, an election fraudster must manipulate only a small number of votes in order to obtain the desired outcome, and small manipulations are almost invariably more difficult to detect than large ones.

From the perspective of the computer scientist, security is a particularly elusive goal. Except in very rare instances that are for practical purposes not relevant to complex systems (and electronic voting systems count as complex systems), it is impossible to achieve 100 percent security in a system. Even worse, it is impossible to specify in any precise way what it would mean for a system to be 99 percent or 90 percent secure.

To illustrate, system testing is a process that is used to identify defects in a system (e.g., security vulnerabilities, software bugs). A vulnerability or a bug is detected when there is evidence that indicates its presence. But because the conditions under which a complex system can operate are so varied, no reasonable amount of testing can prove that the system is free of vulnerabilities or bugs. Moreover, the fixing of a particular system vulnerability takes place in the context of a would-be attacker who is motivated to continuously explore a system for such vulnerabilities. This implies that system security must also be a continuous and ongoing process that searches for vulnerabilities proactively and fixes them immediately.

A key point about security is that a system is only as strong as its weakest link. System security is a holistic problem, in which technological, managerial, organizational, regulatory, economic, and social aspects interact,11 and the attacker’s search for vulnerabilities is not limited to technological vulnerabilities. The technological security provided to pre-World War II France by the Maginot Line was high—but German tanks circumvented the line. In an election context, it makes little sense to enhance security in particular areas (e.g., in the computer-related parts of the election system) if enormous vulnerabilities remain in the other parts of the system whose exploitation could be problematic. At the same time, security in particular areas has to be compared by asking how much damage an adversary can do with a given amount of effort and a given risk of discovery. That is, gaping security holes in one part of the system (e.g., the noncomputer part) may be of lesser concern than smaller security holes in another part of the system if the latter can be exploited on a large scale more easily and more anonymously.

Cybersecurity experience suggests that there is only one meaningful technique by which the operational security of a system can be assessed: an independent red team attack.12 The term refers to tests conducted by independent groups, often known as “red teams” or “tiger teams,” that probe the security of a system in order to exploit security flaws just as they would be uncovered by a committed attacker in an actual attack.13 Flaws are then reported to the party or parties who hired the red team. Vendors sometimes use red teams as a way of improving their products, while customers sometimes use red teams as a way of assessing the security present in a product they may buy or have bought. Conducted properly, a red team attack does whatever is necessary to compromise the security of a system, exploiting technological or procedural flaws in the system’s security posture or flaws in the human infrastructure in which the technology is embedded. (A technological flaw might be the use of a weak encryption algorithm. A procedural flaw might be a poll worker who can be bribed to take an improper action.) Red team attacks are also unpredictable, in contrast to scripted tests in which the system’s developer tests what it believes to be likely attacks.

As a general rule, many computer scientists are also skeptical of “security by obscurity,” a practice that involves hiding vulnerabilities rather than fixing them. The reason is that information about vulnerabilities, especially those of high-value systems, is enormously difficult to keep secret. Moreover, such vulnerabilities are often discoverable through the application of enough technical expertise and experimentation. Open discussion of vulnerabilities, argue these individuals, provides strong incentives for system owners to fix them or to configure their systems in such a way that hostile exploitation of the vulnerabilities is less (or not) harmful.14

For such a strategy to be meaningful, the source code of the system in question must be available for inspection, because it is the code actually running on the system that defines its behavior under all possible circumstances. Without access to source code, it would be essentially impossible to discover, for example, that the system is programmed to behave in one way until a specific sequence of keys is pressed with the right timing between key presses, at which time the system’s behavior shifts into an entirely different mode that allows access to and manipulation of the data contained within the system. Indeed, such practices are common among software developers, who often install such “back doors,” known as maintenance traps, to facilitate system maintenance and debugging. While traps are a convenience for system developers, they are also blatant security holes and as such should not be included in production versions of the software. Alas, the pressures of software development under deadline are such that they are often included in production versions anyway.

When approaching any computer security problem, the computer scientist’s perspective can be summarized as a worst-case perspective—if a vulnerability cannot be ruled out, it is necessarily of concern. Furthermore, the computer scientist argues, a wealth of experience suggests that even obscure vulnerabilities in a system can be and often are exploited to the detriment of the system owner.

Computer scientists also note that the use of computers in voting makes possible the commission of automated fraud. Throughout most of the history of voting, the magnitude of fraud was strongly dependent on the number of people or on the effort required to commit fraudulent acts such as stuffing ballot boxes—larger numbers of fraudulent votes required a larger number of people. However, when computers are involved, a small number of individuals—albeit technically sophisticated individuals with high degrees of access to the internals of these computers—become capable of committing fraud on a very large scale indeed. Furthermore, because the software of computer systems is intangible, the difficulty of detecting such attempts is greatly increased.

It is thus not surprising that these perspectives shape the way that computer scientists look at security issues in electronic voting systems. In the words of one computer scientist:

As a general rule, the burden and cost should be on advocates of a particular voting product to provide evidence to the panel that the product is safe, rather than on critics to prove to the panel that it is unsafe. In case of doubt, a voting system should be considered unsafe until proven safe, and election officials should refrain from certifying, purchasing, or deploying voting equipment until independent security reviewers are confident that the technology will function as desired.15

The perspective of the election official is quite different. From a public policy perspective, it is desirable for election officials to have open attitudes about election concerns raised by members of the public, to welcome skepticism as a way of reassuring the public about how elections are conducted, to treat every election as precious, and to strive to eliminate every possibility of error. Indeed, election officials are responsible for the safety and security of an election, and as a rule, they accept that the burden of assurance properly rests on their shoulders (Box 4.3).

Box 4.3

As a matter of public policy, many states have adopted legal frameworks to promote a high degree of scrutiny for documents and processes related to the operation of government. According to this freedom-of-information philosophy, information related to the operation of government must be available to the public unless specifically exempted by law, the essential notion being that the making of public policy should itself be public. Against this standard, every aspect of the election process, including records, procedures, and vote-counting mechanisms, ought to be subject to public inspection.

However, in practice, the convergence of several issues has attenuated the degree to which such inspection is possible. Vendors have asserted intellectual property rights in order to keep the source code of electronic voting systems out of public view (and most freedom-of-information laws specifically exempt proprietary information from disclosure), a point of controversy in the public debate. The short period available to election officials for declaring a winner means that the time available for public inspection and access is short. And, the political pressures from all sides in an election to know its outcome rapidly mean that election officials have strong incentives to avoid recounts that might delay the declaration of a winner.1

If election processes, and in particular source code, were available for inspection, critics of electronic voting systems could reasonably be expected to assume the burden of demonstrating that security problems exist. But because such information is not available, these critics become “outsiders” to the election process and thus must use the tools available to outsiders (public discussion of potential vulnerabilities, close scrutiny of election events, and media attention) to draw attention to the issues they raise.

But in practice, resource constraints, time pressures, the lack of administrative control, and simple mistakes make the normative goals described in the previous paragraph difficult if not impossible to achieve. How election officials actually behave ranges from idealistic to pragmatic (and in some—hopefully rare—cases, politically expedient or partisan as well).

There is also the point that the victors in an election are, by definition, transient. The preservation of democracy has historically depended much more on the integrity of elections taken over time than it does on the outcome of any single election. In the more than 200-year history of the nation, there have been hundreds of thousands of electoral contests, and despite more than occasional fraud or irregularity in elections, the democracy endures, at least in part because election officials have taken measures to fix the weaknesses that allowed those problems to occur.

Election officials also have multiple goals. Sharon Priest, once secretary of state for Arkansas and a former president of the National Association of Secretaries of State, notes that most election officials are necessarily as concerned with affordability, system usability, turnout, and compliance with the federal, state, and local laws that govern elections as they are with security, which suggests that security is not the sole or primary issue for them, but rather is one of several equally important issues.

Indeed, election officials have learned over the years that misfeasance is typically a greater risk than malfeasance. That is, election workers routinely make mistakes and technologies routinely fail without obvious partisan bias. Ballots are lost, procedures are not followed, and improvised solutions are put into place to respond to pressures of the moment on Election Day. Although the impacts of misfeasance are likely to be more or less random, they still account for the majority of obvious problems that election officials must address with limited resources. And, as a result, administrators have generally paid more attention to improving the procedures that have led to such problems than to improving technology.

From the point of voter registration to the moment of winner certification, there are many opportunities for something to go wrong—both deliberately and accidentally—that can potentially affect an election outcome. As with all public officials, election officials do not have the resources to deal with all problems, and they necessarily leave some unaddressed. Within the constraints of their limited resources, they must set priorities—and their perceptions of the likelihood of various problems play an important role in setting those priorities. If it can be shown that a set of events has actually affected the outcome or tallies of an election, it is inevitable that an administrator will believe the likelihood of that kind of problem is greater than the likelihood of other sets of events that have not yet affected outcomes or tallies.

While political loyalties can and do protect the tenure of some election officials, other election officials realize they can lose their jobs if an election is not carried off correctly. Elections still must be decided, even when races are close. Close races increase the likelihood of recounts, and recounts dramatically increase the likelihood of vulnerabilities being exposed. For understandable reasons, many election officials would prefer to avoid such careful scrutiny.

Consider how these different perspectives play out in the consideration of election fraud. Election fraud, or the appearance of fraud or impropriety, can undermine public confidence in elections. But, of course, the nondetection of fraud, whether in traditional or electronic voting systems, can mean either that there has been no fraud or that the fraud was successfully concealed, and there is no a priori way of determining which of these is true. That is, although some statistical techniques can suggest that fraud may have been committed,16 these techniques are based largely on historical data, and their indications do not come anywhere near a legal standard for asserting that fraud has occurred. In short, no one knows the baseline level of fraud in elections, regardless of what technologies have been used,17 and because there are many impediments to conducting recounts (especially in high-profile races),18 it is unlikely that fraud, if it exists, will be discovered.

Election officials and legislators tend to respond to fraud cases that have come to light during their tenure. By this standard, some election officials are skeptical of the claim that electronic voting systems without paper trails are less secure than nonelectronic systems, partly because most proven instances of election fraud to date have involved nonelectronic voting systems.19 And, in response to the possibility of fraud, many election officials have worked to improve procedures and organization that enhance the overall security posture of elections.

On the other hand, electronic voting systems have not been in use for very long, and so it may simply be that election irregularities and fraud associated with these systems have not yet come to light. By contrast, computer scientists see myriad possibilities for fraud, and because there is no way to rule out those possibilities or to bring them to light, they tend to behave as though such possibilities must be taken for granted. Moreover, they are concerned that the use of electronic technology enables the commission of fraud in ways much more subtle than in the past and that these technology-enabled frauds may be much more difficult to detect.

16 See, for example, Jonathan N. Wand et al., “The Butterfly Did It: The Aberrant Vote for Buchanan in Palm Beach County, Florida,” American Political Science Review 95(4): 793-810, 2001.

17 See, for example, Fabrice Lehoucq, “Electoral Fraud: Causes, Types, and Consequences,” Annual Reviews of Political Science 6:233-256, 2003; Larry Sabato and Glenn Simpson, Dirty Little Secrets: The Persistence of Corruption in American Politics, New York, N.Y.: Random House/Times Books, 1996; John Fund, Stealing Elections: How Voter Fraud Threatens Our Democracy, San Francisco, Calif.: Encounter Books, 2004.

18 Such impediments include the high cost of recounts and the fact that a winning candidate is virtually certain to oppose a recount using any legal mechanism available, and there are many such mechanisms.

19 Dozens of problems with electronic voting systems have been documented, and allegations of fraud involving electronic voting have appeared in the form of signed affidavits. Testifying before the U.S. House of Representatives Committee on House Administration, July 7, 2004, Michael Shamos reported that since 1852, the New York Times has published over 4,000 articles detailing numerous methods of altering the results of elections through physical manipulation of ballots (available at http://euro.ecom.cmu.edu/people/faculty/mshamos/ShamosTestimony.htm).

Whereas computer scientists often compare what they have today with what could be in principle, administrators tend to compare what they have today with what they had yesterday. Computer scientists will presume a vulnerability is significant until shown otherwise, but election officials will presume that the integrity of an election has not been breached until compelling evidence is produced to the contrary. This difference in perspective largely accounts for the tendency of some election officials to blame electronic voting skeptics for scaring the public about security issues and for the tendency of some electronic voting skeptics to say that election officials have their heads in the sand.

As a baseline for comparison purposes, consider the security of a voting system based on hand-counted paper ballots. Such a system is manifestly subject to fraud if the chain of custody is not well defined or maintained, as the expression “stuffing the ballot box” indicates. Fraudulent votes can be introduced through the counterfeiting and subsequent marking of ballot documents, and while there are techniques that can be used to authenticate a document as legitimate, they all require that ballot documents be checked one by one. All else being equal, manual (re)counting of ballot documents is relatively straightforward when the number of voters involved is small, but it becomes more prone to error when hundreds of thousands of ballots are being recounted.

It is helpful to categorize security questions according to the timeline of a system’s use.20 First, a system (including all necessary hardware and software) should be assessed for its security. Second, if the system’s security is found adequate, the assessed system must be propagated to all the sites where it will be used. That is, the physical units that voters actually use should be identical to the system that was assessed. A third set of security issues arises while the systems are being operated by the voters. The fourth and final set of issues arises after the polls close and the results of each unit are passed to the parties responsible for vote tabulation.

4.2.2.2 Assessing the Security of a System Prior to Deployment

It is broadly accepted that independent testing and evaluation are an essential component of assessing the security of a system, and at this writing, the EAC is in the process of establishing Voluntary Voting System Guidelines (VVSG) in the area of security. Box 4.4 describes some of the issues that an independent laboratory might consider in such an assessment.

Security vulnerabilities introduced into an electronic voting system prior to its deployment are the most serious in terms of their potential impact on the outcomes of elections.21 The reason is that vulnerabilities built into the design of a system are propagated to every individual unit. Thus, the design and implementation phase of system development is a point of high leverage for individuals seeking to compromise election security.

20 Testing issues are discussed in Douglas Jones, Testing Voting Systems, available at http://www.cs.uiowa.edu/~jones/voting/testing.shtml.

Box 4.4

An assessment of the security of a voting system would involve independent technical experts with backgrounds in computer security and the ability to draw on people with deep knowledge of election practices and procedures. The assessment team should control the process, and it should have full access to all system documentation, software, source code, change logs, manuals, procedures, training documents, all material provided to any other testing or review process, and working physical examples of the voting system in question (hardware and software). In addition, the assessment team must have adequate resources and time to complete its assessment, and it must have the independence to make its findings known without intervention on the vendor’s part.

Assessments of this nature include but are not limited to finding specific software problems. They are intended to examine the system holistically to determine the extent to which it will be capable of resisting attempts to compromise its security (for example, how resistant is the system to the bribing of a single insider?). Collectively, the group responsible for assessing security might examine:

- Hardware

- Software

- Procedures

SOURCE: Drawn in part from Leadership Conference on Civil Rights and the Brennan Center for Justice, New York University, Recommendations for Improving Reliability of Direct Recording Electronic Voting Systems, June 2004. Available at http://www.civilrights.org/issues/voting/lccr_brennan_report.pdf.

Qualification of a system according to the Federal Election Commission’s 2002 Voting Systems Standards provides some degree of assurance to a purchaser that a few security measures have been taken. Purchasers wishing to go beyond that degree of assurance might ask additional questions.22

4-10. To what extent and in what ways has a realistic risk analysis been part of the acquisition process? A risk analysis includes a threat model describing the various ways adversaries might exploit vulnerabilities in a system; a description of possible adversaries, their level of motivation and sophistication, and what resources they might bring to bear; an assessment of the likelihood of exploitation of various vulnerabilities and an estimate of the harm that might be done should exploitation occur; and a consideration of the possibility that an attack could be mounted without detection. For example, a postulated attack that involves the ability to improperly modify the code that will run on deployed voting stations presents security challenges that are very different from one that does not. Indeed, an attack involving insider access is much more serious, because of the possibility that the actions of a small number of individuals could have security ramifications in every deployment location (without such access a much larger degree of effort would be needed to achieve large-scale compromise).
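The elements of a risk analysis listed above (adversary, likelihood, harm, and chance of going undetected) can be written down as a small data structure. The following is only a sketch under stated assumptions: the `Threat` class, the multiplicative scoring rule, and all numbers are hypothetical and are not drawn from this report or from any standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Threat:
    """One entry in a threat model. Every field value used below is a hypothetical estimate."""
    name: str
    adversary: str
    likelihood: float    # estimated chance of an attempt, 0..1
    harm: float          # estimated damage if it succeeds, in arbitrary units
    p_undetected: float  # estimated chance the attack is never noticed, 0..1

    def score(self) -> float:
        # Assumed scoring rule: weight harm by likelihood and by the chance of escaping detection.
        return self.likelihood * self.harm * self.p_undetected


def rank(threats):
    """Order threats from most to least concerning under the assumed score."""
    return sorted(threats, key=lambda t: t.score(), reverse=True)


threats = [
    Threat("ballot box stuffing at one precinct", "local operative", 0.05, 10.0, 0.3),
    Threat("modified code on deployed stations", "insider with code access", 0.01, 1000.0, 0.9),
]
ranked = rank(threats)
```

Under these made-up figures the insider attack ranks first even though it is assumed to be less likely, because its harm reaches every deployed unit and it is harder to detect. A different scoring rule or different estimates could reverse the order, which is one reason officials may want to build their own threat model.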

In practice, a risk analysis must be undertaken by both vendors and election officials. A vendor must undertake a risk analysis in order to know what security properties a system must have. Development and design of the full system are not possible until the risk analysis has been performed. Though election officials—in their role as purchasers or lessors—are not responsible for system development or design, they too must undertake a risk analysis to determine if their own concerns about security are reflected in the vendor’s analysis. For example, if the threats of concern to election officials are not reflected in the threat model used to analyze risk, the risk analysis is not likely to provide useful guidance to those officials. Also, election officials, with input from independent security specialists and the general public, may wish to formulate the threat models of most concern to them independently of the vendors’ postulated threat models so as to avoid being captured by vendor biases.

lems Highlights Need for Heightened Standards and Testing,” undated white paper contributed to the committee, available at http://www7.nationalacademies.org/cstb/project_evoting_mulligan.pdf. The particular problem cited (the lack of guidelines relevant to human factors) was addressed explicitly in the proposed EAC revisions to the Federal Election Commission’s 2002 Voting Systems Standards. The Technical Guidelines Development Committee of NIST was specifically chartered to address such shortcomings. But the pace at which the standards-setting process works remains an important issue. It is reasonable to anticipate that over the long run, the relevant guidelines will become more comprehensive. Nevertheless, at any given moment in time, there may well be important outstanding issues that have not been addressed in the standards.

4-11. How adversarial has the security assessment process been? Experience in the cybersecurity world has shown that adversarial techniques are generally the best for assessing security. That is, security should be assessed from the standpoint of an outsider trying to find exploitable flaws in it rather than an insider checking off a list of “good security measures.” Indeed, a system may conform to the best of checklists and still have gaping security holes.

The best example of an adversarial assessment is the use of independent red teams, or “tiger teams,” as described earlier.23 Short of a red team attack, an independent adversarial examination of the “internals” of a system (physical construction in the case of hardware, actual code in the case of software) will provide some insight into its ability to resist attack, since it is likely to uncover flaws that an adversary might use. Moreover, in the absence of such an examination, it is not possible for any amount of testing to eliminate the possibility that the system will demonstrate some improper behavior under some set of circumstances. That is, testing may be a sufficient basis for concluding that a system does meet certain requirements (e.g., produces certain outputs when given certain inputs), but it cannot show that the system will not do something else in addition that would be undesirable.24 Only by inspecting the internals does one have a chance of detecting the potential for inappropriate behavior when the system is put into use.
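A toy model can make the limits of input/output testing concrete. The sketch below is a deliberately contrived illustration in the spirit of the hidden-key-sequence example: the `ToyVotingUnit` class, its key names, and its trigger are all hypothetical, it models no real voting system, and it ignores the timing element for brevity.

```python
class ToyVotingUnit:
    """Toy model only. A hidden key sequence switches on vote flipping."""
    _TRIGGER = ("up", "up", "down", "left")  # hypothetical secret sequence

    def __init__(self):
        self.tally = {"A": 0, "B": 0}
        self._recent_keys = []
        self._rigged = False
        self._a_seen = 0

    def press(self, key):
        # Remember only the last few key presses and compare them with the trigger.
        self._recent_keys = (self._recent_keys + [key])[-len(self._TRIGGER):]
        if tuple(self._recent_keys) == self._TRIGGER:
            self._rigged = True

    def cast(self, candidate):
        if candidate == "A":
            self._a_seen += 1
            # Once triggered, every 10th vote for A is silently recorded for B.
            if self._rigged and self._a_seen % 10 == 0:
                candidate = "B"
        self.tally[candidate] += 1


def black_box_test(unit, votes_a=50, votes_b=50):
    """Feed known votes and check the totals, as an input/output test would."""
    for _ in range(votes_a):
        unit.cast("A")
    for _ in range(votes_b):
        unit.cast("B")
    return unit.tally == {"A": votes_a, "B": votes_b}


passes_test = black_box_test(ToyVotingUnit())

rigged = ToyVotingUnit()
for key in ("up", "up", "down", "left"):
    rigged.press(key)
rigged_passes = black_box_test(rigged)
```

The untouched unit passes, so a tester who never happens to enter the trigger sees only correct totals. Reading the `cast` method, by contrast, exposes the flaw immediately, which is the case for inspecting internals.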

4-12. How has the system’s ability to protect ballot secrecy been assessed? The same kinds of adversarial techniques used to assess security are also useful for assessing the ability of a system to maintain ballot secrecy. Box 4.5 illustrates some of the issues that might come up in such an assessment.

23 An example of red team analysis is the “Trusted Agent” report on Diebold’s AccuVoteTS Voting System, prepared by RABA Technologies LLC in January 2004 and available at www.raba.com/press/TA_Report_AccuVote.pdf. The red team analysis found that the Diebold system, which Maryland had procured for use in primaries and the general election, contained “considerable security risks that [could] cause moderate to severe disruption in an election.”

24 A simple example will illustrate the problem in principle. Using the logic described in Section 4.2.2 for maintenance traps, a system could be designed to change every 10th vote for Candidate A to Candidate B when a specific set of keys on the display is pressed in a specific sequence with a minimum time in between key presses. This particular example is contrived, as it would require quite a bit of skullduggery and the commission of a number of felony offenses on the part of a vendor, but the fact remains that no plausible testing process will ever uncover such a problem.

Box 4.5 Maintaining the secrecy of a voter’s ballot is an important public policy consideration that is specified in state law. Known as “confidentiality” among computer scientists, the problem amounts to one of keeping the voter’s ballot private under all circumstances. In particular, these circumstances include voter collusion (as might be the case for a voter trying to sell his or her vote); observations of voters and voter behavior in the polling place being correlated with voting station records; and corrupt insiders who might have access to voting station records. Put differently and more generally, computer scientists believe that a system properly designed to provide ballot secrecy must be able to defeat attempts to compromise the secrecy of an individual’s ballot under all possible adverse circumstances. In the absence of a specific system design, it is impossible to anticipate all possible threats to secrecy in anything but the most general terms. The following examples are intended to suggest a range of possible threats against which a system must be designed:


4.2.2.3 Deploying the Assessed System to Polling Stations

Qualification and certification testing of a voting system are only the first steps in the process of assuring end-to-end security. Even a voting system that has been qualified as secure, reliable, and easy to use is useless if it is not the system that voters use on Election Day. That is, the qualified and certified system must be deployed to polling stations for actual use on Election Day.

Acceptance testing is one element in providing such assurance. According to the Federal Election Commission’s 2002 Voting Systems Standards, one purpose of acceptance tests is to ensure that the units delivered to local election officials conform to the system characteristics specified in the procurement documentation as well as those demonstrated in the qualification and certification tests. To help ensure that qualified voting systems are used consistently throughout a state, ITA labs can file digital signatures of qualified software with the software library of the National Institute of Standards and Technology (NIST).25
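The fingerprint comparison behind such a software library can be sketched as follows. This is a simplified illustration, not the procedure used by NIST or the ITAs: it uses a plain SHA-256 digest from Python's standard `hashlib` module as the fingerprint, whereas a full digital signature would also bind that digest to a signer's key. The function names and sample byte strings are hypothetical.

```python
import hashlib


def fingerprint(software_image: bytes) -> str:
    """Return a SHA-256 digest of the software image as a hex string."""
    return hashlib.sha256(software_image).hexdigest()


def matches_reference(installed_image: bytes, reference_fingerprint: str) -> bool:
    """True only if the installed image is byte-for-byte what was fingerprinted."""
    return fingerprint(installed_image) == reference_fingerprint


# Hypothetical stand-ins for a qualified build and two installed copies.
reference = fingerprint(b"total = 3 + 2")
unchanged = matches_reference(b"total = 3 + 2", reference)
reordered = matches_reference(b"total = 2 + 3", reference)
```

Even a reordering that leaves the program's result unchanged yields a different fingerprint, so the check detects any difference but says nothing about whether the difference matters. The check is also only as trustworthy as the tool that reads the installed image.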

Acceptance testing is undertaken in the absence of a specific ballot configuration. Logic and accuracy (L&A) testing is the testing of voting systems configured with the ballot that will be used in the actual election. In principle, L&A testing serves two main functions—to account for any changes to a unit’s configuration between the point of acceptance and Election Day, and to ensure that the unit performs properly with the actual ballot to be used. Thus, L&A testing can be usefully applied to every unit that voters will use in the election, although the expense of testing generally allows only a fraction of those units to be tested. When units are known to be identically configured, only one of them needs to be thoroughly tested and the rest tested simply to ensure that no failure has occurred.
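The core of an L&A check can be sketched as feeding a unit a deck of test ballots whose correct totals are known in advance. This is a minimal sketch under stated assumptions: `count_ballot` is a hypothetical stand-in for whatever the configured unit records for a given marked ballot, and the candidate names and deck are invented.

```python
from collections import Counter


def run_test_deck(count_ballot, test_deck):
    """Cast every ballot in the deck and return the totals the unit reports."""
    tally = Counter()
    for ballot in test_deck:
        tally[count_ballot(ballot)] += 1
    return tally


def logic_and_accuracy_ok(count_ballot, test_deck, expected):
    """Pass only if the reported totals equal the totals known in advance."""
    return run_test_deck(count_ballot, test_deck) == Counter(expected)


# Hypothetical deck: each candidate gets a different number of votes.
deck = ["Smith"] * 4 + ["Jones"] * 2
expected = {"Smith": 4, "Jones": 2}


def correct_unit(ballot):
    return ballot


def misconfigured_unit(ballot):
    # Ballot positions swapped by a configuration error.
    return {"Smith": "Jones", "Jones": "Smith"}[ballot]


good = logic_and_accuracy_ok(correct_unit, deck, expected)
bad = logic_and_accuracy_ok(misconfigured_unit, deck, expected)
```

Giving each candidate a distinct count is a deliberate choice in this sketch: if both received the same number of test votes, a swapped ballot configuration would produce identical totals and pass unnoticed.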

These two types of testing motivate several additional questions:

25 A digital signature is a unique, algorithmically generated fingerprint of any digital object (such as a software module). By comparing signatures, one can easily determine if two objects are identical. NIST maintains a library of certified code to which ITAs can submit qualified election software versions, with a digital signature that enables states and local election officials to check whether individual machines utilize exactly the same software. But even the smallest change in software will change the signature (for example, the code for “3 + 2” will have a very different signature from the code for “2 + 3”). Practical difficulties of performing such a check are addressed in Footnote 28.

4-13. How is the security of voting stations maintained to ensure that no difficult-to-detect tampering can occur between receipt from the vendor and use in the election? In theory, this is a straightforward matter: put the voting stations in a locked building with no remote access to them and ensure that no one has access until they are removed for use on Election Day. But there are several factors that complicate this simple picture. For example:

- Vendors may need access to load ballots to individual voting stations, a task that must be performed before Election Day.26 However, the steps that must be taken to load ballots may, or may not, resemble those needed to change software. How will those supervising the loading of ballots be certain that no other changes are being made to the voting stations?

- Third parties may masquerade as election officials or vendors and demand access to the voting stations in storage. Or moles (individuals with ostensibly authorized access but who in fact have been compromised to work in a partisan manner) may be present in the offices of election officials. What procedures are in place to guard against changes introduced by these insiders (for example, a rule that requires that access to systems in storage is never associated with only one or two persons)?27 How rigorous are the procedures for ensuring that only properly authorized parties have access to the storage facilities?

- Early voting, an increasingly common practice that entails taking voting stations out of storage before Election Day, further complicates the achievement of security and chain-of-custody goals.

4-14. What steps have been taken (either technically or procedurally) to limit the damage an attacker might be able to inflict? As a practical matter, the compromise of one voting unit in one precinct is obviously less harmful than the compromise of all of the units in the entire jurisdiction. One approach to limit possible damage is to ensure that modifications or updates cannot be made en masse, that is, through one action updating all units. Thus, a large-scale compromise would entail significantly more effort for the attacker than a small-scale one. Of course, this approach makes it much more inconvenient and costly to deploy updates when they are necessary.

26 In principle, election staff could do so as well. But given the prominent role that vendors have often been given in providing supporting services (Section 6.7), it is entirely possible that vendors may have this responsibility.

27 The insufficiency of a two-person rule has been noted in the finance industry, in which audit procedures typically call for involving three or more individuals. The reason is that if one party in a two-person conspiracy breaks the secrecy pact, his or her identity is known with certainty to the other party. However, if the conspiracy involves three or more individuals, the identity of the party breaking the secrecy pact cannot be inferred with certainty by any of the others. In an election context, such a procedure might involve representatives from two parties jointly picking a third.

4-15. How can election officials be sure that the voting systems in use on Election Day are in fact running the software that was qualified/certified? For example, a vendor may uncover a potentially problematic issue in software that has been previously certified and address the issue in a program patch. Strictly speaking, any change to a program requires recertification, and some state laws require recertification after every software change, no matter how small. But because full recertification generally takes a long time (in principle, as long as the initial certification), there are strong incentives for the vendor to argue that the change can be administratively approved.

The question then arises whether the change involved is small enough to be addressed administratively. In the absence of specific criteria, vendors are in the best position to know about the scope and significance of any change. On the other hand, from the point of view of an outsider without such privileged knowledge, the nature of programming is such that it is essentially impossible to assure that changes made in one part of the program will have no effects on other parts of the program. Without inspecting the code involved (and the other parts of the program with which it interacts), there is no way to determine if a change is significant or not. Some evidence may be forthcoming if the original program is designed in a modular fashion with well-documented interfaces, the behavior of existing modules is understood, and the changes are confined to one or a few modules. But the mere assertion of a claim does not suffice for most outsiders.

If an administrative certification is not possible, election officials in practice face an operational choice between running certified code that may have problems and running uncertified code that has been fixed. Thus, some election officials may still look for ways to avoid the recertification step, particularly if they know that a smooth election process depends on a last-minute fix.

A related issue is that despite precautions that have been taken, software may have been compromised through the introduction of an unauthorized patch. Beyond vendor assurances, what technical means are available to demonstrate that such compromise has not taken place? For example, a digital signature of the software running on any given station can be taken for comparison with a known version, though this is difficult in practice today.28
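The comparison described above can be sketched as follows. This is an illustration only: it uses a SHA-256 hash as the "fingerprint" (an assumption, since the text does not name an algorithm), the function names are hypothetical, and it assumes that the bytes of the installed software can be read off the station reliably, which is precisely the part that is difficult in practice.

```python
import hashlib

def fingerprint(software: bytes) -> str:
    """Return a SHA-256 hex digest to serve as the software's fingerprint."""
    return hashlib.sha256(software).hexdigest()

def matches_reference(installed: bytes, reference_digest: str) -> bool:
    """Check installed software against a digest filed for the certified version."""
    return fingerprint(installed) == reference_digest

# Stand-ins for software images (hypothetical): a certified version and a
# version carrying a small, functionally equivalent change.
certified = b"total = 3 + 2"
patched = b"total = 2 + 3"

# The digest that would be filed in a reference library for later comparison.
reference = fingerprint(certified)

print(matches_reference(certified, reference))  # True: identical bytes
print(matches_reference(patched, reference))    # False: any change alters the digest
```

A mismatch shows only that the software differs from the reference version. It does not indicate whether the difference is benign or malicious, and a match is meaningful only if the bytes being checked are truly the ones the station runs.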

A second related issue is that the source code for software running on an electronic voting system may not be fully available.29 Some vendors of electronic voting systems may build their systems using the foundation of a (proprietary) commercially available operating system. Device drivers (programs that manage the devices attached to a computer) may also be available in object code but not in source code. (As a rule, systems that are based on the use of such commercial off-the-shelf components are generally less expensive and faster to develop than systems that are custom-designed and implemented from the ground up.) In this case, there is a strong sense in which the certification or qualification of voting system software is necessarily conditional (perhaps implicitly), because it presumes that the operating system or device drivers, or interactions between the voting application and the operating system or device drivers, do nothing strange or unexpected or malicious. Furthermore, vendors or jurisdictions managing relatively small contracts will not generally have enough leverage with the provider of operating systems or device drivers to obtain source code for inspection.

4.2.2.4 Using the Deployed Units on Election Day

In general, the issues on Election Day are more likely to be associated with reliability than with security. That is, if rogue voters are able to compromise the security of the voting systems they use, it will almost certainly be through the Election Day exploitation of a pre-existing security vulnerability. Such situations are covered under Sections 4.2.2.2 and 4.2.2.3.

The one exception is what might be called a denial-of-service attack against voting systems in use. For example, Party A might try to deny service in an area with large numbers of people from Party B, thus reducing the turnout and vote count for Party B. Lack of availability of even a few voting stations for even a short amount of time during peak hours can result in very long lines for voting, leading to voter discouragement and an effectively lower turnout.30
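The effect of a brief outage on waiting lines can be illustrated with a simple deterministic queue. The sketch below is not a model of any real polling place: the arrival rate, the number of stations, the time per voter, and the outage length are all hypothetical numbers chosen for illustration, and the function name is an assumption.

```python
from fractions import Fraction

def simulate_line(arrivals_per_min, stations_by_minute, minutes_per_voter):
    """Return the number of voters waiting at the end of each minute.

    arrivals_per_min:   voters arriving each minute (assumed constant).
    stations_by_minute: number of working stations in each minute.
    minutes_per_voter:  time one voter occupies a station.
    """
    backlog = Fraction(0)
    history = []
    for stations in stations_by_minute:
        capacity = Fraction(stations, minutes_per_voter)  # voters served per minute
        backlog = max(Fraction(0), backlog + arrivals_per_min - capacity)
        history.append(backlog)
    return history

# Hypothetical peak period of 120 minutes: 2 voters arrive per minute, and
# 10 stations at 5 minutes per voter can serve exactly 2 voters per minute.
normal = [10] * 120
# Same period, but 4 of the 10 stations are unavailable for 30 minutes.
outage = [10] * 30 + [6] * 30 + [10] * 60

print(max(simulate_line(2, normal, 5)))   # 0: no line forms
print(simulate_line(2, outage, 5)[-1])    # 24: line still present an hour after recovery
```

Under these assumptions the polling place has no spare capacity at peak, so the line created by a 30-minute partial outage does not clear after the stations return. At 2 voters served per minute, each later arrival waits about 12 minutes until demand falls off.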

From the standpoint of assuring election integrity, Election Day is also an opportunity to collect data that can be used later to audit the election and to document anomalies that might point to systemic problems that need remediation in the future.

4-16. What information must be collected on Election Day (and in what formats) to ensure that subsequent audits, recounts, or forensic analysis can take place if they are necessary? As noted in Chapter 1, elections may be subject to post-Election Day challenge. To resolve such challenges after the election, information about what happened on Election Day must be available. Challenges to the vote as recorded and communicated by the voting station and the tabulation equipment might arise from a sufficient number of individual voters wanting evidence of how their voting intent was interpreted, or from systemic difficulties due to bad system design or fraud. Should an audit become necessary (because irregularities are charged or because a state’s best practices mandate random audits), auditors need data and records to examine. It is therefore essential that a locality collect such data before and during the election so that appropriate records are available. An example of data that might support an audit is exit poll data, which might be collected by the state rather than a media organization, for later comparison to actual totals.

This point is the primary motivator of various demands for paper trails in electronic voting systems—the concern expressed by many advocates of paper trails is that a DRE system without such a capability is unaccountable, and that such systems give election officials who are challenged the stark choice between accepting the numbers proffered by the system and redoing the election.

Box 4.6 provides some examples of data that are arguably relevant for forensic analysis.

4-17. How are anomalous incidents with voting systems reported and documented? Given that in-use operations are the ultimate test of voting systems, it is important to capture as much information as possible about how voting systems perform in actual use. What incident-reporting structure will guarantee that problems are reported promptly to vendors, to states, to other local election jurisdictions within the state using the same systems, and to standards-setting organizations? How can knowledge of these anomalies be used to improve voting system performance?

For example, Florida certified an electronic voting system despite the fact that the voting machines took a long time to boot up and machines had to be opened in sequence. It took between 90 minutes and 4 hours to open a precinct. Therefore, the machines could not be turned on the same day that an election took place. The certification standards had not ad-

Box 4.6

1. Data to collect before the election:

a. Local voter registration numbers and lists. [P,S]
b. Inventories of equipment and ballots upon acceptance (e.g., date of purchase, source, maintenance records, vendors, serial numbers, retain code versions in offsite escrow). [S]
c. Seal numbers for ballots and machines and storage locations for voting equipment. [S]
d. A record of personnel with access to equipment, including detail such as when and where. [S]
e. Changes made to the equipment (e.g., oiling, charging, battery changes, memory upgrades, putting in a module, checking odometers, code drop). [S,P]
f. A list of the times and modes by which voting equipment is transported (including license plate number and driver for chain-of-custody purposes). [S]
g. Inventory of equipment and materials before and after transportation. [S]
h. Inventory of equipment and materials before voting begins. [S]
i. Pre-election equipment testing data, including the number of systems tested and problems observed during testing. [S,P]
j. Number of training sessions held for poll workers, and a roster of poll workers attending each session. [P]
k. Copies of sample ballots and voter information materials. [P]

When electronic voting systems are involved:

These data help assure that ballots, equipment, and polling places are usable and also make it possible to deal with problems and questions that may arise later.

2. Data to collect during the election:

l. Number of poll workers at each poll, including the times at which poll workers arrive and leave. [S]
m. Signatures (not check marks) of those present. [S]
n. Signatures for inventory received election night, both in precincts and when inventory is returned to the central office. [S]
o. Tally at precinct and time it was conducted. [S,P]
p. The number of poll and early voting sites and any rents required to use these locations. The number of workers in each poll or early voting site, their rate of pay, and their required number of hours of work. [P]
q. If “parallel testing” is conducted on Election Day, the number of voting machines tested, the way in which they were selected for testing, and the results of those tests. [S,P]
r. Exact time when each poll site opened. [P] (Maximum waiting times at each poll site.)
s. The number of poll sites that experienced significant problems, an explanation of the problems experienced, and a description of how these issues were resolved. [P,S] The number of individuals turned away from the polls and the reasons they were turned away.

When electronic voting systems are involved: