4

MS&A and Decision Making

All MS&A activities within the defense establishment must eventually be responsive (and appropriately linked) to DoD decision makers and decision-making processes. In its deliberations, the committee considered how best to ensure the responsiveness of MS&A to the needs of those decision makers. The broader mission space now facing DoD implies that decision makers will rely increasingly on MS&A, and improving this interface is critical in order to profit from the recommendations of Chapter 3. In particular, this chapter discusses how to better match DoD’s MS&A models and activities with the specific requirements of the problem; how to improve the interactions between the MS&A team and the decision makers; how to match MS&A activities and products with the styles of the decision makers; how to better quantify and manage uncertainties; and how to document and communicate the results of MS&A.

IDENTIFICATION OF THE DECISION PROBLEM AND SELECTION OF AN MS&A APPROACH

Defense decisions come in many varieties, including those that affect military strategy, technology acquisitions, and personnel management, as well as real-time decisions on the battlefield and its training equivalents. Clearly, different decision problems will require different MS&A approaches, so that selecting an approach that matches the needs of the problem to the needs of decision makers is essential.

Matching MS&A with the needs of decision makers should be a deliberate and interactive activity but not necessarily an extensive and time-consuming one. It should be taken seriously and involve direct interactions between the decision makers, the MS&A team, and eventual users of the MS&A products. According to von Winterfeldt and Edwards (1986), three steps can be distinguished in this activity:

- Identifying the problem. This step addresses a number of questions: What is the nature of the problem? Who is the decision maker? What decisions are to be made? What groups are affected by the decision? At this stage, simple lists of alternatives, objectives, events, and rough formal relations among them are created. To sharpen the sense of conclusions that might be salient and nontrivial, it is often useful to list potential conclusions and to imagine contradictory conclusions, so as to avoid biases and highlight difficult or controversial issues.

- Selecting an MS&A approach. In this step, the problem identified in the preceding step needs to be matched with an MS&A approach: for example, simple or relatively complex modeling, deterministic or probabilistic simulation, one-sided analysis or a game-theoretic analysis, optimization or exploratory analysis, rational-analytic decision analysis versus subjective portfolio balancing, and fixed-model or system-dynamics model. To do this matching, the MS&A team should ask: What are the main problem complexities that the MS&A activities are intended to address? What is the purpose of the MS&A activity? Which MS&A approaches have been previously used successfully for this type of problem?

- Developing a detailed MS&A approach and architecture. This step involves the more familiar territory of fleshing out the specific MS&A models, simulations, and analysis tools. Tools like influence diagrams, decision trees, and flow charts are used for this purpose, depending on the MS&A approach chosen. The committee suspects that the bulk of time, effort, and resources devoted to many, if not most, defense-related MS&A activities has traditionally focused on this last step, but it stresses that without proper attention paid to the first two steps, these resources might well be misplaced.

Although these steps have been developed and proven successful in the context of large, complex, and strategic decisions requiring analytic support, a streamlined version is likely to be useful even for short-term, quick-and-dirty activities as well as for preparing MS&A for training and exercise in tactical contexts.

These three steps may take a few days to several weeks, or longer, if trust must be gained from scratch. Previous successful uses of MS&A in similar problem contexts can shorten this time. For complex strategic decisions, for which no precedent exists, iteration between the MS&A team and the decision makers at each step can produce insights for restructuring and simplification.

Little research exists to guide MS&A practitioners in these steps. It is fair to say that the first two steps are more of an art than a science, while some research support has been developed for the third step in specific MS&A subdisciplines. For example, guidelines for the third step have been developed for studying causal dynamic systems (Sterman, 2000), objectives hierarchies (Keeney, 1992), decision trees and influence diagrams (Clemen, 1996), and Bayesian belief networks.

A related issue is the choice of the level of detail for an MS&A activity. Often, a little modeling and analysis goes a long way. Rapid prototyping, followed by restructuring, followed by more detailed modeling is usually a better strategy than investing large resources in a one-shot, large-scale MS&A activity. An arguably ideal MS&A process would involve an iteration of models that are initially too simple, to models that are too detailed and complex, back to simpler models that capture the essence of the complex models yet strip details from them that are unnecessary for the final use by decision makers. In such an iterative process, models can be simplified in many ways, including aggregation of variables, approximate computations, and omission of unimportant variables. Since these simplifications have implications for the uncertainty and accuracy of the output, the MS&A team must understand the implications and communicate them carefully to the decision makers and end users of their products. The involvement of decision makers and end users in these choices is extremely important if the MS&A development is to be matched to their needs. The committee believes that this practice is already followed by the best MS&A practitioners but must become more widespread, especially as decision makers become more dependent on MS&A to supplement their experience and intuition.

INTERACTION BETWEEN THE MS&A TEAM AND DECISION MAKERS

One way to improve Steps 1-3 is to iterate with the decision makers and other users and stakeholders of the MS&A activities and results. The discussions in Steps 1 and 2 (define the purpose, scope, and approach) are often inadequate because the actual decision makers are misunderstood or overinterpreted. Access to the decision makers may be very limited, and the intent of the decision maker may be filtered through layers of staff. In addition, the modeler needs to obtain the trust of the subject-matter experts, staff, and the decision makers. As the detailed modeling approach evolves (Step 3), iterations are still useful, but they may not require involving the highest level of decision makers.

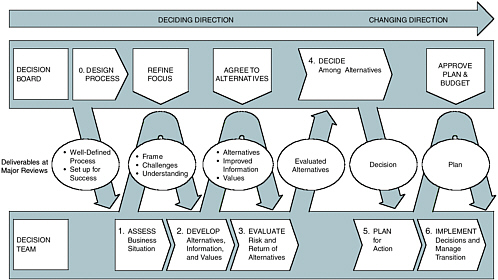

Spetzler (2007) describes an explicit process of interacting with decision makers in the so-called snake diagram (see Figure 4.1).

Another form of intensive interaction is “decision conferencing.” Decision conferences usually assemble the key decision makers and a facilitator with the intent to structure and solve a decision problem in real time—sometimes in 1 or 2 days. The process is as much about interaction and rules for interactions as it is about the formal models that are used to support decisions.

Being flexible and adaptive is very important. In one case where MS&A was applied to inform the selection of one of several technologies for producing tritium for nuclear weapons (von Winterfeldt and Schweitzer, 1998), the modeling approach was changed radically in midstream, from multiattribute evaluation to probabilistic simulation, because the decision makers had gained insights from the initial analysis and the information they needed had evolved. The issue in this application was whether to produce tritium in new nuclear reactors, accelerators, or existing commercial reactors. At the same time, a site for the facilities for these technologies was to be selected. The initial formulation of the problem was to evaluate five technologies and six sites based on 23 criteria using a multiattribute utility approach. A midanalysis briefing by the Assistant Secretary for Defense Programs made it clear that site selection was a minor issue but that uncertainties about production assurance (timeliness and capacity), as well as uncertainties about costs, were critical complexities that needed to be better understood. As a result, the analysis was turned into a risk analysis of the key production assurance and cost uncertainties surrounding the main technological alternatives.
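The shift described above, from multiattribute evaluation to probabilistic simulation, can be illustrated with a minimal sketch. It is not the analysis used in the tritium study: the attribute names, weights, scores, schedule ranges, and the deadline are all hypothetical, and a triangular distribution is assumed only because it is easy to elicit from three expert estimates.

```python
import random

# --- Initial framing: additive multiattribute evaluation --------------------
def additive_utility(scores, weights):
    """Weighted average of single-attribute utilities, each scaled to [0, 1]."""
    total = sum(weights.values())
    return sum(weights[a] * scores[a] for a in weights) / total

# --- Revised framing: probabilistic simulation of schedule risk -------------
def prob_on_time(low, mode, high, deadline, trials=20_000, seed=0):
    """Monte Carlo estimate of P(completion time <= deadline), assuming a
    triangular distribution over (low, mode, high)."""
    rng = random.Random(seed)
    hits = sum(rng.triangular(low, high, mode) <= deadline for _ in range(trials))
    return hits / trials

# All names and numbers below are hypothetical, for illustration only.
weights = {"cost": 0.5, "assurance": 0.3, "environment": 0.2}
scores = {
    "technology_a": {"cost": 0.8, "assurance": 0.4, "environment": 0.6},
    "technology_b": {"cost": 0.5, "assurance": 0.9, "environment": 0.7},
}
utilities = {name: additive_utility(s, weights) for name, s in scores.items()}

# Years to first production: (optimistic, most likely, pessimistic).
schedules = {"technology_a": (6, 9, 16), "technology_b": (7, 8, 11)}
on_time = {
    name: prob_on_time(lo, mode, hi, deadline=10)
    for name, (lo, mode, hi) in schedules.items()
}
```

With these invented inputs the two alternatives are nearly tied on aggregate utility (0.64 versus 0.66), while the simulated chance of meeting the deadline differs sharply (roughly 0.49 versus 0.92). The second framing exposes the production assurance question that a single weighted score can hide.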

NORMATIVE VS. DESCRIPTIVE MODELS OF DECISION MAKING

Traditional normative, or rational, models for decision making include the subjective expected utility model (SEU), Bayesian inference models, and multiattribute utility models (for introductions to these models, see Clemen, 1996; Hammond et al., 1999; von Winterfeldt and Edwards, 1986). While these models form the foundation for decision making under uncertainty and provide the links to other forms of MS&A, it has long been recognized that they are not descriptive of how people make judgments and decisions. Therefore, a question arises about how to implement rational models in the face of the possibility that decision makers’ decision styles and natural ways of thinking about problems might not agree with the rationality assumptions. Traditional decision analysis approaches have also been challenged because of their limitations in dealing with problems characterized by deep uncertainty, which require strategies that are flexible, adaptive, and robust (Davis et al., 2005).

FIGURE 4.1 Snake diagram developed by the Strategic Decisions Group. SOURCE: Spetzler (2007).
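For readers unfamiliar with the subjective expected utility model named above, the following is a minimal sketch of its core calculation: each option is a set of outcomes with subjective probabilities and utilities, and the normative rule selects the option with the highest probability-weighted utility. The option names, probabilities, and utilities are hypothetical.

```python
def expected_utility(outcomes):
    """outcomes: list of (subjective probability, utility) pairs for one option."""
    total_p = sum(p for p, _ in outcomes)
    if abs(total_p - 1.0) > 1e-9:
        raise ValueError("subjective probabilities must sum to 1")
    return sum(p * u for p, u in outcomes)

# Hypothetical options: two possible states of the world, with the decision
# maker's subjective probability for each state and the utility of each outcome.
options = {
    "commit": [(0.6, 1.0), (0.4, 0.0)],  # high payoff, or nothing
    "hedge": [(0.6, 0.7), (0.4, 0.5)],   # moderate payoff in either state
}
seu = {name: expected_utility(o) for name, o in options.items()}
best = max(seu, key=seu.get)  # the option the normative model prescribes
```

Here the model prescribes "hedge" (0.62 versus 0.60). The narrow margin illustrates why the descriptive question matters: a decision maker whose intuitive probabilities differ slightly from the elicited ones could reasonably reach the opposite choice.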

The literature on cognitive biases and heuristics, summarized in Kahneman et al. (1982) and Kahneman and Tversky (2000), is concerned with the dysfunctional nature of psychological aspects of judgments and decision making and how these biases and heuristics can lead people astray and prevent them from making sound judgments and good decisions. Subsequent research showed how simple heuristics and biases often can be very functional, approaching optimal analytical solutions to a surprising degree (Gigerenzer and Selten, 2002). An even more positive attitude is represented in the literature on naturalistic decision making (see Davis et al., 2005). Given the value of some of these naturalistic approaches to decision making, it is important to better understand the match, or lack thereof, between standard MS&A approaches and the naturalistic way decision makers think.

One suggestion is to combine analytic, rational approaches and intuitive, deliberative approaches to inform decision making, because neither MS&A alone nor unstructured deliberation without MS&A support is likely to succeed. Instead, an analytic-deliberative process that combines the strengths of both approaches may be the best way to find creative and acceptable solutions to complex decision problems.

Recommendation 10: DoD should strive to better understand the cognitive styles of decision makers and their interaction with different forms of MS&A. Research into decision-making styles would improve decision making with MS&A by affording intuition and insight into complex problems and enhancing the creativity employed in their solution.

ADDRESSING UNCERTAINTIES

Many MS&A models provide point estimates of forecasts, ordered evaluations of options, or optimal allocations of resources. Current MS&A practice often accompanies these deterministic results with sensitivity analyses to indicate (1) the robustness of solutions, (2) the sensitive parameters, and (3) the breakeven points. However, all MS&A modeling efforts face irreducible uncertainties, and these uncertainties must be characterized and made explicit in the course of the effort.
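The three products of sensitivity analysis listed above can be sketched in a few lines. This is a minimal one-at-a-time analysis under stated assumptions, not a prescribed method: the `advantage` model, its inputs, and their ranges are hypothetical, and the breakeven search assumes the model output is continuous in the varied input.

```python
def one_at_a_time(model, baseline, ranges):
    """Swing in model output as each input moves across its range while the
    other inputs stay at their baseline values."""
    swings = {}
    for name, (low, high) in ranges.items():
        out_low = model({**baseline, name: low})
        out_high = model({**baseline, name: high})
        swings[name] = abs(out_high - out_low)
    return swings

def breakeven(model, baseline, name, low, high, tol=1e-9):
    """Bisection for the input value at which the model output crosses zero.
    Returns None if the sign never changes, i.e. the preferred option is
    robust to this input over the whole range."""
    def f(x):
        return model({**baseline, name: x})
    if f(low) * f(high) > 0:
        return None
    while high - low > tol:
        mid = (low + high) / 2
        if f(low) * f(mid) <= 0:
            high = mid
        else:
            low = mid
    return (low + high) / 2

def advantage(p):
    """Hypothetical model: net advantage of option A over option B."""
    return p["availability"] * p["payoff"] - p["cost"]

baseline = {"availability": 0.8, "payoff": 100.0, "cost": 60.0}
ranges = {"availability": (0.5, 0.95), "payoff": (80.0, 120.0), "cost": (50.0, 90.0)}

swings = one_at_a_time(advantage, baseline, ranges)
most_sensitive = max(swings, key=swings.get)
availability_breakeven = breakeven(advantage, baseline, "availability", 0.5, 0.95)
payoff_breakeven = breakeven(advantage, baseline, "payoff", 80.0, 120.0)
```

In this toy case the output is most sensitive to availability, option A stops being preferred when availability falls below about 0.6, and the choice is robust to payoff over its whole range. An analysis of this kind varies one input at a time and so does not by itself characterize joint or irreducible uncertainty.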

There are several kinds of uncertainties, each with its own complexities and challenges for characterization:

- Environmental uncertainty,
- Parameter uncertainty,
- Model uncertainty, and
- Deep uncertainty.

“Environmental uncertainty” refers to natural variations in the decision environment (for example, in weather conditions or in the random behavior of natural or engineered systems, such as earthquakes on a known fault line or failure rates of components of a weapons system). Often this type of uncertainty can be characterized using empirical data and frequency distributions. “Parameter uncertainty” refers to uncertainties about model parameters that are due to not knowing the precise value of these parameters. For example, the failure rate of a new component is often characterized by a parameter that is estimated from hundreds of previous failures of similar components. This parameter cannot be observed, but its probability distribution can be constructed or assumed using expert elicitation methods and empirical data. “Model uncertainty” refers to not knowing which model is most appropriate for a given phenomenon. For example, it is well known that the assumptions underlying an exponential model of component failure are violated both by system off-on cycling and by wearout. Quantifying such model uncertainties is a great challenge that has not been sufficiently well addressed by the MS&A community. “Deep uncertainty” refers to factors that are essentially not knowable currently and for which the relevant probability distributions are simply not known.
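One common way to build a probability distribution for an unobservable failure rate from both expert judgment and data is a Bayesian conjugate update. The sketch below is an illustration under explicit assumptions, not a method endorsed by the committee: a gamma prior stands in for the elicited expert judgment, failures are assumed to follow the exponential (constant-rate) model, and all numbers are hypothetical. It addresses parameter uncertainty only; it says nothing about whether the exponential model is itself appropriate.

```python
import math
import random

def update_failure_rate(prior_shape, prior_rate, failures, exposure_hours):
    """Gamma prior on the failure rate plus observed failure counts over a
    known exposure gives a gamma posterior (conjugate update)."""
    return prior_shape + failures, prior_rate + exposure_hours

def sample_reliability(shape, rate, mission_hours, draws=10_000, seed=0):
    """Propagate failure-rate uncertainty into mission reliability,
    exp(-lambda * t), and return the sorted samples."""
    rng = random.Random(seed)
    samples = []
    for _ in range(draws):
        # random.gammavariate takes a scale parameter, i.e. 1 / rate.
        lam = rng.gammavariate(shape, 1.0 / rate)
        samples.append(math.exp(-lam * mission_hours))
    return sorted(samples)

# Hypothetical inputs: elicited prior with mean 2 / 1000 = 0.002 failures per
# hour, then 3 failures observed in 4,000 hours of testing.
shape, rate = update_failure_rate(2.0, 1000.0, failures=3, exposure_hours=4000.0)
posterior_mean = shape / rate

reliability = sample_reliability(shape, rate, mission_hours=100.0)
low = reliability[int(0.05 * len(reliability))]   # 5th percentile
high = reliability[int(0.95 * len(reliability))]  # 95th percentile
mean_reliability = sum(reliability) / len(reliability)
```

Reporting the interval from `low` to `high` alongside the mean, rather than a single point estimate, is one way to make parameter uncertainty explicit to a decision maker.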

Characterizing, quantifying, and managing these uncertainties is a critical part of MS&A. Uncertainties are often hidden behind assumptions that are not spelled out explicitly. When uncertainties are explicitly addressed, they are often assessed by experts who are prone to overconfidence and other biases. Model and deep uncertainties are very difficult to assess and therefore are often ignored. The management of uncertainties through dynamic adjustments and adaptive response strategies is a topic of interest in many fields that is only now receiving significant attention by researchers.

The expanded mission space now facing DoD necessitates that the MS&A enterprise augment its skills for interfacing with decision makers. More or less traditional defense planning provides MS&A practitioners with good guidelines for presenting assumptions, indicating missions examined, displaying cost-benefit trades, and so on. But for nontraditional missions, the MS&A practitioner needs better tools and practices for presenting and explaining overlapping types of uncertainties, some of which can be very large. A body of experience has been built up in communities that evaluate environmental risks such as those posed by the siting of industrial plants, where the uncertainties are very large and technical assumptions are key yet such evaluations must be digested by decision makers who might misinterpret some types of probabilistic information. One such approach is to use a classification system to group elements relative to perceived uncertainty. The defense MS&A community can learn from individuals with expertise in risk communication. Whatever approach is taken, it is clear that the modeling and simulation of nontraditional missions will span a wider range of phenomena, including the full range of PMESII effects, than was customary in the past. It will be imperative that MS&A practitioners have a broader background and work more routinely on teams that cover the broader range of topics that must be modeled.

Defense modeling has always involved great uncertainty—the fog of war is a reality, not merely a convenient figure of speech. Modeling and simulation of nontraditional missions involves uncertainties of a different character than those associated with force-on-force modeling. Cultural differences lead to uncertainties about enemy intent; new autonomous weapons systems lead to uncertainties about the performance of our own weapons; gaps in intelligence lead to uncertainties about enemy capability. All of these need to be incorporated into DoD’s modeling and simulation.

Recommendation 11: DoD should seek better methods to characterize, quantify, and manage the uncertainty inherent in all aspects of MS&A—including inputs, modeling assumptions, parameters, and options.

DOCUMENTATION AND COMMUNICATION

Documentation and communication are essential elements of good MS&A practice, yet there is little research supporting these activities. It is important that all elements of the MS&A activities be well documented, from the framework of the analysis, to the terms and assumptions used, to the results. The documentation should allow readers to trace every aspect of the models, simulations, or analyses, including sensitive and robust features and shortcomings and weaknesses. Simplifications should be highlighted. The importance of input parameters, if not explicitly analyzed through sensitivity or uncertainty analysis, should be discussed. The results should be provided at an aggregate level and at multiple levels of disaggregation.

Communication must take place at all levels in an organization, ranging from the immediate client for the MS&A activity to the ultimate decision maker. Often there are several layers between the immediate client and the decision maker. Sometimes there are institutions above the decision maker, like congressional oversight committees or courts, that can challenge and overturn the findings and recommendations derived from an MS&A study. It is important that the MS&A team be prepared to communicate at all levels and address the specific information needs of each, possibly using different briefing materials.

The style of the experts or decision makers who receive the communication must also be taken into account. Some decision makers are willing to accept reasoned recommendations as long as they believe that the source is competent and trustworthy. Others want to challenge specific assumptions and numerical estimates. Yet others want to obtain a detailed justification and account of the complete analysis process, its assumptions, and results.

Most MS&A studies can be communicated at different levels of detail. At the highest level, the study simply communicates the conclusions (perhaps with a recommendation), with some minimal backup. A lower level might present an account of the pros and cons of all alternatives, which can often be presented qualitatively. Below this are many increasingly detailed levels of information. The MS&A team should be responsive to the decision maker’s requirements at all levels of detail that are supported by the analysis.

Many decision makers do not like to be told what they should do; their job is to make decisions. The MS&A team’s job is to present the information in a way that makes choices transparent and to clarify the crucial parameters on which the choice depends (decision makers typically consider many factors other than those considered by MS&A). Simple, transparent models that allow decision makers to control input parameters and immediately observe outputs can be an effective way to develop their confidence in models. If the underlying model is very complex and time consuming to run, simplified models that capture the essence of the complex underlying model should be used.

In short, MS&A practitioners should document their activities and results in a transparent and traceable way. At all levels of presentation, all terms and assumptions should be stated explicitly, sensitive and robust features should be identified, and shortcomings and weaknesses should be discussed openly. Strategies should be identified and implemented to increase the flexibility, adaptability, and robustness of MS&A activities and results. The MS&A community should work closely with communications experts to develop and institutionalize effective techniques to communicate MS&A results.

Decision making is a highly individual and often idiosyncratic process, so it should not be surprising that a disconnect often exists between the MS&A practitioner and the decision maker. Every effort should be made to ensure that both have the same understanding of the problem, the assumptions that are necessary to model and analyze it, the alternative solutions offered, and the uncertainties associated with each. This is an area that requires technical, verbal, and presentation skills, all of which need to be adequately represented on the MS&A team.

REFERENCES

Clemen, Robert T. 1996. Making Hard Decisions: An Introduction to Decision Analysis. 2nd ed. Belmont, Calif.: Duxbury Press.

Davis, Paul K., Jonathan Kulick, and Michael Egner. 2005. Implications of Modern Decision Science for Military Decision Support. Santa Monica, Calif.: RAND.

Gigerenzer, G., and R. Selten, eds. 2002. Bounded Rationality: The Adaptive Toolbox. Cambridge, Mass.: MIT Press.

Hammond, J.S., R.L. Keeney, and H. Raiffa. 1999. Smart Choices: A Practical Guide to Making Better Decisions. Cambridge, Mass.: Harvard Business School Press.

Kahneman, Daniel, and Amos Tversky. 2000. Choices, Values, and Frames. New York, N.Y., Cambridge, England: Cambridge University Press.

Kahneman, Daniel, Paul Slovic, and Amos Tversky. 1982. Judgment Under Uncertainty: Heuristics and Biases. New York, N.Y., Cambridge, England: Cambridge University Press.

Keeney, Ralph. 1992. Value-Focused Thinking. Cambridge, Mass.: Harvard University Press.

Spetzler, C. 2007. “Building decision competency in organizations.” Advances in Decision Analysis: From Foundations to Applications. W. Edwards, R.F. Miles, and D. von Winterfeldt, eds. Cambridge, England: Cambridge University Press, in press.

Sterman, John D. 2000. Business Dynamics: Systems Thinking and Modeling for a Complex World. New York, N.Y.: McGraw-Hill.

von Winterfeldt, Detlof, and Ward Edwards. 1986. Decision Analysis and Behavioral Research. Cambridge, England: Cambridge University Press.

von Winterfeldt, Detlof, with E. Schweitzer. 1998. “An assessment of tritium supply alternatives in support of the U.S. nuclear weapons stockpile.” Interfaces 28.