Summary*

The Institute of Medicine’s Forum on the Science of Health Care Quality Improvement and Implementation held a workshop on January 16, 2007, in Washington, DC. The workshop had its roots in an earlier forum meeting when forum members discussed what is meant by the terms “quality improvement” and “implementation science” and became convinced that they mean different things to different people. At the time, the members also discussed the need to identify barriers to quality improvement research and to implementation science. Thus the purpose of this workshop was to bring people together from various arenas to discuss what quality improvement is, and what barriers exist in the health care industry to quality improvement and also to research about quality improvement.

The summary that ensues is thus limited to the presentations and discussions during the workshop itself. We realize that there is a broader scope of issues pertaining to this subject area but are unable to address them in this summary document.

LESSONS IN QUALITY IMPROVEMENT

The workshop’s first session was devoted to experiences that various institutions have had with quality improvement. Recognizing the wealth of experiences available outside of health care services, the workshop included presenters from outside the health care service industry as well as from inside. The presentations covered a variety of perspectives: non-health care services, health plans, hospitals, and nursing. It was not possible, however, to include examples from all settings; smaller physician practice settings and long-term care settings, for example, were not represented.

Non-Health Care Service Sector

Although improving quality requires the use of specific tools, developing those tools and putting them to use is only part of the challenge. As Scot Webster of Medtronic, a manufacturer of medical devices, explained, the larger part of improvement is actually changing culture and driving change.

Webster focused on three barriers to operating with high quality and efficiency: lead time, external variability, and internal variability. Lead time is the period of time from the beginning to the end of a process. Variability refers to differences in conditions or in how a process is performed; external variability refers to differences that cannot be controlled by the process’s operator, while internal variability refers to differences that can be. Many tools exist to improve quality and to deal with these barriers. Medtronic chose to combine the tools of Six Sigma and Lean in an innovative technique called Lean Sigma, which has positively affected Medtronic’s business. Although it can produce impressive results, Lean Sigma should not be seen as the answer for all quality problems, Webster cautioned. Quality improvement is 30 percent application of various tools and 70 percent working together to create a culture of continual change, he said, and to sustain quality improvement, institutions need to incorporate it into their culture.

Lean Sigma is also not a replacement for creativity or the experience of health care providers, Webster said. While health care has a high ratio of external to internal variability, and external variability is by definition outside of one’s ability to control, Lean Sigma could still be used as a tool to support the performance of health care professionals, he said.

Integrated Health Care Delivery System

As an integrated health care delivery system, Kaiser Permanente has a unique approach to quality improvement, Scott Young of Kaiser’s Care Management Institute said. Quality is integrated into every level at Kaiser, from medical centers to the national program office. Kaiser views quality, safety, service, and cost as the four dimensions that lead to improvements in care.

One of the factors underlying differences in health care outcomes is the uneven application of evidence-based care, which results in unwanted variations. These variations can often be linked to missed opportunities to improve quality and—at the extreme—to safety issues, close calls, and near misses that occur every day. These in turn, Young explained, are the basis for poor outcomes, adverse events, increased morbidity and mortality, and potential increased medical liability. Collectively, he said, these issues are viewed as the “iceberg of safety,” with increased morbidity and mortality and potential increased medical liability at the tip of the iceberg. Quality improvement programs can help prevent patient safety issues in health care.

The task of improving quality is made possible by support systems available throughout Kaiser, such as its electronic medical-record system called KP HealthConnect, its care-management programs, the KP Elder Care Network, and the use of evidence-based medicine. There are six components to the company’s approach to quality: measurement and evaluation, care management, evidence-based medicine, health information technology, innovative practice models, and team-based care.

Hospital Perspective

Craig Miller of Baptist Health Care System described how this hospital system changed its culture. In 1997, Miller said, Baptist was a place that provided poor quality care. Once the hospital leadership recognized that change was necessary to improve employee satisfaction and to solve financial problems, Baptist began to focus its efforts on the people associated with the system—the patients and the employees. With this focus, Baptist transformed itself into a hospital system that now provides excellent quality care, as evidenced by the system winning the 2003 Baldrige Quality Award.

Baptist built its vision of change around five pillars of excellence: people, service, quality, growth, and finance. In addition to these pillars, Baptist used the Baldrige criteria for excellence to transform itself.1 To operationalize the changes required to achieve its vision, Baptist adopted five keys: create and maintain a great culture; select and retain great employees; commit to service excellence; continuously develop great leaders; and hardwire success with systems of accountability.

Nursing Perspective

Nurses are central to improving the quality of health care delivery, said Marita Titler of the University of Iowa Hospitals and Clinics. Titler presented four major points to illustrate the role of nurses in quality improvement, including an overview of the quality improvement program at the university, strategies used to implement performance improvement, challenges in improving quality, and markers of success. The University of Iowa Hospitals and Clinics bases its implementation of new processes and procedures on seven principles, Titler said. The first of these principles is that education is necessary but not sufficient in order to change practice behaviors. The second is that implementation is not necessarily sustainable; constant tracking and improvement are required to improve the likelihood that a change will be sustained. The third principle is to facilitate doing the right things. The fourth is that data need to be effectively transformed into useable and actionable information. The fifth principle is to have a clear focus for implementation. The sixth is coordination among all players, which is especially useful in complex interventions. The seventh principle is to pilot or try the intervention prior to implementing the change system-wide. Improving care requires a number of strategies that integrate these seven principles and at the center of them is engaging the workforce, Titler said.

APPROACHES TO QUALITY IMPROVEMENT RESEARCH

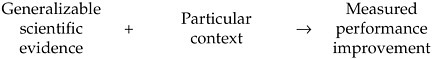

There is a lack of understanding of how to connect the different strategies available for improving quality, said Paul Batalden of Dartmouth. He offered the following formula as a way of thinking about how the various factors of quality improvement fit together:

Generalizable scientific evidence and particular contexts link together in a cycle that is a form of experiential learning. This cycle not only describes how a large majority of evidence-based medicine is developed, but it also captures how evidence-based medicine is integrated into practice. Thus, Batalden suggested, experiential learning can be seen as one of the underpinnings of quality improvement and quality improvement research.

Jeremy Grimshaw of the Ottawa Health Research Institute offered a different approach to quality improvement research. Implementation research2 can be described as studies of how the uptake of research findings is promoted. Implementation research focuses on the challenge of delivering evidence-based care to patients, specifically on the technical aspects of care. The aim is to develop a generalizable evidence base that can be used to improve the implementation of research findings and enhance decision making at the local level. This research is inherently interdisciplinary, involving health care professionals, organization scientists, engineers, and others.

Despite the suggestion by some attendees that these approaches to quality improvement research oppose one another, others thought that the discussion revealed more similarities than differences. In particular, Batalden and Grimshaw agreed on the purpose of quality improvement research and also agreed that the evidence base needs to be developed to the point that it can build on itself. Batalden and Grimshaw stated that both approaches were necessary to develop the needed body of knowledge.

Methods

Quality improvement is analyzed using a variety of study designs, including systematic reviews, controlled trials, case reports, and hybrid quantitative/qualitative reports, Batalden said. Each of these methods has its own set of advantages and disadvantages.

There is disagreement in the field about the use of what some believe to be the “gold standard,” randomized controlled trials (RCTs). Some people do not believe that RCTs are useful in complex social contexts, such as quality improvement processes, while others believe RCTs to be an extremely valuable method for evaluating these interventions. Given that different interventions lend themselves to specific evaluation methods, Grimshaw and Batalden concluded that one should always attempt to choose the best possible study design, given the individual circumstances.

BARRIERS TO QUALITY IMPROVEMENT AND QUALITY IMPROVEMENT RESEARCH

Very few data are available to guide the development of quality improvement research, of health sciences research, and of medicine in general, said Harold Pincus of Columbia University and New York-Presbyterian Hospital. This lack of data is closely related to the eight major barriers to quality improvement and to quality improvement research that workshop participants identified.

The first barrier is that quality improvement efforts can have many divergent purposes. Some see the purpose as improving performance, a process that occurs mainly through experiential learning. This process differs significantly from scientific research, whose purpose is to discover generalizable truths through hypothesis testing, noted Frank Davidoff of the Institute for Healthcare Improvement.

A second barrier is the role of specific contexts. Understanding both the effects of specific local contexts and the characteristics of what is generalizable across settings is extremely valuable in the implementation of interventions, Grimshaw said.

The third barrier is the lack of agreement about which academic area should be home to quality improvement research, Pincus said. Quality improvement research could potentially be considered an interdisciplinary research field, serving as a bridge between multiple disciplines. While many agree with the concept of interdisciplinary research in theory, it is extremely difficult to put into practice.

The fourth barrier is the “mismatch” between training and practice: Most people doing medical quality improvement projects have little or no research training, while most people with research training are not doing quality improvement projects. Different strategies will need to be developed for recruitment in different audiences.

Fifth, ethical oversight in quality improvement remains largely ambiguous and can be a large obstacle for many researchers. Quality improvement can be seen either as an intrinsic element of clinical care and medicine or as a form of clinical research. This in turn leads to questions as to whether quality improvement research should be considered human subjects research, which would require ethics review and institutional review board (IRB) approval.

The sixth barrier identified during the workshop is the existence of methodological differences between the biological sciences and the social sciences. Quality improvement research faces the same challenges—such as biases, confounders, and difficulties with measurement—that clinical research does. However, quality improvement studies are not subject to the tightly controlled conditions of clinical interventions. It is therefore difficult to know if “proven interventions” are generalizable.

The seventh barrier facing the development of quality improvement research is that much of the research that is published is poorly conducted. Because of a variety of factors, only a relatively small amount of quality improvement research is actually published, Davidoff said, and much of what is published is not generalizable and so fails to provide a basis upon which future efforts can build.

The last barrier identified during the workshop was communication. The lack of a common vocabulary for quality improvement and implementation research terms has hindered further progress, Grimshaw said.

OPPORTUNITIES

Both short-term and long-term opportunities exist for strengthening the science of quality improvement. In the short term, the opportunities identified by workshop participants centered on strengthening the evidence base for quality improvement. This can be achieved by using the most rigorous methods possible to assess interventions and by clarifying the focus of quality improvement projects. Long-term opportunities include creating strategies to improve professional development and effect cultural change among all stakeholders.

GENERAL REACTIONS

General reactions to the workshop discussions were given at the end of the day by both forum members and audience members. Many of their comments focused on the need to leverage experiences from other disciplines. The role of context should also be more carefully studied, as well as communication between researchers and between researchers and implementers. Other areas for the forum to pursue were also proposed.