2

Seeking Strong Evidence

The committee’s charge was to consider the scientific evidence on teacher preparation and to design an agenda for the research that is needed to provide the knowledge for improving that preparation. We found many different kinds of evidence that relate to teacher preparation: as we sifted through the available work, we repeatedly confronted questions about evidentiary standards. At times we struggled to agree on whether particular kinds of information constituted evidence and on the sorts of inferences that could be drawn from different kinds of evidence. This chapter describes the issues we identified and our approach to them.

APPROACHES TO RESEARCH DESIGN AND EVIDENCE

Much has been written about the problems of conducting research in education, specifically about the appropriateness of various research designs and methods and ways to interpret their results. In general, we are in agreement with the approach to research in education described in the National Research Council (NRC) (2002a) report Scientific Research in Education. In particular, that report identified six principles that should guide, but not dictate, the design of research in education:

- Pose significant questions that can be investigated empirically.
- Link research to relevant theory.
- Use methods that permit direct investigation of the question.
- Provide a coherent and explicit chain of reasoning.
- Replicate and generalize across studies.
- Disclose research to encourage professional scrutiny and critique.

The application of these principles to questions about teacher preparation poses particular conceptual and empirical challenges:

- There are no well-formed theories that link teacher preparation to student outcomes.
- The complex nature of schooling children makes it difficult to identify empirically the role of teacher preparation among the many intertwined influences on student outcomes.
- The use of strict experimental design principles can be problematic in some educational settings. Teacher candidates are sorted into teacher preparation programs in nonrandom ways, just as beginning teachers are nonrandomly sorted into schools and groups of students: consequently, it is difficult to control for all the important factors that are likely to influence student outcomes.

Improving learning outcomes for children is a complex process. Both common sense and sophisticated research (e.g., Sanders and Rivers, 1996; Aaronson, Barrow, and Sander, 2003; Rockoff, 2004; Rivkin, Hanushek, and Kain, 2005; Kane, Rockoff, and Staiger, 2006) indicate that teachers have enormously important effects on children’s learning and that the quality of teaching explains a meaningful proportion of the variation in achievement among children. However, understanding that teachers are important to student outcomes and understanding how and why teachers influence outcomes are very different; our charge required us to think carefully about the evidence of the effects of teacher preparation. Student learning is affected by numerous factors besides teaching, many of which are beyond the control of the educational system. Even the factors that are affected by education policy involve intricate interactions among teachers, administrators, students, and their peers.1

1 We note the progress that has been made in exploring causal relationships in education in new work supported by the Department of Education and in work synthesized by the What Works Clearinghouse (see http://ies.ed.gov/ncee/wwc/ [September 2009]).

Disentangling the role that teachers play in influencing student outcomes is difficult, and understanding the ways in which teacher education influences student outcomes is much more difficult. The design and the delivery of teacher education are connected to outcomes for K-12 students through a series of choices made by teacher educators and by teacher candidates in their roles as students and, later, as teachers. Identifying the empirical effects of teacher preparation on student outcomes poses many of the problems that arise in most social science research, including: (1) the development of empirical measures of the important constructs, (2) accounting for the heterogeneous behavioral responses of individuals, and (3) the nonrandom assignment of treatments (teacher preparation) in the observable data. As in other social science research, the challenge of developing convincing evidence of the causal relationship between the preparation of teacher candidates and the outcomes of their K-12 students places strong demands on theory, research designs, and empirical models.

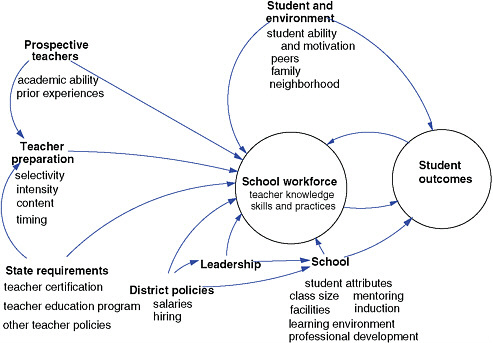

Some of these challenges are illustrated in Figure 2-1. Teacher candidates bring certain abilities, knowledge, and experiences with them as they enter teacher preparation programs. These differences likely vary within and across programs. The candidates then experience a variety of learning opportunities as part of their teacher education. Again, these experiences and the resulting knowledge and skills likely vary within and across programs. After completing their training, candidates who pursue teaching likely enter classrooms that vary greatly within and across schools on a variety of dimensions, including the characteristics of students, the curriculum, the school climate, and the neighborhood climate. Each source of variation affects individual student achievement: taken together, they com-

FIGURE 2-1 A model of the effects of teacher preparation on student achievement.

SOURCE: Adapted from Boyd et al. (2006, p. 159).

plicate the search for the empirical relationship between teacher education and student outcomes.

We note that establishing the chain of causation is a challenge not only in the field of education. Researchers and policy makers in medicine and in many other social science fields struggle to design studies that yield dependable results when carried out in real-world circumstances and to make sound decisions in the absence of a clear or complete evidentiary base (see, e.g., Sackett et al., 1996; Murnane and Nelson, 2007; Kilburn and Karoly, 2008; Leigh, 2009). Common to many fields is the challenge of thinking systematically about different sorts of evidence related to a question involving complex interactions of human behavior, which may not only vary in strength, but also vary in the mechanisms (or potential causes) about which they provide information. It is also the case that in many social science fields, as well as education, researchers have worked creatively to develop quasi-experimental research designs and other ways of making use of available data in order to examine empirical questions in complex real-world circumstances. Understanding of the nature of scientific evidence in education (and other fields) is evolving, and the diverse methodological backgrounds of the committee members enabled us to consider the issues broadly.2 We considered all of these issues as we weighed different kinds of studies and other available resources related to teacher preparation.

CAUSAL EVIDENCE

At the heart of many differences of opinion about the available research on teacher preparation (as on many topics in education) are questions about the strength of causal inferences to be made from it. One important purpose of research on teacher preparation is to provide an empirical basis for changes to policy and practice regarding the structure, content, and timing of teacher preparation, and many research methods can contribute to causal understanding. Causal understanding is built upon a body of research that usually begins with descriptive analysis and empirical efforts to identify correlations, and the development of competing theories of behavior. Refinements and adjustments are made to theoretical and empirical models as alternative explanations are explored. Causal understanding is built on a converging body of evidence that includes research designs that support causal inference, such as random assignment of subjects to treatment and control.

Although there has rightly been much focus recently on research that uses random assignment and other methods that produce direct causal evidence, qualitative and other quantitative methods that describe institutions, participants, and outcomes also provide valuable information. Descriptive methods (whether qualitative or quantitative) shed light on the factors and forces that may affect student outcomes. Correlation does not necessarily imply cause, but it may provide useful guidance in ruling out competing alternative explanations. When combined with theory, such methods contribute to the identification and development of research hypotheses and point to methods that can identify a causal relationship between a policy and an outcome. In addition, descriptive analyses can provide information that explains the chain of behaviors that lead to various student outcomes. Because different methods have different strengths and weaknesses, it is important to seek converging evidence that draws on multiple methods. We expand below on the challenges of identifying causal pathways—as well as the strengths and weaknesses of different research methods—in the context of a hypothetical example about the impact of coursework on teachers of mathematics.

The Complexity of Analysis: An Example

Suppose policy makers are interested in understanding whether teacher preparation that includes rigorous mathematics coursework leads to higher mathematics achievement among students. There are a variety of research designs that could be used to explore this relationship. One would be to identify the teacher preparation programs in which math content is more rigorous than in other programs and compare the math achievement of the students taught by the graduates of the more rigorous program with the students taught by the teachers who completed the less rigorous math programs. However, without careful statistical controls, this simple comparison may well yield a misleading result because the other characteristics of teachers, their students, and schools that influence achievement outcomes may themselves be correlated with teachers’ mathematics preparation.

To see how misleading results can occur, consider that parents have control over which schools their children attend; teachers have control over the schools in which they choose to work; and principals have control over the teachers they hire. Each of these choices influences which teachers teach which students, and there is evidence that these choices lead to quite different outcomes across schools in a variety of settings (see, e.g., Betts, Reuben, and Danenberg, 2000; Lankford et al., 2002; Clotfelter et al., 2006; Peske and Haycock, 2006). Teachers who score higher on measures of academic ability and teacher preparation are usually found in schools in which students come from more advantaged backgrounds and score better on achievement tests. This nonrandom sorting of teachers and their characteristics to students with systematically different achievement complicates the identification of the effect of preparation on student outcomes. Thus, one may not be able to draw valid conclusions about the effects of treatments from studies that do not adequately control for these confounding effects.

Researchers sometimes propose the inclusion of readily available measures in a statistical model to control for these kinds of differences, but this approach may not resolve the uncertainty. A variety of other important but very difficult-to-measure characteristics of students, teachers, or schools can also confound the understanding of teachers’ mathematics preparation. For example, parents who believe that education is very important to their children are likely not only to seek out schools where they believe their children will receive the best education, but also to provide other advantages that improve their children’s achievement. Teachers also make choices about how they approach their teaching, and those who make investments in rigorous mathematics preparation may also be more likely to engage in other activities (such as professional development) that support their students’ learning. Many teachers find it rewarding to work in schools where students are inquisitive and have good study skills. Principals in these schools will have strong applicant pools for vacancies and will hire the most qualified applicants. As a result, one cannot determine whether better prepared teachers lead to better student outcomes or whether the presence of students who are very likely to succeed is among the school characteristics that attract and retain better prepared teachers. Because rigorous math preparation is correlated with such difficult-to-measure attributes of teachers, students, and schools, isolating the causal effect of rigorous mathematics preparation is very difficult. The difficulty may not be overcome even with the assistance of multiple regression models that control for a long list of readily available variables. This is the challenge that often leads researchers to turn to various other research designs.
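The sorting problem described above can be illustrated with a small simulation. The sketch below is purely hypothetical: the function name, the effect sizes, and the sorting probabilities are illustrative assumptions, not estimates drawn from any study cited in this chapter. It assumes an unmeasured student "advantage" that both raises achievement and makes it more likely that a classroom is taught by a teacher with rigorous mathematics preparation, and it then computes the simple comparison of mean scores.

```python
import random
import statistics


def simulate_naive_comparison(n_classrooms=10000, true_effect=2.0, seed=0):
    """Naive difference in mean scores under nonrandom sorting.

    All numbers are illustrative assumptions, not empirical estimates.
    """
    rng = random.Random(seed)
    rigorous_scores, other_scores = [], []
    for _ in range(n_classrooms):
        # Hypothetical unmeasured advantage (e.g., family support).
        advantage = rng.gauss(0.0, 1.0)
        # Nonrandom sorting: advantaged classrooms are more likely to be
        # taught by a teacher with rigorous mathematics preparation.
        rigorous = rng.random() < (0.8 if advantage > 0 else 0.2)
        score = (50.0 + 5.0 * advantage
                 + (true_effect if rigorous else 0.0)
                 + rng.gauss(0.0, 1.0))
        (rigorous_scores if rigorous else other_scores).append(score)
    # The simple comparison mixes the preparation effect with the
    # advantage that sorted students toward better prepared teachers.
    return statistics.mean(rigorous_scores) - statistics.mean(other_scores)
```

Under these assumptions, calling `simulate_naive_comparison()` returns a difference well above the assumed `true_effect` of 2.0, because the comparison attributes the unmeasured advantage to the teachers' preparation.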

Randomized and Quasi-Experimental Designs

The strongest case for a causal relationship can often be made when randomization is used to control for differences that cannot be easily measured and controlled for statistically. In the context of our example about the impact of the teachers’ mathematics coursework, the ideal case would be to randomly assign teacher candidates to different forms of mathematics coursework and then to randomly assign those teachers to different schools and students. If such a research design were both feasible and implemented well, a strong evaluation of the effectiveness of rigorous math preparation would be possible. When assignment is truly random, any possible effects of variation in the treatment (e.g., in the rigor of mathematics content preparation) will be evident despite variation in all of the other possible influences on student achievement. That is, any observed effect of the treatment on the outcome could be assumed to be a result of the treatment, rather than of other factors that may be unmeasured but are correlated with the treatment. For many research questions and contexts, random assignment experimental designs provide very strong internal validity when implemented well, and they are thus sometimes referred to as the “gold standard” of evaluation techniques.
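The logic of random assignment can be sketched in the same way. The simulation below is hypothetical throughout (function name, effect sizes, and sample size are assumptions made for illustration). It keeps an unmeasured student "advantage" that strongly affects achievement, but assigns rigorous preparation by a coin flip that is independent of that advantage, so the simple difference in means should land close to the assumed effect.

```python
import random
import statistics


def simulate_random_assignment(n_classrooms=20000, true_effect=2.0, seed=1):
    """Difference in mean scores when treatment is assigned by coin flip.

    All numbers are illustrative assumptions, not empirical estimates.
    """
    rng = random.Random(seed)
    treated, control = [], []
    for _ in range(n_classrooms):
        # Unmeasured advantage still affects achievement...
        advantage = rng.gauss(0.0, 1.0)
        # ...but assignment no longer depends on it.
        rigorous = rng.random() < 0.5
        score = (50.0 + 5.0 * advantage
                 + (true_effect if rigorous else 0.0)
                 + rng.gauss(0.0, 1.0))
        (treated if rigorous else control).append(score)
    # Advantage is balanced across groups on average, so the difference
    # in means estimates the assumed treatment effect.
    return statistics.mean(treated) - statistics.mean(control)
```

In this stylized setting the estimate differs from the assumed effect only by sampling noise. The sketch says nothing about the feasibility, cost, or generalizability of running such an experiment in real schools.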

However, randomized experimental designs have some potential shortcomings. Most significant is that such designs are not a feasible or appropriate research design in some education settings. To continue the example above, experimentally manipulating the extent of mathematics content preparation a teacher receives may be difficult, but it is possible. Randomly assigning those teachers across a wide range of student abilities may prove more difficult. Random assignment is also susceptible to other potentially confounding factors, such as when some parents respond to their perceptions about teacher quality by adjusting other factors, such as their own contribution toward student achievement. Also troubling is the challenge of accounting for the important individualized interactions that occur between teachers and students that lead to high student achievement. Finally, many experiments are designed tightly around a particular treatment and counterfactual case (that is, hypotheses about what the circumstances would be without the treatment). If well executed, the experiment provides very strong evidence for this specific question, but typically such experiments have limited ability to generalize to other settings (external validity) (Shadish, Cook, and Campbell, 2002; Morgan and Winship, 2007). In addition, random assignment experiments are expensive and time-consuming to carry out, which places practical constraints on the information this method has been able to provide. In general, random assignment is an important and underused research design in education; however, its strength—possibly providing clearer information about causal relationships—must be weighed against the difficulties in carrying out and generalizing from such studies.

Other research designs, often called quasi-experimental, use observational data to capitalize on naturally occurring variation (Campbell and Stanley, 1963; Cook and Campbell, 1986). These methods use varying approaches to attempt to mimic the ways in which randomized designs control the variation.3 For example, a technique called regression discontinuity analysis, an alternative method developed for the evaluation of social programs and more recently applied to educational interventions, uses statistical procedures to correct for possible selection bias and support an estimate of causal impact (see, e.g., Bloom et al., 2005). Such approaches work well when they can convincingly rule out competing explanations for changes in student achievement that might otherwise be identified as an effect of the factor being investigated. Thus these approaches work best when researchers have a strong understanding of the underlying process (in this case, both the content of teacher preparation and the other forces that shape the relationship between teacher preparation and K-12 student achievement) and can explore the validity of competing explanations (National Research Council, 2002a, p. 113; Morgan and Winship, 2007). Theory and descriptive analysis, including quantitative and qualitative studies, as well as the opinions of experts, all contribute to such an understanding.
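As a rough illustration of the regression discontinuity idea, the sketch below assumes a hypothetical setting in which a treatment (for example, admission to a more rigorous program) is assigned whenever a measured score meets a cutoff. It fits a separate straight line on each side of the cutoff and takes the gap between the two fitted values at the cutoff as the estimated effect. The scenario, the function names, the bandwidth, and all numbers are assumptions made for illustration. The chapter does not describe a specific implementation, and applied analyses involve further choices (bandwidth selection, functional form, checks for manipulation of the score) that are omitted here.

```python
import random


def fit_line(xs, ys):
    """Ordinary least squares fit of y = intercept + slope * x."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def rd_estimate(running, outcome, cutoff, bandwidth):
    """Gap between the two fitted lines, evaluated at the cutoff."""
    pairs = list(zip(running, outcome))
    left = [(r, y) for r, y in pairs if cutoff - bandwidth <= r < cutoff]
    right = [(r, y) for r, y in pairs if cutoff <= r <= cutoff + bandwidth]
    slope_l, icpt_l = fit_line([r for r, _ in left], [y for _, y in left])
    slope_r, icpt_r = fit_line([r for r, _ in right], [y for _, y in right])
    return (icpt_r + slope_r * cutoff) - (icpt_l + slope_l * cutoff)


def simulate_rd(n=20000, true_effect=2.0, cutoff=60.0, seed=2):
    """Simulate cutoff-based assignment and return the RD estimate."""
    rng = random.Random(seed)
    running = [rng.uniform(40.0, 80.0) for _ in range(n)]
    # The outcome rises smoothly with the score and jumps by the assumed
    # effect at the cutoff, where treatment switches on.
    outcome = [30.0 + 0.4 * r
               + (true_effect if r >= cutoff else 0.0)
               + rng.gauss(0.0, 3.0)
               for r in running]
    return rd_estimate(running, outcome, cutoff, bandwidth=10.0)
```

The design choice worth noting is that the comparison is local: only observations near the cutoff are used, on the assumption that units just above and just below it are otherwise similar.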

A method that attempts to control for many alternative explanations and is receiving increasing attention especially in examining issues of teacher preparation is the value-added model of student achievement. We examine this method in a bit more detail.

Value-Added Models

Value-added modeling is a method for using data about changes in student achievement over time as a measure of the value that teachers or schools have added to their students’ learning. The appeal of value-added methods is the promise they offer of using statistical techniques to adjust for unmeasured differences across students. Doing so would make it possible to identify a measure of student learning that can be attributed to individual teachers and schools. This approach generally requires very large databases because researchers must statistically isolate the student achievement data from data on other student attributes that could affect achievement (such as the students’ prior achievement or the characteristics of their peers) to control for the confounding factors identified in Figure 2-1. The models typically include a variety of controls intended to account for many of the competing explanations of the link between teacher preparation and student achievement. The recent availability of district and statewide databases that link teachers to their students’ achievement scores (Crowe, 2007) has made this analysis feasible. However, there is substantial debate in the research community about this approach (Kane and Staiger, 2002; McCaffrey et al., 2003; Rivkin, 2007; National Research Council, 2010).
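The core idea of using changes in achievement over time can be sketched with a deliberately simplified gain-score calculation. This is an assumption-laden toy, not one of the models discussed in the cited literature: it subtracts each student's prior score from the current score, averages those gains by teacher, and expresses each teacher's average relative to the overall mean gain. The function name and record layout are hypothetical, and the sketch omits the covariates, peer characteristics, and multiple years of data that real value-added models typically include.

```python
from collections import defaultdict


def gain_score_value_added(records):
    """Teacher-level mean gain relative to the overall mean gain.

    `records` is an iterable of (teacher_id, prior_score, current_score)
    tuples; this layout is a hypothetical choice for illustration.
    """
    gains_by_teacher = defaultdict(list)
    for teacher_id, prior, current in records:
        # A student's gain is the change in score over the period.
        gains_by_teacher[teacher_id].append(current - prior)
    all_gains = [g for gains in gains_by_teacher.values() for g in gains]
    overall_mean = sum(all_gains) / len(all_gains)
    # Positive values indicate above-average gains for that teacher's
    # students; they do not by themselves establish a causal effect.
    return {teacher: sum(gains) / len(gains) - overall_mean
            for teacher, gains in gains_by_teacher.items()}
```

For example, with records `[("A", 50, 60), ("A", 40, 50), ("B", 50, 52), ("B", 60, 62)]`, students of teacher A gain 10 points each and students of teacher B gain 2 points each, so the function returns `{"A": 4.0, "B": -4.0}` around the overall mean gain of 6.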

There are concerns that value-added methods do not adequately disentangle the role of individual teachers or their characteristics from other factors that influence student achievement. That is, they may not statistically control for the full range of potential confounding factors depicted in Figure 2-1 (Rothstein, 2009). Accurate identification of these effects is complicated by the nonrandom assignment of teachers to schools and students, both across and within schools. In addition, there are concerns about measures of student outcomes and accurate measurement of teacher preparation attributes. For example, there is concern that commonly used measures of student achievement may assess only a portion of the knowledge and skills that are viewed as important for students to learn. It may also be the case that currently available measures of what constitutes teacher preparation are inadequate representations of the underlying concepts. Another concern is that student achievement tests developed in the context of high-stakes accountability goals may provide a distorted understanding of the factors that influence student achievement. While the tests themselves may be well designed, the stakes attached to their results may cause teachers to focus disproportionately on students who are scoring near the cut-points associated with high stakes, at the expense of students who are performing substantially above or below those thresholds.

All of these are important concerns that may affect the ability to draw inferences from the estimated model. As with any research design, value-added models may provide convincing evidence or limited insights, depending on how well the model fits the research question and how well it is implemented. Value-added models may provide valuable information about effective teacher preparation, but not definitive conclusions, and are best considered together with other evidence from a variety of perspectives.

Qualitative and Descriptive Analyses

Qualitative and descriptive analyses also have much to contribute. Proper interpretation of the outcomes of experimental designs and the statistical approaches described above depends on the clear identification of the treatment and its faithful implementation. Theory, case studies, interpretive research, descriptive quantitative analysis, expert judgment, interviews, and observational protocols all help to identify promising treatments and can provide important insights about the mechanisms by which a treatment may lead to improved student outcomes. For example, if it appears that stronger mathematics preparation for teachers is associated with improved math outcomes for students, there is good reason to broaden and deepen the analysis with additional descriptive evidence from other contexts and ultimately to develop research designs to investigate the potential causal links.

As detailed in later chapters, there is more descriptive research available concerning teacher education than other forms of information. Indeed, many policy and program initiatives related to teacher quality and preparation have emerged in the past 20 years, and there has been a great deal of interest in the content and effects of teacher education. Professional societies in the academic disciplines have taken seriously their responsibility to offer guidance both about what students should learn and the knowledge and skills teachers need in order to develop that learning in their students, and they have drawn on both research and the intellectual traditions of their fields in doing so. We return in subsequent chapters to questions about what can be concluded from this literature, but these contributions to the discourse on teacher preparation have identified promising approaches and pointed to the mechanisms that seem likely to have the greatest influence on teacher quality. They also allow the field to refine testable hypotheses and to develop sophisticated, nuanced questions for empirical study.

CONCLUSION

Although there has been a great deal of research on teacher education (for summaries, see Wilson, Floden, and Ferrini-Mundy, 2001; Cochran-Smith and Zeichner, 2005; Darling-Hammond and Bransford, 2005), few issues are considered settled. As a result, the field has produced many exciting research projects that are exploring a variety of ways of gaining evidence. For many questions, researchers are grappling with fundamental issues of theory development, formulating testable hypotheses, developing research designs to empirically test these theories, trying to collect the necessary data, and examining the properties of a variety of emerging empirical models.

Given the dynamic state of the research, we chose to examine a range of research designs, bearing in mind the norms of social science research, and to assess the accumulated evidence. Some research methods have greater internal or external validity than others, but each has limitations; polarized discussions that focus only on the strengths or weaknesses of a particular method have contributed little to understanding of important research questions. We concluded that the accumulated evidence from diverse methods applied in diverse settings would increase our confidence in any particular findings.

Ideally, policy makers would base policy on a body of strong empirical evidence that clearly converges on particular courses of action. In practice, policy decisions are often needed before the research has reached that state. Public scrutiny of deficiencies in teacher preparation has inspired many new program and policy initiatives that have, in turn, generated a great deal of information. Unfortunately, like most innovations in education, many of these initiatives have not been coupled with rigorous research programs to collect good data on these programs, the fidelity of their implementation, or their effects. Thus, although policy makers may need to make decisions with incomplete information, the weaker the causal evidence, the more cautiously they should approach these decisions and the more insistent they should be about supporting research efforts to study policy experiments. We return to this point in Chapter 9 in our discussion of a proposed research agenda.