5

Collaboration

Analysis improves when analysts with diverse perspectives and complementary expertise collaborate to work on intelligence problems.

The fragmented nature of the intelligence community (IC) did not arise by accident. As Chapter 1 describes, different agencies specialize in processing different forms of intelligence, serve different customers, and need workforces with different skills. Although this “structure” allows IC entities to have close working relationships with their primary customers, the resulting specialization and “stovepipes” can frustrate the integration needed to take full advantage of diverse competencies for tackling complex problems.

In order to realize the full potential of its material and human resources, the IC has invested in improving collaboration among analysts and agencies. One example, mentioned before, is the Analytic Resources Catalog, which helps analysts to locate relevant knowledge and skills within the IC. A second example is A-Space, which allows analysts to query the community, share information and perspectives, and collaborate on problem solving. A-Space specifically facilitates self-organizing networks that take advantage of expertise spread across the IC, providing agility that is impossible with conventional organizational arrangements.

This chapter first considers the forms and dimensions of collaboration, then reviews the research on the benefits of collaboration, the right level of collaboration, and barriers to collaboration. The last section takes up the evaluation needed to improve collaboration in the IC.

FORMS AND DIMENSIONS

In its broadest sense, collaboration in the IC occurs whenever one analyst seeks assistance from another analyst, outside contractor, or unpaid expert. Such collaborations vary along several dimensions that, when matched appropriately to situational needs, can improve their effectiveness. One is group duration, which can range from an ad hoc one-time arrangement to long-standing working groups. A second is the number of analysts involved. A third is how direct communications are, ranging from face-to-face meetings to anonymous electronic exchanges. Fourth, collaboration can be either cooperative (seeking shared conclusions) or competitive, maintaining alternative views either deliberately (e.g., red teaming or devil’s advocacy) or naturally (e.g., genuine disagreement).

Although all collaborations involve some integration of independent perspectives, consensus is not necessary for the outcome to be useful. Indeed, research in private corporations finds that people are most satisfied with collaboration that strives for “consensus with qualification,” in the sense that the final product reflects a majority view, while preserving dissenting views as “qualifications” (e.g., Eisenhardt et al., 1997). Useful integrated outcomes need not be produced by analysts themselves, but might be aggregated by an external team or even software “robots” (as in a prediction market). Box 5-1 describes an emerging form of parallel collaboration: “idea tournaments” for producing the best ideas for solving a problem.

Intelligence in the age of global counterterrorism requires effective collaboration with groups both inside and outside the IC, including domestic and international agencies, private contractors, industry experts, and academics. These relationships can range from informal calls for advice to formal contracts.

Assessment of the value of collaborations faces the same evaluation challenges that arise with individually produced analyses (Chapter 2). Independent review committees can provide empirical evaluations (e.g., those conducted by the National Institutes of Health, National Science Foundation, Institute of Education Sciences, and Office for Judicial Research; for an example in private business, see Sharpe and Keelin, 1998). The Intelligence Advanced Research Projects Activity (IARPA) performs some of this function for the IC, by evaluating new high-risk/high-payoff methods with a small probability of producing an overwhelming intelligence advantage. However, IARPA does not support research on current analytic practices. The Office of Analytic Integrity and Standards in the Office of the Director of National Intelligence (ODNI), along with units in other IC agencies, intensely evaluates analytical work done internally, but typically their procedures rely only on expert judgment to assess analyses and the value of collaboration.

BOX 5-1 Idea Tournaments: A Collaborative Tool

The private sector is increasingly relying on “idea tournaments,” which reward the best solution proposed for a problem. The concept of tournaments, however, is hardly new, with antecedents reaching back at least as far as the Longitude Prize offered by the British Parliament in 1714. Drawing on the fundamentals of agency theory in microeconomics and on other research, Morgan and Wang (2010) identify three conditions in which tournaments are most likely to prove useful, all of which are often satisfied in the world of intelligence analysis.

Tournaments can be a potent tool for helping organizations break out of suboptimal coping patterns. The science of behavior in tournaments is growing and offers options that could be adapted to the conditions of the IC; the results could then be subjected to empirical tests, compared with other methods of collaboration.

BENEFITS

Although organizational specialization and separation have some advantages, enhancing collaboration across the IC has several expected benefits for analyses that demand knowledge and skills distributed among analysts and across organizational boundaries. For example, consider analysts trying to determine whether a gathering of 20 people in a Yemeni village indicates the presence of terrorists. Although knowledge of Yemeni culture is vital, so, too, might be knowledge of recent terrorist recruitment methods and illegal international financial transfers (if there have been unusual flows of funds into the village). Experts in each area could guess at what the others know. However, they are better off consulting, especially given individuals’ tendency to underestimate how much their own knowledge is limited (Fischhoff et al., 1978; Lichtenstein et al., 1982). One reason for this bias is that the absence of information is less salient than its presence (e.g., Agnostinelli et al., 1989). Thus, the set of hypotheses an analyst entertains (e.g., about the village gathering) will be limited to what the analyst can imagine. Collaboration can add missing perspectives.

Analysts often face virtually unbounded sets of potentially relevant knowledge. Because no one can search through all relevant knowledge (Newell and Simon, 1972; Simon, 1979), people take mental shortcuts, in the form of schemas and heuristics that focus, but naturally limit, their information search and processing (Bobrow and Norman, 1975; Fiske and Taylor, 1991; Hayes and Simon, 1974; Ohlsson, 1992).

When people with heterogeneous backgrounds work together, their perspectives filter information in different ways, allowing more knowledge and solutions to emerge. Diversity can be sought in subject-matter expertise, functional background (Dearborn and Simon, 1958; Sarasvathy, 2001), personal experience (Shane, 2000), and mission perspective (Anderson and Pichert, 1978). Such sharing allows analyses to be richer and deeper, with better understood strengths and weaknesses, whereas individuals working in isolation are more limited by their assumptions and myopic about the limits to their own knowledge (Cheng et al., 2008; Leung et al., 2008; Williams and O’Reilly, 1998). Simply communicating one’s assumptions, which is more likely when team members with different backgrounds collaborate, can expose gaps in logic and information (Nemeth, 1986). Exposure to contrasting perspectives can reveal errors and promote re-conceptualization (Bobrow and Norman, 1975).

A broader definition of collaboration includes not just groups that interact directly, but also teams that work in parallel, with customers (or managers) aggregating their conclusions. In such arrangements, it may be productive to have the teams work independently and even competitively before their individual products are integrated by a manager or customer.

THE RIGHT LEVEL

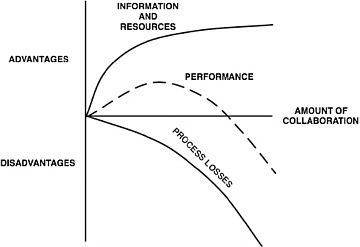

Although there are benefits to increased collaboration, there are also costs, traditionally labeled “process losses.” Figure 5-1 illustrates these benefits and costs and their net impact (subtracting costs from benefits). The result is a single-peaked performance function that has a maximum value at intermediate levels of collaboration.

One cause of “process loss” is not related to collaboration, but is exacerbated by it: overloading analysts and their organizations with more

FIGURE 5-1 Schematic summary of the tradeoffs between the advantages and disadvantages of collaboration in complex systems.

information than they can handle, especially when they must struggle to identify the relatively few relevant bits. Such overload appears to have been part of some of the most public failures of intelligence, such as the failure to detect the 9/11 attacks, the confused efforts regarding the 2009 Christmas Day bomb plot, and even Pearl Harbor (Wohlstetter, 1962). A second cause of process loss, which is a direct result of collaboration, is the time and effort invested in coordinating activities.

Some process loss is essential to achieving the benefits of collaboration, as people work to understand one another’s perspectives (Dearborn and Simon, 1958; Dougherty, 1992). Indeed, the greater the heterogeneity, the greater the potential benefit and potential process loss, in terms of intellectual effort and sometimes emotional turmoil. Would-be collaborators who cannot understand one another may fight over resources, hoard information, and interfere with others’ activities (Bonacich, 1990). In the IC context, there are often tensions regarding the value of different information sources (e.g., whether signals intelligence or human intelligence is more accurate). At some point, the gaps become too wide and the process losses too great for collaboration to be worthwhile. One way to reduce that threat is to ensure that analysts have basic familiarity with one another’s expertise before they begin a collaborative process. A common training curriculum across the IC can help in that regard (see Chapter 3).

Social and emotional conflicts can be so intense that negative stereotypes about potential collaborators prevent cooperative collaboration (e.g., “Those people don’t understand … have the wrong priorities … are looking for answers to the wrong questions … are lost in the weeds”). Such positions reflect the tendency to underestimate the subjectivity of one’s own perspectives (e.g., Griffin and Ross, 1991) and to overestimate the extent to which others think similarly (Dawes, 1994). Realizing that other people have totally different perspectives can be threatening, with the natural response of devaluing not only the perspectives, but also the people.

In theory, cognitive conflicts, which arise from differences in beliefs and perceptions, are different from affective conflicts, which arise from emotional differences (Guetzkow and Gyr, 1954). However, recent research on discord within teams finds that these two often go together (e.g., DeDreu and Weingart, 2003). As cognitive conflict increases, it can stimulate emotional arousal that in turn interferes with cognitive flexibility and creative thinking (Carnevale and Probst, 1998). There can be a tipping point, after which the conflict is so intense that it undermines productive teamwork.

Fortunately, team members need not share perspectives as long as they are not diametrically opposed. For example, effective teams often have members with highly differentiated roles and areas of expertise (but not direct conflict; see Hutchins, 1991). Indeed, such teams can perform better than ones whose members have more similar perspectives (Liang et al., 1995; Wegner, 1986). A study in the software development industry found that members of successful teams had perspectives that diverged over time as they took on more specialized tasks (Levesque et al., 2001). It takes careful organizational design to create the right balance between similar and divergent views for collaborative work.

Because IC entities, like all organizations, operate in a resource-constrained environment, the costs of collaboration can outweigh its benefits. A good rule of thumb is the “law of requisite variety”: a group’s heterogeneity should match the complexity of the problems it is tasked to solve. With heterogeneous teams, process losses can outweigh the benefits of collaboration for simple problems, with the balance shifting for complex problems. Of course, this principle is easier to advocate in the abstract than to execute in the world of imperfect perceptions, incomplete knowledge, and limited time.
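The tradeoff summarized in Figure 5-1, and the way it shifts with problem complexity, can be illustrated with a small numerical sketch. The functional forms below are assumptions made purely for illustration and are not taken from the research cited here: benefits are assumed to grow with diminishing returns (a square root scaled by a hypothetical `complexity` parameter), and process losses are assumed to grow linearly with the level of collaboration.

```python
import math


def net_benefit(level, complexity, cost_per_unit=1.0):
    """Hypothetical net value of collaboration at a given level.

    Assumed forms (illustrative only):
      benefit = complexity * sqrt(level)   # diminishing returns
      cost    = cost_per_unit * level      # linear process loss
    """
    benefit = complexity * math.sqrt(level)
    cost = cost_per_unit * level
    return benefit - cost


def best_level(complexity, levels=range(0, 11)):
    """Return the collaboration level with the highest net benefit."""
    return max(levels, key=lambda level: net_benefit(level, complexity))


if __name__ == "__main__":
    # Under these assumptions the peak sits at an intermediate level and
    # moves toward more collaboration as the problem becomes more complex.
    for complexity in (2, 4, 6):
        print(f"complexity={complexity}: best level={best_level(complexity)}")
```

Under these assumed forms, net benefit is single-peaked, and the peak moves toward higher levels of collaboration as complexity rises, which is consistent with the rule of thumb above. Actual benefit and cost curves for IC collaboration would have to be estimated empirically.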

BARRIERS

Within the IC, many barriers to collaboration fall into two main categories: the behavior of individuals, and agency divisions and specializations that isolate intelligence into “stovepipes.” At the individual level, one barrier is failing to recognize the need to collaborate. People do not recognize the extent to which their training, socialization, and professional activities have shaped their world views and mental schemas (for a review, see Tinsley, 2011), nor do they realize the overconfidence that comes with that narrowness (Ariely, 2008; Arkes and Kajdasz, 2011).

A second barrier arises from not knowing where to find needed complementary knowledge. A feature of successful teams is transactive memory: members learn who knows what, effectively making others’ knowledge a part of their repertoire, ready when they need it (Wegner et al., 1985). Without that shared experience, other means are needed to ensure that individuals know about one another’s expertise. Such deliberate means are essential to the IC, in which the needed knowledge can be widely distributed, inside and outside an agency and the IC itself. One deliberate way to develop transactive memory is to have potential collaborators train together (Liang et al., 1995). A second is explicitly giving team members information about one another’s domains of expertise (Moreland and Myaskovsky, 2000). The IC’s emerging databases of general expertise and specific knowledge are designed to address these needs. However, these databases need to reflect critical behavioral and social science in their design, such as ensuring that analysts can access this data (e.g., with search fields appropriate to diverse users) and can evaluate its authoritativeness (e.g., with evidence of source reliability).

A third barrier to collaboration arises when analysts resist outside perspectives because of social factors, such as intergroup rivalries. Collaboration appears to work best when collaborators’ areas of expertise (and perspectives) do not overlap and are mutually compatible, in the sense of not threatening one another’s legitimacy (Hutchins, 1991). Collaboration presupposes trust, as the parties make themselves somewhat vulnerable. The analyst who requests information exposes a need, while the responding analyst divulges information. In order to create such trust, organizations need to create general conditions favorable to the success of initial requests and responses, such as instilling norms of fulfilling promises and reciprocal disclosure.

The compartmented institutional structure of the IC poses an inherent barrier to collaboration. With many agencies having different specializations, histories, recruitment, and training methods, the IC lends itself to intergroup categorization processes that impede collaboration (see Tinsley, 2011). Those processes can encourage analysts to treat members of other groups categorically, as though they have similar, simplistic views, promoting stereotypes that spawn reciprocal antipathy (Kramer, 2005).

Categorization is one reason that information tends to be localized within dense, restricted social networks, or “stovepipes,” rather than distributed throughout a community (Burt, 1992; Granovetter, 1973; Krackhardt and Brass, 1994). These natural processes may be exacerbated by IC practices that involve secrecy classifications and sharing information on a need-to-know basis, further limiting awareness of what others know. Recent IC efforts to replace the tradition of “need to know” with a policy of “responsibility to share” are consistent with effective collaborative environments (Office of the Director of National Intelligence, 2007).

A further institutional barrier to collaboration arises when incentives are lacking. Many commentators have noted that credit in the IC seems to be assigned mainly for direct contributions to intelligence products, with no explicit mechanisms for recognizing “assists” (O’Connor et al., 2009). Indeed, the committee observed little support for collaboration in performance evaluations and, sometimes, saw incentives for the opposite. Studies have shown the pitfalls of poorly created incentive schemes and ways to avoid unintended negative consequences (e.g., Baron and Kreps, 1999).

The IC has recognized these institutional obstacles to collaboration, with a primary mission of the ODNI being to reduce them. To that end, several visible programs have been implemented, including joint agency training exercises, joint-duty job assignments, cross-functional and multiagency project teams, and promotion of superordinate goals of the IC as a whole (see Tinsley, 2011).

Paradoxically, one way to enhance collaboration in the IC may be to increase its differentiation and specialization. Publicly signaled differentiation legitimates an analyst turning to other analysts for help, while pointing out the specific individuals who might provide it—thereby identifying them with their knowledge, rather than with their agency. Increased recognition of analysts’ expertise would make it easier to recognize complementary expertise and the importance of recognizing gaps in knowledge. Moreover, narrower domains for analysts reduce the risks of analysts appearing to intrude on one another’s domains. Having analysts identified by their specialties can also help managers to think systematically about team composition (see Hastie, 2011). Thus, it should be possible to enhance collaboration by defining, cataloging, and publicizing analysts’ specialties.

EVALUATION

Research clearly shows that effective collaboration need not entail achieving consensus or even working together in groups. Rather, collaboration requires a balance, having enough tension between perspectives to elicit productive reflection, but not so much tension as to generate intergroup hostility. Creating those conditions requires recognizing the different kinds of expertise needed for particular problems. As with all other aspects of organizational design, productive collaboration requires hard-headed, outcome-based evaluations of collaborative projects, perhaps with some deliberate experimentation—followed by implementing the lessons learned from these evaluations, recognizing that the best practices may vary by problem.

Many questions can be addressed by evaluation. For example, when should a project manager task two independent teams with producing analyses that will be compared or aggregated? When is it best to keep information sources as rich and separate as possible until a final integration stage, rather than producing tentative integrative solutions early, then subjecting them to rigorous critiques from multiple perspectives? What are the best procedures for winnowing out information, in terms of balancing overload and preserving facts that might reveal flaws in reasoning? A strong commitment to empirical assessment would provide definite answers to currently unresolved questions concerning the value of adversarial methods (e.g., red team versus blue team simulations) and nominal group information integration procedures (e.g., the Delphi Method, in which experts iteratively provide independent estimates, then see pooled group judgments).
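The iterative structure of the Delphi Method mentioned above can be sketched in a few lines. This is a minimal simulation under stated assumptions, not a description of how any IC unit runs the procedure: the pooled judgment is taken to be the median, and each expert is assumed to revise part-way toward it by a hypothetical `pull` factor. Real experts revise however they see fit.

```python
from statistics import median


def delphi_round(estimates, pull=0.5):
    """Run one feedback round.

    Experts see the pooled (median) judgment and, by assumption,
    each moves a fraction `pull` of the way toward it.
    """
    pooled = median(estimates)
    revised = [e + pull * (pooled - e) for e in estimates]
    return revised, pooled


def run_delphi(initial_estimates, rounds=3, pull=0.5):
    """Iterate feedback rounds; return final estimates and pooled history."""
    estimates = list(initial_estimates)
    pooled_history = []
    for _ in range(rounds):
        estimates, pooled = delphi_round(estimates, pull)
        pooled_history.append(pooled)
    return estimates, pooled_history


if __name__ == "__main__":
    # Made-up initial probability estimates from three experts.
    first_round = [0.10, 0.30, 0.80]
    final, history = run_delphi(first_round)
    print("pooled judgment shown each round:", history)
    print("final individual estimates:", final)
```

A sketch like this shows only the mechanics of pooling and feedback. Whether the resulting convergence improves accuracy is exactly the kind of question that empirical evaluation would have to answer.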

Although many in the IC express enthusiasm for collaborative aids like A-Space, there have been no rigorous evaluations of what works well and what does not. Such evaluations might reveal the value of creating programs that prompt and support collaboration between analysts who have not recognized their mutual interests. One possibility is a system like the web-based Delicious, whose users voluntarily share web bookmarks, helping others to identify shared interests.1 Even without such deliberate tagging, individual analysts’ search queries might be matched using “nearest neighbor” matching algorithms, so that the system would cue them about mutual interests. Although there are theoretical reasons to think that this solution, along with many potential innovations, might work, only empirical evaluation will provide concrete evidence about their value and about needed refinements.
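As an illustration of the “nearest neighbor” idea, the sketch below matches analysts by the similarity of their search queries. It is one simple way such matching might be done, not a description of any existing IC system: queries are reduced to word counts and compared with cosine similarity. The analyst names, the query log, and the function names are all hypothetical.

```python
import math
from collections import Counter


def vectorize(queries):
    """Bag-of-words term counts over all of one analyst's queries."""
    return Counter(word for q in queries for word in q.lower().split())


def cosine(a, b):
    """Cosine similarity between two term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def nearest_neighbor(analyst, query_log):
    """Return the other analyst whose queries are most similar."""
    target = vectorize(query_log[analyst])
    others = (name for name in query_log if name != analyst)
    return max(
        others,
        key=lambda name: cosine(target, vectorize(query_log[name])),
        default=None,
    )


# Made-up query log for three hypothetical analysts.
query_log = {
    "analyst_a": ["yemen village gathering", "terrorist recruitment methods"],
    "analyst_b": ["recruitment methods yemen", "funds transfers yemen"],
    "analyst_c": ["satellite imagery calibration"],
}

if __name__ == "__main__":
    print(nearest_neighbor("analyst_a", query_log))
```

A working system would presumably cue analysts only when similarity exceeds some threshold, and would have to respect classification and need-to-know constraints. Both design choices are outside the scope of this sketch.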

Effective, innovative collaboration will not happen without incentives and strong management. Analysts need to know that they will be supported if they spend time learning other units’ perspectives, share their information, and express ranges of opinion in their reports. The various IC mission statements demonstrate that the IC’s senior leadership views such collaboration as central to its mission (see Chapter 1). The science exists to guide its implementation and evaluate its success. But to be effective, collaboration must be supported with strong, positive incentives, given the natural tendency for organizations to compartmentalize, especially when analyzing sensitive information under high-stress conditions.

1 Delicious is a Yahoo! company with free membership that allows users to save web bookmarks online, organize bookmarks by multiple categories, share personal bookmarks with friends, and see the most popular bookmarks across users. For more information about this social bookmarking site, see http://www.delicious.com [August 2010].

REFERENCES

Agnostinelli, G., S.J. Sherman, R.H. Fazio, and E.S. Hearst. (1989). Detecting and identifying change: Additions versus deletions. Journal of Experimental Psychology: Human Perception and Performance, 12(4), 445-454.

Anderson, R.C., and J.W. Pichert. (1978). Recall of previously unrecallable information following a shift in perspective. Journal of Verbal Learning and Verbal Behavior, 17(1), 1-12.

Ariely, D. (2008). Predictably Irrational: The Hidden Forces That Shape Our Decisions. New York: Harper Collins.

Arkes, H., and J. Kajdasz. (2011). Intuitive theories of behavior as inputs to analysis. In National Research Council, Intelligence Analysis: Behavioral and Social Scientific Foundations. Committee on Behavioral and Social Science Research to Improve Intelligence Analysis for National Security, B. Fischhoff and C. Chauvin, eds. Board on Behavioral, Cognitive, and Sensory Sciences, Division of Behavioral and Social Sciences and Education. Washington, DC: The National Academies Press.

Baron, J.S., and D.M. Kreps. (1999). Strategic Human Resources: Frameworks for General Managers. New York: John Wiley and Sons.

Bobrow, D.G., and D.A. Norman. (1975). Some principles of memory schemata. In D.G. Bobrow, ed., Representations and Understanding (pp. 131-149). New York: Academic Press.

Bonacich, P. (1990). Communication dilemmas in social networks: An experimental study. American Sociological Review, 55(3), 448-459.

Burt, R.S. (1992). Structural Holes: The Social Structure of Competition. Cambridge, MA: Harvard University Press.

Carnevale, P.J., and T.M. Probst. (1998). Social values and social conflict in creating problem solving and categorization. Journal of Personality and Social Psychology, 74(5), 1,300-1,309.

Cheng, C-Y., J. Sanchez-Burks, and F. Lee. (2008). Connecting the dots within: Creative performance and identity integration. Psychological Science, 19(11), 1,178-1,184.

Dawes, R.M. (1994). Behavioral decision making and judgment. In G. Gilbert, S.T. Fiske, and R. Lindzey, eds., The Handbook of Social Psychology. 4th ed. (pp. 497-548). New York: Oxford University Press.

Dearborn, D.C., and H.A. Simon. (1958). Selective perception: A note on the departmental identification of executives. Sociometry, 21(2), 140-144.

DeDreu, C.K.W., and L.R. Weingart. (2003). Task versus relationship conflict, team performance, and team member satisfaction: A meta-analysis. Journal of Applied Psychology, 88(4), 741-749.

Dougherty, D. (1992). Interpretive barriers to successful product innovation in large firms. Organization Science, 3(2), 179-202.

Eisenhardt, K.M., J.L. Kahwajy, and J.L. Bourgeois III. (1997). How management teams can have a good fight. Harvard Business Review, 75(4), 77-85.

Fischhoff, B., P. Slovic, and S. Lichtenstein. (1978). Fault trees: Sensitivity of estimated failure probabilities to problem representation. Journal of Experimental Psychology: Human Perception and Performance, 4(2), 330-344.

Fiske, S.T., and S.E. Taylor. (1991). Social Cognition. 2nd ed. New York: McGraw-Hill.

Granovetter, M.S. (1973). The strength of weak ties. American Journal of Sociology, 78(6), 1,360-1,380.

Griffin, D.W., and L. Ross. (1991). Subjective construal, social inference, and human misunderstanding. In M.P. Zanna, ed., Advances in Experimental Social Psychology: Vol. 24 (pp. 319-359). San Diego, CA: Academic Press.

Guetzkow, H., and J. Gyr. (1954). An analysis of conflict in decision making groups. Human Relations, 7, 367-382.

Hastie, R. (2011). Group processes in intelligence analysis. In National Research Council, Intelligence Analysis: Behavioral and Social Scientific Foundations. Committee on Behavioral and Social Science Research to Improve Intelligence Analysis for National Security, B. Fischhoff and C. Chauvin, eds. Board on Behavioral, Cognitive, and Sensory Sciences, Division of Behavioral and Social Sciences and Education. Washington, DC: The National Academies Press.

Hayes, J.R., and H.A. Simon. (1974). Understanding written problem instructions. In L.W. Gregg, ed., Cognition and Knowledge (pp. 167-200). Hillsdale, NJ: Lawrence Erlbaum.

Hutchins, E. (1991). The social organization of distributed cognition. In L.B. Resnick, J.M. Levine, and S.D. Teasley, eds., Perspectives on Socially Shared Cognition (pp. 283-307). Washington, DC: American Psychological Association.

Krackhardt, D., and D.J. Brass. (1994). Intraorganizational networks: The micro side. In S. Wasserman and J. Galaskiewicz, eds., Advances in Social Network Analysis: Research in the Social and Behavioral Sciences (pp. 207-229). Thousand Oaks, CA: Sage Publications, Inc.

Kramer, R. (2005). A failure to communicate: 9/11 and the tragedy of the informational commons. International Public Management Journal, 8(3), 397-416.

Leung, A.K., W.W. Maddux, A.D. Galinsky, and C. Chiu. (2008). Multicultural experience enhances creativity: The when and how? American Psychologist, 63(3), 169-181.

Levesque, L.L., J.M. Wilson, and D.R. Wholey. (2001). Cognitive divergence and shared mental models in software development project teams. Journal of Organizational Behavior, 22(SI), 135-144.

Liang, D.W., R.L. Moreland, and L. Argote. (1995). Group versus individual training and group performance: The mediating role of transactive memory. Personality and Social Psychology Bulletin, 21(4), 384-393.

Lichtenstein, S., B. Fischhoff, and L.D. Phillips. (1982). Calibration of probabilities: State of the art to 1980. In D. Kahneman, P. Slovic, and A. Tversky, eds., Judgment Under Uncertainty: Heuristics and Biases (pp. 306-334). New York: Cambridge University Press.

Nemeth, C.J. (1986). Differential contribution of majority and minority influence processes. Psychological Review, 93(1), 10-20.

Newell, A., and H.A. Simon. (1972). Human Problem Solving. Englewood Cliffs, NJ: Prentice Hall.

March, J. (2010). The Ambiguities of Experience. Ithaca, NY: Cornell University Press.

Morgan, J., and R. Wang. (2010). Tournaments for ideas. California Management Review, 52(2), 77-97.

Moreland, R.L., and L. Myaskovsky. (2000). Exploring the performance benefits of group training: Transactive memory or improved communication? Organizational Behavior and Human Decision Processes, 82(1), 117-133.

Office of the Director of National Intelligence. (2007). Preparing Intelligence to Meet the Intelligence Community’s “Responsibility to Provide.” ICPM 2007-200-2. Office of the Director of National Intelligence, Intelligence Community Policy Memorandum Number 2007-200-2. Available: http://www.dni.gov/electronic_reading_room/ICPM%202007-200-2%20Responsibility%20to%20Provide.pdf [April 2010].

O’Connor, J., E.J. Bienenstock, R.O. Briggs, C. Dodd, C. Hunt, K. Kiernan, J. McIntyre, R. Pherson, and T. Rieger, eds. (2009). Collaboration in the National Security Arena: Myths and Reality—What Science and Experience Can Contribute to Its Success. Multi-Agency/Multi-Disciplinary White Paper. Available: http://www.usna.edu/Users/math/wdj/teach/CollaborationWhitePaperJune2009.pdf [April 2010].

Ohlsson, S. (1992). Information processing explanations of insight and related phenomena. In M. Keane and K. Gilhooly, eds., Advances in the Psychology of Thinking (Vol. 1, pp. 1-44). London, UK: Harvester-Wheatsheaf.

Sarasvathy, S.D. (2001). Effectual Reasoning in Entrepreneurial Decision Making: Existence and Bounds. Paper presented at the 2001 annual meeting of the Academy of Management, Washington, DC. Available: http://www.effectuation.org/Abstracts/AOM01.htm [October 2010]. Darden Graduate School of Business, University of Virginia.

Shane, S. (2000). Prior knowledge and the discovery of entrepreneurial opportunities. Organization Science, 11(4), 448-469.

Sharpe, P., and T. Keelin. (1998). How SmithKline Beecham makes better resource-allocation decisions. Harvard Business Review, 76(2), 45.

Simon, H.A. (1979). Models of Thought. New Haven, CT: Yale University Press.

Tetlock, P.E. (2005). Expert Political Judgment: How Good Is It? How Can We Know? Princeton, NJ: Princeton University Press.

Tetlock, P.E., and B. Mellers. (2011). Structuring accountability systems in organizations: Key trade-offs and critical unknowns. In National Research Council, Intelligence Analysis: Behavioral and Social Scientific Foundations. Committee on Behavioral and Social Science Research to Improve Intelligence Analysis for National Security, B. Fischhoff and C. Chauvin, eds. Board on Behavioral, Cognitive, and Sensory Sciences, Division of Behavioral and Social Sciences and Education. Washington, DC: The National Academies Press.

Tinsley, C. (2011). Social categorization and intergroup dynamics. In National Research Council, Intelligence Analysis: Behavioral and Social Scientific Foundations. Committee on Behavioral and Social Science Research to Improve Intelligence Analysis for National Security, B. Fischhoff and C. Chauvin, eds. Board on Behavioral, Cognitive, and Sensory Sciences, Division of Behavioral and Social Sciences and Education. Washington, DC: The National Academies Press.

Wegner, D.M. (1986). Transactive memory: A contemporary analysis of the group mind. In B. Mullen and G.R. Goethals, eds., Theories of Group Behavior (pp. 185-208). New York: Springer-Verlag.

Wegner, D.M., T. Giuliano, and P. Hertel. (1985). Cognitive interdependence in close relationships. In W.J. Ickes, ed., Compatible and Incompatible Relationships (pp. 253-276). New York: Springer-Verlag.

Williams, K.Y., and C.A.I. O’Reilly. (1998). Demography and diversity in organizations: A review of 40 years of research. Research in Organizational Behavior, 21, 77-140.

Wohlstetter, R. (1962). Pearl Harbor: Warning and Decision. Stanford, CA: Stanford University Press.