Cost and Technical Evaluation of Priority Missions

BACKGROUND

Concerns have been voiced for some time about the accuracy of the mission cost estimates used in past decadal studies. A National Research Council (NRC) report published in 2006 concluded that “major missions in space and Earth science are being executed at costs well in excess of the costs estimated at the time when the missions were recommended in the National Research Council’s decadal surveys for their disciplines. Consequently, the orderly planning process that has served the space and Earth science communities well has been disrupted, and balance among large, medium, and small missions has been difficult to maintain.”1 In response to this concern, the same report recommended that “NASA should undertake independent, systematic, and comprehensive evaluations of the cost-to-complete of each of its space and Earth science missions that are under development, for the purpose of determining the adequacy of budget and schedule.”2

An extended discussion of cost estimates and of the technology readiness of candidate missions took place during a subsequent NRC workshop concerning lessons learned from past decadal surveys. Workshop participants found that cost and technology readiness evaluations that were conducted independently of NASA estimates would add value to the surveys. They also suggested that uniform cost-estimating methods should be used within a given survey to facilitate cost comparisons among initiatives.3

With this guidance in hand, NASA called for an independent evaluation of cost and technology readiness in the statement of task for the NRC assessment of the agency’s Beyond Einstein program.4 Finally, in an act codifying the decadal surveys, Congress mandated that the NRC “include independent estimates of the life cycle costs and technical readiness of missions assessed in the decadal survey wherever possible.”5 Therefore, the statements of task for the most recent astronomy decadal survey,6 for this study (see Appendix A), and for the heliophysics decadal survey currently in progress all call for independent cost and technical evaluations of recommended initiatives.

THE CHALLENGE OF COST, SCHEDULE, AND TECHNICAL ESTIMATES

The mission concepts used in decadal surveys are typically in preliminary stages of development. In NASA parlance these are “pre-Phase A concepts.” Experience shows that the cost of a space mission is usually not well understood until its preliminary design review (PDR) has been completed. Even then, unexpected growth of mass, cost, and schedule can occur during the later phases of design and development. Costing is further complicated by the fact that some pre-Phase A concepts are more mature than others because more resources have been devoted to their formulation. Accordingly, ensuring that a costing exercise is level and fair requires that the relative maturity of concepts be taken into account.

Several different types of cost, schedule, and technical risk evaluations are used when discussing spacecraft missions. The best known are the so-called independent cost estimates (ICEs) and NASA’s technical, management, and cost (TMC) estimates. Less well known is the cost and technical evaluation (CATE) process. Each has its own strengths and weaknesses (Table C.1).

ICEs are typically done late in the life cycle of a project after it has matured. ICEs often do not consider certain aspects of cost growth associated with design evolution in the earliest phases of a project. The objective of the CATE process is to perform a cost and technical risk analysis for a set of concepts that may have a broad range of maturity, and to ensure that the analysis is consistent, fair, and informed by historical data. Typically, a concept evaluated using the CATE process is early in its life cycle and therefore likely to undergo significant subsequent design changes. Historically, such changes have resulted in cost growth. Therefore, a robust process is required that fairly treats a concept of low maturity relative to one that has undergone several iterations and review. CATEs take into account several components of risk assessment (see Table C.1).

Because the CATE is best suited to the comparative evaluation of a family of pre-Phase A concepts, it is the methodology used in this decadal survey.

OVERVIEW OF THE CATE PROCESS

The NRC engaged the services of the Aerospace Corporation to perform independent CATEs of mission concepts identified by the committee’s steering group during this study. Aerospace’s CATE team consisted of technical, cost, and schedule experts.

The committee’s five panels identified a total of 26 missions (see the list in Appendix G) that could address key science questions within their respective purviews. To ensure that the mission concepts were sufficiently mature for subsequent evaluation by the CATE team, the committee commissioned technical studies at leading design centers, including the Jet Propulsion Laboratory, Goddard Space Flight Center, the Johns Hopkins University Applied Physics Laboratory, and Marshall Space Flight Center. The committee’s steering group selected concepts to be studied from among those recommended by the panels. One or more “science champions” drawn from the ranks of the panels were attached to each of the centers’ study teams to ensure that the concepts remained true to the scientific and measurement objectives of the originating panel.

The design centers conducted two types of studies: rapid mission architecture (RMA) studies and full mission studies. RMA studies were conducted for immature but promising concepts, for which a broad array of mission types could be evaluated in order to choose the single most promising approach. The resulting “point design” could then be subjected to a full mission study along with more mature concepts. Not all missions receiving RMA studies were selected by the steering group for full mission studies, nor were all full mission studies selected for CATEs.

TABLE C.1 Similarities and Differences in Three Methodologies for Assessing the Technical, Cost, and Risk Characteristics of Spacecraft Missions

| | TMC | ICE | CATE |
|---|---|---|---|
| Is the approach used consistently to compare several concepts? | Yes | No | Yes |
| Against what is concept cost evaluated? | Cost cap | Project budget | Budget wedge |
| At what stage of concept maturity is the approach applied? | Phase A-B | Phase B-D | Pre-Phase A |
| Does the evaluation process include: | | | |
| Quantified schedule growth cost threat? | No | Typically | Yes |
| Quantified design growth cost threat? | No | No | Yes |
| Cost threat for increase in launch vehicle capability? | No | No | Yes |
| Independent estimates for non-U.S. contributions? | No | No | Yes |
| Reconciliation performed with project team? | No | Yes | No |
| Technical and cost risk rating (low, medium, high)? | Yes | No | Yes |

NOTE: TMC, technical, management, and cost; ICE, independent cost estimate; CATE, cost and technical evaluation.

Before concepts were submitted to the CATE contractor, panel science champions led extensive trade-off evaluations to make an initial determination of the science return that could be obtained for the initial cost estimated by the relevant design center. These figures were understood to be rough order-of-magnitude estimates. In some cases, a down-selection was made between two planetary bodies (e.g., Uranus and Neptune), and more detailed work was then performed before the concept was submitted to the CATE contractor for evaluation.

A key aspect of the CATE process was the multiple interactions between the committee and the CATE contractor. For at least four concepts, the committee defined alternative solutions that would lower cost and risk while maintaining science return, and redirected the CATE contractor to evaluate them. These iterations were kept confidential to the committee; given the experience of the committee members and the experience and knowledge of the CATE contractor, NASA participation in them was deemed unnecessary. The committee believes that this iterative process ensured a tighter correspondence between science priorities and prioritized missions.

The 13 most promising full mission studies were identified by the steering group and passed to the CATE team for detailed technical, cost, and schedule assessments. These “priority missions” are listed below in the section titled “CATE Results for Priority Missions.” When follow-up was required, the CATE team worked with the appropriate science champion to request additional information. The members of the CATE team worked together to produce an initial assessment of technical risk and initial cost and schedule estimates for each of the 13 priority missions. (Two of these 13 missions have a two-part assessment: Mars Astrobiology Explorer-Cacher and Mars Astrobiology Explorer-Cacher Descope, and Uranus Orbiter and Probe with Solar-Electric Propulsion [SEP] and Uranus Orbiter and Probe with No SEP.) The CATE team strove, to the greatest extent possible, to be consistent across all concepts presented. In particular, recognizing that the design center that studied a mission might not be the organization that ultimately implements it, the CATE process made no assumption about which organization would implement each mission.

Following an initial internal review within the Aerospace Corporation to ensure that the 13 assessments were mutually consistent, the results were presented to the committee. The committee provided feedback to the CATE team, which, in turn, incorporated this feedback into revised technical, cost, and schedule risk assessments.

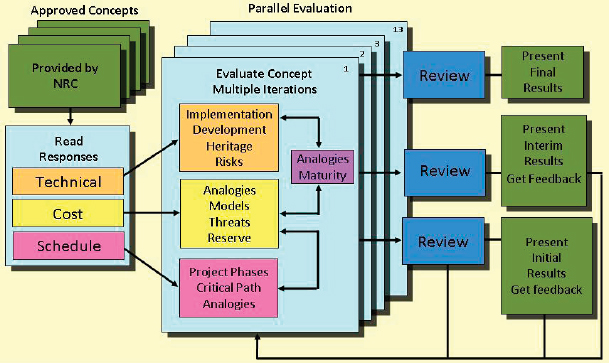

The CATE team’s approach (Figure C.1) is based on the following principles:

• Use multiple methods and databases relating to past space systems so that no one model or database biases the results. The CATE team used proprietary Aerospace Corporation models (e.g., the Small Satellite Cost Model) and space-industry standards (e.g., the NASA/Air Force Cost Model [NAFCOM]).

• Use analogy-based estimating: tie cost and schedule estimates to NASA systems that have already been built and thus have a known cost and schedule.

• Use both system-level estimates and estimates built up to the system level by appropriately summing subsystem data, so as not to underestimate system cost and complexity.

• Use tools such as Complexity Based Risk Assessment (CoBRA) to cross-check cost and schedule estimates for internal consistency and risk assessment.

• Quantify, in an integrated fashion, the total cost threats from schedule growth, from maturing technology, and from mass growth that requires a larger, more costly launch vehicle.

In summary, an analogy-based methodology ties the estimated costs of future systems to the known costs of systems that have already been built; that is, it provides an independent estimate of the cost and complexity of new concepts anchored to previously built hardware. Using multiple methods, such as analogies and standard cost models, ensures that no one model or database biases the estimate. Combining system-level estimates with a total cost obtained by statistically summing the costs of all individual work breakdown structure (WBS) elements ensures that no elements are omitted and that system-level complexity is properly represented in the cost estimate.
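As a rough illustration of analogy-based estimating, the sketch below scales the known costs of several analog missions to a new concept's mass with an assumed power-law relationship and reports the mean and range across analogs, so that no single analog dominates. The analog records, the use of mass as the only scaling parameter, and the exponent of 0.7 are all hypothetical; they are not the Aerospace Corporation models or databases.

```python
from statistics import mean

# Hypothetical analog missions: (name, dry mass in kg, actual cost in $M).
# Placeholder values, not data from any real cost database.
ANALOGS = [
    ("analog_A", 800.0, 450.0),
    ("analog_B", 1200.0, 610.0),
    ("analog_C", 950.0, 520.0),
]

# Assumed cost-versus-mass scaling exponent; real cost-estimating
# relationships are fit to historical data and use more than mass.
SCALING_EXPONENT = 0.7


def scaled_costs(new_mass_kg, analogs=ANALOGS, exponent=SCALING_EXPONENT):
    """Scale each analog's known cost to the new concept's mass."""
    return [cost * (new_mass_kg / mass) ** exponent for _, mass, cost in analogs]


def analogy_summary(new_mass_kg, analogs=ANALOGS):
    """Mean and spread of the per-analog estimates for one new concept."""
    costs = scaled_costs(new_mass_kg, analogs)
    return {"mean": mean(costs), "low": min(costs), "high": max(costs)}


if __name__ == "__main__":
    print(analogy_summary(1000.0))
```

A wide low-to-high spread is itself informative: it signals that the analogs disagree and that the estimate should lean on additional models.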

FIGURE C.1 Schematic illustration of the flow of the Aerospace Corporation’s cost and technical evaluation (CATE) process. The blocks in green indicate interaction by the CATE team with the committee.

The assessments of technology, cost, and schedule are inextricably intertwined. However, it is easier to describe each element of the overall assessment (i.e., technical, schedule, and cost) separately, noting in each instance its linkages to the overall CATE assessment.

Technical Assessment

The evaluation of technical risk and maturity in the CATE process focuses on the identification of the technical risks most important to achieving the required mission performance and stated science objectives. The assessment is limited to top-level technical maturity and risk discussions. Deviations from the current state of the art as well as system complexity, operational complexity, and integration concerns associated with the use of heritage components are identified. Technical maturity and the need for specific technology development, including readiness levels of key technologies and hardware, are evaluated by the CATE technical subgroup. During the assessment of the technical risk areas and concept maturity, the technical subgroup interacted with the cost and schedule subgroups so that technical risks could be translated into schedule and cost risk.

The CATE technical evaluation is limited to high-level technical risks that potentially impact schedule and cost. The CATE process places no cost cap on mission concepts, and hence risk as a function of cost is not considered. Concept maturity and technical risk are evaluated in terms of the ability of a concept to meet performance goals within proposed launch dates with adequate mass, power, and performance margins.

CATEs also evaluate proposed mass and power contingencies with respect to technical maturity. If the CATE technical subgroup concludes that these contingencies are insufficient, the contingencies are increased on the basis of historical data on mass and power growth. In some cases, growth in mass and power requirements necessitates a larger launch vehicle to execute the scientific mission. The assessed increases in required mass and power, and any resulting need for a more capable launch vehicle, are provided to the CATE cost and schedule experts for incorporation into their estimates.
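To make the path from mass growth to a launch vehicle cost threat concrete, here is a small sketch under stated assumptions: the project's carried mass contingency is raised to a historical allowance for its maturity level if it is lower, and if the adjusted mass no longer fits the baseline launch vehicle, the price difference to the smallest vehicle that does fit is counted as a cost threat. The growth allowances, vehicle names, capacities, and prices are hypothetical placeholders; power contingencies could be treated the same way.

```python
# Hypothetical historical mass-growth allowances by design maturity, as a
# fraction of the current best estimate (CBE). Placeholder values only.
GROWTH_BY_MATURITY = {
    "pre_phase_a": 0.30,
    "phase_a": 0.25,
    "phase_b": 0.15,
}

# Hypothetical launch vehicles: (name, capacity to target in kg, cost in $M),
# listed in order of increasing capacity.
LAUNCH_VEHICLES = [
    ("LV-small", 2000.0, 150.0),
    ("LV-medium", 3500.0, 200.0),
    ("LV-heavy", 6000.0, 280.0),
]


def required_mass(cbe_kg, carried_contingency, maturity):
    """Apply the larger of the project's contingency and the historical allowance."""
    allowance = max(carried_contingency, GROWTH_BY_MATURITY[maturity])
    return cbe_kg * (1.0 + allowance)


def smallest_vehicle(mass_kg, vehicles=LAUNCH_VEHICLES):
    """Return the first (smallest) listed vehicle whose capacity covers the mass."""
    for vehicle in vehicles:
        if vehicle[1] >= mass_kg:
            return vehicle
    raise ValueError("no listed launch vehicle can carry this mass")


def launch_vehicle_threat(cbe_kg, carried_contingency, maturity, baseline_vehicle):
    """Cost threat ($M) if the adjusted mass forces a move above the baseline vehicle."""
    needed = smallest_vehicle(required_mass(cbe_kg, carried_contingency, maturity))
    return max(0.0, needed[2] - baseline_vehicle[2])


if __name__ == "__main__":
    # A 2,800 kg concept carrying 15% contingency fits LV-medium as proposed,
    # but a pre-Phase A growth allowance of 30% pushes it onto LV-heavy.
    print(launch_vehicle_threat(2800.0, 0.15, "pre_phase_a", LAUNCH_VEHICLES[1]))
```

In this example the threat disappears for a Phase B concept, which illustrates why a less mature concept carries a larger cost threat for the same current best estimate.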

Schedule Estimates

To aid in the assessment of concept risk, particularly risk with respect to the proposed mission development and execution timelines, independent schedule estimates are incorporated into the CATE cost estimate. Like the CATE assessment of cost risk, schedule risk is derived from analogies in the historical NASA record. Historical data from past analogous NASA missions, properly adjusted, are used to gauge the realism of the proposed durations of the development phases. Similarly, the time to critical mission reviews (e.g., PDR and critical design review [CDR]) and the time required for integration and testing are evaluated for each mission concept and contrasted with appropriate historical experience. Statistical analysis is used to create a schedule probability “S curve,” that is, a curve of the cumulative probability that the development time will not exceed a given value, plotted as a function of that value. The overall schedule, as proposed, is then adjusted with the historical data in mind.

The independent schedule estimates are not tied to specific launch windows, because the start dates for the concepts can be adjusted and because launch dates can usually be met by applying additional resources (e.g., double-shifting). If the schedule estimate predicts a launch date between launch windows, the cost of these additional resources is included in the independent cost estimate; for concepts at this early stage of formulation, extending the schedule to accommodate a later launch window is not warranted. Costs incurred because the original schedule cannot be met are then added to the total cost of the mission. The committee requested that the CATE team use the 70th percentile value in its schedule estimate, i.e., there is a 70 percent probability that the schedule will be shorter than indicated and a 30 percent probability that it will be longer.
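A minimal sketch of how a schedule S curve and its 70th percentile might be generated, assuming hypothetical proposed phase durations and a hypothetical list of historical actual-to-planned duration ratios. Each trial draws one ratio per phase from the historical list; the sorted trial totals trace the cumulative S curve. The phase names, durations, ratios, and the independent per-phase resampling are illustrative assumptions, not the CATE team's data or method.

```python
import random
from statistics import quantiles

# Hypothetical proposed durations (months) for each development segment.
PROPOSED_MONTHS = {
    "phase_b_to_pdr": 14.0,
    "pdr_to_cdr": 10.0,
    "cdr_to_ship": 20.0,
    "integration_and_test": 12.0,
}

# Hypothetical historical ratios of actual to planned duration for
# analogous missions, standing in for an adjusted analog database.
HISTORICAL_GROWTH = [0.95, 1.0, 1.05, 1.1, 1.15, 1.2, 1.3, 1.45]


def schedule_samples(proposed, ratios, trials=10_000, seed=7):
    """Resample one historical growth ratio per phase and sum the phases."""
    rng = random.Random(seed)
    totals = [
        sum(months * rng.choice(ratios) for months in proposed.values())
        for _ in range(trials)
    ]
    totals.sort()  # sorted totals, plotted against rank / trials, form the S curve
    return totals


def p70(samples):
    """70th percentile: quantiles(n=10) returns the 10%, 20%, ..., 90% cut points."""
    return quantiles(samples, n=10)[6]


if __name__ == "__main__":
    totals = schedule_samples(PROPOSED_MONTHS, HISTORICAL_GROWTH)
    print(f"proposed: {sum(PROPOSED_MONTHS.values()):.1f} months")
    print(f"70th percentile: {p70(totals):.1f} months")
```

Because most historical ratios exceed 1, the 70th percentile lands above the proposed duration; the difference is the schedule growth that the cost estimate must then price.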

Cost Appraisal

The primary goal of the CATE cost appraisal is to provide independent estimates (in fiscal year [FY] 2015 dollars) that can be used to prioritize various concepts within the context of the expected NASA budgetary constraints for the coming decade (see Appendix E). The CATE team developed high-level cost estimates based on the information provided by the various mission study teams, with a focus on treating all projects equally. To treat all concepts consistently, the CATE cost process allows for cost increases resulting from increased mass and power contingency, schedule growth, increased required launch vehicle capability, and other cost threats, depending on the maturity and specific risk assessment of each concept.

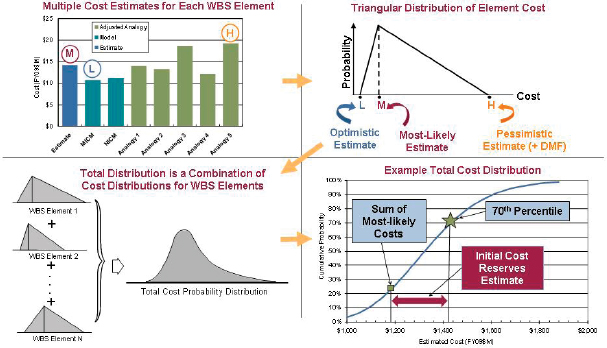

All cost appraisals in the CATE process are probabilistic and are based on the NASA historical record and documented project life-cycle growth studies. A traditional S curve of cumulative probability versus cost is provided for each concept, on which both the design center’s estimate and the CATE estimate at the committee-requested 70th percentile are marked.
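The following sketch shows one common way to build a cost S curve by statistically summing WBS element costs with Monte Carlo sampling, assuming a hypothetical set of WBS elements with right-skewed triangular (low, most likely, high) cost ranges. It samples the elements independently, which is a simplification; practical cost-risk analyses usually impose correlation between elements, which widens the curve. None of the numbers come from the CATE studies.

```python
import math
import random

# Hypothetical WBS elements: (low, most likely, high) cost in $M.
# Right-skewed ranges reflect the tendency of costs to grow rather than shrink.
WBS = {
    "instruments": (80.0, 100.0, 170.0),
    "spacecraft_bus": (200.0, 240.0, 360.0),
    "integration_and_test": (40.0, 50.0, 90.0),
    "mission_operations": (60.0, 70.0, 100.0),
}


def simulate_total_cost(wbs, trials=20_000, seed=1):
    """Monte Carlo sum of independently sampled WBS element costs, sorted."""
    rng = random.Random(seed)
    totals = []
    for _ in range(trials):
        # random.triangular takes its arguments in the order (low, high, mode).
        totals.append(sum(rng.triangular(lo, hi, mode) for lo, mode, hi in wbs.values()))
    totals.sort()
    return totals


def percentile(sorted_values, p):
    """Nearest-rank percentile of an already sorted list, with p in (0, 1]."""
    rank = math.ceil(p * len(sorted_values))
    return sorted_values[max(rank, 1) - 1]


def s_curve(sorted_values, points=(0.1, 0.3, 0.5, 0.7, 0.9)):
    """A few (cumulative probability, cost) pairs tracing the cost S curve."""
    return [(p, percentile(sorted_values, p)) for p in points]


if __name__ == "__main__":
    totals = simulate_total_cost(WBS)
    point_estimate = sum(mode for _, mode, _ in WBS.values())
    print(f"sum of most-likely costs: {point_estimate:.1f}")
    print(f"70th percentile cost: {percentile(totals, 0.70):.1f}")
```

With skewed element ranges, the sum of most-likely values falls well below the 70th percentile, which mirrors in miniature why a grassroots point estimate tends to sit low on the S curve.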

The focus of the CATE costing process is to estimate the cost of conceptual hardware (for example, instruments, spacecraft bus, and landers) using multiple analogies and cost models based on historical data. A probabilistic cost-risk analysis is employed to estimate appropriate cost reserves. To ensure consistency across the range of concepts, from those that are immature to those that are significantly more mature, the cost estimates are updated and adjusted with information from the CATE technical subgroup on mass and power contingencies and on any additional launch vehicle capability that may be required. Using the independent schedule estimates, costs are then adjusted with appropriate “burn rates” to reflect the impact of schedule changes.
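One simple way to express the burn-rate adjustment is sketched below under assumptions: each phase's extra months are priced at a hypothetical monthly burn rate for that phase, and phases that finish early are not credited. The phase names, rates, durations, and the no-credit rule are illustrative choices, not details of the CATE methodology.

```python
# Hypothetical monthly burn rates ($M per month) for each development phase.
BURN_RATE = {"phase_b": 4.0, "phase_c": 7.5, "phase_d": 9.0}


def schedule_growth_cost(planned_months, estimated_months, burn_rate=BURN_RATE):
    """Price each phase's extra months at that phase's burn rate.

    Phases estimated to finish early are not credited (an assumed
    conservative choice for this sketch).
    """
    total = 0.0
    for phase, rate in burn_rate.items():
        extra = estimated_months[phase] - planned_months[phase]
        total += max(0.0, extra) * rate
    return total


if __name__ == "__main__":
    planned = {"phase_b": 12.0, "phase_c": 18.0, "phase_d": 24.0}
    estimated = {"phase_b": 14.0, "phase_c": 21.0, "phase_d": 26.0}
    print(schedule_growth_cost(planned, estimated))  # 48.5
```

Pricing slips by phase rather than with a single average rate reflects that a month of delay late in development, when the full team is working, generally costs more than a month early on.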

Finally, the results are integrated, cross-checked with other independent cost- and schedule-estimating capabilities, and verified for consistency before being presented to the committee (Figure C.2).

Complexity-Based Risk Assessment Comparisons

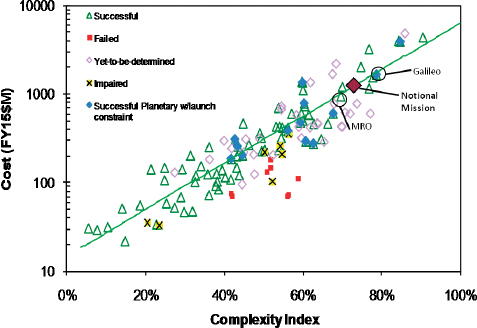

FIGURE C.2 Schematic illustration of the process of developing cost versus cumulative risk probability S curve for a notional mission. The terms MICM and NICM in the upper-left quadrant refer to NASA-developed instrument cost models.

FIGURE C.3 Complexity Based Risk Assessment cost analysis superimposing the cost of a notional mission on historical data of cost versus complexity. A similar analysis can be performed plotting a schedule against complexity.

The cost and schedule estimates for the committee’s priority missions are compared to historical experience by plotting cost and schedule as a function of the estimated complexity of each mission, resulting in a CoBRA plot (Figure C.3). Such an analysis shows where a notional mission lies relative to the historical relationship between complexity and cost for other missions. The expectation is that a proposed mission is more likely to succeed if its cost (and schedule) versus complexity point lies in the vicinity of the data for past successful missions.
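The idea behind a cost-versus-complexity comparison can be sketched as follows: fit a trend of log cost against a complexity index for past successful missions, then report how far a proposed mission's cost lies from that trend in units of the residual standard deviation. This is a simplified stand-in, not the actual CoBRA algorithm; the complexity index, the historical data, and the log-linear trend are all hypothetical.

```python
import math
from statistics import mean, stdev

# Hypothetical past successful missions: (complexity index in [0, 1], cost in $M).
HISTORY = [
    (0.20, 180.0), (0.30, 260.0), (0.40, 330.0), (0.50, 480.0),
    (0.60, 620.0), (0.70, 900.0), (0.80, 1300.0), (0.90, 2100.0),
]


def fit_log_cost(history):
    """Ordinary least-squares fit of ln(cost) = a + b * complexity."""
    xs = [c for c, _ in history]
    ys = [math.log(cost) for _, cost in history]
    x_bar, y_bar = mean(xs), mean(ys)
    b = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys)) / sum(
        (x - x_bar) ** 2 for x in xs
    )
    a = y_bar - b * x_bar
    residual_sd = stdev([y - (a + b * x) for x, y in zip(xs, ys)])
    return a, b, residual_sd


def cost_z_score(complexity, cost, history=HISTORY):
    """Residual standard deviations between a proposed cost and the historical trend."""
    a, b, sd = fit_log_cost(history)
    return (math.log(cost) - (a + b * complexity)) / sd


if __name__ == "__main__":
    # A strongly negative score flags a cost that looks low for its complexity.
    print(f"{cost_z_score(0.75, 600.0):.1f}")
```

A schedule check would follow the same pattern with development time in place of cost.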

CATE RESULTS FOR PRIORITY MISSIONS

Results for the priority missions selected by the committee and analyzed using the Aerospace Corporation’s CATE methodology are presented in Boxes C.1 through C.15 (in approximate order of the target object’s distance from the Sun). The full text of the studies for each of the missions is provided on the CD included with this report. Acronyms used in Boxes C.1 through C.15 are defined in Appendix F. Images of the missions were obtained from the respective mission studies. The missions are as follows:

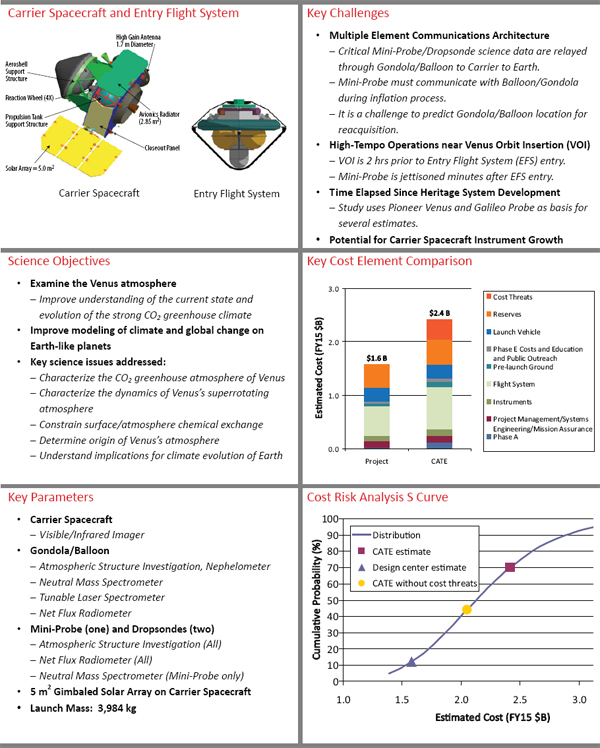

• Venus Climate Mission (Box C.1);

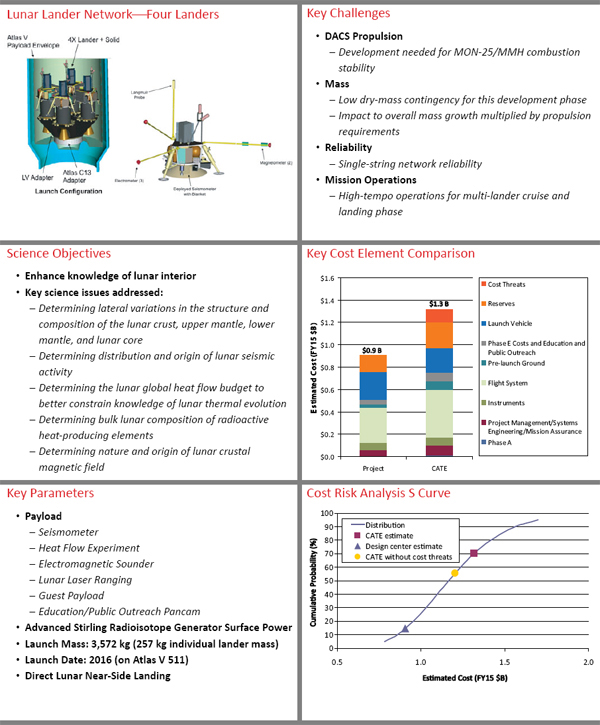

• Lunar Geophysical Network (Box C.2);

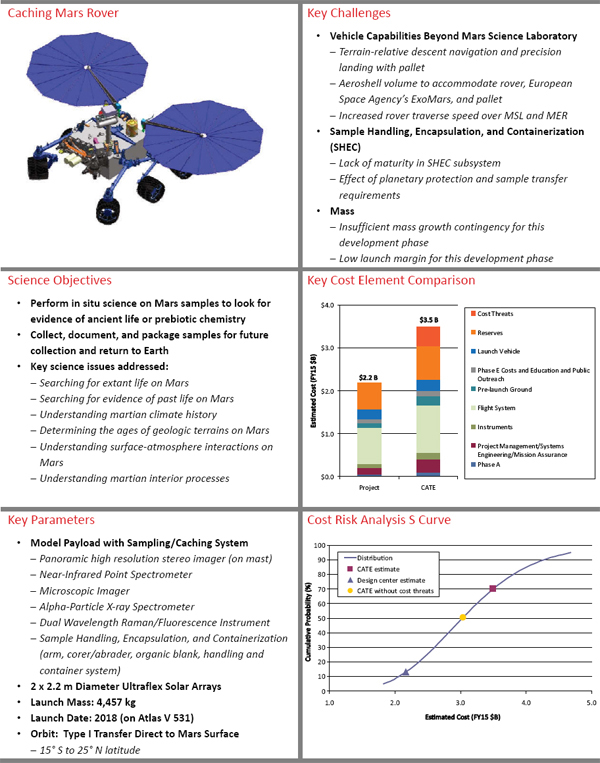

• Mars Astrobiology Explorer-Cacher (Box C.3);

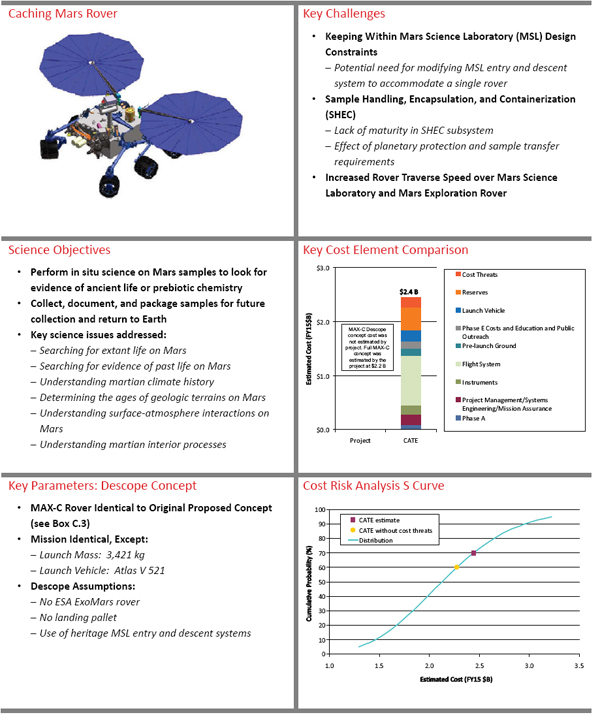

• Mars Astrobiology Explorer-Cacher Descope (Box C.4);

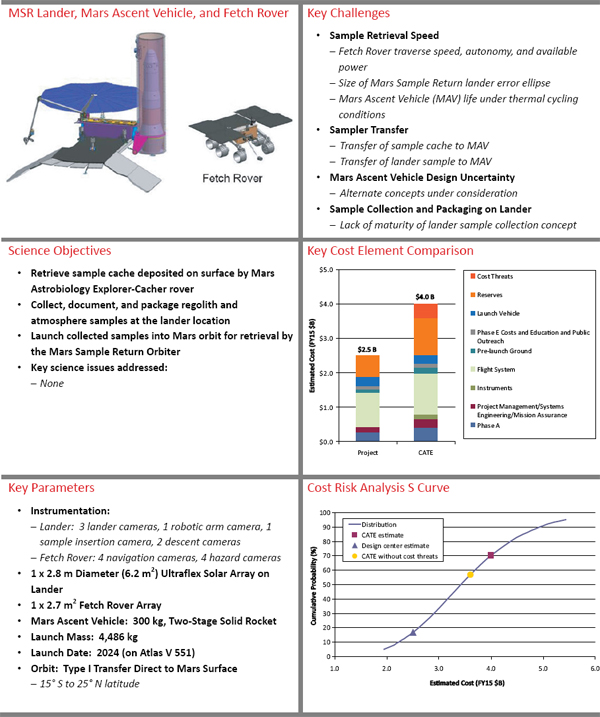

• Mars Sample Return Lander and Mars Ascent Vehicle (Box C.5);7

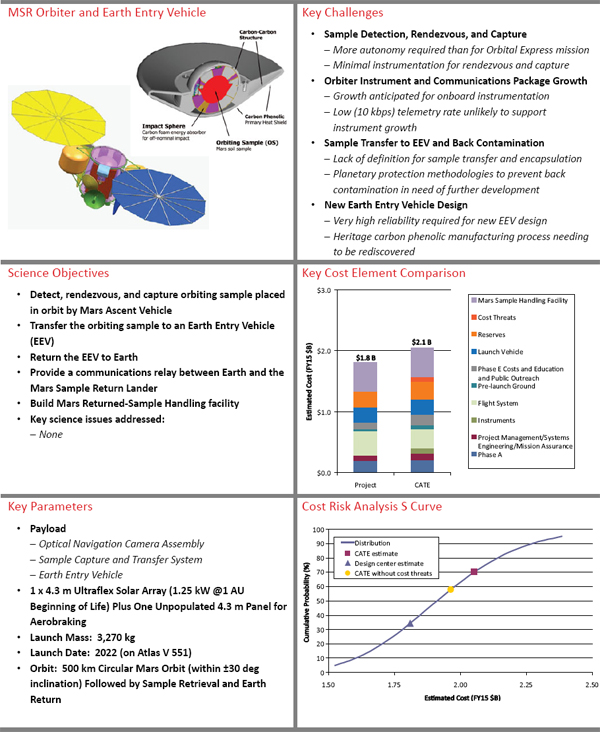

• Mars Sample Return Orbiter and Earth Entry Vehicle (Box C.6);8

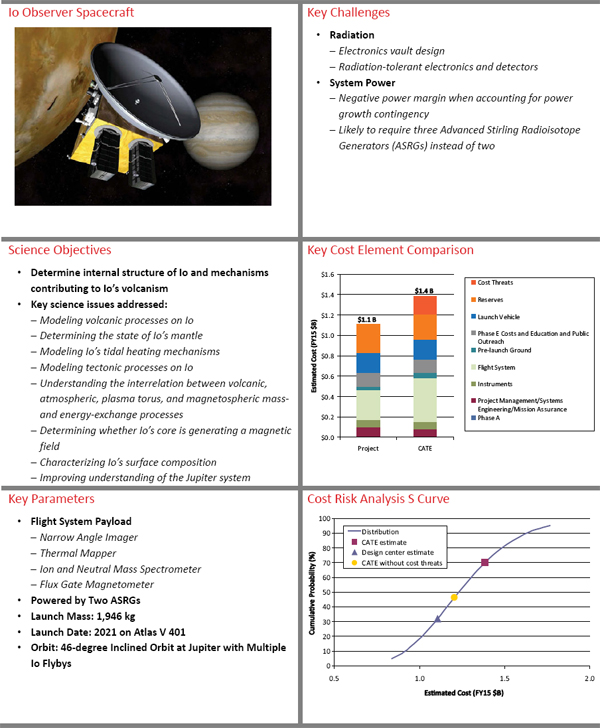

• Io Observer (Box C.7);

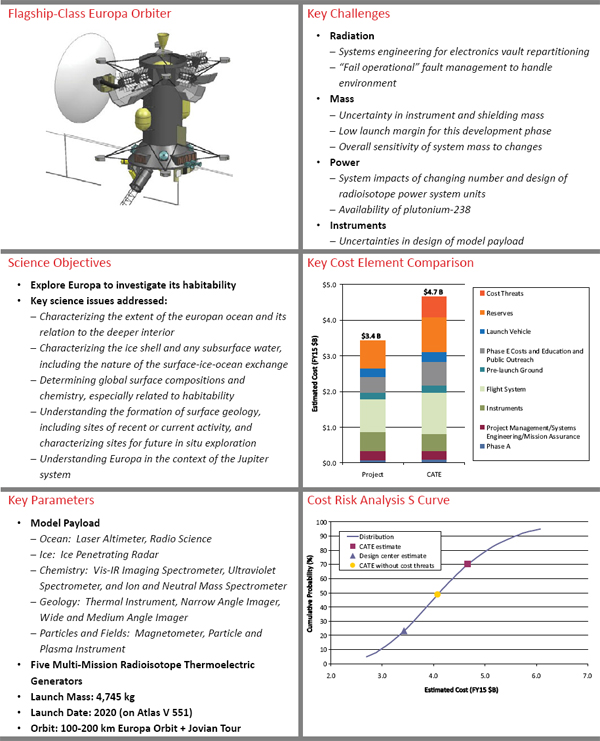

• Jupiter Europa Orbiter (Box C.8);

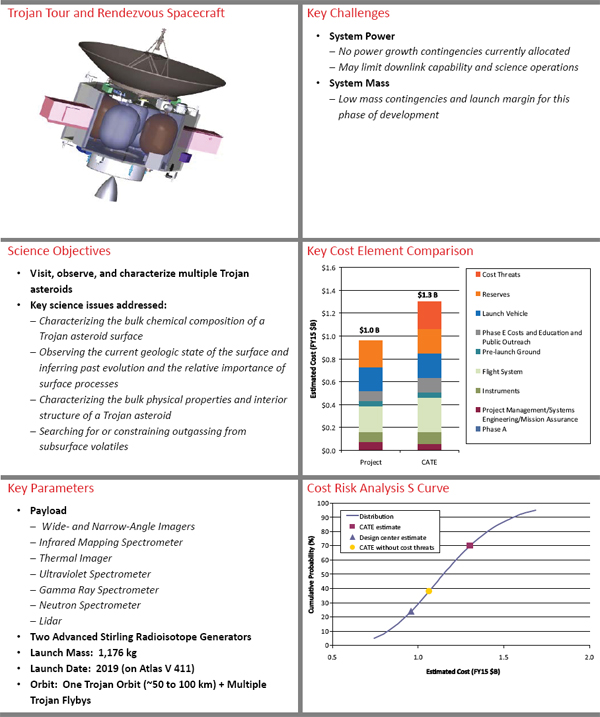

• Trojan Tour and Rendezvous (Box C.9);

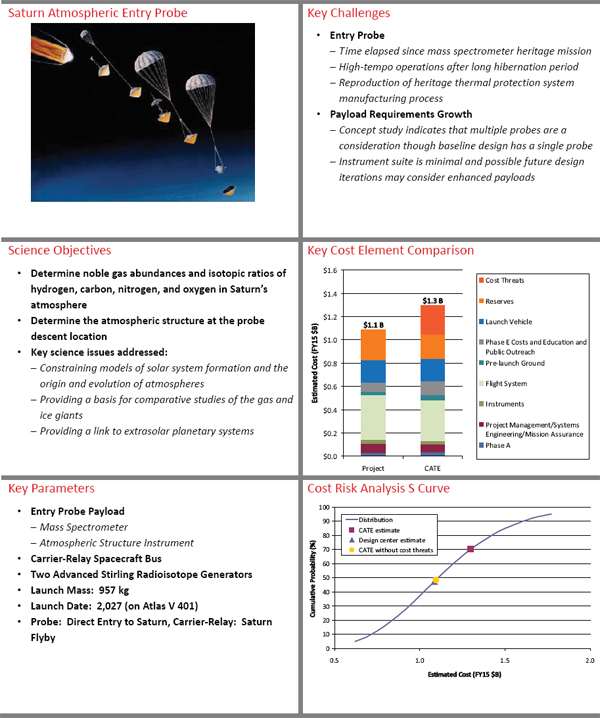

• Saturn Probe (Box C.10);

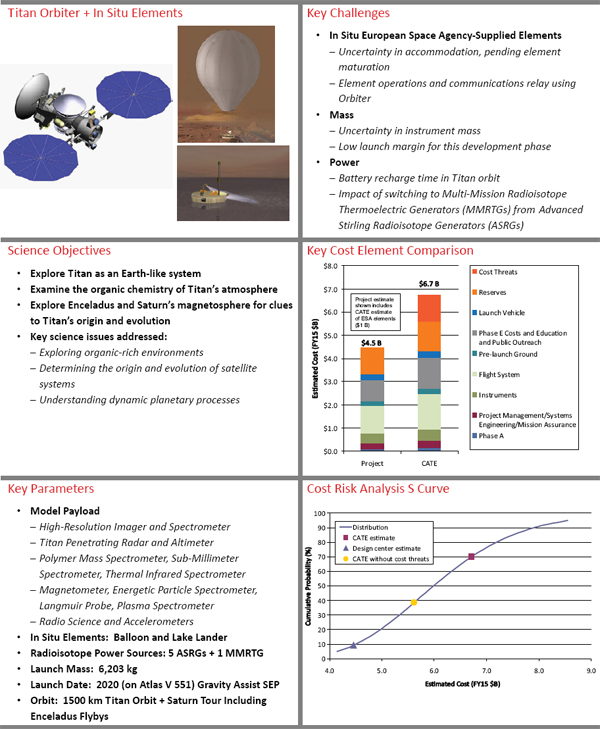

• Titan Saturn System Mission (Box C.11);

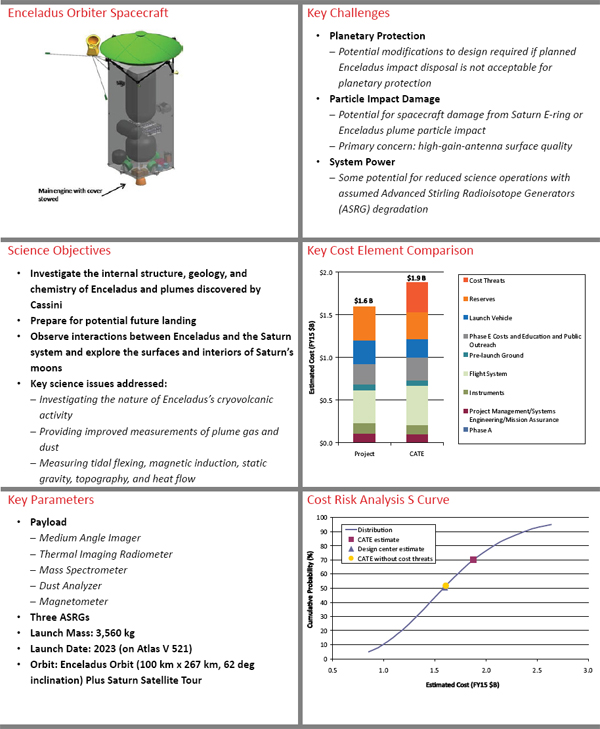

• Enceladus Orbiter (Box C.12);

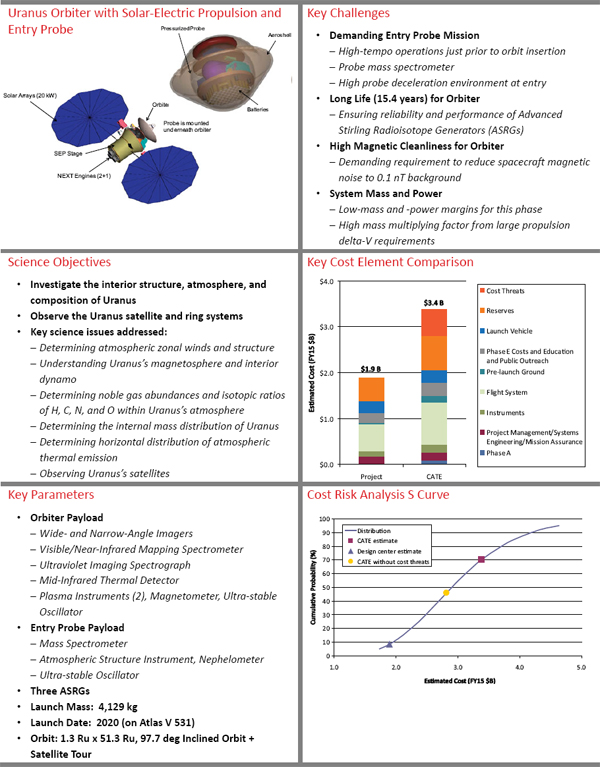

• Uranus Orbiter with Solar-Electric Propulsion and Probe (Box C.13);

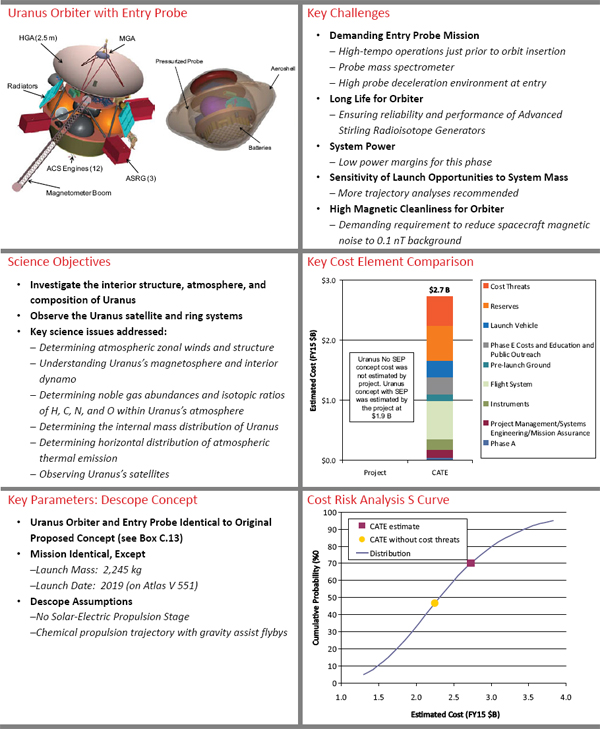

• Uranus Orbiter and Probe (No Solar-Electric Propulsion) (Box C.14); and

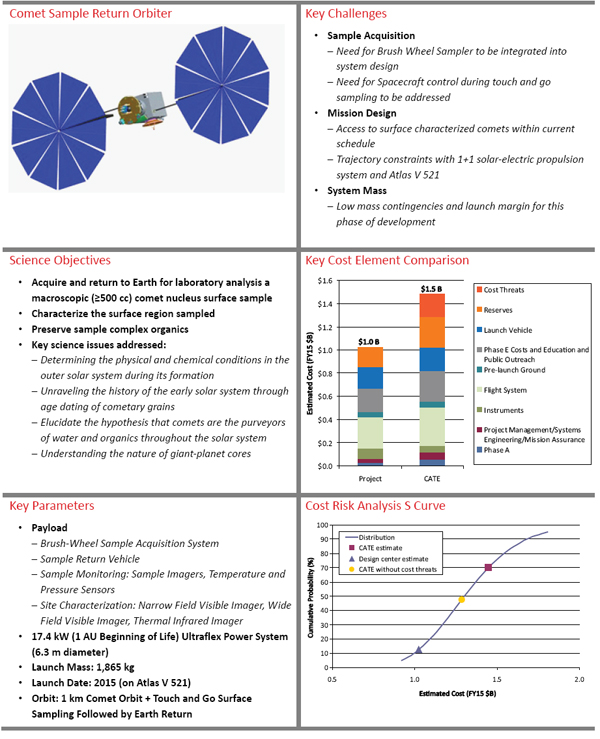

• Comet Surface Sample Return (Box C.15).

These missions were chosen by the committee on the basis of their strong science return and their potential technical readiness.

SUMMARY

Linked technical, cost, and schedule estimates were developed for each of the priority mission concepts selected by the committee. The use of historical databases, the evaluation of the technical risk, cost, and schedule histories of analogous space systems that have already flown, and the extensive interaction of technical, cost, and schedule experts with the proposing teams together provide a high degree of confidence that the resulting assessments are realistic and credible.

The CATE process produced mission cost estimates that are considerably higher than those provided by the design center study teams. The reason is that project-derived cost estimates are typically prepared using a bottom-up, or so-called grassroots, approach and, beyond standard contingencies, do not include the probability of costs incurred through necessary redesigns, schedule slips, or launch vehicle growth. In other words, project estimates typically do not account for the “unpleasant surprises” that historically occur in nearly all space mission developments.

CATEs include a probabilistic assessment of the reserves required if the concept achieves its mass and power within the project’s stated contingencies and on the project’s stated schedule. In addition to these reserves, CATEs include cost threats that quantify potential cost growth due to design maturity (mass and power growth) and schedule growth, as well as potential cost threats from a need for greater launch vehicle capability. The combination of these reserves and cost threats is often the main reason for the large differences between the CATE appraisal and the project estimate. Differences in the estimates of hardware costs (instruments and flight systems) can also contribute.
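The decomposition described above can be kept explicit with a small record type, sketched here as an assumption about how one might tabulate it rather than as the CATE team's tooling. The field names are hypothetical labels for the categories named in the text, and the example figures are invented for illustration.

```python
from dataclasses import asdict, dataclass


@dataclass
class CostBuildup:
    """Hypothetical breakdown ($M) of how a CATE-style appraisal can exceed a
    project estimate; field names are illustrative labels, not official terms."""

    project_estimate: float       # design center's grassroots estimate
    hardware_delta: float         # difference in instrument and flight system estimates
    reserves: float               # probabilistic reserves up to the 70th percentile
    design_growth_threat: float   # mass and power growth beyond stated contingencies
    schedule_threat: float        # schedule growth priced at phase burn rates
    launch_vehicle_threat: float  # cost of a more capable launch vehicle

    def total(self) -> float:
        return (self.project_estimate + self.hardware_delta + self.reserves
                + self.design_growth_threat + self.schedule_threat
                + self.launch_vehicle_threat)

    def shares_of_difference(self) -> dict:
        """Fraction of the gap above the project estimate due to each component."""
        parts = asdict(self)
        parts.pop("project_estimate")
        gap = sum(parts.values())
        return {name: (value / gap if gap else 0.0) for name, value in parts.items()}


if __name__ == "__main__":
    example = CostBuildup(1000.0, 60.0, 250.0, 90.0, 48.5, 80.0)
    print(example.total())  # 1528.5
    print(example.shares_of_difference())
```

In this invented example, reserves plus the three cost threats account for most of the gap, while the hardware difference is a smaller contributor, matching the qualitative pattern the text describes.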

As noted in several places in this report, the planetary program has for many years been plagued by cost estimates that, in retrospect, proved too optimistic. The resulting cost overruns can be highly disruptive to the program. The CATE process, which uses history as its guide, was designed to prevent this problem, and it is used in this decadal survey for that purpose.

NOTES AND REFERENCES

1. National Research Council. 2006. An Assessment of Balance in NASA’s Science Programs. The National Academies Press, Washington, D.C., p. 32.

2. National Research Council. 2006. An Assessment of Balance in NASA’s Science Programs. The National Academies Press, Washington, D.C., p. 33.

3. National Research Council. 2007. Decadal Science Strategy Surveys: Report of a Workshop. The National Academies Press, Washington, D.C., pp. 21-30.

4. National Research Council. 2007. NASA’s Beyond Einstein Program: An Architecture for Implementation. The National Academies Press, Washington, D.C., pp. 66-114.

5. Congress of the United States. 2008. National Aeronautics and Space Administration Authorization Act of 2008. Public Law 110-422, Section 1104b, October 15.

6. National Research Council. 2010. New Worlds, New Horizons in Astronomy and Astrophysics. The National Academies Press, Washington, D.C., Appendix C.

7. As described in Chapter 9, the Mars Sample Return Lander mission is expected to be carried out jointly with the European Space Agency (ESA). Because the details of this collaboration have not been negotiated yet, however, the cost calculated for this mission does not include any ESA contribution.

8. As described in Chapter 9, the Mars Sample Return Orbiter mission is expected to be carried out jointly with the European Space Agency (ESA). Because the details of this collaboration have not been negotiated yet, however, the cost calculated for this mission does not include any ESA contribution.