THE NATIONAL ACADEMIES

Advisers to the Nation on Science, Engineering, and Medicine

Board on Global Science and Technology
Chair: Dr. Ruth David, President and CEO, Analytic Services Inc.
Director: Dr. William O. Berry

500 Fifth Street, NW, Washington, DC 20001
Phone: 202 334 2424
Fax: 202 334 1667
www.nationalacademies.org

December 5, 2011

Melissa Flagg, Ph.D.

Director, Technical Intelligence

OSD AT&L/ASD(R&E)/PD

Pentagon Room 3C855A

Dear Dr. Flagg,

On behalf of the Committee on Going Global: Lessons Learned from International Meetings on Science and Technology, I am pleased to submit the following letter report that describes the 2009-2011 activities of the Board on Global Science and Technology and provides an initial characterization of the global S&T landscape that the Board can use as a roadmap to develop future activities.

BGST met five times between November 2009 and May 2011. Board meetings were devoted to (1) identifying national security implications of the globalization of S&T, (2) building a baseline understanding of current indicators for the U.S. posture with regard to the evolving global S&T landscape, and (3) developing a BGST engagement strategy.

This letter portion of the report summarizes activities of the board in its first year, and also describes some existing approaches to identifying and/or benchmarking emerging technologies globally. Appendices A.1-2 include the names and affiliations of the committee that prepared this letter report and the names and affiliations of the Board on Global Science and Technology. Appendix B acknowledges the reviewers of the letter report. Appendix C describes the two workshops that BGST held during its first program year. Appendices D.1-3 include three experimental examples by BGST members of a qualitative approach to benchmarking. The topics are metamaterials, advanced computing, and synthetic biology, respectively. Appendix E includes brief descriptions of programs that are part of the National Academies complex, with which BGST has cooperated.

The statement of task for this letter report follows:

The Board on Global Science and Technology (BGST) was established in 2009 to provide policymakers a sustained view of the impact of the globalization of science and technology (S&T) on U.S. national security and economic policies.1 An ad hoc committee of the board will produce a fast-track letter report that provides a characterization of the global S&T landscape that can be used as a roadmap2 to develop future activities. The committee will gather information from relevant work from throughout the National Academies, including relevant NRC, NAE and NAS reports, BGST meetings, and from the two workshops on emerging technologies convened by BGST in the past year: “Shifting Power: Smart Energy Grid 2020” (August 2010) and “Realizing the Value from ‘Big Data’ ” (February-March 2011).

The National Security Implications of the Globalization of S&T

For many decades, U.S. technological leadership provided a solid foundation for both national security and economic competitiveness. That foundation is eroding. It is increasingly apparent that future U.S. S&T investment strategy must be informed by a comprehensive understanding of the global S&T environment.

In its 1995 report Allocating Federal Funds for Science and Technology, the NRC recommended that:

“The President and Congress should ensure that the Federal Science and Technology budget is sufficient to allow the United States to achieve preeminence in a select number of fields and to perform at a world-class level in the other major fields.”3

The most recent Quadrennial Defense Review (QDR) observed that:

As global research and development (R&D) investment increases, it is proving increasingly difficult for the United States to maintain a competitive advantage across the entire spectrum of defense technologies…. The Department will consider the scope and potential benefits of an R&D strategy that prioritizes those areas where it is vital to maintain a technological advantage.4

Planning guidance issued by the Secretary of Defense in April 2011 identified the following priority S&T investment areas:

(1) Data to Decisions – science and applications to reduce the cycle time and manpower requirements for analysis and use of large data sets.

_______________________

1 The sponsor did not seek input on economic policy during the first program year. BGST plans to investigate economic issues related to the globalization of emerging technologies as it expands its sponsor base.

2 The experimental activities that the Board conducted during its first program year have shown the complexity of creating a roadmap of global S&T, even for creating the Board’s own activity plan. The Board has decided that it would be best to base any future roadmap on information that is collected from activities over a multi-year period.

3 National Research Council. 1995. Allocating Federal Funds for Science and Technology. Washington, DC: National Academies Press, p. 14.

4 U.S. Department of Defense. 2010. Quadrennial Defense Review Report. Washington, DC: Government Printing Office, pp. 94-95.

(2) Engineered Resilient Systems – engineering concepts, science, and design tools to protect against malicious compromise of weapon systems and to develop agile manufacturing for trusted and assured defense systems.

(3) Cyber Science and Technology – science & technology for efficient, effective cyber capabilities across the spectrum of joint operations.

(4) Electronic Warfare / Electronic Protection – new concepts and technology to protect systems and extend capabilities across the electro-magnetic spectrum.

(5) Counter Weapons of Mass Destruction (WMD) – advances in DoD’s ability to locate, secure, monitor, tag, track, interdict, eliminate and attribute WMD weapons and materials.

(6) Autonomy – science & technology to achieve autonomous systems that reliably and safely accomplish complex tasks, in all environments.

(7) Human Systems – science & technology to enhance human-machine interfaces to increase productivity and effectiveness across a broad range of missions.5

An investment strategy implied by the documents cited above requires an understanding of the global S&T landscape at a granular level not provided by current S&T indicators. It requires ongoing field/sub-field-level benchmarking to ascertain not only the current U.S. position relative to other nations but also to identify the trends and accelerators that help forecast future positions. To maintain technological advantage in the priority S&T investment areas, DoD must not only focus its investment portfolio, but also ensure that its investments are informed by an awareness of research around the world.

An effective and efficient DoD S&T investment strategy thus requires not only ongoing benchmarking at a granular level, but also sustained engagement and collaboration with other nations in order to more fully understand the nation-specific cultural factors that shape trends and accelerate (or impede) progress.

A Baseline Understanding of S&T Indicators

Science has always been a global endeavor but the 20th century birth of the Internet, which enabled a host of subsequent technological advances, created an inflection point in the evolution of the global S&T landscape. Today the 21st century scientific enterprise is more geographically distributed and more interdependent than ever before.6

Whereas advances in S&T have long fueled the pace of globalization, now globalization is accelerating the pace of advances in S&T. The physical borders that define national sovereignty pose minimal barriers to the flow of information or ideas and do little to impede the coalescence of global networks among inventors and innovators. A recent NRC report on the S&T strategies of six countries observed that “[t]he increased access to information has transformed the 1950s’ paradigm of ‘control and isolation’ of information for innovation control into the current one of ‘engagement and partnerships’ between innovators for innovation creation.”7

_______________________

5 Secretary of Defense Memorandum, April 19, 2011. OSD 02073-11. Available online at http://www.acq.osd.mil/chieftechnologist/publications/docs/OSD%2002073-11.pdf. Last accessed August 3, 2011.

6 See, for example, a report on the increasing globalization of science, The Royal Society. 2011. Knowledge, Networks and Nations: Global Scientific Collaboration in the 21st Century. (Hereafter Knowledge, Networks and Nations.) London, United Kingdom: Elsevier, p. 5.

While the globalization of S&T has become a commonplace notion, there does not yet exist a widely accepted set of standards for understanding its extent or significance. Traditional measures (e.g., Science and Engineering Indicators published by the National Science Board8) are limited in both timeliness and granularity, providing a retrospective picture derived from statistical analysis of available data. While valuable, such indicators provide little insight at the sub-field level within major disciplines and in emerging interdisciplinary research domains, both of which are of vital importance in informing research investment strategies.

Analysis of published papers in peer-reviewed journals provides some improvement in both granularity and timeliness, but, as noted in Knowledge, Networks and Nations, a report of the Royal Society, “[i]t is clear that bibliometric data alone do not fully capture the dynamics of the changing scientific landscape.”9 Contributing issues include the fact that “[r]egional, national and local journals in the non-English-speaking parts of the world are often not recognised and, as a consequence, journals, conferences and scientific papers from some countries are not well represented by abstracting services.”10 In addition, “grey literature”11 provides “potentially valuable contributions to the global stock of knowledge, but they are not accounted for in traditional assessments of research output.”12

Yet by and large, U.S. science policy is still based on traditional measures. These were very instructive for a world with a single S&T leader, but are insufficient for characterizing the growing global S&T environment. For example, an NRC committee that examined international benchmarking in 2000

acknowledged that quantitative indicators commonly used to assess research programs—for example, dollars spent, papers cited, and numbers of scientists supported—are useful information but noted that by themselves they are inadequate indicators of leadership, both because quantitative information is often difficult to obtain or compare across national borders and because it often illuminates only a portion of the research process.13

In this study, Experiments in International Benchmarking of US Research Fields, the committee employed a variety of assessment methods, including: “the ‘virtual congress’;14 citation analysis; journal-publication analysis; quantitative data analysis (for example, numbers of graduate students, degrees, and employment status); prize analysis; and international-congress speakers.”15 Experiments were conducted by assembling panels of experts to conduct assessments in specific fields and results were compared across the assessment methodologies. The analysis made clear that current leadership does not ensure sustained leadership.16 It therefore is important to understand the underlying factors that enable or impede research and development.

_______________________

7 National Research Council. 2010. S&T Strategies of Six Countries: Implications for the United States. (Hereafter S&T Strategies of Six Countries.) Washington, DC: National Academies Press, p. 1.

8 See www.nsf.gov/statistics/.

9 Knowledge, Networks and Nations, p. 23.

10 Ibid.

11 The term “grey literature” refers to non-peer-reviewed publications. Knowledge, Networks and Nations, p. 23.

12 Ibid.

13 National Research Council. 2000. Experiments in International Benchmarking of US Research Fields. (Hereafter International Benchmarking.) Washington, DC: National Academies Press, p. 6.

14 The “virtual congress” methodology involved asking panels to organize an imaginary international conference to which they would invite the “‘best of the best’” researchers from particular subfields and sub-subfields, from anywhere in the world. The purpose of the exercise was to depict the current and future position of the United States relative to other countries in a particular area of science. Ibid., p. 15.

In conducting the experiments described above, the International Benchmarking report noted the need for foreign and industry representation on the panels to ensure objectivity:

… at least one-third of panel members should be non-US researchers. An additional one-third should be a combination of researchers in industry and in related fields who use the results of research. In the experience of the panels, that mix of perspectives, including especially the representatives of research-intensive industries, was essential for understanding not only the scholarly and technical achievements of researchers, but also the broader importance of those achievements to social and economic objectives.17

In 2005 the NRC empanelled a committee to use the benchmarking approach described in the 2000 report to assess the position of U.S. chemical engineering relative to research in other regions of the world.18 Slightly fewer than 25% of the committee members were from outside the United States; they represented academia, industry and the federal government. The committee used a mix of quantitative and qualitative evaluation tools19 recommended in the earlier report and found that the mix yielded consistently robust results.20 Their Virtual World Congress, composed of 276 “organizers,”21 resulted in a proposed speakers list of 2,997 speakers (1,897, or 63%, of whom were American) and a list of “hot topics” from nine sub-fields of chemical engineering. Further experimentation with this methodology may yield a cost-effective way to maintain an ongoing and dynamic understanding of both the composition and geographical distribution of S&T leadership at a granular level.

The recognition of the limitations of traditional indicators has propelled BGST since its first meeting to seek new ways to characterize the global S&T landscape. In a November 2009 discussion with BGST, Dr. Robert Atkinson, President of the Information Technology and Innovation Foundation (ITIF), presented results from the ITIF’s February 2009 report, The Atlantic Century: Benchmarking EU and U.S. Innovation and Competitiveness, which showed U.S. innovation capacity and performance relative to other nations. The report considered the following 16 indicators:

_______________________

15 See International Benchmarking, pp. 14-17.

16 International Benchmarking. Attachment 2: Appendix B.

17 Ibid., p. 23.

18 National Research Council. 2007. International Benchmarking of U.S. Chemical Engineering Research Competitiveness. Washington, DC: National Academies Press.

19 Ibid., pp. 33-35.

20 The committee’s chief finding was that “The United States is presently, and is expected to remain, among the world’s leaders in all subareas of chemical engineering research, with clear leadership in several subareas. U.S. leadership in some classical and emerging subareas will be strongly challenged.” Ibid., p. 7.

21 The term “organizers” refers to the 276 survey responses that the committee received.

- Human capital: higher education attainment in the population ages 25 to 34 years; and number of science and technology researchers per 1,000 employed.

- Innovation capacity: business investment in research and development; government investment in R&D; and the number and quality of academic publications.

- Entrepreneurship: venture capital investment; and new firms.

- Information technology (IT) infrastructure: e-government; broadband telecommunications; and corporate investment in IT.

- Economic policy: effective marginal corporate tax rates.

- Economic performance: trade balance; foreign direct investment inflows; real GDP per working-age adult; and GDP per hour worked (productivity).22

Dr. Atkinson noted that while the United States ranked 6th overall in innovation and competitiveness, it ranked last among 40 nations in terms of improving its innovation capacity and competitive position over the prior decade. In a 2011 update report, ITIF ranked the United States 43rd out of 44 nations in terms of improving its innovation capacity in spite of improvement in absolute rank from 6th to 4th.23 The 2011 report also indicates that, although the United States ranked 8th and 5th in government and business R&D investment respectively, its rate of change in these categories ranked 28th and 27th relative to other nations analyzed. Other important indicators include rate of change rankings of 39th in researchers and 44th in publications for the United States.24

A May 2011 BGST discussion with Dr. Dan Mote, chair of the NRC Committee on Global Science and Technology Strategies and Their Effect on U.S. National Security, provided insights regarding S&T investment approaches adopted by other nations. The committee was tasked to examine the S&T strategies of Japan, Brazil, Russia, India, China, and Singapore and to evaluate the implications of S&T strategy differences for U.S. national security strategy. Dr. Mote presented highlights from the committee’s report, S&T Strategies of Six Countries: Implications for the United States. The study committee observed that:

The best indicators of progress toward achieving national goals are country specific and must reflect both traditional and nontraditional factors. Traditional indicators are quantitative measures of S&T investment, activity, and outcomes such as patents per capita, S&T investment as a percentage of gross domestic product, the fraction of national research expenditures made by industry, and the number of start-up companies…Nontraditional indicators emerging from cultural contexts are country specific. They are essential to understanding each country’s S&T innovation environment and especially to predicting its future change…. No single set of common indicators was found by the committee to provide a complete assessment of progress toward goals for all countries.25

_______________________

22 Robert D. Atkinson and Scott M. Andes. 2009. The Atlantic Century: Benchmarking EU & U.S. Innovation and Competitiveness. Washington DC: The Information Technology and Innovation Foundation, p. 3. Also available at http://www.itif.org/files/2009-atlantic-century.pdf. Last accessed August 3, 2011.

23 The Atlantic Century II: Benchmarking EU & U.S. Innovation and Competitiveness, Robert D. Atkinson and Scott M. Andes, p. 9. The Information Technology and Innovation Foundation. July 2011. http://www.itif.org/publications/atlantic-century-ii-benchmarking-eu-us-innovation-and-competitiveness. Last accessed August 3, 2011.

24 Ibid., p. 11.

The importance of nontraditional factors was further corroborated by the committee’s observation that: “the S&T innovation environments of the more successful countries possess both top-down (i.e., led by government) and bottom-up (i.e., led by individuals and organizations) drivers of change.”26

The S&T Strategies of Six Countries study also noted the need for nation-specific indicators to augment measures such as patents, publications, degrees awarded, and S&T budgets, to “better monitor, track, and quantify S&T development in other countries and the United States in the future.”27

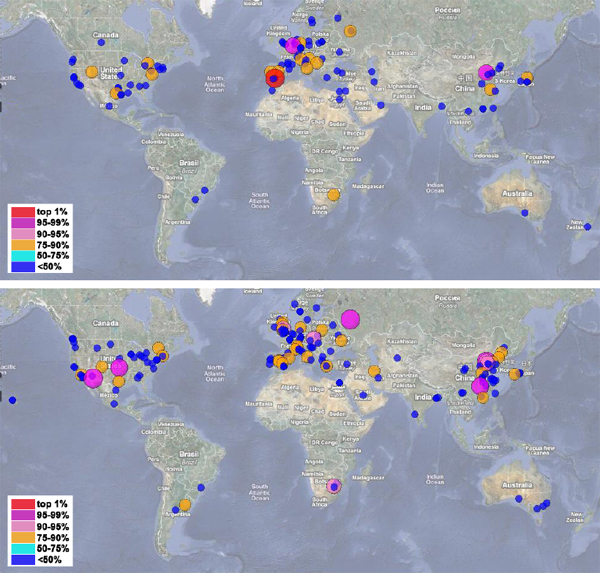

The potential value of nation-specific indicators is suggested in Figure 1, which visualizes changes in one aspect of the global S&T landscape over time: the geographic distribution of highly cited research publications in autonomous systems in 2005 and 2010.28 In addition to a near doubling of the total number of research publications, strong growth can be seen in multiple “hot spots” around the world, including Beijing, Tianjin, and Changsha, which show 2.6-, 3-, and 4-fold increases in publications, respectively. Why some “hot spots” disappear while others flatten or grow over time is an important question for the U.S. S&T enterprise, as well as for U.S. economic and national security. For example, answering it can help U.S. policymakers understand where centers of excellence in particular technologies may be developing around the world, which in turn may point to the need to find other indicators that can help clarify the U.S. position in a particular technology relative to other countries.

_______________________

25 S&T Strategies of Six Countries, pp. 2-3.

26 Ibid., p. 3.

27 Ibid., p. 12.

28 “Autonomous systems” is a subject area related to the S&T priorities listed in Secretary of Defense Robert M. Gates’s memo of April 19, 2011. See pp. 2-3 above. The 2007 DD(R&E) Strategic Plan defines autonomous systems technologies as follows:

Autonomous systems technologies enable unmanned systems to sense, perceive, analyze, plan, decide, and act, in order to identify and achieve their goals. The systems include communication and interaction with humans and/or other unmanned systems. Unmanned systems in the air, on the ground, and at sea perform their functions with ever increasing capabilities and technological sophistication using autonomy/teaming, human system integration, power, communications, sensors, mobility, planning/C2, processing, and diagnostics and prognostics. All of these technologies are critical to system level capabilities.

See http://www.dod.gov/ddre/doc/Strategic_Plan_Final.pdf. Last accessed October 25, 2011.

For a discussion on autonomous systems, see the PowerPoint presentation by Dr. Bobby Junker on “Autonomy S&T Priority Steering Council” at http://www.dtic.mil/ndia/2011SET/Junker.pdf. Last accessed August 17, 2011.

Figure 1: Mapping the geographic distribution of highly cited “autonomous systems” research publications in 2005 (top) and 2010 (bottom). The location of each circle on the map represents the geographic area (“hot spot”) where one or more researchers is based. The size of the circle represents the number of publications generated in that area, and the color of the circles represents the number of publications relative to other “hot spots” shown on the map.29 The term “autonomous systems” has more than one meaning, and the SciVerse Scopus search for highly cited publications on this topic could have counted articles that use other definitions. This can be seen as a limitation of the illustrative approach described here.

Source: Board on Global Science and Technology.30

_______________________

29 Here, there is little difference between circle color and circle size due to the relatively small size of the N used to generate these maps.

30 A detailed description of the methodology used to generate these graphs, including freely available software, is available at http://www.leydesdorff.net/mapping_excellence/index.htm (Last accessed July 26, 2011). The mapping tutorial can also be found in Bornmann L, Leydesdorff L, Walch-Solimena C, et al. 2011. “Mapping excellence in the geography of science: An approach based on Scopus data.” Journal of Informetrics 5(4):537-546. Bibliometric data were collected via SciVerse Scopus with the following search restrictions: Title/Abstract/Keywords exact phrase=“autonomous systems”; Document Type=Article; Publication Year=2005 or 2010.

It is the goal of the Board to apply these complex, but more representative, kinds of indicators to understanding the implications of the globalization of S&T for U.S. national security.

BGST’s Engagement Strategy: A Continuing Experiment

The Board is experimenting with various ways to develop new qualitative metrics that will demonstrate the significance of the evolving global S&T landscape for policymakers. During its first program year, the Board held two workshops that were highly interdisciplinary and forward looking; the second one included participants from nine countries and four continents. The Board has developed a professional networking site and questionnaires to engage participants before meetings and to keep them involved afterward. The Board is also developing a data-gathering tool that takes an interdisciplinary approach to obtain situational awareness in diverse areas of emerging science and technology.

Data-intensive Science

For its initial exploration, the Board sought an over-arching theme that would (1) lead to topics of broad interest and applicability; (2) be sufficiently focused to motivate the development of sustainable, international networks; and (3) yield ongoing insights into the trends and accelerators shaping the global S&T landscape. An important consideration in topic selection and in the development of a collaborative methodology for sustained engagement was the notion of “what’s in it for them” (i.e., what would motivate researchers from the United States and elsewhere to stay engaged in such a network).

BGST chose the opportunities and challenges of data-intensive science. This selection was informed by discussions with Dan Reed (Corporate Vice President, Microsoft Corporation) and Alexander Szalay (Alumni Centennial Professor, Department of Physics and Astronomy, The Johns Hopkins University), both of whom were contributing authors to The Fourth Paradigm: Data-Intensive Scientific Discovery.31 These discussions made clear that the topic was broadly applicable, spanning a range of scientific disciplines as well as a diverse array of problem domains of global importance. Selection of the data-intensive science topic was further motivated by its relevance to DoD’s priority S&T investment areas. The topic is directly linked to the DoD priority S&T investment area #1 (Data to Decisions) and plays an enabling role in several of the other priority areas due to its link to sensors, simulations, and other computationally-intensive applications.

_______________________

31 Tony Hey, Stewart Tansley, and Kristin Tolle, eds. 2009. The Fourth Paradigm: Data-Intensive Scientific Discovery. Redmond, WA: Microsoft Research.

The Board derived additional insights on data-intensive science from a discussion with Randal E. Bryant (University Professor of Computer Science and Dean, School of Computer Science at Carnegie Mellon University) on the topic of “Data Intensive Scalable Computing: Its Role in Scientific Research.” He made a compelling case for the need for computational infrastructures that:32

- Focus on Data: Terabytes, not tera-FLOPS;

- Problem-Centric Programming: Platform-independent expression of data parallelism;

- Interactive Access: From simple queries to massive computations; and

- Robust Fault Tolerance: Component failures are handled as routine events.

A recent BGST discussion with Ben Shneiderman, University of Maryland, on the topic of “Visual Analytics Science and Technology for Collaborative Knowledge Discovery” further illustrated both the challenges and the opportunities inherent in data-intensive science. Key lessons from this presentation included not only the need for awareness of advances in the field of visual analytics, but also the potential value of using such tools to more efficiently explore and more effectively describe the complex and dynamic nature of the global S&T landscape.

Data-intensive science is rich in that it affords exploration from multiple perspectives. Board-sponsored activities to date include two meetings, each thematically focused on big data, but with different structural approaches. The first meeting brought together a multi-disciplinary group of researchers and practitioners around a common problem: “Data Analytics & the Smart Energy Grid 2020.” The second meeting, co-sponsored by Singapore’s Agency for Science, Technology and Research (A*STAR),33 also engaged a multi-disciplinary group but addressed a problem spanning multiple domains: “Realizing the Value from Big Data” (see Appendix C for descriptions of these workshops).

Building a Professional Network

The Board created an interactive website (using Ning) as a multi-party, international communication tool for the global S&T community involved in emerging science areas. To date it has been used primarily by participants in BGST workshops. As a continuation of this experiment, the Board plans to explore its use as a tool to create a continuing discussion on qualitative and quantitative assessments of emerging global S&T areas.

The BGST Template

The Board created a template to gather experts’ assessments of the global S&T landscape in selected emerging S&T domains that contribute to the DoD priority S&T investment areas. The template is intended to provide a multi-faceted yet brief snapshot of a particular subject area from several viewpoints: technology, international players, national security implications, future problems/avenues of exploration, and significant publications. To date, this experimental template has been tested only by BGST members. We believe that it can be a unique qualitative data-gathering tool because its diverse questions can yield insights into how researchers perceive their field within a global context. Thus, we plan to continue to experiment with this template by involving experts beyond the BGST’s current areas of expertise. Appendix D includes applications of the template by three BGST members in three areas of emerging S&T, respectively: metamaterials, computing performance and synthetic biology.

_______________________

32 See this PowerPoint presentation at http://sites.nationalacademies.org/xpedio/groups/pgasite/documents/webpage/pga_056621.pdf.

33 For additional information on A*STAR, see http://www.a-star.edu.sg/.

Leveraging Expertise Throughout the National Academies

BGST has worked with a number of units throughout the National Academies with subject area expertise and/or international S&T interests to take advantage of the expertise in other programs. Appendix E lists several of the programs that BGST has worked with or intends to work with to bolster a collective understanding of global science and technology.

• • •

For the near future the BGST will continue to experiment with various ways of assessing the global S&T landscape in emerging areas, including use of the Ning site and the template assessment tool. However, the main focus will involve another experiment: using NRC study committees with a fast-track study format to assess the trends in global S&T in a specific technical domain of interest to the DoD. The goal is to have a report completed within six months of the first committee meeting. The first study being undertaken is a fast-track assessment of Global Approaches to Advanced Computing.

Sincerely,

Ruth David, Ph.D.

Chair, BGST

President and CEO, Analytic Services Inc.