Appendix B Selected Topics in Computer Security Technology

This appendix discusses in considerable detail selected topics in computer security technology chosen either because they are well understood and fundamental, or because they are solutions to current urgent problems. Several sections expand on topics presented in Chapter 3.

ORANGE BOOK SECURITY

A security policy is a set of rules by which people are given access to information and/or resources. Usually these rules are broadly stated, allowing them to be interpreted somewhat differently at various levels within an organization. With regard to secure computer systems, a security policy is used to derive a security model, which in turn is used to develop the requirements, specifications, and implementation of a system.

Library Example

A "trusted system" that illustrates a number of principles related to security policy is a library. In a very simple library that has no librarian, anyone (a subject) can take out any book (an object) desired: no policy is being enforced and there is no mechanism of enforcement. In a slightly more sophisticated case, a librarian checks who should have access to the library but does not particularly care who takes out which book: the policy enforced is, "Anyone allowed in the room is allowed access to anything in the room." Such a policy requires only identification of the subject. In a third case, a simple extension of the previous one, no one is allowed to take out more than five books at a time. In a sophisticated version of this system, a librarian first determines how many books a subject already has out before allowing that subject to take more out. Such a policy requires a check of the subject's identity and current status.

In a library with an even more complex policy, only certain people are allowed to access certain books. The librarian performs a check by name of who is allowed to access which books. This policy frequently involves the development of long lists of names and may evolve toward, in some cases, a negative list, that is, a list of people who should not be able to have access to specific information. In large organizations, determining which users have access to specific information frequently is based on the project they are working on or the level of sensitivity of data for which they are authorized. In each of these cases, there is an access control policy and an enforcement mechanism. The policy defines the access that an individual will have to information contained in the library. The librarian serves as the policy-enforcing mechanism.
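The three enforced policies in the library example can be sketched as a single check performed by the "librarian." This is only an illustrative sketch: the class name `Library`, its fields, and the method names are hypothetical and do not come from the text; only the five-book limit is taken from the example.

```python
from dataclasses import dataclass, field

MAX_BOOKS_OUT = 5  # limit taken from the third library case


@dataclass
class Library:
    """Hypothetical librarian acting as the policy-enforcing mechanism."""
    members: set                               # subjects allowed into the room
    access_list: dict                          # book -> subjects allowed to take it
    loans: dict = field(default_factory=dict)  # subject -> books currently out

    def may_borrow(self, subject: str, book: str) -> bool:
        # Case 2: identification of the subject only.
        if subject not in self.members:
            return False
        # Case 3: identity plus current status (books already out).
        if len(self.loans.get(subject, set())) >= MAX_BOOKS_OUT:
            return False
        # Case 4: a per-book list of names.
        return subject in self.access_list.get(book, set())

    def borrow(self, subject: str, book: str) -> bool:
        if not self.may_borrow(subject, book):
            return False
        self.loans.setdefault(subject, set()).add(book)
        return True
```

The policy lives in the data (`members`, `access_list`, the limit), while `may_borrow` is the enforcement mechanism, which mirrors the distinction drawn in the text.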

Orange Book Security Models

The best-known and most widely used formal models of computer security functionality, the Bell and LaPadula model and its variants (Bell and LaPadula, 1976), emphasize confidentiality (protection from unauthorized disclosure of information) as their primary security service. In particular, these models attempt to capture the "mandatory" (what ISO Standard 7498-2 (ISO, 1989) refers to as "administratively directed, label-based") aspects of security policy. This is especially important in providing protection against "Trojan horse" software, a significant concern among those who process classified data. Mandatory controls are typically enforced by operating-system mechanisms at the relatively coarse granularity of processes and files. This state of affairs has resulted from a number of factors, several of which are noted below:

- The basic security models were accurately perceived to represent Department of Defense (DOD) security concerns for protecting classified information from disclosure, especially in the face of Trojan horse attacks. Since it was under the auspices of DOD funding that the work in formal security policy models was carried out, it is not surprising that the emphasis was on models that reflected DOD requirements for confidentiality.

- The embodiment of the model in the operating system has been deemed essential in order to achieve a high level of assurance and to make available a secure platform on which untrusted (or less trusted) applications could be executed without fear of compromising overall system security. It was recognized early that the development of trusted software, that is, software that is trusted to not violate the security policy imposed on the computer system, is a very difficult and expensive task. This is especially true if a security policy calls for a high level of assurance in a potentially "hostile" environment, for example, execution of software from untrusted sources.

The strategy evolved of developing trusted operating systems that could segregate information and processes (representing users) to allow controlled sharing of computer system resources. If trusted application software were written, it would require a trusted operating system as a platform on top of which it would execute. (If the operating system were not trusted, it, or other untrusted software, could circumvent the trusted operation of the application in question.) Thus development of trusted operating systems is a natural precursor to the development of trusted applications.

At the time this strategy was developed, in the late 1960s and in the 1970s, computer systems were almost exclusively time-shared computers (mainframes or minis), and the resources to be shared (memory, disk storage, and processors) were expensive. With the advent of trusted operating systems, these expensive computing resources could be shared among users who would develop and execute applications without requiring trust in each application to enforce the system security policy. This has become an accepted model for systems in which the primary security concern is disclosure of information and in which the information is labeled in a fashion that reflects its sensitivity.

- The granularity at which the security policy is enforced is determined largely by characteristics of typical operating system interfaces and concerns for efficient implementation of the mechanisms that enforce security. Thus, for example, since files and processes are the objects managed by most operating systems, these were the objects protected by the security policy embodied in the operating system. In support of Bell-LaPadula, data sensitivity labels are associated with files, and authorizations for data access are associated with processes operating on behalf of users. The operating system enforces the security policy by controlling access to data based on file labels and process (user) authorizations. This type of security policy implementation is the hallmark of high-assurance systems as defined by the Orange Book.
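The label-based checks described in the list above can be illustrated with a minimal sketch. The text does not spell out the access rules, so the sketch assumes the commonly cited form of the Bell-LaPadula rules ("no read up, no write down"). The four level names and the function names are assumptions made for illustration, and categories or compartments are omitted.

```python
from enum import IntEnum


class Level(IntEnum):
    """Hypothetical linear ordering of sensitivity levels."""
    UNCLASSIFIED = 0
    CONFIDENTIAL = 1
    SECRET = 2
    TOP_SECRET = 3


def may_read(process_authorization: Level, file_label: Level) -> bool:
    # A process may read a file only if its authorization dominates the label.
    return process_authorization >= file_label


def may_write(process_authorization: Level, file_label: Level) -> bool:
    # A process may write only to files labeled at or above its own level,
    # so a Trojan horse running at SECRET cannot copy data into an
    # UNCLASSIFIED file.
    return process_authorization <= file_label
```

Because the second rule is applied to every process regardless of what the program claims to do, the operating system does not need to trust the application in order to prevent disclosure.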

Concerning integrity in the Orange Book, note that if an integrity policy (like Clark-Wilson) and an integrity mechanism (like type enforcement or rings) are differentiated, an invariant property of mechanisms is that they enforce a "protected subsystem" kind of property. That is, they undertake to ensure that certain data is touchable only by certain code irrespective of the privileges that code inherits because of the person on whose behalf it is executing. Thus a proper integrity mechanism would ensure that one's personal privilege to update a payroll file could not be used to manipulate payroll data with a text editor, but rather that the privilege could be used only to access payroll data through the payroll subsystem, which presumably performs application-dependent consistency checks on what one does.

While the Orange Book does not explicitly call out a set of integrity-based access rules, it does require that B2-level1 systems and those above execute out of a protected domain, that is, that the trusted computing base (TCB) itself be a protected subsystem. The mechanism used to do this (e.g., rings) is usually, but not always, exported to applications. Thus an integrity mechanism is generally available as a byproduct of a system operating at the B2 level.

The Orange Book does not mandate mechanisms to support data integrity, but it easily could do so at the B2 level and above, because it mandates that such a mechanism exist to protect the TCB. It is now possible to devise mechanisms that protect the TCB but that cannot be made readily available to applications; however, such cases are in the minority and can be considered pathological.

HARDWARE ENFORCEMENT OF SECURITY AND INTEGRITY

The complexity and difficulty of developing secure applications can be reduced by modifying the hardware on which those applications run. Such modifications may add functionality to the operating system or application software, they may guarantee specific behavior that is not normally provided by conventional hardware, or they may enhance the performance of basic security functions, such as encryption. This section describes two projects that serve as worked examples of what can be accomplished when hardware is designed with security and/or integrity in mind, and what is gained or lost through such an approach.

VIPER Microprocessor

The VIPER microprocessor was designed specifically for high-integrity control applications at the Royal Signals and Radar Establishment (RSRE), which is part of the U.K.'s Ministry of Defence (MOD). VIPER attempts to achieve high integrity with a simple architecture and instruction set designed to meet the requirements of formal verification and to provide support for high-integrity software.

VIPER 1 was designed as a primitive building block that could be used to construct complete systems capable of running high-integrity applications. Its most important requirement is the ability to stop immediately if any hardware error is detected, including illegal instruction codes and numeric underflow and overflow. By stopping when an error is detected, VIPER assures that no incorrect external actions are taken following a failure. Such "fail-stop" operation (Schlichting and Schneider, 1983) simplifies the design of higher-level algorithms used to maintain the reliability and integrity of the entire system.

VIPER 1 is a memory-based processor that makes use of a uniform instruction set (i.e., all instructions are the same width). The processor has only three programmable 32-bit registers. The instruction set limits the amount of addressable memory to 1 megaword, with all access on word boundaries. There is no support for interrupts, stack processing or micro-pipelining.

The VIPER 1 architecture provides only basic program support. In fact, multiplication and division are not supported directly by the hardware. This approach was taken primarily to simplify the design of VIPER, thereby allowing it to be verified. If more programming convenience is desired, it must be handled by a high-level compiler, assuming that the resulting loss in performance is tolerable.

The VIPER 1A processor allows two chips to be used in tandem in an active-monitor relationship. That is, one of the chips can be used to monitor the operation of the other. This is achieved by comparing the memory and input/output (I/O) addresses generated by both chips as they are sent off-chip. If either chip detects a difference in this data, then both chips are stopped. In this model, a set of two chips is used to form a single fail-stop processor making use of a single memory module and an I/O line.
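The active-monitor arrangement can be pictured with a small software analogy. This is not a model of the VIPER hardware: the two "chips" are represented by hypothetical Python callables that return the address each would send off-chip at a given step, and `FailStop` and `run_pair` are names invented for the sketch.

```python
class FailStop(Exception):
    """Raised when the two chips disagree; both are considered stopped."""


def run_pair(active, monitor, steps):
    """Run two chips in lockstep and compare the addresses they emit.

    `active` and `monitor` are callables mapping a step number to the
    address that chip would send off-chip (an assumption of this sketch).
    """
    emitted = []
    for step in range(steps):
        a = active(step)
        m = monitor(step)
        if a != m:
            # Stop before the differing address reaches memory or I/O.
            raise FailStop(f"mismatch at step {step}: {a} != {m}")
        emitted.append(a)
    return emitted
```

The point of the design is visible even in this analogy: a disagreement halts the pair before any further external action is taken, so higher-level software only has to handle "stopped," never "silently wrong."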

It is generally accepted that VIPER's performance falls short of conventional processors' performance, and always will. Because it is being developed for high-integrity applications, the VIPER processor must always depend on well-established, mature implementation techniques and technologies. Many of the decisions about VIPER's design were made with static analysis in mind. Consequently, the instruction set was kept simple, without interrupt processing, to allow static analysis to be done effectively.

LOCK Project

The LOgical Coprocessing Kernel (LOCK) Project intends to develop a secure microcomputer prototype by 1990 that provides A1-level security for general-purpose processing. The LOCK design makes use of a hardware-based reference monitor, known as SIDEARM, that can be used to build new, secure variants of existing architectures or can be included in the design of new architectures as an option. The goal is to provide the highest level of security as currently defined by National Computer Security Center (NCSC) standards, while providing 80 percent of the performance achievable by an unmodified, insecure computer. SIDEARM is designed to achieve this goal by controlling the memory references made by applications running on the processor to which it is attached. Assuming that SIDEARM is always working properly and has been integrated into the host system in a manner that guarantees its controls cannot be circumvented, it provides high assurance that applications can access data items only in accordance with a well-understood security policy. The LOCK Project centers on guaranteeing that these assumptions are valid.

The SIDEARM module is the basis of the LOCK architecture and is itself an embedded computer system, making use of its own processor, memory, communications, and storage subsystems, including a laser disk for auditing. It is logically placed between the host processor and memory, and integrated into those existing host facilities, such as memory management units, that control access into memory. Since it is a separate hardware component, applications cannot directly modify any of the security information used to control SIDEARM.

Security policy is enforced by assigning security labels to all subjects (i.e., applications or users) and objects (i.e., data files and programs) and making security policy decisions without relying on the host system. The security policy enforced by SIDEARM includes type-enforcement controls, providing configurable, mandatory integrity. That is, "types" can be assigned to data objects and used to restrict access to subjects that are performing functions appropriate to that type. Thus type-enforcement can be used, for example, to ensure that a payroll data file is accessed only by payroll programs, or that specific transforms, such as labeling or encryption, are performed on data prior to output. Mandatory access control (MAC), discretionary access control (DAC), and type enforcement are "additive" in that a subject must pass all three criteria before being allowed to access an object.
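The "additive" combination of the three checks can be sketched as follows. This is a hedged illustration, not the SIDEARM design: the record layouts, the integer levels, the `domain` attribute, and the `ALLOWED` table of (domain, type) pairs are all hypothetical, and only read access is modeled.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Subject:
    user: str
    clearance: int   # hypothetical integer sensitivity level
    domain: str      # function the subject is performing, e.g. "payroll"


@dataclass(frozen=True)
class DataObject:
    label: int       # hypothetical integer sensitivity level
    acl: frozenset   # users granted access at the owner's discretion
    type: str        # e.g. "payroll_data"


# Hypothetical type-enforcement table: which domains may touch which types.
ALLOWED = {("payroll", "payroll_data"), ("editor", "text")}


def mac_ok(s: Subject, o: DataObject) -> bool:
    return s.clearance >= o.label          # mandatory, label-based


def dac_ok(s: Subject, o: DataObject) -> bool:
    return s.user in o.acl                 # discretionary, identity-based


def te_ok(s: Subject, o: DataObject) -> bool:
    return (s.domain, o.type) in ALLOWED   # type enforcement


def may_read(s: Subject, o: DataObject) -> bool:
    # "Additive": the subject must pass all three criteria.
    return mac_ok(s, o) and dac_ok(s, o) and te_ok(s, o)
```

With these definitions, a user who is cleared for and listed on a payroll file can read it through a subject in the `payroll` domain but not through one in the `editor` domain, which is the payroll example from the text.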

The LOCK Project makes use of multiple TEPACHE-based TYPE-I encryption devices to safeguard SIDEARM media (security databases and audit) and data stored on host system media, and to close covert channels. As such, LOCK combines aspects of both COMSEC (communications security) and COMPUSEC (computer security) in an interdependent manner. The security provided by both approaches is critical to LOCK's proper operation.

The LOCK architecture requires few but complex trusted software components, including a SIDEARM device driver and software that ensures that decisions made by the SIDEARM are enforced by existing host facilities such as a memory management unit. An important class of trusted software comprises "kernel extensions," security-critical software that runs on the host to handle machine-dependent support, such as printer and terminal security labeling, and application-specific security policies, such as that required by a database management system. Kernel extensions are protected and controlled by the reference monitor and provide the flexibility needed to allow the LOCK technology to support a wide range of applications, without becoming too large or becoming architecture-dependent.

One of LOCK's advantages is that a major portion of the operating system, outside of the kernel extensions and the reference monitor, can be considered "hostile." That is, even if the operating system is corrupted, LOCK will not allow an unauthorized application to access data objects. However, parts of the operating system must still be modified or removed to make use of the functionality provided by SIDEARM. The LOCK Project intends to support the UNIX System V interface on the LOCK architecture and to attain certification of the entire system at the A1 level.

CRYPTOGRAPHY

Cryptography is the art of keeping data secret, primarily through the use of mathematical or logical functions that transform intelligible data into seemingly unintelligible data and back again. Cryptography is probably the most important aspect of communications security and is becoming increasingly important as a basic building block for computer security.

Fundamental Concepts of Encryption

Cryptography and cryptanalysis have existed for at least 2,000 years, perhaps beginning with a substitution algorithm used by Julius Caesar (Tanebaum, 1981). In his method, every letter in the original message, known now as the plaintext, was replaced by the letter that

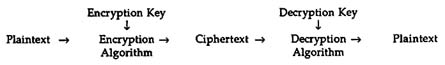

occurred three places later in the alphabet. That is, A was replaced by D, B was replaced by E, and so on. For example, the plaintext "VENI VIDI VICI" would yield "YHQL YLGL YLFL." The resulting message, now known as the ciphertext, was then couriered to an awaiting centurion, who decrypted it by replacing each letter with the letter that occurred three places "before" it in the alphabet. The encryption and decryption algorithms were essentially controlled by the number three, which thus was the encryption and decryption key. If Caesar suspected that an unauthorized person had discovered how to decrypt the ciphertext, he could simply change the key value to another number and inform the field generals of that new value by using some other method of communication. Although Caesar's cipher is a relatively simple example of cryptography, it clearly depends on a number of essential components: the encryption and decryption algorithms, a key that is known by all authorized parties, and the ability to change the key. Figure B.1 shows the encryption process and how the various components interact.

FIGURE B.1 The encryption process.
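The Caesar cipher described above is small enough to write out in full. The sketch below assumes the modern 26-letter uppercase alphabet and leaves any other character (such as a space) unchanged; the function names are chosen for illustration.

```python
import string

ALPHABET = string.ascii_uppercase  # assumption: modern 26-letter alphabet


def caesar(text: str, key: int) -> str:
    """Shift each letter `key` places through the alphabet, wrapping around."""
    out = []
    for ch in text:
        if ch in ALPHABET:
            out.append(ALPHABET[(ALPHABET.index(ch) + key) % 26])
        else:
            out.append(ch)  # spaces and punctuation pass through unchanged
    return "".join(out)


def encrypt(plaintext: str, key: int = 3) -> str:
    return caesar(plaintext, key)


def decrypt(ciphertext: str, key: int = 3) -> str:
    return caesar(ciphertext, -key)


print(encrypt("VENI VIDI VICI"))  # YHQL YLGL YLFL
```

The same function serves for both directions; only the sign of the key changes, which is why the single number three acts as both the encryption and the decryption key.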

If any of these components is compromised, the security of the information being protected decreases. If a weak encryption algorithm is chosen, an opponent may be able to guess the plaintext once a copy of the ciphertext is obtained. In many cases, the cryptanalyst need only know the type of encryption algorithm being used in order to break it. For example, knowing that Caesar used only a cyclic substitution of the alphabet, one could simply try every key value from 1 to 25, looking for the value that resulted in a message containing Latin words. Similarly, many encryption algorithms that appear to be very complicated are rendered ineffective by an improper choice of a key value. In a more practical sense, if the receiver forgets the key value or uses the wrong one, then the resulting message will probably be unintelligible, requiring additional effort to retransmit the message and/or the key. Finally, it is possible that the enemy will break the code even if the strongest possible combination of algorithms and key values is used. Therefore, keys and possibly even the algorithms need to be changed over a period of time to limit the loss of security when the enemy has broken the current system. The process of changing keys and distributing them to all parties concerned is known as key management and is the most difficult aspect of security management after an encryption method has been chosen.2
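The exhaustive attack on a cyclic substitution mentioned above (trying every key value from 1 to 25 and looking for Latin words) can be sketched in a few lines. The word list `LATIN_WORDS` is a deliberately tiny, hypothetical stand-in for a real dictionary, and the function names are assumptions of the sketch.

```python
import string

ALPHABET = string.ascii_uppercase
LATIN_WORDS = {"VENI", "VIDI", "VICI"}  # hypothetical, tiny word list


def shift(text: str, key: int) -> str:
    """Cyclic substitution: move each letter `key` places, wrapping around."""
    return "".join(
        ALPHABET[(ALPHABET.index(ch) + key) % 26] if ch in ALPHABET else ch
        for ch in text
    )


def brute_force(ciphertext: str):
    """Try every key from 1 to 25; keep candidates containing a known word."""
    hits = []
    for key in range(1, 26):
        candidate = shift(ciphertext, -key)
        if any(word in LATIN_WORDS for word in candidate.split()):
            hits.append((key, candidate))
    return hits


print(brute_force("YHQL YLGL YLFL"))  # [(3, 'VENI VIDI VICI')]
```

With only 25 possible keys, knowing the type of algorithm is enough to break it, which is the point the paragraph makes about weak algorithms.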

In theory, any logical function can be used as an encryption algorithm. The function may act on single bits of information, single letters in some alphabet, or single words in some language or groups of words. The Caesar cipher is an example of an encryption algorithm that operates on single letters within a message. Throughout history a number of "codes" have been used in which a two-column list of words is used to define the encryption and decryption algorithms. In this case, plaintext words are located in one of the columns and replaced by the corresponding word from the other column to yield the ciphertext. The reverse process is performed to regenerate the plaintext from the ciphertext. If more than two columns are distributed, a key can be used to designate both the plaintext and ciphertext columns to be used. For example, given 10 columns, the key [3,7] might designate that the third column represents plaintext words and the seventh column represents ciphertext words. Although code books (e.g., multicolumn word lists) are convenient for manual enciphering and deciphering, their very existence can lead to compromise. That is, once a code book falls into enemy hands, ciphertext is relatively simple to decipher. Furthermore, code books are difficult to produce and to distribute, requiring accurate accounts of who has which books and which parties can communicate using those books. Consequently, mechanical and electronic devices have been developed to automate the encryption and decryption process, using primarily mathematical functions on single bits of information or single letters in a given alphabet.

Private vs. Public Crypto-Systems

The security of a given crypto-system depends on the amount of information known by the cryptanalyst about the algorithms and keys in use. In theory, if the encryption algorithm and keys are independent of the decryption algorithm and keys, then full knowledge of the encryption algorithm and key will not help the cryptanalyst break the code. However, in many practical crypto-systems, the same algorithm and key are used for both encryption and decryption. The security of these symmetric cipher systems depends on keeping at least the key secret from others, making such systems private-key crypto-systems. An example of a symmetric, private-key crypto-system is the Data Encryption Standard (DES) (see below, "Data Encryption Standard").

In this case, the encryption and decryption algorithm is widely known and has been widely studied; the privacy of the encryption and decryption key is relied on to ensure security. Other private-key systems have been implemented and deployed by the National Security Agency (NSA) for the protection of classified government information. In contrast to the DES, the encryption and decryption algorithms within those crypto-systems have been kept classified, to the extent that the computer chips on which they are implemented are coated in such a way as to prevent them from being examined.

Users are often intolerant of private encryption and decryption algorithms because they do not know how the algorithms work or if a "trapdoor" exists that would allow the algorithm designer to read the user's secret information. In an attempt to eliminate this lack of trust, a number of crypto-systems have been developed around encryption and decryption algorithms based on fundamentally difficult problems, or one-way functions, that have been studied extensively by the research community. Another approach used in public-key systems, such as that taken by the RSA (see the section below headed "RSA"), is to show that the most obvious way to break the system involves solving a hard problem (although this means that such systems may be broken by simpler means).

For practical reasons, it is desirable to use different encryption and decryption keys in a crypto-system. Such asymmetric systems allow the encryption key to be made available to anyone, while preserving confidence that only people who hold the decryption key can decipher the information. These systems, which depend solely on the privacy of the decryption key, are known as public-key crypto-systems. An example of an asymmetric, public-key cipher is the patented RSA system.

Digital Signatures

Society accepts handwritten signatures as legal proof that a person has agreed to the terms of a contract as stated on a sheet of paper, or that a person has authorized a transfer of funds as indicated on a check. But the use of written signatures involves the physical transmission of a paper document; this is not practical if electronic communication is to become more widely used in business. Rather, a digital signature is needed to allow the recipient of a message or document to irrefutably verify the originator of that message or document.

A written signature can be produced by one person (although forgeries certainly occur), but it can be recognized by many people as belonging uniquely to its author. To be accepted as a replacement for a written signature, a digital signature, then, would have to be easily authenticated by anyone, but be producible only by its author.

A digital signature system consists of three elements, each carrying out a procedure:

- The generator, which produces two numbers called the mark (which should be unforgeable) and the secret;

- The signer, which accepts a secret and an arbitrary sequence of bytes called the input, and produces a number called the signature; and

- The checker, which accepts a mark, an input, and a signature and says whether or not the signature matches the input for that mark.

The procedures have the following properties:

- If the generator produces a mark and a secret, and the signer produces a signature when given the secret and an input, then the checker will say that the signature matches the input for that mark.

- If one has a mark produced by the generator but does not have the secret, then even with a large number of inputs and matching signatures for that mark, one still cannot produce an additional input and matching signature for that mark. In particular, even if the signature matches one of the inputs, one cannot produce another input that it matches.

A digital signature system is useful because if one has a mark produced by the generator, as well as an input and matching signature, then one can be sure that the signature was computed by a system that knew the corresponding secret, because a system that did not know the secret could not have computed the signature.

For instance, one can trust a mark to certify an uninfected program if

- one believes that it came from the generator, and

- one also believes that any system that knows the corresponding secret is one that can be trusted not to sign a program image if it is corrupted.

Known methods for digital signatures are often based on computing a secure checksum (see below) of the input to be signed and then encrypting the checksum with the secret. If the encryption uses public-key encryption, the mark is the public key that matches the secret, and the checker simply decrypts the signature.
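The generator, signer, and checker can be sketched with the checksum-then-encrypt method just described. This is a toy under loudly stated assumptions: it uses unpadded "textbook" RSA with two fixed, publicly known Mersenne primes and SHA-256 as the checksum, none of which is prescribed by the text, and it offers no real security. The function names follow the three elements named above; everything else is hypothetical. It needs Python 3.8 or later for the modular inverse.

```python
import hashlib

# ASSUMPTION: fixed, publicly known primes, for illustration only.
# A real generator would choose large random primes and keep them secret.
P = 2**61 - 1
Q = 2**89 - 1
E = 65537


def generator():
    """Return (mark, secret): the public key (n, e) and private key (n, d)."""
    n = P * Q
    d = pow(E, -1, (P - 1) * (Q - 1))  # modular inverse, Python 3.8+
    return (n, E), (n, d)


def _checksum(data: bytes, n: int) -> int:
    # Checksum of the input, reduced so that it fits below the modulus.
    return int.from_bytes(hashlib.sha256(data).digest(), "big") % n


def signer(secret, data: bytes) -> int:
    n, d = secret
    return pow(_checksum(data, n), d, n)  # "encrypt the checksum with the secret"


def checker(mark, data: bytes, signature: int) -> bool:
    n, e = mark
    return pow(signature, e, n) == _checksum(data, n)  # "decrypt" and compare
```

A short usage example: `mark, secret = generator()`, then `sig = signer(secret, program_bytes)`, after which anyone holding only `mark` can call `checker(mark, program_bytes, sig)`.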

For more details, see Chapter 9 in Davies and Price (1984).

Cryptographic Checksums

A cryptographic checksum or one-way hash function accepts any amount of input data (in this case a file containing a program) and computes a small result (typically 8 or 16 bytes) called the checksum. Its important property is that a lot of work is required to find a different input with the same checksum. Here "a lot of work" means "more computing than an adversary can afford." A cryptographic checksum is useful because it identifies the input: any change to the input, even a very clever one made by a malicious person, is sure to change the checksum. Suppose a trusted person tells another that the program with checksum 7899345668823051 does not have a virus (perhaps he does this by signing the checksum with a digital signature). One who computes the checksum of file WORDPROC.EXE and gets 7899345668823051 should believe that he can run WORDPROC.EXE without worrying about a virus.
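The WORDPROC.EXE scenario can be sketched with a modern hash function. SHA-256 from Python's standard `hashlib` module is swapped in here as an assumption; it produces a 32-byte result rather than the 8 or 16 bytes mentioned in the text, and the function names are hypothetical.

```python
import hashlib


def checksum(data: bytes) -> str:
    """One-way hash of the input, as a hexadecimal string."""
    return hashlib.sha256(data).hexdigest()


def is_certified(data: bytes, trusted_checksums: set) -> bool:
    """True if the data's checksum is one a trusted person has vouched for."""
    return checksum(data) in trusted_checksums
```

In use, the trusted party publishes `checksum(program_bytes)`, and a recipient reads the file they actually received and calls `is_certified(received_bytes, {published_checksum})` before running it. Changing even one byte of the file yields a different checksum, so the check fails.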

For more details, see Davies and Price (1984), Chapter 9.

Public-Key Crypto-systems and Digital Signatures

Public-key crypto-systems offer a means of implementing digital signatures. In a public-key system the sender enciphers a message using the receiver's public key, creating ciphertext1. To sign the message he enciphers ciphertext1 with his private key, creating ciphertext2. Ciphertext2 is then sent to the receiver. The receiver applies the sender's public key to decrypt ciphertext2, yielding ciphertext1. Finally, the receiver applies his private key to convert ciphertext1 to plaintext. The authentication of the sender is evidenced by the fact that the receiver successfully applied the sender's public key and was able to create plaintext. Since encryption and decryption are opposites, the fact that the sender's public key deciphers a message that was enciphered with the sender's private key proves that only the sender could have sent it.
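The two-layer exchange in this section (ciphertext1, then ciphertext2) can be traced with toy numbers. As before, this assumes unpadded textbook RSA with small, publicly known Mersenne primes and is not secure; all names are hypothetical. The sender's modulus is deliberately chosen larger than the receiver's so that ciphertext1 always fits under it, a practical detail the text does not discuss. Python 3.8 or later is assumed.

```python
E = 65537  # common public exponent (assumption of this sketch)


def make_keys(p: int, q: int):
    """Return (public, private) keys as (modulus, exponent) pairs."""
    n = p * q
    d = pow(E, -1, (p - 1) * (q - 1))  # modular inverse, Python 3.8+
    return (n, E), (n, d)


def apply_key(key, value: int) -> int:
    n, exponent = key
    if not 0 <= value < n:
        raise ValueError("value does not fit under this modulus")
    return pow(value, exponent, n)


# Toy keys from known Mersenne primes; receiver modulus < sender modulus.
receiver_pub, receiver_priv = make_keys(2**19 - 1, 2**31 - 1)
sender_pub, sender_priv = make_keys(2**61 - 1, 2**89 - 1)


def send(plaintext: bytes) -> int:
    m = int.from_bytes(plaintext, "big")
    ciphertext1 = apply_key(receiver_pub, m)           # only receiver can read
    ciphertext2 = apply_key(sender_priv, ciphertext1)  # only sender can produce
    return ciphertext2


def receive(ciphertext2: int, length: int) -> bytes:
    ciphertext1 = apply_key(sender_pub, ciphertext2)   # checks the sender
    m = apply_key(receiver_priv, ciphertext1)          # recovers the plaintext
    return m.to_bytes(length, "big")
```

For example, `receive(send(b"VENI"), 4)` returns `b"VENI"`. The message must be short enough to fit under the receiver's toy modulus, which is why only a few bytes are used.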

To resolve disputes concerning the authenticity of a document, the receiver can save the ciphertext, the public key, and the plaintext as proof of the sender's signature. If the sender later denies that the message was sent, the receiver can present the signed message to a court of law where the judge then uses the sender's public key to check that the ciphertext corresponds to a meaningful plaintext message with the sender's name, the proper time sent, and so forth. Only the sender could have generated the message, and therefore the receiver's claim would be upheld in court.

Key Management

In order to use a digital signature to certify a program (or anything else, such as an electronic message), it is necessary to know the mark that should be trusted. Key management is the process of reliably distributing the mark to everyone who needs to know it. When only one mark needs to be trusted, this is quite simple: a trusted person tells another what the mark is. He cannot do this using the computer system, which cannot guarantee that the information actually came from him. Some other communication channel is needed: a face-to-face meeting, a telephone conversation, a letter written on official stationery, or anything else that gives adequate assurance. When several agents are certifying programs, each using its own mark, things are more complex. The solution is for one trusted agent to certify the marks of the other agents, using the same digital signature scheme used to certify anything else. Consultative Committee on International Telephony and Telegraphy (CCITT) standard X.509 describes procedures and data formats for accomplishing this multilevel certification (CCITT, 1989b).

Algorithms

One-Time Pads

There is a collection of relatively simple encryption algorithms, known as one-time pad algorithms, whose security is mathematically provable. Such algorithms combine a single plaintext value (e.g., bit, letter, or word) with a random key value to generate a single ciphertext value. The strength of one-time pad algorithms lies in the fact that separate random key values are used for each of the plaintext values being enciphered, and the stream of key values used for one message is never used for another, as the name implies. Assuming there is no relationship between the stream of key values used during the process, the cryptanalyst has to try every possible key value for every ciphertext value, a task that can be made very difficult simply by the use of different representations for the plaintext and key values.

The primary disadvantage of a one-time pad system is that it requires an amount of key information equal to the size of the plaintext being enciphered. Since the key information must be known by both parties and is never reused, the amount of information exchanged between parties is twice that contained in the message itself. Furthermore, the key information must be transmitted using mechanisms different from those for the message, thereby doubling the resources required.

Finally, in practice, it is relatively difficult to generate large streams of "random" values effectively and efficiently. Any nonrandom patterns that appear in the key stream provide the cryptanalyst with valuable information that can be used to break the system.

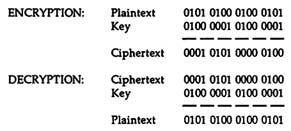

One-time pads can be implemented efficiently on computers using any of the primitive logical functions supported by the processor. For example, the Exclusive-Or (XOR) operator is a convenient encryption and decryption function. When two bits are combined using the XOR operator, the result is 1 if one and only one of the input bits is 1; otherwise the result is 0, as defined by the table in Figure B.2.

FIGURE B.2 The XOR function.

The XOR function is convenient because it is fast and permits decrypting the encrypted information simply by "XORing" the ciphertext with the same data (key) used to encrypt the plaintext, as shown in Figure B.3.

FIGURE B.3 Encryption and decryption using the XOR function.
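The XOR scheme described above can be sketched in a few lines of Python. This is a minimal illustration, not a vetted implementation: the helper name `xor_bytes` and the sample message are assumptions made for the example, and the key is drawn from the operating system's randomness source through the standard `secrets` module.

```python
import secrets


def xor_bytes(data: bytes, key: bytes) -> bytes:
    """XOR each data byte with the key byte at the same position."""
    if len(key) != len(data):
        raise ValueError("a one-time pad key must be exactly as long as the data")
    return bytes(d ^ k for d, k in zip(data, key))


plaintext = b"ATTACK AT DAWN"                 # sample message (illustrative)
key = secrets.token_bytes(len(plaintext))     # fresh random key, to be used once only
ciphertext = xor_bytes(plaintext, key)        # encryption
recovered = xor_bytes(ciphertext, key)        # decryption is the same operation
```

Because XOR is its own inverse, one function serves for both directions. The length check reflects the cost noted earlier: the key must be as long as the message.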

Data Encryption Standard

In 1972, the National Bureau of Standards (NBS; now the National Institute of Standards and Technology (NIST)) identified a need for a standard crypto-system for unclassified applications and issued a call for proposals. Although the call was poorly received at first, IBM proposed, in 1975, a private-key crypto-system that operated on 64-bit blocks of information and used a single 128-bit key for both encryption and decryption. After accepting the initial proposal, NBS sought both industry and NSA evaluations. Industry evaluation was desired because NBS wanted to provide a secure encryption that industry would want to use, and NSA's advice was requested because of its historically strong background in cryptography and cryptanalysis. NSA responded with a generally favorable evaluation but recommended that some of the fundamental components, known as S-boxes, be redesigned. Based primarily on that recommendation, the Data Encryption Standard (DES; NBS, 1977) became a federal information processing standard in 1977 and an American National Standards Institute (ANSI) standard (number X3.92-1981/R1987) in 1981, using a 56-bit key.

The Data Encryption Standard (DES) represents the first cryptographic algorithm openly developed by the U.S. government. Historically, such algorithms have been developed by the NSA as highly classified projects. However, despite the openness of its design, many researchers believed that NSA's influence on the S-box design and the length of the key introduced a trapdoor that allowed the NSA to read any message encrypted using the DES. In fact, one researcher described the design of a special-purpose parallel processing computer that was capable of breaking a DES system using 56-bit keys and that, according to the researcher, could be built by the NSA using conventional technology. Nonetheless, in over ten years of academic and industrial scrutiny, no flaw in the DES has been made public (although some examples of weak keys have been discovered). Unfortunately, as with all crypto-systems, there is no way of knowing if the NSA or any other organization has succeeded in breaking the DES.

The controversy surrounding the DES was reborn when the NSA announced that it would discontinue the FS-1027 DES device certification program after 1987, although it did recertify the algorithm (until 1993) for use primarily in unclassified government applications and for electronic funds transfer applications, most notably FedWire, which had invested substantially in the use of DES. NSA cited the widespread use of the DES as a disadvantage, stating that if it were used too much it would become the prime target of criminals and foreign adversaries. In its place, NSA has offered a range of private-key algorithms based on classified algorithms that make use of keys generated and managed by NSA.

The Data Encryption Standard (DES) algorithm has four approved modes of operation: the electronic codebook, cipher block chaining, cipher feedback, and output feedback. Each of these modes has certain characteristics that make it more appropriate than the others for specific purposes. For example, the cipher block chaining and cipher feedback modes are intended for message authentication purposes, while the electronic codebook mode is used primarily for encryption and decryption of bulk data (NBS, 1980b).

RSA

The RSA is a public key crypto-system, invented and patented by Ronald Rivest, Adi Shamir, and Leonard Adleman, that is based on large prime numbers (Rivest et al., 1978). In their method, the decryption key is generated by selecting a pair of prime numbers, P and Q (i.e., numbers that are not divisible by any other), and another number, E, which must pass a special mathematical test based on the values of the pair of primes. The encryption key consists of the product of P and Q, which is called N, and the number E, which can be made publicly available. The decryption key consists of N and another number, called D, which results from a mathematical calculation using N and E. The decryption key must be kept secret.

A given message is encrypted by converting the text to numbers (using conventional conversion mechanisms) and replacing each number with a number computed using N and E. Specifically, each number is multiplied by itself E times, with the result being divided by N, yielding a quotient, which is discarded, and a remainder. The remainder is used to replace the original number as part of the ciphertext. The decryption process is similar, multiplying the ciphertext number by itself D times (versus E times) and dividing it by N, with the remainder representing the desired plaintext number (which is converted back to a letter). RSA's security depends on the fact that, although finding large prime numbers is computationally easy, factoring large integers into their component primes is not, and it is computationally intensive.3 However, in recent years, parallel processing techniques and improvements in factoring algorithms have significantly increased the size of numbers (measured as the number of decimal digits in its representation) that can be factored in a relatively short period of time (i.e., less than 24 hours). Seventy-digit numbers are well within reach of modern computers and processing techniques, with 80-digit numbers on the horizon. Most commercial RSA systems use 512-bit keys (i.e., 154 digits), which should be out of the reach of conventional computers and algorithms for quite some time. However, the best factoring approaches currently use networks of workstations (perhaps several hundred or thousand of them), working part-time for weeks on end (Browne, 1988). This suggests that factoring numbers up to 110 digits is on the horizon.
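The arithmetic of key generation, encryption, and decryption can be illustrated with deliberately tiny numbers. The sketch below is a toy: the primes 61 and 53 and the exponent 17 are assumptions chosen for readability and offer no security. It also derives D from (P - 1)(Q - 1), the usual textbook construction, which is more specific than the description above. The three-argument `pow` with a negative exponent requires Python 3.8 or later.

```python
# Toy parameters only; real keys use primes hundreds of digits long.
P, Q = 61, 53
N = P * Q                   # modulus, part of both keys
PHI = (P - 1) * (Q - 1)     # used only while deriving D, then discarded
E = 17                      # public exponent; must share no factor with PHI
D = pow(E, -1, PHI)         # private exponent: (E * D) % PHI == 1


def encrypt(m: int) -> int:
    """Raise m to the power E and keep the remainder after division by N."""
    return pow(m, E, N)


def decrypt(c: int) -> int:
    """Raise c to the power D and keep the remainder after division by N."""
    return pow(c, D, N)


message = 65                # a plaintext number smaller than N
cipher = encrypt(message)
assert decrypt(cipher) == message
```

An attacker who could factor N into P and Q could repeat the derivation of D, which is why the difficulty of factoring matters.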

PROTECTION OF PROPRIETARY SOFTWARE AND DATABASES

The problem of protecting proprietary software or proprietary databases is an old and difficult one. The blatant copying of a large commercial program, such as a payroll program, and its systematic use within the pirating organization are often detectable and will then lead to legal action. Similar considerations apply to large databases, and for these the pirating organization has the additional difficulty of obtaining the vendor-supplied periodic updates, without which the pirated database will become useless.

The problem of software piracy is further exacerbated in the context of personal computing. Vendors supply programs for word processing, spreadsheets, game-playing programs, compilers, and so on, and these are systematically copied by pirate vendors and by private users. While large-scale pirate vendors may eventually be detected and stopped, there is no hope of preventing, through detection and legal action, the mass of individual users from copying from each other.

Various technical solutions have been proposed for the problem of software piracy in the personal computing world. Some involve a machine-customized layout of the data on a disk. Others involve the use of volatile transcription of certain parts of a program text. Cryptography employing machine- or program-instance customized keys has been suggested, in conjunction with coprocessors that are physically impenetrable so that cryptographic keys and crucial decrypted program text cannot be captured. Some of these approaches, especially those employing special hardware, and hence requiring cooperation between hardware and software manufacturers, have not penetrated the marketplace. The safeguards deployed by software vendors are usually incomplete and after a while succumb to attacks by talented amateur hackers who produce copyable versions of the protected disks. There even exist programs to help a user overcome the protections of many available proprietary programs. (These thieving programs are then presumably themselves copied through use of their own devices!) It should be pointed out that there is even a debate as to whether the prevalent theft of proprietary personal computing software by individuals is sufficiently harmful to warrant the cost of developing and deploying really effective countermeasures (Kent, 1981).

The problem of copying proprietary software and databases, while important, lies outside the purview of system security. Software piracy is an issue between the rightful owner and the thief, and its resolution depends on tools and methods, and represents a goal, which are separate from those associated with system security.

There is, however, an important aspect of protection of proprietary software and/or databases that lies directly within the domain of system security as it is commonly understood. It involves the unauthorized use of proprietary software and databases by parties other than the organization licensed to use such software or databases, and in systems other than within the organization's system where the proprietary software is legitimately installed. Consider, for example, a large database with the associated complex-query software that is licensed by a vendor to an organization. This may be done with the contractual obligation that the licensee obtains the database for his own use and not for making query services available to outsiders. Two modes of transgression against the proprietary rights of the vendor are possible. The organization itself may breach its obligation not to provide the query services to others, or some employee who himself may have legitimate access to the database may provide or even sell query services to outsiders. In the latter case the licensee organization may be held responsible, under certain circumstances, for not having properly guarded the proprietary rights of the vendor. Thus there is a security issue associated with the prevention of unauthorized use of proprietary software or databases legitimately installed in a computing system. In the committee's classification of security services, it comes under the heading of resource (usage) control. Namely, the proprietary software is a resource and its owners wish to protect against its unauthorized use (say, for sale of services to outsiders) by a user who is otherwise authorized to access that software.

Resource control as a security service has inspired very few, if any, research and implementation efforts. It poses some difficult technical problems, as well as possible privacy problems. The obvious approach is to audit, on a selective and possibly random basis, access to the proprietary resource in question. Such an audit trail can then be evaluated by human scrutiny, or automatically, for indications of unauthorized use as defined in the present context. It may well be that effective resource control will require recording, at least on a spot-check basis, aspects of the content of a user's interaction with software and/or a database. For obvious reasons, this may provoke resistance.

Another security service that may come into play in this context of resource control is nonrepudiation. The legal aspects of the protection of proprietary rights may require that certain actions taken by a user in connection with the proprietary resource be such that once the actions are recorded, the user is barred from later repudiating his connection to these actions.

It is clear that such measures for resource control, if properly implemented and installed, will serve to deter the unauthorized use of proprietary resources by individual users. But what about the organization controlling the trusted system in which the proprietary resource is embedded? On the one hand, such an organization may well have the ability to dismantle the very mechanisms designed to control the use of proprietary resources, thereby evading effective scrutiny by the vendor or its representatives. On the other hand, the design and nature of security mechanisms are such that the mechanisms are difficult to change selectively, and especially in a manner ensuring that their subsequent behavior will emulate the untampered-with mode, thus making the change undetectable. Thus the expert effort and people involved in effecting such changes will open the organization to danger of exposure.

There is now no documented major concern about the unauthorized use, in the sense of the present discussion, of proprietary programs or databases. It may well be that in the future, when the sale of proprietary databases assumes economic significance, the possibility of abuse of proprietary rights by licensed organizations and authorized users will be an important issue. At that point an appropriate technology for resource control will be essential.

USE OF PASSWORDS FOR AUTHENTICATION

Passwords have been used throughout military history as a mechanism to distinguish friends from foes. When sentries were posted, they were told the daily password that would be given by any friendly soldier who attempted to enter the camp. Passwords represent a shared secret that allows strangers to recognize each other, and they have a number of advantageous properties. They can be chosen to be easily remembered (e.g., "Betty Boop") without being easily guessed by the enemy (e.g., "Mickey Mouse"). Furthermore, passwords allow any number of people to use the same authentication method, and they can be changed frequently (as opposed to physical keys, which must be duplicated). The extensive use of passwords for user authentication in human-to-human interactions has led to their extensive use in human-to-computer interactions.

According to the NCSC Password Management Guideline, "A password is a character string used to authenticate an identity. Knowledge of the password that is associated with a user ID is considered proof of authorization to use the capabilities associated with that user ID" (U.S. DOD, 1985a).

Passwords can be issued to users automatically by a random generation routine, providing excellent protection against commonly used passwords. However, if the random password generator is not good, breaking one may be equivalent to breaking all. At one installation, a person reconstructed the entire master list of passwords by guessing the mapping from random numbers to alphabetic passwords and inferring the random number generator (McIlroy, 1989). For that reason, the random generator must base its seed on a varying source, such as the system clock. Often the user will not find a randomly selected password acceptable because it is too difficult to memorize. This can significantly decrease the advantage of random passwords, because the user may write the password down somewhere in an effort to remember it. This may cause infinite exposure of the password, thus thwarting all attempts to maintain security. For this reason it can be helpful to give a user the option to accept or reject a password, or choose one from a list. This may increase the probability that the user will find an acceptable password.
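Offering the user a short list of generated passwords to choose from might look like the following sketch. The alphabet, the length of 10, and the function names are assumptions made for illustration. The sketch draws from the operating system's randomness source through Python's `secrets` module instead of seeding a generator from the clock; that substitution is a choice of the example, not something the text prescribes.

```python
import secrets
import string

ALPHABET = string.ascii_lowercase + string.digits   # assumed character set


def random_password(length: int = 10) -> str:
    """Build one password from independently chosen random characters."""
    return "".join(secrets.choice(ALPHABET) for _ in range(length))


def candidate_list(count: int = 5, length: int = 10) -> list:
    """Generate several candidates so the user can pick a memorable one."""
    return [random_password(length) for _ in range(count)]


candidates = candidate_list()
```

A user who may choose among several candidates is less likely to write the chosen one down, which is the benefit the paragraph above describes.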

User-defined passwords can be a positive method for assigning passwords if the users are aware of the classic weaknesses. If the password is too short, say, four digits, a potential intruder can exhaust all possible password combinations and gain access quickly. That is why every system must limit the number of tries any user can make toward entering his password successfully. If the user picks very simple passwords, potential intruders can break the system by using a list of common names or a dictionary. A dictionary of 100,000 words has been shown to raise the intruder's chance of success by 50 percent (McIlroy, 1989). Specific guidelines on how to pick passwords are important if users are allowed to pick their own passwords. Voluntary password systems should guide users to never reveal their password to other users and to change their password on a regular basis, a practice that can be enforced by the system. (The NCSC's Password Management Guideline (U.S. DOD, 1985a) represents such a guideline.)
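The case for limiting tries can be made concrete with a small sketch. A four-digit password has only 10,000 possible values, so an unrestricted guesser is certain to succeed; with a limit, the search stops after a handful of attempts. The class name `LoginGuard`, the limit of three, and the sample password are hypothetical, and the password is held in plaintext here only to keep the example short.

```python
MAX_TRIES = 3   # hypothetical limit on consecutive failures


class LoginGuard:
    """Counts consecutive failed attempts and locks the account at the limit."""

    def __init__(self, password: str, max_tries: int = MAX_TRIES):
        self._password = password
        self._max_tries = max_tries
        self._failures = 0

    @property
    def locked(self) -> bool:
        return self._failures >= self._max_tries

    def attempt(self, guess: str) -> bool:
        if self.locked:
            return False            # refuse even a correct guess once locked
        if guess == self._password:
            self._failures = 0
            return True
        self._failures += 1
        return False


search_space = 10 ** 4              # every possible four-digit password
guard = LoginGuard("7315")
guesses_made = 0
for n in range(search_space):       # exhaustive attack, lowest value first
    if guard.locked:
        break
    guesses_made += 1
    if guard.attempt(f"{n:04d}"):
        break
```

In this run the attacker is stopped after three guesses out of the 10,000 that an exhaustive search would need.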

Some form of access control must be provided to prevent unauthorized persons from gaining access to a password list and reading or modifying the list. One way to protect passwords in internal storage is by a one-way hash. The passwords of each user are stored as ciphertext. If the passwords were encrypted, per se, the key would be present and an attacker who gained access to the password file could decrypt them. When a user signs on and enters his password, the password is processed by the algorithm to produce the corresponding ciphertext. The plaintext password is immediately deleted, and the ciphertext version of the password is compared with the one stored in memory. The advantage of this technique is that passwords cannot be stolen from the computer (absent a lucky guess). However, a person obtaining unauthorized access could delete or change the ciphertext passwords and effectively deny service. The file of encrypted passwords should be protected against unauthorized reading, to further foil attempts to guess passwords.
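The store-and-compare procedure just described can be sketched with Python's standard library. The choice of PBKDF2 with SHA-256, the per-user random salt, and the iteration count are assumptions of this example; the text above specifies only that the transformation be one-way.

```python
import hashlib
import hmac
import os

ITERATIONS = 100_000   # assumed work factor; higher values slow guessing


def hash_password(password: str, salt: bytes) -> bytes:
    """One-way transformation: there is no key that reverses it."""
    return hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, ITERATIONS)


def enroll(password: str) -> tuple:
    """Return the (salt, hash) pair to store; the plaintext is not kept."""
    salt = os.urandom(16)
    return salt, hash_password(password, salt)


def verify(password: str, salt: bytes, stored: bytes) -> bool:
    """Hash the offered password and compare it with the stored value."""
    return hmac.compare_digest(hash_password(password, salt), stored)


salt, stored = enroll("Betty Boop")
```

The salt, which the text does not mention, is included so that two users with the same password do not end up with the same stored value.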

The longer a password is used, the more opportunities exist for exposing it. The probability of compromise of a password increases during its lifetime. This probability is considered acceptably low for an initial time period; after a longer time period it becomes unacceptably high. There should be a maximum lifetime for all passwords. It is recommended that the maximum lifetime of a password be no greater than one year (U.S. DOD, 1985a).

NETWORKS AND DISTRIBUTED SYSTEMS

Security Perimeters

Security is only as strong as its weakest link. The methods described above can in principle provide a very high level of security even in a very large system that is accessible to many malicious principals. But implementing these methods throughout the system is sure to be difficult and time consuming. Ensuring that they are used correctly is likely to be even more difficult. The principle of "divide and conquer" suggests that it may be wiser to divide a large system into smaller parts and to restrict severely the ways in which these parts can interact with each other.

The idea is to establish a security perimeter around part of a system and to disallow fully general communication across the perimeter. Instead, there are gates in the perimeter that are carefully managed and audited and that allow only certain limited kinds of traffic (e.g., electronic mail, but not file transfers or general network "datagrams"). A gate may also restrict the pairs of source and destination systems that can communicate through it.
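A gate of this kind reduces to a small filtering rule plus an audit record. The sketch below assumes a hypothetical `Message` type and invented host names; it shows only the decision logic, not a real network device.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Message:
    kind: str          # e.g. "mail", "file-transfer", "datagram"
    source: str
    destination: str


ALLOWED_KINDS = {"mail"}                       # only electronic mail may cross
ALLOWED_PAIRS = {                              # hypothetical host pairs
    ("inside-mailhub", "outside-mailhub"),
    ("outside-mailhub", "inside-mailhub"),
}


def gate_permits(msg: Message, audit_log: list) -> bool:
    """Decide whether msg may cross the perimeter and record the decision."""
    ok = msg.kind in ALLOWED_KINDS and (msg.source, msg.destination) in ALLOWED_PAIRS
    audit_log.append((msg, ok))                # every decision is audited
    return ok


log = []
mail_ok = gate_permits(Message("mail", "inside-mailhub", "outside-mailhub"), log)
ftp_ok = gate_permits(Message("file-transfer", "inside-mailhub", "outside-mailhub"), log)
```

Anything not explicitly listed is refused, so forgetting to add a rule blocks traffic rather than letting it through.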

It is important to understand that a security perimeter is not foolproof. If it passes electronic mail, then users can encode arbitrary programs or data in the mail and get them across the perimeter. But this is less likely to happen by mistake, and it is more difficult to do things inside the perimeter using only electronic mail than to do things using terminal connections or arbitrary network datagrams. Furthermore, if, for example, a mail-only perimeter is an important part of system security, users and managers will come to understand that it is dangerous and harmful to implement automated services that accept electronic mail requests.

As with any security measure, a price is paid in convenience and flexibility for a security perimeter: it is harder to do things across the perimeter. Users and managers must decide on the proper balance between security and convenience.

Viruses

A computer virus is a program that

- is hidden in another program (called its host) so that it runs whenever the host program runs, and
- can make a copy of itself.

When a virus runs, it can do a great deal of damage. In fact, it can do anything that its host can do: delete files, corrupt data, send a message with a user's secrets to another machine, disrupt the operation of a host, waste machine resources, and so on. There are many places to hide a virus: the operating system, an executable program, a shell command file, or a macro in a spreadsheet or word processing program are only a few of the possibilities. In this respect a virus is just like a Trojan horse. And like a Trojan horse, a virus can attack any kind of computer system, from a personal computer to a mainframe. (Many of the problems and solutions discussed in this section apply equally well in a discussion of Trojan horses.)

A virus can also make a copy of itself, into another program or even another machine that can be reached from the current host over a network, or by the transfer of a floppy disk or other removable medium. Like a living creature, a virus can spread quickly. If it copies itself just once a day, then after a week there will be more than 50 copies (because each copy copies itself), and after a month about a billion. If it reproduces once a minute (still slow for a computer), it takes only half an hour to make a billion copies. Their ability to spread quickly makes viruses especially dangerous.
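The growth figures follow from repeated doubling, as this short check shows. The function name is an assumption for illustration, and the model assumes that every copy reproduces exactly once per period and that none is removed.

```python
def copies_after(doublings: int) -> int:
    """Number of copies after the population has doubled this many times."""
    return 2 ** doublings


week = copies_after(7)       # daily doubling for a week: 128 copies
month = copies_after(30)     # daily doubling for 30 days: 1,073,741,824 copies
half_hour = copies_after(30) # the same 30 doublings at one per minute
```

Thirty doublings give about a billion copies whether each doubling takes a day or a minute; only the elapsed time differs.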

There are only two reliable methods for keeping a virus from doing harm:

- Make sure that every program is uninfected before it runs.
- Prevent an infected program from doing damage.

Keeping a Virus Out

Since a virus can potentially infect any program, the only sure way to keep it from running on a system is to ensure that every program run comes from a reliable source. In principle this can be done by administrative and physical means, ensuring that every program arrives on a disk in an unbroken wrapper from a trusted supplier. In practice it is very difficult to enforce such procedures, because they rule out any kind of informal copying of software, including shareware, public domain programs, and spreadsheets written by a colleague. Moreover, there have been numerous instances of virus-infected software arriving on a disk freshly shrink-wrapped from a vendor. For this reason, vendors and at least one trade association (ADAPSO) are exploring ways to prevent contamination at the source. A more practical method uses digital signatures.

Informally, a digital signature system is a procedure that one can run on a computer and that should be believed when it says, "This input data came from this source" (a more precise definition is given above). With a trusted source that is believed when it says that a program image is uninfected, one can make sure that every program is uninfected before it runs by refusing to run it unless

- a certificate says, "The following program is uninfected," followed by the text of the program, and
- the digital signature system says that the certificate came from the trusted source.

Each place where this protection is applied adds to security. To make the protection complete, it should be applied by any agent that can run a program. The program image loader is not the only such agent; others include the shell, a spreadsheet program loading a spreadsheet with macros, or a word processing program loading a macro, since shell scripts, macros, and so on are all programs that can host viruses. Even the program that boots the machine should apply this protection when it loads the operating system. An important issue is distribution of the public key for verifying signatures (see "Digital Signatures," above).
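The check that a loader, shell, or macro interpreter would apply can be sketched as follows. Python's standard library has no public-key signature routines, so this example substitutes a keyed message authentication code (HMAC) for the digital signature. That is a simplification: with HMAC the verifier holds the same secret as the certifying source, whereas a true digital signature lets the verifier hold only a public key. The key value and function names are hypothetical.

```python
import hashlib
import hmac

# Stand-in for the trusted source's signing key (hypothetical value).
TRUSTED_KEY = b"key held by the trusted source"


def certify(program_image: bytes) -> bytes:
    """Run by the trusted source: vouch for this exact program image."""
    return hmac.new(TRUSTED_KEY, program_image, hashlib.sha256).digest()


def may_run(program_image: bytes, certificate: bytes) -> bool:
    """Run by any agent that loads programs: refuse unless the certificate matches."""
    expected = hmac.new(TRUSTED_KEY, program_image, hashlib.sha256).digest()
    return hmac.compare_digest(expected, certificate)


image = b"PRINT 'hello'"
cert = certify(image)
tampered = image + b"; COPY SELF TO NEXT PROGRAM"   # image altered after certification
```

Because the certificate covers the full text of the program, any change made after certification causes the check to fail.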

Preventing Damage

Because there are so many kinds of programs, it may be hard to live with the restriction that every program must be certified as uninfected. This means, for example, that a spreadsheet cannot be freely copied into a system if it contains macros. Because it might be infected, an uncertified program that is run must be prevented from doing damage: leaking secrets, changing data, or consuming excessive resources.

Access control can do this if the usual mechanisms are extended to specify programs, or a set of programs, as well as users. For example, the form of an access control rule could be "user A running program B can read" or "set of users C running set of programs D can read and write." Then a set of uninfected programs can be defined, namely the ones that are certified as uninfected, and the default access control rule can be "user running uninfected" instead of "user running anything." This ensures that by default an uncertified program will not be able to read or write anything. A user can then relax this protection selectively if necessary, to allow the program access to certain files or directories.
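Rules of the form "set of users running set of programs can read and write" might be represented as in the sketch below. The `Rule` type, the object names, and the program names are all hypothetical; the point is that a request is granted only when user, program, and right all match some rule.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    users: frozenset
    programs: frozenset
    rights: frozenset


# Programs certified as uninfected (hypothetical names).
CERTIFIED = frozenset({"editor", "spreadsheet"})

ACL = {
    # Default style of rule: the user running any certified program.
    "budget.txt": [Rule(frozenset({"alice"}), CERTIFIED, frozenset({"read", "write"}))],
    # Selective relaxation: one uncertified program may read one directory.
    "scratch/": [Rule(frozenset({"alice"}), frozenset({"downloaded-macro"}), frozenset({"read"}))],
}


def permitted(user: str, program: str, obj: str, right: str) -> bool:
    """Grant access only if some rule names the user, the program, and the right."""
    return any(
        user in r.users and program in r.programs and right in r.rights
        for r in ACL.get(obj, [])
    )
```

Here `permitted("alice", "downloaded-macro", "budget.txt", "read")` is false even though alice herself may read the file, because the uncertified program is not named in any rule for it.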

Note that strong protection on current personal computers is ultimately impossible, since they lack memory protection and hence cannot ultimately enforce access control. Yet most of the damage from viruses has involved personal computers, and protection has frequently been sought from so-called vaccine programs.

Providing and Using Vaccines

It is well understood how to implement the complete protection against viruses just described, but it requires changes in many places: operating systems, command shells, spreadsheet programs, programmable editors, and any other kinds of programs, as well as procedures for distributing software. These changes ought to be implemented. In the meantime, however, various stopgap measures can help somewhat. Generally known as vaccines, they are widely available for personal computers.

The idea behind a vaccine is to look for traces of viruses in programs, usually by searching the program images for recognizable strings. The strings may be either parts of known viruses that have infected other systems, or sequences of instructions or operating system calls that are considered suspicious. This idea is easy to implement, and it works well against known threats (e.g., specific virus programs), but an attacker can circumvent it with only a little effort. For example, many viruses now produce pseudo-random instances of themselves using encryption. Vaccines can help, but they do not provide any security that can be relied on. They are ultimately out of date as soon as a new virus or a strain of a virus emerges.
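String searching of this kind, and the ease of evading it, can both be seen in a short sketch. The signature table is invented for the example and does not describe any real virus; the single-byte XOR mask stands in for the encryption that self-modifying viruses use.

```python
# Hypothetical signatures: byte strings taken from previously seen infections.
KNOWN_SIGNATURES = {
    "example-virus-a": b"\xde\xad\xbe\xef\x13\x37",
    "example-virus-b": b"INFECT_NEXT_HOST",
}


def scan(program_image: bytes) -> list:
    """Return the names of all known signatures found in the program image."""
    return sorted(
        name for name, sig in KNOWN_SIGNATURES.items() if sig in program_image
    )


clean = b"\x00\x01print hello\x02"
infected = clean + KNOWN_SIGNATURES["example-virus-b"]

# A variant that masks its bytes no longer contains the recognizable string.
masked = bytes(b ^ 0x5A for b in infected)
```

The scanner flags `infected` but reports nothing for `masked`, which carries the same content in disguised form.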

Application Gateways

What a Gateway Is

The term "gateway" has been used to describe a wide range of devices in the computer communication environment. Most devices described as gateways can be categorized as one of two major types, although some devices are difficult to characterize in this fashion.

- The term "application gateway" usually refers to devices that convert between different protocol suites, often including application functionality, for example, conversion between DECNET and SNA protocols for file transfer or virtual terminal applications.
- The term "router" is usually applied to devices that relay and route packets between networks, typically operating at layer 2 (LAN bridges) or layer 3 (internetwork gateways). These devices do not convert between protocols at higher layers (e.g., layer 4 and above).

Mail gateways, devices that route and relay electronic mail (a layer-7 application), may fall into either category. If the device converts between two different mail protocols, for example, X.400 and SMTP, then it is an application gateway as described above. In many circumstances an X.400 message transfer agent (MTA) will act strictly as a router, but it may also convert X.400 electronic mail to facsimile and thus operate as an application gateway. The multifaceted nature of some devices illustrates the difficulty of characterizing gateways in simple terms.

Gateways as Access Control Devices

Gateways are often employed to connect a network under the control of one organization (an internal network) to a network controlled by another organization (an external network such as a public network). Thus gateways are natural points at which to enforce access control policies; that is, the gateways provide an obvious security perimeter. The access control policy enforced by a gateway can be used in two basic ways:

- Traffic from external networks can be controlled to prevent unauthorized access to internal networks or the computer systems attached to them. This means of controlling access by outside users to internal resources can help protect weak internal systems from attack.
- Traffic from computers on the internal networks can be controlled to prevent unauthorized access to external networks or computer systems. This access control facility can help mitigate Trojan horse concerns by constraining the telecommunication paths by which data can be transmitted outside an organization, as well as supporting concepts such as release authority, that is, a designated individual authorized to communicate on behalf of an organization in an official capacity.

Both application gateways and routers can be used to enforce access control policies at network boundaries, but each has its own advantages and disadvantages, as described below.

Application Gateways as PAC Devices

Because an application gateway performs protocol translation at layer 7, it does not pass through packets at lower protocol layers. Thus, in normal operation, such a device provides a natural barrier to traffic transiting it; that is, the gateway must engage in significant explicit processing in order to convert from one protocol suite to another in the course of data transiting the device. Different applications require different protocol-conversion processing. Hence a gateway of this type can easily permit traffic for some applications to transit the gateway while preventing the transit of other traffic, simply by not providing the software necessary to perform the conversion. Thus, at the coarse granularity of different applications, such gateways can provide protection of the sort described above.

For example, an organization could elect to permit electronic mail (e-mail) to pass bidirectionally by putting in place a mail gateway while preventing interactive log-in sessions and file transfers (by not passing any traffic other than e-mail). This access control policy could be refined also to permit restricted interactive log-in, for example, that initiated by an internal user to access a remote computer system, by installing software to support the translation of the virtual terminal protocol in only one direction (outbound).
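The policy in this example amounts to a table of which protocol converters are installed, per application and direction. The sketch below uses invented application and direction labels; traffic for which no converter exists simply cannot transit.

```python
# (application, direction) pairs for which conversion software is installed.
# Labels are hypothetical.
INSTALLED_CONVERTERS = {
    ("mail", "inbound"),
    ("mail", "outbound"),
    ("virtual-terminal", "outbound"),   # log-in initiated by internal users only
}


def can_transit(application: str, direction: str) -> bool:
    """Traffic passes only if the gateway has software to convert it."""
    return (application, direction) in INSTALLED_CONVERTERS
```

Under this table mail passes both ways, interactive log-in passes outbound only, and file transfer does not pass at all.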

An application gateway often provides a natural point at which to require individual user identification and authentication information for finer-granularity access control. This is because many such gateways require human intervention to select services in translating from one protocol suite to another, or because the application being supported intrinsically involves human intervention, for example, virtual terminal or interactive database query. In such circumstances it is straightforward for the gateway to enforce access control on an individual user basis as a byproduct of establishing a "session" between the two protocol suites.

Not all applications lend themselves to such authorization checks, however. For example, a file transfer application may be invoked automatically by a process during off hours, and thus no human user may be present to participate in an authentication exchange. Batch database queries or updates are similarly noninteractive and might be performed when no "users" are present. In such circumstances there is a temptation to employ passwords for user identification and authentication, as though a human being were present during the activity, and the result is that these passwords are stored in files at the initiating computer system, making them vulnerable to disclosure (see "Authentication" in Chapter 3). Thus there are limitations on the use of application gateways for individual access control.

As noted elsewhere in this report, the use of cryptography to protect user data from source to destination (end-to-end encryption) is a powerful tool for providing network security. This form of encryption is typically applied at the top of the network layer (layer 3) or the bottom of the transport layer (layer 4). End-to-end encryption cannot be employed (to maximum effectiveness) if application gateways are used along the path between communicating entities. The reason is that these gateways must, by definition, be able to access protocols at the application layer, above the layer at which the encryption is employed. Hence the user data must be decrypted for processing at the application gateway and then re-encrypted for transmission to the destination (or to another application gateway). In such an event the encryption being performed is not really end-to-end.

If an application-layer gateway is part of the path for (end-to-end) encrypted user traffic, then one will, at a minimum, want the gateway to be trusted (since it will have access to the user data in clear text form). Note, however, that use of a trusted computing base (TCB) for a gateway does not necessarily result in as much security as if (uninterrupted) encryption were in force from source to destination. The physical, procedural, and emanations security of the gateway must also be taken into account as breaches of any of these security facets could subject a user's data to unauthorized disclosure or modification. Thus it may be especially difficult, if not impossible, to achieve as high a level of security for a user's data if an application gateway is traversed as the level obtainable using end-to-end encryption in the absence of such gateways.

In the context of electronic mail the conflict between end-to-end encryption and application gateways is a bit more complex. The secure messaging facilities defined in X.400 (CCITT, 1989a) allow for encrypted e-mail to transit MTAs without decryption, but only when the MTAs are operating as routers rather than as application gateways, for example, when they are not performing "content conversion" or similar invasive services. The privacy-enhanced mail facilities developed for the TCP/IP Internet (Linn, 1989) incorporate encryption facilities that can transcend e-mail protocols, but only if the recipients are prepared to process the decrypted mail in a fashion that suggests protocol-layering violation. Thus, in the context of e-mail, only those devices that are more akin to routers than to application gateways can be used without degrading the security offered by true end-to-end encryption.

Routers as PAC Devices

Since routers can provide higher performance and greater robustness and are less intrusive than application gateways, access control facilities that can be provided by routers are especially attractive in many circumstances. Also, user data protected by end-to-end encryption technology can pass through routers without having to be decrypted, thus preserving the security imparted by the encryption. Hence there is substantial incentive to explore access-control facilities that can be provided by routers.

One way a router at layer 3 (and to a lesser extent at layer 2) can effect access control is through the use of "packet filtering" mechanisms. A router performs packet filtering by examining protocol control information (PCI) in specified fields in packets at layer 3 (and perhaps at layer 4). The router accepts or rejects (discards) a packet based on the values in the fields as compared to a profile maintained in an access-control database. For example, source and destination computer system addresses are contained in layer-3 PCI, and thus an administrator could authorize or deny the flow of data between a pair of computer systems based on examination of these address fields.
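The address-based filtering just described can be sketched in a few lines of Python. This is an illustration only, not any vendor's implementation: the Rule record, the filter_packet function, the first-match-wins ordering, the default-deny behavior, and the IPv4 documentation addresses are all assumptions introduced for the example.

```python
from dataclasses import dataclass
from ipaddress import ip_address, ip_network


@dataclass(frozen=True)
class Rule:
    """One entry in a hypothetical access-control database."""
    src: str      # source network, CIDR notation
    dst: str      # destination network, CIDR notation
    permit: bool  # True = forward, False = discard


def filter_packet(rules, src_addr, dst_addr, default_permit=False):
    """Return True to forward the packet, False to discard it.

    Only the layer-3 source and destination addresses are examined.
    The first matching rule decides (an assumed policy); packets that
    match no rule fall through to the default.
    """
    src, dst = ip_address(src_addr), ip_address(dst_addr)
    for rule in rules:
        if src in ip_network(rule.src) and dst in ip_network(rule.dst):
            return rule.permit
    return default_permit


# Hypothetical profile: one internal host may reach one external host;
# every other flow between the two networks is denied.
ACL = [
    Rule("192.0.2.10/32", "198.51.100.5/32", True),
    Rule("192.0.2.0/24", "198.51.100.0/24", False),
]

if __name__ == "__main__":
    print(filter_packet(ACL, "192.0.2.10", "198.51.100.5"))  # authorized pair
    print(filter_packet(ACL, "192.0.2.11", "198.51.100.5"))  # other internal host
```

The decision depends solely on the address fields carried in the packet, which is what makes the mechanism fast and also what limits its assurance.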

If one "peeks" into layer-4 PCI, an eminently feasible violation of protocol layering for many layer-3 routers, one can effect somewhat finer-grained access control in some protocol suites. For example, in the TCP/IP suite one can distinguish among electronic mail, virtual terminal, and several other types of common applications through examination of certain fields in the TCP header. However, one cannot ascertain which specific application is being accessed via a virtual terminal connection, and so the granularity of such access control may be more limited than in the context of application gateways. Several vendors of layer-3 routers already provide facilities of this sort for the TCP/IP community, so that this is largely an existing access-control technology.
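As a rough illustration of such "peeking," the sketch below reads the port fields at the start of a TCP header and maps the destination port to an application type. The text above does not say which header fields are examined; the use of the destination port and the small table of well-known ports are assumptions made for the example, and the function names are hypothetical.

```python
import struct

# Well-known TCP ports for a few common applications (illustrative subset).
SERVICES = {
    21: "file transfer (FTP control)",
    23: "virtual terminal (Telnet)",
    25: "electronic mail (SMTP)",
}


def tcp_ports(tcp_header: bytes):
    """Return (source port, destination port).

    These are the first two 16-bit big-endian fields of a TCP header.
    """
    return struct.unpack("!HH", tcp_header[:4])


def classify(tcp_header: bytes) -> str:
    """Name the application type implied by the destination port."""
    _, dst_port = tcp_ports(tcp_header)
    return SERVICES.get(dst_port, "unknown")


def permit(tcp_header: bytes, allowed_ports) -> bool:
    """Forward only packets addressed to an allowed service port."""
    _, dst_port = tcp_ports(tcp_header)
    return dst_port in allowed_ports


def make_header(src_port: int, dst_port: int) -> bytes:
    """Build a minimal 20-byte TCP header (SYN set) for demonstration."""
    return struct.pack("!HHIIHHHH", src_port, dst_port, 0, 0, 0x5002, 8192, 0, 0)


if __name__ == "__main__":
    mail = make_header(40000, 25)
    telnet = make_header(40001, 23)
    print(classify(mail), permit(mail, {25}))      # mail is allowed
    print(classify(telnet), permit(telnet, {25}))  # virtual terminal is not
```

The filter can tell that a packet belongs to a virtual terminal session, but nothing in the header reveals which application the user runs once logged in, which is the granularity limit noted above.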

As noted above, there are limitations to the granularity of access control achievable with packet filtering. There is also a concern as to the assurance provided by this mechanism. Packet filtering relies on the accuracy of certain protocol control information in packets. The underlying assumption is that if this header information is incorrect, then packets will probably not be correctly routed or processed, but this assumption may not be valid in all cases. For example, consider an access-control policy that authorizes specified computers on an internal network to communicate with specified computers on an external network. If one computer system on the internal network can masquerade as another authorized internal system (by constructing layer-3 PCI with incorrect network addresses), then this access-control policy could be subverted. Alternatively, if a computer system on an external network generates packets with false addresses, it too can subvert the policy.
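The masquerade can be made concrete with a toy model. In the sketch below, the packet representation, the addresses, and the function names are hypothetical; the point is only that the router sees the addresses a sender claims, not the sender itself.

```python
# Hypothetical policy: only this (internal, external) pair may communicate.
AUTHORIZED_PAIRS = {("10.0.0.5", "203.0.113.9")}


def make_packet(claimed_src: str, dst: str, payload: bytes) -> dict:
    """Build a packet; the sender is free to write any source address."""
    return {"src": claimed_src, "dst": dst, "payload": payload}


def router_permits(packet: dict) -> bool:
    """Filter on the address fields alone, as a packet filter does."""
    return (packet["src"], packet["dst"]) in AUTHORIZED_PAIRS


# Host 10.0.0.7 is not authorized. Sent honestly, its packet is discarded.
honest = make_packet("10.0.0.7", "203.0.113.9", b"data")

# The same host writes the authorized host's address into the header.
forged = make_packet("10.0.0.5", "203.0.113.9", b"data")

if __name__ == "__main__":
    print(router_permits(honest))
    print(router_permits(forged))
```

The forged packet is indistinguishable from a legitimate one at the router, so the policy is subverted for traffic in that direction. Whether replies reach the masquerading host depends on routing, which this sketch does not model.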

Other schemes have been developed to provide more sophisticated access-control facilities with higher assurance, while still retaining most of the advantages of router-enforced access control. For example, the VISA system (Estrin and Tsudik, 1987) requires a computer system to interact with a router as part of an explicit authorization process for sessions across organizational boundaries. This scheme also employs a cryptographic checksum applied to each packet (at layer 3) to enable the router to validate that the packet is authorized to transit the router. Because of performance concerns, it has been suggested that this checksum be computed only over the layer-3 PCI, instead of the whole packet. This would allow information surreptitiously tacked onto an authorized packet PCI to transit the router. Thus even this more sophisticated approach to packet filtering at routers has security shortcomings.
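The consequence of checksumming only the PCI can be shown with a small sketch. It does not reproduce the VISA protocol: HMAC-SHA256 stands in for whatever cryptographic checksum is used, the key and the header and payload values are hypothetical, and key distribution between host and router is not modeled.

```python
import hashlib
import hmac

KEY = b"hypothetical-session-key"  # assumed to be shared by host and router


def tag(data: bytes) -> bytes:
    """Cryptographic checksum; HMAC-SHA256 is a stand-in algorithm."""
    return hmac.new(KEY, data, hashlib.sha256).digest()


def verify_header_only(header: bytes, payload: bytes, t: bytes) -> bool:
    """Router check when the checksum covers the layer-3 PCI only."""
    return hmac.compare_digest(tag(header), t)


def verify_whole_packet(header: bytes, payload: bytes, t: bytes) -> bool:
    """Router check when the checksum covers header and payload.

    Plain concatenation is adequate here because the header length is
    fixed in this example.
    """
    return hmac.compare_digest(tag(header + payload), t)


header = b"SRC=A;DST=B;"       # stands in for the authorized layer-3 PCI
payload = b"quarterly report"  # the data that was actually authorized
smuggled = payload + b" + appended material"

header_tag = tag(header)
whole_tag = tag(header + payload)

if __name__ == "__main__":
    # The header-only check passes either way; the whole-packet check
    # rejects the packet whose payload was altered.
    print(verify_header_only(header, payload, header_tag))
    print(verify_header_only(header, smuggled, header_tag))
    print(verify_whole_packet(header, smuggled, whole_tag))
```

Covering the whole packet closes this gap at the cost of hashing every byte that transits the router, which is the performance concern that motivated the header-only suggestion.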

Conclusions About Gateways

Both application gateways and routers can be used to enforce access control at the interfaces between networks administered by different organizations. Application gateways, by their nature, tend to exhibit reduced performance and robustness, and are less transparent than routers, but they are essential in the heterogeneous protocol environments in which much of the world operates today. As national and international protocol standards become more widespread, there will be less need for such gateways. Thus, in the long term, it would be disadvantageous to adopt security architectures that require that interorganizational access control (across network boundaries) be enforced through the use of such gateways. The incompatibility between true end-to-end encryption and application gateways further argues against such access-control mechanisms for the long term.

However, in the short term, especially in circumstances where application gateways are required due to the use of incompatible protocols, it is appropriate to exploit the opportunity to implement perimeter access controls in such gateways. Over the long term, more widespread use of trusted computer systems is anticipated, and thus the need for gateway-enforced perimeter access control to protect these computer systems from unauthorized external access will diminish. It is also anticipated that increased use of end-to-end encryption mechanisms and associated access control facilities will provide security for end-user data traffic. Nonetheless, centrally managed access control for interorganizational traffic is a facility that may best be accomplished through the use of gateway-based access control. If further research can provide higher-assurance packet-filtering facilities in routers, the resulting system, in combination with trusted computing systems for end users and end-to-end encryption, would yield significantly improved security capabilities in the long term.