Emerging Electro-Optical Technologies

This chapter focuses on emerging active electro-optical (EO) technologies that are rapidly developing and whose implementation is still evolving. The focus is on several coherent systems such as temporal and spatial heterodyning, synthetic aperture ladar, multiple-input, multiple-output (MIMO) imaging, and speckle imaging. Also discussed are emerging approaches in femtosecond sources and quantum technologies. Although these technologies are not fully matured, they may have a significant impact on the field of active EO sensing in the next 5-10 years and beyond.

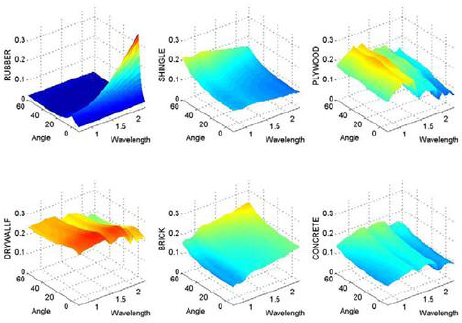

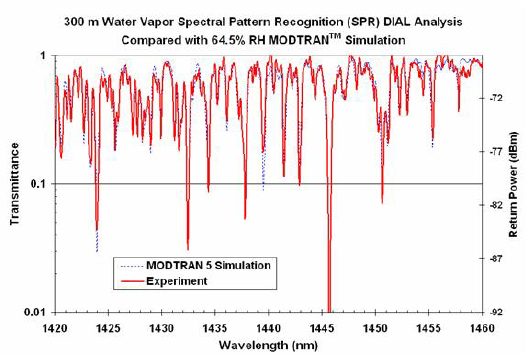

Color can be a very useful discriminant. People experience this when they compare black-and-white pictures to color pictures. Color distinctions are based on the difference in reflectivity versus wavelength. Active multispectral EO can complement conventional ladars when viewing solid targets by adding surface material discrimination information not available with just 2-D or 3-D imaging. Active multispectral imaging of targets can have an advantage over passive imaging because one can control the illumination source. Therefore, even at night, near-IR (NIR) wavelengths can be used, whereas there would not be enough signal to use passive multispectral sensing at these wavelengths. An active EO multispectral sensor combines the benefits of conventional ladar and multispectral wavelengths in a band that has significant color variation in its reflectance. Conventional ladars, and some passive imaging sensors, utilize the shape of a target for detection and/or identification. Two different approaches have been used to deploy active multispectral sensors against hard targets. One is to use the laser wavelengths that are easy to generate, for example 1.064 µm and around 1.5 µm, and take whatever recognition benefit one can gain. The second approach is to determine what wavelengths offer the best active multispectral recognition probabilities and make the lasers appropriate to these discriminants. In the second case, a class separability metric is formulated and optimal wavelengths are selected.1 Figure 3-1 shows reflectivity versus wavelength and angle for six different materials. Figure 3-2 shows reflectivity versus wavelength for leaves, showing significant spectral reflectivity changes versus wavelength in the NIR.

Multispectral and hyperspectral sensing depend on variation in reflectivity versus wavelength for surface materials of the object being viewed. The main fundamental limit is that reflectance, or absorption, reflects only the surface properties of a solid object being viewed.

The key technologies for active multispectral imaging are the illuminator, a multispectral laser to illuminate an object at more than one wavelength, and the associated detector technologies.

To achieve active multispectral imaging, one needs laser sources that cover all of the wavelengths of interest. Therefore, developing active multispectral sensing requires that laser sources either have multiple laser lines or be very broadband.

____________________

1 M. Vaidyanathan, T.P. Grayson, R.C. Hardie, L.E. Myers, and P.F. McManamon, 1997, “Multispectral laser radar development and target characterization,” Proc. SPIE, 3065: 255.

FIGURE 3-1 Reflectivity versus wavelength and angle for six different materials. SOURCE: D.G. Jones, D.H. Goldstein, and J.C. Spaulding, 2006, “Reflective and polarimetric characteristics of urban materials,” AFRL Tech Report, AFRL-MN-EG-TP-2006-7413.

FIGURE 3-2 Leaves from trees have different spectral qualities. Plot shows leaf reflectivity and transmission. SOURCE: Reprinted from Remote Sensing of Environment, 64/3, G.P. Asner, Biophysical and Biochemical Sources of Variability in Canopy Reflectance, 234-253, 1998, with permission from Elsevier.

Because the sensor is active, NIR spectral bands can be detected even at night, and NIR bands have a significant amount of reflectivity variation, or color. This sensor type will be especially useful in high clutter situations or situations where the target is partially obscured.

Active multispectral sensing does not require precision angle/angle resolution because the surface material discrimination is based on the ratio of reflectance at various wavelengths, not on the shape of an object. Therefore, to the first order, active multispectral sensing is not dependent on the diffraction limit, so it can be a useful long-range discriminant. It is also not dependent on exact object shape, which explains its usefulness when a target is partially obscured. The only angular size effect is based on color mixing over a pixel when pixels become larger.
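The ratio-based discrimination described above can be sketched in a few lines. This is a minimal illustration, not a fielded algorithm: the material names and reflectance values in `LIBRARY` are hypothetical, and the sketch assumes equal transmitted energy and identical geometric losses in both bands, so that a common gain factor (range, pixel fill, optics) cancels in the ratio.

```python
# Hypothetical reflectances at (1.064 um, 1.5 um); illustrative values only,
# not measured data from the figures in this chapter.
LIBRARY = {
    "green leaf": (0.45, 0.15),
    "painted metal": (0.30, 0.28),
    "dry soil": (0.25, 0.35),
}


def band_ratio(signal_1064, signal_1500):
    """Ratio of the returns in the two bands."""
    return signal_1064 / signal_1500


def classify(signal_1064, signal_1500, library=LIBRARY):
    """Pick the library material whose band ratio is closest to the measured one."""
    measured = band_ratio(signal_1064, signal_1500)
    return min(library, key=lambda name: abs(band_ratio(*library[name]) - measured))


def simulated_returns(material, common_gain):
    """Returns in both bands, scaled by a gain common to both wavelengths (assumption)."""
    r_1064, r_1500 = LIBRARY[material]
    return common_gain * r_1064, common_gain * r_1500


if __name__ == "__main__":
    # The same material is recovered whether the return is strong or very weak,
    # because only the ratio between bands is used, not the absolute level.
    for gain in (1.0, 1e-3, 1e-6):
        print(gain, classify(*simulated_returns("green leaf", gain)))
```

Because only the ratio is used, the decision does not depend on resolving the object's shape, which is the point made in the paragraph above.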

One disadvantage is that active multispectral sensing requires laser illumination at all wavelengths being used. Three bands would require three lasers, or the ability to divide a single laser into sources at each of the three wavelengths, or else sequencing through the bands. An optical parametric oscillator (OPO) can be used to shift the wavelengths transmitted over time, as long as the object being viewed is stationary over that time period. Alternatively, a broadband laser source containing many wavelengths can be used. A broadband laser has the disadvantage of spreading the laser light over a broad band, reducing the available light at any particular wavelength.

Active multispectral EO sensing, whether it uses multiple lasers or a single laser shifted to multiple wavelengths, has a low scientific barrier to entry. Many countries have excellent laser technology, which is the first requirement to manufacture multiline or broadband lasers. As a result, the comparative state of the art in this area is not very meaningful. Once it is decided to provide laser sources at multiple wavelengths, it is relatively straightforward to make an active multispectral sensor. That said, development of this technology also requires investment in the associated receivers and their integration into a single unit that meets constraints of size, weight, and power (SWaP) and cost.

TEMPORAL HETERODYNE DETECTION: STRONG LOCAL OSCILLATOR

As described in Chapter 2, laser radar systems using direct detection (as in 3-D flash imaging) can be limited by the noise in the detector. One method for reducing the effect of detector noise was taken from the radio frequency (RF) community. Heterodyne detection was originally developed in the RF domain as a way to convert a received signal to a fixed intermediate frequency that can be processed more conveniently, and with less added noise, than the original carrier frequency. This practice has been similarly adapted to optics as a way to detect weak signals.

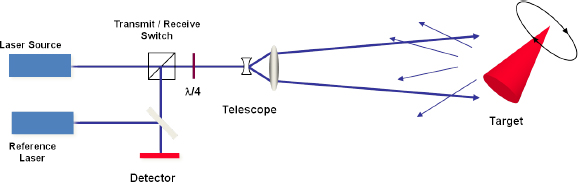

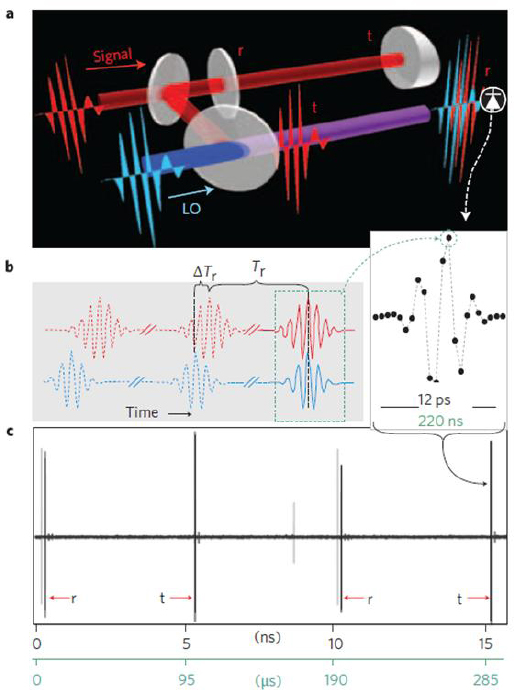

In optical heterodyne detection, a weak input laser signal is mixed with a local oscillator (LO) laser wave by simultaneously illuminating a detector with both signals. For temporal heterodyne it is very important to match the illumination angles of both the LO light and the return signal light across the detector, or else spatial fringes develop. High spatial frequency fringes smaller than a detector can average the interfering signal to zero across the detector. Figure 3-3 illustrates the arrangement of a simple optical heterodyne receiver. A laser transmits a coherent waveform of light toward a target. The reflected light beam is mixed with the reference laser (local oscillator) beam at a beam splitter in the receive optical path and the beams are superimposed on the detector. The resulting photocurrent is proportional to the total optical intensity, which is the square of the total electric field amplitude. If the LO power is increased above all other noise sources, the signal-to-noise ratio becomes limited only by shot noise of the return signal. For temporal heterodyne detection, the reference laser frequency is offset from the laser source by ωif. and the resulting optical intensity has fluctuations at the difference and sum frequencies of the two fields and at double the frequency of each of the fields. The LO frequency is offset so it is possible to determine whether any velocity is toward or away from the sensor. The coherent

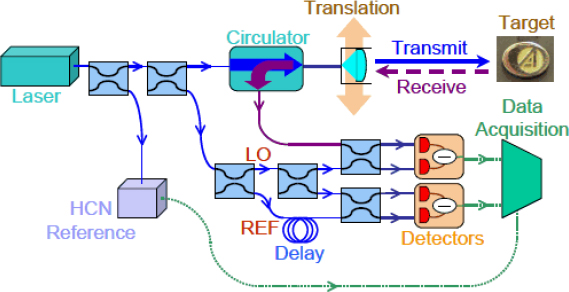

FIGURE 3-3 Simple heterodyne (coherent) laser radar configuration.

receiver is usually designed to isolate the difference frequency component from fluctuations and noise at other frequencies.2
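The mixing process described above can be illustrated numerically. The sketch below is a simplified model, not a receiver design: it works with complex envelopes relative to the transmitted carrier (so the sum and double-frequency terms, which no detector can follow, are omitted), uses kilohertz-scale frequencies for convenience, and the function name and default powers are hypothetical. It shows that the detected intensity carries a beat at the offset frequency plus the Doppler shift, with amplitude 2√(P_sig·P_LO).

```python
import numpy as np


def beat_peak(f_if_hz, doppler_hz, p_sig=1e-3, p_lo=1.0, fs_hz=1e6, n=10_000):
    """Return (frequency, amplitude) of the strongest AC component of |E_sig + E_LO|^2."""
    t = np.arange(n) / fs_hz
    # Complex envelopes relative to the transmitted optical carrier.
    e_sig = np.sqrt(p_sig) * np.exp(2j * np.pi * doppler_hz * t)   # Doppler-shifted return
    e_lo = np.sqrt(p_lo) * np.exp(-2j * np.pi * f_if_hz * t)       # LO offset by f_if
    # A square-law detector responds to the intensity of the summed field.
    current = np.abs(e_sig + e_lo) ** 2
    ac = current - current.mean()                                   # drop the DC term
    spectrum = np.abs(np.fft.rfft(ac))
    freqs = np.fft.rfftfreq(n, d=1.0 / fs_hz)
    k = int(np.argmax(spectrum))
    return freqs[k], 2.0 * spectrum[k] / n


if __name__ == "__main__":
    for f_if in (100e3, 0.0):
        for doppler in (+20e3, -20e3):
            freq, amp = beat_peak(f_if, doppler)
            print(f"offset {f_if:9.0f} Hz, Doppler {doppler:+9.0f} Hz -> beat at {freq:9.0f} Hz")
```

With a 100 kHz offset, Doppler shifts of +20 kHz and −20 kHz appear at 120 kHz and 80 kHz and can be told apart; with no offset both appear at 20 kHz, so the sign of the velocity is lost. This is why the LO frequency is offset.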

For traditional heterodyne detection, the LO field strength must be much higher than the return signal strength in order to mitigate the effects of various noise sources. However, if the detector is sufficiently sensitive, there is little need to mitigate detector noise sources by using a strong LO. If the field strengths are similar, the noise mitigation benefit of a strong LO is lost, but the frequency comparison and narrowband filtering features are retained.

TEMPORAL HETERODYNE DETECTION: WEAK LOCAL OSCILLATOR

Traditional heterodyne detection with a strong LO has an added challenge if arrays of detectors are desired. While a high-resistance receiver, such as a focal plane array with relatively low bandwidth, can operate with low LO power, other standard high-bandwidth IR detectors used in heterodyne detection systems, such as a linear GHz-bandwidth photodiode, can require as much as 1 mW of LO power or more to reach the shot noise limit. This may result in unacceptable heat loads of greater than 10 W for large arrays of 10,000 elements.3 A 256 × 256 array would require even larger LO powers. Using a photon counting detector allows use of a low-power LO and potentially the ability to use the same detector for both coherent and direct detection. In order to use photon counting detectors such as Geiger-mode (GM) avalanche photodiodes (APDs), the LO strength must be matched to the signal strength. In this case, the noise mitigation effects from the strong LO are lost, but the ability to detect frequency shifts is maintained.
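The heat-load figures quoted above follow from simple multiplication. The short sketch below reproduces that arithmetic, assuming (as the text does) about 1 mW of LO power per detector element; the function name is hypothetical.

```python
def total_lo_power_w(n_elements, lo_power_per_element_w=1e-3):
    """Total LO power delivered to an array if every element needs the same LO power."""
    return n_elements * lo_power_per_element_w


if __name__ == "__main__":
    for label, n in (("10,000-element array", 10_000), ("256 x 256 array", 256 * 256)):
        print(f"{label}: {total_lo_power_w(n):.1f} W of LO power")
```

At 1 mW per element, a 10,000-element array needs about 10 W and a 256 × 256 array (65,536 elements) about 65.5 W, which is the motivation for a weak-LO approach.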

The block diagram for weak LO heterodyne detection is the same as that shown in Figure 3-3, with the detector being replaced by a GM-APD array. A laser transmits a coherent waveform toward a target, the reflected beam is mixed with the LO at a beam splitter in the receive optical path, and the beat signal is detected by the receiver. The object is imaged onto the detector array, but in this case the readout is simply the photon arrival time. The size of the angle/angle resolution element depends on the detector angular subtense and the size of the focused optical spot. To detect more than one photon per angle/angle resolution element per reset of the detector, the receive optics can be constructed so that each focused optical spot is spread across a group of pixels (called a macropixel).4 Each macropixel then acts as a photon-number-resolving detector whose dynamic range is equal to the number of pixels contained in the macropixel. For example, a 32 × 32 GM-APD can be broken up into an 8 × 8 array of photon-

____________________

2 P. McManamon, 2012, “Review of ladar: A historic, yet emerging, sensor technology with rich phenomenology,” Optical Engineering 51(6): 060901.

3 L. Jiang and J. Luu, “Heterodyne detection with a weak local oscillator,” Applied Optics 47(10), 1486-1503, (2008).

4 L. Jiang et al., 2007, “Photon-number resolving detector with 10-bits resolution,” Phys. Rev. A, 75(6): 062325.

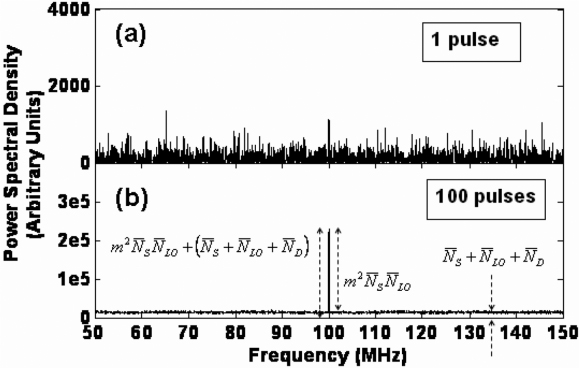

FIGURE 3-4 Power spectral density (PSD) of the detected current for a temporal heterodyne laser radar receiver, shown for (a) 1 pulse and (b) 100 pulses of incoherent averaging. SOURCE: L. Jiang, E. Dauler, and J. Chang, 2007, “Photon-number-resolving detector with 10 bits of resolution,” Physical Review A 75 (6):062325.

number-resolving detector macropixels. Each macropixel can be a 4 × 4 array of pixels, or 16 pixels, resulting in a 4-bit dynamic range. When plotted as a function of time for a single macropixel, the photon arrival times map out the frequency of the beat signal. The detector readout rate must be high enough to sample the beat frequency between the LO and the return signal, or something must be done to mitigate aliasing. For a single pixel detector this means the beat frequency cannot be sampled if it is higher than half the array read out rate, but the macropixel allows detector bandwidth-limited sampling of the time of arrival of photons. With large enough macropixels, beat frequencies can be sampled up to the detector bandwidth rather than being limited by the detector array frame rate.
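The macropixel example above (a 32 × 32 array grouped into 4 × 4 macropixels) can be checked with a short calculation. This is a minimal sketch with hypothetical function names; the dynamic range in bits is taken as log2 of the number of pixels per macropixel, and the single-pixel sampling limit is the usual Nyquist limit of half the readout rate.

```python
import math


def macropixel_layout(array_side, macro_side):
    """Return (macropixels per side, pixels per macropixel, dynamic range in bits)."""
    if array_side % macro_side != 0:
        raise ValueError("macropixel size must divide the array size evenly")
    macros_per_side = array_side // macro_side
    pixels_per_macro = macro_side * macro_side
    bits = math.log2(pixels_per_macro)
    return macros_per_side, pixels_per_macro, bits


def max_unaliased_beat_hz(sample_rate_hz):
    """Highest beat frequency that can be sampled without aliasing (Nyquist limit)."""
    return sample_rate_hz / 2.0


if __name__ == "__main__":
    side, pixels, bits = macropixel_layout(32, 4)
    print(f"{side} x {side} macropixels, {pixels} pixels each, {bits:.0f}-bit dynamic range")
```

For the 32 × 32 case this gives an 8 × 8 grid of macropixels with 16 pixels each, that is, a 4-bit dynamic range, matching the text.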

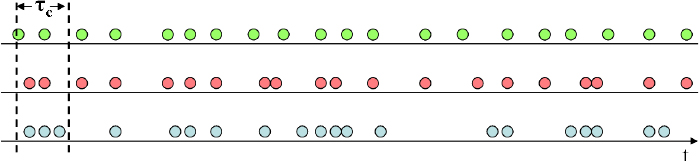

In experiments, the return signal for each pulse is coherently integrated (that is, a fast Fourier transform of the photon arrival times for each pulse is performed) and then incoherently averaged over multiple pulses; that is, the power spectral densities (PSDs) are summed over all collected pulses. If the LO and transmit signals are offset in frequency by f_offset, the beat signal is located at f_offset. If the target has a velocity component along the ladar’s line of sight, the PSD should show a peak at the sum of the offset frequency and the target’s Doppler frequency. The PSD of several return pulses can be averaged to smooth out the curve, as shown in Figure 3-4.
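The processing chain described above (an FFT per pulse, followed by averaging of the PSDs over pulses) can be sketched as follows. This is a simplified simulation under stated assumptions, not the experimenters' code: photon counts in each time bin are modeled as Poisson with a rate modulated at the beat frequency, the beat phase is random from pulse to pulse, detector dead time is ignored, and all parameter values and names are hypothetical.

```python
import numpy as np


def averaged_psd(n_pulses, f_beat_hz, n_bins=1000, dt_s=1e-8,
                 mean_counts=0.1, visibility=0.5, seed=0):
    """Coherently transform each pulse, then incoherently average the PSDs."""
    rng = np.random.default_rng(seed)
    t = np.arange(n_bins) * dt_s
    psd_sum = np.zeros(n_bins // 2 + 1)
    for _ in range(n_pulses):
        phase = rng.uniform(0.0, 2.0 * np.pi)           # beat phase differs pulse to pulse
        rate = mean_counts * (1.0 + visibility * np.cos(2.0 * np.pi * f_beat_hz * t + phase))
        counts = rng.poisson(rate)                       # photon counts per time bin
        spectrum = np.fft.rfft(counts - counts.mean())   # coherent step within one pulse
        psd_sum += np.abs(spectrum) ** 2                 # incoherent step: add powers
    freqs = np.fft.rfftfreq(n_bins, d=dt_s)
    return freqs, psd_sum / n_pulses


if __name__ == "__main__":
    f_offset_hz, f_doppler_hz = 10e6, 2.5e6              # hypothetical values
    freqs, psd = averaged_psd(100, f_offset_hz + f_doppler_hz)
    print(f"PSD peak near {freqs[np.argmax(psd)] / 1e6:.2f} MHz")
```

Summing powers rather than complex spectra is what makes the averaging incoherent: the random pulse-to-pulse phase would cancel the beat if the complex spectra were added, whereas the PSD peak at f_offset plus the Doppler frequency survives and the noise floor smooths out.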

There are important limitations to this technique that limit its applicability and the measurement concept of operations (CONOPS). Principal among these are the dynamic range and visibility constraints and requirements on LO power control, as well as the timescale constraints that link photocount rate, beat frequency, and vibrational frequency. These limitations were discussed in more detail in the section on laser vibrometry in Chapter 2.

As indicated in the preceding section, it is critical to maintain coherence between the wavefronts of the signal and the LO. While it may be possible to minimize the effects on the system side, the transmission medium may ultimately determine the performance of heterodyne detection. In addition to large fluctuations of attenuation produced by things like fog, rain, and smoke, inhomogeneities in the atmosphere itself may produce wavefront distortion. As a result of this wavefront distortion caused by air turbulence, a large portion of the light power can be converted into higher order modes, which makes it difficult to match the LO and return signal patterns. Therefore, heterodyne detection over large distances through the atmosphere is inherently difficult.

Unlike direct detection receivers, the dominant noise source in heterodyne or coherent receivers is the shot noise generated by the local oscillator beam. For a matched filter receiver, that effective noise is equal to one detected photon per resolution element (in both time and space).5 To contend efficiently with this inherent noise, a coherent detection system should be designed so that on the order of one (or a few) signal photon(s) is detected per angle/angle/range resolution cell per pulse. Below one detected signal photon per resolution element per pulse, the required transmitter power scales as the square root of the pulse repetition frequency (PRF). Therefore, if a 10 W, 100 Hz transmitter is the optimal coherent design (giving ~1 photon per resolution element), then roughly 32 W (10 W × √10) would be required for a higher-PRF, 1 kHz system. This can make higher pulse energies and lower PRFs the most energy-efficient solution for many measurement problems, even though such designs may be less feasible than others, whether technologically or where low SWaP and/or high reliability are required. Direct detection receivers do not have this fundamental noise constraint and can have much less than one detected noise photon per resolution element per pulse.6

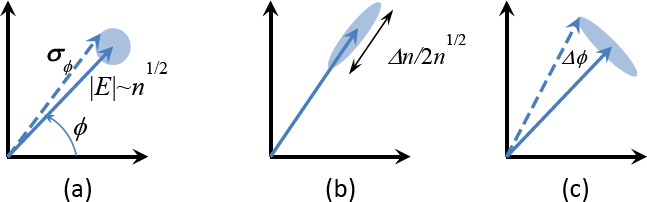

Another fundamental limit of heterodyne detection is the effect of speckle present in highly coherent light. As discussed, the LO and signal must be temporally coherent. They also need to be spatially coherent across the face of the detector and avoid having an additional linear phase difference, or they will produce spatial fringes across a detector, destroying the signal. In many usage scenarios the signal is reflected from optically rough surfaces, producing randomized phases and a “salt and pepper” intensity modulation on the image known as speckle.7 Speckle arises whenever the illumination source is sufficiently temporally coherent, as for a laser, and it also occurs in microwave synthetic-aperture radar. Techniques such as averaging independent speckle realizations can be performed to reduce the speckle.8

Coherent detection techniques require narrow linewidth lasers with coherent illumination. The coherence length must be longer than the two-way round-trip distance traveled by the pulse, or a sample of the outgoing signal must be stored until the signal returns and then used to develop the LO. In the second case the coherence length of the illuminating laser must still be longer than twice the depth of the target. A method of shifting the LO frequency is required to determine the direction of the velocity. This is usually accomplished with an acousto-optic modulator. Larger, high-bandwidth arrays are also important. Finally, adaptive optics may help reduce the effects of turbulence.
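To give a feel for what these coherence requirements imply for laser linewidth, the sketch below converts a required coherence length into a maximum linewidth. It assumes the common Lorentzian-lineshape convention L_c = c/(πΔν); other conventions differ by factors of order unity, so the results are order-of-magnitude guides only. The 10 km range and 10 m target depth are hypothetical example values.

```python
import math

C_M_PER_S = 299_792_458.0  # speed of light in vacuum


def coherence_length_m(linewidth_hz):
    """Coherence length for a Lorentzian line, L_c = c / (pi * linewidth) (assumed convention)."""
    return C_M_PER_S / (math.pi * linewidth_hz)


def max_linewidth_hz(required_coherence_length_m):
    """Largest linewidth whose coherence length still meets the requirement."""
    return C_M_PER_S / (math.pi * required_coherence_length_m)


if __name__ == "__main__":
    range_m, target_depth_m = 10e3, 10.0      # hypothetical scenario
    # Case 1: LO taken directly from the laser, so coherence must span the round trip.
    print(f"round trip:   {max_linewidth_hz(2 * range_m) / 1e3:.1f} kHz")
    # Case 2: delayed sample of the outgoing signal used as LO, so only twice the target depth.
    print(f"target depth: {max_linewidth_hz(2 * target_depth_m) / 1e6:.2f} MHz")
```

Under these assumptions the round-trip requirement calls for a linewidth of a few kilohertz, while the stored-reference approach relaxes it to a few megahertz, roughly in proportion to the ratio of range to target depth.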

Published literature on the development of the laser systems described above would be an indicator of progress. Indicators of progress in heterodyne detector systems may also be found in literature and/or research on some of the applications of coherent ladar (vibrometry, spectroscopy, synthetic aperture ladar, etc.). Work on high-bandwidth arrays may provide additional indicators. For heterodyne approaches that do not have sensitive detectors, work on alternating current (AC)-coupled arrays would also be an indicator.

There are a number of advantages to using heterodyne detection rather than direct detection. First, as mentioned above, a weak signal can be amplified by a strong LO and the signal-to-noise ratio with heterodyne detection depends only on the signal strength, the detector quantum efficiency, and the signal bandwidth. These results are true of both temporal and spatial heterodyne detection. This means that high gain detectors like those used in the direct detect 3-D ladars are not necessarily needed, and shot-noise-limited detection is possible with low-tech photodiodes.

Second, the heterodyne receiver provides high discrimination against background light and other radiation. Unlike direct detection, where background light causes problems when it is of the same order of magnitude as the signal power, in heterodyne detection the background light must be comparable to the LO power, which in many cases is made quite high and can be set to dominate the background light.

____________________

5 P. Gatt and S. Henderson, 2001, “Laser radar detection statistics: A comparison of coherent and direct detection receivers,” Proc. SPIE, 4377: 251.

6 Personal communication from Sammy Henderson, President, Beyond Photonics, April 20, 2013.

7 C. Dainty, ed., 1984, Laser Speckle and Related Phenomena, Springer Verlag.

8 J.W. Goodman, 2007, Speckle Phenomena in Optics: Theory and Applications, Roberts & Co.

Furthermore, the coherent detection bandwidth can be controlled by a postdetection electronic filter that can be as narrow as desired.9 Heterodyne detection usually has much narrower receiver bandwidths than direct detection.

In temporal heterodyne detection, a third feature of coherent detection takes advantage of the fact that the amplified output occurs at the difference frequency between the LO and signal beams. This sensitivity to frequency difference makes it possible to measure the phase or frequency shift of the signals and hence obtain Doppler measurements for moving targets. This type of measurement is not directly possible with direct detect systems, which only measure intensity. It takes multiple range measurements to indirectly measure velocity using direct detection.
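The Doppler measurement mentioned above rests on the standard two-way Doppler relation f_D = 2v/λ for a monostatic sensor. The sketch below applies it in both directions; the 1.55 µm wavelength, the offset frequency, and the sign convention (positive velocity means the target is approaching) are assumptions chosen for illustration.

```python
def doppler_shift_hz(radial_velocity_m_s, wavelength_m):
    """Two-way Doppler shift for a monostatic ladar; positive for an approaching target."""
    return 2.0 * radial_velocity_m_s / wavelength_m


def radial_velocity_m_s(beat_hz, offset_hz, wavelength_m):
    """Recover the signed radial velocity from the measured beat and the known LO offset."""
    return (beat_hz - offset_hz) * wavelength_m / 2.0


if __name__ == "__main__":
    wavelength = 1.55e-6          # hypothetical operating wavelength
    offset = 100e6                # hypothetical LO offset frequency
    for v in (+1.0, -1.0):
        beat = offset + doppler_shift_hz(v, wavelength)
        print(f"v = {v:+.1f} m/s -> beat {beat / 1e6:.3f} MHz "
              f"-> recovered v = {radial_velocity_m_s(beat, offset, wavelength):+.3f} m/s")
```

At this wavelength a radial velocity of 1 m/s corresponds to a shift of about 1.29 MHz, so even slow motion is readily measurable from a single coherent measurement.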

While heterodyne detection offers the potential for highly sensitive measurements, there are a number of practical limitations to the scheme. Coherent ladars are essentially interferometers. If the phases of the two beams are not well matched, the fringes will oscillate back and forth and the signal will be washed out. This feature lowers efficiency in temporal heterodyne. For heterodyne detection, the two fields from the transmitter and the LO must be spatially locked in phase at the detector. The two beams must be coincident and, to provide maximum signal-to-noise ratio, their diameters must be equal. The beams must propagate in the same direction and the wavefronts must have the same curvature. For spatial heterodyne the LO and return signal propagate in slightly different directions, causing spatial fringes to develop. Finally, for temporal heterodyne, the beams must be identically polarized, so that their electric vectors will be coincident.10 These requirements are called “coherent superposition,” and failure to meet them can cause a loss of signal reception.

A good way to deal with some of these constraints is to use a single laser for both the LO and the signal and, for temporal heterodyne, to add an acousto-optic modulator to create the frequency difference between the two. In this configuration, the relative phase of the two beams is fairly stable even if the source does not exhibit low phase noise. In addition to the superposition requirements, the laser must be coherent, and the coherence length must either be longer than the round-trip distance or, provided master oscillator drift is compensated by some technique such as delaying a sample of the master oscillator to use as the local oscillator, at least longer than the depth of the target.

Heterodyne (or coherent) detection can be used in a number of applications such as coherent Doppler ladar measurements, vibrometry, spectroscopy, and very high resolution imaging techniques such as synthetic aperture ladar and inverse synthetic aperture ladar. Several of these applications will be discussed in more detail in later sections. Using a photon counting detector allows use of a low power local oscillator and potentially the ability to use the same detector for both coherent and direct detection.

Conclusion 3-1: Advantages of shot-noise-limited detection, high background discrimination, and measurement of phase or frequency shifts in addition to intensity make heterodyne detection a compelling and promising technology.

Conclusion 3-2: Heterodyne detection can be used with a weak local oscillator if detectors are already sensitive enough so that a strong local oscillator is not required as a method of increasing the receiver sensitivity.

According to Voxtel, “Conventional optical imagers, including imaging ladars, are limited in angle/angle spatial resolution by the diffraction limit of the telescope aperture. As the aperture size increases, the angle/angle resolution improves; as the range increases, spatial resolution degrades. Thus, high-resolution, real-beam imaging at long ranges requires large telescope diameters. Imaging resolution is further dependent on wavelength, with longer wavelengths producing coarser angle/angle resolution. Thus, the limitations of diffraction are most apparent in the radio-frequency domain (as opposed to the optical domain).”11

____________________

9 S. Jacobs, 1988, “Optical heterodyne (coherent) detection,” Am. J. Phys. 56(3): 235.

10 O.E. DeLange, 1968, “Optical heterodyne detection,” IEEE Spectrum 5(10): 77.

Buell et al. describe it as follows: “A technique known as synthetic-aperture radar (SAR) was invented in the 1950s to overcome this limitation: In simple terms, a large radar aperture is synthesized by processing the pulses emitted at different locations from a radar aperture as it moves, typically on an airplane or a satellite. The resulting image resolution is characteristic of significantly larger apertures. For example, the Canadian RadarSat-II, which flies at an altitude of about 800 km, has an antenna size of 15 × 1.5 meters and operates at a wavelength of 5.6 cm. Its real-aperture resolution is on the order of 1 kilometer, while its synthetic-aperture resolution (with a transmission bandwidth of 100 MHz) is as fine as 3 m. This resolution enhancement is made possible by keeping track of the phase history of the radar signal as it travels to the target and returns from various scattering centers in the scene. The final synthetic-aperture radar image is reconstructed from many pulses transmitted and received during a synthetic-aperture evolution time using sophisticated signal processing techniques.”12

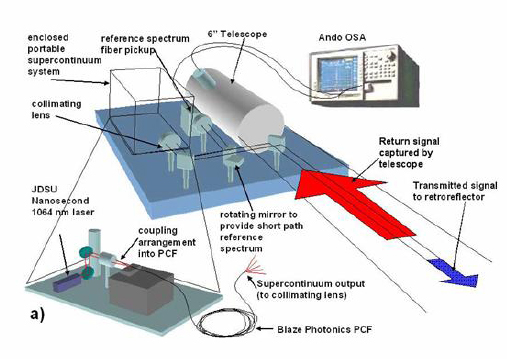

An alternative description of how a high-resolution synthetic-aperture ladar (SAL) image is created is as follows. At a given instant, a laser waveform is transmitted from either a monostatic or bistatic aperture. The laser light reflects off the target, and the return field is captured using either spatial or temporal heterodyne. To date, almost all SAL work has been done using temporal heterodyne, but the key issue is capturing a sample of the pupil plane field as large as the real receive aperture. A short time later, at another location, the same thing is done, and then again and again as the transmit and receive apertures move. If motion issues can be compensated, then a physically large representation of the pupil plane field is captured, which can then be Fourier transformed to form a high-resolution image. Since for monostatic operation both the transmitter and the receiver move, the effective extent of the synthesized pupil plane field is almost twice the distance flown.

In recent years, researchers have investigated ways to apply the techniques and processing tools of RF SARs to optical laser radars. According to Buell et al., “There are several motivations for developing such an approach in the optical or visible domain. The first is simply that humans are used to seeing the world at optical wavelengths. Optical SAL would potentially be easier than microwave radar for humans to interpret, even without specialized training. Second, optical wavelengths are around 20,000 times shorter than RF wavelengths, and can therefore provide much finer spatial resolution and/or much faster imaging times.”13 For the same target resolution requirement, a typical synthetic aperture motion distance will be many kilometers for SAR but only meters for SAL. Over time, new applications may arise for which additional resolution requirements impose longer motion distances on SAL.

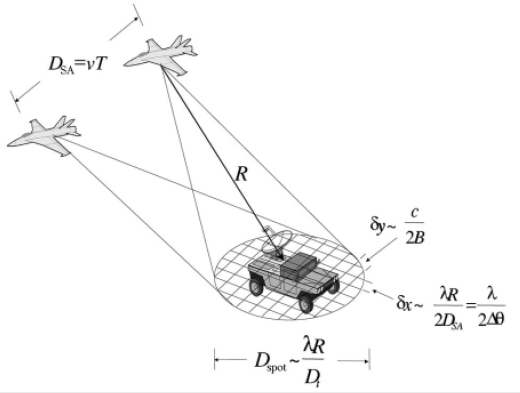

The SAL concept is illustrated in Figure 3-5. This paragraph is drawn from Beck et al.: “A platform with a transmitter-receiver module moves with velocity ν while it illuminates a target with light of mean wavelength λ and receives the scattered light. The imaging angular resolution in the direction of travel of the platform is given approximately by δx = λ/(2Δθ), where the change in azimuth angle Δθ as seen by an observer at the target at range R is Δθ = D_SA/R and the synthetic-aperture length developed during flight time T is D_SA = ν × T.”14 The range resolution, in the orthogonal direction, is determined by the bandwidth, B, of the transmitted waveform: δy = c/(2B), so long as the receiver can measure the returned bandwidth. Coherent (heterodyne) detection is used to measure the phase history of the returned ladar signals throughout the synthetic-aperture formation time. According to Beck et al., “Two of the main types of synthetic aperture active sensors are (1) spotlight mode (Figure 3-5), in which the transmitted beam is held at one position on the target for the coherent dwell period and then moved to another spot, and (2) strip mode, in which the transmitted beam is continuously scanned across a target. Most of this discussion applies to either case. In strip mode, the aperture synthesis time is limited by the beamwidth of the sensor and the velocity of the platform (the time during which the target is illuminated). Smaller real apertures result in larger illuminating spots and concomitantly longer synthetic apertures, which leads to the nonintuitive (from a conventional imaging perspective) result that the azimuthal resolution in strip-mode SAL is half of the real-aperture diameter”; that is, the smaller the transmitter aperture, the better the resolution.15 Bistatic configurations, where the transmit and receive apertures are not collocated, can also be considered.

____________________

11 Voxtel, http://www.virtualacquisitionshowcase.com/document/602/brochure. Accessed on March 14, 2014.

12 W.F. Buell, N.J. Marechal, J.R. Buck, R.P. Dickinson, D. Kozlowski, T.J. Wright, and S.M. Beck, 2004 “Synthetic Aperture Imaging Ladar” Crosslink (Summer): 45-49.

13 Ibid.

14 S. Beck, J. Buck, W. Buell, R. Dickinson, D. Kozlowski, N. Marechal, and T. Wright, 2005 “Synthetic-aperture imaging laser radar: Laboratory demonstration and signal processing,” Appl. Opt. 44(35): 7621-7629.

FIGURE 3-5 Spotlight synthetic-aperture ladar (SAL). The illuminating spot size, D_spot, at the target is determined by the diffraction limit of the transceiver optic, with diameter Dt, corresponding to the imaging resolution of a conventional imager with the same aperture. The resolution in the direction of travel (azimuthal, δx) is determined by the wavelength and evolved aperture length. (For strip-map SAL, this length is limited by the illuminating spot size at the target.) The resolution in the orthogonal direction (range, δy) is determined by the transmitted waveform bandwidth, B. The angle Δθ is the angle subtended by the synthetic aperture as viewed from an image element at the target. To obtain the resolution in the ground plane, a simple rotation from the slant plane to the ground plane is performed. SOURCE: S.M. Beck, J.R. Buck, W.F. Buell, R.P. Dickinson, D.A. Kozlowski, N.J. Marechal, and T.J. Wright, 2005, “Synthetic aperture imaging laser radar: laboratory demonstration and signal processing,” Appl. Opt. 44(35): 7621.
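The resolution relations quoted above from Beck et al. (δx = λ/(2Δθ) with Δθ = D_SA/R, δy = c/(2B), and the strip-mode limit of half the real-aperture diameter) can be collected into a short calculator. This is a sketch under stated assumptions: the example wavelengths (3 cm for RF, 1.5 µm for optical), the 100 km range, and the 0.1 m resolution goal are hypothetical values chosen only to illustrate the scaling, and the function names are not from the source.

```python
C_M_PER_S = 299_792_458.0  # speed of light in vacuum


def azimuth_resolution_m(wavelength_m, synthetic_aperture_m, range_m):
    """delta_x = lambda / (2 * delta_theta), with delta_theta = D_SA / R."""
    delta_theta = synthetic_aperture_m / range_m
    return wavelength_m / (2.0 * delta_theta)


def synthetic_aperture_length_m(wavelength_m, range_m, azimuth_res_m):
    """Invert the azimuth relation: D_SA = lambda * R / (2 * delta_x)."""
    return wavelength_m * range_m / (2.0 * azimuth_res_m)


def range_resolution_m(bandwidth_hz):
    """delta_y = c / (2 * B)."""
    return C_M_PER_S / (2.0 * bandwidth_hz)


def stripmap_azimuth_resolution_m(real_aperture_m):
    """Strip-mode limit quoted in the text: half of the real-aperture diameter."""
    return real_aperture_m / 2.0


if __name__ == "__main__":
    range_m, goal_m = 100e3, 0.1
    for label, wavelength in (("RF, 3 cm", 0.03), ("optical, 1.5 um", 1.5e-6)):
        d_sa = synthetic_aperture_length_m(wavelength, range_m, goal_m)
        print(f"{label}: synthetic aperture of {d_sa:g} m for {goal_m} m azimuth resolution")
    print(f"1 GHz bandwidth -> {range_resolution_m(1e9) * 100:.1f} cm range resolution")
```

For these example values the RF system must fly about 15 km to reach the resolution that the optical system reaches in about 0.75 m, a factor of 20,000 that simply mirrors the wavelength ratio.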

Figure 3-6 shows an early embodiment of a laboratory SAL demonstration system developed at The Aerospace Corporation based on wide-bandwidth frequency-modulated continuous-wave (FMCW) waveforms. According to Beck et al. “The components employed are all common, off-the-shelf, telecommunication fiber-based devices allowing a compact system to be assembled that can easily be isolated from environmental effects: The source is split into five paths, for target illumination, target—LO, reference, reference—LO, and wavelength reference. A circulator is used to recover the return pulse, which

____________________

15 Ibid.

FIGURE 3-6 Component layout for the fiber-based SAIL system. The components employed are all common, off-the-shelf, telecom, fiber-based devices, allowing a very compact system to be assembled that can be easily isolated from environmental effects. The source is split into five paths for the target illumination, target-local oscillator (LO), reference (REF), reference-local oscillator, and wavelength reference. A circulator is used to recover the return pulse, which is mixed with the target-local oscillator in a balanced heterodyne detector. The reference channel is delayed by a fiber loop and then mixed with the reference-local oscillator in a similar manner. The synthetic aperture is created by using a translation stage to scan the aperture across the target. A molecular wavelength reference cell (hydrogen cyanide -HCN) provides a pulse to pulse frequency absolute reference. SOURCE: from Walter Buell (variation of Figure 3 in S.M. Beck, J.R. Buck, W.F. Buell, R.P. Dickinson, D.A. Kozlowski, N.J. Marechal, and T.J. Wright, 2005, “Synthetic aperture imaging ladar: laboratory demonstration and signal processing,” Appl. Opt. 44(35): 7621).

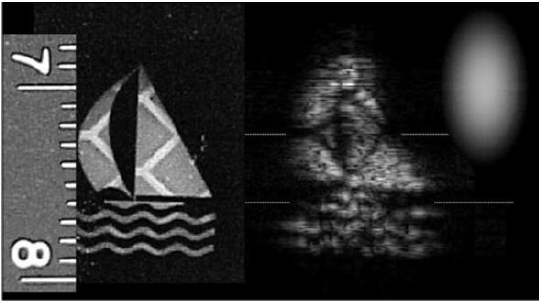

is mixed with the target—LO in a balanced heterodyne detector. The reference channel is delayed by a fiber loop and then mixed with the reference—LO in a similar manner. The synthetic aperture is created by use of a translation stage to scan the aperture across the target.”16Figure 3-7 shows a typical laboratory image from the system of Figure 3-6 (see caption for details).

In 2003, DARPA initiated the Synthetic Aperture Lidar Tactical Imaging (SALTI) program with the aim of achieving high-resolution synthetic aperture lidar imagery from an airborne platform at tactical ranges, moving SAL from the laboratory to operationally relevant environments. The performance characteristics of the SALTI program are classified, but the system did achieve synthetic aperture resolution exceeding the real-aperture diffraction-limited resolution of the system. The program progressed through DARPA Phase 3 before being terminated in 2007.

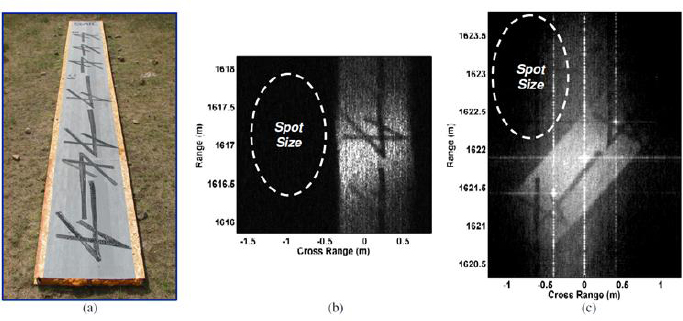

Lockheed Martin Coherent Technologies (LMCT) also pursued an airborne SAL system17 and presented ground and airborne results at the Laser Sensing and Communications (LS&C) meeting in Toronto in 2011 (Figure 3-8). While the RSAS SALTI system employed a fiber-based linear FMCW system like the Aerospace system, the LMCT system used a wide-bandwidth (7 GHz, 2 cm resolution), pulse-coded approach.

____________________

16 Ibid.

17 B. Krause, J. Buck, C. Ryan, D. Hwang, P. Kondratko, A. Malm, A. Gleason, and S. Ashby, 2011, “Synthetic aperture ladar flight demonstration,” in CLEO:2011, Laser Applications to Photonic Applications, OSA Technical Digest (CD) (Optical Society of America, 2011), PDPB7.

FIGURE 3-7 SAL-boat target and mosaicked SAL image results. The real-aperture diffraction-limited illuminating spot size is represented at the right. A picture of the target is shown at the left. This target consists of the same retroreflective material used for the triangle images, placed behind a transparency containing the negative of the sailboat image. The image was formed by scanning the target in overlapping strips and then pasting these images together to form a larger image. Some degradation is present due to the phase-screening effects of the transparency film; however, the pattern of the retroreflective material is clearly visible. The range to target in this example was ~2 m, with range diversity achieved by placing the target at a 45 degree angle with respect to the incident light.

SOURCE: S.M. Beck et al. op. cit.

FIGURE 3-8 SAL demonstration images. (a) Photograph of the target. (b) SAL image, no corner cube glints. Cross range resolution = 3.3 cm, 30× improvement over the spot size. Total synthetic aperture = 1.7 m, divided into 10 cm subapertures and incoherently averaged to reduce speckle noise. (c) SAL image with corner cube glint references for clean phase error measurement. Cross range resolution = 2.5 cm, 40× improvement over the spot size. Total synthetic aperture = 5.3 m, divided into 10 cm subapertures and incoherently averaged to reduce speckle noise.

SOURCE: B. Krause, J. Buck, C. Ryan, D. Hwang, P. Kondratko, A. Malm, A. Gleason, and S. Ashby, 2011, “Synthetic aperture ladar flight demonstration,” in CLEO:2011, Laser Applications to Photonic Applications, OSA Technical Digest (CD) (Optical Society of America, 2011), PDPB7.

The range resolution of any ladar, including SAL, is limited by the transmitted waveform bandwidth, assuming the receiver can capture the returned signal at this bandwidth. This limits performance from technologies that cannot achieve significant transmitter bandwidth (such as CO2 lasers as employed in the Northrop-Grumman (NGES) approach to the SALTI program). In the absence of bandwidth, angular resolution in the cross-motion direction is limited by the size of the real aperture, usually in elevation. The diffraction limit in this dimension can be expanded by a factor of almost two using techniques described in the MIMO section. The along-track angular resolution is limited by the size of the synthetic aperture:

$$\theta_{\text{along-track}} \approx \frac{\lambda}{2L + D}$$

where λ is the optical wavelength, D is the real aperture diameter in the along-track direction, and L is the distance moved in the along-track direction. In microwave SAR the size of the real aperture is neglected.
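As a rough numerical illustration of the two resolution limits just discussed, the sketch below evaluates the standard bandwidth-limited range resolution, c/(2B), and an along-track angular resolution of the form λ/(2L + D). The second expression is an assumption chosen to be consistent with the definitions of D and L given here and with the remark that SAR neglects D. The function names, the 1.55 µm wavelength, and the aperture values are illustrative only and are not taken from any of the systems described.

```python
C = 299_792_458.0  # speed of light in vacuum, m/s


def range_resolution_m(bandwidth_hz):
    """Range resolution set by the transmitted waveform bandwidth: c / (2B)."""
    return C / (2.0 * bandwidth_hz)


def along_track_resolution_rad(wavelength_m, synth_len_m, real_aperture_m=0.0):
    """Assumed form lambda / (2L + D); D = 0 recovers the usual SAR expression."""
    return wavelength_m / (2.0 * synth_len_m + real_aperture_m)


if __name__ == "__main__":
    # 7 GHz is the LMCT waveform bandwidth quoted in the text.
    print(f"range resolution at 7 GHz: {range_resolution_m(7e9) * 100:.1f} cm")
    # Hypothetical geometry: 1.55 um wavelength, 10 cm synthetic length, 5 cm optic.
    theta = along_track_resolution_rad(1.55e-6, 0.10, 0.05)
    print(f"along-track resolution: {theta * 1e6:.2f} microradians")
```

For the 7 GHz case the first function returns roughly 2 cm, in line with the resolution quoted for the LMCT waveform.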

The next major limitation is on range performance (how far away the viewed object can be, not the range resolution), which is constrained by both atmospheric transmission and the modest aperture sizes that aperture synthesis enables. The effect of the atmosphere is discussed further in a later section.

For example, consider the problem of achieving high spatial resolution at low elevation angle through long atmospheric slant paths. At shorter wavelengths, the diffraction-limited resolution is very good, but the propagation through the atmosphere, both from molecular scattering and aerosols, is quite limited. Range can be increased at longer wavelengths where the scattering attenuation is reduced, but at the expense of spatial resolution, until aperture sizes become impractical. Synthetic aperture techniques offer a solution to this dilemma by enabling operation at longer wavelengths, using aperture synthesis to achieve high resolution in the along-track direction with modest aperture sizes.

Of course, the SWaP advantages of modest aperture sizes come at the price of collecting fewer photons on receive from each image resolution element. This makes synthetic aperture ladar a rather power-hungry technique, places a premium on laser and detector efficiency, and drives laser power requirements. The fundamental consideration is that whenever resolution is increased, photons are scattered from a smaller resolution cell. With a monolithic real aperture, the smaller resolution cell is accompanied by a proportional increase in receiver collection area. With a synthetic aperture, it is not.

An engineering challenge for SAL is motion compensation. The full sample of the field in the large pupil plane synthetic aperture is available only to the extent that any movement while the pupil plane field is being collected has been removed. The quality of the motion compensation can limit the SAL resolution.
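To give a sense of scale for the motion compensation problem, the sketch below converts an uncompensated line-of-sight position error into a round-trip optical phase error, 4πδ/λ, and inverts that relation for a chosen phase budget. The π/4 budget and the 1.55 µm wavelength are hypothetical values selected only for illustration; the text does not specify a tolerance.

```python
import math


def round_trip_phase_error_rad(los_error_m, wavelength_m):
    """Two-way path change of 2*delta gives a phase error of 4*pi*delta/lambda."""
    return 4.0 * math.pi * los_error_m / wavelength_m


def max_los_error_m(phase_budget_rad, wavelength_m):
    """Largest uncompensated line-of-sight error that stays within the budget."""
    return phase_budget_rad * wavelength_m / (4.0 * math.pi)


if __name__ == "__main__":
    # Hypothetical budget of pi/4 radians at a 1.55 um wavelength.
    tolerance = max_los_error_m(math.pi / 4.0, 1.55e-6)
    print(f"allowed residual motion: {tolerance * 1e9:.0f} nm")
```

Under these assumed numbers the residual motion must be known to roughly a tenth of a micron, which illustrates why motion compensation quality can limit SAL resolution.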

Atmospheric turbulence is expected to be a limiting phenomenon for SAL imaging at long range. A detailed model of the impact of refractive turbulence on image formation has been presented by Karr18 based on earlier treatments by Fried.19 A limited discussion of the effects of atmospheric turbulence on ladars is given in Chapter 4.

For a coherent ladar technique, the technology burdens that SAL places on the receiver are relatively modest. Deramp-on-receive systems, which are linear frequency modulated (FM) systems with a chirped LO, have modest detector bandwidth requirements. They do not require the full bandwidth of the transmitted waveform, but just enough bandwidth to accommodate the range depth of the target. This is sometimes called stretch processing, with the LO chirping along with the return signal to reduce the required detector bandwidth. Because the image formation is through synthetic aperture processing of the received signal, in principle only a single detector is required. If, however, only one detector is used, the area imaged is limited to the diffraction-limited spot of the real beam. This is not a problem in SAR, since real beam microwave resolution is so poor, but it would severely limit the area covered for SAL. In practical systems covering

____________________

18 T.J. Karr, 2003, “Synthetic aperture ladar resolution through turbulence,” Proc. SPIE 4976: 22.

19 D.L. Fried, 1966, “Optical resolution through a randomly inhomogeneous medium for very long and very short exposures,” Journal of the Optical Society of America, 56 (10): 1372.

TABLE 3-1 Required SAL Laser Power for Two Sets of Modeling Assumptions: Airborne System and Spaceborne System

| Parameter | Airborne | Spaceborne |
| --- | --- | --- |
| SNR | 10 | 10 |
| D (m) | 0.05 | 0.5 |
| L | 15 | 15 |
| H (km) | 20 | 350 |
| DF | 0.5 | 0.5 |
| B (GHz) | 3.0 | 1.5 |
| P (W) | 90 | 2500 |

NOTE: D, real aperture diameter in along-track direction; L, distance moved in along-track direction; H, Height of the platform; DF, duty factor; B, bandwidth; P, Power.

SOURCE: W. Buell, N. Marechal, D. Kozlowski, R. Dickinson, S. Beck, 2002, “SAIL: Synthetic aperture imaging ladar,” Meeting of the MSS Specialty Group on Active E-O Systems, Vol. I, C15.

useful areas, typically one uses a modest array of photodetectors to build up multiple real-aperture spots to form an image of a moderate size area. The Raytheon SALTI system used a 1-D array of p-doped-intrinsic-n-doped (p-i-n) photodiodes and scanned that 1-D array. Synthetic aperture processing was performed on each detector separately. Although with sufficient LO power, coherent detection can reach the shot noise limit, there is still a premium on low-noise receivers in order to reduce the required LO power, especially for large arrays. Besides the detectors themselves, the optics of a coherent ladar system, particularly ones with an array of detector elements, can be quite challenging, since the wavefront overlap must be optimized for good heterodyne efficiency.
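The earlier observation that a deramp-on-receive receiver needs only enough bandwidth to cover the range depth of the target can be made concrete with a short calculation. In the sketch below, the spread of beat frequencies after mixing with the chirped LO is the chirp rate multiplied by the round-trip delay spread across the target. The 3 GHz chirp bandwidth matches the airborne column of Table 3-1; the 1 ms chirp duration and 10 m range depth are hypothetical.

```python
C = 299_792_458.0  # speed of light in vacuum, m/s


def deramp_beat_bandwidth_hz(chirp_bandwidth_hz, chirp_duration_s, range_depth_m):
    """Beat-frequency spread after stretch processing of a linear FM chirp."""
    chirp_rate_hz_per_s = chirp_bandwidth_hz / chirp_duration_s
    delay_spread_s = 2.0 * range_depth_m / C  # round trip across the target depth
    return chirp_rate_hz_per_s * delay_spread_s


if __name__ == "__main__":
    # Hypothetical waveform: 3 GHz chirp over 1 ms, target 10 m deep in range.
    beat = deramp_beat_bandwidth_hz(3.0e9, 1.0e-3, 10.0)
    print(f"detector bandwidth needed: {beat / 1e3:.0f} kHz of a 3 GHz chirp")
```

With these assumed values the detector needs on the order of 200 kHz rather than the full 3 GHz, which is the sense in which the receiver bandwidth burden is modest.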

The requirements on the transmitter are more challenging. As noted above, the modest aperture size that SAL enables means that a smaller fraction of the return light is captured, which must be made up by increased transmitter power. Assuming resolution requirements and aperture diameter are held fixed, the required laser power for a SAL system scales as R³, where R is the range to the target. Some example link budgets for notional SAL systems20 are reproduced in Table 3-1. In addition to the transmitter power requirements, SAL also places stringent requirements on local oscillator phase stability. The LO must remain phase coherent over the round trip of the range to target, unless a sample of the transmitted waveform is stored for use in beating against the return signal. One storage method to reduce coherence length requirements is to input a sample of the master oscillator into a long fiber delay and use the output of that fiber as an LO rather than creating the LO from the master oscillator as it exists when the signal is returned from the target. Finally, although less stringent than the LO phase stability requirements, the transmitter waveform quality can be challenging. For a linear FM system, the chirp must be linear to within, say, 20 degrees of phase error over the chirp. This requirement need not be levied entirely on the hardware, however. If transmit waveform phase errors can be monitored, they can be compensated for in the SAL processing. This is the approach taken in the early work at Aerospace. Later work by Bridger Photonics and the University of Montana21 employing similarly wide bandwidth chirps has achieved the required linearity through sophisticated laser frequency and phase stabilization techniques, simplifying the required processing. The

____________________

20 W. Buell, N. Marechal, D. Kozlowski, R. Dickinson, and S. Beck, 2002, “SAIL: Synthetic aperture imaging ladar,” Meeting of the MSS Specialty Group on Active E-O Systems, Vol. I, C15.

21 S. Crouch and Z.W. Barber, 2012, “Laboratory demonstrations of advanced synthetic aperture ladar techniques,” Optics Express, 20(22): 24237.

Bridger system has demonstrated resolutions of a few microns in laboratory settings and centimeter resolution in long-range field tests.
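Two of the transmitter considerations above lend themselves to a quick calculation: the cubic scaling of required laser power with range, and the length of fiber needed if the LO is stored in a delay line matched to the round trip. The sketch below is illustrative only. It takes 90 W at 20 km from the airborne column of Table 3-1 as a reference point and treats platform height as the range, which is a simplification, and it assumes a fiber group index of 1.468, a typical value for silica fiber that is not given in the text.

```python
C = 299_792_458.0  # speed of light in vacuum, m/s


def scaled_laser_power_w(ref_power_w, ref_range_m, new_range_m):
    """Cubic range scaling with resolution and aperture diameter held fixed."""
    return ref_power_w * (new_range_m / ref_range_m) ** 3


def round_trip_delay_s(range_m):
    """Time over which the LO must stay coherent if no delay line is used."""
    return 2.0 * range_m / C


def fiber_delay_length_m(range_m, group_index=1.468):
    """Fiber length whose transit time equals the free-space round trip.

    The group index is an assumed typical value, not a figure from the text.
    """
    return C * round_trip_delay_s(range_m) / group_index


if __name__ == "__main__":
    print(f"power at 40 km: {scaled_laser_power_w(90.0, 20e3, 40e3):.0f} W")
    print(f"round trip at 20 km: {round_trip_delay_s(20e3) * 1e6:.0f} microseconds")
    print(f"matched fiber delay: {fiber_delay_length_m(20e3) / 1e3:.1f} km")
```

Doubling the range multiplies the required power by eight under this scaling, and a 20 km range calls for tens of kilometers of fiber, which indicates why both power and LO coherence are described as challenging.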

Pointing control on SAL systems is not overly stressing, as the transmitter illuminates an area large compared to the SAL spatial resolution. The pointing requirement is simply that the illumination covers the desired target area. Pointing knowledge is a much tighter requirement: the pointing direction must be known to a fraction of a resolution element.

As noted above, several contractors have developed and demonstrated synthetic aperture ladar systems using a variety of technological approaches and with a significant range of performance parameters. The other country that has demonstrated significant interest in SAL imaging has been China, with a series of papers since 2004 addressing both hardware demonstrations22 (based largely on U.S. published results) and theoretical analyses, including an advanced algorithm for atmospheric compensation.23

It is reasonable to expect that the advantages of SAL and related advanced coherent active imaging techniques will drive the research, development, and deployment of such systems in a variety of countries. This will drive development of high power coherent laser systems capable of achieving wide bandwidth waveforms, as well as long coherence length LOs. Advances in modest size low-noise coherent receiver arrays and techniques for improving heterodyne mixing efficiency over array detectors can also be expected.

A closely related technology to SAL is inverse synthetic aperture ladar (ISAL), in which the transceiver is stationary and the target moves and/or changes aspect relative to the transceiver. This technology is of significant interest for ground-based imaging of space objects, including GEO satellites. ISAL has been the subject of research programs in the United States, such as the DARPA LongView program24 and research at AFRL.25 It has also been the subject of research in China.26,27 It is natural to expect that organizations researching SAL would also be researching ISAL. From the information available in the open literature, the technological developments in China along these lines are not as advanced as those in the United States, but there is ample evidence of interest in continued development.

Conclusion 3-3: Synthetic aperture ladar enables high-resolution active imaging at long range with modest size receiver optics. A synthetic aperture ladar has the potential to provide long-range, easily interpretable (person friendly) imagery because optical systems tend to use mostly diffuse scattering from the viewed object.

Conclusion 3-4: Significant foreign interest in synthetic aperture ladar technology has been demonstrated.

____________________

22 W. Jin, 2010, “Matched filter in synthetic aperture ladar imaging,” Acta Optica Sinica 2010-07.

23 L. Guo, M. Xing, Y. Tang, and J. Dan, 2008, “A novel modified omega-k algorithm for synthetic aperture imaging lidar through the atmosphere,” Sensors 8: 3056.

24 http://www.globalsecurity.org/space/systems/long-view.htm.

25 C.J. Pellizzari, C.L. Matson, and R. Gudimetla, 2011, “Inverse synthetic aperture LADAR for geosynchronous space objects—Signal-to-noise analysis,” Proceedings of the Advanced Maui Optical and Space Surveillance Technologies Conference, Wailea, Maui, Hawaii, September 13-16, S. Ryan, ed.

26 X. Zhao, X. Zeng, C. Cao, Z. Feng, and C. Fu, 2009, “Research on inverse synthetic aperture ladar,” Proc. SPIE, 7382, International Symposium on Photoelectronic Detection and Imaging 2009: Laser Sensing and Imaging, August 27.

27 G. Liang, 2009, “Study on experiment and algorithm of synthetic aperture imaging lidar,” Ph.D. dissertation, Xi’an University of Electronic Science and Technology.

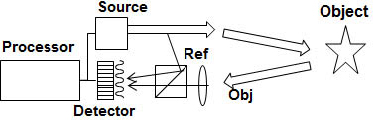

FIGURE 3-9 Diagram of off-axis holography experiment.

SOURCE: J. Marron, Raytheon, Space and Airborne Systems, “3D Holographic Laser Radar,” presentation to the committee, March 5, 2013.

In summary, synthetic aperture ladar is becoming an increasingly mature coherent ladar technology that offers considerable system-level and performance advantages over real-aperture imaging ladar systems. The technology base continues to evolve, enabling improved angular resolution and increased standoff range. It is reasonable to expect that this technology will proliferate to other countries and initial indicators of this can be seen.

DIGITAL HOLOGRAPHY/SPATIAL HETERODYNE

Digital holography, also referred to as spatial heterodyne detection, utilizes two light beams with a spatial/angular difference (as opposed to a frequency difference) between them. In digital holography, the return signal from an illuminated object is coherently interfered with a reference beam. The reference beam could be a glint/retroreflector in close physical proximity to the object of interest or a local oscillator signal that traverses a similar optical path length to the return from the object in order to maintain coherence between the two optical beams. Figure 3-9 depicts a typical holographic arrangement using a reference or local oscillator beam. The laser is split into two beam paths; the transmitter path illuminates the target, and the reflection from the target is interfered with the reference beam, using a beam splitter, on a detector focal plane array (FPA). The FPA for spatial heterodyne can be a framing array; it does not have to have high bandwidth, because only the fringes across the array are being detected, not any high bandwidth signals.

The interference between the object and reference beams is recorded on the charge-coupled device (CCD) detector. Although detectors record only intensity and do not directly preserve the phase profile of the electric field, digital holography provides a means to extract the spatial phase variation across an optical aperture using the spatial beat frequency between the signal and the LO. As previously discussed in the section on synthetic aperture ladar, having access to both the amplitude and phase of the optical field enables capabilities not readily possible with intensity-only imaging; with digital post-processing, the exact electric field at any point can be calculated from spatial heterodyne phase extraction. This can allow for digital refocusing and 3-D imaging.
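The phase extraction described above can be illustrated with a one-dimensional toy model. In the sketch below, a weak complex return field is interfered with a strong tilted reference, only the intensity is recorded, and the complex field is then recovered by multiplying the recorded fringes by the known reference tilt and low-pass filtering. This demodulate-and-filter step is one common way to isolate the image term and is not necessarily the processing used in the systems cited here. The array size, tilt, cutoff, amplitudes, and field coefficients are all hypothetical.

```python
import cmath
import math

N = 64        # detector pixels across the pupil (hypothetical)
K_REF = 16    # reference-beam tilt, in fringe cycles across the array
K_CUT = 3     # low-pass cutoff, in cycles across the array
A_REF = 10.0  # reference (LO) amplitude, much stronger than the signal


def plane_wave(k, n):
    """Unit-amplitude tilted wave sampled at pixel n."""
    return cmath.exp(2j * math.pi * k * n / N)


def make_signal_field():
    """Hypothetical band-limited complex return field (|k| <= 2)."""
    coeffs = {-2: 0.3 - 0.1j, -1: 0.2j, 0: 0.5, 1: -0.4 + 0.2j, 2: 0.1}
    return [sum(c * plane_wave(k, n) for k, c in coeffs.items())
            for n in range(N)]


def record_hologram(signal):
    """The detector sees only the intensity of signal plus tilted reference."""
    return [abs(s + A_REF * plane_wave(K_REF, n)) ** 2
            for n, s in enumerate(signal)]


def recover_field(intensity):
    """Shift the signal-times-conjugate-reference term to baseband, then low-pass."""
    demod = [i_n * plane_wave(K_REF, n) / A_REF
             for n, i_n in enumerate(intensity)]
    # Keep only spatial frequencies |k| <= K_CUT (a handful of DFT bins).
    bins = {k: sum(d * plane_wave(-k, n) for n, d in enumerate(demod)) / N
            for k in range(-K_CUT, K_CUT + 1)}
    return [sum(c * plane_wave(k, n) for k, c in bins.items())
            for n in range(N)]


signal = make_signal_field()
recovered = recover_field(record_hologram(signal))
max_error = max(abs(a - b) for a, b in zip(signal, recovered))
print(f"largest complex-field recovery error: {max_error:.2e}")
```

The tilt is chosen large enough that the object autocorrelation and the conjugate image fall outside the low-pass window, so in this idealized, noise-free case both amplitude and phase are recovered from intensity-only data.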

When the scenario or requirements allow a retroreflector to be placed in proximity to the target, the coherence requirements on the system may be lessened. In addition, aberrations imparted by turbulence in the atmosphere are nearly identical if the retroreflector is within the same isoplanatic patch as the target, meaning that the effects of turbulence will be minimized. Early long-range holography experiments were performed at up to 12 km.28

In heterodyne detection, the image term is proportional to the strength of the LO component multiplied by the strength of the object component; an extremely weak image signal can be magnified by

____________________

28 J.W. Goodman, D.W. Jackson, M. Lehmann, and J. Knotts, 1969, “Experiments in long-distance holographic imagery,” Appl Optics 8: 1581.

a strong reference or LO signal. This can be strongly advantageous when extracting the image component of interest. An important feature of a strong LO arrangement is that the object’s autocorrelation term will be extremely weak and negligible compared to the strength of the image term, allowing the use of lower spatial frequencies while still achieving separation between image terms.29 The maximum strength of the local oscillator will be limited by the electron well capacity of the detector pixel. Detector saturation occurs when the well capacity is exceeded.
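The trade described above, in which a strong LO amplifies the image term while the pixel well capacity caps the usable LO strength, can be sketched with per-pixel photoelectron counts. In the example below, the image (cross) term is taken as twice the square root of the product of the LO and object counts, the object autocorrelation term is the object count itself, and saturation is checked at the brightest point of a fringe. All numerical values, including the 100,000-electron well, are hypothetical.

```python
import math


def heterodyne_terms(lo_electrons, signal_electrons):
    """Per-pixel photoelectron counts for the terms of |LO + signal|^2."""
    return {
        "lo": lo_electrons,
        "autocorrelation": signal_electrons,
        "image": 2.0 * math.sqrt(lo_electrons * signal_electrons),
    }


def saturates(lo_electrons, signal_electrons, well_capacity_electrons):
    """True if the brightest point of a fringe exceeds the pixel well capacity."""
    peak = (math.sqrt(lo_electrons) + math.sqrt(signal_electrons)) ** 2
    return peak > well_capacity_electrons


if __name__ == "__main__":
    terms = heterodyne_terms(lo_electrons=50_000, signal_electrons=5)
    gain = terms["image"] / terms["autocorrelation"]
    print(f"image term {terms['image']:.0f} e-, {gain:.0f}x the autocorrelation term")
    print("saturated:", saturates(50_000, 5, well_capacity_electrons=100_000))
```

In this assumed case a return of only a few photoelectrons per pixel produces an image term of about a thousand electrons, while the LO still leaves headroom below the well capacity.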

Techniques using this technology have several different names. In addition to digital holography, spatial heterodyne detection, and lensless imaging, scientists and engineers in the field also refer to this technology as “holographic aperture ladar”30 and “spatially processed image detection and ranging (SPIDAR).”31

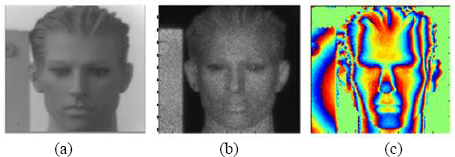

In addition to performing single-wavelength digital holography, multiple-wavelength holography is a subset that allows for fine-resolution 3-D imaging. Figure 3-10 shows results using multiple-wavelength digital holography. As shown in Figure 3-10 (b) and (c), active imaging provides intensity and phase information about the object, giving both shading and, with multiple wavelengths, depth information.

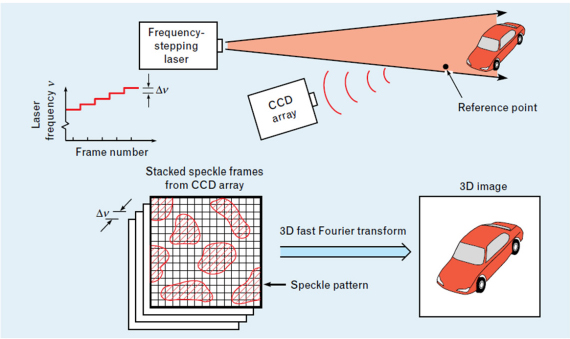

By utilizing two wavelengths, a contour map of the object surface can be generated by recording two holograms at two wavelengths; a fringe pattern is generated by reconstructing and superimposing the holograms.32 Alternatively, using a tunable laser source, a series of holograms is recorded for a set of laser frequencies.33 The collected dataset has coordinates of (spatial frequency, spatial frequency, laser frequency). By Fourier transforming the hologram data, the resulting image has coordinates of (angle, angle, range). The range resolution ΔRres of the system is given by:34

$$\Delta R_{\mathrm{res}} = \frac{c}{2\,\Delta\nu_{\mathrm{tot}}}$$

where Δνtot is the total frequency bandwidth over which the source is tuned. Additionally, the range ambiguity interval is given by ΔRunamb = c /(2 Δνinc) where Δνinc is the frequency sampling increment for the laser. Using multiple-wavelength holography, it is possible to measure a large range with high depth resolution without 2π ambiguities of the phase difference that can occur with the two-wavelength version.
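A short numerical sketch may help connect the tuning parameters to the range axis of the transformed data. The example below evaluates the range resolution, c/(2Δνtot), and the ambiguity interval, c/(2Δνinc), and then Fourier transforms the phasors that a single pixel would record over the laser frequency steps to locate a surface in range. The number of steps, the 1 GHz increment, and the surface depth are hypothetical, the tuned bandwidth is taken as the number of steps times the increment (a convention choice), and the constant phase from the starting frequency is ignored.

```python
import cmath
import math

C = 299_792_458.0  # speed of light in vacuum, m/s


def range_resolution_m(total_tuning_hz):
    """Range resolution c / (2 * total tuned bandwidth)."""
    return C / (2.0 * total_tuning_hz)


def unambiguous_range_m(freq_step_hz):
    """Range ambiguity interval c / (2 * frequency increment)."""
    return C / (2.0 * freq_step_hz)


def range_profile(phasors):
    """Magnitude of the DFT over laser-frequency index for one pixel."""
    m_tot = len(phasors)
    return [abs(sum(p * cmath.exp(-2j * math.pi * m * q / m_tot)
                    for m, p in enumerate(phasors)))
            for q in range(m_tot)]


NUM_STEPS = 32        # hypothetical number of laser frequencies
FREQ_STEP_HZ = 1.0e9  # hypothetical tuning increment

res = range_resolution_m(NUM_STEPS * FREQ_STEP_HZ)
amb = unambiguous_range_m(FREQ_STEP_HZ)

# One pixel viewing a surface 10 resolution cells beyond the reference plane;
# the round-trip phase at frequency step m is 4*pi*m*step*depth/c.
depth = 10 * res
phasors = [cmath.exp(4j * math.pi * m * FREQ_STEP_HZ * depth / C)
           for m in range(NUM_STEPS)]
profile = range_profile(phasors)
peak_bin = max(range(NUM_STEPS), key=profile.__getitem__)
print(f"resolution {res * 1e3:.2f} mm, ambiguity interval {amb * 100:.1f} cm, "
      f"peak in range bin {peak_bin}")
```

The ratio of the ambiguity interval to the range resolution equals the number of frequency steps, so finer steps extend the unambiguous depth while a wider total tuning range sharpens the resolution.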

Digital holographic EO sensing has military applications in intelligence, surveillance, and reconnaissance (ISR), target tracking, target identification, and directed energy. Beyond these specific applications, digital holographic EO sensing is more broadly used in biomedical imaging/microscopy, imaging through scattering media, horizontal path imaging, and 3-D imaging for commercial and entertainment purposes. A broad overview of the latest advancements in digital holography was published recently35 and articles therein discuss research topics across a wide variety of applications.

Digital holography is a sensing technology. However, the outcomes of spatial heterodyne detection may influence the overall system architecture for a larger system, such as a directed energy system, which needs to transmit a laser beam. Using digital holography, phase aberrations present in the atmosphere between the object and sensor can be estimated. Electro-optic phase modulators, liquid crystal spatial light modulators, or piezo mirrors can pre-distort the outgoing illumination beam to compensate

____________________

29 J.C. Marron, 2009, “Photon noise in digital holographic detection,” AFRL-RD-PS-TP-2009-1006.

30 B.D. Duncan and M.P. Dierking, 2009, “Holographic aperture ladar,” Appl. Opt. 48: 1168.

31 J.C. Marron, 2008, “Spatially processed image detection and ranging (SPIDAR),” in IEEE LEOS Meeting (IEEE 2008), 509.

32 B. Hildebrand and K. Haines, 1967, “Multiple-wavelength and multiple-source holography applied to contour generation,” J. Opt. Soc. Am. 57: 155.

33 A. Wada, M. Kato, and Y. Ishii, 2008, “Multiple-wavelength digital holographic interferometry using tunable laser diodes,” Appl. Optics 47: 2053.

34 J.C. Marron and K.S. Schroeder, 1993, “Holographic laser-radar,” Opt. Lett. 18: 385.

35 M.K. Kim, Y. Hayasaki, P. Picart, and J. Rosen, 2013, “Digital holography and 3D imaging: introduction to feature issue,” Appl Optics 52: Dh1-Dh1.

FIGURE 3-10 (a) Passive broadband image of a mannequin taken at a 100 m range using 1.0 to 1.65 micron light; (b) active image of same object using 1.6 µm illumination; (c) 3-D phase difference acquired through active illumination. SOURCE: J.C. Marron, R.L. Kendrick, S.T. Thurman, N.L. Seldomridge, T.D. Grow, C.W. Embry, and A.T. Bratcher, 2010, “Extended-range digital holographic imaging,” Proc. SPIE, Laser Radar Technology and Applications XV, 7684, 76841J.

for these aberrations. As a result, digital holography may influence the entire system architecture, from how outgoing beams are transmitted to how return beams are received.

For the current horizontal path imaging, published results show an extended range of 1.5 km and voxel dimensions of 3 cm. Work was performed at 1.6 µm using an Er:YAG laser. The detector array was a commercial InGaAs array with 640 × 512 pixels. Images were taken of a truck, a mannequin, a resolution chart, a model missile, and some calibration blocks.

Spatial heterodyning is an active, emerging technology. Work has been performed in research laboratories as well as in field test demonstrations.36 Recent work has focused on variations of aperture and focal plane array arrangements, discussed further in the next section on multiple-input, multiple-output (MIMO) receiver and transmitter geometries. Digital holography was first performed using a single focal plane array. To increase the aperture size, current work is geared toward optimizing this technology for multiple apertures (with gaps between adjacent apertures)37,38 and synthetic apertures (combining multiple apertures together to form a zero-gap, larger full aperture).39,40,41,42 Proper phasing between individual arrays increases the complexity of these multiaperture systems compared to a single FPA; this is a current area of research.43

The maximum angular resolution is set by the full effective FPA width when the image is sampled in the pupil plane. Therefore, a larger effective FPA size will result in finer angular resolution for a given wavelength and imaging distance. Utilizing synthetic aperture techniques with spatial heterodyning can further increase the effective array size. Optical magnification can also improve the

____________________

36 J.C. Marron, R.L. Kendrick, N. Seldomridge, T.D. Grow, and T.A. Hoft, 2009, “Atmospheric turbulence correction using digital holographic detection: Experimental results,” Opt. Exp. 17: 11638.

37 J.W. Haus, N.J. Miller, P. McManamon, and D. Shemano, 2011, “Digital holography for coherent imaging for multi-aperture laser radar,” Conference paper, Digital Holography and Three-Dimensional Imaging, Tokyo Japan, May 9-11, Optical Society of America, p. DMA3.

38 R.L. Kendrick, J.C. Marron, and R. Benson, 2009, “Anisoplanatic wavefront error estimation using coherent imaging,” in Coherent Laser Radar Conference (Toulouse, France), 205.

39 A.E. Tippie, A. Kumar, and J.R. Fienup, 2011, “High-resolution synthetic-aperture digital holography with digital phase and pupil correction,” Opt. Exp. 19: 12027.

40 J.H. Massig, 2002, “Digital off-axis holography with a synthetic aperture,” Opt. Lett. 27: 2179.

41 D. Claus, 2010, “High resolution digital holographic synthetic aperture applied to deformation measurement and extended depth of field method,” Appl. Opt. 49: 3187.

42 R. Binet, J. Colineau, and J.C. Lehureau, 2002, “Short-range synthetic aperture imaging at 633 nm by digital holography,” Appl. Opt. 41: 4775.

43 B.K. Gunturk, N.J. Miller, and E.A. Watson, 2012, “Camera phasing in multi-aperture coherent imaging,” Opt Express 20: 11796.

angular resolution by effectively expanding the size of the FPA. The field of view of the system will be determined by the pixel pitch of the detector elements. The smaller the pixel pitch, the larger the field of view for pupil plane based imaging. As with many optical systems, the push is still toward larger and larger arrays and smaller and smaller pixels. The roles of pixel pitch and FPA array size are reversed when the FPA is placed in the image plane.
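The dependence of angular resolution on array width and of field of view on pixel pitch can be sketched with simple small-angle scaling for a pupil-plane recording: resolution of roughly λ divided by the effective array width, and a sampled angular span of roughly λ divided by the effective pixel pitch. These are order-of-magnitude relations, and an off-axis recording uses part of that span to separate the image terms. The 640-pixel width and 1.6 µm wavelength echo values mentioned in this section, while the 25 µm pitch and the magnification are hypothetical.

```python
def pupil_plane_resolution_rad(wavelength_m, pixel_pitch_m, num_pixels,
                               magnification=1.0):
    """Approximate angular resolution: wavelength over effective array width."""
    effective_width_m = magnification * pixel_pitch_m * num_pixels
    return wavelength_m / effective_width_m


def pupil_plane_fov_rad(wavelength_m, pixel_pitch_m, magnification=1.0):
    """Approximate sampled angular span: wavelength over effective pixel pitch."""
    return wavelength_m / (magnification * pixel_pitch_m)


if __name__ == "__main__":
    # Hypothetical 25 um pitch on a 640-pixel-wide array at 1.6 um.
    for mag in (1.0, 10.0):
        res = pupil_plane_resolution_rad(1.6e-6, 25e-6, 640, mag)
        fov = pupil_plane_fov_rad(1.6e-6, 25e-6, mag)
        print(f"magnification {mag:>4}: resolution {res * 1e6:.1f} microradians, "
              f"field of view {fov * 1e3:.1f} mrad")
```

In this simple model, magnification trades field of view for resolution, and the number of resolvable elements across the field stays equal to the number of pixels across the array.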

Laser requirements are an important consideration for digital holographic imaging. This technology requires coherent illumination with narrow linewidths in order to record the interference between the local oscillator and object return signal. Narrow linewidths and coherence length requirements are inherent issues for all types of coherent imaging; the section on synthetic aperture ladar previously discussed provides details regarding these issues. The narrow linewidth required may be considered a constraint of the technology, as the current cost of these lasers is significant.

Another fundamental drawback of spatial heterodyning is the speckle effects inherently present in highly coherent light. Speckle effectively reduces the angular resolution from the theoretical limit;44 techniques such as averaging independent speckle realizations can be performed to reduce the speckle.45 Independent speckle realizations require multiple exposures and/or dividing the full aperture into subapertures, thus increasing the acquisition and processing time.
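The benefit and cost of averaging independent speckle realizations can be illustrated with a small Monte Carlo sketch. It assumes fully developed speckle, for which the intensity at each pixel follows a negative-exponential distribution, and estimates the speckle contrast (standard deviation divided by mean) after averaging a given number of independent looks. The pixel count and seed are arbitrary choices, and the model ignores detector noise and partial correlation between looks.

```python
import math
import random


def speckle_contrast(num_looks, num_pixels=10_000, seed=0):
    """Estimated contrast after averaging num_looks independent speckle frames."""
    rng = random.Random(seed)
    pixels = [sum(rng.expovariate(1.0) for _ in range(num_looks)) / num_looks
              for _ in range(num_pixels)]
    mean = sum(pixels) / num_pixels
    variance = sum((p - mean) ** 2 for p in pixels) / num_pixels
    return math.sqrt(variance) / mean


if __name__ == "__main__":
    for looks in (1, 4, 16):
        print(f"{looks:>2} looks: contrast {speckle_contrast(looks):.2f}, "
              f"1/sqrt(N) = {1.0 / math.sqrt(looks):.2f}")
```

The contrast falls roughly as one over the square root of the number of looks, so each halving of speckle noise costs about four times as many exposures or subapertures, consistent with the acquisition and processing penalty noted above.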

Atmospheric turbulence may ultimately be an external fundamental limit affecting this technology. Although digital holography allows for phase aberration correction, it may be limited to a small isoplanatic patch if the turbulence is severe enough and evenly distributed throughout the imaging path.46

As an emerging technology, significant indicators of technology development will most likely be seen through research funding and publications in universities, research institutions, government laboratories, or private industry. Demonstration projects are most likely; production scaling would indicate significant progress and acceptance of digital holography. The further development of digital holography for commercial applications such as 3-D technology for entertainment could drive this technology forward as well.

Spatial heterodyne has two main advantages over both passive imaging systems and many other forms of ladar systems. First, spatial heterodyning is potentially a “lensless” imaging technique. Unlike conventional imaging techniques that use lenses and other optics to form the conjugate of the image on the detector plane, spatial heterodyne systems can map the pupil plane directly onto the detector. The image is then formed digitally from the recorded data. From a practical standpoint, SWaP may be reduced compared to conventional systems, since the weight and volume of the optics/lenses can be removed. As the desire to create larger and larger focal planes to obtain higher imaging angular resolution increases, the weight and volume of the corresponding optics for a focal plane imaging system also increase significantly. Using a spatial heterodyne imaging modality, the focal plane aperture area can continue to increase with less burden on SWaP requirements.

If the receive aperture is not a 1:1 ratio with the FPA, a telescope is required to adjust the size of the pupil plane. However, in synthetic aperture digital holography, multiple smaller telescopes may be used, still allowing for a reduction in volume and weight compared to a single (longer) focal length telescope for the entire FPA.

Atmospheric turbulence can severely degrade both the outgoing illumination beam and the return signal. Increased beam divergence raises the required laser power, and distortion of the return reduces angular resolution and degrades imagery. Even as the aperture size of the imaging system is increased for higher angular resolution, atmospheric turbulence can limit the achievable angular resolution. In astronomy, under good seeing conditions, the extent of the coherence area (the area over which the incoming light is considered to be spatially correlated) is

____________________

44 A. Kozma and C.R. Christensen, 1976, “Effects of speckle on resolution,” J Opt Soc Am 66: 1257.

45 J.W. Goodman, 2007, Speckle Phenomena in Optics: Theory and Applications, Roberts & Co.

46 A.E. Tippie and J.R. Fienup, 2010, “Multiple-plane anisoplanatic phase correction in a laboratory digital holography experiment,” Opt. Lett. 35: 3291.

typically on the order of 10 cm. For ground-to-ground or horizontal path imaging scenarios, the coherence area is even smaller. Adaptive optics using wavefront sensors and deformable mirrors are one way astronomers combat the turbulence problem while at the same time adding weight and complexity to the complete imaging system. Digital holographic techniques can address some of these atmospheric turbulence effects directly; initial results are presented in Marron et al.47 and Tippie and Fienup.48

The fundamental limits associated with digital holography—laser coherence requirements, speckle effects, and degradation in imaging due to atmospheric turbulence—would be factors that may be seen as downsides to implementing this technology.

Since pupil-plane spatial heterodyne does not directly acquire focal plane images on the detector array, processing is required to extract the desired information. In the case of optimizing for aberration correction and/or proper phasing of multiapertures, additional computation is required. As the focal plane size continues to increase, the computation burden will scale as well. Dedicated hardware and parallelization of processes will be required to reduce the processing time as much as possible. Parallel implementation or use of graphics cards may reduce the computation time for the image reconstruction process. As developments in processing continue, the burden of postprocessing for image correction will lessen.

As an emerging technology, digital holography has strong potential to grow. As mentioned previously, digital holography has applicability in intelligence, surveillance and reconnaissance (ISR), target tracking, target identification, and directed energy. As the technology continues to advance, digital holographic systems will likely be designed with these applications in mind. Advances in narrow-linewidth lasers and in laser power will have a direct impact on the ranging capabilities of this technology, as longer and longer ranges are desired.

If digital holography is implemented as “lensless imaging,” one possible future capability would be the use of digital holography with conformal FPAs. An array of conformal focal planes could match the shape of the surface of the designated platform (vehicle, plane, etc.), enabling more flexibility in design, as well as in the collection and capture of returned light. However, many serious technical issues would need to be researched and resolved before such designs could be considered, including conformal focal plane technology itself and the analysis and implementation of local oscillator illumination of a curved surface.

Researchers worldwide are active in the broad field of digital holography. Key countries outside the United States include France, Germany, Israel, Japan, and China.49 While the United States may be considered a leader in digital holography for remote sensing applications, researchers in Pacific Rim countries continue to make significant progress in holography for commercial 3-D imaging and display applications.

In summary, current trends in spatial heterodyning have focused on variations of aperture and FPA arrangements using multiple apertures (with gaps between adjacent apertures) and using motion to form synthetic apertures (combining multiple apertures sampled at different times to form a zero-gap, larger full aperture).

Conclusion 3-5: Digital holography/spatial heterodyne is a growing segment of active EO sensing, evidenced by conferences being held across the world that cover the diverse applications of this technical area.

____________________

47 J.C. Marron, R.L. Kendrick, N. Seldomridge, T.D. Grow, and T.A. Hoft, 2009, “Atmospheric turbulence correction using digital holographic detection: Experimental results,” Opt. Exp. 17: 11638.

48 A.E. Tippie and J.R. Fienup, 2010, “Multiple-plane anisoplanatic phase correction in a laboratory digital holography experiment,” Opt. Lett. 35: 3291.

49 See, for example, H. Luo, X.H. Yuan, and Y. Zeng, 2013, “Range accuracy of photon heterodyne detection with laser pulse based on Geiger-mode APD,” Optics Express 21(16): 18983.

MULTIPLE INPUT, MULTIPLE OUTPUT ACTIVE ELECTRO-OPTICAL SENSING

Using multiple transmit apertures and multiple receive apertures allows design flexibility not present in monolithic apertures. SAR uses a single moving aperture, so the transmit and receive apertures both move.50,51,52,53 SAL also uses motion of a single aperture to synthesize a larger effective aperture. For both SAR and SAL, the synthetic aperture behaves as though it were almost twice as large as the distance actually flown, because the angle of incidence is equal to the angle of reflection. This has been experimentally demonstrated over decades in the microwave region and, more recently, in the optical regime, where SAL has been demonstrated.54,55 Duncan56 provides a cross-range resolution equation for spotlight-mode synthetic aperture ladar:

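$$\Delta_{CR} = \frac{\lambda R}{2L + D_{\mathrm{real}}}$$

where Dreal is the size of the real aperture, R is the distance to the object, λ is the wavelength, and L is the distance moved. As a result, the effective aperture from a diffraction point of view is

$$D_{\mathrm{eff}} = 2L + D_{\mathrm{real}}$$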

For RF systems, the real aperture is tiny (on the order of meters) compared to the distance moved in a SAR (likely multiple kilometers), so the size of the real aperture is neglected, making the effective aperture twice as large as a monolithic aperture of width equal to the distance flown. In an EO system, the real aperture can be a significant fraction of the size of the moved aperture, so the Dreal term is retained.
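As a rough numerical illustration of this contrast, the sketch below assumes that the effective aperture is twice the distance moved plus the real aperture (2L + Dreal) and that cross-range resolution is λR divided by that effective aperture. The function names and the example values (a 2 m radar antenna flown 5 km, and a 20 cm ladar aperture moved 50 cm) are hypothetical and chosen only to show the scaling.

```python
def effective_aperture(distance_moved_m: float, real_aperture_m: float) -> float:
    """Effective aperture, assuming D_eff = 2*L + D_real."""
    return 2.0 * distance_moved_m + real_aperture_m


def cross_range_resolution(wavelength_m, range_m, distance_moved_m, real_aperture_m):
    """Cross-range resolution, assuming lambda * R / D_eff."""
    return wavelength_m * range_m / effective_aperture(distance_moved_m, real_aperture_m)


def real_aperture_fraction(distance_moved_m, real_aperture_m):
    """Share of the effective aperture contributed by the real aperture."""
    return real_aperture_m / effective_aperture(distance_moved_m, real_aperture_m)


# Hypothetical RF case: 2 m antenna, 5 km flown, so D_real is negligible.
rf_fraction = real_aperture_fraction(5_000.0, 2.0)

# Hypothetical EO case: 0.2 m aperture, 0.5 m moved, so D_real matters.
eo_fraction = real_aperture_fraction(0.5, 0.2)

print(f"RF: real aperture is {rf_fraction:.4%} of the effective aperture")
print(f"EO: real aperture is {eo_fraction:.1%} of the effective aperture")
```

In the hypothetical RF case the real aperture contributes a small fraction of a percent of the effective aperture, whereas in the hypothetical EO case it contributes about one-sixth, which is why the Dreal term cannot be dropped there.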

Instead of using motion to synthesize a larger effective aperture, MIMO active EO sensing uses multiple physical subapertures to create a larger effective aperture. An array of receive-only subapertures can synthesize an effective aperture as large as the receive array if the field across the array can be measured or estimated. With an array of transmit and receive subapertures, even more flexibility is obtained, so long as it is possible on receive to identify which transmitter each photon initially came from.

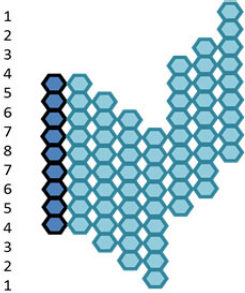

One effect of multiple transmit and receive subapertures is increased angular resolution similar to the angular resolution increase from motion based synthetic aperture sensors. Instead of motion, an array of n subapertures that both transmit and receive can be used. For nine subapertures in a row, the array will have a diffraction limit consistent with a monolithic aperture that is 1.89 times as large in diameter as the array. This is because eight subapertures are equivalent to the distance moved, L, while one subaperture is equivalent to the real aperture, Dreal. If transmission occurs from one subaperture in the middle of an array, the receive aperture array is effectively in its normal location. If the transmit beam is moved up one subaperture, it is as though the receive aperture were moved down one subaperture. If this process is continued, the result is something like what is shown in Figure 3-11, where the lighter color linear arrays indicate the perceived location of the linear receive array, depending on which transmit subaperture is used. The dark color column shows the actual location of the arrays. The full extent of the linear arrays is

____________________

50 M.I. Skolnik, 1990, Radar Handbook (2nd ed.) McGraw-Hill, New York. Chapter 17, by Roger Sullivan Eq. 17.1 and 17.2, Figure 17.2.

51 M. Soumekh, 1999, Synthetic Aperture Radar Signal Processing with Matlab Algorithms, Wiley, New York, Section 2.6, Cross Range Resolution.

52 M.A. Richards, 2005, Fundamentals of Radar Signal Processing, McGraw-Hill, New York. Chapter 8.

53 M.I. Skolnik, 1980, Introduction to Radar Systems (2nd ed.), McGraw-Hill, New York. Chapter 14.

54 B. Krause et al., 2011, “Synthetic aperture ladar flight demonstration,” Conference on Lasers and Electro-Optics, PDPB7.

55 S.M. Beck et al., 2005, “Synthetic-aperture imaging laser radar: Laboratory demonstration and signal processing,” Appl. Opt. 44: 7621.

56 B.D. Duncan and M.P. Dierking, 2009, “Holographic aperture ladar,” Applied Optics 48(6): 1168.

FIGURE 3-11 Effective receiver aperture placement based on transmit subaperture utilized. Dark color shows actual location of the arrays. Light color shows perceived location. The number on the left shows how many receive subapertures are perceived to be at that location.

1.89 times larger, but it is sampled more near the middle of the effective aperture, represented by eight samples in the middle down to one on either end. This is similar to an apodized aperture.
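The shifting argument above can be checked with a short counting sketch. It assumes a linear array in which every subaperture both transmits and receives, that moving the transmitter up by one subaperture shifts the perceived receive array down by one, and that every transmit/receive pairing is counted; the function names are hypothetical. The exact sample counts in a real system depend on the array geometry and on which transmitters are used.

```python
from collections import Counter


def perceived_receive_counts(n_sub: int) -> Counter:
    """Count how many receive subapertures are perceived at each position.

    Positions are in units of one subaperture width. Moving the transmitter
    up by one subaperture shifts the perceived receive array down by one,
    so the perceived position of a transmit/receive pair is rx - tx.
    """
    counts = Counter()
    for tx in range(n_sub):
        for rx in range(n_sub):
            counts[rx - tx] += 1
    return counts


def effective_extent_ratio(n_sub: int) -> float:
    """Full extent of the perceived array divided by the physical array length."""
    counts = perceived_receive_counts(n_sub)
    extent = max(counts) - min(counts) + 1  # each position is one subaperture wide
    return extent / n_sub


ratio_nine = effective_extent_ratio(9)
profile_three = [perceived_receive_counts(3)[p] for p in range(-2, 3)]
print(f"nine subapertures: extent ratio = {ratio_nine:.2f}")
print(f"three subapertures: samples per position = {profile_three}")
```

The sample count tapers linearly from the center of the perceived aperture toward its edges, which is the apodization-like weighting described in the text.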

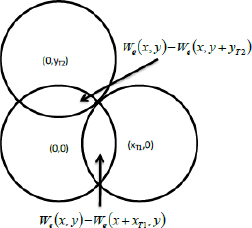

The potential increase in angular resolution from arrays of transmit and receive subapertures is just one benefit of MIMO imaging. If tagged transmitters are spaced at distances less than the size of a receive subaperture, the resulting sampling can allow a closed-form solution for differences in atmospheric path across the subaperture array.57 An example of the resulting effective receiver pupil using illuminator diversity is shown in Figure 3-12. Because of the overlap, one can solve in closed form for the phase between subapertures and use it to compensate for atmospheric phase disturbances in the pupil plane.
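To make the idea of a closed-form phase solution concrete, the sketch below estimates a single piston phase between two subapertures from the pupil region where their effective pupils overlap. This is a generic textbook estimator (the phase of the complex correlation over the overlap), not necessarily the method used in the cited work. It assumes noise-free samples and ignores tilt and higher-order aberrations. All names and values are hypothetical.

```python
import cmath
import math


def estimate_piston(field_a, field_b):
    """Closed-form piston phase of field_b relative to field_a over their overlap.

    Both inputs are sequences of complex pupil-field samples taken at the
    same (overlapping) pupil positions.
    """
    correlation = sum(b * a.conjugate() for a, b in zip(field_a, field_b))
    return cmath.phase(correlation)


# Hypothetical overlap region: a smooth complex field seen by subaperture A ...
overlap_a = [cmath.rect(1.0 + 0.1 * k, 0.3 * math.sin(0.5 * k)) for k in range(32)]
# ... and the same field seen by subaperture B with an unknown piston offset.
true_piston = 0.7  # radians, assumed for illustration
overlap_b = [sample * cmath.exp(1j * true_piston) for sample in overlap_a]

estimated = estimate_piston(overlap_a, overlap_b)
# Remove the estimated piston so that subaperture B is phased to subaperture A.
corrected_b = [sample * cmath.exp(-1j * estimated) for sample in overlap_b]
print(f"estimated piston = {estimated:.3f} rad")
```

No iteration or search is needed: one pass over the overlapping samples yields the relative phase, which is what makes the overlap created by closely spaced transmitters useful.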

MIMO imaging systems are very new, and all of their uses have probably not yet been discovered. Multiple transmitters of course can allow more rapid compensation for speckle because more realizations of speckle can be gathered rapidly.

MIMO techniques as described here can be implemented using either temporal or spatial heterodyne (digital holography) techniques. Tagging the emitted transmitter signals to allow simultaneous transmission will, however, be much easier with high-bandwidth temporal heterodyne, since high-bandwidth tagging schemes can then be used. RF MIMO techniques that use multiple simultaneous phase centers have been developed by Coutts et al.58 Tyler discusses using transmitter diversity to phase up laser beams on transmit,59 which could be used for an illuminator.

____________________

57 J.R. Fienup, 2000, “Phase error correction for synthetic-aperture phased-array imaging systems,” Proc. SPIE 4123-06: 47, Image Reconstruction from Incomplete Data, San Diego, Calif.

58 S. Coutts, K. Cuomo, J. McHarg, F. Robey, and D. Weikle, 2006, “Distributed coherent aperture measurements for next generation BMD radar,” Fourth IEEE Workshop on Sensor Array and Multichannel Processing.

59 G. Tyler, 2012, “Accommodation of speckle in object-based phasing,” J. Opt. Soc. Am. A 29(4).

FIGURE 3-12 Multiple subaperture overlap using three transmitters in a pattern about half a receive subaperture diameter apart. When images are adjusted to account for illuminator location, the receiver subaperture pupils overlap as shown.

SOURCE: D.J. Rabb, J.W. Stafford, and D.F. Jameson, 2011, “Non-iterative aberration correction of a multiple transmitter system,” Optics Express 19(25): 25048D.

The angular resolution of an array of subapertures can be almost twice the diffraction-limited resolution of a monolithic aperture. In addition, atmospheric turbulence between the object imaged and the sensor can be very quickly and accurately compensated. This compensation is relatively straightforward for turbulence in the pupil plane but will be more difficult for volume turbulence. These are narrowband sensors, but using multiple transmitters allows speckle mitigation. A significant limitation is that the received signal is captured in a smaller receive aperture area. The required laser power will increase by the ratio of the area of the monolithic aperture to the area of the receive aperture array. Also, if the angular resolution is higher than that of a monolithic subaperture, less laser return will come from each image voxel.
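The area-ratio statement can be turned into a quick estimate. The sketch below assumes circular apertures and no other losses, and uses hypothetical dimensions (nine 10 cm subapertures compared with a 90 cm monolithic aperture); the function names are illustrative only.

```python
import math


def circular_area(diameter_m: float) -> float:
    """Area of a circular aperture of the given diameter."""
    return math.pi * (diameter_m / 2.0) ** 2


def laser_power_penalty(monolithic_diameter_m, subaperture_diameter_m, n_subapertures):
    """Ratio of monolithic collecting area to total subaperture collecting area."""
    array_area = n_subapertures * circular_area(subaperture_diameter_m)
    return circular_area(monolithic_diameter_m) / array_area


# Hypothetical example: nine 10 cm subapertures versus a 90 cm monolithic aperture.
penalty = laser_power_penalty(0.9, 0.1, 9)
print(f"required laser power scales by about {penalty:.1f}x")
```

For a line of n subapertures compared with a monolithic aperture spanning the same length, this ratio grows linearly with n under these assumptions, which is why the power penalty becomes more significant for long, sparse arrays.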

Arrays of high temporal bandwidth detectors will be very helpful in implementing MIMO techniques for EO imaging. The papers cited sequenced through the multiple transmitters because they implemented MIMO using a digital holography/spatial heterodyne approach to imaging, using low bandwidth framing detector arrays. If high-bandwidth detector arrays and a temporal heterodyne approach to imaging are used, it should be possible to simultaneously emit multiple tagged transmitter beams and to have each receiver be able to distinguish the transmit aperture any photon came from. High-bandwidth detectors will allow high-bandwidth modulations to be imposed on each transmitted beam, and sorted on receive. Temporal heterodyne arrays will need to be AC-coupled, or have high dynamic range, or have high sensitivity such that temporal heterodyne can be implemented using a relatively weak LO.
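The idea of imposing a distinct modulation on each transmitted beam and sorting the beams on receive can be sketched with a toy model. The example below assumes each transmitter is tagged with its own beat frequency in a single detector's output and that the receiver separates the contributions by correlating against each known tag tone. The tag frequencies, sample rate, amplitudes, and function names are hypothetical and scaled far below the bandwidths a real sensor would use; noise and Doppler are ignored.

```python
import cmath
import math

SAMPLE_RATE_HZ = 1000.0
N_SAMPLES = 1000  # one second of data, so each tag completes whole cycles


def received_signal(tags):
    """Sum of tagged returns; tags maps tag frequency (Hz) -> (amplitude, phase)."""
    samples = []
    for n in range(N_SAMPLES):
        t = n / SAMPLE_RATE_HZ
        samples.append(
            sum(a * math.cos(2 * math.pi * f * t + p) for f, (a, p) in tags.items())
        )
    return samples


def demodulate(samples, tag_hz):
    """Recover the complex amplitude at one tag frequency by correlation."""
    acc = 0j
    for n, s in enumerate(samples):
        acc += s * cmath.exp(-2j * math.pi * tag_hz * n / SAMPLE_RATE_HZ)
    return 2.0 * acc / len(samples)


# Two hypothetical transmitters with different tag frequencies.
tags = {50.0: (1.0, 0.3), 80.0: (0.4, -1.1)}
signal = received_signal(tags)
recovered = {f: demodulate(signal, f) for f in tags}
for f, value in recovered.items():
    print(f"tag {f:.0f} Hz: amplitude {abs(value):.3f}, phase {cmath.phase(value):.3f} rad")
```

Because the two tags are orthogonal over the integration window, each transmitter's amplitude and phase are recovered from the same detector samples, which is the property that would allow simultaneous rather than sequential transmission.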

A second technical hurdle to overcome is volume turbulence. Techniques for calculating and compensating for volume turbulence still need to be developed.

Published work on multiple-aperture-array active sensor systems using transmitter as well as receiver diversity would be one indicator of active interest in this area. Work on high-bandwidth detector arrays suitable for use in temporal heterodyne sensors would be another indicator.

MIMO technology will allow imaging with high angular resolution using much lighter and more compact aperture arrays than a monolithic aperture. An array of small subapertures can be much thinner and lighter than a monolithic aperture. Also, a MIMO approach can achieve almost twice the diffraction limited angular resolution of a monolithic aperture. Speckle averaging using multiple transmitters will be another advantage.

To be really useful in freezing the atmosphere, MIMO should be implemented using high-bandwidth detector arrays, which still need to be further developed. There will be a digital implementation requirement to conduct the required calculations. There will also be a need for many different optical trains, complicating the optical system. Also, narrow-linewidth lasers will be needed for either spatial or temporal heterodyne. Higher power lasers will be required to image a given area using a MIMO array compared with imaging with a monolithic aperture.

This technology is well suited for long-range imaging applications from air or space. MIMO could be used in the cross-range dimension along with motion-based synthetic aperture imaging.

The United States appears to have a lead in this technology so far, but the developments to date have not been developments requiring large investments or significant infrastructure, so this lead could evaporate quickly. The United States also has a lead in high-bandwidth FPAs that can be used in MIMO applications. High-bandwidth FPAs are a more enduring lead in terms of infrastructure required to produce them, so that can help preserve the U.S. lead in this area.

In summary, MIMO approaches for active EO sensing can, at a minimum, increase the effective diameter of an aperture array by a factor of almost two and can allow multiple subapertures on receive to be phased using a closed-form calculation of the phase difference between subapertures. This can compensate for atmospheric turbulence at least at some locations between the sensor and the imaged object.

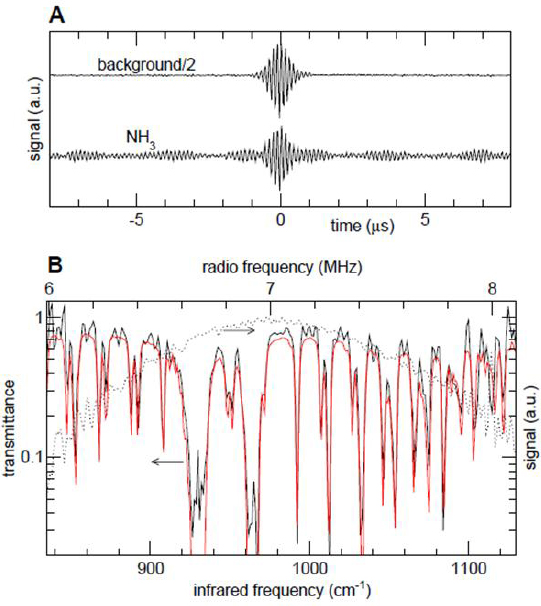

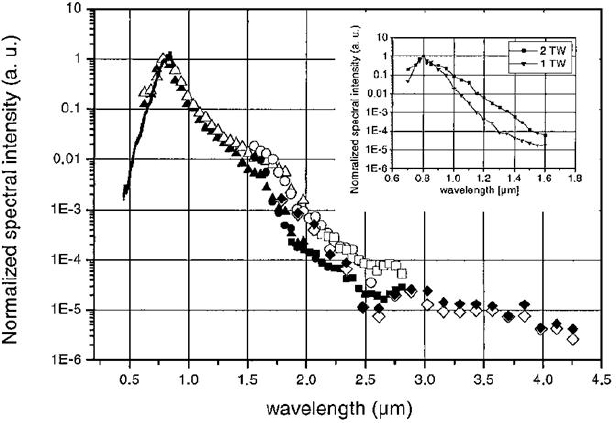

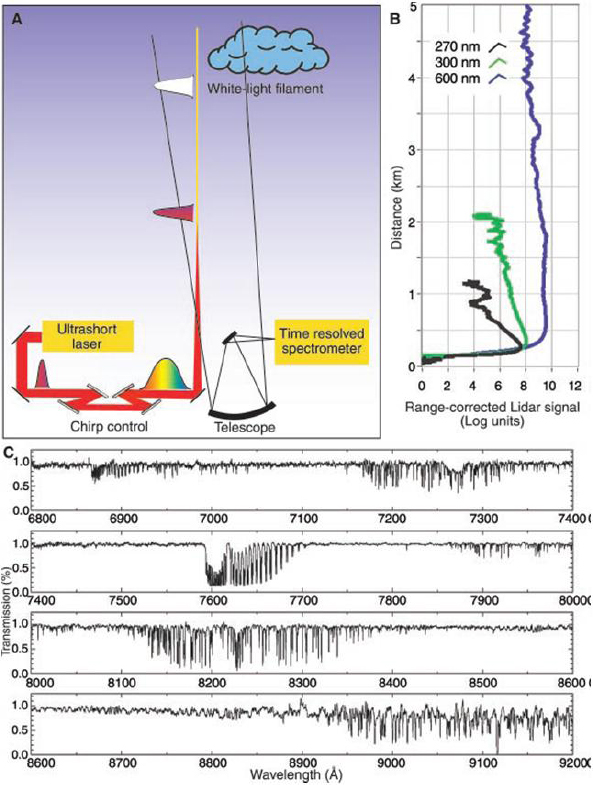

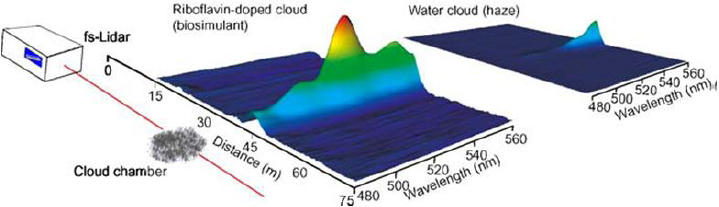

Conclusion 3-6: The multiple input, multiple output approach is a very promising active EO research area. At a minimum, it will be very valuable for longer-range imaging sensors, and is also likely to become valuable for many other applications.