Some of these collective functions can be captured by a centrally organized safety management system, but recent efforts in aviation and other disciplines have characterized how safety is reflected through the organization as a “safety culture.” Such a culture may be defined as “the product of individual and group values, attitudes, competencies, and patterns of behavior that determine the commitment to, and the style and proficiency of, an organization’s safety programs. While there are many methods for promoting a safety culture, and no one metric by which it may be judged, organizations with a positive safety culture are characterized by communications founded on mutual trust, by shared perceptions of the importance of safety, and by confidence in the efficacy of preventive measures” [U.K. Health and Safety Commission, as cited by Reason (1997, 194)]. The following paragraphs examine the extent to which FAA’s staffing processes may support or hinder key components of safety culture.22

While safety culture covers a broad range of concerns, several aspects of safety culture are important to this committee’s charter to examine FAA controller staffing. First, safety is promoted within an organization when it is informed, that is, when “those who manage and operate the system have current knowledge about the human, technical, organizational and environmental factors that determine the safety of the system” (Reason 1997, 195). Safety data would ideally be linked with facility-specific staffing data to determine, for example, whether the frequency of various types of incidents or safety reports is associated with staffing levels relative to a benchmark or standard. These and other analyses could provide insight into the effects of changes in staffing levels or practices on safety. Such analyses (and supporting data) with regard to the National Airspace System are scarce. Databases of potential value include but are not limited to FAA’s ATSAP, which allows controllers (and others) to identify and report safety and operational concerns voluntarily; the Aviation Safety Reporting System, which collects voluntarily submitted aviation safety incident and situation reports from pilots, controllers, and others; and operational databases that record when each controller is on duty and could be examined for a controller’s work history to determine whether fatigue, for example, may have contributed to an incident.
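The kind of linkage described above, between facility-level staffing records and incident reports, can be sketched in a few lines. The example below is only an illustration under stated assumptions: the facility identifiers, the record layouts, and all numbers are hypothetical and are not drawn from ATSAP, ASRS, or any FAA database.

```python
# Illustrative sketch only: facility IDs, field layouts, and values are hypothetical.

def link_staffing_and_incidents(staffing, incidents):
    """Join staffing records with incident reports by facility.

    staffing:  {facility_id: (controllers_on_board, staffing_target)}
    incidents: list of (facility_id, incident_type) tuples
    Returns one row per facility with its staffing ratio and report count.
    """
    counts = {}
    for facility, _incident_type in incidents:
        counts[facility] = counts.get(facility, 0) + 1

    rows = []
    for facility, (on_board, target) in sorted(staffing.items()):
        rows.append({
            "facility": facility,
            # Staffing relative to the benchmark (1.0 means exactly at target).
            "staffing_ratio": on_board / target,
            # Facilities with no reports still appear, with a count of zero.
            "incident_reports": counts.get(facility, 0),
        })
    return rows


# Hypothetical inputs for two made-up facilities.
staffing = {"FAC_A": (45, 50), "FAC_B": (60, 50)}
incidents = [
    ("FAC_A", "loss_of_separation"),
    ("FAC_A", "fatigue_report"),
    ("FAC_B", "loss_of_separation"),
]

rows = link_staffing_and_incidents(staffing, incidents)
for row in rows:
    print(row)
```

A table of this form would be only a starting point. Any association between staffing ratio and report counts would still need to be normalized by traffic volume and tested for differences in reporting practices between facilities.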

The committee was concerned with whether the requisite data are consistently collected and whether appropriate plans are in place to analyze the data to inform staffing decisions. For example, in reviewing the Traffic Analysis and Review Program (TARP), the OIG (2013b) pointed out shortcomings in the collection of program data and in integration with other data. GAO has pointed out shortcomings in FAA’s collection and analysis of incident data (GAO 2011; GAO 2012). Furthermore, the implementation of FRMS has been effectively curtailed by eliminating proactive research into fatigue risks and potential fatigue mitigations in controller staffing and scheduling.

FAA has effectively limited the ability of outside researchers to provide the independent and in-depth analyses of safety that, historically, were provided by the academic and research community. While the collected information in FAA’s Aviation Safety Information Analysis and Sharing (ASIAS) System23 features both public and proprietary data, many of the collected incident data are not available to outside researchers. A recent OIG (2013c) report criticized the limited use of ASIAS data by FAA’s own workforce, especially among its field inspectors, and recommended better dissemination of and guidance on the use of aggregated and de-identified ASIAS data to help in distinguishing safety trends. FAA’s external Research, Engineering, and Development Advisory Committee recently wrote the FAA Administrator as follows: “Realizing the full potential of ‘Big Data’ will require development of data access policies allowing the most open possible access to researchers and other users while providing appropriate data protections … enabling a level of effort in this data analysis greater than can be conducted in-house by the FAA alone.”24

__________

22For more recent research on safety culture and air traffic control, see Mearns et al. 2013, Ek et al. 2007, and Gill and Shergill 2004.

23As the data steward and integrator for ASIAS data, the MITRE Corporation maintains all the data and prepares any aggregated results for the purposes of information sharing.

A second aspect of a safety culture is the promotion of safety within an organization through the fostering of reporting by members of the workforce themselves about hazards that they experience. This reporting function is the intent of FAA’s ATSAP. The committee received a general briefing on the program and a copy of the form that controllers fill out when they file a report, but it did not receive responses to detailed requests for data or analyses performed by FAA on the data and thus could not assess the program directly.25 Noting similar concerns with whether FAA is suitably informed by appropriate collection of accident data and by the collection and integration of TARP data, the OIG pointed out shortcomings in the collection and use of ATSAP reports provided by controllers in 2012 and 2013 (OIG 2012b; OIG 2013b). The committee’s discussions with FAA officials indicated that efforts to perform such analyses are expanding and that there are difficulties with “stove-piped” data sets, where one organization within FAA may be unaware of or find difficulty in accessing and incorporating data sets held by other organizations.

A third important aspect of a safety culture is that it must foster learning from history and from current experiences and translate such learning into the appropriate reforms. At the facility level a positive step is the nascent Partnership for Safety program, which seeks to establish (by June 2014) local safety councils at each air traffic facility to identify and resolve safety concerns as they are reported. As of January 2014, 22 of 23 en route facilities and 84 of 292 terminal facilities had completed local safety council training.26 Accounts suggest that at least some controllers may perceive that they do not have the time to contribute ATSAP reports and join in the local safety councils or that the reports are not worth providing because they do not lead to improvements, which may temper the impact of these collaborative efforts.27 More objectively, the committee found that the time required by controllers to participate in these councils is not included in the processes for determining facilities’ staffing levels, as described in Chapter 3. Thus, the formation of these councils has no impact on the assessment of required staffing levels. In the committee’s view, this suggests that a reporting culture may not be fostered in facilities where staffing concerns may warrant monitoring yet limit controller availability for providing reports and participating in collaborative safety teams.

Activities such as the Partnership for Safety program seek not only to gather and analyze data but also to translate the information into actionable responses that will prevent, reduce, or mitigate safety concerns. In the committee’s view, this aspect of safety culture is obstructed by a perceived lack of transparency in determining staffing targets and in carrying out hiring and transfers to achieve those targets. As a consequence, staffing levels that support robust data collection, analysis, and subsequent corrective action may be compromised. Similarly, the benefits of FRMS will be achieved only when the appropriate tools are in place and when the reasons for changes in scheduling according to fatigue risk management best practices are substantiated and clearly communicated.

__________

24Letter to the Hon. Michael Huerta, October 2, 2013.

25For example, in a briefing to the committee, FAA reported that approximately 10 percent of the 30,000 ATSAP reports submitted since May 2011 contained fatigue-related input (Rick Huss, FAA, November 22, 2013). The committee was unable to obtain additional details about this input or how FAA uses it.

26PowerPoint document titled “Officers Group Brief: Safety and Technical Training, January 2014” provided to the committee on February 19, 2014.

27Andrew LeBovidge, committee member and NATCA representative, personal communication to Safety subgroup of the committee, March 19, 2013. Also, Dean Iacopelli and Eugene Freedman, NATCA, presentation to the committee, January 2013.

This chapter reviews the safety record of aviation and the role that air traffic controllers play in that record. The chapter considers how best to analyze any possible relationship between aviation safety and ATC staffing. GA accounted for 94 percent of both total accidents and all fatal accidents and for 82 percent of all fatalities over the past 22 years—far more than commercial aviation, which included air carriers and air taxis and commuters.

According to NTSB accident investigations reviewed by the committee, ATC was considered either a cause or a factor in 66 fatal accidents during the same 22-year period, or about 0.8 percent of all fatal accidents, which indicates that ATC has not been a major cause of or factor in aviation accidents in the past. Of the 66 fatal accidents linked to ATC, GA accounted for 86 percent.

Discussions of ATC safety often focus on maintaining safe separation between aircraft and on incident data that concentrate on loss of separation. Even controller workload models tend to focus on tasks related to separation. Yet almost 70 percent of fatal ATC-related accidents involve single aircraft and controllers’ failure to provide required safety information, and 91 percent of those fatal accidents involved GA. Staffing models of controller workload and analyses of safety data that include controller duties other than preventing loss of separation—such as providing safety information to pilots—would more accurately reflect controllers’ impact on accidents and would result in a more refined baseline of staffing requirements.

Published OIG reports indicate that contract towers deliver ATC services at a comparable quality level, provide these services at a lower cost, and have a lower number and rate of safety incidents than similar FAA towers. The committee has reservations about OIG’s findings relating to safety because of (a) the difficulty of establishing analogous groups of FAA and contract towers for comparison purposes and (b) the differences in safety reporting practices at the two types of tower. In addition, GAO reports that comparison of operational error rates alone is insufficient to draw conclusions about the relative safety records of different ATC facilities.

Fatigue is a risk factor for errors and accidents frequently encountered in ATC operations. The risks associated with controller fatigue are well known, and efforts to educate controllers about fatigue issues and to use best practices that address fatigue through more efficient scheduling are encouraged.

Because of the limited fatigue-related data that have been collected in accident and incident reports, the committee is unable to determine the extent to which fatigue has affected safety. The treatment of fatigue concerns in accident and incident reports by FAA and NTSB has been variable, and important elements of information relative to fatigue are often not included.

The full implementation of an FRMS could mitigate fatigue in a more comprehensive manner and could help support the requirement for better data collection and reporting. However, recent budget cuts threaten to limit FRMS’s capability of monitoring for fatigue concerns and taking appropriate actions.

Any safety management system needs a positive safety culture to reinforce an organization’s safety goals. A positive safety culture does not just materialize; it develops over time and is characterized and maintained through vital components such as the sharing of information, reporting, and continuous monitoring and learning—functions that must be accounted for within staffing plans.

Finding 2-1. FAA’s and NTSB’s methods for collecting and categorizing safety data are insufficient for establishing the relationship between ATC-related accidents and incidents and staffing indicators such as overall staffing levels relative to staffing targets and overtime.

Finding 2-2. FRMS has a scientific basis in other domains and with other ANSPs. Full execution affects staff planning, generation of intended schedules during staffing implementation, and adjustments to schedules during day-to-day operations. However, there are still practices (like the popular 2-2-1 schedule) that appear questionable from a fatigue management perspective, and mitigation may require adjustments in staffing levels. Recent initiatives to address fatigue through policy and training have not had sufficient follow-up evaluation to verify that they provide the intended fatigue risk mitigation. Furthermore, full implementation of FRMS will require the proper tools for scheduling controllers’ shifts and adjusting them on short notice in response to unexpected events and for relating these schedules to staffing levels during staff planning.

Finding 2-3. Safety results not just from the performance of individual controllers but also from the ability of the organization to foster a collective safety culture. The safety culture is affected by staffing, since staffing levels either foster or obstruct reporting, evaluation, and learning within the organization. Such reporting, evaluation, and learning are necessary for the implementation of a safety management system (including safety management focusing on fatigue, i.e., FRMS).

Recommendation 2-1. To analyze the fatigue-related hazards associated with staffing levels and to assess the effectiveness of mitigation strategies, FAA should ensure that factors increasing the likelihood of fatigue are clearly documented in incident and accident reports. Such factors can include information on prior shift schedules, time on position, and traffic density and complexity.

Recommendation 2-2. The safety of the National Airspace System has highest priority. FAA should ensure full implementation of an FRMS, with sufficient resources for proactive analysis of potential fatigue concerns and mitigations and with follow-up evaluation of all changes in policy, training, and scheduling. Before claiming success for any recent (and future) initiatives addressing controller fatigue, FAA must ensure that these initiatives are assessed properly and are having their intended impact once they are implemented at local facilities.

Recommendation 2-3. FAA should accelerate the implementation of a scheduling tool that can be used to determine staffing standards and to generate schedules at the facility level (e.g., OPAS). Safe scheduling in terms of fatigue may require the incorporation of biomathematical models into a tool that can optimize schedules relative to staffing and to fatigue, or at least illuminate schedules that do not reflect best practices with regard to fatigue management.

Recommendation 2-4. FAA should ensure that staffing levels are sufficient to foster appropriate reporting by controllers and to enable controller involvement in organizational learning and responses to safety issues (such as safety councils). In support of recent collaborative initiatives between FAA and NATCA, FAA should monitor for situations or facilities where staffing and scheduling issues may be obstructing full controller participation in these safety functions.

Recommendation 2-5. To ensure that staffing decisions are properly informed and to help communicate the basis of these decisions throughout the organization, FAA should promote data collection and analysis to improve identification of key relationships between controller staffing and safety. Specifically, (a) the data should consistently include relevant staffing and fatigue concerns so that they can be considered in the analysis, considered in accident and incident investigations, and incorporated into the appropriate questions in the forms underlying safety reports; (b) the data should be compiled and stored in a way that allows integration of data sets (e.g., integration of staffing records with incident reports); (c) the appropriate data analyses should be conducted to examine where and how controllers have contributed to accidents and incidents to help inform the models of controller activity underlying staffing standards—including whether controllers have adequate time both to separate traffic and to issue safety alerts, as required by FAA Order 7110.65—and to identify relationships between staffing and safety; and (d) especially in view of the limitations on FAA resources, FAA should ensure that enough researchers have access to the data to provide the range of perspectives and expertise necessary for identifying how staffing concerns relate to safety.

REFERENCES

Abbreviations

| Abbreviation | Full name |
| --- | --- |
| GAO | Government Accountability Office |
| ICAO | International Civil Aviation Organization |
| OIG | Office of Inspector General, U.S. Department of Transportation |

Ek, Å., R. Akselsson, M. Arvidsson, and C. R. Johansson. 2007. Safety Culture in Swedish Air Traffic Control. Safety Science, Vol. 45, pp. 791–811.

Elias, B., C. T. Brass, and R. S. Kirk. 2013. Sequestration at the Federal Aviation Administration (FAA): Air Traffic Controller Furloughs and Congressional Response. Report R43065. Congressional Research Service, May

GAO. 2003. Operational Errors Data. Report GAO-03-1175R, Sept. 23. http://www.gao.gov/assets/100/92204.pdf.

GAO. 2011. Enhanced Oversight and Improved Availability of Risk-Based Data Could Further Improve Safety. Report GAO-12-24, Oct. 5. http://www.gao.gov/products/GAO-12-24.

GAO. 2012. FAA Is Taking Steps to Improve Data, but Challenges for Managing Safety Risks Remain. Report GAO-12-660T, April 25. http://www.gao.gov/products/GAO-12-660T.

Gill, G. K., and G. S. Shergill. 2004. Perceptions of Safety Management and Safety Culture in the Aviation Industry in New Zealand. Journal of Air Transport Management, Vol. 10, pp. 231–237.

Grant Thornton, LLP. 2013. FAA’s Operational Planning and Scheduling: Impact of Adjusting Minimum Rest Between Shifts on Air Traffic Controller Staffing. White paper prepared for Federal Aviation Administration, Sept. 13.

ICAO. 2011. Fatigue Risk Management Systems: Manual for Operators.

Lim, J., and D. F. Dinges. 2008. Sleep Deprivation and Vigilant Attention. Annals of the New York Academy of Sciences, Vol. 1129, pp. 305–322.

Lim, J., and D. F. Dinges. 2010. A Meta-Analysis of the Impact of Short-Term Sleep Deprivation on Cognitive Variables. Psychological Bulletin, Vol. 136, pp. 375–389.

Lowe, P. 2013. Congress Rescues Controllers, Small Towers and Flies Home. Aviation International News, June.

Mearns, K., B. Kirwan, T. W. Reader, J. Jackson, R. Kennedy, and R. Gordon. 2013. Development of a Methodology for Understanding and Enhancing Safety Culture in Air Traffic Management. Safety Science, Vol. 53, pp. 123–133.

OIG. 1998. Federal Contract Tower Program. Report AV-1998-147, May 18. http://www.oig.dot.gov/sites/dot/files/pdfdocs/av1998147.pdf.

OIG. 2000. Contract Towers: Observations on FAA’s Study of Expanding the Program. Report AV-2000-079, April 12. http://www.oig.dot.gov/sites/dot/files/pdfdocs/av2000079.pdf.

OIG. 2001. Audit Report on Subcontracting Issues of the Contract Tower Program. Report AV-2002-068, Dec. 14. http://www.oig.dot.gov/sites/dot/files/pdfdocs/av2002068.pdf.

OIG. 2003. Safety, Cost, and Operational Metrics of the Federal Aviation Administration’s Visual Flight Rule Towers. Report AV-2003-057, Sept. 4. http://www.oig.dot.gov/sites/dot/files/pdfdocs/av2003057.pdf.

OIG. 2009. Air Traffic Control: Potential Fatigue Factors. Report AV-2009-065, June 29. http://www.oig.dot.gov/sites/dot/files/pdfdocs/WEB_FILE2_Controller_Fatigue_AV2009065.pdf.

OIG. 2012a. Contract Towers Continue to Provide Cost-Effective and Safe Air Traffic Services, but Improved Oversight of the Program Is Needed. Report AV-2013-009, Nov. 5. http://www.oig.dot.gov/sites/dot/files/FAA%20Federal%20Contract%20Tower%20Program%20Report%5E11-5-12.pdf.

OIG. 2012b. Long Term Success of ATSAP Will Require Improvements in Oversight, Accountability, and Transparency. Report AV-2012-152, July 19. http://www.oig.dot.gov/library-item/5859.

OIG. 2013a. FAA’s Controller Scheduling Practices Can Impact Human Fatigue, Controller Performance, and Agency Costs. Report AV-2013-120. http://www.oig.dot.gov/node/6195.

OIG. 2013b. FAA’s Efforts to Track and Mitigate Air Traffic Losses of Separation Are Limited by Data Collection and Implementation Challenges. Report AV-2013-046, Feb. 27. http://www.oig.dot.gov/library-item/6059.

OIG. 2013c. FAA’s Safety Data Analysis and Sharing System Shows Progress, but More Advanced Capabilities and Inspector Access Remain Limited. Report AV-2014-017, Dec. 18. http://www.oig.dot.gov/node/6270.

Oster, C. V., Jr., J. S. Strong, and C. K. Zorn. 2013. Analyzing Aviation Safety: Problems, Challenges, and Opportunities. Research in Transportation Economics, Vol. 43, pp. 148–164.

Pape, A., D. Wiegmann, and S. Shappell. 2001. Air Traffic Control (ATC) Related Accidents and Incidents: A Human Factors Analysis. Presented at 11th International Symposium on Aviation Psychology, Columbus, Ohio.

Reason, J. 1997. Managing the Risks of Organizational Accidents. Ashgate Publishing Company, Farnham, Surrey, United Kingdom.

Schroeder, D. J., R. R. Rosa, and L. A. Witt. 1998. Some Effects of 8- vs. 10-Hour Work Schedules on the Test Performance/Alertness of Air Traffic Control Specialists. International Journal of Industrial Ergonomics, Vol. 21, pp. 307–321.

Scovel, C. L., III. 2014. FAA’s Implementation of the FAA Modernization and Reform Act of 2012 Remains Incomplete. Statement before the Committee on Transportation and Infrastructure, Subcommittee on Aviation, United States House of Representatives, Feb. 5. http://transportation.house.gov/uploadedfiles/2014-02-05-scovel.pdf.

Signal, T. L., and P. H. Gander. 2007. Rapid Counterclockwise Shift Rotation in Air Traffic Control: Effects on Sleep and Night Work. Aviation, Space, and Environmental Medicine, Vol. 78, No. 9, pp. 878–885.

Evaluation of Staffing Standards

The Federal Aviation Administration (FAA) describes staffing standards as mathematically derived assessments of required staffing that relate controller workload and air traffic activity on the basis of “staffing to traffic.” This chapter describes how the standards are generated. FAA’s staffing standards are not the sole determinant of staffing levels; they are one of several inputs into staffing ranges and FAA’s hiring plan.

The chapter reviews the formal staffing models and the process underlying the staffing standards. It examines the process by which the standard is estimated for each facility. The two uses of the standards—as input for the staffing range and for determination of the staffing target for the hiring plan—are described. The chapter ends with a discussion of how the standards (and resulting staffing ranges) are communicated to the field, how feedback from the field is provided to headquarters for the central generation of staffing standards, and how the standards and their implementation may be improved by the coordination of scheduling at facilities with central planning.

MODEL-BASED GENERATION OF STAFFING STANDARDS

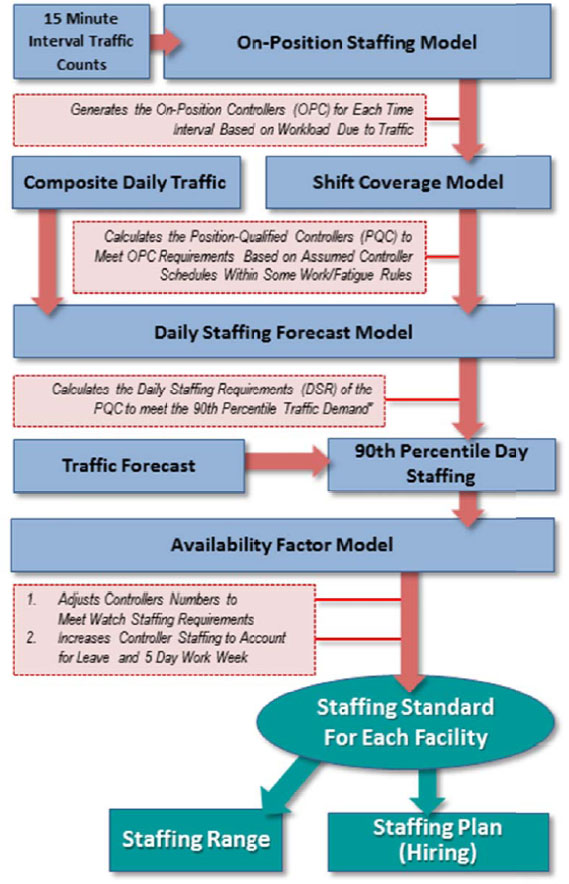

The staffing process up to the generation of the staffing standards is summarized in Figure 3-1. The standards are inputs to the staffing range published in the controller work plan and the hiring plan. The following subsections describe each of the processes in the order shown in Figure 3-1.

On-Position Staffing Models

The staffing standards process starts by applying models of controller activity and workload that identify the number of on-position controllers (OPCs) required to control traffic within 15-minute intervals. Because controllers in different facilities have significantly different tasks, separate models exist for en route centers, towers, and terminal radar approach control (TRACON) facilities. The en route model has been reviewed previously, but part of the committee’s charter is to examine that model in particular. The next section reviews the en route center on-position staffing model and discusses its status in the context of the prior reviews. The on-position staffing models for tower and TRACON facilities are then examined.

En Route Centers

As described in Chapter 1, an en route sector is staffed by one, two, or (rarely) three controllers, depending on the amount of traffic. Multiple sectors may be combined into one large sector or a large sector split into smaller sectors to create the correct balance between the amount of traffic within each sector and the number of OPCs.

A Transportation Research Board (TRB) committee (see TRB 1997) reviewed FAA’s staffing models for terminal facilities and en route centers and questioned the parameter then used: simple counts of the number of flights within a specific area of airspace. Although FAA’s staffing models were adequate for estimating national workforce needs, the committee observed that the models were too general to predict facility-level needs and often produced higher values than those from managers at the facility level. Put another way, the models did not account for how the complexity of an air traffic situation affects “controlling capacity.”

FIGURE 3-1 Planning process leading to generation of staffing standards as inputs to the staffing range and hiring plan. (SOURCE: Generated by the committee.)

Thus, the 1997 study committee recommended that FAA develop quantitative staffing models that considered air traffic complexity. Specifically, the committee recommended modeling the time controllers spend in executing the observable tasks associated with air traffic control to estimate a controller’s “task load,” which could then be analyzed to identify the number of sectors and the number of controllers within each sector required in each 15-minute traffic interval.

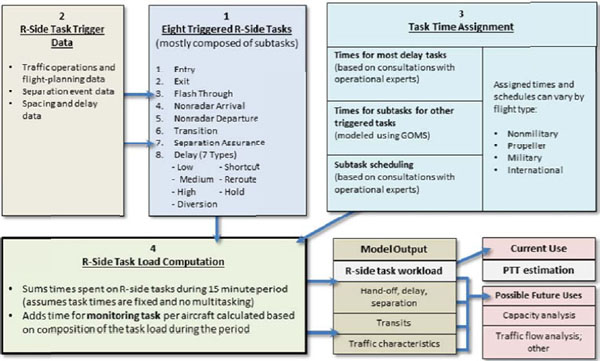

On the basis of the report’s recommendations, in the decade that followed MITRE Corporation’s Center for Advanced Aviation Systems Development converted a “task-based” model (developed for other purposes) to serve as an on-position staffing model. Figure 3-2 presents its basic structure. Box 1 in the figure shows eight of the model’s nine major radar (R-side) tasks. Tasks of the lead R-side controller trigger associated subtasks, some of which comprise detailed cognitive tasks represented by the goals, operators, methods, and selection rules (GOMS) construct. These tasks are triggered by traffic events for which historical data for that sector are available (Box 2). All identified tasks and associated detailed subtasks (Box 3) are divided into basic operators, and an execution time is assigned for each operator. The operator execution times are summed to calculate the total time required for performing each subtask, and ultimately each task, for each 15-minute period of the day. Thus, the time for each task is the sum of estimates of the times required for multiple cognitive subtasks and is not based on direct observations of controllers performing the tasks. Additional time for monitoring each aircraft—the MITRE model’s ninth R-side task—is assigned at this point (see Box 4). The MITRE model assumes that tasks are completed in sequence and that the times required for each task are independent of one another.
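The summation logic described above can be made concrete with a short sketch. This is not MITRE's implementation: the task names, the operator names, the execution times, and the per-aircraft monitoring time are all hypothetical placeholders chosen only to show how operator times roll up into subtask, task, and interval totals under the model's sequential, independent-task assumption.

```python
from collections import defaultdict

# Hypothetical operator execution times in seconds (illustrative values only).
OPERATOR_SECONDS = {"perceive": 0.5, "decide": 1.25, "keystroke": 0.25, "speak": 2.0}

# Hypothetical tasks: each task is a list of subtasks, each subtask a list of operators.
TASKS = {
    "accept_handoff": [["perceive", "decide"], ["keystroke"]],
    "issue_clearance": [["perceive", "decide"], ["speak", "keystroke"]],
}

# Hypothetical monitoring time added per aircraft in each 15-minute interval.
MONITOR_SECONDS_PER_AIRCRAFT = 4.0


def task_seconds(task_name):
    """Sum operator times over all subtasks of one task (no observed task times used)."""
    return sum(
        OPERATOR_SECONDS[operator]
        for subtask in TASKS[task_name]
        for operator in subtask
    )


def task_load(events, aircraft_counts):
    """Total R-side task time per 15-minute interval.

    events:          iterable of (interval_index, task_name) traffic-triggered tasks
    aircraft_counts: {interval_index: number of aircraft monitored}
    Tasks are treated as sequential and independent, so times simply add.
    """
    load = defaultdict(float)
    for interval, task_name in events:
        load[interval] += task_seconds(task_name)
    for interval, n_aircraft in aircraft_counts.items():
        load[interval] += n_aircraft * MONITOR_SECONDS_PER_AIRCRAFT
    return dict(load)


# Two intervals of made-up traffic events for a single sector.
events = [(0, "accept_handoff"), (0, "issue_clearance"), (1, "issue_clearance")]
aircraft_counts = {0: 3, 1: 5}
load = task_load(events, aircraft_counts)
print(load)
```

Because the totals are pure sums, the sketch also makes the model's limitation visible: concurrent task execution or load-dependent task times cannot be represented without changing the structure of the calculation.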

To date, the MITRE task load model includes only the tasks associated with the R-side controller to assess traffic capacity and does not include related data (D-side) tasks. However, determining the “positions to traffic” (PTT), the estimated number of controllers (one, two, or three) required for handling a sector’s air traffic during a 15-minute period, requires knowledge of the total R-side and D-side task load. The MITRE model uses “fuzzy logic modeling” to account for additional traffic complexity and total task load from the modeled R-side tasks. The fuzzy logic method weights an R-side task according to the task’s perceived D-side complexity. The weightings were based on input from operational experts and regarded as valid depictions of D-side task loads, even though D-side tasks were not explicitly identified and quantified.
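The idea of inferring a controller count from weighted R-side task time can be illustrated with a deliberately simplified stand-in. The sketch below uses a plain weighted sum with fixed cutoffs, not fuzzy logic, and every weight and threshold in it is a hypothetical value invented for the example. None of them comes from the MITRE model.

```python
INTERVAL_SECONDS = 15 * 60  # one 15-minute traffic interval

# Hypothetical expert weights: how much D-side work each R-side task is assumed to imply.
D_SIDE_WEIGHT = {"accept_handoff": 1.5, "issue_clearance": 1.25}


def positions_to_traffic(r_side_seconds_by_task, two_threshold=0.5, three_threshold=0.875):
    """Estimate controllers needed (1, 2, or 3) for one sector and interval.

    r_side_seconds_by_task: {task_name: total R-side seconds in the interval}
    Thresholds are hypothetical fractions of the interval occupied by weighted task time.
    """
    weighted_seconds = sum(
        D_SIDE_WEIGHT.get(task_name, 1.0) * seconds  # unweighted if task is unknown
        for task_name, seconds in r_side_seconds_by_task.items()
    )
    utilization = weighted_seconds / INTERVAL_SECONDS
    if utilization >= three_threshold:
        return 3
    if utilization >= two_threshold:
        return 2
    return 1


light = positions_to_traffic({"accept_handoff": 100})
moderate = positions_to_traffic({"accept_handoff": 200, "issue_clearance": 160})
heavy = positions_to_traffic({"accept_handoff": 400, "issue_clearance": 200})
print(light, moderate, heavy)
```

The sketch shows why the weights matter: the staffing estimate depends entirely on expert-assigned multipliers rather than on measured D-side task times, which is the concern raised about the inferred total task load.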

MITRE has been applying this model for FAA since 2007 to support the generation of staffing standards.1 In 2009 FAA requested that TRB conduct a follow-up study of the model. The request called for an expert review of the MITRE model’s methodologies and its capabilities for estimating en route sector PTT. The committee that performed the study noted that a task-based approach is an improvement over earlier models in that it accounts for traffic complexity, and the committee noted that the model uses available traffic data. That committee made three recommendations (see TRB 2010, 63–64), which shaped this committee’s questions to FAA and MITRE.

____________

1 See http://www.faa.gov/air_traffic/publications/controller_staffing/ for the most recent air traffic controller workforce plan.

FIGURE 3-2 General structure of task load model. (SOURCE: Generated by the committee.)

The first recommendation, summarized as “observe and measure controller task performance,” addressed questions concerning how tasks are modeled. The report noted that task times for seven of the nine modeled tasks are derived by summing subtask times that are not based on data from the field, while task times for the remaining tasks are based on input from subject matter experts—that is, task times are not derived from observing or analyzing controllers performing the tasks in the field or in experiments. The assumption that tasks are performed sequentially and separately does not consider the possibility that certain tasks are performed concurrently and does not account for variability in task completion times due to fluctuating levels of traffic activity and number of controllers working the sector.

MITRE has since reported to this committee that field observations were made in July 2013, with ongoing analysis and comparison with human-in-the-loop simulation data through 2014. The committee was not provided with sufficient detail to ascertain the extent of the completed and planned observations needed to develop and validate the model.

The second recommendation of the 2010 TRB committee, summarized as “model all controller tasks,” noted that the model’s inclusion of nine R-side tasks appears representative of the work performed by the lead controller and “may be sufficient” for analyzing traffic capacity. However, omitting all D-side tasks—those performed by the associate controller—makes the model’s task load output “inadequate for estimating PTT.” The model compensates for the lack of the D-side task load only by using techniques “that infer total task load” to estimate PTT. The techniques for converting the model’s total task load to PTT, such as fuzzy logic modeling, are similarly flawed—the methods rely on operational experts for an understanding of total task load instead of identifying and measuring the time required for a controller to perform the D-side tasks.

MITRE has since reported to this committee that its field and laboratory human-in-the-loop evaluations are designed to collect data on both the R- and D-side controller tasks and task times. MITRE further reported as follows: “The need to explicitly model D-controller tasks and add such a capability to the model will be determined by the cost vs. the potential amount of benefit to be gained, as the capability to directly model D-side tasks may or may not be feasible based on the availability of and/or accessibility to D-side task trigger data” (MITRE 2013, 2).

The third recommendation of the 2010 TRB committee, summarized as “validate model elements,” noted that key assumptions within the model—including the summation of subtasks to find total task time and conversion of R-side task time to PTT—had not been validated. The conversion of the model from its original purpose of estimating sector traffic capacity relied on estimates from subject matter and operational experts; the model was not based on tasks for which measurable performance data existed or could be gained. The 2010 study committee did not find strong documentation that explained the model’s logic and structure or that established and validated the model’s parameters, assumptions, and outputs.

The recommendation emphasized that validation should examine the underlying model itself, particularly assumptions that concern task performance by the controllers when they work alone and in teams, whether tasks are performed sequentially or concurrently, and how total task load affects the pace of task performance. This approach would justify the model’s role as an independent assessment of controller staffing. That role would be negated if the model itself is adjusted to match the current controller staffing level.

MITRE subsequently reported to this committee several model development and validation activities. As noted earlier, MITRE plans a cost–benefit analysis for incorporating the D-side controller, and it plans an evaluation of the accuracy of the task times estimated by GOMS. The results of this evaluation might enable replacement of the fuzzy logic modeling of monitoring with data gathered during human-in-the-loop simulations in a laboratory, and they might verify or contradict inferences and assumptions inherent in the current model of how the R- and D-side controllers interact. With this information, MITRE plans to identify tasks that the model needs to account for but does not yet include. To validate the thresholds for moving from one to two controllers, MITRE intends to apply postscenario verbal protocol analysis during laboratory human-in-the-loop simulations: for each scenario, controllers will be asked to identify the time at which they believe the D-side controller should have been added to support the R-side, and their opinions will be compared with the model’s thresholds.

The discussions indicated that MITRE’s plan for observations and for validating the model may lead to large structural changes, which may require further validation. Such validation would almost certainly extend the overall timeline well beyond the currently estimated September 2014 completion date (MITRE 2013); in the interim, staffing standards would continue to be based on unvalidated model output. MITRE reported the following (MITRE 2013, 3):

One of the goals is to use the results of the evaluation effort to determine if any layers of complexity can be removed from the model while retaining its ability to accurately reflect the historical task workload and estimate the number of controllers needed to service the traffic. A fundamental question that MITRE’s evaluation is intended to answer is: “Do improved task times and task coverage from empirical observation and measurement of controller task performance along with enhanced information on task workload thresholds allow for the removal of data-fitting elements like Fuzzy logic modeling from the model?”

The charter of this committee was broader than that of the 2010 TRB committee. In this broader context, the committee noted that the MITRE model has an extreme level of detail and includes some subtasks that are difficult to model quantitatively and for which there are no observable data. Such detail will require significant time and expense to develop and validate and will be difficult to extend to new controller tasks such as those anticipated with the Next Generation Air Transportation System (NextGen, as discussed in Chapter 5).2 Indeed, MITRE’s response above questions its own model’s complexity. In addition, as noted in Chapter 2, the model is intended to reflect the tasks believed to drive controller workload, which are largely focused on traffic separation. The controllers’ advisory functions are for the most part not included in the model and will not “roll up” in its summation of task time.

Tower Facilities On-Position Staffing Model

FAA updated the on-position staffing model for tower facilities in 2008.3 The model applies to 260 FAA-controlled facilities—130 stand-alone tower with radar (Type 7) sites and the tower portion of 130 combined TRACON–tower (Type 3) sites. It is documented in a report by an FAA–Grant Thornton study team.

The model’s underlying assumptions are that the predominant driver of workforce size is traffic volume and that controller workload (consisting of communication with pilots, airport staff, and other controllers) changes with volume. Accordingly, traffic volume is the input. For each tower facility, traffic data include all departures, arrivals, and local flight operations recorded in 15-minute intervals, 24 hours a day, 365 days a year. All categories of traffic are included—air carrier, air taxi, general aviation, and military.4 The study team visited 20 percent of the towers (representative of various types and levels) to observe and collect data relating traffic operations and controller workload. Its internal “communication model” is constructed as a regression analysis with traffic volume as input. The dimensions of the traffic input for towers are as follows:

• General aviation arrivals and departures,

• Non–general aviation arrivals and departures,

• Touch-and-go landings5 (not included in the previous two dimensions), and

• Overflights [for the Type 7 (tower with radar) facilities].

The output of the regression, controller workload, is communication measured in minutes, with a regression equation for each facility level.

For each 15-minute interval, the model converts traffic operations to controller workload (in communication minutes) for a given crew size. A capacity factor analysis makes an assessment to predict the number of OPCs required to accomplish the workload. This number includes both certified professional controllers (CPCs) and non-CPCs (CPCs in training and developmentals) who are qualified to perform some of the necessary functions of each facility. As a result, the model’s output is not exclusively in terms of CPCs (FAA 2013a, 6; FAA 2013b, 7). The capacity factor analysis is performed with different parameters for each “level” of tower facilities reflecting the nature of the tower operations and positions that may need to be staffed.

____________

2 As noted in a recent report on FAA’s airway transportation systems specialists, a “robust and accessible staffing model should be capable of incorporating … additional inputs as they become available, not only with respect to NextGen but also with respect to inevitable technological advances” (NRC 2013, 4).

3 Rich McCormick, PowerPoint briefing to the committee, February 4, 2013.

4 The data are collected and maintained by the Air Traffic Organization in the Operations Network.

5 A touch-and-go landing involves landing on a runway and taking off again without coming to a complete stop.

As noted in Chapter 2, the model is intended to reflect the tasks believed to drive controller workload, which are largely focused on traffic separation and nominal communications. The controllers’ other advisory functions are not explicitly included in the model. Because the workload model is regressed to observed workload, the impact of these additional functions is implicitly covered to some degree in the rating of acceptable workload levels (on the modeled tasks) to OPC requirements for the traffic situation.
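The two steps of the tower model described above (a regression from traffic counts to communication minutes, followed by a capacity factor that converts workload to OPCs) can be illustrated with a minimal Python sketch. The coefficients, the intercept, the capacity value, and the function names are hypothetical placeholders rather than values from the study team's report, and the simple ceiling rule is only one assumed form that the capacity factor analysis could take.

```python
import math

# Hypothetical regression coefficients (communication minutes per operation)
# for one facility level; the actual values are not given in this chapter.
COEFFS = {
    "ga_ops": 0.40,        # general aviation arrivals and departures
    "non_ga_ops": 0.55,    # non-general aviation arrivals and departures
    "touch_and_go": 0.30,  # touch-and-go landings
    "overflights": 0.20,   # overflights (Type 7 facilities only)
}
INTERCEPT = 2.0          # hypothetical baseline minutes per interval
CAPACITY_MINUTES = 9.0   # assumed sustainable minutes per controller per interval


def communication_minutes(traffic):
    """Linear regression: traffic counts in one 15-minute interval -> minutes."""
    return INTERCEPT + sum(COEFFS[k] * traffic.get(k, 0) for k in COEFFS)


def required_opcs(traffic):
    """Convert workload to on-position controllers with an assumed ceiling rule."""
    return max(1, math.ceil(communication_minutes(traffic) / CAPACITY_MINUTES))


# One hypothetical 15-minute interval of traffic
interval = {"ga_ops": 6, "non_ga_ops": 10, "touch_and_go": 4, "overflights": 2}
print(communication_minutes(interval), required_opcs(interval))
```

In practice a separate set of coefficients and capacity parameters would be needed for each facility level, since the text notes that the regression and the capacity factor analysis are both level specific.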

TRACON Facilities On-Position Staffing Model

FAA updated the on-position staffing model for TRACON facilities in 2009.6 The model applies to 157 FAA-controlled facilities: three consolidated TRACON (Type 9) sites, 24 stand-alone TRACON (Type 2) sites, and the TRACON portion of 130 combined TRACON–tower (Type 3) sites. A study team (with many of the same members as the team for tower facilities) documented the TRACON model (FAA 2013b).

From a big-picture point of view, the model for TRACONs performs the same function as the on-position staffing model for towers, namely, the conversion of traffic input for a 15-minute interval to the number of OPCs required for that interval. However, the key factors underlying the model are sector type and workload type. A sector is dynamically designated as one of the following types: departure, feeder, final approach, or mixed (FAA 2013b, 21).

Estimated controller workload is the aggregate of three component workloads, which are uniquely specified for the sector type. The component workloads are as follows:

• Fixed workload, that is, “time spent on tasks … not directly dependent on the number of aircraft in the system” (FAA 2013b, 25);

• Variable workload, that is, “time spent on activities that are dependent on operational activity in the airspace and incorporates the complexity metrics” (FAA 2013b, 26–27); and

• Active scope workload, that is, “time the controller must monitor the scope due to the complexity of airspace” (FAA 2013b, 29).

Although no “communication model” is explicitly described in the TRACON model report, the sector type and workload type equations perform the comparable function in that they estimate workload (controller activity measured in minutes) on the basis of traffic and other inputs. A capacity factor representing the maximum sustainable workload in a 15-minute period is applied to the aggregated fixed, variable, and active scope workloads. Again, the output of the model is the number of OPCs for each time interval.
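The aggregation just described can be sketched in a few lines of Python. This is an illustration under stated assumptions: the function name and the example minutes are hypothetical, and dividing the aggregate workload by the capacity factor and rounding up is an assumed reading of how the factor "is applied," not a formula taken from the TRACON model report.

```python
import math


def tracon_opcs(fixed_min, variable_min, active_scope_min, capacity_min):
    """Sum the three component workloads (minutes within one 15-minute
    interval) and divide by an assumed capacity factor (maximum sustainable
    minutes per controller) to estimate on-position controllers."""
    if capacity_min <= 0:
        raise ValueError("capacity factor must be positive")
    total = fixed_min + variable_min + active_scope_min
    return max(1, math.ceil(total / capacity_min))


# Hypothetical workloads for one sector in one interval
print(tracon_opcs(fixed_min=3.0, variable_min=8.5, active_scope_min=4.0,
                  capacity_min=10.0))
```

Because the component workloads are specified by sector type, a fuller version would select different workload equations for departure, feeder, final approach, and mixed sectors before aggregating.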

As in the case of the tower model, the TRACON model is intended to reflect tasks believed to drive controller workload, which are largely focused on traffic separation and nominal communications. The controllers’ other advisory functions are not explicitly included in this model. Because the workload model is regressed to observed workload, the impact of these additional functions is implicitly covered to some degree in the rating of acceptable workload levels (on the modeled tasks) to OPC requirements for the traffic situation.

____________

6 Rich McCormick, PowerPoint briefing to the committee, February 4, 2013.

Shift Coverage Model

Once the on-position staffing models provide an estimate of OPCs for each 15-minute time interval, the shift coverage model (SCM) determines the optimal number of controllers for meeting the on-position staffing requirements for a 24-hour day. This number is called the position-qualified controller (PQC) staffing level. It accounts for some general scheduling constraints, including the following:

• A 30-minute break for a meal within a specified window in the shift;

• Two nonconsecutive rest periods, each at least 15 minutes;

• No more than 2 consecutive hours on position; and

• The capability of allowing 8, 9, or 10 operational hours in a shift (the default maximum is set to 8 hours).

These constraints represent important concerns, but they do not reflect all of the requirements associated with FAA policy and practices or with the collective bargaining agreement. In particular, the SCM does not take into account constraints that can cross a 24-hour (midnight to midnight) period. Examples are minimum breaks between shifts (an 8-hour break before the start of evening and night shifts, a 9-hour break before the start of a day shift, and a 12-hour break after a night shift) and a maximum of 6 consecutive days without a day off.

During one demonstration to the committee, the SCM generated outputs that were not consistent with the scheduling constraints above. Furthermore, as reported to the committee, the model’s output is not always optimized to generate the minimum number of shifts to satisfy OPC requirements.
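A consistency check of the kind that would expose such outputs can be sketched as follows. The encoding of a shift as an ordered list of segments, the function name, and the treatment of shift length as total elapsed minutes are assumptions made for illustration; the meal window is not checked because its bounds are not given here.

```python
def check_shift(segments, max_shift_hours=8):
    """Return the list of within-shift constraints that a shift violates.

    `segments` is an ordered list of (kind, minutes) pairs, where kind is
    "position", "rest", or "meal" (a hypothetical encoding).
    """
    violations = []

    # Meal: at least one break of 30 minutes or more (window not checked).
    if not any(k == "meal" and m >= 30 for k, m in segments):
        violations.append("meal break")

    # Rest: at least two rest periods of 15+ minutes that are not adjacent.
    rest_idx = [i for i, (k, m) in enumerate(segments) if k == "rest" and m >= 15]
    if not any(b - a > 1 for a in rest_idx for b in rest_idx if b > a):
        violations.append("rest periods")

    # Time on position: no run of consecutive position time over 120 minutes.
    run = 0
    for kind, minutes in segments:
        run = run + minutes if kind == "position" else 0
        if run > 120:
            violations.append("consecutive time on position")
            break

    # Shift length: total minutes within the allowed maximum.
    if sum(m for _, m in segments) > max_shift_hours * 60:
        violations.append("shift length")

    return violations


good_shift = [("position", 110), ("rest", 15), ("position", 105), ("meal", 30),
              ("position", 100), ("rest", 15), ("position", 105)]
bad_shift = [("position", 150), ("meal", 30), ("position", 120),
             ("rest", 15), ("rest", 15)]
print(check_shift(good_shift))
print(check_shift(bad_shift))
```

A check like this covers only the within-shift rules listed above. The constraints that cross a 24-hour period, such as minimum breaks between shifts, would require the schedules of adjacent days as additional input.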

Daily Staffing Forecast Model

The daily staffing forecast model (DSFM) is a regression analysis designed to estimate the daily staffing requirements (DSRs). The DSRs can be thought of as the number of controllers present for duty at a facility each day to meet the on-position staffing requirements for each 15-minute interval. The regression maps historical total operations data7 to the output of the SCM to find the PQC associated with the 90th percentile day of operational traffic data.8
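The DSFM logic described above can be illustrated with a short Python sketch. It assumes a single-predictor least squares fit of daily PQC (the SCM output) on daily total operations and a nearest-rank percentile; the actual regression form and percentile definition used by ALA are not documented in this chapter, and all function names are hypothetical. The optional forecast factor reflects the adjustment for future years mentioned in the notes to this section.

```python
import math


def fit_line(x, y):
    """Ordinary least squares fit of y = a + b*x with one predictor."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    b = (sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
         / sum((xi - mx) ** 2 for xi in x))
    return my - b * mx, b


def percentile_nearest_rank(values, pct):
    """Nearest-rank percentile (one of several common definitions)."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def daily_staffing_requirement(daily_ops, daily_pqc, pct=90, forecast_factor=1.0):
    """PQC predicted for the pct-th percentile traffic day.

    forecast_factor scales the percentile operations count for a future year.
    """
    a, b = fit_line(daily_ops, daily_pqc)
    ops = percentile_nearest_rank(daily_ops, pct) * forecast_factor
    return a + b * ops
```

Calling `daily_staffing_requirement` with `pct` set to 100, 80, or 50 instead of 90 reproduces the kind of sensitivity run that varies only the percentile day.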

At the committee’s request, the Office of Labor Analysis (ALA) made multiple model runs in which only the percentile days chosen in the DSFM were varied, as shown in Table 3-1. The controller workforce could be 9 percent larger if it were sized to the heaviest traffic day. Conversely, it could be 8 percent smaller if it were sized to the median traffic day.

Therefore, the implications of choosing this percentile should be considered. The choice of the 90th percentile would result in acceptable staffing 90 percent of the time and in low staffing 10 percent of the time. From an operational perspective, low staffing is generally reflected in delays in providing services to pilots. This apparently conservative choice may compensate for unmodeled aspects of controller staffing. Thus, the impact of a change in this percentile threshold on safety and delay may be complex.

TABLE 3-1 Percentage Change in DSRs Relative to Varying Percentile Days

| Facility Type (columns: percentile day chosen) | 100th | 90th | 80th | 70th | 60th | 50th |
|---|---|---|---|---|---|---|
| Terminal facilities | 10 | 0 | –3 | –5 | –6 | –8 |
| En route centers | 7 | 0 | –2 | –4 | –5 | –7 |
| Overall | 9 | 0 | –3 | –4 | –6 | –8 |

SOURCE: Data provided by FAA; table generated by the committee.

____________

7 For FY 2014, the Office of Labor Analysis reports that the Traffic Count Index Program has replaced the Terminal Track Analysis Program.

8 For a future year, the 90th percentile operations count is modified by the traffic forecast factor.

Traffic Forecasts and 90th Percentile Day Staffing

Air traffic forecasts are necessary inputs in the preparation of air traffic controller staffing plans because the DSR values provided by the DSFM are scaled to match the 90th percentile day forecast (see Figure 3-1). The amount of look-ahead time is matched to the time required to train new controllers for each facility. Thus, for some facilities a 1-year forecast is used, for others a 2-year forecast is used, and for the most complex facilities requiring the most training time a 3-year forecast is applied.

The forecasting process is independent of the staffing standards process. The forecasts of primary interest are those concerned with the number of aircraft operations at (a) airports with FAA-operated towers, (b) TRACONs, and (c) en route centers. FAA’s Forecast and Performance Analysis Division of the Office of Aviation Policy and Plans generates the forecasts on an annual basis. Forecasts for towers and TRACONs, including national totals, are provided in the Terminal Area Forecasts (TAF)9 and summarized in FAA’s annual Terminal Area Forecast Summary Report.10 Summaries of forecasts of en route (air route traffic control center) operations are published in FAA’s annual report Forecasts of IFR Aircraft Handled by FAA Air Route Traffic Control Centers. The Appendix presents a brief description of the methodological approach used in preparing these forecasts. The description is, of necessity, based primarily on interviews with FAA personnel11 and a review of a small number of technical papers. Detailed reports documenting FAA’s forecasting models and methodology either do not exist or could not be provided in response to the committee’s requests during its deliberations.

Forecasting air traffic operations is challenging. For example, in predicting commercial aircraft operations, passenger demand must be estimated and converted into aircraft operations through forecasts of the characteristics of the aircraft fleet that the airlines will use and the load factors they will aim for. General aviation activity forecasts are also difficult because of the sector’s high volatility. The challenge is compounded by the need to produce forecasts for individual airports or specific origin–destination pairs (see Appendix).

____________

9 See http://aspm.faa.gov.

10 Aviation forecasts are found at http://www.faa.gov/about/office_org/headquarters_offices/apl/aviation_forecasts; FAA prepares a national forecast reported annually in the FAA Aerospace Forecasts. The aggregate national forecasts reported in FAA’s Terminal Area Forecast Summary Report can differ from those in the FAA Aerospace Forecasts. For example, the TAF includes traffic activity at facilities not serviced by FAA, whereas the FAA national forecast does not. Since the focus of air traffic control staffing is on local requirements, the TAF and the air route traffic control center forecasts are more relevant than the national FAA Aerospace Forecasts. The remainder of this section focuses on the TAF and the air route traffic control center forecasts.

11 Roger Schaufele, FAA, briefing to the forecasting subgroup of the committee, December 12, 2013.

The forecasting challenge is exacerbated by the difficulty in anticipating the change in air traffic operations between 2000 and present, which is partly attributable to such events as the September 11, 2001, attacks and the financial crisis of 2008–2009. These events could not have been predicted and have affected air travel negatively. Similarly, airline industry developments, such as mergers of major carriers and network consolidations, have reduced the availability of air travel options. For example, several major airports—such as those of Cincinnati, Ohio; Memphis, Tennessee; and Salt Lake City, Utah—and many secondary airports have experienced sharp reductions in the number of available flights. In addition, uncertainties may be present in some of the inputs that FAA uses in its econometric models, such as forecasts of economic growth generated by the Office of Management and Budget.

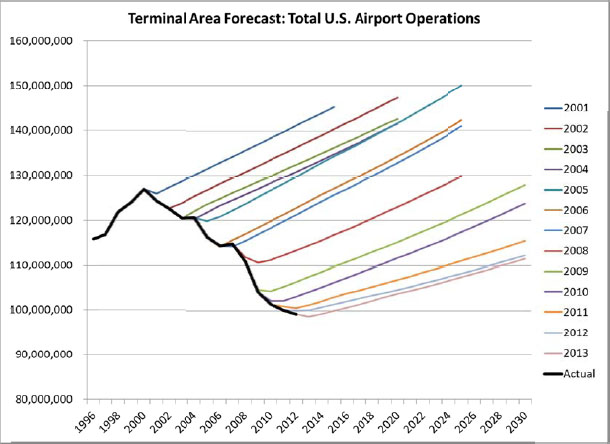

FAA’s forecasts did not capture the striking reduction in air traffic activity, as measured by aggregate numbers of aircraft operations, that has occurred since 2000. The intensity of this trend varies by location and by industry sector (commercial aviation versus general aviation), but the cumulative effect is unprecedented in modern U.S. aviation history. Table 3-2 presents a summary comparison of FAA air traffic activity levels in FY 2000 and FY 2012.

Table 3-2 shows significant declines in all three types of facilities, ranging from 13 percent for en route operations to 29 percent for tower operations. General aviation operations at airports declined by 41 percent, while commercial operations declined by only 15 percent.

Figure 3-3 shows the declining trend of actual total airport operations and the corresponding forecasts issued by FAA over the period 2001–2013. For example, the 2002 forecast for 2012 projected in excess of 30 percent more operations than actually took place in that year. In addition, according to the 2012 forecast, the total number of U.S. airport operations in 2020 is projected to be more than 40 percent lower than the number of movements projected in 2002.

TABLE 3-2 U.S. Air Traffic Operations, FY 2000 Versus FY 2012

| Type of Air Traffic Control Operations | FY 2000 (millions) | FY 2012 (millions) | Percent Change |
|---|---|---|---|
| Tower operations (commercial, commuter, and taxi) | 24.3 | 20.7 | –15 |
| Tower operations (general aviation) | 27.8 | 16.3 | –41 |
| Tower total operations<sup>a</sup> | 54.2 | 38.6 | –29 |
| TRACON total operations | 51.9 | 37.8 | –27 |
| En route total operations | 46.8 | 40.8 | –13 |

<sup>a</sup> Total tower operations includes military.

SOURCE: Generated by the committee; data from Air Traffic Activity Data System and TAF.
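The percent changes in Table 3-2 follow directly from the operations counts, as the short Python check below shows. The dictionary keys and function name are labels chosen for this illustration; the counts are the values in the table, in millions.

```python
# Operations in millions, from Table 3-2: (FY 2000, FY 2012)
OPERATIONS = {
    "Tower (commercial, commuter, and taxi)": (24.3, 20.7),
    "Tower (general aviation)": (27.8, 16.3),
    "Tower total": (54.2, 38.6),
    "TRACON total": (51.9, 37.8),
    "En route total": (46.8, 40.8),
}


def percent_change(old, new):
    """Percent change from old to new, rounded to the nearest whole percent."""
    return round((new - old) / old * 100)


changes = {name: percent_change(a, b) for name, (a, b) in OPERATIONS.items()}
for name, change in changes.items():
    print(f"{name}: {change} percent")
```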

FIGURE 3-3 Total U.S. airport operations: actual traffic from 1997 to 2012 and FAA forecasts issued annually between 2001 and 2013. (All U.S. airport operations are included, not just FAA towers; actual operations data are those reported at the time of the corresponding forecast.) (SOURCE: TAF; figure prepared by MCR Federal, LLC.)

Decisions concerning new hires of air traffic controllers are essentially irreversible. For most facilities, 2 to 3 years of training are required for a controller to reach full certification (FAA 2013c). After they complete their training, air traffic controllers will typically serve until they become eligible for retirement and, most likely, for several years thereafter. The consistent optimism in traffic forecasts tends to result in the hiring of more controllers than required. That effect can only be partially corrected in subsequent years after forecasts have been recognized as optimistic.

Availability Factor and Adjustments

The workforce calculations to this point have focused on the number of controllers required to be available to perform duties on position. Like many other workers, the controller has a 5-day, 40-hour workweek. There are many hours when the controller is not on duty, either away from the facility or at the facility but not available for position-related duties. ALA reports12 that annual off-position time (e.g., annual leave, sick leave, holiday leave usage) averages 422 hours per controller. With an annual base of 2,087 hours of work (i.e., a man-year), ALA reports “total available” time as an average of 1,665 hours—the difference between annual base and off-position time. Accordingly, the preliminary availability factor is the ratio of annual base hours to total available time, that is, 2,087/1,665 = 1.254; put another way, the total annual hours required of controllers will be this fraction multiplied by the required time on position. Accounting for the requirement for 7-day coverage in a week, during which each controller typically works five shifts, yields a factor of 7/5 = 1.4. The total availability factor is the product of these two factors (1.254 × 1.4), or 1.76.

____________

12 Rich McCormick, FAA, presentation to the committee, March 2013.

The Organisation for Economic Co-operation and Development reports13 that the average hours worked per worker in the United States was 1,790 in 2012. At first glance, this appears to be significantly larger than the 1,665 total available hours noted above. Although the bulk of off-position time is hours not worked (annual leave, sick leave, holiday leave, etc.), it does include some hours worked, such as training, meetings, work groups, and union activities. Augmenting the 1,665 hours by these other hours worked in off-position time makes the two figures more comparable.

In some instances, traffic volume may be so light that the process does not generate staffing requirements sufficient for maintaining a minimum number of controllers14 on position (watch staffing) during all hours of operation. In such cases, typically at small facilities, the staffing standard is manually adjusted to ensure that FAA’s watch staffing levels are satisfied. Similarly, facilities having unique physical configurations, such as multiple operational towers, require special attention and adjustment to the staffing standard (FAA 2013a, Section 2.4, 34–36).

The application of the availability factor to the DSR, with any watch staffing or site-specific adjustments, completes the staffing standards process.
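The availability factor arithmetic in this section can be retraced with a short Python sketch; the function and variable names are illustrative. With the rounded hours quoted in the text, the ratio evaluates to roughly 1.2535 and the product to roughly 1.755, slightly below the reported 1.254 and 1.76. The small gap is presumably due to rounding in the published inputs, although that explanation is an assumption and is not stated in the source.

```python
ANNUAL_BASE_HOURS = 2087    # annual base hours of work, as reported by ALA
OFF_POSITION_HOURS = 422    # average annual off-position hours per controller


def availability_factor(base=ANNUAL_BASE_HOURS, off=OFF_POSITION_HOURS,
                        days_covered=7, shifts_worked=5):
    """Return (available hours, preliminary factor, coverage factor, total)."""
    available = base - off                      # "total available" time
    preliminary = base / available              # annual base / available time
    coverage = days_covered / shifts_worked     # 7-day coverage, 5 shifts each
    return available, preliminary, coverage, preliminary * coverage


available, preliminary, coverage, total = availability_factor()
print(available, round(preliminary, 4), coverage, round(total, 4))
```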

STAFFING RANGE INPUTS AND CALCULATION

As shown in Figure 3-1, FAA’s staffing standards have two main uses: as one input in calculating the staffing range and in determining the staffing target in the ALA-generated hiring plan. The development of the staffing ranges and staffing target is a multiple-step process and is described in the next section. The ALA hiring plan and the agreed-on staffing plan with the Air Traffic Organization (ATO) are discussed in Chapter 4.

According to the most recent controller workforce plan (FAA 2013c, 14), the four inputs to each facility’s staffing range are as follows:

• The staffing standard—the activity-based, schedule-constrained controller staffing requirements described in the above standards process;

• Service unit input (SUI)—“the number of controllers required to staff the facility, typically based on past position utilization and other unique facility operational requirements”;

• Past productivity—“the headcount required to match the historical best productivity,” where “productivity is defined as operations per controller”; and

• Peer productivity—“the headcount required to match peer group productivity.” Peer group productivity is a calculated productivity for facilities of the same type and level.

____________

13 http://stats.oecd.org/Index.aspx?DatasetCode=ANHRS.

14 At many sites the minimum is one controller per shift, increased to two controllers at some times of day. At combined TRACON–towers (Type 3), the minimum is two controllers on position during hours of operation.

The staffing standard, past productivity, and peer productivity inputs to the staffing range are data driven. For the two productivity inputs, outliers are ignored. SUI is derived from a combination of past position utilization and field subject matter expertise.

The midpoint of the staffing range is calculated as the unweighted average of the four (or possibly fewer) inputs rounded to the nearest integer. The upper and lower limits of the staffing range are the values 10 percent greater and 10 percent lower than the midpoint, respectively, again rounded to the nearest integer. ALA provided the committee with each facility’s staffing range inputs and calculations for FY 2011 through FY 2013.
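The staffing range calculation can be expressed as a minimal Python sketch. The function names and example headcounts are hypothetical. Two details are assumptions because the text does not settle them: ties are rounded up, and the 10 percent limits are taken from the already rounded midpoint.

```python
import math


def round_half_up(x):
    """Round to the nearest integer, with ties rounded up (an assumption)."""
    return math.floor(x + 0.5)


def staffing_range(inputs):
    """Return (lower, midpoint, upper) from up to four inputs.

    `inputs` maps an input name to a headcount, or to None when the value
    is missing or was excluded as an outlier.
    """
    values = [v for v in inputs.values() if v is not None]
    if not values:
        raise ValueError("at least one input is required")
    midpoint = round_half_up(sum(values) / len(values))  # unweighted average
    return (round_half_up(midpoint * 0.9), midpoint,
            round_half_up(midpoint * 1.1))


# Hypothetical facility with no usable peer productivity value
example = {"staffing_standard": 52, "sui": 55,
           "past_productivity": 48, "peer_productivity": None}
print(staffing_range(example))
```

Because the average is unweighted, a facility with only the staffing standard and SUI available gives each of those inputs half of the weight, whereas a facility with all four inputs gives each one quarter.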

All facilities typically have staffing standard and SUI values. For the 3-year period for which data were provided, an average of 120 facilities (38 percent) had missing (or outlier) past productivity values, while an average of 159 facilities (50 percent) had missing (or outlier) peer productivity values. A facility or service area may raise a concern, but documentation suggesting that the majority of facilities have any direct or specific input into the staff planning process was not provided to the committee. According to FAA, the SUI incorporated into the planning process was primarily determined at the service area and headquarters levels and may not have adequately reflected operational needs expressed by the individual facilities. Thus, this process could create a dichotomy between the staffing requirements developed centrally and staffing levels deemed operationally necessary by the field.

During FY 2013, FAA began to address this disconnect with the establishment of two field focus teams (FFTs), one for en route facilities and one for terminal facilities, to facilitate workforce planning. Each FFT was made up of senior FAA managers who were tasked with developing plans that more strategically address staffing issues such as imbalances between facilities. For terminal facilities, the FFTs incorporated past position utilization data in the Terminal Validation Tool (TVT), an Excel-based application used to identify the field’s estimate of staffing needs.15

Historically, SUI for the en route centers tended to mirror ALA’s staffing standard for most cases; however, in those cases where SUI differed from the staffing standard, FAA was unable to provide documentation on how the SUI estimates were developed. Therefore, ATO has indicated that it intends to use an En Route Validation Tool (EVT), similar to the TVT, to assist in generating an objective SUI. That program has yet to be vetted. Little documentation is available on the design and use of either the TVT or the EVT, so the committee could not explore their effect on staffing models.

Operational schedules are the responsibility of each facility. A default schedule template familiar to the facility’s personnel is often used. Such schedules have evolved locally and reflect local conditions and actual schedules at each facility, but they may also reflect scheduling practices that are inconsistent with national policy or that do not incorporate best practices for managing the risk of fatigue, as described in Chapter 2.

As discussed in the staffing standards section earlier in this chapter, the SCM is a scheduling algorithm that calculates the number of PQCs needed for a facility. The algorithm is part of the centralized planning process at FAA headquarters that assumes some schedule for each facility generated from a basic set of work and fatigue rules. However, such a centralized schedule cannot work for all facilities—as reported to the committee, one size does not fit all.16

____________

15 ATO, FAA, teleconference with the committee, November 19, 2013.

16 Glen Buchanan, FAA, presentation to the committee, March 2013.

Each facility may have specific local conditions, such as varying air traffic patterns throughout the day or heavy congestion on surrounding roads at certain times of the day. As a result of such congestion, controllers’ local commutes will take longer, which may negate efforts to mitigate fatigue by extending intervals between scheduled duty times. Such variations in scheduling can result in staffing standards that are based on incorrect assumptions.

As described in Chapter 2, FAA is attempting to implement a more sophisticated scheduling tool (Operational Planning and Scheduling). Such a tool could be used at facilities, with each facility granted a level of flexibility appropriate to adapting its schedule to local conditions. The local adaptations in scheduling could be fed back to inform the centralized calculation of the staffing standards. Such a scheduling tool may allow for more efficient schedules at facilities, reduce the use of overtime, and (as noted in Chapter 2) help facility managers apply best practices in managing fatigue risk.

The generation of the staffing standards, and ultimately the staffing plan, is inherently a function of central planning units in ALA and ATO at FAA headquarters. How individual facilities interact with this centralized process with regard to specific needs is unclear and not fully documented. The operational reality of staffing at the facility level may not be reflected in the mathematical models underlying the staff planning process.

The process needs to be well defined and use the best available input. Both sides of the staffing process, facilities and headquarters, need a better understanding of how a facility’s staffing level is assessed or counted, how staffing ranges are generated, whether the staffing level accounts for all duties and demands of the position or only for “core duties,” and what the target hiring or staffing number is. ALA acknowledges that it needs better outreach to the service areas and facilities, although the staffing ranges are published. For example, ALA recognizes the need for more constructive engagement with facilities when the staffing standards vary significantly from the SUI (these two values for each facility differ from each other by 7 percent on average). Such engagement could involve facility management making a “bottom–up” business case when the two staffing range components disagree.17

The disconnect between the outputs of the modeling process and the operational perspective generated concerns that prompted ATO and the National Air Traffic Controllers Association to embark on a collaborative effort to review, revise, or improve data-based operational models for the distribution of air traffic control specialists throughout the field facilities. The effort seeks to uncover reasons why the modeling process has been generating staffing ranges that are perceived by some facilities as inconsistent with operational needs and to assist in rectifying the concerns.18 The FFTs mentioned earlier may be subsumed into this collaborative endeavor to maintain a commonality of approach so that labor and management collectively can improve the theoretical and practical aspects of staffing the air traffic system.

____________

17 Rich McCormick, FAA, presentation to the committee, March 2013.

18 Andrew LeBovidge, committee member and National Air Traffic Controllers Association representative, personal communication to Safety subgroup of the committee, March 19, 2013.

FAA operates 315 air traffic control facilities, which are staffed by approximately 15,000 controllers. FAA uses a series of mathematical models to estimate the staffing standard—the total number of PQCs needed at each facility to cover 90th percentile day traffic.
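As a rough illustration of what a "90th percentile day" means, the sketch below picks the traffic count that 90 percent of observed days do not exceed. It uses the nearest-rank percentile method, which is an assumption made here for simplicity; the report does not state which percentile convention FAA applies. The function name `percentile_day` and its inputs are hypothetical.

```python
def percentile_day(daily_counts, percentile=90):
    """Return the daily traffic count at the given percentile (nearest-rank method).

    daily_counts: iterable of daily operation counts for one facility (hypothetical input).
    percentile:   integer in 1..100; 90 matches the "90th percentile day" in the text.
    The nearest-rank convention is an assumption, not FAA's documented method.
    """
    ordered = sorted(daily_counts)
    if not ordered:
        raise ValueError("daily_counts must not be empty")
    if not isinstance(percentile, int) or not 1 <= percentile <= 100:
        raise ValueError("percentile must be an integer from 1 to 100")
    # Integer ceiling of percentile * n / 100, avoiding floating-point rounding.
    rank = (percentile * len(ordered) + 99) // 100
    return ordered[rank - 1]
```

Staffing to this value rather than to the average day means that traffic on most days falls below the level the standard is sized for, which is the source of the "safety buffer" the committee refers to in Finding 3-1.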

The committee had two concerns with the models common to the staffing standards for all types of facilities. The first relates to the SCM, a scheduling algorithm that has limited capabilities and may not reflect the scheduling complexities of an individual facility. The staffing standards could be improved if the central planning process and facilities used the same scheduling tool, if facilities were able to adapt their schedules to local conditions, and if central planning were aware of those adaptations.

Second, air traffic forecasts are necessary inputs for the air traffic control staffing standards process, yet over the past 12 years, the annual forecasts have been overly optimistic. The forecasting models and processes are not documented well enough for this committee to evaluate them in detail and to assess whether they provide a rigorous, consistent basis for generating staffing standards. However, the consistent optimism in traffic forecasts results in a tendency to hire more controllers than required. This effect can only be partially corrected in subsequent years after recognition that hiring has been based on inflated forecasts.

Different models are applied for different types of facilities at the first step in generating the staffing standards. While the committee found the models for tower and TRACON facilities to be based on well-documented, appropriate constructs, it had concerns with the MITRE task load model used to estimate the on-position staffing requirements for en route control centers. FAA and MITRE have presented a plan and proposed timeline for meeting the recommendations of the 2010 study committee, which also had concerns with regard to this model, but they had not completed the plan’s proposed activities at the time of this committee’s report. The current committee questions the appropriateness of continuing to use the MITRE model in determining the number of OPCs for en route facilities.

Finding 3-1. The overall process for generating staffing standards appears reasonable, except where noted below. Starting with models for estimating OPCs is logical. The models for tower and TRACON facilities, which are developed from observations of a representative sample of these facilities, relate traffic volume data for each facility to the prediction of controller activity and estimate on-position requirements on the basis of controller workload.

The analytical approach in the DSFM appears to be realistic. The “safety buffer” resulting from use of the 90th percentile day of operations permits controllers to have reasonable on-position times during a shift.19 FAA’s current availability factor calculation is consistent with generally accepted practices.

Finding 3-2. FAA’s traffic forecasts are persistently high, with correspondingly high long-term hiring estimates. The projected number of air traffic control hires needed over a 10-year period, as reflected in the controller workforce plan, relies directly on traffic forecasts generated by a separate organization within FAA. The medium- and long-term projections of air traffic operations appear optimistic and unreliable and could provide inaccurate long-term hiring estimates. Persistent optimism in traffic forecasts brings about a tendency to hire more controllers than required, particularly for facilities requiring 3 years of training for new controllers to qualify. This effect can only be partially corrected in subsequent years.

____________

19 ALA reports that the average controller spent approximately 4 hours on position during a nominal 8-hour shift in FY 2013 (slightly more at terminal facilities, slightly less at en route centers).

Finding 3-3. The ability to estimate staffing standards centrally is limited by poor assumptions about scheduling. The SCM is used at the national level to make assumptions about controllers’ schedules with regard to meeting traffic demand and constraints on allowable work shifts. The tool is limited in that it does not account for certain constraints on work shifts (e.g., rest requirements on off-duty time crossing midnight), does not account for significant considerations at local facilities requiring them to adapt their schedules, and was observed by committee members to produce results that were inconsistent with its own documentation.

Recommendation 3-1. FAA and the National Air Traffic Controllers Association should collaborate to implement an operational scheduling tool and appropriate procedures for its use at each facility. They should establish shift–schedule templates suitable for each facility’s traffic patterns. More than one standard schedule should be available to each facility, and a “request for further consideration of unique circumstances” process should be available for facilities if their managers believe that local considerations require adjustments in schedule templates. The scheduling tool and associated procedures should allow each facility to design, revise, and publish its schedules on the basis of best practices in fatigue mitigation. The scheduling templates used at the facility should be transparent and available to others, including the staffing process. This may be fostered by the scheduling tool having the capability to archive the templates in a manner that allows various organizations, including central staffing processes, to access them as appropriate.

Finding 3-4. With regard to the task load model used for estimating the number of OPCs needed for en route centers, FAA and MITRE have yet to meet the 2010 study committee’s recommendations. The current plan may address the modeling and validation recommendations in the 2010 study or identify the need for further observations and human-in-the-loop simulations to provide the necessary data. It may also indicate that extensive changes are required in the workload model.

The current committee discussed not only whether the model is (or can be made) accurate but also whether the model form is appropriate for its function in the generation of staffing standards for en route centers (which was not its original purpose). Given its extreme level of detail, the sheer number of elements needing to be modeled and validated would entail significant cost and delay. The modeling of tasks and subtasks in detail makes the output more sensitive to omitted tasks, such as the issuing of safety advisories and the provision of help for aircraft in abnormal situations, which are likely subsumed in the higher-level regression models used in the equivalent models applied to tower and TRACON facilities. The cost of adapting the model to predict NextGen staffing standards could be substantial, and the forecasts would need to be validated by monitoring the staffing they call for over the course of several years.

The detailed structure of the MITRE model, which drills down to individual cognitive subtasks and sums their task times, may itself be a concern. Such a large model may be costly to validate and may also require redefinition and revalidation. Given that the model’s output requires validation by assessments of controllers and facility managers as to whether it correctly predicts OPCs, a simpler model based on data that can be observed easily and objectively, closer in form to the tower and TRACON models, may have greater construct validity at lower cost.

Recommendation 3-2. FAA should develop a new, simpler en route model built on data that can be observed easily and objectively, closer in form to the terminal and tower models described in this chapter. In developing the model, a cost–benefit analysis would need to be made that compares the value of the increased insight provided by greater model detail with the financial and time costs of developing and validating the model.

Finding 3-5. Most of the models underlying staffing standards generation appear to be well documented and consistently applied, but traffic forecasting and the process of calculating the staffing ranges were opaque and open to concerns that they were arbitrary or inconsistent. This is especially true of the determination of SUI. When differences occur between the staffing standard and SUI outputs (or facilities’ considerations), proper engagement and communication between headquarters and the field are necessary to ensure that the process is transparent. Likewise, it was unclear which workforce quantity is used for comparing the staffing range with the “acceptable,” “high,” or “low” assessment of staffing for each facility presented in the current controller workforce plan.

Recommendation 3-3. FAA should ensure that the field understands the staffing process by providing greater clarity and transparency, and it should continue collaborative efforts ensuring that local facility considerations are properly addressed in—and continuously fed back to—its generation of the staffing standards.

REFERENCES

Abbreviations

| Abbreviation | Name |
| --- | --- |
| FAA | Federal Aviation Administration |
| NRC | National Research Council |
| TRB | Transportation Research Board |

FAA. 2013a. Air Traffic Control Staffing Standards Update for Tower Facilities. Final report. Feb. 7.

FAA. 2013b. Air Traffic Control Staffing Standards Update for TRACON Facilities. Final report. Feb. 7.

FAA. 2013c. A Plan for the Future: 10-Year Strategy for the Air Traffic Control Workforce, 2013–2022. http://www.faa.gov/air_traffic/publications/controller_staffing/media/CWP_2013.pdf.

MITRE. 2013. En Route Workload Model Evaluation Timeline with Significant Milestones. White paper response to committee, Sept.

NRC. 2013. Assessment of Staffing Needs of Systems Specialists in Aviation. National Academies, Washington, D.C.

TRB. 1997. Special Report 250: Air Traffic Control Facilities: Improving Methods to Determine Staffing Requirements. National Research Council, Washington, D.C.

TRB. 2010. Special Report 301: Air Traffic Controller Staffing in the En Route Domain: A Review of the Federal Aviation Administration’s Task Load Model. National Academies, Washington, D.C.

Development and Implementation of Staffing Plan

The model-based staffing standards and the staffing ranges described in Chapter 3 are developed by the Federal Aviation Administration’s (FAA’s) Office of Labor Analysis (ALA) to inform an annual hiring plan. The goal of the hiring plan is to move each facility to a level consistent with the staffing range. The Air Traffic Organization (ATO) and ALA then develop an annual staffing plan, including new hires and transfers among existing staff, that serves as the basis for decisions concerning each of FAA’s 315 operational air traffic control (ATC) facilities. The execution of the staffing plans, which results in new hires and transfers and in the placement of personnel among facilities, is carried out by ATO.

This chapter focuses on the methods applied by FAA in its detailed staff planning and execution. In some cases the methods were unclear to the committee; they are analyzed on the basis of FAA data representing typical inputs to the methods and their outputs. The efficacy of staff planning and execution depends on the extent to which the result is consistent with the published controller workforce plan. That measure can change as FAA’s methods change.

The chapter describes how FAA assesses current facility staffing status and explores how FAA develops its hiring and staffing plans. It describes the committee’s understanding of the execution process and illustrates how the number and location of controllers in the workforce compare with the goals of the staffing plan. Potential strategies for improvements in staff planning are noted. The final section summarizes the chapter and gives the committee’s recommendations.

Assessment of Status

Staff planning requires a target and a clear path for reaching it. In concept, plans for individual facilities span at least 3 years to account for losses due to attrition (such as retirements, promotions, and deaths) and the period needed to select, hire, and train personnel to reach full certification, which typically ranges from 1 to 3 years. Achieving the target staffing level over time for a facility requires a good understanding of the current staffing status. ALA uses the head count of certified professional controllers (CPCs) and certified professional controllers in training (CPC-ITs) to assess each facility’s staffing status relative to the staffing range described in Chapter 3. Status is assessed as follows:

• Above range: CPC + CPC-IT is more than 10 percent above the staffing range midpoint.

• Within range: CPC + CPC-IT is within ±10 percent of the staffing range midpoint.

• Below range: CPC + CPC-IT is more than 10 percent below the staffing range midpoint.
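The three-way status rule above can be sketched in a few lines of code. This is a minimal illustration, not ALA's actual tooling: the function name `staffing_status`, its parameter names, and the treatment of a head count lying exactly 10 percent from the midpoint as "within range" are assumptions drawn from the wording of the list.

```python
def staffing_status(cpc, cpc_it, range_midpoint, tolerance=0.10):
    """Classify a facility's staffing status relative to its staffing range midpoint.

    cpc:            head count of certified professional controllers
    cpc_it:         head count of certified professional controllers in training
    range_midpoint: midpoint of the facility's staffing range
    tolerance:      fractional band around the midpoint (10 percent per the text)
    All names are hypothetical; only the +/-10 percent rule comes from the report.
    """
    if range_midpoint <= 0:
        raise ValueError("range_midpoint must be positive")
    head_count = cpc + cpc_it
    # Signed deviation of the head count from the midpoint, as a fraction.
    deviation = (head_count - range_midpoint) / range_midpoint
    if deviation > tolerance:
        return "above range"
    if deviation < -tolerance:
        return "below range"
    return "within range"
```

For example, a facility with 90 CPCs and 5 CPC-ITs against a midpoint of 100 is 5 percent below the midpoint and would be classified as within range, whereas 80 CPCs and 5 CPC-ITs (15 percent below) would be classified as below range.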