10

Designing, Conducting, and Analyzing Programs Within the Preventive Intervention Research Cycle

Commissioned papers for this chapter were prepared by H. Kraemer and K. Kraemer and by S. Fawcett and colleagues and are available as indicated in Appendix D.

Successful science benefits from cumulative progress, and the field of prevention of mental disorders is no exception. The previous chapters have detailed the progress to this point, including the diverse lessons that can be taken from other areas in health research. It is apparent from the review in Chapter 7 that an encouraging number of well-designed research programs on the reduction of risk factors associated with the onset of mental disorders do exist. The task over the next decade will be to enlarge that body of work into a prevention science by instituting rigorous standards for designing, conducting, and analyzing future preventive intervention research programs. By adhering to such standards, prevention can achieve the credibility and validity necessary for its interventions to reduce the incidence of mental disorders.

Only rigorous standards can lead to an enrichment or expansion of the knowledge base essential for prevention efforts. Outcomes from trials built on such standards can serve to refine hypotheses and concepts related to risk and protective factors. The model building and hypothesis testing inherent in prevention research can elucidate pathways taken by individuals as they move toward or away from the onset of a mental disorder, as well as intervening mechanisms and brain-behavior-environment interactions that result in mental disorders or avert their occurrence, even in individuals at very high risk. In addition, empirical validation of preventive interventions can usefully inform and broaden clinical practice. Epidemiological evidence, for example, can suggest causal factors that can best be tested in a preventive intervention research trial, which may, in turn, suggest molecular or behavioral mechanisms for further study.

THE PREVENTIVE INTERVENTION RESEARCH CYCLE

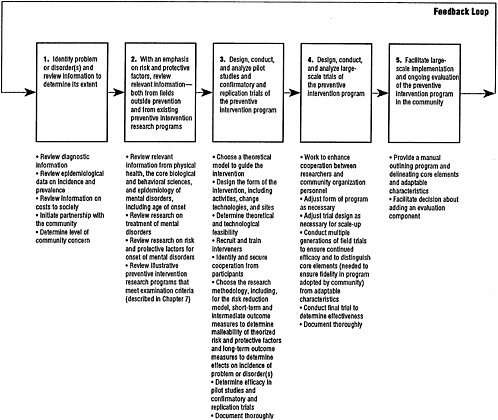

Just as the development of prevention into a science requires a series of rigorously designed research programs for its collective progress, so an individual research program requires a series of carefully planned and implemented steps for its success. Figure 10.1 presents the committee's concept of how these steps build upon one another in the preventive intervention research cycle. The process proceeds in much the same sequence as it has in the report to this point. The first step is to identify and define operationally and reliably the mental disorder(s) or problem. The second step is to consider relevant information from the core biological and behavioral sciences and from research on the treatment of mental disorders, and to review risk and protective factors associated with the onset of the disorder(s) or problem, as well as prior physical and mental disorder prevention intervention research. The investigator then embarks on designing and testing the preventive intervention, by conducting rigorous pilot studies and confirmatory and replication trials (the third step) and extending the initial positive findings in large-scale field trials (the fourth step). If the trials are successful, the researcher facilitates the dissemination and adoption of the program into community service settings (the fifth step). Most of the research programs presented as illustrations in Chapter 7 are at the third step.

Although the review processes that constitute the first and second steps in Figure 10.1 are considered to be part of the preventive intervention research cycle, the original studies in these areas, with the exception of the previous studies on the prevention of mental disorders or problems, are not. For the individual researcher, it is the activities in the third and fourth steps that constitute preventive intervention research per se. Likewise, it is not the community service program and its evaluation but the facilitation by the investigator of the program's widespread dissemination and adoption (the fifth step) that is part of the research cycle. The knowledge exchange processes that operate between the researcher and the community at this step are discussed in more detail in Chapter 11. (In this report, the term community refers not just to a community as a whole, but also to an element within a community, such as a school, health care clinic, advocacy group, or neighborhood.)

The final steps in the cycle, represented by the feedback loop, are to review the results of any subsequent epidemiological studies to determine if the prevention program actually resulted in reductions in incidence of the targeted problem or disorder(s) and to respond to community representatives regarding their research interests and suggestions for further work.

Each step in the cycle is outlined below. Sections later in the chapter present a host of issues relevant primarily to the research activities in steps three and four—including methodological issues pertaining to experimental design, sampling, measurement, and statistics and analysis, as well as documentation issues. Cultural, ethical, and economic issues that require attention throughout the cycle are also presented.

In this discussion the terms preventive intervention program and preventive intervention trial are carefully delineated. The preventive intervention program is the activity or activities that are provided to the target population (e.g., home visitation with mothers and their infants or a substance use resistance training curriculum delivered to school children by their teacher). The preventive intervention trial is the research component designed with experimental protocols to evaluate and validate the success of the intervention program. Preventive intervention research program is the inclusive term for the program plus the trials.

Identification of the Problem or Disorder(s) and Review of Information Concerning Its Extent

The first step in the preventive intervention research cycle is to identify the disorder, cluster of disorders, or problem that is to be the target of the intervention. Knowledge regarding the diagnostic criteria and course of the disorder, as well as its incidence and prevalence, can be helpful in determining whether a preventive intervention for a particular disorder is warranted. Problems that are appropriate targets for intervention can include those such as child maltreatment that are serious social problems in their own right but are also risk factors associated with the onset of mental disorders. At this step in the research cycle, the investigator also considers the personal, social, and economic costs associated with the suffering and disability resulting from the problem or disorder.

Further, because prevention research almost always touches the community in some way, even at its earliest stages, a partnership in project planning between the researcher and the community is highly desirable. Questions to ask at this point include: Is the particular problem or disorder a matter of concern within the social unit (community, school, neighborhood, or mental health service agency) where the research would be carried out? Would the community be responsive to the development of a research program to address such concerns? Giving the community a voice in defining the problem and in formulating the research program and procedures can be done in many ways, such as by having a representative from the community, perhaps a delegate from a service agency, participate with the research team on an ongoing basis (Kelly, Dassoff, Levin, Schreckengost, and Altman, 1988; Weiss, 1984; Snowden, Muñoz, and Kelley, 1979; see also the commissioned paper by Fawcett, Paine, Francisco, Richter, and Lewis, and commentaries by Gallimore and Rothman, available as indicated in Appendix D).

FIGURE 10.1 The preventive intervention research cycle. Preventive intervention research is represented in boxes three and four. Note that although information from many different fields in health research, represented in the first and second boxes, is necessary to the cycle depicted here, it is the review of this information, rather than the original studies, that is considered to be part of the preventive intervention research cycle. Likewise, for the fifth box, it is the facilitation by the investigator of the shift from research project to community service program with ongoing evaluation, rather than the service program itself, that is part of the preventive intervention research cycle. Although only one feedback loop is represented here, the exchange of knowledge among researchers and between researchers and community practitioners occurs throughout the cycle. The feedback loop demonstrates both the continuity of the cycle and the necessity to incorporate many different types of feedback into each step, including community responses, additions to the knowledge base, and ultimate effects of programs on incidence and prevalence of disorders. Cross-cutting issues regarding methodology, documentation, and cultural, ethical, and economic concerns are treated in the text.

Review of Risk and Protective Factors and Relevant Information from the Knowledge Base

Information regarding the concept of risk reduction and how it can be applied in research programs on the prevention of mental disorders can be obtained from a review of prevention programs in physical health (see Chapter 3). Knowing specifics about the predisposing biopsychosocial risk factors and environmental and personal protective factors that converge and interact to determine the onset of any mental disorder is critical for decisions that are made about the nature and targets of any preventive intervention strategy. To acquire this knowledge, the investigator can access a panoply of research disciplines, including molecular biology; behavioral, population, and molecular genetics; gene-environment interactions; neuroscience; developmental, experimental, and social psychology; sociology; behavior analysis; cognitive science; developmental psychopathology; and population and developmental epidemiology (see Chapter 4 and Chapter 5). The investigator next examines what is known regarding the relevant risk and protective factors affecting the onset of the disorder(s) or problem(s) of interest (see Chapter 6). This review provides information that will be useful later in choosing a theoretical model that specifies the mechanism or processes through which these factors have effects. In addition, the review can reveal information on sociodemographic or biological characteristics that may be helpful in targeting a population at risk, as well as identify modifiable risk or protective factors as potential targets for preventive intervention. A review of the relevant publications on prior preventive intervention research programs (see Chapter 7) is another essential step to take before designing the research program. Finally, some of the most important information about protective factors has come from research on the treatment of mental disorders (see Chapter 8). Treatments to strengthen the social support network and social competence of an individual afflicted with a mental disorder, for example, have consistently been shown to improve that person's outcome. This points toward preventive interventions to reinforce these protective factors and thereby diminish the likelihood of stress-induced initial onset of illness.

Pilot Studies and Confirmatory and Replication Trials

Once the pertinent information has been reviewed, the investigator can begin the process of designing, conducting, and analyzing the research program. Initially, a small-scale, rigorously designed pilot study is done in a carefully controlled setting, often within a community institution, to test methods and procedures. A pilot study is exploratory in nature, and many alterations in design are made. Then the investigator applies the methods and procedures that appear to be successful to a larger population in a confirmatory trial to determine the efficacy of the research program, efficacy being “the extent to which a specific intervention, procedure, regimen, or service produces a beneficial result under ideal conditions” (Last, 1988). If a research program proposes to change risk or protective factors and does so, but the targeted factors are not causal, then the program will lack efficacy, failing to prevent the mental disorder even if it succeeds in altering the risk factor. Thus a well-controlled confirmatory trial can provide relevant data to confirm or deny the causal roles of hypothesized risk and protective factors. Finally, if the results from the confirmatory trial are encouraging, the same methods and procedures are applied in a replication trial to ensure continued efficacy. The Prenatal/Early Infancy Project (Olds, Henderson, Tatelbaum, and Chamberlin, 1988, 1986), discussed in Chapter 7, is an example of a research program that is now being replicated in a new location.
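The distinction drawn above, between altering a risk factor and actually preventing the disorder, can be made concrete with a small calculation. The following Python sketch is illustrative only: the function name `compare_arms` and all follow-up counts are hypothetical, and a real confirmatory trial would add confidence intervals and significance tests. It compares the control and intervention arms on two outcomes, the targeted risk factor and onset of the disorder.

```python
def compare_arms(events_control, n_control, events_intervention, n_intervention):
    """Compare the proportion of participants with an outcome in two trial arms."""
    risk_control = events_control / n_control
    risk_intervention = events_intervention / n_intervention
    return {
        "risk_control": risk_control,
        "risk_intervention": risk_intervention,
        "risk_difference": risk_control - risk_intervention,
        # Relative risk is undefined when no control participant has the outcome.
        "relative_risk": (risk_intervention / risk_control) if risk_control > 0 else None,
    }


# Hypothetical follow-up counts, invented purely for illustration.
risk_factor = compare_arms(120, 300, 60, 300)   # risk factor still present at follow-up
disorder = compare_arms(30, 300, 29, 300)       # new onsets of the disorder

for label, result in (("risk factor", risk_factor), ("disorder onset", disorder)):
    print(f"{label}: control {result['risk_control']:.3f}, "
          f"intervention {result['risk_intervention']:.3f}, "
          f"relative risk {result['relative_risk']:.2f}")
```

In this invented pattern the program halves the prevalence of the risk factor yet leaves onset of the disorder essentially unchanged, which is the situation the text describes when a targeted factor is correlated with the disorder but not causal.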

At this third step in the cycle, the investigator faces a number of decisions. The first of these is the choice of a theoretical model to guide the preventive intervention program. With this model in place, the form of the intervention program itself can be designed. The features of the program, including such things as intervention techniques and site, are chosen here, although they may be adjusted somewhat in step four, when the program is applied in a large-scale field trial. Intervention program design issues are distinct from the methodological issues involved in designing the research component of the program, a task that is encountered both in this step and in step four and thus is discussed as a cross-cutting issue later in this chapter. When the design work is done, the processes of recruiting and training interveners and identifying and securing the cooperation of appropriate participants can begin. Then the studies or trials are conducted. Thorough documentation of all these choices and the reasons for them is essential to subsequent analysis, both in this step and in step four. This is discussed as a cross-cutting issue below.

Choosing a Theoretical Model to Guide the Intervention Program

To prevent the targeted disorder or problem, the investigator chooses a theoretical model based on the available body of knowledge that addresses one or more of the following factors:

- The presence of risk factors and absence of sufficient protective factors correlated with the disorder that may be both causal and malleable, that is, can be altered through intervention.
- The mechanisms that link the presence of risk factors and the absence of protective factors to the initial onset of symptoms (which may involve gene-environment interactions).
- The triggers that activate these mechanisms (including stressful life events, physical illness, and developmental changes).
- The processes that mediate the triggering event and the onset of symptoms.
- The processes that occur once symptoms have developed. Ideally, these processes can be attenuated through indicated preventive interventions before they cross the threshold criteria for diagnosis of the disorder.

The choice of a theoretical model stems not only from formulations of risk and protective factors, mechanisms, triggers, and processes, but also from analysis of interventions. Whether a particular theoretical approach can guide prevention strategies depends on the data supporting it. Practically, most current evidence is limited to assessment of risk and protective factors, although there is considerable speculation regarding mechanisms and triggers. Therefore, for now, basing preventive interventions on the risk reduction model, that is, on theories involving the reduction of risk factors and/or enhancement of protective factors, is the most productive strategy. This may ultimately lead to studies on incidence of disorders. No matter which theoretical model is used, the ultimate goal of reducing the incidence of mental disorders is the same.

Designing the Form of the Intervention Program

The intervention program is made up of (1) the activity or activities that are provided to the targeted population, such as an educational curriculum, supportive counseling, and child care, at a planned frequency and for a set amount of time; (2) the psychological, biobehavioral, educational, organizational, or social techniques and procedures used, sometimes called change technologies; and (3) the site in which the intervention takes place.

Theoretical and technological factors are closely intertwined and affect the choice of the intervention activities and change technologies. For example, educational interventions require teaching techniques known to work. Specific teaching techniques may work well with certain groups but not with others. If the instructors are not able to teach the participants the skills that are thought to decrease the probability of the disorder or problem, the theory cannot be tested, nor the intervention implemented. Interventions may thus have to be redesigned for different groups to address how they learn; language, educational level, cultural background, rural versus urban setting, and generational cohort will need to be considered. In addition to learning theory, intervention activities and change technologies may draw heavily on operations research, social psychology, behavioral modification technology, and a variety of other fields. They may include the use of biological-pharmacological, educational, or skills-building programs, environmental change strategies, new social policies, and regulations or laws.

A variety of questions are typically addressed at this stage in the prevention research process, such as: Is this intervention acceptable and feasible for the targeted population? Has consideration been given to ethical concerns, cultural factors, and linguistic differences? Have issues of access been addressed, including potential barriers in the host institution or community and dissemination of information regarding the availability of the intervention? In addition, questions about intervention intensity (that is, the frequency and length of intervener-participant contacts), the feasibility of administering the intervention to a group instead of individuals, and the use of special technologies such as video tapes, computer-aided learning, and specialized medical techniques are addressed at this stage.

Preventive interventions, in general, should be short enough to be practical, yet intensive and long-lasting enough to be effective. Obviously, it is best if they are not too costly, but the more relevant issue is whether the potential benefits justify the cost. With the possible exception of certain structural interventions, such as helping a participant secure a job, brief interventions usually cannot be expected to have long-term effects in preventing major disorders. Attempts to change behavior or instill certain skills and to sustain these changes over time require intensity of effort, not only from investigators, but also from participants.

Finally, it is useful to obtain information regarding how well the program and its component parts have been received. Feedback to the prevention researcher in this stage can come from the participants in the studies and trials as well as from community leaders (Krueger, 1988; Manoff, 1985).

Recruiting and Training Interveners

The choice of the interveners can be crucial to the success of the preventive intervention program. Sometimes the interveners are professionals; often they are not. Frequently, they have a natural relationship with the participant—such as being a teacher, parent, doctor, or neighbor (see Chapter 7). Careful selection, provision of initial training and ongoing supervision, payment of a salary, a reasonable workload, and involvement, as appropriate, with the interdisciplinary research team, help ensure high quality and low attrition of interveners.

Identifying and Securing Cooperation from Appropriate Participants

The researcher next decides for whom the intervention is appropriate. In general, the less expensive and the less likely to have any unintended adverse side effects the intervention is, the more widely it can be implemented (universal). As the intervention becomes more expensive, and as it becomes more potent, it becomes increasingly important for ethical as well as economic reasons to focus its implementation to reach the population most at risk (selective, then indicated). (See Chapter 2 for a discussion of population groups.) This is not to say that universal interventions are inexpensive to deliver. An intervention with even a low cost per participant becomes a large expense when delivered to thousands of participants. Nevertheless, these delivery costs may be more than offset by the savings realized when disorders are prevented, especially if an entire lifetime of disability and expensive treatment can be avoided.
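The trade-off between delivery costs and the savings from prevented disorders can be illustrated with simple arithmetic. The Python sketch below is a back-of-the-envelope illustration and not an economic evaluation: every figure (number of participants, cost per participant, baseline incidence, relative risk reduction, and cost per case) is a hypothetical assumption, and discounting, indirect costs, and uncertainty are ignored.

```python
def program_balance(n_participants, cost_per_participant,
                    baseline_incidence, relative_risk_reduction, cost_per_case):
    """Return delivery cost, expected cases prevented, savings, and net savings."""
    delivery_cost = n_participants * cost_per_participant
    # Expected cases without the program, scaled by the assumed risk reduction.
    cases_prevented = n_participants * baseline_incidence * relative_risk_reduction
    savings = cases_prevented * cost_per_case
    return {
        "delivery_cost": delivery_cost,
        "cases_prevented": cases_prevented,
        "savings": savings,
        "net_savings": savings - delivery_cost,
    }


def break_even_risk_reduction(cost_per_participant, baseline_incidence, cost_per_case):
    """Smallest relative risk reduction at which savings equal delivery cost."""
    return cost_per_participant / (baseline_incidence * cost_per_case)


# All inputs are hypothetical values chosen only to show the calculation.
universal = program_balance(
    n_participants=10_000,
    cost_per_participant=50.0,
    baseline_incidence=0.05,
    relative_risk_reduction=0.20,
    cost_per_case=20_000.0,
)
threshold = break_even_risk_reduction(50.0, 0.05, 20_000.0)

print(f"Delivery cost: {universal['delivery_cost']:,.0f}")
print(f"Expected cases prevented: {universal['cases_prevented']:.0f}")
print(f"Net savings: {universal['net_savings']:,.0f}")
print(f"Break-even relative risk reduction: {threshold:.1%}")
```

Under these assumed figures, a low per-participant cost still adds up to a large delivery bill, but the program pays for itself once it prevents a modest share of expected cases. The same function can be rerun with a higher baseline incidence and a higher per-participant cost to mimic a selective or indicated intervention.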

One crucial element in identifying appropriate participants is the current understanding of the nature of the problem or disorder (reviewed in steps one and two of the research cycle), in part because individuals who already have the disorder in question must be excluded from the preventive intervention and individuals who are at especially high risk should be included. For most mental disorders, genetic predispositions have only a probabilistic influence on the manifestation of the illness. The onset of a disorder often depends on the nature of the interaction between genetic predisposition and environment. Therefore, if genes related to mental disorders are eventually identified, individuals with these genes may be particularly appropriate participants in prevention trials for indicated interventions.

Another crucial element is information about who in the population is at risk for the disorder or problem. This information comes not only from risk studies but also from treatment research (reviewed in step two). For example, a high incidence of a particular disorder within a population group identified by age, gender, or culture provides clues about whom to target. Finally, a knowledge of the developmental periods of risk and the ages of onset (from epidemiological studies reviewed in step two) is also valuable for decisions regarding when to intervene.

The investigator next develops a plan to successfully engage the targeted participants. These participants, by definition, do not have a problem that they are necessarily motivated to cure or relieve. There is no way of ascertaining whether any one individual in an at-risk group will develop the disorder if the intervention is not received. Therefore potential participants may not be willing to participate. Influential members of the community can often help by providing access to the targeted group and gaining their cooperation. The investigator can then inform the potential participants not only about any risks involved, but also about how the intervention may be useful to them. Incentives for participation, such as payment for interviews, video tapes of children, printed educational materials, and free transportation, are often presented at this time.

Noncompliance and attrition are major issues in prevention research programs. The intervention potentially can have its largest effects on participants who are receptive to its aims, participate in all intervention sessions, follow through on requests, and continue with the program until it is completed. But participants who do not comply may be those at the highest risk. Efforts to promote compliance are essential to well-designed interventions. One way to sustain participation is to shape the intervention so it is sensitive to the local culture and customs of the targeted group. For example, it is useful to uncover the targeted population's daily routine (including their daily tasks, their values and goals, and their culturally prescribed rules, norms, and scripts) as well as the motives, feelings, and meanings they may associate with the intervention (Gallimore, Goldenberg, and Weisner, in press; O'Donnell and Tharp, 1990). Making participation easy by crafting interventions congruent with these elements, and relevant to people's lives, will increase participation.

Large-Scale Field Trials

Large-scale field trials offer an opportunity to expand preventive intervention programs found to be efficacious in initial confirmatory and replication trials to large-scale field conditions. Here also, the benefits and costs of the intervention can be more realistically assessed. These trials help to assess the generality of the efficacy of the program with different personnel, participants, settings, cultures, and conditions. A large amount of research in the field of social innovation and organizational change has addressed these questions. Such trials may require involvement with community service agencies or organizations of various kinds, including social service agencies, mental health clinics, primary health care clinics, schools, and day care centers (all here referred to for convenience as organizations), and will definitely require the involvement of many more interveners. Therefore the investigator, although still theoretically in charge, can lose some control over the fidelity of the implementation unless considerable attention is paid to the details regarding the delivery of the intervention and the recording of data.

Experience tells us that research in naturalistic settings can be beset with complications and failure (Hiltz, 1974). The changes in personnel at this point are often the crucial element. Poor communication and operational tensions between researchers and the organization's personnel are common (Hood, 1990). These problems can lead to certain unwelcome results: personnel may fail to follow through in filling out forms or keeping records, may slow down the work, may provide false or misleading information, may circumvent established procedures, and may even sabotage or move to terminate the project (Hiltz, 1974). Furthermore, researchers are not ordinarily trained to deal with the interorganizational and interpersonal complexities of large-scale field trials.

Projects involving collaborative work between researchers in universities and institutes, on the one hand, and organizational personnel, on the other, can be brought into focus through the lens of interorganizational theory. Hasenfeld and Furman (in press) have specifically suggested the use of the following principles to facilitate these research relationships:

- the problem and purpose should be manifest for both parties from the start,
- the benefits for each party and the reasons for participating should be clear, and
- the expectations and costs should be explicit.

In addition, a more satisfactory exchange may occur at this step if a community representative was involved earlier. Such a process helps to protect the community interests and engenders commitment for participation in the field trials. Rossi (1977) pointed out, in addition, that results of projects are unpredictable and often equivocal and can result in disappointment or bitterness within the community organization. Organizational personnel typically are skeptical at the beginning, but once work is in progress they may develop high and unrealistic expectations about results, especially when their investment of time and effort is substantial. Taking time initially to jointly establish feasible objectives for both entities can minimize this kind of dissonance.

The researcher can enhance the prospects for a cooperative relationship by selecting a compatible organization whose concerns and activities are conducive to those involved in empirical study (Alkin, 1985). Good matches can be found in organizations that

- are open to innovation,
- have been involved in research before,
- train students,
- use information and research regularly in decision making,
- encourage staff to take courses (providing released time or tuition support), and
- include a number of staff who teach or have taught courses.

Personnel are then given information on research objectives and methods. Training sessions can include orientation meetings, workshops, special seminars, and informal discussions.

Interorganizational collaboration is also a consideration in the selection of the investigator's own staff. Shadish, Cook, and Leviton (1991) have proposed a set of attributes to look for in recruiting such individuals, including the ability to function in complex, uncertain and ambiguous situations, a programmatic leaning, negotiation and communication skills, flexibility, and the ability to respond rapidly to requests.

Additional guidelines to aid in the shaping of productive interorganizational exchange can be derived from the work of a team of experienced intervention researchers (Schilling, Schinke, Kirkham, Meltzer, and Norelius, 1988). They advise that researchers

- approach and orient the organization at least six months in advance,
- invite suggestions from the organization on research objectives and procedures,
- gear operations, if possible, to tangibly benefit the organization's program,
- make procedures compatible with organizational processes,
- specify costs to the organization openly and clearly,
- indicate personnel time demands, client risk, and potential liability,
- provide ongoing recognition to personnel for effort and accomplishments,
- provide ongoing feedback through progress reports, and
- help implement intervention products in the organizational setting.

Even for the most carefully designed preventive intervention, a single randomized field trial is not likely to result in an innovation prototype ready for large-scale implementation in the community. Furthermore, critical research questions concerning such issues as the plausibility of causal hypotheses regarding the role of risk or protective factors and the assessment of subsequent rates of disorder may require multiple generations of preventive trials. Both multiple generations and multiple sites may be required to determine the “active ingredients” in the intervention, that is, to distinguish the core elements, which must be included to ensure fidelity when a program is adopted by a community, from the less essential features, or adaptable characteristics (see Chapter 11 for further discussion of this critical issue).

After efficacy has been established in large-scale field trials, a final trial is needed to determine the program's effectiveness (as distinct from its efficacy), that is, “the extent to which a specific intervention, procedure, regimen, or service, when deployed in the field, [emphasis added] does what it is intended to do for a defined population” (Last, 1988). For this trial the investigator turns the carefully tuned intervention program over to the organization that hopes to run it, but leaves the research component in place. This stage in the research cycle is frequently not achieved, but the Centers for Disease Control and Prevention is currently planning to test the Infant Health and Development Program (see Chapter 7 and program abstract, available as indicated in Appendix D) for effectiveness in field trials. Convincing documentation of the program's effectiveness (its efficacy already having been established) would be likely to lead to widespread dissemination of the program.

Facilitation of Large-Scale Implementation of the Preventive Intervention Program in the Community

When researchers and community organizations work together at all stages of the program, they can avoid the problem of “manifest” but not “true” adoption of an innovative preventive intervention. Rappaport, Seidman, and Davidson (1979) have shown what can happen when a community “adopts” an intervention program shown by research to be efficacious and effective, but modified by community organizations in a manner that produces unexpected negative consequences for the recipients. This need not happen, but ensuring a positive large-scale implementation effort requires considerable knowledge and attentiveness to the concepts of core elements and adaptable characteristics. At this step, the investigator can provide a manual describing the program to guide implementation. The investigator can also facilitate a decision by the organization to include an ongoing evaluation component in the program. (See Chapter 11 for a description of other ways to facilitate the exchange of knowledge between investigator and community organization.)

METHODOLOGICAL ISSUES

It is essential to delineate explicitly the goals at the outset of designing the research component of a preventive intervention research program. For example, is the goal to reduce an occupational, social, educational, family, or personal risk factor—such as child abuse, marital stress, unemployment, or aggressive behavior? Is it also to enhance protective factors? Is the goal to intervene with mechanisms, triggers, and processes related to the onset of disorder? In addition to these goals, the ultimate goal of preventing or delaying the development of a full-blown mental disorder(s) should be explicitly stated even though at this stage that may not be the goal of the preventive intervention itself.

The goals influence the types of research methodology that will be used, as well as the answers to methodological questions encountered in steps three and four of the preventive intervention research cycle. Questions concerning the structure and duration of the trial and follow-up period, sampling, measurement, and statistics and analysis are considered here, including: What are the characteristics of the population to be used in sampling? How large should the sample be? What are the methods to be used to produce and measure changes in the targeted risk and protective factors in the population? What are the methods to be used to measure changes over time in the incidence of the targeted disorder(s) or problem?

Structure and Duration of the Trial and Follow-up Period

Structure of the Trial

The randomized controlled trial, in which members of a population are randomly allocated into experimental and control groups, usually is the preferred experimental design in research studies, and it provides the most rigorous means for hypothesis testing available in preventive intervention trials as well. Random assignment helps to ensure that the participants' responses are unbiased estimates of what the average responses would have been if all members of the population could have been assigned to one of the two groups. Frequently, when trials are designed, there are conceptual or hypothesis-driven reasons to include more than one intervention for evaluation. Hence a particular prevention trial may have more than one experimental group, and therefore random assignment is made to multiple groups.

Randomized control groups are particularly important in selective and indicated prevention trials, especially if the targeted groups have been chosen carefully enough to ensure that they are at very high risk. In such trials the interventions are working against the probabilities associated with the “natural” course of the pathological process. If this course is increasingly negative, the results of the intervention can appear to be an increase in problems that did not exist before the intervention. On the other hand, some problems are self-limiting, and positive results may be due to the passage of time rather than the intervention. A finding of lower incidence of disorder in the experimental group as compared to higher incidence in the control group is the best way of documenting the effect of a preventive intervention.

Although randomized controlled trials remain the optimal design for preventive intervention trials, quasi-experimental time series designs can sometimes permit investigators to capitalize on policy or regulatory changes and conduct natural experiments in the real world, as, for example, with the Intervention Campaign Against Bully-Victim Problems (Olweus, 1991) reviewed in Chapter 7. Campbell (1991) has described a number of policy-oriented interventions that can be analyzed by using interrupted time series and regression discontinuity analyses.

The logic of the interrupted time series analysis is relatively straightforward. The independent variable, in this case the preventive intervention, is expected to produce a change in the group under observation. The intervention “interrupts” a series of baseline observations at a specified point. If the intervention does indeed have an effect, the time series preceding it should differ from the time series subsequent to it. In a treatment study, for example, Liberman and Eckman (1981) used an interrupted time series design to assess the impact of two brief inpatient interventions on suicide ideation and attempts. Both treatments markedly reduced suicide attempts when two years following intervention were compared with two years prior to hospitalization. Such simple quasi-experimental time series designs cannot rule out alternative explanations for a change in the variable of interest. Thus it is more desirable to use controlled, experimental forms of time series, such as multiple baseline, multiple schedule, reversal, withdrawal, and multi-element designs (Barlow and Hersen, 1973) or to combine time series with nonequivalent control group designs, as Cook and Campbell (1979) suggest.
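The logic of the simple interrupted time series can be sketched in a few lines of code. The example below is only an illustration under stated assumptions: the monthly counts are hypothetical, observations are assumed to be equally spaced, and the function names (`fit_line`, `interruption_effect`) are invented for this sketch. It fits a straight line to the baseline observations and reports how far, on average, the post-intervention observations fall from the projection of that line.

```python
from statistics import mean

def fit_line(xs, ys):
    """Ordinary least-squares line through (xs, ys); returns (slope, intercept)."""
    x_bar, y_bar = mean(xs), mean(ys)
    sxx = sum((x - x_bar) ** 2 for x in xs)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, y_bar - slope * x_bar

def interruption_effect(series, start):
    """Mean gap between post-intervention values and the projected baseline trend.

    series : observations at equally spaced times 0, 1, 2, ...
    start  : index of the first post-intervention observation
    """
    slope, intercept = fit_line(list(range(start)), series[:start])
    gaps = [series[t] - (intercept + slope * t) for t in range(start, len(series))]
    return mean(gaps)

# Hypothetical monthly counts: a baseline near 20, then a drop after month 6.
counts = [20, 21, 19, 20, 21, 19, 14, 13, 15, 14]
effect = interruption_effect(counts, start=6)  # negative: below the baseline trend
```

A negative value indicates that the series fell below its projected baseline after the interruption. As the text cautions, such a gap alone cannot rule out alternative explanations, which is why controlled forms of time series are preferred.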

Obviously, one cannot have as much confidence in the results of quasi-experimental designs as in those of true experiments. Therefore quasi-experimental designs should be used only when it is not possible to randomize. An important problem with nonequivalent control group designs is that researchers simply may not be able to create control groups that are similar enough to the experimental group.

Several other problems severely restrict the conclusions that can be drawn equally from both true experiments and quasi-experiments. Consider, for example, instances in which, despite efforts by the research team, participants in a control group know that they are not receiving the desired intervention. The control group, as an “underdog,” may be motivated to reduce or reverse the expected effect of the intervention (Cook and Campbell, 1979), the so-called “John Henry effect.” On the other hand, members of a control group may be demoralized in knowing that they are not receiving the desired intervention, and there may be a decrement in their performance. Because control groups are often intact groups that may interact with one another, such as students in a particular classroom or residents of a particular block or neighborhood, their proximity increases the likelihood that they will act in concert or develop similar perceptions of the experiment.

Duration of the Trial and Follow-up

The length of the intervention as described above (short enough to be practical and yet long enough to be effective) governs the length of the preventive intervention trial as well. In addition, because a decrease in the incidence of a disorder is the major long-term goal, participants should be followed longitudinally in prospective designs. Therefore follow-up periods can be quite lengthy, but this is a complex issue. The longer the duration of follow-up, the greater may be the power (that is, the statistical capacity to demonstrate a significant result) to detect the efficacy of the program in showing short-term as well as long-term positive effects. This is not, however, necessarily so. If multiple factors are involved in the onset of a disorder, lengthy follow-up provides more opportunity for uncontrolled factors to influence outcome.

Long time frames also may be necessary in order to get beyond the age of risk of onset. Preventive intervention trials cannot prove that a particular disorder has been permanently prevented; they can only provide evidence that the onset of the disorder has been delayed for as long as the trial proceeds. A participant still free of the targeted disorder at the end of the trial's follow-up period may have the onset of the disorder one day later, unless the trial has followed the participant completely through the age at risk. For many mental disorders, however, risk of onset continues through the life span.

The longer the trial and follow-up, however, the greater the cost and difficulty, and the longer the delay in obtaining answers to the research questions. Consideration of cost, of course, plays a crucial part in the setting of the follow-up time. Funding sources are understandably reluctant to fund research programs that will take 10 to 20 years to produce results. But the current practice of short-term support is especially limiting in regard to research on prevention of mental disorders.

Lengthy follow-up periods that delay the reporting of results have disadvantages in terms of scientific practice as well. As time goes on, the importance attached to certain research questions changes. Also changing are the methods of measurement, diagnostic criteria, and other issues that must be taken into consideration. Therefore a balance must be found between the gain in power and precision resulting from long-term follow-up and the loss in relevance and quality of content or substance that may be incurred. This also suggests that data should be kept on symptoms and behaviors because the definition of disorders is subject to change.

The practical limitations placed on the duration of a preventive intervention trial and follow-up in part can be dealt with by timing the implementation carefully. Selecting participants who are moving into their period of highest risk for the onset of the disorder, a period of critical developmental challenge and maturation, or a period of high responsiveness to protective effects, permits detection of effects that are sufficiently large and immediate.

Sampling

The choice of the sample from the targeted population has methodological repercussions. The major problem in using selective and indicated preventive interventions is the identification of the high-risk group. Obviously, it is critical that the definition of high risk be a valid one. The sensitivity and specificity of screening tests are used in the determination of risk status. Sensitivity is the proportion of truly diseased persons in the screened population who are identified as diseased by the screening test. Specificity is the proportion of truly nondiseased persons who are so identified by the screening test. Sensitivity and specificity of identification criteria tend to “see-saw”; that is, the cost of having high specificity is usually low sensitivity. It is possible to develop criteria with high sensitivity, but the result will be a far looser definition of what constitutes high risk.
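The two definitions, and the “see-saw” between them, can be made concrete with a short computation. The sketch below uses hypothetical screening scores and a hypothetical rule (screen positive when the score is at or above a cutoff); neither comes from any particular instrument.

```python
def sensitivity_specificity(scores, has_disorder, cutoff):
    """Screen positive when score >= cutoff; return (sensitivity, specificity)."""
    pairs = list(zip(scores, has_disorder))
    tp = sum(1 for s, d in pairs if d and s >= cutoff)       # true positives
    fn = sum(1 for s, d in pairs if d and s < cutoff)        # false negatives
    tn = sum(1 for s, d in pairs if not d and s < cutoff)    # true negatives
    fp = sum(1 for s, d in pairs if not d and s >= cutoff)   # false positives
    return tp / (tp + fn), tn / (tn + fp)

# Hypothetical screening scores and true status for ten persons.
scores       = [2, 3, 4, 5, 5, 6, 7, 8, 8, 9]
has_disorder = [False, False, False, False, True, False, True, False, True, True]

strict = sensitivity_specificity(scores, has_disorder, cutoff=8)  # (0.5, 5/6)
loose  = sensitivity_specificity(scores, has_disorder, cutoff=5)  # (1.0, 0.5)
```

In this toy example, lowering the cutoff raises sensitivity from 0.5 to 1.0 while specificity falls from about 0.83 to 0.5, which is the looser definition of high risk that the text describes.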

It is useful to obtain samples that span the full range of gender and culture among individuals at risk. Since passage of the National Institutes of Health Revitalization Act of 1993, such representative samples are legally required. A trial of a preventive intervention that excludes women produces results that do not necessarily generalize to women; one that excludes minorities may yield results that do not necessarily generalize to minorities. The principle is clear: If one excludes any group from a trial, the results of that trial cannot be assumed to generalize to that group. For this reason, in implementing indicated interventions in which there are stringent inclusion and exclusion criteria, the effects of these restrictions on the generalizability of the results of the trial to the population at large must be carefully considered. In locations that include large segments of non-English-speaking individuals, studies must include assessment and interventions in the appropriate language (Muñoz and Ying, 1993; Maccoby and Alexander, 1979).

The use of a universal population presents methodological problems of a different sort. Such a population is typically diverse and may include participants who are not receptive to the program for a variety of reasons. The heterogeneity, combined with the low incidence rates of the disorder likely in such a population, creates a situation in which very large sample sizes are necessary to detect any indication of efficacy or effectiveness. Because the effects may seem quite small, the clinical or policy significance of the prevention program may be underestimated. Uniform implementation of both intervention and measurement protocols in universal interventions across entire communities may be difficult. Such problems reduce statistical power, either by increasing the heterogeneity of response or decreasing the reliability of the response measures.
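The effect of low incidence on required sample size can be illustrated with the standard normal-approximation formula for comparing two proportions. This is a rough planning sketch, not a substitute for a formal power analysis; the incidence rates below are hypothetical, and the function name `n_per_group` is invented for the illustration.

```python
from math import ceil
from statistics import NormalDist

def n_per_group(p_control, p_intervention, alpha=0.05, power=0.80):
    """Approximate participants needed per group to detect a difference
    between two incidence rates (two-sided test, normal approximation)."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance = p_control * (1 - p_control) + p_intervention * (1 - p_intervention)
    return ceil((z_alpha + z_beta) ** 2 * variance / (p_control - p_intervention) ** 2)

# Hypothetical rates: the intervention halves incidence in both populations.
universal = n_per_group(0.02, 0.01)   # low base rate: roughly 2,300 per group
high_risk = n_per_group(0.20, 0.10)   # high base rate: roughly 200 per group
```

Under these assumed rates, the same proportional reduction in incidence requires more than ten times as many participants in the low-incidence population.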

For a trial using a universal population, the investigator can plan secondary analyses to focus on those subgroups likely to be most at risk and most receptive to the intervention, in order to generate results comparable to those that would be obtained with the selective or indicated populations. Thus the investigator can have both generalizability of results and the possibility of specific a priori subgroup analyses of special interest. The costs and difficulties of such studies are, however, often substantial.

Another method for analyzing subgroups is stratification, that is, the separation of a sample into several subsamples, sometimes along a continuum, according to specified criteria such as age or level of education. Stratification can be useful when factors measurable at baseline, that is, at the beginning of the intervention, are believed to correlate strongly with onset of the disorder or problem. In such a situation, greater power can be achieved when samples are stratified before randomization (thus creating subsamples) and participants from the subsamples are randomized to the intervention and control groups.
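Stratification before randomization can be sketched as follows. The participant identifiers, the two risk strata, and the function name `stratified_assignment` are hypothetical; the sketch simply shuffles participants within each stratum and alternates arm labels, so that the arms stay balanced (to within one participant) inside every stratum.

```python
import random
from collections import defaultdict

def stratified_assignment(participants, stratum_of,
                          arms=("intervention", "control"), seed=0):
    """Randomize within strata defined by a baseline characteristic."""
    rng = random.Random(seed)            # fixed seed only to make the sketch repeatable
    strata = defaultdict(list)
    for p in participants:
        strata[stratum_of(p)].append(p)  # group participants by baseline stratum
    assignment = {}
    for members in strata.values():
        rng.shuffle(members)             # random order within the stratum
        for i, p in enumerate(members):
            assignment[p] = arms[i % len(arms)]  # alternate arms down the shuffled list
    return assignment

# Hypothetical baseline classification of twelve participants.
baseline_risk = {f"P{i:02d}": ("high" if i % 3 == 0 else "low") for i in range(12)}
assignment = stratified_assignment(list(baseline_risk), baseline_risk.get)
```

Because assignment alternates within each shuffled stratum, the four high-risk participants split two and two, and the eight low-risk participants split four and four, whatever the seed.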

If there are certain subgroups of the population of special interest, naturalistic sampling will likely yield too small a sample size to draw definitive conclusions that can be generalized to each subgroup separately. To answer questions about subgroups adequately, the investigator should ensure that there is adequate power through oversampling of these groups. Alternatively, it is often desirable to design a separate trial for each subgroup with a sample size adequate to answer the questions.

Measurement

Although the processes of focusing attention on the questions that require answers, selecting the appropriate measures (and thus excluding the rest), deciding when and how often to measure, choosing the best measurement techniques or instruments, and taking steps to ensure the reliability and validity of the selected measures are perhaps the most tedious and difficult parts of a trial, these are also among the most essential procedures in determining its success or failure.

What to Measure

Careful selection of primary outcome measures is essential to the success of a preventive intervention trial. These are usually the measures of changes in the theorized mediating variables, including risk and protective factors, that are assumed to be responsible for the reduction in risk. They may be psychological outcomes such as measures of precursor signs or symptoms, social outcomes such as reduction of poverty, or biological outcomes such as reduction of the incidence of low birthweight. Finally, in the prevention of mental disorders, it is particularly desirable for programs explicitly to include measures of the incidence of mental disorders. One benefit of including incidence measures is that they may reveal that a risk factor that was found to be malleable was not causal, thus contributing to the knowledge base about etiology.

Evidence of risk reduction is used as the primary outcome measure, that is, the measure used most often to document the results of the trial, in part because it is available first. For example, in the Perry Preschool Program, a selective preventive intervention with preschoolers that was intended to increase their intellectual and social development (see Chapter 7), the long-term results, including measures of such factors as how long the children stayed in school, took many years to be documented. However, short-term measures of behavioral problems, such as lower rates of aggression, lying, and stealing, served as early evidence of the success of the intervention. Documentation of the changes in risk and protective factors for the child could have been extended for a more complete picture of mediating variables. Although disorder incidence measures were not included in the Perry Preschool Program, it is one of the few research programs to have included long-term outcome measures of any sort.

Measures of process are also appropriate. Because prevention programs typically have many components, it can be difficult to determine which one accounts for the success of the intervention. However, it may be possible to examine, on a more exploratory level, the impact of the different components by gathering data that are descriptors of process. Measures of process are selected to reflect certain characteristics of the participants, program, activities, change technologies, and so on, and of the interaction of these, that might help to generate hypotheses as to why and how the program might work. For example, what is it that the intervener actually said and did during home visits, or how do teachers respond to participants' aggressive outbursts at school? This set of measures can include consideration of risks, costs, inconvenience, and dosage effects. Measures that reflect different theoretical models can be chosen to elucidate most salient elements of the intervention.

Measures of compliance are another type of process measure. Participants at the outset of a preventive intervention trial may simply refuse to participate. If they agree to participate, they may not comply, in whole or in part, with the procedures and activities of the prevention program. It is useful to document the extent of compliance of individual participants throughout the trial. If the program does not prove to be efficacious, it is important to gain insights as to why participants did not comply, as a basis for consideration in the design of future prevention efforts.

A primary goal of a trial is efficacy, but process measures may provide information that can be useful in improving the design of a subsequent prevention effort. Furthermore, such data provide documentation of the fidelity of the program, that is, the extent to which the components of the program as designed were actually delivered and received by the participants.

When and How Often to Measure

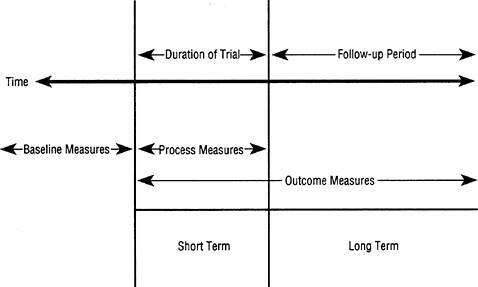

Random assignment does not yield groups that are identical on all baseline variables. Therefore an extensive collection of baseline information, including targeting variables, is necessary. Without this, the investigator's ability to draw firm conclusions about what would have happened in the experimental group in the absence of the intervention is compromised. The baseline information is also needed to determine eligibility for the program, to ensure that the elements in the prevention program are not already in place in the participants' environment before the experiment, to describe the population to which the results might be expected to generalize, to document the success of the randomization procedures, and, in secondary analysis, to detect those subgroups for which there is differential outcome. If there are dropouts or missing data, baseline information is also necessary to investigate the possibilities of resulting sampling bias. Figure 10.2 shows the points on the time line for the trial and follow-up at which the baseline and other measures are taken.

After the baseline assessment, the greater the frequency of observation, the more precise the measurement of onset and course. Outcome measures on each participant should be taken frequently enough to determine the timing of short-term effects. Long-term outcome measures taken at follow-up to determine incidence should be continued past the mean age of onset for the disorder. Frequent follow-up can bind the participants more closely to the program and promote receptivity and compliance. However, too great a frequency of observation (particularly when the assessments are difficult, long, tiresome, stressful, or invasive) may annoy the participants and produce the opposite effect. The quality of information (validity and reliability) may suffer; dropouts may increase. Clearly, some balance must be achieved.

FIGURE 10.2 Measurement points along the time line for the intervention trial and follow-up period. The total length of time varies according to disorder targeted (to get past mean age of onset) and how closely the program is tied to age of highest risk.

Which Measuring Techniques and Instruments to Use

The selection of outcome measures for use at baseline, over the short term, and during follow-up includes consideration of the relative value and use of continuous (that is, a scaled or dimensional response) measures and the more usual categorical (that is, a number of nonhierarchical responses) measures. Almost always, a variable can be measured with either technique, but the yield is different. When they are appropriate, continuous measures can increase statistical power, but the crucial issue in deciding on the outcome measures in a trial of a prevention program is that of selecting the most valid and reliable measures available.

The most likely strategy to detect effects and to be cost-effective includes both categorical and continuous measures. For example, the intervention may produce a modest reduction in the incidence of new cases that meet the designated diagnostic criteria for a particular disorder or in the existence of specific risk factors (categorical measures), but may produce a very great reduction or attenuation in the severity and duration of risk factors, including precursor symptoms (continuous measures). Also, even if the intervention failed to prevent the onset of a disorder, it might reduce the severity, duration, or disability of the disorder (continuous measures). An example from infectious disease may illuminate this point. If an antibiotic taken prophylactically to prevent traveler's diarrhea produced only a modest reduction in the frequency or incidence of a threshold for diagnosing the enteritis (e.g., presence of bacteria in stool with at least one episode of diarrhea with or without abdominal discomfort), but produced a very large reduction in the severity of the diarrhea (i.e., reduced frequency and amount of loose stools as well as reduced frequency and severity of abdominal discomfort), then the verdict of that prevention trial might be that the antibiotic was indeed useful in prophylaxis. A similar argument could be made for the effectiveness of fluoridated water in preventing the number and severity of caries; the effectiveness of a cognitive behavior therapy preventive intervention on the depth, duration, and disability of major depressions; and the effectiveness of family- and school-based educational and skills training programs with children at risk for conduct disorder on the incidence and severity of subsequent delinquency, substance abuse, and antisocial behavior.

Whether the chosen measures are categorical or continuous, they should display high internal consistency and construct validity based on earlier psychometric analyses and research as well as high reliability with different assessors. With the advent of the DSM-III and DSM-III-R, certain comprehensive diagnostic instruments that can elicit all the signs and symptoms of mental disorders have come into general use and provide a means for improving the reliability and replicability of diagnosis. Diagnostic interviews such as the Diagnostic Interview Schedule (DIS), the Present State Examination, the Schedules for Clinical Assessment in Neuropsychiatry (SCAN), and the Structured Clinical Interview for DSM-III-R (SCID) (all of which can provide continuous and categorical measures) can improve the detection of symptoms. They also lead to operational criteria for improving the accuracy of rating the presence or absence of symptoms or disorders. When diagnosticians are trained in the use of these structured instruments, they become more consistent, systematic, and precise—thereby enhancing the reliability, validity, and power of the preventive intervention trial. In addition, the work groups responsible for producing DSM-IV and ICD-10 have purposely interacted in their development of diagnostic criteria, and these classification systems are coming closer together.

How Many Measures and Instruments to Use

Measures should be carefully chosen and relatively independent. Every variable measured yields both signal and noise. Multiple noisy measures (unreliable measures) of the same signal used separately add no signal to the system, only noise. The signal is merely repeated along with the noise. For this reason, in any set of highly correlated measures, the investigator should either select the best and delete the rest or combine them into one measure. This strategy not only reduces the number of analyses, thus diminishing the risk of false positive results, but also diminishes the risk of false negative results, because combining multiple measures of the same signal frequently results in “tuning out” much of the noise and thus “tuning in” the signal, resulting in a combined measure that is more reliable.
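The gain in reliability from combining measures can be quantified under a simplifying assumption. If the measures are “parallel” in the classical test theory sense (each reflects the same signal with independent noise of equal variance, an assumption that real instruments meet only approximately), the Spearman-Brown formula gives the reliability of their average. The function name below is invented for this sketch.

```python
def composite_reliability(single_reliability, k):
    """Spearman-Brown formula: reliability of the mean of k parallel measures,
    each with the given individual reliability."""
    r = single_reliability
    return k * r / (1 + (k - 1) * r)

# Four parallel measures, each with reliability 0.5, averaged into one score.
combined = composite_reliability(0.5, 4)   # 0.8
```

Under this assumption, four measures that are each only half signal yield a combined measure that is four-fifths signal, whereas analyzing the four separately would leave each analysis at the original reliability.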

Having many measurements and diagnostic assessments may compromise the quality control of the measurements. When there are only a few crucial measurements on which the success of a trial depends, the investigator can spend a great deal of time and effort to select the best instruments, provide adequate training and orientation to the assessors, and institute adequate quality control procedures. But if hundreds of variables are collected, expedient measures of limited validity become a temptation, and the consistency and care in assessing each variable may be compromised. The fatigue of both participants and assessors can further impair the quality of measurements. What is sometimes called a “rich” data set that contains a large number of variables may, on closer inspection, be rich only in noise, not in signal.

Very large data sets require more staff effort to maintain and analyze. A large number of variables will not be helpful if key variables that will reflect the hypotheses are inadequately measured. Investment in quality control of key variables that includes error checking, detection, and correction procedures is critical to achieve a valid result.

How to Ensure Reliability and Validity of Measures

Reliability “refers to the degree to which the results obtained by a measurement procedure can be replicated,” and validity “is an expression of the degree to which a measurement measures what it purports to measure” (Last, 1988). Seldom do diagnostic procedures in any area of medicine have a reliability coefficient above 80 percent. Many diagnostic procedures in common use have reliability coefficients between 40 and 60 percent. The issue of reliability of diagnosis in psychiatry has certainly received far more attention than has reliability of diagnosis in most other fields of medicine. But the principle remains: unreliability tends to attenuate power, necessitating larger sample sizes (Kraemer, 1979); therefore, it is especially important to develop reliable methods.
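The way unreliability attenuates power can be sketched with a standard classical test theory result, used here as an assumption rather than as the specific derivation in the cited work: measurement error inflates the observed variance, so a standardized group difference shrinks by the square root of the reliability, and the sample size needed for the same power grows by the reciprocal of the reliability. The function names and numbers are hypothetical.

```python
from math import ceil, sqrt

def attenuated_effect_size(true_effect_size, reliability):
    """Standardized effect observable with an imperfect measure
    (classical test theory: observed SD = true SD / sqrt(reliability))."""
    return true_effect_size * sqrt(reliability)

def inflated_sample_size(n_with_perfect_measure, reliability):
    """Sample size needed to keep the same power, since n scales with 1 / effect**2."""
    return ceil(n_with_perfect_measure / reliability)

observed = attenuated_effect_size(0.5, reliability=0.64)   # about 0.4
needed = inflated_sample_size(100, reliability=0.64)       # 157 instead of 100
```

On these assumptions, a measure with reliability 0.64 requires more than half again as many participants as a perfectly reliable one.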

A common error made in addressing the issue of validity is to collect many poor measurements in the hope that these will somehow make up for the absence of one highly valid measure. But multiple poor measurements that do not accurately assess the construct of interest can lead to false positive or false negative results. To add to the confusion, multiple outcome measures can produce contradictory findings, making it impossible to draw any conclusions at all. In addition, lack of sensitivity to cultural variations in meaning can confound the validity of measurement; for example, among the Navajo the concept of “home” includes the extended family, whereas in the mainstream culture it is restricted to the nuclear family. If a measure includes only nuclear family members, it will miss an essential part of Navajo life and therefore be less valid.

To ensure validity, outcome measures ideally should be assessed “blind” to the group to which the participants have been assigned. Measurement and assessment procedures that include any subjective component may be affected by the assessor's knowledge of group membership. Thus a certain response pattern, when observed in a participant known to be in the experimental group, may be assessed differently from the same response pattern observed in a participant known to be in the control group. This phenomenon introduces measurement bias and compromises the validity of the results. A quality control check on raters' blindness can be done by administering a questionnaire to raters at several times during the prevention trial, asking them to make guesses about the assignment of the participants.
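One simple way to summarize such a questionnaire is to compare the raters' guesses with the actual assignments and ask whether the proportion correct exceeds what guessing would produce. The sketch below assumes two equally sized arms, so that chance is 0.5, and uses an exact one-sided binomial test; the data and the function name `blinding_check` are hypothetical.

```python
from math import comb

def blinding_check(guesses, actual):
    """Return (proportion of correct guesses, one-sided exact binomial
    p-value against chance guessing with probability 0.5)."""
    n = len(actual)
    correct = sum(g == a for g, a in zip(guesses, actual))
    # Probability of at least `correct` right answers out of n by chance alone.
    p_value = sum(comb(n, k) for k in range(correct, n + 1)) / 2 ** n
    return correct / n, p_value

# Hypothetical assignments (I = intervention, C = control) and rater guesses.
actual  = ["I", "C", "I", "C", "I", "C", "I", "C", "I", "C"]
guesses = ["I", "C", "I", "C", "I", "C", "I", "C", "I", "I"]
proportion, p_value = blinding_check(guesses, actual)   # 0.9 and 11/1024
```

A small p-value, as in this toy example, would suggest that the raters are no longer effectively blind and that subjective ratings should be interpreted with caution.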

As is the case in many randomized controlled trials, however, it is simply not possible to blind all assessors to the group membership of the participants. When the measure is based on self-report, it is often not possible to blind the participants to their own group membership. This situation places a premium on measures that are objective. It also makes the implementation of training and orientation procedures for assessors, and quality control procedures such as periodic reliability testing of the assessors over the course of the study, more vital to the validity of trials of prevention strategies than might otherwise pertain.

Adherence to the measurement protocols of the research program, for both experimental and control groups, adds to validity and reliability. Requirements for such adherence to protocol are often viewed as a rigidity that runs counter to good clinical care, and maintaining these protocols is difficult over the course of a long-term study. Such requirements are often seen as a challenge to the morale and commitment of the researchers, particularly to those who are also clinicians. Special efforts must be made both to inform all research colleagues of the necessity for such adherence and the consequences of deviations from protocol in terms of the validity and power of the results, and to ensure the enthusiastic participation and commitment of all participants to the goals of the study.

Statistics and Analysis

Strategies for Data Analysis

Randomized controlled trials in which participants are followed longitudinally inevitably entail collection of a great deal of data, no matter how parsimonious the investigator has been in choosing and pruning the type and frequency of measures and instruments. Many statistical methods for analyzing these data exist. For categorical data, the most familiar of these methods are logistic regression, log-linear modeling, and discriminant analysis.

For continuous measures, methods for the analysis of repeated measures are required. Considerable interest has been generated recently by the use of random effects regression models as alternatives to repeated measures analysis of variance and covariance or MANOVA designs (Gibbons, Hedeker, Elkin, Waternaux, Kraemer, Greenhouse et al., in press; Laird and Ware, 1982). In this methodology a separate curve is fit to each participant's response data, using a few clinically interpretable parameters to define the mathematical model for the curve. Unlike the more familiar repeated measures analysis of variance designs, these methods are relatively tolerant of missing data, irregular follow-up, and dropout. Moreover, because these approaches use scaled response data, they can be more powerful than approaches using binary indicators of disorder applied in the same context.
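The idea of fitting a separate curve to each participant can be illustrated with a deliberately simplified two-stage summary: a least-squares line is fit to each participant's available observations, and the resulting slopes are averaged by group. This is not a random effects regression model (which estimates the individual curves and the group parameters jointly); it only shows why the approach tolerates missed visits and irregular follow-up. All data and names are hypothetical.

```python
from statistics import mean

def participant_slope(times, scores):
    """Least-squares slope of one participant's scores over time."""
    t_bar, s_bar = mean(times), mean(scores)
    stt = sum((t - t_bar) ** 2 for t in times)
    return sum((t - t_bar) * (s - s_bar) for t, s in zip(times, scores)) / stt

def mean_slope_by_group(records):
    """records: {participant_id: (group, [(time, score), ...])}"""
    slopes = {}
    for group, observations in records.values():
        if len({t for t, _ in observations}) < 2:
            continue                      # a slope needs at least two time points
        times, scores = zip(*observations)
        slopes.setdefault(group, []).append(participant_slope(times, scores))
    return {group: mean(values) for group, values in slopes.items()}

# Hypothetical symptom scores; participants B and C missed some visits.
records = {
    "A": ("intervention", [(0, 10), (1, 8), (2, 6), (3, 4)]),
    "B": ("intervention", [(0, 12), (2, 10)]),
    "C": ("control", [(0, 10), (1, 10), (3, 10)]),
    "D": ("control", [(0, 9), (1, 10), (2, 11), (3, 12)]),
    "E": ("control", [(0, 11)]),          # single observation: no slope
}
group_slopes = mean_slope_by_group(records)
```

Each participant contributes a slope from whatever visits were completed, so irregular schedules do not force the exclusion of cases, as they would in a repeated measures analysis of variance requiring complete data.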

Latent structural equation modeling is a statistical tool for examining the relationships among multiple variables. This statistical methodology is available to clinical researchers as part of major statistical software packages. It permits simultaneous testing of complex multivariate hypotheses and may have considerable promise as a tool for exploratory data analysis. However, its utility in formal statistical hypothesis testing is less clear, because the validity of the statistical tests depends on the correctness of strong assumptions about multivariate distributions and on the existence of a clear theoretical model (e.g., Fergusson, Harwood, and Lloyd, 1991).

As more data regarding age of onset are gathered, the preferred analytic strategy for comparing incidence rates across groups is likely to be survival analysis. Survival analysis is a flexible and powerful statistical method for analyzing incidence of illness when time to onset is known. Like the more familiar contingency table methods based on counts of numbers of participants who have onset of the disorder during some follow-up interval, survival analysis can be used to compare the risk of becoming ill in two or more groups. Indeed, because survival analysis depicts incidence across the whole follow-up period, it provides a more detailed picture of outcome. Both parametric and nonparametric approaches are widely available, the former being more powerful when the distributional assumptions are valid, and the latter being less restrictive and more familiar. Survival analysis is typically more powerful than simple counts of incidence during a specified period, particularly when base rates are low. The methodology adjusts for participants who are lost to follow-up. As with regression analysis, covariates can be analyzed, including both main effects and interactions.

The probability of a participant's surviving through a period of risk without developing a disorder may change as the duration of the intervention and follow-up increases. This changing probability is called the survival function. For example, the longer a participant proceeds through the period of risk for a disorder, the lower the probability for developing the disorder. Statistical methods of survival analysis are being used in treatment trials and epidemiological studies of onset and natural history (Elandt-Johnson and Johnson, 1980).

Typically, there are individual differences in susceptibility among participants in any group, based on risk and protective factors, and these differences are reflected in different survival function shapes. Some participants may be essentially immune to the disorder, and, at the other extreme, some may already be experiencing the precursor signs or symptoms of the disorder at the initiation of the trial. Survival function curves begin at 100 percent and either decrease or plateau as participants succumb to the disorder. Thus it is possible to identify individual differences among participants, as well as to detect differences in effects of the experimental and control conditions.

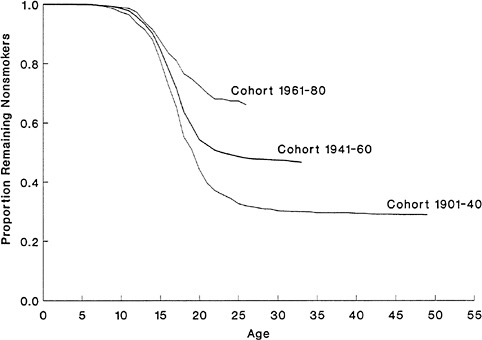

Groups of participants with high survival curves (i.e., close to the 100 percent level of survival) are “low risk,” and those with low survival curves are “high risk,” but these are relative terms, with no precise definition. Participants selected from the general population are likely to be “low risk,” and participants selected because they have risk factors such as a family history of the disorder are likely to be “high risk” in terms of lifetime risk. In the real world, an individual participant's survival curve is a hypothetical construct that cannot actually be seen. However, the average survival curve for any group of participants can be estimated and provides information about delay of onset in that group. For example, Figure 10.3 presents three survival curves using new data from the Five Cities Program of Cardiovascular Risk Prevention (see Chapter 3 and the commissioned paper by Kraemer and Kraemer, available as indicated in Appendix D). These survival curves demonstrate the reported onset of smoking in three male birth cohorts: A: (1901

FIGURE 10.3 Survival function estimates. Using data from the Five Cities Program of Cardiovascular Risk Prevention, three survival curves demonstrate the stages in the distribution of onset of smoking in three male birth cohorts: A: (1901 to 1940); B: (1941 to 1960); and C: (1961 to 1980).

to 1940); B: (1941 to 1960); and C: (1961 to 1980). The last cohort was born after the publicity and widespread education following the Surgeon General's recommendation against smoking for health reasons. The change in the distribution of onset of smoking is clear. The onset of smoking came later, particularly in cohort C, and the lifetime prevalence (indicated by the plateau value) of smoking decreased in the later cohorts, leaving more nonsmoking “survivors” and a higher plateau value.

For a given group, the point in time at which its curve reaches the 50 percent point is the median survival time or median onset time. Thus the median age of onset of smoking for cohort A was about 20 years, and for cohort B, about 22 years. For cohort C, the median time is not less than 25 years (at which point 65 percent have survived without smoking), and there may be no median age of onset overall, for fewer than 50 percent may have the onset of smoking during their lifetime.
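A group's survival curve and median onset time can be estimated with a nonparametric product-limit (Kaplan-Meier) calculation, sketched below. The function names and the ages of onset are hypothetical and are not drawn from the Five Cities data; participants whose onset was not observed are treated as censored at their last follow-up.

```python
def kaplan_meier(times, events):
    """Return [(time, survival)] at each distinct onset time.

    times  -- follow-up time (here, age in years) for each participant
    events -- True if onset was observed at that time, False if censored
    """
    at_risk = len(times)
    survival = 1.0
    curve = []
    for t in sorted(set(times)):
        onsets = sum(1 for ti, ei in zip(times, events) if ti == t and ei)
        leaving = sum(1 for ti in times if ti == t)
        if onsets:
            # Multiply by the fraction of those still at risk who survive t.
            survival *= 1 - onsets / at_risk
            curve.append((t, survival))
        at_risk -= leaving  # onsets and censored cases both leave the risk set
    return curve

def median_onset(curve):
    """First time at which estimated survival is 50 percent or lower, else None."""
    for t, s in curve:
        if s <= 0.5:
            return t
    return None

# Hypothetical cohort: five observed onsets, five censored participants.
ages = [14, 16, 16, 18, 19, 20, 22, 25, 25, 25]
onset = [True, True, False, True, True, True, False, False, False, False]
curve = kaplan_meier(ages, onset)
median_age = median_onset(curve)

# Hypothetical cohort whose curve plateaus above 50 percent: no median exists.
no_median = median_onset(kaplan_meier([15, 25, 25, 25], [True, False, False, False]))
```

The second cohort illustrates the situation described for cohort C: when the curve never falls to 50 percent within the observed follow-up, the function returns `None` rather than a median.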

An interesting aspect of survival analysis is the hazard function, which is the probability of becoming ill at each point in time. Analyses of changes in risk over time may be particularly sensitive indicators of a program's efficacy and effectiveness. The hope is that the intervention program might begin to exert an effect at its inception and gradually build to its full effect as it is fully implemented with desired impacts on the participants' risk and protective factors. The hazard function curve quantifies the probability per unit time that a participant who has survived up to a particular time will have the onset of the disorder in the very short ensuing time interval.

By restricting consideration to those who have survived up to a particular point in time, the investigator can control for factors before that point that have already exerted their effects. By restricting the consideration of the hazard function curve to a short time period, factors that exert their influence on onset of disorder during that time interval only can be identified. Whereas the survival function curve must be either constant or decreasing downward from its initial 100 percent level, the hazard function curve can take any shape at all. It may be flat, it may increase as it does for disorders associated with aging, or it may decrease as it does for disorders primarily associated with infancy. Depending on the natural history and risk periods for the disorder, hazard curves may grow, recede, or have one or several peaks.
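The restriction to participants who have survived up to a given time can be made concrete with a simple interval-based estimate of the hazard. The sketch below is a simplification under stated assumptions: it reports the probability of onset within each interval rather than a rate per unit time, it makes no actuarial adjustment for participants censored partway through an interval, and the function name, interval edges, and data are hypothetical.

```python
def interval_hazards(times, events, edges):
    """Estimate the hazard in each interval [edges[i], edges[i+1]).

    hazard = onsets during the interval / participants still at risk at its start
    """
    hazards = []
    for start, end in zip(edges, edges[1:]):
        # Only those who have survived (and remain under observation) to `start`.
        at_risk = sum(1 for t in times if t >= start)
        onsets = sum(1 for t, e in zip(times, events) if e and start <= t < end)
        hazards.append(onsets / at_risk if at_risk else None)
    return hazards

# Hypothetical ages at onset (True) or at last follow-up (False).
ages = [14, 16, 16, 18, 19, 20, 22, 25, 25, 25]
onset = [True, True, False, True, True, True, False, False, False, False]
hazards = interval_hazards(ages, onset, edges=[10, 15, 20, 25])
```

In this made-up example the estimated hazard rises in the middle interval and then recedes, giving a curve with a single peak, whereas the corresponding survival curve can only stay level or fall.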

Whereas survival and hazard functions can illuminate the changes in incidence of mental disorders among participants in a prevention program, impacts of the program on the severity of the disorders that do develop among participants for whom the prevention program failed, such as the degree of impairment or disability, relapse pattern, or duration of episodes, require the use of prevalence assessments to highlight the differences between the experimental and control groups.

Currently, however, it may not be practical or feasible to obtain valid measures of time to onset for survival analysis. For an insidiously developing disorder, such as schizophrenia, the time to onset may be difficult to ascertain and, at least from the point of view of analyzing a prevention research program, relatively unimportant. If survival methods cannot be used, random effects regression models permit the best use of incomplete follow-up data for participants and help avoid some of the problems of sample bias associated with low retention rates during a trial. However, such problems do not disappear; every missing data point or dropout from the study costs some degree of power.

The Unit of Analysis and Statistical Power Considerations

When interventions are delivered to groups rather than to individuals, the appropriate unit of analysis is the group. Because there are typically far fewer groups than there are individual participants, use of the group as the unit of analysis may appear to result in a major sacrifice in power. However, power is not totally determined by the degrees of freedom, that is, the number of independent comparisons that can be made between the members of a sample. Power is more strongly affected by the size of the effect. Therefore groups can be used as the unit of analysis, and “two-stage” statistical models can be used for that purpose (Gibbons et al., in press).
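One simple way to treat the group as the unit of analysis is sketched below: each group is first reduced to a summary value, and the two conditions are then compared using only those summaries. This is a simplified stand-in for illustration, not the two-stage models of the cited work. The function name, the use of a Welch-type t statistic, and the classroom scores are all assumptions.

```python
from math import sqrt
from statistics import mean, variance

def group_level_t(groups_a, groups_b):
    """Welch t statistic computed on group means, one value per group."""
    # Stage 1: summarize every group by the mean of its members.
    means_a = [mean(g) for g in groups_a]
    means_b = [mean(g) for g in groups_b]
    # Stage 2: compare conditions using the group summaries only.
    se = sqrt(variance(means_a) / len(means_a) + variance(means_b) / len(means_b))
    t_stat = (mean(means_a) - mean(means_b)) / se
    return t_stat, len(means_a) + len(means_b)

# Hypothetical symptom scores for three classrooms per condition.
intervention = [[3, 4, 5], [2, 3, 4], [4, 5, 6]]
control = [[6, 7, 8], [5, 6, 7], [7, 8, 9]]
t_stat, n_units = group_level_t(intervention, control)
```

Although 18 individuals are measured here, the comparison rests on 6 units. The statistic can still be large when the difference between conditions is large relative to the variation among groups, which is the sense in which effect size matters more than the raw count of units.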

The number of individuals or groups necessary to detect clinically significant effects with sufficient power is dependent on the design of the trial. The number may vary from two cities per group to tens of thousands of individuals, so choices made in sampling (such as whether entire communities or individuals are being studied), as well as choices made in measurement (such as whether categorical or continuous measures are used), can have an effect on the power achieved.

Power calculations should precede the initiation of a preventive intervention trial to determine the requisite sample size (Muñoz, 1993). For example, for a universal preventive intervention trial targeting the general population with a short follow-up period to measure the onset of a disorder that has a low baseline frequency and unreliable diagnosis, even one million participants may not yield adequate power to detect statistically significant effects. On the other hand, for a trial of a potent selective preventive intervention sampling a relatively high risk population and using frequent, repeated measurements that are valid and reliable, with a long follow-up period and good retention of subjects, a sample size of 50 per group might be adequate. It is important to keep in mind that false negatives will always be more frequent with small samples than with large ones. The issue, then, is not only how many participants to use, but also how to design the trial to get the greatest power within the limits of the trial's feasibility. Once issues of feasibility and likelihood of effects being found are determined, standard power calculations (Cohen, 1988) can be used to determine the number of participants that are needed.
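The dependence of sample size on base rate and effect size can be illustrated with a standard normal-approximation formula for comparing two incidence proportions. The sketch below is one common textbook calculation, not necessarily the one intended by the sources cited above. The incidence figures are hypothetical, and the formula does not account for unreliable diagnosis, attrition, repeated measurements, or group-level randomization.

```python
from math import ceil, sqrt
from statistics import NormalDist

def n_per_group(p_control, p_treated, alpha=0.05, power=0.80):
    """Approximate participants needed per group for a two-sided test
    of the difference between two incidence proportions."""
    z = NormalDist().inv_cdf
    z_alpha, z_beta = z(1 - alpha / 2), z(power)
    p_bar = (p_control + p_treated) / 2
    numerator = (
        z_alpha * sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * sqrt(p_control * (1 - p_control) + p_treated * (1 - p_treated))
    ) ** 2
    return ceil(numerator / (p_control - p_treated) ** 2)

# Hypothetical universal trial: rare disorder, modest effect, short follow-up.
n_universal = n_per_group(p_control=0.010, p_treated=0.008)

# Hypothetical selective trial: high-risk sample, potent intervention.
n_selective = n_per_group(p_control=0.40, p_treated=0.15)
```

Under these assumed figures the universal trial requires a sample several hundred times larger than the selective trial, which is why design choices about population, follow-up, and measurement should be settled before the number of participants is fixed.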

DOCUMENTATION ISSUES

For the committee's examination of preventive intervention research programs, it compiled a list of criteria, which appear in Chapter 7, to be used in identifying research programs of particular merit. In documenting research programs in the future, the investigator may find these guidelines useful. But an even higher standard will be desirable in the next decade of preventive intervention research. For example, efforts will need to be made to assess costs and benefits in a realistic way (see the section on economic issues below).

When the research program has been completed, the design, sampling, measurement, and analytic decisions should be specified in the peer-reviewed literature and manuals in sufficient detail that they can be replicated by others. The background and rationale are also relevant. When the results have been analyzed, the statistical methods used should be reported in such a way that the proper inferences can be made about the effectiveness of the prevention program. Descriptive statistics can be used to describe the groups at baseline and to demonstrate the randomization of the groups. If the sample was stratified, descriptive data can be presented for each stratum.

Some details should be presented about how many participants were recruited, how many screened, how many passed and failed that screening (and why), how many consented (and why refusals occurred), how many of those who consented were actually randomized (and why some were omitted), and, of those randomized, how many entered their assigned groups (and why others did not). Of those who entered the randomized groups, how many completed the follow-up (and why did others not)? Of those who dropped out of the experimental and control groups, how long did they last in the protocol? What baseline factors were associated with dropout, and were they the same in the experimental as in the control group? How many of those in the experimental and control groups complied with the protocol (and why did others not)? In short, any information on the sample pertinent to sampling bias, measurement bias, or any other type of bias should be presented so that readers can judge how convincing the results are. A flowchart format can sometimes present these data clearly and efficiently.

There should be brief descriptions of the protocols for recruitment, retention, experimental and control delivery, and of measurement. Documentation of the quality of measurement (reliability or validity) is always valuable in aiding judgments of the results.

The estimated survival curves or hazard curves (or both), in addition to simple summary statements of statistical significance, are valuable in assessing the size and hence the clinical or policy importance of statistically significant results. They are also essential in assessing whether nonsignificant results are the result of low power and thus worth further pursuit or the result of ineffective preventive intervention and thus not worth further consideration. If the secondary results prove informative, these might be documented with separate survival or hazard curves for subgroups found substantially different in response or for subgroups substantially different in terms of process (such as compliant versus noncompliant subjects).

ISSUES OF CULTURE, ETHNICITY, AND RACE

As discussed earlier in this chapter, the success of preventive interventions, whether at the level of the individual, family, community, or nation, depends heavily on the contexts in which they are delivered. Clearly, anticipating the social and cultural elements of these contexts and accounting for such elements in terms of content, format, staffing, and implementation are critical to subsequent outcomes. Given the cultural diversity that characterizes this country, no discussion of the current and future status of preventive intervention research is complete without systematic attention to culture, ethnicity, and race (Muñoz, Chan, and Armas, 1986).*