WORKSHOP SUMMARY

A long-held goal in oncology has been to develop therapies that target the specific abnormalities in each patient’s cancer rather than simply treating cancers based on the tissue of origin. Early pioneering efforts with cancer drugs such as Gleevec and Herceptin have shown how effective it can be to treat tumors based on the genetic anomalies they harbor. In the past decade, advances in technology have enabled researchers to relatively quickly and inexpensively determine, in minute detail, the genetic makeup of tumors. Studies using this new technology have garnered greater knowledge about the molecular underpinnings of cancer, uncovering specific genetic alterations that drive the growth of individual tumors. Consequently, the rationale for and feasibility of developing molecularly targeted cancer therapies has never been stronger. Although relatively few targeted cancer therapies are currently available in the clinic and it is not yet clear whether all cancers are driven by genetic changes that can be targeted, there is widespread optimism in the cancer community that this new ability to assess the genetic abnormalities in tumors will ultimately lead to better cancer treatments and improved patient outcomes. There are hundreds of candidate targeted drugs in the development pipeline and several new cancer drugs targeting specific genetic alterations have entered the market in the past 2 years.

However, many challenges remain in effectively and efficiently developing new targeted cancer therapies and the biomarker tests that indicate which patients will be responsive to them, and in implementing them appropriately in clinical practice. These challenges include many policy issues, such as the level of oversight needed for test development and use, levels of evidence necessary for reimbursement decisions, and ways to meet informational needs of patients and care providers. New paradigms may be needed for assessing the efficacy of targeted therapies as well as the clinical validity and usefulness of biomarker tests. The standard approach of defining treatment based on the anatomic origin of cancer is becoming less tenable now that genomic tests are stratifying cancers into rare subsets defined instead by the molecular drivers of the tumors. As use of these tumor profiles has become more common and extensive, clinicians may need more clarity on how to interpret and act on them in the clinic. In addition, the marked complexity and rapidly evolving nature of the latest genomic tests have raised questions about whether new standards and methods are needed for assessing their validity and clinical utility, as well as for making regulatory and reimbursement decisions.

Review and oversight of test development is currently quite variable. Most tests used in clinical practice have never been reviewed by the Food and Drug Administration (FDA), but rather are offered as laboratory-developed tests (LDTs). Laboratories that perform these tests are subject to quality assurance requirements under the Clinical Laboratory Improvement Amendments (CLIA),1 but the tests are not subject to FDA review. Even when a drug and a biomarker test are co-developed and co-approved by FDA, with the companion diagnostic listed in the drug label, clinical laboratories can quickly develop similar tests as LDTs and offer them to patients without FDA review. The LDT pathway can facilitate rapid innovation in test development, but concerns have been raised about whether greater oversight is necessary for more complex tests. FDA has recently announced the intent to develop a risk-based approach to the oversight of LDTs (FDA, 2014).

Neither development pathway, as an LDT or as an FDA-approved diagnostic test, requires evidence of clinical usefulness (clinical utility), which is often expected for reimbursement. Furthermore, there is concern that prevailing reimbursement rates for diagnostic tests often do not reflect the value of clinically useful biomarker tests. Thus, developers may be reluctant to invest the time and resources necessary to demonstrate clinical utility and support reimbursement decisions.

_______________

1 See http://www.cms.gov/Regulations-and-Guidance/Legislation/CLIA/index.html (accessed March 18, 2015).

The Institute of Medicine’s (IOM’s) National Cancer Policy Forum has been organizing a series of workshops focused on these policy issues in the development of new cancer therapies. The first, held in 2009, examined a broad range of issues in developing “personalized” or “precision” therapy for cancer (IOM, 2010a). The most recent, held in Washington, DC, on November 10 and 11, 2014, entailed a 2-day workshop on “Policy Issues in the Development and Adoption of Biomarkers for Molecularly Targeted Cancer Therapies.”2 The next workshop in the series will focus on policy issues in the development of immunotherapies for cancer, a rapidly developing therapeutic area in oncology.

At the November 2014 workshop, subject-matter experts and members of the public discussed recent trends in the development and implementation of molecularly targeted cancer therapies and explored potential policy actions to address specific challenges. Topics included

- Recent advances in tumor biomarker tests and the developmental, regulatory, clinical, and reimbursement challenges they pose;

- FDA regulation of tumor biomarker tests and how it is evolving;

- Innovative trial designs, databases, and other potential ways to generate evidence to support reimbursement decisions;

- Practice guidelines and treatment pathways that can influence clinical implementation of molecularly targeted therapies; and

- Education and research needs to support the ongoing molecular biology revolution in oncology.

This report is a summary of the presentations and discussions at the workshop. A broad range of views and ideas were presented and a summary of suggestions from individual participants is provided in Box 1. Additional details and context for these suggestions can be found throughout the workshop summary. The workshop Statement of Task and agenda can be found in the Appendix. The speakers’ biographies and presentations (as PDF and audio files) have been archived at http://www.iom.edu/Activities/Disease/NCPF/2014-NOV-10.aspx (accessed March 18, 2015).

_______________

2 This workshop was organized by an independent planning committee whose role was limited to the identification of topics and speakers. This workshop summary was prepared by the rapporteurs as a factual summary of the presentations and discussions that took place at the workshop. Statements, recommendations, and opinions expressed are those of individual presenters and participants, and are not necessarily endorsed or verified by the IOM or the National Cancer Policy Forum; and should not be construed as reflecting any group consensus.

BOX 1

Suggestions Made by Individual Workshop Participants

Develop New Standards for Biomarker Tests

- Standardize specimen sampling, processing, and storage. Matthias Holdhoff

- Raise the bar for proficiency testing for genomic profiling and make the results public. Mickey Williams

- Establish reporting standards both for test methods and results, including minimum reporting requirements for publishing next-generation sequencing data. Mickey Williams

- Develop standards and processes for annotation of genetic variants in tumors and for reporting a genetic variant as clinically actionable. Patricia Ganz, Mia Levy, Federico Monzon, Richard Schilsky, Deborah Schrag, Mickey Williams

- Create standards for matching treatments with genomic test results. Richard Schilsky, Mickey Williams

- Harmonize global regulation for biomarker tests. Karen Long, Anne-Marie Martin

Generate New Evidence to Support Clinical Use of Tests

- Establish a single public curated database for annotated data on cancer mutations identified in clinical studies. Matthias Holdhoff, Richard Schilsky, Mickey Williams

- Develop policies that support data sharing among laboratories, pharma and diagnostic companies, and health care providers to help advance the clinical knowledge base. Bruce Johnson, Mia Levy, Federico Monzon, Richard Schilsky, Mickey Williams

- Develop an app to help clinicians and patients identify clinical trials relevant to the results of tumor profiling tests. Lillian Siu

- Conduct more dynamic trials in which tumors are extensively profiled both at baseline and when the cancer progresses, or that entail frequent sampling of circulating tumor DNA. Matthias Holdhoff, Lillian Siu

- Include more data on patient characteristics, such as ethnicity, smoking history, weight, etc., as well as all relevant outcomes in databases and in the annotation of stored tumor specimens. Garnet Anderson, Patricia Ganz

- Use subgroup analysis in trials to identify specific mutations associated with response to targeted therapies. David Solit

- Support postmarket research and Coverage with Evidence Development to better assess clinical utility of biomarker tests. Donna Messner, Federico Monzon, Sean Tunis

- Include adverse-event reports in a transparent public registry under the Clinical Laboratory Improvement Amendments (CLIA). Federico Monzon

- Conduct prospective studies to gather data on who is using genomic tests, patient and provider perspectives on test results, as well as the outcomes, benefits, and costs. Kathryn Phillips

- Use “root-cause analysis” to assess whether a test addresses a clinical problem, provides results that are useful for patient management, and improves existing outcomes. David Eberhard

- Establish reimbursement science. Sean Tunis

Facilitate Innovation in Test Development

- Consult with the Food and Drug Administration (FDA) early in the test development process. David Litwack

- Make CLIA regulations more stringent rather than shifting test oversight to FDA. Federico Monzon

- Provide greater clarity on how laboratories are reimbursed for the services and innovation they provide. Dane Dickson, Federico Monzon

- Evaluate the impact of the changing health care policy environment, such as new Current Procedural Terminology codes for diagnostic tests and the rise in accountable care organizations. Kathryn Phillips

Increase Patient and Provider Knowledge About Tumor Profiling Tests

- Create publicly available databases of test availability, cost, and value. Kathryn Phillips

- Develop and assess patient education strategies and tools for different levels of health literacy. Mia Levy, Patricia LoRusso

- Develop guidance on how to structure and frame information about genomic test results to facilitate communication and decision making with patients. Kathryn Phillips

- Develop educational materials for health care providers with different learning styles. Mia Levy

MOLECULAR BIOLOGY REVOLUTION IN CANCER DIAGNOSIS AND TREATMENT

A molecular biology revolution that has changed the way in which cancer is diagnosed and treated began in earnest in the 1990s and early 2000s when several molecularly targeted therapies became available. Biomarker tests were used to assess the likelihood of responding to specific treatments targeted to the genetic alterations in the tumors that drive their growth. Each of these tests detects only one specific biomarker of tumor response and thus is considered a “single analyte” test. Such tests have been followed by the development of more comprehensive genomic profiling enabled by “next-generation” sequencing technology. Other novel techniques such as RNA sequencing tests and “liquid biopsies” that sample the DNA of tumor cells circulating in the blood are also being developed as methods for molecularly profiling cancers. The rapidly changing nature of the technologies used to develop tests adds to the complexity of assessing new tests as they arise.

Lessons Learned from Single Analyte Tests

Single analyte tests and the targeted treatments associated with them have led to remarkable improvements in treatment response, noted Adrian Senderowicz, president of Oncology Drug Development, LLC. He pointed out as an example that in 2000, the response rate of advanced refractory lung cancer to standard chemotherapy was usually in the single digits and median survival was less than 6 months. But 10 years later, following the introduction of therapies targeting the epidermal growth factor receptor (EGFR), the response rate increased to about 60 percent for patients with certain EGFR mutations, and the median duration of those responses was 48 weeks (Camidge et al., 2012).

Similarly, a therapy for melanoma targeting the BRAF gene led to dramatic improvements in patients whose tumors had the variant form of the BRAF targeted by the drug, with nearly all of those patients experiencing a regression of their tumors (Sosman et al., 2012). Researchers then discovered that 1 percent of lung cancer patients have BRAF driver mutations in their tumors and these patients also responded to the BRAF-targeted therapy. In addition, 2 percent of lung cancer patients have mutations in the HER2 gene, which had previously been identified as an effective target for certain patients with breast cancer (Gandhi et al., 2013). By 2010 researchers had identified six genetic variants in the tumors of lung cancer patients that indicated likelihood of responding to specific treatments as well as a KRAS variant that indicated a lack of response to a group of targeted treatments known as tyrosine kinase inhibitors (see Figure 1).

As noted by Mia Levy, director of cancer clinical informatics at Vanderbilt-Ingram Cancer Center, the dramatic responses achieved with targeted treatments changed the approach to treating lung cancer. Prior to the development of targeted therapies, lung cancer patients were divided up into two main groups based on the appearance of their tumor cells, with the majority being classified as non-small-cell lung cancers. All patients with this type of lung cancer were given the same treatments before 2000. But now “we have predictive biomarkers to segment out this population so instead of treating everybody the exact same way, we treat them differently with targeted therapy. So instead of having response rates of 30 percent or

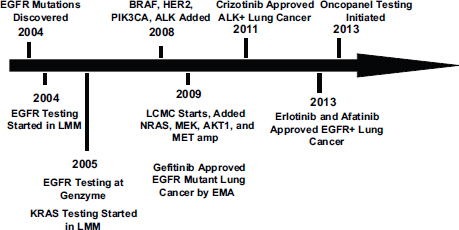

FIGURE 1 Genotyping time line for non-small-cell lung cancer.

NOTE: BRAF, EGFR, HER2, KRAS, MEK, NRAS, and PIK3CA are genes detected by biomarker tests; EMA = European Medicines Agency; LCMC = Lung Cancer Mutation Consortium; LMM = Laboratory of Molecular Medicine at the Harvard Medical School.

SOURCE: Johnson presentation, November 10, 2014.

worse [to first line therapy], we’re getting response rates of close to 80 percent in this population,” Levy said. Roy Herbst, Ensign Professor of Medicine and Professor of Pharmacology, chief of medical oncology, Yale Cancer Center and Smilow Cancer Hospital, and associate director for translational research, Yale Cancer Center, added, “We really have taken lung cancer and made it a disease where we are focusing on more and more pieces of the pie.”

Similar scenarios have evolved in the treatment of many other cancers, including breast, melanoma, lung, and colon cancers. Using tests to identify molecular drivers of tumor growth in these cancers is often key to selecting a treatment. Senderowicz stressed that “the segmentation of these patient [populations] is very important because based on the segmentation, you can treat different patients with different agents.” Therefore, it is crucial that the tests that indicate the segmentation be accurate, he added, because false negatives will prevent patients who need these more effective targeted therapies from receiving them. “It’s a big responsibility for the manufacturers of these tests, for the physicians who order them, for the pathologists who perform them, and for the regulatory agencies who regulate them,” he said. “This creates a lot of challenges for different stakeholders.”

Even when single analyte tests are accurate, patients can still be resistant to the targeted therapies or acquire such resistance after a favorable initial response to treatment, Levy pointed out. Understanding the cause of the primary or acquired resistance of these patients is now possible due to technological advances that have made it feasible and economical to decipher much or all of the entire genome of tumor cells, she added. Such “next-generation sequencing” has uncovered co-occurring genetic mutations in tumors, including molecular backup pathways that can emerge when a major tumor driver is blocked from acting by a specific treatment.

Bruce Johnson, chief clinical research officer and professor of medicine, Harvard Medical School, noted that the more detailed sequencing of tumor DNA by next-generation sequencing can preclude the need to acquire additional tumor tissue for testing to determine why patients are not responding to targeted treatments, by revealing before such treatment even begins the co-mutations that can prevent a durable treatment response. “Look at how many times you can spare yourself from having to go back and do another test as you give second and third line treatments to these patients,” he said. Lillian Siu, senior staff physician, division of medical oncology and hematology, Princess Margaret Hospital in Toronto, agreed, adding “These genetic panels are important so that we have almost everything that we want done in one shot with one specimen.”

Levy stressed, “Genomic profiling in cancer is here to stay. Instead of just testing for a single biomarker that’s going to drive your decision for therapy, we can test for multiple types of alterations.” Anne-Marie Martin, head, molecular medicine and precision medicine & diagnostics, GlaxoSmithKline (GSK), noted, “The technology is allowing us to generate comprehensive data in much smaller patient samples.” However, Mickey Williams, director, molecular characterization laboratory, Frederick National Laboratory for Cancer Research, added the caveat that next-generation sequencing is fostering the development of tests that do not just detect a handful of genetic defects but screen the more than three billion bases in DNA for alterations. “That’s a lot of analytes and to be able to demonstrate that you can do this accurately is a daunting task,” he said.

Next-generation sequencing can determine the sequence of a portion or all of the DNA in a tumor sample, but not all that DNA will be transcribed into RNA and then into proteins that play an active role in tumor cells. To focus sequencing efforts on the genes that are being transcribed and are thus more likely to have an effect on tumors, some researchers do another type of genomic tumor testing, known as RNA sequencing. Neil Hayes, associate professor, clinical research, hematology/oncology, University of North Carolina (UNC) Lineberger Comprehensive Cancer Center, stressed that although RNA is more difficult to work with than DNA, “RNA is actually where the action is, whereas most of the genome is not transcribed and not of interest.”

According to Hayes, another advantage to working with RNA as opposed to DNA is that most mutations in oncogenes are easier to detect in RNA. One study he conducted showed that RNA sequencing integrated with DNA sequencing improved the mutation detection rate in samples with low-purity tumor cells (Wilkerson et al., 2014). Hayes said it is also less expensive and easier to find repeated or deleted sequences and other structural alterations to the genome with RNA versus DNA sequencing. This technology could be helpful in detecting genetic alterations in the many patients for which DNA analysis has not revealed mutations that are driving their cancers, he said. For example, he said that for about half of all lung cancer patients who have had their tumor DNA sequenced, no known driver mutations were detected. In addition, RNA sequencing might prove useful in detecting altered expression of immune system components that are the targets of several new immunotherapies in development for cancer.

Tests for Circulating Tumor DNA

Other innovative biomarker tests on the horizon are those that measure tumor DNA circulating in the blood. Called liquid biopsies, these tests analyze a small blood sample to detect and screen the naked DNA released by tumor cells during cell turnover. Although these DNA fragments are small, they can contain genetic mutations, according to Matthias Holdhoff, assistant professor of oncology, Johns Hopkins Medicine. For these tests, circulating tumor DNA must be separated from the DNA of normal cells in the bloodstream, which can be like finding the proverbial needle in a haystack. But Holdhoff said one of his studies showed that using a polymerase chain reaction (PCR) to duplicate DNA sequences so they are easier to find, combined with flow cytometry to sort and quantify them, has a detection rate of 1 in 10,000 or better, which is akin to that of standard PCR-based assays (Holdhoff et al., 2009).

According to Holdhoff, such liquid biopsies are advantageous because they are non-invasive and they enable the collection of multiple specimens with minimal burden to patients. These tests can also be done on fresh samples, whereas many biomarker tests are done on paraffin-embedded samples, in which the DNA may be degraded. In addition, the DNA from multiple genetically diverse metastatic tumors can be collected in a single blood sample, unlike surgical biopsies that only sample the DNA of the specific tumor site they biopsy.

Liquid biopsy tests have many potential uses in oncology, Holdhoff said, including determining the mutation status of the tumor, monitoring tumor burden, and tracking the development of resistance to targeted therapies. He added that if the tests are sensitive enough, oncologists could also potentially use liquid biopsies to detect residual disease after treatment, as well as early recurrence. Circulating tumor DNA could also reveal how the genetics of the tumor change over time and could be used to track the emergence of new mutations that might influence response to treatment.

But Holdhoff noted that circulating tumor DNA tests might not be the best tests for every type of cancer. Initial studies of these tests in solid tumors found that although they appear to work well for bladder, colorectal, breast, lung, and other cancers, they do not work as well for brain and some other types of cancer (Bettegowda et al., 2014). Tumor stage also seems to be important, with greater detection rates for higher stage tumors (Bettegowda et al., 2014).

Business Climate for Developing Diagnostic Tests

Due to the reduced costs and increased efficiency of genomic sequencing tests, and the advent of other new technologies, said Federico Monzon from Invitae, the current business climate for developing diagnostic tests is encouraging. However, he also noted increased competition in the field due to smaller laboratories having access to better technology, which has “leveled the playing field” and enabled a lot of laboratories to do genomic testing. Prior to recent technological developments fostered by the Human Genome Project, genetic testing was mainly the purview of large academic medical centers or specialized laboratories, he said. Now, smaller academic hospitals are expressing interest in doing tumor profiling, which increasingly is being required for clinical trials with targeted agents. In addition, he said the recent Supreme Court ruling that invalidated patents of isolated genes that occur in nature also triggered greater interest in developing genetic tests (U.S. Supreme Court, 2013).

Consequently, Monzon said, there is a healthy trend in investments in diagnostics, and there are projections that the field will go from a $15 billion market to a $25 billion market by the end of the decade (Personalized medicine, 2012). Currently the United States represents about half of the market. Molecular diagnostics has been the fastest growing segment within clinical diagnostics in the past decade, Monzon reported (Budel, 2013; DeciBio, 2013; Shields and Deshmukh, 2013).

But there are also reasons to be concerned about business opportunities in diagnostics, Monzon noted, including the pricing for new molecular codes by the Centers for Medicare & Medicaid Services (CMS) in 2014. These new codes caused the median price to drop by 15 percent compared to how CMS previously reimbursed molecular tests, with many prices dropping by more than 50 percent, he said (Malone, 2014).

Another area of uncertainty is how CMS will price reimbursements for molecular tests in the future, which will also impact pricing from the private payer sector. With the new “Doc Fix” law, starting in 2017, Medicare will rely on an average of private payer rates to set its fee schedule, and give special treatment to single-source proprietary tests. The Doc Fix law limits how deep market-based rates can cut the current fee schedule for the first 6 years. From 2017 to 2019, CMS cannot reduce the payment for an individual test more than 10 percent per year, and from 2020 to 2022, not more than 15 percent per year (Malone, 2014). “There needs to be clarity on reimbursement and a path forward to actually get laboratories reimbursed for the services we provide and the innovations,” Monzon said.
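To give a sense of scale for these annual caps, the following minimal sketch works out the lowest payment a test could reach if the maximum cut were applied every year. It assumes that each year's cap applies to the previous year's payment, so the cuts compound; the summary does not specify this, and the $100 starting payment and the function name `payment_floor` are purely illustrative.

```python
# Maximum annual reduction by year, as described in the text (Malone, 2014).
CAPS = [
    (2017, 0.10), (2018, 0.10), (2019, 0.10),
    (2020, 0.15), (2021, 0.15), (2022, 0.15),
]


def payment_floor(starting_payment, caps=CAPS):
    """Return the lowest possible payment in each year.

    Assumption (not stated in the source): each cap is applied to the
    prior year's payment, so the reductions compound.
    """
    payment = starting_payment
    floor_by_year = {}
    for year, max_cut in caps:
        payment *= 1 - max_cut
        floor_by_year[year] = payment
    return floor_by_year


# Hypothetical test currently paid at $100.
floors = payment_floor(100.0)
for year, amount in floors.items():
    print(year, f"{amount:.2f}")
```

Under this compounding assumption, a hypothetical $100 test could fall no lower than about $44.77 by 2022, a cumulative reduction of roughly 55 percent over the 6 years.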

He also noted concerns about FDA’s recent announcement that it will develop a risk-based approach to oversight of laboratory-developed tests, and how that could reshape the diagnostics industry. “Everyone is bracing for these increased regulations,” Monzon said.

CHALLENGES IN BIOMARKER TEST DEVELOPMENT

Workshop participants described numerous technical challenges in biomarker test development, including a lack of standards and reference materials, difficulty in gathering the evidence to assess a test’s validity and utility in the clinic, and the need for greater cooperation and sharing of data to gather that evidence.

Several presenters noted the lack of standards for developing biomarker tests. Williams pointed out the need for test reference materials for quality assurance purposes and to enable comparison of tests across different laboratories. “Every cancer center is doing next-generation sequencing, but we really don’t know if we’re getting the same results because we have an urgent need for reference materials,” he said. Williams noted that the National Institute of Standards and Technology can provide a certified reference human genome that laboratories can sequence to assess if their assays are accurate. But he added that current proficiency tests have a low bar that should be raised for genomic profiling, and that results should be made public. Holdhoff said there was a need for better standardization of specimen sampling, processing, and storage. David Solit, Geoffrey Beene Chair in Cancer Research, and director, Marie-Josée and Henry R. Kravis Center for Molecular Oncology at Memorial Sloan Kettering Cancer Center, said that the Actionable Genome Consortium aims to establish various standards for cancer genomics (see Box 2).

Williams also pointed out that there are no standard operating procedures followed by all laboratories for the same test. For example, some labs may only sequence samples containing 20 percent tumor material, while others may sequence samples with 50 percent. With such variation, “there’s no guarantee that every lab is going to see the same genetic mutations reproducibly,” he said. Hayes agreed, noting that there is little published data on the tissue requirements and quality needed for accurate RNA sequencing tests, although various thresholds are used by different researchers. “The bottom line is more tissue is better, higher percentage tumor is better, but setting that threshold is very challenging,” he said. However, Solit cautioned that setting those thresholds might “allow the perfect to be the enemy of the good. Are we going to throw away samples and not analyze them if tumor content is too low?” he asked. Williams noted that for the National Cancer Institute’s Molecular Analysis for Therapy Choice (MATCH) Program trial (see the section on basket trials), tumor samples that do not meet threshold minimums are still analyzed, but the results are reported separately from the others.

BOX 2

Actionable Genome Consortium

The Actionable Genome Consortium was formed in 2014 to create and publicize standards for cancer genetics. Composed of representatives from the National Cancer Institute, the Memorial Sloan Kettering Cancer Center, the MD Anderson Cancer Center, the Broad Institute of the Massachusetts Institute of Technology, Cancer Research UK, Fred Hutchinson Cancer Research Center, Princess Margaret Hospital Cancer Center, the Dana-Farber Cancer Research Center, and the gene sequencing company Illumina, the consortium aims to demonstrate clinical utility, democratize genomic testing so it is more widely available to patients, contain costs, and define and standardize what actionable genes are across institutions. The Consortium aims to develop standards for sample processing, tumor content, sequencing, data analysis, and reporting. All of the Consortium’s standards, standard operating procedures, analytic tools, results, and conclusions will be published and made available to the public.

SOURCES: Solit presentation, November 10, 2014; Actionable Genome Consortium to guide NGS in cancer, 2014.

Williams suggested establishing reporting standards both for methods and results, including minimum reporting requirements for publishing next-generation sequencing data, so others can reproduce the test and determine whether they get the same results. “There are just way too many parameters and if they aren’t reported we’ll never be able to know how these tests are done,” he said. He also suggested standards for matching treatments with sequencing test results.

Herbst pointed out that when different tests for the same genetic alteration first emerge from several different laboratories, the way in which the test is done and the cut-off standards used for reporting results can be quite variable. Samir Khleif, director of the Georgia Health Sciences University Cancer Center, Georgia Regents University Cancer Center, agreed this is a major problem and that when different tests are used in the same clinical studies, they can have discordant results. But Khleif also noted that better standardization in the early development of tests would require competing companies to cooperate and share their data prior to their tests entering the market, which they are unlikely to do without regulation and/or incentives to do so.

Assessing Analytical Validity, Clinical Validity, and Clinical Utility

Developers must validate their tests before they can be used for clinical care. The validation process begins with analytical validation. This reveals how accurately the test detects the specific analytes it was designed to detect, and includes assessment of the test’s range, accuracy, precision, bias, and reproducibility when used by different operators or instruments, or in different settings (Febbo et al., 2011; IOM, 2010a; Woodcock, 2010).

Clinical validation is also essential in the test development process. Clinical validity is a measure of the accuracy of a test for a specific clinical purpose, such as selection of targeted therapy in a specific patient population (IOM, 2010a, 2012). Such validation involves assessment of the sensitivity, specificity, cut-offs, and other parameters of a test (Febbo et al., 2011; Woodcock, 2010). Williams outlined the steps for validating a next-generation sequencing assay system for use in a clinical trial, as shown in Box 3.
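As a brief illustration of two of the parameters named above, the sketch below computes sensitivity and specificity for a biomarker test compared against a reference method. The counts are hypothetical and the function name is illustrative; neither comes from the workshop.

```python
def sensitivity_specificity(true_pos, false_neg, true_neg, false_pos):
    """Return (sensitivity, specificity) from counts against a reference method.

    Sensitivity: share of biomarker-positive cases the test detects.
    Specificity: share of biomarker-negative cases the test correctly excludes.
    """
    sensitivity = true_pos / (true_pos + false_neg)
    specificity = true_neg / (true_neg + false_pos)
    return sensitivity, specificity


# Hypothetical validation set: 50 tumors with the mutation, 100 without.
sens, spec = sensitivity_specificity(
    true_pos=45, false_neg=5, true_neg=90, false_pos=10
)
print(f"sensitivity = {sens:.2f}, specificity = {spec:.2f}")
```

In this made-up example the 5 false negatives correspond to the patients Senderowicz described, who would be eligible for a targeted therapy but would not be identified by the test.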

BOX 3

Steps to Validating a Next-Generation Sequencing Assay System for Use in a Clinical Trial

Mickey Williams outlined the steps needed to clinically validate a test for use in clinical research trials, as follows:

Define Intended Use

The intended use determines what must be demonstrated for validation. Researchers may use tests in clinical studies purely for discovery, such as to find a new treatment response biomarker, or to determine patient enrollment or treatment selection. The latter uses have more stringent performance requirements.

Define the Test System

Tests are systems that include all the steps involved, from biopsy through test result reporting. Because next-generation sequencing tests are complex, any deviation from standard operating procedures can confound the data. An important step is to specify every aspect of the defined system and not deviate from it during the validation process, even if improvements are later identified that could potentially make the test better. Locking down the test system in this way is challenging for genomic tests because the technology for these tests is changing rapidly, Williams noted.

Conduct Initial Feasibility Tests

These tests should reveal the strengths and weaknesses of the test in a clinical setting.

Consult with FDA

This is important if the specimens will be collected as part of a clinical trial specifically for assessing the test.

Conduct Analytical Validation of Assay Performance

This assesses how well the test measures the molecular event of interest, including its range, accuracy, and precision under conditions that replicate the clinical setting in which the test is intended to be used.

SOURCE: Williams presentation, November 10, 2014.

Generally, establishing clinical validity for a test involves showing that it is “fit for purpose,” a process that relies on data collected from clinical trials or from archived samples that are well annotated with outcomes and other clinical information. More recently, sponsors have been submitting case studies, results from database analyses, and medical literature reviews to FDA to establish the clinical validity of their tests, noted David Litwack, Personalized Medicine Staff at FDA. “As long as the evidence is good, there’s no reason why it has to be a clinical study, and the use of databases is going to be a very important part of FDA regulation in the future that will hopefully ease the pathway for everybody,” he said. Dane Dickson, director of clinical science, MolDx, Palmetto GBA, suggested that genetic panels be disease-specific and recognize that mutations in a gene such as BRAF, although relevant to determining treatment in a melanoma patient, may not indicate proper treatment in a colon cancer patient. But Levy noted that it would be difficult for diagnostic companies providing genomic panels to clinically validate them for each individual cancer type. Rather, what they tend to do is run the comprehensive genetic test, but only report and charge for the genetic results relevant to the particular tumor sample being tested. This also helps prevent clinicians from being overwhelmed by an excessive amount of genomic information, she added.

Several speakers noted other challenges in assessing the clinical validity of biomarker tests that are frequently used by oncologists. Hayes pointed out that most archived samples are not available due to proprietary claims of the institutions that house them, and it is time consuming and expensive for a diagnostic company to collect its own samples. Johnson added that studies to assess the clinical validity of biomarker tests are often done on cancer cell lines rather than on clinical tumor specimens, which are more relevant for assessing clinical validity. He also noted that when assessing clinical validity, laboratories tend to use clinical specimens enriched with the mutations the test is designed to detect, and he questioned the relevance of those results to clinical settings.
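One way to see why results from mutation-enriched specimens may not carry over to the clinic is that the share of positive results that are true positives (the positive predictive value) depends on how common the mutation is among the samples tested. The sketch below applies Bayes' rule in Python; the sensitivity, specificity, and prevalence figures are hypothetical and chosen only for illustration, not taken from the workshop.

```python
def positive_predictive_value(sensitivity, specificity, prevalence):
    """Fraction of positive test results that are true positives (Bayes' rule)."""
    true_positive_rate = sensitivity * prevalence
    false_positive_rate = (1.0 - specificity) * (1.0 - prevalence)
    return true_positive_rate / (true_positive_rate + false_positive_rate)


# Hypothetical test performance, held constant across both settings.
SENSITIVITY = 0.95
SPECIFICITY = 0.99

# Hypothetical enriched validation set: half of the specimens carry the mutation.
enriched = positive_predictive_value(SENSITIVITY, SPECIFICITY, prevalence=0.50)

# Hypothetical clinical population: 1 percent of specimens carry the mutation.
clinical = positive_predictive_value(SENSITIVITY, SPECIFICITY, prevalence=0.01)

print(f"PPV in enriched set:        {enriched:.3f}")
print(f"PPV in clinical population: {clinical:.3f}")
```

Under these assumed numbers, the same test looks far more reliable in the enriched set than it would in a population where the mutation is rare.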

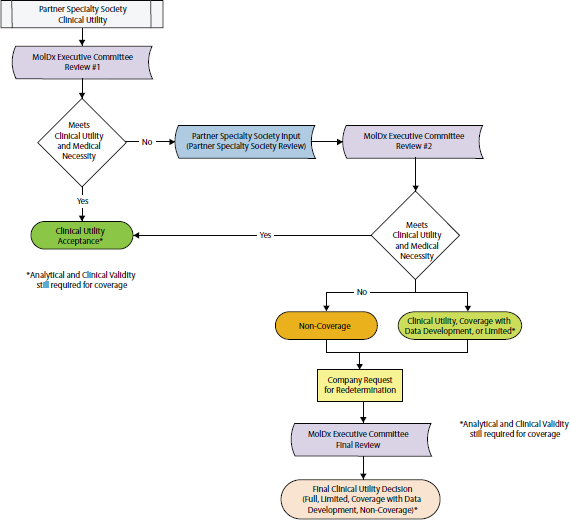

Both clinicians and insurers need to know a test’s clinical utility in order to assess its value for certain cancers. Clinical utility is a measure of whether clinical use of the test improves patient outcomes for a specific indication. Several speakers noted that many diagnostic companies and laboratories do not assess the clinical utility of their tests before they are used in the clinic. Next-generation sequencing tests especially tend to provide extensive information on genetic variants in a sample, but little to no supporting information on which of those alterations are “actionable,” that is, indicate specific clinical interventions. Williams called for guidelines for identifying and reporting a genetic variant as clinically actionable. These guidelines could specify what level of evidence is needed to take clinical action in response to a test result.

Dickson added that because “no two next-generation sequencing methods are the same, if one lab is doing it one way and has a greater sensitivity and lower specificity than another lab, how can I aggregate that information to determine clinical utility?” He also stressed that an increased sensitivity will not necessarily translate into better clinical outcomes. David Eberhard, director, Pre-Clinical Genomic Pathology, Lineberger Comprehensive Cancer Center, and associate professor, Departments of Pathology and Pharmacology, UNC at Chapel Hill, added that the sensitivity needed in a test can vary depending on how large tumor samples are likely to be. Lung cancer biopsies tend to be tiny, for example, so would require greater sensitivity to be clinically useful, he said, as would samples with a low percentage of tumor cells.
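The link between tumor cell percentage and required sensitivity can be sketched with a simple calculation. The Python example below assumes a heterozygous mutation present in every tumor cell at a locus with two copies in both tumor and normal cells, so the expected fraction of sequencing reads carrying the variant is half the tumor cell fraction. The function names and the detection limits are hypothetical and were not part of Eberhard's presentation.

```python
def expected_variant_fraction(tumor_fraction: float) -> float:
    """Expected fraction of reads carrying the variant.

    Assumption (not from the workshop): a heterozygous mutation in every
    tumor cell, with two copies of the locus in tumor and normal cells.
    """
    if not 0.0 <= tumor_fraction <= 1.0:
        raise ValueError("tumor_fraction must be between 0 and 1")
    return tumor_fraction / 2.0


def is_detectable(tumor_fraction: float, limit_of_detection: float) -> bool:
    """True when the expected variant fraction reaches the assay's detection limit."""
    return expected_variant_fraction(tumor_fraction) >= limit_of_detection


# Hypothetical specimen in which 20 percent of cells are tumor cells.
print(expected_variant_fraction(0.20))                # 0.1
print(is_detectable(0.20, limit_of_detection=0.15))   # False
print(is_detectable(0.20, limit_of_detection=0.05))   # True
```

Under this simplified model, a specimen with few tumor cells yields a low variant fraction, so an assay with a coarse detection limit would miss a mutation that a more sensitive assay would report.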

Assessing clinical utility of tests usually requires applying the test to a large number of patients or patient samples, which can be challenging to do given the rarity of some of the mutations that the tests are designed to detect. Some relevant mutations, such as those in BRAF, occur in only 1 percent or less of lung cancer patients. Johnson noted that for one study, it took 3 years at major medical centers to accrue 50 lung cancer patients with such rare mutations. Martin added that in one of GSK’s studies, researchers had to screen more than 11,000 lung cancer patients to enroll 23 patients with a specific BRAF mutation (V600E) (Marchetti et al., 2011; Paik et al., 2011).
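The arithmetic behind these accrual figures can be sketched as follows. The Python example uses only the numbers quoted above (23 patients enrolled from about 11,000 screened, and a mutation frequency of 1 percent); the function names are hypothetical, and the estimate ignores eligibility, consent, and sample failure, all of which would raise the number of patients to screen.

```python
def observed_frequency(enrolled: int, screened: int) -> float:
    """Fraction of screened patients who were enrolled."""
    return enrolled / screened


def patients_to_screen(target_enrolled: int, carriers: int, per_screened: int) -> int:
    """Expected number of patients to screen to find `target_enrolled` carriers.

    The mutation frequency is given as `carriers` per `per_screened` patients.
    Integer ceiling division avoids floating-point rounding surprises.
    """
    return -(-target_enrolled * per_screened // carriers)


# Screening yield quoted by Martin: 23 enrolled out of about 11,000 screened.
print(f"{observed_frequency(23, 11_000):.2%}")      # about 0.21%

# Accruing 50 patients when 1 in 100 carries the mutation.
print(patients_to_screen(50, carriers=1, per_screened=100))

# Accruing 50 patients at the observed yield of 23 per 11,000.
print(patients_to_screen(50, carriers=23, per_screened=11_000))
```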

“As we get into more complicated mutation sequencing, we are going to get into smaller and smaller datasets,” said Dickson. “It is unlikely that we are going to be able to really get some of these good datasets we have traditionally used for determining if a test is appropriate. That is a problem because even though we agree it is hard to get those levels of information in a molecular test, we also need to recognize that when we are taking people away from well-established interventions based on limited datasets, we could potentially really harm a patient.”

Eberhard reported that nearly 20 years ago the Tumor Marker Utility Grading System was developed to define levels of evidence for tumor markers (Hayes et al., 1996). The grades given in this system were determined to a large degree by the types of studies used to assess the markers. Subsequently, some consideration has also been given to how the samples were obtained and how the tests were performed to create the evidence. More recently the National Comprehensive Cancer Network (NCCN) created categories of evidence for tumor markers to aid decision making of practicing oncologists, ranging from high-level evidence that leads to uniform NCCN consensus to weaker evidence that results in major NCCN disagreement regarding an intervention (Febbo et al., 2011).

But neither the Tumor Marker Utility Grading System nor the NCCN guidelines adequately address whether a biomarker test is medically necessary, which is a key component of clinical utility, Eberhard pointed out. To assess this aspect of molecular diagnostics, he suggested using a problem-solving approach called “root-cause analysis,” which is outlined in Box 4.

Clinical utility also entails feasibility of clinical implementation. That aspect of fitness for purpose depends on the platform the test is performed on and how robust, complex, and suitable both the platform and the test are to the clinical purpose at hand, Eberhard noted. Sample characteristics also influence clinical utility as well as how results are interpreted. The final results of a test must indicate specific actions to have clinical utility, Eberhard pointed out. But the results from molecular diagnostic tests often fall into large, uninterpretable gray zones due to a lack of evidence on the clinical significance of the alterations detected. For example, Oncotype Dx, a biomarker test for breast cancer that measures the expression of 21 genes, has a large gray zone of results called “intermediate” for which there is no one clear treatment recommended. For patients given this result, the test currently has no clinical utility (a clinical trial called TAILORx is ongoing to assess the utility of the test for this patient population). There are no standards for what size of gray zone is acceptable for a test to enter the market, Eberhard noted. Next-generation sequencing also often identifies genetic variants of unknown significance. “So if we have a variant of unknown significance, what should we tell the oncologist?” Eberhard asked.

Determining clinical validity and utility of biomarker tests would be greatly aided if companies and institutions amassing tumor profiling data and samples collaborated more and shared information, several participants suggested. “We have a great opportunity to work across different pharmaceutical and diagnostic companies’ interest and in the patients’ interest to work collaboratively to be certain that we’re bringing these tests into the clinic, and at the end of the day doing no harm, but actually really pushing the field forward,” said Williams. Monzon added, “We need to develop policies that support data sharing among laboratories and health care providers to help advance the clinical knowledge base.”

BOX 4

Root-cause analysis aims to find a cause for a problem, which when removed, prevents an undesirable event from occurring. This analysis is often used in quality assurance programs.

Root-cause analysis is performed systematically with conclusions and causes backed up by documented evidence. There may be more than one root cause for a problem. The goal is to identify solutions to prevent recurrence at lowest cost in the simplest way. If there are alternatives that are equally effective, then the simplest or lowest cost approach is preferred. Root causes identified depend on the way in which the problem or event is defined. Root-cause analysis should establish a sequence of events to understand relationships among contributory (causal) factors, root cause(s), and the defined problem, and can potentially address problems before they occur or escalate rather than reacting to problems as they occur.

Eberhard gave an example of a root-cause analysis undertaken to address the problem that diagnosis of non-small-cell lung cancer based on how it appears under a microscope is imprecise and does not recognize subtypes. The root-cause analysis of this problem identified that accurate and reproducible subtyping can be compromised by samples that are too small, by inexperienced interpretation, or by being unable to distinguish poorly differentiated adenocarcinomas from squamous cell carcinomas (Grilley-Olson et al., 2013; Thunnissen et al., 2014). The solution to this problem could be new diagnostics that can distinguish adenocarcinomas from squamous cell carcinomas, which may be useful if they can provide the same result on small biopsies as what would have been obtained from larger definitive samples of the same tumor, Eberhard noted.

SOURCE: Eberhard presentation, November 10, 2014.

Schilsky noted that various databases for genomic information are being acquired through next-generation sequencing in clinical studies, including some that are publicly available, but there are no standards for how that information is reported and annotated. He suggested that a public agency develop a genetic variant annotation process and database for the community that it curates and updates. Levy responded that the National Institutes of Health (NIH) and the National Human Genome Research Institute are already providing such public annotated databases for inherited germ-line mutations. But she added that more extensive clinical outcome data are needed for tumor mutations.

Williams suggested the American Society of Clinical Oncology (ASCO) and NCCN could be involved in creating such a clinically annotated public database for cancer mutations. “I think the feeling is shared that the time is now, everybody is acting on information, and if we could have some common data that everybody could point to so that we knew we were acting identically as we get this information is extremely important,” he said. Holdhoff also advocated for having one major database that is housed and curated by a government agency, which could have the advantage of being unbiased and long lasting. “Everyone wants to have their own database, but it’s the public trust we really have to respond to so there should be one major database that will last for the next hundred years or so and outlast everybody’s individual careers,” Holdhoff said.

Levy pointed out that as an oncologist receiving genomic profiling data, she has found a lot of detailed information missing from the reports provided by the molecular diagnostics lab that conducted the testing. She added that there needs to be a new paradigm for making data public, and pointed out that some companies have the largest collection of data on specific tumors or mutations, but those data are not accessible. She also noted that some patients have been uploading their own data onto websites that researchers can access, so more progress can be made in treating their disease.

Johnson pointed out that the Lung Cancer Mutation Consortium, a group of 16 centers across the United States, is assembling detailed sequencing information (BAM files³) and ensuring the files are reproducible across institutions so they can be shared. Hayes added that BAM files can be entered into a public database known as dbGaP, but because of formatting and consent issues it is difficult to do so. “We need an easier way to get these BAM files out,” he said.

_______________

3 A BAM file is the binary version of a SAM file. A SAM file is a tab-delimited text file that contains sequence alignment data.

REGULATORY OVERSIGHT CHALLENGES

Clinical tests are usually done in laboratories accredited by the College of American Pathologists (CAP). A goal of accreditation is to ensure the quality of testing systems and the reproducibility of results. Another goal is to ensure that the results from one lab are comparable to those of other laboratories, through proficiency testing of reference samples provided by CAP or other large collegiate organizations. All clinical labs must have proper CLIA certification to receive Medicare or Medicaid payments.

There are two types of laboratories: (1) laboratories affiliated with a health care institution that provide testing directly to patients in a clinical setting, and (2) reference laboratories, to which clinicians send samples for testing. But there are also hybrids, such as large institutional reference laboratories that have outreach programs to acquire samples from the community. Monzon said that reference labs can be more efficient and reduce cost compared to institution-affiliated laboratories, and can be more proficient as well because of the high volume of tests they conduct. Reference labs tend to perform tests for esoteric conditions, that is, to diagnose rare disorders that only a thousand patients may have. Because these patients are scattered across the country, there is an advantage to having only one central reference lab that offers the test for a rare disease, Monzon pointed out. But the disadvantage of reference lab tests is that because they are not offered onsite, there can be delays due to shipping samples and reporting results.

How a test is regulated is determined by how it comes to market, Hayes reported. A test may be marketed as a commercial test “kit,” a group of reagents used in the processing of samples that are packaged together and sold to multiple labs. More commonly, a test comes to market as an LDT, where the test is developed and performed by a single laboratory, and where specimen samples are sent to that laboratory to be tested. FDA regulates only tests sold as kits and, to date, has practiced “enforcement discretion” for LDTs, which it defines as in-vitro diagnostics manufactured, developed, validated, and offered by a single laboratory. There are tens of thousands of LDTs in clinical use, and most cancer diagnostics are LDTs, Monzon said.

According to FDA, LDTs are supposed to be simple, well-understood pathology tests, tests used to diagnose rare diseases, or those for which testing outside the institution would be prohibitive to patient care due to delays between test ordering and delivery of test results. FDA does not consider a diagnostic test an LDT if it was designed or manufactured completely or partly outside of the laboratory that offers and uses it. But Hayes said that next-generation sequencing tests are neither simple nor well understood. He added that sequencing tests are often conducted by large reference laboratories, to which institutions throughout the entire country send their samples, rather than by a single institution as part of the patient care services it offers. This can cause clinically significant delays, Hayes noted. “Our pathologists who are reading cases coming out of the operating room get a sample on Monday and they need to report a result on Thursday because that patient wants to get treated for their cancer within a few days. But for the LDTs that have to be sent out, there can be very extensive delays that can be prohibitive,” he said, noting that part of those delays are due to a lack of coordination in information management. He stressed that “the [LDT] regulatory issues need legislation because it’s going to be hard for us to solve this as physicians and scientists.”

FDA approval of diagnostic tests involves more rigorous oversight along two main regulatory pathways. One, called the premarket notification, or 510(k), process, requires showing that the test (which is considered a device) is substantially equivalent to a device that is already on the market or was on the market before 1976, and that the test meets quality standards set by FDA. The 510(k) pathway can only be employed for tests with moderate levels of risk linked to their use. For more complex tests that pose more risk to patients, manufacturers must submit an application for Premarket Approval (PMA) to FDA that details the safety and effectiveness of their test. The test cannot enter the market until FDA reviews and approves this application. In its review of tests, FDA considers analytical and clinical validity, but not clinical utility (IOM, 2010a, 2012).

Conducting the studies required for either the 510(k) or PMA regulatory pathways can be quite expensive. Hayes noted that although the 510(k) route is the less expensive route, it can still cost millions of dollars to carry out, and no NIH grants or other public funds are allocated for this purpose, so it requires private-sector involvement. Part of the expense of acquiring FDA approval for a biomarker test can be due to having to submit to FDA review not just the test, but the platform on which the test was done. For RNA sequencing tests, for example, FDA has only reviewed one machine used for the tests, but there are several other platforms on which the tests can be run, Hayes noted. Patricia LoRusso, professor of medicine and associate director of innovative medicine at Yale Cancer Center, added, “Not all platforms are created equal, even if you are going after the same targets.” Hayes noted that “the FDA hasn’t looked at Illumina sequencers for RNA or multiplex PCR machines, so if you want to take a test forward in the full regulatory path, you’re going to spend a lot of money getting that machine approved as well, and that’s one of our challenges.”

Monzon was also critical of having to specify and acquire FDA approval for the instrument on which the test is performed. “Response to therapy is linked to the presence of a mutation or biomarker and not to the actual result of the instrument,” he said. Monzon suggested setting standards for the minimum performance needed to achieve a positive or negative result, but not requiring specific instrumentation because “innovation allows us to move forward and do better testing with better devices.” Martin noted that her group is working with FDA to “establish a novel regulatory framework that considers both the PMA predictive or clinical claims as well as the analytical claims so as to move from one test-one drug to one test-multiple drugs.”

Evolving Regulation of Laboratory-Developed Tests

Senderowicz noted that when FDA regulations for devices were implemented in 1976, a decision was made to exercise enforcement discretion with regard to premarket review of LDTs because most LDTs were relatively simple and considered low risk. Recognizing how tests have evolved since then, becoming both more complex and higher risk, in July 2014 FDA submitted a letter to Congress with proposals for regulating some LDTs. This letter noted the problems that FDA has identified with several high-risk LDTs, including claims not adequately supported with evidence, lack of appropriate controls in studies to evaluate the test, erroneous results, and falsification of data. This has resulted in faulty LDTs that could have led to patients being over- or undertreated for heart disease, cancer patients being exposed to inappropriate therapy or not receiving effective therapy, and incorrect diagnoses of serious conditions, such as autism, FDA stated. “So it’s a serious issue and I foresee there’s going to be significant changes in the regulation of LDTs,” Senderowicz said.

Litwack reported that FDA’s current proposal for regulating LDTs is to collect basic information on all LDTs through a new notification process and to use a public process (i.e., advisory committees) to obtain input on risk and priority for regulation. FDA would then phase in a new regulatory framework based on risk over a period of about 9 years, with regulatory guidances for LDTs considered highest risk issued first, followed by regulations governing those of more moderate and then those of lowest risk.

FDA would continue some enforcement discretion for specific categories it determines to be in the best interest of public health. It would also consider tests for unmet needs as well as other factors that might require special regulation.

In contrast, Monzon proposed maintaining LDT regulatory oversight within CMS rather than shifting it to FDA, and instead making CLIA regulations more stringent, with adverse-event reports part of the CLIA registry. He also said the registry should be made public and transparent. He stressed the importance of enabling continued innovation, such as allowing academic labs to use new discoveries of genetic drivers of tumor growth by quickly translating them into LDTs performed in a CLIA environment. “Reimbursement and regulatory pressures could constrain our ability to remain in a leadership position in diagnostics development,” he warned.

Senderowicz stressed that as the science evolves, development pathways and regulation also evolve. He cited the development of the breast cancer drug Herceptin and the associated biomarker tests as an example. The first diagnostic test for this drug was introduced in 1998 as a test for HER2 protein overexpression, but now there are 10 diagnostic tests to guide decisions about treatment with Herceptin. Some of these use different methods for detecting HER2 protein overexpression, while others use various techniques to detect amplification of the HER2 gene in tumors. In addition, next-generation sequencing is revealing new mutations in HER2 that previous tests could not detect, Monzon and Solit pointed out.

Litwack noted that the use of different FDA-approved tests can affect the quality of clinical trials, which often use local test results for patient accrual and subgroup analyses. Test results obtained with different technologies may not be comparable, and can affect clinical trial results. “We need to be aware of these issues and when we see a response rate in a clinical trial, it would be good to ask to what degree is that variability in response underlain by the test,” he said.

Given the evolving nature of test technology and FDA regulations, Litwack suggested that test developers consult with FDA early in the development process. “There’s no rule about when you have to meet with FDA—you can meet with them fairly early, even during the conceptual phase,” he said. He also stressed there is a lot of back-and-forth discussion between FDA and test developers during the review process, and that developers also can use FDA resources posted online, including relevant guidances on devices, and information about FDA’s Center for Devices and Radiological Health (CDRH).

In response to a question from Monzon asking how FDA would regulate a multigene panel, Litwack noted that although FDA traditionally has reviewed the data for each analyte separately in a multianalyte test, such data could not be expected for large gene panel tests. Instead FDA may request that a representative group of analytes be validated. He stressed that it would depend on a number of factors and recommended consulting with FDA to determine what data will be required for the test to be approved.

He also said, “Just because you’re using really new technology for a serious illness doesn’t actually mean you would necessarily need an investigational device exemption (IDE). If you or your institutional review board (IRB) determined it was a significant risk study, then we would require an IDE submission.” But he noted that the level of acceptable risk can vary depending on the disease and the patient, and that risk is considered along with the potential benefit during the review. Just using a test to select therapy for a patient does not necessarily indicate a high-risk situation, he pointed out, and for patients who have exhausted all other treatment options, the risk is much less than it would be for patients for whom the test would be used to determine first-line treatment of breast or other cancers for which several effective therapies exist. The toxicity of the drug treatment that the test would indicate would be another factor considered, he added.

Litwack reported on how FDA cleared an innovative test that detects 139 variants of the cystic fibrosis (CF) gene. The test was run on a DNA sequencing instrument called MiSeqDx. FDA separated its review of the test from its review of the instrument on which the test was run, requiring only analytical validation for the latter. That validation was done using cell-line samples for normal controls and a representative set of samples with characteristic genetic variants to assess the performance capabilities of the instrument under different scenarios, such as sequencing regions rich in certain bases, sequences from different chromosomes with different proximities to the centromere, etc. After this testing was accomplished successfully, FDA cleared the sequencing device to be used for detecting hereditary disorders from genetic sequences in blood samples. But as Litwack noted, the device was not cleared for a particular indication for a specific disease, so it cannot be used without an FDA-cleared test. “You can’t just buy the MiSeqDx and start running tests on it and assume you’re compliant with the FDA—you need to develop a specific test for hereditary disease to be used on it,” he said.

Both analytical and clinical validation were assessed for the 139-variant-CF test run on MiSeqDx. The sponsor conducted the analytical validation using samples with the 139 variants and normal sequences. Clinical validation was done using a CF database housed at Johns Hopkins University (see Table 1). According to Litwack, the database had several features that made it useful for regulatory purposes, including being expertly curated with preclinical and clinical data, having cooperation of the patient community, and being sustainably supported through various public and private grants. In addition, because there are good CF preclinical models, researchers could take a variant of interest discovered from the database, create the same variant in a cell line, and test whether the variant affected function.

TABLE 1 Clinical and Functional Translation of CFTR (CFTR2) Database

| Data Type | Information Gathered |
| --- | --- |
| Mutation Name/Associated Nomenclature | Provides a standardized mutation name and mutation by amino acid and nucleotide number (relative to the CFTR gene). |
| Associated Clinical Characteristics/Validation | Provides relevant clinical characteristics. |
| Functional Testing/Validation of Mutation | Notes the results of in vitro laboratory tests performed for applicable mutations. Specifically, assesses protein processing and maturation, CFTR dependent chloride current, and gene splicing. |
| Literature Review | Notes research previously completed on this particular mutation. |
| Annotation History | Provides a history of changes and timestamps of any revisions to the annotation. |

NOTE: CFTR = cystic fibrosis transmembrane conductance regulator.

SOURCES: Litwack presentation, November 10, 2014; http://www.cftr2.org (accessed March 18, 2015).
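The data types listed in Table 1 suggest the shape of a single annotated variant record. The following Python sketch is one hypothetical way to model such a record, including a timestamped annotation history. The class names, field names, and placeholder values are assumptions made for illustration and do not describe the actual CFTR2 schema.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class AnnotationChange:
    """One entry in the annotation history (hypothetical structure)."""
    timestamp: datetime
    field_name: str
    old_value: str
    new_value: str


@dataclass
class VariantRecord:
    """A variant entry with fields mirroring the data types in Table 1."""
    mutation_name: str
    clinical_characteristics: str
    functional_testing: str
    literature_review: str
    annotation_history: list[AnnotationChange] = field(default_factory=list)

    def revise(self, field_name: str, new_value: str) -> None:
        """Update an annotation field and log the change with a UTC timestamp."""
        if field_name == "annotation_history" or not hasattr(self, field_name):
            raise ValueError(f"cannot revise field: {field_name}")
        old_value = getattr(self, field_name)
        setattr(self, field_name, new_value)
        self.annotation_history.append(
            AnnotationChange(datetime.now(timezone.utc), field_name, old_value, new_value)
        )


# Placeholder values only; no real clinical or functional data are implied.
record = VariantRecord(
    mutation_name="example-variant",
    clinical_characteristics="not yet reviewed",
    functional_testing="not yet tested",
    literature_review="none recorded",
)
record.revise("functional_testing", "placeholder result")
print(len(record.annotation_history))  # 1
```

Keeping the history inside the record means every revision stays traceable, which is in the spirit of the well-annotated, quality-controlled database that Litwack described.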

Based on this experience, FDA is currently considering the essential characteristics of a “regulatory-grade” database that sponsors could use to support the clinical validity claims of their tests. Such a database would probably have to be sustainable, well annotated, follow good practices, and ensure the quality of the testing data entered into it, according to Litwack.

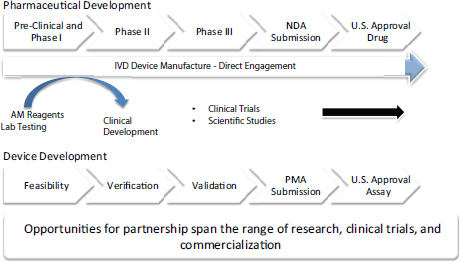

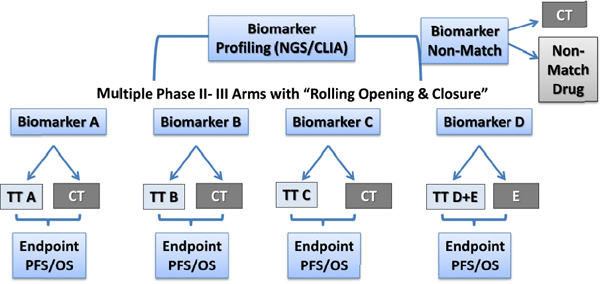

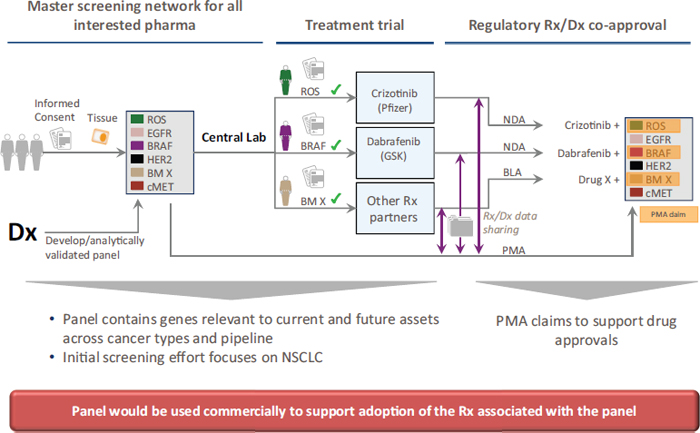

Often biomarker tests in oncology are designed to be used in conjunction with specific targeted treatments, and both the test and the experimental treatment can be co-developed and tested simultaneously in clinical trials. Safety and efficacy of the new drug and of the new diagnostic are typically demonstrated in the same clinical trial, with the goal of simultaneous FDA registration for both the drug and diagnostic. Biomarker tests that are co-developed and co-approved by FDA with a drug in this way are known as companion in vitro diagnostics (IVDs). To date, this approach has been used to gain FDA approval for fewer than 20 companion diagnostics in oncology (see Table 2), including tests for BRAF, HER2/neu, and EGFR,⁴ Monzon said. Companion diagnostics provide information that FDA considers essential for the safe and effective use of a corresponding therapeutic product, and are intended for use in the collection, preparation, and examination of specimens taken from the human body. Approved drugs and their companion diagnostics refer to each other in their labels, as indicated in FDA guidance (FDA, 2011). An example of a co-development strategy was described by Karen Long, divisional vice president, medical, regulatory, and clinical affairs at Abbott Molecular (see Figure 2).

With the companion diagnostic pathway, manufacturers have to submit to FDA the analytic and clinical validity of their tests, their intended uses, and the settings in which the devices will be used, that is, in a clinical laboratory or point-of-care setting. If a diagnostic guides patient care, that is, has substantial importance in “diagnosing, curing, mitigating, or treating disease,” then manufacturers of the diagnostic must apply for an IDE so their diagnostic can be tested in clinical trials as part of the companion diagnostic co-development process.

FDA will grant this exemption if it determines the benefits of the test, such as indicating effective treatment, likely outweigh the risks, which could stem from false positives indicating unneeded treatments or false negatives that would prevent patients from having an appropriate treatment. Such risks and benefits vary according to the disease involved and its standard of care, Senderowicz noted. He added that researchers working with a drug that requires an IVD to predict response are advised to consider the companion diagnostic pathway and begin consulting not only with FDA’s Center for Drug Evaluation and Research or Center for Biologics Evaluation and Research, but also with CDRH, to facilitate submission of an IDE as soon as possible, enabling simultaneous review and approval of both the drug and diagnostic.

_______________

4 See http://www.fda.gov; http://www.captodayonline.com (accessed March 18, 2015); http://www.ncbi.nlm.nih.gov/gtr (accessed March 18, 2015).

TABLE 2 List of FDA-Approved Companion Diagnostic Devices for Oncology

| Companion Diagnostic | Device Manufacturer | Drug(s) |
| --- | --- | --- |
| BRACAnalysis CDx™ | Myriad Genetic Laboratories, Inc. | Olaparib |
| Therascreen KRAS RGQ PCR Kit | Qiagen Manchester, Ltd. | Cetuximab, Panitumumab |
| DAKO EGFR PharmDx Kit | Dako North America, Inc. | Cetuximab, Panitumumab |
| Therascreen EGFR RGQ PCR Kit | Qiagen Manchester, Ltd. | Afatinib |
| DAKO C-KIT PharmDx | Dako North America, Inc. | Imatinib mesylate |
| INFORM HER-2/NEU | Ventana Medical Systems, Inc. | Trastuzumab |
| PATHVYSION HER-2 DNA Probe Kit | Abbott Molecular Inc. | Trastuzumab |
| PATHWAY ANTI-HER-2/NEU | Ventana Medical Systems, Inc. | Trastuzumab |
| INSITE HER-2/NEU KIT | Biogenex Laboratories, Inc. | Trastuzumab |
| SPOT-LIGHT HER2 CISH Kit | Life Technologies, Inc. | Trastuzumab |
| Bond Oracle Her2 IHC System | Leica Biosystems | Trastuzumab |
| HER2 CISH PharmDx Kit | Dako Denmark A/S | Trastuzumab |
| INFORM HER2 DUAL ISH DNA Probe Cocktail | Ventana Medical Systems, Inc. | Trastuzumab |
| HERCEPTEST | Dako Denmark A/S | Trastuzumab, Pertuzumab, Ado-trastuzumab emtansine |
| HER2 FISH PharmDx Kit | Dako Denmark A/S | Trastuzumab, Pertuzumab, Ado-trastuzumab emtansine |
| THxID™ BRAF Kit | bioMérieux Inc. | Trametinib, Dabrafenib |
| cobas EGFR Mutation Test | Roche Molecular Systems, Inc. | Erlotinib |
| VYSIS ALK Break Apart FISH Probe Kit | Abbott Molecular Inc. | Crizotinib |
| COBAS 4800 BRAF V600 Mutation Test | Roche Molecular Systems, Inc. | Vemurafenib |

SOURCE: Adapted from http://www.fda.gov/MedicalDevices/ProductsandMedicalProcedures/InVitroDiagnostics/ucm301431.html (accessed March 18, 2015).

FIGURE 2 High-level strategy for drug and test co-development.

NOTE: AM = Abbott Molecular; IVD = in vitro diagnostic; NDA = new drug application; PMA = premarket approval.

SOURCE: Long presentation, November 11, 2014.

Litwack noted that historically, FDA regulation of companion diagnostics was designed for single analyte tests that indicated treatment with a specific companion drug, and not for next-generation sequencing-based tests with multiple analytes that could indicate use of multiple drugs. “We’re talking about over three billion bases in the human genome with millions of variants that any individual can have, so how are we going to analytically and clinically validate those?” Litwack asked. Monzon added, “The model for companion diagnostics is not sustainable in the era of multianalyte tests.”

Even using the existing co-development pathway for single-analyte companion diagnostics can take a long time, Johnson pointed out, noting that FDA did not approve EGFR-targeting drugs for lung cancer until nearly 10 years after researchers first discovered EGFR mutations that could drive the growth of some of these lung cancers. However, Long said that such co-development can slash 18 to 24 months off the development timeline for both the diagnostic and the drug. She pointed out that the relatively quicker companion diagnostic regulatory pathway requires close coordination and communication between those developing the diagnostic and those developing the therapeutic. “We talk to our partners on a weekly basis. If it’s a fast-paced study, we want to make sure all the resources are in the right place to make sure the project is successful,” she said, adding, “We fast-track everything we need to make sure that we can file our final application with the U.S. FDA at the same time the New Drug Application is filed.” There also is close cooperation with FDA early on in the development process to “keep everybody in the loop” and ensure the correct approach is being taken, Long said.

Monzon pointed out that after a test for a specific genetic variant is approved as a companion diagnostic for one type of cancer, evidence often surfaces suggesting that the test is also likely to be effective as a companion diagnostic for the same treatment in a different type of cancer. But manufacturers have to clinically validate the test for this new use in order for it to be listed on the drug’s label. Monzon said this requires finding a pharmaceutical partner willing to share patient specimens. Such sharing is often hindered by the rarity of the specimens and by informed consent limitations, he said, adding, “This is a huge challenge.”

Litwack stressed that often DNA sequencing tests in oncology are used for discovery purposes, “and you don’t know exactly what it is you’re going to end up diagnosing.” The intended use of a test often determines its regulatory path, but sometimes the intended use will change during the course of development and review, he stressed. “When you run next-generation sequencing on somebody, you may have incidental findings and diagnose diseases or conditions other than the ones you originally started testing for, and how do we apply our regulatory framework when you don’t even want to define a very precise population?” he asked.

But it is possible to make changes during a test’s development process, Senderowicz noted, giving the example of the drug crizotinib. This drug was at first thought to specifically target the enzymes MET and ALK, which are part of a tumor growth-promoting molecular pathway. A Phase I clinical study was started with eligibility dependent on testing positive for genetic alterations of MET and ALK to select an enriched patient population likely to respond, but only a minimal response was seen in these patients. Then researchers discovered that a specific genetic rearrangement involving the EML4 and ALK genes was a driver of tumor growth. Consequently, researchers developed an LDT that detects this ALK gene rearrangement and enriched their next clinical trial of the drug with patients who tested positive for this biomarker. This trial showed more favorable results that led to the drug’s accelerated approval by FDA. But he stressed that the latter ALK-based test system still had to be “locked down” and could no longer be modified before being used in the registration clinical trial for FDA approval of the test.

Litwack also noted that next-generation sequencing tests are frequently modified due to rapidly evolving technology, and those modifications can affect performance. But he added that it is critical to preserve the ability to make modifications to allow for innovation and to accommodate specific testing needs (e.g., detection of single-base mutations versus detection of multiple copies or deletions of longer genetic sequences). In addition, there are no FDA-cleared next-generation sequencing testing systems for oncology, although there are a few cleared instruments. So laboratories are essentially cobbling together different components and customizing software to create their tests in what he termed a “mix and match” fashion. This can lead to a lot of variability in a test. Finally, he noted that some genetic variants are so rare that it is not possible to gather sufficient evidence of their clinical validity.

Another major challenge is the development of harmonized global regulation for genomic tests, as most pharmaceutical companies and diagnostic developers operate on a global scale. Martin noted that testing platforms need to be used and accessed worldwide, and thus the regulatory path for these diagnostics needs to be consistent globally. “We want to be able to work across not only the FDA, but to engage other health authorities, especially in Europe and Japan, who are seeking to gain more regulation for companion diagnostic tests,” he said. Long agreed, stressing, “We develop one product that is sold worldwide so we are dealing with many regulatory bodies around the world.”

However, the multiple agencies worldwide that regulate biomarker tests use different approaches to regulating products, Long said. For example, some countries approve a companion diagnostic without any clinical utility data, but once those data accrue from studies of clinical use, the label for the diagnostic is adjusted accordingly. Japan and China both have complex regulatory approval processes and require country-specific clinical studies, Long noted, while other countries will accept certification of U.S. approval along with a technical file submitted to a regulatory authority.

CLINICAL IMPLEMENTATION CHALLENGES

Speakers at the workshop noted several challenges to effectively implementing molecularly targeted cancer diagnostics and therapies in the clinic, including

- Insufficient or inadequate tissue specimens;

- An overwhelming amount of data that are difficult to interpret and relay to patients;

- A lack of standards for and comparative effectiveness data on diagnostics;

- Time delays in acquiring test results;

- Lack of financial resources and a testing infrastructure; and

- Uncertainty over how to address incidental findings and report them to patients.

Insufficient or Inadequate Specimens

Hayes noted that many medical oncologists want to test their patients’ tumor samples to discover genetic alterations that may suggest treatment avenues. However, surgeons acquire those samples, and their primary goal is to minimize harm to the patient while doing a biopsy of the tissue. Consequently, the samples obtained for testing may be of poor quality and are often insufficient for conducting the multiple tests frequently needed to select treatment. Martin emphasized that this is why it is increasingly important to use a comprehensive test that can simultaneously detect a multitude of biomarkers to guide patient treatment or to direct patients to different clinical studies. Johnson agreed, noting, “The thing to do is to try to test for every gene you think you might need to know . . . in one fell swoop.”

Doing so, Levy pointed out, can result in “a tsunami of genetic data entering the clinic at a pace that we’ve never seen before as providers. Now with next-generation sequencing, we’re testing hundreds of genes all at the same time and we do not know what to do with all of that information that’s coming into the clinic. Clinicians are clearly overwhelmed and some are staying away from genomic tests for that reason. We’re stuck with having this massive knowledge gap of what we’re supposed to do with all this information coming at us today.”

Levy added that each of the markers associated with treatment response has variable levels of evidence, ranging from only preclinical data to data demonstrating clinical validity or utility, so it is not clear what the quality of the test is and what can be done with the information it provides. There is an urgent need for bioinformatics experts to analyze the data so they can be more useful in a clinical setting, several presenters suggested.

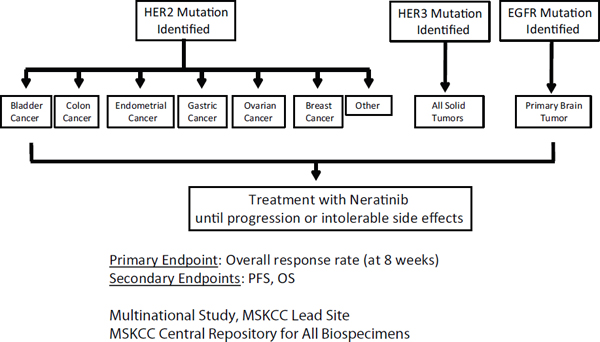

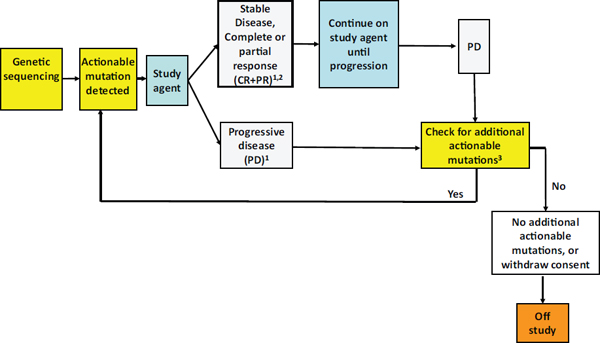

Siu agreed that clinical implementation of targeted cancer treatments is currently hampered by inadequate matching of those treatments to the genetic alterations detected in genomic tests. She cited one study of breast cancer patients in which genomic profiling of tumors led to targeted treatment selection in only 48 of 404 patients, and such targeted treatments proved beneficial in only 13 (3 percent) of the patients (André et al., 2014).
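To put the counts from this study on a common scale, the short Python sketch below recomputes the proportions from the figures cited above (404 profiled patients, 48 matched to a targeted treatment, 13 who benefited). The function name `percent` and the variable names are illustrative assumptions, and the counts are taken as reported in this summary rather than from the original publication.

```python
def percent(part: int, whole: int) -> float:
    """Return part/whole as a percentage rounded to one decimal place."""
    return round(100 * part / whole, 1)


profiled = 404   # patients whose tumors were genomically profiled
matched = 48     # patients for whom profiling led to targeted treatment selection
benefited = 13   # patients for whom the targeted treatment proved beneficial

matched_rate = percent(matched, profiled)            # share of all profiled patients matched
benefit_rate_all = percent(benefited, profiled)      # share of all profiled patients who benefited
benefit_rate_matched = percent(benefited, matched)   # share of matched patients who benefited

print(matched_rate, benefit_rate_all, benefit_rate_matched)
```

Under these counts, roughly 12 percent of profiled patients were matched to a targeted treatment, about 3 percent of all profiled patients benefited, and about 27 percent of the matched patients benefited.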

Many patients’ tumors are not matched to treatments because of a lack of awareness of what treatments might work for their particular genomic profile, according to Siu. To increase that awareness, her institution, Princess Margaret Hospital, created a spreadsheet for each cancer patient that delineates all the individual genetic alterations detected in the tumor and the currently available and relevant clinical trials the patient could be enrolled in based on that genotyping. “We send this to our clinicians on a regular basis so they are constantly reminded that if they have a patient with this profile, they need to think about the clinical trial,” Siu said.
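The kind of per-patient listing Siu described can be sketched as a simple lookup from detected alterations to open trials. The Python example below is a minimal illustration under stated assumptions, not a description of the Princess Margaret spreadsheet: the `Trial` class, the `match_trials` function, the trial identifiers, and the eligibility lists are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Trial:
    trial_id: str                     # hypothetical identifier, not a real trial
    eligible_alterations: frozenset   # alterations any one of which qualifies a patient


def match_trials(patient_alterations, trials):
    """Map each detected alteration to the IDs of trials that list it as eligible."""
    matches = {}
    for alteration in sorted(patient_alterations):
        matches[alteration] = [
            trial.trial_id
            for trial in trials
            if alteration in trial.eligible_alterations
        ]
    return matches


# Hypothetical open trials and their eligibility criteria.
open_trials = [
    Trial("TRIAL-A", frozenset({"BRAF V600E"})),
    Trial("TRIAL-B", frozenset({"ALK rearrangement", "MET amplification"})),
    Trial("TRIAL-C", frozenset({"BRAF V600E", "KRAS G12D"})),
]

# Hypothetical genotyping result for one patient.
patient = {"BRAF V600E", "TP53 R175H"}

report = match_trials(patient, open_trials)
for alteration, trial_ids in report.items():
    print(alteration, "->", trial_ids if trial_ids else "no matching trial")
```

An alteration with an empty list marks a finding for which no trial in the list applies, which keeps unmatched variants visible to the clinician rather than silently dropping them.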

She suggested developing an app that can generalize this information and make it available across the entire country “so clinicians don’t forget to match their patients or try to find treatments for their patients.” She added that “a ‘genetic variant’ to a clinician means relatively little and to a patient, it almost means zero. It is important for us to use that information and make it work by finding an action that comes after the variant is discovered.”

Choosing the best test can also be challenging now that multiple tests often provide the same type of information, yet the comparative effectiveness of these diagnostics has yet to be determined. Furthermore, it is generally unclear how extensive a review process the test has undergone, several speakers noted. “How do we know, as the oncologist consumer who is ordering the test, that [the test] is really up to snuff?” asked Patricia Ganz, Distinguished Professor, University of California, Los Angeles (UCLA), Fielding School of Public Health, and director, Cancer Prevention & Control Research, Jonsson Comprehensive Cancer Center, UCLA. “Has the test actually undergone FDA review or is it just done in a CLIA-certified lab? How can the buyer beware?”